Interviews are opportunities to demonstrate your expertise, and this guide is here to help you shine. Explore the essential Machine Learning and Predictive Maintenance interview questions that employers frequently ask, paired with strategies for crafting responses that set you apart from the competition.

Questions Asked in Machine Learning and Predictive Maintenance Interviews

Q 1. Explain the difference between supervised, unsupervised, and reinforcement learning in the context of predictive maintenance.

Predictive maintenance leverages machine learning to anticipate equipment failures. The type of machine learning employed depends on the data available and the problem’s nature. Let’s explore the three main types:

- Supervised Learning: This is like having a teacher. We have labeled data: historical equipment sensor readings paired with known outcomes (failure or no failure). The algorithm learns to map sensor readings to failure probabilities. For example, we might use past data showing vibration levels, temperature readings, and run times, all linked to whether a specific machine failed within a certain timeframe. The model learns from this labeled data to predict future failures based on new sensor readings. Common supervised learning algorithms for this include logistic regression and support vector machines.

- Unsupervised Learning: This is like exploring a new city without a map. We have sensor data, but no labels indicating failures. The algorithm aims to find patterns and structures in the data. For instance, we might use clustering techniques (like k-means) to group similar equipment operating profiles. Identifying unusual clusters might indicate potential malfunctioning equipment requiring further investigation. It’s valuable for anomaly detection where we aren’t sure what ‘failure’ looks like in the data initially.

- Reinforcement Learning: This is more like training a dog with rewards and punishments. An agent (our algorithm) interacts with an environment (the equipment) and receives feedback (rewards for correct actions and penalties for incorrect actions). It learns optimal maintenance strategies through trial and error, aiming to maximize a reward function (e.g., minimizing downtime, maximizing equipment lifespan). While less common currently in predictive maintenance than supervised learning, it holds great promise for optimizing maintenance schedules dynamically.

Q 2. What are some common machine learning algorithms used for predictive maintenance?

Many machine learning algorithms are suitable for predictive maintenance. The best choice depends on the specific data and problem. Here are some commonly used ones:

- Regression Models (Linear Regression, Support Vector Regression): Useful for predicting the remaining useful life (RUL) of equipment.

- Classification Models (Logistic Regression, Support Vector Machines, Random Forests, Gradient Boosting Machines, Neural Networks): Predict the probability of equipment failure within a specific timeframe. These are particularly popular because failure is often a binary outcome (failure/no failure).

- Survival Analysis Models (Weibull, Cox Proportional Hazards): Ideal for analyzing time-to-event data, directly modeling the time until equipment failure.

- Anomaly Detection Algorithms (One-class SVM, Isolation Forest): Detect unusual patterns in sensor data that might indicate impending failures, particularly valuable in unsupervised learning settings.

The choice often involves experimentation and comparing performance metrics on different algorithms.

Q 3. How do you handle imbalanced datasets in predictive maintenance applications?

Imbalanced datasets, where one class (e.g., failure) has significantly fewer instances than the other (no failure), are common in predictive maintenance. This can lead to biased models that perform poorly on the minority class (failure). Here are some strategies to handle this:

- Resampling Techniques:

  - Oversampling: Duplicate or synthesize instances of the minority class (e.g., SMOTE – Synthetic Minority Over-sampling Technique).

  - Undersampling: Remove instances from the majority class.

- Cost-Sensitive Learning: Assign higher misclassification costs to the minority class, penalizing the model more for misclassifying failures.

- Ensemble Methods: Combining multiple models trained on different subsets of the data can improve performance on imbalanced datasets.

- Anomaly Detection: If the failure cases are truly rare, focusing on anomaly detection techniques might be more appropriate.

The best approach often involves experimentation and evaluating performance metrics like precision, recall, and F1-score on both classes.
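As a rough illustration of cost-sensitive learning, the sketch below derives per-class weights from class frequencies using the common inverse-frequency heuristic, n_samples / (n_classes * class_count). The label list and the function name `balanced_class_weights` are hypothetical; a real project would pass such weights to whatever training routine it uses.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * cnt) for cls, cnt in counts.items()}


# Hypothetical labels: 1 = failure, 0 = no failure (5% failure rate).
labels = [1] * 5 + [0] * 95
weights = balanced_class_weights(labels)
print(weights)  # the rare failure class receives the larger weight
```

With these weights, both classes contribute the same total weight to the loss, so misclassifying one failure costs as much as misclassifying many healthy samples.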

Q 4. Describe the process of feature engineering for predictive maintenance.

Feature engineering is crucial for building effective predictive maintenance models. It involves transforming raw sensor data into informative features that improve model performance. This is often more art than science and requires domain expertise.

- Domain Knowledge: Understanding the equipment and its failure mechanisms helps in selecting relevant features. For example, high vibration might indicate bearing wear in a pump.

- Time Series Features: Since sensor data is often time series, features like rolling averages, rolling standard deviations, and rates of change capture trends and patterns over time. We might calculate the average temperature over the last hour or the rate of change in vibration frequency.

- Statistical Features: Calculate summary statistics like percentiles, skewness, and kurtosis of sensor readings.

- Frequency Domain Features: Using Fast Fourier Transform (FFT), we can extract frequency components from vibration data to identify specific fault frequencies associated with particular component failures.

- Feature Selection and Dimensionality Reduction: Techniques like Principal Component Analysis (PCA) can reduce the number of features while retaining important information, avoiding the curse of dimensionality.

Iterative feature engineering is key. Start with intuitive features, evaluate the model, and refine features based on performance.
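The time series features described above can be sketched with the standard library alone. This is a minimal example under assumed inputs: the `vibration` readings are made up, the samples are assumed to be evenly spaced, and `rolling_features` is a hypothetical helper name.

```python
from statistics import mean, pstdev


def rolling_features(readings, window):
    """Return one feature dict per position once a full window is available."""
    if window < 2:
        raise ValueError("window must contain at least two samples")
    features = []
    for end in range(window, len(readings) + 1):
        chunk = readings[end - window:end]
        features.append({
            "mean": mean(chunk),
            "std": pstdev(chunk),
            # Average change per sample across the window.
            "rate_of_change": (chunk[-1] - chunk[0]) / (window - 1),
        })
    return features


# Hypothetical vibration amplitudes that start drifting upward.
vibration = [1.0, 1.1, 0.9, 1.0, 1.4, 1.9, 2.5]
feats = rolling_features(vibration, window=3)
for row in feats:
    print(row)
```

The later windows show a rising mean and a positive rate of change, which is the kind of trend a model can pick up before a raw threshold is crossed.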

Q 5. What are the key performance indicators (KPIs) you would use to evaluate a predictive maintenance model?

Key performance indicators (KPIs) for evaluating a predictive maintenance model focus on balancing accuracy, cost-effectiveness, and operational impact:

- Precision and Recall: For classification models, precision is the share of predicted failures that turn out to be real failures, and recall is the share of actual failures that the model catches.

- F1-Score: The harmonic mean of precision and recall, providing a balanced measure of model performance.

- AUC (Area Under the ROC Curve): A measure of a model’s ability to distinguish between failures and no-failures across different thresholds.

- Mean Time Between Failures (MTBF): The average time between equipment failures, a common metric to assess the effectiveness of preventive measures.

- Mean Time To Repair (MTTR): The average time it takes to repair a piece of equipment after failure. Predictive maintenance aims to reduce MTTR by allowing for scheduled repairs.

- Downtime Cost Reduction: The ultimate goal – quantifying the cost savings achieved by avoiding unplanned downtime through accurate predictions.
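The two operational KPIs above can be computed from a simple maintenance log. The sketch below assumes the usual definitions (MTBF as uptime divided by the number of failures, MTTR as total repair time divided by the number of repairs); the figures and the function name `mtbf_and_mttr` are illustrative only.

```python
def mtbf_and_mttr(observation_hours, repair_hours):
    """Compute (MTBF, MTTR) in hours.

    repair_hours holds one entry per failure: the downtime it caused.
    """
    failures = len(repair_hours)
    if failures == 0:
        raise ValueError("at least one failure is needed")
    downtime = sum(repair_hours)
    uptime = observation_hours - downtime
    return uptime / failures, downtime / failures


# Hypothetical log: 1000 hours observed, three failures.
mtbf, mttr = mtbf_and_mttr(observation_hours=1000, repair_hours=[4, 6, 10])
print(f"MTBF={mtbf:.1f} h, MTTR={mttr:.1f} h")
```

Comparing these values before and after a model goes live gives a rough view of its operational effect, alongside the classification metrics.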

Q 6. How do you assess the accuracy and reliability of a predictive maintenance model?

Assessing the accuracy and reliability of a predictive maintenance model requires a rigorous approach.

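- Cross-Validation: Using techniques like k-fold cross-validation to evaluate the model’s performance on unseen data, ensuring robustness and generalizability. For time-ordered sensor data, use time-aware folds so that the model is never validated on data older than its training data.

- Test Set Evaluation: Hold out a portion of the data for final evaluation, ensuring the model hasn’t overfit to the training data. Metrics from this test set are the most trustworthy estimate of real-world performance.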

- Error Analysis: Examine the types of errors the model makes to identify areas for improvement, such as refining features or adjusting the model.

- Calibration: Check whether the model’s predicted probabilities are well-calibrated. A well-calibrated model will have a close match between predicted probabilities and observed frequencies.

- Monitoring: Continuously monitor the model’s performance in the real world and retrain it regularly with new data to maintain accuracy and adapt to changing equipment conditions.

Reliability means the model consistently provides accurate and trustworthy predictions over time and under changing conditions.
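The calibration check mentioned above can be sketched as a small table that bins predictions and compares the mean predicted probability with the observed failure rate in each bin. The probabilities and outcomes below are invented for illustration, and `calibration_table` is a hypothetical helper.

```python
def calibration_table(probs, outcomes, n_bins=5):
    """Return (mean predicted prob, observed failure rate, count) per non-empty bin."""
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, outcomes):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bin
        bins[idx].append((p, y))
    table = []
    for members in bins:
        if not members:
            continue
        mean_pred = sum(p for p, _ in members) / len(members)
        observed = sum(y for _, y in members) / len(members)
        table.append((round(mean_pred, 3), round(observed, 3), len(members)))
    return table


# Hypothetical predictions: ten low-risk and ten high-risk machines.
probs = [0.1] * 10 + [0.9] * 10
outcomes = [1] + [0] * 9 + [1] * 9 + [0]
table = calibration_table(probs, outcomes)
print(table)  # [(0.1, 0.1, 10), (0.9, 0.9, 10)]
```

Here the predicted and observed values match in both bins, which is what a well-calibrated model looks like; large gaps between the first two columns would indicate over- or under-confidence.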

Q 7. Explain the concept of model explainability and its importance in predictive maintenance.

Model explainability, also known as interpretability, refers to the ability to understand how a machine learning model arrives at its predictions. This is crucial in predictive maintenance because high stakes are involved.

- Importance of Explainability: Understanding why a model predicts a failure is essential for building trust, identifying potential biases, debugging the model, and gaining insights into the equipment’s failure mechanisms. Imagine predicting a costly shutdown; decision-makers need to understand the reasoning behind the prediction.

- Techniques for Explainability:

  - Feature Importance: Identify which features contribute most significantly to the model’s predictions.

  - SHAP (SHapley Additive exPlanations): A game-theoretic approach to explain individual predictions.

  - LIME (Local Interpretable Model-agnostic Explanations): Explains individual predictions by approximating the model locally with a simpler, interpretable model.

  - Rule Extraction: Extract easily understandable rules from the model (if applicable, like with decision trees).

Choosing a model that balances predictive performance and explainability is often a trade-off. Simpler models (like decision trees) are more easily interpretable, but may sacrifice accuracy compared to complex models (like deep neural networks).

Q 8. Discuss different types of sensor data used in predictive maintenance.

Predictive maintenance relies heavily on sensor data to monitor the health of equipment. The types of sensors used depend greatly on the specific machinery and the parameters you want to track. Here are some common examples:

- Vibration Sensors: These measure vibrations in equipment, indicating imbalances, wear, or impending failures. Think of a car engine – excessive vibration points to a problem. Accelerometers and proximity sensors are frequently used.

- Temperature Sensors: These monitor the temperature of components. Overheating is a common precursor to failure in many systems, such as motors or bearings. Thermocouples and resistance temperature detectors (RTDs) are common choices.

- Pressure Sensors: Used to monitor pressure levels within systems, crucial for detecting leaks, blockages, or other pressure-related issues in hydraulic or pneumatic systems. Examples include strain gauge pressure sensors and piezoelectric sensors.

- Current Sensors: These measure the electrical current flowing through components, identifying anomalies that can indicate motor winding issues, or other electrical faults. Current transformers are often employed.

- Acoustic Sensors: These detect unusual sounds that can signal wear, friction, or impending failure. Microphones or ultrasonic sensors are often used for this purpose.

- Optical Sensors: These sensors can use techniques like image processing to monitor wear or damage on surfaces. For example, to monitor the condition of a conveyor belt or a component’s surface.

The choice of sensors is a critical step in designing an effective predictive maintenance system. The data they provide forms the basis for our predictive models.

Q 9. How do you handle missing data in sensor data for predictive maintenance?

Missing data is a common challenge in sensor data streams. It can be caused by sensor malfunctions, communication failures, or simply data acquisition issues. Ignoring missing data can significantly bias our models. Several strategies can be employed:

- Deletion: The simplest approach is to remove data points with missing values. This is only suitable if the amount of missing data is small and not systematically biased.

- Imputation: This involves filling in missing values with estimated values. Common methods include:

  - Mean/Median/Mode Imputation: Replacing missing values with the average, median, or mode of the observed values for that variable. This is simple but can distort the variance.

  - K-Nearest Neighbors (KNN) Imputation: Estimating missing values based on the values of similar data points. This is more sophisticated and considers the relationship between variables.

  - Multiple Imputation: Generating multiple plausible imputations for each missing value and then combining the results. This accounts for uncertainty in the imputation process.

- Model-Based Imputation: This involves using a predictive model (e.g., regression or machine learning model) to predict the missing values based on other available data. This can be a very powerful method, especially when we have a substantial amount of contextual data.

The best approach depends on the nature and extent of missing data, the characteristics of the data set, and the specific predictive model used. It often requires careful consideration and experimentation.
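To make the variance distortion of mean imputation concrete, here is a minimal sketch on made-up temperature readings, with `None` marking a missing value. The helper name `impute_mean` is an assumption for illustration.

```python
from statistics import mean, pvariance


def impute_mean(values):
    """Replace None entries with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in values]


# Hypothetical temperature readings with two dropped samples.
temps = [70.0, None, 74.0, 78.0, None, 82.0]
filled = impute_mean(temps)

observed_var = pvariance([v for v in temps if v is not None])
filled_var = pvariance(filled)
print(filled)
print(observed_var, filled_var)  # the variance shrinks after imputation
```

Every imputed point sits exactly on the mean, so the spread of the series is understated. That is one reason to prefer KNN or model-based imputation when a large share of the data is missing.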

Q 10. What are some common challenges in implementing predictive maintenance?

Implementing predictive maintenance presents several hurdles:

- Data Acquisition and Quality: Getting reliable, high-quality sensor data from diverse sources can be challenging. Sensor noise, inconsistent sampling rates, and missing data are common problems.

- Feature Engineering: Extracting meaningful features from raw sensor data requires domain expertise and significant effort. Transforming raw sensor data into features that a machine learning model can use effectively is crucial. This often involves time-series analysis and signal processing techniques.

- Model Selection and Training: Choosing the right model for a specific application, obtaining sufficient training data, and optimizing the model for accuracy and performance is an iterative process that needs careful design and experimentation.

- Computational Resources: Processing large volumes of sensor data can require significant computational resources, particularly when using complex models. This is also applicable for advanced algorithms for time series data.

- Integration with Existing Systems: Integrating a predictive maintenance system with existing operational processes and systems can be complex and require changes in workflows and organizational structures. It requires close collaboration with multiple stakeholders.

- Explainability and Trust: Understanding why a model makes a particular prediction is crucial for building trust and acceptance among stakeholders. This is especially important for making critical maintenance decisions.

Successfully addressing these challenges requires a multidisciplinary approach, combining engineering, data science, and domain expertise.

Q 11. Describe your experience with time series analysis in predictive maintenance.

Time series analysis is fundamental to predictive maintenance. Sensor data is inherently sequential, with measurements taken over time. I have extensive experience using various time series techniques, including:

- ARIMA/SARIMA Modeling: For forecasting future sensor readings from stationary series and, through differencing, non-stationary ones, so that forecasts drifting toward failure thresholds can flag potential failures.

- Exponential Smoothing: Methods like Holt-Winters for forecasting trends and seasonality in sensor data, which are crucial for detecting deviations from expected behavior.

- Recurrent Neural Networks (RNNs), specifically LSTMs and GRUs: These powerful deep learning models excel at capturing long-term dependencies in sequential data, making them ideal for analyzing complex sensor patterns. For example, in my previous role, we used LSTMs to predict bearing failures in wind turbines, achieving significantly improved accuracy compared to traditional methods.

- Change Point Detection: Methods to identify sudden shifts or changes in the sensor data that might indicate the onset of a fault. Bayesian Online Change Point Detection (BOCPD) is particularly useful.

My approach always involves careful data preprocessing, including cleaning, normalization, and feature engineering, before applying any time series model. Model selection and evaluation are also crucial steps, and I routinely use metrics like Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), and Mean Absolute Percentage Error (MAPE) to assess model performance. A combination of these methods is frequently needed to capture the nuances of the time-series data and to yield robust predictive results.
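The three error metrics named above are straightforward to compute by hand. The sketch below uses invented actual and forecast values; note that MAPE is undefined when an actual value is zero, so this version raises an error in that case.

```python
import math


def mae(actual, predicted):
    """Mean Absolute Error."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def rmse(actual, predicted):
    """Root Mean Squared Error; penalizes large errors more than MAE."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def mape(actual, predicted):
    """Mean Absolute Percentage Error, in percent."""
    if any(a == 0 for a in actual):
        raise ValueError("MAPE is undefined when an actual value is zero")
    return 100 * sum(abs((a - p) / a) for a, p in zip(actual, predicted)) / len(actual)


# Hypothetical sensor values and forecasts.
actual = [100, 200, 400]
predicted = [110, 180, 400]
print(mae(actual, predicted), rmse(actual, predicted), mape(actual, predicted))
```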

Q 12. How do you select appropriate features for a predictive maintenance model?

Feature selection is critical for building effective predictive maintenance models. Poorly chosen features can lead to overfitting, reduced accuracy, and increased computational cost. My approach involves a combination of techniques:

- Domain Expertise: Leveraging knowledge of the equipment and its operating conditions to identify potentially relevant features. This often involves discussions with subject matter experts.

- Statistical Methods: Using correlation analysis to identify features that are strongly related to the target variable (e.g., remaining useful life or failure probability), and the variance inflation factor (VIF) to detect multicollinearity among the features themselves.

- Feature Importance from Models: Training a model (e.g., Random Forest, Gradient Boosting) and examining the feature importance scores provided by the model. This can help identify which features contribute most significantly to predictive performance.

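- Dimensionality Reduction Techniques: Applying techniques like Principal Component Analysis (PCA) to reduce the number of features while preserving important information. This is especially useful when dealing with high-dimensional data. t-distributed Stochastic Neighbor Embedding (t-SNE) is better suited to visualizing high-dimensional data than to producing model inputs, since it does not learn a mapping that can be applied to new data.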

- Recursive Feature Elimination (RFE): Iteratively removing features based on their importance scores until an optimal subset is found.

The best feature selection strategy depends on the specific data and model used, and often involves an iterative process of experimenting with different combinations of features to optimize model performance. For instance, in one project involving pump failure prediction, using a combination of statistical analysis and feature importance from a gradient boosting model enabled us to reduce the number of features from over 50 to a highly effective subset of 10, significantly improving the model’s predictive power.
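As a simplified stand-in for the statistical step, the sketch below keeps features that correlate with the target and drops features that are nearly duplicates of one already kept. It uses pairwise Pearson correlation rather than VIF, and all feature names, values, and thresholds are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def select_features(features, target, min_target_corr=0.3, max_pair_corr=0.9):
    """Keep target-relevant features, skipping near-duplicates of kept ones."""
    ranked = sorted(
        features,
        key=lambda name: abs(pearson(features[name], target)),
        reverse=True,
    )
    kept = []
    for name in ranked:
        if abs(pearson(features[name], target)) < min_target_corr:
            continue  # too weakly related to the target
        if any(abs(pearson(features[name], features[k])) > max_pair_corr for k in kept):
            continue  # redundant with a feature already kept
        kept.append(name)
    return kept


# Hypothetical data: a degradation index and three candidate features.
target = [1, 2, 3, 4, 5, 6]
features = {
    "vibration_rms": [1.0, 2.1, 2.9, 4.2, 5.1, 5.8],
    "vibration_peak": [2.0, 4.2, 5.8, 8.4, 10.2, 11.6],  # twice the RMS values
    "ambient_humidity": [40, 42, 38, 41, 39, 40],
}
kept = select_features(features, target)
print(kept)
```

Only one of the two vibration features survives because they carry the same information, and humidity is dropped for being weakly related to the target.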

Q 13. Explain the concept of anomaly detection and its application in predictive maintenance.

Anomaly detection is the process of identifying unusual patterns or outliers in data that deviate significantly from expected behavior. In predictive maintenance, this is crucial for detecting potential equipment failures before they occur. Anomalies can manifest as sudden spikes, drifts, or other unusual patterns in sensor data. Common anomaly detection techniques include:

- Statistical Process Control (SPC): Using control charts (e.g., Shewhart, CUSUM) to monitor sensor data and identify points that fall outside pre-defined control limits. This is particularly well-suited for monitoring simple, well-understood processes.

- One-Class SVM: Training a Support Vector Machine (SVM) model on data representing normal operating conditions to identify data points that fall outside the learned boundary.

- Isolation Forest: An unsupervised learning algorithm that isolates anomalies by randomly partitioning the data. Anomalies are typically isolated in fewer splits than normal data points.

- Autoencoders: Neural networks trained to reconstruct input data. Anomalies are detected as data points that cannot be well reconstructed.

For example, in a manufacturing setting, anomaly detection can alert operators to a machine running at an unusual temperature, potentially indicating an impending overheating problem. Early detection can prevent major failures and reduce downtime.
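The temperature example can be sketched as a Shewhart-style check: control limits are estimated from readings taken under normal operation, and new readings outside the mean plus or minus three standard deviations are flagged. The readings and helper names below are assumptions for illustration.

```python
from statistics import mean, stdev


def control_limits(baseline, k=3.0):
    """Return (lower, upper) limits at k sample standard deviations from the mean."""
    center = mean(baseline)
    spread = stdev(baseline)
    return center - k * spread, center + k * spread


def out_of_control(readings, limits):
    """Return the indices of readings that fall outside the control limits."""
    low, high = limits
    return [i for i, x in enumerate(readings) if x < low or x > high]


# Hypothetical temperatures recorded during normal operation.
baseline = [70.1, 69.8, 70.3, 70.0, 69.9, 70.2, 69.7, 70.0]
limits = control_limits(baseline)

new_readings = [70.1, 69.9, 72.5, 70.0]
alarms = out_of_control(new_readings, limits)
print(limits, alarms)  # the third reading (index 2) is flagged
```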

Q 14. What are the ethical considerations in using machine learning for predictive maintenance?

Ethical considerations are paramount when using machine learning for predictive maintenance:

- Bias and Fairness: Models trained on biased data can perpetuate and even amplify existing inequalities. For instance, a model trained primarily on data from well-maintained equipment may inaccurately predict failures in equipment subjected to harsher conditions, leading to unfair resource allocation.

- Privacy: Sensor data can contain sensitive information about equipment operation and potentially even user behavior. Data privacy and security must be prioritized through anonymization, encryption, and appropriate access control measures.

- Transparency and Explainability: It is crucial for stakeholders to understand how a model arrives at its predictions. Opaque models can erode trust and hinder effective decision-making. Techniques to improve model explainability, such as SHAP values or LIME, are important.

- Accountability: It’s essential to establish clear lines of accountability in case a model makes an incorrect prediction leading to undesirable consequences. Thorough model validation and testing are critical.

- Job Displacement: Predictive maintenance can automate tasks previously performed by human operators. Strategies for reskilling and upskilling the workforce are needed to mitigate potential job displacement.

Addressing these ethical concerns requires careful planning, transparent communication, and ongoing monitoring of model performance and its societal impact.

Q 15. How do you deploy and monitor a predictive maintenance model in a production environment?

Deploying and monitoring a predictive maintenance model in a production environment involves a multi-stage process. First, the trained model needs to be packaged into a deployable format, often a containerized application using Docker. This ensures consistency across different environments. Then, it’s deployed to a suitable infrastructure, such as a cloud platform (AWS, Azure, GCP) or on-premise servers. A robust monitoring system is crucial; this typically involves integrating the model with a monitoring platform that tracks key performance indicators (KPIs) like prediction accuracy, latency, and resource utilization. We also need to set up alerts for anomalies or deviations from expected performance. For instance, if the model’s accuracy drops below a defined threshold, an alert triggers investigation. Finally, regular retraining and updates of the model are necessary using fresh data to maintain accuracy and adapt to changing conditions. Think of it like regularly servicing a car – you wouldn’t expect peak performance without regular maintenance!

Imagine a scenario where we’re monitoring wind turbines. We deploy our model to AWS, using Lambda functions for real-time predictions and CloudWatch for monitoring metrics like prediction accuracy and latency. If the prediction latency suddenly increases, CloudWatch alerts us to a potential problem, allowing for swift investigation and resolution, preventing any downtime.
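The alerting idea can be sketched independently of any cloud service. The class below is a hypothetical, in-memory monitor that tracks accuracy over the most recent predictions once the true outcomes are known; a production setup would send this metric to its monitoring platform instead.

```python
from collections import deque


class AccuracyMonitor:
    """Track accuracy over the most recent predictions and flag drops."""

    def __init__(self, window=100, threshold=0.8):
        self.outcomes = deque(maxlen=window)  # old results fall off automatically
        self.threshold = threshold

    def record(self, predicted, actual):
        self.outcomes.append(predicted == actual)

    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self):
        # Only alert once the window is full, to avoid noisy early alarms.
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and self.accuracy() < self.threshold


monitor = AccuracyMonitor(window=5, threshold=0.8)
for predicted, actual in [(1, 1), (0, 0), (1, 0), (0, 1), (0, 0)]:
    monitor.record(predicted, actual)
print(monitor.accuracy(), monitor.should_alert())  # 0.6 True
```

In practice, failure labels often arrive days or weeks after the prediction, so the window size has to reflect how quickly ground truth becomes available.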

Q 16. Describe your experience with cloud platforms for deploying machine learning models (e.g., AWS, Azure, GCP).

I have extensive experience with AWS, Azure, and GCP for deploying machine learning models, particularly in the context of predictive maintenance. Each platform offers unique strengths. AWS, with its robust suite of services like SageMaker, provides excellent tools for model training, deployment, and management. Azure Machine Learning provides similar functionalities with strong integration into the broader Azure ecosystem. GCP’s Vertex AI offers competitive capabilities, often excelling in scalability and cost optimization. My experience involves using these platforms to deploy various model types, including time-series models (ARIMA, LSTM) and tree-based models (Random Forest, Gradient Boosting), depending on the specific application and dataset. The choice of platform often depends on existing infrastructure, cost considerations, and specific needs like integration with other systems. For example, in a project involving a large-scale deployment of models for a manufacturing plant, we chose AWS due to its mature infrastructure and existing partnerships with the client.

In one project, I used AWS SageMaker to deploy a real-time predictive maintenance model for a fleet of delivery trucks. We used SageMaker’s hosting capabilities to deploy the model as a real-time endpoint, allowing for seamless integration with the truck’s telematics data. SageMaker’s built-in monitoring tools were also instrumental in tracking model performance and identifying potential issues.

Q 17. How do you handle real-time data streaming in predictive maintenance?

Handling real-time data streaming in predictive maintenance requires a robust infrastructure capable of ingesting, processing, and analyzing data as it arrives. This often involves using technologies like Apache Kafka or Amazon Kinesis for data ingestion, followed by real-time processing frameworks such as Apache Spark Streaming or Flink. These frameworks allow for continuous model updates and predictions based on the latest data. The challenge lies in balancing the need for speed with accuracy and resource consumption. We might use techniques like windowing to group data into manageable chunks before processing, or employ approximate computation methods if speed is paramount. The processed data is then fed into the predictive model, which generates predictions in near real-time. This allows for immediate actions to be taken, such as scheduling maintenance before a potential failure.

Consider a scenario where we are monitoring sensor data from a power grid. Using Kafka to collect real-time data from various sources, we feed this data into a Spark Streaming application that processes the data and feeds it to the model, which then predicts the probability of a fault within the next hour. This allows operators to intervene proactively, reducing downtime and preventing outages.
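The windowing technique mentioned above can be illustrated without any streaming framework. This sketch groups an incoming iterator of readings into fixed-size, non-overlapping (tumbling) windows; the generator name and the sample values are hypothetical, and Kafka or Spark would supply the stream in a real deployment.

```python
def tumbling_windows(stream, size):
    """Yield consecutive, non-overlapping windows of `size` readings."""
    window = []
    for reading in stream:
        window.append(reading)
        if len(window) == size:
            yield window
            window = []
    # A trailing partial window is dropped here; a real pipeline
    # might flush it on a timer instead.


# Hypothetical stream of sensor readings arriving one at a time.
readings = iter([0.5, 0.6, 0.7, 1.9, 2.1, 2.0, 0.4])
windows = list(tumbling_windows(readings, size=3))
window_means = [sum(w) / len(w) for w in windows]
print(windows)
print(window_means)
```

Each window can then be turned into features and scored by the model, which keeps per-message work small while still reacting within one window length.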

Q 18. Explain the difference between RUL (Remaining Useful Life) prediction and failure prediction.

While both RUL (Remaining Useful Life) prediction and failure prediction aim to anticipate equipment issues, they differ significantly in their output. Failure prediction focuses on predicting whether a failure will occur within a specific timeframe (e.g., will this engine fail in the next 24 hours?). It’s a binary classification problem (failure or no failure). RUL prediction, on the other hand, estimates the remaining operational time until failure (e.g., this engine has approximately 100 hours of useful life remaining). It’s a regression problem predicting a continuous value. RUL prediction offers more granular insight, enabling better maintenance scheduling and resource allocation. Failure prediction is simpler to implement but may lack the precision of RUL prediction.

Imagine a manufacturing plant with multiple machines. Failure prediction would tell you if a machine is likely to fail soon (yes/no), whereas RUL prediction would give you an estimate of the number of hours remaining before failure, allowing you to schedule maintenance during a planned downtime, minimizing production disruption.
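The difference between the two tasks shows up in how training labels are built from the same run-to-failure history. In this minimal sketch the cycle counts, the 24-cycle horizon, and the function name `make_labels` are illustrative assumptions.

```python
def make_labels(cycles_observed, failure_cycle, horizon):
    """For each observed cycle, return (rul, fails_within_horizon)."""
    labels = []
    for t in cycles_observed:
        rul = failure_cycle - t  # regression target
        labels.append((rul, int(rul <= horizon)))  # classification target
    return labels


# Hypothetical machine that failed at cycle 100.
labels = make_labels(cycles_observed=[0, 50, 90, 100], failure_cycle=100, horizon=24)
print(labels)  # [(100, 0), (50, 0), (10, 1), (0, 1)]
```

The binary label is just a thresholded view of the RUL value, which is why failure prediction is simpler to set up but carries less information.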

Q 19. What are some common data preprocessing techniques used in predictive maintenance?

Data preprocessing is crucial in predictive maintenance because the quality of your data directly impacts the accuracy and reliability of your model. Common techniques include:

- Handling Missing Values: Imputation using mean, median, or more sophisticated methods like k-Nearest Neighbors.

- Outlier Detection and Treatment: Identifying and removing or transforming outliers using techniques like IQR or Z-score methods. Outliers can skew results and negatively impact model performance.

- Data Cleaning: Correcting inconsistencies, removing duplicates, and handling incorrect data formats.

- Feature Scaling: Standardizing or normalizing features to ensure that they have a similar range of values. This prevents features with larger values from dominating the model.

- Feature Engineering: Creating new features from existing ones to improve model accuracy. This might involve creating rolling averages, lag features (previous values), or other domain-specific features.

- Data Smoothing: Techniques like moving averages to reduce noise and highlight trends in time-series data.

For example, in a wind turbine dataset, missing wind speed values might be imputed using the average wind speed at a similar time of day. Outliers, representing sensor malfunctions, might be removed or replaced with imputed values. Feature engineering might involve calculating the average wind speed over the last hour to predict potential failures.
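Two of the steps above, IQR-based outlier detection and standardization, can be sketched with the standard library. The wind speed values are invented, with one reading standing in for a sensor malfunction, and the helper names are hypothetical.

```python
from statistics import mean, pstdev, quantiles


def iqr_outliers(values, k=1.5):
    """Return values lying more than k * IQR outside the quartiles."""
    q1, _, q3 = quantiles(values, n=4)
    spread = q3 - q1
    low, high = q1 - k * spread, q3 + k * spread
    return [v for v in values if v < low or v > high]


def standardize(values):
    """Scale values to zero mean and unit standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]


# Hypothetical wind speeds in m/s; 25.0 mimics a faulty reading.
wind_speed = [5.1, 5.4, 4.9, 5.0, 5.3, 5.2, 25.0, 5.1]
outliers = iqr_outliers(wind_speed)
cleaned = [v for v in wind_speed if v not in outliers]
scaled = standardize(cleaned)
print(outliers)  # [25.0]
```

Removing the outlier before scaling matters: a single extreme value would otherwise inflate the standard deviation and compress all normal readings toward zero.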

Q 20. How do you validate a predictive maintenance model?

Validating a predictive maintenance model involves rigorously assessing its performance and generalizability. This process typically involves splitting the dataset into training, validation, and test sets. The training set is used to train the model, the validation set is used to tune hyperparameters and prevent overfitting, and the test set provides an unbiased evaluation of the model’s performance on unseen data. Metrics like precision, recall, F1-score, AUC (Area Under the ROC Curve), and RMSE (Root Mean Squared Error) are used to evaluate the model’s accuracy. Cross-validation techniques, such as k-fold cross-validation, further enhance the robustness of the evaluation. It’s also crucial to consider domain-specific knowledge to assess whether the model’s predictions align with real-world expectations. We want to be sure that the model is not only accurate but also generalizes well to new data and is interpretable, allowing us to trust its predictions.

For example, after training a model to predict bearing failures, we would evaluate its performance on a held-out test set using metrics such as precision and recall. A low recall would indicate that the model is missing many actual failures, while a low precision might mean that it’s incorrectly predicting failures when there are none. We might also perform domain expert review to ensure that the model’s insights make sense from a mechanical engineering standpoint.
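For time-ordered sensor data, one common way to build the three sets is a chronological split, so that validation and test data are always newer than the training data. The sketch below assumes each record carries a `timestamp` field; the field name, the split fractions, and the function name are illustrative.

```python
def chronological_split(records, train_frac=0.6, val_frac=0.2):
    """Split records into (train, validation, test) in time order."""
    ordered = sorted(records, key=lambda r: r["timestamp"])
    n = len(ordered)
    train_end = round(n * train_frac)
    val_end = train_end + round(n * val_frac)
    return ordered[:train_end], ordered[train_end:val_end], ordered[val_end:]


# Hypothetical records arriving out of order.
records = [{"timestamp": t} for t in (5, 2, 9, 0, 7, 1, 8, 3, 6, 4)]
train, val, test = chronological_split(records)
print(len(train), len(val), len(test))  # 6 2 2
```

A random split would let the model see readings recorded after the ones it is tested on, which tends to give optimistic scores for degradation data.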

Q 21. What is the difference between precision and recall in the context of predictive maintenance?

In predictive maintenance, precision and recall are crucial metrics that assess the performance of a model in classifying failures. Precision measures the proportion of correctly predicted failures out of all predicted failures. A high precision means that when the model predicts a failure, it’s very likely to be correct. Recall measures the proportion of correctly predicted failures out of all actual failures. A high recall means the model is good at identifying most of the actual failures. There’s often a trade-off between precision and recall. A model with high precision might miss some failures (low recall), while a model with high recall might generate many false positives (low precision). The choice of which metric to prioritize depends on the specific application and its associated costs. For example, in situations where false positives are expensive (e.g., unnecessary maintenance), high precision is preferred. Conversely, if missing actual failures is more costly (e.g., equipment failure leading to a safety hazard), then high recall is crucial.

Consider two contrasting scenarios. When the action triggered by a prediction is very expensive, such as pulling an aircraft engine for an unscheduled overhaul, precision matters because every false positive carries a large cost, although safety-critical failure modes still demand high recall. Conversely, in a system detecting potential cybersecurity breaches, high recall is prioritized because missing an actual breach has far more serious implications than investigating some alerts that turn out to be benign.
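
The trade-off is easiest to see by moving the decision threshold on a model’s failure scores. The sketch below uses made-up labels and scores (purely illustrative, not from a real system) and a hypothetical helper `precision_recall`.

```python
def precision_recall(y_true, scores, threshold):
    """Precision and recall when scores >= threshold are predicted as failures."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for y, p in zip(y_true, preds) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(y_true, preds) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(y_true, preds) if y == 1 and p == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall

# Illustrative data: 1 = failure occurred, score = predicted failure probability.
y_true = [1, 1, 1, 0, 1, 0, 0, 0]
scores = [0.95, 0.85, 0.70, 0.60, 0.40, 0.30, 0.20, 0.10]

print(precision_recall(y_true, scores, threshold=0.80))  # strict threshold
print(precision_recall(y_true, scores, threshold=0.35))  # lenient threshold
```

With the strict threshold, every alarm is correct but half of the failures are missed; with the lenient one, all failures are caught at the price of a false alarm.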

Q 22. Explain the concept of a confusion matrix and how to interpret it.

A confusion matrix is a visualization tool used to evaluate the performance of a classification model. It’s a table that summarizes the predictions made by the model against the actual ground truth values. Imagine you’re building a model to detect faulty equipment. The matrix would show you how many times your model correctly predicted faulty (True Positives) and non-faulty (True Negatives) equipment, and how many times it made incorrect predictions (False Positives and False Negatives).

Interpreting the Matrix:

- True Positive (TP): Correctly predicted positive cases (e.g., correctly identified faulty equipment).

- True Negative (TN): Correctly predicted negative cases (e.g., correctly identified non-faulty equipment).

- False Positive (FP): Incorrectly predicted positive cases (e.g., predicted faulty equipment when it was actually working fine – a false alarm).

- False Negative (FN): Incorrectly predicted negative cases (e.g., predicted non-faulty equipment when it was actually faulty – a missed detection).

From this, you can calculate crucial metrics such as accuracy, precision, recall, and F1-score, which help you understand the strengths and weaknesses of your model.

Example:

Let’s say we have a confusion matrix for a predictive maintenance model:

| | Predicted Positive | Predicted Negative |
|---|---|---|
| Actual Positive | 20 | 5 |
| Actual Negative | 2 | 73 |

Here, TP=20, TN=73, FP=2, FN=5. This shows the model performed relatively well, but 5 faulty pieces of equipment were missed (FN), which is crucial information in predictive maintenance.
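
As a quick worked check, the standard metrics can be computed directly from these four counts. The variable names are chosen for this example only.

```python
# Counts taken from the example confusion matrix above.
tp, fn = 20, 5
fp, tn = 2, 73

accuracy = (tp + tn) / (tp + tn + fp + fn)          # share of all predictions that are right
precision = tp / (tp + fp)                          # share of predicted failures that are real
recall = tp / (tp + fn)                             # share of real failures that are caught
f1 = 2 * precision * recall / (precision + recall)  # harmonic mean of precision and recall

print(f"accuracy={accuracy:.3f} precision={precision:.3f} "
      f"recall={recall:.3f} f1={f1:.3f}")
```

Accuracy comes out at 0.93, but recall is only 0.80: one in five faulty units goes undetected, which the headline accuracy figure hides.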

Q 23. Describe your experience with different model evaluation metrics (e.g., AUC, F1-score).

My experience encompasses a wide range of model evaluation metrics, each providing a unique perspective on model performance. I frequently use:

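- AUC (Area Under the ROC Curve): This metric summarizes how well the model ranks failures above non-failures across all decision thresholds, so it does not depend on a single cut-off. A higher AUC indicates better discrimination between positive and negative classes. Because failures are much rarer than normal operation in predictive maintenance, ROC AUC can look optimistic on heavily imbalanced data, so it is worth checking the area under the precision-recall curve alongside it. I’ve used it extensively to compare the performance of different models under varying data distributions.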

- F1-score: The F1-score is the harmonic mean of precision and recall, offering a balanced measure of model performance. This is vital in predictive maintenance where both minimizing false positives (unnecessary maintenance) and false negatives (missed failures) are critical. A low F1-score highlights the need for model improvements or data augmentation.

- Precision: Precision answers the question: ‘Of all the positive predictions, how many were actually correct?’ It’s particularly relevant when the cost of false positives is high (e.g., unnecessary downtime).

- Recall (Sensitivity): Recall answers the question: ‘Of all the actual positive cases, how many were correctly predicted?’ It’s crucial when the cost of false negatives is high (e.g., catastrophic equipment failure).

- Accuracy: A simple metric reflecting the overall correctness of predictions, but it can be misleading with imbalanced datasets. I use it in conjunction with other metrics to get a holistic view.

In my projects, I’ve often found that focusing solely on accuracy can be deceptive. By using a combination of AUC, F1-score, precision, and recall, I gain a comprehensive understanding of my model’s performance and its limitations in a predictive maintenance context.
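
Two of these points can be illustrated with a small, self-contained sketch on toy data. The function `roc_auc` is a hypothetical helper that computes AUC as the fraction of (failure, non-failure) pairs ranked correctly; it is quadratic in the number of samples and meant only for illustration.

```python
def roc_auc(y_true, scores):
    """AUC as the probability that a random positive outscores a random negative."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative sample")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos) * len(neg))

print(roc_auc([1, 0, 1, 0, 0], [0.9, 0.8, 0.4, 0.3, 0.1]))

# Accuracy can look strong on imbalanced data even for a useless model:
# here a "model" that always predicts "healthy" on 95 healthy / 5 failed units.
labels = [0] * 95 + [1] * 5
always_healthy = [0] * 100
accuracy = sum(y == p for y, p in zip(labels, always_healthy)) / len(labels)
recall = sum(y == 1 and p == 1 for y, p in zip(labels, always_healthy)) / sum(labels)
print(accuracy, recall)
```

The always-healthy predictor reaches 95% accuracy while catching none of the failures, which is why accuracy alone is not enough.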

Q 24. How do you handle outliers in your data?

Outliers are a common challenge in predictive maintenance data. Ignoring them can significantly skew model results. My approach is multi-faceted:

- Visual Inspection: I always start with visualizing the data using box plots, scatter plots, and histograms to identify potential outliers. This provides an intuitive understanding of data distribution and helps spot anomalies.

- Statistical Methods: I use techniques like the Interquartile Range (IQR) method, which flags points lying below Q1 − 1.5 × IQR or above Q3 + 1.5 × IQR, where Q1 and Q3 are the first and third quartiles. The 1.5 multiplier is a convention and can be adjusted to make the rule stricter or more lenient.

- Domain Knowledge: Crucially, I leverage domain expertise to contextualize outliers. Sometimes, an apparent outlier may actually represent a genuine, albeit rare, event (e.g., a severe operational condition). Understanding the underlying physics or process behind the data is vital.

- Robust Algorithms: Some algorithms (like Random Forests) are naturally more robust to outliers than others (like linear regression). Choosing an appropriate algorithm can mitigate the impact of outliers without explicit removal.

- Transformation Techniques: Methods like logarithmic transformation or Box-Cox transformation can make skewed data more symmetric and reduce the influence of extreme values. Both require strictly positive inputs, so sensor values may need to be shifted first.

- Winsorizing or Trimming: In cases where outliers are likely due to measurement errors, I might Winsorize (cap values at a certain percentile) or trim (remove a percentage of extreme values) the data.

The best strategy depends on the nature of the data, the type of model used, and the potential impact of the outliers. The goal is not to blindly remove everything deemed an outlier, but rather to handle them intelligently while preserving valuable information.
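
A minimal sketch of the IQR rule, followed by capping flagged values at the fences, is shown below. The readings are invented for illustration, `iqr_fences` is a hypothetical helper, and capping at the IQR fences is used here as a simple stand-in for percentile-based Winsorizing.

```python
import statistics

def iqr_fences(values, k=1.5):
    """Return (lower, upper) fences: Q1 - k*IQR and Q3 + k*IQR."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr

# Illustrative vibration readings with one suspicious spike.
vibration = [10, 11, 12, 11, 10, 12, 11, 95]

low, high = iqr_fences(vibration)
outliers = [v for v in vibration if v < low or v > high]
capped = [min(max(v, low), high) for v in vibration]  # clamp to the fences

print("fences:", low, high)
print("flagged:", outliers)
print("capped:", capped)
```

Whether the flagged reading should be capped, removed, or kept is still a judgment call that depends on the domain context described above.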

Q 25. Discuss your experience with different types of regression models.

My experience with regression models is extensive, covering various types suitable for predictive maintenance:

- Linear Regression: I use this when a linear relationship exists between the predictor variables and the target variable (e.g., predicting remaining useful life based on sensor readings). It’s simple to interpret, but its assumptions (linearity, independent errors, constant error variance, and approximately normal residuals) must be carefully checked.

- Polynomial Regression: When the relationship is non-linear, I employ polynomial regression to capture more complex patterns. However, higher-order polynomials can lead to overfitting.

- Ridge and Lasso Regression: These regularized regression methods address multicollinearity (highly correlated predictors) and overfitting, common issues in high-dimensional sensor data. They’re effective in reducing model complexity and improving generalization.

- Support Vector Regression (SVR): SVR is particularly useful for high-dimensional data and non-linear relationships. Its ability to handle outliers reasonably well makes it suitable for real-world predictive maintenance applications.

- Decision Tree Regression and Random Forest Regression: These tree-based methods are non-parametric and handle non-linear relationships well, often requiring less data pre-processing. A single decision tree is easy to interpret, while a random forest trades some of that transparency for accuracy but still provides feature importances, which help us understand the factors contributing to equipment degradation.

The choice of the regression model is determined by the specific problem, the characteristics of the data, and the desired level of interpretability. In predictive maintenance, I often favour models that balance predictive accuracy with interpretability, allowing for a clear understanding of the model’s predictions and their implications.
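
To make the effect of regularization concrete, here is a minimal one-feature sketch comparing ordinary least squares with ridge regression. The data (a wear indicator against remaining useful life) is invented, `fit_line` is a hypothetical helper, and the intercept is left unpenalized, which is the usual convention.

```python
def fit_line(x, y, ridge_lambda=0.0):
    """Fit y = slope * x + intercept; ridge_lambda=0 gives ordinary least squares."""
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    slope = sxy / (sxx + ridge_lambda)   # the penalty shrinks the slope toward zero
    intercept = y_mean - slope * x_mean  # intercept is not penalized
    return slope, intercept

# Illustrative data: wear indicator vs. remaining useful life in hours.
wear = [0.0, 1.0, 2.0, 3.0, 4.0]
rul = [100.0, 80.0, 60.0, 40.0, 20.0]

print("OLS:  ", fit_line(wear, rul))
print("Ridge:", fit_line(wear, rul, ridge_lambda=10.0))
```

The ridge slope is smaller in magnitude than the OLS slope. On clean data like this the shrinkage only adds bias; its benefit shows up with noisy, highly correlated sensor features.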

Q 26. How do you choose the right machine learning algorithm for a given predictive maintenance problem?

Selecting the right algorithm for predictive maintenance requires careful consideration of several factors:

- Data Characteristics: Is the data time-series, tabular, or image-based? Is it high-dimensional? Is it labeled or unlabeled? The algorithm must be compatible with the data type and structure.

- Problem Type: Is the goal to predict remaining useful life (regression), detect anomalies (anomaly detection), or classify equipment states (classification)? Different algorithms excel at different task types.

- Interpretability Needs: Is it crucial to understand *why* the model makes specific predictions? Some algorithms (e.g., linear regression, decision trees) are more interpretable than others (e.g., deep learning models). In predictive maintenance, understanding the model’s logic can be essential for building trust and actionable insights.

- Computational Resources: Some algorithms are computationally expensive and require significant resources. This constraint must be balanced with model accuracy and performance.

- Data Size: The amount of available data influences the algorithm choice. Some algorithms perform better with large datasets (deep learning), while others are suitable for smaller datasets (support vector machines).

Often, I start with simpler models (e.g., linear regression or decision trees) as a baseline and then explore more complex models (e.g., random forests, neural networks) if necessary. A structured approach involving experimentation and model comparison using appropriate evaluation metrics is key to making an informed decision.

Q 27. Explain the importance of data quality in predictive maintenance.

Data quality is paramount in predictive maintenance. Poor data leads to inaccurate models and ultimately, unreliable predictions, resulting in costly consequences, such as unexpected downtime or unnecessary maintenance. Here’s why it’s crucial:

- Accuracy of Predictions: The accuracy of the model’s predictions is directly tied to the quality of the input data. Inaccurate or incomplete data will lead to faulty predictions, hindering effective decision-making.

- Model Reliability: High-quality data strengthens the model’s reliability and robustness. A model trained on noisy or inconsistent data is less likely to generalize well to new, unseen data.

- Cost Savings: High-quality data helps optimize maintenance schedules, reducing unnecessary downtime and associated costs. By accurately predicting potential failures, we can plan maintenance proactively, preventing costly breakdowns.

- Safety: In critical infrastructure, accurate predictive maintenance is crucial for ensuring safety and preventing catastrophic failures. Poor data can have severe safety implications.

- Effective Decision-Making: High-quality data provides the foundation for informed decision-making related to maintenance strategies, resource allocation, and risk management. This allows for optimized maintenance schedules and reduces operational costs.

Ensuring data quality involves rigorous data cleaning, preprocessing, and validation procedures, including handling missing data, outlier detection, and feature engineering.
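
As a small example of one such check, the sketch below measures how much of a sensor channel is missing and fills short gaps with the last known value. The readings and helper names are assumptions made for illustration; forward-filling is only one of several imputation options and is not suitable for long gaps.

```python
def missing_fraction(readings):
    """Share of readings that are missing (represented as None)."""
    return sum(r is None for r in readings) / len(readings)

def forward_fill(readings):
    """Replace each missing reading with the most recent valid one."""
    filled, last = [], None
    for r in readings:
        if r is not None:
            last = r
        filled.append(last)  # stays None if no valid reading has been seen yet
    return filled

# Illustrative temperature channel with dropped samples.
temperature = [71.2, None, 71.9, None, None, 73.0]

print("missing:", missing_fraction(temperature))
print("filled: ", forward_fill(temperature))
```

Tracking the missing fraction per sensor over time also helps spot failing sensors or logging problems before they affect the model.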

Q 28. Describe a time you had to debug a predictive maintenance model. What was the issue, and how did you resolve it?

In one project, we developed a model to predict bearing failures in wind turbines. Initially, the model performed poorly. After careful investigation, we discovered that a significant number of sensor readings had been incorrectly logged due to a temporary communication glitch during a severe storm. This introduced spurious high-frequency oscillations in the data, which the model misinterpreted as precursors to bearing failure.

Issue: The model was overfitting to this erroneous data, producing false positives (predicting failures that didn’t occur).

Resolution: We implemented a multi-step debugging strategy:

- Data Inspection: We carefully examined the sensor data for anomalies, visualizing it using time series plots and statistical summaries. This highlighted the unusual readings around the time of the storm.

- Data Cleaning: We identified and removed the erroneous readings associated with the communication glitch. This involved comparing sensor readings with other available data sources to validate their accuracy.

- Model Retraining: After cleaning the data, we retrained the model on the corrected dataset. We carefully monitored the model’s performance using appropriate evaluation metrics (AUC, F1-score, precision, and recall) to ensure improvement.

- Robustness Testing: We tested the model’s robustness by introducing synthetic noisy data and evaluating its performance. This ensured the model’s resilience to similar future events.

By addressing the data quality issue, we significantly improved the model’s accuracy and reliability, reducing both false positives and false negatives. The improved model enabled more effective predictive maintenance and minimized costly downtime.
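
The data inspection step can be partly automated. The sketch below flags readings that deviate sharply from a rolling median. It is an illustrative stand-in, not the method used in the project described above (which compared readings against other data sources); the signal, the window size, and the `max_dev` threshold are all assumptions.

```python
import statistics

def flag_spikes(signal, window=5, max_dev=2.0):
    """Return indices whose value differs from the local median by more than max_dev."""
    half = window // 2
    flagged = []
    for i, value in enumerate(signal):
        start, stop = max(0, i - half), min(len(signal), i + half + 1)
        local_median = statistics.median(signal[start:stop])
        if abs(value - local_median) > max_dev:
            flagged.append(i)
    return flagged

# Illustrative vibration amplitude with one spurious reading.
signal = [1.0, 1.1, 0.9, 1.0, 9.5, 1.1, 1.0, 0.9, 1.0]

spikes = flag_spikes(signal)
cleaned = [v for i, v in enumerate(signal) if i not in spikes]
print("flagged indices:", spikes)
print("cleaned signal: ", cleaned)
```

Flagged readings should still be reviewed before removal, since a genuine fault can also appear as a sudden spike.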

Key Topics to Learn for Machine Learning and Predictive Maintenance Interviews

- Machine Learning Fundamentals: Regression (linear, polynomial), Classification (logistic regression, SVM, decision trees, random forests, naive Bayes), Clustering (k-means, hierarchical), Model Evaluation (precision, recall, F1-score, AUC-ROC).

- Predictive Maintenance Techniques: Time series analysis, anomaly detection (using statistical methods and ML algorithms), sensor data analysis, Remaining Useful Life (RUL) prediction, condition-based maintenance.

- Practical Applications: Developing predictive models for equipment failure prediction, optimizing maintenance schedules, reducing downtime and operational costs, implementing real-time monitoring systems.

- Data Preprocessing & Feature Engineering: Handling missing data, outlier detection, feature scaling, dimensionality reduction, feature selection for improved model performance.

- Model Deployment & Monitoring: Deploying ML models in production environments (cloud platforms, edge devices), monitoring model performance, retraining models with new data, addressing concept drift.

- Algorithmic Considerations: Understanding the strengths and weaknesses of different ML algorithms, choosing the right algorithm for a given problem, optimizing model parameters, and interpreting model outputs.

- Domain Knowledge: Demonstrate understanding of the specific industry or application you’re targeting (e.g., manufacturing, aerospace, energy). Show familiarity with common equipment and failure modes.

Next Steps

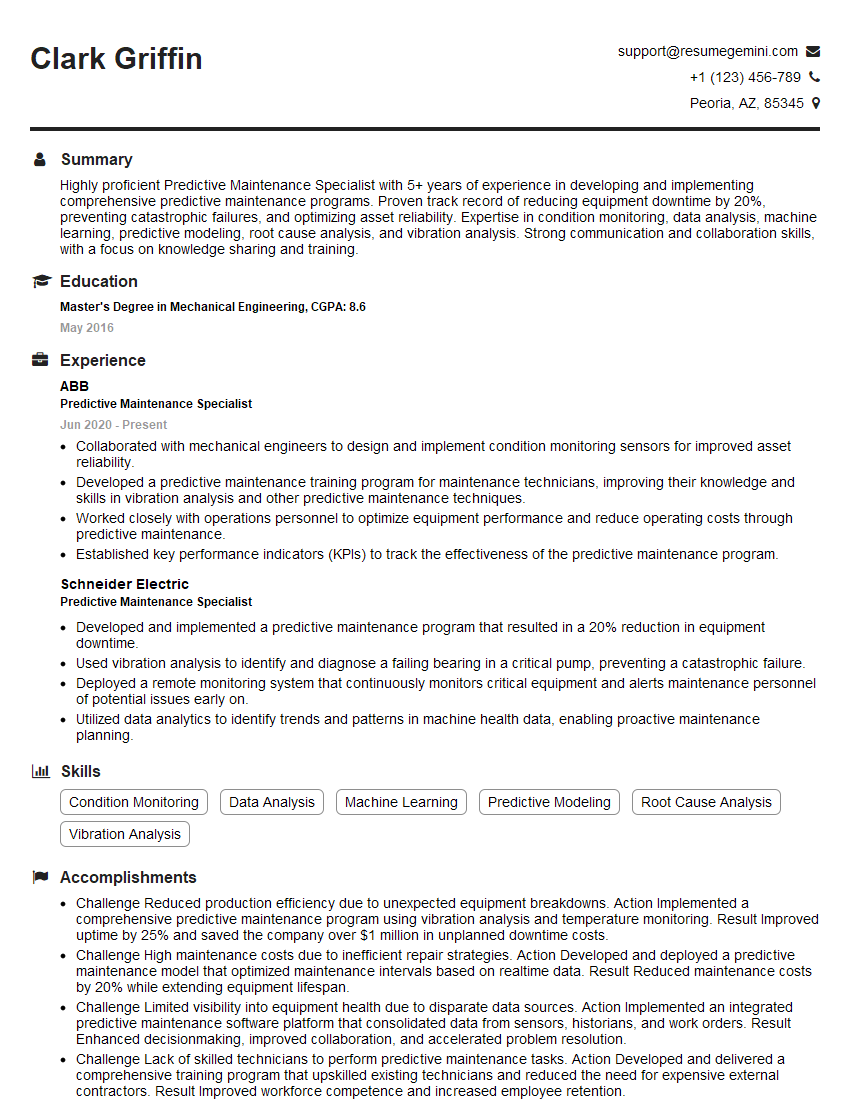

Mastering Machine Learning and Predictive Maintenance opens doors to exciting and high-demand roles in various industries. These skills are highly valued, leading to increased earning potential and career advancement. To maximize your job prospects, it’s crucial to present your qualifications effectively. Creating an ATS-friendly resume is key to getting your application noticed by recruiters and hiring managers.

We recommend using ResumeGemini to build a professional and impactful resume tailored to the specific requirements of Machine Learning and Predictive Maintenance roles. ResumeGemini provides tools and resources to help you craft a compelling narrative highlighting your skills and experience. Examples of resumes specifically tailored for these roles are available to guide you. Invest time in crafting a strong resume; it’s your first impression and a crucial step in landing your dream job.
