Cracking a skill-specific interview, like one for Mathematical and Analytical Abilities, requires understanding the nuances of the role. In this blog, we present the questions you’re most likely to encounter, along with insights into how to answer them effectively. Let’s ensure you’re ready to make a strong impression.

Questions Asked in Mathematical and Analytical Abilities Interview

Q 1. Explain the difference between correlation and causation.

Correlation and causation are two distinct concepts in statistics. Correlation refers to a statistical relationship between two or more variables, indicating that they tend to change together. However, correlation does not imply causation. Just because two variables are correlated doesn’t mean one causes the other. Causation implies a direct cause-and-effect relationship; a change in one variable directly leads to a change in another.

Example: Ice cream sales and crime rates are often positively correlated – both tend to increase during summer. However, this doesn’t mean that increased ice cream sales cause increased crime, or vice versa. The underlying factor, hot weather, influences both.

To establish causation, we need to demonstrate a mechanism connecting the variables and often rely on controlled experiments, careful observational studies, and temporal precedence (cause preceding effect).
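
The ice cream example can be illustrated with a small simulation. This is a minimal sketch using made-up numbers: the variable names, coefficients, and noise levels are all assumptions chosen for illustration. Temperature drives both series, so they correlate strongly, but the correlation largely disappears once the effect of temperature is removed from each.

```python
import random
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def residuals(ys, xs):
    """Residuals of ys after a least-squares straight-line fit on xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return [(y - my) - slope * (x - mx) for x, y in zip(xs, ys)]


rng = random.Random(0)  # fixed seed so the run is repeatable

# Hypothetical data: temperature is the hidden common cause.
temperature = [rng.uniform(0, 35) for _ in range(2000)]
ice_cream = [2.0 * t + rng.gauss(0, 5) for t in temperature]
crime = [1.0 * t + rng.gauss(0, 5) for t in temperature]

raw_r = pearson(ice_cream, crime)
adjusted_r = pearson(residuals(ice_cream, temperature),
                     residuals(crime, temperature))

print(f"raw correlation: {raw_r:.2f}")
print(f"after removing temperature: {adjusted_r:.2f}")
```

Adjusting for a known confounder like this only works when the confounder has been measured; it is not a substitute for a controlled experiment.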

Q 2. How would you approach a problem involving missing data in a dataset?

Handling missing data is crucial for data analysis. Ignoring it can lead to biased results. My approach involves a multi-step process:

- Understanding the nature of missing data: Is it Missing Completely at Random (MCAR), Missing at Random (MAR), or Missing Not at Random (MNAR)? The type of missingness influences the best imputation method.

- Visualization and exploratory analysis: I’d explore the patterns of missing data using visualizations like heatmaps or missingness plots to identify potential relationships between missingness and other variables.

- Imputation techniques: Depending on the nature and extent of missing data, I might use various methods:

- Deletion: Listwise or pairwise deletion, removing rows or pairs with missing values. This is simple but can lead to significant data loss, especially with many missing values.

- Mean/Median/Mode imputation: Replacing missing values with the mean, median, or mode of the non-missing values. Simple but can underestimate variance.

- Regression imputation: Predicting missing values using a regression model based on other variables.

- Multiple imputation: Creating multiple plausible datasets with imputed values, analyzing each, and combining the results to account for uncertainty in imputation.

- Model selection: Some models (e.g., tree-based models) can handle missing data intrinsically, reducing the need for imputation.

The choice of method depends on the dataset characteristics, the amount of missing data, and the analytical goals. It’s essential to document the chosen method and its potential impact on the results.
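
The caveat about mean imputation underestimating variance can be checked on a toy column. This is a minimal sketch with hypothetical values, using `None` to mark missing entries; real work would more likely use a dataframe library.

```python
from statistics import mean, variance

# Hypothetical column of ages; None marks a missing value.
ages = [23, 35, None, 41, 29, None, 52, 38]

observed = [a for a in ages if a is not None]
fill_value = mean(observed)

# Mean imputation: every gap receives the same value.
imputed = [fill_value if a is None else a for a in ages]

var_observed = variance(observed)
var_imputed = variance(imputed)

print(f"variance of observed values: {var_observed:.1f}")
print(f"variance after mean imputation: {var_imputed:.1f}")
```

The imputed points sit exactly on the mean, so they add nothing to the spread while increasing the count, which is why the second variance is smaller. Multiple imputation avoids this by drawing plausible values rather than a single constant.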

Q 3. Describe a time you had to interpret complex data to solve a problem.

In a previous role, I was tasked with analyzing customer churn data for a telecommunications company. The data was extensive, encompassing demographic information, usage patterns, customer service interactions, and billing details. The challenge was to identify key predictors of churn to develop targeted retention strategies.

I began by exploring the data visually, creating histograms, scatter plots, and box plots to understand the distribution of each variable and identify potential relationships. I then applied various statistical techniques, including logistic regression to model the probability of churn, and performed feature selection to identify the most influential variables. The analysis revealed that high call volumes to customer service, low data usage, and a longer tenure were strong predictors of churn. This led to the implementation of targeted retention campaigns focusing on improving customer service, promoting data packages, and incentivizing long-term customers, resulting in a significant reduction in churn.

Q 4. What statistical methods are you familiar with and when would you apply them?

I’m proficient in a range of statistical methods, including:

- Descriptive statistics: Mean, median, mode, standard deviation, variance, percentiles, etc., for summarizing and understanding data.

- Inferential statistics: Hypothesis testing (t-tests, ANOVA, chi-square tests), confidence intervals, and regression analysis for making inferences about populations based on samples.

- Regression analysis: Linear, logistic, polynomial regression for modeling relationships between variables.

- Time series analysis: ARIMA, exponential smoothing for analyzing data collected over time.

- Clustering techniques: K-means, hierarchical clustering for grouping similar data points.

- Dimensionality reduction: PCA, factor analysis for reducing the number of variables while retaining important information.

The choice of method depends heavily on the research question and the nature of the data. For example, I’d use a t-test to compare the means of two groups, ANOVA for comparing means of multiple groups, and logistic regression to predict a binary outcome.

Q 5. Explain your understanding of hypothesis testing.

Hypothesis testing is a formal procedure for making decisions about populations based on sample data. It involves formulating a null hypothesis (H0), representing the status quo or a claim to be tested, and an alternative hypothesis (H1), representing the opposite of the null hypothesis. We then collect data, calculate a test statistic, and determine the p-value, which represents the probability of observing the obtained results (or more extreme results) if the null hypothesis were true.

If the p-value is below a predetermined significance level (alpha, typically 0.05), we reject the null hypothesis in favor of the alternative hypothesis. Otherwise, we fail to reject the null hypothesis. It’s crucial to remember that failing to reject the null hypothesis doesn’t prove it’s true; it simply means there’s insufficient evidence to reject it.

Example: Testing the effectiveness of a new drug. H0: The new drug has no effect on blood pressure. H1: The new drug lowers blood pressure. We would collect data on blood pressure before and after administering the drug and use a paired t-test to compare the means, since both readings come from the same patients. A low p-value would suggest that the drug does lower blood pressure.
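
To make the p-value in the drug example concrete, here is a minimal sketch. It swaps the t-test for an exact sign-flip permutation test so that it needs only the standard library. The ten blood-pressure drops are invented for illustration. Under H0, each patient's change is equally likely to be positive or negative, so we count how many of the 2^10 sign patterns give a total at least as large as the one observed.

```python
from itertools import product

# Hypothetical drop in systolic blood pressure (before minus after), per patient.
drops = [8, 5, 12, 3, 7, 9, -2, 6, 10, 4]


def sign_flip_p_value(diffs):
    """One-sided exact p-value for H1: the mean difference is above zero."""
    observed = sum(diffs)
    magnitudes = [abs(d) for d in diffs]
    as_extreme = 0
    total = 0
    # Enumerate every way of assigning a sign to each magnitude.
    for signs in product((1, -1), repeat=len(diffs)):
        total += 1
        if sum(s * m for s, m in zip(signs, magnitudes)) >= observed:
            as_extreme += 1
    return as_extreme / total


p_value = sign_flip_p_value(drops)
print(f"p-value = {p_value:.4f}")
print("reject H0" if p_value < 0.05 else "fail to reject H0")
```

Full enumeration is only practical for small samples; for larger ones a random subset of sign patterns, or the t-test itself, is the usual choice.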

Q 6. How do you identify and handle outliers in a dataset?

Outliers are data points that significantly deviate from the rest of the data. Identifying and handling them is crucial because they can skew results and distort conclusions. My approach:

- Visualization: Box plots, scatter plots, and histograms help visually identify outliers.

- Statistical methods: Z-scores or the IQR (Interquartile Range) method can quantify the degree of deviation. Points falling outside a chosen threshold (e.g., a Z-score above 3 or below -3, or a value more than 1.5 × IQR below the first quartile or above the third) are flagged as potential outliers.

- Investigation: Before removing or transforming outliers, it’s essential to investigate their cause. Are they errors in data collection? Are they genuinely unusual observations representing a different population?

- Handling outliers: Options include:

- Removal: Removing outliers if they are clearly errors or represent a separate population.

- Transformation: Applying transformations like logarithmic or square root transformations to reduce the influence of outliers.

- Winsorizing or Trimming: Replacing extreme values with less extreme values (Winsorizing) or removing a certain percentage of extreme values (Trimming).

The best approach depends on the context and the reason for the outliers. It’s important to document how outliers were handled and the impact on the analysis.
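
The two detection rules above can be sketched in a few lines. This is a minimal illustration on a hypothetical sample; the function names and the thresholds of 3 and 1.5 are conventional defaults rather than fixed rules.

```python
from statistics import mean, quantiles, stdev


def zscore_outliers(values, threshold=3.0):
    """Values whose absolute z-score exceeds the threshold."""
    mu, sigma = mean(values), stdev(values)
    return [v for v in values if abs(v - mu) / sigma > threshold]


def iqr_outliers(values, k=1.5):
    """Values more than k * IQR below Q1 or above Q3."""
    q1, _, q3 = quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


# Hypothetical measurements with one suspicious reading.
data = [10, 12, 11, 13, 12, 11, 10, 95, 12, 13]

print("z-score rule:", zscore_outliers(data))
print("IQR rule:", iqr_outliers(data))
```

On this sample the IQR rule flags 95 but the z-score rule flags nothing. The extreme value inflates the standard deviation, and with only ten points a z-score based on the sample standard deviation cannot exceed (n - 1) / sqrt(n), which is about 2.85. This is one reason the IQR rule is often preferred for small samples.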

Q 7. Describe your experience with regression analysis.

Regression analysis is a powerful statistical method for modeling the relationship between a dependent variable and one or more independent variables. I have extensive experience with various regression techniques:

- Linear Regression: Modeling a linear relationship between a continuous dependent variable and one or more independent variables. I utilize this to predict continuous outcomes, understand the impact of independent variables, and control for confounding factors.

- Logistic Regression: Modeling the probability of a binary (0/1) dependent variable based on independent variables. This is commonly used in classification problems, such as predicting customer churn or credit risk.

- Polynomial Regression: Modeling non-linear relationships between variables by including polynomial terms of the independent variables.

- Multiple Regression: Modeling the relationship between a dependent variable and multiple independent variables simultaneously.

In my work, I’ve applied regression analysis to various scenarios, including predicting sales based on marketing spending, modeling the impact of environmental factors on crop yield, and developing risk models for financial institutions. Understanding the assumptions of regression analysis (linearity, independence of errors, homoscedasticity, normality of errors) and conducting diagnostic checks is crucial for accurate and reliable results.
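
As a small illustration of the sales and marketing case, here is a minimal sketch of simple linear regression using the closed-form least-squares formulas. The five data points are invented, and a real analysis would normally use a statistics library and check the assumptions listed above.

```python
def fit_line(xs, ys):
    """Least-squares slope and intercept for y = intercept + slope * x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx


def r_squared(xs, ys, slope, intercept):
    """Share of the variance in ys explained by the fitted line."""
    my = sum(ys) / len(ys)
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - my) ** 2 for y in ys)
    return 1 - ss_res / ss_tot


# Hypothetical data: marketing spend and sales, in arbitrary units.
spend = [1, 2, 3, 4, 5]
sales = [3.1, 4.9, 7.2, 8.8, 11.0]

slope, intercept = fit_line(spend, sales)
r2 = r_squared(spend, sales, slope, intercept)
residuals = [y - (intercept + slope * x) for x, y in zip(spend, sales)]

print(f"sales = {intercept:.2f} + {slope:.2f} * spend, R^2 = {r2:.3f}")
```

Plotting `residuals` against `spend` is a quick first diagnostic: a visible curve or funnel shape would point to a violation of linearity or homoscedasticity.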

Q 8. What are the different types of biases you are aware of in data analysis?

Data analysis is susceptible to various biases that can skew results and lead to flawed conclusions. These biases can stem from the data collection process, the analysis techniques employed, or even the analyst’s own preconceptions. Here are some key types:

- Selection Bias: This occurs when the sample used for analysis doesn’t accurately represent the population of interest. For example, surveying only university students to understand the opinions of the entire adult population introduces selection bias. The results won’t be generalizable.

- Confirmation Bias: The tendency to favor information that confirms pre-existing beliefs and disregard contradictory evidence. An analyst might focus on data points supporting their hypothesis and ignore outliers or data challenging it.

- Survivorship Bias: Focusing on only the ‘survivors’ in a dataset and ignoring those that didn’t make it. For instance, studying only successful businesses and neglecting failed ones to understand business strategies overlooks critical factors.

- Measurement Bias: Systematic errors introduced during data collection. For example, an inaccurate measuring instrument or flawed questionnaire can produce consistently biased results.

- Reporting Bias: A tendency for certain data to be reported more frequently than others, possibly due to social desirability or publication bias in research.

- Observer Bias: When the researcher’s expectations influence their observations or interpretations of the data. This is common in qualitative research but can also creep into quantitative analysis.

Mitigating these biases requires careful planning, robust data collection methods, rigorous analysis techniques, and awareness of one’s own potential biases.

Q 9. How would you explain a complex statistical concept to a non-technical audience?

Let’s say we want to explain the concept of a ‘p-value’ to someone without a statistics background. We can use a relatable analogy:

Imagine you’re flipping a coin 10 times and get 8 heads. Intuitively, you might suspect the coin is biased towards heads. The p-value helps us quantify this suspicion. It answers the question: ‘If the coin were actually fair (50/50 chance of heads or tails), what’s the probability of observing 8 or more heads in 10 flips purely by chance?’

A low p-value (typically below 0.05) suggests the observed outcome is unlikely to have occurred by chance alone, so we would doubt that the coin is fair. Conversely, a higher p-value means the result is consistent with random chance, and we wouldn’t reject the assumption of a fair coin. For 8 or more heads in 10 flips of a fair coin, the probability is 56/1024, or about 0.055, which sits just above the usual 0.05 cutoff: suspicious, but not conclusive on its own.

So, the p-value doesn’t prove anything definitively, but it gives us a measure of how strong the evidence is against a particular hypothesis (in this case, the hypothesis that the coin is fair).
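
For readers who want to see the number behind the analogy, the coin example can be computed exactly. This is a minimal sketch; the function name is just an illustrative choice.

```python
from math import comb


def binomial_tail(n, k, p=0.5):
    """P(X >= k) when X counts successes in n independent trials."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


# Probability of 8 or more heads in 10 flips of a fair coin.
p_value = binomial_tail(10, 8)
print(f"p-value = {p_value:.4f}")
```

The result is 56/1024, roughly 0.055, so 8 heads in 10 flips falls just short of the conventional 0.05 threshold. Nine or more heads would give 11/1024, roughly 0.011.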

Q 10. Describe your experience with data visualization tools and techniques.

I have extensive experience with various data visualization tools and techniques. My proficiency spans several popular platforms, including Tableau, Power BI, and Python libraries like Matplotlib and Seaborn. I’m adept at choosing the right visualization based on the data type and the message I’m trying to convey.

For instance, I’ve used:

- Bar charts to compare categorical data, such as sales figures across different product categories.

- Scatter plots to explore relationships between two continuous variables, like advertising spend and sales revenue.

- Line charts to visualize trends over time, such as website traffic over the past year.

- Heatmaps to represent correlation matrices or geographical data.

- Interactive dashboards in Tableau and Power BI to allow users to explore data dynamically and filter results based on their interests.

Beyond choosing the appropriate chart type, I focus on crafting clear, concise, and informative visualizations that avoid misleading interpretations. This involves careful attention to labeling, color schemes, and the overall visual design.

Q 11. What is your approach to solving a problem you have never encountered before?

My approach to solving a novel problem involves a structured, iterative process:

- Understand the problem: Thoroughly define the problem, gathering all relevant information and context. Ask clarifying questions to ensure a complete understanding.

- Break it down: Decompose the complex problem into smaller, more manageable sub-problems.

- Research and explore: Conduct research to see if similar problems have been solved before. Explore existing literature, online resources, and consult with colleagues.

- Experiment and iterate: Develop and test potential solutions, iteratively refining the approach based on the results. This may involve prototyping, simulations, or experimentation.

- Evaluate and refine: Assess the effectiveness of the solution, measuring its performance against defined criteria. Identify areas for improvement and iterate further.

- Document and share: Document the problem-solving process, including the challenges faced, solutions implemented, and lessons learned. Share findings with relevant stakeholders.

I embrace a collaborative approach and value seeking diverse perspectives when tackling unfamiliar challenges.

Q 12. Walk me through your process for cleaning and preparing a dataset for analysis.

Data cleaning and preparation is a crucial step before any analysis. My process typically involves the following steps:

- Data Collection & Inspection: First, I gather the data from various sources, ensuring data quality from the source. This involves checking the data format, looking for inconsistencies and missing values.

- Handling Missing Values: Missing data can significantly impact the analysis. I determine the best strategy based on the context: imputation (filling in missing values using statistical methods or estimates) or removal (reasonable when only a small share of records is affected and the missingness appears random; if values are missing non-randomly, dropping them can bias the results).

- Data Cleaning: This involves identifying and correcting errors. This may include removing duplicates, dealing with outliers (depending on the context; sometimes outliers are important and should not be removed), and correcting inconsistencies in data formatting.

- Data Transformation: This step focuses on changing the format or structure of the data to make it more suitable for analysis. This may involve creating new variables, recoding existing variables, or transforming variables to improve normality (like using a log transformation).

- Data Validation: After all cleaning and transformation, I verify the data’s accuracy and consistency using various checks. This includes verifying calculated variables, checking distributions for anomalies and performing consistency checks across datasets.

- Data Reduction/Feature Selection: Often the full dataset is not needed for the analysis. This step involves removing irrelevant, redundant, or less important variables.

The specific steps and techniques used will vary depending on the dataset and the analytical goals.

Q 13. Explain your understanding of probability distributions.

Probability distributions describe the likelihood of different outcomes for a random variable. They’re fundamental to statistical inference and modeling. Here are some key types:

- Normal Distribution (Gaussian): This is the bell-shaped curve, symmetrical and characterized by its mean and standard deviation. Many natural phenomena approximately follow a normal distribution.

- Binomial Distribution: This describes the probability of getting a certain number of successes in a fixed number of independent trials (e.g., flipping a coin 10 times and counting the number of heads).

- Poisson Distribution: This models the probability of a given number of events occurring in a fixed interval of time or space (e.g., the number of customers arriving at a store in an hour).

- Uniform Distribution: All outcomes have an equal probability (e.g., rolling a fair die).

- Exponential Distribution: Models the time between events in a Poisson process (e.g., time until the next customer arrives).

Understanding probability distributions allows us to make inferences about a population based on a sample, assess the significance of statistical tests, and build predictive models. Choosing the appropriate distribution depends on the nature of the data and the problem being addressed.
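
Three of the distributions above have simple closed forms, sketched here with the standard library only. The function names and the example parameters are illustrative assumptions.

```python
from math import comb, exp, factorial


def binomial_pmf(k, n, p):
    """P(exactly k successes in n independent trials with success rate p)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def poisson_pmf(k, lam):
    """P(exactly k events in an interval with an average of lam events)."""
    return exp(-lam) * lam**k / factorial(k)


def exponential_cdf(t, rate):
    """P(waiting time until the next event is at most t) for a given rate."""
    return 1 - exp(-rate * t)


# Exactly 5 heads in 10 fair coin flips.
print(f"{binomial_pmf(5, 10, 0.5):.4f}")
# Exactly 3 arrivals in an hour when the store averages 2 per hour.
print(f"{poisson_pmf(3, 2.0):.4f}")
# Next customer arrives within half an hour at a rate of 2 per hour.
print(f"{exponential_cdf(0.5, 2.0):.4f}")
```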

Q 14. How do you assess the validity and reliability of data sources?

Assessing the validity and reliability of data sources is paramount. My approach involves a multi-faceted evaluation:

- Source Credibility: I evaluate the reputation and expertise of the data source. Is it a government agency, a reputable research institution, or a less credible source? What’s their track record?

- Data Collection Methodology: I examine how the data was collected. What methods were used? Was the sample representative? Were there any biases in the data collection process?

- Data Documentation: I look for comprehensive documentation describing the data, including variables, definitions, data collection methods, limitations, and potential biases. Poor documentation is a red flag.

- Data Consistency and Completeness: I check for internal consistency within the data and look for missing values or outliers that might indicate problems.

- Cross-Validation (if possible): If possible, I compare the data with information from other sources to see if it’s consistent and reliable.

- Data Accuracy: I apply various statistical checks to ensure data accuracy, such as checking distributions for expected patterns, and identifying anomalies using descriptive statistics and data visualization.

By carefully assessing these aspects, I can build confidence in the data’s quality and make informed decisions about its suitability for analysis.

Q 15. What experience do you have with SQL or other database querying languages?

I’ve used SQL throughout my career, from simple data retrieval to complex queries involving joins, subqueries, and window functions. I’m proficient in writing efficient, optimized queries to extract meaningful insights from large datasets. Beyond SQL, I have experience with NoSQL databases like MongoDB, using their query languages for document-based data. For example, in a recent project analyzing customer behavior, I used SQL to join sales data with customer demographics to identify key segments and trends. I also utilized MongoDB’s aggregation framework to analyze unstructured customer feedback data, revealing insights missed by traditional methods.

Specifically, I’m comfortable with:

- SELECT, FROM, WHERE, JOIN, GROUP BY, and HAVING clauses in SQL.

- Writing and optimizing complex queries involving subqueries and common table expressions (CTEs).

- Working with different database management systems (DBMS) such as MySQL, PostgreSQL, and SQL Server.

- Utilizing NoSQL databases and their respective query languages for specific data structures.
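
To show the kind of query described above, here is a minimal sketch that runs against an in-memory SQLite database through Python's built-in `sqlite3` module. The tables, columns, and rows are hypothetical. It combines a CTE, a JOIN, GROUP BY, and HAVING to total revenue per customer segment.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Hypothetical schema and rows, for illustration only.
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, segment TEXT);
    CREATE TABLE sales (customer_id INTEGER, amount REAL);
    INSERT INTO customers VALUES (1, 'retail'), (2, 'retail'), (3, 'enterprise');
    INSERT INTO sales VALUES (1, 120.0), (1, 80.0), (2, 40.0), (3, 900.0), (3, 600.0);
""")

query = """
    WITH customer_totals AS (
        SELECT customer_id, SUM(amount) AS total
        FROM sales
        GROUP BY customer_id
    )
    SELECT c.segment, COUNT(*) AS customers, SUM(t.total) AS revenue
    FROM customers AS c
    JOIN customer_totals AS t ON t.customer_id = c.id
    GROUP BY c.segment
    HAVING SUM(t.total) > 100
    ORDER BY revenue DESC
"""

rows = conn.execute(query).fetchall()
for segment, customers, revenue in rows:
    print(segment, customers, revenue)

conn.close()
```

Aggregating per customer in the CTE first keeps the outer query simple and avoids counting a customer once per sale.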

Q 16. Describe your proficiency in programming languages relevant to data analysis (e.g., Python, R).

My proficiency in programming languages for data analysis centers around Python and R. Python, with its rich ecosystem of libraries like Pandas, NumPy, and Scikit-learn, is my primary tool for data manipulation, statistical modeling, and machine learning. R is particularly strong for its statistical graphics capabilities and specialized packages. I utilize both depending on the specific task at hand; Python for its versatility in broader data science tasks and R when the project demands specialized statistical analysis or high-quality visualizations.

For example, I recently used Python’s Pandas library to clean and transform a large, messy dataset containing customer transactions. Then, using Scikit-learn, I built a predictive model to forecast future sales. In another project, I leveraged R’s ggplot2 package to create insightful visualizations to communicate the findings of a statistical analysis to stakeholders who may not be statistically inclined.

# Python example: Data cleaning with Pandas
import pandas as pd

data = pd.read_csv('data.csv')
data_cleaned = data.dropna()
# Further cleaning and transformation...

Q 17. How would you determine the appropriate sample size for a statistical study?

Determining the appropriate sample size for a statistical study depends on several factors: the desired level of precision (margin of error), the confidence level, the population size (if known), and the expected variability within the population. A larger sample size generally leads to greater precision and confidence but increases the cost and time required.

The most common method involves using a sample size calculator or formula. These formulas typically incorporate the above factors. For example, for estimating a population proportion (like the percentage of customers who prefer a certain product), a common formula is:

n = (Z^2 * p * (1-p)) / E^2

Where:

- n is the sample size

- Z is the Z-score corresponding to the desired confidence level (e.g., 1.96 for 95% confidence)

- p is the estimated proportion (if unknown, use 0.5 for maximum variability)

- E is the desired margin of error

For instance, if we want a 95% confidence level with a margin of error of 5% (0.05) and we don’t have an estimate for p, the calculation becomes:

n = (1.96^2 * 0.5 * 0.5) / 0.05^2 ≈ 384.16

Rounding up, this suggests a sample size of about 385. However, remember that this is a simplified example. More complex scenarios might require more sophisticated techniques and considerations.
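
The proportion formula above is easy to wrap in a small helper. This is a minimal sketch: the function name and defaults are illustrative, and it assumes a large population, with no finite population correction. The raw result for the example is about 384.16, and the helper rounds up because a sample cannot contain a fraction of a respondent.

```python
from math import ceil
from statistics import NormalDist


def sample_size_for_proportion(margin_of_error, confidence=0.95, p=0.5):
    """Smallest n that meets the margin of error for an estimated proportion."""
    # Two-sided critical value, e.g. about 1.96 for 95% confidence.
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return ceil(z**2 * p * (1 - p) / margin_of_error**2)


print(sample_size_for_proportion(0.05))  # 95% confidence, 5% margin of error
print(sample_size_for_proportion(0.03))  # tighter margin needs a larger sample
```

Because the margin of error is squared in the denominator, halving it roughly quadruples the required sample size.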

Q 18. Explain your understanding of A/B testing.

A/B testing, also known as split testing, is a controlled experiment used to compare two versions of something (e.g., a website, an advertisement, an email) to determine which performs better. This involves randomly assigning participants (website visitors, email recipients, etc.) into two groups: Group A (control group) receives the original version, and Group B (treatment group) receives the modified version. The results are then analyzed to determine statistically significant differences between the groups.

For example, a company might A/B test two different versions of their website homepage to see which one leads to a higher conversion rate. The key is to ensure that the only difference between the two versions is the element being tested, and that the assignment to groups is truly random to avoid bias. After collecting data from both groups, statistical tests (such as a t-test or chi-squared test) are employed to determine whether the observed differences are statistically significant, that is, unlikely to be explained by random chance alone.
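
For conversion rates, one common choice is a two-proportion z-test, sketched below with the standard library. The visitor and conversion counts are hypothetical, and the normal approximation assumes reasonably large groups.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for H0: both conversion rates are equal."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical results: control converts 200 of 5000, variant 250 of 5000.
z, p_value = two_proportion_z_test(200, 5000, 250, 5000)
print(f"z = {z:.2f}, p-value = {p_value:.4f}")
```

The sample size and the significance level should be fixed before the test starts; checking the p-value repeatedly and stopping at the first significant result inflates the false positive rate.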

Q 19. How do you handle conflicting data from multiple sources?

Handling conflicting data from multiple sources requires a systematic approach. The first step is to identify the nature of the conflict: Are the data points different in value, or are there inconsistencies in data structure or definitions? Next, investigate the credibility and reliability of each source. Factors to consider include the data source’s reputation, the methodology used to collect the data, and the potential for bias.

After assessing data quality, I would typically employ techniques such as:

- Data reconciliation: Attempting to identify the root cause of the conflict and resolve it by correcting errors or making informed decisions based on evidence and data quality assessment.

- Data integration: Combining data from multiple sources by identifying common keys or attributes. This might involve data transformation or standardization. If a direct reconciliation is impossible, weighted averaging based on the reliability of sources might be implemented.

- Data deduplication: Identifying and removing duplicate records to avoid double counting or biased results.

- Statistical modeling: In some cases, using statistical modeling techniques to identify and potentially adjust for systematic discrepancies. This might involve using regression analysis to account for biases from different sources.

The choice of method depends on the specific context and the nature of the data. Prioritization and documentation of every step are paramount for transparency and reproducibility of the analysis.

Q 20. What are your preferred methods for presenting analytical findings?

My preferred methods for presenting analytical findings depend on the audience and the nature of the findings. However, I strive for clarity, conciseness, and visual appeal. I typically use a combination of the following:

- Clear and concise written reports: Including an executive summary, methodology, key findings, and conclusions. I ensure the language is accessible to the target audience, avoiding technical jargon unless necessary.

- Data visualizations: Charts and graphs are crucial for communicating complex data effectively. I choose appropriate visualization techniques (e.g., bar charts, line graphs, scatter plots) to highlight key trends and patterns.

- Interactive dashboards: For more complex analysis, I might create interactive dashboards allowing users to explore the data and drill down into specific details.

- Presentations: For communicating to larger groups, I prepare presentations that clearly communicate the key findings using visuals and storytelling techniques.

Regardless of the method, I prioritize accurate representation of data and avoid misleading or biased interpretations. I often include limitations and caveats to foster trust and transparency.

Q 21. How do you stay updated with the latest advancements in data analysis and mathematical techniques?

Staying updated in the rapidly evolving fields of data analysis and mathematical techniques requires a multi-pronged approach. I regularly engage in the following activities:

- Reading research papers and publications: I keep up with the latest research published in reputable journals and online repositories.

- Attending conferences and workshops: Industry events provide opportunities to network with other experts and learn about new advancements.

- Taking online courses and webinars: Platforms like Coursera, edX, and DataCamp offer high-quality courses on various data analysis topics.

- Following influential data scientists and researchers: I follow experts on social media and subscribe to their newsletters to stay informed about their latest work.

- Engaging with online communities: Participating in forums and discussions helps me learn from others’ experiences and insights.

- Experimenting with new tools and techniques: I actively experiment with new software, libraries, and algorithms to improve my skills and stay abreast of technological advancements.

Continuous learning is crucial in this field, and I dedicate time consistently to enhancing my knowledge and skills.

Q 22. Describe a situation where you had to make a decision based on incomplete data.

Decision-making with incomplete data is a common challenge in analytical roles. It often involves weighing available information against potential risks and uncertainties. A key strategy is to acknowledge the limitations of the data and utilize techniques to mitigate the impact of missing information.

For instance, in a previous project involving customer churn prediction, we had a significant portion of missing data regarding customer demographics. Instead of discarding those records, which would have severely limited our dataset, we employed multiple imputation techniques. This involved using statistical methods to fill in the missing values based on patterns observed in the available data. We also performed sensitivity analysis to understand how variations in the imputed data affected our final model. This approach allowed us to build a more robust predictive model, despite the incomplete dataset. The key takeaway was acknowledging the uncertainty and implementing strategies to quantify and manage the risk associated with those uncertainties.

Q 23. What is your experience with time series analysis?

Time series analysis is a powerful technique used to analyze data points collected over time. It involves identifying patterns, trends, and seasonality within the data to make predictions or understand past behavior. My experience spans various methods, including:

- ARIMA modeling: I have extensively used ARIMA (Autoregressive Integrated Moving Average) models for forecasting time-dependent data. This includes model selection using tools like ACF and PACF plots, followed by parameter estimation and model diagnostic checks.

- Exponential Smoothing: I’m proficient in applying exponential smoothing methods, such as Holt-Winters, for forecasting time series exhibiting trends and seasonality. The choice of method depends on the nature of the data and the forecast horizon.

- Decomposition techniques: I’ve employed decomposition methods to separate a time series into its constituent components (trend, seasonality, and residuals), allowing for a more thorough understanding of the underlying patterns.

For example, in a project involving sales forecasting for a retail company, we used ARIMA modeling to predict future sales based on historical data. We considered factors such as seasonality (higher sales during holidays) and promotional campaigns to refine our model’s accuracy. The results were used to optimize inventory management and resource allocation, significantly impacting the company’s profitability.
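
As a small illustration of the smoothing idea, here is a minimal sketch of simple exponential smoothing, the building block that Holt-Winters extends with trend and seasonal terms. The sales figures and the smoothing factor are hypothetical.

```python
def simple_exponential_smoothing(series, alpha):
    """Smoothed level after each observation; alpha weights the newest value."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    levels = [series[0]]  # start from the first observation
    for value in series[1:]:
        levels.append(alpha * value + (1 - alpha) * levels[-1])
    return levels


# Hypothetical monthly sales, in thousands of units.
monthly_sales = [10, 12, 14, 13, 15]
levels = simple_exponential_smoothing(monthly_sales, alpha=0.5)

# With no trend or seasonal term, the forecast for every future
# period is simply the last smoothed level.
forecast = levels[-1]
print(levels, forecast)
```

A larger alpha reacts faster to recent changes but passes more noise through; in practice alpha is usually chosen by minimizing forecast error on held-out periods.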

Q 24. Explain your understanding of predictive modeling.

Predictive modeling involves creating a model that uses historical data to predict future outcomes. It’s a crucial tool across various domains, from finance (credit risk assessment) to healthcare (disease prediction). The process typically involves several steps:

- Data Collection and Preparation: Gathering relevant data, handling missing values, and transforming variables into suitable formats for model building.

- Feature Engineering: Selecting, creating, and transforming relevant input variables (features) to enhance model accuracy and interpretability.

- Model Selection: Choosing an appropriate model based on the nature of the data and the prediction task (e.g., linear regression, logistic regression, decision trees, neural networks).

- Model Training: Training the selected model using the prepared data to learn the relationships between input features and the target variable.

- Model Evaluation: Assessing the model’s performance using appropriate metrics (e.g., accuracy, precision, recall, AUC) and techniques (e.g., cross-validation).

- Model Deployment: Implementing the trained model to make predictions on new, unseen data.

The choice of model depends greatly on the context. For example, a linear regression model might suffice for predicting house prices based on size and location, while a more complex model like a neural network might be needed for image recognition.
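The steps above can be sketched end to end with a one-feature linear regression fitted by ordinary least squares. This is a toy illustration under stated assumptions: the `sizes` and `prices` values are synthetic (prices are exactly three times the size), the helper names are hypothetical, and a real project would use many more features and a proper library.

```python
def fit_simple_linear_regression(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Apply a fitted (slope, intercept) pair to a new input."""
    slope, intercept = model
    return slope * x + intercept


# Synthetic data (assumption): house size in square metres vs. price in thousands.
sizes = [50, 60, 70, 80, 90, 100]
prices = [150, 180, 210, 240, 270, 300]

# Data preparation: hold out the last two observations as unseen data.
train_x, test_x = sizes[:4], sizes[4:]
train_y, test_y = prices[:4], prices[4:]

model = fit_simple_linear_regression(train_x, train_y)  # training
predictions = [predict(model, x) for x in test_x]       # "deployment" on new inputs
print(model, predictions)
```

Because the synthetic data is perfectly linear, the held-out predictions match the actual prices. Real data would leave residual error to evaluate.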

Q 25. How do you evaluate the accuracy of a predictive model?

Evaluating the accuracy of a predictive model is crucial to ensure its reliability. The choice of metrics depends on the type of prediction task (classification or regression) and the business goals. Common metrics include:

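- For Regression: Mean Squared Error (MSE), Root Mean Squared Error (RMSE), R-squared. MSE and RMSE measure the average size of the difference between predicted and actual values, while R-squared measures the proportion of variance in the target that the model explains.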

- For Classification: Accuracy, Precision, Recall, F1-score, AUC (Area Under the ROC Curve). These metrics assess the model’s ability to correctly classify instances.
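As a rough sketch, most of the metrics listed above can be computed in a few lines of plain Python. The function names and sample values below are assumptions for illustration. AUC is left out because it needs predicted scores rather than hard labels.

```python
import math


def regression_metrics(actual, predicted):
    """Return MSE, RMSE and R-squared for paired actual/predicted values."""
    n = len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    mean_actual = sum(actual) / n
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    mse = ss_res / n
    return {"mse": mse, "rmse": math.sqrt(mse), "r2": 1 - ss_res / ss_tot}


def classification_metrics(actual, predicted, positive=1):
    """Return accuracy, precision, recall and F1 from hard class labels."""
    pairs = list(zip(actual, predicted))
    tp = sum(1 for a, p in pairs if a == positive and p == positive)
    fp = sum(1 for a, p in pairs if a != positive and p == positive)
    fn = sum(1 for a, p in pairs if a == positive and p != positive)
    tn = len(pairs) - tp - fp - fn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(pairs), "precision": precision,
            "recall": recall, "f1": f1}


# Made-up values for illustration only.
reg = regression_metrics([3, 5, 7, 9], [2, 5, 8, 9])
clf = classification_metrics([1, 1, 1, 0, 0, 0, 0, 1], [1, 1, 0, 0, 0, 1, 0, 1])
print(reg)
print(clf)
```

Which metric matters most depends on the cost of each error type. For instance, recall is often prioritised when missing a positive case is expensive.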

Beyond these basic metrics, techniques like cross-validation are used to obtain a more robust estimate of the model’s performance on unseen data. Cross-validation involves splitting the data into multiple folds, training the model on some folds, and evaluating it on the remaining fold(s), rotating so that each fold serves as the evaluation set once. This helps detect overfitting, where the model performs well on the training data but poorly on new data. For classification tasks, a confusion matrix is also a useful tool for visualizing the model’s performance and understanding the kinds of errors it makes.
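The fold-splitting idea can be sketched as follows. To keep the example self-contained, the "model" is just a baseline that predicts the mean of the training folds. The function names and the `values` list are assumptions, and the folds are contiguous rather than shuffled, which real (non-time-series) data would usually require.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for k contiguous folds."""
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, test_idx
        start += size


def cross_validated_mse(values, k):
    """Average held-out MSE of a baseline that predicts the training mean."""
    fold_scores = []
    for train_idx, test_idx in k_fold_indices(len(values), k):
        train_mean = sum(values[i] for i in train_idx) / len(train_idx)
        fold_mse = sum((values[i] - train_mean) ** 2 for i in test_idx) / len(test_idx)
        fold_scores.append(fold_mse)
    return sum(fold_scores) / k


# Made-up target values for illustration only.
values = [2, 4, 6, 8, 10, 12]
score = cross_validated_mse(values, k=3)
print(score)
```

Each observation is used for evaluation exactly once, so the averaged score depends less on one lucky or unlucky split than a single train/test division would.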

Q 26. What are some ethical considerations in data analysis?

Ethical considerations in data analysis are paramount. We must always ensure fairness, transparency, and accountability in our work. Key considerations include:

- Bias and Fairness: Data often reflects existing societal biases, and models trained on such data can perpetuate or amplify these biases. It’s crucial to identify and mitigate biases during data collection, preprocessing, and model development.

- Privacy and Security: Protecting the privacy and security of sensitive data is essential. This involves complying with relevant regulations (e.g., GDPR, CCPA) and employing appropriate data anonymization and security measures.

- Transparency and Explainability: Models should be transparent and explainable, particularly when used in high-stakes decision-making. This allows for scrutiny and accountability.

- Accountability: Data analysts should be accountable for the outcomes of their work and its potential impact on individuals and society.

For example, in a credit scoring model, biases in the training data could lead to discriminatory outcomes, unfairly denying credit to certain demographic groups. It’s vital to carefully consider these factors and implement strategies to create fair and equitable models.
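One simple way to start looking for this kind of disparity is to compare approval rates across groups. The sketch below does only that. The group labels, the decisions, and the helper names are made up for illustration, and a gap in rates is a signal to investigate rather than proof of unfairness on its own.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Map each group to its approval rate, given (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, is_approved in decisions:
        totals[group] += 1
        approved[group] += int(is_approved)
    return {group: approved[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest group approval rate divided by the highest (1.0 means parity).

    Assumes at least one group has a non-zero approval rate.
    """
    return min(rates.values()) / max(rates.values())


# Hypothetical model decisions: (demographic group, credit approved?).
decisions = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]
rates = approval_rates(decisions)
ratio = disparity_ratio(rates)
print(rates, ratio)
```

A ratio far below 1.0, as in this toy data, would prompt a closer look at the training data and features before the model is used for real decisions.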

Q 27. Describe a project where you used mathematical or analytical skills to achieve a significant outcome.

In a project for a logistics company, I utilized mathematical and analytical skills to optimize delivery routes and reduce transportation costs. The challenge was to develop an algorithm that could efficiently plan routes for a fleet of vehicles, considering factors such as distance, traffic conditions, delivery deadlines, and vehicle capacity.

My approach involved formulating the problem as a Vehicle Routing Problem (VRP), a well-known combinatorial optimization problem. I explored various solution approaches, including:

- Heuristics: I implemented several heuristic algorithms, such as the nearest neighbor and Clarke-Wright savings algorithms, to generate near-optimal solutions within reasonable computation time. These are simpler methods that may not find the absolute best solution but are relatively fast.

- Metaheuristics: To further improve the solution quality, I also employed metaheuristic algorithms such as Simulated Annealing and Genetic Algorithms. These explore a broader search space to find better solutions compared to simple heuristics.

By comparing the performance of these different algorithms, we selected the approach that provided the best balance between solution quality and computational efficiency. The implementation reduced transportation costs by approximately 15% and shortened delivery times for the company. The key was adapting standard optimization techniques to the company’s unique context and evaluating different approaches to find the most effective solution.
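To illustrate the flavour of the heuristics mentioned above, here is a minimal sketch of the nearest neighbor idea for a single vehicle. It is not the project’s implementation: it ignores capacity, deadlines, and traffic, uses straight-line distance, and the coordinates and function names are assumptions chosen for the example.

```python
import math


def nearest_neighbor_route(depot, stops):
    """Greedy route: from the current location, always visit the closest unvisited stop."""
    route = [depot]
    remaining = list(stops)
    current = depot
    while remaining:
        next_stop = min(remaining, key=lambda stop: math.dist(current, stop))
        remaining.remove(next_stop)
        route.append(next_stop)
        current = next_stop
    route.append(depot)  # return to the depot at the end of the tour
    return route


def route_length(route):
    """Total straight-line length of a route given as a list of (x, y) points."""
    return sum(math.dist(a, b) for a, b in zip(route, route[1:]))


# Hypothetical depot and delivery locations on a plane.
depot = (0, 0)
stops = [(0, 5), (3, 0), (3, 4)]
route = nearest_neighbor_route(depot, stops)
print(route, route_length(route))
```

A greedy route like this is fast to compute and makes a reasonable starting solution, which a metaheuristic such as simulated annealing can then try to improve.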

Key Topics to Learn for Mathematical and Analytical Abilities Interview

- Statistical Analysis: Understanding descriptive and inferential statistics, including hypothesis testing, regression analysis, and probability distributions. Practical application: interpreting data to draw meaningful conclusions and make informed business decisions.

- Algorithmic Thinking: Designing efficient algorithms to solve problems, analyzing their time and space complexity. Practical application: optimizing processes, improving software efficiency, and developing innovative solutions.

- Mathematical Modeling: Building mathematical models to represent real-world situations and predict outcomes. Practical application: forecasting trends, simulating scenarios, and optimizing resource allocation.

- Data Interpretation and Visualization: Effectively interpreting data from various sources and presenting findings through clear and concise visualizations. Practical application: communicating insights to stakeholders, identifying patterns and trends, and supporting data-driven decision-making.

- Logical Reasoning and Problem Solving: Applying logical deduction, critical thinking, and problem-solving strategies to tackle complex challenges. Practical application: troubleshooting issues, identifying root causes, and developing effective solutions in a variety of contexts.

- Linear Algebra & Calculus (depending on role): A strong understanding of linear algebra (matrices, vectors) and/or calculus (derivatives, integrals) is beneficial for more quantitative roles. Practical application: machine learning algorithms, optimization problems, and simulations.

Next Steps

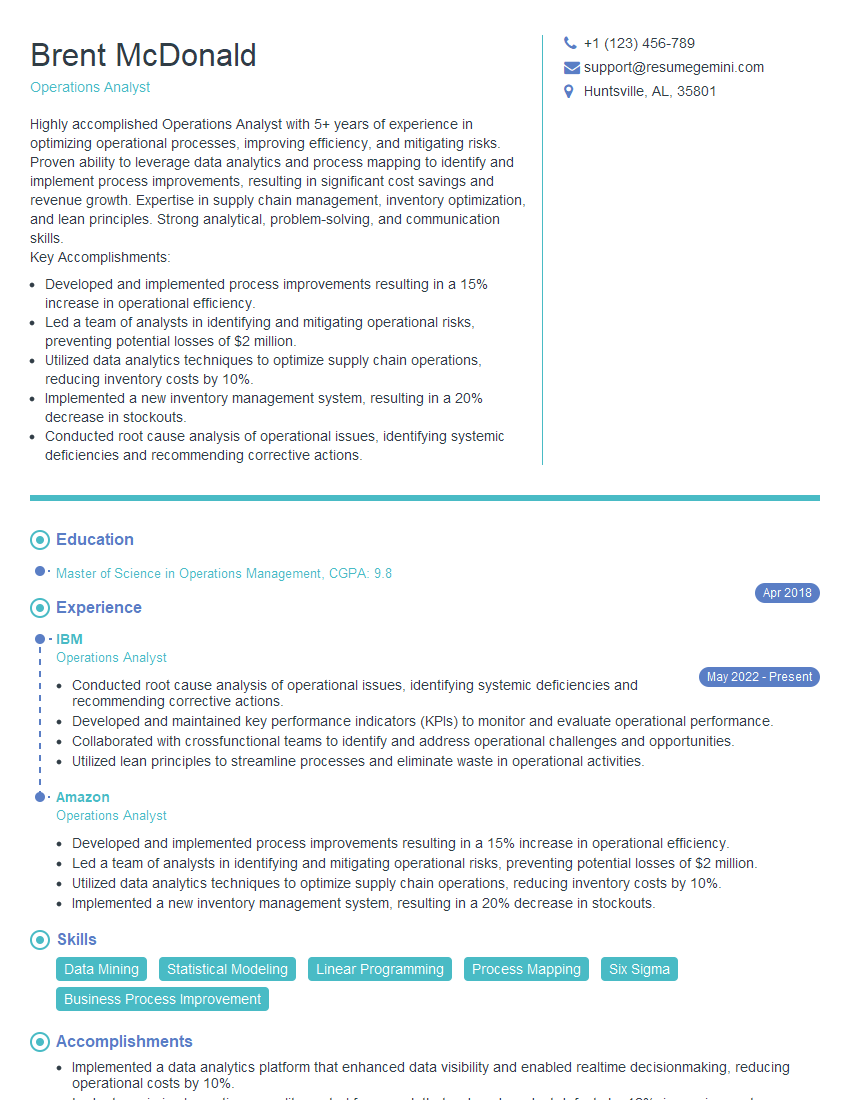

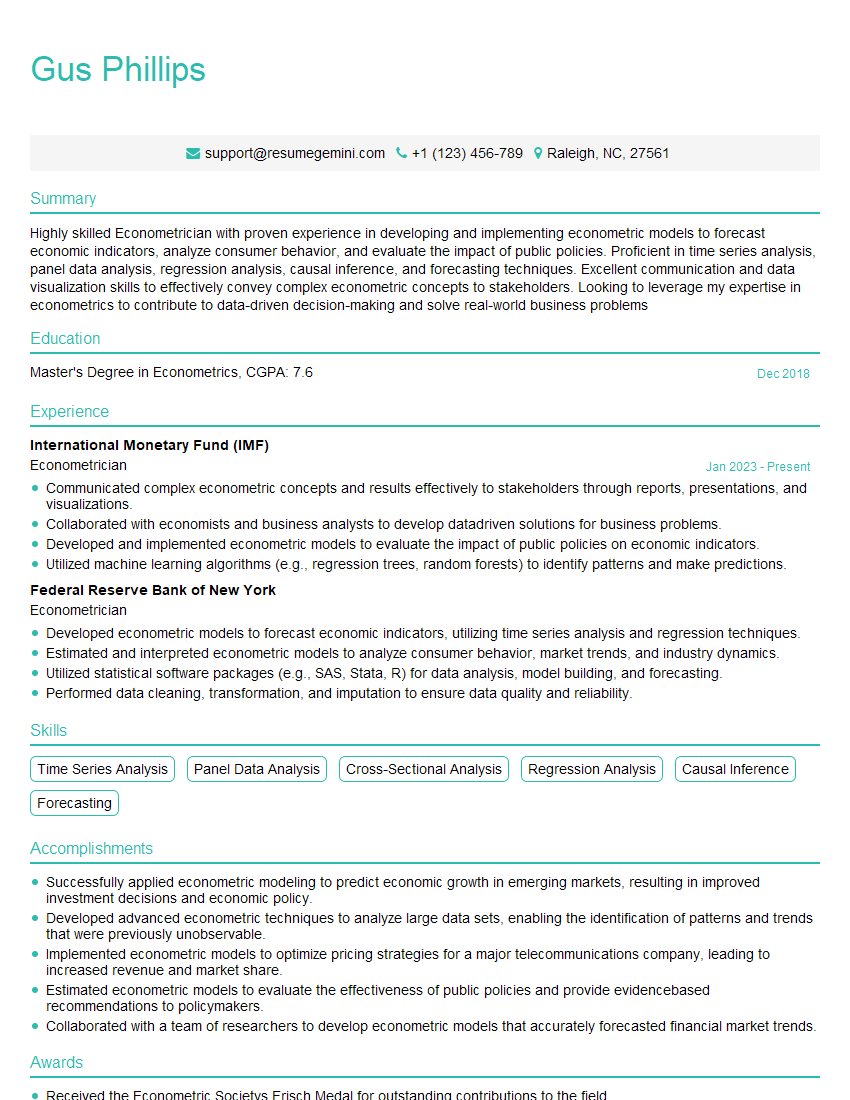

Mastering mathematical and analytical abilities is crucial for career advancement in today’s data-driven world. These skills are highly sought after across numerous industries, opening doors to exciting opportunities and higher earning potential. To maximize your job prospects, create a resume that’s both impactful and ATS-friendly. ResumeGemini can help you build a professional resume that showcases your skills effectively. We offer examples of resumes tailored to highlight Mathematical and Analytical Abilities, ensuring your application stands out from the competition. Take the next step towards your dream career; build a stronger resume with ResumeGemini.
