Preparation is the key to success in any interview. In this post, we’ll explore crucial ElectroOptical (EO) Imaging interview questions and equip you with strategies to craft impactful answers. Whether you’re a beginner or a pro, these tips will elevate your preparation.

Questions Asked in ElectroOptical (EO) Imaging Interview

Q 1. Explain the differences between MWIR, LWIR, and SWIR imaging.

SWIR, MWIR, and LWIR refer to different spectral bands within the infrared (IR) region of the electromagnetic spectrum, each offering unique advantages for electro-optical (EO) imaging. They are categorized by their wavelength ranges:

- SWIR (Short-Wave Infrared): Typically ranges from 0.9 to 3 μm. This band is sometimes considered the bridge between visible and thermal infrared. It is useful for applications requiring penetration of certain atmospheric conditions (like haze) better than visible light, and it can reveal features not readily apparent in visible images. Examples include mineral identification and agricultural monitoring.

- MWIR (Mid-Wave Infrared): Generally covers the range of 3 to 5 μm. This band is highly sensitive to thermal radiation emitted by objects, making it ideal for thermal imaging in various scenarios, including night vision and detecting heat signatures. The atmospheric transmission in this band is relatively high, making it suitable for long-range imaging.

- LWIR (Long-Wave Infrared): Extends from 8 to 15 μm. LWIR is also sensitive to thermal radiation, but it is less affected by atmospheric attenuation compared to MWIR, making it effective for longer ranges in poor weather conditions like fog or smoke. It’s commonly used in security and surveillance applications.

The key differences lie in their atmospheric transmission, sensitivity to different materials, and the resulting image contrast and range capabilities. The choice of band depends critically on the specific application needs.

Q 2. Describe the principles of operation of a CCD and CMOS image sensor.

Both CCD (Charge-Coupled Device) and CMOS (Complementary Metal-Oxide-Semiconductor) image sensors are used to convert light into digital signals, but they operate on different principles:

- CCD: A CCD sensor uses a photoelectric effect to convert incoming photons into electrons, which are then stored in potential wells within the sensor array. These charges are then sequentially transferred across the array to a charge-to-voltage converter, where the signal is read out. CCDs are known for their high sensitivity and low noise, producing excellent image quality, particularly in low-light conditions. However, they typically require more power and are generally more expensive than CMOS sensors.

- CMOS: A CMOS sensor has integrated circuitry directly on each pixel. Each pixel independently converts light into an electrical signal and transmits it through integrated electronics. This parallel processing allows for faster readout speeds and allows for features like on-chip signal processing. CMOS sensors are widely used in many consumer electronics due to their lower cost and lower power consumption. However, they often have higher noise levels than CCDs, especially at low light levels.

Imagine CCD as a highly efficient but slower assembly line carefully transferring individual units, while CMOS is a network of independent workers processing units concurrently. Both achieve the same goal, but their approaches and resulting efficiencies differ significantly.

Q 3. What are the key factors affecting the signal-to-noise ratio (SNR) in EO systems?

The signal-to-noise ratio (SNR) is crucial for EO system performance, representing the ratio of the desired signal strength to the unwanted noise. Several factors influence SNR:

- Background Noise: Ambient light, thermal radiation, and electronic noise from the sensor and circuitry all contribute to background noise, reducing the SNR.

- Sensor Noise: Read noise, dark current (electrons generated in the absence of light), and shot noise (random fluctuations due to the discrete nature of light) inherent to the sensor itself impact SNR.

- Optical Losses: Losses in the optical system due to scattering, absorption, and reflections lower the signal, diminishing the SNR.

- Atmospheric Conditions: Attenuation and scattering of light by the atmosphere significantly impacts signal strength and thus the SNR.

- Target Characteristics: The target’s emissivity (ability to radiate heat) and reflectivity influence the signal strength, affecting the SNR. A weakly radiating target will have a lower SNR.

Improving SNR often involves strategies like using low-noise sensors, optimizing the optical design to minimize losses, employing cooling mechanisms to reduce thermal noise, and implementing effective signal processing techniques.

Q 4. How does atmospheric attenuation impact EO imaging performance?

Atmospheric attenuation refers to the reduction in the intensity of electromagnetic radiation as it passes through the atmosphere. This significantly impacts EO imaging performance. Several phenomena contribute to this attenuation:

- Absorption: Gases like water vapor and carbon dioxide absorb specific wavelengths of light, reducing the signal strength in those spectral bands. This is particularly significant for certain IR wavelengths.

- Scattering: Air molecules and aerosols (e.g., dust, fog) scatter light in various directions, reducing the amount of light reaching the sensor and causing blurring.

- Haze: Haze, a common atmospheric condition, heavily scatters light, severely reducing visibility and degrading image quality.

The impact of atmospheric attenuation varies with distance, wavelength, and atmospheric conditions. Long-range EO systems often employ compensation techniques to account for this loss, such as atmospheric correction algorithms based on meteorological data and adaptive optics.

Q 5. Explain the concept of Modulation Transfer Function (MTF) and its importance in EO systems.

The Modulation Transfer Function (MTF) is a crucial measure of an EO system’s ability to faithfully reproduce details in an image. It quantifies the system’s response to different spatial frequencies. In essence, it describes how well the system can transfer different levels of contrast (modulation) at different spatial frequencies (details).

A high MTF at high spatial frequencies indicates the system’s capability to resolve fine details. A low MTF suggests the system blurs or smears fine details. MTF is typically plotted as a function of spatial frequency.

The overall MTF of an EO system is a product of the MTFs of its individual components—the lens, the sensor, and the atmospheric conditions. Optimizing the MTF of each component is crucial for achieving high-resolution imaging. Engineers use MTF analysis to design and evaluate EO systems, ensuring the system meets the required resolution and clarity.

Q 6. Discuss various image processing techniques used to enhance EO images.

Various image processing techniques enhance EO images by improving their quality, extracting information, and correcting for distortions. Some key techniques include:

- Noise Reduction: Filters like median filters and Wiener filters are employed to reduce noise without significantly blurring edges.

- Contrast Enhancement: Histogram equalization and dynamic range compression improve the visibility of details by adjusting the intensity distribution.

- Sharpening: Unsharp masking and high-boost filtering enhance edges and fine details.

- Image Restoration: Techniques like deconvolution attempt to reverse blurring caused by atmospheric effects or optical aberrations.

- Target Detection and Recognition: Algorithms that analyze image features to identify specific objects or patterns. This might include edge detection, object classification, and pattern matching.

- Geometric Correction: Correcting image distortions due to lens effects or sensor misalignments.

The choice of techniques depends on the specific image and the desired outcome. Sophisticated algorithms often combine multiple techniques for optimal enhancement.

Q 7. What are the advantages and disadvantages of different types of lenses used in EO systems?

EO systems employ various lens types, each with its strengths and weaknesses:

- Refractive Lenses: These lenses use different refractive indices of glass to bend light. They are relatively inexpensive but can suffer from chromatic aberration (color fringes) and other optical distortions. They are common in many consumer and some professional systems.

- Diffractive Lenses: These lenses use diffraction gratings to focus light. They can have smaller sizes and lighter weight compared to refractive lenses. However, their performance may be sensitive to wavelength.

- Achromatic Lenses: These lenses are designed to minimize chromatic aberration, resulting in sharper images across a broader spectrum of wavelengths. They are typically more expensive.

- Aspheric Lenses: These lenses have non-spherical surfaces, allowing for better control of aberrations and improved image quality, often used in high-performance systems.

- Zoom Lenses: Allow for variable focal length, providing flexibility in field of view. They are commonly employed in surveillance and observation applications.

The selection of a lens type is a trade-off between cost, performance requirements, size, weight and the application’s specific needs. High-performance systems often utilize complex lens assemblies incorporating multiple lens elements to optimize image quality.

Q 8. Describe the different types of noise present in EO imaging systems.

Noise in EO imaging systems degrades image quality, reducing the ability to discern fine details or detect faint targets. Several types exist, each with different characteristics and origins.

- Shot Noise (Photon Noise): This fundamental noise arises from the discrete nature of light; the number of photons detected fluctuates randomly. Think of it like flipping a coin many times – you expect roughly half heads, but there’s always random variation. It’s more prominent in low-light conditions.

- Read Noise: Generated within the sensor’s electronics during the readout process. It’s analogous to static on an old radio; it’s a consistent level of noise regardless of light intensity.

- Dark Current Noise: Even without light, sensors produce a small current due to thermally generated electrons. This is like a small, constant leak in a system, increasing with temperature.

- Fixed Pattern Noise (FPN): This systematic noise is caused by variations in the response of individual pixels. Imagine a slightly uneven painting; some areas are brighter or darker than others. It’s relatively constant and can be corrected through calibration.

- Temporal Noise: Fluctuations that change over time, often linked to environmental factors such as temperature variations or vibration affecting the sensor.

Minimizing noise is crucial for optimal performance. Techniques like cooling the sensor (reducing dark current), using high-quality electronics (lower read noise), and implementing advanced noise reduction algorithms during post-processing are employed.

Q 9. How do you calibrate and test EO imaging systems?

Calibration and testing of EO imaging systems ensure accurate and reliable performance. This involves a multi-step process:

- Radiometric Calibration: This establishes a linear relationship between the detected signal and the actual radiance of the scene. It typically involves using calibrated light sources with known intensity to create a lookup table that corrects for sensor non-linearities.

- Geometric Calibration: This corrects for distortions in the image due to lens imperfections or sensor misalignment. Techniques like using a calibration target with known geometric features (e.g., a checkerboard pattern) are used to model and compensate for these distortions.

- Responsivity Calibration: Determines the sensor’s sensitivity to light at different wavelengths. This is crucial for accurate spectral measurements.

- Environmental Testing: EO systems must operate reliably under various conditions (temperature, humidity, vibration). Environmental chambers simulate these conditions to assess performance robustness.

Testing involves evaluating key performance metrics like spatial resolution, signal-to-noise ratio (SNR), modulation transfer function (MTF), and distortion. These tests can be performed using standardized procedures and equipment, ensuring consistent results and enabling objective comparisons between different systems.

For example, MTF is often assessed using a slanted edge target and evaluating the sharpness of the resultant edge in the image; this indirectly measures the system’s ability to resolve fine details.

Q 10. Explain the concept of spatial resolution and its relationship to sensor pixel size.

Spatial resolution refers to the ability of an EO imaging system to distinguish fine details in an image. It’s directly related to the sensor’s pixel size. Smaller pixels generally lead to higher spatial resolution.

Imagine looking at a painting with a magnifying glass. The smaller the pixels (like tiny paint dots), the more detail you can see. The spatial resolution is often expressed in line pairs per millimeter (lp/mm) or pixels per inch (PPI).

The relationship isn’t entirely straightforward. While smaller pixels offer theoretically higher resolution, other factors limit practical performance. These include lens diffraction (light bending) and the sensor’s overall architecture. A system with smaller pixels but poor optics might not show much improvement in image sharpness.

Consider a satellite imagery application. High spatial resolution is critical for tasks such as identifying individual vehicles or buildings. In contrast, a low-resolution system might only be capable of distinguishing larger features like roads or forests.

Q 11. What are the key considerations in designing an EO system for a specific application?

Designing an EO system for a specific application requires careful consideration of numerous factors:

- Application Requirements: What are the specific needs? Range, resolution, field of view, target characteristics, environmental conditions, etc.

- Spectral Range: Does the application require visible light only, infrared, or other spectral bands? This dictates the choice of sensor, optics, and filters.

- Optics: Lens selection critically impacts resolution, field of view, and distortion. Considerations include focal length, aperture, and optical quality.

- Sensor: Sensor type (CCD, CMOS), pixel size, dynamic range, and sensitivity are key choices.

- Signal Processing: Algorithms for noise reduction, image enhancement, and target detection are essential. Real-time processing requirements influence hardware choices.

- Size, Weight, and Power (SWaP): Especially critical for airborne or spaceborne systems. Miniaturization technologies and efficient power management are key.

- Cost: Balance performance requirements with budgetary constraints. Trade-offs between different components may be necessary.

For example, designing an EO system for surveillance might prioritize a wide field of view and good low-light performance, while a system for medical imaging might emphasize high resolution and spectral sensitivity.

Q 12. Describe different types of optical filters and their applications in EO imaging.

Optical filters selectively transmit or block specific wavelengths of light, enhancing image quality and tailoring the system for a given application. Different filter types exist:

- Bandpass Filters: Transmit a specific range of wavelengths while blocking others. Useful for isolating specific spectral features of interest (e.g., isolating a particular fluorescence signal in a medical application).

- Longpass Filters: Transmit light above a certain wavelength, blocking shorter wavelengths (e.g., blocking visible light to allow only near-infrared (NIR) to pass).

- Shortpass Filters: Transmit light below a certain wavelength and block longer wavelengths (e.g., blocking infrared to allow only visible light).

- Neutral Density (ND) Filters: Reduce the intensity of light across the entire spectrum without significantly altering spectral content. Useful for controlling exposure in bright conditions.

- Polarizing Filters: Reduce glare and enhance contrast by selectively transmitting light of a specific polarization. Common in photography and remote sensing applications.

For instance, in thermal imaging, bandpass filters are used to select specific infrared bands relevant to temperature measurement. In astronomy, narrow-band filters isolate emission lines from specific celestial objects, enhancing their visibility.

Q 13. Explain the role of thermal management in EO system design.

Thermal management is crucial in EO system design, especially for systems operating in harsh environments or with high-power components. Heat generation from the sensor, electronics, and other components can degrade performance, reduce lifespan, and even cause failure. Effective thermal management ensures the system operates within its specified temperature range.

Strategies include:

- Passive Cooling: Utilizing conductive, convective, or radiative heat transfer to dissipate heat to the surroundings. This might involve heat sinks, fins, or thermally conductive materials.

- Active Cooling: Employing active cooling methods such as thermoelectric coolers (TECs) or liquid cooling systems to actively remove heat. These are often necessary for high-power systems or those operating in hot environments.

- Thermal Insulation: Minimizing heat transfer into or out of the system using insulating materials. This helps maintain a stable operating temperature.

Failure to properly manage heat can lead to sensor noise increase, reduced sensitivity, and even catastrophic component failure. Therefore, thermal modelling and testing are critical steps in EO system design and verification.

Q 14. How does target detection and recognition relate to EO imaging system performance?

Target detection and recognition (TDR) are crucial applications of EO imaging, where the system’s performance directly impacts success. Detection involves simply identifying the presence of a target, while recognition involves classifying the target’s identity.

Several factors influence TDR performance:

- Spatial Resolution: Higher resolution allows for finer detail, improving the ability to distinguish targets from clutter.

- Spectral Range: Different spectral bands offer different advantages for TDR. Infrared imaging excels in low-light or camouflage detection.

- Signal-to-Noise Ratio (SNR): High SNR is essential for reliable target detection, especially in challenging environments with low light or high background noise.

- Image Processing Algorithms: Sophisticated algorithms improve TDR by enhancing image contrast, filtering out noise, and employing pattern recognition techniques.

- Environmental Conditions: Factors such as atmospheric effects, weather, and background clutter significantly influence TDR performance.

For example, in military applications, high-resolution EO systems with advanced image processing are used to detect and identify enemy vehicles or personnel. In autonomous driving, EO systems are essential for detecting pedestrians and other obstacles to ensure safety.

Q 15. Discuss the challenges in integrating different EO sensors into a single system.

Integrating different EO sensors into a single system presents several challenges. The core difficulty lies in harmonizing disparate data streams with varying spectral ranges, resolutions, frame rates, and sensitivities. Imagine trying to combine a high-resolution visible light camera with a thermal infrared camera – they’ll likely have different fields of view, requiring sophisticated image registration techniques to align them. Furthermore, each sensor may require unique interfaces and power supplies, adding complexity to the system design.

- Data Fusion: Combining data from different sensors effectively requires algorithms that can fuse information from multiple sources, taking into account variations in noise levels and signal-to-noise ratios. This is often a computationally intensive task.

- Calibration and Alignment: Precise calibration is crucial to ensure accurate data fusion. Misalignments between sensors can lead to significant errors in spatial registration. This necessitates careful calibration procedures and potentially the use of optical and mechanical alignment mechanisms.

- Power Consumption: Multi-sensor systems consume more power than single-sensor systems, creating challenges for battery-powered or resource-constrained applications. Efficient power management strategies are therefore vital.

- Data Handling: The sheer volume of data generated by multiple sensors can overwhelm data storage and processing capabilities. Effective data compression and efficient data handling strategies are needed.

For example, a multi-sensor system for autonomous driving might integrate a high-resolution color camera for object detection, a lidar sensor for range finding, and a thermal camera for night vision. Careful system integration is crucial to ensure the reliability and performance of the entire system.

Career Expert Tips:

- Ace those interviews! Prepare effectively by reviewing the Top 50 Most Common Interview Questions on ResumeGemini.

- Navigate your job search with confidence! Explore a wide range of Career Tips on ResumeGemini. Learn about common challenges and recommendations to overcome them.

- Craft the perfect resume! Master the Art of Resume Writing with ResumeGemini’s guide. Showcase your unique qualifications and achievements effectively.

- Don’t miss out on holiday savings! Build your dream resume with ResumeGemini’s ATS optimized templates.

Q 16. What are the different types of image distortions and how are they corrected?

Image distortions are imperfections that alter the geometric fidelity of an image. These can stem from lens aberrations, sensor imperfections, or even environmental factors. Common distortions include:

- Radial Distortion: Straight lines appear curved, often bowing outwards (barrel distortion) or inwards (pincushion distortion). This arises from imperfections in lens design or manufacturing.

- Tangential Distortion: Straight lines appear skewed, with one edge shifted more than the other. This is often caused by misalignments within the lens system.

- Geometric Distortion: A more general term that encompasses various spatial inaccuracies, like perspective distortion (caused by the angle of view) or shear distortion (non-uniform scaling).

Correction methods typically involve:

- Software-Based Correction: This involves using image processing algorithms to map distorted pixels to their correct locations based on a distortion model. This model can be derived through calibration procedures using known patterns.

- Hardware-Based Correction: This uses specialized optics to minimize distortion at the source. This is often more expensive but provides a higher degree of correction.

Consider a simple example: A wide-angle lens is prone to barrel distortion. Software correction involves detecting straight lines in the image and warping them to be straight again, based on a calculated distortion model. The algorithm essentially remaps pixels based on the pre-determined model. This is often computationally intensive but effectively rectifies the distortion.

Q 17. How does the choice of optical material affect system performance?

The choice of optical material significantly impacts EO system performance. Key factors include:

- Transmission: The material’s ability to transmit light through its volume within the desired spectral range. Different materials have different transmission characteristics. For example, certain glasses may transmit well in the visible spectrum but poorly in the infrared.

- Refractive Index: This determines how much light bends when passing through the material. The refractive index should be stable across temperature and wavelength. Variations can lead to aberrations and reduced image quality.

- Dispersion: This relates to the change in refractive index with wavelength. High dispersion causes chromatic aberration (color fringing), which can degrade image sharpness.

- Durability and Environmental Stability: Materials should withstand the environmental conditions the system will encounter, including temperature extremes and humidity. Certain materials may be susceptible to degradation over time or exposure to ultraviolet light.

- Cost: Some materials with superior optical properties, like certain crystals, can be significantly more expensive than standard glasses.

For instance, Zinc Selenide (ZnSe) is often used in infrared optics due to its high transmission in the infrared spectral range. However, it is more brittle and expensive than standard glasses used in visible light systems. The selection of optical materials involves a trade-off between optical performance, cost, and durability.

Q 18. Explain the principles of radiometry and photometry in the context of EO imaging.

Radiometry and photometry are both concerned with the measurement of light, but they differ in their approach. Radiometry deals with the *physical* measurement of light power, regardless of its perceived brightness to the human eye. Photometry, on the other hand, considers the *visual* response of the human eye to light, weighting different wavelengths based on the eye’s sensitivity.

- Radiometry: Quantifies radiant energy using units like watts (W), which measure power, or joules (J), which measure energy. Radiometric quantities include irradiance (power per unit area), radiance (power per unit area per unit solid angle), and radiant intensity (power per unit solid angle). In EO imaging, radiometric measurements are crucial for characterizing sensor sensitivity and determining the signal-to-noise ratio.

- Photometry: Measures light as perceived by the human eye, using units like lumens (lm) for luminous flux, candelas (cd) for luminous intensity, and lux (lx) for illuminance. Photometric measurements are relevant when the goal is to evaluate the image’s appearance to human observers, such as in surveillance systems.

In an EO system, radiometric measurements are used to quantify the signal strength from the target, while photometric measurements are used to estimate the perceived brightness of the image. A thermal camera, for example, uses radiometry to measure the infrared radiation emitted by objects, while a visible light camera might use photometry to calculate the luminance levels in the image for display to a human operator.

Q 19. What are some common image compression techniques used in EO systems?

Image compression techniques in EO systems are crucial for reducing storage requirements and transmission bandwidth. Common techniques include:

- JPEG (Joint Photographic Experts Group): A lossy compression technique widely used for still images. It exploits the redundancy in image data by discarding less significant information. This results in smaller file sizes but with some loss of image quality.

- JPEG 2000: An improved version of JPEG, offering better compression ratios and better preservation of image quality, especially at low bit rates. It’s also better suited to progressive transmission where a low-resolution version is displayed first followed by higher resolution.

- Wavelet Compression: This technique decomposes the image into different frequency components using wavelet transforms. This allows for more efficient compression by focusing on the most significant components. It can be both lossy and lossless.

- MPEG (Moving Picture Experts Group): Used for compressing video data, exploiting temporal redundancy between successive frames. Different MPEG standards offer various compression levels and quality trade-offs.

- H.264/AVC (Advanced Video Coding) and H.265/HEVC (High-Efficiency Video Coding): These are more recent video compression standards providing superior compression efficiency compared to older methods. They are used extensively in modern video surveillance systems.

The choice of compression method depends on factors like image characteristics, desired compression ratio, and acceptable loss of quality. For example, JPEG might suffice for static images where slight quality degradation is acceptable, while H.264 would be more appropriate for streaming video footage where reducing bandwidth is a priority.

Q 20. Describe various image registration techniques.

Image registration is the process of aligning multiple images to a common coordinate system. This is crucial in multi-sensor systems or when images of the same scene are acquired from different viewpoints or times. Techniques include:

- Feature-Based Registration: This involves identifying corresponding features (e.g., corners, edges) in different images and using these features to estimate the transformation (translation, rotation, scaling) needed to align the images. Algorithms like Scale-Invariant Feature Transform (SIFT) and Speeded-Up Robust Features (SURF) are commonly used.

- Intensity-Based Registration: This method directly compares the pixel intensities of different images to find the optimal alignment. Techniques like cross-correlation and mutual information are used to measure the similarity between images and optimize the alignment.

- Model-Based Registration: This relies on a pre-defined model of the scene or object. The transformation parameters are estimated by fitting the model to the images. This is useful when a priori knowledge about the scene is available.

For example, in satellite imagery, images taken from different orbits need to be registered to create a mosaic of a larger area. This often involves using feature-based registration, identifying common features like roads or buildings in the images. In medical imaging, aligning images from different modalities (e.g., MRI and CT scans) is essential for accurate diagnosis, which might utilize intensity-based registration to align images based on their pixel intensity values.

Q 21. Discuss the challenges of real-time image processing in EO systems.

Real-time image processing in EO systems presents significant challenges. The key issue is the need to process large amounts of data with minimal latency to ensure timely responses and effective operation. These challenges include:

- Computational Complexity: Many image processing algorithms are computationally intensive, requiring specialized hardware like GPUs (Graphics Processing Units) or FPGAs (Field-Programmable Gate Arrays) to achieve real-time performance. Simple operations like filtering, edge detection, or object recognition can still be challenging at high frame rates.

- Data Rate: High-resolution EO sensors generate enormous amounts of data. Processing and transmitting this data in real time requires high-bandwidth communication links and efficient data handling strategies. Data reduction techniques like compression are essential.

- Power Consumption: Real-time processing often consumes considerable power, especially when using high-performance hardware. This is a major limitation for battery-powered systems.

- Algorithm Optimization: For real-time operation, algorithms need to be optimized for speed and efficiency. This often involves trade-offs between accuracy and processing speed.

For example, in a guided missile system, real-time image processing is essential for accurate target tracking and guidance. The system must rapidly process images from the seeker head, identify the target, and adjust the missile’s trajectory accordingly. This requires sophisticated algorithms, specialized hardware, and careful optimization to ensure the system meets its performance requirements within strict latency constraints.

Q 22. Explain your understanding of different types of image segmentation techniques.

Image segmentation is the process of partitioning a digital image into multiple segments (sets of pixels), to simplify and/or change the representation of an image into something that is more meaningful and easier to analyze. Think of it like separating the foreground from the background in a photo. There are many techniques, broadly categorized into several approaches:

- Thresholding: This is the simplest method. A threshold value is chosen, and pixels above this value are assigned to one segment, while those below are assigned to another. This works well for images with high contrast between objects and background. For example, separating a bright object against a dark background.

- Edge-based segmentation: This method identifies boundaries (edges) between objects by detecting changes in pixel intensity. Algorithms like the Sobel operator or Canny edge detector are used. Imagine finding the outlines of objects in a picture.

- Region-based segmentation: This approach groups pixels with similar characteristics (color, texture, intensity) into regions. Examples include region growing, where pixels are recursively added to a region based on similarity, and watershed segmentation, which treats the image as a topographical map, separating regions based on ‘watersheds’. This is useful for identifying objects of similar properties, like identifying all the green trees in a satellite image.

- Clustering-based segmentation: Techniques like k-means clustering group pixels into clusters based on their feature vectors (e.g., color, texture). This allows for segmentation even when object boundaries aren’t clearly defined. Think of grouping similar colored pixels together into different segments.

- Deep learning-based segmentation: Convolutional neural networks (CNNs) are used to learn complex features from the image data and perform segmentation. These methods are powerful but require large training datasets. This is the state-of-the-art for many applications requiring high accuracy.

The choice of segmentation technique depends heavily on the specific application, image characteristics (noise, resolution, contrast), and computational constraints.

Q 23. How would you approach designing an EO system for autonomous navigation?

Designing an EO system for autonomous navigation requires careful consideration of several factors. The primary goal is to provide the system with accurate and reliable information about its surroundings in real-time. My approach would involve these steps:

- Define Requirements: Clearly specify the navigation task (e.g., obstacle avoidance, path planning), operating environment (indoor/outdoor, lighting conditions), and performance metrics (accuracy, range, update rate).

- Sensor Selection: Choose appropriate EO sensors based on the requirements. For example, a stereo vision system might be suitable for accurate depth perception, while a lidar system could provide range information. The choice might also include cameras with different spectral sensitivities to handle different lighting conditions. Thermal imaging could add further capabilities by detecting temperature differences, which are helpful in varied environments.

- System Design: Integrate the selected sensors, processing units (for image processing and sensor fusion), and actuators (for navigation control). Consider factors like power consumption, weight, size, and robustness.

- Image Processing Algorithms: Develop robust algorithms for image acquisition, preprocessing (noise reduction, geometric correction), feature extraction (edge detection, object recognition), and object tracking. Algorithms must be computationally efficient to operate in real-time.

- Sensor Fusion: Integrate data from multiple sensors to improve the overall accuracy and reliability of the navigation system. This involves combining information from different sources to form a more complete picture of the environment. For example, combining data from a camera and a lidar sensor to create a highly accurate 3D model.

- Testing and Validation: Rigorously test the system in various conditions to ensure its performance meets the specified requirements. This includes both simulated and real-world testing.

For instance, a self-driving car might use a combination of visible light cameras for object recognition, lidar for precise distance measurements, and potentially thermal imaging for night-time operation or detecting pedestrians hidden behind other objects.

Q 24. Describe your experience with different image analysis software.

I have extensive experience with various image analysis software packages. My proficiency includes:

- MATLAB: I use MATLAB extensively for image processing, algorithm development, and prototyping. Its Image Processing Toolbox provides a comprehensive set of functions for tasks such as filtering, segmentation, and feature extraction. I’ve used it to develop custom algorithms for object detection and tracking in EO imagery.

- ENVI: I’m familiar with ENVI for remote sensing applications, particularly for analyzing hyperspectral imagery. This software is powerful for geospatial analysis and data visualization.

- Python with OpenCV: I utilize Python with the OpenCV library for image processing tasks, particularly real-time applications due to its efficiency. OpenCV offers a wide range of functions for image manipulation and computer vision tasks. I’ve leveraged it for building prototype systems for autonomous navigation.

- Commercial Software (e.g., Pix4D): I’ve worked with commercial software packages for photogrammetry, used extensively in 3D modeling and mapping from EO imagery, particularly aerial imagery captured by drones or satellites.

My experience spans using these tools for both research and development projects, allowing me to select the most appropriate software for a given task, balancing functionality, efficiency and cost.

Q 25. Explain how you would troubleshoot a problem with low image quality in an EO system.

Troubleshooting low image quality in an EO system is a systematic process. I would approach it using a structured methodology:

- Identify the Symptoms: Precisely define the problem. Is it blurriness, noise, poor contrast, distortion, or something else? Document the specific conditions under which the problem occurs (lighting, temperature, etc.).

- Check the Sensor: Inspect the sensor for physical damage (dust, scratches, etc.). Verify that the sensor is properly calibrated and functioning within its specifications. Check for any degradation in sensor performance (e.g., dark current increase in IR sensors).

- Examine the Optics: Assess the quality of the lenses and optical components. Check for misalignment, damage, or contamination. Cleaning or replacing optical components may be necessary.

- Evaluate the Acquisition Parameters: Review the image acquisition settings such as exposure time, gain, and frame rate. Incorrect settings can significantly affect image quality. Optimize these parameters to improve the image.

- Analyze the Processing Chain: Review the image processing pipeline. Problems can arise from inadequate noise reduction, incorrect geometric corrections, or faulty image compression.

- Environmental Factors: Consider environmental influences like atmospheric conditions (haze, fog), temperature variations, or vibrations that may degrade image quality.

- Data Analysis: Perform a quantitative analysis of the images using metrics such as signal-to-noise ratio (SNR), modulation transfer function (MTF), and contrast ratio. This provides objective data to guide troubleshooting.

For example, if the image is blurry, the problem might be due to defocus, motion blur, or atmospheric turbulence. Each case requires a different solution, ranging from simple adjustments to lens settings to more complex adjustments in the optical system or image processing algorithms.

Q 26. What are the limitations of EO imaging, and how can these be overcome?

EO imaging, while powerful, has several limitations:

- Weather Dependency: Adverse weather conditions like clouds, fog, rain, and snow significantly affect image quality and can completely obscure the view.

- Limited Range: The effective range of EO systems depends on factors like light levels, atmospheric conditions, and target reflectivity. In low light conditions or at long ranges, image quality degrades significantly.

- Sensitivity to Illumination: EO systems rely on ambient or external light sources. Poor lighting conditions greatly reduce image quality or render the system ineffective.

- Camouflage and Concealment: Targets can be effectively concealed using camouflage or other techniques, making detection difficult.

- Atmospheric Effects: Atmospheric scattering, absorption, and turbulence can degrade image quality and introduce distortion.

These limitations can be overcome (or mitigated) through various techniques:

- Multiple Spectral Bands: Using multiple spectral bands (e.g., visible, near-infrared) allows for better object discrimination and enhanced performance in various atmospheric conditions. Thermal imaging can offer further improvements in low-light conditions.

- Advanced Image Processing: Sophisticated image processing techniques, such as super-resolution, deblurring, and noise reduction, can improve image quality.

- Sensor Fusion: Combining EO data with other sensor modalities (e.g., lidar, radar) can provide a more comprehensive understanding of the environment and overcome the limitations of EO imaging alone.

- Adaptive Optics: Techniques like adaptive optics can compensate for atmospheric distortions and improve image resolution at long ranges.

For instance, the use of thermal imaging in conjunction with visible light cameras allows for operation in low-light conditions and improves target detection even when obscured by camouflage.

Q 27. Discuss the future trends and advancements in EO imaging technology.

The future of EO imaging is bright, driven by advancements in several key areas:

- Higher Resolution Sensors: The development of high-resolution sensors with improved sensitivity will lead to enhanced image clarity and detailed information capture.

- Advanced Spectral Imaging: Hyperspectral and multispectral imaging will become increasingly prevalent, enabling detailed spectral analysis and improved object identification.

- Artificial Intelligence and Machine Learning: AI and ML will play a crucial role in automated image analysis, object recognition, and scene understanding. This leads to autonomous systems that can interpret imagery without human intervention.

- Quantum Imaging: Emerging quantum imaging technologies promise significant improvements in resolution, sensitivity, and imaging speed.

- 3D and 4D Imaging: Advances in 3D and 4D imaging will enable more comprehensive scene representation and real-time tracking of dynamic objects.

- Miniaturization and Integration: Smaller, lighter, and more energy-efficient EO systems will be developed, enabling their integration into various platforms like drones, wearable devices, and mobile robots.

These advancements will lead to applications in diverse fields, including autonomous vehicles, security and surveillance, medical imaging, environmental monitoring, and scientific research.

Q 28. Explain your experience with specific EO/IR sensor technologies (e.g., InGaAs, MCT).

I have significant experience working with various EO/IR sensor technologies, including InGaAs and MCT detectors.

- InGaAs (Indium Gallium Arsenide): InGaAs detectors are widely used in the near-infrared (NIR) region of the electromagnetic spectrum. I’ve used these detectors in applications requiring high sensitivity and speed, such as short-wave infrared (SWIR) imaging. Their relatively high operating temperature and lower cost compared to MCT make them attractive for many applications. I have worked on projects involving InGaAs cameras for hyperspectral imaging and remote sensing, where their sensitivity to specific wavelengths within the NIR spectrum is crucial.

- MCT (Mercury Cadmium Telluride): MCT detectors are known for their high sensitivity in the mid-wave infrared (MWIR) and long-wave infrared (LWIR) regions. These detectors are essential for thermal imaging applications, allowing for detection of heat signatures in various conditions. I’ve worked with MCT-based cameras for thermal imaging systems in security and defense applications, where the ability to detect objects based on their thermal signature is of critical importance, often in complete darkness.

My experience includes selecting the appropriate detector type based on the specific application requirements, understanding their performance characteristics (noise, sensitivity, spectral response), and integrating them into complete EO systems. The choice between InGaAs and MCT depends significantly on the wavelength range of interest and the required sensitivity; for example, thermal imaging almost exclusively uses MCT-based detectors while SWIR applications might favor InGaAs.

Key Topics to Learn for ElectroOptical (EO) Imaging Interview

Ace your ElectroOptical (EO) Imaging interview by mastering these key areas. We’ve structured this to help you build a strong foundation of both theory and practical application.

- Optical System Design: Understanding lens design principles, aberrations, and image formation. Consider practical applications like designing imaging systems for specific wavelengths or optimizing for resolution and sensitivity.

- Detector Technologies: Familiarize yourself with various detector types (CCD, CMOS, etc.), their characteristics (quantum efficiency, noise, dynamic range), and their suitability for different applications. Think about how detector choice impacts image quality and system performance.

- Image Processing and Enhancement: Learn about techniques for noise reduction, image restoration, and feature extraction. Consider real-world applications such as improving low-light images or identifying objects within complex scenes.

- Spectral Imaging: Explore the principles of multispectral and hyperspectral imaging, their advantages, and their application in fields like remote sensing and medical imaging. Be prepared to discuss the challenges and trade-offs involved.

- Electro-Optical System Integration: Understand the interplay between optical, electronic, and mechanical components in a complete EO imaging system. Think about practical considerations like thermal management, power consumption, and system calibration.

- Signal-to-Noise Ratio (SNR) and its Optimization: A cornerstone of EO imaging. Understand how SNR impacts image quality and the methods used to maximize it in different system designs.

- Calibration and Testing Procedures: Be prepared to discuss the methods used to calibrate and test EO imaging systems to ensure accuracy and reliability. This often involves practical procedures and troubleshooting skills.

Next Steps

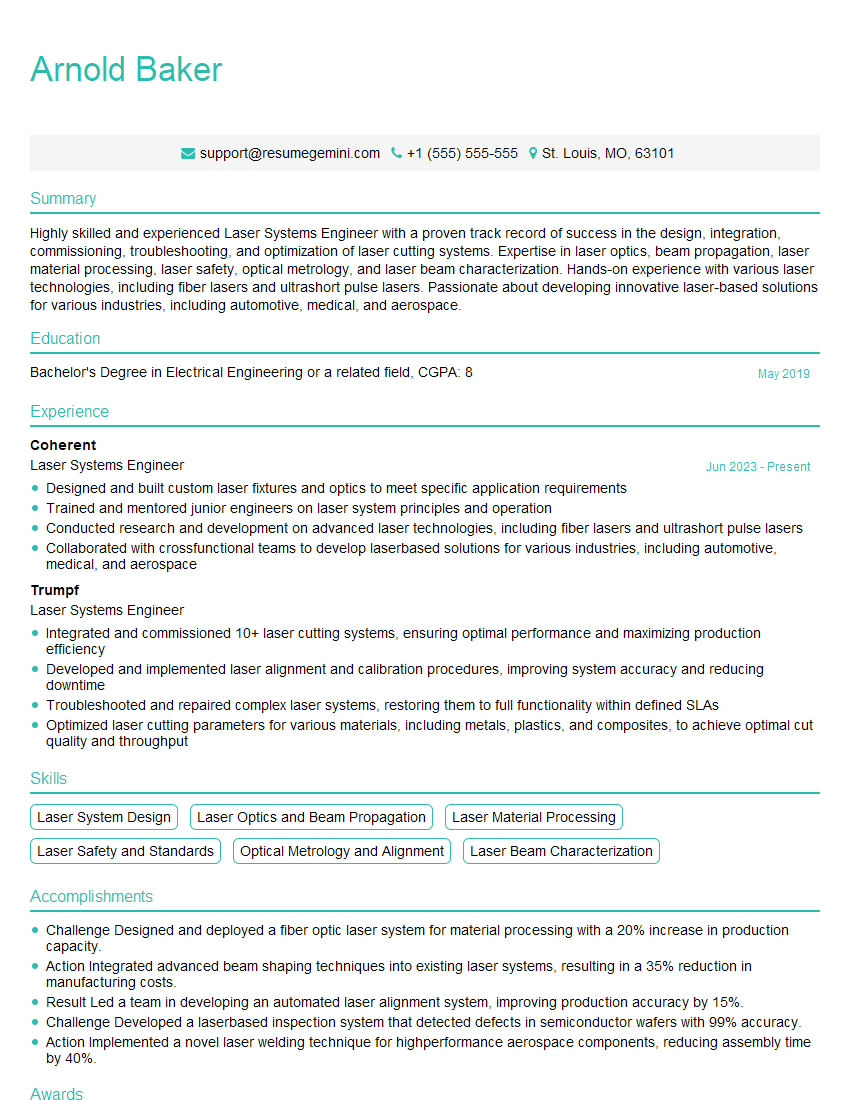

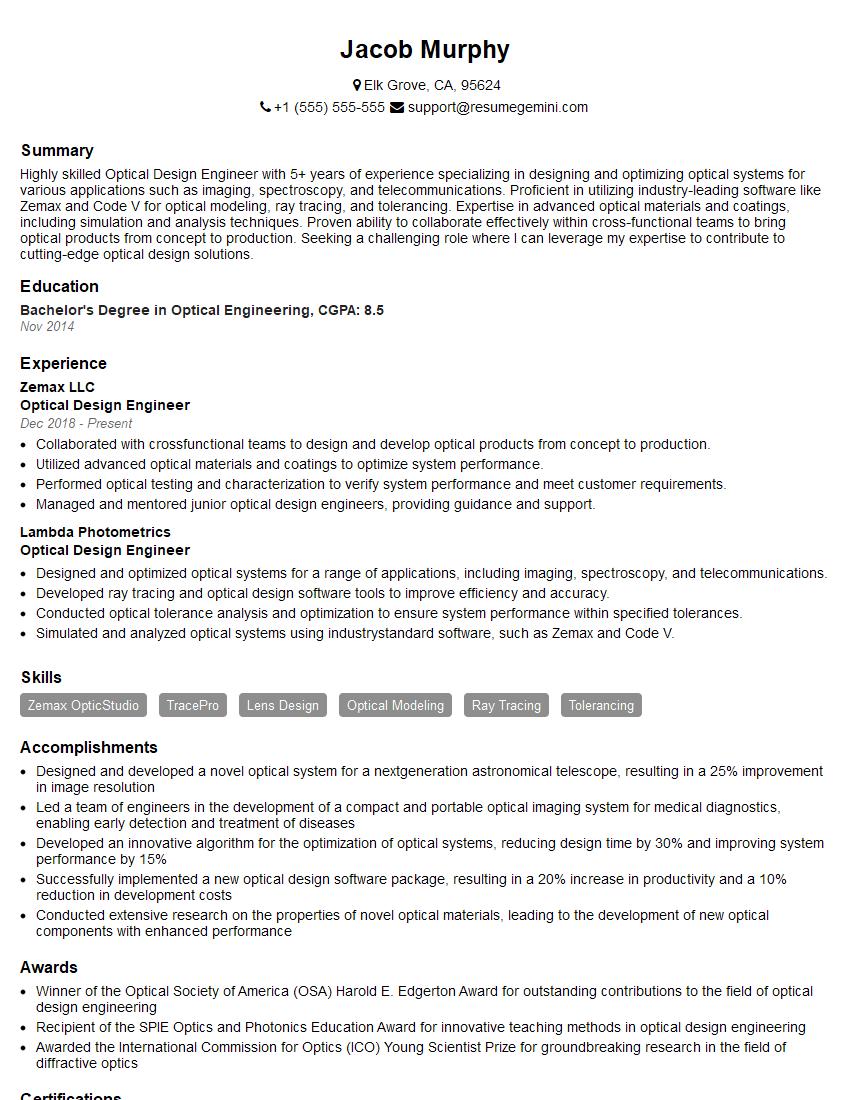

Mastering ElectroOptical (EO) Imaging opens doors to exciting and rewarding careers in cutting-edge technology. To maximize your job prospects, a well-crafted resume is crucial. An ATS-friendly resume ensures your application gets noticed by recruiters and hiring managers. We strongly recommend using ResumeGemini to build a professional and effective resume that highlights your skills and experience. ResumeGemini provides examples of resumes tailored specifically to ElectroOptical (EO) Imaging, ensuring your application stands out from the competition. Take the next step towards your dream career today!

Explore more articles

Users Rating of Our Blogs

Share Your Experience

We value your feedback! Please rate our content and share your thoughts (optional).

What Readers Say About Our Blog

Very informative content, great job.

good