Every successful interview starts with knowing what to expect. In this blog, we’ll take you through the top Image Calibration interview questions, breaking them down with expert tips to help you deliver impactful answers. Step into your next interview fully prepared and ready to succeed.

Questions Asked in Image Calibration Interview

Q 1. Explain the difference between intrinsic and extrinsic camera calibration.

Intrinsic and extrinsic camera calibration parameters describe different aspects of a camera’s geometry and its position in the world. Think of it like describing a person’s physical attributes (intrinsic) and their location in a room (extrinsic).

Intrinsic parameters describe the internal characteristics of the camera itself, independent of its location or orientation. These include:

- Focal length (f): The distance between the lens and the image sensor. A longer focal length results in a narrower field of view.

- Principal point (cx, cy): The intersection of the optical axis with the image sensor. It usually lies close to the center of the image, but rarely exactly on it, which is why it is estimated during calibration.

- Radial and tangential distortion coefficients: These parameters model lens imperfections that cause straight lines to appear curved, so that the effect can be corrected.

- Pixel aspect ratio: The ratio of the width of a pixel to its height.

Extrinsic parameters define the camera’s pose (position and orientation) in the 3D world. They are represented by a rotation matrix (R) and a translation vector (t):

- Rotation matrix (R): Describes the camera’s orientation in space. Three Euler angles or a quaternion can also represent this rotation.

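- Translation vector (t): Together with R, maps world coordinates into the camera frame (X_cam = R · X_world + t). Strictly speaking, t is the world origin expressed in camera coordinates; the camera’s position in the world coordinate system is C = -Rᵀ t.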

In essence, intrinsic parameters tell us how the camera projects 3D points onto the 2D image plane, while extrinsic parameters tell us where the camera is looking from within the 3D world.
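To make the split between the two parameter sets concrete, here is a minimal NumPy sketch of the pinhole projection without distortion. The camera matrix values and the helper name `project_points` are illustrative assumptions, not values from any real camera.

```python
import numpy as np

def project_points(points_world, K, R, t):
    """Project Nx3 world points to Nx2 pixel coordinates with a pinhole model."""
    # Extrinsics: world frame -> camera frame.
    points_cam = points_world @ R.T + t
    # Intrinsics: camera frame -> homogeneous pixel coordinates.
    pixels_h = points_cam @ K.T
    # Perspective divide by depth.
    return pixels_h[:, :2] / pixels_h[:, 2:3]

# Hypothetical intrinsics: 800 px focal length, principal point at (320, 240).
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
R = np.eye(3)                   # camera axes aligned with the world axes
t = np.array([0.0, 0.0, 5.0])   # world origin sits 5 units in front of the camera

points_world = np.array([[0.0, 0.0, 0.0],
                         [1.0, 0.0, 0.0]])
pixels = project_points(points_world, K, R, t)
camera_center = -R.T @ t        # camera position in world coordinates
```

Changing `K` alters how the same scene lands on the sensor, while changing `R` and `t` moves the camera without touching its internal geometry.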

Q 2. Describe the process of camera calibration using a checkerboard pattern.

Calibrating a camera using a checkerboard pattern is a common and robust method. The process involves taking multiple images of a checkerboard from different viewpoints and orientations. The known geometry of the checkerboard (square size) allows us to establish correspondences between 3D points in the world (checkerboard corners) and their projections in the 2D images.

Here’s a step-by-step outline:

- Prepare the checkerboard: Print or create a high-contrast checkerboard pattern with precisely known square dimensions. The more squares, the better the accuracy.

- Capture images: Take multiple images (at least 10-20) of the checkerboard from various viewpoints and distances, ensuring the checkerboard is fully visible and not obscured. Vary the orientation and distance significantly for better results.

- Corner detection: Use a computer vision algorithm (like OpenCV’s cv2.findChessboardCorners() function) to automatically detect the corners of the checkerboard in each image. This function returns the coordinates of the detected corners in the image plane.

- Calibration: Utilize a calibration algorithm (like OpenCV’s cv2.calibrateCamera()) that uses the detected corner coordinates and the known checkerboard dimensions to estimate the intrinsic and extrinsic parameters. This algorithm minimizes the reprojection error, which is the difference between the projected 3D points and the detected 2D points.

- Verification: Evaluate the calibration results by checking the reprojection error. A low reprojection error indicates a good calibration.

    # Example Python code snippet (using OpenCV)
    import cv2

    # ... (image loading and corner detection) ...

    ret, mtx, dist, rvecs, tvecs = cv2.calibrateCamera(
        objpoints, imgpoints, gray.shape[::-1], None, None
    )

This code snippet shows the core of the calibration process. objpoints are the 3D coordinates of the checkerboard corners (one array per image), imgpoints are the detected 2D coordinates, and gray.shape[::-1] is the image size as (width, height). The function returns the RMS reprojection error (ret), the camera matrix (mtx), distortion coefficients (dist), and one rotation vector (rvecs) and translation vector (tvecs) per image.
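The 3D corner coordinates are usually generated rather than measured: the board defines its own coordinate system with every corner on the Z = 0 plane. The sketch below builds that grid with NumPy. The board size (9 x 6 inner corners), the 25 mm square size, and the helper name `make_object_points` are assumptions chosen for illustration.

```python
import numpy as np

def make_object_points(cols, rows, square_size):
    """Return (rows*cols, 3) float32 corner coordinates on the Z = 0 board plane."""
    grid = np.zeros((rows * cols, 3), np.float32)
    # x varies fastest, so corners are listed row by row across the board.
    grid[:, :2] = np.mgrid[0:cols, 0:rows].T.reshape(-1, 2)
    return grid * square_size

# Hypothetical board: 9 x 6 inner corners, 25 mm squares.
objp = make_object_points(cols=9, rows=6, square_size=25.0)
```

The same array would typically be appended to the list of object points once for every image in which the corners were found, because the board geometry does not change between views.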

Q 3. What are the common methods for color calibration?

Color calibration aims to ensure consistent and accurate color reproduction across different devices and environments. Several methods exist:

- Using Color Charts/Targets: These charts contain patches of known color values. The camera captures an image of the chart; then, software compares the captured colors to the known values, creating a color profile for the camera. This is similar to printer calibration.

- White Balancing: This is a crucial step to neutralize color casts caused by varying light sources (e.g., incandescent, fluorescent, sunlight). Algorithms analyze the image to estimate the color temperature and adjust the color balance accordingly.

- Colorimetric Calibration: This method involves measuring the spectral response of the camera and using this information to create a color transformation matrix. It’s more complex but provides highly accurate results.

- Using Color Sensors/Spectrometers: These instruments precisely measure color values, often used in conjunction with other methods for higher accuracy.

The best method depends on the application’s requirements for accuracy and complexity. For simple applications, white balancing might suffice, while more demanding applications, such as medical imaging or colorimetric analysis, necessitate more sophisticated techniques.
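As a rough illustration of the chart-based approach, the sketch below fits a 3x3 color correction matrix by least squares. The patch values and the "ground truth" matrix are synthetic assumptions; a real workflow would use linear RGB values measured from a physical chart and its published reference values.

```python
import numpy as np

def fit_color_matrix(measured_rgb, reference_rgb):
    """Least-squares 3x3 matrix M such that measured_rgb @ M approximates reference_rgb."""
    M, *_ = np.linalg.lstsq(measured_rgb, reference_rgb, rcond=None)
    return M

# Hypothetical linear RGB values for four chart patches (one row per patch).
measured = np.array([[0.8, 0.1, 0.1],
                     [0.2, 0.7, 0.1],
                     [0.1, 0.2, 0.9],
                     [0.5, 0.5, 0.5]])
# Synthetic "known" chart values, generated from a made-up ground-truth matrix.
true_M = np.array([[1.2, -0.1, 0.0],
                   [-0.1, 1.1, -0.1],
                   [0.0, -0.1, 1.3]])
reference = measured @ true_M

M = fit_color_matrix(measured, reference)
corrected = measured @ M
```

With real data the fit is not exact, and the residual between `corrected` and `reference` becomes a useful measure of how well a single matrix describes the camera.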

Q 4. How do you handle lens distortion in image calibration?

Lens distortion is a common problem in camera calibration, causing straight lines to appear curved in images. It’s handled by estimating distortion parameters during the calibration process and then correcting for these distortions in subsequent image processing.

The most common approach involves modeling distortion using radial and tangential components (discussed in Q 6). The calibration algorithm estimates parameters for these components, which are then used to correct the distorted image. Libraries like OpenCV provide functions to undistort images using these estimated parameters.

    # Example Python code snippet (using OpenCV)
    dst = cv2.undistort(img, mtx, dist, None, newcameramtx)

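This line of code uses OpenCV’s undistort function to correct the distorted image img using the camera matrix mtx and distortion coefficients dist. newcameramtx is an optional new camera matrix for the output image (for example, one computed with cv2.getOptimalNewCameraMatrix()); if it is omitted, mtx is reused.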

Q 5. What are the different types of camera models used in calibration?

Several camera models are used in calibration, each with varying complexity and accuracy. The choice depends on the application and the level of accuracy required:

- Pinhole Camera Model: This is the simplest model, assuming that all light rays pass through a single point (the pinhole). It’s a good approximation for many cameras, but it doesn’t account for lens distortion.

- Generalized Camera Model: This model extends the pinhole model to include lens distortion parameters, making it more realistic and accurate for real-world cameras. The radial-tangential form of this model is often called the Brown-Conrady model.

- Fisheye Camera Model: This model is specifically designed for fisheye lenses, which have a very wide field of view and significant distortion.

- Omni-directional Camera Model: These models are used for cameras with a 360-degree field of view, requiring specialized calibration techniques.

The generalized camera model, which incorporates both intrinsic and extrinsic parameters and accounts for lens distortion, is the most widely used in practical applications because of its balance of accuracy and computational efficiency.

Q 6. Explain the concept of radial and tangential distortion.

Radial and tangential distortion are two types of lens aberrations that affect the accuracy of image projections. They cause straight lines to appear curved in the image.

Radial distortion is caused by the lens’s curvature and grows with distance from the image center, so points near the center are less distorted than points near the edges. It appears as either barrel distortion (straight lines bow outwards, away from the center) or pincushion distortion (straight lines bow inwards, toward the center). It’s often modeled using polynomial functions of the distance from the image center.

Tangential distortion is a less common type of distortion caused by imperfections in the lens alignment. It results in asymmetric distortions where lines are shifted in a non-radial pattern. It is less prominent than radial distortion but is still essential to model for high-accuracy calibration.

Both radial and tangential distortions are parameterized and corrected during the camera calibration process, significantly improving the accuracy of 3D reconstruction and other computer vision tasks.
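The polynomial model can be written down in a few lines. The sketch below applies two radial coefficients (k1, k2) and two tangential coefficients (p1, p2) to normalized image coordinates, following the commonly used Brown-Conrady form, and inverts the model by fixed-point iteration. The coefficient values are made up for illustration, and the simple inversion is only an assumption-level sketch that suits mild distortion.

```python
import numpy as np

def distort(xy, k1, k2, p1, p2):
    """Apply radial (k1, k2) and tangential (p1, p2) distortion to normalized points."""
    x, y = xy[:, 0], xy[:, 1]
    r2 = x * x + y * y
    radial = 1.0 + k1 * r2 + k2 * r2 * r2
    x_d = x * radial + 2.0 * p1 * x * y + p2 * (r2 + 2.0 * x * x)
    y_d = y * radial + p1 * (r2 + 2.0 * y * y) + 2.0 * p2 * x * y
    return np.stack([x_d, y_d], axis=1)

def undistort(xy_d, k1, k2, p1, p2, iterations=20):
    """Invert distort() by fixed-point iteration; adequate for mild distortion."""
    xy = xy_d.copy()
    for _ in range(iterations):
        # Shift the estimate by the remaining mismatch with the observation.
        xy = xy + (xy_d - distort(xy, k1, k2, p1, p2))
    return xy

# Hypothetical coefficients; a negative k1 gives barrel distortion.
coeffs = dict(k1=-0.2, k2=0.05, p1=0.001, p2=-0.0005)
points = np.array([[0.0, 0.0], [0.3, 0.2], [-0.4, 0.1]])
distorted = distort(points, **coeffs)
recovered = undistort(distorted, **coeffs)
```

With a negative k1 the off-center points move toward the center, which is the barrel case, while the point at the origin stays where it is.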

Q 7. How do you evaluate the accuracy of a camera calibration?

Evaluating the accuracy of camera calibration is crucial for ensuring reliable results. The most common method is to examine the reprojection error.

The reprojection error measures the difference between the projected 3D points (from the calibrated camera model) and their corresponding detected 2D points in the images. A low reprojection error (typically below one pixel) indicates a high-quality calibration. Calculating the mean and standard deviation of the reprojection error provides a more quantitative assessment.

Other evaluation metrics include:

- Checking the calibration parameters: Examining the estimated intrinsic and extrinsic parameters for plausibility. For example, a negative focal length would indicate an error.

- Visual inspection: Visually inspecting the undistorted images to identify any remaining distortions.

- Using a test pattern with known 3D geometry: Similar to the calibration process, capturing images of a known object after calibration can validate the accuracy of 3D reconstruction.

A combination of these methods provides a comprehensive assessment of camera calibration accuracy.
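The reprojection error itself is straightforward to compute once the parameters are known. The following sketch calculates the RMS error for one view with NumPy, ignoring lens distortion for brevity. The camera matrix, pose, and the simulated detection offset are all assumed values.

```python
import numpy as np

def rms_reprojection_error(points_world, observed_px, K, R, t):
    """RMS pixel distance between observed corners and the model's projections."""
    cam = points_world @ R.T + t
    proj_h = cam @ K.T
    projected = proj_h[:, :2] / proj_h[:, 2:3]
    residuals = np.linalg.norm(projected - observed_px, axis=1)
    return float(np.sqrt(np.mean(residuals ** 2)))

# Hypothetical calibration result for a single view.
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
R = np.eye(3)
t = np.array([0.0, 0.0, 2.0])
board = np.array([[0.0, 0.0, 0.0],
                  [0.1, 0.0, 0.0],
                  [0.0, 0.1, 0.0],
                  [0.1, 0.1, 0.0]])

# Simulated detections: the ideal projections shifted by (0.3, 0.4) pixels.
ideal_px = np.array([[320.0, 240.0],
                     [360.0, 240.0],
                     [320.0, 280.0],
                     [360.0, 280.0]])
observed_px = ideal_px + np.array([0.3, 0.4])

error = rms_reprojection_error(board, observed_px, K, R, t)
```

Each simulated corner is off by half a pixel, so the RMS error comes out at 0.5 px. Looking at per-view or per-corner residuals in the same way often reveals a single bad image that a global average would hide.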

Q 8. What are the common sources of error in image calibration?

Image calibration, while aiming for perfect accuracy, is susceptible to various errors. These errors can broadly be classified into systematic and random errors. Systematic errors are consistent and repeatable, often stemming from the imaging system itself. Random errors, on the other hand, are unpredictable and vary from image to image.

- Lens Distortion: Lens imperfections like radial and tangential distortion create deviations from a pinhole camera model. Radial distortion causes straight lines to appear curved, while tangential distortion introduces asymmetry. Think of how a wide-angle lens can make buildings appear to bulge outwards – that’s radial distortion in action.

- Camera Parameters Inaccuracy: Incorrect estimation of intrinsic parameters (focal length, principal point, etc.) and extrinsic parameters (rotation and translation) directly affects the accuracy of the calibration. Even small inaccuracies in measuring these parameters can propagate into significant errors in 3D reconstruction or measurements.

- Calibration Target Errors: The physical calibration target (e.g., a chessboard) itself can be imperfect, with inaccuracies in its dimensions or misalignment of its features affecting the calibration. This is especially true if the target is printed and not laser-cut for precision.

- Noise in Image Data: Noise in the acquired images (due to low light, sensor imperfections, etc.) can hinder the detection of features in the calibration target, leading to inaccurate parameter estimation. Think of it like trying to measure something with a shaky ruler; the readings won’t be precise.

- Environmental Factors: Temperature changes can affect the lens’s refractive index, leading to variations in distortion. Vibrations during image acquisition can also cause slight misalignment and errors.

Identifying and minimizing these error sources is crucial for obtaining a robust and accurate calibration.

Q 9. How do you calibrate a multi-camera system?

Calibrating a multi-camera system is more complex than calibrating a single camera because it involves determining not only the intrinsic parameters of each camera but also the relative extrinsic parameters (rotation and translation) between them. This is often achieved through a process called stereo calibration or multi-camera calibration.

A common approach involves:

- Individual Camera Calibration: First, each camera is calibrated individually using a standard calibration technique (e.g., using a checkerboard pattern) to obtain its intrinsic parameters.

- Common Points Identification: The same calibration target (or multiple targets visible in overlapping views) is captured by multiple cameras simultaneously. Feature points (e.g., corners of a checkerboard) are detected in each image.

- Point Correspondence Establishment: Matching corresponding points across different camera views is done. This step is critical and often involves robust feature matching techniques to handle noise and occlusions.

- Bundle Adjustment: Bundle adjustment is a crucial step. It’s a non-linear optimization technique that refines all the camera parameters (both intrinsic and extrinsic) simultaneously to minimize the reprojection error – the difference between the observed and projected positions of the points. This ensures consistency across all cameras.

- Validation and Refinement: The calibration results are validated using various metrics (e.g., reprojection error), and adjustments might be needed depending on the level of accuracy required.

Software libraries like OpenCV provide tools and functions for implementing these steps. The process often involves iterative refinement to improve the calibration accuracy.
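The relative extrinsics between two cameras follow directly from their individual poses in a shared world frame. The sketch below assumes world-to-camera poses of the form X_cam = R · X_world + t; the numeric poses and the function name `relative_pose` are illustrative.

```python
import numpy as np

def relative_pose(R1, t1, R2, t2):
    """Pose of camera 2 relative to camera 1, given both world-to-camera poses."""
    R_rel = R2 @ R1.T
    t_rel = t2 - R_rel @ t1
    return R_rel, t_rel

# Hypothetical rig: both cameras rotated 90 degrees about Z, offset 0.1 along camera X.
R1 = np.array([[0.0, -1.0, 0.0],
               [1.0, 0.0, 0.0],
               [0.0, 0.0, 1.0]])
t1 = np.array([1.0, 2.0, 3.0])
R2 = R1.copy()
t2 = t1 + np.array([-0.1, 0.0, 0.0])

R_rel, t_rel = relative_pose(R1, t1, R2, t2)
baseline = float(np.linalg.norm(t_rel))

# Consistency check: one world point seen in both camera frames.
X_world = np.array([0.5, -0.3, 4.0])
X_cam1 = R1 @ X_world + t1
X_cam2 = R2 @ X_world + t2
```

Applying `R_rel` and `t_rel` to `X_cam1` reproduces `X_cam2`, which is a quick sanity check that also works on real calibration output.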

Q 10. Describe the role of projective geometry in image calibration.

Projective geometry provides the mathematical foundation for image calibration. It deals with transformations between projective spaces, such as the mapping from the 3D world to the 2D image plane. The pinhole camera model is a fundamental concept in projective geometry, which represents a camera as a point (the optical center) projecting 3D points onto a 2D plane (the image sensor). This projection is a projective transformation.

In image calibration, projective geometry allows us to model the relationship between the 3D world coordinates and the corresponding 2D image coordinates. It enables us to define and estimate the camera’s intrinsic and extrinsic parameters, which are used to precisely map points from 3D space to their 2D image locations, and vice-versa. Understanding projective transformations (homographies, fundamental matrices, essential matrices) is crucial to accurately model the imaging process and correct for lens distortion and other geometric errors.

Q 11. Explain the use of homography in image calibration.

A homography is a projective transformation that maps points from one plane to another. In image calibration, homographies are particularly useful for planar scenes (e.g., calibrating a camera using a flat checkerboard). A homography describes the transformation between the plane of the calibration target and the image plane.

By identifying corresponding points in both the image and the known geometry of the calibration target, we can estimate the homography matrix. This matrix encapsulates the combined effect of the camera’s intrinsic parameters (focal length, principal point) and the extrinsic parameters (rotation and translation) relative to the plane of the target. Lens distortion is nonlinear, so a homography cannot represent it; the distortion coefficients are estimated separately, typically in a later nonlinear refinement step.

Once homographies have been estimated for several views of the target, we can use them to solve for the camera’s intrinsic parameters and then for the extrinsic parameters of each view. This approach simplifies the calibration process for planar scenes and is computationally efficient compared to using more general methods that handle arbitrary 3D scenes.
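Estimating a single homography from point correspondences can be sketched with the direct linear transform (DLT). The example below uses NumPy only; the ground-truth matrix and the board coordinates are synthetic assumptions, and a production implementation would normally normalize the coordinates first and use a robust estimator.

```python
import numpy as np

def estimate_homography(src, dst):
    """Direct linear transform: H such that dst ~ H @ src for N >= 4 point pairs."""
    rows = []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([-x, -y, -1, 0, 0, 0, u * x, u * y, u])
        rows.append([0, 0, 0, -x, -y, -1, v * x, v * y, v])
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(np.asarray(rows, dtype=float))
    H = vt[-1].reshape(3, 3)
    return H / H[2, 2]

def apply_homography(H, pts):
    """Map Nx2 points through H, including the perspective divide."""
    pts_h = np.hstack([pts, np.ones((len(pts), 1))]) @ H.T
    return pts_h[:, :2] / pts_h[:, 2:3]

# Synthetic ground truth and five board points in board units.
H_true = np.array([[1.2, 0.1, 0.5],
                   [-0.05, 0.9, 0.3],
                   [0.1, 0.05, 1.0]])
board_pts = np.array([[0.0, 0.0], [1.0, 0.0], [1.0, 1.0], [0.0, 1.0], [0.5, 0.3]])
image_pts = apply_homography(H_true, board_pts)

H_est = estimate_homography(board_pts, image_pts)
```

Because a homography is only defined up to scale, the result is normalized so that its bottom-right entry equals 1.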

Q 12. What are the advantages and disadvantages of different calibration techniques?

Various calibration techniques exist, each with its advantages and disadvantages. Let’s compare two common methods:

- Traditional Calibration using Checkerboard Patterns: This method is relatively simple and widely used. It involves taking multiple images of a checkerboard from different viewpoints. Advantages: Easy to implement, requires readily available equipment (checkerboard). Disadvantages: Susceptible to noise, requires careful planning to ensure sufficient view variation, and doesn’t directly handle non-planar scenes well.

- Self-Calibration: This technique requires no calibration target; instead, it infers camera parameters from multiple images of a scene with sufficient structure. Advantages: Flexible, avoids the need for a calibration target. Disadvantages: More computationally complex, requires specific scene constraints to ensure successful calibration, and can be sensitive to initialization.

Other techniques include using circles, line features, and more sophisticated methods incorporating constraints and prior information. The choice of method depends on factors such as available resources, desired accuracy, scene characteristics, and computational constraints. For instance, self-calibration might be preferable in robotic applications where setting up a checkerboard might be impractical.

Q 13. How do you handle noise in image calibration?

Noise in image data is a significant challenge in image calibration. It manifests as random variations in pixel intensities that hinder the accurate detection of features in the calibration target. Several strategies can be employed to handle this:

- Image Filtering: Applying appropriate image filtering techniques (e.g., Gaussian blurring, median filtering) can reduce noise before feature detection. This helps to improve the accuracy of feature point localization.

- Robust Estimators: Instead of using least-squares estimation (sensitive to outliers), robust estimators like RANSAC (Random Sample Consensus) are employed. RANSAC iteratively selects subsets of data to estimate the model, identifying and rejecting outliers caused by noise. This enhances the robustness of the calibration process against noisy data points.

- Multiple Images: Taking multiple images from diverse viewpoints helps to average out the effect of noise. The redundancy in data improves the overall accuracy of the parameter estimation.

- Data Preprocessing: Careful image preprocessing, such as histogram equalization or background subtraction, can improve the contrast and signal-to-noise ratio, making feature detection more reliable.

The choice of method often depends on the specific noise characteristics and the level of noise present in the images. A combination of techniques is frequently used for optimal results.
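The idea behind RANSAC is easiest to see on a toy problem. The sketch below fits a line to data where every sixth sample is a gross outlier; the data, threshold, and iteration count are assumptions for illustration, and the same sample-score-refit loop carries over to homography or pose estimation.

```python
import numpy as np

def ransac_line(x, y, threshold=0.5, iterations=200, seed=0):
    """Fit y = a*x + b while ignoring outliers; returns (a, b, inlier_mask)."""
    rng = np.random.default_rng(seed)
    best_mask = np.zeros(len(x), dtype=bool)
    for _ in range(iterations):
        i, j = rng.choice(len(x), size=2, replace=False)
        if x[i] == x[j]:
            continue  # a vertical pair cannot define y = a*x + b
        a = (y[j] - y[i]) / (x[j] - x[i])
        b = y[i] - a * x[i]
        # Score the candidate by counting points with a small vertical residual.
        mask = np.abs(y - (a * x + b)) < threshold
        if mask.sum() > best_mask.sum():
            best_mask = mask
    # Refit on the consensus set with ordinary least squares.
    a, b = np.polyfit(x[best_mask], y[best_mask], deg=1)
    return a, b, best_mask

# Synthetic data: a clean line with every sixth sample corrupted.
x = np.arange(30.0)
y = 2.0 * x + 1.0
y[::6] += 40.0

a, b, inliers = ransac_line(x, y)
```

A plain least-squares fit on the same data would be pulled toward the corrupted samples, whereas the consensus set here contains only the 25 clean points.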

Q 14. Explain the importance of image calibration in autonomous driving.

Image calibration is paramount in autonomous driving. Accurate calibration is essential for reliable sensor fusion and accurate perception of the environment. Without proper calibration, the measurements from various sensors (cameras, LiDAR, radar) cannot be accurately combined or interpreted, leading to inaccurate localization, object detection, and path planning.

- Accurate 3D Reconstruction: Calibration allows for precise 3D reconstruction of the scene from multiple camera views, vital for object detection and scene understanding.

- Sensor Fusion: Calibration ensures consistent and accurate data fusion from various sensors, enabling the autonomous vehicle to build a comprehensive and accurate representation of its surroundings.

- Precise Localization: Accurate camera calibration is crucial for visual odometry (estimating the vehicle’s position and orientation from camera images), which is essential for navigation and localization.

- Safety-Critical Applications: In autonomous driving, precise perception is safety-critical. Incorrect calibration can lead to misinterpretations of the environment, potentially causing accidents. This is analogous to a doctor needing precise measuring instruments for accurate diagnosis.

The higher the level of autonomy, the more crucial accurate calibration becomes. Robust and reliable calibration techniques are continuously being developed to ensure safety and reliability in autonomous vehicles.

Q 15. How do you calibrate a thermal camera?

Calibrating a thermal camera involves determining the relationship between the pixel values in the image and the actual temperature. This is crucial because the raw sensor data doesn’t directly represent temperature; it represents the infrared radiation detected. The calibration process typically involves using a blackbody source with known temperatures.

- Step 1: Blackbody Calibration: A blackbody, an object that perfectly absorbs and emits radiation, is heated to several known temperatures. The thermal camera captures images of the blackbody at each temperature.

- Step 2: Data Acquisition: The average pixel values corresponding to each known temperature are recorded. This data forms the basis for the calibration curve.

- Step 3: Curve Fitting: A mathematical function, often a polynomial, is fitted to the data points. This function converts raw pixel values into temperature values.

- Step 4: Non-Uniformity Correction (NUC): Thermal cameras often suffer from non-uniformity, meaning that different pixels respond differently to the same temperature. NUC corrects this by creating a compensation map based on a reference image of a uniform temperature.

For example, in industrial applications, calibrating a thermal camera used to inspect circuit boards ensures accurate temperature readings, preventing overheating and malfunctions. Without calibration, the readings would be unreliable and potentially lead to costly errors.
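Steps 3 and 4 can be sketched with NumPy as follows. The blackbody set points, raw counts, and the tiny 2x2 frame are invented numbers, and the offset-only correction is a simplification: real systems often use a two-point (gain and offset) NUC.

```python
import numpy as np

# Hypothetical blackbody set points (deg C) and the mean raw counts recorded at each.
raw_counts = np.array([1000.0, 2000.0, 3000.0, 4000.0])
temps_c = np.array([20.0, 42.0, 66.0, 92.0])

# Step 3: fit a second-order polynomial that maps raw counts to temperature.
coeffs = np.polyfit(raw_counts, temps_c, deg=2)
counts_to_temp = np.poly1d(coeffs)

def nuc_offsets(uniform_frame):
    """Step 4 (offset-only NUC): per-pixel offsets that flatten a uniform scene."""
    return uniform_frame.mean() - uniform_frame

# A made-up 2x2 frame of a uniform-temperature target with fixed-pattern offsets.
uniform_frame = np.array([[2990.0, 3010.0],
                          [3005.0, 2995.0]])
offsets = nuc_offsets(uniform_frame)
corrected = uniform_frame + offsets
temperature_map = counts_to_temp(corrected)
```

After the offsets are applied, every pixel of the uniform target reports the same count and therefore the same temperature.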

Q 16. Describe the process of calibrating a structured light scanner.

Calibrating a structured light scanner involves determining the precise geometric relationship between the projector and the camera. This allows the system to accurately reconstruct 3D points from the projected patterns and their captured images. The process usually involves a calibration target with known geometry, like a checkerboard pattern.

- Step 1: Target Acquisition: Multiple images of the calibration target are captured from various angles and with different projector patterns.

- Step 2: Feature Detection: The system identifies corresponding points (e.g., checkerboard corners) in both the projected pattern and the camera images.

- Step 3: Parameter Estimation: A calibration algorithm, often based on non-linear optimization techniques, estimates the intrinsic parameters (focal length, principal point, distortion coefficients) of the camera and of the projector, which is commonly modeled as an inverse camera, together with the extrinsic parameters (rotation and translation) between the projector and the camera.

- Step 4: Model Validation: The accuracy of the calibration is validated by comparing the reconstructed 3D points to the known geometry of the target.

Imagine scanning a human face for 3D modeling. Accurate calibration is essential to avoid distortions and ensure a realistic 3D representation. Inaccurate calibration could lead to a distorted face model unusable for animation or medical applications.

Q 17. How does image calibration impact 3D reconstruction?

Image calibration is fundamental to accurate 3D reconstruction. Without proper calibration, the geometric distortions inherent in the camera’s lens and sensor will lead to inaccuracies in the reconstructed 3D model. The calibration parameters, obtained through methods like those mentioned above, are used to correct these distortions before the 3D points are triangulated.

Think of it like drawing a perspective sketch: If you don’t understand the perspective rules (which is analogous to camera calibration), your drawing will be distorted. Similarly, if you don’t calibrate your cameras, your 3D model will be inaccurate. Accurate calibration ensures that the reconstructed points are correctly positioned in 3D space, leading to a realistic and useful 3D model. Applications range from architectural modeling and cultural heritage preservation to medical imaging and autonomous driving.
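Triangulation shows directly how calibration feeds into reconstruction: the projection matrices built from the calibrated parameters are the only link between pixels and 3D space. Below is a minimal linear (DLT) triangulation sketch in NumPy. The camera matrix, the 0.2-unit stereo offset, and the test point are assumed values, and the observations are noise-free.

```python
import numpy as np

def triangulate(P1, P2, px1, px2):
    """Linear (DLT) triangulation of one 3D point from two pixel observations."""
    A = np.array([
        px1[0] * P1[2] - P1[0],
        px1[1] * P1[2] - P1[1],
        px2[0] * P2[2] - P2[0],
        px2[1] * P2[2] - P2[1],
    ])
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]

def project(P, X):
    """Project a 3D point with a 3x4 projection matrix."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]

# Hypothetical calibrated stereo pair sharing the same intrinsics.
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = K @ np.hstack([np.eye(3), np.array([[-0.2], [0.0], [0.0]])])

X_true = np.array([0.1, -0.05, 2.0])
X_est = triangulate(P1, P2, project(P1, X_true), project(P2, X_true))
```

If `P1` or `P2` were built from wrong intrinsics or extrinsics, the same pixel observations would triangulate to a different, incorrect 3D position.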

Q 18. What are the challenges in calibrating wide-angle lenses?

Calibrating wide-angle lenses presents several challenges due to their increased field of view and significant distortion effects.

- Increased Distortion: Wide-angle lenses exhibit more pronounced radial and tangential distortions compared to standard lenses. These distortions need to be accurately modeled and compensated for.

- Limited Feature Visibility: At the edges of a wide field of view, features on a calibration target can become difficult to identify and match accurately, leading to less precise calibration.

- Increased Computational Complexity: More sophisticated calibration models, which can handle the complex distortions, are required. This often increases computational cost and complexity of the calibration process.

- Vignetting: Wide-angle lenses often suffer from vignetting (reduced intensity at the edges of the image), which can affect feature detection and calibration accuracy.

For example, in robotics, accurate calibration of wide-angle cameras is critical for navigation and object recognition. The distortions need to be corrected to ensure accurate perception of the robot’s environment. Improper calibration can lead to incorrect estimations of distances and object locations, potentially resulting in collisions or failed navigation.

Q 19. Explain the concept of camera resectioning.

Camera resectioning is the process of determining the position and orientation (pose) of a camera in 3D space given a set of 2D image points and their corresponding 3D world points. This is often an essential part of camera calibration and 3D reconstruction.

Imagine you have a picture of a building, and you know the real-world coordinates of several points on that building. Camera resectioning allows you to determine where the camera was positioned when the picture was taken – its location and its orientation (tilt, pan, roll). This is typically done using non-linear optimization techniques that minimize the reprojection error (the difference between the observed image points and the points predicted by the camera’s pose). The output is the camera’s extrinsic parameters (rotation and translation).
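A linear starting point for resectioning is the DLT, which recovers the full 3x4 projection matrix from 3D-2D correspondences; the camera position is then the null vector of that matrix. The sketch below uses synthetic, noise-free data, and all numeric values are assumptions. In practice this estimate would usually be refined by the non-linear optimization described above.

```python
import numpy as np

def resection_dlt(points_world, pixels):
    """Estimate the 3x4 projection matrix P from N >= 6 non-coplanar 3D-2D pairs."""
    rows = []
    for (X, Y, Z), (u, v) in zip(points_world, pixels):
        Xh = [X, Y, Z, 1.0]
        rows.append([*Xh, 0, 0, 0, 0, *[-u * c for c in Xh]])
        rows.append([0, 0, 0, 0, *Xh, *[-v * c for c in Xh]])
    _, _, vt = np.linalg.svd(np.asarray(rows, dtype=float))
    return vt[-1].reshape(3, 4)

def camera_center(P):
    """Camera position in world coordinates: the right null vector of P."""
    _, _, vt = np.linalg.svd(P)
    C = vt[-1]
    return C[:3] / C[3]

# Synthetic ground-truth camera (hypothetical values).
theta = 0.1
R = np.array([[np.cos(theta), 0.0, np.sin(theta)],
              [0.0, 1.0, 0.0],
              [-np.sin(theta), 0.0, np.cos(theta)]])
t = np.array([0.1, -0.2, 4.0])
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
P_true = K @ np.hstack([R, t[:, None]])

points_world = np.array([[0, 0, 0], [1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 1, 0],
                         [1, 0, 1], [0, 1, 1], [1, 1, 1], [0.3, 0.7, 0.5]], dtype=float)
proj = np.hstack([points_world, np.ones((len(points_world), 1))]) @ P_true.T
pixels = proj[:, :2] / proj[:, 2:3]

P_est = resection_dlt(points_world, pixels)
C_est = camera_center(P_est)
C_true = -R.T @ t
```

The estimated matrix matches the true one only up to scale, which is why the comparison is made on the camera center rather than on the raw matrix entries.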

Q 20. How do you perform image registration?

Image registration is the process of aligning two or more images of the same scene, taken from different viewpoints or at different times. This is crucial for various applications such as medical image analysis, remote sensing, and creating panoramas. The goal is to find a transformation (translation, rotation, scaling, and sometimes deformation) that maps points in one image to their corresponding points in another.

- Feature Detection and Matching: Interest points (points with distinctive characteristics) are detected in each image, and corresponding points are identified across the images. This could involve using SIFT, SURF, or ORB feature detectors.

- Transformation Estimation: Based on the matched points, a transformation model (e.g., affine, projective, or elastic) is estimated. Algorithms like RANSAC are often used to handle outliers.

- Image Warping: One or more images are warped (transformed) to align with a reference image. This involves using the estimated transformation model to map pixel coordinates.

In medical imaging, registering MRI and CT scans of the same patient allows doctors to combine information from both modalities to improve diagnosis. Without registration, it would be difficult to compare and analyze the images accurately.
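The transformation estimation step can be illustrated with an affine model fitted by least squares. The matched points below are synthetic and outlier-free, which is an assumption; with real feature matches the fit would normally be wrapped in a robust scheme such as RANSAC.

```python
import numpy as np

def estimate_affine(src, dst):
    """Least-squares 2x3 affine matrix A such that dst ~ A @ [x, y, 1]."""
    src_h = np.hstack([src, np.ones((len(src), 1))])
    A_t, *_ = np.linalg.lstsq(src_h, dst, rcond=None)
    return A_t.T

def apply_affine(A, pts):
    """Apply a 2x3 affine matrix to Nx2 points."""
    return pts @ A[:, :2].T + A[:, 2]

# Synthetic ground truth: 10 degree rotation, 1.1x scale, shift of (5, -3) pixels.
angle = np.deg2rad(10.0)
A_true = np.array([[1.1 * np.cos(angle), -1.1 * np.sin(angle), 5.0],
                   [1.1 * np.sin(angle), 1.1 * np.cos(angle), -3.0]])
src = np.array([[0.0, 0.0], [100.0, 0.0], [100.0, 50.0], [0.0, 50.0], [40.0, 20.0]])
dst = apply_affine(A_true, src)

A_est = estimate_affine(src, dst)
registered = apply_affine(A_est, src)
```

The estimated matrix is what the warping step would then use to resample one image into the coordinate frame of the other.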

Q 21. What is the role of image calibration in medical imaging?

Image calibration plays a vital role in medical imaging because it ensures the accuracy and reliability of diagnostic information. Accurate calibration is essential for various reasons:

- Quantitative Measurements: Precise measurements of organ sizes, tumor volumes, and other anatomical features rely on accurate calibration. Errors in calibration directly translate into errors in these measurements, potentially affecting treatment planning and diagnosis.

- Image Fusion: Registering images from different modalities (e.g., CT, MRI, PET) requires accurate calibration to ensure proper alignment, allowing for combined analysis.

- Image-Guided Interventions: In procedures like surgery, precise image calibration is crucial for guiding instruments and ensuring accuracy. Miscalibration can lead to inaccurate placement of instruments, potentially harming the patient.

- 3D Reconstruction: Accurate 3D models of organs are often created from medical images, requiring careful calibration to ensure the fidelity of the model.

For example, in radiotherapy, accurate calibration of the imaging system ensures precise targeting of tumors, minimizing damage to surrounding healthy tissue. Inaccurate calibration could lead to incorrect radiation dosage or targeting, impacting treatment effectiveness and potentially harming the patient.

Q 22. Describe your experience with different calibration software packages.

My experience with image calibration software spans various packages, each with its strengths and weaknesses. I’m proficient in commercial solutions like MATLAB’s Computer Vision Toolbox and Image Processing Toolbox, which together offer a robust suite of tools for geometric and photometric calibration, including functions for camera model estimation and lens distortion correction. I’ve also extensively used OpenCV, an open-source library providing flexibility and customization, allowing for tailored solutions to specific calibration challenges. Finally, I have experience with specialized software designed for particular camera systems, such as those bundled with high-end industrial cameras. Choosing the right package always depends on the application, budget, and required level of control.

For instance, when working on a project requiring precise measurement accuracy, MATLAB’s rigorous algorithms and extensive documentation were invaluable. Conversely, for rapid prototyping and integration into a larger system, OpenCV’s versatility and extensive community support were preferred. The key is understanding the capabilities and limitations of each package to make informed decisions.

Q 23. How do you troubleshoot common calibration problems?

Troubleshooting calibration problems often involves a systematic approach. First, I visually inspect the images for obvious issues like blurriness, significant vignetting (darkening at the edges), or major distortions. If the problem is evident, it may simply be a matter of improving the setup—ensuring adequate lighting, stable camera positioning, and a well-defined calibration target. More subtle problems require deeper investigation.

A common issue is insufficient calibration points. If the software struggles to find a reliable solution, it often means more points are needed for robust estimation. I increase the number of points or use a higher-resolution target to improve accuracy. If the reprojection error (the difference between the observed and projected points) remains high despite sufficient points, it suggests issues with the camera model or lens distortion. In such cases, I carefully analyze the residuals (the differences between the model and the actual data) to pinpoint the source of the error. Sometimes this involves trying different camera models, such as pinhole, radial-tangential, or more complex models to better fit the lens distortion patterns. Another frequent problem is target movement during acquisition. This leads to inconsistent data and inaccurate results. Solutions could include using a rigid mounting system or employing faster image acquisition techniques to minimize the impact of movements.

Q 24. Explain the difference between geometric and photometric calibration.

Geometric calibration focuses on determining the intrinsic and extrinsic parameters of the camera. Intrinsic parameters describe the internal characteristics of the camera, such as focal length, principal point (where the optical axis meets the image sensor, usually close to the image center), and lens distortion coefficients. Extrinsic parameters define the camera’s position and orientation in the 3D world, using a rotation matrix and a translation vector. Think of it as figuring out the camera’s ‘eyesight’: its inherent properties and its location relative to the scene.
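To make the roles of the two parameter sets concrete, here is a small sketch of the ideal pinhole projection, ignoring lens distortion. It assumes NumPy is available; the function name `project_point` and all numeric values (focal length of 800 pixels, principal point at (320, 240), camera offset of 2 units) are illustrative assumptions.

```python
import numpy as np

def project_point(X_world, K, R, t):
    """Project a 3D world point to pixel coordinates (no lens distortion)."""
    X_cam = R @ X_world + t      # extrinsics: world frame -> camera frame
    if X_cam[2] <= 0:
        raise ValueError("point is behind the camera")
    uvw = K @ X_cam              # intrinsics: camera frame -> image plane
    return uvw[:2] / uvw[2]      # perspective division gives pixels

# Illustrative intrinsics: fx = fy = 800 px, principal point (320, 240)
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
# Illustrative extrinsics: no rotation, world origin 2 units in front of the camera
R = np.eye(3)
t = np.array([0.0, 0.0, 2.0])

pixel = project_point(np.array([0.1, -0.05, 0.0]), K, R, t)
print(pixel)
```

Geometric calibration is the inverse task: given many such world points and their detected pixel positions, estimate K, R, t, and the distortion coefficients.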

Photometric calibration, on the other hand, aims to model and correct the variations in pixel intensity across the image due to factors like uneven illumination, sensor response non-linearity, and lens vignetting. This is like adjusting the camera’s ‘sensitivity’ to ensure consistent brightness and color across the entire image. Both are essential for accurate image analysis and reconstruction. Geometric calibration provides the geometric relationship between points in the world and their projections in the image. Photometric calibration ensures that the intensity values in the image are reliable representations of the light reflected from the scene.
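A common form of photometric correction for vignetting and uneven pixel response is flat-field correction with a dark frame and a flat frame. The sketch below assumes NumPy and uses tiny synthetic arrays; the function name `flat_field_correct` is hypothetical, and a real pipeline would typically average several dark and flat frames first.

```python
import numpy as np

def flat_field_correct(raw, dark, flat, eps=1e-6):
    """Correct vignetting and pixel gain variation using dark and flat frames."""
    raw = raw.astype(np.float64)
    dark = dark.astype(np.float64)
    gain = flat.astype(np.float64) - dark   # per-pixel response to uniform light
    gain = np.maximum(gain, eps)            # guard against division by zero
    # Rescale by the mean gain so overall brightness is preserved
    return (raw - dark) * gain.mean() / gain

# Synthetic 2x2 example: the right column receives half the light.
dark = np.full((2, 2), 10.0)
flat = np.array([[210.0, 110.0], [210.0, 110.0]])
raw = np.array([[110.0, 60.0], [110.0, 60.0]])  # uniform scene, same falloff

corrected = flat_field_correct(raw, dark, flat)
print(corrected)
```

After correction, the uniform scene yields the same value in every pixel, which is the behavior photometric calibration aims for.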

Q 25. How do you ensure the consistency of calibration across different images?

Ensuring calibration consistency across different images requires careful attention to several factors. First and foremost is maintaining a stable and consistent imaging setup. The camera, lighting, and target should remain unchanged between captures. Secondly, using a high-quality calibration target with clear and easily identifiable markers is critical.

Robust calibration algorithms that are less susceptible to outliers are also vital. I often employ iterative methods that refine the calibration parameters by minimizing the reprojection error. Regularly checking the calibration accuracy with newly acquired images is equally important, and a quality control procedure that includes periodic recalibration ensures long-term consistency. If several cameras are involved, each should undergo its own rigorous calibration, using the same target, methods, and settings so that the results are comparable.

Q 26. What are the key performance indicators (KPIs) for image calibration?

Key Performance Indicators (KPIs) for image calibration depend heavily on the application but generally include:

- Reprojection Error: This measures the distance, in pixels, between the detected points and the points predicted by the calibrated model, usually reported as a mean or root-mean-square (RMS) value. Lower values indicate better accuracy.

- Accuracy of Measurements: This assesses the precision of measurements obtained from the calibrated images, often expressed as a percentage error or standard deviation.

- Consistency: This refers to the stability of calibration parameters over time and across different images. Inconsistent calibration can be reflected in high variation in measured properties between multiple images.

- Computational Efficiency: The speed and resource usage of the calibration algorithm are important especially when dealing with a high volume of images or real-time applications.

For example, in a machine vision application requiring precise object detection, a low reprojection error is crucial. In a photogrammetry project aiming at creating a 3D model, the accuracy of depth measurements would be the dominant KPI.
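The measurement accuracy and consistency KPIs listed above can be tracked with very little code. The sketch below uses only the Python standard library; the helper names and all data (a 50.0 mm reference object and four repeated calibrations) are hypothetical values chosen for illustration.

```python
from statistics import mean, stdev

def percent_error(measured, reference):
    """Signed measurement error as a percentage of the reference value."""
    return 100.0 * (measured - reference) / reference

def coefficient_of_variation(values):
    """Sample standard deviation divided by the mean, as a percentage."""
    return 100.0 * stdev(values) / mean(values)

# Hypothetical data: a 50.0 mm reference object measured in four images,
# and focal lengths (in pixels) estimated by four repeated calibrations.
lengths_mm = [50.2, 49.9, 50.1, 50.0]
focal_px = [800.0, 802.0, 798.0, 800.0]

accuracy = [percent_error(m, 50.0) for m in lengths_mm]
mean_abs_error = mean(abs(e) for e in accuracy)   # accuracy KPI
focal_cv = coefficient_of_variation(focal_px)     # consistency KPI

print(f"mean absolute measurement error: {mean_abs_error:.2f} %")
print(f"focal length variation across runs: {focal_cv:.3f} %")
```

What counts as an acceptable value for either figure depends on the application, so thresholds are deliberately left out of this sketch.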

Q 27. Describe your experience with different camera technologies (e.g., CMOS, CCD).

I have extensive experience with both CMOS and CCD camera technologies. CMOS (Complementary Metal-Oxide-Semiconductor) sensors are prevalent in modern cameras due to their lower cost, lower power consumption, and faster readout speeds. They are well-suited for applications requiring high frame rates or video capture. However, CMOS sensors have historically exhibited higher noise levels than CCDs, particularly in low light, although modern designs have narrowed the gap considerably.

CCD (Charge-Coupled Device) sensors, while generally more expensive and power-hungry, are known for their superior dynamic range and low noise, making them ideal for applications demanding high image quality and precision in low light conditions. They are commonly found in scientific and medical imaging. Calibration techniques differ slightly depending on the sensor type. CMOS sensors may need specialized noise reduction techniques incorporated into the calibration process to mitigate their inherent noise characteristics. In contrast, CCD calibration often focuses on achieving high dynamic range by correcting for non-linear sensor responses.
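Whichever sensor type is used, a simple way to check whether the response needs linearization is to record the mean dark-subtracted signal at several exposure times and compare it with a straight line. This is a rough sketch under stated assumptions: the function names are hypothetical, the data is invented, and fitting the line to the three lowest exposures (where the sensor is assumed to behave linearly) is an illustrative choice.

```python
def fit_through_origin(x, y):
    """Least-squares slope k of the model y = k * x."""
    return sum(a * b for a, b in zip(x, y)) / sum(a * a for a in x)

def max_nonlinearity_percent(exposures, signals, fit_count=3):
    """Largest relative deviation from a line fitted to the lowest exposures.

    Assumes positive exposures sorted in ascending order and
    dark-subtracted signals.
    """
    k = fit_through_origin(exposures[:fit_count], signals[:fit_count])
    return max(abs(s - k * e) / (k * e) * 100.0
               for e, s in zip(exposures, signals))

# Hypothetical mean signal (digital numbers) at increasing exposure (ms);
# the response flattens slightly as the sensor approaches saturation.
exposures = [1.0, 2.0, 4.0, 8.0, 16.0]
signals = [100.0, 200.0, 400.0, 790.0, 1520.0]

nonlinearity = max_nonlinearity_percent(exposures, signals)
print(f"maximum deviation from linear response: {nonlinearity:.2f} %")
```

If the deviation is larger than the application tolerates, the measured curve can serve as the basis for a correction table.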

Q 28. How do you stay current with advancements in image calibration techniques?

Staying current in image calibration requires a multi-faceted approach. I regularly attend conferences and workshops related to computer vision and image processing, such as CVPR and ICCV. This provides access to the latest research and allows networking with other experts in the field. I actively follow leading journals and publications, such as the IEEE Transactions on Pattern Analysis and Machine Intelligence, which publish cutting-edge research in image calibration and related areas.

Furthermore, I engage with online communities and forums dedicated to image processing, which provide opportunities to learn from others’ experiences and troubleshooting advice. I also make it a point to experiment with new software packages and techniques, keeping my skillset up-to-date and allowing me to compare and contrast different methods.

Key Topics to Learn for Image Calibration Interview

- Color Spaces and Transformations: Understanding different color spaces (RGB, XYZ, LAB, etc.) and the mathematical transformations between them is fundamental. Practical application includes converting images between color spaces for optimal display or printing.

- Image Sensors and Characteristics: Gain a thorough understanding of how image sensors work, including their limitations and inherent noise. This knowledge is crucial for interpreting calibration data and identifying potential issues.

- Calibration Techniques and Methods: Explore various calibration techniques, such as using color charts and spectrophotometers. Understand the differences between hardware and software calibration approaches and their respective strengths and weaknesses.

- Gamma Correction and Linearization: Master the concept of gamma correction and its importance in achieving accurate color reproduction. Learn how to linearize image data for processing and analysis.

- White Balance and Color Temperature: Understand how white balance affects the overall color appearance of an image and the different methods for correcting it. Be prepared to discuss color temperature and its implications.

- Image Artifacts and Noise Reduction: Familiarize yourself with common image artifacts (e.g., banding, moiré patterns) and noise reduction techniques. Understanding these issues is key to optimizing image quality.

- Profiling and Characterization: Learn about creating color profiles (ICC profiles) and characterizing imaging systems. This is a crucial aspect of ensuring consistent and accurate color reproduction across different devices.

- Troubleshooting and Problem Solving: Be prepared to discuss common calibration problems and how to effectively troubleshoot them. This includes identifying the root cause of color inaccuracies and implementing effective solutions.
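As a concrete starting point for the gamma correction and linearization topic in the list above, the sketch below implements the standard sRGB transfer function and its inverse for a single channel value in the range 0 to 1. The function names are my own; other color encodings use different curves, so this applies only to sRGB-encoded data.

```python
def srgb_to_linear(c):
    """Decode one sRGB-encoded channel value in [0, 1] to linear light."""
    if not 0.0 <= c <= 1.0:
        raise ValueError("expected a value in [0, 1]")
    if c <= 0.04045:
        return c / 12.92                      # linear segment near black
    return ((c + 0.055) / 1.055) ** 2.4       # power-law segment

def linear_to_srgb(v):
    """Encode one linear-light channel value in [0, 1] as sRGB."""
    if not 0.0 <= v <= 1.0:
        raise ValueError("expected a value in [0, 1]")
    if v <= 0.0031308:
        return 12.92 * v
    return 1.055 * v ** (1 / 2.4) - 0.055

mid_gray = srgb_to_linear(0.5)       # encoded 50 % is far darker in linear light
round_trip = linear_to_srgb(mid_gray)
print(f"sRGB 0.5 -> linear {mid_gray:.4f} -> sRGB {round_trip:.4f}")
```

Linearizing before arithmetic such as averaging, white balancing, or flat-field correction matters because those operations assume values proportional to light intensity.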

Next Steps

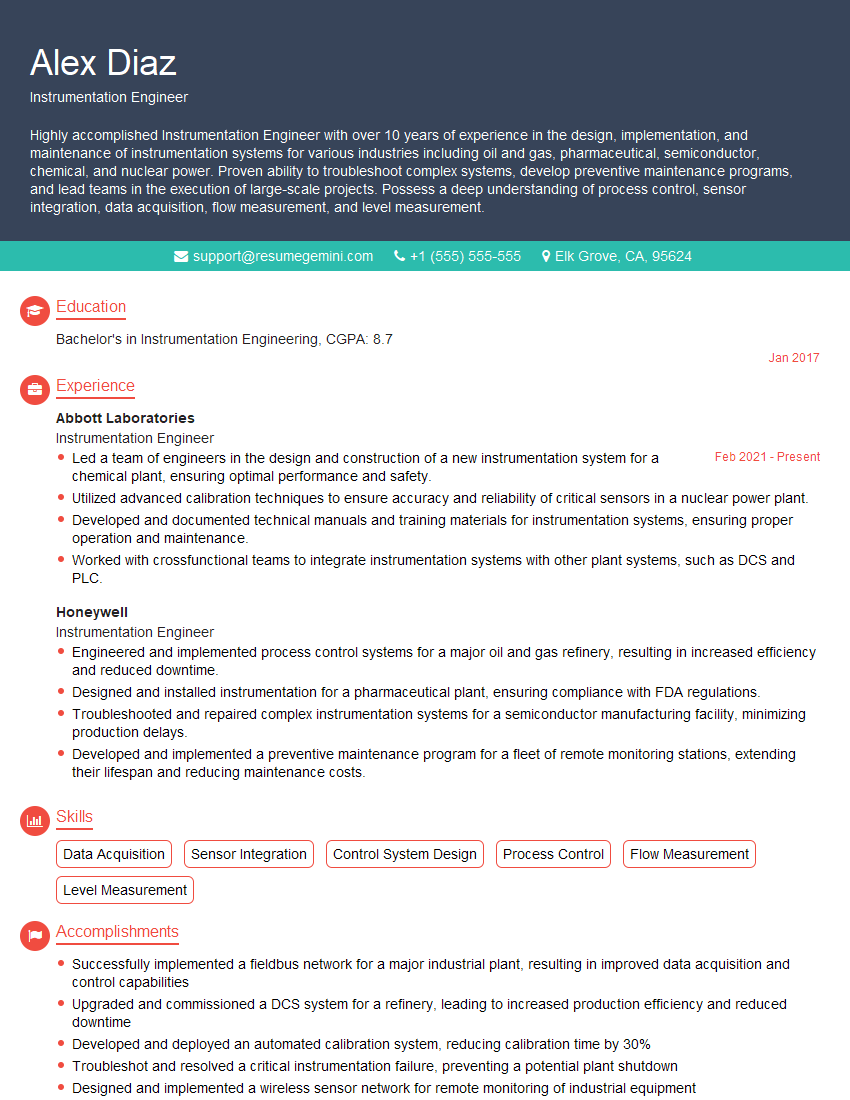

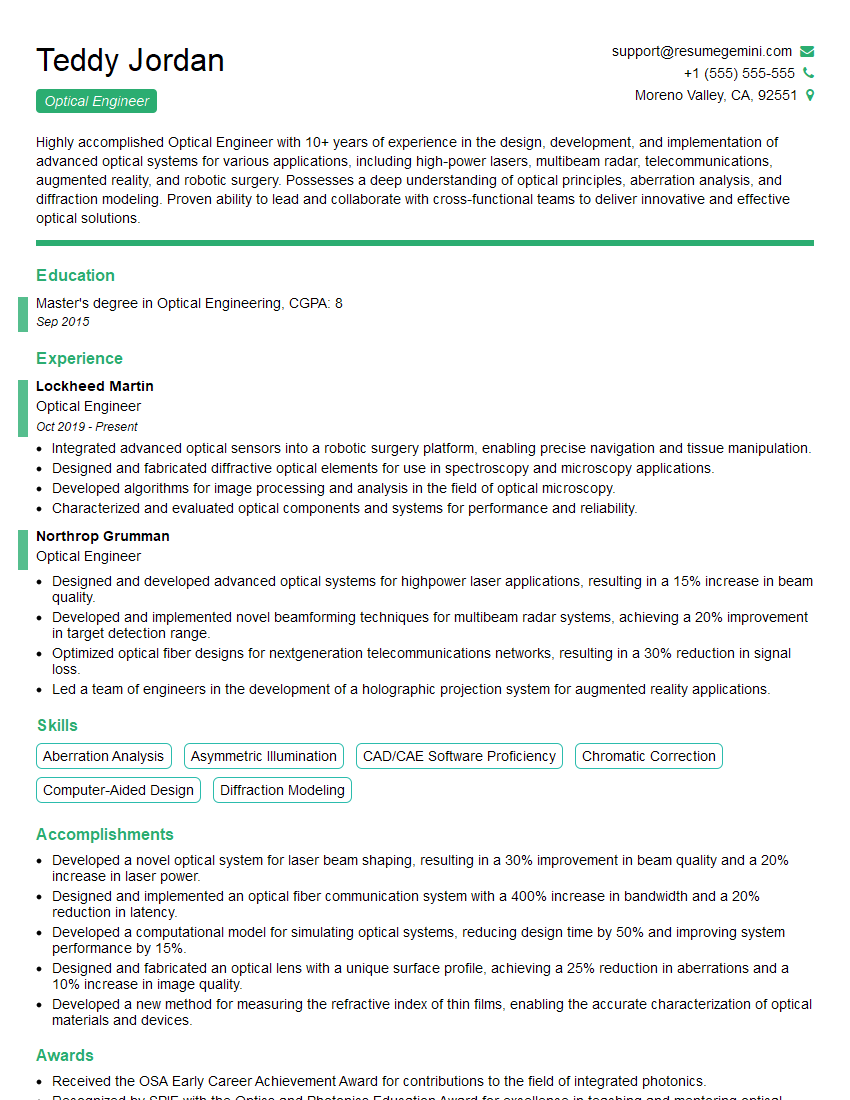

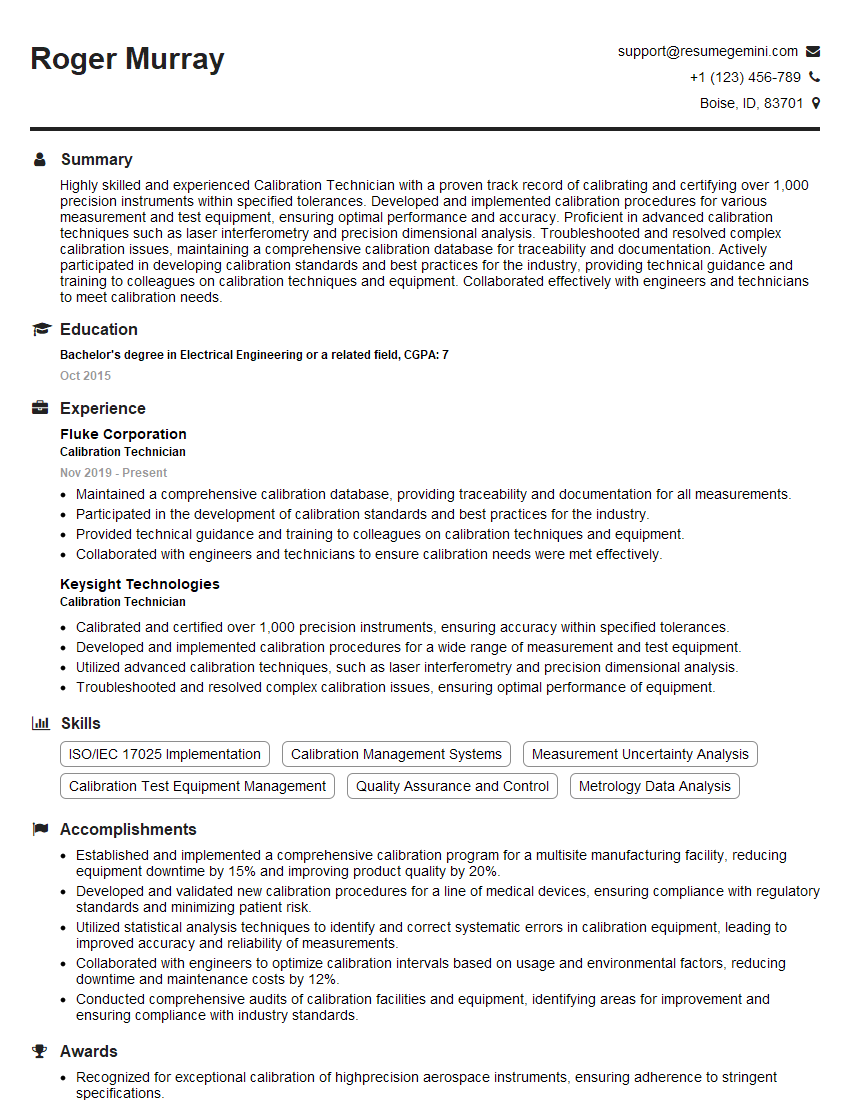

Mastering image calibration significantly enhances your career prospects in imaging-related fields, opening doors to diverse and challenging roles. To increase your chances of landing your dream job, creating an ATS-friendly resume is crucial. ResumeGemini is a trusted resource that can help you build a professional and effective resume tailored to the specific requirements of your target positions. Examples of resumes tailored to Image Calibration are available to further assist you in this process. Take the next step in your career journey today!
