The right preparation can turn an interview into an opportunity to showcase your expertise. This guide to Object Manipulation and Transformation interview questions is your ultimate resource, providing key insights and tips to help you ace your responses and stand out as a top candidate.

Questions Asked in Object Manipulation and Transformation Interview

Q 1. Explain the difference between pass-by-reference and pass-by-value in object manipulation.

Pass-by-value and pass-by-reference are crucial concepts in object manipulation, determining how data is handled when passed to functions or methods. In pass-by-value, a copy of the object’s value is passed. Changes made to the parameter within the function do not affect the original object. Think of it like photocopying a document; you can edit the copy without changing the original. In contrast, pass-by-reference passes the memory address of the object. Any modifications made within the function directly alter the original object. This is like giving someone the original document; any changes they make affect the master copy.

Example (Illustrative – Language-specific nuances exist):

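Let’s say we have a Person object. In a pass-by-value scenario:

```java
Person originalPerson = new Person("Alice", 30);
modifyPerson(originalPerson); // A copy of the object is passed
// originalPerson remains unchanged
```

In a pass-by-reference scenario:

```java
Person originalPerson = new Person("Alice", 30);
modifyPerson(originalPerson); // A reference (memory address) is passed
// originalPerson is now modified
```

The specific behavior (pass-by-value or pass-by-reference) often depends on the programming language and how objects are handled internally. Java and Python, for example, pass object references by value: a method can modify the object the caller sees through that reference, but reassigning the parameter inside the method does not change the caller's variable.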
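Python makes this language-specific nuance easy to observe, because it passes object references by value (sometimes called call-by-sharing). The sketch below is illustrative only; the Person class and the mutate and rebind helpers are hypothetical names. Mutating the passed object is visible to the caller, rebinding the parameter is not, and copy.copy() gives value-like isolation when the caller wants it.

```python
import copy


class Person:
    def __init__(self, name, age):
        self.name = name
        self.age = age


def mutate(person):
    # Changes the object that the caller also references.
    person.age += 1


def rebind(person):
    # Rebinds only the local parameter; the caller's variable still points to the old object.
    person = Person("Bob", 99)
    return person


original = Person("Alice", 30)
mutate(original)                # original.age is now 31
replacement = rebind(original)  # original is still Alice

snapshot = copy.copy(original)  # pass a copy to simulate pass-by-value
mutate(snapshot)                # only the copy changes
```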

Q 2. Describe different methods for object serialization and deserialization.

Object serialization is the process of converting an object’s state into a byte stream, suitable for storage or transmission. Deserialization is the reverse – reconstructing the object from that byte stream. Several methods exist:

- JSON (JavaScript Object Notation): A lightweight, text-based format widely used for data interchange. It’s human-readable and readily supported by most programming languages.

- XML (Extensible Markup Language): A more verbose, structured format. It offers good schema support and is suitable for complex data structures but can be less efficient than JSON.

- Binary Serialization: This method stores the object’s data in a binary format, often resulting in smaller file sizes and faster processing speeds compared to text-based methods. However, it’s less human-readable and usually language-specific.

- Protocol Buffers (protobuf): A language-neutral, platform-neutral mechanism for serializing structured data. It’s efficient and often used in high-performance applications.

The choice of method depends on factors like performance requirements, data complexity, interoperability needs, and human readability preferences.
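As a rough illustration, this Python sketch round-trips one object through JSON (text) and through the standard-library pickle module (a Python-specific binary format). The Person dataclass is hypothetical; XML and Protocol Buffers are left out because they need a schema or extra tooling. Only unpickle data you trust, since unpickling can execute arbitrary code.

```python
import json
import pickle
from dataclasses import asdict, dataclass


@dataclass
class Person:
    name: str
    age: int


alice = Person("Alice", 30)

# JSON: text-based and language-neutral; the dataclass is converted to a dict first.
json_text = json.dumps(asdict(alice))
restored_from_json = Person(**json.loads(json_text))

# pickle: Python-specific binary format (it can also pickle most class instances directly).
blob = pickle.dumps(asdict(alice))
restored_from_pickle = Person(**pickle.loads(blob))
```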

Q 3. How do you handle object cloning effectively?

Object cloning creates a new object that is an independent copy of the original. There are two main types of cloning: shallow and deep.

- Shallow Cloning: Creates a new top-level object, but only the references to nested objects are copied, so the clone and the original share those nested objects. Modifying a nested object through the clone will therefore also affect the original. Think of copying a folder that contains symbolic links: the folder itself is new, but the links still point to the original files, so editing a file through the copy changes the original.

- Deep Cloning: Creates a completely independent copy of the object, including all nested objects. Changes to the clone will not affect the original. This is like creating an exact duplicate of the file.

Many languages provide built-in mechanisms or libraries for cloning. For instance, in Java, you might use clone() (which often needs to be overridden for deep cloning) or libraries like Apache Commons Lang. In Python, you could use the copy.deepcopy() function for deep cloning.

The choice between shallow and deep cloning depends on whether you need an independent copy of the object or if sharing references to nested objects is acceptable.
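A minimal Python sketch of the difference, using the standard copy module on a hypothetical nested dictionary:

```python
import copy

original = {"name": "Alice", "tags": ["admin"]}

shallow = copy.copy(original)   # new outer dict, same inner list
deep = copy.deepcopy(original)  # new outer dict and a new inner list

shallow["tags"].append("editor")  # also visible through original
deep["tags"].append("viewer")     # independent of original
```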

Q 4. What are the advantages and disadvantages of using immutable objects?

Immutable objects are objects whose state cannot be modified after creation. They offer several advantages:

- Thread Safety: Immutable objects are inherently thread-safe because multiple threads can access them without the risk of data corruption. No synchronization mechanisms are needed.

- Simplified Debugging: Since their state doesn’t change, tracking down bugs becomes easier. You don’t need to worry about unintended modifications.

- Improved Code Readability: The immutability makes code easier to reason about and understand.

However, there are also disadvantages:

- Memory Consumption: Creating many immutable objects can consume more memory than modifying mutable objects. Each modification effectively creates a new object.

- Performance Overhead: Creating new objects for every modification can have a performance impact, particularly in situations with frequent updates.

The choice between mutable and immutable objects depends on the specific needs of the application. For scenarios requiring thread safety and ease of debugging, immutability is advantageous. In performance-critical areas where frequent updates are necessary, mutable objects might be more suitable.
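In Python, one way to get immutable value objects is a frozen dataclass. In this sketch (Point is a hypothetical class), every "modification" produces a new object, which is exactly where the memory and allocation costs listed above come from; in exchange, frozen instances are hashable and safe to share.

```python
from dataclasses import FrozenInstanceError, dataclass, replace


@dataclass(frozen=True)
class Point:
    x: int
    y: int


p1 = Point(1, 2)
p2 = replace(p1, x=10)  # "modifying" an immutable object yields a new object

try:
    p1.x = 5  # frozen dataclasses reject attribute assignment
    mutation_blocked = False
except FrozenInstanceError:
    mutation_blocked = True
```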

Q 5. Explain the concept of polymorphism and its application in object transformation.

Polymorphism, meaning “many forms,” is a powerful concept where objects of different classes can respond to the same method call in their own specific way. In object transformation, this allows you to apply transformations to various object types without needing to know their specific class. A common example is using a base class method that is overridden in subclasses to handle transformation specifics for each type.

Example: Imagine a base class Shape with a transform() method. Subclasses like Circle and Rectangle could each override transform() to implement their unique transformation logic (e.g., scaling, rotation). You can then process a collection of different Shape objects using a common transform() call, and each object will handle the transformation appropriately. This promotes flexibility and maintainability, as you don’t have to write separate transformation logic for every object type.
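A minimal Python sketch of the Shape example, assuming scaling as the transformation (rotation would follow the same pattern); the class and method names mirror the prose but are otherwise illustrative:

```python
from abc import ABC, abstractmethod


class Shape(ABC):
    @abstractmethod
    def transform(self, factor: float) -> "Shape":
        """Return a new shape scaled by factor."""


class Circle(Shape):
    def __init__(self, radius: float):
        self.radius = radius

    def transform(self, factor: float) -> "Circle":
        return Circle(self.radius * factor)


class Rectangle(Shape):
    def __init__(self, width: float, height: float):
        self.width = width
        self.height = height

    def transform(self, factor: float) -> "Rectangle":
        return Rectangle(self.width * factor, self.height * factor)


shapes = [Circle(1.0), Rectangle(2.0, 3.0)]
# The caller never inspects concrete types; each subclass applies its own logic.
scaled = [shape.transform(2) for shape in shapes]
```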

Q 6. Describe different approaches to object comparison (e.g., shallow vs. deep comparison).

Object comparison methods vary in how deeply they check for equality.

- Shallow Comparison: Compares only the references of objects. Two objects are considered equal only if they refer to the same memory location. This means that even if the contents of two objects are identical, if they are separate objects, a shallow comparison will consider them unequal.

- Deep Comparison: Recursively compares the values of all fields in the objects. Two objects are considered equal if all their corresponding fields have the same value.

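Many programming languages provide built-in mechanisms or library functions for both types of comparison. In Python, for example, the is operator checks identity (whether two names refer to the same object), while == calls the __eq__ method: built-in containers such as lists and dicts compare their elements by value, recursively, whereas a user-defined class compares by identity unless it defines __eq__ (dataclasses generate a field-by-field __eq__ automatically). The choice depends on whether you only need to verify object identity or require a value-based comparison.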
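A minimal Python sketch contrasting identity and value comparison; Address, Person and PlainPoint are hypothetical classes:

```python
from dataclasses import dataclass


@dataclass
class Address:
    city: str


@dataclass
class Person:
    name: str
    address: Address


a = Person("Alice", Address("Paris"))
b = Person("Alice", Address("Paris"))

same_object = a is b  # identity (reference) comparison
same_value = a == b   # generated __eq__ compares fields, recursing into Address


class PlainPoint:  # no __eq__ defined, so == falls back to identity
    def __init__(self, x):
        self.x = x


plain_equal = PlainPoint(1) == PlainPoint(1)
```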

Q 7. How do you handle exceptions during object manipulation?

Exceptions during object manipulation can stem from various sources, like invalid data, null pointers, or I/O errors. Robust error handling is crucial.

- Try-Catch Blocks: Enclose object manipulation code within try-catch blocks to catch anticipated exceptions. Handle them gracefully, providing informative error messages or taking corrective action.

- Specific Exception Handling: Catch specific exception types to provide tailored responses. Instead of a generic catch (Exception e), catch more specific exceptions (e.g., NullPointerException, IOException) for better error management.

- Logging: Log exceptions to track down errors and aid debugging. Include relevant information such as the exception type, message, stack trace, and object state.

- Input Validation: Validate input data before performing operations to prevent invalid data from causing exceptions.

A well-structured approach to exception handling improves the reliability and maintainability of your object manipulation code. Avoid bare catch blocks, and strive for specific, informative error handling.
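The same ideas carry over to Python, where the construct is try/except. In this sketch, load_age is a hypothetical helper and the 0 to 150 age range is an assumed business rule; it catches specific exceptions in a deliberate order, logs them with context, and validates the parsed value before returning it.

```python
import json
import logging
from typing import Optional

logger = logging.getLogger(__name__)


def load_age(raw: str) -> Optional[int]:
    """Parse a JSON person record and return its age, or None if the record is unusable."""
    try:
        record = json.loads(raw)
        age = int(record["age"])
    except json.JSONDecodeError as exc:  # checked first: it is a subclass of ValueError
        logger.error("Malformed JSON %r: %s", raw, exc)
        return None
    except (KeyError, TypeError, ValueError) as exc:  # missing, wrongly typed, or non-numeric "age"
        logger.error("Invalid 'age' in %r: %s", raw, exc)
        return None

    # Input validation: reject values that parsed but violate the assumed range.
    if not 0 <= age <= 150:
        logger.warning("Age out of range in %r: %d", raw, age)
        return None
    return age
```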

Q 8. What are design patterns commonly used in object manipulation and transformation?

Several design patterns prove invaluable when dealing with object manipulation and transformation. These patterns help manage complexity and promote reusability. Here are a few key examples:

- Strategy Pattern: This pattern defines a family of algorithms, encapsulates each one, and makes them interchangeable. Imagine you have different ways to transform an object (e.g., convert to JSON, XML, or a custom format). The Strategy pattern allows you to easily switch between these transformation methods without modifying the core object processing logic.

- Chain of Responsibility: This pattern allows multiple objects to handle a request. Consider a data pipeline where objects undergo a series of transformations. Each object in the chain can perform a specific transformation, passing the modified object to the next in line. This makes the process modular and extensible.

- Command Pattern: This pattern encapsulates a request as an object, thereby letting you parameterize clients with different requests, queue or log requests, and support undoable operations. This is particularly useful when dealing with complex transformations that might need to be reversed or tracked.

- Decorator Pattern: This pattern dynamically adds responsibilities to an object. Think of adding validation or logging to your object transformations without altering the original object’s core functionality. The decorator pattern wraps the object with additional functionality.

- Factory Pattern: If your transformations need to create different types of objects based on certain conditions, the Factory pattern can simplify object creation. It encapsulates the logic for creating objects, making your code cleaner and more maintainable.

Choosing the right pattern depends heavily on the specific requirements of the transformation task. Understanding the strengths and weaknesses of each pattern is crucial for effective implementation.
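A minimal Python sketch of the Strategy pattern applied to the export example above. Because Python functions are first-class, each strategy here is simply a function; to_json, to_xml and Exporter are hypothetical names, and the XML layout is an arbitrary choice for illustration.

```python
import json
import xml.etree.ElementTree as ET
from typing import Callable, Dict

Strategy = Callable[[Dict[str, object]], str]


def to_json(record: Dict[str, object]) -> str:
    return json.dumps(record, sort_keys=True)


def to_xml(record: Dict[str, object]) -> str:
    root = ET.Element("record")
    for key, value in record.items():
        ET.SubElement(root, key).text = str(value)
    return ET.tostring(root, encoding="unicode")


class Exporter:
    """Context object: the export workflow is fixed; the output format is a pluggable strategy."""

    def __init__(self, strategy: Strategy):
        self.strategy = strategy

    def export(self, record: Dict[str, object]) -> str:
        # Shared pre-processing could go here without knowing which format is in use.
        return self.strategy(record)


record = {"name": "Alice", "age": 30}
as_json = Exporter(to_json).export(record)
as_xml = Exporter(to_xml).export(record)
```

Adding a new format means writing one more function; Exporter itself does not change.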

Q 9. Explain how you would implement object pooling to improve performance.

Object pooling is a performance optimization technique where you pre-allocate a set of objects and reuse them instead of creating new ones each time they are needed. This avoids the overhead of frequent object creation and garbage collection, especially beneficial when dealing with many short-lived objects.

Implementation typically involves a pool manager that handles object allocation and release. When an object is requested, the pool manager checks if there are available objects in the pool. If so, it provides a reusable object; otherwise, it creates a new one (up to a predefined limit). Once the object is no longer needed, it’s returned to the pool for reuse.

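Here’s a simplified conceptual example using Python:

```python
import threading


class ObjectPool:
    def __init__(self, object_factory, pool_size):
        self.object_factory = object_factory
        self.max_size = pool_size
        # Pre-allocate pool_size objects up front.
        self.pool = [object_factory() for _ in range(pool_size)]
        self.lock = threading.Lock()  # For thread-safe access to self.pool

    def acquire(self):
        with self.lock:
            if self.pool:
                return self.pool.pop()  # Reuse an idle object
        # Pool is empty: create a new object instead.
        return self.object_factory()

    def release(self, obj):
        with self.lock:
            # Keep at most max_size idle objects; extras are left to the garbage collector.
            if len(self.pool) < self.max_size:
                self.pool.append(obj)


# Example usage:
class MyObject:
    pass


pool = ObjectPool(MyObject, 10)  # Pool of 10 MyObject instances
obj = pool.acquire()
# Use the object
pool.release(obj)
```

In this simplified version, the pool creates a new object whenever it is empty and limits only how many idle objects it keeps; enforcing a hard limit on the total number of live objects would require acquire() to block or raise an error instead. Pooled objects are also often reset to a clean state before they are reused.

The effectiveness of object pooling depends on the object’s lifecycle and the frequency of object creation/destruction. It’s most beneficial when objects are frequently created and destroyed, and the creation process is relatively expensive.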

Q 10. How do you optimize object transformation for large datasets?

Optimizing object transformation for large datasets requires a multi-pronged approach focusing on efficient algorithms, data structures, and potentially distributed processing. Key strategies include:

- Data Structure Selection: Choose data structures optimized for the specific transformations. For example, if you need frequent lookups, a hash table might be far superior to a linked list. If the order of elements is crucial, consider using arrays or vectors.

- Vectorization: Utilize vectorized operations whenever possible. Libraries like NumPy (for Python) or similar libraries in other languages allow performing operations on entire arrays at once, rather than element by element. This significantly speeds up processing.

- Parallel Processing: For extremely large datasets, consider parallel or distributed processing frameworks such as Spark or Hadoop. These frameworks can divide the dataset and perform transformations concurrently on multiple processors or machines.

- Lazy Evaluation: Employ lazy evaluation techniques to avoid unnecessary computations. Only transform data when absolutely needed. This is especially important when dealing with chained transformations.

- Stream Processing: Process the data as a stream instead of loading everything into memory at once. This is memory-efficient for very large datasets that don’t fit into RAM. Platforms such as Apache Kafka (a distributed event-streaming system) and Apache Flink (a stream-processing framework) are useful here.

- Algorithmic Optimization: Select efficient algorithms. For example, for sorting, merge sort or quicksort are generally faster than bubble sort for large datasets.

Profiling your code to identify bottlenecks is critical before applying optimization techniques. This helps pinpoint the most impactful areas for improvement.
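Lazy evaluation and stream processing can be sketched in plain Python with generators: each stage pulls one record at a time, so memory use stays flat regardless of input size. The stage names and the in-memory CSV are hypothetical stand-ins for a real file or stream.

```python
import csv
import io
from typing import Dict, Iterable, Iterator


def parse(lines: Iterable[str]) -> Iterator[Dict[str, str]]:
    # csv.DictReader consumes its input line by line.
    yield from csv.DictReader(lines)


def transform(records: Iterable[Dict[str, str]]) -> Iterator[dict]:
    for r in records:
        yield {"name": r["name"].title(), "age": int(r["age"])}


def adults(records: Iterable[dict]) -> Iterator[dict]:
    return (r for r in records if r["age"] >= 18)


source = io.StringIO("name,age\nalice,30\nbob,12\ncarol,45\n")
pipeline = adults(transform(parse(source)))  # builds the chain; nothing is read yet
total_age = sum(r["age"] for r in pipeline)  # records flow through one at a time
```

With a real file, passing open(path, newline="") in place of the StringIO keeps the same memory profile.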

Q 11. Discuss the trade-offs between using different data structures for object storage.

The choice of data structure for object storage significantly impacts performance and memory usage. The optimal structure depends on the specific operations you’ll perform. Here’s a comparison:

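- Arrays/Lists: Provide fast sequential access and, for arrays and array-backed lists, constant-time access by index. Finding an object by value, however, requires a linear scan, and inserting or deleting in the middle is slow because elements must be shifted. Suitable when you need to iterate through objects sequentially or access them by position.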

- Hash Tables (Dictionaries): Offer fast average-case access, insertion, and deletion using keys. Ideal for frequent lookups based on unique identifiers.

- Trees (e.g., Binary Search Trees, B-trees): Support efficient searching, insertion, and deletion, particularly when data needs to be sorted or ordered. Different tree types offer different performance characteristics.

- Graphs: Represent relationships between objects. Excellent for modeling networks or dependencies.

Trade-offs: Arrays/Lists are memory-efficient for contiguous data but slow for lookups by key or value. Hash tables provide fast access but require more memory and can suffer performance degradation with many collisions. Trees provide balanced search performance but have higher overhead than arrays. Graphs are suitable for relational data but can be complex to manage. The optimal choice requires careful consideration of access patterns and the overall application requirements.

For instance, if you frequently need to retrieve objects based on a unique ID, a hash table would be a superior choice compared to an array where you would need to linearly search.
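A small Python sketch of that ID-lookup example; User and the helper functions are hypothetical. The list lookup scans elements one by one (O(n)), while the dictionary, built once, answers each lookup in O(1) on average at the cost of extra memory.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class User:
    user_id: int
    name: str


users: List[User] = [User(i, f"user{i}") for i in range(100_000)]


def find_in_list(user_id: int) -> Optional[User]:
    # Linear search: may inspect every element.
    return next((u for u in users if u.user_id == user_id), None)


# Building the index costs O(n) time and extra memory once...
users_by_id: Dict[int, User] = {u.user_id: u for u in users}


def find_in_dict(user_id: int) -> Optional[User]:
    # ...after which each lookup is a single hash-table probe on average.
    return users_by_id.get(user_id)
```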

Q 12. Explain your experience with object-relational mapping (ORM).

Object-Relational Mapping (ORM) frameworks bridge the gap between object-oriented programming languages and relational databases. They allow you to interact with database tables using objects and methods instead of writing raw SQL queries. This increases developer productivity and makes database interaction more manageable.

My experience with ORMs includes using several popular frameworks like Hibernate (Java), Entity Framework (C#), and SQLAlchemy (Python). I’ve used them to build various applications, from small-scale projects to large enterprise systems.

ORMs offer several benefits: simplified data access, improved code readability, better database abstraction (allowing easier database migrations), and generally increased developer efficiency. However, there are also potential drawbacks. ORMs can sometimes introduce performance overhead, especially if complex queries are generated. They may also require careful understanding to avoid performance pitfalls related to lazy loading or inefficient query generation. Understanding the ORM’s query optimization strategies is vital to leverage its efficiency.

In real-world projects, I have found that effective ORM usage involves careful planning of the object-relational mapping, tuning query generation to minimize database interaction, and employing caching strategies to minimize database hits. It’s always important to strike a balance between the developer productivity advantages and the performance implications.
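The lazy-loading pitfall mentioned above is often called the N+1 query problem. The sketch below does not use an ORM; it uses Python's standard sqlite3 module with a hypothetical author/book schema to mimic the SQL an ORM typically emits with lazy loading (one query per parent row) versus eager loading (a single JOIN).

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE author (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE book (id INTEGER PRIMARY KEY, author_id INTEGER REFERENCES author(id), title TEXT);
    INSERT INTO author VALUES (1, 'Ann'), (2, 'Ben'), (3, 'Cy');
    INSERT INTO book (author_id, title) VALUES (1, 'A1'), (1, 'A2'), (2, 'B1'), (3, 'C1');
    """
)

query_count = 0


def query(sql, params=()):
    """Run a query and count it, standing in for an ORM's SQL log."""
    global query_count
    query_count += 1
    return conn.execute(sql, params).fetchall()


# Lazy-loading style: 1 query for the authors + 1 query per author.
query_count = 0
lazy = {}
for author_id, name in query("SELECT id, name FROM author ORDER BY id"):
    rows = query("SELECT title FROM book WHERE author_id = ? ORDER BY id", (author_id,))
    lazy[name] = [title for (title,) in rows]
lazy_queries = query_count

# Eager-loading style: one JOIN fetches everything.
query_count = 0
eager = {}
for name, title in query(
    "SELECT a.name, b.title FROM author a JOIN book b ON b.author_id = a.id ORDER BY a.id, b.id"
):
    eager.setdefault(name, []).append(title)
eager_queries = query_count
```

ORMs expose the same choice through their own APIs; in SQLAlchemy, for instance, loader options such as joinedload() and selectinload() request eager loading, and Hibernate offers JOIN FETCH in its query language.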

Q 13. Describe how you would implement a custom object comparator.

A custom object comparator is a function or class that compares two objects based on specific criteria. This is crucial for tasks like sorting, searching, or checking for equality where the default comparison might be inadequate.

In Python, you can either define the rich comparison methods (__eq__, __lt__, __gt__, etc.) on the class itself to give its objects a natural ordering, or write a standalone comparison function and adapt it with functools.cmp_to_key. In other languages, the implementation might vary (Java, for example, uses the Comparator interface), but the fundamental concept remains the same.

Here’s an example of a Python comparator for custom objects:

```python
from functools import cmp_to_key


class Person:
    def __init__(self, name, age):
        self.name = name
        self.age = age


def compare_persons(p1, p2):
    # Primary criterion: age
    if p1.age < p2.age:
        return -1
    elif p1.age > p2.age:
        return 1
    # Tie-breaker: name
    elif p1.name < p2.name:
        return -1
    elif p1.name > p2.name:
        return 1
    else:
        return 0  # Same age and same name


persons = [Person('Alice', 30), Person('Bob', 25), Person('Charlie', 30)]
persons.sort(key=cmp_to_key(compare_persons))
# persons is now sorted by age, then name: Bob (25), Alice (30), Charlie (30)
```

cmp_to_key is used to convert a comparison function to a key function, compatible with Python’s sort(). This approach provides flexibility for ordering objects based on your custom logic. You could compare based on multiple attributes, using a weighted scoring system, or any other criteria relevant to your application.
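The other Python option is to give the class a natural ordering through rich comparison methods. In this sketch (a hypothetical variant of the Person class), functools.total_ordering derives <=, > and >= from __eq__ and __lt__, and all comparisons use the same (age, name) key so that ordering, equality and hashing stay consistent.

```python
from functools import total_ordering


@total_ordering
class Person:
    def __init__(self, name, age):
        self.name = name
        self.age = age

    def _key(self):
        # Single source of truth for equality, ordering and hashing.
        return (self.age, self.name)

    def __eq__(self, other):
        if not isinstance(other, Person):
            return NotImplemented
        return self._key() == other._key()

    def __lt__(self, other):
        if not isinstance(other, Person):
            return NotImplemented
        return self._key() < other._key()

    def __hash__(self):
        # Defining __eq__ would otherwise make instances unhashable.
        return hash(self._key())


people = sorted([Person("Charlie", 30), Person("Bob", 25), Person("Alice", 30)])
```

When only one sort needs this order, a key function such as sorted(people, key=lambda p: (p.age, p.name)) is usually simpler than either approach.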

Q 14. How do you ensure data integrity during object transformations?

Data integrity is paramount during object transformations. Several techniques help maintain accuracy and consistency:

- Validation: Implement robust validation at each transformation step. Check data types, ranges, constraints, and referential integrity. This helps prevent invalid data from propagating through the transformation process.

- Error Handling: Thorough error handling is essential. Catch exceptions, log errors, and implement strategies for handling invalid data. Consider strategies like data scrubbing, default values, or skipping problematic records.

- Logging: Maintain a comprehensive audit trail of all transformations. Logging allows you to trace the origin of data errors and facilitates debugging.

- Transactions: For database interactions, use database transactions to ensure atomicity. All transformations within a transaction either succeed completely or fail completely, preventing partial updates that could corrupt data.

- Data Transformation Frameworks: Frameworks like Apache Kafka Streams or Apache Beam offer built-in capabilities for data validation, error handling, and monitoring during transformations at scale.

- Testing: Rigorous testing is crucial. Write unit tests, integration tests, and end-to-end tests to verify the correctness of transformations and ensure data integrity.

A combination of these strategies provides a multi-layered approach, making the system more resilient to errors and ensuring high data quality throughout the transformation pipeline.
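A minimal sketch combining validation and transactions with Python's built-in sqlite3 module. The person table, the validation rule (non-empty name, integer age between 0 and 150) and the load helper are hypothetical; the key behavior is that using the connection as a context manager commits on success and rolls back the whole batch if any record fails validation.

```python
import sqlite3


def validate(record: dict) -> None:
    """Raise ValueError if the record breaks an (assumed) business rule."""
    name, age = record.get("name"), record.get("age")
    if not isinstance(name, str) or not name.strip():
        raise ValueError(f"invalid name in {record!r}")
    if not isinstance(age, int) or not 0 <= age <= 150:
        raise ValueError(f"invalid age in {record!r}")


def load(conn: sqlite3.Connection, records: list) -> None:
    # 'with conn' commits if the block succeeds and rolls back if an exception escapes.
    with conn:
        for record in records:
            validate(record)
            conn.execute(
                "INSERT INTO person (name, age) VALUES (?, ?)",
                (record["name"], record["age"]),
            )


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE person (name TEXT NOT NULL, age INTEGER NOT NULL)")

load(conn, [{"name": "Alice", "age": 30}])
rejected = None
try:
    # Bob is valid but Eve is not, so neither row should be stored.
    load(conn, [{"name": "Bob", "age": 25}, {"name": "Eve", "age": -3}])
except ValueError as exc:
    rejected = str(exc)

stored = [row[0] for row in conn.execute("SELECT name FROM person ORDER BY name")]
```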

Q 15. How do you handle circular references during object serialization?

Circular references in object serialization occur when an object directly or indirectly refers to itself, creating a loop. This poses a problem because a naive serialization approach would lead to infinite recursion. The solution involves techniques to detect and break these cycles.

One common approach is to use a reference tracking mechanism. This involves maintaining a set of objects already serialized. Before serializing an object, we check if it’s already in this set. If it is, we serialize a reference instead of the object itself, typically represented as an ID. When deserializing, we use these IDs to reconstruct the object graph.

Another method involves using a graph traversal algorithm, such as Depth-First Search (DFS) or Breadth-First Search (BFS), to explore the object graph. While traversing, we can identify cycles and handle them appropriately, for example by adding a flag to prevent revisiting already visited nodes.

Consider this Python example (illustrative; specific serialization libraries handle this internally):

```python
class Node:
    def __init__(self, data):
        self.data = data
        self.children = []


node1 = Node(1)
node2 = Node(2)
node1.children.append(node2)
node2.children.append(node1)  # Circular reference

# Serialization with cycle detection would involve checking whether node1 or node2
# has already been processed before serializing it, to avoid infinite recursion.
```
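For context, Python's json.dumps raises ValueError ("Circular reference detected") on a cyclic structure of dicts or lists, while pickle handles cycles by memoizing objects it has already written. The sketch below makes the reference-tracking approach explicit for the Node class: each node gets an integer ID on first visit, later visits emit only that ID, and deserialization rebuilds the graph in two passes (create all nodes, then link them). The function names and the {id: {"data", "children"}} layout are illustrative assumptions, not a standard format.

```python
import json


class Node:
    def __init__(self, data):
        self.data = data
        self.children = []


def serialize(root: Node) -> str:
    ids = {}  # id(node) -> reference ID assigned on first visit
    out = {}  # reference ID -> {"data": ..., "children": [reference IDs]}

    def visit(node: Node) -> int:
        if id(node) in ids:  # already seen: emit a reference, do not recurse
            return ids[id(node)]
        ref = ids[id(node)] = len(ids)  # register BEFORE visiting children
        out[ref] = {"data": node.data, "children": []}
        out[ref]["children"] = [visit(child) for child in node.children]
        return ref

    visit(root)
    return json.dumps(out)


def deserialize(text: str) -> Node:
    raw = json.loads(text)  # JSON object keys come back as strings
    nodes = {int(ref): Node(entry["data"]) for ref, entry in raw.items()}  # pass 1: create
    for ref, entry in raw.items():  # pass 2: link
        nodes[int(ref)].children = [nodes[child] for child in entry["children"]]
    return nodes[0]  # the root always receives ID 0


node1 = Node(1)
node2 = Node(2)
node1.children.append(node2)
node2.children.append(node1)  # circular reference

text = serialize(node1)
restored = deserialize(text)
```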


Q 16. Explain your experience with different object serialization formats (e.g., JSON, XML, Protobuf).

I have extensive experience with several object serialization formats. Each has its strengths and weaknesses.

- JSON (JavaScript Object Notation): JSON is ubiquitous due to its human-readable format, broad language support, and lightweight nature. It’s ideal for data exchange between web applications and servers, but it has no built-in schema validation (a separate specification, JSON Schema, is commonly used for that) and is less extensible than some other formats. I’ve often used JSON for REST APIs and configuration files.

- XML (Extensible Markup Language): XML provides strong schema definition using XSD, allowing for data validation and improved structure. It’s self-describing and widely supported but is often more verbose than JSON, leading to larger file sizes. I used XML in projects requiring strict data validation and compatibility with legacy systems.

- Protobuf (Protocol Buffers): Protobuf excels in performance and efficiency. Its binary format is compact and schema-defined, making it ideal for high-volume data exchange between services. It’s particularly beneficial for internal communication within a distributed system. I’ve used Protobuf for gRPC services and inter-process communication in large-scale systems where performance is paramount.

The choice depends entirely on the specific needs of the project. For instance, rapid prototyping often favors the simplicity of JSON. For demanding performance requirements or complex, well-defined data structures, Protobuf is a clear choice. XML serves its purpose when strict schema validation and compatibility with legacy systems are essential.

Q 17. Discuss the challenges of transforming objects between different data models.

Transforming objects between different data models presents several challenges. One major hurdle is the mismatch in structures and attributes. For example, transforming data from a relational database (tables and rows) to a graph database (nodes and edges) requires a considerable mapping effort.

Data type discrepancies also arise, such as converting date formats, handling different numeric precisions, and handling missing values. Handling relationships between objects can be complex, especially when dealing with many-to-many relationships or hierarchical data structures. Finally, maintaining data integrity during transformation is critical; ensuring that data is not lost or corrupted during the process demands rigorous testing and validation.

Techniques to mitigate these challenges include:

- Mapping definitions: Create explicit mappings between the source and target data models, defining rules for data transformation and handling inconsistencies.

- Data transformation libraries: Leveraging libraries like Apache Camel or custom transformation scripts can streamline the process.

- Data validation: Implementing data validation at each step to ensure the integrity of the transformed data.

I’ve addressed these challenges in various projects by designing and implementing custom mapping tools, ensuring that the transformations are efficient, accurate, and maintainable.
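An explicit mapping definition can be as simple as a table of rules. In this hypothetical Python sketch, each target field names its source field, a converter and a default for missing values; the field names, the DD/MM/YYYY input format and the conversion of salaries to integer cents are assumptions chosen to show renaming, date-format conversion and precision handling in one place.

```python
from datetime import datetime
from decimal import Decimal
from typing import Any, Callable, Dict, Tuple

# target field -> (source field, converter, default used when the source value is missing)
Rule = Tuple[str, Callable[[Any], Any], Any]
MAPPING: Dict[str, Rule] = {
    "full_name": ("name", lambda s: s.strip(), None),
    "birth_date": ("dob", lambda s: datetime.strptime(s, "%d/%m/%Y").date().isoformat(), None),
    # Decimal avoids binary floating-point rounding when changing precision.
    "salary_cents": ("salary", lambda s: int(Decimal(s) * 100), 0),
}


def transform(source: Dict[str, Any]) -> Dict[str, Any]:
    target = {}
    for target_field, (source_field, convert, default) in MAPPING.items():
        value = source.get(source_field)
        target[target_field] = default if value in (None, "") else convert(value)
    return target
```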

Q 18. How do you approach debugging object manipulation and transformation errors?

Debugging object manipulation and transformation errors requires a methodical approach.

Logging is crucial – placing strategic log statements at different stages of the transformation process reveals the state of the objects and data at each point. This helps pinpoint exactly where the error occurs.

Debuggers are invaluable. Setting breakpoints allows us to inspect variables and step through the code execution, observing the behavior of the objects and the transformations. Watch expressions help monitor the values of specific properties during runtime.

Unit testing is fundamental. Write thorough unit tests to cover various scenarios and edge cases in your object manipulation logic. Comprehensive tests greatly improve the likelihood of catching errors early and provide confidence in the correctness of the transformation process.

Finally, using assertion statements within the code itself can identify inconsistencies immediately. For example, check that data types match expectations, and ranges are within allowed bounds.

By combining these techniques, I’ve been able to effectively isolate and resolve a variety of complex errors in object manipulation and transformation, reducing debugging time and increasing overall system reliability.
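A compact Python sketch of logging, assertions and unit tests working together. normalize_scores is a hypothetical transformation; note that assert statements are stripped when Python runs with the -O flag, so they complement rather than replace real input validation.

```python
import logging
import unittest

log = logging.getLogger("transform")


def normalize_scores(scores):
    """Scale non-negative scores into the range 0..1 (hypothetical transformation)."""
    log.debug("normalize_scores input: %r", scores)
    # Assertions document and check assumptions at the point where they matter.
    assert all(isinstance(s, (int, float)) and s >= 0 for s in scores), "scores must be non-negative numbers"
    top = max(scores, default=0)
    result = [s / top if top else 0.0 for s in scores]
    assert all(0.0 <= r <= 1.0 for r in result), "result out of range"
    log.debug("normalize_scores output: %r", result)
    return result


class NormalizeScoresTest(unittest.TestCase):
    def test_scales_to_unit_range(self):
        self.assertEqual(normalize_scores([0, 5, 10]), [0.0, 0.5, 1.0])

    def test_all_zero_input(self):
        self.assertEqual(normalize_scores([0, 0]), [0.0, 0.0])
```

Running the tests with python -m unittest, after calling logging.basicConfig(level=logging.DEBUG) if the debug trace is wanted, shows both the test results and the intermediate object state.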

Q 19. Describe your experience with using reflection for object manipulation.

Reflection is a powerful technique allowing us to inspect and manipulate objects at runtime, even without prior knowledge of their structure. It’s particularly useful when dealing with generic object manipulation tasks or interacting with external systems where the data format isn’t pre-defined.

I’ve used reflection extensively in scenarios where I need to dynamically access object properties, invoke methods, or create instances of classes. For instance, I’ve used reflection in projects dealing with:

- Object-relational mapping (ORM): Automating the mapping of database tables to object properties.

- Data serialization/deserialization: Dynamically handling various object types during serialization processes.

- Extensibility frameworks: Creating plugins or extensions that interact with existing systems without requiring compile-time dependencies.

However, reflection should be used carefully. It can reduce code readability and maintainability, making debugging more challenging. Excessive reflection can also introduce performance overhead. Therefore, it’s best to utilize reflection only when necessary and carefully weigh the trade-offs.
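Python exposes similar runtime introspection through built-ins such as vars(), getattr(), setattr() and hasattr(), plus the inspect module. In this sketch, Person and the to_dict and set_fields helpers are hypothetical; set_fields refuses unknown attribute names, a small safeguard against reflection silently turning a typo into a new field.

```python
import inspect


class Person:
    def __init__(self, name, age):
        self.name = name
        self.age = age

    def greet(self):
        return f"Hello, {self.name}"


def to_dict(obj):
    # vars() returns the instance __dict__ without knowing the class in advance.
    return dict(vars(obj))


def set_fields(obj, values):
    for key, value in values.items():
        if not hasattr(obj, key):
            raise AttributeError(f"{type(obj).__name__} has no attribute {key!r}")
        setattr(obj, key, value)


p = Person("Alice", 30)
set_fields(p, {"age": 31})
greet = getattr(p, "greet")  # look up a method by name at runtime
public_methods = [
    name
    for name, _ in inspect.getmembers(Person, inspect.isfunction)
    if not name.startswith("_")
]
```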

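An example in Java:

```java
import java.lang.reflect.Method;

public class ListMethods {
    public static void main(String[] args) {
        Class<?> clazz = String.class; // Replace String with your own class, e.g. MyClass
        Method[] methods = clazz.getMethods(); // All public methods, including inherited ones
        for (Method method : methods) {
            System.out.println(method.getName());
        }
    }
}
```

Q 20. Explain how you would handle object inheritance during object transformations.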

Object inheritance presents unique challenges during object transformations. The approach depends on how inheritance is handled in the source and target data models. A naive approach could fail to account for properties inherited from parent classes.

A common approach is to use a polymorphic transformation strategy. This strategy involves considering the object’s type during the transformation. Specific transformation rules are applied based on the class hierarchy. You might handle base classes differently from derived classes. For example, you might transform base class properties first and then add properties specific to the derived class.

Another option is to flatten the inheritance hierarchy during transformation. This involves explicitly including all relevant properties from the parent classes in the transformed object, avoiding any implicit inheritance lookup during the transformation process. This simplifies the transformation process, but can lead to redundancy.

The choice between these approaches depends on the nature of the data models and performance requirements. If performance is critical, and inheritance plays a minor role, flattening the hierarchy may be efficient. Otherwise, a polymorphic strategy allows for a more flexible and maintainable solution.
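Both approaches can be sketched in Python with hypothetical Vehicle, Car and Truck dataclasses. dataclasses.asdict() flattens an object into a dict that includes inherited fields, while functools.singledispatch selects a transformer by runtime type and falls back along the class hierarchy, so Truck, which has no dedicated handler, reuses the default one.

```python
from dataclasses import asdict, dataclass
from functools import singledispatch


@dataclass
class Vehicle:
    vin: str
    wheels: int


@dataclass
class Car(Vehicle):
    seats: int


@dataclass
class Truck(Vehicle):
    payload_kg: int


# Flattening: asdict() includes every field, inherited ones first.
car = Car(vin="V1", wheels=4, seats=5)
flat = asdict(car)


# Polymorphic strategy: the implementation is chosen by the runtime type.
@singledispatch
def to_record(vehicle) -> dict:
    # Default implementation, used for Vehicle and for subclasses without their own handler.
    return {"type": "vehicle", "vin": vehicle.vin, "wheels": vehicle.wheels}


@to_record.register
def _(vehicle: Car) -> dict:
    record = to_record.dispatch(Vehicle)(vehicle)   # base-class properties first...
    record.update(type="car", seats=vehicle.seats)  # ...then subclass-specific ones
    return record


car_record = to_record(car)
truck_record = to_record(Truck(vin="T1", wheels=6, payload_kg=9000))
```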

Q 21. What are the performance implications of using different object manipulation techniques?

The performance implications of various object manipulation techniques vary significantly. Simple property accesses are generally efficient, while complex operations, such as reflection or deep cloning, can introduce considerable overhead.

Direct property access is the fastest, especially when dealing with primitive data types. Shallow copying is generally efficient if you don’t need to duplicate deeply nested objects. Deep copying, in contrast, can be computationally expensive if the objects have many nested references, creating a significant performance bottleneck.

Reflection carries a significant performance penalty because members are looked up dynamically at runtime rather than resolved at compile time. This is particularly pronounced when working with large object graphs. Similarly, complex transformations involving many operations on the object can drastically affect performance. This is why optimization strategies like batch processing or choosing appropriate data structures (for example, immutable objects that can be shared safely instead of defensively copied) are essential.

Finally, serialization format selection influences performance. Compact binary formats like Protobuf typically outperform more verbose formats like XML in both serialization/deserialization speed and network bandwidth usage.

In my experience, profiling is crucial for identifying performance bottlenecks. I use profiling tools to pinpoint areas that require optimization, allowing me to make informed decisions to improve performance while maintaining code quality.
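In the spirit of measuring rather than guessing, this hypothetical micro-benchmark uses Python's timeit module to compare direct attribute access, a by-name getattr() lookup, and shallow versus deep copies of a nested object graph. Absolute numbers depend on the machine and interpreter, so no results are shown; the relative ordering is what to look at.

```python
import copy
import timeit


class Node:
    def __init__(self, value, children=()):
        self.value = value
        self.children = list(children)


# Hypothetical object graph: 1 root, 100 children, 10 grandchildren each (1,101 nodes).
root = Node(0, [Node(i, [Node(j) for j in range(10)]) for i in range(100)])

candidates = {
    "direct attribute": lambda: root.value,
    "getattr by name": lambda: getattr(root, "value"),
    "shallow copy": lambda: copy.copy(root),
    "deep copy": lambda: copy.deepcopy(root),
}

RUNS = 100
timings = {name: timeit.timeit(fn, number=RUNS) / RUNS for name, fn in candidates.items()}

for name, seconds in sorted(timings.items(), key=lambda item: item[1]):
    print(f"{name:<16} {seconds * 1e6:10.2f} us per call")
```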

Q 22. How do you ensure thread safety when manipulating objects concurrently?

Ensuring thread safety when manipulating objects concurrently is crucial to prevent data corruption and unexpected behavior. The core principle is to avoid race conditions, where multiple threads access and modify shared objects simultaneously without proper synchronization. This can lead to inconsistent data and program crashes.

Synchronization Mechanisms: The most common approach is using synchronization primitives like mutexes (mutual exclusion locks), semaphores, or monitors. Mutexes, for instance, allow only one thread to access a shared resource at a time. Before accessing the object, a thread acquires the mutex; after finishing, it releases it. This ensures exclusive access.

Immutable Objects: If possible, design your objects as immutable. Immutable objects cannot be modified after creation. This eliminates the possibility of race conditions because multiple threads can safely access and read an immutable object without any need for synchronization.

Thread-Local Storage: If an object doesn’t need to be shared among threads, store it in thread-local storage. Each thread will have its own copy, avoiding concurrency issues.

Concurrent Collections: Many programming languages provide concurrent collections (e.g., ConcurrentHashMap in Java) specifically designed for thread-safe operations. These collections handle synchronization internally, simplifying development.
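Immutable objects and thread-local storage can be combined in a short Python sketch. Everything here is hypothetical and chosen for illustration: `Config` is a frozen dataclass that all threads read without locking, `_local` is a `threading.local()` giving each thread its own `buffer`, and only the shared, mutable `results` dict is protected by a lock.

```python
import threading
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Config:
    # Hypothetical settings object. frozen=True makes it immutable, so any
    # number of threads can read a shared instance without locking.
    host: str
    port: int


shared_config = Config("localhost", 8080)

# Thread-local storage: attributes set on this object are private to each thread.
_local = threading.local()

results = {}
results_lock = threading.Lock()  # guards the shared, mutable results dict


def worker(name):
    _local.buffer = []  # this thread's own list; other threads never see it
    for i in range(3):
        _local.buffer.append(f"{name}:{i}:{shared_config.port}")
    # "Modifying" an immutable object means building a new instance.
    derived = replace(shared_config, port=shared_config.port + 1)
    with results_lock:
        results[name] = (list(_local.buffer), derived.port)


threads = [threading.Thread(target=worker, args=(f"t{n}",)) for n in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(results["t0"])  # (['t0:0:8080', 't0:1:8080', 't0:2:8080'], 8081)
```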

Example (Java with Mutex):

public class ThreadSafeCounter {
    private int count = 0;
    // Dedicated lock object used as a mutex for all access to count.
    private final Object lock = new Object();

    public void increment() {
        synchronized (lock) {
            count++;
        }
    }

    public int getCount() {
        synchronized (lock) {
            return count;
        }
    }
}

In this example, the synchronized blocks ensure that only one thread at a time can read or modify count. Synchronizing getCount() as well guarantees that readers see the most recent value.

Q 23. Describe your experience with using memory management techniques for objects.

Memory management for objects is critical for performance and preventing memory leaks. My experience spans several techniques:

Garbage Collection (GC): In languages like Java, C#, and Python, the runtime automatically reclaims memory occupied by objects that are no longer reachable (CPython combines reference counting with a cycle-detecting collector). Understanding GC algorithms (mark-and-sweep, generational GC) helps optimize performance by tuning heap size and GC settings. I've worked extensively with GC tuning to minimize pauses and improve application responsiveness.

Manual Memory Management (C++): In C++, I've used smart pointers (unique_ptr, shared_ptr, weak_ptr) to manage object lifetimes effectively. These help prevent memory leaks and dangling pointers by automatically releasing memory when objects are no longer needed. Understanding RAII (Resource Acquisition Is Initialization) is essential for robust memory management in C++.

Object Pooling: For frequently created and destroyed objects, object pooling is highly beneficial. It pre-allocates a set of objects and reuses them, reducing the overhead of object creation and garbage collection. I've successfully implemented object pools in performance-critical applications to improve throughput.

Weak References: When you need to track objects without preventing them from being garbage collected, weak references are invaluable. They are crucial in scenarios like caching or event handling, where strong references (including circular ones) would otherwise keep objects alive and cause memory leaks.
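A weak-reference cache can be sketched with Python's `weakref.WeakValueDictionary`. The `Texture` class and `load_texture` helper are hypothetical. The cache hands out the same object while someone still holds it, and the entry disappears once the last strong reference is dropped, so the cache never keeps objects alive by itself.

```python
import gc
import weakref


class Texture:
    """Hypothetical heavy resource used only for illustration."""

    def __init__(self, name: str) -> None:
        self.name = name


# Values are held weakly: an entry vanishes when no strong reference remains.
cache = weakref.WeakValueDictionary()


def load_texture(name: str) -> Texture:
    tex = cache.get(name)
    if tex is None:
        tex = Texture(name)
        cache[name] = tex
    return tex


a = load_texture("grass")
b = load_texture("grass")
assert a is b  # the second call is served from the cache

del a, b
gc.collect()  # CPython frees it via refcounting already; collect() covers other runtimes
print("grass" in cache)  # False once the last strong reference is gone
```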

For example, in a game engine, object pooling might be used to manage projectiles or enemy characters, improving performance during intense battles.
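A minimal object pool for that projectile scenario might look like the following Python sketch. `Projectile` and `ProjectilePool` are hypothetical names, and growing the pool on exhaustion is one design choice among several (a fixed-size pool could refuse or block instead). Objects are reset on release so stale state never leaks into the next user.

```python
class Projectile:
    """Hypothetical game object; fields are illustrative."""

    def __init__(self) -> None:
        self.reset()

    def reset(self) -> None:
        self.x = 0.0
        self.y = 0.0
        self.active = False


class ProjectilePool:
    def __init__(self, size: int) -> None:
        # Pre-allocate all objects up front.
        self._free = [Projectile() for _ in range(size)]
        self.created = size

    def acquire(self, x: float, y: float) -> Projectile:
        # Reuse a free object if one exists; allocate only when the pool is empty.
        if self._free:
            p = self._free.pop()
        else:
            p = Projectile()
            self.created += 1
        p.x, p.y, p.active = x, y, True
        return p

    def release(self, p: Projectile) -> None:
        # Reset state before returning the object to the pool.
        p.reset()
        self._free.append(p)


pool = ProjectilePool(size=2)
shot = pool.acquire(1.0, 2.0)
pool.release(shot)
again = pool.acquire(5.0, 6.0)
print(again is shot, pool.created)  # True 2
```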

Q 24. How would you approach the problem of object graph traversal?

Object graph traversal involves systematically visiting all objects within a network of interconnected objects. The approach depends on the graph’s structure and the traversal’s purpose.

Depth-First Search (DFS): DFS explores a branch as far as possible before backtracking. It’s suitable for finding paths, detecting cycles, or copying object graphs.

Breadth-First Search (BFS): BFS visits all neighbors of a node before moving to the next level. This is useful for finding the shortest path in an unweighted graph or identifying connected components.

Iterators and Visitors: Using iterators provides a generic way to traverse collections within objects. Visitors allow defining operations to be performed on different object types during traversal without modifying the object classes themselves.

Recursive approaches: Recursion is a natural fit for DFS, especially when dealing with tree-like structures. However, recursive calls can lead to stack overflow errors for deep graphs.

Handling Cycles: When the graph contains cycles, it’s crucial to implement a mechanism (e.g., visited set) to prevent infinite loops. This ensures the traversal terminates correctly.

Example (Python DFS):

def dfs(node, visited):
    visited.add(node)
    print(node)
    for neighbor in node.neighbors:
        if neighbor not in visited:
            dfs(neighbor, visited)

This example recursively explores a graph using DFS, keeping track of visited nodes to avoid cycles.
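The same `neighbors` convention supports BFS and a non-recursive DFS. This sketch assumes a minimal hypothetical `Node` class. BFS uses `collections.deque` as a FIFO queue. The iterative DFS replaces recursion with an explicit stack, which sidesteps Python's recursion limit on deep graphs. Both keep a visited set so the cycle `d -> a` does not cause an infinite loop.

```python
from collections import deque


class Node:
    """Minimal hypothetical node with a `neighbors` list."""

    def __init__(self, name):
        self.name = name
        self.neighbors = []


def bfs_order(start):
    # Mark nodes when they are enqueued so each is queued at most once.
    visited = {start}
    order = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        order.append(node.name)
        for neighbor in node.neighbors:
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(neighbor)
    return order


def dfs_order_iterative(start):
    # An explicit stack replaces the call stack, so depth is limited only by memory.
    visited = set()
    order = []
    stack = [start]
    while stack:
        node = stack.pop()
        if node in visited:
            continue
        visited.add(node)
        order.append(node.name)
        # Push neighbors in reverse so they are explored in their listed order.
        stack.extend(reversed(node.neighbors))
    return order


a, b, c, d = (Node(n) for n in "abcd")
a.neighbors = [b, c]
b.neighbors = [d]
c.neighbors = [d]
d.neighbors = [a]  # cycle back to the start

print(bfs_order(a))            # ['a', 'b', 'c', 'd']
print(dfs_order_iterative(a))  # ['a', 'b', 'd', 'c']
```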

Q 25. Explain your understanding of object lifecycle management.

Object lifecycle management encompasses all stages of an object’s existence, from creation to destruction. Effective management is essential for resource efficiency and preventing errors.

Creation: This involves allocating memory and initializing the object’s state. Constructors play a key role in this stage.

Usage: During its lifespan, the object is used for its intended purpose, participating in application logic.

Destruction: Proper cleanup, releasing the resources (files, network connections, etc.) held by the object, is vital. Destructors (or finally blocks) handle this, preventing resource leaks.

Object Pools: As mentioned before, object pooling significantly impacts lifecycle management, improving performance and memory utilization by reusing objects.

Dependency Injection: Dependency injection moves the creation and disposal of an object's dependencies out of the object itself and into a container or the caller. This makes lifecycles easier to manage and objects easier to test.

Proper lifecycle management avoids memory leaks, dangling pointers, and resource exhaustion, leading to a more stable and reliable application.
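The creation, usage, and destruction stages map naturally onto a Python context manager, which plays the same role as a `finally` block. `ManagedConnection` is a hypothetical wrapper that records each stage in a log. The usage below raises an exception inside the `with` block to show that cleanup still runs.

```python
class ManagedConnection:
    """Hypothetical resource wrapper illustrating create -> use -> destroy."""

    def __init__(self, name: str, log: list) -> None:
        # Creation: acquire the resource and initialize state.
        self.name = name
        self.log = log
        self.open = True
        log.append(f"open {name}")

    def send(self, msg: str) -> None:
        # Usage: refuse to operate on a released resource.
        if not self.open:
            raise RuntimeError("connection already closed")
        self.log.append(f"send {msg}")

    def close(self) -> None:
        # Destruction: idempotent release, safe to call more than once.
        if self.open:
            self.open = False
            self.log.append(f"close {self.name}")

    def __enter__(self):
        return self

    def __exit__(self, exc_type, exc, tb):
        # Runs on normal exit and when an exception escapes, like `finally`.
        self.close()
        return False  # do not suppress the exception


log = []
try:
    with ManagedConnection("db", log) as conn:
        conn.send("hello")
        raise ValueError("boom")
except ValueError:
    pass
print(log)  # ['open db', 'send hello', 'close db']
```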

Q 26. Describe your experience with working with different object-oriented programming languages (e.g., Java, C++, Python).

I have extensive experience with several object-oriented programming languages:

Java: I’ve built large-scale applications using Java, leveraging its rich libraries and robust ecosystem. My experience includes working with collections frameworks, multithreading, and design patterns.

C++: I’m proficient in C++, specializing in memory management and performance optimization. I’ve worked on projects requiring low-level control and high performance, focusing on game development and high-frequency trading.

Python: Python’s flexibility and extensive libraries are invaluable for rapid prototyping and scripting. I’ve used Python for data processing, machine learning, and automating tasks, often integrating it with other languages.

In each language, I adapt my approach to the language's strengths and limitations, choosing appropriate design patterns and techniques for the task at hand. For instance, while I might use smart pointers heavily in C++ for memory management, in Python I rely on the garbage collector and focus on efficient algorithms and data structures instead.

Q 27. How do you choose the appropriate data structures for efficient object manipulation?

Selecting the right data structure is paramount for efficient object manipulation. The optimal choice depends on the specific operations and access patterns.

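Arrays/Lists: Excellent for sequential access and for direct indexing, which is O(1). However, insertions and deletions in the middle are O(n), because the following elements must be shifted.

Linked Lists: Efficient when frequent insertions and deletions are needed, including in the middle of the sequence, provided you already hold a reference to the node (the operation itself is O(1)). Random access is O(n), because the list must be walked.

Trees (Binary Trees, AVL Trees, B-Trees): Efficient for searching, keeping data sorted, and storing hierarchical data. Balanced trees such as AVL trees and B-trees keep search, insertion, and deletion at O(log n), while an unbalanced binary tree can degrade to O(n); the types differ in how strictly they maintain balance and what that costs.

Hash Tables/Sets: Provide fast average-case O(1) lookups, insertions, and deletions. They are suitable for key-value storage and membership checks.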

Graphs: Represent relationships between objects. Suitable for network analysis, social networks, or dependency management.

For example, if you need to frequently search for objects based on a unique identifier, a hash table would be the ideal choice. If you need to maintain a sorted order, a tree structure would be more appropriate.
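Both cases from the example above can be sketched in Python, assuming a hypothetical `Employee` record. A `dict` serves as the hash table for lookups by unique ID. Python's standard library has no balanced tree, so this sketch maintains sorted order with a sorted list and `bisect` as a stand-in: range queries use binary search in O(log n), but unlike a real tree, each insertion is O(n) because list elements shift.

```python
import bisect
from dataclasses import dataclass


@dataclass
class Employee:
    # Hypothetical record type for illustration.
    emp_id: int
    name: str
    salary: int


employees = [Employee(7, "Ana", 5200), Employee(3, "Ben", 4100), Employee(9, "Cy", 6100)]

# Hash table: average O(1) lookup by unique identifier.
by_id = {e.emp_id: e for e in employees}

# Sorted (salary, emp_id) keys: a stand-in for a tree-based ordered index.
by_salary = sorted((e.salary, e.emp_id) for e in employees)


def add_employee(e: Employee) -> None:
    by_id[e.emp_id] = e
    bisect.insort(by_salary, (e.salary, e.emp_id))  # keeps the list sorted


def earning_at_least(threshold: int) -> list:
    # (threshold,) sorts before any (threshold, id), so equal salaries are included.
    start = bisect.bisect_left(by_salary, (threshold,))
    return [by_id[emp_id].name for _, emp_id in by_salary[start:]]


add_employee(Employee(5, "Dee", 4800))
print(by_id[3].name)           # Ben
print(earning_at_least(5000))  # ['Ana', 'Cy']
```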

Q 28. What strategies would you use to reduce the complexity of object transformations?

Reducing the complexity of object transformations involves several strategies:

Decomposition: Break down complex transformations into smaller, more manageable steps. This improves code readability and maintainability.

Abstraction: Create abstract classes or interfaces to represent transformations, hiding implementation details and allowing for easier swapping of algorithms.

Design Patterns: Employ patterns like the Command pattern to encapsulate individual transformation operations. This simplifies execution and sequencing of steps.

Data Transformation Libraries: Leverage existing libraries (e.g., Pandas in Python, Apache Spark), which offer optimized functions for data manipulation. This avoids reinventing the wheel and lets you benefit from their optimized implementations.

Functional Programming: Use functional programming idioms (map, filter, reduce) to express transformations concisely. Because pure functions have no side effects, such pipelines are also easier to parallelize, which can further improve performance.

Data Normalization: Before transformations, normalizing the data structure can make the transformation process simpler and more efficient.

By applying these strategies, you can create more modular, efficient, and maintainable code for object transformations.
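Decomposition and functional composition can be combined in a few lines of Python. The record shape and step functions below are hypothetical. Each step is a small pure function that returns a new dict, `compose` chains them with `functools.reduce`, and `map`/`filter` apply the resulting pipeline to a list of records.

```python
from functools import reduce
from typing import Any, Callable, Dict

Record = Dict[str, Any]
Step = Callable[[Record], Record]


# Decomposition: each step is small, pure, and testable on its own.
def normalize_keys(r: Record) -> Record:
    return {k.strip().lower(): v for k, v in r.items()}


def parse_age(r: Record) -> Record:
    return {**r, "age": int(r["age"])}


def add_is_adult(r: Record) -> Record:
    return {**r, "is_adult": r["age"] >= 18}


def compose(*steps: Step) -> Step:
    # Apply the steps left to right, feeding each output into the next step.
    return lambda r: reduce(lambda acc, step: step(acc), steps, r)


pipeline = compose(normalize_keys, parse_age, add_is_adult)

raw = [{" Name": "Ana", "AGE ": "34"}, {"name": "Ben", "Age": "12"}]
# map transforms every record; filter keeps only adults.
adults = list(filter(lambda r: r["is_adult"], map(pipeline, raw)))
print(adults)  # [{'name': 'Ana', 'age': 34, 'is_adult': True}]
```

Because the steps never mutate their input, reordering, reusing, or parallelizing them does not require reasoning about shared state.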

Key Topics to Learn for Object Manipulation and Transformation Interviews

- Object-Oriented Programming Principles: Deep understanding of encapsulation, inheritance, and polymorphism as they relate to object manipulation. Consider how these principles impact code efficiency and maintainability.

- Data Structures and Algorithms: Explore how different data structures (arrays, linked lists, trees, graphs) influence object manipulation efficiency. Practice algorithms for searching, sorting, and manipulating objects within these structures.

- Design Patterns: Familiarize yourself with common design patterns (e.g., Factory, Singleton, Observer) that facilitate effective object creation, interaction, and transformation. Understand when to apply each pattern and their impact on system design.

- Object Serialization and Deserialization: Learn techniques for converting objects into a storable format (e.g., JSON, XML) and reconstructing them. This is crucial for data persistence and interoperability.

- Memory Management: Understand how memory is allocated and deallocated for objects, particularly in languages with manual memory management. Grasp concepts like garbage collection and memory leaks.

- Object Cloning and Copying: Explore the nuances of creating copies of objects, differentiating between shallow and deep copies and their implications. Understand the performance trade-offs involved.

- Practical Applications: Consider real-world scenarios where object manipulation and transformation are critical, such as game development (object movement, collision detection), data processing (data transformation pipelines), and software engineering (model-view-controller architectures).

- Problem-Solving Strategies: Practice breaking down complex problems into smaller, manageable tasks related to object manipulation. Develop a systematic approach to designing and implementing solutions.
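The shallow vs. deep copy distinction listed above can be seen with Python's `copy` module. `Team` is a hypothetical class holding a mutable list. A shallow copy creates a new outer object that still shares the inner list, while a deep copy duplicates the inner list too, at the cost of copying the whole object graph.

```python
import copy


class Team:
    """Hypothetical object holding a mutable list of members."""

    def __init__(self, name, members):
        self.name = name
        self.members = members


original = Team("core", ["ana", "ben"])
shallow = copy.copy(original)   # new Team object, same members list
deep = copy.deepcopy(original)  # new Team object with its own members list

original.members.append("cy")
print(shallow.members)  # ['ana', 'ben', 'cy'] (shared list)
print(deep.members)     # ['ana', 'ben'] (independent copy)
```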

Next Steps

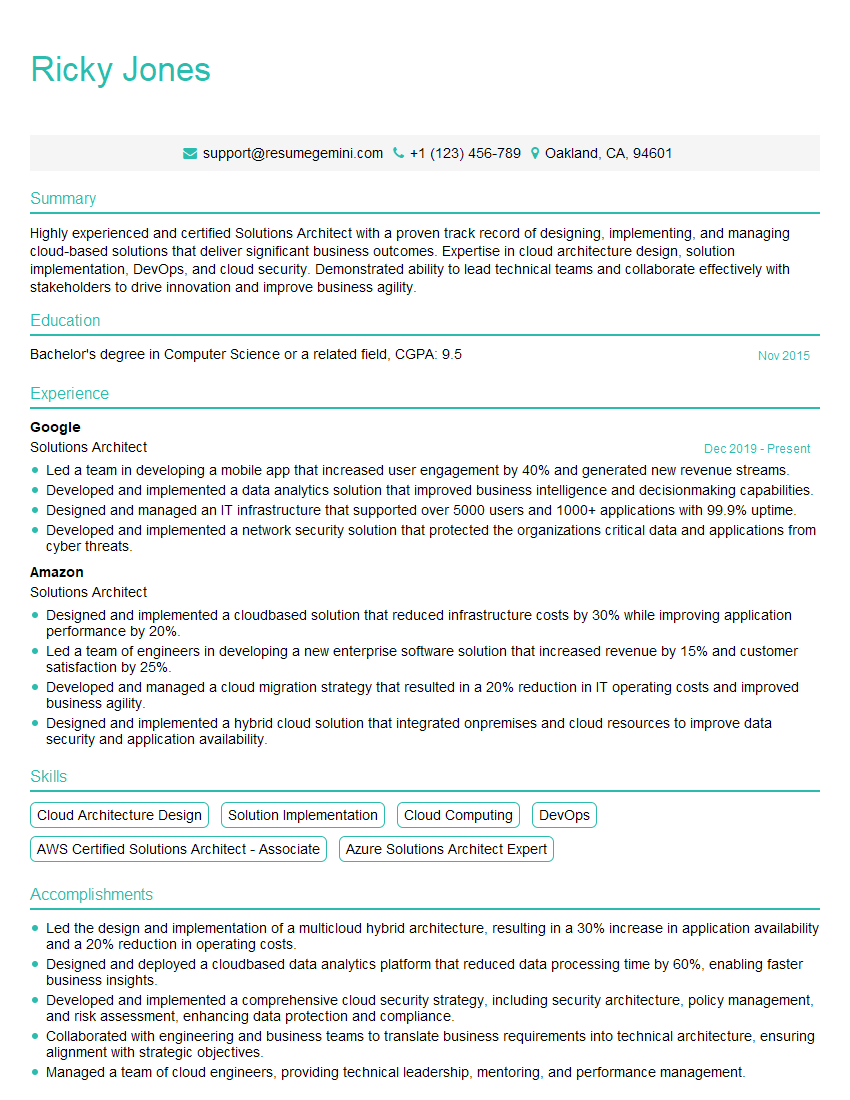

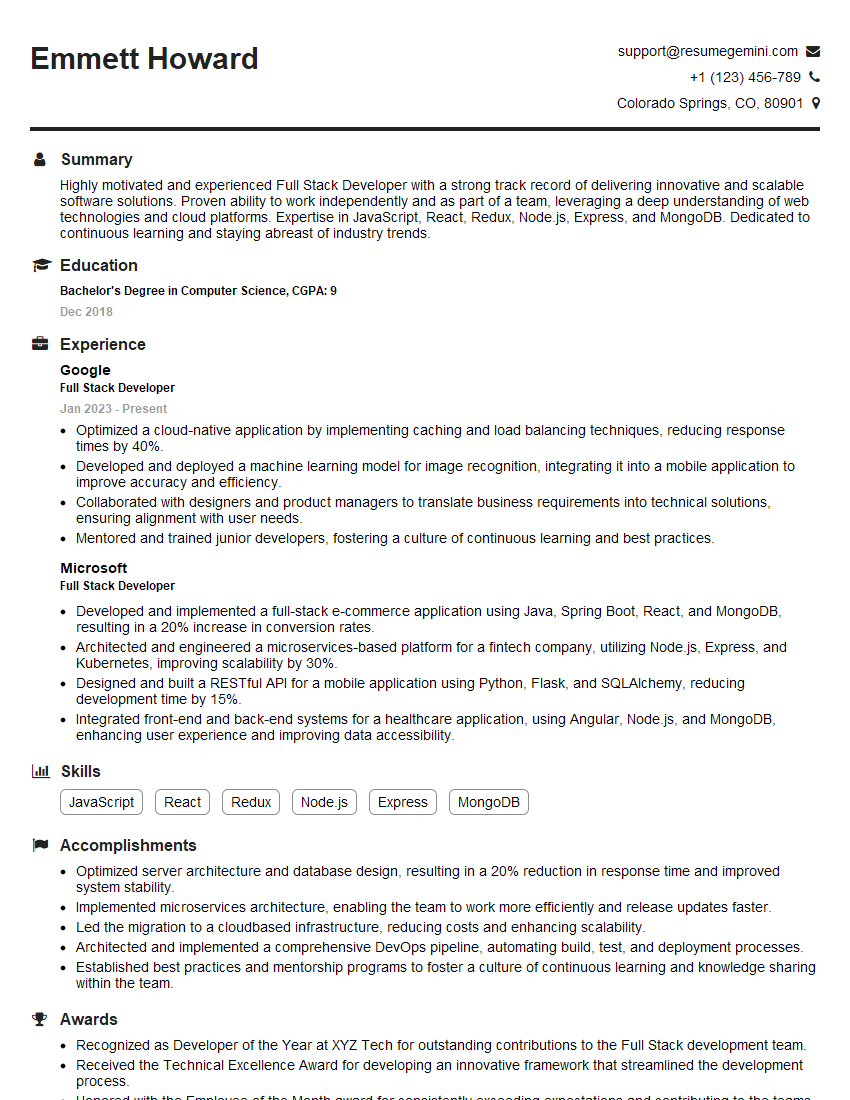

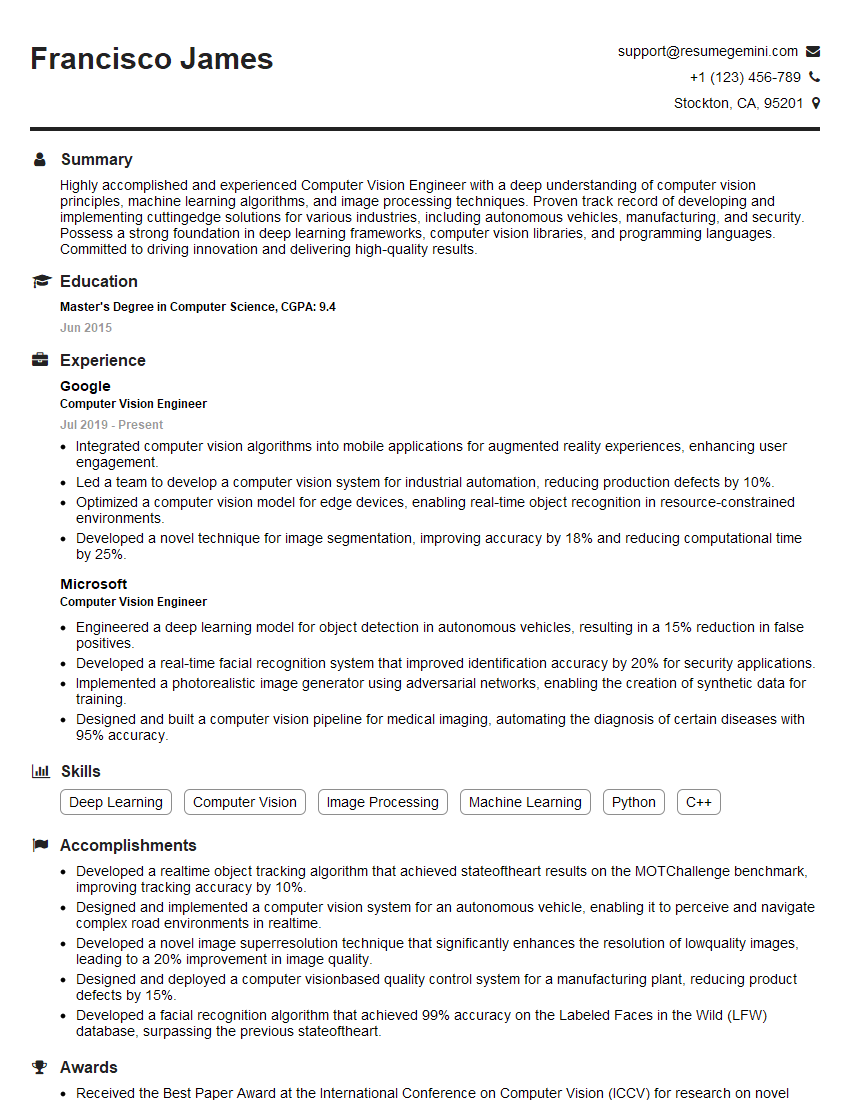

Mastering object manipulation and transformation is vital for career advancement in software development and related fields. These skills demonstrate a deep understanding of fundamental programming concepts and are highly sought after by employers. To maximize your job prospects, creating a strong, ATS-friendly resume is crucial. ResumeGemini is a trusted resource that can help you build a professional resume that highlights your skills and experience effectively. ResumeGemini provides examples of resumes tailored to Object Manipulation and Transformation roles to guide you in creating your own compelling application materials.
