Cracking a skill-specific interview, like one for Business Intelligence Analysis, requires understanding the nuances of the role. In this blog, we present the questions you’re most likely to encounter, along with insights into how to answer them effectively. Let’s ensure you’re ready to make a strong impression.

Questions Asked in Business Intelligence Analysis Interview

Q 1. Explain the ETL process in detail.

ETL, or Extract, Transform, Load, is the cornerstone of any data warehousing initiative. It’s a three-stage process that prepares raw data from disparate sources for analysis.

- Extract: This stage involves retrieving data from various sources, which could include databases (SQL, NoSQL), flat files (CSV, TXT), APIs, and cloud storage (AWS S3, Azure Blob Storage). Think of it as gathering all the ingredients for your recipe.

- Transform: This is where the magic happens. Data is cleaned, standardized, and transformed to fit a consistent format. This might include handling missing values, correcting inconsistencies, data type conversions, and joining data from multiple sources. Imagine this stage as preparing your ingredients – chopping vegetables, measuring spices, etc.

- Load: Finally, the transformed data is loaded into a data warehouse or data mart. This is your beautifully prepared dish, ready to be served (analyzed).

For example, imagine extracting sales data from a CRM, website activity from Google Analytics, and customer demographics from a marketing database. The transformation phase would involve standardizing date formats, dealing with inconsistent customer IDs, and potentially joining these datasets to create a comprehensive view of customer behavior. This combined data would then be loaded into a data warehouse for analysis.
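The three stages can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not a production pipeline: the CSV contents, the `fact_sales` table, and the function names are all made up for the example, and an in-memory SQLite database stands in for the warehouse.

```python
import csv
import io
import sqlite3
from datetime import datetime

# Hypothetical extracts; in practice these would come from a CRM export
# and a marketing database.
CRM_SALES_CSV = """customer_id,order_date,amount
 c001 ,01/15/2024,120.50
C002,02/03/2024,80.00
"""
DEMOGRAPHICS_CSV = """customer_id,region
C001,West
C002,East
"""

def extract(text):
    """Extract: read rows from a CSV source into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(sales_rows, demo_rows):
    """Transform: normalize IDs, convert dates to ISO 8601, join on customer_id."""
    region_by_id = {r["customer_id"].strip().upper(): r["region"] for r in demo_rows}
    out = []
    for row in sales_rows:
        cid = row["customer_id"].strip().upper()
        iso_date = datetime.strptime(row["order_date"], "%m/%d/%Y").date().isoformat()
        out.append((cid, iso_date, float(row["amount"]), region_by_id.get(cid)))
    return out

def load(rows, conn):
    """Load: write the transformed rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS fact_sales "
        "(customer_id TEXT, order_date TEXT, amount REAL, region TEXT)"
    )
    conn.executemany("INSERT INTO fact_sales VALUES (?, ?, ?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")
load(transform(extract(CRM_SALES_CSV), extract(DEMOGRAPHICS_CSV)), conn)
warehouse_rows = conn.execute("SELECT * FROM fact_sales ORDER BY customer_id").fetchall()
```

Keeping each stage as its own function makes it easier to test the transformation rules separately from the source and target systems.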

Q 2. What are the key differences between OLAP and OLTP databases?

OLTP (Online Transaction Processing) and OLAP (Online Analytical Processing) databases serve fundamentally different purposes. Think of OLTP as your daily checkbook and OLAP as your yearly financial statement.

- OLTP: Designed for transactional operations, focusing on speed and efficiency for individual transactions. Data is highly structured, normalized to minimize redundancy, and optimized for quick inserts, updates, and deletes. Examples include online banking systems, point-of-sale systems, and inventory management systems.

- OLAP: Optimized for complex analytical queries and reporting. Data is often denormalized, meaning some redundancy is accepted for faster query performance. OLAP databases are built for aggregating and summarizing data across multiple dimensions. Think of analyzing sales trends over time, by region, and by product category. Examples include data warehouses and data marts.

In short, OLTP handles day-to-day transactions, while OLAP provides insights based on historical data. They often complement each other – OLTP systems feed data into OLAP systems for analysis.
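The difference in workload shape can be illustrated with a small sketch. It runs both styles of statement against one in-memory SQLite database purely for demonstration; in a real deployment the analytical query would normally run against a separate warehouse. The `orders` table and its values are assumptions for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders "
    "(order_id INTEGER PRIMARY KEY, region TEXT, category TEXT, amount REAL)"
)

# OLTP-style work: small, single-row writes inside short transactions.
with conn:
    conn.execute("INSERT INTO orders (region, category, amount) VALUES ('West', 'Toys', 20.0)")
    conn.execute("INSERT INTO orders (region, category, amount) VALUES ('West', 'Books', 35.0)")
    conn.execute("INSERT INTO orders (region, category, amount) VALUES ('East', 'Toys', 15.0)")
with conn:
    conn.execute("UPDATE orders SET amount = 25.0 WHERE order_id = 1")

# OLAP-style work: a read-only aggregate over many rows, grouped by a dimension.
sales_by_region = conn.execute(
    "SELECT region, SUM(amount) FROM orders GROUP BY region ORDER BY region"
).fetchall()
```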

Q 3. Describe your experience with data visualization tools (e.g., Tableau, Power BI).

I have extensive experience with both Tableau and Power BI, utilizing them for diverse projects ranging from interactive dashboards to complex data visualizations.

In Tableau, I’ve leveraged its drag-and-drop interface to create compelling visualizations for various clients, often using calculated fields and parameters to enhance interactivity. For example, I built a dashboard tracking sales performance across different product categories, allowing users to filter data by region and time period. The ability to rapidly prototype and iterate on dashboards is a key strength of Tableau.

Power BI, on the other hand, excels in its seamless integration with Microsoft’s ecosystem, making it particularly useful for organizations heavily invested in the Microsoft stack. I have utilized DAX (Data Analysis Expressions) to create advanced calculations within Power BI reports and leveraged its data modeling capabilities to optimize query performance and data relationships. A recent project involved building a near-real-time sales dashboard using Power BI’s DirectQuery mode against a SQL Server database.

My proficiency in both tools allows me to select the most appropriate technology based on project requirements and client needs.

Q 4. How do you handle missing data in a dataset?

Handling missing data is crucial for data integrity. Ignoring it can lead to biased and unreliable results. My approach involves a combination of strategies:

- Understanding the cause: Is the missing data Missing Completely at Random (MCAR), Missing at Random (MAR), or Missing Not at Random (MNAR)? This informs the best strategy.

- Deletion: If the missing data is minimal and MCAR, listwise or pairwise deletion might be appropriate. However, this can lead to a substantial loss of data if not used cautiously.

- Imputation: Replacing missing values with estimated values. Techniques include mean/median/mode imputation (simple but can distort variance), regression imputation (predicts missing values based on other variables), k-nearest neighbor imputation (uses similar data points), and multiple imputation (generates several plausible imputed datasets).

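- Advanced techniques: For complex datasets, model-based techniques like maximum likelihood estimation or expectation-maximization can be applied; these generally assume the data is MAR. MNAR data requires modeling the missingness mechanism itself (for example, with selection or pattern-mixture models) and checking the conclusions with a sensitivity analysis.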

The best method depends on the dataset’s characteristics, the amount of missing data, and the analysis goals. Always document the chosen strategy and justify the decision.
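As a rough illustration of two of these strategies, the sketch below compares listwise deletion with median imputation on a tiny, made-up dataset. The record layout and the `_imputed` flag column are assumptions for the example; the flag is one simple way to document which values were filled in.

```python
from statistics import median

# Hypothetical records; None marks a missing order value.
records = [
    {"customer": "A", "order_value": 100.0},
    {"customer": "B", "order_value": None},
    {"customer": "C", "order_value": 300.0},
    {"customer": "D", "order_value": 140.0},
]

def listwise_delete(rows, field):
    """Drop every row where the field is missing."""
    return [r for r in rows if r[field] is not None]

def impute_median(rows, field):
    """Fill missing values with the median of the observed values and flag them."""
    fill = median(r[field] for r in rows if r[field] is not None)
    return [
        {**r, field: fill if r[field] is None else r[field],
         f"{field}_imputed": r[field] is None}
        for r in rows
    ]

complete_cases = listwise_delete(records, "order_value")
imputed = impute_median(records, "order_value")
```

Deletion keeps three of the four rows, while imputation keeps all four but shrinks the variance of `order_value`, which is the trade-off described above.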

Q 5. What are some common data quality issues and how do you address them?

Data quality issues significantly impact analysis accuracy. Common problems include:

- Inconsistent data formats: Dates may be recorded in multiple formats (MM/DD/YYYY, DD/MM/YYYY), leading to errors.

- Missing values: As discussed earlier, this requires careful handling.

- Duplicate data: Redundant records can skew analysis.

- Data entry errors: Typographical errors or incorrect data input.

- Outliers: Extreme values that don’t align with the rest of the data.

I address these issues using a combination of techniques:

- Data profiling: Analyzing data to understand its structure, quality, and potential issues.

- Data cleaning: Using scripts and tools to identify and correct errors, inconsistencies, and duplicates.

- Data validation: Implementing rules and checks to prevent future errors.

- Data standardization: Ensuring consistency in data formats and definitions across datasets.

For example, I once worked on a project where inconsistent date formats were causing errors in sales trend analysis. I wrote a Python script to standardize the dates and then validated the results using data profiling to ensure the correction was successful.
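A script of that kind might look like the following sketch. It is not the original project code; the source names and format mapping are hypothetical. The key assumption is that the format is known per source system, because a value such as 03/04/2024 cannot be disambiguated on its own.

```python
from datetime import datetime

# Assumed formats per source system (hypothetical names).
SOURCE_FORMATS = {"us_store": "%m/%d/%Y", "eu_store": "%d/%m/%Y"}

def standardize_date(raw, source):
    """Parse a raw date string using its source's format and return ISO 8601."""
    return datetime.strptime(raw.strip(), SOURCE_FORMATS[source]).date().isoformat()

def standardize_all(rows):
    """Return (clean_dates, rejected_rows) so bad values are reviewed, not dropped silently."""
    clean, rejected = [], []
    for raw, source in rows:
        try:
            clean.append(standardize_date(raw, source))
        except (ValueError, KeyError):
            rejected.append((raw, source))
    return clean, rejected

clean, rejected = standardize_all([
    ("03/04/2024", "us_store"),   # March 4
    ("03/04/2024", "eu_store"),   # April 3
    ("31/02/2024", "eu_store"),   # invalid calendar date
])
```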

Q 6. Explain your understanding of different data warehousing architectures.

Data warehousing architectures vary depending on the organization’s needs and scale. Some common architectures include:

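- Data warehouse bus architecture: A set of dimensional data marts, typically one per business process, integrated through shared (conformed) dimensions, as popularized by Ralph Kimball. It can be delivered incrementally, but keeping dimensions consistent becomes harder as more subject areas are added.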

- Data lake architecture: Stores raw data in its native format, allowing for greater flexibility and scalability. Requires significant processing power for data analysis.

- Data lakehouse architecture: Combines the benefits of data lakes and data warehouses. It stores data in its raw form but also provides structured query capabilities for improved analytics.

- Hybrid architecture: A combination of different architectures, such as using a data lake for raw data and a data warehouse for structured data.

The choice of architecture depends on factors such as data volume, velocity, variety, and the organization’s analytical needs. A small business might choose a simpler bus architecture, while a large enterprise might benefit from a data lakehouse or hybrid approach.

Q 7. What experience do you have with SQL and database querying?

I possess extensive experience in SQL and database querying, utilizing it daily in my role. My skills encompass a wide range of tasks:

- Data retrieval: Writing complex queries to extract specific data from relational databases using SELECT, FROM, WHERE, JOIN, GROUP BY, and HAVING clauses.

- Data manipulation: Transforming data using functions like CASE, SUM, AVG, COUNT, and DATEPART.

- Data loading: Using INSERT INTO, UPDATE, and DELETE statements to manage data in databases.

- Database design: Understanding database normalization principles and designing efficient database schemas.

- Performance optimization: Analyzing query performance and implementing strategies to improve efficiency, including indexing and query rewriting.

For example, I recently optimized a slow-running query by adding an index to a frequently queried column, resulting in a 10x speed improvement. My SQL skills enable me to efficiently extract, transform, and load data for analysis, underpinning my expertise in business intelligence.

SELECT COUNT(*) FROM Sales WHERE Date BETWEEN '2023-01-01' AND '2023-12-31'

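This query counts the sales records dated within 2023. If the Date column stores timestamps rather than plain dates, a half-open range (Date >= '2023-01-01' AND Date < '2024-01-01') is safer, because BETWEEN would exclude most rows from December 31.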
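To see a count query and an index working together, here is a small runnable sketch using Python’s built-in sqlite3 module. The `sales` table, the `sale_date` column, and the index name are assumptions for the example, and the query plan wording is specific to SQLite; other databases expose plans differently.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (sale_id INTEGER PRIMARY KEY, sale_date TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales (sale_date, amount) VALUES (?, ?)",
    [("2022-12-31", 10.0), ("2023-01-01", 20.0), ("2023-06-15", 30.0), ("2024-01-01", 40.0)],
)
# Index the column used in the WHERE clause.
conn.execute("CREATE INDEX idx_sales_date ON sales (sale_date)")

# ISO 8601 date strings sort chronologically, so a text comparison works here.
query = "SELECT COUNT(*) FROM sales WHERE sale_date BETWEEN ? AND ?"
params = ("2023-01-01", "2023-12-31")
sales_in_2023 = conn.execute(query, params).fetchone()[0]

# Ask SQLite how it intends to run the query; the last column holds the description.
plan = " ".join(row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + query, params))
```

Inspecting `plan` before and after creating the index is a quick way to confirm that the optimizer actually uses it.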

Q 8. Describe your experience with data mining techniques.

Data mining involves uncovering hidden patterns, anomalies, and valuable insights from large datasets. My experience encompasses various techniques, including:

- Association Rule Mining (Apriori Algorithm): I’ve used this to discover relationships between products purchased together, like finding that customers who buy diapers also frequently buy baby wipes. This informs targeted marketing and product placement strategies.

- Classification (Decision Trees, Logistic Regression): I’ve built models to predict customer churn or credit risk. For example, a decision tree model might identify factors like low usage frequency and missed payments as strong predictors of churn.

- Clustering (K-Means, Hierarchical Clustering): I’ve utilized clustering to segment customers based on their purchasing behavior or demographics, allowing for personalized marketing campaigns. For instance, identifying clusters of high-value, loyal customers versus price-sensitive customers enables tailored outreach.

- Regression Analysis (Linear, Polynomial): I’ve employed regression to forecast sales trends or predict future demand. This helps businesses optimize inventory management and resource allocation.

My approach always emphasizes rigorous data cleaning, feature engineering, and model evaluation to ensure the reliability and actionable nature of the insights generated.
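To make the association rule example concrete, the sketch below computes support, confidence, and lift for a single rule on a handful of made-up baskets. These are the metrics used to score rules; this is not an implementation of the Apriori algorithm itself, which additionally prunes candidate itemsets.

```python
# Hypothetical shopping baskets.
baskets = [
    {"diapers", "baby wipes", "milk"},
    {"diapers", "baby wipes"},
    {"diapers", "bread"},
    {"milk", "bread"},
]

def support(itemset, transactions):
    """Fraction of transactions containing every item in the itemset."""
    return sum(itemset <= t for t in transactions) / len(transactions)

def confidence(antecedent, consequent, transactions):
    """Estimated P(consequent | antecedent)."""
    return support(antecedent | consequent, transactions) / support(antecedent, transactions)

def lift(antecedent, consequent, transactions):
    """Confidence relative to the consequent's baseline frequency (>1 suggests association)."""
    return confidence(antecedent, consequent, transactions) / support(consequent, transactions)

rule_support = support({"diapers", "baby wipes"}, baskets)
rule_confidence = confidence({"diapers"}, {"baby wipes"}, baskets)
rule_lift = lift({"diapers"}, {"baby wipes"}, baskets)
```

With these baskets, half of all transactions contain both items, and two thirds of diaper purchases also include baby wipes.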

Q 9. How do you identify and prioritize business needs for BI solutions?

Identifying and prioritizing business needs for BI solutions requires a collaborative and data-driven approach. I begin by engaging with key stakeholders across different departments to understand their challenges and objectives. This often involves:

- Workshops and Interviews: Facilitating discussions to understand pain points, data needs, and desired outcomes.

- Business Process Mapping: Visualizing workflows to pinpoint data bottlenecks and opportunities for improvement.

- Data Inventory Analysis: Assessing the available data assets to identify gaps and potential sources of insights.

- Prioritization Matrix: Using a framework like the MoSCoW method (Must have, Should have, Could have, Won’t have) to rank business needs based on their impact and feasibility.

For example, in a previous project for an e-commerce company, stakeholder interviews revealed a need to improve customer retention. Through data analysis, we identified key drivers of churn, leading to the prioritization of a BI solution focused on customer segmentation and targeted marketing campaigns.

Q 10. Explain your experience with different BI reporting tools.

I have extensive experience with various BI reporting tools, including:

- Tableau: Proficient in creating interactive dashboards and visualizations for effective data storytelling. I’ve used Tableau to track key performance indicators (KPIs), enabling real-time monitoring and proactive decision-making.

- Power BI: Experienced in building comprehensive reports and dashboards, integrating data from diverse sources. I’ve utilized Power BI’s capabilities to automate report generation and distribution, streamlining reporting processes.

- Qlik Sense: Skilled in creating interactive data visualizations and dashboards. Qlik Sense’s associative engine has been instrumental in exploratory data analysis and uncovering unexpected relationships within datasets.

- SQL Server Reporting Services (SSRS): Familiar with creating traditional reports and integrating them with enterprise data warehouses. SSRS is ideal for generating standardized reports with precise formatting.

My tool selection always depends on the specific needs of the project, considering factors like data volume, user requirements, and integration with existing systems.

Q 11. How do you ensure data security and compliance within a BI environment?

Data security and compliance are paramount in a BI environment. My approach involves implementing a multi-layered security strategy that includes:

- Access Control: Implementing role-based access control (RBAC) to restrict access to sensitive data based on user roles and responsibilities.

- Data Encryption: Encrypting data both in transit and at rest to protect against unauthorized access.

- Data Masking and Anonymization: Protecting sensitive data by masking or anonymizing personally identifiable information (PII) while preserving the utility of the data for analysis.

- Regular Security Audits and Vulnerability Assessments: Conducting regular audits and assessments to identify and address potential security vulnerabilities.

- Compliance with Regulations: Ensuring compliance with relevant regulations such as GDPR, CCPA, and HIPAA, depending on the industry and data involved.

For example, in a healthcare project, I ensured that all patient data was anonymized before being used for analysis, and access to the data was restricted to authorized personnel only, adhering strictly to HIPAA guidelines.
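As a simplified sketch of masking, the code below replaces a direct identifier with a keyed hash and partially masks an email address. The field names and the key are hypothetical, and a keyed hash is pseudonymization rather than full anonymization, so whether it satisfies a given regulation is a question for the compliance team.

```python
import hashlib
import hmac

# Hypothetical key; in practice it would come from a secrets manager, not source code.
SECRET_KEY = b"replace-with-a-managed-secret"

def pseudonymize(value, key=SECRET_KEY):
    """Replace an identifier with a stable keyed hash so rows can still be joined."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]

def mask_email(email):
    """Keep the first character and the domain; hide the rest of the local part."""
    local, _, domain = email.partition("@")
    return local[:1] + "***@" + domain

patient = {"patient_id": "P-1001", "email": "jane.doe@example.com", "diagnosis_code": "E11"}
safe_row = {
    "patient_key": pseudonymize(patient["patient_id"]),
    "email": mask_email(patient["email"]),
    "diagnosis_code": patient["diagnosis_code"],
}
```

Because the same input always maps to the same key, analysts can still count visits per patient without ever seeing the original identifier.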

Q 12. Describe your experience working with large datasets.

I’ve worked extensively with large datasets, leveraging various techniques to manage and analyze them efficiently. This includes:

- Distributed Computing Frameworks (Hadoop, Spark): Utilizing these frameworks to process and analyze data that is too large to fit into the memory of a single machine. For example, I’ve used Spark to perform real-time analytics on streaming data from social media platforms.

- Data Warehousing and Data Lakes: Designing and implementing data warehousing solutions to efficiently store and retrieve large volumes of data. Data lakes provide a flexible approach for storing raw data, while data warehouses offer structured and optimized data for analysis.

- Database Optimization Techniques: Employing techniques like indexing, partitioning, and query optimization to improve query performance on large datasets.

- Sampling Techniques: Using appropriate sampling methods to work with representative subsets of massive datasets when analyzing the entire dataset is computationally infeasible.

In a project involving analyzing millions of customer transactions, we implemented a Hadoop-based solution to process the data efficiently, enabling the discovery of valuable insights that would have been impossible with traditional methods.
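For the sampling point, one option when the data arrives as a stream of unknown length is reservoir sampling, which keeps a uniform random sample in fixed memory. The sketch below is a generic illustration (the classic "Algorithm R"), not the method used in the project described above.

```python
import random

def reservoir_sample(stream, k, seed=None):
    """Return k items chosen uniformly at random from an iterable of unknown length."""
    rng = random.Random(seed)
    sample = []
    for i, item in enumerate(stream):
        if i < k:
            # Fill the reservoir with the first k items.
            sample.append(item)
        else:
            # Item i replaces a reservoir slot with probability k / (i + 1).
            j = rng.randint(0, i)
            if j < k:
                sample[j] = item
    return sample

# A range stands in for a large stream of transaction IDs.
sample = reservoir_sample(range(100_000), k=5, seed=42)
```

Only `k` items are held in memory at any time, regardless of how long the stream is.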

Q 13. How do you interpret and communicate complex data insights to non-technical stakeholders?

Communicating complex data insights to non-technical stakeholders requires clear, concise, and visually engaging communication. My approach involves:

- Storytelling with Data: Framing insights within a narrative that connects to the audience’s business goals and concerns. I avoid technical jargon and use relatable analogies.

- Data Visualization: Creating visually appealing charts, graphs, and dashboards that effectively communicate key findings. I choose the right visualization type to best represent the data.

- Interactive Dashboards: Building interactive dashboards that allow stakeholders to explore data at their own pace and discover additional insights.

- Executive Summaries and Key Takeaways: Summarizing key findings in a concise and easy-to-understand format for busy executives.

For instance, instead of saying ‘the R-squared value of our regression model is 0.85,’ I would explain, ‘our model explains about 85% of the variation in sales, which lets us forecast future demand with greater confidence and optimize inventory levels.’
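For reference, R-squared is one minus the ratio of unexplained variation to total variation. The short sketch below computes it from scratch on made-up numbers, so the plain-language phrasing can be traced back to the formula.

```python
def r_squared(actual, predicted):
    """Share of the variation in `actual` that the predictions account for."""
    mean_actual = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))  # unexplained variation
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)           # total variation
    return 1 - ss_res / ss_tot

# Hypothetical sales figures and model predictions.
actual_sales = [10.0, 20.0, 30.0, 40.0]
predicted_sales = [12.0, 18.0, 33.0, 37.0]
r2 = r_squared(actual_sales, predicted_sales)
```

Here the residual sum of squares is 26 and the total sum of squares is 500, giving an R-squared of 0.948.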

Q 14. What are some key performance indicators (KPIs) you’ve used in past projects?

The specific KPIs I’ve used vary depending on the project, but some common examples include:

- Customer Acquisition Cost (CAC): The cost of acquiring a new customer. This helps measure the effectiveness of marketing campaigns.

- Customer Churn Rate: The percentage of customers who stop using a product or service within a given period. This indicates areas needing improvement in customer retention.

- Customer Lifetime Value (CLTV): The predicted revenue generated by a customer over their entire relationship with a company. This informs customer segmentation and resource allocation.

- Return on Investment (ROI): A measure of the profitability of a project or investment. This is crucial for justifying BI initiatives and assessing their impact.

- Website Conversion Rate: The percentage of website visitors who complete a desired action, such as making a purchase or signing up for a newsletter. This informs website optimization strategies.

In a previous project, we tracked website conversion rates, customer acquisition costs, and customer lifetime value to optimize marketing spending and improve the overall profitability of the business.
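The arithmetic behind these KPIs is simple, as the sketch below shows. The input figures are invented, and the CLTV formula is one common simplification (margin per period divided by churn) rather than the only definition in use.

```python
def customer_acquisition_cost(marketing_spend, new_customers):
    return marketing_spend / new_customers

def churn_rate(customers_at_start, customers_lost):
    return customers_lost / customers_at_start

def conversion_rate(conversions, visitors):
    return conversions / visitors

def roi(gain, cost):
    return (gain - cost) / cost

def simple_cltv(avg_monthly_revenue, gross_margin, monthly_churn):
    """Simplified CLTV: monthly margin times expected lifetime in months (1 / churn)."""
    return avg_monthly_revenue * gross_margin / monthly_churn

# Hypothetical figures for one month.
cac = customer_acquisition_cost(marketing_spend=50_000, new_customers=250)
churn = churn_rate(customers_at_start=1_000, customers_lost=40)
conversion = conversion_rate(conversions=300, visitors=12_000)
campaign_roi = roi(gain=150_000, cost=100_000)
cltv = simple_cltv(avg_monthly_revenue=50.0, gross_margin=0.6, monthly_churn=churn)
```

Comparing `cltv` with `cac` (here roughly 750 against 200) is a quick check on whether acquisition spending pays for itself.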

Q 15. Describe your experience with data modeling techniques.

Data modeling is the process of creating a visual representation of data structures and their relationships. It’s like designing the blueprint of a house before you start building – you need to know where the rooms go and how they connect. My experience spans various techniques, including:

- Star Schema: This is a classic approach, ideal for reporting and analysis. It features a central fact table surrounded by dimension tables. For example, in an e-commerce database, the fact table might contain sales transactions, while dimension tables would hold information about products, customers, and time.

- Snowflake Schema: An extension of the star schema, where dimension tables are further normalized to reduce redundancy. This is advantageous when dealing with complex hierarchies, like product categories with multiple levels.

- Data Vault Modeling: A robust technique suitable for data warehousing environments with complex data lineage requirements. It handles evolving data structures and provides an excellent audit trail, which is critical for compliance and data governance.

- Dimensional Modeling: This is a broader category encompassing star and snowflake schemas, emphasizing the design of a data warehouse for analytical processing. It involves identifying facts (measurable events) and dimensions (contextual attributes).

In my previous role, I designed a snowflake schema for a large retail client, significantly improving query performance and reducing data storage costs by approximately 15% compared to their previous relational model.
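A star schema can be sketched in a few statements. The example below builds a tiny one in an in-memory SQLite database and runs a typical analytical query against it; the table names, columns, and rows are all invented for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Dimension tables hold descriptive context.
CREATE TABLE dim_product (product_key INTEGER PRIMARY KEY, name TEXT, category TEXT);
CREATE TABLE dim_date (date_key INTEGER PRIMARY KEY, full_date TEXT, year INTEGER, month INTEGER);
-- The fact table holds measures plus a foreign key to each dimension.
CREATE TABLE fact_sales (
    product_key INTEGER REFERENCES dim_product(product_key),
    date_key INTEGER REFERENCES dim_date(date_key),
    quantity INTEGER,
    revenue REAL
);
INSERT INTO dim_product VALUES (1, 'Laptop', 'Electronics'), (2, 'Desk', 'Furniture');
INSERT INTO dim_date VALUES (20240115, '2024-01-15', 2024, 1), (20240210, '2024-02-10', 2024, 2);
INSERT INTO fact_sales VALUES
    (1, 20240115, 2, 2000.0), (2, 20240115, 1, 300.0), (1, 20240210, 1, 1000.0);
""")

# Typical star-schema query: join the fact table to its dimensions, then aggregate.
revenue_by_category_month = conn.execute("""
    SELECT p.category, d.month, SUM(f.revenue)
    FROM fact_sales AS f
    JOIN dim_product AS p ON p.product_key = f.product_key
    JOIN dim_date AS d ON d.date_key = f.date_key
    GROUP BY p.category, d.month
    ORDER BY p.category, d.month
""").fetchall()
```

In a snowflake variant, `category` would move out of `dim_product` into its own table referenced by a key.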

Q 16. Explain your experience with different data integration methods.

Data integration involves combining data from various sources into a unified view. Think of it as assembling a jigsaw puzzle – you have many different pieces, and the goal is to fit them together to create a coherent picture. I’ve worked with several methods, including:

- ETL (Extract, Transform, Load): This is the traditional approach, involving extracting data from source systems, transforming it to a consistent format, and then loading it into a target data warehouse. I’ve used tools like Informatica PowerCenter and Talend Open Studio extensively for this.

- ELT (Extract, Load, Transform): A more modern approach, where data is extracted and loaded into the data warehouse first, and transformations are performed within the target system (often a cloud data warehouse like Amazon Redshift or Snowflake). This is particularly efficient when dealing with large volumes of data.

- Data Virtualization: This method creates a unified view of data without physically moving or consolidating it. It’s beneficial when dealing with sensitive or geographically dispersed data. I have experience leveraging tools that provide data virtualization capabilities like Denodo.

- API Integration: Connecting to various data sources using APIs (Application Programming Interfaces) to retrieve and update data. This approach is frequently used to integrate with SaaS (Software as a Service) applications.

In one project, I used a hybrid approach combining ETL and API integration to consolidate data from several disparate systems, achieving a 30% increase in data accessibility for business users.

Q 17. How do you validate the accuracy and reliability of your data analysis?

Data validation is crucial to ensure the accuracy and reliability of your analysis. It’s like double-checking your math before submitting your homework. My approach involves several steps:

- Data Profiling: Understanding the characteristics of the data, including data types, distributions, and missing values. Tools like Python libraries (pandas, datatable) can be instrumental.

- Data Quality Rules: Defining rules based on business logic to flag potential errors or inconsistencies. For instance, checking for negative values in sales figures or inconsistent date formats.

- Data Consistency Checks: Comparing data from different sources to identify discrepancies. This might involve comparing order information from an e-commerce platform with shipping data from a logistics provider.

- Root Cause Analysis: Investigating the source of errors and implementing corrections to prevent recurrence. For instance, correcting faulty data entry processes or fixing data integration issues.

- Visualization and Exploration: Using charts and graphs to visualize data and identify anomalies that automated checks might miss. A visual inspection is essential.

For example, I once discovered a significant data anomaly in a financial dataset by visualizing the data. Further investigation revealed a system glitch that had been recording erroneous transactions. Addressing this issue prevented a costly forecasting mistake.
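Data quality rules of the kind listed above can be expressed as small named checks. The sketch below is one possible shape for such a rule set; the rule names, field names, and sample rows are assumptions for the example.

```python
from datetime import datetime

def is_iso_date(value):
    """True if the value parses as a YYYY-MM-DD calendar date."""
    try:
        datetime.strptime(value, "%Y-%m-%d")
        return True
    except (TypeError, ValueError):
        return False

# Each rule maps a name to a check that returns True when the row passes.
RULES = {
    "non_negative_sales": lambda row: row["sales"] is not None and row["sales"] >= 0,
    "iso_order_date": lambda row: is_iso_date(row["order_date"]),
}

def validate(rows):
    """Return (row_index, rule_name) for every rule a row fails."""
    return [
        (i, name)
        for i, row in enumerate(rows)
        for name, check in RULES.items()
        if not check(row)
    ]

rows = [
    {"sales": 120.0, "order_date": "2024-03-01"},
    {"sales": -5.0, "order_date": "2024-03-02"},
    {"sales": 80.0, "order_date": "03/02/2024"},
]
violations = validate(rows)
```

Returning the failing rule name alongside the row index makes it easier to trace each violation back to its root cause.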

Q 18. How do you handle conflicting data from different sources?

Conflicting data from different sources is a common challenge. Resolving conflicts requires a careful and methodical approach. The solution depends on the nature of the conflict and the data’s importance. My strategy usually includes:

- Prioritization: Identifying the most reliable data source based on data quality assessments and business context. Often, you might prioritize data from a primary system over a secondary or backup system.

- Data Reconciliation: Investigating the reasons for the conflict. This involves analyzing the data sources to identify errors, discrepancies, or inconsistencies in data definitions or data entry processes.

- Data Cleaning and Transformation: Implementing data cleansing techniques to resolve the inconsistencies. This may involve removing duplicate records, standardizing data formats, or imputing missing values based on established rules.

- Conflict Resolution Rules: Establishing clear rules for handling conflicts, for example, always prioritizing the most recently updated data or using a weighted average from multiple sources.

- Documentation: Maintaining a thorough record of the conflict resolution process for auditability and traceability.

In a previous project, we encountered conflicting customer address data from our CRM and e-commerce platforms. By analyzing the timestamps and data quality scores, we were able to identify the most reliable source for each customer and create a consolidated, accurate customer address dataset.
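One of the simpler resolution rules, "most recently updated record wins", can be sketched as follows. The record layout and source names are hypothetical, and the sketch deliberately leaves out data quality scores, which would need their own weighting logic.

```python
from datetime import datetime

# Hypothetical address records from two systems.
crm = [
    {"customer_id": "C1", "address": "1 Old Rd", "updated_at": "2024-01-10T09:00:00"},
    {"customer_id": "C2", "address": "5 Lake St", "updated_at": "2024-03-01T12:00:00"},
]
ecommerce = [
    {"customer_id": "C1", "address": "9 New Ave", "updated_at": "2024-02-20T15:30:00"},
    {"customer_id": "C2", "address": "5 Lake Street", "updated_at": "2024-02-01T08:00:00"},
]

def resolve_latest(*sources):
    """For each customer, keep the most recently updated record and note its source."""
    best = {}
    for source_name, rows in sources:
        for row in rows:
            ts = datetime.fromisoformat(row["updated_at"])
            current = best.get(row["customer_id"])
            if current is None or ts > current["ts"]:
                best[row["customer_id"]] = {
                    "address": row["address"], "source": source_name, "ts": ts,
                }
    # Drop the helper timestamp; keep the source for the audit trail.
    return {cid: {"address": r["address"], "source": r["source"]} for cid, r in best.items()}

golden = resolve_latest(("crm", crm), ("ecommerce", ecommerce))
```

Recording which source won for each customer supports the documentation step, since every resolved value can be traced back to its origin.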

Q 19. What are some common challenges in implementing BI solutions?

Implementing BI solutions presents several challenges:

- Data Silos: Data scattered across different systems makes integration difficult and time-consuming. Often it requires a significant organizational shift.

- Data Quality Issues: Inconsistent, inaccurate, or incomplete data hinders analysis and decision-making. Data quality needs to be addressed proactively, not as an afterthought.

- Lack of Skilled Resources: A shortage of individuals with the necessary data analysis, data engineering, and BI tool expertise.

- Integration Complexity: Integrating diverse data sources with different structures, formats, and technologies can be technically challenging.

- Change Management: Getting users to adopt new tools, processes, and ways of working requires effective change management strategies.

- Cost and Time Constraints: BI projects can be expensive and time-consuming, requiring careful planning and resource allocation.

One challenge I encountered was persuading stakeholders to invest in data quality improvement efforts before embarking on a large BI project. By demonstrating the potential cost savings and improved decision-making resulting from higher-quality data, I secured the necessary investment and ensured the success of the project.

Q 20. How do you stay current with the latest trends in business intelligence?

Staying up-to-date in the rapidly evolving field of business intelligence requires a proactive approach. My strategy includes:

- Following Industry Publications and Blogs: I regularly read research from analyst firms like Gartner and Forrester, along with industry-specific blogs, to stay abreast of new trends and technologies.

- Attending Conferences and Webinars: Conferences and webinars provide opportunities to learn from experts, network with peers, and explore new tools and techniques.

- Online Courses and Certifications: I regularly take online courses and pursue certifications from platforms like Coursera, edX, and DataCamp to enhance my skills in areas like cloud computing, machine learning, and specific BI tools.

- Networking and Collaboration: Engaging with other professionals in the field through online forums, professional organizations, and industry events allows me to share knowledge and stay updated on current trends.

- Hands-on Experimentation: Experimenting with new tools and techniques on personal projects keeps my skills sharp and allows me to evaluate their usefulness in real-world scenarios.

For example, I recently completed a course on cloud-based data warehousing, expanding my skills and allowing me to successfully leverage cloud technologies for a recent client project.

Q 21. Describe your experience with predictive modeling techniques.

Predictive modeling uses historical data to forecast future outcomes. It’s like predicting the weather based on past weather patterns. My experience covers a range of techniques:

- Regression Analysis: Predicting a continuous variable, such as sales revenue or customer lifetime value. Linear regression is a common method, but more sophisticated techniques like polynomial regression or ridge regression might be necessary depending on the complexity of the data.

- Classification: Predicting a categorical variable, such as whether a customer will churn or whether a transaction is fraudulent. Techniques include logistic regression, decision trees, support vector machines (SVMs), and random forests.

- Time Series Analysis: Forecasting future values based on historical time-stamped data, such as stock prices or website traffic. Methods include ARIMA models and exponential smoothing.

- Machine Learning Algorithms: Employing methods like gradient boosting and neural networks (including deep learning) for more complex predictive modeling tasks. Libraries like scikit-learn in Python are commonly used.

In one project, I built a predictive model to forecast customer churn using logistic regression. This model allowed the company to proactively target at-risk customers with retention strategies, resulting in a 10% reduction in churn rate.
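Of the techniques listed, simple exponential smoothing is compact enough to show in full. The sketch below is a from-scratch illustration on made-up traffic numbers, not the churn model described above; in practice a statistics library would also handle trend and seasonality.

```python
def exponential_smoothing(series, alpha):
    """Simple exponential smoothing: each level blends the new value with the previous level."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    levels = [series[0]]  # initialize with the first observation
    for value in series[1:]:
        levels.append(alpha * value + (1 - alpha) * levels[-1])
    return levels

# Hypothetical weekly website visits.
weekly_visits = [100.0, 110.0, 105.0, 120.0]
levels = exponential_smoothing(weekly_visits, alpha=0.5)

# The last smoothed level serves as the one-step-ahead forecast.
next_week_forecast = levels[-1]
```

A higher `alpha` reacts faster to recent changes, while a lower one produces a steadier forecast.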

Q 22. How do you measure the success of a BI initiative?

Measuring the success of a BI initiative goes beyond simply deploying a new dashboard. It requires a multifaceted approach, focusing on both the technical implementation and the impact on business decisions. Success hinges on demonstrating a clear return on investment (ROI).

- Improved Decision-Making: This is arguably the most critical metric. Did the BI initiative lead to better, more data-driven decisions? This can be measured by tracking key performance indicators (KPIs) that were previously difficult to monitor and the resulting improvements in those metrics. For example, a reduction in customer churn or an increase in sales conversion rates.

- Increased Efficiency: Did the BI initiative streamline processes and reduce manual effort? Measure the time saved by automating report generation, data analysis, or other tasks. For instance, if generating a sales report previously took a week and now takes an hour, that’s a significant efficiency gain.

- Enhanced Revenue or Cost Savings: The ultimate measure of success often translates into financial gains. Did the BI initiative lead to increased revenue streams, or cost reductions, due to better inventory management, optimized marketing campaigns, or improved operational efficiency? Quantify this using financial metrics like net profit margin or ROI.

- User Adoption and Satisfaction: A successful BI initiative is one that’s actually used. Track the number of users accessing the system and how often they use it, and collect feedback through surveys or interviews to assess user satisfaction. If nobody is using your beautiful dashboards, the initiative has not succeeded.

- Data Quality Improvements: The BI system itself might contribute to better data quality through data cleansing and standardization processes. Measure data accuracy and completeness before and after implementing the BI solution. A decrease in data errors contributes directly to improved decision-making.

Ultimately, defining success requires establishing specific, measurable, achievable, relevant, and time-bound (SMART) goals before the initiative begins. Tracking these goals throughout the project lifecycle ensures that the initiative remains focused and its success can be objectively measured.

Q 23. Explain your understanding of different data visualization best practices.

Data visualization best practices aim to present complex data in a clear, concise, and engaging manner, enabling easy understanding and facilitating decision-making. Key principles include:

- Choosing the Right Chart Type: Different chart types are suited for different data types and analytical goals. Bar charts are great for comparisons, line charts for trends over time, scatter plots for correlations, and maps for geographical data. Using the wrong chart type can misrepresent the data.

- Clear and Concise Labeling: All axes, legends, and data points should be clearly labeled with appropriate units and context. Avoid ambiguous labels and ensure the meaning is self-explanatory.

- Effective Use of Color: Color should be used strategically to highlight key information and patterns, not to overwhelm the viewer. Use a consistent color scheme and avoid excessive use of bright, distracting colors. Consider color blindness when selecting your palette.

- Minimalist Design: Avoid clutter by removing unnecessary elements. Focus on presenting the key insights, avoiding visual noise that distracts from the main message. Whitespace is important!

- Data Integrity: Ensure the data is accurate and presented truthfully. Avoid manipulating the data to show a particular outcome. Transparency is key.

- Interactive Elements: For complex datasets, interactive elements such as zooming, filtering, and drill-downs can significantly enhance user experience and allow deeper exploration of the data.

- Contextualization: Always provide context. A visualization without context is meaningless. Include relevant background information or narrative to help the audience understand the data’s significance.

For instance, a pie chart with many small slices is hard to read, and a bar chart would show the same comparison more clearly. Similarly, trends over time are usually best shown with a line chart rather than a bar chart. By following these practices, we create visualizations that are not only aesthetically pleasing but also highly effective in communicating insights.

Q 24. How do you handle ambiguous or incomplete data requirements?

Handling ambiguous or incomplete data requirements is a common challenge in BI. The key is to proactively engage with stakeholders and employ a structured approach to clarify needs and make informed decisions about data handling.

- Elicit Clarification: Schedule meetings with stakeholders to understand their needs in detail. Ask probing questions to uncover the underlying business problems they are trying to solve. Don’t assume you understand their requirements; ask for specific examples and use cases.

- Data Exploration and Discovery: Conduct thorough data exploration to understand the available data and its limitations. Identify potential inconsistencies or missing information. This exploratory data analysis (EDA) helps uncover potential issues early.

- Prioritization: If resources are limited, prioritize data requirements based on business impact. Focus on addressing the most critical aspects first, while acknowledging that some questions might need to be addressed later.

- Data Imputation Techniques: For missing data, appropriate imputation techniques may be employed, such as mean imputation, median imputation, or more sophisticated methods like K-Nearest Neighbors (KNN) imputation. However, always document these techniques and their potential impact on the analysis.

- Sensitivity Analysis: Perform sensitivity analysis to understand how different assumptions or imputation methods impact the results. This tests how robust the findings are and exposes any potential biases.

- Data Governance and Documentation: Establish clear data governance procedures to address data quality issues and promote consistent data handling practices. Maintain thorough documentation of data sources, data quality issues, and assumptions made during data analysis.

For example, if a stakeholder requests ‘customer satisfaction,’ I would delve deeper, asking about specific aspects they’re interested in (e.g., Net Promoter Score, customer reviews, support ticket resolution times). This approach clarifies the requirements and guides the choice of appropriate data sources and analytical techniques.
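The imputation and sensitivity-analysis steps described above can be sketched in a few lines of Python. This is an illustrative example only: the order values are made up, and it covers mean and median imputation using the standard library rather than KNN imputation, which would typically rely on a dedicated library.

```python
from statistics import mean, median

# Hypothetical monthly order values; None marks a missing entry.
order_values = [120.0, None, 95.0, 110.0, None, 900.0, 105.0]


def impute(values, strategy):
    """Fill missing entries with the mean or median of the observed ones."""
    if strategy not in ("mean", "median"):
        raise ValueError(f"unknown strategy: {strategy}")
    observed = [v for v in values if v is not None]
    fill = mean(observed) if strategy == "mean" else median(observed)
    return [fill if v is None else v for v in values]


def sensitivity(values):
    """Compare the overall average after each imputation strategy."""
    return {s: mean(impute(values, s)) for s in ("mean", "median")}


results = sensitivity(order_values)
for strategy, average in results.items():
    print(f"{strategy} imputation -> average order value {average:.2f}")
```

Because the sample contains one very large order, the two strategies give noticeably different averages. A gap like this is exactly what should be documented and discussed with stakeholders before the numbers are used.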

Q 25. What is your experience with agile methodologies in a BI context?

Agile methodologies are extremely well-suited for BI projects, enabling iterative development, faster feedback loops, and improved adaptability to changing requirements. I have extensive experience leveraging the principles of Agile in BI projects, specifically Scrum.

- Iterative Development: Agile allows for the development of BI solutions in small, manageable increments (sprints). This enables early and frequent delivery of valuable features, reducing the risk of delivering a large, potentially flawed system at the end.

- Continuous Feedback: Regular sprint reviews and stakeholder feedback sessions provide valuable insights and allow for adjustments to the project scope and deliverables as needed. This iterative feedback ensures the project remains aligned with evolving business needs.

- Adaptability to Change: The inherent flexibility of Agile allows BI projects to adapt to changing business priorities or new data requirements. Unlike traditional waterfall approaches, Agile embraces change rather than resisting it.

- Improved Collaboration: Agile fosters close collaboration between business stakeholders, data engineers, data analysts, and developers. Daily stand-ups and sprint reviews promote communication and shared understanding.

- Faster Time-to-Market: By delivering working prototypes early and often, Agile significantly reduces time-to-market for BI solutions, allowing businesses to derive value from their data quicker.

In practice, I have employed Agile in numerous BI projects, using techniques like user story mapping to prioritize requirements, sprint planning to define actionable tasks, and daily stand-ups to track progress and identify potential roadblocks. This approach has consistently led to more successful and efficient BI projects.

Q 26. Describe a time you had to troubleshoot a complex data issue.

During a project involving customer segmentation for a retail client, we encountered a significant data issue. The customer database contained inconsistencies in the ‘address’ field, with some entries using abbreviations, others full addresses, and some containing errors or typos. This directly impacted the accuracy of our geographic segmentation.

My troubleshooting process involved:

- Data Profiling: I began by profiling the ‘address’ field to identify the extent and nature of the inconsistencies. This involved using SQL queries to analyze the data, identifying the different formats and patterns of errors.

- Data Cleaning Strategies: I explored various data cleaning techniques, including standardization (converting all addresses to a consistent format) and fuzzy matching (identifying similar addresses despite minor variations). I also considered using external geocoding services to validate and standardize addresses.

- Testing and Validation: Before implementing any cleaning strategy on the entire dataset, I tested it on a sample to assess its effectiveness and impact. This ensured the chosen method would improve data quality without introducing new errors.

- Documentation: I thoroughly documented the data cleaning process, including the methods used, any assumptions made, and the potential limitations. This ensured transparency and reproducibility.

- Collaboration: I collaborated with the data engineering team to implement the chosen solution efficiently and effectively. This involved working with them to create ETL (Extract, Transform, Load) processes to manage the address data cleaning.

Through a combination of careful data profiling, appropriate cleaning techniques, thorough testing, and effective collaboration, we successfully resolved the address data issue and achieved accurate customer segmentation. This experience highlighted the importance of a methodical approach to data quality issues and the value of collaboration in overcoming complex data challenges.
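As a rough illustration of the standardization and fuzzy-matching steps, the sketch below uses only Python's standard library (`re` and `difflib`). The abbreviation map, the 0.9 threshold, and the sample addresses are assumptions made for the example and are not details from the actual project.

```python
import re
from difflib import SequenceMatcher

# Illustrative abbreviation map; a real project would use a fuller list.
ABBREVIATIONS = {"st": "street", "ave": "avenue", "rd": "road"}


def standardize(address):
    """Lowercase, strip punctuation, and expand known abbreviations."""
    cleaned = re.sub(r"[^\w\s]", " ", address.lower())
    words = [ABBREVIATIONS.get(word, word) for word in cleaned.split()]
    return " ".join(words)


def similarity(a, b):
    """Similarity ratio between 0 and 1 for two standardized addresses."""
    return SequenceMatcher(None, standardize(a), standardize(b)).ratio()


def is_probable_duplicate(a, b, threshold=0.9):
    """Flag two addresses as likely the same once standardized."""
    return similarity(a, b) >= threshold


print(standardize("12 Main St."))
print(is_probable_duplicate("12 Main St.", "12 Main Stret"))
print(is_probable_duplicate("12 Main St.", "98 Oak Avenue"))
```

Running a function like this on a sample first, and reviewing the pairs it flags, mirrors the testing and validation step: the threshold can be tuned before anything is applied to the full dataset.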

Q 27. How do you balance the need for detailed analysis with the need for timely insights?

Detailed analysis and timely insights are not an either/or choice. The goal is to deliver quick, directionally correct answers first and then deepen them over time.

- Prioritization of Insights: Focus on the most critical business questions first. Address those needing immediate insights using readily available data and simpler analyses. More in-depth analyses can be tackled in a phased approach.

- Agile Approach: Utilizing an agile methodology enables the delivery of initial, high-level insights quickly, while allowing for deeper dives into the data in subsequent iterations. This way, you are continuously delivering value.

- Data Sampling: For extremely large datasets, using representative samples for initial analysis can dramatically reduce processing time, enabling quicker insights. This approach can then be refined with more comprehensive analysis later.

- Data Summarization: Summarizing and aggregating data before detailed analysis reduces complexity and accelerates the process of identifying key trends and patterns. This can provide useful high-level insights, while preparing for more granular investigation.

- Visualization and Dashboarding: Effective data visualization can make complex datasets easier to understand, enabling quicker assimilation of insights even from detailed analyses. Dashboards that present key metrics are essential for real-time monitoring and quick decision-making.

- Automation: Automating repetitive tasks like report generation frees up time for deeper analysis and allows for more frequent delivery of insights.

For example, when analyzing sales data, initial insights on overall sales trends can be quickly obtained using aggregated data. Later, more detailed analysis can be performed to understand the performance of specific product categories or customer segments, or to investigate outliers and anomalies.
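The sampling and summarization ideas above can be illustrated with a small Python sketch. The sales records here are randomly generated stand-ins, and the 1% sample size is an arbitrary choice for the example; it assumes Python 3.8 or later for `statistics.fmean`.

```python
import random
from statistics import fmean

random.seed(42)  # fixed seed so the sketch is reproducible

# Hypothetical sales records: (category, amount).
categories = ["grocery", "apparel", "electronics"]
sales = [
    (random.choice(categories), random.uniform(5, 500))
    for _ in range(100_000)
]

# Quick insight: estimate the average sale from a 1% random sample.
sample = random.sample(sales, k=1_000)
quick_estimate = fmean(amount for _, amount in sample)


def totals_by_category(records):
    """Deeper follow-up: exact revenue per category from the full dataset."""
    totals = {}
    for category, amount in records:
        totals[category] = totals.get(category, 0.0) + amount
    return totals


full_average = fmean(amount for _, amount in sales)
category_totals = totals_by_category(sales)

print(f"Sample estimate of average sale: {quick_estimate:.2f}")
print(f"Full-data average sale: {full_average:.2f}")
```

The sample-based estimate is available almost immediately and is usually close enough to guide an early decision, while the full aggregation supplies the exact per-category figures for the later, more detailed phase.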

Q 28. What is your experience with cloud-based BI platforms (e.g., Snowflake, AWS Redshift)?

I have significant experience with cloud-based BI platforms, particularly Snowflake and AWS Redshift. These platforms offer scalability, elasticity, and cost-effectiveness compared to on-premises solutions. My experience encompasses:

- Data Warehousing and ETL: Designing and implementing data warehouses on both Snowflake and Redshift, leveraging their respective features for efficient data loading, transformation, and storage. I’m proficient in using their respective ETL tools and services.

- Data Modeling: Building dimensional models and star schemas optimized for query performance within these cloud platforms. Understanding the nuances of each platform’s architecture is crucial for efficient query processing.

- Query Optimization: Writing and optimizing SQL queries for efficient data retrieval and analysis, leveraging platform-specific features such as materialized views, clustering keys in Snowflake, and sort and distribution keys in Redshift to enhance performance.

- Security and Access Control: Implementing robust security measures, including role-based access control (RBAC) and data encryption, to protect sensitive data stored in these platforms.

- Cost Optimization: Monitoring resource usage and implementing strategies to optimize costs, such as leveraging auto-scaling features, utilizing serverless compute options, and carefully managing data storage.

- Integration with BI Tools: Integrating these cloud data warehouses with various BI tools such as Tableau, Power BI, and Qlik Sense to enable effective data visualization and reporting.

For example, when working with multi-terabyte datasets, Snowflake’s ability to scale compute on demand is a major benefit, and its columnar storage and query optimization features improve query performance. AWS Redshift, which is also a columnar warehouse, offers cost-effectiveness and tight integration with other AWS services, which are advantages for projects within the AWS ecosystem.
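To show what a star schema looks like in practice, here is a minimal sketch that uses Python's built-in `sqlite3` module as a stand-in for a cloud warehouse. The table names, columns, and rows are hypothetical, and SQLite does not reflect Snowflake or Redshift performance features; the point is only the shape of the model, with one fact table joined to its dimension tables.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # in-memory stand-in for a warehouse

# One fact table (fact_sales) surrounded by two dimension tables.
conn.executescript("""
CREATE TABLE dim_product (product_key INTEGER PRIMARY KEY, category TEXT);
CREATE TABLE dim_date (date_key INTEGER PRIMARY KEY, year INTEGER, month INTEGER);
CREATE TABLE fact_sales (
    product_key INTEGER REFERENCES dim_product(product_key),
    date_key INTEGER REFERENCES dim_date(date_key),
    amount REAL
);
INSERT INTO dim_product VALUES (1, 'apparel'), (2, 'electronics');
INSERT INTO dim_date VALUES (20240101, 2024, 1), (20240201, 2024, 2);
INSERT INTO fact_sales VALUES
    (1, 20240101, 40.0), (2, 20240101, 300.0),
    (1, 20240201, 60.0), (2, 20240201, 250.0);
""")

# Typical BI query: aggregate the facts, sliced by dimension attributes.
rows = conn.execute("""
    SELECT p.category, d.month, SUM(f.amount) AS revenue
    FROM fact_sales AS f
    JOIN dim_product AS p ON p.product_key = f.product_key
    JOIN dim_date AS d ON d.date_key = f.date_key
    GROUP BY p.category, d.month
    ORDER BY p.category, d.month
""").fetchall()

for category, month, revenue in rows:
    print(category, month, revenue)
```

The same query pattern carries over to Snowflake or Redshift largely unchanged; what differs is how each platform stores and distributes the fact table behind the scenes.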

Key Topics to Learn for Business Intelligence Analysis Interview

- Data Warehousing and Data Modeling: Understanding dimensional modeling, star schemas, snowflake schemas, and ETL processes is crucial. Practical application includes designing a data warehouse for a specific business problem.

- SQL and Database Management: Proficiency in SQL is essential for querying, manipulating, and analyzing data. Practical application involves writing efficient SQL queries to extract insights from large datasets.

- Data Visualization and Reporting: Mastering tools like Tableau or Power BI to create compelling visualizations and reports that communicate key findings effectively. Practical application includes designing dashboards to track key performance indicators (KPIs).

- Data Mining and Predictive Modeling: Familiarity with statistical techniques and machine learning algorithms for predictive analytics. Practical application includes building models to forecast sales or customer churn.

- Business Acumen and Communication: Understanding business processes and effectively communicating insights to stakeholders (both technical and non-technical) is critical. Practical application involves presenting data-driven recommendations to improve business outcomes.

- Data Cleaning and Preprocessing: Understanding techniques for handling missing values, outliers, and inconsistencies in data. Practical application includes identifying and resolving data quality issues before analysis.

- Data Governance and Security: Awareness of data governance policies and best practices for data security. Practical application includes ensuring compliance with regulations like GDPR.

Next Steps

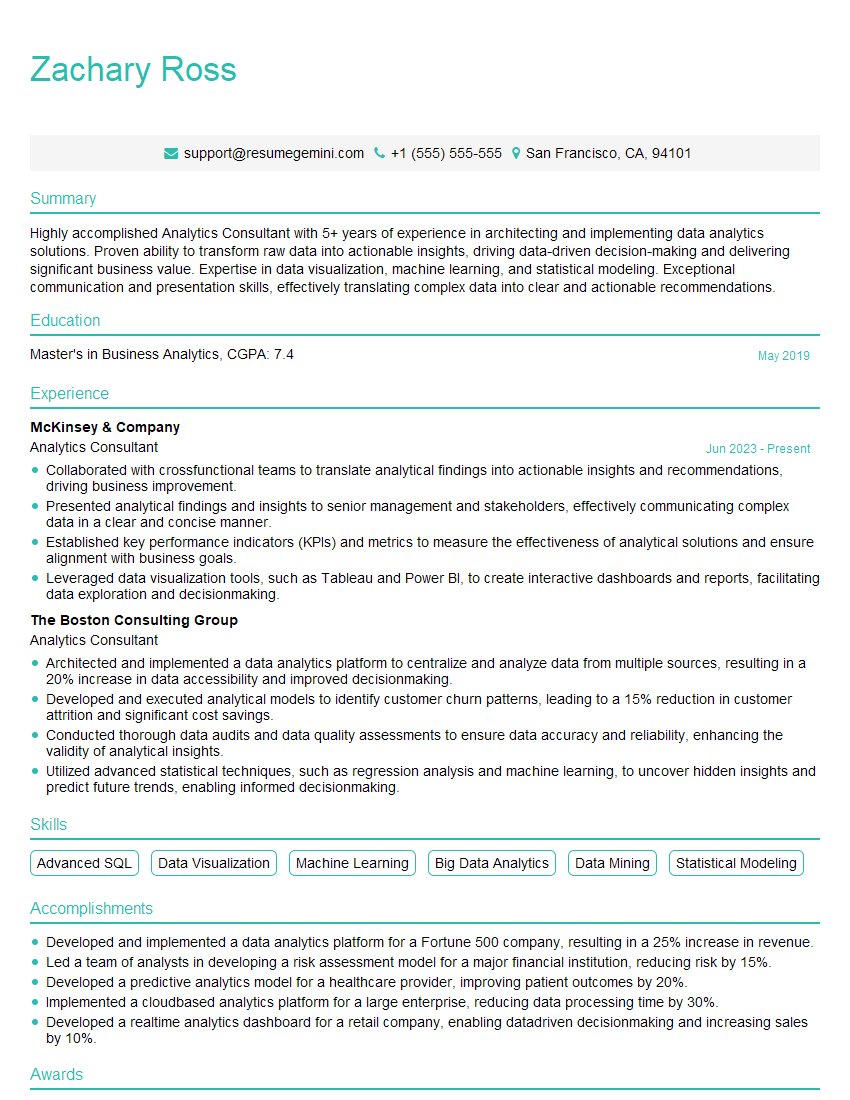

Mastering Business Intelligence Analysis opens doors to exciting and high-demand careers, offering opportunities for significant professional growth and impact. To maximize your job prospects, create an ATS-friendly resume that highlights your skills and experience effectively. ResumeGemini is a trusted resource that can help you build a compelling and professional resume, ensuring your application stands out. Examples of resumes tailored to Business Intelligence Analysis are available to guide you.
