Cracking a skill-specific interview, like one for Analytics and Data Interpretation, requires understanding the nuances of the role. In this blog, we present the questions you’re most likely to encounter, along with insights into how to answer them effectively. Let’s ensure you’re ready to make a strong impression.

Questions Asked in Analytics and Data Interpretation Interviews

Q 1. Explain the difference between correlation and causation.

Correlation and causation are two distinct concepts in statistics. Correlation refers to a statistical relationship between two or more variables; when one variable changes, the other tends to change as well. However, correlation does not imply causation. Just because two variables are correlated doesn’t mean one causes the other. There could be a third, unseen variable influencing both.

Example: Ice cream sales and drowning incidents are positively correlated – both increase during the summer. This doesn’t mean eating ice cream causes drowning! The underlying factor is the warm weather, which leads to more people swimming and more people buying ice cream.

In short: Correlation shows a relationship; causation demonstrates that one variable directly influences another. Establishing causation requires more rigorous methods like controlled experiments or strong longitudinal studies that control for confounding variables.

Q 2. What are the common types of data visualizations and when would you use each?

Data visualization is crucial for communicating insights effectively. Several types exist, each suited for different data and purposes:

- Bar charts: Compare categorical data. Example: Sales figures across different product categories.

- Line charts: Show trends over time. Example: Website traffic over a month.

- Pie charts: Display proportions of a whole. Example: Market share of different companies.

- Scatter plots: Explore relationships between two numerical variables. Example: Height vs. weight.

- Histograms: Show the distribution of a single numerical variable. Example: Distribution of customer ages.

- Heatmaps: Show how a numeric value varies across two dimensions, using color intensity to encode magnitude. Example: Website click-through rates across different pages and times of day.

- Box plots: Show data distribution (median, quartiles, outliers). Example: Comparing income levels across different demographics.

The choice depends on the data type and the message you want to convey. A clear understanding of your audience is also crucial for selecting the most appropriate visualization.

Q 3. Describe your experience with SQL. Give examples of queries you’ve written.

I have extensive experience with SQL, using it daily for data extraction, transformation, and loading (ETL) processes. I’m proficient in writing complex queries involving joins, subqueries, and window functions. I’ve worked with various database systems, including PostgreSQL and MySQL.

Examples:

- Simple selection: SELECT * FROM customers WHERE country = 'USA';

- Inner join to combine data from two tables: SELECT order_id, customer_name, order_date FROM orders INNER JOIN customers ON orders.customer_id = customers.id;

- Aggregation and ordering: SELECT product_name, SUM(quantity) AS total_quantity_sold FROM order_items GROUP BY product_name ORDER BY total_quantity_sold DESC;

- Window function to assign row numbers within customer partitions: SELECT *, ROW_NUMBER() OVER (PARTITION BY customer_id ORDER BY order_date) AS rn FROM orders;

In a past project, I used SQL to identify high-value customers based on their purchase history and recency of purchase, enabling targeted marketing campaigns. This involved complex queries using subqueries and aggregate functions. I’ve also used SQL extensively for data cleaning and preparation, handling inconsistencies and anomalies within the database.
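
To try queries like these end to end, here is a minimal sketch using Python's built-in `sqlite3` module and a small in-memory database. The schema, the sample rows, the `amount` column, and the spend and recency thresholds are all hypothetical; the high-value query is one plausible way to combine purchase history with recency, not the query from the project described. Window functions require SQLite 3.25 or newer, which recent Python builds bundle.

```python
import sqlite3

# Hypothetical schema and sample rows, for illustration only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customers (id INTEGER PRIMARY KEY, customer_name TEXT, country TEXT);
CREATE TABLE orders (order_id INTEGER PRIMARY KEY, customer_id INTEGER,
                     order_date TEXT, amount REAL);
INSERT INTO customers VALUES (1, 'Ana', 'USA'), (2, 'Ben', 'USA'), (3, 'Chen', 'UK');
INSERT INTO orders VALUES
  (101, 1, '2024-01-05', 120.0),
  (102, 1, '2024-03-20', 300.0),
  (103, 2, '2023-06-11', 50.0),
  (104, 3, '2024-03-28', 900.0);
""")

# Total spend and most recent order per customer; keep customers above a spend
# threshold who also ordered on or after a cutoff (ISO date strings compare correctly as text).
high_value_sql = """
SELECT c.customer_name,
       SUM(o.amount)     AS total_spend,
       MAX(o.order_date) AS last_order
FROM customers AS c
JOIN orders AS o ON o.customer_id = c.id
GROUP BY c.id, c.customer_name
HAVING SUM(o.amount) >= :min_spend AND MAX(o.order_date) >= :since
ORDER BY total_spend DESC;
"""
high_value = conn.execute(high_value_sql, {"min_spend": 400, "since": "2024-01-01"}).fetchall()

# Window function: number each customer's orders chronologically.
ranked = conn.execute("""
SELECT order_id, customer_id,
       ROW_NUMBER() OVER (PARTITION BY customer_id ORDER BY order_date) AS rn
FROM orders
ORDER BY customer_id, rn;
""").fetchall()

print(high_value)
print(ranked)
```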

Q 4. How do you handle missing data in a dataset?

Missing data is a common problem in datasets. The best approach depends on the nature of the data, the amount of missingness, and the potential impact on the analysis.

- Deletion: Removing rows or columns with missing values. This is simple but can lead to bias if the missing data isn’t random (e.g., if wealthier individuals are less likely to report their income).

- Imputation: Replacing missing values with estimated ones. Methods include using the mean, median, or mode (simple imputation), or more sophisticated techniques like k-Nearest Neighbors (k-NN) or multiple imputation. The choice depends on the data distribution and the desired level of accuracy.

- Model-based imputation: Using predictive models (e.g., regression) to estimate missing values from patterns in the observed data.

Before choosing a method, I’d analyze the pattern of missing data (Missing Completely at Random (MCAR), Missing at Random (MAR), Missing Not at Random (MNAR)) to understand its potential impact. I’d also consider the sensitivity of the analysis to missing data. In some cases, acknowledging the presence of missing data and reporting it transparently is the most appropriate approach.
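
The trade-off between deletion and simple imputation is easy to see on a toy column. This is a minimal standard-library sketch; the income values are invented and `None` marks a missing answer. The median is used as the fill value because the single 150,000 entry pulls the mean upward, and a missingness flag is kept so the fact that a value was absent is not lost.

```python
import statistics

incomes = [42_000, None, 55_000, 61_000, None, 48_000, 150_000]  # hypothetical survey answers

observed = [x for x in incomes if x is not None]

# Listwise deletion: analyze only the complete cases.
complete_case_mean = statistics.mean(observed)

# Simple imputation: fill gaps with the median, which the 150k outlier barely affects.
fill_value = statistics.median(observed)
imputed = [fill_value if x is None else x for x in incomes]

# Missingness indicator so a downstream model can still see which values were filled in.
was_missing = [x is None for x in incomes]

print(complete_case_mean, fill_value, imputed, was_missing)
```

Filling every gap with one value shrinks the column's variance; multiple imputation is the usual remedy when that matters.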

Q 5. Explain the concept of A/B testing and its applications.

A/B testing is a randomized experiment that compares two versions (A and B) of something, such as a web page, email, or feature, to determine which performs better on a chosen metric. It’s a powerful tool for making data-driven decisions.

How it works: Users are randomly assigned to either the A or B group. The results are then compared to see which version leads to a statistically significant improvement in a key metric (e.g., click-through rate, conversion rate).

Applications:

- Website optimization: Testing different website layouts, button colors, or call-to-action wording.

- Marketing campaigns: Comparing the effectiveness of different email subject lines, ad copy, or landing pages.

- Product development: Evaluating user preferences for different features or designs.

A successful A/B test requires careful planning, a large enough sample size to ensure statistical power, and a clear definition of success metrics.
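
The comparison step can be sketched with a two-proportion z-test, one common choice for conversion-rate metrics. This is a minimal standard-library sketch; the conversion counts are hypothetical, the test is two-sided with a pooled variance, and the sample-size helper uses the usual normal-approximation formula.

```python
import math
from statistics import NormalDist

def two_proportion_ztest(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates (pooled variance)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

def sample_size_per_group(p_a, p_b, alpha=0.05, power=0.80):
    """Approximate users needed in each group to detect a change from p_a to p_b."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p_a * (1 - p_a) + p_b * (1 - p_b)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p_b - p_a) ** 2)

# Hypothetical results: 4.0% vs 5.0% conversion with 5,000 users per arm.
z, p = two_proportion_ztest(200, 5000, 250, 5000)
n_needed = sample_size_per_group(0.04, 0.05)
print(f"z = {z:.2f}, p = {p:.4f}, users per arm for 80% power = {n_needed}")
```

With these inputs the test gives z of about 2.4 (p about 0.016), and the helper suggests roughly 6,700 users per arm for 80% power, which is why the sample size is planned before launch.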

Q 6. What is regression analysis and how is it used in data analysis?

Regression analysis is a statistical method used to model the relationship between a dependent variable and one or more independent variables. It helps us understand how changes in the independent variables affect the dependent variable.

Types: Linear regression models a linear relationship; multiple regression involves multiple independent variables; logistic regression predicts a categorical outcome (e.g., yes/no).

Applications:

- Predictive modeling: Predicting future values of the dependent variable based on the independent variables. Example: Predicting house prices based on size, location, and features.

- Understanding relationships: Determining the strength and direction of the relationship between variables. Example: Assessing the impact of advertising spend on sales.

- Causal inference: While correlation doesn’t equal causation, regression analysis, combined with appropriate experimental design and controls, can provide insights into causal relationships.

Interpreting regression results requires careful consideration of statistical significance, R-squared values, and potential confounding variables.

Q 7. What are some common statistical measures used in data analysis?

Many statistical measures are used in data analysis, depending on the context and the type of data. Some common ones include:

- Mean, Median, Mode: Measures of central tendency, describing the typical value of a dataset.

- Standard Deviation, Variance: Measures of dispersion, showing the spread or variability of data around the mean.

- Range: The difference between the maximum and minimum values.

- Interquartile Range (IQR): The range of the middle 50% of the data.

- Correlation coefficient: Measures the strength and direction of the linear relationship between two variables.

- P-value: Indicates the probability of obtaining results as extreme as, or more extreme than, the observed results, given that the null hypothesis is true.

- R-squared: In regression analysis, it represents the proportion of variance in the dependent variable explained by the independent variables.

Choosing the right statistical measures depends on the research question, data distribution, and the type of analysis being conducted. For example, the median is preferred over the mean when dealing with skewed data, as it is less sensitive to outliers.
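
Python’s `statistics` module covers most of the measures listed above. In this hypothetical right-skewed sample, a single value of 100 drags the mean to about 15.4 while the median stays at 3.5, which is the point about skewed data. Quartiles use the module’s default "exclusive" method; other tools may interpolate differently and report slightly different values.

```python
import statistics

# Hypothetical right-skewed sample: one very large value.
data = [1, 2, 2, 3, 4, 5, 6, 100]

mean = statistics.mean(data)          # pulled up by the 100
median = statistics.median(data)      # stays near the bulk of the data
mode = statistics.mode(data)
stdev = statistics.stdev(data)        # sample standard deviation
variance = statistics.variance(data)  # sample variance (stdev squared)
value_range = max(data) - min(data)
q1, _, q3 = statistics.quantiles(data, n=4)
iqr = q3 - q1

print(mean, median, mode, round(stdev, 2), value_range, iqr)
```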

Q 8. How do you identify outliers in a dataset?

Identifying outliers, data points significantly different from others, is crucial for data accuracy. We use various methods, often in combination. Simple methods include visual inspection using box plots or scatter plots to spot data points far from the main cluster. More statistically rigorous methods include:

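- Z-score method: This calculates how many standard deviations a data point is from the mean. Points whose absolute Z-score exceeds a threshold (often 3) are considered outliers. For example, a data point with a Z-score of 4 lies 4 standard deviations above the mean, indicating a potential outlier. The method works best for roughly normal data, and because extreme values inflate the mean and standard deviation themselves, it can miss outliers in small samples.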

- Interquartile Range (IQR) method: This method focuses on the spread of the middle 50% of the data. Outliers are identified as points falling below Q1 - 1.5 × IQR or above Q3 + 1.5 × IQR, where Q1 and Q3 are the first and third quartiles, respectively. This method is less sensitive to extreme values than the Z-score method.

- DBSCAN (Density-Based Spatial Clustering of Applications with Noise): This is an unsupervised machine learning algorithm that groups data points based on density. Points that don’t belong to any cluster are identified as outliers. This is particularly useful for multivariate data with irregularly shaped clusters, although distance-based density estimates become less reliable as the number of dimensions grows.

The choice of method depends on the dataset’s distribution and the nature of the outliers. For example, in a dataset with a skewed distribution, the IQR method might be preferred over the Z-score method. Often, a combination of visual inspection and statistical methods is used to ensure comprehensive outlier detection.
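
The Z-score and IQR rules translate directly into code. This is a minimal sketch on a small, invented sample. It also shows a known weakness of the Z-score rule: in small samples an extreme value inflates the standard deviation so much that its own Z-score stays below 3, while the IQR fences still catch it.

```python
import statistics

def zscore_outliers(values, threshold=3.0):
    """Flag points whose absolute z-score exceeds the threshold."""
    mean, sd = statistics.mean(values), statistics.stdev(values)
    return [v for v in values if abs(v - mean) / sd > threshold]

def iqr_outliers(values, k=1.5):
    """Flag points outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's fences)."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]

# Hypothetical small sample with one obvious anomaly.
values = [10, 12, 11, 13, 12, 11, 95]
print(zscore_outliers(values))  # [] : the 95 inflates the SD enough to mask itself
print(iqr_outliers(values))     # [95]
```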

Q 9. Explain your experience with data cleaning and preprocessing techniques.

Data cleaning and preprocessing are foundational steps in any analytics project. My experience includes handling missing values, dealing with inconsistent data formats, and transforming data for analysis. I’ve used various techniques:

- Handling Missing Values: I’ve employed strategies like imputation (replacing missing values with mean, median, mode, or using more sophisticated methods like k-Nearest Neighbors) and removal of rows or columns with excessive missing data. The best approach depends on the amount of missing data and the nature of the variable. For example, in a dataset with a small percentage of missing values in a continuous variable, imputation using the mean might be appropriate. However, if a large number of values are missing in a categorical variable, it might be better to remove the entire variable.

- Data Transformation: I’ve applied standardization (Z-score normalization) to put variables on a common scale (mean 0, standard deviation 1) so that variables with larger values don’t dominate the analysis. I’ve also used log transformation to reduce right skew and improve model performance.

- Outlier Treatment: As discussed earlier, I identify and handle outliers using various statistical methods and visual inspection. Techniques include removal, winsorizing (capping values at a certain percentile), or transforming the data.

- Data Encoding: I have experience encoding categorical variables into numerical representations suitable for machine learning algorithms using techniques like one-hot encoding and label encoding.

I always document my data cleaning and preprocessing steps meticulously, ensuring reproducibility and transparency in the analysis.
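
A minimal standard-library sketch of three of the transformations above: Z-score standardization, a log transform for right-skewed values, and one-hot encoding. The column values are hypothetical. In practice scikit-learn’s `StandardScaler` and `OneHotEncoder` (or pandas `get_dummies`) are the usual tools, and scaling parameters should be fitted on training data only.

```python
import math
import statistics

def standardize(values):
    """Z-score standardization: rescale to mean 0 and (population) standard deviation 1."""
    mean, sd = statistics.mean(values), statistics.pstdev(values)
    return [(v - mean) / sd for v in values]

def log_transform(values):
    """log(1 + x) compresses a long right tail; assumes values >= 0."""
    return [math.log1p(v) for v in values]

def one_hot(categories):
    """Map each category to a 0/1 indicator vector (columns in sorted order)."""
    levels = sorted(set(categories))
    return levels, [[1 if c == level else 0 for level in levels] for c in categories]

# Hypothetical columns.
order_values = [20.0, 35.0, 50.0, 1000.0]
regions = ["north", "south", "north", "east"]

scaled = standardize(order_values)
logged = log_transform(order_values)
levels, encoded = one_hot(regions)
print(levels, encoded)
```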

Q 10. Describe your experience with different data mining techniques.

My data mining experience encompasses various techniques, categorized broadly into supervised and unsupervised learning:

- Supervised Learning: I have extensive experience with techniques like linear regression, logistic regression, decision trees, support vector machines (SVMs), and random forests for prediction and classification tasks. For instance, I used linear regression to model the relationship between advertising spend and sales revenue, and random forests to predict customer churn.

- Unsupervised Learning: I’ve utilized techniques like k-means clustering for customer segmentation, principal component analysis (PCA) for dimensionality reduction, and association rule mining (Apriori algorithm) for market basket analysis to uncover hidden patterns and relationships in data. For example, I used k-means clustering to group customers into distinct segments based on their purchasing behavior.

The choice of data mining technique depends heavily on the business problem, the nature of the data, and the desired outcome. I always ensure that I select the most appropriate technique based on a thorough understanding of the problem and data characteristics.
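
k-means itself is short enough to sketch. This is a plain Lloyd’s-algorithm implementation on invented two-feature customer data. The deterministic initialization is an assumption made for reproducibility (libraries such as scikit-learn default to k-means++ seeding), and features should normally be standardized first so that no single feature dominates the distance.

```python
import math

def kmeans(points, k, iterations=100):
    """Lloyd's k-means on 2-D points with a simple deterministic initialization."""
    # Evenly spaced picks from the input as starting centroids (an assumption, not k-means++).
    centroids = [points[i * len(points) // k] for i in range(k)]
    labels = []
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                new_centroids.append(tuple(sum(axis) / len(members) for axis in zip(*members)))
            else:
                new_centroids.append(centroids[c])  # keep an empty cluster's centroid in place
        if new_centroids == centroids:
            break  # converged: assignments no longer move the centroids
        centroids = new_centroids
    return labels, centroids

# Hypothetical customers: (orders per month, average basket size in $10s).
customers = [(1, 1), (1.5, 2), (1, 1.5), (8, 8), (8.5, 9), (9, 8)]
labels, centroids = kmeans(customers, k=2)
print(labels, centroids)
```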

Q 11. How do you choose the appropriate statistical test for a given problem?

Selecting the right statistical test is crucial for drawing valid conclusions. The choice depends on several factors: the type of data (categorical or numerical), the number of groups being compared, and the research question (e.g., testing differences between means or associations between variables).

- For comparing means: If you have one group, use a one-sample t-test to compare the mean to a known value. For two independent groups, use an independent samples t-test; for two dependent groups (e.g., before-and-after measurements), use a paired samples t-test. For more than two groups, use ANOVA.

- For comparing proportions: Use a chi-square test for independence to assess the association between two categorical variables. For comparing proportions between two groups, use a z-test for proportions.

- For assessing correlation: Use Pearson’s correlation for linear relationships between two numerical variables, and Spearman’s rank correlation for monotonic (but not necessarily linear) relationships or ordinal data.

Before selecting a test, it’s essential to check assumptions like normality of data distribution (for t-tests and ANOVA) and independence of observations. Violations of assumptions may require alternative non-parametric tests (e.g., Mann-Whitney U test instead of t-test).
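
The rules of thumb above can be written down as a small lookup. `choose_test` and its parameters are hypothetical, invented for illustration; the helper adds the standard non-parametric counterpart for each case, including the Wilcoxon signed-rank, Kruskal-Wallis, and Friedman tests, which the text covers under "alternative non-parametric tests". It is a starting point, not a substitute for checking the data.

```python
def choose_test(goal, groups=2, paired=False, assumptions_hold=True):
    """Map the rules of thumb to a test name.

    goal: "means", "proportions", "association" (two categorical variables) or "correlation".
    assumptions_hold: normality/linearity checks passed; otherwise a rank-based test is suggested.
    """
    if goal == "means":
        if groups == 1:
            return "one-sample t-test" if assumptions_hold else "Wilcoxon signed-rank test"
        if groups == 2 and paired:
            return "paired samples t-test" if assumptions_hold else "Wilcoxon signed-rank test"
        if groups == 2:
            return "independent samples t-test" if assumptions_hold else "Mann-Whitney U test"
        if paired:
            return "repeated-measures ANOVA" if assumptions_hold else "Friedman test"
        return "one-way ANOVA" if assumptions_hold else "Kruskal-Wallis test"
    if goal == "proportions":
        return "two-proportion z-test" if groups == 2 else "chi-square test of homogeneity"
    if goal == "association":
        return "chi-square test of independence"
    if goal == "correlation":
        return "Pearson correlation" if assumptions_hold else "Spearman rank correlation"
    raise ValueError(f"unknown goal: {goal!r}")

print(choose_test("means", groups=2))                          # independent samples t-test
print(choose_test("means", groups=2, assumptions_hold=False))  # Mann-Whitney U test
```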

Q 12. Explain your experience with different data visualization tools (e.g., Tableau, Power BI).

I have extensive experience with data visualization tools, primarily Tableau and Power BI. Both tools allow for creating interactive and informative dashboards. My experience includes:

- Tableau: I’ve used Tableau to create interactive dashboards showing key performance indicators (KPIs), geographical visualizations (maps), and trend analysis using charts and graphs. Its drag-and-drop interface and rich visualization options make it excellent for exploratory data analysis and communicating findings to stakeholders.

- Power BI: I’ve leveraged Power BI’s capabilities for data integration from various sources, creating interactive reports, and implementing data storytelling techniques to communicate insights effectively. Its strong integration with Microsoft products makes it a powerful tool for enterprise-level reporting.

The choice between Tableau and Power BI often depends on specific needs and existing infrastructure. I’m proficient in both and can adapt my choice to best serve the project requirements.

Q 13. How do you interpret the results of a hypothesis test?

Interpreting hypothesis test results involves assessing whether the evidence supports rejecting the null hypothesis. The p-value is central to this interpretation. The p-value represents the probability of observing the obtained results (or more extreme results) if the null hypothesis were true.

- Low p-value (typically below 0.05): A low p-value suggests that the observed results are unlikely to have occurred by chance alone if the null hypothesis were true. We reject the null hypothesis and conclude that there is statistically significant evidence to support the alternative hypothesis.

- High p-value (typically above 0.05): A high p-value means the observed results would not be unusual if the null hypothesis were true. We fail to reject the null hypothesis. This does not mean the null hypothesis is true, only that there is insufficient evidence to reject it.

It is crucial to consider the effect size alongside the p-value. A statistically significant result (low p-value) might have a small effect size, meaning the practical significance is limited. Context is key – statistical significance doesn’t always equate to practical significance. A thorough interpretation always involves considering both the p-value and effect size within the broader context of the research question and the data.
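
The gap between statistical and practical significance shows up clearly with large samples. This is a minimal sketch using a large-sample z-test on summary statistics; the means, standard deviation, and group sizes are hypothetical. A 0.5-point lift on a metric with standard deviation 10 is highly significant at 20,000 users per group, yet Cohen’s d is only 0.05, well below the conventional 0.2 threshold for a "small" effect.

```python
import math
from statistics import NormalDist

def two_sample_z(mean_a, mean_b, sd, n_per_group):
    """Large-sample test for a difference in means, plus Cohen's d (equal SDs assumed)."""
    se = sd * math.sqrt(2 / n_per_group)
    z = (mean_b - mean_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    cohens_d = (mean_b - mean_a) / sd  # difference expressed in standard-deviation units
    return z, p_value, cohens_d

# Hypothetical: a 0.5-point lift on a metric with SD 10, measured on 20,000 users per group.
z, p, d = two_sample_z(100.0, 100.5, 10.0, 20_000)
print(f"z = {z:.1f}, p = {p:.1e}, Cohen's d = {d:.2f}")
```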

Q 14. What is the difference between supervised and unsupervised learning?

The key difference between supervised and unsupervised learning lies in the presence or absence of labeled data.

- Supervised Learning: This involves training a model on a labeled dataset, where each data point is associated with a known outcome (target variable). The model learns to map inputs to outputs, allowing for predictions on new, unseen data. Examples include classification (predicting categories) and regression (predicting continuous values). Think of it as learning with a teacher who provides the correct answers.

- Unsupervised Learning: This involves training a model on an unlabeled dataset, where the outcome is unknown. The model aims to discover patterns, structures, and relationships within the data. Examples include clustering (grouping similar data points) and dimensionality reduction (reducing the number of variables while preserving important information). Think of it as learning without a teacher, exploring the data to find structure on your own.

In practice, the choice between supervised and unsupervised learning depends on the problem. If we have labeled data and want to predict an outcome, supervised learning is appropriate. If we want to discover hidden patterns in unlabeled data, unsupervised learning is the way to go. Sometimes, both approaches are used in combination.

Q 15. Explain the concept of overfitting and how to avoid it.

Overfitting occurs when a machine learning model learns the training data too well, capturing noise and random fluctuations instead of the underlying patterns. This leads to excellent performance on the training data but poor generalization to unseen data. Imagine trying to fit a complex, twisting curve through a scatter plot of points; if the curve perfectly hits every point, it’s likely overfitting – it’s learned the quirks of the specific data points instead of the general trend.

To avoid overfitting, we can employ several techniques:

- Cross-validation: Splitting the data into multiple subsets (folds) and training the model on some folds while testing on others. This gives a more robust estimate of model performance on unseen data.

- Regularization: Adding a penalty term to the model’s loss function to discourage overly complex models. L1 and L2 regularization are common methods (LASSO and Ridge regression, respectively).

- Feature selection/engineering: Carefully choosing relevant features and creating new ones that capture essential information, reducing noise from irrelevant or redundant inputs.

- Pruning (for decision trees): Removing branches of a decision tree that don’t significantly improve accuracy, reducing complexity.

- Early stopping: Monitoring the model’s performance on a validation set during training and stopping when performance plateaus or starts to decrease; this prevents overtraining.

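- Ensemble methods: Combining multiple models to improve generalization. Bagging (e.g., random forests) mainly reduces variance; boosting mainly reduces bias and still needs regularization, such as a small learning rate or early stopping, to avoid overfitting.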

For example, in a project predicting customer churn, I used cross-validation to evaluate different model complexities and regularization techniques (L2) to prevent overfitting and ensure reliable predictions on new customers.
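
Cross-validation exposes the "curve through every point" problem directly. In this minimal sketch, a 1-nearest-neighbour regressor memorizes the training data (training error exactly zero), but 5-fold cross-validation shows it generalizes worse than a smoother 10-neighbour model. k-NN is swapped in here only because its complexity is easy to dial; the data are simulated, and the interleaved fold assignment is an assumption.

```python
import random

def knn_predict(train, x, k):
    """k-nearest-neighbour regression: average y of the k training points closest to x."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    return sum(y for _, y in nearest) / len(nearest)

def mse(train, eval_points, k):
    """Mean squared error of a k-NN model fit on `train`, scored on `eval_points`."""
    errors = [(y - knn_predict(train, x, k)) ** 2 for x, y in eval_points]
    return sum(errors) / len(errors)

def cross_val_mse(data, k, folds=5):
    """k-fold cross-validation: hold out each fold in turn, fit on the rest, average held-out MSE."""
    scores = []
    for f in range(folds):
        held_out = [p for i, p in enumerate(data) if i % folds == f]
        train = [p for i, p in enumerate(data) if i % folds != f]
        scores.append(mse(train, held_out, k))
    return sum(scores) / folds

rng = random.Random(0)
# Hypothetical data: a straight line (y = 2x) plus Gaussian noise with SD 1.
data = [(i / 20, 2 * (i / 20) + rng.gauss(0, 1)) for i in range(200)]

for k in (1, 10):
    print(f"k={k}: training MSE={mse(data, data, k):.2f}, 5-fold CV MSE={cross_val_mse(data, k):.2f}")
```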

Q 16. How do you measure the accuracy of a predictive model?

Measuring the accuracy of a predictive model depends on the type of problem (classification or regression) and the desired metrics. There’s no single ‘best’ metric; the appropriate choice depends on the specific context and business goals.

- Classification: Common metrics include accuracy (overall correctness), precision (proportion of true positives among predicted positives), recall (proportion of true positives among actual positives), F1-score (harmonic mean of precision and recall), and AUC (Area Under the ROC Curve, which measures the model’s ability to distinguish between classes).

- Regression: Common metrics include Mean Squared Error (MSE), Root Mean Squared Error (RMSE), Mean Absolute Error (MAE), and R-squared (coefficient of determination, representing the proportion of variance explained by the model).

For instance, in a fraud detection system (classification), recall is crucial: we want to catch as many fraudulent transactions as possible, even at the cost of some false positives. In contrast, for predicting house prices (regression), RMSE might be preferred because it is expressed in the same units as the price and penalizes large errors more heavily than MAE does.

Choosing the right metric involves understanding the costs associated with false positives and false negatives, and aligning the model evaluation with the overall business objectives.
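
The metrics above reduce to a few lines each. This is a minimal sketch with hypothetical labels and prices; libraries such as scikit-learn provide tested versions (`sklearn.metrics.precision_score`, `recall_score`, `f1_score`, `mean_absolute_error`, and others). AUC needs predicted scores rather than hard labels and is omitted here.

```python
import math

def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall and F1 for binary labels (1 = positive class)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(y_true), "precision": precision, "recall": recall, "f1": f1}

def regression_metrics(y_true, y_pred):
    """MAE, MSE and RMSE; RMSE is in the same units as the target."""
    errors = [t - p for t, p in zip(y_true, y_pred)]
    squared = sum(e * e for e in errors) / len(errors)
    return {"mae": sum(abs(e) for e in errors) / len(errors), "mse": squared, "rmse": math.sqrt(squared)}

# Hypothetical fraud labels (1 = fraud) and house prices (in $k).
clf = classification_metrics([1, 0, 1, 1, 0, 0, 1, 0], [1, 1, 0, 1, 0, 1, 1, 0])
reg = regression_metrics([200, 250, 300], [210, 240, 330])
print(clf)
print(reg)
```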

Q 17. Explain your experience with time series analysis.

Time series analysis involves analyzing data points collected over time to understand patterns, trends, and seasonality. I have extensive experience working with time series data, using various techniques depending on the specific problem.

My experience includes:

- Decomposition: Breaking down time series data into its components (trend, seasonality, residuals) to understand the underlying patterns. I’ve utilized this to identify seasonal sales peaks and long-term growth trends for a retail client.

- ARIMA modeling: Building autoregressive integrated moving average (ARIMA) models to forecast future values based on past observations. This has been valuable for stock price prediction and demand forecasting.

- Exponential smoothing: Using methods like Holt-Winters to forecast future values, particularly useful when dealing with data exhibiting trends and seasonality. I applied this technique for predicting energy consumption.

- Prophet (from Meta): Using this powerful library for time series forecasting, especially when dealing with complex seasonality and trend changes. I found it highly effective for handling promotional impacts on sales data.

In one project, I used a combination of ARIMA and Prophet to forecast website traffic, accurately predicting peak periods and informing resource allocation strategies. Proper data preprocessing, including handling missing values and outliers, is always crucial for accurate time series analysis.
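
Holt-Winters builds on simple exponential smoothing by adding trend and seasonal components. As a minimal sketch of the core recurrence, assuming the common convention of starting the level at the first observation, here is simple exponential smoothing in plain Python with an invented demand series; statsmodels’ `ExponentialSmoothing` class offers the full trend-and-seasonality version.

```python
def simple_exponential_smoothing(series, alpha):
    """Return the smoothed levels; the last level is the flat forecast for future periods."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = series[0]  # initialize with the first observation (one common convention)
    levels = [level]
    for y in series[1:]:
        # New level = weighted blend of the latest observation and the previous level.
        level = alpha * y + (1 - alpha) * level
        levels.append(level)
    return levels

# Hypothetical weekly demand.
demand = [10, 12, 14]
levels = simple_exponential_smoothing(demand, alpha=0.5)
print(levels, "next-period forecast:", levels[-1])
```

A larger `alpha` reacts faster to recent changes; a smaller one smooths more aggressively.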

Q 18. How do you handle large datasets that don’t fit into memory?

Handling large datasets that don’t fit into memory requires strategies to process the data in smaller, manageable chunks. Key approaches include:

- Sampling: Working with a representative subset of the data to perform analysis and build models. This requires careful consideration to ensure the sample accurately reflects the population.

- Data streaming: Processing the data as it arrives, using algorithms designed for streaming data. This avoids loading the entire dataset into memory.

- Distributed computing (e.g., Spark, Hadoop): Partitioning the data across multiple machines and processing it in parallel. This is particularly effective for very large datasets.

- Out-of-core algorithms: Algorithms designed to work with data stored on disk, minimizing the amount of data in memory at any given time.

- Data reduction techniques: Applying techniques like dimensionality reduction (PCA) or feature selection to reduce the size of the dataset before processing.

In a project involving analyzing millions of customer transactions, I used Apache Spark to process the data in parallel across a cluster of machines. This enabled me to perform complex aggregations and build predictive models efficiently, even with the massive data volume.
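
Two of the ideas above, streaming and sampling, can be sketched with the standard library. The first function reads rows one at a time, so memory grows with the number of distinct customers rather than the number of rows. The second is reservoir sampling (Algorithm R), which draws a uniform random sample from a stream of unknown length in a single pass. Column names and data are hypothetical.

```python
import csv
import io
import random
from collections import defaultdict

def stream_totals(lines):
    """Aggregate spend per customer one row at a time, never holding the whole file."""
    totals = defaultdict(float)
    for row in csv.DictReader(lines):
        totals[row["customer_id"]] += float(row["amount"])
    return dict(totals)

def reservoir_sample(stream, k, seed=0):
    """Algorithm R: a uniform random sample of k items from a stream of unknown length."""
    rng = random.Random(seed)
    sample = []
    for i, item in enumerate(stream):
        if i < k:
            sample.append(item)
        else:
            j = rng.randint(0, i)  # inclusive on both ends
            if j < k:
                sample[j] = item
    return sample

# In practice `lines` would be an open file handle; io.StringIO stands in for it here.
data = io.StringIO("customer_id,amount\nc1,10.5\nc2,4.0\nc1,2.5\n")
totals = stream_totals(data)
sample = reservoir_sample(range(100_000), k=5)
print(totals, sample)
```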

Q 19. Describe your experience with data warehousing and ETL processes.

Data warehousing involves organizing and storing data from various sources into a central repository for analysis and reporting. ETL (Extract, Transform, Load) processes are crucial for populating the data warehouse.

My experience includes designing and implementing ETL pipelines using tools like Informatica PowerCenter and Apache Kafka. I’ve worked with both relational (SQL) and NoSQL databases to build data warehouses.

My responsibilities encompassed:

- Data extraction: Pulling data from various sources such as databases, APIs, and flat files.

- Data transformation: Cleaning, validating, and transforming the data to ensure consistency and accuracy. This included handling missing values, standardizing formats, and creating derived attributes.

- Data loading: Loading the transformed data into the data warehouse, optimizing for performance and scalability.

In one project, I designed a data warehouse for a financial institution, streamlining reporting processes and providing a single source of truth for business intelligence activities. The ETL process included handling complex data transformations to comply with regulatory requirements, ensuring data quality and integrity.
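
A toy pipeline shows the extract, transform, and load steps end to end. This is a minimal sketch using `csv`, `datetime`, and an in-memory `sqlite3` database standing in for the warehouse; the file layout, the accepted date formats, and the reject-rather-than-guess rule are assumptions for illustration.

```python
import csv
import io
import sqlite3
from datetime import datetime

RAW_CSV = """account_id,txn_date,amount
A1,03/15/2024,100.00
A2,2024-03-16,-25.50
A3,not a date,40.00
"""  # hypothetical source extract with inconsistent date formats

def transform(row):
    """Standardize dates to ISO 8601 and amounts to floats; return None if validation fails."""
    for fmt in ("%Y-%m-%d", "%m/%d/%Y"):  # accepted source formats (an assumption)
        try:
            iso_date = datetime.strptime(row["txn_date"], fmt).date().isoformat()
            break
        except ValueError:
            continue
    else:
        return None  # unparseable date: reject rather than guess
    return (row["account_id"], iso_date, float(row["amount"]))

# Extract
rows = list(csv.DictReader(io.StringIO(RAW_CSV)))
# Transform, keeping rejects for a data-quality report
transformed = [transform(r) for r in rows]
clean = [t for t in transformed if t is not None]
rejected = [r for r, t in zip(rows, transformed) if t is None]
# Load
warehouse = sqlite3.connect(":memory:")
warehouse.execute("CREATE TABLE fact_transactions (account_id TEXT, txn_date TEXT, amount REAL)")
warehouse.executemany("INSERT INTO fact_transactions VALUES (?, ?, ?)", clean)
warehouse.commit()
loaded = warehouse.execute("SELECT * FROM fact_transactions ORDER BY txn_date").fetchall()
print(loaded, rejected)
```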

Q 20. What are some common challenges in data analysis and how have you overcome them?

Data analysis presents numerous challenges. Some common ones I’ve encountered include:

- Data quality issues: Inconsistent data, missing values, outliers, and errors are frequent. I address this through data cleaning, validation, and imputation techniques, using domain knowledge and statistical methods to handle inconsistencies effectively. For instance, I used k-NN imputation to fill missing values in customer demographics data.

- Data bias: Data can reflect existing biases, leading to skewed or unfair results. I address this through careful data exploration, identifying potential biases, and using appropriate sampling or modeling techniques to mitigate their impact.

- Lack of data: Insufficient data can make it difficult to draw reliable conclusions. I handle this by exploring alternative data sources, using imputation techniques, and employing robust statistical methods that can work with limited data.

- Interpreting results: Understanding what the data truly means requires careful consideration of context and limitations. I emphasize clear communication of findings, highlighting uncertainties and limitations in the analysis.

In a project involving analyzing customer feedback data, I discovered a significant bias in the sampling – a disproportionate number of negative reviews came from a specific demographic. Addressing this bias required resampling the data and applying weighting techniques in the analysis to obtain fairer and more representative results.
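
Reweighting for sampling bias can be sketched as post-stratification: each response is weighted by its group’s population share divided by its sample share. This is a minimal sketch with invented numbers, assuming the true population split is known. Group A writes 80% of the reviews but makes up 50% of customers.

```python
from collections import Counter

def poststratified_mean(records, population_share):
    """Weight each (group, value) record by population share / sample share of its group."""
    counts = Counter(group for group, _ in records)
    n = len(records)
    weights = {g: population_share[g] / (counts[g] / n) for g in counts}
    total_weight = sum(weights[g] for g, _ in records)
    return sum(weights[g] * value for g, value in records) / total_weight

# Hypothetical feedback: (demographic group, rating on a 1-5 scale).
reviews = [("A", 2)] * 8 + [("B", 4)] * 2
unweighted = sum(r for _, r in reviews) / len(reviews)
weighted = poststratified_mean(reviews, {"A": 0.5, "B": 0.5})
print(unweighted, weighted)
```

The unweighted mean is 2.4; the reweighted mean is 3.0, the average the two groups would produce at their true 50/50 split.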

Q 21. Explain your understanding of different data structures (e.g., arrays, linked lists).

Data structures are fundamental to organizing and managing data efficiently. My understanding encompasses various structures, including:

- Arrays: Ordered collections of elements of the same data type, accessed using indices. Arrays offer constant-time (O(1)) access by index, but resizing or inserting in the middle can require copying elements (O(n)).

- Linked lists: Collections of elements (nodes) where each node points to the next. Linked lists allow O(1) insertion and deletion once you hold a reference to the neighboring node, but reaching an element by position requires traversing the list (O(n)), which is slower than array indexing.

- Trees: Hierarchical data structures with nodes connected by branches. Trees are useful for representing hierarchical relationships and for searching (e.g., binary search trees). I have often used tree-based models such as decision trees.

- Graphs: Collections of nodes and edges representing relationships between nodes. Graphs are used to model networks and relationships (e.g., social networks). I utilized graph databases for fraud detection analysis.

- Hash tables: Data structures that use hash functions to map keys to values, providing average constant-time (O(1)) insertion and lookup by key.

The choice of data structure depends on the specific application and its requirements for storage, access, and manipulation. For instance, when implementing a recommendation system, I would use hash tables for fast lookup of user preferences, and potentially a graph to analyze user-item interactions.
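
The recommendation example can be sketched with Python’s built-in structures: a `dict` as the hash table for preference lookups and a dict-of-sets adjacency list for the user-item graph, explored with breadth-first search. All names and data are hypothetical, and "items three hops away that the user hasn’t touched" is just one simple candidate-generation rule.

```python
from collections import deque

# Hash table (Python dict): average O(1) lookup of a user's preferences by key.
preferences = {"u1": {"sci-fi", "drama"}, "u2": {"comedy"}}

# Graph as an adjacency list (dict of sets): users linked to items they interacted with.
interactions = {
    "u1": {"item_a", "item_b"},
    "u2": {"item_b", "item_c"},
    "item_a": {"u1"},
    "item_b": {"u1", "u2"},
    "item_c": {"u2"},
}

def reachable_within(graph, start, max_hops):
    """Breadth-first search: every node reachable from start in at most max_hops edges."""
    seen = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if seen[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbour in graph.get(node, ()):
            if neighbour not in seen:
                seen[neighbour] = seen[node] + 1
                queue.append(neighbour)
    return {node for node, hops in seen.items() if 0 < hops <= max_hops}

# user -> item -> other user -> that user's items
nearby = reachable_within(interactions, "u1", 3)
recommendations = {n for n in nearby if n.startswith("item_")} - interactions["u1"]
print(preferences["u1"], recommendations)
```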

Q 22. How do you ensure data quality and integrity?

Data quality and integrity are paramount in analytics. Think of it like building a house – you can’t build a sturdy house on a weak foundation. Ensuring data quality involves a multi-step process starting before the data even enters our system.

- Data Profiling: I begin by thoroughly understanding the data’s structure, identifying potential issues like missing values, inconsistencies, and outliers. Tools like Pandas Profiling (now ydata-profiling) in Python are invaluable here.

- Data Cleaning: This is where I address the issues found during profiling. This might involve handling missing data (imputation or removal), correcting inconsistencies (standardizing formats), and smoothing outliers (depending on the context and their impact). For example, I might use KNN imputation to fill missing numerical values or replace categorical missing values with the mode.

- Data Validation: After cleaning, I validate the data to ensure the transformations haven’t introduced new errors. This involves checks against known ranges, data types, and business rules. I might write custom validation scripts or use database constraints.

- Data Governance: This is an ongoing process. I advocate for clear data governance policies including data dictionaries, version control, and access control to maintain the data’s integrity over time. This prevents accidental corruption or unauthorized modifications.

For example, in a project analyzing customer purchase data, I discovered inconsistencies in the ‘date of purchase’ field – some were in MM/DD/YYYY format, while others were DD/MM/YYYY. Data profiling flagged this. I then cleaned the data by standardizing the format, ensuring consistent reporting and analysis.
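
The date issue in this example has a subtle part: a value like 03/04/2024 is valid under both MM/DD/YYYY and DD/MM/YYYY, so the format cannot be inferred from the value alone and has to come from each source system’s documentation. This is a minimal sketch, assuming each source’s format is known; the `validate` rules are hypothetical business rules of the kind described under Data Validation.

```python
from datetime import datetime

def is_ambiguous(date_text):
    """True when a slash date reads differently as MM/DD/YYYY and as DD/MM/YYYY."""
    first, second, _ = date_text.split("/")
    return int(first) <= 12 and int(second) <= 12 and first != second

def to_iso(date_text, source_format):
    """Parse with the format documented for the source system, then emit ISO 8601."""
    return datetime.strptime(date_text, source_format).date().isoformat()

def validate(record):
    """Simple rule checks; returns a list of problems (empty means the record passed)."""
    problems = []
    amount = record.get("amount")
    if not isinstance(amount, (int, float)) or amount < 0:
        problems.append("amount must be a non-negative number")
    if record.get("purchase_date", "") > datetime.now().date().isoformat():
        problems.append("purchase_date is in the future")
    return problems

print(to_iso("03/04/2024", "%m/%d/%Y"), to_iso("03/04/2024", "%d/%m/%Y"))
print(is_ambiguous("03/04/2024"), is_ambiguous("13/04/2024"))
print(validate({"amount": -5, "purchase_date": "2999-01-01"}))
```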

Q 23. Describe your experience with different programming languages relevant to data analysis (e.g., Python, R).

My core programming languages for data analysis are Python and R. They each excel in different areas.

- Python: I leverage Python’s versatility, particularly its rich ecosystem of libraries: Pandas is my go-to for data manipulation and cleaning, NumPy for numerical computation, Scikit-learn for machine learning tasks, and Matplotlib/Seaborn for visualizations. For example, I recently used Python to build a predictive model for customer churn using a logistic regression model from Scikit-learn.

- R: R shines in statistical computing and data visualization. Packages like dplyr (for data manipulation), ggplot2 (for stunning visualizations), and specialized packages for specific statistical methods are incredibly powerful. I used R extensively to perform survival analysis on patient data in a previous project, leveraging its specialized statistical functionalities.

I’m also proficient in SQL for database management and data extraction. The choice between Python and R often depends on the specific project’s needs and the team’s expertise.
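A churn model like the one mentioned above might be sketched with scikit-learn as follows. The data comes from `make_classification` and only stands in for real customer features such as tenure or support tickets. The 80/20 class split, the `class_weight="balanced"` choice, and ROC AUC as the metric are illustrative assumptions, not details of the original project.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in: 5 customer features and a binary churn label (about 20% churners).
X, y = make_classification(n_samples=1000, n_features=5, n_informative=3,
                           weights=[0.8, 0.2], random_state=42)

# Hold out a stratified test set so the churn rate is similar in both splits.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=42)

# Scale features, then fit logistic regression; class_weight offsets the imbalance.
model = make_pipeline(StandardScaler(),
                      LogisticRegression(class_weight="balanced", max_iter=1000))
model.fit(X_train, y_train)

churn_prob = model.predict_proba(X_test)[:, 1]  # predicted probability of churn
auc = roc_auc_score(y_test, churn_prob)
print(f"Test ROC AUC: {auc:.3f}")
```

Wrapping the scaler and model in one pipeline means the scaling learned on the training data is applied unchanged to new customers.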

Q 24. What are some ethical considerations in data analysis?

Ethical considerations in data analysis are critical. We’re dealing with potentially sensitive information, and our analysis can have real-world consequences.

- Privacy: Protecting individual privacy is paramount. I always adhere to data privacy regulations (like GDPR or CCPA) and employ anonymization or de-identification techniques where appropriate.

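- Bias: Data can reflect existing societal biases, leading to unfair or discriminatory outcomes. I examine both the data and the results of my analysis for bias, for example by comparing outcomes across demographic groups, and take steps to mitigate it. For instance, if analyzing loan applications, I would check for biases based on race or gender, including indirect ones introduced through proxy variables such as ZIP code.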

- Transparency: My analyses are transparent and reproducible. I meticulously document my methods and findings to allow for scrutiny and verification.

- Confidentiality: I never share sensitive data or findings without proper authorization.

- Accountability: I take responsibility for the impact of my work and ensure that my analyses are used responsibly.
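One simple bias check compares outcome rates across groups. The sketch below uses a handful of hypothetical loan decisions. It computes per-group approval rates and the ratio of the lowest rate to the highest. The 0.8 threshold is the "four-fifths" rule of thumb from US employment-selection guidance, used here as an assumed screening signal. A low ratio calls for investigation. It does not prove discrimination.

```python
from collections import defaultdict

# Hypothetical loan decisions: (applicant group, approved?)
decisions = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]


def approval_rates(records):
    """Return {group: share of applications approved}."""
    counts = defaultdict(lambda: [0, 0])  # group -> [approved, total]
    for group, approved in records:
        counts[group][0] += int(approved)
        counts[group][1] += 1
    return {group: approved / total for group, (approved, total) in counts.items()}


rates = approval_rates(decisions)
# Disparate impact ratio: lowest group approval rate divided by the highest.
ratio = min(rates.values()) / max(rates.values())
print(rates, round(ratio, 2))  # {'group_a': 0.75, 'group_b': 0.25} 0.33

if ratio < 0.8:  # assumed screening threshold (four-fifths rule of thumb)
    print("Flag for review: approval rates differ substantially across groups")
```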

For example, in a project involving health data, I made sure to anonymize patient identifiers before performing any analysis, ensuring compliance with HIPAA regulations.
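One common way to replace identifiers before analysis is keyed hashing (HMAC-SHA256), sketched below with Python's standard library. This is not necessarily the method used in the project above. The record layout and `MRN-` identifiers are hypothetical. Keyed hashing is strictly pseudonymization. The same patient always maps to the same token, so records can still be joined. On its own, it may not satisfy a formal de-identification standard, because other fields can still identify people.

```python
import hashlib
import hmac
import secrets

# Assumption: in practice the key lives in a secrets manager, never alongside the data.
SECRET_KEY = secrets.token_bytes(32)


def pseudonymize(patient_id: str, key: bytes = SECRET_KEY) -> str:
    """Replace an identifier with a keyed hash: stable for joins, not reversible without the key."""
    return hmac.new(key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical records; the same patient appears twice.
records = [
    {"patient_id": "MRN-001", "age": 54, "diagnosis": "E11"},
    {"patient_id": "MRN-002", "age": 61, "diagnosis": "I10"},
    {"patient_id": "MRN-001", "age": 54, "diagnosis": "I10"},
]
safe = [{**r, "patient_id": pseudonymize(r["patient_id"])} for r in records]
```

A keyed hash is used instead of a plain SHA-256 because identifiers such as record numbers are easy to enumerate. Without the secret key, an attacker cannot rebuild the mapping by hashing every possible ID.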

Q 25. How do you communicate your findings to a non-technical audience?

Communicating complex data findings to a non-technical audience requires clear, concise, and engaging storytelling. I avoid jargon and technical details unless absolutely necessary. I focus on the narrative, using visualizations and simple language to convey the key insights.

- Visualizations: Charts and graphs are essential. I choose the most appropriate visualization type for the data and the message I want to convey. Bar charts for comparisons, line charts for trends, and maps for geographical data, for instance.

- Storytelling: I frame my findings within a compelling narrative, starting with a clear introduction of the problem and concluding with the key implications of my analysis. I often use analogies and real-world examples to make the information relatable.

- Focus on the ‘So What?’: The most important aspect is translating the data into actionable insights. What do the findings mean for the business, and what decisions should be made based on them?

For example, instead of saying ‘the correlation coefficient between X and Y is 0.8’, I’d say something like ‘there’s a strong positive relationship between X and Y, meaning that as X increases, Y tends to increase as well. That makes X worth testing as a lever for Y, for example through a controlled experiment, although the relationship alone doesn’t prove that increasing X will raise Y.’
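The translation from a coefficient to plain language can be made repeatable with a small helper. The sketch below computes Pearson's r directly and turns it into a sentence for stakeholders. The strength cut-offs (0.7 and 0.4) are illustrative assumptions, since conventions vary by field. The ad-spend and sales figures are made up.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation: covariance divided by the product of standard deviations."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def describe(r, x_name, y_name):
    """Turn r into a stakeholder-friendly sentence (cut-offs are assumptions)."""
    size = abs(r)
    strength = "strong" if size >= 0.7 else "moderate" if size >= 0.4 else "weak"
    direction = "positive" if r >= 0 else "negative"
    tendency = "higher" if r >= 0 else "lower"
    return (f"There is a {strength} {direction} relationship between {x_name} and {y_name} "
            f"(r = {r:.2f}): when {x_name} is higher, {y_name} tends to be {tendency}. "
            f"On its own, this does not show that changing {x_name} would change {y_name}.")


# Hypothetical monthly figures (thousands of dollars).
ad_spend = [10, 20, 30, 40, 50]
sales = [12, 25, 31, 45, 48]

r = pearson_r(ad_spend, sales)
summary = describe(r, "ad spend", "sales")
print(summary)
```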

Q 26. Describe your experience with data storytelling and visualization best practices.

Data storytelling and visualization go hand-in-hand. Effective data storytelling leverages visualizations to build a narrative that resonates with the audience.

- Choosing the Right Visualizations: I select visualizations based on the type of data and the message I’m conveying. For example, I’d use a scatter plot to show the relationship between two continuous variables, a bar chart to compare categories, or a heatmap to visualize correlations.

- Clear and Concise Labeling: Charts need clear titles, axis labels, and legends. Avoid clutter and make sure the visuals are easy to understand.

- Color Palettes: I use color effectively, ensuring colorblind accessibility and avoiding distracting color schemes.

- Interactive Visualizations: When appropriate, interactive dashboards allow the audience to explore the data themselves, deepening their understanding.

- Narrative Structure: I structure my presentations with a clear beginning, middle, and end, guiding the audience through the story with the data as the supporting evidence. I also anticipate potential questions and address them proactively.
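Several of these practices can be seen together in a basic Matplotlib chart: a descriptive title, labelled axes with units, direct data labels, less non-data clutter, and a colour-blind-friendly palette from Matplotlib's built-in `tableau-colorblind10` style. The categories and sales values are hypothetical. The file name is an arbitrary choice.

```python
import matplotlib

matplotlib.use("Agg")  # render to a file; no display needed
import matplotlib.pyplot as plt

plt.style.use("tableau-colorblind10")  # built-in colour-blind-friendly palette

# Hypothetical data.
categories = ["Electronics", "Apparel", "Home", "Toys"]
sales = [120, 95, 70, 40]

fig, ax = plt.subplots(figsize=(6, 4))
bars = ax.bar(categories, sales)
ax.set_title("Q3 sales by product category")  # the title states what the chart shows
ax.set_xlabel("Product category")
ax.set_ylabel("Sales (thousands USD)")        # units on the axis label
ax.bar_label(bars)                            # direct labels: no need to estimate bar heights
for side in ("top", "right"):
    ax.spines[side].set_visible(False)        # remove non-data clutter
fig.tight_layout()
fig.savefig("sales_by_category.png", dpi=150)
```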

In a recent project, I used a series of interactive dashboards to present the findings of a customer segmentation analysis. This allowed stakeholders to explore different segments, filter data, and drill down into specific details, leading to a more engaged and insightful discussion.

Q 27. Explain your approach to problem-solving in a data analysis context.

My approach to problem-solving in data analysis is systematic and iterative.

- Understanding the Problem: I begin by clearly defining the business problem and translating it into a data analysis question. This involves discussions with stakeholders to gain a comprehensive understanding of their needs and objectives.

- Data Acquisition and Exploration: I gather the necessary data from various sources and explore it to understand its structure, identify potential issues, and generate initial hypotheses.

- Data Cleaning and Preprocessing: This crucial step involves handling missing data, outliers, and inconsistencies to ensure data quality and integrity.

- Feature Engineering: I create new variables from existing ones to improve the model’s performance. This involves using domain knowledge and understanding the data to engineer features that capture meaningful information.

- Modeling and Analysis: I select and apply appropriate statistical models or machine learning algorithms to analyze the data and answer the research questions. This may involve model selection, parameter tuning, and validation.

- Interpretation and Communication: I interpret the results of the analysis in a clear and concise manner, emphasizing the implications of the findings for the business problem.

- Iteration and Refinement: Data analysis is an iterative process. Based on the results, I may refine my approach, collect additional data, or explore alternative methods.
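As an illustration of the feature-engineering step, the pandas sketch below turns hypothetical raw transactions into one row per customer. It builds recency, frequency, and monetary (RFM) features, a common choice for retention work. The column names and the snapshot date are assumptions.

```python
import pandas as pd

# Hypothetical raw transactions: one row per purchase.
tx = pd.DataFrame({
    "customer_id": [1, 1, 2, 2, 2, 3],
    "order_date": pd.to_datetime(["2024-01-05", "2024-03-01", "2024-02-10",
                                  "2024-02-20", "2024-03-15", "2023-11-30"]),
    "amount": [50.0, 70.0, 20.0, 35.0, 25.0, 200.0],
})
snapshot = pd.Timestamp("2024-04-01")  # the date the features are computed "as of"

# Aggregate to one row per customer with pandas named aggregation.
features = tx.groupby("customer_id").agg(
    last_order=("order_date", "max"),
    frequency=("order_date", "count"),
    total_spend=("amount", "sum"),
)
features["recency_days"] = (snapshot - features["last_order"]).dt.days
features["avg_order_value"] = features["total_spend"] / features["frequency"]
features = features.drop(columns="last_order")
print(features)
```

Fixing a snapshot date matters. If features were computed with data from after the snapshot, the model would learn from information it would not have at prediction time.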

For instance, if tasked with improving customer retention, I’d follow these steps, potentially employing clustering algorithms to identify customer segments and then using predictive models to anticipate churn risk.
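The clustering step could look roughly like the sketch below, which applies scikit-learn's `KMeans` to standardized customer features. The three synthetic groups and their feature values are made up to keep the example self-contained. Choosing three clusters is an assumption. On real data, the number of segments would be chosen by comparing several values of k with measures such as silhouette scores.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Hypothetical customers: [recency_days, orders_per_year, avg_order_value]
X = np.vstack([
    rng.normal([20, 12, 80], [5, 2, 10], size=(50, 3)),      # recent, frequent buyers
    rng.normal([90, 4, 40], [15, 1, 8], size=(50, 3)),       # lapsing, occasional buyers
    rng.normal([200, 1, 150], [30, 0.5, 20], size=(50, 3)),  # dormant, high-ticket buyers
])

# Standardize so no single feature dominates the Euclidean distances.
X_scaled = StandardScaler().fit_transform(X)
kmeans = KMeans(n_clusters=3, n_init=10, random_state=0)
segments = kmeans.fit_predict(X_scaled)

# Profile each segment in the original units so it can be named and acted on.
for seg in range(3):
    members = segments == seg
    profile = X[members].mean(axis=0).round(1)
    print(f"Segment {seg}: {members.sum()} customers, mean profile {profile}")
```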

Q 28. How do you stay up-to-date with the latest trends in data analytics?

Staying current in data analytics requires continuous learning and engagement with the field.

- Online Courses and Platforms: I regularly take online courses on platforms like Coursera, edX, and DataCamp to learn new techniques and refresh my knowledge on existing ones. I’ve recently completed a course on deep learning.

- Conferences and Workshops: Attending industry conferences and workshops allows me to network with peers and learn about the latest advancements. I also attend webinars regularly.

- Industry Publications and Blogs: I follow leading data science blogs and publications (like Towards Data Science or Analytics Vidhya) to stay updated on new research and trends.

- Open-Source Projects and Contributions: Engaging with open-source projects is a great way to learn from experienced professionals and contribute to the community. I often explore and contribute to relevant GitHub repositories.

- Peer Learning and Collaboration: I actively participate in online communities and forums, exchanging ideas and collaborating with fellow data professionals.

This multi-faceted approach ensures that my skills remain relevant and my knowledge is up-to-date with the rapidly evolving field of data analytics.

Key Topics to Learn for Analytics and Data Interpretation Interview

- Descriptive Statistics: Understanding measures of central tendency (mean, median, mode), dispersion (variance, standard deviation), and their applications in interpreting data sets. Practical application: Analyzing customer demographics to identify key market segments.

- Inferential Statistics: Grasping concepts like hypothesis testing, confidence intervals, and p-values. Practical application: Determining the statistical significance of A/B testing results.

- Data Visualization: Mastering the creation and interpretation of various charts and graphs (bar charts, histograms, scatter plots, etc.) to effectively communicate insights. Practical application: Presenting data findings to stakeholders in a clear and compelling manner.

- Regression Analysis: Understanding linear and multiple regression, interpreting coefficients, and assessing model fit. Practical application: Predicting sales based on marketing spend and other relevant factors.

- Data Cleaning and Preprocessing: Developing skills in handling missing data, outliers, and data transformations to ensure data quality. Practical application: Preparing raw data for analysis and modeling.

- Data Mining Techniques: Exploring methods for discovering patterns and insights in large datasets, including clustering and association rule mining. Practical application: Identifying customer segments with similar purchasing behavior.

- SQL and Database Management: Familiarity with SQL queries for data extraction, manipulation, and analysis. Practical application: Retrieving relevant data from a relational database for analysis.

- Business Acumen: Connecting data analysis to business problems and formulating data-driven recommendations. Practical application: Using data to inform strategic business decisions.
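To make the inferential-statistics topic concrete, the sketch below checks an A/B test with a two-proportion z-test. It also computes a 95% confidence interval for the lift and uses only Python's standard library. The conversion counts are hypothetical. The test assumes independent visitors and samples large enough for the normal approximation to hold.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Two-sided z-test for a difference in conversion rates, plus a CI for p_b - p_a."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled proportion under the null hypothesis that both variants convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se_pooled = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se_pooled
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    # The confidence interval uses the unpooled standard error.
    se = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z_crit = NormalDist().inv_cdf(0.5 + confidence / 2)
    diff = p_b - p_a
    return z, p_value, (diff - z_crit * se, diff + z_crit * se)


# Hypothetical test: control converts 200 of 5,000 visitors, the variant 250 of 5,000.
z, p, ci = two_proportion_z_test(200, 5000, 250, 5000)
print(f"z = {z:.2f}, p = {p:.4f}, 95% CI for lift = ({ci[0]:.4f}, {ci[1]:.4f})")
```

Here z is about 2.41 and p is about 0.016, and the interval excludes zero. At the conventional 5% level, the variant's higher conversion rate is therefore unlikely to be chance alone. The interval's width also shows how uncertain the size of the lift still is.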

Next Steps

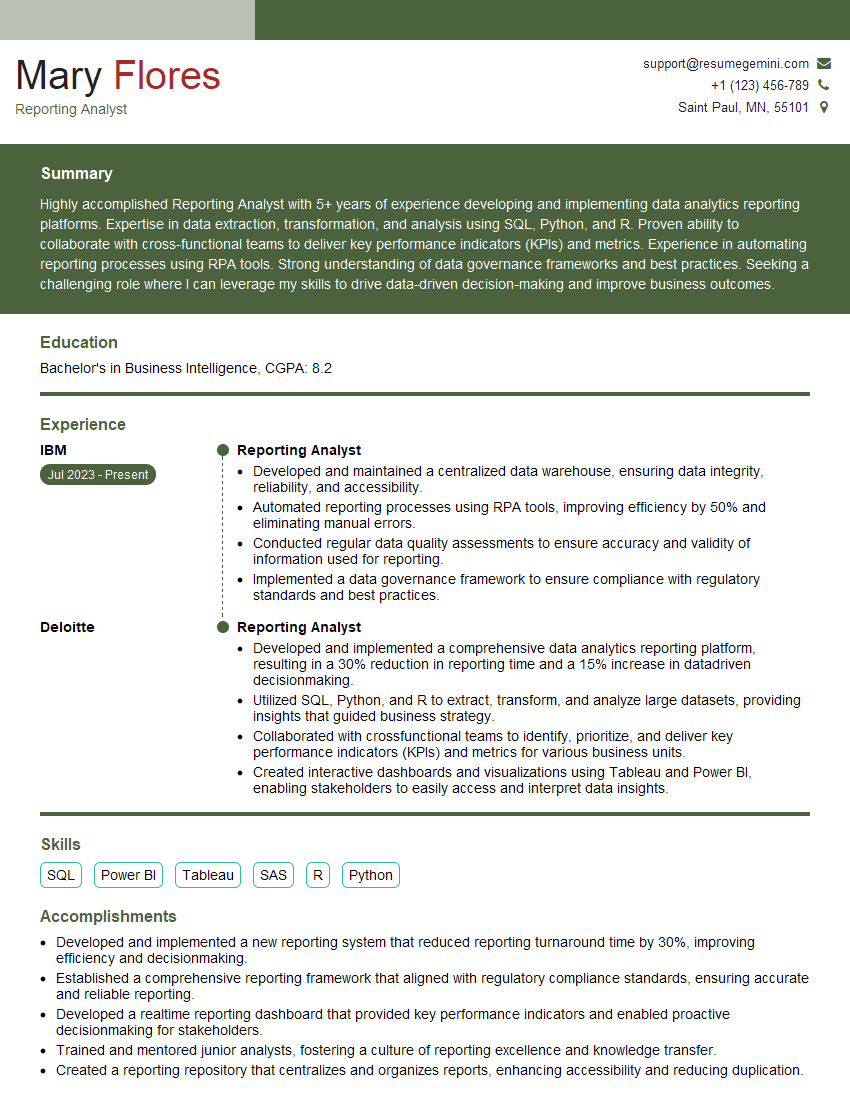

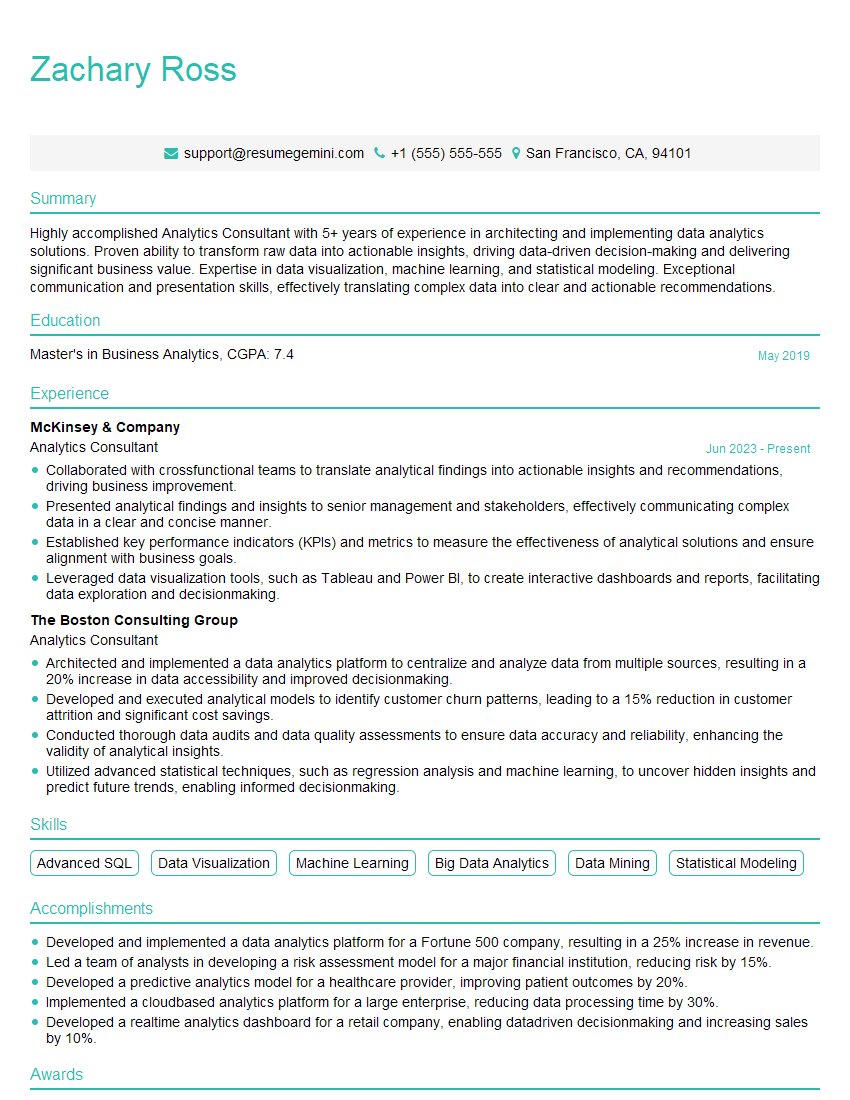

Mastering Analytics and Data Interpretation is crucial for career advancement in today’s data-driven world. It opens doors to exciting opportunities and allows you to contribute significantly to organizational success. To maximize your job prospects, focus on creating a compelling and ATS-friendly resume that highlights your skills and experience. We strongly encourage you to leverage ResumeGemini, a trusted resource for building professional resumes. ResumeGemini provides examples of resumes tailored to Analytics and Data Interpretation roles, helping you showcase your qualifications effectively and stand out from the competition.
