Unlock your full potential by mastering the most common ARM Cortex-M Architecture interview questions. This blog offers a deep dive into the critical topics, ensuring you’re not only prepared to answer but to excel. With these insights, you’ll approach your interview with clarity and confidence.

Questions Asked in ARM Cortex-M Architecture Interview

Q 1. Explain the differences between Cortex-M0, Cortex-M3, Cortex-M4, and Cortex-M7 processors.

The Cortex-M processor family offers a range of devices optimized for different applications. The key differences between the M0, M3, M4, and M7 lie in their performance, features, and power consumption. Think of it like choosing a car – a small efficient city car (M0) versus a powerful luxury SUV (M7).

- Cortex-M0: The entry-level processor, focused on low power consumption and minimal cost. It implements the small ARMv6-M instruction set (almost entirely 16-bit Thumb instructions) and has neither a Floating Point Unit (FPU) nor a hardware divider. Ideal for very simple embedded systems.

- Cortex-M3: A significant step up, the M3 implements the ARMv7-M architecture with the full Thumb-2 instruction set, hardware divide, and improved performance. It has no FPU, but is often used in applications requiring more processing power than the M0. Think of it as a reliable mid-size sedan.

- Cortex-M4: This processor builds on the M3 by adding Digital Signal Processing (DSP) instructions and an optional single-precision FPU (parts that include the FPU are often labelled Cortex-M4F). This makes it suitable for applications requiring more complex calculations, such as motor control and sensor processing. An excellent choice for applications demanding higher performance in a relatively power-efficient package. Imagine this as a sporty, powerful car.

- Cortex-M7: The highest-performing of the four, with a six-stage superscalar pipeline, optional instruction and data caches, tightly coupled memory (TCM), and an optional FPU available in single-precision or double-precision variants. The M7 is best suited for demanding applications requiring high throughput and real-time performance, like advanced motor control or sophisticated graphical user interfaces. This is the equivalent of a high-performance sports car.

The choice depends heavily on the application requirements. A simple thermostat might only need an M0, while a sophisticated industrial controller might require the power of an M7.

Q 2. Describe the memory map of a typical Cortex-M microcontroller.

The memory map of a Cortex-M microcontroller is a crucial aspect of its design. It defines how the processor accesses different memory regions. Imagine it as a well-organized filing cabinet for your microcontroller’s data and instructions.

A typical memory map includes:

- Flash Memory: Stores the program code. This is typically non-volatile, meaning the code persists even when the power is off. It’s like the long-term storage of your cabinet.

- SRAM (Static Random Access Memory): Used for storing data during program execution. It’s volatile, meaning data is lost when power is removed. Think of this as your desk – where you keep the documents you’re currently working on.

- Peripheral Registers: Used to control and monitor peripherals like timers, UARTs (Universal Asynchronous Receiver/Transmitter), and ADCs (Analog-to-Digital Converters). These registers are like the control knobs of your cabinet; they let you interact with different components.

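- Stack (and heap): These are not separate memories but areas inside SRAM. The Stack Pointer (SP) is a CPU register that holds the address of the current top of the stack. The stack stores return addresses, local variables, and saved registers during function calls and exceptions. It acts as a temporary holding area for ongoing tasks.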

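The architecture fixes the top-level layout of the 4 GB address space, but the specific addresses and sizes of flash, SRAM, and peripherals within it vary depending on the microcontroller and its configuration. The device’s reference manual and linker script are the authoritative sources. The memory map is crucial for proper program development and debugging.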
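As a rough illustration of the fixed top-level layout, the sketch below classifies an address into the default ARMv7-M regions. The enum and function names are made up for this example, and the addresses used in the usage note are only typical values, not taken from any particular datasheet.

```c
#include <stdint.h>

/* Top-level regions of the default ARMv7-M memory map.
   Vendor flash, SRAM, and peripheral addresses live inside these. */
typedef enum {
    REGION_CODE,        /* 0x00000000-0x1FFFFFFF: usually on-chip flash */
    REGION_SRAM,        /* 0x20000000-0x3FFFFFFF: on-chip SRAM */
    REGION_PERIPHERAL,  /* 0x40000000-0x5FFFFFFF: peripheral registers */
    REGION_EXT_RAM,     /* 0x60000000-0x9FFFFFFF: external memory */
    REGION_EXT_DEVICE,  /* 0xA0000000-0xDFFFFFFF: external devices */
    REGION_SYSTEM       /* 0xE0000000-0xFFFFFFFF: NVIC, SysTick, vendor space */
} mem_region_t;

/* Hypothetical helper: map an address to its architectural region. */
mem_region_t region_of(uint32_t addr)
{
    if (addr < 0x20000000u) return REGION_CODE;
    if (addr < 0x40000000u) return REGION_SRAM;
    if (addr < 0x60000000u) return REGION_PERIPHERAL;
    if (addr < 0xA0000000u) return REGION_EXT_RAM;
    if (addr < 0xE0000000u) return REGION_EXT_DEVICE;
    return REGION_SYSTEM;
}
```

For example, `region_of(0x20000000u)` yields `REGION_SRAM`, and the SysTick control register at 0xE000E010 falls in `REGION_SYSTEM`.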

Q 3. What are the different interrupt priority levels in a Cortex-M processor?

Cortex-M processors use a prioritized interrupt system. Imagine you have several important tasks, some more urgent than others; the interrupt priority levels ensure the most critical tasks are handled first. The number of levels depends on how many priority bits the chip implements: Cortex-M0/M0+ provide 2 bits (4 levels), while Cortex-M3/M4/M7 devices implement between 3 and 8 bits (8 to 256 levels), with 16 levels being a common choice. A numerically lower value means a higher priority. Reset, NMI, and HardFault have fixed priorities above all programmable levels.

Higher priority interrupts preempt lower priority ones. If a higher-priority interrupt occurs while a lower-priority one is being processed, the lower-priority interrupt is suspended until the higher-priority one completes. This ensures that time-critical tasks are always addressed promptly. The assignment of priorities is a critical part of real-time system design, ensuring stability and responsiveness.
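The following sketch shows how a priority level maps onto the 8-bit priority field and how the preemption rule works. It assumes 4 implemented priority bits and no sub-priority split; `PRIO_BITS`, `prio_to_field`, and `preempts` are illustrative names (on real hardware, CMSIS device headers expose the bit count as `__NVIC_PRIO_BITS`).

```c
#include <stdint.h>
#include <stdbool.h>

/* Number of implemented priority bits; 4 is an assumption for this sketch. */
#define PRIO_BITS 4u

/* The implemented bits sit at the top of each 8-bit priority field. */
uint8_t prio_to_field(uint32_t level)
{
    return (uint8_t)((level << (8u - PRIO_BITS)) & 0xFFu);
}

/* A pending interrupt preempts the active one only if its priority
   value is numerically lower (lower value = more urgent). */
bool preempts(uint8_t pending_field, uint8_t active_field)
{
    return pending_field < active_field;
}
```

With these settings, level 1 is stored as 0x10 and preempts a handler running at level 5, while two interrupts at the same level never preempt each other.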

Q 4. Explain the Nested Vectored Interrupt Controller (NVIC) in detail.

The Nested Vectored Interrupt Controller (NVIC) is the heart of the Cortex-M interrupt system. Think of it as the air traffic controller of your microcontroller, managing all incoming interrupts. It provides several key functions:

- Interrupt Prioritization: The NVIC assigns priority levels to each interrupt source. This ensures that critical interrupts are handled before less critical ones.

- Interrupt Pending Registers: These registers (set-pending ISPR and clear-pending ICPR) track which interrupts are waiting to be serviced. They are like a to-do list for the NVIC.

- Interrupt Enable Registers: These registers (set-enable ISER and clear-enable ICER) control which interrupts are enabled. They allow for selective enabling or disabling of specific interrupts based on the program’s needs. They are like a switchboard, allowing the NVIC to connect or disconnect specific services.

- Nested Interrupts: The NVIC allows for nested interrupts, meaning an interrupt can be interrupted by a higher-priority interrupt. This is crucial for real-time systems that need to respond quickly to several events.

Efficient NVIC configuration is vital for robust real-time behavior. Incorrect prioritization can lead to unpredictable system behavior or missed interrupts, potentially resulting in system failure.
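To make the enable and pending registers concrete, here is a small host-side model of how an interrupt number selects a 32-bit register word and a bit within it. The arrays stand in for the real NVIC registers, and the function names and array size are assumptions made for the sketch.

```c
#include <stdint.h>
#include <stdbool.h>

#define NUM_IRQ_WORDS 8u   /* room for 256 IRQ lines; size is an assumption */

/* Host-side stand-ins for the NVIC set-enable and set-pending banks. */
static uint32_t iser[NUM_IRQ_WORDS];
static uint32_t ispr[NUM_IRQ_WORDS];

/* IRQ n lives in word n / 32, bit n % 32 (irqn must be below 256 here). */
void irq_enable(uint32_t irqn)      { iser[irqn >> 5] |= 1u << (irqn & 31u); }
void irq_set_pending(uint32_t irqn) { ispr[irqn >> 5] |= 1u << (irqn & 31u); }

/* An interrupt is a candidate for service only if it is both
   enabled and pending; priority then decides the order. */
bool irq_will_fire(uint32_t irqn)
{
    uint32_t mask = 1u << (irqn & 31u);
    return (iser[irqn >> 5] & mask) && (ispr[irqn >> 5] & mask);
}
```

In this model, IRQ 37 maps to bit 5 of word 1. A pending interrupt that has not been enabled simply stays pending until software enables it or clears the pending bit.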

Q 5. How does the Cortex-M processor handle exceptions?

The Cortex-M processor handles exceptions, which include interrupts and other unexpected events, through a well-defined process. This process ensures that the processor can gracefully handle these events without crashing. Think of it like a fire alarm system for your microcontroller; it responds to unusual circumstances to maintain order.

When an exception occurs:

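- The processor automatically pushes part of the current context (R0-R3, R12, LR, the return address, and xPSR) onto the active stack. Any other registers a handler uses are saved by compiler-generated code.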

- The processor fetches the exception vector address from the vector table (a table mapping exception numbers to addresses).

- The processor jumps to the exception handler routine at the fetched address. This routine handles the specific exception.

- When the handler returns, a special EXC_RETURN value loaded into the program counter tells the processor to restore the saved context from the stack and resume execution at the point where the exception occurred.

Proper exception handling is crucial for the stability and reliability of embedded systems.
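The automatically stacked context has a fixed layout, which is what makes fault analysis possible. The sketch below models the basic eight-word frame (without FPU state) and reads back the interrupted address from it. The enum and the `stacked_pc` helper are illustrative names, not part of any vendor library.

```c
#include <stdint.h>

/* Word offsets within the basic exception frame, lowest address first. */
enum {
    FRAME_R0 = 0,
    FRAME_R1,
    FRAME_R2,
    FRAME_R3,
    FRAME_R12,
    FRAME_LR,     /* link register of the interrupted code */
    FRAME_PC,     /* address at which execution will resume */
    FRAME_XPSR,
    FRAME_WORDS   /* 8 words = 32 bytes */
};

/* Given the stack pointer value seen at handler entry, return the
   address where the interrupted code will resume. */
uint32_t stacked_pc(const uint32_t *stacked_sp)
{
    return stacked_sp[FRAME_PC];
}
```

A fault handler would typically obtain the stack pointer in a short assembly stub and pass it to a function like this to log where the fault happened.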

Q 6. What are the different power saving modes available in Cortex-M microcontrollers?

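Cortex-M microcontrollers offer various power-saving modes to extend battery life or reduce power consumption. Imagine these modes as different sleep settings on your smartphone, each optimizing for different power needs. The core itself defines only two low-power states, sleep and deep sleep, entered with the WFI (wait for interrupt) or WFE (wait for event) instruction; chip vendors build their own named modes on top of these. The mode names below follow the convention used on STM32 devices.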

- Sleep Mode: The core clock stops and the processor halts execution, but registers and memory contents are retained and peripherals keep running. The processor can quickly wake up from this mode when an interrupt occurs.

- Stop Mode: The processor stops execution and most clocks are disabled. The memory contents are retained, but the processor takes longer to wake up compared to sleep mode because the clocks must restart. Think of it as a deeper sleep for the device, consuming less power.

- Standby Mode: Most of the chip is powered down, and register and SRAM contents are lost (apart from any small backup domain the device provides). Waking up behaves like a reset, so execution restarts from the reset vector. This is typically used when the device needs to be completely shut down and restarted later.

The choice of power-saving mode depends on the application’s power and speed requirements. Real-time applications usually require quicker wake-up times, favoring sleep mode, while applications with less stringent timing constraints may use the deeper sleep modes to conserve more power.
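At the core level, the choice between sleep and deep sleep comes down to one bit in the System Control Register. The sketch below computes the register value on the host; on a target you would write the result to `SCB->SCR` and then execute `__WFI()`. The helper name is hypothetical, and what "deep sleep" maps to (Stop, Standby, or something else) is decided by the vendor’s power controller.

```c
#include <stdint.h>

/* Bit positions in the System Control Register (SCB->SCR). */
#define SCR_SLEEPONEXIT (1u << 1)  /* re-enter sleep after each ISR returns */
#define SCR_SLEEPDEEP   (1u << 2)  /* WFI/WFE enters deep sleep, not sleep */

/* Return the SCR value to write before executing WFI.
   A non-zero `deep` selects the deeper, slower-to-wake state. */
uint32_t scr_for_sleep(uint32_t scr, int deep)
{
    if (deep) {
        return scr | SCR_SLEEPDEEP;
    }
    return scr & ~SCR_SLEEPDEEP;
}
```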

Q 7. Explain the concept of context switching in a real-time operating system (RTOS).

Context switching is a fundamental concept in Real-Time Operating Systems (RTOS). Imagine a chef working in a busy kitchen; the chef needs to switch between different tasks (preparing ingredients, cooking, serving) in a smooth and efficient manner. Context switching is the mechanism that enables the RTOS to manage these different tasks.

When a task needs to be switched, the RTOS performs the following steps:

- Save the context of the current task: The RTOS saves the task’s state, including registers, stack pointer, and program counter. It’s like saving the chef’s current recipe and progress.

- Load the context of the new task: The RTOS loads the state of the task that needs to be executed next. It’s like the chef starting a new dish.

- Resume the execution of the new task: The processor resumes execution with the new task. The chef starts working on the next task seamlessly.

Context switching allows the RTOS to efficiently manage multiple tasks, enhancing responsiveness and resource utilization. However, it introduces a small overhead, affecting overall performance. The efficiency of context switching is a key characteristic of a well-designed RTOS.
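The save and load steps can be modelled on a host with a simulated register file. This is only a sketch: on a Cortex-M the hardware already stacks R0-R3, R12, LR, PC, and xPSR, so a typical port saves R4-R11 and the stack pointer in software, usually inside the PendSV handler written in assembly. All type and function names here are invented for the example.

```c
#include <stdint.h>
#include <string.h>

/* Per-task saved state kept by the RTOS. */
typedef struct {
    uint32_t r4_r11[8];  /* registers the RTOS must save itself */
    uint32_t sp;         /* task stack pointer; the rest of the context is on that stack */
} task_ctx_t;

/* Stand-in for the real register file. */
typedef struct {
    uint32_t r4_r11[8];
    uint32_t sp;
} cpu_t;

void context_switch(cpu_t *cpu, task_ctx_t *from, const task_ctx_t *to)
{
    /* 1. Save the outgoing task's state. */
    memcpy(from->r4_r11, cpu->r4_r11, sizeof from->r4_r11);
    from->sp = cpu->sp;

    /* 2. Load the incoming task's state; execution resumes on its stack. */
    memcpy(cpu->r4_r11, to->r4_r11, sizeof cpu->r4_r11);
    cpu->sp = to->sp;
}
```

The cost of a switch is essentially these two copies plus the scheduler’s decision, which is the overhead mentioned above.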

Q 8. How do you handle memory allocation in a resource-constrained environment?

Memory allocation in resource-constrained environments like those found in Cortex-M microcontrollers requires a careful and efficient approach. The key is minimizing memory footprint and fragmentation. We avoid dynamic memory allocation (malloc, free) as much as possible because it’s prone to fragmentation and can lead to crashes. Instead, we favor static allocation where the memory is allocated at compile time. This ensures that memory is always available and avoids the overhead of runtime allocation.

For situations where dynamic allocation is unavoidable, we use memory pools or custom allocators. A memory pool pre-allocates a fixed-size region of memory and divides it into equal-sized blocks. Allocation and release then take constant time, and the pool cannot fragment the way a general-purpose heap can. Custom allocators allow fine-grained control over memory management, enabling optimizations tailored to the specific application’s needs. For instance, if we know the sizes of data structures beforehand, one pool per size class avoids searching entirely, while a first-fit or best-fit allocator remains an option for variable-sized requests.
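A minimal fixed-block pool might look like the sketch below. The block size, block count, and function names are assumptions chosen for illustration, and the code is not interrupt-safe as written; a real system would guard the free list with a critical section.

```c
#include <stddef.h>
#include <stdint.h>

#define BLOCK_SIZE  32u   /* bytes per block (assumed) */
#define BLOCK_COUNT 4u    /* blocks in the pool (assumed) */

/* A free block stores the pointer to the next free block in its own storage. */
typedef union block {
    union block *next;
    uint8_t payload[BLOCK_SIZE];
} block_t;

static block_t pool[BLOCK_COUNT];   /* statically allocated backing store */
static block_t *free_list;

/* Chain all blocks into the free list. */
void pool_init(void)
{
    for (size_t i = 0; i + 1 < BLOCK_COUNT; i++) {
        pool[i].next = &pool[i + 1];
    }
    pool[BLOCK_COUNT - 1].next = NULL;
    free_list = &pool[0];
}

/* Pop a block from the free list; returns NULL when the pool is exhausted. */
void *pool_alloc(void)
{
    block_t *b = free_list;
    if (b != NULL) {
        free_list = b->next;
    }
    return b;
}

/* Push a block back onto the free list. */
void pool_free(void *p)
{
    block_t *b = (block_t *)p;
    b->next = free_list;
    free_list = b;
}
```

Because every block has the same size, a freed block can always satisfy the next request, so the pool never fragments.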

Another critical strategy is careful data structure selection. Arrays are the most compact choice because they carry no per-element overhead, but they need one contiguous block sized in advance. Linked lists add a pointer to every node, yet they suit data with frequent insertions and deletions, especially when the nodes come from a memory pool. Regular code reviews and memory profiling tools are crucial for identifying and addressing memory leaks and inefficiencies.

Q 9. Describe the different types of memory used in a Cortex-M microcontroller (RAM, Flash, ROM).

Cortex-M microcontrollers typically use three main types of memory:

- RAM (Random Access Memory): This is volatile memory; its contents are lost when power is removed. It’s used for storing variables, the program stack, and temporary data. Access speed is fast, making it ideal for frequently accessed data. Different types exist, such as SRAM (Static RAM) and PSRAM (Pseudo-Static RAM). On-chip RAM in Cortex-M devices is SRAM, which is fast but costly per bit, while PSRAM is an external, DRAM-based memory that offers more capacity at lower cost and slower access.

- Flash Memory: This is non-volatile memory; data is retained even when power is off. It’s primarily used for storing the program code and constant data. Flash memory is slower than RAM but significantly cheaper per bit. It has limited write cycles, meaning it can only be rewritten a limited number of times. This needs to be considered in the design of firmware updates.

- ROM (Read-Only Memory): This is also non-volatile, but it is programmed at the factory and cannot be modified afterwards. While it’s less common in modern microcontrollers compared to flash, many devices include a small ROM holding a vendor bootloader or other code that should never be overwritten.

Understanding the characteristics and limitations of each memory type is essential for optimal program design and performance on Cortex-M devices. For example, large constant data such as lookup tables should be declared const so that it stays in flash rather than being copied into RAM, which conserves RAM resources.

Q 10. Explain the concept of memory protection units (MPU).

The Memory Protection Unit (MPU) is a crucial hardware component in many Cortex-M processors that enhances system security and stability. It allows for fine-grained control over memory access, preventing unauthorized code or data from accessing sensitive areas. This is vital for real-world applications, where different parts of the software need varying levels of access privileges. Imagine a system with both a user interface and a critical control loop – you would want to prevent the UI from accidentally corrupting the control loop’s memory.

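The MPU works by dividing the memory space into regions. Each region is assigned access permissions (read, write, execute, and privileged or unprivileged access), and an access violation triggers a MemManage fault (or a HardFault on cores that lack a separate MemManage handler). This enables features like: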

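- Detecting buffer and stack overflows: The MPU cannot stop code from overrunning a buffer inside a region it is allowed to write, but a no-access guard region placed just past a stack or buffer turns an overflow into an immediate fault instead of silent corruption.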

- Protecting critical data: Sensitive data can be placed in memory regions with restricted access, preventing unauthorized modification.

- Isolating different software components: Different parts of the software, such as an operating system kernel and user applications, can be placed in separate memory regions with appropriate access controls.

Configuring the MPU involves defining the memory regions, their size, and access permissions through specific registers within the microcontroller. Incorrect MPU configuration can lead to unpredictable behavior, so careful planning and testing are essential.
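One common source of MPU misconfiguration is the size and alignment rule. On the ARMv7-M MPU (Cortex-M3/M4/M7), a region must be a power of two of at least 32 bytes, encoded as a SIZE field where the region covers 2^(SIZE+1) bytes, and the base address must be aligned to the region size. The helper names below are hypothetical; ARMv8-M parts use a different base/limit scheme that this sketch does not cover.

```c
#include <stdint.h>
#include <stdbool.h>

/* ARMv7-M MPU: region size = 2^(SIZE+1) bytes, minimum 32 bytes.
   Returns the SIZE field, or -1 if `bytes` is not a valid region size. */
int mpu_size_field(uint32_t bytes)
{
    if (bytes < 32u || (bytes & (bytes - 1u)) != 0u) {
        return -1;                  /* too small or not a power of two */
    }
    int shift = 0;
    while ((bytes >> shift) != 1u) {
        shift++;                    /* shift ends up as log2(bytes) */
    }
    return shift - 1;
}

/* The base address must be aligned to the region size. */
bool mpu_base_ok(uint32_t base, uint32_t bytes)
{
    return mpu_size_field(bytes) >= 0 && (base & (bytes - 1u)) == 0u;
}
```

For instance, a 1 KB region encodes as SIZE = 9 and may start at 0x20000400 but not at 0x20000200.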

Q 11. How do you debug embedded systems using a debugger?

Debugging embedded systems relies on various tools and techniques. A debugger is a critical tool that allows you to examine the program’s execution in real-time, inspecting variables, memory contents, and registers. This lets you step through your code, set breakpoints (pause execution at specific points), and single-step through the instructions.

The debugging process usually involves:

- Connecting to the target: Using a debug interface like JTAG or SWD (explained in the next answer).

- Loading the program: Transferring your compiled code to the microcontroller’s flash memory.

- Setting breakpoints: Identifying points in your code where you want to pause execution to inspect variables or registers.

- Stepping through the code: Executing instructions one at a time to observe their effects.

- Inspecting variables and memory: Examining the values of variables and the contents of memory locations to identify errors.

- Using watchpoints: Setting data breakpoints that halt execution when a given memory address is read or written, which helps find the code that corrupts a variable.

IDEs like Keil MDK, IAR Embedded Workbench, and Segger Embedded Studio typically provide integrated debugging environments that simplify this process by offering graphical interfaces and powerful debugging features.

Q 12. Explain the use of JTAG and SWD interfaces for debugging.

JTAG (Joint Test Action Group) and SWD (Serial Wire Debug) are two common interfaces for debugging embedded systems, particularly Cortex-M microcontrollers. They provide a standardized way to connect a debugger to the microcontroller for communication and control.

JTAG: Uses four signals (TCK, TMS, TDI, TDO) plus an optional reset line (nTRST). It supports boundary scan, which allows testing of physical connections and components on the board, and it lets several devices be daisy-chained on one connector. The cost is a higher pin count on the microcontroller.

SWD: Uses only two wires (SWDIO and SWCLK), with an optional third pin (SWO) for trace output. It provides the same core debug access as JTAG with fewer pins, which is why it’s becoming increasingly popular, especially in resource-constrained environments and small packages.

The choice between JTAG and SWD often depends on the specific application requirements. JTAG is preferred when boundary scan or chaining multiple devices is required, while SWD is favored when minimizing pin count is crucial.

Both interfaces enable the debugger to access the microcontroller’s internal registers, memory, and control execution flow, making them essential for effective debugging of Cortex-M based systems. You would typically use a JTAG/SWD debugger connected to your development board to interface with the microcontroller.

Q 13. What is the significance of the SysTick timer?

The SysTick timer is a crucial component within the Cortex-M architecture. It’s a 24-bit programmable down-counter that generates interrupts at regular intervals, providing a precise timing mechanism often used for:

- System Tick: As its name suggests, it’s frequently used as the system’s heartbeat. The periodic interrupts enable precise timing of tasks within the Real-Time Operating System (RTOS) scheduler or even for simple non-RTOS applications.

- Delay functions: Accurate delays can be implemented by using the SysTick timer to count down to a specified number of ticks.

- Real-Time Clock (RTC) implementation: Although not ideally suited for long-term timekeeping, it can contribute to RTC functionality when coupled with other mechanisms.

- Other timing-related applications: It’s versatile and can be utilized in any application requiring precise timing of events.

Its programmability allows setting the reload value (the value the counter restarts from after reaching zero), enabling the generation of interrupts at various frequencies. Because the counter is 24 bits wide, the longest interval is 2^24 clock cycles. This flexibility makes it a core component in embedded system design, providing a simple yet effective method for handling timing events and scheduling tasks.
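The reload value follows directly from the clock frequency and the desired tick rate. The sketch below shows the arithmetic and the 24-bit limit check. The function name is hypothetical, and the 72 MHz clock in the usage note is just an example value.

```c
#include <stdint.h>
#include <stdbool.h>

#define SYSTICK_MAX_RELOAD 0x00FFFFFFu  /* the counter is 24 bits wide */

/* Compute the reload value for a tick rate of `tick_hz` from a `clk_hz` clock.
   The counter counts from reload down to 0, so N cycles need reload = N - 1. */
bool systick_reload(uint32_t clk_hz, uint32_t tick_hz, uint32_t *reload)
{
    if (tick_hz == 0u) {
        return false;
    }
    uint32_t cycles = clk_hz / tick_hz;
    if (cycles < 2u || cycles - 1u > SYSTICK_MAX_RELOAD) {
        return false;               /* period too short or too long for 24 bits */
    }
    *reload = cycles - 1u;
    return true;
}
```

With a 72 MHz clock and a 1 kHz tick, the reload value is 71999. A 1 Hz tick from the same clock is rejected because 72,000,000 cycles do not fit in 24 bits.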

Q 14. How do you implement a real-time clock (RTC) using a Cortex-M microcontroller?

Implementing a Real-Time Clock (RTC) on a Cortex-M microcontroller usually involves using a dedicated RTC peripheral if available, or leveraging the SysTick timer in conjunction with external components like a real-time clock chip (e.g., DS3231).

Method 1 (using a dedicated RTC peripheral): Many Cortex-M microcontrollers include dedicated RTC peripherals. These peripherals are designed for accurate timekeeping, often employing a crystal oscillator for precise timing. They maintain the time even when the microcontroller is in low-power modes. The implementation generally involves initializing the RTC with the current time, setting up interrupt handling for periodic time updates, and reading the time from the RTC registers whenever needed.

Method 2 (using SysTick and external RTC chip): If the microcontroller lacks a dedicated RTC, an external RTC chip can be used. The microcontroller periodically reads the time from this external chip and utilizes it. This requires additional hardware connections and interaction with the external chip’s interface (e.g., I2C or SPI).

Regardless of the chosen method, proper consideration for power consumption and time accuracy is vital. External RTC chips generally offer superior accuracy and low power consumption compared to relying solely on the SysTick timer, especially for long-term timekeeping.
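When reading an external chip such as the DS3231, note that its time registers hold binary-coded decimal (BCD) values, so each byte must be converted before use. The helpers below are a minimal sketch with illustrative names; the I2C transfer itself is omitted because it depends on the vendor’s driver.

```c
#include <stdint.h>

/* Convert a packed BCD byte (e.g. 0x59) to its binary value (59). */
uint8_t bcd_to_bin(uint8_t bcd)
{
    return (uint8_t)((bcd >> 4) * 10u + (bcd & 0x0Fu));
}

/* Convert a binary value in the range 0..99 to packed BCD. */
uint8_t bin_to_bcd(uint8_t bin)
{
    return (uint8_t)(((bin / 10u) << 4) | (bin % 10u));
}
```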

An example using a hypothetical RTC peripheral might involve:

    // Initialize RTC with current time
    RTC_Init(year, month, day, hour, minute, second);

    // Enable RTC interrupts
    RTC_EnableInterrupt();

    // Interrupt handler for RTC
    void RTC_ISR() {
        // Update time-related variables
    }

Q 15. Describe different communication protocols used with Cortex-M (UART, SPI, I2C, CAN).

Cortex-M microcontrollers support various communication protocols for interacting with peripherals and other devices. Let’s explore some key ones:

- UART (Universal Asynchronous Receiver/Transmitter): A simple, widely used serial communication protocol. Data is transmitted one bit at a time, asynchronously, meaning there’s no shared clock signal. It’s perfect for simple text-based communication, like debugging output to a computer’s serial port. Think of it like a single-lane road where data cars travel one after another without needing a precise schedule.

- SPI (Serial Peripheral Interface): A synchronous serial communication protocol that uses a shared clock signal for precise data transfer. It’s faster than UART and often used for communication with sensors, memory chips, and other peripherals that require high-speed data exchange. Imagine this as a multi-lane highway where data cars travel in a synchronized manner, making the transfer much quicker.

- I2C (Inter-Integrated Circuit): A two-wire (SDA and SCL) serial bus on which every device has an address. It supports multiple controllers and multiple targets on the same pair of wires, and it’s commonly used for connecting sensors and other low-speed devices in embedded systems. Think of it as a two-way street where different cars (devices) can take turns talking to each other.

- CAN (Controller Area Network): A robust protocol originally developed for automotive applications, characterized by its high fault tolerance and ability to handle real-time communication in noisy environments. Each message carries an identifier that doubles as its priority: when several nodes transmit at once, the message with the lowest identifier wins arbitration without being corrupted. This makes it suitable for safety-critical applications. Think of it as an intelligent traffic management system where messages with higher priority get preferential treatment on the road.

The choice of protocol depends on factors like data rate, distance, complexity, and cost. For example, UART might suffice for simple debugging, while SPI is suitable for high-speed sensor data acquisition, and CAN is the go-to choice for critical systems like automotive control units.
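CAN’s priority mechanism is easy to demonstrate in software. The sketch below simulates bitwise arbitration of 11-bit identifiers, assuming unique IDs and at most 32 contending nodes. The function name is made up for the example; on real hardware the CAN controller does this automatically.

```c
#include <stdint.h>
#include <stddef.h>

/* Simulate bitwise arbitration of 11-bit identifiers, MSB first.
   A 0 bit is dominant: a node sending 1 while the bus reads 0 backs off.
   Returns the index of the winning node, or n if n is 0 or above 32. */
size_t can_arbitrate(const uint16_t *ids, size_t n)
{
    if (n == 0u || n > 32u) {
        return n;
    }
    uint32_t contending = (n == 32u) ? 0xFFFFFFFFu : ((1u << n) - 1u);

    for (int bit = 10; bit >= 0; bit--) {
        /* The bus level is the wired-AND of all contenders' bits. */
        unsigned bus = 1u;
        for (size_t i = 0; i < n; i++) {
            if ((contending >> i) & 1u) {
                bus &= (unsigned)(ids[i] >> bit) & 1u;
            }
        }
        /* Nodes that sent recessive (1) but see dominant (0) drop out. */
        for (size_t i = 0; i < n; i++) {
            if (((unsigned)(ids[i] >> bit) & 1u) != bus) {
                contending &= ~(1u << i);
            }
        }
    }
    for (size_t i = 0; i < n; i++) {
        if ((contending >> i) & 1u) {
            return i;
        }
    }
    return n;
}
```

Given the identifiers 0x65D, 0x123, and 0x124, the node sending 0x123 wins because it is the numerically lowest.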

Q 16. Explain DMA and its benefits in embedded systems.

DMA (Direct Memory Access) is a hardware feature that allows data transfer between peripherals and memory without the CPU’s constant intervention. Imagine the CPU as a busy manager – it delegates the data transfer task to a specialized team (DMA controller) freeing the manager to focus on more critical tasks.

Benefits in Embedded Systems:

- Reduced CPU Load: The CPU doesn’t need to actively manage data transfer, saving valuable processing cycles for other tasks.

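- Improved Performance: Transfers run in parallel with CPU execution and avoid per-byte interrupt overhead, which matters most for large data blocks.

- Real-Time Capabilities: Because transfers are paced by hardware rather than by interrupt latency, data is less likely to be lost when the CPU is busy. DMA still shares the bus with the CPU, so transfer timing is predictable only within the limits of bus arbitration.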

- Power Efficiency: Reduced CPU activity translates to lower power consumption, extending battery life in portable devices.

Example: In a system acquiring data from an ADC (Analog-to-Digital Converter), DMA can transfer each converted sample directly from the ADC’s data register to a buffer in system memory without the CPU needing to handle each sample individually. This allows the CPU to focus on processing the acquired data or other critical tasks.

Q 17. How do you handle interrupts in a Cortex-M microcontroller?

Interrupt handling in Cortex-M microcontrollers is a critical aspect of real-time operation. It involves a well-defined process that ensures timely response to external events.

When an interrupt occurs (e.g., a button press, sensor data ready), the currently running code is paused, and the processor jumps to a specific interrupt service routine (ISR) associated with that interrupt source. The ISR handles the event, and then control returns to the original code.

Key Concepts:

- Interrupt Vector Table: Each interrupt has an associated vector in a table that the processor uses to locate the ISR.

- Nested Interrupts: Cortex-M supports nested interrupts, allowing higher-priority interrupts to interrupt lower-priority ones.

- Interrupt Priority Levels: Interrupts can be assigned priorities to manage the order of execution in case of multiple simultaneous interrupts.

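- Interrupt Masking: Interrupts can be disabled globally (PRIMASK), below a chosen priority level (BASEPRI, not available on Cortex-M0/M0+), or individually through the NVIC enable bits to prevent unwanted interruptions.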

- Context Saving and Restoration: The processor automatically saves the context of the interrupted task before executing the ISR, ensuring that the task resumes execution correctly after the interrupt is handled.

Example: In a system with a timer interrupt, the ISR could increment a counter, update a display, or trigger a specific action at regular intervals. Proper interrupt handling ensures that these actions occur predictably and efficiently without impacting other processes.
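A common way to keep ISRs short is to have the ISR store incoming data in a ring buffer and let the main loop do the processing. The sketch below is a single-producer, single-consumer buffer. The buffer size and function names are assumptions, and the "ISR" here is an ordinary function so the logic can be exercised on a host.

```c
#include <stdint.h>
#include <stdbool.h>

#define RB_SIZE 8u   /* must be a power of two (assumed size) */

static volatile uint8_t  rb_data[RB_SIZE];
static volatile uint32_t rb_head;   /* written only by the ISR */
static volatile uint32_t rb_tail;   /* written only by the main loop */

/* Called from a (hypothetical) receive ISR: store the byte and return. */
bool rb_put_from_isr(uint8_t byte)
{
    if (rb_head - rb_tail == RB_SIZE) {
        return false;                       /* full: drop the byte */
    }
    rb_data[rb_head & (RB_SIZE - 1u)] = byte;
    rb_head = rb_head + 1u;
    return true;
}

/* Called from the main loop, where the slower processing happens. */
bool rb_get(uint8_t *out)
{
    if (rb_head == rb_tail) {
        return false;                       /* empty */
    }
    *out = rb_data[rb_tail & (RB_SIZE - 1u)];
    rb_tail = rb_tail + 1u;
    return true;
}
```

Because each index has exactly one writer, this pattern needs no interrupt masking on a single-core Cortex-M as long as there is one producer and one consumer.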

Q 18. What is a critical section, and how do you protect it?

A critical section is a part of the code where shared resources (like memory locations or peripherals) are accessed. It’s crucial to prevent multiple tasks from accessing the critical section simultaneously because this can lead to data corruption or system instability. Imagine a critical section as a single-occupancy restroom; only one person can use it at a time.

Protection Mechanisms:

- Disabling Interrupts: The simplest method, but can only be used for very short critical sections because it can affect real-time performance. Think of this as putting a ‘Do Not Disturb’ sign on the restroom door, preventing others from entering.

- Mutexes (Mutual Exclusion): A more sophisticated approach; a mutex is a synchronization primitive that acts like a lock. Only one task can acquire the mutex at a time. Other tasks trying to access the critical section will wait until the mutex is released. This is like a smart restroom lock that allows only one person to enter and locks automatically when someone is inside.

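- Atomic Operations: Some updates can be made indivisible in hardware. Cortex-M3 and later cores provide exclusive load/store instructions (LDREX/STREX) that let a read-modify-write be retried if it was interrupted, and compilers expose them through C11 atomics. This works for single variables such as counters and flags, not for multi-step updates. It is like a door that locks itself for the instant someone passes through.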

The best method depends on the specific context. Disabling interrupts is suitable only for short critical sections, while mutexes provide more robust and flexible protection for longer sections.
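When disabling interrupts, it is safer to save and restore the previous mask state than to unconditionally re-enable interrupts, so that critical sections can nest. The sketch below models this on a host with a variable standing in for the PRIMASK register. The function names are illustrative; on a target, the CMSIS intrinsics `__get_PRIMASK()`, `__disable_irq()`, and `__set_PRIMASK()` would take the place of the variable accesses.

```c
#include <stdint.h>

/* Host-side stand-in for the PRIMASK register: 1 = interrupts masked. */
static uint32_t primask;

/* Mask interrupts and return the previous state so calls can nest. */
uint32_t critical_enter(void)
{
    uint32_t previous = primask;   /* on target: __get_PRIMASK() */
    primask = 1u;                  /* on target: __disable_irq() */
    return previous;
}

/* Restore the state saved by the matching critical_enter(). */
void critical_exit(uint32_t previous)
{
    primask = previous;            /* on target: __set_PRIMASK(previous) */
}
```

An inner `critical_exit` leaves interrupts masked if they were already masked on entry, so only the outermost exit actually re-enables them.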

Q 19. Explain the concept of semaphores and mutexes.

Semaphores and mutexes are synchronization primitives used for managing concurrent access to shared resources. They act as signaling mechanisms to coordinate tasks and prevent race conditions.

Semaphores: A semaphore is a counter that can be incremented (signaled) and decremented (waited on). It’s used to control access to a resource that can be shared among multiple tasks (e.g., a printer, a buffer). Imagine a semaphore as a parking lot with a limited number of spaces. A car (task) can enter only if a space (resource) is available. When a car leaves, it frees up a space, allowing another car to enter.

Mutexes (Mutual Exclusion): A mutex behaves like a binary semaphore with ownership: only the task that acquired it may release it. Many RTOS implementations also add priority inheritance to mutexes to limit priority inversion. It’s used to protect critical sections. Only one task can hold the mutex at a time, ensuring exclusive access to the shared resource. Imagine a mutex as a key to a single room. Only one person can have the key and enter the room at a time.

Key Differences: The main difference lies in their usage. Semaphores can count the number of available resources, while mutexes only manage exclusive access. Mutexes are typically used for protecting critical sections, whereas semaphores are better suited for controlling access to multiple instances of a resource.
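The difference shows up clearly in a toy model. The sketch below implements non-blocking versions of both primitives. All names are invented for illustration, and a real RTOS would block the calling task instead of returning false and would run these updates inside a kernel critical section.

```c
#include <stdint.h>
#include <stdbool.h>

/* Counting semaphore: tracks how many units of a resource are free. */
typedef struct {
    uint32_t count;
    uint32_t max;
} counting_sem;

bool csem_take(counting_sem *s)      /* "wait": claim one unit */
{
    if (s->count == 0u) {
        return false;                /* a real RTOS would block here */
    }
    s->count--;
    return true;
}

bool csem_give(counting_sem *s)      /* "signal": any task or ISR may give */
{
    if (s->count == s->max) {
        return false;
    }
    s->count++;
    return true;
}

/* Mutex: binary, and owned by the task that locked it. */
#define NO_OWNER (-1)

typedef struct {
    int owner;
} owned_mutex;

bool mutex_lock(owned_mutex *m, int task_id)
{
    if (m->owner != NO_OWNER) {
        return false;
    }
    m->owner = task_id;
    return true;
}

bool mutex_unlock(owned_mutex *m, int task_id)
{
    if (m->owner != task_id) {
        return false;                /* only the owner may release */
    }
    m->owner = NO_OWNER;
    return true;
}
```

Note that `csem_give` accepts a call from anyone, which is what makes semaphores suitable for signalling from an ISR to a task, while `mutex_unlock` rejects a release from a task that does not hold the lock.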

Q 20. How do you implement task scheduling in an RTOS?

Task scheduling in an RTOS (Real-Time Operating System) involves assigning tasks to the CPU core according to a scheduling algorithm. The RTOS scheduler decides which task gets to run and when, ensuring efficient utilization of the processor and meeting real-time constraints.

Common Scheduling Algorithms:

- Round-Robin: Each task gets a slice of time (time quantum) to run, and the scheduler switches between tasks in a cyclical manner. Simple to implement and fair, but it ignores urgency, so a time-critical task may have to wait its turn behind less important ones.

- Priority-Based: Tasks are assigned priorities, and the scheduler always runs the highest-priority ready task. This is excellent for real-time systems where some tasks have strict deadlines.

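- Rate Monotonic: A specific type of fixed-priority scheduling where tasks are prioritized by their execution frequency (shortest period gets highest priority). For n independent periodic tasks, it guarantees that all deadlines are met when total CPU utilization does not exceed n(2^(1/n) - 1), which approaches about 69% as n grows.

- Earliest Deadline First (EDF): The scheduler selects the task with the earliest deadline to execute. Priorities are therefore dynamic. For independent periodic tasks on a single core with deadlines equal to their periods, all deadlines are met as long as total utilization does not exceed 100%.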

The choice of algorithm depends on the application’s requirements. For instance, priority-based scheduling is often used in hard real-time systems where meeting deadlines is critical, whereas round-robin might be more suitable for less time-critical applications.
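The core of a scheduler is the function that picks the next task. The sketch below shows two such selection rules over the same task table. The structure, field names, and the "higher value means more important" convention (as used by FreeRTOS) are assumptions for this example, and tick wraparound is ignored.

```c
#include <stdint.h>
#include <stdbool.h>
#include <stddef.h>

typedef struct {
    bool     ready;
    uint8_t  priority;   /* higher value = more important (assumed convention) */
    uint32_t deadline;   /* absolute deadline in ticks */
} task_t;

/* Fixed-priority: run the ready task with the highest priority.
   Returns -1 when nothing is ready (run the idle task). */
int pick_by_priority(const task_t *t, size_t n)
{
    int best = -1;
    for (size_t i = 0; i < n; i++) {
        if (t[i].ready && (best < 0 || t[i].priority > t[best].priority)) {
            best = (int)i;
        }
    }
    return best;
}

/* EDF: run the ready task whose deadline comes first. */
int pick_by_deadline(const task_t *t, size_t n)
{
    int best = -1;
    for (size_t i = 0; i < n; i++) {
        if (t[i].ready && (best < 0 || t[i].deadline < t[best].deadline)) {
            best = (int)i;
        }
    }
    return best;
}
```

The two rules can disagree: a low-priority task with an imminent deadline is chosen by EDF but passed over by a fixed-priority scheduler.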

Q 21. What are the advantages and disadvantages of using an RTOS?

Using an RTOS in embedded systems offers several advantages but also presents some drawbacks.

Advantages:

- Modular Design: Easier to develop, debug, and maintain complex embedded systems through modular task design.

- Real-Time Capabilities: RTOSes provide mechanisms for ensuring tasks meet real-time requirements.

- Resource Management: Efficient resource management including memory and peripherals.

- Multitasking: Multiple tasks can run concurrently.

- Deterministic Behavior: Predictable task execution makes real-time applications more reliable.

Disadvantages:

- Increased Complexity: RTOSes add a layer of complexity to the system, requiring developers to learn and manage the OS interface.

- Memory Overhead: RTOSes consume memory for their own operations and data structures.

- Performance Overhead: Context switching and other OS operations have a performance impact.

- Real-Time Guarantees Not Absolute: While RTOSes aim for real-time capabilities, unexpected events or poorly designed tasks might still cause deadline misses.

The decision to use an RTOS depends on project complexity, real-time requirements, and resource constraints. For simple applications, a simpler approach without an RTOS might be sufficient, but for complex and demanding systems, the benefits of using an RTOS typically outweigh the drawbacks.

Q 22. Explain the concept of FreeRTOS or another common RTOS.

FreeRTOS is a real-time operating system (RTOS) specifically designed for embedded systems with limited resources, like those based on ARM Cortex-M microcontrollers. Unlike a general-purpose OS like Windows or macOS, an RTOS prioritizes deterministic timing and predictable behavior. Imagine a system controlling a robotic arm; you need precise control over each movement, and an RTOS is designed to provide that predictability.

At its core, FreeRTOS manages tasks (or threads) concurrently. Each task runs independently and has its own execution stack. The RTOS scheduler decides which task runs next based on priorities, allowing for efficient management of multiple processes simultaneously. For example, one task might handle sensor readings, another might manage motor control, and a third might communicate over a network. The RTOS ensures these tasks operate smoothly without interfering with one another.

Key features include:

- Task scheduling: Prioritized preemptive scheduling ensures timely execution of critical tasks.

- Inter-task communication: Mechanisms like queues, semaphores, and mutexes facilitate communication and synchronization between tasks.

- Memory management: FreeRTOS ships several selectable heap implementations (heap_1 through heap_5) behind pvPortMalloc() and vPortFree(), and it also supports fully static allocation, which is often preferred in resource-constrained environments.

- Timer management: Provides timers for precise timing control, vital for real-time applications.

In a practical application, I used FreeRTOS to manage data acquisition from multiple sensors on a weather station. Each sensor had its own task, which collected data at specific intervals and then sent the data through a queue to a central task for logging and transmission. FreeRTOS’s task scheduling and inter-task communication mechanisms made this multitasking scenario manageable and reliable.
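To make the idea of prioritized scheduling concrete, here is a minimal host-side sketch of how a fixed-priority scheduler might pick the next task to run. The `task_t` record and `pick_next_task()` are hypothetical names invented for illustration; this is not FreeRTOS's internal implementation, only a model of the selection rule.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical task record for illustration; not FreeRTOS's internal TCB. */
typedef struct {
    const char *name;
    uint8_t     priority;  /* higher number = higher priority, as in FreeRTOS */
    bool        ready;     /* false while the task is blocked or delayed */
} task_t;

/* Return the index of the highest-priority ready task, or -1 if none is ready.
 * Ties go to the lowest index; a real kernel would time-slice between them. */
int pick_next_task(const task_t *tasks, size_t count)
{
    int best = -1;

    for (size_t i = 0; i < count; i++) {
        if (!tasks[i].ready) {
            continue;  /* blocked tasks are never selected */
        }
        if (best < 0 || tasks[i].priority > tasks[(size_t)best].priority) {
            best = (int)i;
        }
    }
    return best;
}
```

With a logger at priority 1, a motor task at priority 3, and a sensor task at priority 2, the motor task is chosen whenever it is ready; while it is blocked, the sensor task runs instead. Preemption amounts to re-running this selection whenever a task becomes ready.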

Q 23. How do you handle peripheral initialization in a Cortex-M microcontroller?

Peripheral initialization in a Cortex-M microcontroller involves configuring the microcontroller’s various peripherals (like UART, SPI, I2C, ADC, timers, etc.) to operate according to the application’s needs. This typically includes setting up clock sources, selecting operating modes, configuring interrupts, and initializing data registers.

The process usually follows these steps:

- Enable the clock: Most peripherals require a clock signal to operate, and it is usually gated off after reset. Enabling it typically means setting a bit in a clock control register. For instance, on STM32 parts the USART1 clock is enabled through a bit in one of the RCC peripheral clock enable registers.

- Configure the peripheral: This step involves setting up the peripheral’s specific registers according to the desired operation mode and settings. For example, for a UART, this would include setting the baud rate, data bits, parity, and stop bits. Specific registers control these parameters. For example, the baud rate is usually configured in a baud rate register.

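- Enable interrupts (optional): Many peripherals can generate interrupts when specific events occur (e.g., data reception, transmission completion). Enabling interrupts allows for efficient asynchronous operation. You need to enable the event in the peripheral’s interrupt enable register, set the interrupt’s priority, and enable it in the NVIC (for example with the CMSIS functions NVIC_SetPriority() and NVIC_EnableIRQ()). The matching handler must also be present in the vector table.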

- Check for errors: It’s good practice to check for errors after initialization. This might involve checking status registers for any errors encountered during initialization.

Example (conceptual C code):

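    void UART1_Init(uint32_t baudrate)
    {
        // Enable clock for UART1
        RCC->APB2ENR |= RCC_APB2ENR_USART1EN;

        // Configure UART1 registers
        USART1->BRR = calculateBaudRate(baudrate);
        // ... other configurations ...

        // Enable UART1 interrupts (if needed)
        USART1->CR1 |= USART_CR1_RXNEIE;

        // Enable UART1 together with its transmitter and receiver
        USART1->CR1 |= USART_CR1_UE | USART_CR1_TE | USART_CR1_RE;
    }

The register and bit names here follow STM32 conventions, and calculateBaudRate() stands in for a device-specific helper. The specifics vary greatly depending on the exact microcontroller and peripheral involved, but the overall process remains consistent.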
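As a rough illustration of what a baud rate helper might compute, the sketch below assumes an STM32-style USART running with 16x oversampling, where the divider written to the baud rate register is the peripheral clock divided by the desired baud rate. The function names and the `pclk_hz` parameter are assumptions made for this example; always check the reference manual for the actual formula on your device.

```c
#include <stdint.h>

/* Divider for an STM32-style USART using 16x oversampling (assumption):
 * BRR = pclk_hz / baud, rounded to the nearest integer.
 * Returns 0 if baud is 0 so the caller can reject the configuration. */
uint32_t usart_brr_from_baud(uint32_t pclk_hz, uint32_t baud)
{
    if (baud == 0u) {
        return 0u;
    }
    /* 64-bit intermediate avoids overflow when adding the rounding term. */
    return (uint32_t)(((uint64_t)pclk_hz + baud / 2u) / baud);
}

/* Baud rate actually produced by a given divider, useful for checking
 * how far the achievable rate is from the requested one. */
uint32_t usart_actual_baud(uint32_t pclk_hz, uint32_t brr)
{
    return (brr == 0u) ? 0u : pclk_hz / brr;
}
```

For a 72 MHz peripheral clock and 115200 baud this gives a divider of 625, which reproduces 115200 baud exactly. At 16 MHz and 9600 baud the divider rounds to 1667, leaving a small error that is normally well within UART tolerance.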

Q 24. Describe the different clock sources for a Cortex-M microcontroller.

Cortex-M microcontrollers offer a variety of clock sources, providing flexibility in power management and frequency scaling. The clock tree is defined by the chip vendor rather than by the Cortex-M core itself, so names and availability vary; the names below follow STM32 conventions. These sources typically include:

- Internal High-Speed Oscillator (HSI): A built-in RC oscillator that needs no external components and starts quickly, so it is often the default clock after reset. It is less accurate than a crystal.

- External Crystal Oscillator: An external crystal or ceramic resonator driven by the on-chip oscillator circuit. It provides a more accurate and stable frequency than the HSI, which matters for timing-sensitive interfaces.

- External Clock Source: A ready-made clock signal fed into the oscillator input pin from another device, such as a clock generator or another microcontroller. On STM32 parts, both this option and the crystal option fall under the HSE (high-speed external) clock, with the external signal used in bypass mode.

- Low-Speed Oscillator (LSI): A low-frequency internal RC oscillator, typically used for the watchdog and for real-time clock (RTC) functionality where accuracy is not critical. Many devices also accept a 32.768 kHz external crystal (LSE) for accurate timekeeping.

- Ultra-Low-Power Oscillator (ULSI): Available only on some low-power families, this is an extremely low-power oscillator intended for deep-sleep modes where power consumption is critical.

The microcontroller’s clock system often includes a PLL (Phase-Locked Loop) to multiply the frequency from the chosen source, providing a higher system clock for faster processing. The system clock can be configured to use any of these sources, or a combination of them (e.g., HSE driving the PLL, which in turn drives the system clock).

Consider a scenario where an application requires low-power operation during idle periods and high-speed operation during active processing. You would configure the microcontroller to use the low-power LSI for the RTC during sleep mode and switch to HSE/PLL for high-speed operation.
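The PLL arithmetic can be sketched as a small calculation. The example below assumes an STM32F4-style PLL with an input divider M, a multiplier N, and an output divider P; other vendors use different divider chains, so the parameter names and the formula are assumptions for illustration only.

```c
#include <stdint.h>

/* PLL output frequency for an STM32F4-style PLL (assumption):
 *   f_out = f_in * N / M / P
 * Returns 0 for an invalid divider so the caller can reject the setup. */
uint32_t pll_output_hz(uint32_t input_hz, uint32_t m, uint32_t n, uint32_t p)
{
    if (m == 0u || p == 0u) {
        return 0u;
    }
    /* 64-bit intermediate so input_hz * n cannot overflow. */
    uint64_t vco_hz = ((uint64_t)input_hz * n) / m;
    return (uint32_t)(vco_hz / p);
}
```

An 8 MHz external crystal with M = 8, N = 336, and P = 2 yields a 168 MHz system clock, while a 16 MHz internal oscillator with M = 16, N = 336, and P = 4 yields 84 MHz. Real devices also constrain the intermediate frequencies, which this sketch does not check.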

Q 25. Explain the concept of clock gating.

Clock gating is a power-saving technique where the clock signal to a peripheral is turned off when the peripheral is not in use. Think of it like turning off a light switch when you leave a room. This significantly reduces the power consumed by that peripheral, because its logic stops switching; only static leakage current remains.

In Cortex-M microcontrollers, clock gating is typically controlled through vendor-defined registers. Each peripheral module has an associated clock enable bit in a register. Setting this bit to ‘1’ enables the clock to that peripheral, allowing it to operate; setting it to ‘0’ stops the clock, which halts the peripheral without removing its power supply. This granular control permits energy optimization based on the operating state of the system.

For example, if your application involves infrequent communication over a UART, you can enable the UART clock only when communication is required, and disable it during idle periods, thereby reducing power consumption when the UART isn’t actively transmitting or receiving data.

Modern Cortex-M devices often have numerous clock gating options, enabling fine-grained control over power consumption.
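The enable-bit mechanism can be sketched on a host machine by standing in a plain variable for the clock enable register. Everything named here (`sim_clock_enable_reg`, `SIM_UART_CLK_EN`, and the helper functions) is hypothetical; on real hardware the register and bit definitions come from the vendor's device header.

```c
#include <stdbool.h>
#include <stdint.h>

/* Stand-in for a vendor clock-enable register. On real hardware this would
 * be a memory-mapped register; here it is a plain variable so the logic can
 * run on a host. */
volatile uint32_t sim_clock_enable_reg = 0u;

#define SIM_UART_CLK_EN (1u << 4) /* hypothetical bit position */

/* Set the enable bit(s): the peripheral receives its clock. */
void periph_clock_enable(uint32_t mask)
{
    sim_clock_enable_reg |= mask;
}

/* Clear the enable bit(s): the peripheral clock is gated off. */
void periph_clock_disable(uint32_t mask)
{
    sim_clock_enable_reg &= ~mask;
}

/* True only if every bit in mask is currently enabled. */
bool periph_clock_is_enabled(uint32_t mask)
{
    return (sim_clock_enable_reg & mask) == mask;
}
```

A driver would call `periph_clock_enable(SIM_UART_CLK_EN)` before a transfer and `periph_clock_disable(SIM_UART_CLK_EN)` afterwards. On real hardware, wait for the transmission-complete flag before gating the clock, or the last byte may be cut short.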

Q 26. How do you perform low-power design optimization in embedded systems?

Low-power design optimization in embedded systems is crucial, especially for battery-powered devices. It involves a multi-pronged approach targeting various aspects of the system.

Strategies include:

- Clock gating (as discussed above): Disabling clocks to peripherals when not in use.

- Sleep modes: Utilizing the low-power modes available in Cortex-M microcontrollers, typically entered with the WFI or WFE instruction. These modes reduce power consumption by stopping the CPU clock and, in deeper modes, by stopping peripheral clocks and oscillators or lowering the core voltage. Deeper modes save more power but take longer to wake from, so the choice depends on the application’s requirements (e.g., the need for real-time responsiveness).

- Power-efficient peripherals: Choosing peripherals with low power consumption. Many manufacturers offer peripherals optimized for low-power operation.

- Code optimization: Writing efficient code that minimizes CPU usage. This can involve using optimized algorithms, avoiding unnecessary calculations, and reducing loop iterations.

- Voltage scaling: Lowering the operating voltage of the microcontroller when possible, thereby reducing power consumption. However, care must be taken to ensure system stability at lower voltages.

- Component selection: Choosing low-power components throughout the system, not just the microcontroller.

In one project, I implemented a system that switched between different sleep modes based on sensor activity. When no sensor events occurred, the system entered a deep sleep mode, consuming minimal power. When a sensor event was detected, the system woke up from sleep mode, processed the event, and then returned to the low-power state.
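The payoff of duty-cycling between active and sleep states can be estimated with simple arithmetic. The sketch below uses integer microamps and milliseconds; the function names and all figures in the example are illustrative assumptions, not measurements from any particular device.

```c
#include <stdint.h>

/* Average current in microamps over one wake/sleep cycle:
 *   (I_active * t_active + I_sleep * t_sleep) / (t_active + t_sleep) */
uint32_t average_current_ua(uint32_t active_ua, uint32_t active_ms,
                            uint32_t sleep_ua, uint32_t sleep_ms)
{
    uint64_t period_ms = (uint64_t)active_ms + sleep_ms;
    if (period_ms == 0u) {
        return 0u;
    }
    uint64_t charge = (uint64_t)active_ua * active_ms
                    + (uint64_t)sleep_ua * sleep_ms;
    return (uint32_t)(charge / period_ms);
}

/* Rough battery life in hours, ignoring self-discharge, regulator losses,
 * and capacity derating. Returns 0 if the average current is 0. */
uint32_t battery_life_hours(uint32_t capacity_mah, uint32_t average_ua)
{
    if (average_ua == 0u) {
        return 0u;
    }
    return (uint32_t)(((uint64_t)capacity_mah * 1000u) / average_ua);
}
```

Suppose a device draws 10 mA for 10 ms each second and 5 µA while asleep. The average is about 104 µA, so a 200 mAh cell lasts roughly 1923 hours instead of the 20 hours it would last if the CPU stayed active. This is why time spent in deep sleep usually dominates the power budget.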

Q 27. How do you ensure code reliability and robustness in embedded systems?

Ensuring code reliability and robustness in embedded systems is paramount, as failures can have significant consequences. It necessitates a rigorous approach encompassing several key practices:

- Defensive programming: Writing code that anticipates and handles potential errors gracefully. This includes using error checks, bounds checking, and robust input validation.

- Static analysis tools: Utilizing static analysis tools to detect potential issues in the code before runtime, such as buffer overflows, memory leaks, and undefined behavior.

- Code reviews: Conducting thorough code reviews by peers to identify errors and improve code quality. A fresh pair of eyes often spots things missed by the original author.

- Unit testing: Writing comprehensive unit tests to verify the correct functionality of individual modules.

- Integration testing: Testing the interaction between different modules and components to identify integration issues.

- System testing: Testing the entire system under real-world operating conditions to verify its overall reliability.

- Memory management: Careful handling of memory allocation and deallocation to avoid memory leaks and other memory-related errors. Using memory debuggers is invaluable in this respect.

- Error handling and logging: Implementing robust error handling mechanisms and logging to track down and diagnose issues.

In the case of critical systems, formal verification techniques might be employed to mathematically prove the correctness of the code. This adds considerable complexity, but ensures a higher level of certainty about the code’s behavior.
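As a small illustration of defensive programming, the sketch below validates its arguments and checks bounds before copying a payload into a fixed-size buffer, returning an explicit status instead of failing silently. The `buf_status_t` type and `copy_payload()` function are hypothetical names chosen for this example.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Explicit status codes let the caller decide how to handle each failure. */
typedef enum {
    BUF_OK = 0,
    BUF_ERR_NULL,     /* a required pointer was NULL */
    BUF_ERR_TOO_LONG  /* payload does not fit in the destination */
} buf_status_t;

/* Copy len bytes from src into dst, refusing to write past dst_size. */
buf_status_t copy_payload(uint8_t *dst, size_t dst_size,
                          const uint8_t *src, size_t len)
{
    if (dst == NULL || src == NULL) {
        return BUF_ERR_NULL;
    }
    if (len > dst_size) {
        return BUF_ERR_TOO_LONG;  /* reject instead of overflowing */
    }
    memcpy(dst, src, len);
    return BUF_OK;
}
```

A caller receiving a 6-byte frame for a 4-byte buffer gets `BUF_ERR_TOO_LONG` and can log or drop the frame, rather than corrupting whatever sits next to the buffer in memory.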

Q 28. Explain your experience with any relevant development tools (IDE, compiler, debugger).

My primary development environment for ARM Cortex-M projects is based on the Keil MDK IDE (Microcontroller Development Kit). Keil MDK provides a comprehensive set of tools, including a powerful C/C++ compiler, an integrated debugger, and project management capabilities. I’m proficient in using the debugger to set breakpoints, single-step through code, inspect variables, and analyze memory usage. This is invaluable for debugging complex embedded systems. I have used the Keil debugger extensively to troubleshoot issues relating to real-time constraints and resource management.

I also have experience with other IDEs and compilers such as IAR Embedded Workbench, which offers a similar feature set but with a slightly different interface. The choice of IDE often depends on project needs and team preferences. I have also used command-line toolchains like GCC (the GNU Compiler Collection, typically the arm-none-eabi cross-compiler) for some ARM Cortex-M projects, especially when developing on Linux hosts. Understanding both IDE-based and command-line development tools provides great flexibility.

Beyond the IDE and compiler, I’m comfortable using various tools for analyzing performance, memory usage, and power consumption, which allows for iterative improvements to the embedded software.

Key Topics to Learn for ARM Cortex-M Architecture Interview

- Core Architecture: Understand the register set, memory map, and bus architecture. Focus on the differences between various Cortex-M families (e.g., M0+, M3, M4, M7).

- Interrupt Handling: Master interrupt vector table, priority levels, nested interrupts, and exception handling. Practice designing robust interrupt service routines (ISRs).

- Memory Management: Learn about different memory regions (flash, RAM, peripherals), memory access control, and techniques for optimizing memory usage in embedded systems.

- Peripheral Access: Familiarize yourself with common peripherals like GPIO, UART, SPI, I2C, and timers. Understand how to configure and use these peripherals effectively.

- Real-Time Operation (RTOS): Gain understanding of real-time operating systems (RTOS) concepts and their application in Cortex-M based systems. Explore common RTOS APIs and scheduling algorithms.

- Low-Power Techniques: Learn strategies for optimizing power consumption, including sleep modes, clock gating, and power-saving peripherals. This is crucial for battery-powered applications.

- Debugging and Troubleshooting: Develop strong debugging skills using tools like JTAG and SWD debug probes, and understand common debugging techniques for embedded systems.

- Practical Application: Consider projects involving sensor integration, data acquisition, motor control, or communication protocols. These hands-on experiences significantly enhance your understanding.

- Advanced Topics (Optional): Explore floating-point units (FPU), DSP instructions, and memory protection units (MPU) depending on your target role and experience level.

Next Steps

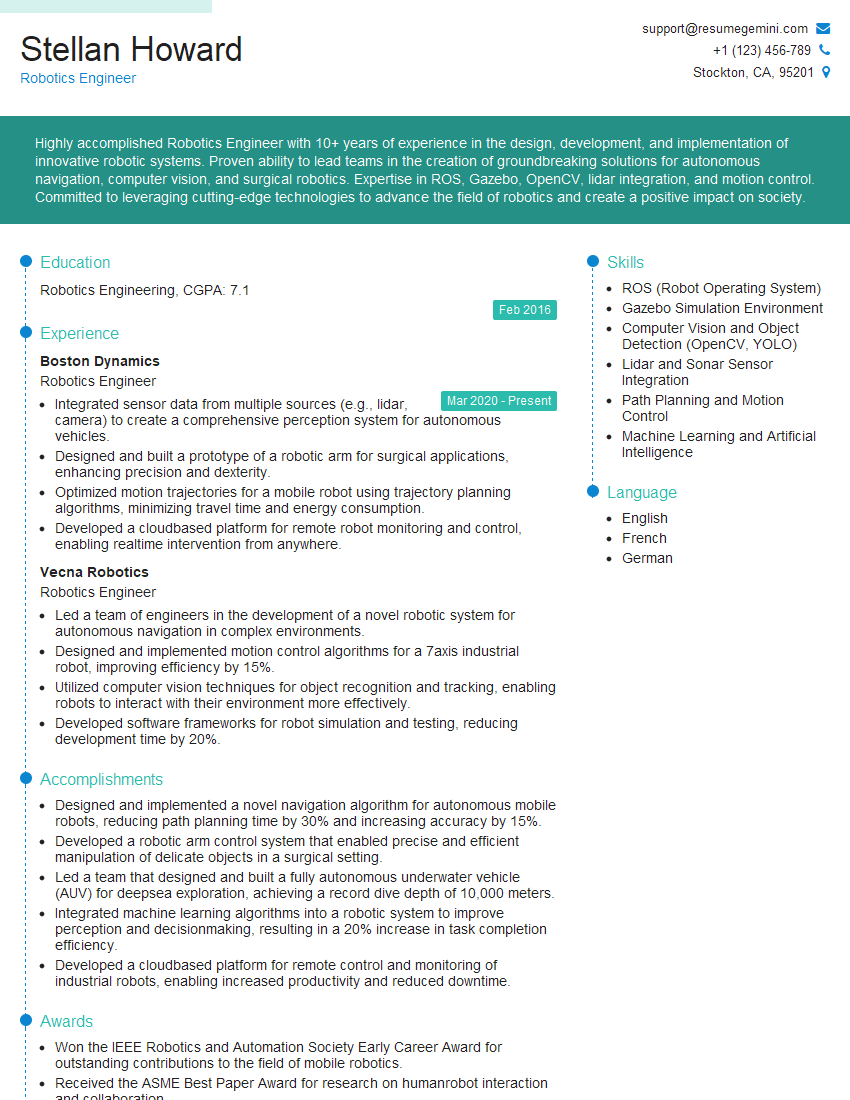

Mastering ARM Cortex-M architecture opens doors to exciting career opportunities in embedded systems, IoT, and related fields. A strong understanding of this architecture is highly valued by employers. To significantly boost your job prospects, create an ATS-friendly resume that highlights your skills and experience effectively. ResumeGemini is a trusted resource that can help you build a professional and impactful resume tailored to your specific skills. We offer examples of resumes tailored to ARM Cortex-M Architecture expertise to help you craft a compelling application. Take the next step towards your dream job today!
