Interviews are opportunities to demonstrate your expertise, and this guide is here to help you shine. Explore the essential Control Systems Theory interview questions that employers frequently ask, paired with strategies for crafting responses that set you apart from the competition.

Questions Asked in Control Systems Theory Interview

Q 1. Explain the difference between open-loop and closed-loop control systems.

The core difference between open-loop and closed-loop control systems lies in their feedback mechanisms. An open-loop system operates without feedback; it simply executes a pre-programmed action without monitoring its effect. Think of a toaster: you set the time, and it runs for that duration regardless of whether the bread is toasted to perfection. The output is entirely determined by the input, with no adjustment based on the actual result.

In contrast, a closed-loop system, also known as a feedback control system, continuously monitors its output and adjusts its input accordingly to maintain a desired setpoint. A thermostat is a perfect example: it measures the room temperature and adjusts the heating or cooling system to keep the temperature at the desired setting. The feedback loop ensures the system’s output closely matches the desired behavior.

- Open-loop advantages: Simple design, inexpensive, often sufficient for simple tasks.

- Open-loop disadvantages: Susceptible to disturbances and uncertainties; precision is limited; unable to correct for errors.

- Closed-loop advantages: High accuracy, robustness to disturbances, self-correcting.

- Closed-loop disadvantages: More complex design, potentially more expensive, risk of instability if not designed carefully.
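The contrast can be made concrete with a small simulation. The sketch below assumes a hypothetical first-order plant dy/dt = -y + u + d with a constant disturbance d, and a PI controller with illustrative gains; none of these values come from a real system.

```python
def simulate(closed_loop, setpoint=20.0, disturbance=-5.0, dt=0.01, steps=2000):
    """Forward-Euler simulation of the assumed plant dy/dt = -y + u + d."""
    kp, ki = 2.0, 2.0  # illustrative PI gains, not tuned for any real system
    y, integral = 0.0, 0.0
    for _ in range(steps):
        if closed_loop:
            error = setpoint - y          # feedback: compare output with setpoint
            integral += error * dt
            u = kp * error + ki * integral
        else:
            u = setpoint                  # open loop: input fixed in advance
        y += dt * (-y + u + disturbance)
    return y

open_loop_output = simulate(closed_loop=False)
closed_loop_output = simulate(closed_loop=True)
print(f"open loop:   {open_loop_output:.2f}")
print(f"closed loop: {closed_loop_output:.2f}")
```

Under these assumptions the open-loop output settles short of the setpoint by the size of the disturbance, while the closed-loop output recovers the setpoint.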

Q 2. Describe the role of a PID controller and its tuning methods.

A Proportional-Integral-Derivative (PID) controller is a widely used feedback controller that adjusts the manipulated variable based on three terms: proportional, integral, and derivative. Each term addresses different aspects of the control error (the difference between the desired setpoint and the actual output).

- Proportional (P): Responds to the current error. A larger error leads to a larger corrective action. Imagine steering a car; the more you deviate from your lane, the more you turn the wheel.

- Integral (I): Addresses accumulated error over time. This eliminates steady-state error – a persistent offset between the setpoint and output. If your car consistently drifts to the right, the integral term will counteract this drift.

- Derivative (D): Anticipates future error based on the rate of change of the error. This helps damp oscillations and improve settling time. If you see an obstacle approaching quickly, the derivative term will cause a more rapid corrective action.

PID tuning involves adjusting the gains (Kp, Ki, Kd) associated with each term to achieve optimal performance. Methods include:

- Trial and error: Simple, but time-consuming and may not yield optimal results.

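- Ziegler-Nichols method: A systematic approach that derives the gains from measured response characteristics: either the ultimate gain and oscillation period found by raising a proportional-only gain until the loop oscillates steadily, or the delay and slope of the open-loop step response.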

- Internal Model Control (IMC): A more advanced method utilizing a model of the controlled process.

Proper tuning is crucial; incorrect gains can lead to instability (oscillations), sluggish response, or large overshoots.
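A minimal discrete-time PID might look like the sketch below. The class name, the gains, and the first-order test plant dy/dt = -y + u are assumptions chosen for illustration; a production controller would usually add anti-windup and derivative filtering.

```python
class PID:
    """Textbook discrete PID; gains and sample time are supplied by the caller."""

    def __init__(self, kp, ki, kd, dt):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, measurement):
        error = setpoint - measurement
        self.integral += error * self.dt              # I: accumulated error
        if self.prev_error is None:
            derivative = 0.0                          # avoid a spike on the first call
        else:
            derivative = (error - self.prev_error) / self.dt  # D: rate of change
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative

dt = 0.01
pid = PID(kp=2.0, ki=2.0, kd=0.1, dt=dt)  # illustrative gains
y = 0.0
for _ in range(2000):                      # 20 s of simulated time
    u = pid.update(setpoint=1.0, measurement=y)
    y += dt * (-y + u)                     # assumed plant: dy/dt = -y + u
print(f"output after 20 s: {y:.3f}")
```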

Q 3. What are the advantages and disadvantages of using a state-space representation?

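State-space representation describes a system using a set of first-order differential equations in a state vector x. For a linear time-invariant system these take the form dx/dt = Ax + Bu and y = Cx + Du, where the matrices A, B, C, and D define the system’s dynamics and output.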

Advantages:

- Handles multi-input, multi-output (MIMO) systems effectively.

- Provides a unified framework for analyzing linear and nonlinear systems.

- Allows for the use of powerful linear algebra techniques for analysis and design.

- Suitable for systems with multiple variables.

Disadvantages:

- Can be computationally intensive, especially for large-scale systems.

- Requires a good understanding of linear algebra and matrix operations.

- The state variables may not always have a clear physical interpretation.

For example, a complex robotic arm with multiple joints can be easily modeled using state-space, where each joint angle and its velocity represent a state variable. This allows for precise control and coordination of all joints.
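As a smaller illustration than a robotic arm, the sketch below writes a mass-spring-damper in state-space form, with position and velocity as the states, and integrates it with forward Euler. The parameter values and the helper names are assumptions.

```python
def mat_vec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

m, c, k = 1.0, 2.0, 4.0                  # assumed mass, damping, stiffness
A = [[0.0, 1.0], [-k / m, -c / m]]       # dx/dt = A x + B u, x = [position, velocity]
B = [0.0, 1.0 / m]
C = [1.0, 0.0]                           # y = C x (position is measured)

def simulate(force, dt=0.001, steps=20000):
    x = [0.0, 0.0]
    for _ in range(steps):
        ax = mat_vec(A, x)
        x = [x[i] + dt * (ax[i] + B[i] * force) for i in range(2)]
    return x

x_final = simulate(force=4.0)
position = sum(ci * xi for ci, xi in zip(C, x_final))
print(f"final position: {position:.3f}, final velocity: {x_final[1]:.3f}")
```

With a constant force the position should approach force / k, which gives a quick sanity check on the model.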

Q 4. Explain the concept of stability in control systems. How can you determine stability?

Stability in a control system means that the system’s output will eventually settle to a steady state after a disturbance or change in input. An unstable system will exhibit unbounded oscillations or diverge from the desired state.

Determining stability can be done through various methods:

- Routh-Hurwitz criterion: A purely algebraic method using the system’s characteristic polynomial. The system is stable if all entries in the first column of the Routh array have the same sign; the number of sign changes equals the number of right-half-plane roots.

- Eigenvalue analysis (state-space): Examines the eigenvalues of the system matrix. A continuous-time linear system is asymptotically stable if all eigenvalues have negative real parts.

- Nyquist criterion (frequency domain): A graphical method based on the system’s open-loop frequency response (discussed further in the next answer).

- Root locus analysis: A graphical method showing how the closed-loop poles of the system change as a gain parameter varies. It helps visualize the effects of gain adjustments on system stability.

For instance, an improperly tuned PID controller can lead to an unstable system with oscillations that grow in amplitude, whereas proper tuning will maintain a stable response.
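The Routh-Hurwitz test is easy to sketch in code. The version below handles only the regular case (no zero appears in the first column) and uses two example polynomials chosen for illustration; the function names are hypothetical.

```python
def routh_first_column(coeffs):
    """First column of the Routh array for a polynomial given highest power first."""
    coeffs = [float(c) for c in coeffs]
    n = len(coeffs)
    cols = (n + 1) // 2
    row0 = coeffs[0::2] + [0.0] * (cols - len(coeffs[0::2]))
    row1 = coeffs[1::2] + [0.0] * (cols - len(coeffs[1::2]))
    rows = [row0, row1]
    for _ in range(n - 2):
        prev2, prev1 = rows[-2], rows[-1]
        if prev1[0] == 0.0:
            raise ValueError("zero in first column: special case not handled here")
        new = [(prev1[0] * prev2[j + 1] - prev2[0] * prev1[j + 1]) / prev1[0]
               for j in range(cols - 1)]
        new.append(0.0)
        rows.append(new)
    return [row[0] for row in rows]

def rhp_root_count(coeffs):
    """Number of sign changes in the first column = number of right-half-plane roots."""
    col = routh_first_column(coeffs)
    return sum(1 for a, b in zip(col, col[1:]) if a * b < 0)

stable_poly = [1, 6, 11, 6]    # (s+1)(s+2)(s+3)
unstable_poly = [1, 1, 2, 8]   # (s+2)(s^2 - s + 4)
print(routh_first_column(stable_poly), rhp_root_count(stable_poly))
print(routh_first_column(unstable_poly), rhp_root_count(unstable_poly))
```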

Q 5. What is the Nyquist stability criterion and how is it used?

The Nyquist stability criterion is a graphical method that uses the open-loop frequency response to determine the stability of a closed-loop system. It’s based on mapping the open-loop transfer function onto the complex plane as s travels along the imaginary axis; the plot is usually drawn for frequencies from 0 to ∞ and mirrored about the real axis for negative frequencies.

How it’s used:

- Plot the open-loop transfer function’s frequency response on the complex plane (Nyquist plot).

- Count the net number of clockwise encirclements of the -1 point (-1 + j0) by the Nyquist plot, treating counter-clockwise encirclements as negative.

- Determine the number of unstable poles in the open-loop system.

- Apply the Nyquist stability criterion formula to determine the number of unstable closed-loop poles: Z = N + P, where Z is the number of unstable closed-loop poles, N is the net number of clockwise encirclements of the -1 point, and P is the number of unstable open-loop poles.

If Z = 0, the closed-loop system is stable; otherwise, it’s unstable. The Nyquist criterion is particularly useful for systems with time delays or other complexities where other methods are less straightforward.
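The encirclement count can also be estimated numerically. The sketch below assumes a strictly proper open-loop transfer function with no poles on the imaginary axis, and uses the hypothetical example K/(s+1)^3, which has no unstable open-loop poles. It accumulates the change in angle of the vector from -1 to the Nyquist curve; the number of unstable closed-loop poles is then the clockwise count plus the number of unstable open-loop poles.

```python
import cmath
import math

def clockwise_encirclements(L, w_max=1000.0, n=200001):
    """Net clockwise encirclements of -1 by L(jw) for w from -w_max to +w_max."""
    total = 0.0
    prev = None
    for i in range(n):
        w = -w_max + 2.0 * w_max * i / (n - 1)
        angle = cmath.phase(1.0 + L(1j * w))   # angle of the vector from -1 to L(jw)
        if prev is not None:
            d = angle - prev
            while d > math.pi:                  # unwrap jumps across +/- pi
                d -= 2.0 * math.pi
            while d <= -math.pi:
                d += 2.0 * math.pi
            total += d
        prev = angle
    return round(-total / (2.0 * math.pi))     # clockwise rotation is a negative angle

open_loop_unstable_poles = 0                    # K/(s+1)^3 has all poles at s = -1
for K in (1.0, 10.0):
    n_cw = clockwise_encirclements(lambda s, K=K: K / (s + 1.0) ** 3)
    print(f"K={K}: clockwise encirclements={n_cw}, "
          f"unstable closed-loop poles={n_cw + open_loop_unstable_poles}")
```

For this example the closed loop is known to lose stability once K exceeds 8, so the two gains fall on either side of the boundary.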

Q 6. Describe the Bode plot and its significance in frequency response analysis.

A Bode plot is a graphical representation of a system’s frequency response, consisting of two plots: a magnitude plot (in decibels) and a phase plot (in degrees) as functions of frequency (typically logarithmic). It’s a powerful tool for analyzing a system’s response at different frequencies.

Significance in frequency response analysis:

- Gain and phase margins: Bode plots visually identify the gain and phase margins, indicating the system’s robustness to variations in gain and phase shift.

- Resonant frequencies: The magnitude plot reveals the system’s resonant frequencies, which are frequencies at which the system’s response is amplified.

- Bandwidth: The range of frequencies over which the system maintains a significant gain, commonly taken as the frequency at which the magnitude falls 3 dB below its low-frequency value.

- System identification: Bode plots can be used to identify the transfer function of a system by fitting a model to experimental data.

For instance, in designing a control system for a motor, the Bode plot will reveal how the motor will respond to different frequency signals, allowing engineers to design a controller that maintains stability and desired performance across the operating frequency range.
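The two curves of a Bode plot are just the magnitude and phase of the transfer function evaluated at s = jω. The sketch below computes a few points for an assumed first-order low-pass with a corner frequency of 10 rad/s; the function name is hypothetical.

```python
import cmath
import math

def bode_point(H, w):
    """Return (magnitude in dB, phase in degrees) of H at frequency w in rad/s."""
    h = H(1j * w)
    return 20.0 * math.log10(abs(h)), math.degrees(cmath.phase(h))

wc = 10.0                                   # assumed corner frequency
H = lambda s: 1.0 / (1.0 + s / wc)          # first-order low-pass

for w in (1.0, 10.0, 100.0):
    mag_db, phase_deg = bode_point(H, w)
    print(f"w={w:6.1f} rad/s  magnitude={mag_db:7.2f} dB  phase={phase_deg:7.2f} deg")
```

At the corner frequency the magnitude should be about 3 dB down with 45° of phase lag, which is a useful check when reading measured plots.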

Q 7. Explain the concept of gain margin and phase margin.

Gain margin (GM) and phase margin (PM) are two important metrics derived from the Bode plot that indicate the stability robustness of a closed-loop system. They represent how much the gain and phase can be changed before the system becomes unstable.

Gain margin is the amount of gain increase (in dB) that can be applied before the system becomes unstable. It is read at the phase crossover frequency, where the phase reaches -180°, as the distance of the magnitude curve below 0 dB. A higher gain margin indicates greater stability and robustness to gain variations. A GM of 6 dB or more is generally considered good.

Phase margin is the amount of additional phase lag (in degrees) that can be added to the system before it becomes unstable. It is read at the gain crossover frequency, where the magnitude reaches 0 dB, as the distance of the phase curve above -180°. A higher phase margin indicates that the system is more robust to delays and uncertainties. A PM of 45° or more is generally considered a good design practice.

These margins provide an important measure of a system’s safety margin from instability. Imagine designing a flight control system – larger margins are critical to ensure stable flight even with unexpected disturbances or component variations.
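Both margins can be found numerically by locating the two crossover frequencies. The sketch below assumes the hypothetical open-loop transfer function L(s) = 1/(s(s+1)(s+2)), whose magnitude and phase both decrease monotonically with frequency, so simple bisection is enough.

```python
import math

def mag(w):
    """|L(jw)| for the assumed L(s) = 1 / (s (s+1) (s+2))."""
    return 1.0 / (w * math.sqrt(w * w + 1.0) * math.sqrt(w * w + 4.0))

def phase_deg(w):
    """Phase of L(jw) in degrees, written out to avoid wrap-around at -180."""
    return -90.0 - math.degrees(math.atan(w)) - math.degrees(math.atan(w / 2.0))

def bisect(f, lo, hi, iters=100):
    """Root of f on [lo, hi], assuming f changes sign exactly once."""
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if f(lo) * f(mid) <= 0.0:
            hi = mid
        else:
            lo = mid
    return 0.5 * (lo + hi)

w_pc = bisect(lambda w: phase_deg(w) + 180.0, 0.01, 100.0)  # phase crossover
w_gc = bisect(lambda w: mag(w) - 1.0, 0.01, 100.0)          # gain crossover

gain_margin_db = -20.0 * math.log10(mag(w_pc))
phase_margin_deg = 180.0 + phase_deg(w_gc)
print(f"GM = {gain_margin_db:.2f} dB at {w_pc:.3f} rad/s")
print(f"PM = {phase_margin_deg:.2f} deg at {w_gc:.3f} rad/s")
```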

Q 8. What is the root locus method and how is it used in control system design?

The root locus method is a graphical technique in control systems engineering used to analyze the behavior of a closed-loop system as a gain parameter is varied. It shows how the closed-loop poles (roots of the characteristic equation) move in the complex s-plane as the gain changes. Essentially, it visualizes the stability and transient response characteristics of a system without complex calculations for each gain value.

How it’s used in design: We start with the open-loop transfer function of the system, then the root locus plot is constructed. This plot shows the paths traced by the closed-loop poles as the gain varies from zero to infinity. By examining the location of the poles on the plot, we can determine the system’s stability, speed of response, and damping ratio. The design process involves adjusting the system’s parameters (e.g., adding compensators) to move the poles to desired locations in the s-plane for optimal performance. For example, we might want to move the poles farther into the left half-plane to speed up the decay of transients, or closer to the negative real axis to increase the damping ratio and reduce overshoot.

Example: Consider a simple proportional controller with a plant having a transfer function G(s) = 1/(s(s+1)). The root locus plot will show how the closed-loop poles move as the proportional gain (K) is varied. By observing the plot, we can determine the range of K values that lead to a stable system and also the settling time and overshoot for different gain values. The designer can use this information to select a suitable gain K to meet desired performance criteria.
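For this plant the closed-loop characteristic equation is s² + s + K = 0, so the pole locations can be computed directly. The sketch below tabulates them for a few illustrative gains; the function name is hypothetical.

```python
import cmath

def closed_loop_poles(K):
    """Roots of s^2 + s + K = 0, the closed loop of K * 1/(s(s+1)) with unity feedback."""
    disc = cmath.sqrt(1.0 - 4.0 * K)
    return (-1.0 + disc) / 2.0, (-1.0 - disc) / 2.0

for K in (0.1, 0.25, 1.0, 4.0):
    p1, p2 = closed_loop_poles(K)
    zeta = 1.0 / (2.0 * K ** 0.5)   # from s^2 + 2*zeta*wn*s + wn^2 with wn = sqrt(K)
    print(f"K={K:5.2f}  poles={p1:.3f}, {p2:.3f}  damping ratio={zeta:.3f}")
```

The poles stay in the left half-plane for every positive K, but the damping ratio falls as K grows, which is the trade-off a designer would read off the locus.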

Q 9. Explain the concept of controllability and observability.

Controllability refers to the ability of a control system to drive any state of the system to any other desired state within a finite time using only the available control inputs. Imagine steering a car; if you can’t turn the steering wheel, you can’t control the car’s direction – it’s uncontrollable. Mathematically, we check controllability using the controllability matrix. If this matrix has full rank, the system is controllable.

Observability, conversely, refers to the ability to determine the state of a system by observing its outputs. Think of a car’s blind spot; you can’t see what’s there, so you can’t ‘observe’ it directly from your seat. If the system’s internal state can’t be inferred from its outputs, the system is unobservable. Like controllability, we have a mathematical test – the observability matrix – to determine if a system is observable. A full-rank observability matrix confirms observability.

In practice, controllability and observability are crucial for system design and state feedback control. Uncontrollable systems cannot be regulated, and unobservable systems can lead to poor performance or even instability because we lack the complete information about the system’s state.
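For a two-state, single-input, single-output system the rank tests reduce to checking that a 2×2 determinant is nonzero. The sketch below uses assumed example matrices and hypothetical helper names.

```python
def mat_vec(M, v):
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def vec_mat(v, M):
    return [sum(v[i] * M[i][j] for i in range(len(v))) for j in range(len(M[0]))]

def is_controllable(A, B, tol=1e-12):
    """Full rank of [B, AB] for a 2-state, single-input system."""
    AB = mat_vec(A, B)
    det = B[0] * AB[1] - AB[0] * B[1]
    return abs(det) > tol

def is_observable(A, C, tol=1e-12):
    """Full rank of [C; CA] for a 2-state, single-output system."""
    CA = vec_mat(C, A)
    det = C[0] * CA[1] - C[1] * CA[0]
    return abs(det) > tol

A1, B1, C1 = [[0.0, 1.0], [-2.0, -3.0]], [0.0, 1.0], [1.0, 0.0]
A2, B2 = [[-1.0, 0.0], [0.0, -2.0]], [1.0, 0.0]   # input never reaches the second state

print(is_controllable(A1, B1), is_observable(A1, C1))
print(is_controllable(A2, B2))
```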

Q 10. How do you design a controller for a system with time delay?

Designing a controller for a system with time delay (also known as dead time or transport delay) is challenging because the delay introduces a phase lag that can destabilize the system. Standard control techniques might not be sufficient. Several strategies can be employed:

- Smith Predictor: This compensator predicts the delayed effect and compensates for it, effectively canceling out the delay in the feedback loop. It involves a model of the system’s delay and is particularly useful for relatively constant delays.

- Approximation Methods: The time delay can be approximated using Padé approximants (rational functions) or other approximation techniques. This allows for the use of traditional control design methods.

- Predictive Control (MPC): Model predictive control explicitly accounts for the time delay in its optimization algorithm. It predicts future system behavior and optimizes the control actions based on this prediction.

- Adaptive Control: Adaptive controllers adjust their parameters to account for the unknown or varying time delay, offering resilience to delay changes.

The choice of method depends on the specific application, the magnitude of the delay, and the desired performance characteristics. Often, a combination of techniques might be the best solution.
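To see what the approximation approach gives up, the sketch below compares the exact delay e^(-sT) with its first-order Padé approximant (1 - sT/2)/(1 + sT/2) at a few frequencies. The delay value and the function name are assumptions.

```python
import cmath

def pade_delay(s, T):
    """First-order Pade approximation of exp(-s*T)."""
    return (1.0 - s * T / 2.0) / (1.0 + s * T / 2.0)

T = 0.5  # assumed delay in seconds
for w in (0.2, 1.0, 4.0):
    s = 1j * w
    exact_phase = cmath.phase(cmath.exp(-s * T))
    approx_phase = cmath.phase(pade_delay(s, T))
    print(f"w={w:4.1f} rad/s  exact={exact_phase:7.4f} rad  "
          f"Pade={approx_phase:7.4f} rad  |Pade|={abs(pade_delay(s, T)):.3f}")
```

The approximant keeps unit magnitude like the true delay, but its phase is only accurate while ωT is small, so it should be trusted only well below the frequency 1/T.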

Q 11. What are some common techniques for dealing with nonlinearities in control systems?

Nonlinearities in control systems are common and can significantly affect performance and stability. Several techniques address this:

- Linearization: Approximating the nonlinear system with a linear model around an operating point. This is valid only for small deviations from the operating point.

- Feedback Linearization: Transforming the nonlinear system into a linear form using a nonlinear coordinate transformation and feedback. This allows for the application of linear control techniques.

- Gain Scheduling: Designing a family of linear controllers for different operating points and switching between them based on the system’s operating conditions.

- Sliding Mode Control (SMC): A robust control strategy that forces the system’s trajectories to remain on a predefined sliding surface, irrespective of matched uncertainties and disturbances.

- Fuzzy Logic Control: Using fuzzy sets and fuzzy rules to represent the nonlinear relationship between inputs and outputs. It allows for flexible and adaptable control.

The selection of the most appropriate technique depends heavily on the nature of the nonlinearity and the desired performance objectives.
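Linearization around an operating point can be done numerically with finite differences. The sketch below assumes a damped pendulum with illustrative parameters and linearizes it about the hanging equilibrium; the function names are hypothetical.

```python
import math

G_OVER_L = 9.81   # assumed g / length
DAMPING = 0.5     # assumed viscous damping coefficient

def pendulum(x, u):
    """Nonlinear dynamics: x = [angle, angular velocity], u = torque input."""
    theta, omega = x
    return [omega, -G_OVER_L * math.sin(theta) - DAMPING * omega + u]

def linearize(f, x0, u0, eps=1e-6):
    """Central-difference Jacobians A = df/dx and B = df/du at (x0, u0)."""
    n = len(x0)
    A = [[0.0] * n for _ in range(n)]
    for j in range(n):
        xp, xm = list(x0), list(x0)
        xp[j] += eps
        xm[j] -= eps
        fp, fm = f(xp, u0), f(xm, u0)
        for i in range(n):
            A[i][j] = (fp[i] - fm[i]) / (2.0 * eps)
    fp, fm = f(x0, u0 + eps), f(x0, u0 - eps)
    B = [(fp[i] - fm[i]) / (2.0 * eps) for i in range(n)]
    return A, B

A, B = linearize(pendulum, [0.0, 0.0], 0.0)
print("A =", A)
print("B =", B)
```

The resulting A and B are valid only near the chosen operating point, which is why gain scheduling repeats this step at several points.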

Q 12. Describe different types of sensors and actuators used in control systems.

Sensors and actuators are the interface between the control system and the physical process. Examples include:

- Sensors: Potentiometers (position), thermocouples (temperature), accelerometers (acceleration), strain gauges (strain), flow meters (flow rate), photodiodes (light intensity), pressure transducers (pressure), gyroscopes (angular rate), GPS (position and velocity).

- Actuators: Electric motors (rotational motion), hydraulic actuators (linear or rotational motion), pneumatic actuators (linear or rotational motion), solenoids (on/off control), valves (flow control), heating elements (temperature control), pumps (fluid flow control).

The choice of sensor and actuator depends on factors such as the type of variable to be measured or controlled, the required accuracy, cost, robustness, and environmental conditions.

Q 13. Explain the concept of sampling and its effect on control systems.

Sampling is the process of converting a continuous-time signal into a discrete-time signal by taking measurements at regular intervals. This is fundamental in digital control systems. The sampling rate (frequency) is crucial. The Nyquist-Shannon sampling theorem states that the sampling frequency must be more than twice the highest frequency component present in the continuous-time signal to avoid aliasing, where high-frequency components are misinterpreted as low-frequency ones.

Effect on Control Systems: Sampling and holding the control signal between samples introduces a delay (roughly half a sampling period for a zero-order hold) and can limit the bandwidth of the closed-loop system. Too slow a sampling rate can lead to poor performance or instability. Anti-aliasing filters are often used before sampling to attenuate frequencies above half the sampling frequency, preventing aliasing.

Example: Imagine controlling a robot arm’s position. If the sampling rate is too low, the controller might only receive position updates infrequently, resulting in jerky and imprecise movements. A high enough sampling rate ensures smooth and accurate control. The choice of sampling rate involves a trade-off between performance and computational complexity.
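Aliasing is easy to demonstrate with a few samples. The sketch below assumes a 7 Hz sine sampled at 10 Hz, which violates the sampling theorem, and shows that its samples coincide with those of a phase-inverted 3 Hz sine. The function name is hypothetical.

```python
import math

def alias_frequency(f_signal, f_sample):
    """Apparent frequency of a sampled sinusoid, folded into [0, f_sample / 2]."""
    return abs(f_signal - f_sample * round(f_signal / f_sample))

fs = 10.0       # assumed sampling frequency in Hz
f_true = 7.0    # above fs / 2, so it will alias
f_alias = alias_frequency(f_true, fs)

samples_true = [math.sin(2.0 * math.pi * f_true * n / fs) for n in range(20)]
samples_alias = [-math.sin(2.0 * math.pi * f_alias * n / fs) for n in range(20)]
max_diff = max(abs(a - b) for a, b in zip(samples_true, samples_alias))

print(f"{f_true} Hz sampled at {fs} Hz looks like {f_alias} Hz")
print(f"largest difference between the two sample sets: {max_diff:.2e}")
```

Once the samples are taken the two signals cannot be told apart, which is why the anti-aliasing filter has to act before the sampler.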

Q 14. What are the advantages and disadvantages of digital control systems over analog systems?

Digital control systems offer several advantages over their analog counterparts:

- Flexibility: Software-based implementation allows for easy modification and adaptation of control algorithms without hardware changes.

- Precision and Repeatability: Digital systems offer high accuracy and consistent performance.

- Cost-Effectiveness: Microcontrollers and digital signal processors (DSPs) have become increasingly inexpensive, making digital control systems affordable.

- Advanced Control Algorithms: Complex and sophisticated control algorithms, such as model predictive control, are easily implemented digitally.

However, digital control systems also have disadvantages:

- Sampling and Quantization Effects: Sampling introduces delay and quantization errors which can affect system performance. Proper design considerations are needed.

- Computational Delay: The time required for computations can introduce a delay, particularly with complex algorithms, impacting system response.

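- Susceptibility to Noise: Digital signals themselves are generally more immune to noise than analog ones, but the analog-to-digital interface is not: measurement noise, aliasing, and quantization at the converter all enter the loop. Proper signal conditioning and noise reduction techniques are essential.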

- Programming Complexity: Designing and implementing digital control systems requires programming skills and a good understanding of embedded systems.

The choice between analog and digital control depends on the specific application requirements. For many modern applications, the advantages of digital control systems often outweigh the disadvantages.

Q 15. Describe your experience with different control system design software (e.g., MATLAB, Simulink).

My experience with control system design software is extensive and covers both MATLAB and Simulink. MATLAB provides the foundational mathematical environment for control system analysis and design. I’ve used it for tasks ranging from linear algebra calculations to implementing custom control algorithms. I’m proficient in using its Control System Toolbox for tasks such as pole-zero placement, root locus analysis, and frequency response analysis. Simulink, on the other hand, allows for the creation of visual block diagrams to model and simulate dynamic systems. I’ve used Simulink to build complex models of various systems, from simple mass-spring-damper systems to more intricate electromechanical systems, and to test and refine control algorithms before physical implementation. For instance, I used Simulink to design a PID controller for a robotic arm, simulating various scenarios and tuning the controller parameters to achieve optimal performance. This involved modeling the robot’s dynamics, incorporating sensor noise, and validating the controller’s stability and robustness against external disturbances. Beyond MATLAB and Simulink, I have some familiarity with other tools like Python with control libraries (such as `control`) for more specialized tasks.

Q 16. Explain the concept of a transfer function and how it is used.

A transfer function is a mathematical representation of a linear, time-invariant (LTI) system’s input-output relationship. It describes how the system transforms an input signal into an output signal in the Laplace domain. Specifically, it’s the ratio of the Laplace transform of the output to the Laplace transform of the input, assuming zero initial conditions. Think of it like a recipe: the input is the ingredients, the transfer function is the recipe itself, and the output is the final dish. For instance, a simple first-order system like an RC circuit might have a transfer function of 1/(1+sRC), where ‘s’ is the Laplace variable, ‘R’ is the resistance, and ‘C’ is the capacitance. Transfer functions are crucial for analyzing system stability, designing controllers, and predicting system responses. For example, the location of poles and zeros in the complex s-plane directly dictates the system’s stability and transient response characteristics. They enable us to analyze the system’s frequency response, identifying its bandwidth, gain margin, and phase margin—all vital for performance assessment and controller tuning.
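The RC example can be checked numerically. The transfer function 1/(1+sRC) corresponds to the differential equation RC·dy/dt + y = u, whose unit-step response is 1 - e^(-t/RC). The sketch below integrates the equation with forward Euler and compares the result with the analytic value after one time constant; the component values are assumptions.

```python
import math

R = 1_000.0     # assumed resistance in ohms
C = 1e-6        # assumed capacitance in farads
tau = R * C     # time constant; the transfer function has a single pole at s = -1/tau

dt = tau / 1000.0
y = 0.0
for _ in range(1000):                 # integrate for exactly one time constant
    y += dt * (1.0 - y) / tau         # RC * dy/dt + y = u with a unit step u = 1

analytic = 1.0 - math.exp(-1.0)       # step response at t = tau
print(f"simulated: {y:.4f}  analytic: {analytic:.4f}  pole at s = {-1.0 / tau:.1f}")
```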

Q 17. Describe different types of control system architectures (e.g., cascade control, feedforward control).

Control system architectures dictate how feedback and control actions are implemented. A simple feedback control system involves measuring the output, comparing it to the desired setpoint, and using the error signal to adjust the input. More complex architectures include:

- Cascade Control: This uses multiple control loops, where the output of one loop serves as the setpoint for the next. This is effective when a fast intermediate variable can be measured and controlled, so that disturbances are corrected before they reach the main output. Imagine controlling the temperature of a furnace: an outer loop compares the furnace temperature with its setpoint and computes a fuel flow setpoint, while a faster inner loop adjusts the fuel valve to hold the fuel flow at that setpoint.

- Feedforward Control: This anticipates disturbances and compensates for them before they affect the output. For example, in a robot arm, a feedforward controller might predict the required torque based on the planned trajectory, reducing the effect of inertia and gravity.

- Feedback Linearization: This technique transforms a nonlinear system into an equivalent linear system to enable easier controller design.

The choice of architecture depends on the system’s complexity, performance requirements, and the nature of disturbances.

Q 18. How do you handle disturbances in a control system?

Disturbances are unavoidable in real-world control systems. They can stem from various sources like sensor noise, unmodeled dynamics, or external forces. Handling disturbances effectively is crucial for maintaining system performance and stability. Several strategies can be used:

- Feedback Control: This is the primary method for rejecting disturbances. A well-designed feedback controller continuously monitors the output and corrects for deviations caused by disturbances.

- Feedforward Control: As mentioned earlier, this approach anticipates and compensates for known disturbances.

- Robust Control Techniques: These methods design controllers that are insensitive to uncertainties and disturbances. H∞ control is a prime example that minimizes the effect of disturbances on the system’s output.

- Adaptive Control: These controllers adjust their parameters online to account for changes in the system dynamics or disturbances.

The best approach depends on the nature and characteristics of the disturbances and the system itself. Sometimes, a combination of techniques is employed for optimal performance.

Q 19. Explain the concept of system identification.

System identification is the process of building a mathematical model of a dynamic system from experimental data. It’s like reverse engineering: you observe the system’s input and output, and then create a model that accurately captures its behavior. This is essential when a detailed physical model is unavailable or too complex. The process typically involves these steps:

- Experiment Design: Carefully planned input signals (e.g., step inputs, sinusoidal signals) are applied to the system.

- Data Acquisition: The system’s input and output are measured and recorded.

- Model Structure Selection: A suitable model structure is chosen (e.g., ARX, ARMAX, state-space models).

- Parameter Estimation: The model’s parameters are estimated using techniques like least squares or maximum likelihood estimation to best fit the collected data.

- Model Validation: The identified model is validated using new data to ensure its accuracy and predictive capabilities.

Software packages like MATLAB’s System Identification Toolbox provide tools to facilitate this process. System identification plays a vital role in applications such as controller design, fault detection, and predictive maintenance.
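The parameter estimation step can be sketched for the simplest ARX structure, y[k] = a·y[k-1] + b·u[k-1], using ordinary least squares. The "true" parameters, the square-wave input, and the function name below are assumptions, and the data is noise-free to keep the example short.

```python
def fit_first_order_arx(u, y):
    """Least-squares fit of y[k] = a*y[k-1] + b*u[k-1] via the 2x2 normal equations."""
    s_yy = s_yu = s_uu = r_y = r_u = 0.0
    for k in range(1, len(y)):
        s_yy += y[k - 1] * y[k - 1]
        s_yu += y[k - 1] * u[k - 1]
        s_uu += u[k - 1] * u[k - 1]
        r_y += y[k] * y[k - 1]
        r_u += y[k] * u[k - 1]
    det = s_yy * s_uu - s_yu * s_yu
    a = (r_y * s_uu - r_u * s_yu) / det
    b = (r_u * s_yy - r_y * s_yu) / det
    return a, b

# Experiment design and data acquisition: excite an assumed system with a square wave.
a_true, b_true = 0.9, 0.5
u = [1.0 if (k // 5) % 2 == 0 else -1.0 for k in range(100)]
y = [0.0]
for k in range(1, 100):
    y.append(a_true * y[k - 1] + b_true * u[k - 1])

a_est, b_est = fit_first_order_arx(u, y)
print(f"estimated a = {a_est:.4f}, b = {b_est:.4f}")
```

With measured data the estimates would be validated against a separate data set, as the last step in the list describes.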

Q 20. What is model predictive control (MPC) and its applications?

Model Predictive Control (MPC) is an advanced control algorithm that uses a model of the system to predict its future behavior. Based on this prediction, it optimizes the control actions over a finite horizon to minimize a cost function that incorporates performance and constraints. Think of it as a strategist planning several moves ahead in a game. It considers not only the current state but also the predicted future states, optimizing its actions to reach the desired goal. MPC is particularly useful when dealing with systems with constraints (e.g., actuator limits, output limits).

Applications of MPC are widespread:

- Process Control: Controlling chemical reactors, distillation columns, and other industrial processes.

- Robotics: Trajectory control of robots, especially in constrained environments.

- Automotive: Engine control, vehicle dynamics control.

- Energy Systems: Managing power grids and renewable energy sources.

The advantage of MPC lies in its ability to handle constraints, multivariable systems, and nonlinearities, making it suitable for complex applications.
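The receding-horizon idea can be shown with a deliberately tiny sketch. It assumes a scalar integrator x[k+1] = x[k] + u[k], an input limit of |u| ≤ 1, and a brute-force search over a coarse grid of candidate inputs in place of the numerical optimizer a real MPC implementation would use. All names and weights are illustrative.

```python
import itertools

CANDIDATES = (-1.0, -0.5, 0.0, 0.5, 1.0)   # admissible inputs, enforcing |u| <= 1

def mpc_step(x, horizon=3, u_weight=0.1):
    """Pick the first input of the cheapest input sequence over the horizon."""
    best_cost, best_u0 = float("inf"), 0.0
    for seq in itertools.product(CANDIDATES, repeat=horizon):
        xp, cost = x, 0.0
        for u in seq:
            xp = xp + u                                # model prediction
            cost += xp * xp + u_weight * u * u         # penalize state error and effort
        if cost < best_cost:
            best_cost, best_u0 = cost, seq[0]
    return best_u0

x = 5.0
applied = []
for _ in range(10):
    u = mpc_step(x)        # optimize, apply only the first move, then re-plan
    applied.append(u)
    x = x + u
print("applied inputs:", applied)
print("final state:", x)
```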

Q 21. Describe your experience with real-time control systems.

My experience with real-time control systems involves working with systems where control actions must be executed within strict timing constraints. This often entails programming in languages like C/C++ for speed and efficiency. I have been involved in projects involving embedded systems, where the controller is implemented on a microcontroller or other hardware platform. A key challenge in real-time control is dealing with timing issues, such as jitter and latency, which can significantly impact system performance and stability. Careful consideration of hardware selection, software design (including interrupt handling and task scheduling), and communication protocols is crucial. For example, I worked on a project involving the real-time control of a small drone, requiring precise control of its motors and sensors to achieve stable flight. This involved designing a control algorithm, implementing it on a suitable microcontroller, integrating sensors, and addressing issues like communication latency and sensor noise in a real-time environment. The experience included rigorous testing and debugging in a simulated and real-world setting to ensure the system’s stability and responsiveness.

Q 22. How do you ensure the robustness of a control system?

Ensuring robustness in a control system means designing it to perform reliably and predictably despite uncertainties and disturbances. Think of it like building a sturdy bridge – you need it to withstand not just the expected weight but also unexpected events like strong winds or earthquakes. We achieve this robustness through several key strategies:

- Gain Margin and Phase Margin: These are crucial frequency-domain metrics that quantify the system’s tolerance to gain and phase variations. Sufficient margins ensure stability even with model inaccuracies or component variations. A low gain margin indicates vulnerability to gain increases, while a low phase margin suggests susceptibility to phase shifts caused by delays or dynamics not captured in the model.

- Robust Control Design Techniques: H-infinity control and L1 adaptive control are sophisticated methods that explicitly account for uncertainties in the plant model. They minimize the effect of disturbances and model mismatch on system performance.

- Feedback Control: The very essence of robust control is feedback. By continuously monitoring the output and comparing it to the desired setpoint, feedback allows the system to adapt to changes and maintain stability. Proportional-Integral-Derivative (PID) controllers, though simple, are incredibly effective in achieving robustness in many applications.

- Sensor and Actuator Selection: Choosing reliable and accurate sensors and actuators is fundamental. Poor quality sensors can introduce noise and inaccuracies, while faulty actuators can lead to unpredictable behavior. Redundancy can be added to further enhance robustness.

- System Identification and Model Validation: Before designing a controller, a thorough understanding of the plant dynamics is critical. System identification techniques, combined with rigorous model validation, ensure the controller is designed based on a realistic representation of the system.

For example, consider a temperature control system in a chemical reactor. Robustness is vital to ensure consistent product quality despite variations in ambient temperature, heat transfer rates, or reactant composition. Using a robust controller with sufficient gain and phase margins will ensure the reactor maintains the desired temperature even under these changing conditions.

Q 23. Explain the concept of adaptive control.

Adaptive control focuses on designing control systems that automatically adjust their parameters in response to changes in the plant dynamics or the environment. Imagine a self-driving car navigating a snowy road – its control system needs to adapt its parameters to maintain stability and control on a slippery surface. It’s different from robust control, which uses a fixed controller designed to tolerate a bounded range of uncertainty rather than adjusting itself online.

Adaptive control algorithms typically involve two key components:

- Parameter Estimation: This component continuously monitors the system’s behavior and estimates its parameters, which might be changing over time. Common techniques include Recursive Least Squares (RLS) and Kalman filtering.

- Controller Adjustment: This component uses the estimated parameters to adjust the controller’s gains or other parameters to optimize performance. There are many different adaptive control architectures, such as model reference adaptive control (MRAC) and self-tuning regulators.

A common example is a robot arm performing a welding task. The arm’s weight might change as the welding material is added. An adaptive controller can adjust its control parameters to compensate for this weight change, ensuring consistent welding performance.
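The parameter estimation component can be sketched with a scalar recursive least squares estimator with a forgetting factor. The sketch assumes a plant y = b·u whose gain b changes partway through, loosely mimicking a changing payload; the function name, the forgetting factor, and the input sequence are all illustrative.

```python
def rls_update(theta, p, u, y, forgetting=0.9):
    """One scalar RLS step for the model y = theta * u."""
    gain = p * u / (forgetting + u * p * u)
    theta = theta + gain * (y - u * theta)      # correct the estimate by the prediction error
    p = (p - gain * u * p) / forgetting         # update covariance, discounting old data
    return theta, p

theta, p = 0.0, 1000.0                          # initial guess with large uncertainty
history = []
for k in range(200):
    true_gain = 2.0 if k < 100 else 3.0         # assumed plant change at k = 100
    u = 1.0 if k % 2 == 0 else 0.5
    y = true_gain * u
    theta, p = rls_update(theta, p, u, y)
    history.append(theta)

print(f"estimate before change: {history[99]:.4f}")
print(f"estimate at the end:    {history[-1]:.4f}")
```

A self-tuning regulator would feed the running estimate into its gain calculation at every step.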

//Illustrative pseudo-code snippet for parameter adaptation (RLS):
//theta = RLS_update(theta_old, error, input); //theta are the controller parameters
//controller_output = controller(input, theta);

Q 24. What are some common challenges in implementing control systems?

Implementing control systems presents several significant challenges:

- Model Uncertainty and Nonlinearities: Real-world systems are often complex and nonlinear, making accurate modeling challenging. Uncertainties in parameters can lead to poor performance or instability.

- Noise and Disturbances: Sensors and actuators are susceptible to noise and external disturbances. These can corrupt measurements and affect controller performance.

- Computational Limitations: Complex control algorithms can be computationally intensive, requiring significant processing power and memory, especially in real-time applications.

- Hardware Constraints: Practical implementation often involves constraints on sensor/actuator selection, bandwidth limitations, and power consumption.

- Safety and Reliability: Control systems are often critical in safety-sensitive applications where failures can have significant consequences. Ensuring reliability and safety is paramount.

- Integration and Testing: Integrating the control system with the plant and other systems can be complex. Thorough testing and validation are crucial.

For instance, designing a flight control system for an aircraft means handling significant aerodynamic nonlinearities and disturbances while ensuring safety and reliability in challenging flight conditions.

Q 25. How do you test and validate a control system?

Testing and validating a control system is a multi-stage process essential to ensure its proper function and safety. It involves:

- Simulation: Initially, the control system is thoroughly tested through simulations using realistic models of the plant and its environment. This allows for testing various scenarios without risking damage to real-world equipment.

- Hardware-in-the-Loop (HIL) Simulation: This testing method connects the real controller hardware to a plant model simulated in real time, so the controller can be exercised under realistic conditions while maintaining a safe environment.

- Real-World Testing: After rigorous simulation, the controller is tested on the actual system. This often involves gradual testing, starting with simple maneuvers and progressively increasing complexity.

- Verification and Validation: Verification checks that the system meets its specifications; validation checks that it performs as intended under the expected operating conditions. This may involve formal methods, such as model checking, or empirical testing.

- Failure Mode and Effects Analysis (FMEA): This systematic approach identifies potential failure modes and their effects on the system, allowing for the development of mitigation strategies.

For example, before deploying a new autonomous vehicle control system, extensive simulation, HIL testing, and real-world testing on closed tracks and controlled environments are required to ensure safety and reliable operation.
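
The simulation stage is often automated as a pass/fail check against written requirements. The following Python sketch shows one way this could look, under stated assumptions: a hypothetical first-order plant (time constant 2 s, unit gain), a PI controller, forward-Euler integration, and an invented specification of at most 5% overshoot and a 2% settling time of at most 5 s. The function names and all numeric values are illustrative only.

```python
def simulate_step_response(kp, ki, tau=2.0, gain=1.0, dt=0.01,
                           t_end=20.0, setpoint=1.0):
    """Closed-loop step response of a PI controller on tau*dy/dt = -y + gain*u."""
    y, integral = 0.0, 0.0
    times, outputs = [], []
    for k in range(int(round(t_end / dt))):
        error = setpoint - y
        integral += error * dt
        u = kp * error + ki * integral
        y += dt * (-y + gain * u) / tau  # forward-Euler plant update
        times.append((k + 1) * dt)
        outputs.append(y)
    return times, outputs


def step_metrics(times, outputs, setpoint=1.0, band=0.02):
    """Return (fractional overshoot, last time the output was outside the band)."""
    overshoot = max(0.0, (max(outputs) - setpoint) / abs(setpoint))
    settling_time = 0.0
    for t, y in zip(times, outputs):
        if abs(y - setpoint) > band * abs(setpoint):
            settling_time = t
    return overshoot, settling_time


def meets_spec(overshoot, settling_time, max_overshoot=0.05, max_settling=5.0):
    """Compare measured metrics against the (assumed) requirements."""
    return overshoot <= max_overshoot and settling_time <= max_settling


times, outputs = simulate_step_response(kp=2.0, ki=1.0)
overshoot, settling_time = step_metrics(times, outputs)
print(f"overshoot={overshoot:.1%}, settling time={settling_time:.2f} s, "
      f"pass={meets_spec(overshoot, settling_time)}")
```

The same metric functions could later be reused on data logged from HIL or real-world runs, which keeps the acceptance criteria consistent across test stages.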

Q 26. Explain your understanding of Lyapunov stability.

Lyapunov stability is a powerful concept in nonlinear control theory that addresses the stability of equilibrium points of dynamic systems without explicitly solving the system’s differential equations. It’s based on the existence of a Lyapunov function, a scalar function that resembles an energy function.

An equilibrium point is Lyapunov stable if trajectories that start sufficiently close to it remain arbitrarily close for all future time: for every ε > 0 there exists a δ > 0 such that ‖x(0) − x_e‖ < δ implies ‖x(t) − x_e‖ < ε for all t ≥ 0, where x_e is the equilibrium. Essentially, it means that small disturbances won’t cause the system to deviate significantly from its equilibrium.

To prove Lyapunov stability, we need to find a Lyapunov function V(x) that satisfies specific conditions:

- V(x) is positive definite in a neighborhood of the equilibrium, taken here to be x = 0 (V(x) > 0 for x ≠ 0 and V(0) = 0).

- The time derivative of V(x) along the system’s trajectories, denoted as V̇(x), is negative semidefinite (V̇(x) ≤ 0). This implies that the Lyapunov function is non-increasing along trajectories.

If these conditions hold, the equilibrium point is Lyapunov stable. If V̇(x) is negative definite (V̇(x) < 0 for x ≠ 0), then the equilibrium point is asymptotically stable, meaning that trajectories starting sufficiently close to the equilibrium converge to it as time goes to infinity. These conditions are sufficient, not necessary: failing to find a suitable V(x) does not prove instability. Lyapunov's direct method provides a powerful tool to analyze the stability of nonlinear systems, even if an explicit solution is unavailable.
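
A worked example can make the two conditions tangible. The Python sketch below assumes a normalized damped pendulum, x1' = x2, x2' = -sin(x1) - x2, and the energy-like candidate V(x) = (1 - cos x1) + x2²/2; both are choices made for illustration. Along trajectories V̇ = -x2², which is negative semidefinite, so the direct method gives Lyapunov stability of the origin. The code checks the conditions numerically on a grid and along one simulated trajectory; this is a sanity check, not a proof.

```python
import math


def f(x1, x2):
    """Assumed dynamics: normalized pendulum with viscous damping."""
    return x2, -math.sin(x1) - x2


def V(x1, x2):
    """Candidate Lyapunov function (potential plus kinetic energy)."""
    return (1.0 - math.cos(x1)) + 0.5 * x2 * x2


def V_dot(x1, x2):
    """Gradient of V dotted with f; simplifies analytically to -x2**2."""
    dx1, dx2 = f(x1, x2)
    return math.sin(x1) * dx1 + x2 * dx2


def rk4_step(x1, x2, dt):
    """One classical Runge-Kutta step of the dynamics."""
    k1 = f(x1, x2)
    k2 = f(x1 + 0.5 * dt * k1[0], x2 + 0.5 * dt * k1[1])
    k3 = f(x1 + 0.5 * dt * k2[0], x2 + 0.5 * dt * k2[1])
    k4 = f(x1 + dt * k3[0], x2 + dt * k3[1])
    x1 += dt * (k1[0] + 2 * k2[0] + 2 * k3[0] + k4[0]) / 6.0
    x2 += dt * (k1[1] + 2 * k2[1] + 2 * k3[1] + k4[1]) / 6.0
    return x1, x2


def energy_along_trajectory(x1, x2, dt=0.01, steps=2000):
    """Record V at every step of a simulated trajectory."""
    values = [V(x1, x2)]
    for _ in range(steps):
        x1, x2 = rk4_step(x1, x2, dt)
        values.append(V(x1, x2))
    return values


grid = [0.1 * i for i in range(-30, 31)]
print("V_dot <= 0 on grid:",
      all(V_dot(a, b) <= 1e-12 for a in grid for b in grid))
values = energy_along_trajectory(1.0, 0.0)
print("V non-increasing along trajectory:",
      all(b <= a + 1e-9 for a, b in zip(values, values[1:])))
```

In this example V̇ vanishes whenever x2 = 0, so the negative-definite condition for asymptotic stability is not met by this particular V, even though the simulated energy does decay toward zero; establishing convergence rigorously would need an additional argument such as LaSalle's invariance principle.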

Q 27. Describe your experience with different types of filters used in control systems.

Filters play a crucial role in control systems by reducing noise and unwanted signals, improving the controller’s performance and robustness. I’ve worked extensively with various filter types, including:

- Low-pass filters: These filters allow low-frequency signals to pass through while attenuating high-frequency noise. They’re commonly used to smooth noisy sensor readings. Simple RC circuits or digital moving average filters are examples.

- High-pass filters: These filters do the opposite, passing high-frequency components and attenuating low-frequency ones. They are useful for removing slow drifts or biases in signals.

- Band-pass filters: These filters pass signals within a specific frequency range, rejecting signals outside that range. They are used to select specific frequency components of interest.

- Notch filters: These are specialized filters that attenuate signals at a specific frequency, ideal for removing narrowband noise or interference.

- Kalman filters: These are recursive estimators that reconstruct the state of a dynamic system from noisy measurements. They are optimal in the minimum mean-square-error sense for linear systems with Gaussian noise, and they’re particularly effective in dealing with stochastic disturbances.

The choice of filter depends on the specific application and the characteristics of the noise and the desired signal. For instance, in a robotic arm control system, a Kalman filter might be used to estimate the arm’s position and velocity from noisy sensor measurements, while a low-pass filter might smooth the velocity signal to reduce jitter.
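
The two low-pass examples mentioned above, the RC circuit and the moving average, have simple digital counterparts. The Python sketch below is a minimal illustration: `low_pass` is a first-order filter obtained from a backward-Euler discretization of an RC stage (one of several possible discretizations), and `moving_average` averages the most recent samples. The function names, cutoff, and sample rate are assumptions chosen for the example.

```python
import math
from collections import deque


def low_pass(samples, cutoff_hz, sample_rate_hz):
    """First-order low-pass: y[k] = y[k-1] + alpha * (x[k] - y[k-1])."""
    if not samples:
        return []
    dt = 1.0 / sample_rate_hz
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    alpha = dt / (rc + dt)  # smaller alpha means heavier smoothing
    y = samples[0]          # start at the first sample to avoid a jump
    out = []
    for x in samples:
        y += alpha * (x - y)
        out.append(y)
    return out


def moving_average(samples, window):
    """Average of the most recent `window` samples (fewer at start-up)."""
    buf, total, out = deque(), 0.0, []
    for x in samples:
        buf.append(x)
        total += x
        if len(buf) > window:
            total -= buf.popleft()
        out.append(total / len(buf))
    return out


# A signal alternating between +1 and -1 stands in for high-frequency noise.
noisy = [1.0 if k % 2 == 0 else -1.0 for k in range(200)]
smoothed = low_pass(noisy, cutoff_hz=1.0, sample_rate_hz=100.0)
print("residual ripple:", max(abs(v) for v in smoothed[-10:]))
print(moving_average([1, 2, 3, 4], window=2))
```

Both filters trade noise attenuation for delay: a lower cutoff or longer window smooths more but adds phase lag, which can erode the stability margins of the loop the filtered signal feeds.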

Q 28. What are some ethical considerations in control system design?

Ethical considerations in control system design are paramount, particularly in safety-critical applications. We must consider:

- Safety: The primary ethical concern is ensuring the safety of humans and the environment. Thorough testing, risk assessment, and fail-safe mechanisms are crucial to mitigate potential hazards.

- Reliability: Control systems must be reliable and function as intended to prevent accidents or malfunctions. Redundancy and fault tolerance are important aspects of reliable design.

- Privacy: Control systems often collect and process data, raising privacy concerns. Data security and anonymization techniques are needed to protect user privacy.

- Transparency and Explainability: Especially in AI-based control systems, transparency is vital. Understanding how decisions are made is critical for accountability and trust.

- Bias and Fairness: AI-powered control systems can inherit biases from training data, leading to unfair or discriminatory outcomes. Careful data curation and bias mitigation techniques are crucial.

- Accountability: Clear lines of responsibility must be established for the design, deployment, and operation of control systems, especially in situations where malfunction can lead to harm.

For example, in autonomous driving, ethical considerations regarding safety, accountability, and potential biases in decision-making algorithms are paramount. A thorough ethical review process is needed before deploying such systems.

Key Topics to Learn for Control Systems Theory Interview

- System Modeling: Mastering techniques like transfer functions, state-space representations, and block diagrams is crucial for understanding system behavior. Consider exploring various modeling techniques suitable for different system complexities.

- Stability Analysis: Understand methods like Routh-Hurwitz criterion, root locus plots, and Bode plots to determine system stability and performance. Practice applying these methods to real-world examples, such as analyzing the stability of a robotic arm or a power grid.

- Frequency Response Analysis: Learn to interpret Bode plots, Nyquist plots, and gain/phase margins to assess system performance and robustness. Be prepared to discuss the implications of these parameters on system stability and transient response.

- Time-Domain Analysis: Understand step response, impulse response, and other time-domain characteristics of control systems. Relate these characteristics to performance metrics like rise time, settling time, and overshoot.

- Controller Design: Familiarize yourself with various control strategies like PID controllers, lead/lag compensators, and state-feedback controllers. Be ready to discuss the trade-offs involved in selecting an appropriate controller for a given application.

- Digital Control Systems: Understand the principles of digital control, including sampling, quantization, and Z-transforms. Explore the differences between continuous and discrete-time control systems.

- State-Space Methods: Gain proficiency in state-space representation, controllability, and observability analysis. This is vital for understanding and designing more complex control systems.

- Nonlinear Control Systems (Optional, but advantageous): A basic understanding of nonlinear systems and control techniques (e.g., Lyapunov stability) can set you apart.
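
As a practice aid for the stability-analysis topic, the Routh-Hurwitz test is easy to try by hand and then check in code. The Python sketch below builds the first column of the Routh array for a characteristic polynomial and counts sign changes, which equals the number of right-half-plane roots. It is a simplified version: the special cases of a zero in the first column or an all-zero row are not handled, and the function names are hypothetical.

```python
def routh_first_column(coeffs):
    """First column of the Routh array.

    coeffs: polynomial coefficients, highest power first,
            e.g. s^3 + 6s^2 + 11s + 6 -> [1, 6, 11, 6].
    """
    n = len(coeffs)
    if n < 2:
        raise ValueError("need a polynomial of degree 1 or higher")
    width = (n + 1) // 2
    even = list(coeffs[0::2])
    odd = list(coeffs[1::2])
    rows = [even + [0.0] * (width - len(even)),
            odd + [0.0] * (width - len(odd))]
    for _ in range(n - 2):
        above, pivot_row = rows[-2], rows[-1]
        pivot = pivot_row[0]
        if pivot == 0:
            raise ValueError("zero pivot: special case not handled in this sketch")
        new_row = [(pivot * above[j + 1] - above[0] * pivot_row[j + 1]) / pivot
                   for j in range(width - 1)]
        rows.append(new_row + [0.0])
    return [row[0] for row in rows]


def rhp_root_count(coeffs):
    """Number of sign changes in the first column = right-half-plane roots."""
    column = routh_first_column(coeffs)
    return sum(1 for a, b in zip(column, column[1:]) if a * b < 0)


# (s + 1)(s + 2)(s + 3): all roots in the left half-plane
print(routh_first_column([1, 6, 11, 6]), rhp_root_count([1, 6, 11, 6]))
# (s + 2)(s^2 - s + 4): one complex pair in the right half-plane
print(routh_first_column([1, 1, 2, 8]), rhp_root_count([1, 1, 2, 8]))
```

Working the same two polynomials by hand first and then comparing against the printed columns is a quick way to rehearse the method before an interview.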

Next Steps

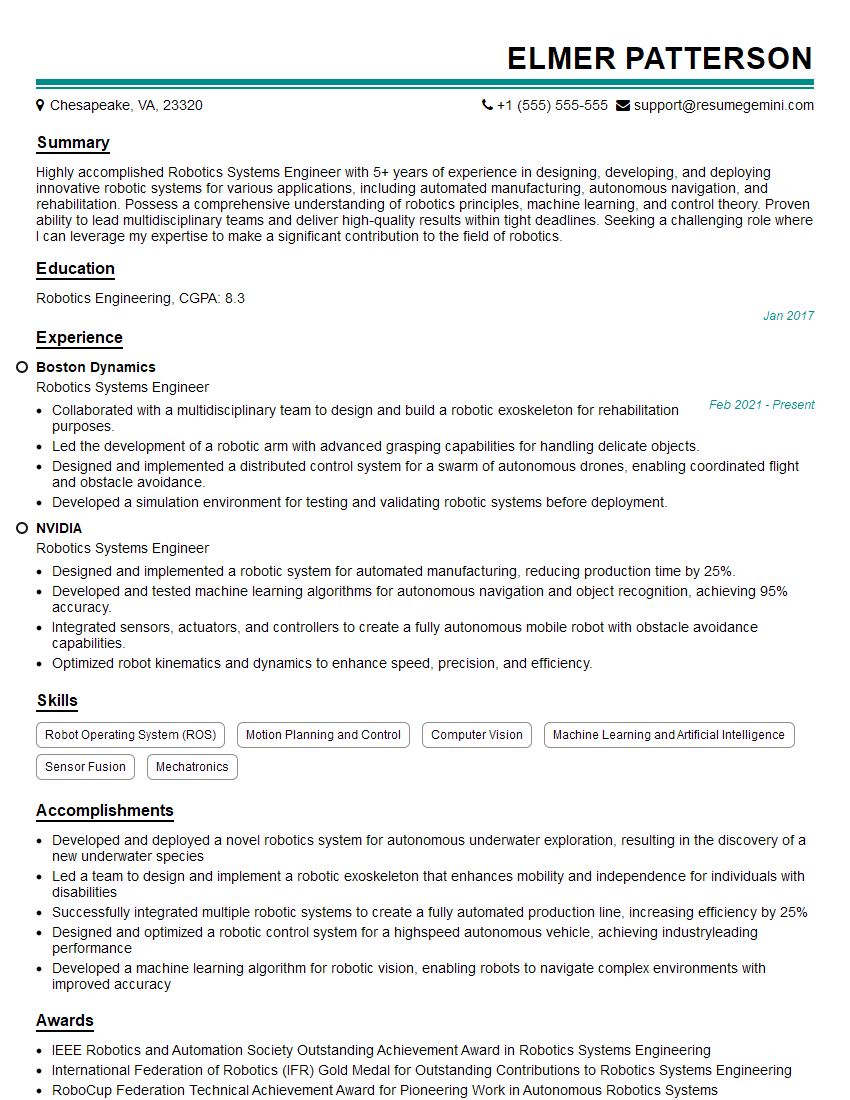

Mastering Control Systems Theory significantly enhances your career prospects in various engineering fields, opening doors to challenging and rewarding roles. To maximize your job search success, it’s essential to craft a compelling and ATS-friendly resume that highlights your skills and experience effectively. ResumeGemini is a trusted resource that can help you build a professional and impactful resume, tailored to showcase your expertise in Control Systems Theory. Examples of resumes specifically designed for this field are available to guide you.
