The right preparation can turn an interview into an opportunity to showcase your expertise. This guide to data analysis and interpretation interview questions provides key insights and tips to help you ace your responses and stand out as a top candidate.

Questions Asked in a Data Analysis and Interpretation Interview

Q 1. Explain the difference between correlation and causation.

Correlation and causation are two distinct concepts in statistics. Correlation simply means that two variables are related; when one changes, the other tends to change as well. Causation, on the other hand, implies that one variable directly influences or causes a change in another. A crucial distinction is that correlation does not equal causation.

Example: Ice cream sales and crime rates might be positively correlated – both tend to increase during the summer. However, this doesn’t mean that buying ice cream causes crime, or vice versa. The underlying factor, the hot weather, is causing both to increase independently.

In my work, understanding this difference is vital. I frequently use scatter plots and correlation coefficients to identify relationships between variables, but I always carefully consider potential confounding factors and conduct further analysis (like regression or experimental design) to determine whether a causal link actually exists before drawing conclusions.
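A minimal sketch of the ice cream example, using simulated data and only the Python standard library. The numbers, the variable names, and the choice of a partial correlation to control for temperature are all assumptions made for illustration.

```python
import random
import statistics

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    return cov / (sx * sy)

def residuals(ys, xs):
    """Residuals of ys after a simple least-squares fit on xs."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return [y - (my + slope * (x - mx)) for x, y in zip(xs, ys)]

rng = random.Random(0)  # fixed seed so the run is repeatable

# Hypothetical data: temperature drives both series; neither drives the other.
temperature = [rng.uniform(0, 35) for _ in range(500)]
ice_cream = [2.0 * t + rng.gauss(0, 5) for t in temperature]
crime = [1.5 * t + rng.gauss(0, 5) for t in temperature]

# Strong raw correlation between the two outcomes.
raw_r = pearson(ice_cream, crime)

# Partial correlation: correlate what is left after removing temperature.
partial_r = pearson(residuals(ice_cream, temperature),
                    residuals(crime, temperature))
```

In this simulation the raw correlation is high, while the partial correlation is close to zero once temperature is accounted for. That is the pattern a confounder produces.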

Q 2. Describe your experience with different data visualization techniques.

I have extensive experience using various data visualization techniques to effectively communicate insights from data. My go-to choices depend heavily on the data type and the message I need to convey. For instance:

- Bar charts are excellent for comparing values across categories, while histograms show the distribution of a single numerical variable.

- Line charts are ideal for showing trends over time.

- Scatter plots are perfect for visualizing the relationship between two continuous variables, helping identify correlations.

- Box plots provide a clear summary of the distribution of a variable, showing median, quartiles, and outliers.

- Heatmaps are powerful for displaying relationships within large matrices, such as correlation matrices or geographical data.

- Interactive dashboards using tools like Tableau or Power BI allow for exploration and dynamic filtering of data.

For example, in a recent project analyzing customer churn, I used a combination of a bar chart to show the churn rate by customer segment and a line chart to visualize churn trends over the past year. This gave a holistic view of the problem, guiding subsequent analyses.

Q 3. How do you handle missing data in a dataset?

Missing data is a common challenge in data analysis. The best approach depends on the nature of the data, the extent of the missingness, and the potential impact on the analysis.

My strategies include:

- Deletion: Listwise deletion (removing entire rows with missing values) is simple but can lead to significant bias if data is not missing completely at random (MCAR).

- Imputation: Replacing missing values with estimated values. Common techniques include mean/median imputation (simple but can distort variance), k-Nearest Neighbors (KNN) imputation (considers similar data points), and multiple imputation (generates multiple plausible imputed datasets). I often prefer multiple imputation as it accounts for the uncertainty introduced by imputation.

- Model-based approaches: Incorporating missing data mechanisms into the analysis model itself. For example, using Maximum Likelihood Estimation (MLE) for models that can handle missing data directly.

The choice depends on the context. For instance, in a small dataset with non-random missingness, imputation might be preferred; in a large dataset with MCAR missingness, listwise deletion may be acceptable. In every case, it is crucial to assess the impact of the chosen method on the final results.
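The two simplest strategies above, listwise deletion and median imputation, can be sketched as follows. The records and column names are hypothetical, and the standard library is used only to keep the example dependency-free; in practice a library such as Pandas would usually do this work.

```python
import statistics

# Hypothetical records; None marks a missing value.
rows = [
    {"age": 34, "income": 52000},
    {"age": None, "income": 61000},
    {"age": 45, "income": None},
    {"age": 29, "income": 48000},
    {"age": 51, "income": 250000},
]

def listwise_delete(records):
    """Keep only records with no missing fields."""
    return [r for r in records if all(v is not None for v in r.values())]

def impute_median(records, column):
    """Return copies of the records with missing `column` values
    replaced by the median of the observed values."""
    observed = [r[column] for r in records if r[column] is not None]
    fill = statistics.median(observed)
    return [{**r, column: fill if r[column] is None else r[column]}
            for r in records]

complete_cases = listwise_delete(rows)          # drops two of five rows
imputed = impute_median(impute_median(rows, "age"), "income")
```

Deletion discards 40% of this tiny dataset, while imputation keeps every row but fills in values that were never observed. Comparing downstream results under both is one way to assess the impact of the choice.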

Q 4. What are some common data cleaning techniques you use?

Data cleaning is a critical step. It ensures data accuracy and reliability for analysis. My common techniques include:

- Handling missing values: As described in the previous answer.

- Identifying and correcting outliers: Outliers can significantly skew results. I investigate them to determine if they’re errors (correct or remove) or legitimate extreme values (retain and potentially model separately).

- Data transformation: This includes standardizing or normalizing data to improve model performance or comparability. Techniques like log transformations can handle skewed data.

- Consistency checks: I ensure data consistency across different columns or sources, addressing inconsistencies in formats (e.g., date formats), units, and naming conventions.

- Duplicate removal: Identifying and removing duplicate entries to avoid bias and inflated sample sizes.

For example, in a marketing campaign analysis, I once discovered inconsistent customer IDs across different databases, which I corrected using fuzzy matching techniques before further analysis.
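A fuzzy-matching step like the one mentioned above could look roughly like this, using `difflib` from the Python standard library. The ID format, the normalization rules, and the 0.8 similarity cutoff are assumptions for illustration, not details of the original project.

```python
import difflib

# Hypothetical canonical IDs from the primary database.
canonical_ids = ["CUST-00123", "CUST-00456", "CUST-00789"]

def normalize(raw_id):
    """Uppercase, trim, and unify separators before comparing."""
    return raw_id.strip().upper().replace("_", "-").replace(" ", "-")

def match_id(raw_id, candidates, cutoff=0.8):
    """Return the closest canonical ID, or None if nothing is similar enough."""
    hits = difflib.get_close_matches(normalize(raw_id), candidates,
                                     n=1, cutoff=cutoff)
    return hits[0] if hits else None

messy_ids = [" cust_00123", "CUST-0456", "VENDOR-9"]
resolved = {raw: match_id(raw, canonical_ids) for raw in messy_ids}
```

IDs that return `None` are better routed to manual review than forced onto the nearest match, since a wrong merge is harder to detect later than an unmatched record.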

Q 5. Explain your experience with different regression models.

My experience encompasses various regression models, each suitable for different data types and objectives:

- Linear Regression: Predicts a continuous dependent variable based on one or more independent variables. I use it frequently for tasks like predicting sales based on advertising spend.

- Logistic Regression: Predicts a binary or categorical dependent variable. A common application is predicting customer churn (will a customer leave?).

- Polynomial Regression: Models non-linear relationships between variables by adding polynomial terms.

- Ridge and Lasso Regression: Regularization techniques to prevent overfitting, particularly useful with high-dimensional datasets.

Model selection depends on the research question, data characteristics (linearity, normality), and the type of dependent variable. I always evaluate model performance using metrics like R-squared, adjusted R-squared, AIC, BIC, and residual analysis to ensure model accuracy and avoid overfitting.
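For the simplest case, a one-variable linear regression with R-squared and residuals, the calculation can be sketched by hand. The advertising and sales figures are made up; a real analysis would normally rely on statsmodels or scikit-learn.

```python
import statistics

def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return my - slope * mx, slope

def r_squared(xs, ys, intercept, slope):
    """Share of the variance in ys explained by the fitted line."""
    my = statistics.fmean(ys)
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - my) ** 2 for y in ys)
    return 1 - ss_res / ss_tot

# Hypothetical data: advertising spend and sales, both in thousands.
ad_spend = [10, 20, 30, 40, 50]
sales = [25, 44, 68, 81, 107]

intercept, slope = fit_line(ad_spend, sales)
fit_quality = r_squared(ad_spend, sales, intercept, slope)

# Residuals are what a residual plot would show; they should look patternless.
residuals = [y - (intercept + slope * x) for x, y in zip(ad_spend, sales)]
```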

Q 6. How do you choose the appropriate statistical test for a given hypothesis?

Choosing the appropriate statistical test depends on several factors: the type of data (continuous, categorical, ordinal), the number of groups being compared, and the research hypothesis (e.g., comparing means, testing for independence, testing for correlation).

I typically follow these steps:

- Define the research question and hypothesis: Clearly state what you want to investigate.

- Identify the type of data: Determine whether your data is continuous, categorical, or ordinal.

- Consider the number of groups: Are you comparing two groups or more?

- Select the appropriate test: Based on the above, choose the suitable test. Examples include t-tests (comparing means of two groups), ANOVA (comparing means of three or more groups), chi-square tests (testing for independence between categorical variables), and correlation tests (measuring the association between variables).

- Check assumptions: Many statistical tests have assumptions (e.g., normality, independence). Verify these before proceeding. If assumptions are violated, consider alternative non-parametric tests.

For example, if I want to compare the average sales between two different marketing campaigns (continuous data, two groups), I would use a t-test. If I’m examining the relationship between customer gender (categorical) and product preference (categorical), I’d use a chi-square test of independence.
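As an illustration of the chi-square test of independence, here is a sketch for a 2x2 table. The counts are hypothetical, no continuity correction is applied, and the closed-form p-value holds only for one degree of freedom; for larger tables a statistics library would be needed.

```python
import math

def chi_square_2x2(table):
    """Pearson chi-square statistic and p-value for a 2x2 contingency table."""
    (a, b), (c, d) = table
    n = a + b + c + d
    row_totals = (a + b, c + d)
    col_totals = (a + c, b + d)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed_count in enumerate(row):
            # Expected count if rows and columns were independent.
            expected = row_totals[i] * col_totals[j] / n
            statistic += (observed_count - expected) ** 2 / expected
    # With 1 degree of freedom the upper-tail probability has a closed form.
    p_value = math.erfc(math.sqrt(statistic / 2))
    return statistic, p_value

# Hypothetical counts: rows are two customer groups,
# columns are the two products they prefer.
observed = [[30, 20],
            [15, 35]]
statistic, p_value = chi_square_2x2(observed)
```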

Q 7. Describe your experience with A/B testing.

A/B testing is a powerful method for comparing two versions of something (e.g., a website, an ad, an email) to see which performs better. My experience involves designing, implementing, and analyzing A/B tests, following these steps:

- Define the objective: Clearly state the goal of the A/B test (e.g., increase click-through rate, improve conversion rate).

- Develop hypotheses: Formulate testable hypotheses about which version will perform better.

- Design the test: Determine the metrics to be measured (e.g., click-through rate, conversion rate), sample size (using power analysis to ensure sufficient statistical power), and duration of the test.

- Implement the test: Deploy both versions (A and B) to randomly assigned groups of users.

- Analyze results: Use statistical tests (often a two-sample t-test or chi-squared test) to determine if there’s a statistically significant difference between the performance of A and B. Consider effect size in addition to p-value.

- Report findings: Clearly communicate the results, including statistical significance, effect size, and practical implications.

A recent project involved A/B testing different website layouts. By carefully designing the test and analyzing the results, we identified a layout that significantly increased conversion rates.
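The analysis step can be sketched with a two-proportion z-test, a common choice for conversion rates and equivalent to the chi-squared test on a 2x2 table. The visitor and conversion counts are invented, not results from the project described above.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    Returns (absolute lift of B over A, z statistic, p-value).
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return p_b - p_a, z, p_value

# Hypothetical results: 2,000 users per variant.
lift, z, p_value = two_proportion_z_test(conv_a=200, n_a=2000,
                                         conv_b=250, n_b=2000)
```

Reporting `lift` alongside `p_value` covers both effect size and statistical significance, as the steps above recommend.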

Q 8. How do you identify outliers in a dataset?

Identifying outliers, data points significantly different from the rest, is crucial for data quality. Think of it like finding the odd one out in a group. Several methods exist:

Visual Inspection: Box plots and scatter plots are excellent for initial outlier detection. A box plot clearly shows the quartiles and potential outliers beyond the whiskers. Scatter plots reveal outliers that deviate significantly from the overall trend.

Statistical Methods:

- Z-score: This measures how many standard deviations a data point is from the mean: z = (x - μ) / σ, where x is the data point, μ is the mean, and σ is the standard deviation. Data points whose absolute Z-score exceeds a threshold (commonly 3) are often considered outliers.

- Interquartile Range (IQR): This method uses the difference between the 75th percentile (Q3) and the 25th percentile (Q1). Outliers are defined as points below Q1 - 1.5*IQR or above Q3 + 1.5*IQR. This is less sensitive to extreme values than the Z-score.

DBSCAN (Density-Based Spatial Clustering of Applications with Noise): This clustering algorithm identifies outliers as points that don’t belong to any dense cluster. It’s particularly useful for multivariate data, where a point can be unusual in combination without being extreme on any single variable.

Example: In analyzing customer purchase data, a customer spending significantly more or less than the average could be an outlier. Investigating these outliers might reveal valuable insights, such as a new high-value customer or a problem with data entry.
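The Z-score and IQR rules can be sketched in a few lines with the standard library. The purchase amounts are hypothetical, and `statistics.quantiles` is used with its default quartile method; other quartile conventions give slightly different fences.

```python
import statistics

def zscore_outliers(values, threshold=3.0):
    """Values whose absolute z-score exceeds the threshold."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)  # population standard deviation
    return [v for v in values if abs((v - mean) / sd) > threshold]

def iqr_outliers(values, k=1.5):
    """Values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]

# Hypothetical purchase amounts with one extreme value.
purchases = [42, 38, 51, 47, 40, 45, 39, 44, 48, 43, 41, 46, 400]

flagged_by_z = zscore_outliers(purchases)
flagged_by_iqr = iqr_outliers(purchases)
```

Both rules flag the 400 purchase here, but the extreme value itself inflates the mean and standard deviation that the Z-score depends on. In small samples this can hide outliers, which is one reason the IQR rule is often the safer default.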

Q 9. What are some common methods for feature selection?

Feature selection aims to choose the most relevant features from a dataset, improving model performance and reducing complexity. It’s like choosing the right ingredients for a recipe – you don’t need everything!

Filter Methods: These use statistical measures to rank features independently of the chosen model. Examples include:

- Correlation: Selecting features highly correlated with the target variable.

- Chi-squared test: For categorical features and target variables.

- Information gain: Measuring the reduction in entropy after knowing the feature.

Wrapper Methods: These evaluate subsets of features by training a model on them. Examples include:

- Recursive feature elimination (RFE): Recursively removes the least important features based on model coefficients.

- Forward/Backward selection: Iteratively adds or removes features based on model performance.

Embedded Methods: These incorporate feature selection into the model training process itself. Examples include:

- L1 regularization (LASSO): Adds a penalty to the model’s loss function that encourages sparsity (some coefficients become zero).

- Decision tree-based methods: Feature importance is inherently assessed during tree construction.

The best method depends on the dataset and modeling goals. For instance, if you have a large dataset with many features, filter methods can be computationally efficient for initial feature reduction.
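As a sketch of the simplest filter method, the snippet below ranks features by the absolute value of their correlation with the target. The feature names and values are hypothetical, and the function `rank_features` is an illustrative helper, not part of any library.

```python
import statistics

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    return cov / (sx * sy)

def rank_features(features, target, top_k):
    """Return the top_k feature names by |correlation| with the target."""
    scores = {name: abs(pearson(column, target))
              for name, column in features.items()}
    return sorted(scores, key=scores.get, reverse=True)[:top_k]

# Hypothetical customer features and a churn propensity score.
features = {
    "tenure_months": [1, 3, 6, 12, 24, 36],
    "support_tickets": [5, 4, 4, 2, 1, 0],
    "account_id_last_digit": [3, 7, 3, 8, 7, 3],
}
target = [0.9, 0.8, 0.7, 0.4, 0.2, 0.1]

selected = rank_features(features, target, top_k=2)
```

A filter like this scores each feature in isolation, so it cannot detect redundancy between the selected features or interactions among them. Wrapper and embedded methods address that at higher computational cost.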

Q 10. Explain your experience with data mining techniques.

Data mining involves uncovering patterns and insights from large datasets. I’ve extensively used techniques such as:

- Association Rule Mining (Apriori): Discovering relationships between items. For example, in retail, identifying that customers who buy diapers also often buy beer.

- Clustering (K-means, hierarchical): Grouping similar data points together. I used K-means to segment customers based on demographics and purchasing behavior.

- Classification (Decision Trees, Logistic Regression, Support Vector Machines): Predicting categorical outcomes. I applied logistic regression to predict customer churn based on usage patterns.

- Regression (Linear, Polynomial): Predicting continuous outcomes. For instance, I used linear regression to forecast sales based on advertising spending.

In one project, I used association rule mining on supermarket transaction data to identify product affinities, which led to optimized store layouts and targeted promotions.
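The three standard metrics behind an association rule (support, confidence, and lift) can be computed directly for a single rule. This sketch uses made-up baskets and does not implement the Apriori search itself, which is what finds candidate itemsets efficiently.

```python
# Hypothetical shopping baskets.
transactions = [
    {"diapers", "beer", "chips"},
    {"diapers", "beer"},
    {"diapers", "milk"},
    {"beer", "chips"},
    {"milk", "bread"},
    {"diapers", "beer", "milk"},
]

def support(itemset, baskets):
    """Fraction of baskets that contain every item in the itemset."""
    return sum(itemset <= basket for basket in baskets) / len(baskets)

def rule_metrics(antecedent, consequent, baskets):
    """Support, confidence, and lift for the rule antecedent -> consequent."""
    both = support(antecedent | consequent, baskets)
    confidence = both / support(antecedent, baskets)
    lift = confidence / support(consequent, baskets)
    return {"support": both, "confidence": confidence, "lift": lift}

metrics = rule_metrics({"diapers"}, {"beer"}, transactions)
```

A lift above 1 means the two items appear together more often than they would if purchases were independent.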

Q 11. Describe your experience with different database systems.

My experience spans several database systems, including:

- Relational Databases (SQL Server, MySQL, PostgreSQL): I’m proficient in querying and managing data using SQL. I’ve worked with large transactional databases and data warehouses.

- NoSQL Databases (MongoDB, Cassandra): I have experience with NoSQL databases for handling semi-structured and unstructured data, particularly in situations requiring high scalability and flexibility.

- Cloud-based Databases (AWS RDS, Google Cloud SQL): I’m familiar with managing and deploying databases on cloud platforms.

Choosing the right database system is crucial. For example, a relational database is ideal for structured data requiring ACID properties (Atomicity, Consistency, Isolation, Durability), while a NoSQL database might be better suited for handling large volumes of unstructured data with high write throughput.

Q 12. How do you handle large datasets?

Handling large datasets requires strategic approaches. Here’s my typical workflow:

- Sampling: Using a representative subset of the data for initial analysis and model development can significantly reduce processing time. Care must be taken to ensure the sample accurately reflects the population.

- Data Partitioning: Dividing the data into smaller, manageable chunks for parallel processing. This is commonly used with big data frameworks like Hadoop and Spark.

- Distributed Computing: Utilizing frameworks like Hadoop or Spark to distribute data processing across multiple machines. This is essential for datasets that exceed the capacity of a single machine.

- Data Compression: Reducing data storage size and improving processing speed through compression techniques.

- Feature Engineering and Dimensionality Reduction: Extracting the most relevant information and reducing the number of features to simplify computations. Techniques like PCA (Principal Component Analysis) are helpful.

In one project, I used Spark to process a terabyte-scale dataset for fraud detection, dramatically improving processing efficiency.
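The partition-then-combine idea behind these frameworks can be shown on a single machine. This is only a sketch of the pattern in plain Python (hypothetical account records, sequential rather than parallel), not Spark code.

```python
from collections import Counter
from itertools import islice

def chunks(iterable, size):
    """Yield successive lists of at most `size` items, never loading
    the whole input into memory."""
    iterator = iter(iterable)
    while batch := list(islice(iterator, size)):
        yield batch

def partial_totals(batch):
    """Aggregate one partition: total amount per account."""
    totals = Counter()
    for account, amount in batch:
        totals[account] += amount
    return totals

def total_by_account(records, chunk_size=1000):
    """Combine per-partition aggregates into one overall result."""
    combined = Counter()
    for batch in chunks(records, chunk_size):
        combined.update(partial_totals(batch))  # merge partial results
    return combined

# Hypothetical stream of (account, amount) pairs, generated lazily.
records = ((f"acct-{i % 3}", i) for i in range(10))
totals = total_by_account(records, chunk_size=4)
```

Because each partition is aggregated independently and the partial results merge by simple addition, the same logic could be distributed across workers. That property is what frameworks like Spark exploit.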

Q 13. Explain your experience with SQL and its applications in data analysis.

SQL is fundamental to my data analysis workflow. I leverage it for:

- Data Extraction, Transformation, and Loading (ETL): Extracting data from various sources, transforming it into a usable format, and loading it into a data warehouse or data mart.

- Data Cleaning and Validation: Identifying and correcting inconsistencies and errors in the data using SQL queries.

- Data Aggregation and Summarization: Using aggregate functions (SUM, AVG, COUNT, etc.) to generate summary statistics.

- Data Exploration and Analysis: Using JOIN, WHERE, and GROUP BY clauses to answer specific business questions.

Example: To identify top-performing products, I might use a query like this: SELECT product_name, SUM(sales) AS total_sales FROM sales_data GROUP BY product_name ORDER BY total_sales DESC LIMIT 10;
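To try the query above without a database server, it can be run against an in-memory SQLite database from Python. The table contents are invented for illustration. `LIMIT` is the SQLite, MySQL, and PostgreSQL syntax; SQL Server uses `TOP` instead.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales_data (product_name TEXT, sales REAL)")

# Hypothetical sales rows.
conn.executemany(
    "INSERT INTO sales_data VALUES (?, ?)",
    [("widget", 120.0), ("gadget", 80.0), ("widget", 60.0),
     ("gizmo", 200.0), ("gadget", 30.0)],
)

# Same query as in the text: total sales per product, highest first.
top_products = conn.execute(
    """
    SELECT product_name, SUM(sales) AS total_sales
    FROM sales_data
    GROUP BY product_name
    ORDER BY total_sales DESC
    LIMIT 10
    """
).fetchall()
conn.close()
```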

Q 14. What are your preferred tools for data analysis?

My preferred tools depend on the task, but here are some key ones:

- Programming Languages: Python (with libraries like Pandas, NumPy, Scikit-learn) and R.

- Data Visualization Tools: Tableau, Power BI, and Matplotlib/Seaborn (Python).

- Big Data Frameworks: Apache Spark and Hadoop.

- Database Management Systems: SQL Server, MySQL, PostgreSQL, and MongoDB.

I choose tools based on their strengths. For example, Python with Pandas and Scikit-learn provides excellent support for data manipulation, statistical modeling, and machine learning. Tableau excels in creating interactive data visualizations for presentations and reports. Spark is invaluable when dealing with massive datasets that cannot be processed on a single machine.

Q 15. Describe your experience with data storytelling.

Data storytelling is the art of translating complex data insights into a compelling narrative that resonates with your audience. It’s about more than just presenting numbers; it’s about crafting a story that reveals the ‘why’ behind the data, making it memorable and actionable. I approach data storytelling by first identifying the key insights from the analysis. Then, I carefully select the appropriate visualization methods—charts, graphs, maps—to represent the data clearly and engagingly. Finally, I weave these visuals together with a narrative that explains the context, the implications, and the next steps. For example, in a project analyzing customer churn, instead of simply presenting churn rates, I would build a story around the root causes, perhaps showing a correlation between customer dissatisfaction and specific features using interactive dashboards and compelling visuals. This narrative approach helps stakeholders understand the problem’s depth and guides decision-making processes.

Q 16. How do you communicate complex data findings to non-technical audiences?

Communicating complex data to non-technical audiences requires a shift from technical jargon to plain language and relatable analogies. I keep statistical terms and formulas to a minimum, focusing instead on visual representations and concise explanations. For example, instead of saying ‘the p-value is less than 0.05,’ I might say, ‘if the new version had no real effect, we would rarely see a difference this large.’ I also use storytelling techniques, focusing on the narrative and the implications of the findings, rather than the technical details of the analysis. Visual aids are crucial: simple charts, graphs, and infographics are much more effective than tables of numbers. Finally, I prioritize active listening and encourage questions to ensure everyone understands and feels comfortable with the presented information. A recent project involved explaining a complex A/B testing result to a marketing team; I used a simple bar chart to show the increase in conversion rates and related it to their overall marketing goals, thus making the data easily understandable and actionable for them.

Q 17. How do you measure the success of a data analysis project?

Measuring the success of a data analysis project goes beyond simply completing the analysis. It involves evaluating whether the project met its objectives and delivered tangible value. I use a multi-faceted approach, considering factors such as:

- Achieving project goals: Did the analysis answer the initial questions or solve the problem it was designed to address? This often involves comparing the results against pre-defined KPIs (Key Performance Indicators).

- Impact on decision-making: Did the insights from the analysis lead to informed decisions and tangible actions? For instance, did the analysis lead to a change in marketing strategy that resulted in increased sales or improved customer satisfaction?

- Return on Investment (ROI): This is especially important for business-oriented projects. Did the project generate a positive ROI by reducing costs, increasing revenue, or improving efficiency?

- Stakeholder satisfaction: Were the stakeholders satisfied with the quality of the analysis, the clarity of the communication, and the overall value delivered? This often involves gathering feedback through surveys or meetings.

For example, in a project focused on optimizing a website’s user experience, success would be measured by improvements in conversion rates, bounce rates, and time spent on site, directly linked to pre-determined business goals.

Q 18. Describe a time you had to deal with conflicting data sources.

Dealing with conflicting data sources is a common challenge. My approach involves a systematic investigation to identify the root cause of the discrepancies. First, I meticulously examine each data source for potential errors, inconsistencies, or biases. This might involve checking data definitions, data collection methods, and data cleaning procedures. Then, I evaluate the credibility and reliability of each source. This may involve considering the data source’s reputation, methodology, and potential conflicts of interest. Once the inconsistencies are identified, I might use techniques like data reconciliation or triangulation to resolve them. Data reconciliation involves comparing the data from different sources and identifying and resolving discrepancies. Data triangulation involves using multiple independent data sources to verify the accuracy of the information. For example, I once encountered conflicting sales figures from two different databases. After thorough investigation, I discovered that one database lacked updates from a specific sales channel. By carefully integrating the data from all sources, I was able to create a reconciled dataset that accurately reflected the true sales figures.

Q 19. Explain your experience with statistical modeling.

I have extensive experience with various statistical modeling techniques, including regression analysis (linear, logistic, polynomial), time series analysis (ARIMA, Prophet), clustering (K-means, hierarchical), and classification (decision trees, support vector machines, naive Bayes). My experience spans both the theoretical understanding of these models and their practical application in diverse contexts. I’m proficient in using statistical software packages such as R and Python (with libraries like scikit-learn, statsmodels) to build, evaluate, and interpret these models. For example, in a recent project, I used time series analysis with ARIMA modeling to forecast future demand for a product, which helped the company optimize its inventory management. In another project, I employed logistic regression to predict customer churn, allowing the company to proactively target at-risk customers. My focus is always on selecting the appropriate model based on the specific problem, data characteristics, and business objectives, and ensuring the model’s assumptions are met before drawing conclusions. I also emphasize model validation and interpretation to avoid overfitting and ensure the insights are relevant and meaningful.

Q 20. What are some ethical considerations in data analysis?

Ethical considerations in data analysis are paramount. My work is guided by principles of fairness, transparency, accountability, and respect for privacy. Key considerations include:

- Data Privacy: Adhering to data privacy regulations (like GDPR, CCPA) and ensuring data is handled responsibly and securely. This includes anonymizing data where necessary and obtaining informed consent before collecting and using personal information.

- Bias and Fairness: Being aware of potential biases in data and algorithms, and taking steps to mitigate them. This involves carefully examining the data collection process, selecting appropriate modeling techniques, and evaluating the fairness of the model’s outputs.

- Transparency and Explainability: Ensuring that the analysis is transparent and the results are easily understood by stakeholders. This involves clearly documenting the methodology, assumptions, and limitations of the analysis.

- Data Security: Protecting data from unauthorized access, use, disclosure, disruption, modification, or destruction.

- Misuse of Results: Avoiding the misuse of data analysis for manipulative or unethical purposes.

In all my work, I strive to uphold the highest ethical standards, ensuring that data analysis is used responsibly and contributes to positive outcomes.

Q 21. How do you ensure data quality and accuracy?

Ensuring data quality and accuracy is crucial for reliable analysis. My approach is multifaceted:

- Data Cleaning: This involves identifying and correcting errors, inconsistencies, and missing values in the dataset. Techniques include handling outliers, imputation of missing values, and data transformation.

- Data Validation: I employ various validation techniques to check the data’s integrity and consistency. This may involve comparing data against known values, checking for duplicates, and verifying data types.

- Source Verification: Establishing the credibility and reliability of data sources is crucial. This includes reviewing data collection methodologies, assessing data quality documentation, and consulting with data providers.

- Data Profiling: Conducting data profiling to understand the data’s characteristics, identify potential issues, and inform data cleaning and transformation strategies.

- Version Control: Utilizing version control systems (e.g., Git) to track changes to the data and analysis code, enabling reproducibility and facilitating collaboration.

For example, before conducting any analysis, I thoroughly examine the data for outliers or inconsistencies, using visualizations and statistical summaries to identify potential problems. I then use appropriate techniques to handle these issues, documenting all cleaning and transformation steps for transparency and reproducibility.

Q 22. Describe a challenging data analysis project you worked on and how you overcame the challenges.

One of the most challenging projects I undertook involved analyzing customer churn for a telecommunications company. The dataset was massive, containing millions of records with numerous features, many of which were highly correlated or contained missing values. Furthermore, the definition of ‘churn’ itself was nuanced, encompassing various scenarios like service cancellations, downgrades, and prolonged periods of inactivity.

To overcome these challenges, I employed a multi-faceted approach. First, I performed extensive exploratory data analysis (EDA) using visualization techniques like histograms, scatter plots, and correlation matrices to identify patterns and relationships within the data, as well as to pinpoint areas with missing data. I addressed missing values using imputation techniques such as K-Nearest Neighbors, selecting the method based on the characteristics of each specific feature.

Next, I reduced the dimensionality of the dataset and mitigated the impact of highly correlated variables, using recursive feature elimination for feature selection and principal component analysis (PCA) to combine correlated features into a smaller set of components. This improved model performance and reduced model complexity. Finally, I built several predictive models, including logistic regression, random forests, and gradient boosting machines, evaluating their performance using metrics like precision, recall, F1-score, and AUC. The best-performing model, a gradient boosting machine, provided valuable insights into the key drivers of churn, allowing the company to implement targeted retention strategies.

The project highlighted the importance of robust data preprocessing, feature engineering, and model selection in tackling complex analytical challenges. The success was measured not only by the accuracy of the predictive model but also by the actionable insights it generated, directly impacting the company’s retention efforts and bottom line.

Q 23. What is your experience with time series analysis?

Time series analysis is a specialized field focusing on data points indexed in time order. My experience encompasses various techniques, from basic descriptive statistics like calculating moving averages and trend lines to sophisticated modeling approaches such as ARIMA (Autoregressive Integrated Moving Average) and Prophet.

I’ve used these methods to forecast sales, predict stock prices, analyze website traffic, and monitor equipment performance. For example, in a project analyzing website traffic, I used ARIMA models to forecast daily website visits, taking into account seasonality and trend. This allowed the client to optimize their marketing campaigns and resource allocation based on anticipated traffic patterns.

My proficiency also includes handling issues common in time series data, like seasonality, trend, and autocorrelation, using techniques like differencing, decomposition, and appropriate model selection criteria (like AIC and BIC). I am familiar with both classical and machine learning-based time series methods, allowing me to select the most appropriate approach depending on the data’s characteristics and the project’s objectives.
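Two of the basic tools mentioned here, moving averages and differencing, can be sketched without any libraries. The daily visit series is synthetic, built from an assumed linear trend plus a repeating weekly pattern, so the effect of each operation is easy to see.

```python
def moving_average(series, window):
    """Trailing moving average; output starts once a full window is available."""
    return [sum(series[i - window + 1 : i + 1]) / window
            for i in range(window - 1, len(series))]

def difference(series, lag=1):
    """Subtract the value `lag` steps earlier from each point."""
    return [series[i] - series[i - lag] for i in range(lag, len(series))]

# Synthetic daily visits: upward trend of 2 per day plus a weekly pattern.
weekly_pattern = [0, 5, 8, 6, 4, -10, -13]
visits = [100 + 2 * day + weekly_pattern[day % 7] for day in range(28)]

# A 7-day window averages out the weekly pattern, leaving the trend.
smoothed = moving_average(visits, window=7)

# Lag-7 differencing removes the weekly pattern; what remains is the
# week-over-week change (constant here because the trend is linear).
week_over_week = difference(visits, lag=7)
```

Models such as ARIMA apply the same differencing internally (the "I" in the name) to make a series stationary before fitting.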

Q 24. Explain your understanding of hypothesis testing.

Hypothesis testing is a statistical method used to make inferences about a population based on a sample of data. It involves formulating a null hypothesis (a statement of no effect or no difference) and an alternative hypothesis (a statement contradicting the null hypothesis). We then collect data and use statistical tests to determine whether there is enough evidence to reject the null hypothesis in favor of the alternative hypothesis.

The process typically involves:

- Defining the null and alternative hypotheses.

- Choosing an appropriate statistical test (e.g., t-test, chi-squared test, ANOVA) based on the data type and research question.

- Setting a significance level (alpha), typically 0.05, representing the probability of rejecting the null hypothesis when it is actually true (Type I error).

- Calculating the test statistic and p-value.

- Interpreting the results: If the p-value is less than alpha, we reject the null hypothesis; otherwise, we fail to reject the null hypothesis.

For instance, we might test the hypothesis that a new drug reduces blood pressure more effectively than a placebo. The null hypothesis would be that there is no difference in blood pressure reduction between the drug and the placebo. We’d collect blood pressure data from two groups (drug and placebo) and use a t-test to compare the means. A low p-value would suggest sufficient evidence to reject the null hypothesis and conclude the drug is more effective.
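The logic of the p-value can be made concrete with a permutation test. Note that this swaps in a different technique from the t-test named above: it needs only the standard library and estimates directly how often a difference at least as large as the observed one arises when group labels are shuffled at random. The blood pressure reductions are invented.

```python
import random
import statistics

def permutation_test(group_a, group_b, n_permutations=10_000, seed=0):
    """Two-sided permutation test for a difference in means.

    Returns an estimated p-value: the share of random relabelings whose
    mean difference is at least as large as the observed one.
    """
    rng = random.Random(seed)
    observed = abs(statistics.fmean(group_a) - statistics.fmean(group_b))
    pooled = list(group_a) + list(group_b)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        perm_a, perm_b = pooled[:len(group_a)], pooled[len(group_a):]
        if abs(statistics.fmean(perm_a) - statistics.fmean(perm_b)) >= observed:
            extreme += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (extreme + 1) / (n_permutations + 1)

# Hypothetical reductions in blood pressure (mmHg).
drug = [12, 15, 11, 14, 13, 16, 12, 15]
placebo = [8, 9, 7, 10, 6, 9, 8, 7]

p_value = permutation_test(drug, placebo)
reject_null = p_value < 0.05  # compare against the chosen alpha
```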

Q 25. How do you determine the appropriate sample size for a study?

Determining the appropriate sample size is crucial for ensuring the reliability and validity of research findings. The required sample size depends on several factors:

- The desired level of precision (margin of error): A smaller margin of error requires a larger sample size.

- The desired level of confidence: A higher confidence level (e.g., 99% vs. 95%) requires a larger sample size.

- The variability in the population: A higher variability requires a larger sample size.

- The effect size: The magnitude of the difference or relationship you’re trying to detect. Larger effect sizes require smaller sample sizes.

There are various methods for calculating sample size, often involving statistical power analysis. Software packages and online calculators can help determine the appropriate sample size based on these factors. For example, G*Power is a widely used free software for power analysis. A sample that is too small leaves the study underpowered, making it likely to miss a real effect (Type II error); the Type I error rate, by contrast, is set by the significance level rather than by the sample size.
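Two textbook formulas show how the factors listed above interact. Both are normal-approximation sketches with illustrative function names; dedicated power-analysis software uses more exact methods and may return slightly larger numbers.

```python
import math
from statistics import NormalDist

def sample_size_for_proportion(margin_of_error, confidence=0.95, p=0.5):
    """n needed to estimate a proportion within +/- margin_of_error.

    p=0.5 is the most conservative (highest-variance) assumption.
    """
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return math.ceil(z ** 2 * p * (1 - p) / margin_of_error ** 2)

def sample_size_two_means(effect_size, alpha=0.05, power=0.80):
    """Per-group n to detect a standardized mean difference (Cohen's d)
    with a two-sided test, using the normal approximation."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_beta) ** 2 / effect_size ** 2)

n_survey_95 = sample_size_for_proportion(0.05, confidence=0.95)
n_survey_99 = sample_size_for_proportion(0.05, confidence=0.99)
n_medium_effect = sample_size_two_means(0.5)
n_small_effect = sample_size_two_means(0.2)
```

The results follow the rules above: higher confidence and smaller effects both push the required sample size up.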

Q 26. What is your experience with data warehousing and data lakes?

I have extensive experience with both data warehousing and data lakes. Data warehouses are centralized repositories designed for analytical processing. They typically hold structured data and enforce a schema on write, and they are optimized for querying and reporting on historical data. I’ve worked with various data warehousing tools like Snowflake and Amazon Redshift, designing and implementing ETL (Extract, Transform, Load) processes to populate these warehouses.

Data lakes, on the other hand, are more flexible, schema-on-read repositories that store raw data in its native format. This allows for greater flexibility and agility in analysis but requires more careful consideration of data governance and data quality. I’ve used cloud-based data lake solutions like AWS S3 and Azure Data Lake Storage, leveraging tools like Spark and Hive for processing and querying the data. In practice, a hybrid approach often proves most effective, using a data lake for raw data storage and a data warehouse for curated and structured data analysis.

The choice between a data warehouse and a data lake (or a combination) depends heavily on the specific analytical needs, data volume, and the organization’s technical capabilities. I can assess these factors and recommend the most suitable architecture for any given project.
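The schema-on-read versus schema-on-write distinction can be shown with a small, self-contained sketch. Here a list of raw JSON strings stands in for a data lake and an in-memory SQLite table stands in for a warehouse; the event fields, table name, and function names are hypothetical, and a real pipeline would use the platforms named above rather than SQLite.

```python
import json
import sqlite3

# Hypothetical raw events, stored untouched as they might land in a data lake.
RAW_EVENTS = [
    '{"order_id": 1, "amount": "19.99", "country": "DE"}',
    '{"order_id": 2, "amount": "5.00"}',
    '{"order_id": 3, "amount": "not-a-number", "country": "US"}',
]


def read_with_schema(lines):
    """Schema-on-read: types are applied only when the raw data is read."""
    rows, rejected = [], []
    for line in lines:
        record = json.loads(line)
        try:
            rows.append(
                (int(record["order_id"]), float(record["amount"]), record.get("country"))
            )
        except (KeyError, ValueError):
            rejected.append(line)  # keep bad records aside for data-quality review
    return rows, rejected


def load_into_warehouse(rows):
    """Schema-on-write: the table definition is enforced at insert time."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders ("
        "order_id INTEGER PRIMARY KEY, "
        "amount REAL NOT NULL CHECK (amount >= 0), "
        "country TEXT)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()
    return conn


rows, rejected = read_with_schema(RAW_EVENTS)
conn = load_into_warehouse(rows)
total = conn.execute("SELECT ROUND(SUM(amount), 2) FROM orders").fetchone()[0]
print(f"loaded {len(rows)} rows, rejected {len(rejected)}, total amount {total}")
```

The raw layer accepts anything, so data-quality checks happen at read time, while the curated table refuses rows that violate its constraints. This is the trade-off behind the hybrid approach described above.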

Q 27. Explain your understanding of different data types and structures.

Understanding data types and structures is fundamental to effective data analysis. Data types describe the kind of values a variable can hold, while data structures organize and relate those values. Common data types include:

- Numerical: Continuous (e.g., height, weight) and discrete (e.g., count, number of items).

- Categorical: Nominal (e.g., color, gender) and ordinal (e.g., education level, customer satisfaction rating).

- Textual: Strings of characters (e.g., names, descriptions).

- Boolean: True/False values.

- Date/Time: Values representing points in time.

Common data structures include:

- Relational databases: Data organized into tables with rows and columns, linked through relationships.

- NoSQL databases: More flexible databases suited for unstructured or semi-structured data.

- Arrays: Ordered collections of elements of the same type.

- Dictionaries/Hash tables: Key-value pairs for efficient data retrieval.

Understanding these data types and structures allows for appropriate data cleaning, transformation, and analysis. For example, using inappropriate statistical methods on categorical data can lead to misleading results. My experience spans working with various data types and structures, allowing me to choose the right tools and techniques for every situation.
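To make the point about matching methods to data types concrete, here is a minimal sketch that picks a summary statistic suited to each type: the mean for continuous numerical data, the median category for ordinal data, and the mode for nominal data. The sample values, the `ORDER` list, and the helper `ordinal_median` are hypothetical.

```python
from statistics import mean, median_low, mode

# Hypothetical sample values for three different data types.
heights_cm = [162.5, 170.0, 181.2, 175.3]                    # numerical (continuous)
satisfaction = ["low", "high", "medium", "high", "medium"]   # categorical (ordinal)
colors = ["red", "blue", "red", "green"]                     # categorical (nominal)

# Ordinal categories have a meaningful order but no meaningful distances.
ORDER = ["low", "medium", "high"]


def ordinal_median(values, order):
    """Median category of ordinal data, computed on ranks rather than on labels."""
    ranks = [order.index(v) for v in values]
    return order[median_low(ranks)]


print(mean(heights_cm))                     # the mean is meaningful for continuous data
print(ordinal_median(satisfaction, ORDER))  # the median respects the ordering
print(mode(colors))                         # only the mode makes sense for nominal data
```

Averaging numeric codes assigned to the colors would run without error but would produce a number with no interpretation, which is the kind of misleading result mentioned above.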

Q 28. Describe your experience with predictive modeling and forecasting.

Predictive modeling and forecasting are crucial aspects of my data analysis expertise. Predictive modeling involves building statistical models to predict future outcomes based on historical data. I have experience with a wide range of techniques, including:

- Regression models: Linear regression, logistic regression, polynomial regression, etc., used for predicting continuous or binary outcomes.

- Classification models: Support vector machines (SVMs), decision trees, random forests, naive Bayes, etc., used for predicting categorical outcomes.

- Clustering models: K-means, hierarchical clustering, etc., which are unsupervised methods used for grouping similar data points, often to create segments that feed into a predictive model.

Forecasting, a subset of predictive modeling, focuses specifically on predicting future values of a time series. I’ve used various time series forecasting techniques, such as ARIMA, exponential smoothing, and Prophet.

In a recent project, I developed a predictive model to forecast customer demand for a retail company. This involved cleaning and transforming the historical sales data, selecting relevant features (e.g., seasonality, promotions, economic indicators), training a model (in this case, a combination of ARIMA and regression models), evaluating its performance, and deploying it for real-time forecasting. The model helped the company optimize inventory management and improve resource allocation, leading to significant cost savings.
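As a simplified illustration of the train, evaluate, and forecast steps, the sketch below implements simple exponential smoothing, one of the forecasting techniques named above, in plain Python. It is not the ARIMA and regression combination from the project; the monthly demand figures, the smoothing factor, and the function names are assumptions made for the example.

```python
def simple_exponential_smoothing(series, alpha):
    """Return one-step-ahead forecasts and the forecast for the next period."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = series[0]  # initialize the level with the first observation
    fitted = []        # fitted[i] is the forecast of series[i + 1]
    for actual in series[1:]:
        fitted.append(level)  # forecast made before seeing the actual value
        level = alpha * actual + (1 - alpha) * level
    return fitted, level


def mean_absolute_error(actuals, forecasts):
    """Average absolute gap between actual values and their forecasts."""
    return sum(abs(a - f) for a, f in zip(actuals, forecasts)) / len(forecasts)


# Hypothetical monthly demand figures.
demand = [120, 132, 128, 141, 150, 147, 158, 163]

fitted, next_forecast = simple_exponential_smoothing(demand, alpha=0.5)
mae = mean_absolute_error(demand[1:], fitted)
print(f"next-period forecast: {next_forecast:.1f}, in-sample MAE: {mae:.1f}")
```

A higher `alpha` reacts faster to recent changes, while a lower one smooths out noise. Because this basic form ignores trend and seasonality, it tends to lag behind steadily rising demand like the series shown here.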

Key Topics to Learn for Data Analysis and Interpretation Interviews

- Data Cleaning and Preprocessing: Understanding techniques like handling missing values, outlier detection, and data transformation is crucial. Practical application includes using Python libraries like Pandas for data manipulation.

- Exploratory Data Analysis (EDA): Mastering EDA techniques like visualization (histograms, scatter plots, box plots) and summary statistics to identify patterns and trends in data. This helps in formulating hypotheses and guiding further analysis.

- Statistical Analysis: A solid grasp of hypothesis testing, regression analysis (linear, logistic), and other statistical methods is essential for drawing meaningful conclusions from data. Consider practical applications using statistical software like R or SPSS.

- Data Visualization: Creating clear and effective visualizations (charts, graphs, dashboards) to communicate insights derived from data analysis. This involves choosing appropriate chart types and conveying information concisely.

- Data Interpretation and Storytelling: Translating complex data analysis results into a clear and compelling narrative for a non-technical audience. This is a crucial soft skill for conveying your findings effectively.

- Database Management Systems (DBMS): Familiarity with SQL and database querying is often beneficial, especially for roles involving large datasets. Practical application includes extracting and preparing data for analysis from databases.

- Machine Learning (ML) Techniques (Depending on Role): Understanding basic ML concepts and algorithms (e.g., regression, classification) may be advantageous for some roles. Focus on understanding the application and interpretation of results, rather than complex model building.
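As a small practice exercise for the data cleaning topic in this list, the sketch below fills a missing value with the median and flags outliers with the 1.5 × IQR rule. It uses only the Python standard library rather than Pandas, and the sample values and function names are hypothetical.

```python
from statistics import median, quantiles

# Hypothetical measurements with one missing value (None) and one extreme value.
raw = [10, 12, 11, 13, 12, 11, 95, None, 12]


def impute_missing_with_median(values):
    """Replace missing entries with the median of the observed values."""
    observed = [v for v in values if v is not None]
    fill = median(observed)
    return [fill if v is None else v for v in values]


def iqr_outliers(values, k=1.5):
    """Return values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


cleaned = impute_missing_with_median(raw)
outliers = iqr_outliers(cleaned)
print(cleaned)
print(outliers)
```

The median is used for imputation because, unlike the mean, it is barely affected by the extreme value in the sample.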

Next Steps

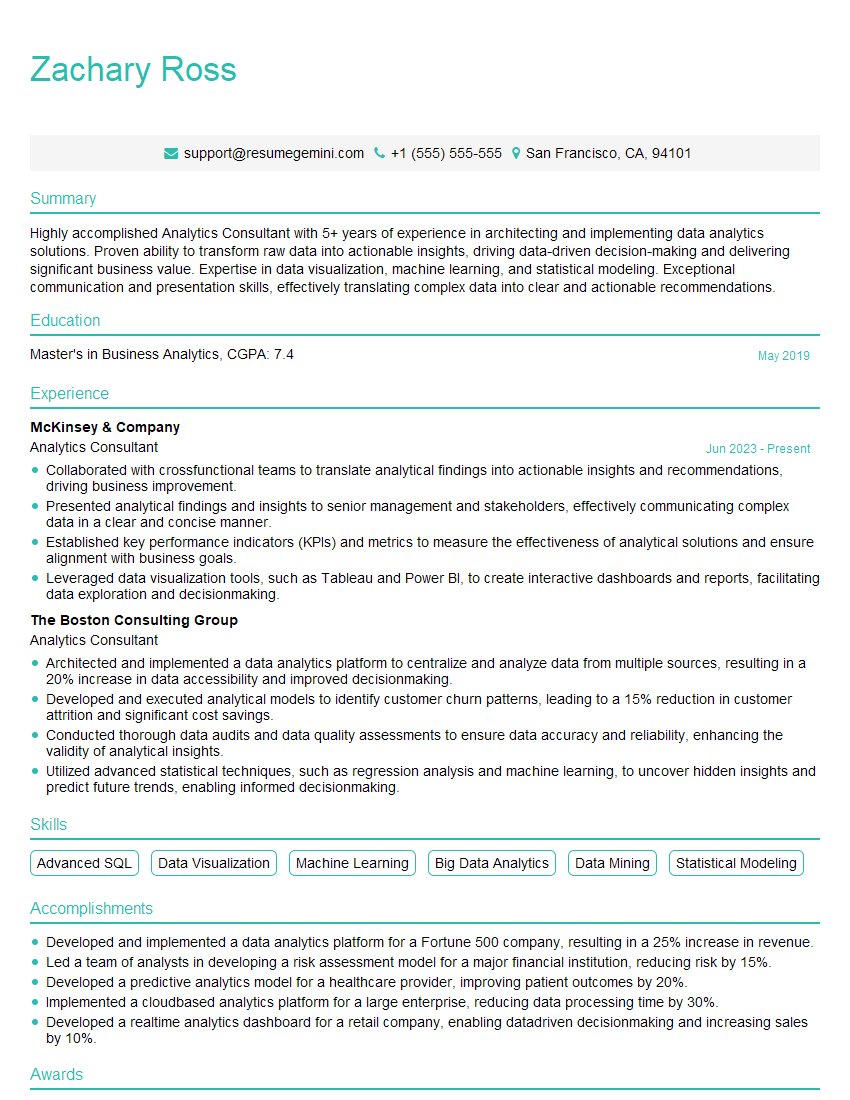

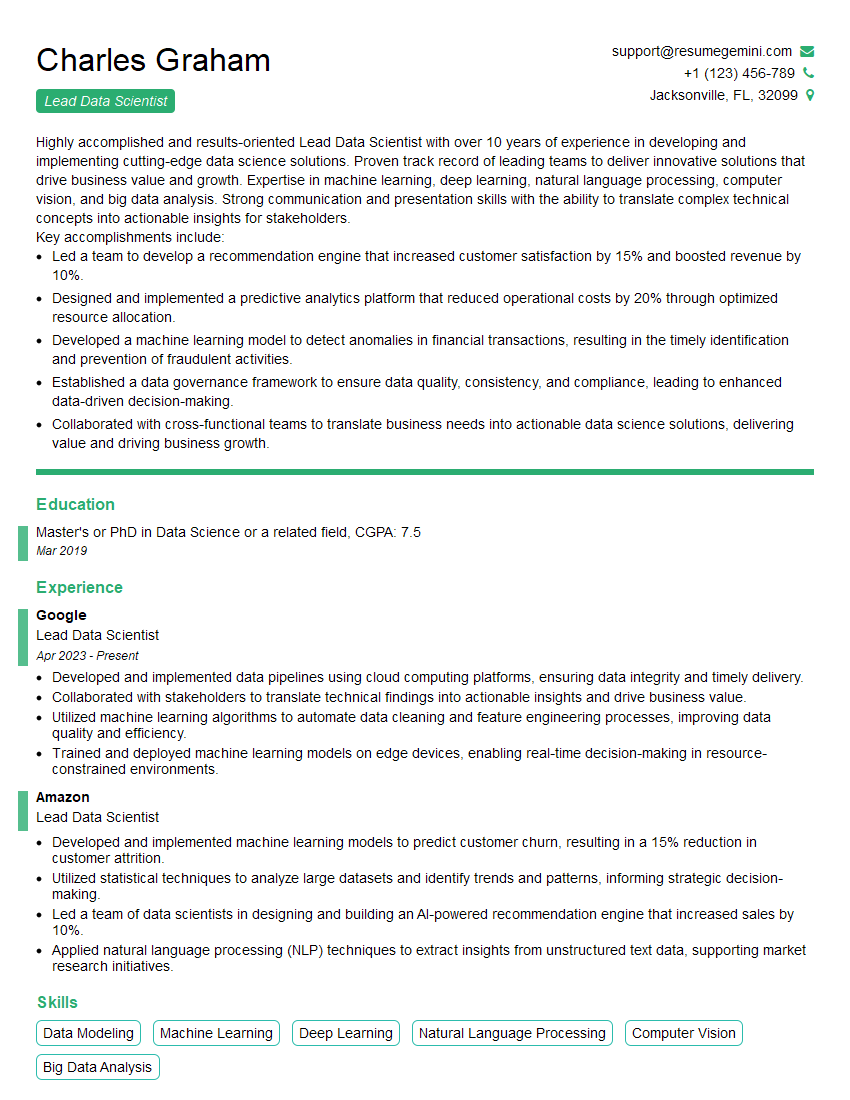

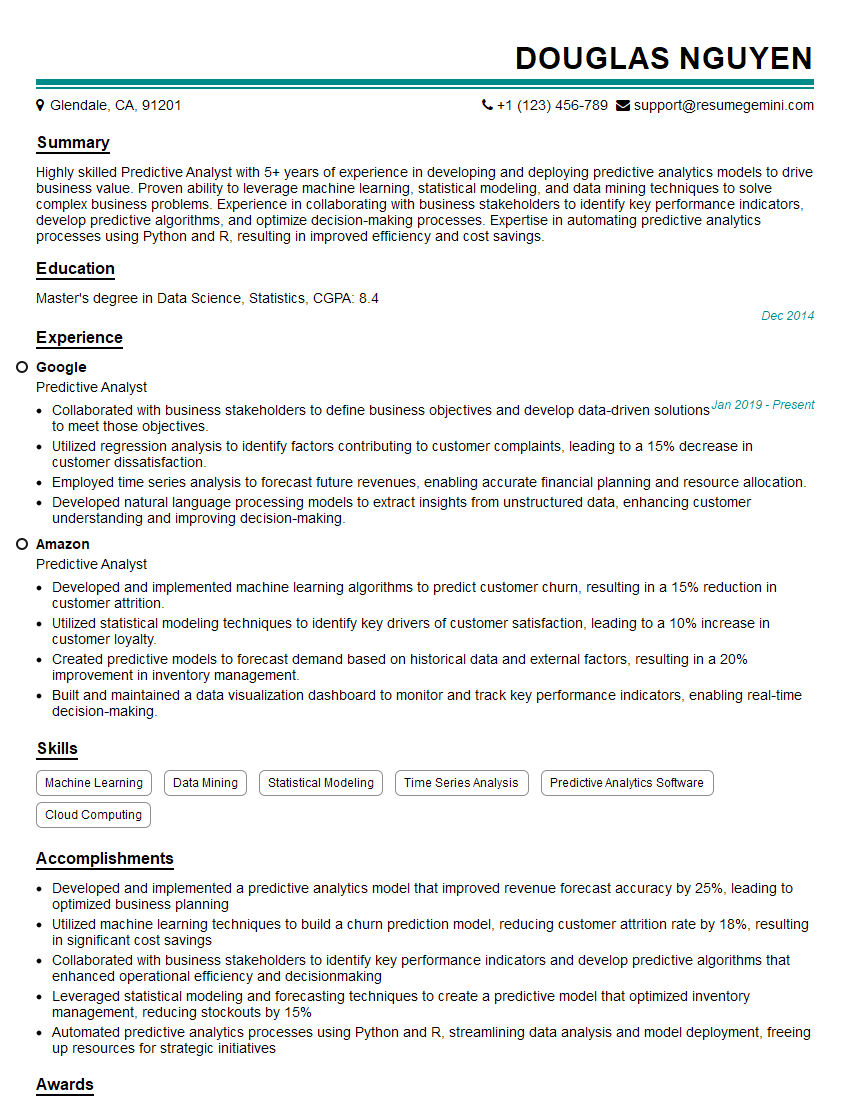

Mastering data analysis and interpretation is vital for career advancement in today’s data-driven world. It opens doors to exciting roles and opportunities for significant impact. To maximize your job prospects, create an ATS-friendly resume that highlights your skills and experience effectively. ResumeGemini is a trusted resource to help you build a professional and impactful resume. We provide examples of resumes tailored to data analysis and interpretation experience to guide you through the process.
