Cracking a skill-specific interview, like one for Experience with research and user testing, requires understanding the nuances of the role. In this blog, we present the questions you’re most likely to encounter, along with insights into how to answer them effectively. Let’s ensure you’re ready to make a strong impression.

Questions Asked in an Experience with Research and User Testing Interview

Q 1. Describe your experience conducting user interviews.

Conducting user interviews is a cornerstone of user research. It involves engaging in a structured conversation with individuals to understand their experiences, perspectives, and needs related to a particular product, service, or system. My approach emphasizes creating a safe and comfortable environment where participants feel free to share their honest opinions. I typically start with broad, open-ended questions to encourage narrative responses, gradually moving towards more specific probes to clarify or delve deeper into particular areas. For instance, instead of asking, “Did you find the website easy to use?”, I might ask, “Can you walk me through your experience trying to find information about [specific task] on our website?” This approach allows me to understand not just the outcome but also the process and the user’s thought process. I always ensure that interviews are recorded (with participant consent) and thoroughly transcribed for later analysis. I also use techniques like active listening, paraphrasing, and probing to ensure clarity and depth of understanding. For example, if a participant mentions frustration with a specific feature, I might ask, “Can you tell me more about what specifically made you frustrated?” to gain richer qualitative data.

Q 2. Explain your process for recruiting participants for user research.

Recruiting participants for user research is crucial for ensuring the representativeness and validity of the findings. My process begins with clearly defining the target audience based on the research objectives. For example, if I’m researching a new mobile banking app, I’d need to identify the demographic profile, tech-savviness, and banking habits of my ideal participants. Next, I select the most appropriate recruitment method, which could involve online participant panels, social media advertising, collaboration with relevant organizations, or snowball sampling (asking participants to recommend others). I always craft a clear recruitment script that states the purpose, duration, compensation (if any), and confidentiality terms of the research. I prioritize diverse participant pools to mitigate bias and get a broader picture. Finally, I screen potential participants to confirm they meet the pre-defined criteria, for example through screening questionnaires or pre-interview calls that verify their experience and suitability. This careful approach makes it far more likely that the recruited participants provide valuable and relevant insights.

Q 3. What qualitative research methods are you most proficient in?

I’m highly proficient in a range of qualitative research methods. User interviews, as previously discussed, are a core strength. Beyond that, I regularly employ contextual inquiry, which involves observing users in their natural environment while they interact with the product or service. This provides rich, contextual data that can’t be gleaned from interviews alone. I also leverage card sorting, a technique used to understand how users organize and categorize information, helping design intuitive information architectures. Finally, I frequently use diary studies, where participants keep a log of their experiences over a set period. This offers longitudinal insights into user behavior and attitudes. I find that combining these methods provides a more comprehensive understanding than relying on a single technique.

Q 4. What quantitative research methods are you most proficient in?

While my expertise leans towards qualitative research, I am also proficient in several quantitative methods. A/B testing, for example, allows me to compare the performance of two different versions of a product or feature. This provides objective data on which version is more effective. I also utilize surveys, particularly online surveys, to gather large-scale data on user opinions, preferences, and behaviors. This method helps quantify trends and patterns identified through qualitative research. Finally, I’m comfortable analyzing usage data such as website analytics (e.g., Google Analytics), which provides insights into user behavior on digital platforms, revealing patterns and areas needing improvement. I often integrate quantitative data to validate or supplement findings from qualitative research, creating a robust understanding of user experience.

Q 5. How do you analyze qualitative data?

Analyzing qualitative data is an iterative process that relies on careful attention to detail and a systematic approach. I begin by transcribing the interview recordings verbatim. Then, I use thematic analysis to identify recurring themes, patterns, and insights within the data. This involves systematically coding segments of text based on their relevance to specific themes. I might use software like NVivo or Atlas.ti to assist in this process, particularly when dealing with large datasets. Beyond simple coding, I employ techniques like affinity mapping to visually organize and cluster themes, allowing for deeper interpretation. I constantly compare and contrast different themes, looking for connections, contradictions, and areas requiring further investigation. The goal is not just to summarize what participants said but to synthesize the data to derive meaningful conclusions and actionable recommendations.

Q 6. How do you analyze quantitative data?

Analyzing quantitative data involves using statistical techniques to identify trends, patterns, and relationships within numerical data. For A/B testing, I’d use a test matched to the metric: a two-proportion z-test or chi-square test for conversion rates, and a t-test for continuous measures such as time on task, to determine whether the difference between the two groups is statistically significant. With survey data, I use descriptive statistics (mean, median, mode, standard deviation) to summarize the data and inferential statistics (correlation, regression) to explore relationships between variables. For example, I might analyze the correlation between user satisfaction scores and the frequency of app usage. I also visualize the data using charts and graphs (bar charts, pie charts, scatter plots) to facilitate understanding and communication of findings. The choice of statistical methods depends on the research question and the type of data collected. The critical aspect is to use appropriate statistical tests and interpret results accurately to avoid misleading conclusions.
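
As a rough illustration of the descriptive statistics and correlation mentioned above, here is a minimal Python sketch. The survey values, variable names, and helper functions are hypothetical and exist only for this example; a real analysis would likely use a dedicated statistics package and check the assumptions behind each measure.

```python
import statistics

# Hypothetical survey responses: satisfaction (1-5 scale) and
# weekly app sessions reported by the same eight respondents.
satisfaction = [4, 5, 3, 4, 2, 5, 3, 4]
weekly_sessions = [6, 9, 3, 7, 2, 10, 4, 6]


def describe(values):
    """Return the descriptive statistics named in the text."""
    return {
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "mode": statistics.mode(values),
        "stdev": statistics.stdev(values),  # sample standard deviation
    }


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    mean_x, mean_y = statistics.mean(xs), statistics.mean(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    return covariance / (spread_x * spread_y) ** 0.5


summary = describe(satisfaction)
r = pearson_r(satisfaction, weekly_sessions)
print(summary)
print(f"correlation between satisfaction and usage: {r:.2f}")
```

A correlation close to 1 in this made-up data only says the two measures move together; it does not show that using the app more causes higher satisfaction.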

Q 7. How do you synthesize findings from both qualitative and quantitative research?

Synthesizing findings from both qualitative and quantitative research is crucial for a complete and nuanced understanding. I view these methods as complementary, not competing. Quantitative data provides a broad overview and can validate patterns observed in qualitative data. For example, a survey might reveal a general dissatisfaction with a particular feature (quantitative), while user interviews can uncover the specific reasons behind that dissatisfaction (qualitative). I use a triangulation approach, where I compare and contrast findings from both methods to confirm or refute hypotheses. A strong synthesis also involves identifying any discrepancies between the two datasets. These discrepancies can highlight important nuances or areas needing further investigation. The final synthesis usually results in a report that clearly outlines both the quantitative and qualitative findings, explains the relationship between them, and presents actionable recommendations based on a holistic understanding of the user experience.

Q 8. Describe a time you had to overcome a challenge during user research.

One of the biggest challenges I faced was during research for a new mobile banking app. We initially focused solely on usability testing with tech-savvy young adults. However, the app was designed for a much broader audience, including older users less comfortable with technology. The initial feedback was overwhelmingly positive from our test group, leading us to believe we were on the right track. However, when we expanded our testing to include older adults, we discovered significant usability issues. They struggled with navigation, font sizes, and the overall visual design. We had to overcome this challenge by pivoting our research strategy. We implemented iterative design changes based on the feedback from the older adult group, incorporating larger fonts, simpler navigation, and clearer visual cues. We also utilized different research methods, such as contextual inquiry, to understand their needs and preferences in their natural environment.

This experience highlighted the critical importance of considering user diversity from the beginning of the research process. A narrow focus on a single demographic can lead to a flawed product that fails to meet the needs of a larger target audience. The solution involved not only changing the app but also changing our research approach to be more inclusive and representative.

Q 9. How do you ensure your research is unbiased?

Ensuring unbiased research is paramount. I employ several strategies to mitigate bias. First, I carefully define my research objectives and target audience to avoid leading questions or focusing on specific user groups at the expense of others. My questionnaires are meticulously crafted to use neutral language and avoid biased phrasing. I also utilize diverse recruitment strategies to ensure a representative sample of participants. This includes using diverse recruiting platforms and partnering with organizations representing various demographic groups.

Furthermore, I use blind testing methodologies whenever possible. This means that participants are unaware of the hypothesis being tested. During analysis, I rely on quantitative data whenever feasible. While qualitative data provides valuable insights, it can be susceptible to subjective interpretation. Combining qualitative and quantitative findings helps to balance subjective insights with objective measurements. Finally, I strive for transparency in the research process. The methodology, data analysis, and findings are documented thoroughly so that others can review the work and identify any potential biases.

Q 10. Explain your experience with A/B testing.

A/B testing, or split testing, is a crucial component of my research arsenal. It involves presenting two or more versions of a design element (e.g., a button, headline, image) to different groups of users and measuring which version performs better based on pre-defined metrics. I’ve extensively used A/B testing to optimize website designs, email campaigns, and app interfaces. For instance, during a recent website redesign, we A/B tested two different homepage layouts: one with a prominent call-to-action button above the fold and another with the button placed lower on the page.

We tracked metrics like click-through rates, time on page, and bounce rates to determine which version was more effective in driving conversions. The results clearly indicated that the version with the button above the fold significantly improved user engagement and lead generation. To ensure the validity of A/B tests, it’s important to determine an adequate sample size in advance (for example, through a power analysis), minimize external variables that might influence results (such as seasonal changes), and run the test for its full planned duration rather than stopping as soon as a difference appears.
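
One common way to check whether a difference in click-through or conversion rates between two variants is statistically significant is a two-proportion z-test. The sketch below is illustrative only: the function name and the visitor and conversion counts are hypothetical, not figures from the project described above.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for the difference between two conversion rates.

    conv_a / conv_b: number of conversions in each variant.
    n_a / n_b: number of visitors exposed to each variant.
    Returns (z statistic, two-sided p-value).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: variant A converts 120 of 2400, variant B 156 of 2400.
z, p_value = two_proportion_z_test(120, 2400, 156, 2400)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

The usual convention is to treat p below 0.05 as significant, but the threshold and the sample size should be fixed before the test starts rather than chosen after looking at the data.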

Q 11. How do you define success in a user research project?

Success in user research is not solely defined by achieving a specific metric or outcome. It’s a multifaceted achievement that involves several key elements. First, a successful research project must provide actionable insights that directly influence design and development decisions. This means that the findings should lead to tangible improvements in the user experience, such as increased usability, improved task completion rates, or higher user satisfaction. Second, a successful research project must be conducted ethically and with respect for participant rights and privacy.

Third, successful user research projects should be efficient and cost-effective. This involves carefully planning the research design, selecting appropriate methodologies, and analyzing data promptly and efficiently. Finally, effective communication of the findings is essential. I prepare clear and concise reports, presentations, and other relevant communication materials to ensure stakeholders understand the implications of the findings and can effectively implement the recommendations. A successful project isn’t just about completing the research, but about ensuring that research influences positive change.

Q 12. Describe your experience with usability testing.

Usability testing is a cornerstone of my research practice. I have considerable experience conducting both moderated and unmoderated usability tests using methods such as think-aloud protocols and eye-tracking, and I complement them with heuristic evaluations (expert reviews carried out without participants). In a recent project involving an e-commerce platform, we conducted moderated usability tests to observe users as they navigated the website and completed tasks such as adding items to their shopping cart and checking out. We used a think-aloud protocol, encouraging participants to verbalize their thoughts and actions throughout the process. This provided invaluable qualitative data on pain points and areas for improvement.

We also incorporated eye-tracking to identify areas of visual attention and potential usability problems. This helped us understand where users were focusing their attention on the screen and identify any areas where navigation or visual design was causing confusion. The data gathered from the usability testing sessions were then analyzed to identify patterns of behavior, usability problems, and areas for improvement. This informed the design team’s decisions about the website’s structure, navigation, and overall user experience.

Q 13. What are some key metrics you track in usability testing?

In usability testing, I track a range of key metrics to measure the effectiveness and efficiency of a design. These metrics can be broadly categorized into quantitative and qualitative measures. Quantitative metrics include:

- Task Completion Rate: The percentage of participants who successfully complete the assigned tasks.

- Time on Task: The amount of time it takes participants to complete each task.

- Error Rate: The number of errors made by participants during task completion.

- Efficiency: The effort a participant spends to reach the goal, combining speed and accuracy; a common measure is the number of successfully completed tasks per unit of time.

Qualitative and self-reported measures, gathered through questionnaires, observations, and user interviews, include:

- User Satisfaction: Measured using questionnaires or feedback forms after the test sessions.

- Perceived Ease of Use: Participants’ subjective rating of how easy the system is to use.

- Qualitative Feedback: Detailed observations of users’ actions and behaviors, and their verbal feedback during the task.

By combining both quantitative and qualitative data, I can gain a comprehensive understanding of the user experience and identify specific areas for improvement.
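
To make the quantitative metrics above concrete, here is a minimal Python sketch that computes task completion rate, time on task, and error rate from a session log. The record layout, participant IDs, and numbers are hypothetical; real logging tools will export data in their own formats.

```python
from statistics import mean

# Hypothetical log: one record per participant for a single task.
sessions = [
    {"participant": "P1", "completed": True, "seconds": 74, "errors": 0},
    {"participant": "P2", "completed": True, "seconds": 102, "errors": 2},
    {"participant": "P3", "completed": False, "seconds": 180, "errors": 4},
    {"participant": "P4", "completed": True, "seconds": 88, "errors": 1},
    {"participant": "P5", "completed": True, "seconds": 96, "errors": 1},
]


def summarize(records):
    """Compute basic usability metrics for one task."""
    completed = [r for r in records if r["completed"]]
    return {
        # Share of participants who finished the task successfully.
        "completion_rate": len(completed) / len(records),
        # Average time, counting only successful attempts (an assumption;
        # some teams report time for all attempts instead).
        "mean_time_on_task": mean(r["seconds"] for r in completed),
        # Average number of errors per participant, across all attempts.
        "mean_errors": mean(r["errors"] for r in records),
    }


metrics = summarize(sessions)
print(metrics)
```

With only five participants these figures are descriptive rather than statistically robust, so they are best read alongside the qualitative observations.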

Q 14. How do you handle conflicting results from different research methods?

Conflicting results from different research methods are common and highlight the complexity of user behavior. Instead of dismissing any method, I treat them as complementary sources of information. The first step is to carefully analyze the data from each method, looking for underlying patterns and potential explanations for the discrepancies. For example, a quantitative analysis might show a high task completion rate, while qualitative interviews reveal user frustration with certain aspects of the design.

It’s vital to delve deeper into the conflicting findings, possibly by conducting additional user research to clarify any ambiguities. I often triangulate data by comparing findings from different methods. For instance, if a usability test reveals navigation issues and a survey shows low satisfaction scores, the convergence of the two strengthens the evidence for navigation improvements. Sometimes, the conflicts point to underlying user needs that weren’t fully addressed in the initial research design, requiring an adjustment in the research plan. The key is to look for overarching themes and understand how seemingly contradictory results can reveal a more nuanced picture of the user experience. The goal is not to reconcile differences at all costs, but to understand the underlying reasons for those differences and to build a comprehensive understanding of the user experience.

Q 15. How do you present your research findings to stakeholders?

Presenting research findings effectively to stakeholders requires a tailored approach that considers their level of understanding and their primary interests. I begin by summarizing key findings concisely, focusing on the ‘so what?’ – the implications of the research for the product or strategy. Then, I progressively delve into the details, using visuals like charts, graphs, and user quotes to illustrate key points and make the data more accessible. For example, instead of simply stating ‘users found the navigation confusing,’ I’d present a heatmap showing where users clicked most frequently, coupled with direct quotes from users expressing their frustration. I often structure my presentations around a narrative, highlighting user needs and pain points, and how our proposed solutions address them. Finally, I always conclude with clear, actionable recommendations and next steps, ensuring the presentation isn’t just informative but also leads to tangible outcomes. A strong Q&A session is crucial for addressing stakeholder concerns and clarifying any ambiguities.

Q 16. What tools do you use for user research?

My toolkit for user research is quite diverse, adapting to the specific needs of each project. For qualitative research, I frequently use tools like UserTesting.com and TryMyUI for unmoderated remote testing, allowing for quick and scalable feedback collection. For moderated sessions, I leverage Zoom or Google Meet, supplemented by screen recording software like Loom to capture user interactions. For quantitative research, I rely on survey platforms such as Qualtrics and SurveyMonkey. I also utilize Miro or Figma for collaborative brainstorming and prototyping sessions, allowing stakeholders to actively participate in the research process. Finally, data analysis is handled using software like Excel, R, or specialized analytics dashboards depending on the scale and complexity of the data. Choosing the right tool is about optimizing for efficiency and extracting meaningful insights within the project’s constraints.

Q 17. What is your experience with remote user testing?

I have extensive experience conducting remote user testing, recognizing its benefits in terms of scalability, cost-effectiveness, and access to a wider range of participants. I’ve successfully conducted numerous studies using various platforms, mastering the techniques to ensure a smooth and engaging experience for participants despite the physical distance. This includes careful planning of the testing script, detailed instructions for participants, employing clear and concise communication throughout the process, and using screen-sharing and collaborative tools to mimic the experience of in-person testing as much as possible. One challenge I frequently address is ensuring technical setup is easy for participants. I provide detailed step-by-step instructions and technical support during the study, minimizing participant drop-off due to technical issues. Moreover, I meticulously analyze the data from remote sessions, looking for patterns and insights just as I would with in-person studies, adjusting my approach as needed based on the platform and the nature of the study.

Q 18. How do you prioritize research activities?

Prioritizing research activities is a crucial skill that involves balancing strategic goals with resource constraints. I typically employ a framework that considers factors such as business impact, user impact, feasibility, and time constraints. I often use the MoSCoW method (Must have, Should have, Could have, Won’t have) to rank research questions based on their urgency and importance. For instance, if a key feature is causing significant user frustration and impacting conversion rates, research investigating this issue would be prioritized as a ‘Must have.’ Less critical areas, like exploring minor UI improvements, might be categorized as ‘Could have,’ to be addressed after higher-priority research is completed. Regular communication with stakeholders is also crucial for ensuring alignment on priorities and making adjustments as new information emerges during the research process. This ensures that research resources are allocated effectively to maximize the impact on product development and business outcomes.
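
The MoSCoW ranking described above can be sketched as a simple sort. This is only an illustration: the backlog entries, the `user_impact` score used as a tie-breaker, and the function name are all hypothetical.

```python
# Lower number = higher priority in the MoSCoW ordering.
MOSCOW_ORDER = {"must": 0, "should": 1, "could": 2, "wont": 3}

# Hypothetical research backlog; user_impact is an assumed 1-5 score.
backlog = [
    {"question": "Minor UI polish on settings page", "category": "could", "user_impact": 2},
    {"question": "Why do users abandon checkout?", "category": "must", "user_impact": 5},
    {"question": "Is the onboarding copy clear?", "category": "should", "user_impact": 3},
    {"question": "Dark mode preferences", "category": "wont", "user_impact": 1},
    {"question": "Why does search return no results?", "category": "must", "user_impact": 4},
]


def prioritize(items):
    """Sort by MoSCoW category first, then by descending user impact."""
    return sorted(
        items,
        key=lambda item: (MOSCOW_ORDER[item["category"]], -item["user_impact"]),
    )


ranked = prioritize(backlog)
for position, item in enumerate(ranked, start=1):
    print(position, item["category"], "-", item["question"])
```

In practice the categories come out of discussion with stakeholders; the code only keeps the agreed order consistent as the backlog changes.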

Q 19. Describe your experience with different types of user testing (e.g., moderated, unmoderated).

My experience encompasses both moderated and unmoderated user testing methodologies. Moderated testing, where I directly interact with participants, allows for deeper probing and contextual understanding. I can adapt questions in real-time, clarifying ambiguities and exploring unexpected insights. This approach is ideal when exploring complex interactions or nuanced user behaviours. For example, in a recent project, I used moderated testing to understand user perceptions of a new feature. The live interaction enabled me to uncover underlying concerns about usability that weren’t apparent in survey responses. Unmoderated testing, on the other hand, is advantageous for its scalability and efficiency. Platforms like UserTesting allow me to gather feedback from a larger and more diverse user base quickly. I’ve used this approach to gauge overall satisfaction with existing features and to identify common usability pain points across various demographics. Choosing between moderated and unmoderated depends heavily on research objectives, budget, and timeline. Often, a combination of both offers the most comprehensive understanding.

Q 20. How do you ensure the ethical conduct of user research?

Ethical conduct is paramount in user research. I strictly adhere to guidelines ensuring informed consent, privacy, and data security. Before any study, participants are provided with clear and concise information about the research purpose, procedures, data usage, and their rights, including the right to withdraw at any time. All data is anonymized and stored securely, complying with relevant data privacy regulations. I always emphasize participant well-being, avoiding situations that could cause stress or discomfort. For example, I carefully review research tasks to ensure they are not overly demanding or frustrating. Transparency is vital; participants are kept informed throughout the process and given opportunities to ask questions. Debriefing sessions after the study allow for clarification and address any concerns. My commitment to ethical practices fosters trust with participants, increasing the validity and credibility of the research findings.

Q 21. How do you adapt your research approach for different user groups?

Adapting research approaches for different user groups is critical for obtaining meaningful insights. This requires understanding the specific needs, contexts, and communication preferences of each group. For example, conducting research with elderly users might necessitate larger fonts, simpler interfaces, and more time for participants to respond. Similarly, when researching with individuals with disabilities, accessibility considerations are paramount, ensuring the testing environment and methods accommodate their specific needs. For diverse language groups, translation and interpretation services are crucial. I actively seek diverse participants representing the user base’s demographics and technical proficiency. Method selection also adapts: a visual approach might be preferred for less tech-savvy users, while technically proficient users might engage effectively with usability testing on a prototype. Empathy and cultural sensitivity are key; the research process needs to be inclusive and respectful of participants’ backgrounds and experiences.

Q 22. Describe your experience with creating research plans.

Creating a robust research plan is the cornerstone of any successful user research project. It’s like creating a blueprint for a house – you need a solid foundation to build upon. My approach involves several key stages: defining the research objectives, identifying the target audience, selecting appropriate methodologies, outlining the data collection process, and establishing a timeline and budget.

- Defining Objectives: I start by clearly articulating the research questions. For example, instead of a vague objective like ‘improve user experience,’ I’d specify ‘Determine the key pain points users encounter when completing the checkout process on our e-commerce website.’ This ensures the research stays focused and delivers actionable insights.

- Target Audience: I carefully define the user groups we’ll be researching. This might involve creating detailed user personas, which are semi-fictional representations of our ideal users based on available data and research. This helps us recruit the right participants for testing.

- Methodology Selection: I choose the most appropriate research methods based on the research objectives. This might include surveys for gathering quantitative data on user preferences, user interviews for in-depth qualitative insights, or usability testing to observe users interacting with a product or service.

- Data Collection & Analysis: I detail how data will be collected (e.g., through online surveys, in-person interviews, observation protocols) and how it will be analyzed (e.g., thematic analysis for qualitative data, statistical analysis for quantitative data). This includes outlining the tools and technologies we’ll use.

- Timeline & Budget: I create a realistic timeline, factoring in recruitment, data collection, analysis, and reporting. The budget outlines all anticipated costs, including participant compensation, software licenses, and any travel expenses.

For example, in a recent project for a mobile banking app, we started by clearly defining the objective of assessing user satisfaction with the new mobile deposit feature. This led us to select a mixed-methods approach, combining surveys to gather quantitative data on overall satisfaction and user interviews to understand the qualitative reasons behind those ratings.

Q 23. How do you manage the budget for a user research project?

Managing the budget for a user research project requires careful planning and prioritization. I start by estimating the costs associated with each phase of the research, from participant recruitment to data analysis and reporting. This usually involves creating a detailed budget spreadsheet that outlines all anticipated expenses.

- Participant Compensation: This is often the largest expense. I determine appropriate compensation based on the time commitment and the type of research (e.g., higher compensation for longer interviews or complex usability tests).

- Recruitment Costs: The cost of recruiting participants will depend on the target audience and the recruitment method used (e.g., using a participant recruitment platform versus recruiting participants through internal channels).

- Software and Tools: Costs may include subscriptions to survey platforms (e.g., Qualtrics, SurveyMonkey), usability testing software (e.g., UserTesting.com), or data analysis tools (e.g., SPSS).

- Travel and Facilities: If conducting in-person research, I budget for travel, venue rental, and any necessary equipment.

- Data Analysis and Reporting: I allocate budget for the time required to analyze the data and create a final report. This might involve hiring additional data analysts or paying for transcription services.

Throughout the project, I track expenses carefully against the budget, making adjustments as needed. Transparency with stakeholders is crucial; regular budget updates ensure everyone is informed of the project’s financial status. I also prioritize value; I constantly evaluate whether each expense aligns with the research goals and contributes to achieving meaningful insights.

Q 24. Explain your experience working with various research methodologies.

My experience encompasses a wide range of research methodologies, allowing me to select the most appropriate approach depending on the research question and available resources. I’m proficient in both quantitative and qualitative methods, and often use mixed-methods approaches to gain a comprehensive understanding of the user experience.

- Qualitative Methods: These methods provide rich, in-depth insights into user behaviors and attitudes. I have extensive experience with user interviews, focus groups, ethnographic studies (observing users in their natural environment), and diary studies.

- Quantitative Methods: These methods are focused on gathering numerical data and analyzing patterns and trends. My experience includes surveys, A/B testing, and analytics analysis (e.g., using Google Analytics to track website usage).

- Usability Testing: This involves observing users interacting with a product or service to identify usability issues and areas for improvement. I’m experienced in moderated and unmoderated usability testing, using a variety of tools and techniques.

- Mixed Methods: This approach combines both quantitative and qualitative methods to get a more holistic view. For instance, I might conduct a survey to gather quantitative data on user satisfaction, followed by user interviews to delve deeper into the reasons behind those scores.

For example, in a project for a new mobile app, we used a mixed-methods approach: a survey provided quantitative data on user satisfaction, while in-depth interviews offered qualitative insights into users’ experiences and pain points. This combination provided a well-rounded picture, informing design improvements and driving greater user engagement.

Q 25. How familiar are you with different data visualization tools?

Data visualization is crucial for effectively communicating research findings. I’m proficient in using various tools to create compelling and insightful visualizations. My experience includes:

- Spreadsheet Software (Excel, Google Sheets): Used for basic data manipulation and charting (bar charts, pie charts, line graphs).

- Data Visualization Software (Tableau, Power BI): These tools enable the creation of more sophisticated dashboards and interactive visualizations, ideal for presenting complex datasets and trends.

- Presentation Software (PowerPoint, Google Slides): While not solely for data visualization, these are essential for creating compelling presentations that integrate charts and graphs to showcase research insights.

- Programming Languages (R, or Python with libraries such as Matplotlib and Seaborn): Used for advanced statistical analysis and custom visualizations.

The choice of tool depends on the complexity of the data and the intended audience. For a quick overview presented to a project team, a simple chart in Excel might suffice. For a comprehensive report to senior management, a more sophisticated dashboard created using Tableau or Power BI would be more appropriate. The key is to choose tools that allow for clear, accurate, and engaging communication of findings.

Q 26. How do you ensure the validity and reliability of your research?

Ensuring the validity and reliability of research is paramount. Validity refers to the accuracy of the research findings – are we measuring what we intend to measure? Reliability refers to the consistency of the findings – would we get similar results if we repeated the study? I employ several strategies to maximize both:

- Rigorous Research Design: A well-defined research plan, using appropriate methodologies and sampling techniques, is crucial. For example, recruiting a representative sample for a survey reduces sampling bias in the results.

- Pilot Testing: Conducting pilot studies with a small group allows for refining the research instruments (surveys, interview protocols) and identifying potential issues before the main study.

- Triangulation: Using multiple methods (e.g., surveys and interviews) to collect data on the same topic strengthens the validity and reliability of the findings. This helps to cross-validate the insights obtained through different approaches.

- Inter-rater Reliability (for qualitative data): When multiple researchers are involved in analyzing qualitative data, such as interviews, having them independently code the data and compare their findings helps ensure consistency and agreement.

- Clear Operational Definitions: Defining all key terms and concepts precisely helps to minimize ambiguity and enhances the reproducibility of the research.

For instance, in a usability study, we use clear, standardized tasks to ensure consistency across participants. We also record the sessions to enable multiple researchers to analyze the data and check for inter-rater reliability. This approach helps ensure the validity and reliability of our findings, building confidence in the insights generated.
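Inter-rater reliability can be expressed as a number. The text above does not name a specific statistic, so the sketch below assumes Cohen's kappa, one common choice for two raters assigning one category per item. It compares observed agreement with the agreement expected by chance. The function name, the code labels, and the sample data are hypothetical.

```python
from collections import Counter


def cohens_kappa(codes_a, codes_b):
    """Cohen's kappa for two raters who each assigned one code per item."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("Both raters must code the same non-empty set of items.")
    n = len(codes_a)

    # Observed agreement: share of items where both raters chose the same code.
    observed = sum(a == b for a, b in zip(codes_a, codes_b)) / n

    # Chance agreement: based on how often each rater used each code.
    freq_a = Counter(codes_a)
    freq_b = Counter(codes_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)

    if expected == 1:
        # Both raters used a single identical code for every item.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical codes assigned independently to the same eight session excerpts.
rater_a = ["nav", "nav", "pricing", "nav", "pricing", "speed", "speed", "nav"]
rater_b = ["nav", "pricing", "pricing", "nav", "pricing", "speed", "nav", "nav"]

kappa = cohens_kappa(rater_a, rater_b)
print(f"Cohen's kappa: {kappa:.2f}")
```

A value near 1 indicates strong agreement beyond chance, while a value near 0 suggests the coding scheme or its definitions need refinement before the analysis continues.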

Q 27. How do you handle situations where research findings contradict initial assumptions?

When research findings contradict initial assumptions, it’s crucial to remain objective and explore the reasons behind the discrepancy. This is an opportunity for learning and refinement, rather than a setback. My approach involves:

- Reviewing the Research Process: I carefully examine the entire research process, from the research design to the data analysis, to identify potential flaws or biases that may have contributed to the unexpected findings.

- Data Validation: I re-examine the data to ensure its accuracy and completeness. This might involve checking for errors in data entry or re-analyzing the data using different methods.

- Qualitative Data Exploration: If using qualitative methods, I delve deeper into the interview transcripts or observation notes to understand the underlying reasons for the unexpected findings. Often, rich qualitative data provides context for unexpected quantitative results.

- Stakeholder Discussion: I discuss the findings with stakeholders to gain their perspectives and explore alternative interpretations. This collaborative approach helps to identify potential blind spots and refine our understanding of the issue.

- Refining Assumptions: Based on the analysis and discussion, I revise my initial assumptions and integrate the new findings into the overall project understanding. This iterative process leads to a more accurate and nuanced understanding of the problem.

In a recent project, initial assumptions about user preference for a specific design feature were challenged by user testing. By carefully reviewing the data and conducting additional interviews, we uncovered a significant usability issue that hadn’t been anticipated. This led to design improvements and a more user-friendly product.

Q 28. Describe your experience with user feedback analysis.

User feedback analysis is a crucial part of the research process. It helps us understand user needs, identify areas for improvement, and measure the success of design changes. My approach involves a systematic process:

- Data Collection: This involves gathering feedback from various sources, including surveys, interviews, usability testing sessions, and app store reviews. Each source offers different types of data (quantitative, qualitative), which must be analyzed accordingly.

- Data Organization: Once collected, the data is organized and categorized. This may involve creating spreadsheets to organize quantitative data or using qualitative data analysis software (e.g., NVivo) to code and analyze interview transcripts.

- Identifying Themes and Patterns: For qualitative data, I employ thematic analysis to identify recurring themes and patterns in user feedback. This involves coding the data, grouping similar codes into themes, and identifying relationships between themes.

- Quantitative Analysis: For quantitative data (e.g., survey results), I use statistical methods to analyze the data, identify trends, and calculate key metrics such as user satisfaction scores.

- Reporting and Communication: Finally, I present the findings in a clear and concise manner using visualizations and data storytelling techniques. This involves synthesizing the findings from both qualitative and quantitative data to provide a holistic view of user feedback.

A recent example involved analyzing app store reviews for a mobile game. By identifying common themes related to game mechanics and user interface issues, we were able to prioritize improvements and enhance the user experience, leading to a significant increase in positive ratings.
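The grouping step in thematic analysis, where codes are rolled up into themes and counted, can be sketched in a few lines once reviews have been coded by hand. Everything here is a hypothetical illustration: the reviews, the code names, the `THEMES` mapping, and the cutoff of 2 stars for a negative review are assumptions, not data from the project described above.

```python
from collections import Counter

# Hypothetical app store reviews, already tagged with codes by a researcher.
coded_reviews = [
    {"rating": 2, "codes": ["controls", "ui_clutter"]},
    {"rating": 1, "codes": ["controls"]},
    {"rating": 4, "codes": ["level_design"]},
    {"rating": 2, "codes": ["ui_clutter", "ads"]},
    {"rating": 5, "codes": ["level_design"]},
    {"rating": 1, "codes": ["controls", "ads"]},
]

# Hypothetical mapping from individual codes to broader themes.
THEMES = {
    "controls": "game mechanics",
    "level_design": "game mechanics",
    "ui_clutter": "user interface",
    "ads": "monetization",
}


def theme_counts(reviews, max_rating=5):
    """Count how many reviews at or below max_rating mention each theme."""
    counts = Counter()
    for review in reviews:
        if review["rating"] <= max_rating:
            # A set ensures each theme is counted once per review.
            counts.update({THEMES[code] for code in review["codes"]})
    return counts


negative = theme_counts(coded_reviews, max_rating=2)
# Sort by count (descending), then by name, for a stable ordering.
for theme, count in sorted(negative.items(), key=lambda kv: (-kv[1], kv[0])):
    print(f"{theme}: {count} negative reviews")
```

Counts like these help with prioritization, but they complement rather than replace reading the underlying reviews for context.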

Key Topics to Learn for a Research and User Testing Interview

- User Research Methodologies: Understand and apply research methods such as user interviews, usability testing, A/B testing, surveys, and ethnographic studies. Consider the strengths and weaknesses of each approach and when to apply them.

- Qualitative vs. Quantitative Data Analysis: Learn to analyze both qualitative data (e.g., interview transcripts) and quantitative data (e.g., survey results) to draw meaningful conclusions and inform design decisions. Practice interpreting data and identifying key trends.

- Usability Testing Principles: Gain a strong understanding of conducting effective usability tests, including participant recruitment, test plan development, moderation techniques, and data analysis. Learn how to identify usability issues and prioritize them based on severity.

- User Persona Development: Learn to create detailed user personas based on research findings. This involves synthesizing user data to create representative profiles of your target audience.

- Data Visualization and Reporting: Practice presenting your research findings clearly and concisely through effective data visualizations and compelling reports. Learn to tailor your communication to different audiences.

- Iterative Design Process: Understand how user research integrates into an iterative design process, enabling continuous improvement based on user feedback.

- Ethical Considerations in User Research: Familiarize yourself with ethical guidelines and best practices for conducting user research, including informed consent, data privacy, and participant well-being.

Next Steps

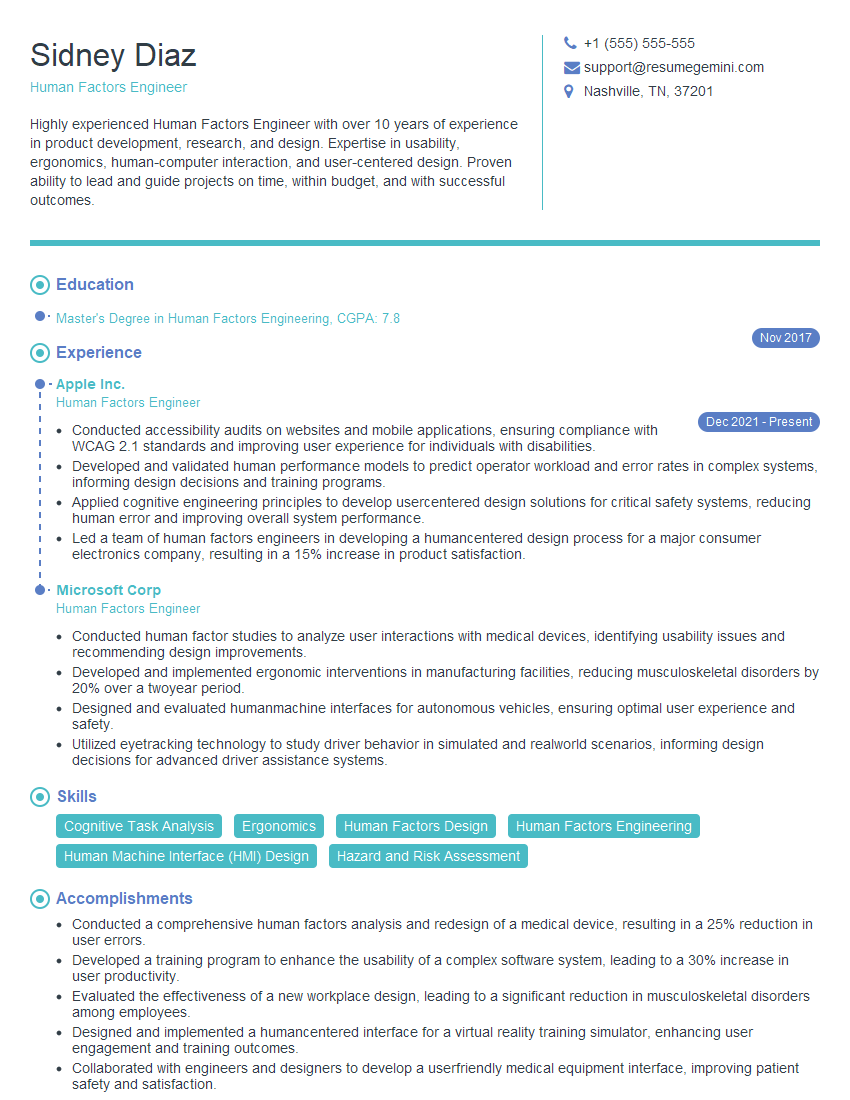

Strong research and user testing skills are crucial for career advancement in today’s user-centric design landscape. Demonstrating them improves your job prospects and allows you to contribute meaningfully to product development. To increase your chances of landing your dream role, create a compelling, ATS-friendly resume that highlights your skills and experience. ResumeGemini is a resource that can help you build a professional and impactful resume, and it provides example resumes tailored to research and user testing roles. Take the next step towards your dream career and build a standout resume today!
