Feeling uncertain about what to expect in your upcoming interview? We’ve got you covered! This blog highlights the most important Music and Technology interview questions and provides actionable advice to help you stand out as the ideal candidate. Let’s pave the way for your success.

Questions Asked in a Music and Technology Interview

Q 1. Explain the Nyquist-Shannon sampling theorem and its relevance to digital audio.

The Nyquist-Shannon sampling theorem is a fundamental principle in digital signal processing. It states that a band-limited analog signal (like audio) can be perfectly reconstructed from its samples if the sampling rate is greater than twice the highest frequency present in that signal. Twice the highest frequency is called the Nyquist rate. Half of any given sampling rate is called the Nyquist frequency, which is the highest frequency that rate can represent.

In simpler terms, imagine you’re filming a spinning wheel. If the wheel spins too fast relative to the frame rate, the footage won’t capture its true motion. It might even appear to spin slowly or backwards. Similarly, if you sample audio too slowly, frequencies above half the sampling rate don’t simply disappear. They fold back into the audible range as spurious lower-frequency tones that were never in the original. This is called aliasing, and it’s why converters apply a low-pass (anti-aliasing) filter before sampling.

For CD-quality audio, the highest frequency we aim to capture is roughly 20kHz (the upper limit of human hearing), so the theorem requires a sampling rate above 40kHz. The CD standard uses 44.1kHz, which leaves a transition band between 20kHz and the 22.05kHz Nyquist frequency for the anti-aliasing filter to roll off. Higher sampling rates (like 96kHz or 192kHz) extend the captured bandwidth well beyond the audible range and relax filter design, which can help during processing, but they also significantly increase file size.
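A quick way to see aliasing is to compute where an out-of-band tone lands after sampling. The sketch below is a minimal illustration, and the helper name `alias_frequency` is ours rather than any library's. It folds a frequency into the 0 to fs/2 range. It then confirms that a 30kHz cosine sampled at 44.1kHz, with no anti-aliasing filter, produces the same samples as a 14.1kHz cosine.

```python
import math


def alias_frequency(f_hz: float, fs_hz: float) -> float:
    """Apparent frequency of a tone at f_hz after sampling at fs_hz (no anti-aliasing filter).

    Sampling cannot distinguish f from f - k*fs for any integer k, so the tone
    "folds" into the range 0..fs/2 around the nearest multiple of the sampling rate.
    """
    return abs(f_hz - fs_hz * round(f_hz / fs_hz))


fs = 44_100.0
print(alias_frequency(30_000.0, fs))  # 14100.0: above fs/2, folds back into the audible band
print(alias_frequency(15_000.0, fs))  # 15000.0: below fs/2, represented correctly

# The sampled values of a 30 kHz cosine are identical to those of a 14.1 kHz cosine.
n_samples = 32
high = [math.cos(2 * math.pi * 30_000.0 * n / fs) for n in range(n_samples)]
alias = [math.cos(2 * math.pi * 14_100.0 * n / fs) for n in range(n_samples)]
samples_match = all(math.isclose(a, b, abs_tol=1e-9) for a, b in zip(high, alias))
print(samples_match)  # True
```

Once the samples are taken, nothing downstream can tell the folded 14.1kHz component apart from a genuine one. This is why the filtering has to happen before conversion.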

Q 2. Describe the differences between lossy and lossless audio compression codecs.

Lossy and lossless compression codecs differ in how they reduce the size of audio files. Lossless compression uses algorithms that allow the original audio data to be perfectly reconstructed after decompression. Think of it like zip files for music: the file size shrinks, but no information is lost. Examples include FLAC (Free Lossless Audio Codec) and ALAC (Apple Lossless Audio Codec).

Lossy compression, on the other hand, discards some audio data deemed less important to human hearing during compression. This results in smaller file sizes, but an irreversible loss of quality. Think of it like summarizing a long story; you make it shorter but lose some details. MP3 (MPEG Audio Layer III) and AAC (Advanced Audio Coding) are common lossy codecs used for music streaming and distribution because of their small file sizes.

The choice between lossy and lossless depends on the application. Lossless is preferred for archiving, mastering, and situations where the highest fidelity is crucial. Lossy is better for streaming and situations where smaller file sizes are prioritized over absolute fidelity.
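"Perfectly reconstructed" becomes concrete once you see how lossless codecs work. FLAC and similar codecs predict each sample from the previous ones and then entropy-code the small prediction error. The sketch below borrows FLAC's order-2 fixed predictor. As a simplifying assumption, it substitutes Python's `zlib` for FLAC's Rice coding. The helper names are illustrative.

```python
import math
import struct
import zlib


def fixed_order2_residual(samples):
    """Prediction residual using an order-2 fixed predictor, as in FLAC's "fixed" subframes.

    Each sample is predicted as 2*x[n-1] - x[n-2]; the first two samples are kept verbatim.
    """
    residual = list(samples[:2])
    for n in range(2, len(samples)):
        residual.append(samples[n] - (2 * samples[n - 1] - samples[n - 2]))
    return residual


def reconstruct(residual):
    """Exact inverse of fixed_order2_residual: rebuild the original integer samples."""
    samples = list(residual[:2])
    for n in range(2, len(residual)):
        samples.append(residual[n] + 2 * samples[n - 1] - samples[n - 2])
    return samples


def pack_int32(values):
    """Serialise integers as little-endian 32-bit values (stand-in for a real bitstream)."""
    return struct.pack(f"<{len(values)}i", *values)


# One second of a 440 Hz tone as 16-bit-range integers.
sr = 44_100
pcm = [round(10_000 * math.sin(2 * math.pi * 440 * n / sr)) for n in range(sr)]
res = fixed_order2_residual(pcm)

print(reconstruct(res) == pcm)  # True: bit-for-bit identical, i.e. lossless
raw_size = len(zlib.compress(pack_int32(pcm)))
residual_size = len(zlib.compress(pack_int32(res)))
print(raw_size, residual_size)  # expect the small residual values to compress much better
```

A lossy codec instead discards information according to a perceptual model, so no exact inverse like `reconstruct` exists for it.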

Q 3. What are the advantages and disadvantages of using different microphone types (e.g., condenser, dynamic)?

Condenser and dynamic microphones are two main types, each with strengths and weaknesses:

- Condenser microphones: These are generally more sensitive and offer a wider, more extended frequency response, capturing more detail and nuance. They’re excellent for recording delicate sources like acoustic guitars or vocals, where subtle details matter. However, most require phantom power (typically 48V; tube condensers use their own power supply). They are also usually more fragile, and their sensitivity makes them more prone to picking up handling noise and room sound.

- Dynamic microphones: These are more robust, less sensitive to handling noise, and don’t require phantom power. They’re ideal for live performances, loud instruments like drums or amplified guitars, and situations where feedback is a concern. Their frequency response is usually narrower than condenser mics, meaning they may not capture the same level of detail.

The choice of microphone depends greatly on the source and recording environment. A live rock concert might necessitate rugged dynamic mics, while a classical recording session might benefit from the sensitivity of a condenser microphone.

Q 4. How do you achieve proper equalization (EQ) in a mix?

EQ (Equalization) is the process of adjusting the balance of different frequencies in an audio signal. Proper EQ aims to enhance clarity, improve balance, and fix problematic frequencies in a mix. It’s a subtractive and additive process. The goal is not to simply boost everything but to shape the sound.

Achieving proper EQ involves:

- Listening critically: Identify frequencies that are muddy, harsh, or lacking presence.

- Subtractive EQ: First, address problem frequencies by cutting or reducing them. For example, reducing muddiness in the low-midrange (250-500Hz).

- Additive EQ: Then, subtly boost frequencies to enhance certain aspects of the sound. This could involve adding presence to vocals in the high-midrange (2-4kHz).

- Gain Staging: Ensure that your overall levels are balanced before and after applying EQ. Avoid excessive boosts to prevent distortion.

- A/B Comparisons: Constantly compare your EQ’d tracks to the unprocessed versions, matching their levels first. A louder version almost always sounds “better,” which can mislead you.

A good analogy is sculpting. You start with a block of clay (the original audio) and you carefully carve away (subtractive EQ) and add (additive EQ) to achieve your desired shape (the final, balanced mix).
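Under the hood, each band of a parametric EQ is typically a second-order (biquad) filter. The sketch below assumes the widely used peaking-band formulas from Robert Bristow-Johnson's "Audio EQ Cookbook". The function names are hypothetical, and a real plugin would add parameter smoothing and multiple bands. It designs the kind of low-mid cut described above and prints the response at a few frequencies.

```python
import cmath
import math


def peaking_eq(fs, f0, gain_db, q):
    """Biquad coefficients (b, a) for one peaking EQ band, following the RBJ Audio EQ Cookbook."""
    amp = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2 * q)
    b = [1 + alpha * amp, -2 * math.cos(w0), 1 - alpha * amp]
    a = [1 + alpha / amp, -2 * math.cos(w0), 1 - alpha / amp]
    return [x / a[0] for x in b], [x / a[0] for x in a]  # normalise so a[0] == 1


def response_db(b, a, fs, f):
    """Magnitude response of the biquad at frequency f, in dB."""
    z1 = cmath.exp(-2j * math.pi * f / fs)  # z^-1 evaluated on the unit circle
    h = (b[0] + b[1] * z1 + b[2] * z1**2) / (a[0] + a[1] * z1 + a[2] * z1**2)
    return 20 * math.log10(abs(h))


def apply_biquad(b, a, samples):
    """Filter a list of samples (Direct Form I, assumes normalised a[0] == 1)."""
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in samples:
        y = b[0] * x + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out


# A gentle 4 dB cut at 300 Hz to reduce low-mid "mud" (a 48 kHz session is assumed).
b, a = peaking_eq(fs=48_000, f0=300, gain_db=-4.0, q=1.0)
for f in (50, 300, 3_000):
    print(f, round(response_db(b, a, 48_000, f), 2))
```

The band reaches exactly the requested gain at its centre frequency and returns to 0 dB far away from it. Q controls how wide the affected region is.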

Q 5. Explain the concept of dynamic processing (compression, limiting, gating).

Dynamic processing encompasses techniques used to control the dynamic range of an audio signal – the difference between the quietest and loudest parts. Compression, limiting, and gating are common dynamic processing tools:

- Compression: Reduces the level of a signal once it exceeds a set threshold, according to a set ratio, narrowing the difference between loud and quiet parts. It makes a signal more even in volume, useful for vocals, drums, or controlling dynamics in a mix.

- Limiting: An extreme form of compression with a very high ratio, limiting prevents a signal from exceeding a specified ceiling. It’s used in mastering to maximize loudness without clipping (distortion).

- Gating: Attenuates or silences a signal whenever it falls below a set threshold. It’s often used to remove unwanted background noise or spill from other instruments. For example, a gate can mute a tom mic between hits so it doesn’t pick up the rest of the kit.

Imagine a vocalist with inconsistent volume. Compression evens out those variations, resulting in a more consistent and polished performance. Limiting is like a safety net in mastering, ensuring that peaks remain within the boundaries of the audio format, preventing distortion. Gating silences background noise, allowing only the desired signals through, like cleaning up audio where unintended noises creep in.
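The static behaviour of these three processors can be written as input-to-output level curves in dB. This is a simplified sketch. Real units also smooth gain changes with attack and release times and often use a soft knee, and these hypothetical helper functions ignore both.

```python
def compressor_out_db(in_db, threshold_db, ratio):
    """Static hard-knee compressor curve: above the threshold, output rises 1/ratio as fast."""
    if in_db <= threshold_db:
        return in_db
    return threshold_db + (in_db - threshold_db) / ratio


def limiter_out_db(in_db, ceiling_db):
    """An idealised limiter: effectively an infinite ratio, so nothing passes the ceiling."""
    return min(in_db, ceiling_db)


def gate_out_db(in_db, threshold_db, range_db=80.0):
    """Downward gate: anything below the threshold is attenuated by range_db."""
    return in_db if in_db >= threshold_db else in_db - range_db


print(compressor_out_db(-10.0, threshold_db=-20.0, ratio=4.0))  # -17.5
print(compressor_out_db(-30.0, threshold_db=-20.0, ratio=4.0))  # -30.0 (below threshold)
print(limiter_out_db(0.5, ceiling_db=-1.0))                     # -1.0
print(gate_out_db(-60.0, threshold_db=-50.0))                   # -140.0 (effectively silent)
```

With a 4:1 ratio, a peak 10 dB over the threshold comes out only 2.5 dB over it. Make-up gain then raises the whole signal, quiet parts included.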

Q 6. What are your preferred Digital Audio Workstations (DAWs) and why?

My preferred DAWs depend on the project’s needs, but I frequently use Logic Pro X and Ableton Live.

Logic Pro X excels in its comprehensive feature set, intuitive workflow, and robust MIDI capabilities, particularly for composition and recording. Its vast library of instruments and effects is a significant advantage. I often use it for detailed projects like orchestral scoring or complex pop production.

Ableton Live is better suited for electronic music production, live performance, and creative looping. Its session view makes it incredibly versatile for improvisation and arranging. I usually turn to it for electronic music, experimental projects, or when a more fluid, less linear workflow is required.

Ultimately, the best DAW is subjective and depends on individual workflow preferences and specific project requirements.

Q 7. Describe your experience with MIDI controllers and sequencing.

I have extensive experience with various MIDI controllers and sequencing. MIDI (Musical Instrument Digital Interface) is a protocol that allows electronic musical instruments and computers to communicate. MIDI controllers are devices used to input MIDI data into a DAW, which is subsequently used to trigger sounds, control parameters, or sequence music.

My experience encompasses keyboards, drum pads, and specialized controllers. I’m proficient in using MIDI controllers to:

- Record MIDI notes, creating melodies and harmonies.

- Control synthesizer parameters in real-time, shaping sounds dynamically.

- Automate parameters, creating dynamic changes in sound over time.

- Sequence complex musical arrangements.

- Work with related protocols and integrations, such as MIDI clock for tempo sync, MPE (MIDI Polyphonic Expression), and MIDI over USB or network connections.

I regularly use MIDI sequencing for composing, arranging, and programming sounds, often employing techniques like step sequencing, automation, and advanced MIDI editing for creative control over sounds.
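At the byte level, a MIDI 1.0 note is just a status byte (message type plus channel) followed by two 7-bit data bytes. The sketch below builds Note On and Note Off messages by hand and turns a simple step pattern into time-stamped events, the way a step sequencer might. The function names, the 480 ticks-per-quarter-note resolution, and the "x." pattern syntax are illustrative assumptions, not any DAW's API.

```python
def _check(channel, note, velocity):
    if not (0 <= channel <= 15 and 0 <= note <= 127 and 0 <= velocity <= 127):
        raise ValueError("channel must be 0-15; note and velocity must be 0-127")


def note_on(channel, note, velocity):
    """Raw 3-byte MIDI 1.0 Note On message (status 0x90 | channel)."""
    _check(channel, note, velocity)
    return bytes([0x90 | channel, note, velocity])


def note_off(channel, note, velocity=0):
    """Raw 3-byte MIDI 1.0 Note Off message (status 0x80 | channel)."""
    _check(channel, note, velocity)
    return bytes([0x80 | channel, note, velocity])


def midi_to_hz(note):
    """Equal-tempered pitch, with A4 = note 69 = 440 Hz."""
    return 440.0 * 2 ** ((note - 69) / 12)


def step_sequence(pattern, note, channel=0, ticks_per_step=120, gate_ticks=60, velocity=100):
    """Turn a step pattern such as "x...x..." into time-stamped (tick, message) events.

    ticks_per_step=120 assumes 480 ticks per quarter note, i.e. 16th-note steps.
    """
    events = []
    for step, cell in enumerate(pattern):
        if cell == "x":
            start = step * ticks_per_step
            events.append((start, note_on(channel, note, velocity)))
            events.append((start + gate_ticks, note_off(channel, note)))
    return events


# Kick drum (General MIDI note 36) on beats 1 and 3 of a 16-step bar, channel 10 (index 9).
for tick, msg in step_sequence("x.......x.......", note=36, channel=9):
    print(tick, msg.hex(" "))
```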

Q 8. How do you handle feedback in a live sound reinforcement setting?

Handling feedback in live sound reinforcement is crucial for a clear and enjoyable listening experience. Feedback occurs when a microphone picks up the output of a loudspeaker it feeds. Once the gain around that loop reaches unity at some frequency, that frequency rings and builds into a sustained howl. Controlling it is a constant process of monitoring, identifying, and mitigating those problem frequencies while keeping the overall mix balanced.

My approach involves a multi-step process:

- Careful Mic Placement and Technique: Minimizing feedback starts before the sound even hits the microphone. This involves understanding microphone polar patterns (cardioid, supercardioid, omnidirectional, etc.) and placing mics and speakers strategically so the mics pick up as little loudspeaker output as possible. For example, a cardioid mic pointed directly at the sound source rejects sound from the rear. A floor monitor placed directly behind it, in its null, is therefore far less likely to feed back. Keeping the mic close to the source also helps, because less gain is needed.

- EQ (Equalization): A graphic or parametric equalizer is your primary weapon against feedback. Applying narrow cuts at the specific frequencies that ring can dramatically reduce the risk. These are often in the midrange and upper mids, but the exact frequencies depend on the room, the mics, and the speakers. Many engineers “ring out” a system before the show: they raise the gain until a frequency starts to ring, notch it, and repeat. This is a dynamic process, and you’ll adjust the EQ as the room fills and volume levels change.

- Gain Staging: Maintaining appropriate gain levels across the entire signal chain is paramount. Every extra decibel of system gain reduces the margin before feedback, so set preamp gain for a healthy signal and run no more overall gain than the room requires. Start low and gradually increase the levels, constantly listening for any signs of ringing.

- Feedback Destroyers/Notch Filters: Specialized equipment like feedback destroyers automatically identify and attenuate feedback frequencies in real-time. They’re invaluable for larger, complex setups.

- Room Acoustics: The physical characteristics of the venue play a huge role. Reflective surfaces and excessive reverberation send more loudspeaker energy back into the microphones, increasing feedback risk. Acoustic treatment (such as bass traps and absorptive panels) and careful speaker placement can significantly improve the sound quality and reduce feedback problems.
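The automatic detection that feedback suppressors perform can be approximated with a simple heuristic. The sketch below is an assumption for illustration, not how any particular product works. It flags a frequency when the same narrow spectral peak dominates several consecutive analysis frames. A detected frequency would then be handled with a narrow notch cut. A real implementation would use an FFT library and overlapping windows instead of the naive DFT used here to stay dependency-free.

```python
import cmath
import math


def dft_magnitudes(frame):
    """Magnitudes of DFT bins 0..N/2 (a naive DFT keeps the sketch dependency-free)."""
    n = len(frame)
    return [
        abs(sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
        for k in range(n // 2 + 1)
    ]


def find_ringing_frequency(frames, fs, min_peak_ratio=10.0):
    """Return a candidate feedback frequency in Hz, or None.

    Heuristic: feedback shows up as the same narrow spectral peak dominating every
    analysis frame, far above the average bin level. Music and speech rarely hold
    one bin that steadily.
    """
    peak_bins = []
    for frame in frames:
        mags = dft_magnitudes(frame)
        k = max(range(1, len(mags)), key=mags.__getitem__)  # skip the DC bin
        mean = sum(mags[1:]) / (len(mags) - 1)
        if mean == 0 or mags[k] / mean < min_peak_ratio:
            return None
        peak_bins.append(k)
    if len(set(peak_bins)) != 1:
        return None
    return peak_bins[0] * fs / len(frames[0])


# Simulated 1 kHz howl: four consecutive 256-sample frames at 8 kHz.
fs, n = 8_000, 256
howl = [[math.sin(2 * math.pi * 1_000 * (i * n + t) / fs) for t in range(n)] for i in range(4)]
print(find_ringing_frequency(howl, fs))  # 1000.0
```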

I’ve found that proactive monitoring and a combination of these techniques prevent most feedback issues, enabling a smooth and enjoyable live sound experience. My experience includes working in various venues, from small clubs to large concert halls, constantly adapting my approach based on the specific acoustic characteristics of each space.

Q 9. Explain your workflow for mixing and mastering audio.

My mixing and mastering workflow is iterative, focusing on achieving a polished and cohesive final product. I treat them as two distinct processes that serve different goals. Mixing aims to achieve balance and clarity among the individual tracks. Mastering treats the finished mix as a whole, making it cohesive and optimizing it to translate well on different playback systems.

Mixing Workflow:

- Preparation: This includes organizing files, editing individual tracks (cleaning up unwanted noises, timing corrections), and setting up a well-organized session.

- Gain Staging and Level Matching: Creating a solid foundation through appropriate levels across all tracks before applying any processing.

- EQ (Equalization): Sculpting the tonal balance of individual instruments and vocals to improve clarity and remove unwanted frequencies.

- Compression: Controlling the dynamics of individual tracks and buses to create a balanced and punchy sound. A compressor reduces the level of peaks above its threshold. With make-up gain applied, the quieter parts end up relatively louder.

- Reverb and Delay: Adding spatial depth and ambience. The correct amount will depend greatly on the song.

- Automation: Using automation to subtly adjust parameters (volume, panning, effects) over time to keep the listener engaged.

- Stereo Imaging: Creating a wide and immersive stereo field that gives a greater sense of space, while checking that the mix still holds together in mono.

- Final Mix Checks: Listening to the mix on various playback systems to ensure it translates well across different environments.

Mastering Workflow:

- Gain Staging: Setting the overall volume level for the final product.

- EQ: Subtle EQ adjustments to ensure optimal frequency balance across the entire mix.

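- Compression and Limiting: Raising the perceived loudness of the mix while preserving as much of its dynamic range as the genre and release format allow. This can often result in a more radio-ready sound. Streaming platforms typically normalize playback loudness, however, so over-limiting mostly costs punch without making the track louder for listeners.

- Stereo Widening: Making subtle width adjustments only where needed and confirming that the master remains mono-compatible.

- Dithering: Adding very low-level noise before reducing bit depth (for example, from 24-bit to 16-bit for CD). This turns quantization distortion into a smooth, far less objectionable noise floor.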

- Final Checks and Export: Listening on different systems for potential issues before exporting in the desired format.
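The dithering step can be sketched as follows for a reduction to 16-bit (CD) resolution. The sketch assumes simple TPDF (triangular) dither with no noise shaping. Mastering tools often add noise shaping, and this omits it. The example shows a signal quieter than one least-significant bit (LSB) vanishing under plain rounding. The same signal survives, on average, when dither is added first. The function name is hypothetical.

```python
import random

INT16_MAX = 32767


def to_int16(samples, dither=True, rng=None):
    """Quantise float samples in [-1.0, 1.0] to 16-bit integers.

    With dither=True, TPDF (triangular) dither spanning +/-1 LSB is added before
    rounding, trading signal-dependent quantisation distortion for a steady noise floor.
    """
    rng = rng or random.Random()
    out = []
    for x in samples:
        scaled = x * INT16_MAX
        if dither:
            scaled += rng.random() - rng.random()  # difference of two uniforms: triangular PDF
        out.append(max(-32768, min(INT16_MAX, round(scaled))))
    return out


# A constant level of 0.3 LSB: too quiet to survive plain rounding.
quiet = [0.3 / INT16_MAX] * 20_000
plain = to_int16(quiet, dither=False)
dithered = to_int16(quiet, rng=random.Random(1))
print(set(plain))                     # {0}: the signal has vanished
print(sum(dithered) / len(dithered))  # close to 0.3: preserved on average, under noise
```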

Throughout both processes, constant A/B comparison (comparing multiple versions) and critical listening are fundamental to ensure quality. My experience spans various genres, and I adjust my workflow based on the individual needs of each project.

Q 10. How familiar are you with different audio file formats (WAV, AIFF, MP3, etc.)?

I am very familiar with various audio file formats, each with its own strengths and weaknesses.

- WAV (Waveform Audio File Format): Usually stores uncompressed PCM audio, so no audio data is lost. It’s ideal for archiving and high-quality production work but results in large file sizes. It’s the gold standard for uncompressed audio.

- AIFF (Audio Interchange File Format): Another uncompressed PCM format, developed by Apple and traditionally favoured on Apple systems. At the same sample rate, bit depth, and channel count, its file sizes are essentially the same as WAV.

- MP3 (MPEG Audio Layer III): A lossy format, meaning some audio data is discarded during compression to achieve smaller file sizes. It’s widely used for streaming and online distribution. The loss is usually acceptable for casual listening but becomes more audible at lower bitrates.

- AAC (Advanced Audio Coding): A lossy format that offers generally better sound quality than MP3 at similar bitrates, making it popular for digital music distribution and streaming platforms.

- FLAC (Free Lossless Audio Codec): A lossless compressed format that decodes to audio bit-for-bit identical to the original. Its files are considerably smaller than WAV or AIFF, with the exact saving depending on the material.

The choice of file format depends heavily on the intended use. For mastering and archiving, lossless formats like WAV, AIFF, or FLAC are preferred. For distribution where file size is a major concern, lossy formats like MP3 or AAC are often used. I understand the trade-offs involved in each and choose accordingly.
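The size difference between these formats follows from simple arithmetic. Uncompressed PCM (as stored in WAV or AIFF) needs sample rate × bit depth × channels bits per second. The sketch below computes that and writes a short 16-bit stereo tone with Python's standard-library `wave` module. The helper names are illustrative.

```python
import io
import math
import struct
import wave


def pcm_bitrate_bps(sample_rate, bit_depth, channels):
    """Bitrate of uncompressed PCM audio in bits per second."""
    return sample_rate * bit_depth * channels


def pcm_size_bytes(sample_rate, bit_depth, channels, seconds):
    """Size of the raw PCM sample data in bytes (excluding any file header)."""
    return pcm_bitrate_bps(sample_rate, bit_depth, channels) * seconds // 8


def write_sine_wav(freq_hz=440.0, seconds=1, sample_rate=44_100, amplitude=0.5):
    """Write a 16-bit stereo sine tone into an in-memory WAV file and return its bytes."""
    frames = bytearray()
    for n in range(int(sample_rate * seconds)):
        value = int(amplitude * 32767 * math.sin(2 * math.pi * freq_hz * n / sample_rate))
        frames += struct.pack("<hh", value, value)  # little-endian left and right samples
    buf = io.BytesIO()
    with wave.open(buf, "wb") as w:
        w.setnchannels(2)
        w.setsampwidth(2)  # 2 bytes = 16 bits per sample
        w.setframerate(sample_rate)
        w.writeframes(bytes(frames))
    return buf.getvalue()


print(pcm_bitrate_bps(44_100, 16, 2) / 1000)  # 1411.2 kbps for CD-quality audio
print(pcm_size_bytes(44_100, 16, 2, 180))     # 31752000 bytes for a 3-minute track
```

At CD resolution that is about 1,411 kbps, roughly 4.4 times the 320 kbps of a high-bitrate MP3. That ratio is where lossy formats get their size advantage.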

Q 11. What is your experience with music notation software?

I have extensive experience with music notation software, primarily using Sibelius and Finale. My proficiency encompasses not only inputting and editing musical scores but also leveraging the software’s advanced features for analysis, printing, and audio playback.

Beyond basic score entry, my expertise includes:

- Advanced Notation Techniques: Creating complex scores with intricate rhythmic and melodic structures, including microtonal music and non-standard notation.

- Score Preparation for Print and Online Publishing: Ensuring professional quality scores meet industry standards.

- Audio Export and Playback: Generating high-quality audio from scores for playback and distribution.

- Analysis Tools: Using analysis tools built into the software to understand harmonic, melodic, and rhythmic structures.

- Score Collaboration and Sharing: Efficiently collaborating on scores with others using features such as file sharing and version control.

I often use music notation software during the composition process to assist in visualization, refinement, and collaboration with other musicians. It’s an integral part of my workflow, helping to bridge the gap between concept and final product.

Q 12. Describe your approach to sound design for video games or film.

My approach to sound design for video games or film starts with a deep understanding of the narrative and the emotional impact desired. It’s about creating sounds that are not only believable but also effectively support the storytelling.

My process generally involves:

- Understanding the Project’s Vision: Thorough review of scripts, storyboards, or game design documents to understand the intended mood and atmosphere.

- Sound Concept Creation: Developing a comprehensive sound design plan that outlines the types of sounds needed and their overall sonic palette.

- Sound Recording and Synthesis: Capturing field recordings, synthesizing sounds, or manipulating existing sounds to achieve the desired effects. This might include using libraries or creating custom sounds from scratch.

- Sound Processing and Manipulation: Employing various audio processing techniques (EQ, compression, reverb, delay) to shape sounds and create a cohesive soundscape.

- Implementation and Integration: Working closely with the game or film production team to integrate the sounds into the final product, considering factors such as game engine limitations or post-production workflow.

- Iterative Refinement: Continuous feedback and adjustment based on testing and collaboration with the project team.

For example, in a horror game, I might design unsettling ambient sounds using synthesized textures and distorted field recordings to create a sense of unease. Conversely, in an action movie, I might create powerful explosions using a combination of recorded explosions and synthesized elements, adding layers of detail and realism to improve the overall impact.

Q 13. How do you create realistic and immersive soundscapes?

Creating realistic and immersive soundscapes requires a multi-faceted approach that combines technical skill with artistic vision. Think of it as creating a believable sonic environment that engages the listener on an emotional level.

Key elements include:

- Layered Sounds: Soundscapes are rarely single elements. Layering multiple sounds creates depth and realism. For instance, a forest soundscape might include bird calls, rustling leaves, distant wind, and the subtle sounds of insects.

- Spatialization: The placement of sounds within a virtual space greatly impacts realism. This is achievable with panning (left-right placement) and more sophisticated spatial audio techniques.

- Dynamic Range: Utilizing a wide range of volume levels adds depth and realism. A soundscape should have quiet moments and loud moments to keep the listener engaged.

- Environmental Details: Small background sounds, such as footsteps, wind, or distant traffic noise, make a space feel lived-in and contribute to a cohesive feel.

- Sound Libraries and Sample Libraries: High-quality libraries provide a useful starting point for sound creation, but their material should be edited and modified to add personal touches.

- Field Recordings: Recording real-world sounds often provides a higher level of realism than synthesizing sounds from scratch.

I often combine field recordings with synthesized textures and manipulated sounds to create a unique and believable soundscape, constantly adjusting and refining the mix to achieve the desired atmosphere.
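Panning is the simplest spatialization tool, and the pan law matters. A constant-power (sine/cosine) law keeps perceived loudness steady as a source moves across the stereo field. The sketch below assumes that law. Many DAWs let you choose among several pan laws, so treat this as one option rather than a universal standard. The function names are hypothetical.

```python
import math


def constant_power_pan(pan):
    """Left/right gains for pan in [-1.0 (hard left), +1.0 (hard right)].

    A sine/cosine law keeps left**2 + right**2 == 1, so the centre position sits at
    about -3 dB per channel instead of dipping in level as a linear law would.
    """
    if not -1.0 <= pan <= 1.0:
        raise ValueError("pan must be between -1.0 and 1.0")
    angle = (pan + 1.0) * math.pi / 4  # map [-1, 1] onto [0, pi/2]
    return math.cos(angle), math.sin(angle)


def pan_mono(samples, pan):
    """Place a mono signal in the stereo field; returns (left, right) sample pairs."""
    left_gain, right_gain = constant_power_pan(pan)
    return [(s * left_gain, s * right_gain) for s in samples]


print(constant_power_pan(0.0))  # both gains about 0.707, i.e. roughly -3 dB
```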

Q 14. Explain your experience with spatial audio techniques.

My experience with spatial audio techniques is substantial, encompassing both traditional methods and the latest immersive technologies. Spatial audio goes beyond simple stereo panning; it aims to create a three-dimensional sound field that accurately positions sounds in space, enhancing the sense of immersion and realism.

I’m proficient in:

- Stereo Panning: The basic technique of placing sounds in a left-right field.

- Surround Sound (5.1, 7.1): Using multiple speakers to create a more immersive experience by placing sounds in relation to the listener. This creates a sense of presence.

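- Ambisonics: A full-sphere technique that encodes the sound field into spherical-harmonic components. First-order Ambisonics uses four channels (W, X, Y, and Z), and higher orders add channels and increase spatial resolution. Because the encoding is independent of any speaker layout, the same mix can be decoded to different speaker arrays or rendered binaurally for headphones.

- Binaural Recording: Recording audio with microphones placed in the ears of a dummy head (or a person). The recording captures the timing, level, and spectral cues the ears use to judge direction, and it is intended for headphone playback.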

- Software and Plugins: I’m experienced using various software and plugins for spatial audio processing, such as those within DAWs, specialized spatial audio mixing systems, and game engines.

I’ve worked on projects utilizing spatial audio to create immersive soundscapes for video games, virtual reality (VR) experiences, and interactive installations. The choice of technique depends on the project’s needs and the available technology. My understanding of the human auditory system and its ability to locate sounds enhances my ability to accurately convey spatial information, maximizing the listener’s immersive experience.
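Here is a sketch of first-order Ambisonics encoding and rotation, assuming the AmbiX convention (ACN channel order, SN3D normalisation). The older FuMa convention orders and scales the channels differently. The function names are hypothetical. `rotate_yaw` shows one attraction of the format: turning the entire scene is a simple operation on the encoded channels. That is useful, for example, for following a VR listener's head.

```python
import math


def encode_foa_ambix(sample, azimuth_deg, elevation_deg=0.0):
    """Encode a mono sample into first-order Ambisonics, AmbiX convention (ACN order, SN3D).

    Azimuth is counter-clockwise from straight ahead (+90 = hard left); elevation is
    upward from the horizontal plane. Returns the channels in ACN order (W, Y, Z, X).
    """
    az, el = math.radians(azimuth_deg), math.radians(elevation_deg)
    w = sample
    y = sample * math.sin(az) * math.cos(el)
    z = sample * math.sin(el)
    x = sample * math.cos(az) * math.cos(el)
    return w, y, z, x


def rotate_yaw(w, y, z, x, yaw_deg):
    """Rotate the whole sound field about the vertical axis by yaw_deg (counter-clockwise)."""
    a = math.radians(yaw_deg)
    x_rot = x * math.cos(a) - y * math.sin(a)
    y_rot = x * math.sin(a) + y * math.cos(a)
    return w, y_rot, z, x_rot


front = encode_foa_ambix(1.0, azimuth_deg=0.0)
print(front)                     # (1.0, 0.0, 0.0, 1.0)
print(rotate_yaw(*front, 90.0))  # approximately (1.0, 1.0, 0.0, 0.0): the source is now hard left
```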

Q 15. What is your familiarity with various synthesis techniques (subtractive, additive, FM, etc.)?

My understanding of synthesis techniques is comprehensive, encompassing subtractive, additive, frequency modulation (FM), granular, wavetable, and physical modeling synthesis.

Subtractive synthesis, the most common, starts with a harmonically rich waveform (often a sawtooth or square wave) and uses filters to remove unwanted frequencies, shaping the timbre. Think of a sculptor chipping away at a block of marble to reveal a statue. Additive synthesis, conversely, builds complex sounds by summing pure sine waves, each with its own frequency and amplitude. It’s like an orchestra, where each instrument (sine wave) contributes to the overall sound.

FM synthesis modulates one oscillator’s frequency with another oscillator running at audio rate. This creates sidebands at the carrier frequency plus and minus multiples of the modulator frequency. The result is the rich, evolving sounds of classic synths like the Yamaha DX7. The ratio between the two frequencies determines whether the result is harmonic or inharmonic, and the modulation depth (index) controls how bright it is.

Granular synthesis splits sound into tiny fragments (grains), typically 1 to 100 milliseconds long, and rearranges, layers, or stretches them, offering unique textural control. Wavetable synthesis stores a series of single-cycle waveforms and scans or morphs between them, offering a blend of flexibility and efficiency. Finally, physical modeling synthesis simulates the physics of real-world instruments (vibrating strings, air columns, resonant bodies), which can produce highly realistic and expressive sounds.

- Subtractive Example: A classic analog synth using a low-pass filter to sculpt a sawtooth wave into a warmer sound.

- Additive Example: A software synthesizer combining multiple sine waves to create a bell-like tone.

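- FM Example: Creating metallic, bell-like, or shimmering sounds by modulating the frequency of one oscillator with another audio-rate oscillator, typically at a non-integer frequency ratio. (Modulating with a low-frequency oscillator produces vibrato instead.)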
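The FM and additive examples above can be sketched in a few lines. This illustrative sketch uses function names of our own. It contains a two-operator FM voice, a helper that lists where FM's sidebands fall, and a bare additive oscillator. The sideband helper shows why the frequency ratio matters. Simple ratios give harmonic spectra, while non-integer ratios give the inharmonic, bell-like spectra FM is known for.

```python
import math


def fm_tone(carrier_hz, modulator_hz, index, seconds, sample_rate=48_000):
    """Two-operator FM: sin(2*pi*fc*t + I*sin(2*pi*fm*t)).

    Technically this is phase modulation, which is how DX7-style "FM" synths implement it.
    """
    return [
        math.sin(2 * math.pi * carrier_hz * (n / sample_rate)
                 + index * math.sin(2 * math.pi * modulator_hz * (n / sample_rate)))
        for n in range(int(seconds * sample_rate))
    ]


def fm_sideband_frequencies(carrier_hz, modulator_hz, pairs):
    """Frequencies fc +/- k*fm for k = 1..pairs (negative ones fold back as positive)."""
    freqs = {carrier_hz}
    for k in range(1, pairs + 1):
        freqs.add(carrier_hz + k * modulator_hz)
        freqs.add(abs(carrier_hz - k * modulator_hz))
    return sorted(freqs)


def additive_tone(partials, seconds, sample_rate=48_000):
    """Additive synthesis: a sum of sine partials given as (frequency_hz, amplitude) pairs."""
    return [
        sum(amp * math.sin(2 * math.pi * f * n / sample_rate) for f, amp in partials)
        for n in range(int(seconds * sample_rate))
    ]


print(fm_sideband_frequencies(100, 200, 2))  # [100, 300, 500]: odd harmonics of 100 Hz
print(fm_sideband_frequencies(200, 280, 2))  # [80, 200, 360, 480, 760]: inharmonic, bell-like
```

How much energy lands in each sideband depends on the modulation index (through Bessel functions). That is why sweeping the index with an envelope makes FM tones evolve over time.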


Q 16. What experience do you have with music plugins and effects processing?

My experience with music plugins and effects processing is extensive. I’m proficient in using a wide range of plugins, from compressors and equalizers to reverbs, delays, and more specialized effects like granular processors and vocoders. I understand the signal flow within a Digital Audio Workstation (DAW) and how different plugins interact. For instance, I know when it’s beneficial to place an equalizer before or after a compressor. Cutting low-frequency rumble before the compressor keeps it from triggering unwanted gain reduction, while tonal boosts placed after compression aren’t squashed by it. I’m familiar with both commercially available plugins (e.g., Waves, Universal Audio, FabFilter) and open-source options. I frequently experiment with different plugin combinations to achieve unique and creative results, tailoring my choices to the specific genre and sonic aesthetic of the project. Beyond basic application, I have a deep understanding of the algorithms underpinning many of these plugins and can troubleshoot issues effectively.

For example, in a recent project, I used a combination of a multiband compressor to control dynamics across different frequency ranges, a tape saturation plugin to add warmth and character, and a convolution reverb to create a realistic space. This layered approach is fundamental to achieving a professional polish.
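Convolution reverb works by convolving the dry signal with an impulse response (IR) recorded in a real space. The sketch below uses direct time-domain convolution. To keep the example self-contained, it assumes a synthetic decaying-noise IR in place of a measured one. The function names are hypothetical. Commercial convolution reverbs use FFT-based (often partitioned) convolution, because the direct form costs one multiply per input sample per IR sample.

```python
import random


def convolve(dry, impulse_response):
    """Direct time-domain convolution: each input sample adds a scaled copy of the IR."""
    out = [0.0] * (len(dry) + len(impulse_response) - 1)
    for i, x in enumerate(dry):
        if x == 0.0:
            continue
        for j, h in enumerate(impulse_response):
            out[i + j] += x * h
    return out


def synthetic_ir(seconds, rt60, sample_rate=48_000, seed=0):
    """Exponentially decaying noise: a crude stand-in for a measured room impulse response.

    The envelope falls by 60 dB (a factor of 1000) every rt60 seconds.
    """
    rng = random.Random(seed)
    return [
        rng.uniform(-1.0, 1.0) * 10 ** (-3.0 * (n / sample_rate) / rt60)
        for n in range(int(seconds * sample_rate))
    ]


def convolution_reverb(dry, ir, wet_mix=0.3):
    """Blend the dry signal with its convolution against the IR."""
    wet = convolve(dry, ir)
    padded_dry = dry + [0.0] * (len(wet) - len(dry))
    return [(1.0 - wet_mix) * d + wet_mix * w for d, w in zip(padded_dry, wet)]


ir = synthetic_ir(0.5, rt60=1.2, sample_rate=8_000)
click = [1.0] + [0.0] * 799
out = convolution_reverb(click, ir)
print(len(out))  # 4799: the output lasts as long as the input plus the reverb tail
```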

Q 17. Describe your experience with music algorithms and music information retrieval.

My experience with music algorithms and music information retrieval (MIR) involves both practical application and theoretical understanding. I’ve worked on projects involving automatic music transcription (converting audio recordings into notes or notation), audio fingerprinting for music identification, and the development of tools for music analysis and generation. I’m familiar with common algorithms used in MIR. These include dynamic time warping, for aligning musical sequences that unfold at different tempos, and various machine learning techniques used for tasks such as genre classification and melody extraction. For example, I implemented a system for automatically identifying the key and tempo of a piece of music using spectral analysis and signal processing techniques. This involved pre-processing the audio, extracting relevant features (like chroma features representing the harmonic content), and feeding this data to a machine learning model for classification. I have also used MIR libraries and APIs to leverage existing algorithms and tools in my work.
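Dynamic time warping is compact enough to sketch directly. The version below is the textbook dynamic-programming formulation, applied to pitch sequences, and the function name is ours. In MIR practice, DTW is usually run on feature frames such as chroma vectors, with a vector distance in place of `abs`.

```python
def dtw_distance(a, b, dist=lambda x, y: abs(x - y)):
    """Classic O(len(a) * len(b)) dynamic time warping cost between two sequences."""
    inf = float("inf")
    n, m = len(a), len(b)
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = dist(a[i - 1], b[j - 1])
            cost[i][j] = d + min(cost[i - 1][j],      # a advances while b holds
                                 cost[i][j - 1],      # b advances while a holds
                                 cost[i - 1][j - 1])  # both advance together
    return cost[n][m]


melody = [60, 62, 64, 65]  # C D E F as MIDI note numbers
slow_performance = [60, 60, 62, 62, 64, 64, 65, 65]
other_tune = [60, 62, 64, 67]
print(dtw_distance(melody, slow_performance))  # 0.0: same tune, different tempo
print(dtw_distance(melody, other_tune))        # 2.0
```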

Q 18. How familiar are you with audio signal processing techniques?

My familiarity with audio signal processing techniques is substantial. I understand concepts such as Fourier transforms (for frequency analysis), filtering (high-pass, low-pass, band-pass), convolution (for reverb and other effects), and time-frequency analysis. I’m comfortable working with both time-domain and frequency-domain representations of audio signals. For instance, I’ve used Fast Fourier Transforms (FFTs) to analyze the frequency content of audio signals, identifying dominant frequencies and harmonics. This is crucial for tasks such as equalization, noise reduction, and the design of custom audio effects. My understanding extends to advanced techniques like wavelet transforms. These provide multi-resolution analysis, with finer time resolution at high frequencies and finer frequency resolution at low frequencies, rather than the fixed trade-off of a short-time FFT. This makes them especially useful for transient signals.

A practical example involves designing a custom noise gate plugin with frequency-dependent thresholds. This required an understanding of thresholding techniques to identify and attenuate unwanted noise while preserving the desired audio. I used FFTs to build a noise profile from a noise-only passage and time-domain level detection to decide when the gate should open and close. This allowed me to target the noise effectively.
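As an illustration of the FFT-based analysis described above, here is a textbook recursive radix-2 Cooley-Tukey FFT with a Hann window, used to find the dominant frequency of a signal. It is a teaching sketch that assumes power-of-two frame lengths, and the function names are ours. Production code would call an optimised library (for example NumPy's `numpy.fft` or FFTW) instead.

```python
import cmath
import math


def fft(x):
    """Recursive radix-2 Cooley-Tukey FFT. len(x) must be a power of two."""
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a non-zero power of two")
    if n == 1:
        return [complex(x[0])]
    even = fft(x[0::2])
    odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * math.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def hann(n):
    """Periodic Hann window, which reduces spectral leakage from the frame edges."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / n) for i in range(n)]


def dominant_frequency(samples, sample_rate):
    """Frequency (Hz) of the strongest non-DC bin in a Hann-windowed FFT of `samples`."""
    n = len(samples)
    spectrum = fft([s * w for s, w in zip(samples, hann(n))])
    magnitudes = [abs(c) for c in spectrum[1 : n // 2 + 1]]
    k = 1 + max(range(len(magnitudes)), key=magnitudes.__getitem__)
    return k * sample_rate / n


fs, n = 8_000, 1_024
tone = [math.sin(2 * math.pi * 1_000 * t / fs) for t in range(n)]
print(dominant_frequency(tone, fs))  # 1000.0 (bin 128; bin spacing is fs/n = 7.8125 Hz)
```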

Q 19. What experience do you have programming audio applications?

I have significant experience programming audio applications, primarily using C++, Python, and Max/MSP. I’ve developed custom audio plugins, signal processing tools, and even small-scale DAWs. My C++ experience allows me to write highly efficient code for real-time audio processing, while Python facilitates rapid prototyping and data analysis. Max/MSP, a visual programming environment, enables quick experimentation and development of interactive audio applications. I’m also proficient with frameworks and libraries such as JUCE and PortAudio, as well as the SuperCollider environment, all of which are widely used in audio development. For example, I once created a custom plugin in C++ using the JUCE framework that implemented a novel granular synthesis algorithm. This involved a deep understanding of buffer management, signal processing, and the intricacies of plugin development within a DAW environment. It also required careful optimization to maintain low latency and avoid unwanted artifacts.
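Granular synthesis can be illustrated with a basic, deliberately non-novel version. Hann-windowed grains are read from around a chosen position in a source buffer, with random jitter, and overlap-added into the output. Everything here, including the function name and parameters, is a hypothetical illustration, not the plugin described above. A real-time JUCE implementation would do the same work inside the audio callback on fixed-size buffers, without allocating memory.

```python
import math
import random


def granulate(source, out_len, grain_len=1024, hop=256, position=0.5, jitter=0.1, seed=None):
    """Basic granular synthesis: overlap-add Hann-windowed grains taken from `source`.

    position: where in the source (0.0-1.0) grains are read from.
    jitter: random spread around that position for each grain.
    """
    if grain_len > len(source):
        raise ValueError("grain_len must not exceed the source length")
    rng = random.Random(seed)
    window = [0.5 - 0.5 * math.cos(2 * math.pi * i / grain_len) for i in range(grain_len)]
    out = [0.0] * out_len
    max_start = len(source) - grain_len
    for out_start in range(0, out_len, hop):
        centre = min(max(position + rng.uniform(-jitter, jitter), 0.0), 1.0)
        src_start = int(centre * max_start)
        for i in range(grain_len):
            j = out_start + i
            if j >= out_len:
                break
            out[j] += source[src_start + i] * window[i]
    # Overlapping periodic Hann windows sum to grain_len / (2 * hop); divide that out.
    overlap_gain = grain_len / (2 * hop)
    return [s / overlap_gain for s in out]


source = [math.sin(2 * math.pi * 220 * n / 48_000) for n in range(48_000)]
cloud = granulate(source, out_len=48_000, position=0.25, jitter=0.05, seed=42)
```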

Q 20. Describe your understanding of psychoacoustics.

My understanding of psychoacoustics is strong, encompassing the perception of sound and its relationship to the physical properties of audio signals. I know how the human auditory system processes sound, including aspects such as frequency sensitivity, loudness perception, and spatial hearing. This knowledge is invaluable in music production. It lets me make informed decisions about mixing and mastering so that the final product sounds balanced and pleasing to the listener. For instance, I understand the equal-loudness contours first measured by Fletcher and Munson (since refined and standardized as ISO 226). These show that our sensitivity to low and very high frequencies falls off at lower listening levels. This informs my approach to equalization: I mix at a moderate, consistent level and check the balance at several volumes, because a mix that sounds full when played loud can sound thin when played quietly. I also apply this knowledge when designing audio effects. For example, I aim for reverbs that convincingly simulate the spatial characteristics of a room, taking into account how our brains perceive sound localization and reverberation.

A practical application lies in mastering. Knowing the limitations of human hearing allows me to carefully sculpt the frequency spectrum and dynamics, ensuring that the music translates well across different playback systems without introducing unwanted artifacts. I also draw on psychoacoustic masking, where a louder sound hides quieter sounds close to it in frequency. It guides how I EQ competing instruments and how hard I apply dynamic range compression.
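Equal-loudness behaviour is easier to grasp with numbers. The sketch below evaluates the standard A-weighting curve (IEC 61672), which was derived from roughly the 40-phon equal-loudness contour. A-weighting is only a coarse stand-in for the full ISO 226 contours, which also change shape with level. Treat it as an illustration of low-frequency insensitivity at moderate levels rather than a mixing tool. The function name is ours.

```python
import math


def a_weighting_db(f_hz):
    """A-weighting gain in dB at frequency f_hz (IEC 61672 analytic form, ~0 dB at 1 kHz)."""
    f2 = f_hz ** 2
    ra = (12194.0 ** 2 * f2 ** 2) / (
        (f2 + 20.6 ** 2)
        * math.sqrt((f2 + 107.7 ** 2) * (f2 + 737.9 ** 2))
        * (f2 + 12194.0 ** 2)
    )
    return 20 * math.log10(ra) + 2.0


# Low frequencies are weighted down heavily: the ear is far less sensitive there at moderate levels.
for f in (50, 100, 1_000, 4_000, 16_000):
    print(f, round(a_weighting_db(f), 1))
```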

Q 21. How do you handle challenging clients or tight deadlines in a music production environment?

Handling challenging clients and tight deadlines is a crucial skill in music production. My approach is proactive and communicative. Firstly, I establish clear expectations and communication channels with clients from the outset, ensuring they understand the scope of the project, timelines, and potential challenges. Regular check-ins help to prevent misunderstandings and allow for adjustments as needed. When faced with tight deadlines, I prioritize tasks effectively, focusing on core elements first and using time management techniques like the Pomodoro method to maintain focus and productivity. I’m also adept at delegating tasks where appropriate and collaborating with other professionals to ensure timely completion. Finally, I maintain a calm and professional demeanor even under pressure, allowing for creative problem-solving and avoiding unnecessary conflicts.

For instance, on one project, a client requested significant changes to a track very late in the production process. Instead of panicking, I immediately communicated the impact of these changes on the timeline, proposed solutions (like scaling back on less important aspects), and worked collaboratively to find a revised schedule that still met their needs. The transparent communication prevented frustration and ensured we delivered a high-quality product, albeit with a slightly adjusted timeline.

Q 22. Explain your experience with audio editing software.

My experience with audio editing software spans over a decade, encompassing a wide range of DAWs (Digital Audio Workstations). I’m highly proficient in industry-standard software like Pro Tools, Ableton Live, Logic Pro X, and Cubase. My expertise extends beyond basic editing to include advanced techniques such as:

- Multitrack editing and mixing: I routinely handle projects with numerous audio tracks, employing techniques like gain staging, EQ, compression, and automation to achieve a polished and professional sound. For instance, in a recent project involving a symphony orchestra recording, I meticulously balanced individual instruments and sections, carefully addressing phase issues and ensuring optimal clarity across the frequency spectrum.

- Audio restoration and mastering: I’m skilled in cleaning up noisy recordings, using tools like noise reduction, de-clickers, and spectral editing. I also have a strong understanding of mastering principles, including dynamic range control, equalization, and loudness maximization, essential for preparing music for distribution.

- MIDI editing and sequencing: Beyond audio, I’m proficient in working with MIDI data, composing, editing, and arranging musical scores using virtual instruments and creating complex MIDI automation sequences.
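Much of the gain staging and mastering work described above comes down to decibel arithmetic. The Python sketch below is illustrative only. It assumes floating-point samples where 1.0 is full scale (0 dBFS), and the function names are hypothetical rather than taken from any DAW.

```python
import math

def amp_to_dbfs(amplitude: float) -> float:
    """Convert a linear amplitude (1.0 = full scale) to dBFS."""
    if amplitude <= 0.0:
        return float("-inf")
    return 20.0 * math.log10(amplitude)

def dbfs_to_amp(dbfs: float) -> float:
    """Convert a dBFS level back to a linear amplitude."""
    return 10.0 ** (dbfs / 20.0)

def peak_normalize(samples: list[float], target_dbfs: float = -1.0) -> list[float]:
    """Scale samples so the loudest absolute peak sits at target_dbfs."""
    peak = max((abs(s) for s in samples), default=0.0)
    if peak == 0.0:
        return list(samples)  # silence: nothing to scale
    gain = dbfs_to_amp(target_dbfs) / peak
    return [s * gain for s in samples]

# A quiet take peaking at 0.25 (about -12 dBFS), raised so it peaks at -6 dBFS.
quiet_take = [0.0, 0.1, -0.25, 0.2]
louder = peak_normalize(quiet_take, target_dbfs=-6.0)
print(round(amp_to_dbfs(max(abs(s) for s in louder)), 2))  # -6.0
```

Peak normalization only controls the highest sample value. Loudness targets for distribution are usually measured in LUFS (ITU-R BS.1770), which this sketch does not attempt.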

I’m comfortable working with both linear and non-linear editing workflows, adapting my approach to the specific demands of each project. My proficiency is further enhanced by continuous exploration of new features and plugins within these DAWs, allowing me to stay ahead of the curve in audio technology.

Q 23. Describe your process for troubleshooting audio equipment malfunctions.

Troubleshooting audio equipment malfunctions requires a systematic approach. My process begins with identifying the problem:

- Identify the symptoms: Is there no sound? Distortion? Intermittent signal? Pinpointing the exact nature of the issue is crucial. For example, if only one channel is affected, the problem is likely isolated to that specific channel’s equipment.

- Isolate the source: I methodically check each component in the signal chain, including cables, connectors, audio interfaces, microphones, speakers, and any processing units. I might start by swapping cables to rule out a faulty connection. If more than one interface is available, I try a different one to determine whether the interface itself is faulty.

- Utilize test signals: Using a test tone generator or a simple signal source helps determine where the signal path breaks down. If the signal is lost after a certain point, I know the problem lies within that segment.

- Check power supplies and connections: I make sure all equipment is receiving adequate power and that every connection is secure. A loose connection or inadequate power supply is often the root cause.

- Consult documentation and online resources: If the issue persists, I refer to the manufacturer’s documentation, user manuals, and online forums for troubleshooting tips and known problems.
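The test-signal step can be illustrated with a small simulation. This is a sketch under stated assumptions: a 1 kHz sine at -18 dBFS as the reference tone (a common but not universal alignment level), a 48 kHz sample rate, and a hypothetical signal chain in which each device is stood in for by a Python function. In a real session, the reading at each point would come from a meter or a pair of headphones.

```python
import math

SAMPLE_RATE = 48_000  # Hz, assumed session rate

def sine_tone(freq_hz=1000.0, level_dbfs=-18.0, seconds=0.5, sr=SAMPLE_RATE):
    """Generate a sine test tone whose peak sits at level_dbfs."""
    amp = 10.0 ** (level_dbfs / 20.0)
    n = int(seconds * sr)
    return [amp * math.sin(2 * math.pi * freq_hz * i / sr) for i in range(n)]

def rms_dbfs(samples):
    """RMS level of a buffer in dBFS (-inf for silence or an empty buffer)."""
    if not samples:
        return float("-inf")
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    return 20.0 * math.log10(rms) if rms > 0 else float("-inf")

def find_break(stages, signal, floor_dbfs=-60.0):
    """Pass the tone through each stage in order and return the name of the
    first stage whose output falls below floor_dbfs, or None if all pass."""
    for name, process in stages:
        signal = process(signal)
        if rms_dbfs(signal) < floor_dbfs:
            return name
    return None

# Hypothetical chain: each stage is a stand-in for a real device.
chain = [
    ("cable A", lambda x: x),
    ("interface input", lambda x: [s * 0.9 for s in x]),
    ("faulty patch cable", lambda x: [0.0] * len(x)),  # simulated dead link
    ("monitor amp", lambda x: x),
]
print(find_break(chain, sine_tone()))  # faulty patch cable
```

The design mirrors the manual process: the first point where the level collapses marks the segment to inspect.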

If the issue is complex or beyond my ability to resolve, I’ll seek help from a qualified technician. This systematic, step-by-step process has allowed me to resolve countless audio issues quickly and efficiently.

Q 24. What is your experience with virtual instruments and sample libraries?

I have extensive experience with virtual instruments (VIs) and sample libraries and use them throughout my music production workflow. My familiarity includes:

- Software instruments: I’m proficient in using a broad range of VIs, encompassing synthesizers (analog modeling, wavetable, subtractive, FM), samplers (Kontakt, HALion), and orchestral libraries (Vienna Symphonic Library, Spitfire Audio). I understand the nuances of sound design within these instruments, shaping sounds creatively with oscillators, filters, envelopes, and LFOs.

- Sample library management: I have experience organizing and managing large sample libraries, often utilizing library management software to efficiently browse and access various sounds. For instance, in a recent project, I used Kontakt’s built-in library management to streamline sample recall and editing.

- Sample manipulation and sound design: I’m adept at manipulating and processing samples to create unique and custom sounds. This involves techniques like time-stretching, pitch-shifting, granular synthesis, and using effects to create textural elements and unique soundscapes.
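To make the envelope part of sound design concrete, here is a minimal linear ADSR (attack, decay, sustain, release) envelope in Python. It assumes straight-line segments and a release that starts from whatever level the note had reached; real synthesizers often use exponential curves, so treat this as a sketch rather than a description of how any particular instrument works.

```python
import math

def adsr(attack, decay, sustain, release, note_length, sr=48_000):
    """Linear ADSR envelope (values 0.0-1.0), one value per sample.

    attack, decay, release and note_length are in seconds; sustain is a
    level between 0 and 1. The note is held for note_length, then released.
    """
    held = round(note_length * sr)
    a = round(attack * sr)
    d = round(decay * sr)
    r = round(release * sr)
    env = []
    for i in range(held):
        if i < a:
            level = i / a                                # attack: 0 -> 1
        elif i < a + d:
            level = 1.0 - (1.0 - sustain) * (i - a) / d  # decay: 1 -> sustain
        else:
            level = sustain                              # sustain while held
        env.append(level)
    start = env[-1] if env else 0.0  # release from wherever the note got to
    for i in range(r):
        env.append(start * (1.0 - (i + 1) / r))          # release: -> 0
    return env

# Shape a 220 Hz sine with a short attack and a 60% sustain level.
env = adsr(attack=0.01, decay=0.1, sustain=0.6, release=0.2, note_length=0.5)
tone = [e * math.sin(2 * math.pi * 220 * n / 48_000) for n, e in enumerate(env)]
```

Shortening the attack gives a plucky transient; lengthening attack and release turns the same oscillator into a slow pad.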

My ability to blend VIs and sample libraries with acoustic recordings allows for rich and complex musical textures, adding depth and dimension to my compositions and arrangements.

Q 25. How familiar are you with various audio hardware interfaces?

My familiarity with audio hardware interfaces is comprehensive. I’ve worked with various interfaces from manufacturers such as Focusrite, Universal Audio, Antelope Audio, RME, and MOTU. My understanding encompasses the technical specifications and practical applications of these interfaces, including:

- Different connectivity options: I understand the differences between Thunderbolt, USB, and FireWire connections and their implications for latency and bandwidth, as well as how ADAT optical links (up to eight channels at 44.1/48 kHz) can expand an interface’s input count. For high-channel-count projects, I often opt for Thunderbolt interfaces because of their greater bandwidth.

- Analog-to-digital and digital-to-analog conversion: I understand the importance of high-quality converters in maintaining audio fidelity. Factors such as sample rate, bit depth, and dynamic range significantly impact audio quality, and I choose interfaces with specifications that meet the demands of each project.

- Preamplification and signal routing: Many interfaces incorporate high-quality preamps. I’m skilled in using these preamps to shape the sound of microphones and instruments. Understanding signal routing allows me to efficiently manage multiple inputs and outputs.

- Monitoring and headphone management: I’m familiar with using different monitoring options including low-latency monitoring, talkback microphones, and dedicated headphone outputs, essential for efficient and comfortable workflow during recording and mixing.
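The latency side of choosing an interface is simple arithmetic: one buffer of N samples at sample rate f_s takes N / f_s seconds to fill. The sketch below assumes a simplified model (one input buffer, one output buffer, plus an optional fixed converter overhead you supply); actual round-trip figures depend on the driver and converters and are usually somewhat higher.

```python
def buffer_latency_ms(buffer_samples: int, sample_rate: int) -> float:
    """Time represented by one audio buffer, in milliseconds."""
    return 1000.0 * buffer_samples / sample_rate

def round_trip_estimate_ms(buffer_samples: int, sample_rate: int,
                           converter_ms: float = 0.0) -> float:
    """Simplified round trip: one input buffer + one output buffer plus an
    assumed fixed A/D + D/A overhead. Real drivers often add more."""
    return 2 * buffer_latency_ms(buffer_samples, sample_rate) + converter_ms

for size in (64, 128, 256, 512):
    print(f"{size:>4} samples @ 48 kHz: {buffer_latency_ms(size, 48_000):.2f} ms")
```

At 48 kHz, a 256-sample buffer works out to about 5.33 ms; doubling the sample rate halves that figure for the same buffer size, which is why tracking sessions favor small buffers and mixing sessions can afford larger ones.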

The selection of the appropriate interface depends on the project’s complexity and specific requirements; I always choose the interface that optimizes audio quality and workflow.

Q 26. Describe your understanding of digital signal processing concepts such as convolution and filtering.

Digital Signal Processing (DSP) concepts such as convolution and filtering are fundamental to my work. Let’s break them down:

- Convolution: This mathematical operation combines two signals: each sample of the input triggers a scaled, time-shifted copy of the second signal (the impulse response), and all the copies are summed. In audio, it’s commonly used to simulate the response of a physical system, like a room or a loudspeaker. A convolution reverb plugin, for example, convolves the audio with a pre-recorded impulse response (IR) of a room to apply that room’s acoustic character, so the audio sounds as if it had been played in that specific room.

- Filtering: This involves modifying the frequency content of an audio signal. Filters can be used to remove unwanted frequencies (like noise), boost certain frequencies (like enhancing a vocal’s presence), or shape the overall tonal balance. Common filter types include low-pass, high-pass, band-pass, and notch filters. For instance, a high-pass filter can be used to remove low-frequency rumble from a microphone recording, while an EQ could be used to sculpt the tone of a guitar.
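Both ideas can be written in a few lines of Python. This sketch shows direct convolution, y[n] = Σ x[k]·h[n−k], and a first-order high-pass filter derived from an analog RC circuit. The toy impulse response and the 80 Hz cutoff are illustrative assumptions; convolution reverbs in practice use FFT-based convolution for speed, and EQ plugins typically offer steeper, higher-order filters.

```python
import math

def convolve(signal, impulse_response):
    """Direct convolution: y[n] = sum over k of x[k] * h[n - k].

    Each input sample adds a scaled, shifted copy of the impulse response
    to the output. Output length is len(signal) + len(impulse_response) - 1.
    """
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for k, x in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[k + j] += x * h
    return out

def high_pass(signal, cutoff_hz, sr=48_000):
    """First-order (6 dB/octave) high-pass from a discretized RC circuit:
    y[n] = alpha * (y[n-1] + x[n] - x[n-1])."""
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    dt = 1.0 / sr
    alpha = rc / (rc + dt)
    out, prev_x, prev_y = [], 0.0, 0.0
    for x in signal:
        y = alpha * (prev_y + x - prev_x)
        out.append(y)
        prev_x, prev_y = x, y
    return out

# A single click through a toy three-tap "room" returns the room itself.
click = [1.0, 0.0, 0.0]
toy_ir = [0.5, 0.25, 0.125]  # hypothetical, decaying impulse response
print(convolve(click, toy_ir))  # [0.5, 0.25, 0.125, 0.0, 0.0]

# An 80 Hz high-pass removes a constant (DC) offset, an extreme case of rumble.
rumble_free = high_pass([1.0] * 48_000, cutoff_hz=80.0)
```

The click example is the reason an impulse response works at all: whatever the room does to a single click, convolution applies to every sample of the dry signal.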

Understanding these DSP concepts is crucial for effective audio editing, mixing, and mastering. I apply these principles daily to refine audio and achieve the desired sonic results. For example, I might use a de-esser (a compressor that reacts only to the sibilant frequency range) to reduce harsh sibilance in vocals, or a multiband compressor to dynamically control different frequency ranges.

Q 27. Explain your experience with automating tasks within a DAW.

Automating tasks within a DAW is essential for efficiency and consistency in music production. My experience encompasses various automation techniques:

- Track automation: I routinely automate parameters such as volume, pan, EQ, and effects sends to create dynamic and evolving soundscapes. For example, I might automate the volume of a synth pad to gradually increase throughout a song section.

- MIDI automation: I use MIDI automation to control parameters of virtual instruments, creating complex rhythmic and melodic variations. This includes automating synth parameters like cutoff frequency, resonance, or LFO speed to add movement and evolution to sounds.

- Using automation clips and clip envelopes: FL Studio’s automation clips and Ableton Live’s clip envelopes, for example, offer a visual and flexible way to create intricate automation patterns. These can easily be copied and pasted, creating consistent automation across different parts of a project.

- Macros and shortcuts: I utilize keyboard shortcuts and custom macros to speed up repetitive tasks. This makes the entire workflow significantly more efficient.
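Under the hood, an automation lane is essentially a list of breakpoints with values interpolated between them. The sketch below assumes straight-line interpolation and holds the first or last value outside the lane; many DAWs also offer curved segments, and the pad-volume breakpoints are hypothetical.

```python
from bisect import bisect_right

def automation_value(breakpoints, t):
    """Linearly interpolate an automation lane at time t (seconds).

    breakpoints is a list of (time, value) pairs sorted by time. Before the
    first point or after the last, the nearest point's value is held.
    """
    times = [p[0] for p in breakpoints]
    if t <= times[0]:
        return breakpoints[0][1]
    if t >= times[-1]:
        return breakpoints[-1][1]
    i = bisect_right(times, t)  # times[i-1] <= t < times[i]
    (t0, v0), (t1, v1) = breakpoints[i - 1], breakpoints[i]
    return v0 + (v1 - v0) * (t - t0) / (t1 - t0)

# Hypothetical pad swell: -24 dB at 0 s, rising to -6 dB at 16 s, then held.
pad_volume = [(0.0, -24.0), (16.0, -6.0), (32.0, -6.0)]
print(automation_value(pad_volume, 8.0))  # -15.0
```

Because bisect_right lands after any breakpoints that share a timestamp, two points at the same time produce an instant jump rather than a division by zero.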

Automation isn’t just about saving time; it allows for more creative control and the ability to achieve subtle nuances that would be difficult or impossible to achieve manually. Mastering automation techniques is key to professional and efficient music production.

Q 28. What is your experience with collaborative music production workflows?

I have extensive experience in collaborative music production workflows, having worked on numerous projects involving multiple musicians, engineers, and producers. My experience includes:

- Cloud-based collaboration platforms: I’m comfortable using platforms like Dropbox, Google Drive, and cloud-based DAWs that allow for seamless file sharing and version history. This ensures that everyone has access to the latest version of the project and that changes are tracked.

- Session file management: I understand the importance of well-organized session files to facilitate smooth collaboration. Clear naming conventions, consistent track labeling, and separate folders for individual tracks and stems keep the workflow efficient.

- Version control and backup strategies: I use version control systems (like Git, ideally with Git LFS for large audio files) or simply maintain regular backups to avoid data loss and to revert to previous versions if needed. This is crucial to safeguard creative work and prevent conflicts in shared projects.

- Communication and feedback: Effective communication is paramount in collaborative settings. I actively participate in discussions, provide and receive constructive feedback, and ensure everyone’s input is considered. Tools like video conferencing and online chat improve collaborative interaction.
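For the regular-backups half of that strategy, a timestamped snapshot of the session folder is often enough. The following Python sketch is one possible setup under stated assumptions, not a replacement for Git or a cloud service’s version history; the folder names in the commented usage line are hypothetical.

```python
import shutil
from datetime import datetime
from pathlib import Path

def snapshot_session(session_dir, backup_root, label=""):
    """Copy a whole session folder to
    backup_root/<session name>_<YYYYmmdd-HHMMSS>[_<label>] and return the path.

    copytree refuses to write into an existing folder, so an earlier
    snapshot is never silently overwritten.
    """
    session = Path(session_dir)
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    name = f"{session.name}_{stamp}" + (f"_{label}" if label else "")
    dest = Path(backup_root) / name
    shutil.copytree(session, dest)  # also creates backup_root if missing
    return dest

# Hypothetical usage before a mix revision:
# snapshot_session("SongTitle_Session", "Backups", label="pre-mix")
```

Labels such as "pre-mix" or "client-v2" make it easy to find the snapshot that matches a given round of feedback.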

Successful collaborative projects require meticulous organization, clear communication, and a collaborative mindset. I thrive in these settings, contributing my skills and experience to create cohesive and high-quality musical outputs.

Key Topics to Learn for Your Music and Technology Interview

- Digital Audio Workstations (DAWs): Understanding the core functionalities of popular DAWs like Ableton Live, Logic Pro X, Pro Tools, or Reaper. This includes signal flow, MIDI processing, audio editing, and mixing techniques.

- Audio Signal Processing: Grasping concepts like sampling rate, bit depth, quantization, dynamic range compression, equalization, and reverb. Be prepared to discuss their practical applications in music production and post-production.

- Music Notation and Theory: Demonstrate a solid understanding of musical notation, scales, chords, and basic music theory. This is crucial for effectively communicating musical ideas within a technological context.

- Programming and Scripting (for Music Tech): Familiarity with Python or with visual programming environments like Max/MSP and Pure Data is highly valuable for automating tasks, creating custom plugins, or developing interactive music experiences. Highlight any relevant projects.

- Sound Design and Synthesis: Explore subtractive, additive, and FM synthesis techniques. Understanding how to manipulate sound using virtual instruments and effects is essential.

- Music Technology Hardware: Show understanding of various hardware components, their functionalities, and their integration within a digital audio workflow (e.g., audio interfaces, MIDI controllers, synthesizers).

- Algorithmic Composition and AI in Music: Explore the emerging field of AI-driven music creation and its potential applications in the industry. Be ready to discuss ethical considerations and the role of human creativity.

- Problem-solving and Troubleshooting: Showcase your ability to diagnose and solve technical issues related to audio and MIDI workflows, software glitches, or hardware malfunctions.
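As a quick illustration of bit depth and quantization, the sketch below rounds samples to a signed integer grid and compares the measured signal-to-noise ratio with the textbook dynamic range of an ideal N-bit converter, approximately 6.02·N + 1.76 dB for a full-scale sine. It assumes no dither and ideal conversion; real converters fall short of these figures.

```python
import math

def quantize(sample: float, bits: int) -> float:
    """Round a sample in [-1.0, 1.0] to a signed integer grid of the given
    bit depth (no dither), clip to the valid range, and rescale to float."""
    levels = 2 ** (bits - 1)              # 32768 for 16-bit audio
    q = round(sample * levels)
    q = max(-levels, min(levels - 1, q))  # e.g. -32768 .. 32767
    return q / levels

def theoretical_dynamic_range_db(bits: int) -> float:
    """Ideal SNR of an N-bit quantizer for a full-scale sine: ~6.02N + 1.76 dB."""
    return 6.02 * bits + 1.76

def measured_snr_db(signal, bits):
    """SNR after quantization, from the energy of the signal and of the error."""
    error = [quantize(s, bits) - s for s in signal]
    return 10 * math.log10(sum(s * s for s in signal) / sum(e * e for e in error))

for bits in (16, 24):
    print(bits, "bit:", round(theoretical_dynamic_range_db(bits), 1), "dB")

# A near-full-scale 997 Hz sine at 48 kHz, quantized to 8 bits.
sine = [0.99 * math.sin(2 * math.pi * 997 * n / 48_000) for n in range(48_000)]
print(round(measured_snr_db(sine, 8), 1), "dB measured at 8 bits")
```

The 8-bit measurement should land within about a dB of the roughly 49.9 dB the formula predicts; each extra bit buys about 6 dB.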

Next Steps

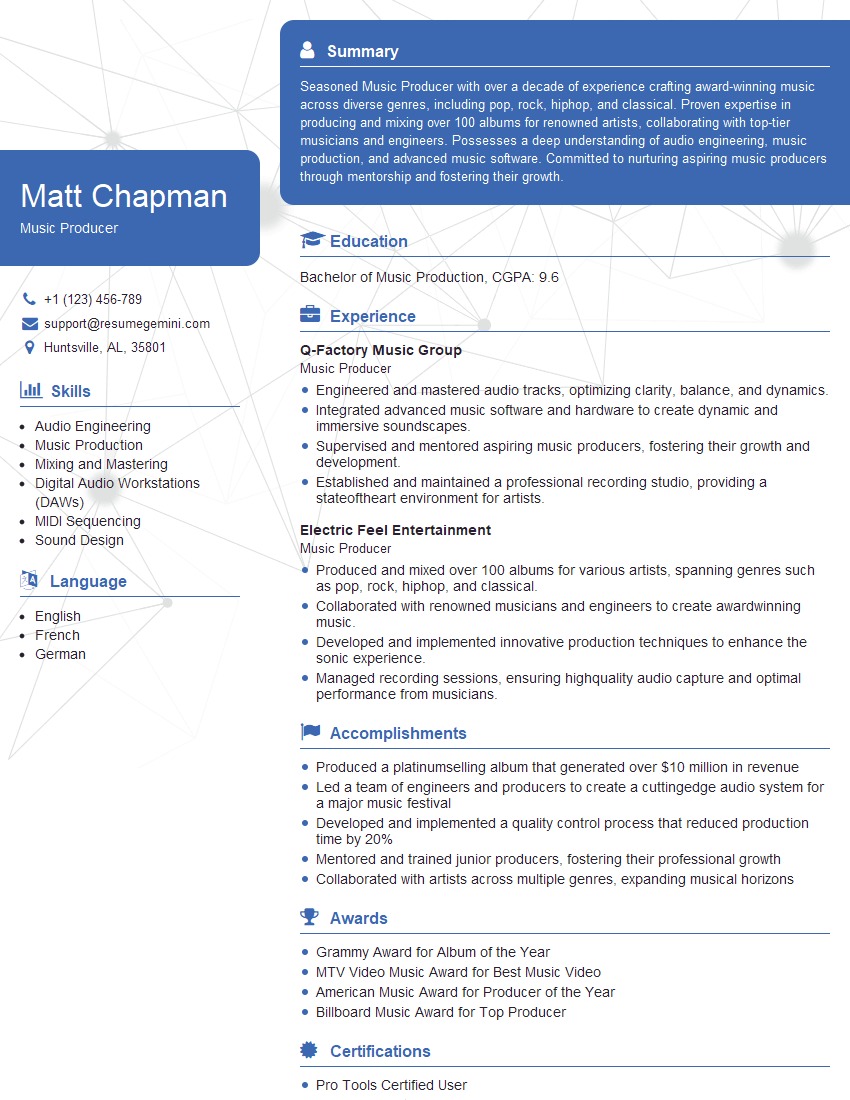

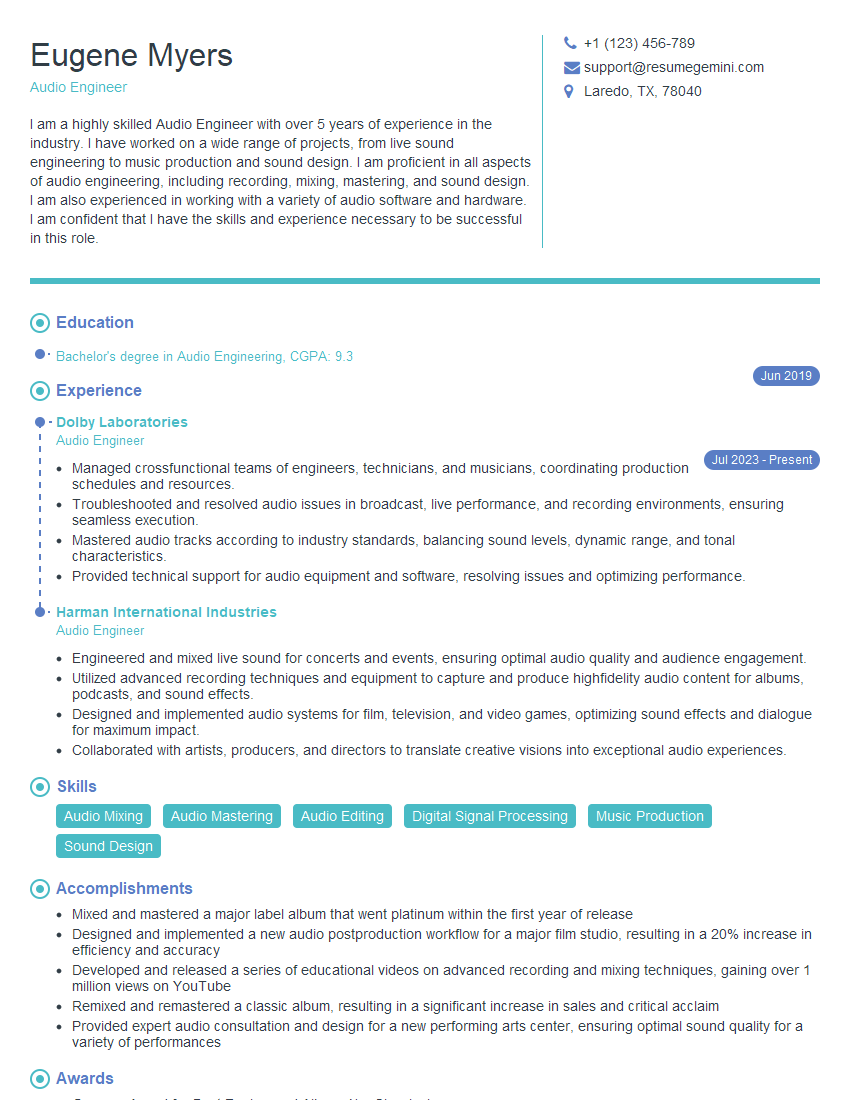

Mastering the intersection of music and technology opens doors to exciting and innovative careers. Whether you’re pursuing a role in music production, sound design, music technology development, or research, a strong foundation in these areas is crucial for success. To stand out, create an ATS-friendly resume that effectively highlights your skills and experience. We highly recommend using ResumeGemini to build a professional and impactful resume that grabs recruiters’ attention. ResumeGemini provides examples of resumes tailored to the Music and Technology field, helping you present your qualifications in the best possible light. Take the next step towards your dream career today!
