Are you ready to stand out in your next interview? Understanding and preparing for Performing Quality Checks interview questions is a game-changer. In this blog, we’ve compiled key questions and expert advice to help you showcase your skills with confidence and precision. Let’s get started on your journey to acing the interview.

Questions Asked in Performing Quality Checks Interview

Q 1. Describe your experience with different quality control methodologies.

Throughout my career, I’ve had extensive experience with various quality control methodologies. My experience ranges from quality control within traditional lifecycle models such as waterfall and the V-model to iterative approaches such as Agile testing and continuous testing in DevOps pipelines.

In waterfall, for example, I’ve meticulously managed rigorous testing phases following detailed documentation and specifications, ensuring each phase’s completion before moving to the next. This approach is excellent for projects with stable requirements. In contrast, my experience with Agile methodologies involves iterative testing integrated throughout the development lifecycle. This allows for quick feedback loops and adaptation to evolving requirements.

Within Agile, I’ve leveraged techniques like Test-Driven Development (TDD), writing tests *before* the code, ensuring functionality matches expectations from the outset. I’ve also utilized Behavior-Driven Development (BDD), collaborating closely with business stakeholders to define acceptance criteria and create clear, easily understandable tests.

My experience includes working with different testing types: unit, integration, system, and user acceptance testing (UAT). I understand the strengths and weaknesses of each, tailoring my approach based on project needs and available resources.

Q 2. Explain the difference between preventative and corrective quality control.

Preventative and corrective quality control are two distinct but complementary approaches to maintaining quality. Preventative QC focuses on *proactive* measures to prevent defects from ever occurring. Think of it as putting safety measures in place before an accident happens.

Corrective QC, on the other hand, addresses defects *after* they’ve been discovered. It’s the ‘fixing’ stage. Imagine it as cleaning up after a spill.

Example: In software development, preventative QC might involve rigorous code reviews, adhering to coding standards, and using static analysis tools to detect potential issues early on. Corrective QC would involve debugging, fixing identified bugs, and conducting regression testing to ensure the fix hasn’t introduced new problems.

The ideal approach involves a balance between both. While preventative measures are highly effective, some defects will inevitably slip through the cracks. Having a robust corrective process in place is crucial for mitigating those issues and maintaining overall product quality.

Q 3. How do you prioritize defects or bugs found during testing?

Prioritizing defects involves a systematic approach based on several factors. I often use a severity/priority matrix. Severity refers to the impact of the defect on the system, while priority focuses on how urgently the defect needs to be fixed.

- Severity: Ranges from critical (system crash) to minor (cosmetic issue).

- Priority: Considers factors like business impact, user experience, and release deadlines. A defect affecting a core feature can have high priority even if its severity is not the highest.

I often use a simple system like this:

- Critical: Immediate fix required; blocks further testing or functionality.

- High: Major functional issue; significant impact on user experience.

- Medium: Minor functional issue; limited impact on user experience.

- Low: Cosmetic issues; no impact on functionality.

Prioritization is rarely straightforward. I’ll often work with developers and stakeholders to reach consensus, explaining the implications of delaying the fix for each defect. This ensures that the most crucial issues get resolved first, optimizing both quality and timely product delivery.
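
As a rough illustration, the severity/priority scheme above could be sketched in Python as follows. The `Defect` class, the `QA-…` keys, and the rule of using severity as a tie-breaker are assumptions made for this example, not the behavior of any particular bug tracker.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    # Lower value = more urgent, so an ascending sort puts critical items first.
    CRITICAL = 0
    HIGH = 1
    MEDIUM = 2
    LOW = 3


@dataclass(frozen=True)
class Defect:
    key: str         # hypothetical tracker ID
    severity: Level  # impact of the defect on the system
    priority: Level  # how urgently the fix is needed


def triage(defects):
    """Order defects by priority, using severity as a tie-breaker (an assumption)."""
    return sorted(defects, key=lambda d: (d.priority, d.severity))


backlog = [
    Defect("QA-3", severity=Level.LOW, priority=Level.HIGH),
    Defect("QA-1", severity=Level.CRITICAL, priority=Level.CRITICAL),
    Defect("QA-2", severity=Level.HIGH, priority=Level.MEDIUM),
]
ordered = [d.key for d in triage(backlog)]
print(ordered)
```

Note that `QA-3` sorts ahead of `QA-2` here: a low-severity defect can still be fixed first when the business treats it as urgent. In practice the final order would still be agreed with developers and stakeholders.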

Q 4. What tools or software do you have experience using for quality control?

My experience encompasses a wide range of quality control tools. For test management, I’m proficient in Jira and TestRail, using them to track bugs, manage test cases, and generate reports. For automation, I have experience with Selenium for web application testing and Appium for mobile testing.

I’m also comfortable using various performance testing tools like JMeter and LoadRunner to analyze application behavior under stress. For static code analysis, I’ve worked with tools like SonarQube to identify potential vulnerabilities and code quality issues early in the development cycle. Finally, I have proficiency in using defect tracking systems such as Bugzilla and Azure DevOps to document and manage the entire defect lifecycle.

Q 5. How do you handle disagreements with developers about bug severity?

Disagreements about bug severity are inevitable. My approach involves fostering open communication and collaboration. Instead of confrontation, I focus on objective evidence.

If I disagree with a developer’s assessment, I’ll present concrete evidence, such as screenshots, logs, or reproducible steps, to demonstrate the impact of the bug. I’ll reference relevant documentation or user stories to support my claim of severity. I also emphasize the impact on the user experience, focusing on the perspective of the end user.

If the disagreement persists, I’ll involve a senior engineer or project manager to mediate. The goal is not to ‘win’ the argument, but to reach a consensus that reflects the actual impact of the bug and ensures appropriate prioritization.

Q 6. Describe your approach to creating a test plan.

Creating a comprehensive test plan is crucial for successful quality control. My approach involves these key steps:

- Scope Definition: Clearly define the software components or features to be tested.

- Test Objectives: Outline the goals of the testing process (e.g., identify bugs, ensure performance, verify functionality).

- Test Strategy: Choose appropriate testing methods (unit, integration, system, UAT, performance, security) based on the project requirements.

- Test Environment Setup: Detail the hardware and software needed for testing.

- Test Data: Identify and prepare necessary data for testing.

- Test Schedule: Create a realistic timetable for different testing stages.

- Test Deliverables: Define what documentation will be produced (e.g., test cases, bug reports, test summary reports).

- Risk Assessment: Identify potential risks and outline mitigation strategies.

The final plan serves as a roadmap for the testing process, ensuring comprehensive coverage and efficient resource allocation. It should be a living document, allowing for adjustments as the project evolves.

Q 7. What are your preferred methods for documenting quality control processes?

Effective documentation is vital for maintaining consistency and traceability in quality control processes. I prefer a combination of methods:

- Test Management Tools: Tools like Jira and TestRail provide a centralized repository for test cases, bug reports, and test results.

- Spreadsheets: For simpler projects or specific reports, spreadsheets offer a quick and easy way to track information.

- Detailed Test Cases: I write clear, concise test cases, detailing steps, expected results, and actual results. This enables repeatability and facilitates efficient bug reporting.

- Bug Tracking System: I meticulously document each bug, including its severity, priority, steps to reproduce, and screenshots or videos. This allows for consistent tracking and improved resolution.

- Test Summary Reports: These reports summarize the testing process, highlighting key metrics like the number of bugs found, testing coverage, and overall quality assessment.

My documentation style emphasizes clarity, completeness, and traceability. This ensures that all aspects of the testing process are well-documented, enabling efficient communication and collaboration among team members and stakeholders.

Q 8. How do you measure the effectiveness of quality control processes?

Measuring the effectiveness of quality control processes relies on a multi-faceted approach. It’s not just about the number of defects found, but also about the efficiency and impact of the entire process. We use key performance indicators (KPIs) to track and measure this effectiveness.

- Defect Rate: This is the most basic metric – the percentage of defective units or products compared to the total produced. A lower defect rate indicates better quality control.

- Defect Density: This metric measures the number of defects per unit of code, feature, or module. It’s particularly useful in software development.

- Mean Time To Failure (MTTF): In hardware or systems, MTTF indicates the average time a system operates before failure. A higher MTTF is preferable.

- Cost of Quality (COQ): This encompasses the costs of preventing defects, appraising quality (inspection and testing), and dealing with failures, both internal and external. Reducing COQ is a major goal.

- Customer Satisfaction: Ultimately, the effectiveness of quality control is reflected in customer satisfaction. Feedback surveys and reviews provide valuable insights.

For example, in a manufacturing setting, tracking the defect rate of a particular assembly line over time allows us to identify trends and implement corrective actions if the rate increases. Similarly, in software development, tracking defect density helps us pinpoint problem areas within the codebase and improve testing strategies.
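
The two metrics in that example are simple ratios. Here is a minimal sketch, assuming defect density is normalized per thousand lines of code (KLOC); the function names and the sample figures are invented for illustration.

```python
def defect_rate(defective_units, total_units):
    """Percentage of defective units out of all units produced."""
    if total_units <= 0:
        raise ValueError("total_units must be positive")
    return 100.0 * defective_units / total_units


def defect_density(defects, lines_of_code):
    """Defects per thousand lines of code (KLOC); the unit is an assumption."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return defects / (lines_of_code / 1000)


# Illustrative numbers only.
line_rate = defect_rate(defective_units=12, total_units=800)
module_density = defect_density(defects=45, lines_of_code=30_000)
print(f"defect rate: {line_rate:.2f}%")
print(f"defect density: {module_density:.2f} defects/KLOC")
```

Tracked per assembly line or per module over time, these values are what a trend chart or dashboard would plot.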

Q 9. Explain your experience with statistical process control (SPC).

Statistical Process Control (SPC) is a crucial tool in my quality control arsenal. I’ve extensively used control charts, such as Shewhart charts and CUSUM charts, to monitor process variation and identify potential issues before they become major problems. These charts visually represent data points collected over time, highlighting trends and deviations from the expected norm.

For instance, in a project involving the production of precision parts, we used control charts to monitor the dimensions of the parts. Any points falling outside the control limits triggered an investigation into the root cause of the variation, which might include tool wear, material inconsistencies, or operator errors. This proactive approach allowed us to prevent significant defects and maintain consistent quality.

Beyond control charts, I’m experienced in using various SPC techniques, including process capability analysis (Cp and Cpk) to assess the capability of a process to meet specifications. This helps determine if the process is inherently capable of meeting quality standards or if improvements are needed.
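
To make the control-limit and capability calculations concrete, here is a simplified Python sketch. It assumes three-sigma limits computed from the sample standard deviation of a baseline run, whereas a production individuals chart would more commonly estimate sigma from the moving range. The measurements and the specification limits (9.90 to 10.10) are made up for the example.

```python
from statistics import mean, stdev


def control_limits(baseline):
    """Return (LCL, center line, UCL) using mean +/- 3 sample standard deviations."""
    center = mean(baseline)
    sigma = stdev(baseline)
    return center - 3 * sigma, center, center + 3 * sigma


def out_of_control(samples, lcl, ucl):
    """Return (index, value) pairs for points outside the control limits."""
    return [(i, x) for i, x in enumerate(samples) if x < lcl or x > ucl]


def process_capability(data, lsl, usl):
    """Cp compares spec width to process spread; Cpk also penalizes off-center processes."""
    mu, sigma = mean(data), stdev(data)
    cp = (usl - lsl) / (6 * sigma)
    cpk = min(usl - mu, mu - lsl) / (3 * sigma)
    return cp, cpk


# Hypothetical part diameters in millimetres.
baseline = [10.01, 9.98, 10.02, 9.99, 10.00, 10.03, 9.97, 10.00]
new_samples = [10.01, 10.08, 9.99]

lcl, center, ucl = control_limits(baseline)
flagged = out_of_control(new_samples, lcl, ucl)
cp, cpk = process_capability(baseline, lsl=9.90, usl=10.10)

print(f"LCL={lcl:.3f} center={center:.3f} UCL={ucl:.3f}")
print("points to investigate:", flagged)
print(f"Cp={cp:.2f} Cpk={cpk:.2f}")
```

A flagged point is a trigger for root cause investigation, not proof of a defect. Cp and Cpk are only meaningful once the process is stable.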

Q 10. How do you ensure quality control in Agile development environments?

Ensuring quality control in agile environments requires a shift from traditional, heavy documentation-based approaches to a more iterative and collaborative process. Continuous integration and continuous delivery (CI/CD) pipelines are key. Automated testing plays a pivotal role, with unit, integration, and system tests running frequently.

- Shift-left testing: Incorporating testing early in the development cycle is vital. This involves unit testing, code reviews, and automated testing at every stage.

- Continuous integration: Developers integrate code frequently into a shared repository, triggering automated builds and tests. This allows for early detection of integration issues.

- Test-driven development (TDD): Writing tests *before* writing code ensures that the code meets the specified requirements.

- Sprint retrospectives: Regular team meetings focus on identifying areas for improvement in the development process, including quality control practices.

- Collaboration: Close collaboration between developers, testers, and product owners is essential for quickly addressing quality concerns.

Imagine a team developing a mobile app using Scrum. Each sprint includes dedicated time for testing, with automated tests running after each code commit. The team also incorporates user acceptance testing (UAT) before each sprint ends, ensuring the product aligns with user needs.

Q 11. Describe a time you identified a critical quality issue. What was your approach to resolving it?

In a previous role, we discovered a critical bug in a software application shortly before its release. This bug caused data corruption under specific, yet frequent, user scenarios. The initial reaction was panic, but I immediately implemented a structured approach.

- Problem Definition: We clearly defined the scope of the issue, including its impact on users and the system.

- Root Cause Analysis: Using the 5 Whys technique, we systematically investigated the root cause of the bug, identifying a flaw in the data validation logic.

- Solution Development: We developed a fix that addressed the root cause and included thorough regression testing to ensure the fix didn’t introduce new problems.

- Communication: We communicated the issue and resolution plan to stakeholders, including management and the affected users.

- Implementation and Monitoring: We implemented the fix through a hot-fix release, carefully monitoring system performance to ensure stability.

This situation highlighted the importance of thorough testing and proactive communication. It also underscored the need for a robust incident management process.

Q 12. How familiar are you with ISO 9001 or other quality management standards?

I am very familiar with ISO 9001:2015, the international standard for quality management systems. I’ve worked in organizations that have implemented and maintained ISO 9001 certification, understanding its requirements for documentation, process control, internal audits, and management review. My experience extends beyond ISO 9001; I have also worked with other quality management standards relevant to specific industries, such as those in medical device manufacturing or automotive production. I understand the principles of continuous improvement and risk-based thinking that underpin these standards.

Q 13. What is your experience with root cause analysis?

Root cause analysis (RCA) is a fundamental skill in my quality control toolkit. I’m proficient in various RCA techniques, including the 5 Whys, fishbone diagrams (Ishikawa diagrams), and fault tree analysis. The choice of technique depends on the complexity of the problem and the available data.

For example, using the 5 Whys technique to investigate why a machine frequently malfunctions, we might ask:

- Why did the machine malfunction? (Answer: Because the motor overheated.)

- Why did the motor overheat? (Answer: Because the cooling fan failed.)

- Why did the cooling fan fail? (Answer: Because the bearings were worn out.)

- Why were the bearings worn out? (Answer: Because they weren’t lubricated properly.)

- Why weren’t the bearings lubricated properly? (Answer: Because the lubrication schedule wasn’t followed.)

This process helps us to identify the underlying cause – the failure to follow the lubrication schedule – rather than just treating the symptom (the overheated motor).

Q 14. How do you balance the speed of delivery with the need for thorough quality checks?

Balancing speed of delivery with thorough quality checks is a constant challenge, especially in today’s fast-paced environment. The key is to optimize the process and leverage the right tools and techniques.

- Prioritization: Focus quality efforts on the most critical aspects of the product or service. Not every aspect needs the same level of scrutiny.

- Automation: Automate as many testing processes as possible. This increases efficiency and reduces the time required for manual checks.

- Risk-based testing: Concentrate testing efforts on areas with higher risk of failure.

- Continuous monitoring: Implement monitoring systems to continuously track product performance after release. This allows for quick identification and resolution of any issues.

- Agile methodologies: Agile methodologies emphasize iterative development and frequent testing, allowing for early detection and correction of quality issues.

Think of it like building a house: you wouldn’t wait until the entire house is built before checking the foundation’s stability. Instead, you’d check it at each stage. Similarly, in software development, continuous integration and frequent testing ensure that quality is built in from the beginning, rather than being an afterthought.

Q 15. What is your experience with different testing types (e.g., unit, integration, system)?

My experience spans all levels of software testing, from unit to system testing. Unit testing focuses on individual components or modules, verifying they function correctly in isolation. I frequently use techniques like white-box testing, examining the internal code structure to design tests. For example, I’ve used JUnit for Java projects to test individual methods, ensuring each performs its intended function accurately and efficiently.

Integration testing, on the other hand, verifies the interaction between different modules. I’ve used integration testing to ensure seamless data flow between a user interface module and a database module. This often involves techniques like stubbing and mocking to simulate dependencies.

Finally, system testing evaluates the entire system as a whole, ensuring it meets requirements and performs as expected in a realistic environment. A recent project involved rigorous system testing of a web application, encompassing functionality, usability, and performance under simulated heavy load conditions.

I’m proficient in designing and executing these various tests to pinpoint defects early in the development lifecycle.
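
The idea of isolating a module by mocking its dependency can be sketched as follows. The answer above mentions JUnit for Java; this example uses Python's standard `unittest` and `unittest.mock` instead to show the same technique. `UserService` and its repository are hypothetical names invented for the illustration.

```python
import unittest
from unittest.mock import Mock


class UserService:
    """Hypothetical module under test; it depends on a repository object."""

    def __init__(self, repository):
        self.repository = repository

    def display_name(self, user_id):
        record = self.repository.find(user_id)
        if record is None:
            return "Unknown user"
        return f"{record['first']} {record['last']}"


class UserServiceTest(unittest.TestCase):
    def test_formats_full_name(self):
        # The mock stands in for the database-backed repository.
        repo = Mock()
        repo.find.return_value = {"first": "Ada", "last": "Lovelace"}
        self.assertEqual(UserService(repo).display_name(7), "Ada Lovelace")
        repo.find.assert_called_once_with(7)

    def test_missing_user_falls_back_to_placeholder(self):
        repo = Mock()
        repo.find.return_value = None
        self.assertEqual(UserService(repo).display_name(99), "Unknown user")


# Run the suite programmatically so the script does not exit the interpreter.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(UserServiceTest)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

Because the repository is mocked, these tests run without a database. An integration test would replace the mock with the real data layer to verify the interaction itself.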

Q 16. Describe your experience with test case design and execution.

Test case design is crucial. My approach begins with understanding the requirements thoroughly. I employ various techniques like equivalence partitioning, boundary value analysis, and state transition testing to create comprehensive test cases that cover a wide range of scenarios. For example, when testing a login form, equivalence partitioning helps to define valid and invalid input ranges for usernames and passwords. Boundary value analysis focuses on testing at the edge cases—e.g., the maximum length of a username or password. These techniques ensure efficient test coverage. Test case execution involves meticulously following each step, documenting results, and comparing them against the expected outcomes. I am proficient with various test management tools like TestRail to organize, execute, and track test cases. Any discrepancies are documented as defects and tracked through resolution. A recent project involved over 1000 test cases using the above approaches. Thorough documentation and a structured approach were key to success.
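
For the login form example, boundary value analysis and equivalence partitioning might be sketched like this. The length rule of 3 to 20 characters and the helper names are assumptions for illustration; real limits would come from the requirements.

```python
def boundary_values(minimum, maximum):
    """Lengths just below, at, and just above each bound."""
    return [minimum - 1, minimum, minimum + 1, maximum - 1, maximum, maximum + 1]


def is_valid_username(name, minimum=3, maximum=20):
    # Hypothetical validation rule: only the length is checked.
    return minimum <= len(name) <= maximum


# Boundary value analysis: exercise the edges of the valid range.
boundary_cases = [(n, is_valid_username("a" * n)) for n in boundary_values(3, 20)]

# Equivalence partitioning: one representative length per class of input.
partitions = {"too short": 1, "valid": 10, "too long": 30}
partition_cases = {
    label: is_valid_username("a" * length) for label, length in partitions.items()
}

for length, accepted in boundary_cases:
    print(f"length {length:2d}: {'accepted' if accepted else 'rejected'}")
print(partition_cases)
```

Six boundary cases plus three partition representatives cover the length rule with nine inputs instead of testing every possible length.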

Q 17. How do you manage and track defects throughout the software development lifecycle?

Defect management is a critical part of my workflow. I use a combination of techniques and tools to track defects effectively. This typically involves logging defects in a dedicated bug tracking system like Jira or Bugzilla. Each defect report includes a clear title, detailed description, steps to reproduce, expected and actual results, severity level, and priority. I meticulously track the status of each defect—from its initial reporting to its resolution and verification. This frequently involves close collaboration with developers to reproduce, understand, and fix the issues. Regular status reports and defect metrics provide visibility into quality issues and the progress of remediation. My aim is to ensure transparent communication and a rapid resolution process, minimizing the impact on the project timeline and end-user experience. I’ve found that using a structured approach with clear communication is vital to efficient defect management.

Q 18. How do you communicate quality control findings to stakeholders?

Communicating quality control findings effectively to stakeholders requires a clear and concise approach. I tailor my communication style based on the audience and the complexity of the issue. For technical stakeholders, I provide detailed defect reports with technical explanations and supporting evidence. For non-technical stakeholders, I translate complex information into easily understandable terms, using visualizations like charts and graphs to demonstrate trends and impact. Regular status meetings, formal reports, and dashboards are utilized to provide updates on the overall quality status and risk assessment of the project. In several projects, I’ve successfully used visual dashboards to demonstrate the number of critical defects and their resolution progress to project managers and clients, fostering clear understanding and proactive risk mitigation.

Q 19. How do you adapt your quality control approach to different project types?

Adaptability is key in quality control. My approach changes based on project type, size, and methodology. Agile projects necessitate a more iterative and flexible approach, with frequent testing cycles and close collaboration with developers. Waterfall projects, on the other hand, require more structured and planned testing phases. For example, in a large-scale enterprise system project, I’d prioritize comprehensive system testing with more focus on risk management and security. For a smaller mobile application project, I’d concentrate on usability testing and rapid iteration. In all cases, I ensure that the testing strategy aligns with the project’s goals and constraints, always prioritizing risk mitigation and efficient resource allocation.

Q 20. Describe your experience with automated testing.

I have extensive experience with automated testing using various tools and frameworks. Selenium for web UI testing, Appium for mobile app testing, and tools like JUnit and TestNG for unit testing are part of my skillset. Automation helps in accelerating the testing process, improving test coverage, and reducing human error. For example, I’ve automated regression testing for large-scale web applications, significantly reducing testing time and allowing for more frequent releases. I’m also proficient in creating and maintaining automated test scripts, utilizing best practices for maintainability and scalability. Automation, however, doesn’t replace manual testing. Manual testing is still crucial for exploratory testing, usability testing, and addressing scenarios not easily automated. A balanced approach is necessary for comprehensive quality assurance.

Q 21. What is your experience with performance testing?

Performance testing is a critical aspect of quality assurance, especially for applications with high user volume. My experience includes conducting load tests, stress tests, and endurance tests to evaluate application responsiveness, stability, and scalability under various conditions. Tools like JMeter and LoadRunner are frequently employed in my workflow. For example, I’ve used JMeter to simulate hundreds of concurrent users accessing a web application, identifying performance bottlenecks and ensuring the application remains responsive under peak load. The results of these tests provide critical insights for performance optimization and capacity planning. Performance testing is not a standalone activity; it’s integrated throughout the SDLC, informing development decisions and ultimately contributing to a high-performing and reliable application.

Q 22. How do you handle situations where deadlines conflict with quality assurance needs?

Balancing deadlines and quality assurance is a delicate act, akin to walking a tightrope. My approach involves proactive communication and risk-based prioritization. First, I’d engage in open dialogue with the project team to understand the deadline constraints and the critical functionalities. Then, I’d perform a risk assessment, identifying areas where compromising quality would have the most significant impact. This might involve using a risk matrix, considering factors like the severity and probability of failures. We’d then prioritize testing efforts, focusing on high-risk areas even if it means slightly delaying less critical testing. For instance, if a new payment gateway is being integrated, thorough security testing would take precedence over less critical UI testing. Finally, we’d use techniques like test automation and parallel testing to optimize efficiency and mitigate the impact of time constraints. Continuous communication throughout the process is crucial to keep everyone informed and aligned.

Q 23. What is your experience with security testing?

My security testing experience encompasses various methodologies, including penetration testing, vulnerability scanning, and security code reviews. I’m proficient in using tools like OWASP ZAP and Burp Suite for identifying vulnerabilities in web applications. I’ve worked on projects involving both black-box and white-box testing, focusing on areas like SQL injection, cross-site scripting (XSS), and cross-site request forgery (CSRF). In one project, I identified a critical SQL injection vulnerability that could have compromised sensitive customer data; my timely discovery prevented a potential data breach. I understand the importance of integrating security testing throughout the software development lifecycle (SDLC), not just as an afterthought.

Q 24. How do you ensure data quality and integrity?

Ensuring data quality and integrity is paramount. My approach is multi-faceted and starts even before the data enters the system. This includes defining clear data standards and validation rules upfront, implementing data cleansing processes, and using data profiling techniques to understand the data’s characteristics. During the development process, I employ techniques like data validation checks within the application code itself to ensure data consistency and accuracy. I also regularly monitor data quality metrics, such as completeness, accuracy, and consistency, using tools and techniques to identify anomalies and potential issues. For instance, I might use SQL queries to check for data inconsistencies or utilize dedicated data quality management tools. Addressing data quality issues early in the lifecycle significantly reduces downstream problems and ensures the reliability of any analyses or decisions based on the data.
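
As an illustration of the SQL-based checks mentioned above, the following sketch uses Python's built-in `sqlite3` module with an in-memory database. The `customers` table, its columns, and the sample rows are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER, email TEXT, country TEXT)")
conn.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [
        (1, "ana@example.com", "PT"),
        (2, None, "DE"),              # missing email
        (3, "li@example.com", "CN"),
        (3, "li@example.com", "CN"),  # duplicate id
    ],
)

# Completeness check: how many rows lack an email?
missing_emails = conn.execute(
    "SELECT COUNT(*) FROM customers WHERE email IS NULL"
).fetchone()[0]

# Consistency check: which ids appear more than once?
duplicate_ids = [
    row[0]
    for row in conn.execute(
        "SELECT id FROM customers GROUP BY id HAVING COUNT(*) > 1"
    )
]

total = conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
email_completeness = 100.0 * (total - missing_emails) / total

print(f"missing emails: {missing_emails}")
print(f"duplicate ids: {duplicate_ids}")
print(f"email completeness: {email_completeness:.1f}%")
conn.close()
```

Checks like these can run on a schedule, with the resulting percentages tracked as data quality metrics over time.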

Q 25. What is your understanding of different quality metrics (e.g., defect density, defect removal efficiency)?

Understanding quality metrics is fundamental to evaluating the effectiveness of quality assurance efforts. Defect density, the number of defects per unit of size (for example, per thousand lines of code or per function point), helps gauge the overall quality of the software. A high defect density indicates potential problems needing attention. Defect removal efficiency (DRE) measures the percentage of all known defects that were found and removed before release, as opposed to those reported afterwards. A high DRE reflects the efficiency of the testing process. Other metrics I utilize include test coverage, which quantifies how much of the codebase is exercised by tests, and mean time to failure (MTTF), which measures the average time until a system fails. By tracking these metrics over time, we can identify trends and areas for improvement in our quality control processes.
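
One common way to compute DRE is to compare the defects removed before release with everything that eventually surfaced, including defects reported after release. The sketch below assumes that definition; the function name and the counts are illustrative.

```python
def defect_removal_efficiency(found_before_release, found_after_release):
    """Percentage of all known defects that were removed before release."""
    total = found_before_release + found_after_release
    if total == 0:
        raise ValueError("no defects recorded, DRE is undefined")
    return 100.0 * found_before_release / total


# Illustrative counts: 95 defects caught in testing, 5 reported by users later.
dre = defect_removal_efficiency(found_before_release=95, found_after_release=5)
print(f"DRE: {dre:.1f}%")
```

Because post-release defects keep arriving, DRE for a release is usually recalculated after a fixed observation window rather than treated as final on launch day.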

Q 26. How do you contribute to continuous improvement of quality control processes?

Continuous improvement is a cornerstone of effective quality control. My contributions include actively participating in retrospective meetings, analyzing quality metrics to identify trends and bottlenecks, and suggesting process improvements. For instance, identifying a recurring issue in a specific module led me to propose enhancing the testing procedures for that area, which resulted in a significant reduction in defects. I’ve also advocated for the adoption of automation tools to increase testing efficiency and coverage. I actively encourage the sharing of best practices and knowledge within the team and actively look for opportunities to implement new testing techniques to further improve the quality control process. This collaborative and proactive approach is critical for sustaining high-quality standards over time.

Q 27. Explain your experience with risk assessment and management related to quality.

Risk assessment and management are integral to my quality assurance approach. I begin by identifying potential risks to product quality, such as technical complexities, inadequate testing time, or poorly defined requirements. I use risk assessment techniques, like FMEA (Failure Mode and Effects Analysis), to analyze the likelihood and impact of these risks. Once identified, a mitigation plan is developed, involving strategies like prioritizing testing efforts, adding buffer time to the schedule, or increasing the test coverage. For example, if we identify a high risk of a specific module failing, we may dedicate more resources and expertise to testing it rigorously. Regular monitoring and communication are crucial to track the effectiveness of mitigation strategies and adapt our approach as needed. This proactive approach helps minimize potential quality issues and protects the overall product quality.
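
FMEA is often operationalized through a Risk Priority Number (RPN), the product of severity, occurrence, and detection scores. A minimal sketch follows, assuming the conventional 1 to 10 scale for each score; the failure modes and their ratings are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureMode:
    name: str
    severity: int    # 1 (negligible) to 10 (catastrophic)
    occurrence: int  # 1 (rare) to 10 (almost certain)
    detection: int   # 1 (always detected) to 10 (almost undetectable)

    def __post_init__(self):
        for score in (self.severity, self.occurrence, self.detection):
            if not 1 <= score <= 10:
                raise ValueError("FMEA scores must be between 1 and 10")

    @property
    def rpn(self):
        """Risk Priority Number: severity x occurrence x detection."""
        return self.severity * self.occurrence * self.detection


# Hypothetical failure modes for a software release.
modes = [
    FailureMode("Payment module timeout", severity=9, occurrence=4, detection=3),
    FailureMode("Report layout glitch", severity=3, occurrence=6, detection=2),
    FailureMode("Data sync loss", severity=8, occurrence=3, detection=7),
]

# Highest RPN first: these get the most testing attention.
ranked = sorted(modes, key=lambda m: m.rpn, reverse=True)
for mode in ranked:
    print(f"{mode.rpn:4d}  {mode.name}")
```

In this example the data sync failure ranks first despite a lower severity than the payment timeout, because it is much harder to detect. That is the kind of insight that shifts testing effort toward a specific module.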

Key Topics to Learn for Performing Quality Checks Interview

- Understanding Quality Standards: Defining and interpreting quality metrics, specifications, and standards relevant to the industry and role.

- Inspection Techniques: Mastering various inspection methods, including visual inspection, dimensional checks, functional testing, and data analysis, depending on the context (e.g., manufacturing, software, etc.).

- Data Analysis and Reporting: Collecting, analyzing, and presenting quality data effectively through reports, dashboards, or other visual aids to identify trends and areas for improvement.

- Root Cause Analysis (RCA): Applying techniques like the 5 Whys, fishbone diagrams, or Pareto analysis to identify the underlying causes of quality defects and implement corrective actions.

- Process Improvement: Understanding and applying methodologies like Lean, Six Sigma, or Kaizen to improve efficiency and reduce defects within quality control processes.

- Quality Control Documentation: Maintaining accurate and detailed records of inspections, test results, and corrective actions, adhering to relevant regulatory guidelines.

- Communication and Collaboration: Effectively communicating quality issues and findings to relevant stakeholders, including engineers, managers, and clients, fostering a collaborative environment for problem-solving.

- Problem-solving and Decision-making: Applying critical thinking skills to troubleshoot quality issues, make informed decisions, and implement effective solutions within time constraints and resource limitations.

- Software/Tools Proficiency: Demonstrating familiarity with relevant software or tools used for quality control, such as statistical software packages, quality management systems (QMS), or specialized testing equipment.

Next Steps

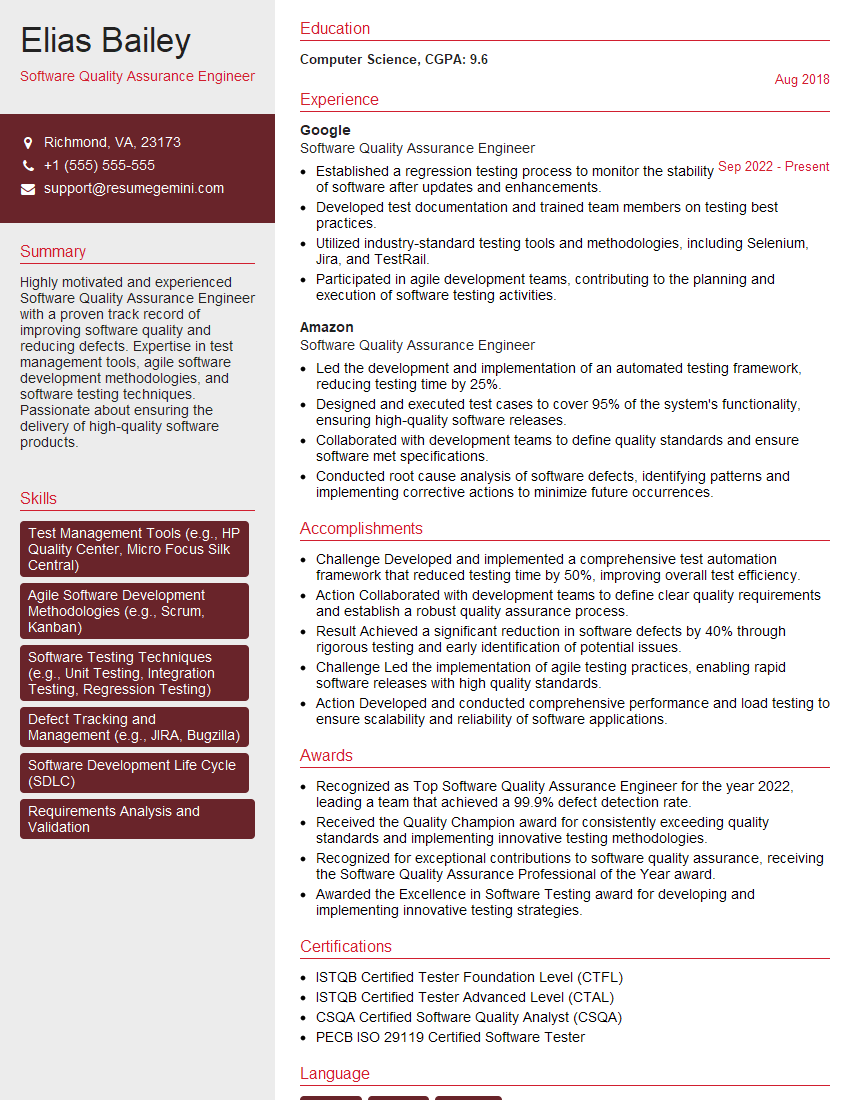

Mastering Performing Quality Checks is crucial for career advancement in many industries. It demonstrates your attention to detail, problem-solving skills, and commitment to delivering high-quality results. To significantly boost your job prospects, create an ATS-friendly resume that highlights your skills and experience effectively. ResumeGemini is a trusted resource that can help you build a professional and impactful resume tailored to your specific career goals. Examples of resumes tailored to Performing Quality Checks are available to help guide you.
