Feeling uncertain about what to expect in your upcoming interview? We’ve got you covered! This blog highlights the most important interview questions for roles that require proficiency in music technology and provides actionable advice to help you stand out as the ideal candidate. Let’s pave the way for your success.

Questions Asked in Music Technology Interviews

Q 1. Explain the difference between sample rate and bit depth.

Sample rate and bit depth are crucial parameters defining the quality of digital audio. Think of it like taking a photograph: sample rate is how many pictures you take per second, and bit depth is the resolution of each picture.

Sample Rate: This determines how many times per second the audio waveform is measured and converted into a digital value. It’s measured in Hertz (Hz). The sample rate sets the highest frequency that can be captured: according to the Nyquist-Shannon sampling theorem, only frequencies below half the sample rate (the Nyquist frequency) can be represented, so 44.1kHz covers content up to about 22kHz, just above the roughly 20kHz upper limit of human hearing. Common rates include 44.1kHz (standard CD quality), 48kHz (common in professional and video settings), 88.2kHz, and 96kHz (used for high-resolution audio). Higher sample rates extend this frequency ceiling, but they also increase file size and processing load.
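A minimal Python sketch makes the Nyquist limit concrete. The function names are illustrative, and `alias_frequency` models an ideal sampler with no anti-aliasing filter (real converters filter out content above Nyquist before sampling), so it shows what would happen to an unfiltered tone.

```python
def nyquist(sample_rate_hz: float) -> float:
    """Highest frequency a given sample rate can represent without aliasing."""
    return sample_rate_hz / 2


def alias_frequency(tone_hz: float, sample_rate_hz: float) -> float:
    """Frequency at which a pure tone appears after ideal sampling (no anti-alias filter)."""
    folded = tone_hz % sample_rate_hz
    # Tones above Nyquist "fold back" around it
    return sample_rate_hz - folded if folded > sample_rate_hz / 2 else folded


for rate in (44_100, 48_000, 88_200, 96_000):
    print(f"{rate} Hz -> Nyquist {nyquist(rate):.0f} Hz")

# A 30kHz tone sampled at 44.1kHz without filtering would be heard at 14.1kHz
print(alias_frequency(30_000, 44_100))  # 14100
```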

Bit Depth: This represents the precision of each sample, measured in bits. Each bit doubles the number of possible values. A higher bit depth provides finer resolution, allowing for a greater dynamic range (the difference between the quietest and loudest sounds) and a lower noise floor (the level of inherent background noise). Common bit depths are 16-bit (CD quality) and 24-bit (higher resolution, used in professional recording). A 16-bit recording has 65,536 possible values for each sample, while 24-bit has over 16 million (16,777,216). Each additional bit adds roughly 6dB of theoretical dynamic range, giving about 96dB for 16-bit and about 144dB for 24-bit.
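These figures can be checked with a short Python sketch. `dynamic_range_db` computes the theoretical ratio between full scale and one quantization step (about 6.02dB per bit); practical converters and recordings achieve less because of analog noise.

```python
import math


def quantization_levels(bits: int) -> int:
    """Number of distinct values a sample of the given bit depth can take."""
    return 2 ** bits


def dynamic_range_db(bits: int) -> float:
    """Theoretical ratio of full scale to one quantization step, in dB."""
    return 20 * math.log10(2 ** bits)


for bits in (16, 24):
    print(bits, quantization_levels(bits), round(dynamic_range_db(bits), 1))
# 16 65536 96.3
# 24 16777216 144.5
```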

In short: Higher sample rate = more samples per second (a higher frequency ceiling), higher bit depth = more precision per sample (greater dynamic range and a lower noise floor). Choosing the appropriate combination depends on the application. For example, a high-resolution audiophile recording might use 96kHz/24-bit, while a podcast might use 44.1kHz/16-bit to save space.
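The file-size side of that trade-off follows directly from the format: uncompressed PCM stores sample rate × bytes per sample × channels every second. A minimal Python sketch (the helper name is illustrative, and header and metadata bytes are ignored):

```python
def pcm_bytes(sample_rate: int, bit_depth: int, channels: int, seconds: float) -> int:
    """Size of raw, uncompressed PCM audio in bytes, excluding any file header."""
    return int(sample_rate * (bit_depth // 8) * channels * seconds)


cd = pcm_bytes(44_100, 16, 2, 60)     # one stereo minute at 44.1kHz/16-bit
hires = pcm_bytes(96_000, 24, 2, 60)  # one stereo minute at 96kHz/24-bit
print(cd, hires, round(hires / cd, 2))  # 10584000 34560000 3.27
```

A 96kHz/24-bit stereo file is therefore a little over three times the size of the same audio at 44.1kHz/16-bit.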

Q 2. Describe your experience with various Digital Audio Workstations (DAWs).

My DAW experience spans several years and includes proficiency in a variety of platforms, each with its own strengths and weaknesses. I’ve extensively used Logic Pro X on the Mac, known for its intuitive interface and powerful features, particularly its MIDI editing capabilities. I’ve also worked extensively with Ableton Live, highly valued for its workflow, especially in electronic music production and live performance. Pro Tools is another core part of my skill set; it is essential for professional studio work because of its industry-standard session compatibility and extensive plugin support. I’ve also used Cubase, known for advanced features suited to complex projects and orchestral scoring. This breadth lets me adapt quickly to different workflows and choose the best DAW for a particular project.

Q 3. What are the advantages and disadvantages of different audio file formats (e.g., WAV, MP3, AIFF)?

Different audio file formats offer trade-offs between file size, audio quality, and compatibility. Let’s compare WAV, MP3, and AIFF:

- WAV (Waveform Audio File Format): This is an uncompressed format, meaning no data is lost during encoding. It provides excellent audio quality but results in large file sizes. WAV files are ideal for archiving master recordings or projects where quality is paramount.

- MP3 (MPEG Audio Layer III): A lossy compressed format, meaning data is discarded during encoding to reduce file size. It sacrifices some audio quality but achieves significantly smaller file sizes, making it suitable for sharing music online or storing large music libraries. How much data is discarded depends on the bitrate chosen at encoding (for example, 128kbps versus 320kbps): lower bitrates give smaller files but more audible artifacts.

- AIFF (Audio Interchange File Format): Similar to WAV, it’s an uncompressed format offering high-quality audio with large file sizes. It originated on Apple platforms and is most common there, although most DAWs on other platforms support it as well.

Choosing the right format depends on your priorities. For archival purposes or professional mixing, WAV or AIFF are preferred. For distribution or online sharing, MP3 might be more practical due to its smaller size. It’s common to work with uncompressed formats during production and then convert to a compressed format (like MP3) for distribution.
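To show what "uncompressed" means in practice, the sketch below uses Python's standard-library `wave` module to write one second of a 16-bit mono sine tone into memory. The helper name `sine_wav_bytes` and its defaults are illustrative. Because a PCM WAV file simply stores the raw samples after a small header, its size is fully predictable.

```python
import io
import math
import struct
import wave


def sine_wav_bytes(freq_hz=440.0, seconds=1.0, sample_rate=44_100, amplitude=0.5) -> bytes:
    """Return an in-memory 16-bit mono PCM WAV file containing a sine tone."""
    n = int(sample_rate * seconds)
    frames = b"".join(
        struct.pack("<h", int(amplitude * 32767 * math.sin(2 * math.pi * freq_hz * i / sample_rate)))
        for i in range(n)
    )
    buf = io.BytesIO()
    with wave.open(buf, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)  # 2 bytes per sample = 16-bit
        w.setframerate(sample_rate)
        w.writeframes(frames)
    return buf.getvalue()


data = sine_wav_bytes()
print(len(data))  # 88244: a 44-byte header plus 44,100 samples x 2 bytes
```

At 44.1kHz/16-bit this mono tone works out to 705.6kbps, several times the 128 to 320kbps typical of MP3, which is the size trade-off described above.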

Q 4. How do you troubleshoot audio latency issues?

Audio latency (delay between playing a note and hearing it) is a common issue in music production. Troubleshooting involves a systematic approach:

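- Check buffer size in your DAW: A smaller buffer size reduces latency but increases processing load, potentially leading to CPU overload and clicks/pops; a larger buffer is more stable but adds delay. Experiment to find the optimal balance between latency and performance (for example, a small buffer while tracking and a larger one while mixing).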

- Reduce the number of plugins: Each plugin adds processing time. Use only necessary plugins and consider using lighter alternatives where possible.

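- Lower the sample rate (if acceptable): Processing fewer samples per second reduces CPU load, which may let you run a smaller buffer. Note that at a fixed buffer size in samples, a lower sample rate actually makes each buffer last longer, so the latency benefit comes from the smaller buffer this allows. Bit depth has little effect on processing load, since most DAWs process audio internally in floating point.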

- Close unnecessary applications: Other programs consuming system resources can contribute to latency.

- Upgrade your computer’s hardware: A faster CPU, more RAM, and a dedicated audio interface with good drivers can significantly improve performance and allow lower latency settings.

- Check your audio interface settings: Ensure proper driver installation and appropriate buffer size settings. Some interfaces have dedicated low-latency modes.

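- Check cable connections: Ensure your cables, including the USB or Thunderbolt connection to your audio interface, are secure and working. A faulty connection is more likely to cause dropouts, crackles, or disconnects than added delay, but those symptoms are easily confused with latency problems.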

The process of identifying the root cause might involve trial and error. Start with the easiest steps (checking buffer size and plugins) before tackling hardware upgrades.
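Buffer size translates directly into delay, which is why it is the first thing to check. A minimal Python sketch of the arithmetic; the round-trip figure is a rough estimate that assumes one input and one output buffer and ignores converter and driver overhead, which vary by interface.

```python
def buffer_latency_ms(buffer_samples: int, sample_rate: int) -> float:
    """Time needed to fill one audio buffer, in milliseconds."""
    return 1000 * buffer_samples / sample_rate


for size in (64, 128, 256, 512, 1024):
    one_way = buffer_latency_ms(size, 48_000)
    # Rough round trip: one input buffer plus one output buffer (overheads not modeled)
    print(f"{size:5d} samples: {one_way:5.2f} ms one way, ~{2 * one_way:5.2f} ms round trip")
```

At 48kHz, a 256-sample buffer adds about 5.3ms per direction, while 64 samples adds about 1.3ms, which is why small buffers are preferred while tracking.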

Q 5. Explain the concept of dynamic range compression.

Dynamic range compression reduces the difference between the loudest and quietest parts of an audio signal. Imagine a song with both quiet verses and loud choruses. A compressor turns down the parts that exceed a set threshold; makeup gain then raises the whole signal, so the quiet parts end up relatively louder and the overall volume level is more consistent. The compressor (a hardware unit or a plugin) attenuates (reduces) the gain of any signal exceeding the threshold, and the amount of reduction is controlled by the ratio setting. A higher ratio means more aggressive compression.

Key parameters:

- Threshold: The level at which compression begins.

- Ratio: How much the output rises for a given rise in input above the threshold (e.g., a 4:1 ratio means that for every 4dB the input rises above the threshold, the output rises by only 1dB).

- Attack: How quickly the compressor reacts to a signal exceeding the threshold.

- Release: How quickly the compressor returns to normal operation after the signal falls below the threshold.

Applications: Compression is widely used in mastering to control dynamics, make a mix sound louder, or enhance the clarity of instruments and vocals. It is also commonly used to even out the volume of vocals or instruments in a mix.
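The threshold, ratio, attack, and release parameters can be illustrated with a deliberately simple feed-forward compressor in Python. This is a sketch, not how any particular plugin works: it uses a hard knee, measures level from each sample's absolute value, and smooths the gain change in dB with one-pole attack and release filters. The function and parameter names are hypothetical.

```python
import math


def gain_computer_db(level_db, threshold_db, ratio):
    """Static hard-knee curve: output level (dB) for a given input level (dB)."""
    if level_db <= threshold_db:
        return level_db
    return threshold_db + (level_db - threshold_db) / ratio


def time_coeff(time_ms, sample_rate):
    """One-pole smoothing coefficient for an attack or release time."""
    return math.exp(-1.0 / (time_ms * 0.001 * sample_rate))


def compress(samples, sample_rate, threshold_db=-20.0, ratio=4.0,
             attack_ms=10.0, release_ms=100.0, makeup_db=0.0):
    """Very simple downward compressor with a per-sample level detector."""
    att = time_coeff(attack_ms, sample_rate)
    rel = time_coeff(release_ms, sample_rate)
    gain_db = 0.0  # smoothed gain change in dB (0 or negative)
    out = []
    for x in samples:
        level_db = 20 * math.log10(max(abs(x), 1e-10))
        target_db = gain_computer_db(level_db, threshold_db, ratio) - level_db
        # Moving toward more reduction uses the attack time; recovering uses release
        coeff = att if target_db < gain_db else rel
        gain_db = coeff * gain_db + (1 - coeff) * target_db
        out.append(x * 10 ** ((gain_db + makeup_db) / 20))
    return out


print(gain_computer_db(-8.0, -20.0, 4.0))  # -17.0
```

With a -20dB threshold and a 4:1 ratio, an input at -8dB (12dB over) comes out at -17dB (3dB over), matching the ratio description above.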

Q 6. Describe your experience with EQ techniques (parametric, graphic).

Equalization (EQ) is a crucial process for shaping the frequency balance of audio. I’m proficient in both parametric and graphic EQ techniques.

Parametric EQ: Offers precise control over individual frequency bands. You can adjust the center frequency, the gain (boost or cut), the bandwidth (set by the Q factor: a higher Q affects a narrower range of frequencies), and often the filter type (high-pass, low-pass, band-pass, notch). This level of granularity makes it ideal for surgical adjustments, addressing specific frequency issues like muddiness in the low-mids or harshness in the high frequencies. I frequently use parametric EQs to clean up recordings, sculpt individual instruments, or shape the overall tonal balance of a mix.
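Parametric EQ bands are commonly implemented as second-order (biquad) IIR filters. The sketch below computes peaking-filter coefficients using the widely used formulas from Robert Bristow-Johnson's Audio EQ Cookbook, checks the resulting magnitude response, and applies the filter to a list of samples. It uses plain Python floats and lists for clarity; plugin implementations differ in detail, and the function names are illustrative.

```python
import cmath
import math


def peaking_eq(f0, gain_db, q, fs):
    """Peaking EQ biquad (Audio EQ Cookbook), normalized so that a[0] == 1."""
    amp = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2 * q)
    b = [1 + alpha * amp, -2 * math.cos(w0), 1 - alpha * amp]
    a = [1 + alpha / amp, -2 * math.cos(w0), 1 - alpha / amp]
    return [x / a[0] for x in b], [x / a[0] for x in a]


def response_db(b, a, f, fs):
    """Magnitude of H(z) at frequency f, in dB."""
    z1 = cmath.exp(-1j * 2 * math.pi * f / fs)  # z^-1 on the unit circle
    h = (b[0] + b[1] * z1 + b[2] * z1 ** 2) / (a[0] + a[1] * z1 + a[2] * z1 ** 2)
    return 20 * math.log10(abs(h))


def process(b, a, samples):
    """Apply the biquad to a list of samples (Direct Form I)."""
    y, x1, x2, y1, y2 = [], 0.0, 0.0, 0.0, 0.0
    for s in samples:
        out = b[0] * s + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1, y2, y1 = x1, s, y1, out
        y.append(out)
    return y


# A narrow 6dB cut at 300Hz (e.g. taming low-mid "mud") at a 48kHz sample rate
b, a = peaking_eq(300, -6.0, 4.0, 48_000)
print(round(response_db(b, a, 300, 48_000), 2))  # -6.0
```

Raising `q` narrows the affected band, which is what makes surgical cuts possible.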

Graphic EQ: Uses a bank of sliders, each adjusting the gain of a fixed frequency band, so the slider positions show the overall curve at a glance. It’s less precise than parametric EQ because the band frequencies and widths are fixed, but it provides a quicker way to make overall adjustments. Graphic EQ is useful for broad tonal shaping, such as adding warmth or brightness to a track. It’s less suited to pinpoint fixes.

My approach often involves a combination of both: a parametric EQ for fine-tuning specific frequencies and a graphic EQ for a general overview and quick adjustments.

Q 7. What are your preferred methods for noise reduction and restoration?

Noise reduction and restoration are crucial in audio post-production. My preferred methods depend on the type and severity of the noise.

For background hiss or hum: I often employ spectral editing techniques (using tools that visualize the frequency spectrum) to identify and selectively remove the noise frequencies while preserving the desired audio. Dedicated noise reduction plugins are also very effective, particularly for consistent, stationary noise. These plugins typically learn a noise profile from a section containing only the noise and then reduce those components throughout the recording; the amount of reduction must be set carefully to avoid artifacts such as a watery or "warbling" sound.
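Noise-profile reduction is often explained in terms of spectral subtraction, a classic technique; commercial tools may use more sophisticated methods, so treat this as an illustrative sketch. It assumes the magnitude spectra have already been computed (in practice with a short-time Fourier transform, reusing the noisy signal's phase to resynthesize audio), and the function names and the `over_subtraction` and `floor` parameters are illustrative.

```python
def noise_profile(noise_frames):
    """Average magnitude in each frequency bin across noise-only frames."""
    bins = len(noise_frames[0])
    return [sum(frame[k] for frame in noise_frames) / len(noise_frames) for k in range(bins)]


def spectral_subtract(frame_mag, profile, over_subtraction=1.0, floor=0.05):
    """Subtract the noise estimate from one frame's magnitudes.

    The spectral floor keeps a small fraction of each bin instead of zeroing it,
    which reduces "musical noise" artifacts at the cost of less reduction.
    """
    return [max(m - over_subtraction * p, floor * m) for m, p in zip(frame_mag, profile)]


# Toy 4-bin magnitude spectra (hypothetical numbers, not real measurements)
hiss = [[0.2, 0.3, 0.2, 0.4], [0.4, 0.3, 0.2, 0.2]]
profile = noise_profile(hiss)
noisy_frame = [5.0, 0.3, 2.2, 0.35]
print(spectral_subtract(noisy_frame, profile))
```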

For clicks, pops, and other transient noises: I often use sample-level editing to surgically remove the offending samples, or the restoration algorithms built into many DAWs and restoration tools to repair them. For short clicks, replacing the damaged samples with values interpolated from the surrounding audio works well. Often, a combination of automated noise removal and manual editing provides the best result.
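A toy version of interpolation-based click repair in Python. It only handles isolated single-sample clicks, and the `threshold` value is a hypothetical, hand-tuned setting; real de-clickers detect longer events and interpolate with more sophisticated models.

```python
def repair_single_sample_clicks(samples, threshold=0.5):
    """Replace isolated outlier samples with the average of their neighbours.

    A sample counts as a click when it differs from the straight line between
    its two neighbours by more than `threshold` (on a -1..1 scale).
    """
    out = list(samples)
    for i in range(1, len(out) - 1):
        predicted = (out[i - 1] + out[i + 1]) / 2
        if abs(out[i] - predicted) > threshold:
            out[i] = predicted  # linear interpolation across the damaged sample
    return out


audio = [0.0, 0.1, 0.95, 0.3, 0.4]  # 0.95 is a simulated click
print(repair_single_sample_clicks(audio))
```

A loud, genuinely sharp transient can also exceed the threshold, which is why automated results still need to be checked by ear.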

For more severe damage: More advanced techniques like spectral repair and AI-powered restoration tools might be necessary. It is important to understand the characteristics of the audio and the various noise reduction techniques available to make a judicious choice.

Q 8. Explain your understanding of signal flow in a typical recording studio setup.

Signal flow in a recording studio describes the path an audio signal takes from its source to the final output. Think of it like a river flowing from its source to the sea. The source could be a microphone capturing a vocalist, a guitar amplifier, or a synthesizer. This signal then typically flows through a chain of processing stages.

- Source: The instrument or voice being recorded.

- Microphone (if applicable): Converts acoustic sound waves into electrical signals.

- Preamplifier: Boosts the weak signal from the microphone to a usable (line) level. Its gain control sets the recording level, and some preamps and channel strips also include EQ or add tonal coloration.

- Compressor/Limiter: Controls the dynamic range, making the signal more even.

- Equalizer (EQ): Shapes the frequency balance, boosting or cutting certain frequencies to improve clarity or add warmth.

- Effects Processors (optional): Reverb, delay, chorus, etc., add ambience, texture, and creative effects.

- Analog-to-Digital Converter (ADC): Converts the analog signal into a digital format for computer processing.

- Digital Audio Workstation (DAW): Software where recording, editing, and mixing occur.

- Digital-to-Analog Converter (DAC): Converts the final digital mix back to an analog signal for playback or mastering.

- Power Amplifiers and Speakers (for monitoring and playback): Amplifies the signal to drive loudspeakers.

For instance, a vocal track might go from the microphone, through a preamp with some EQ, into a compressor to control dynamics, and then through the ADC into the DAW for recording and further processing.

Q 9. How do you approach mixing a song to achieve a cohesive and professional sound?

Mixing is the art of blending multiple tracks into a cohesive and balanced whole. My approach centers around achieving clarity, creating a natural spatial image, and ensuring that all instruments and vocals sit well together in the mix.

- Gain Staging: I start by setting appropriate levels for each track, ensuring that no individual track is too loud or too quiet relative to others. This prevents clipping and creates headroom for processing.

- EQ: I sculpt the frequency response of each track to remove muddiness, add clarity, and create space between instruments. For example, I might cut low-mid frequencies from a guitar track to allow the bass to be heard clearly.

- Dynamics Processing: Using compression and limiting, I control the dynamic range of tracks, ensuring a consistent level and preventing harsh peaks.

- Panning: I use panning to create stereo width and place instruments in the stereo field, making the mix feel more open and spacious.

- Effects: I use reverb, delay, and other effects sparingly to add ambience, depth, and creative interest, but always with the goal of enhancing the overall sound rather than obscuring it.

- Automation: I automate various parameters over time to add subtle movements and changes, making the mix more dynamic and engaging.

- Reference Tracks: I frequently refer to commercially released tracks in a similar genre to make sure the mix is achieving a professional standard.

Imagine building a house: each instrument is like a building block, and the mixer carefully places them to create a solid, aesthetically pleasing structure. The instruments must be balanced against one another to achieve a professional sound.
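Panning is usually governed by a pan law. One common choice is constant-power panning, sketched below in Python; DAWs differ in the pan law they use (some offer -3dB, -4.5dB, or -6dB centre attenuation), so this is one example rather than a universal rule. The function name is illustrative.

```python
import math


def constant_power_pan(sample, pan):
    """Split a mono sample into (left, right); pan in [-1, 1], -1 = hard left."""
    angle = (pan + 1) * math.pi / 4  # map -1..1 onto 0..pi/2
    return sample * math.cos(angle), sample * math.sin(angle)


left, right = constant_power_pan(1.0, 0.0)
print(round(left, 4), round(right, 4))  # 0.7071 0.7071 (about -3dB per side)
```

Because cos² + sin² = 1, the combined power stays constant as a source moves across the stereo field, so it does not seem to get louder or quieter in the centre.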

Q 10. What are your strategies for mastering audio for different media (e.g., streaming, CD)?

Mastering is the final stage of audio production, where the overall balance and loudness of the mix are optimized for a specific playback medium. Different media have different requirements. Most streaming services apply loudness normalization, turning louder masters down toward a reference level, so pushing loudness too far gains little and costs dynamic range. CD playback has no such normalization, so CD masters are often made louder to match listener expectations, while still avoiding clipping and digital distortion.

- Loudness Maximization: I use a mastering limiter to reach appropriate loudness for each platform, weighing competitive loudness (the legacy of the "loudness wars") against the need to preserve dynamic range. Many platforms publish loudness targets measured in LUFS (Loudness Units relative to Full Scale).

- EQ: Subtle adjustments are made to address any overall tonal imbalances that weren’t addressed during mixing.

- Stereo Imaging: I optimize the stereo width to ensure a cohesive stereo image across all playback systems. This involves using specialized mastering plugins designed to widen the mix safely and avoid phase issues.

- Dynamic Range Control: While aiming for suitable loudness, I preserve the dynamic range where appropriate to ensure the music has impact and doesn’t feel overly compressed.

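- Dithering: When reducing bit depth (for example, from 24-bit to 16-bit for CD mastering), I apply dither: a very low level of added noise that turns quantization error into a benign, constant hiss instead of distortion that follows the signal, which is most audible on quiet passages and fades.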
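A minimal Python sketch of requantizing to 16 bits with TPDF (triangular probability density function) dither, one common dither type; mastering tools may also offer noise-shaped dither. The function name and the 32767 scaling are assumptions of the sketch.

```python
import random


def requantize_16bit_tpdf(samples, rng=None):
    """Convert float samples (nominally -1.0..1.0) to 16-bit integers with TPDF dither.

    TPDF dither is the sum of two independent uniform values, each spanning
    +/-0.5 LSB, so the added noise peaks at +/-1 LSB. It is added before rounding.
    """
    rng = rng or random.Random()
    out = []
    for x in samples:
        scaled = x * 32767  # scaling conventions vary (32767 vs 32768)
        noise = rng.uniform(-0.5, 0.5) + rng.uniform(-0.5, 0.5)
        q = round(scaled + noise)
        out.append(max(-32768, min(32767, q)))  # clip to the 16-bit range
    return out


# A signal a quarter of an LSB high cannot be stored in 16 bits directly,
# but the average of the dithered output tracks it.
tiny = [0.25 / 32767] * 20_000
dithered = requantize_16bit_tpdf(tiny, random.Random(1))
print(sum(dithered) / len(dithered))  # close to 0.25
```

Without dither, every sample of that quarter-LSB signal would round to 0 and the signal would vanish; with dither, it survives as an average underneath low-level noise.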

Think of mastering as the finishing touches on a painting: a final polish to create an impressive masterpiece appropriate for exhibition (the playback medium).

Q 11. What experience do you have with MIDI sequencing and virtual instruments?

I have extensive experience with MIDI sequencing and virtual instruments, using them for composition, arrangement, and sound design. My workflow typically involves using a DAW like Ableton Live or Logic Pro X.

- MIDI Sequencing: I’m proficient in creating and editing MIDI data, including note input, velocity, automation, and various MIDI controllers for dynamic expression.

- Virtual Instruments: I’m well-versed in using a variety of virtual instruments, from synthesizers (both subtractive and additive) to samplers and drum machines. I can program sounds from scratch and utilize existing presets creatively.

- Workflow: I often begin with MIDI sequencing to sketch out the arrangement and melodies before diving into sound design and programming. This allows me to focus on the arrangement before fine-tuning the sonic details.

- Examples: I’ve used virtual instruments extensively in creating electronic music compositions, composing orchestral arrangements, and creating sounds for video games.

For example, I’ve used Kontakt to create custom orchestral sounds, Massive for complex synth patches and Ableton’s built-in instruments for quick sketches and prototyping.
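Every MIDI note maps to a pitch through a simple formula. A Python sketch assuming twelve-tone equal temperament with A4 (note 69) tuned to 440Hz; octave labels differ between manufacturers, some of which call note 60 C3 instead of C4. The function names are illustrative.

```python
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def midi_to_hz(note: int, a4_hz: float = 440.0) -> float:
    """Equal-tempered frequency of a MIDI note number (A4 = note 69)."""
    return a4_hz * 2 ** ((note - 69) / 12)


def note_name(note: int) -> str:
    """Note name with octave, using the convention that note 60 is C4."""
    return f"{NOTE_NAMES[note % 12]}{note // 12 - 1}"


for n in (60, 64, 67, 69):
    print(n, note_name(n), round(midi_to_hz(n), 2))
```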

Q 12. Explain your understanding of different microphone types and their applications.

Different microphone types are designed to capture sound in different ways, making them suitable for various applications. The choice of microphone depends on the sound source and desired character.

- Condenser Microphones: Highly sensitive, capturing a wide frequency range and fine detail. Great for vocals, acoustic instruments (e.g., guitars, pianos), and orchestral instruments, especially in controlled studio environments.

- Dynamic Microphones: More rugged, less sensitive overall, and able to handle very high sound pressure levels. Suitable for loud sound sources like drums and guitar amplifiers, and for live vocals where feedback is a concern.

- Ribbon Microphones: Capture sound through the vibration of a thin metallic ribbon. They often have a warm, smooth character, and are commonly used for recording guitars, horns, and other instruments.

- Large-Diaphragm Condenser Microphones (LDCs): These microphones have larger diaphragms than small-diaphragm condensers (SDCs) and are often associated with a warmer, fuller tone, which makes them a common choice for vocals and for instruments that need a full-bodied sound.

- Small-Diaphragm Condenser Microphones (SDCs): Often used for overhead drum recording, acoustic instruments, and more detailed sound capturing where a more precise sound reproduction is desired. Good for instruments needing a bright, clear tone.

Imagine a painter selecting different brushes – a small brush for detail, a large brush for broad strokes. Similarly, different microphones capture sound with varying levels of detail and character.

Q 13. Describe your process for designing sound effects for games or film.

Designing sound effects for games or film involves understanding the narrative and creating sounds that enhance the realism, emotion, and impact of the visual content.

- Sound Design Brief: I begin by reviewing the visual material and getting a brief understanding of the game or film’s aesthetic and desired mood.

- Sound Library & Foley: I start by browsing sound libraries and then record original foley (sound effects recorded in sync with the picture). This process involves creating sounds using everyday objects. For example, crushing paper could sound like footsteps on a crunchy surface.

- Synthesis: I utilize synthesis techniques to create original sounds when the library or foley doesn’t have what I need. I might use granular synthesis for abstract textures, or FM synthesis for metallic sounds.

- Processing and Editing: I use various digital audio effects to process and sculpt the sounds, including EQ, compression, reverb, delay, distortion, and other creative effects.

- Spatialization: Depending on the project, I might use techniques like panning and surround sound to place sounds in the 3D space, adding depth and realism.

The process is iterative. I’ll often create multiple versions of a sound, trying different approaches to find the best fit for the scene.
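As an illustration of the FM approach mentioned above, here is a minimal two-operator sketch in Python. Like many digital FM instruments, it is implemented as phase modulation of a sine carrier; the function name and the parameter values in the example are arbitrary starting points, not a recipe.

```python
import math


def fm_tone(carrier_hz, ratio, index, seconds=1.0, sample_rate=48_000):
    """Two-operator FM: a sine modulator varies the phase of a sine carrier.

    ratio: modulator frequency as a multiple of the carrier frequency.
    index: modulation depth; higher values add more sidebands (a brighter sound).
    """
    mod_hz = carrier_hz * ratio
    n = int(seconds * sample_rate)
    out = []
    for i in range(n):
        t = i / sample_rate
        out.append(math.sin(2 * math.pi * carrier_hz * t
                            + index * math.sin(2 * math.pi * mod_hz * t)))
    return out


# A clangy, metallic hit: inharmonic ratio plus a decaying amplitude envelope
bell = [s * math.exp(-4 * i / 48_000) for i, s in enumerate(fm_tone(200, 1.41, 5.0))]
```

Integer ratios give harmonic, more musical timbres; non-integer ratios and higher indices push the result toward clangy, metallic territory.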

Q 14. How do you create immersive soundscapes using spatial audio techniques?

Creating immersive soundscapes using spatial audio techniques involves placing sounds within a three-dimensional space to enhance realism and listener engagement.

- Ambisonics: This technique encodes a full-sphere sound field into a set of speaker-independent channels (four for first-order Ambisonics), which can later be decoded to almost any loudspeaker layout or to binaural headphones. It can create a highly realistic and immersive experience.

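- Binaural Recording: Using a dummy head with microphones in its ears, binaural recording captures the cues the human head and ears impose on sound, creating a highly realistic spatial experience on headphones. The listener feels as if the sound is coming directly from the recorded environment, although because the dummy head is generic rather than the listener’s own, the effect varies from person to person.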

- Head-Related Transfer Functions (HRTFs): HRTFs describe how the head, torso, and outer ears filter a sound arriving from a given direction before it reaches each eardrum. Applying them to a source simulates its position relative to the listener, creating accurate spatial cues over headphones.

- 3D Audio Plugins: Various plugins (like those within DAWs or dedicated spatial audio software) help to process and render sounds in a 3D space, allowing for precise placement and control over the soundscape’s spatial characteristics.

- Virtual Reality (VR) Audio: VR applications require precise spatial audio control to create realistic and engaging virtual environments.

Imagine sitting in a concert hall – you hear the sound from different directions. Spatial audio strives to reproduce this experience through various techniques that manipulate the sound’s position relative to the listener.
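To show what encoding into Ambisonics involves, here is a Python sketch that places a mono sample into first-order B-format using the traditional FuMa convention (W scaled by 1/sqrt(2)). Other conventions, such as AmbiX, use a different channel order and normalization, so check which one your tools expect. The function name is illustrative.

```python
import math


def encode_foa_fuma(sample, azimuth_deg, elevation_deg):
    """Encode a mono sample as first-order B-format (W, X, Y, Z), FuMa convention.

    Azimuth is measured anticlockwise from straight ahead (positive = left);
    elevation is measured upward from the horizontal plane.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample / math.sqrt(2)                 # omnidirectional component
    x = sample * math.cos(az) * math.cos(el)  # front-back
    y = sample * math.sin(az) * math.cos(el)  # left-right
    z = sample * math.sin(el)                 # up-down
    return w, x, y, z


# A source 45 degrees to the front-left, on the horizontal plane
print(encode_foa_fuma(1.0, 45, 0))
```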

Q 15. What are your experiences with various audio plugins (compressors, reverbs, delays)?

My experience with audio plugins spans a wide range, encompassing compressors, reverbs, and delays from various manufacturers like Waves, Universal Audio, FabFilter, and iZotope. I’m proficient in using them to sculpt the sonic character of instruments and vocals.

Compressors, for instance, are my main tool for controlling dynamics: they reduce the level of peaks and, with makeup gain, bring up the overall level. I’ve extensively used compressors like the Waves CLA-76 for adding punch to drums, the SSL Bus Compressor for gluing together a mix, and the FabFilter Pro-C for surgical dynamic control. Understanding different compressor types (optical, FET, VCA) and their respective sonic characteristics is crucial. For example, an optical compressor tends to be smoother and more transparent, while an FET compressor offers a faster, more aggressive sound.

Reverbs add space and depth to sounds. I’m experienced with algorithmic reverbs, convolution reverbs (which use impulse responses of real spaces), and plate reverbs, choosing the appropriate type based on the desired effect. For a natural, spacious sound, I might use a convolution reverb with an impulse response of a cathedral; for a more artificial, dramatic sound, I might choose an algorithmic reverb with a longer decay time.

Delays create echoes and rhythmic effects. I’ve worked with various delay types, including tape delays, analog delays, and digital delays. I often use them creatively for rhythmic textures and fills, or for subtle thickening of sounds. A simple delay on a vocal can add depth, while a more complex rhythmic delay pattern can add a percussive element.
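A basic digital delay with feedback can be sketched in Python with a circular buffer. The function names, the default feedback and mix values, and the tempo helper (which assumes the beat is a quarter note) are illustrative.

```python
def feedback_delay(samples, delay_samples, feedback=0.4, mix=0.3):
    """Single-tap delay with feedback, using a circular buffer."""
    buf = [0.0] * delay_samples
    idx = 0
    out = []
    for x in samples:
        delayed = buf[idx]
        buf[idx] = x + feedback * delayed  # write the input plus the recirculated echo
        idx = (idx + 1) % delay_samples
        out.append((1 - mix) * x + mix * delayed)
    return out


def tempo_delay_ms(bpm, note=1 / 4):
    """Delay time for a note value (as a fraction of a whole note), assuming quarter-note beats."""
    return 60_000 / bpm * (note * 4)


print(tempo_delay_ms(120), tempo_delay_ms(120, 1 / 8))
```

At 120 BPM a quarter-note delay is 500ms and an eighth-note delay 250ms, a common starting point for rhythmic delays; each echo in `feedback_delay` is `feedback` times quieter than the one before.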


Q 16. Explain your knowledge of digital signal processing (DSP) concepts.

Digital Signal Processing (DSP) is the backbone of music technology. My understanding encompasses fundamental concepts like sampling, quantization, and digital filtering. I understand how audio is converted from analog to digital and back again, and the inherent limitations and artifacts that can arise in this process.

Sampling is the process of converting a continuous analog signal into a discrete digital signal by taking samples at regular intervals (the sample rate). Quantization is the process of representing the amplitude of each sample with a finite number of bits (the bit depth). Both influence the fidelity of the digital audio.

Digital filters are used extensively in audio processing. I understand the various types of filters—low-pass, high-pass, band-pass, and notch—and how they affect the frequency spectrum of a signal. This knowledge allows me to effectively use EQ (equalization) plugins to shape the tonal balance of my mixes. For instance, a high-pass filter can remove unwanted low-frequency rumble from a vocal track, while a notch filter can eliminate a specific, resonant frequency causing feedback.

Further, I grasp the concepts of Fourier transforms and their applications in spectral analysis, enabling me to understand how frequency components contribute to the overall sound. My understanding extends to concepts like convolution and its relevance to reverb and other effects processing.
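Convolution, the operation behind convolution reverb, can be written in a few lines of Python. This direct form is easy to follow but slow for long impulse responses; practical convolution reverbs use FFT-based (often partitioned) convolution instead. The function name is illustrative.

```python
def convolve(signal, impulse_response):
    """Direct linear convolution: each input sample triggers a scaled copy of the IR."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out


# A single click through a toy three-tap "room" reproduces the room's response
print(convolve([1.0, 0.0, 0.0], [1.0, 0.5, 0.25]))
```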

Q 17. Describe your experience with audio editing software and techniques.

I’m proficient in various Digital Audio Workstations (DAWs) including Pro Tools, Logic Pro X, and Ableton Live. My experience involves a comprehensive range of audio editing techniques, from basic tasks like cutting, pasting, and trimming audio to advanced processes like time-stretching, pitch-shifting, and noise reduction.

I regularly use tools like spectral editing to surgically remove unwanted noise or artifacts. I’m familiar with various audio restoration techniques and utilize plugins for tasks like de-clicking, de-essing, and de-noising. Furthermore, I understand the importance of proper gain staging and level management throughout the production process to prevent clipping and maintain a healthy audio signal flow.

For example, during a recent project, I had to restore a severely damaged old vinyl recording. I employed spectral editing to remove clicks and pops, and then used dynamic noise reduction to minimize background hiss while preserving the nuances of the original performance. This involved careful manipulation of the threshold, reduction amount, and attack/release settings to avoid unwanted side effects.

Q 18. How do you handle feedback issues in a live sound reinforcement scenario?

Feedback in live sound is a common problem caused by a microphone picking up its own amplified sound, creating a loud, often unpleasant, squealing noise. My approach to handling feedback is multi-pronged and proactive.

- Proper Microphone Placement: The most effective method is to minimize the chances of feedback by strategically positioning microphones away from loudspeakers. Pointing mics away from monitors or speakers is key.

- EQing: Using a parametric equalizer to carefully cut frequencies prone to feedback is crucial. Identifying the resonant frequencies causing feedback through careful listening and observation (or a spectrum analyzer) is essential. Once a frequency is identified, a narrow cut there will usually tame it, although other feedback frequencies may appear as overall gain is raised.

- Gain Staging: Keeping microphone and amplifier gains at appropriate levels minimizes the possibility of feedback. It’s a balance: enough gain to capture the performance clearly without pushing it into the territory where feedback is likely.

- Feedback Suppressors: Specialized equipment such as feedback suppressors can automatically identify and mitigate feedback problems. These are especially useful in complex environments with many microphones and speakers.

- Room Acoustics: While the room is not under my control in every setting, understanding room acoustics (how sound waves reflect) and addressing potential issues is important. Treating the room acoustically (e.g., with acoustic panels) can help reduce the risk of feedback.

The key is a preventative approach. Proactive measures are far better than reactive fixes during a performance.
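When a feedback tone starts to ring, the goal is to find its frequency so a narrow cut can be placed there. A naive Python sketch that reports the strongest frequency in a block of samples; it uses an O(N²) DFT for clarity, whereas real analyzers use an FFT, and its resolution is limited to sample_rate / N. The function name is illustrative.

```python
import math


def dominant_frequency(samples, sample_rate):
    """Frequency (Hz) of the strongest DFT bin, excluding DC."""
    n = len(samples)
    best_bin, best_mag = 1, -1.0
    for k in range(1, n // 2 + 1):
        re = sum(s * math.cos(2 * math.pi * k * i / n) for i, s in enumerate(samples))
        im = -sum(s * math.sin(2 * math.pi * k * i / n) for i, s in enumerate(samples))
        mag = math.hypot(re, im)
        if mag > best_mag:
            best_bin, best_mag = k, mag
    return best_bin * sample_rate / n


# Simulated ringing at 1kHz, analyzed in a 512-sample block at 8kHz
ring = [0.1 * math.sin(2 * math.pi * 1000 * i / 8000) for i in range(512)]
print(dominant_frequency(ring, 8000))
```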

Q 19. What is your approach to collaborating with musicians and other sound professionals?

Collaboration is fundamental to successful music production. My approach emphasizes clear communication, active listening, and mutual respect. I strive to understand the artist’s vision and contribute my technical expertise to realize their creative goals.

With musicians, I focus on creating a comfortable and inspiring recording environment. This involves offering constructive feedback, making technical suggestions, and understanding the artistic intent behind their performance. I believe open dialogue is vital – sharing my understanding of the technical limitations and possibilities encourages creative problem-solving and mutual respect for each other’s areas of expertise.

When working with other sound professionals, I aim for a seamless workflow. This involves clear communication of roles and responsibilities, timely delivery of work, and a willingness to compromise and integrate diverse perspectives and skillsets. Sharing session files effectively and using a consistent workflow can improve overall collaboration.

Q 20. Explain your understanding of music theory and its relevance to music production.

Music theory is crucial to effective music production. A strong understanding of harmony, melody, rhythm, and form enables me to make informed decisions about arrangement, composition, and sound design. It allows me to anticipate how different musical elements will interact and create a cohesive and satisfying listening experience.

For example, understanding chord progressions allows me to create compelling musical structures, while knowledge of melody writing helps in composing memorable and engaging vocal lines or instrumental parts. My understanding of counterpoint helps me layer different instrumental parts effectively, avoiding muddy or dissonant sounds. Rhythm and meter are fundamental to creating groove and musical energy.
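As a small example of theory feeding into production decisions, this Python sketch builds the seven diatonic triads of a major key by stacking scale thirds. It spells every note with sharps, so flat keys (for example, F major's B flat) will show enharmonic names; that simplification, and the function name, are assumptions of the sketch.

```python
MAJOR_SCALE_STEPS = [0, 2, 4, 5, 7, 9, 11]  # semitones above the tonic
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def diatonic_triads(tonic: str):
    """Return (chord name, notes) for each scale degree of a major key."""
    root = NOTE_NAMES.index(tonic)
    scale = [(root + s) % 12 for s in MAJOR_SCALE_STEPS]
    triads = []
    for degree in range(7):
        notes = [scale[(degree + k) % 7] for k in (0, 2, 4)]  # root, third, fifth
        third = (notes[1] - notes[0]) % 12
        fifth = (notes[2] - notes[0]) % 12
        quality = {(4, 7): "maj", (3, 7): "min", (3, 6): "dim"}[(third, fifth)]
        triads.append((f"{NOTE_NAMES[notes[0]]} {quality}", [NOTE_NAMES[n] for n in notes]))
    return triads


for name, notes in diatonic_triads("C"):
    print(name, notes)
```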

Furthermore, music theory knowledge improves my ability to communicate effectively with musicians and composers. Sharing musical ideas efficiently and accurately is crucial for collaborative projects, while a strong foundation in music theory enables deeper critical analysis of music.

Q 21. Describe your experience with music notation software.

I’m experienced with several music notation software packages, including Sibelius and Finale. My skills include inputting scores, editing existing scores, creating parts for different instruments, and generating audio from the notated music. I utilize these tools for various purposes.

Creating arrangements and scores for ensembles often begins with digital notation. The notation can then be used to generate audio mockups for preview or to create lead sheets and parts for musicians. The ability to create professional-looking scores that are clear, accurate, and easy to read is essential, allowing me to work effectively with musicians and collaborators.

Beyond scoring, notation software can also aid in arranging, providing a visual representation that enhances creativity and facilitates making informed choices about musical structure. The visual and aural components work together, allowing me to test ideas, experiment with arrangements, and polish the final product efficiently.

Q 22. What are some common challenges in music technology and how have you addressed them?

One of the biggest challenges in music technology is the constant evolution of software and hardware, requiring continuous learning and adaptation. Another significant hurdle is achieving a balance between creative vision and technical limitations. For example, a complex sonic idea might require specialized plugins or processing power that isn’t readily available or affordable. I address these by actively engaging in continuous professional development, experimenting with different tools to find efficient workflows, and prioritizing realistic project scopes that match my available resources. If I encounter a technical limitation, I explore creative workarounds; for instance, if I can’t achieve a specific effect with a plugin, I might emulate it using alternative techniques like sound design or strategic signal routing.

Furthermore, collaborative projects present unique challenges. Different collaborators may have differing technical proficiencies, creating a need for clear communication and a willingness to find common ground in terms of workflow and technical requirements. My approach is to establish a clear technical plan from the beginning, ensuring everyone is on the same page about the technology used and the division of tasks. This also involves proactive troubleshooting during the process to prevent delays caused by unforeseen technical snags.

Q 23. Describe your proficiency in using various studio hardware (e.g., mixers, interfaces).

My experience with studio hardware is extensive. I’m proficient in operating various mixing consoles, from small analog mixers like the Mackie 1202-VLZ4, ideal for quick and dirty mixes, to larger digital consoles such as the Yamaha RIVAGE PM7, suitable for large-scale productions demanding precision and versatility. I understand the principles of signal flow, gain staging, EQ, dynamics processing, and routing within a hardware environment. I am comfortable with both inline and outboard processing. I’ve worked with various audio interfaces, from Focusrite Scarlett interfaces for basic recording to high-end units like Universal Audio Apollo interfaces, which allow for the integration of UAD plugins and high-quality A/D and D/A conversion. I’m adept at troubleshooting hardware issues, identifying and addressing problems quickly and efficiently, often diagnosing the problem even before resorting to technical support. For instance, I once resolved a noisy signal issue in a complex mixing setup by identifying a faulty cable, rather than immediately suspecting a failing mixer channel.

Q 24. How familiar are you with different types of audio monitoring systems?

My familiarity with audio monitoring systems is comprehensive, covering a range of speaker types and configurations. Accurate, fatigue-free listening is essential, which is why I’m comfortable using studio monitors from several brands, such as Genelec, KRK, and Adam Audio. I appreciate the subtle differences in their frequency responses and their suitability for different tasks; for example, I might rely on Genelecs for critical mastering decisions, while KRKs can work well for tracking. I know how to calibrate a monitoring system for accurate reproduction using measurement tools like Sonarworks Reference 4, which helps my mixes translate to other playback systems. I also understand the role of acoustic treatment in a monitoring environment and how room reflections and standing waves color the sound. I’ve designed and implemented acoustic treatment for studios, using elements such as bass traps and diffusion panels to optimize the listening experience.
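Standing waves between parallel surfaces build up at predictable frequencies. For the simplest (axial) modes, the n-th mode along a room dimension of length L is f = n·c / (2L). The sketch below lists axial modes for a rectangular room and flags modes from different dimensions that land close together, which is where bass build-up tends to be most pronounced. It assumes c = 343 m/s (air at roughly 20 °C), ignores tangential and oblique modes, and uses an arbitrary 5 Hz tolerance; treat it as a planning aid, not a substitute for measurement.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumed: air at roughly 20 °C

def axial_modes(length_m, count=4, c=SPEED_OF_SOUND_M_S):
    """First `count` axial mode frequencies (Hz) for one dimension: n*c/(2L)."""
    if length_m <= 0:
        raise ValueError("room dimension must be positive")
    return [n * c / (2.0 * length_m) for n in range(1, count + 1)]

def room_axial_modes(length_m, width_m, height_m, count=4):
    """Axial modes for each dimension of a rectangular room."""
    return {
        "length": axial_modes(length_m, count),
        "width": axial_modes(width_m, count),
        "height": axial_modes(height_m, count),
    }

def coincident_modes(modes_by_axis, tolerance_hz=5.0):
    """Pairs of modes from different dimensions within tolerance_hz of each other."""
    items = [(axis, f) for axis, freqs in modes_by_axis.items() for f in freqs]
    pairs = []
    for i, (axis_a, f_a) in enumerate(items):
        for axis_b, f_b in items[i + 1:]:
            if axis_a != axis_b and abs(f_a - f_b) <= tolerance_hz:
                pairs.append((axis_a, round(f_a, 1), axis_b, round(f_b, 1)))
    return pairs

if __name__ == "__main__":
    modes = room_axial_modes(6.0, 3.0, 2.4)  # hypothetical 6 m x 3 m x 2.4 m room
    for axis, freqs in modes.items():
        print(axis, [round(f, 1) for f in freqs])
    print(coincident_modes(modes))
```

In the example room, the 3 m width is exactly half the 6 m length, so every width mode coincides with an even-numbered length mode; simple dimension ratios like this are usually something acoustic planning tries to avoid.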

Q 25. What is your experience with automated mixing and mastering workflows?

I have considerable experience with automated mixing and mastering workflows. While I believe human intuition and artistry remain crucial, I make effective use of tools like iZotope Ozone and RX for tasks such as spectral balancing, dynamics processing, and noise reduction. I understand the principles behind these processes and can customize automated actions to achieve specific creative outcomes, always listening critically for over-processing or undesirable artifacts. My approach isn’t fully automated; instead, I use these tools to streamline repetitive tasks and work more efficiently. For example, I might use Ozone’s Master Assistant to generate a starting point for the master bus, then refine the result with manual adjustments based on my artistic judgment and the specific character of the music. I also build custom macros and scripts so that repetitive steps produce consistent, high-quality results.
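Automated processing often makes a track louder, and louder tends to sound "better", which can mislead the critical listening described above. One simple guard is to level-match the processed version to the original before an A/B comparison. The sketch below does this with plain RMS in dBFS; it is an assumed stand-in for illustration, not how Ozone or RX work internally, and a loudness meter following ITU-R BS.1770 (LUFS, with K-weighting and gating) would be the more accurate choice in practice.

```python
import math

def rms_dbfs(samples):
    """RMS level of float samples (full scale = 1.0) in dBFS."""
    if not samples:
        raise ValueError("need at least one sample")
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    return float("-inf") if rms == 0.0 else 20.0 * math.log10(rms)

def level_match(reference, processed):
    """Scale `processed` so its RMS level equals that of `reference`.

    Returns the scaled samples and the gain applied in dB.
    """
    ref_db, proc_db = rms_dbfs(reference), rms_dbfs(processed)
    if math.isinf(ref_db) or math.isinf(proc_db):
        raise ValueError("cannot level-match digital silence")
    gain_db = ref_db - proc_db
    factor = 10.0 ** (gain_db / 20.0)
    return [s * factor for s in processed], gain_db

if __name__ == "__main__":
    original = [0.25, -0.25] * 100  # hypothetical pre-mastering excerpt
    mastered = [0.5, -0.5] * 100    # hypothetical louder processed version
    matched, gain_db = level_match(original, mastered)
    print(round(gain_db, 2))        # -6.02: the mastered version is turned down
```

Once both versions sit at the same level, any remaining preference reflects tonal and dynamic changes rather than sheer loudness.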

Q 26. How do you stay current with advancements in music technology?

Staying current in music technology is a continuous process. I regularly read industry publications like Sound on Sound and Mix, attend workshops and conferences such as NAMM and AES, and actively participate in online communities and forums. I subscribe to newsletters from relevant companies to stay informed about new products and updates. I’m also a keen user of online tutorials, exploring new plugins and techniques through YouTube channels and masterclasses. This multi-pronged approach ensures I am aware of the latest advancements and best practices, allowing me to integrate them into my workflow where appropriate. Specifically, I focus on acquiring knowledge about new production techniques and plugin capabilities, paying attention to the evolving demands and trends in the industry.

Q 27. Describe your workflow for a typical music production project.

My workflow for a typical music production project is iterative and adaptable, but it generally follows these stages:

1. Pre-production: planning the overall structure, arranging the song, and sketching out instrumental parts.
2. Tracking: recording the individual instruments and vocals.
3. Editing: refining the recorded tracks by cleaning up timing, pitch, and unwanted noise.
4. Mixing: combining the tracks, balancing their levels, and applying effects to create a cohesive, well-balanced mix.
5. Mastering: the final stage, polishing the mix for appropriate loudness and dynamic range so that it translates well across different playback systems.

Throughout this process, I work in DAWs (Digital Audio Workstations) such as Ableton Live and Logic Pro X, and I keep refining my workflow based on each project’s demands and on what I learn through experimentation. For example, if a project calls for a specific sound, I research and experiment with different plugins and techniques, iterating and testing until I reach the desired result.

Q 28. What is your experience with immersive audio technologies like binaural and 360 audio?

My experience with immersive audio technologies such as binaural and 360 audio is growing. I’ve used binaural recording techniques with dummy heads and specialized microphones, and I understand the nuances of capturing realistic spatial cues. I’m familiar with 360 audio workflows in software like Sound Particles and Reaper, creating and manipulating ambisonic recordings. I’m aware of the challenges involved in achieving high-quality immersive audio, such as the need for precise spatial positioning and for considering the listener’s perspective. I’m currently exploring Ambisonics and object-based audio formats further for projects that demand a realistic spatial audio experience. Creating immersive soundscapes requires a solid understanding of spatial audio principles, along with the creative mindset to design experiences that fully engage the listener.
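Ambisonic workflows like these start by encoding each source into a set of spherical-harmonic channels. As a minimal sketch, the code below encodes a mono signal at a given azimuth and elevation into first-order Ambisonics using the AmbiX convention (ACN channel order W, Y, Z, X with SN3D normalization). It assumes azimuth is measured counterclockwise from straight ahead (positive to the left) and elevation upward. Sound Particles and the ambisonic plugins used in Reaper handle this, plus higher orders and decoding, for you; the code only illustrates the underlying math, not their implementation.

```python
import math

def encode_foa_ambix(samples, azimuth_deg, elevation_deg=0.0):
    """Encode a mono signal into first-order AmbiX (ACN order W, Y, Z, X; SN3D).

    Azimuth: degrees counterclockwise from the front (90 = hard left).
    Elevation: degrees above the horizontal plane.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    gains = (
        1.0,                          # W: omnidirectional component
        math.sin(az) * math.cos(el),  # Y: left-right
        math.sin(el),                 # Z: up-down
        math.cos(az) * math.cos(el),  # X: front-back
    )
    return [[s * g for s in samples] for g in gains]

if __name__ == "__main__":
    click = [1.0, 0.5, 0.0]  # hypothetical short mono signal
    w, y, z, x = encode_foa_ambix(click, azimuth_deg=90.0)  # hard left, ear level
    print([round(v, 3) for v in y], [round(v, 3) for v in x])
```

A source placed hard left ends up entirely in W and Y, with X and Z near zero, which is an easy sanity check when debugging panning in an ambisonic session.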

Key Topics to Learn for a Proficient in Music Technology Interview

- Digital Audio Workstations (DAWs): Understanding the core functionalities of popular DAWs (e.g., Logic Pro X, Ableton Live, Pro Tools) including MIDI editing, audio editing, mixing, mastering, and automation. Consider practical application examples from your projects.

- Signal Processing: Grasping fundamental concepts like equalization (EQ), compression, reverb, delay, and their effects on sound. Be prepared to discuss how you’d use these tools to solve specific mixing or mastering challenges.

- Music Theory and Notation: Demonstrating a strong understanding of music theory (scales, chords, harmony, rhythm) and its application in music production. Be ready to discuss how theoretical knowledge informs your practical approach.

- Synthesizers and Sound Design: Familiarity with various synthesizer types (analog, virtual, etc.), sound design techniques, and the creation of unique sounds. Be prepared to discuss your approach to sound design and the tools you use.

- Audio for Video: Understanding the workflow and technical considerations involved in audio post-production for film, video games, or other visual media. This could include dialogue editing, sound effects design, and Foley.

- Music Production Workflow: Demonstrating an efficient and organized workflow for music production, including pre-production planning, recording, editing, mixing, and mastering. Be ready to discuss your preferred workflow and how it contributes to your efficiency and creative output.

- Software and Hardware Knowledge: Familiarity with relevant software (plugins, virtual instruments) and hardware (interfaces, controllers, microphones) used in music technology. Be prepared to discuss your experience with specific tools and their functionalities.
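The signal-processing item above lists compression among the fundamentals. A common way to reason about a compressor is its static gain curve: below the threshold the level passes unchanged, and above it each dB of input yields only 1/ratio dB of output. The sketch below implements that curve in dB, with an optional quadratic soft knee; the default threshold and ratio are arbitrary example values, and attack/release smoothing is deliberately left out.

```python
def compressor_output_db(input_db, threshold_db=-20.0, ratio=4.0, knee_db=0.0):
    """Static compressor curve: output level (dB) for a given input level (dB).

    knee_db = 0 gives a hard knee; a positive value blends the two slopes
    quadratically over a region knee_db wide, centred on the threshold.
    Attack and release behaviour is not modelled here.
    """
    if ratio < 1.0:
        raise ValueError("ratio must be >= 1 for downward compression")
    over = input_db - threshold_db
    if knee_db > 0.0 and 2.0 * abs(over) <= knee_db:
        # Inside the soft knee: quadratic transition between slope 1 and 1/ratio
        return input_db + (1.0 / ratio - 1.0) * (over + knee_db / 2.0) ** 2 / (2.0 * knee_db)
    if over <= 0.0:
        return input_db                 # below threshold: unchanged
    return threshold_db + over / ratio  # above threshold: slope of 1/ratio

def gain_reduction_db(input_db, **settings):
    """Gain change (dB, zero or negative) the compressor applies at this level."""
    return compressor_output_db(input_db, **settings) - input_db

if __name__ == "__main__":
    for level in (-30.0, -20.0, -10.0, 0.0):
        print(level, gain_reduction_db(level, threshold_db=-20.0, ratio=4.0))
```

At 4:1 with a -20 dB threshold, a -10 dB input comes out at -17.5 dB, i.e. 7.5 dB of gain reduction, which is the kind of quick calculation that helps when explaining compressor settings in an interview.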

Next Steps

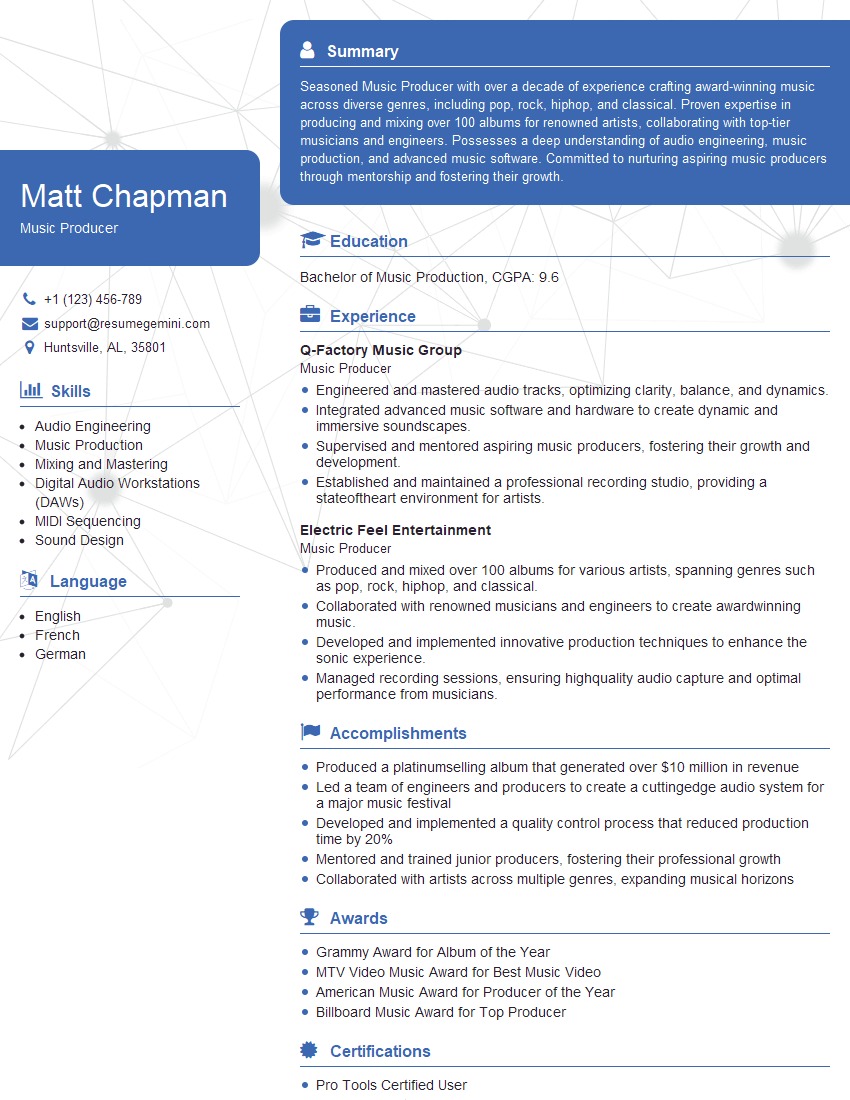

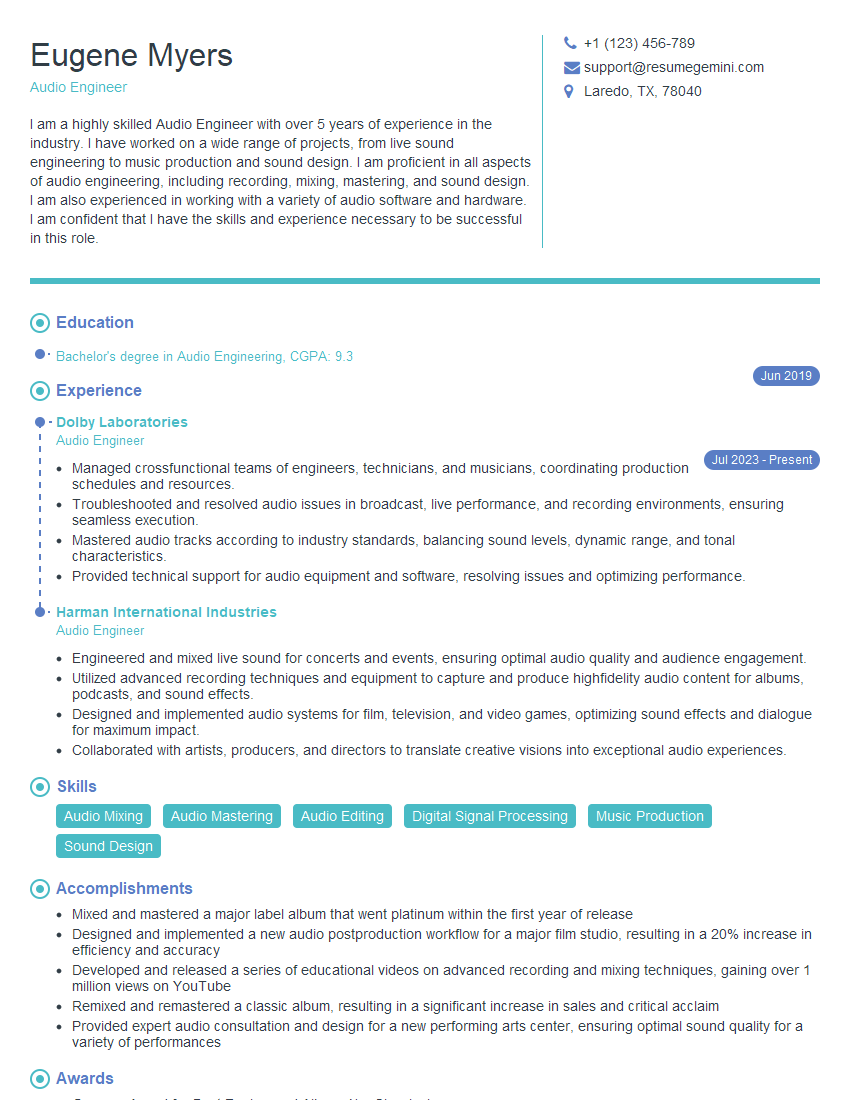

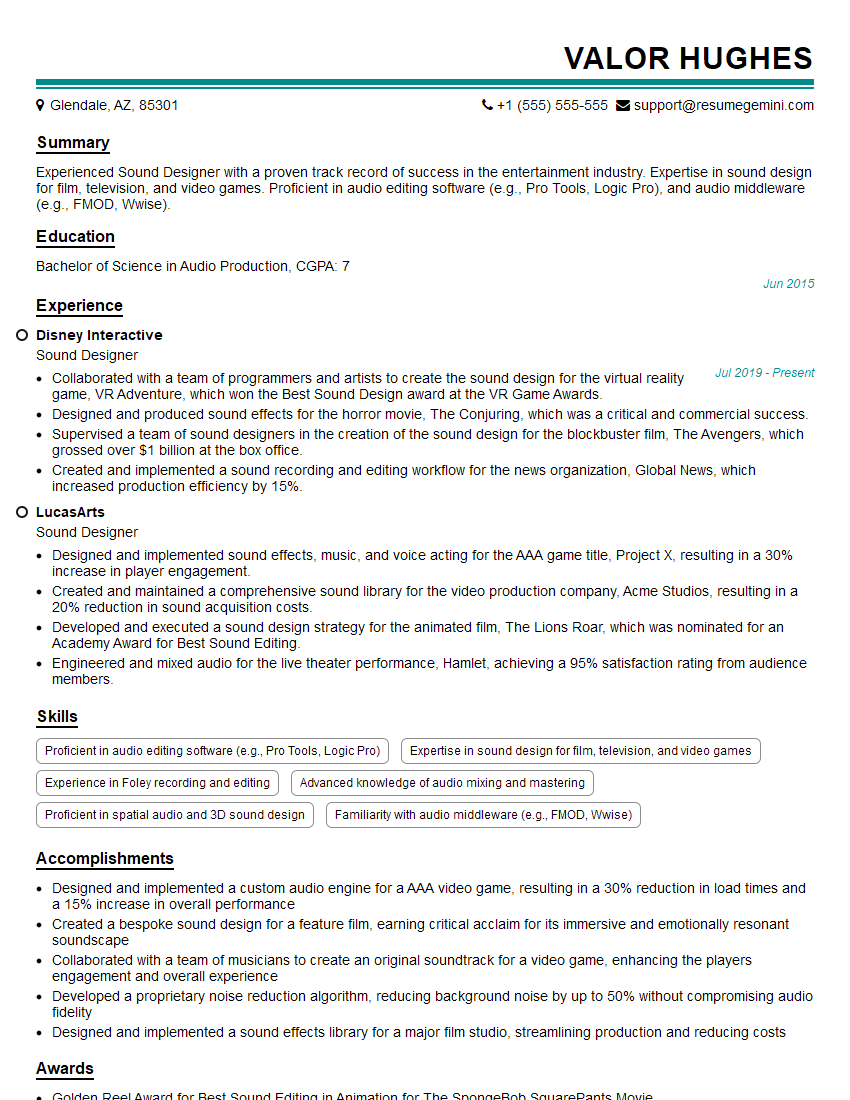

Mastering music technology opens doors to exciting careers in audio engineering, music production, sound design, and related fields. A strong understanding of these concepts is crucial for career advancement and securing your dream role. To maximize your job prospects, create an ATS-friendly resume that highlights your skills and experience effectively. ResumeGemini is a trusted resource to help you build a professional and impactful resume that catches the eye of recruiters. ResumeGemini provides examples of resumes tailored to the music technology field, ensuring you present yourself in the best possible light.
