Interviews are more than just a Q&A session—they’re a chance to prove your worth. This blog dives into essential Shading and Lighting Techniques interview questions and expert tips to help you align your answers with what hiring managers are looking for. Start preparing to shine!

Questions Asked in Shading and Lighting Techniques Interview

Q 1. Explain the difference between diffuse, specular, and ambient lighting.

Imagine shining a flashlight on a wall. The light interacts with the surface in several ways, represented by diffuse, specular, and ambient lighting. Diffuse lighting is the soft, scattered light that reflects equally in all directions. Think of the wall’s overall color – that’s diffuse. Specular lighting is the shiny highlight you see, like a tiny mirror reflecting the light source directly. The intensity and size of this highlight depend on the surface’s shininess and the angle of the light. Finally, ambient lighting is the overall background illumination. It’s the soft light that fills in shadows and prevents areas from becoming completely black, representing light bouncing around the entire scene indirectly.

- Diffuse: Determines the base color and how evenly light reflects.

- Specular: Creates highlights based on the light source’s position and surface material.

- Ambient: Provides general illumination to avoid harsh shadows and dark areas.

In a game, a matte clay pot would have strong diffuse lighting and weak specular lighting. A polished metal teapot would exhibit strong specular reflections and a subtle diffuse component.
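The three terms can be sketched for a single surface point as follows. This is a minimal illustration using a Phong-style specular term; the function name `shade` and the coefficient names `ambient`, `kd`, `ks`, and `shininess` are assumptions made for the example, not part of any particular engine.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def shade(normal, to_light, to_viewer, ambient=0.1, kd=0.7, ks=0.2, shininess=32.0):
    """Return the (ambient, diffuse, specular) intensities for one surface point.

    All parameter names and default values are hypothetical, chosen for illustration.
    """
    n, l, v = normalize(normal), normalize(to_light), normalize(to_viewer)
    n_dot_l = max(dot(n, l), 0.0)
    # Diffuse: depends only on the angle between the normal and the light.
    diffuse = kd * n_dot_l
    # Specular: mirror the light direction about the normal, compare with the viewer.
    r = tuple(2.0 * dot(n, l) * nc - lc for nc, lc in zip(n, l))
    specular = ks * max(dot(r, v), 0.0) ** shininess if n_dot_l > 0.0 else 0.0
    # Ambient: a constant fill term that ignores direction entirely.
    return ambient, diffuse, specular
```

With these assumed parameters, the clay pot would correspond to something like `kd=0.9, ks=0.05`, and the polished teapot to `kd=0.2, ks=0.9` with a high `shininess`.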

Q 2. Describe your experience with different lighting models (e.g., Phong, Blinn-Phong, Cook-Torrance).

I have extensive experience with various lighting models, each offering different levels of realism and computational cost. The Phong reflection model is a classic and relatively simple model. It calculates diffuse lighting from the angle between the light direction and the surface normal, and specular lighting from the angle between the mirrored light direction and the viewer. Blinn-Phong is a refinement that is cheaper to evaluate: instead of computing the reflection vector at every shaded point, it takes the half-vector between the light and view directions and compares it with the normal, offering a good balance between realism and speed. Cook-Torrance is a more physically based model that produces more accurate and realistic specular highlights by building on microfacet theory, which treats the surface as a collection of microscopic mirror-like facets. While more accurate, it’s more computationally expensive.

In my work, I’ve frequently used Blinn-Phong for real-time applications due to its speed and satisfactory visual results. For offline rendering or high-fidelity projects where performance isn’t as critical, Cook-Torrance offers significantly better visual quality. The choice often hinges on the project’s requirements and constraints. For example, I’d use Blinn-Phong in a game engine to ensure high frame rates, while Cook-Torrance would be preferable for a high-resolution cinematic render.
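To make the difference between the two specular terms concrete, here is a small sketch that evaluates both for the same inputs. The function names are hypothetical, and the vectors are assumed to point away from the surface toward the light and the viewer.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def phong_specular(n, l, v, shininess):
    """Phong: compare the mirrored light direction with the view direction."""
    n, l, v = normalize(n), normalize(l), normalize(v)
    r = tuple(2.0 * dot(n, l) * nc - lc for nc, lc in zip(n, l))
    return max(dot(r, v), 0.0) ** shininess

def blinn_phong_specular(n, l, v, shininess):
    """Blinn-Phong: compare the half-vector between light and view with the normal.

    Assumes l and v are not exactly opposite, otherwise the half-vector is undefined.
    """
    n, l, v = normalize(n), normalize(l), normalize(v)
    h = normalize(tuple(lc + vc for lc, vc in zip(l, v)))
    return max(dot(n, h), 0.0) ** shininess
```

For the same exponent, Blinn-Phong gives a broader highlight than Phong, so in practice the exponent is usually raised when switching models to keep a similar look.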

Q 3. How do you optimize lighting for real-time rendering?

Optimizing lighting for real-time rendering is crucial for performance. Several strategies are employed:

- Using simpler lighting models: Blinn-Phong is often preferred over Cook-Torrance in real-time applications.

- Light culling: Only calculate lighting for objects and light sources that are visible in the current view frustum. This reduces calculations drastically.

- Shadow optimization: Employ techniques like cascaded shadow maps to reduce shadow aliasing and improve performance. Also consider using simpler shadow algorithms for less computationally intensive results where possible.

- Light pre-pass: Calculating lighting values in a separate rendering pass to reduce per-pixel calculations in the final pass.

- Light probes/Lightmaps: For static scenes, pre-calculate lighting and store it in textures for quick retrieval, which improves performance for stationary objects and indirect lighting.

- Level of Detail (LOD) for lighting: Switch to simpler lighting methods for distant objects where the visual details are less noticeable.

A common approach is to combine these techniques. For instance, you might use Blinn-Phong with cascaded shadow maps and light culling for high-performance real-time rendering.

Q 4. What are the advantages and disadvantages of using baked lighting versus dynamic lighting?

Baked lighting pre-calculates lighting information and stores it in textures (lightmaps). Dynamic lighting calculates lighting in real-time as the scene changes. Each has advantages and disadvantages:

- Baked Lighting:

  - Advantages: Extremely efficient during runtime as lighting calculations are done offline. Allows for complex lighting setups.

  - Disadvantages: Requires static geometry. Changes to scene geometry or lighting necessitate a recalculation of baked lighting data which can be time-consuming.

- Dynamic Lighting:

  - Advantages: Supports moving objects, lights, and dynamic scenes.

  - Disadvantages: Can be computationally expensive, especially with many lights or complex lighting models. May result in lower frame rates in real-time applications.

The choice often depends on the application. Baked lighting is ideal for static environments like architectural visualization, while dynamic lighting is necessary for games and interactive applications where objects move and lights change.

Q 5. Explain your understanding of global illumination techniques (e.g., ray tracing, path tracing).

Global illumination simulates the indirect lighting in a scene, where light bounces multiple times before reaching the viewer’s eye. Ray tracing and path tracing are two prominent techniques:

- Ray tracing: Traces rays from the camera into the scene and checks for intersections with objects. In its classic (Whitted-style) form it follows only the mirror reflection, refraction, and shadow rays at each hit, so it renders sharp reflections and refractions accurately at a predictable cost but does not capture diffuse inter-reflections.

- Path tracing: Extends ray tracing with Monte Carlo sampling. At each hit the path continues in a randomly chosen direction, usually starting from the camera and bouncing off surfaces until it reaches a light source, and many such paths are averaged per pixel. It handles diffuse inter-reflections effectively and converges toward the full global illumination solution, but it is computationally very intensive and noisy at low sample counts.

In simpler terms, imagine a pool ball hitting other balls on a table. Ray tracing is like following the path of one specific ball, while path tracing is like tracking all possible paths every ball can take.

Ray tracing is often used for real-time applications to get realistic reflections and refractions. Path tracing is better for offline high-quality renders, especially when you need that global diffuse interreflection.
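The Monte Carlo idea behind path tracing can be illustrated with a single diffuse bounce: estimating the light arriving at a surface point by averaging randomly sampled directions. This is only a sketch under stated assumptions. The function names are hypothetical, the surface normal is assumed to be +Z, and `sky_radiance` stands in for whatever a real path tracer would find by tracing the ray further.

```python
import math
import random

def sample_hemisphere(rng):
    """Uniformly sample a direction on the hemisphere around +Z."""
    z = rng.random()                      # cos(theta), uniform in [0, 1)
    r = math.sqrt(max(0.0, 1.0 - z * z))
    phi = 2.0 * math.pi * rng.random()
    return (r * math.cos(phi), r * math.sin(phi), z)

def estimate_irradiance(sky_radiance, samples, seed=1):
    """Average radiance * cos(theta) / pdf over random directions."""
    rng = random.Random(seed)
    pdf = 1.0 / (2.0 * math.pi)           # uniform hemisphere sampling
    total = 0.0
    for _ in range(samples):
        direction = sample_hemisphere(rng)
        cos_theta = direction[2]
        total += sky_radiance(direction) * cos_theta / pdf
    return total / samples
```

For a sky of constant radiance 1 the exact answer is pi, so running the estimator with increasing `samples` shows the noise shrinking toward that value, which is the same trade-off between sample count and render time that path tracers face.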

Q 6. How do you handle light bleeding and artifacts in your work?

Light bleeding and artifacts are common problems in rendering. They occur due to inaccuracies in the lighting calculations and limitations of the algorithms. Several techniques are employed to mitigate these issues:

- Proper filtering techniques: Using appropriate filtering methods (like PCF – Percentage-Closer Filtering or PCSS – Percentage Closer Soft Shadows) for shadow maps and other lighting textures helps reduce aliasing and improve the visual quality of shadows.

- Higher resolution textures: Using higher-resolution textures for shadow maps or lightmaps reduces aliasing artifacts, particularly in shadows.

- Shadow bias and contact shadows: Tuning the depth and normal-offset bias keeps shadows attached to their casters, and short-range contact shadows cover the gaps or seams between surfaces where light would otherwise leak through.

- Improved sampling techniques: Increasing the number of samples in ray tracing or path tracing can lead to cleaner lighting results, reducing artifacts and noise. In global illumination, more samples result in more accurate results.

- Careful scene geometry design: Properly planned scene geometry, where surfaces don’t overlap too closely, can reduce some light bleeding.

The specific method used depends on the cause and type of artifact. A combination of approaches is often necessary to achieve satisfying results.
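As an illustration of the filtering point, percentage-closer filtering can be sketched on a tiny shadow map stored as a list of rows. The function name, the `bias` default, and the clamped border handling are assumptions made for this example.

```python
def pcf_shadow(shadow_map, x, y, receiver_depth, bias=0.005, radius=1):
    """Return the lit fraction (0.0 = fully shadowed, 1.0 = fully lit).

    shadow_map[row][col] holds the closest depth seen from the light.
    Instead of one depth comparison, average the comparisons over a
    (2 * radius + 1)^2 neighbourhood to soften the shadow edge.
    """
    height = len(shadow_map)
    width = len(shadow_map[0])
    lit = 0
    total = 0
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            sx = min(max(x + dx, 0), width - 1)   # clamp to the map border
            sy = min(max(y + dy, 0), height - 1)
            # The bias avoids self-shadowing ("shadow acne") on lit surfaces.
            if receiver_depth - bias <= shadow_map[sy][sx]:
                lit += 1
            total += 1
    return lit / total
```

Note that the comparison results are averaged rather than the depths themselves; blurring raw depth values before comparing does not produce a meaningful soft edge.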

Q 7. Describe your experience with different shadow mapping techniques (e.g., shadow maps, cascaded shadow maps).

Shadow mapping is a fundamental technique used to render shadows in real-time. I have experience with various techniques:

- Shadow maps: A simple and common method. The scene is rendered from the light’s perspective to create a depth map. This depth map is then used to determine whether pixels in the main rendering pass are in shadow or not.

- Cascaded shadow maps: An improved technique that divides the view frustum into multiple cascades (regions). Each cascade has its own shadow map, which helps reduce the resolution issues encountered with using only a single shadow map.

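- Variance shadow maps (VSM): Store the depth and the squared depth (the first two moments of the depth distribution), so the map can be blurred and filtered like an ordinary texture. This gives much softer shadow edges than standard shadow maps, at the cost of more memory and possible light bleeding where occluders overlap.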

Cascaded shadow maps are very widely used for real-time applications as they offer a good balance of performance and shadow quality, reducing aliasing and shadow stretching artifacts prevalent in basic shadow maps. For high-end renders or situations needing softer shadows without the need for high performance, I’ve used Variance Shadow Maps to achieve higher-quality visual results.
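One common way to choose where each cascade ends is to blend a logarithmic split with a uniform one. The sketch below assumes that scheme; the function name and the `blend` parameter are illustrative, and real engines expose their own controls for this.

```python
def cascade_splits(near, far, count, blend=0.5):
    """Return the far distance of each cascade along the view direction.

    blend = 1.0 gives purely logarithmic splits (more resolution close to
    the camera); blend = 0.0 gives evenly spaced splits.
    """
    splits = []
    for i in range(1, count + 1):
        t = i / count
        log_split = near * (far / near) ** t
        uniform_split = near + (far - near) * t
        splits.append(blend * log_split + (1.0 - blend) * uniform_split)
    return splits
```

For example, `cascade_splits(0.5, 200.0, 4)` yields four increasing distances ending at the far plane, with the first cascades covering much shorter ranges than the last.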

Q 8. Explain how you would approach creating realistic subsurface scattering effects.

Subsurface scattering (SSS) is a phenomenon where light penetrates a surface and scatters internally before exiting. Think of how light subtly illuminates the skin, making it look translucent, or how light appears to glow from within a marble statue. Creating realistic SSS effects requires understanding how light interacts with the material’s density and thickness.

My approach involves a multi-step process: First, I’d determine the appropriate SSS model based on the material’s properties. Simple models like a screen-space diffusion approximation are suitable for fast rendering, while more complex models like dipole diffusion or path-traced scattering offer higher fidelity but are computationally more expensive. The choice depends on the project’s performance requirements and desired visual quality.

Next, I’d implement the chosen model in a shader. This might involve using built-in functions or writing custom ones based on the chosen scattering model. For example, a diffusion approximation might use a convolution with a pre-computed scattering profile.

Finally, I’d meticulously adjust parameters like scattering radius, albedo, and scattering color to match the desired material. This often involves iterative tweaking and referencing real-world examples or reference images. For instance, skin SSS would have a different scattering radius and color compared to wax.

For particularly challenging scenarios, I may leverage techniques like pre-computed irradiance volumes for improved efficiency in real-time rendering or use ray tracing for the highest quality offline renders.
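The convolution idea can be sketched in one dimension: the irradiance along a line of surface samples is blurred with a normalized scattering profile. A single Gaussian is assumed here purely for illustration; production skin shaders typically use a different profile per color channel, and the function names below are hypothetical.

```python
import math

def gaussian_profile(radius_px, sigma):
    """Build a normalized 1D scattering profile (assumed Gaussian)."""
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius_px, radius_px + 1)]
    total = sum(weights)
    return [w / total for w in weights]

def scatter(irradiance, profile):
    """Convolve surface irradiance with the profile, clamping at the ends."""
    radius = len(profile) // 2
    n = len(irradiance)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(profile):
            j = min(max(i + k - radius, 0), n - 1)
            acc += w * irradiance[j]
        out.append(acc)
    return out
```

Applied to a hard light-to-shadow edge such as `[1, 1, 1, 0, 0, 0]`, the result bleeds light into the shadowed side, which is the soft falloff that makes skin or wax read as translucent. A larger `sigma` corresponds to a larger scattering radius.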

Q 9. How do you troubleshoot lighting issues in a game engine or rendering pipeline?

Troubleshooting lighting issues is a detective process. My first step is always isolating the problem. Is it a global lighting issue, or localized to a specific object or area? I systematically investigate using a range of tools and techniques.

- Visual Inspection: Carefully examine the scene, comparing it to reference images or videos. Look for inconsistencies in lighting, shadowing, or color.

- Shader Debugging: Use the engine’s debugging tools to inspect the values of shader variables. Check for unexpected values or incorrect calculations. This might involve inserting debug statements into the shaders themselves.

- Light Source Analysis: Analyze the properties of light sources: intensity, color, type (directional, point, spot), and shadows. Are the light sources positioned correctly? Are their settings realistic and consistent?

- Material Inspection: Examine the materials’ properties. Are the albedo, roughness, metallic values set appropriately? Are there any issues with normal maps or other texture maps?

- Pipeline Analysis: Analyze the rendering pipeline stages: Is there a problem with the lighting calculations, the shadow mapping, or the tone mapping?

I often use a process of elimination, systematically disabling parts of the lighting system or modifying individual parameters to pinpoint the source of the problem. The engine’s logging system is invaluable, often providing error messages or warnings that point to issues in the lighting setup.

Q 10. What is your experience with different shader languages (e.g., HLSL, GLSL)?

I’m proficient in both HLSL (High-Level Shading Language) and GLSL (OpenGL Shading Language). HLSL is my primary language for DirectX-based projects, while GLSL is used for OpenGL projects. I understand their similarities and differences and can quickly adapt between them.

My experience extends beyond simply writing shaders. I understand the nuances of each language, optimizing for performance, and leveraging the capabilities of each API. For instance, I can utilize HLSL’s specific features like structured buffers or efficiently utilize GLSL’s built-in functions for vector math. I also understand compiler optimization techniques and how to profile shaders for potential bottlenecks.

I’ve worked on projects that required complex shader effects, including physically based rendering, subsurface scattering, advanced lighting techniques, and post-processing effects. My skills extend to both vertex and fragment shaders, allowing me to manipulate geometry and pixel data for achieving the desired visual outcome. I’m comfortable working with shader includes and libraries for managing and reusing code efficiently.

Q 11. Explain your process for creating realistic materials and textures.

Creating realistic materials and textures is an iterative process combining artistic skill and technical knowledge. It starts with understanding the material’s properties and how it interacts with light. I begin by gathering reference images and studying the material’s physical characteristics – its roughness, reflectivity, color variations, and any unique features.

For textures, I might use a combination of techniques. Photogrammetry provides high-fidelity textures directly from real-world objects, while procedural generation allows for creating complex textures based on mathematical formulas, enabling greater control and repeatability. Hand-painted textures can provide a unique artistic touch.

I then translate this information into a material definition within the game engine or renderer. This involves defining parameters such as albedo (base color), roughness (surface smoothness), metallic (reflectivity), normal map (surface detail), and potentially others depending on the complexity required (e.g., subsurface scattering parameters).

The process is iterative. I often render test scenes to evaluate the material’s appearance and make adjustments based on the result, constantly refining the material properties until I achieve a visually convincing result. I may also use tools to analyze real-world materials to determine their roughness and other physical properties, to improve accuracy.

Q 12. How do you balance artistic vision with technical constraints in lighting design?

Balancing artistic vision and technical constraints is a crucial aspect of lighting design. It’s a constant negotiation. A beautiful artistic vision might require significantly more processing power than the target platform allows.

My approach involves open communication with the art director. Early discussions about the project’s scope and target platform’s limitations help establish realistic expectations. We jointly define lighting styles and identify potential challenges early in the process. For example, we might agree to simplify certain lighting effects if they prove too computationally expensive for real-time rendering.

Throughout the process, I leverage profiling and optimization techniques. I regularly monitor performance metrics and identify bottlenecks. This might involve simplifying shaders, reducing the number of light sources, or employing more efficient rendering techniques such as light baking or using lower-resolution shadow maps. The goal is to find the best compromise that maintains visual fidelity while staying within the performance budget.

Sometimes, compromises are necessary. Perhaps highly detailed shadows are replaced by simpler, less computationally intensive ones. These are made judiciously, considering their impact on the overall visual quality. The key is constant communication and a willingness to adapt and iterate.

Q 13. Describe your experience with light baking and its optimization strategies.

Light baking is a crucial technique for pre-calculating lighting information, making it highly efficient for real-time rendering. It’s particularly useful for static geometry and indirect lighting. My experience involves using various light baking solutions and optimization strategies.

The process typically involves these steps: First, I prepare the scene. This includes identifying which objects require baked lighting, setting up light sources, and choosing the appropriate lightmap resolution. Higher resolution produces better quality but increases memory usage and baking time.

Then I configure the baking settings. These involve parameters like the baking algorithm (e.g., progressive photon mapping, irradiance caching), lightmap resolution, and texture compression. Choosing an appropriate algorithm depends on factors such as scene complexity and desired quality.

After baking, I integrate the lightmaps into the rendering pipeline. This usually involves using the engine’s built-in tools or writing custom shaders to access and apply the baked lighting data. Optimization here involves careful use of texture atlases to minimize draw calls and using appropriate texture formats for memory efficiency.

Optimization strategies include using lightmap atlases to reduce draw calls, employing texture compression to minimize memory footprint, and carefully selecting the resolution of lightmaps based on the visual importance of the object. I also explore using techniques like light propagation volumes for areas with complex indirect lighting where traditional light baking might struggle.

Q 14. How familiar are you with light probes and their application?

Light probes are a powerful technique for capturing and reusing indirect lighting information, offering a good balance between quality and performance. I’m very familiar with their application and various implementation methods.

Light probes capture the lighting at various points in the scene, storing the radiance (light intensity and direction) in a cubemap or similar data structure. These cubemaps are then used during rendering to approximate the lighting at points near the probe, providing a relatively fast and efficient way to render indirect illumination.

I’ve worked with various types of light probes, including spherical harmonics, which represent the lighting using mathematical functions, and irradiance volumes, which capture lighting within a 3D volume. The choice depends on the desired quality and performance characteristics.

Light probes are typically used to light dynamic objects with the indirect illumination captured from the static scene, while reflection probes provide approximate reflections on glossy surfaces. In real-time applications, light probes are often used to reduce the computational cost of rendering global illumination.

Optimization strategies involve carefully placing the probes to capture the most important lighting variations and using efficient data structures and algorithms for interpolation between probes. I also understand the trade-offs involved: more probes improve accuracy but also increase memory usage and rendering time.

Q 15. What are your preferred methods for creating realistic reflections and refractions?

Creating realistic reflections and refractions involves understanding the physics of light interaction with surfaces. My preferred methods leverage ray tracing and environment maps. For reflections, I cast a ray from the surface along the reflection vector; if it hits scene geometry I shade that hit, and if it misses I sample the environment map in that direction. This provides accurate reflections that adapt to the surrounding environment. Where ray tracing is too expensive, I might use screen-space reflections (SSR) as a cheaper alternative, keeping in mind that SSR can only reflect what is already visible on screen, so ray tracing or environment maps are still needed for off-screen objects. Refractions are handled similarly, but instead of reflecting the ray, we refract it using Snell’s Law, accounting for the index of refraction of the material. This accurately simulates the bending of light as it passes through transparent objects. To enhance realism, I often incorporate Fresnel effects, where the reflectivity of a surface changes based on the viewing angle, resulting in more believable transitions between reflection and refraction.

For example, in a game with a glass of water, ray tracing would accurately simulate the light bending as it passes through the water and reflects off the inner surface of the glass, creating realistic distortions and highlights. SSR would be a good complement for the water’s surface, quickly capturing the reflection of near objects.
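The vector math behind reflection, Snell’s Law refraction, and the Fresnel effect can be sketched as below. The function names mirror common shader built-ins but are defined here from scratch; `schlick_fresnel` uses Schlick’s approximation rather than the full Fresnel equations, and the incident direction is assumed to be normalized and pointing toward the surface.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def reflect(incident, normal):
    """Mirror the incident direction about the surface normal."""
    d = dot(normal, incident)
    return tuple(i - 2.0 * d * n for i, n in zip(incident, normal))

def refract(incident, normal, eta):
    """Bend the incident direction using Snell's Law.

    eta is the ratio n1 / n2 of the refractive indices (leaving / entering).
    Returns None on total internal reflection.
    """
    d = dot(normal, incident)
    k = 1.0 - eta * eta * (1.0 - d * d)
    if k < 0.0:
        return None
    scale = eta * d + math.sqrt(k)
    return tuple(eta * i - scale * n for i, n in zip(incident, normal))

def schlick_fresnel(cos_theta, f0):
    """Approximate reflectance at a given view angle (Schlick)."""
    return f0 + (1.0 - f0) * (1.0 - cos_theta) ** 5
```

For the glass of water, `eta` would be roughly 1.0 / 1.33 when entering the water from air, and the Fresnel value decides how much of the final color comes from the reflected ray versus the refracted one.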

Q 16. Explain your understanding of physically-based rendering (PBR).

Physically-Based Rendering (PBR) is a rendering technique that aims to simulate the interaction of light with materials in a physically accurate way. It’s based on the principles of microfacet theory and energy conservation. Instead of using arbitrary parameters to control the appearance of materials, PBR utilizes physically measurable properties such as albedo (base color), roughness (surface smoothness), metallic (metalness), and normal maps. This allows for more predictable and realistic results, independent of the specific lighting conditions.

For instance, a rough, non-metallic surface will diffuse light more evenly, while a smooth, metallic surface will have sharp specular highlights and strong reflections. This consistency is crucial for achieving a photorealistic look and feel. Energy conservation means that a surface never reflects more light than it receives: incoming light is either absorbed, reflected, or transmitted, so a stronger specular reflection leaves less energy for the diffuse component. This avoids artifacts like overly bright surfaces. Many modern game engines and rendering software rely on PBR workflows because of their predictable and consistent results.
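How the albedo and metallic parameters feed the diffuse and specular terms can be sketched as follows. This assumes the common metallic-roughness convention in which dielectrics get a fixed base reflectance of about 0.04; the function name and the `dielectric_f0` parameter are hypothetical.

```python
def split_base_color(albedo, metallic, dielectric_f0=0.04):
    """Derive the diffuse color and specular base reflectance (F0).

    Metals have no diffuse component and tint their reflections with the
    albedo; dielectrics keep the albedo as diffuse color and reflect a
    small, untinted amount (dielectric_f0 is an assumed default).
    """
    diffuse = tuple(c * (1.0 - metallic) for c in albedo)
    f0 = tuple(dielectric_f0 * (1.0 - metallic) + c * metallic for c in albedo)
    return diffuse, f0
```

Because both outputs are driven by the same two inputs, raising `metallic` moves energy from the diffuse term to the specular term instead of adding to both, which is one simple way the energy conservation idea shows up in material setup.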

Q 17. How do you optimize shaders for performance on different hardware?

Optimizing shaders for performance across various hardware requires a multi-pronged approach. Profiling is critical; I utilize tools to identify performance bottlenecks in my shaders. Common optimization strategies include reducing the number of instructions, minimizing branching (conditional statements), and using efficient mathematical operations. I often employ techniques like loop unrolling to cut loop overhead and texture atlasing to reduce texture switches.

For different hardware, I might employ different shader code paths (e.g., using simpler calculations on lower-end hardware) or leverage hardware-specific features (e.g., using compute shaders on GPUs that support them for tasks like shadow mapping or particle effects). Furthermore, I pay close attention to data types; using lower-precision floating-point numbers (where appropriate) can significantly improve performance on mobile devices or older hardware. It’s also important to consider the shader complexity relative to the scene’s complexity and overall rendering budget.

For example, a shader heavily relying on complex ray tracing could be optimized by reducing ray depth or implementing a simpler method for less demanding scenarios. Careful shader design and the use of profiling tools are crucial for creating high-performance visuals across different platforms.

Q 18. Describe your workflow for creating and implementing lighting in a project.

My lighting workflow begins with a clear understanding of the project’s artistic direction and desired mood. I usually start by defining the key light sources—the sun, ambient light, and key fill lights—and their properties (color, intensity, and type). I then consider the types of shadows I need: soft shadows for a more diffused look, or hard shadows for a more dramatic effect. I choose the appropriate shadow mapping technique based on performance needs and visual quality. I typically start with simpler lighting setups and iteratively refine them based on feedback and testing.

For example, I might begin with a single directional light representing the sun, then add ambient lighting to prevent completely dark areas. Then, I might incorporate point lights for smaller light sources like lamps, creating more localized lighting effects. I often use a combination of global illumination techniques (like baked lighting or light probes) for more efficient and realistic indirect lighting, followed by dynamic lighting effects for real-time changes in light and shadow. This iterative process ensures that the final lighting setup both looks great and performs well.

Q 19. How do you use color temperature and hue to create mood and atmosphere?

Color temperature and hue are powerful tools for setting mood and atmosphere. Color temperature, measured in Kelvin, affects the overall ‘warmth’ or ‘coolness’ of the scene. Cooler colors (blues and purples, associated with higher Kelvin values) often evoke feelings of serenity, sadness, or coldness. Warmer colors (reds and oranges, associated with lower Kelvin values) usually create a sense of warmth, comfort, or excitement. Hue, on the other hand, represents the pure color itself. Different hues evoke distinct emotional responses; for instance, red might suggest danger or passion, while green could represent nature or tranquility.

For example, a scene with cool-toned bluish lighting might suggest a cold, lonely night, while a scene with warm orange tones might convey a sense of sunset or a cozy interior. By carefully adjusting the color temperature and hue throughout the scene, I can guide the player’s emotions and create a more immersive and engaging experience. Combining these with saturation (intensity of color) provides further control over the atmosphere.

Q 20. How familiar are you with different light types (e.g., point lights, directional lights, spotlights)?

I’m very familiar with various light types commonly used in rendering. Directional lights simulate light sources far away, like the sun, casting parallel rays. Point lights emit light uniformly in all directions, like a light bulb. Spotlights are like point lights restricted to a cone-shaped beam, often used to simulate lamps, flashlights, or stage lights. I also have experience with area lights, which are more physically accurate representations of light sources with a physical size, producing softer shadows. The choice of light type significantly impacts the visual style and performance of the scene. For example, point lights are easy to implement but can be computationally expensive in large numbers; directional lights are very efficient but lack the localized effect of a point light.

Understanding the strengths and weaknesses of each type is crucial for efficient and effective lighting design. Often, a combination of light types is used to create a well-lit scene that’s both visually appealing and performs well. For instance, a scene might use a directional light for general illumination, supplemented by point lights and spotlights for local details and accents.
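The cone behaviour that separates a spotlight from a point light can be sketched as a smooth factor between an inner and an outer cone angle. The function name, the two-angle parameterization, and the smoothstep blend are assumptions for illustration; engines differ in how they shape the edge.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def spot_factor(light_pos, spot_dir, point, inner_deg, outer_deg):
    """Return 1.0 inside the inner cone, 0.0 outside the outer cone.

    Assumes inner_deg < outer_deg and that point differs from light_pos.
    """
    to_point = normalize(tuple(p - l for p, l in zip(point, light_pos)))
    cos_angle = dot(to_point, normalize(spot_dir))
    cos_inner = math.cos(math.radians(inner_deg))
    cos_outer = math.cos(math.radians(outer_deg))
    t = (cos_angle - cos_outer) / (cos_inner - cos_outer)
    t = min(max(t, 0.0), 1.0)
    return t * t * (3.0 - 2.0 * t)   # smoothstep for a soft cone edge
```

A point light is the same computation without this factor, and a directional light drops the position entirely and uses one fixed direction for every surface point.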

Q 21. Explain your experience with image-based lighting (IBL).

Image-Based Lighting (IBL) is a technique that uses high-resolution environment maps to simulate realistic lighting and reflections within a scene. It’s a powerful method for creating realistic indirect lighting without the computational cost of full global illumination simulations. The environment map captures the lighting and reflections from the surrounding environment and is then used to illuminate and reflect light onto objects in the scene. I use various techniques to process and implement IBL, such as pre-filtering the environment map for different roughness levels to correctly simulate how light interacts with surfaces of different smoothness, and using cubemaps for efficient rendering.

IBL significantly enhances the realism and immersion of a scene by providing accurate and detailed indirect lighting and reflections. For instance, in an outdoor scene, IBL can accurately simulate the diffuse and specular lighting from the sky and surroundings, without the need for individually placed light sources, leading to much faster render times while still creating a believable scene. The use of IBL is crucial for creating high-quality visuals in real-time applications and game development.

Q 22. How do you handle dynamic objects and their interaction with lighting?

Handling dynamic objects and their lighting interaction requires efficient and adaptable techniques. Imagine a game with moving characters and objects; you can’t recalculate the entire scene’s lighting every frame. Instead, we use several strategies. First, spatial partitioning methods like octrees or bounding volume hierarchies (BVHs) organize scene geometry, enabling faster light calculations by only considering objects near light sources. Second, we employ techniques like shadow mapping or shadow volumes to render shadows dynamically. Shadow mapping renders the scene from the light’s perspective, creating a depth map used to determine if a pixel is in shadow. Shadow volumes extrude each occluder’s silhouette away from the light and use the stencil buffer to test whether a pixel lies inside the resulting volume. Third, for scenes with many lights, deferred shading can improve performance. This method first renders the geometry into screen-space buffers and then calculates lighting per pixel, so the lighting cost no longer depends on how many objects are moving. Lastly, light probes or baked lighting can be pre-calculated for static parts of the scene, reducing the load on the dynamic elements’ lighting calculation.

Q 23. Describe your experience with light culling techniques.

Light culling is crucial for optimization, especially in complex scenes. It’s the process of eliminating lights that don’t significantly affect a given area. I’ve extensively used frustum culling, which checks if a light’s influence area (sphere or bounding box) intersects the camera’s viewing frustum – essentially, can the camera even see the effect of this light? If not, we don’t bother computing it. Beyond frustum culling, I’ve implemented hierarchical occlusion culling, where we use a hierarchy of bounding volumes to quickly determine if lights are obscured by geometry. Think of it like looking through a window – you don’t need to calculate the lighting of objects behind the walls if you are not seeing it. For point lights, distance culling is effective; if a light is beyond a certain distance, its effect is negligible, so it’s removed. Choosing the right method depends on the scene’s complexity and performance requirements. In a project with thousands of lights, a well-implemented hierarchical culling technique was essential to keep the frame rate smooth.
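The frustum test for a light’s sphere of influence can be sketched as below. The plane representation (an inward-facing normal plus an offset) and the function name are assumptions; the box-shaped "frustum" in the usage note is a stand-in for real camera planes.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def light_is_visible(center, radius, planes):
    """Conservative sphere-versus-frustum test.

    Each plane is (normal, d) with a unit normal pointing into the frustum,
    so a point p is inside when dot(normal, p) + d >= 0. The light is culled
    only if its sphere lies entirely behind at least one plane.
    """
    for normal, d in planes:
        if dot(normal, center) + d < -radius:
            return False
    return True
```

As a usage sketch, six planes such as `((1, 0, 0), 1)`, `((-1, 0, 0), 1)`, `((0, 1, 0), 1)`, `((0, -1, 0), 1)`, `((0, 0, 1), 0)`, and `((0, 0, -1), 10)` describe a box from -1 to 1 in x and y and 0 to 10 in z. A light at `(3, 0, 5)` with radius 1 is culled, while the same light with radius 2.5 is kept because its influence reaches into the box. The test may keep a few lights near frustum corners that do not actually contribute, which is the usual trade-off for its low cost.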

Q 24. How familiar are you with different light falloff functions?

Light falloff functions describe how the intensity of a light decreases with distance. I’m familiar with several, each with its characteristics: linear falloff (intensity drops linearly), inverse square falloff (intensity drops proportionally to the inverse square of the distance—this is physically accurate for many light sources), and inverse linear (a compromise between linear and inverse square). I’ve also worked with custom falloff curves, providing more artistic control; for example, a light might have a sharp cutoff, which you can’t easily achieve with simple functions. The choice depends on the desired aesthetic. A linear falloff can give a broader, more even illumination, while inverse square provides a more realistic decay. Sometimes, a custom curve allows for creative control, for instance simulating a light source with a specific projector design or lens effect.
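The falloff curves mentioned above can be written as small functions of distance. The names and the particular windowing curve in the last function are assumptions chosen for illustration; it is one possible custom curve that forces the light to reach zero at a chosen range.

```python
def linear_falloff(distance, max_range):
    """Intensity drops in a straight line and reaches zero at max_range."""
    return max(0.0, 1.0 - distance / max_range)

def inverse_linear_falloff(distance):
    """Intensity proportional to 1 / (1 + d): a gentler decay."""
    return 1.0 / (1.0 + distance)

def inverse_square_falloff(distance, min_distance=0.01):
    """Physically based 1 / d^2, clamped to avoid a blow-up at d = 0."""
    d = max(distance, min_distance)
    return 1.0 / (d * d)

def windowed_inverse_square(distance, max_range, min_distance=0.01):
    """Inverse square multiplied by a smooth window that hits zero at max_range."""
    window = max(0.0, 1.0 - (distance / max_range) ** 4) ** 2
    return inverse_square_falloff(distance, min_distance) * window
```

The windowed variant keeps the realistic decay close to the light while giving it a finite radius, which also makes the light easier to cull.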

Q 25. What is your approach to creating believable shadows?

Creating believable shadows is key to realism, and my approach is multifaceted. First, I consider the type of light source. An ideal point light casts hard-edged shadows that are essentially all umbra (the fully dark area). A light with physical size, such as an area light or the sun’s disc, also produces a penumbra (the partially shadowed area), so its shadows soften as the distance between occluder and receiver grows. Second, I choose an appropriate shadow technique: shadow mapping for performance, especially in dynamic scenes, and ray tracing for high-quality, realistic shadows when performance allows. Ray tracing tests visibility along actual light paths, so it captures soft penumbrae and subtle shadow interactions that shadow maps only approximate. Third, I pay attention to shadow map resolution and filtering. Too low a resolution results in aliased, jagged shadow edges, and filtering such as percentage-closer filtering (PCF) smooths them. Fourth, I consider ambient occlusion, which darkens creases and contact areas where surrounding geometry blocks indirect light. Finally, I often use contact shadows, which add subtle shadows where objects meet a surface to enhance realism and reduce the ‘floating’ effect.
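As an illustration of the filtering step above, the following is a minimal CPU-side sketch of percentage-closer filtering. It assumes the shadow map is a plain 2D list of depths as seen from the light, that `u` and `v` are integer texel coordinates, and that the `bias` and `kernel_radius` values are arbitrary example defaults; a real renderer would do this in a shader with hardware comparison sampling.

```python
def pcf_shadow(shadow_map, u, v, receiver_depth, bias=0.005, kernel_radius=1):
    """Return the fraction of taps that are lit (0.0 = fully shadowed, 1.0 = fully lit)."""
    height = len(shadow_map)
    width = len(shadow_map[0])
    lit = 0
    taps = 0
    for dy in range(-kernel_radius, kernel_radius + 1):
        for dx in range(-kernel_radius, kernel_radius + 1):
            # Clamp to the map edges so border texels are reused.
            x = min(max(u + dx, 0), width - 1)
            y = min(max(v + dy, 0), height - 1)
            # The tap is lit if nothing closer to the light was recorded here.
            if receiver_depth - bias <= shadow_map[y][x]:
                lit += 1
            taps += 1
    return lit / taps
```

With `kernel_radius=0` this degenerates to a single hard shadow test; larger kernels average more depth comparisons, which turns the jagged edge into a gradient at the cost of more samples.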

Q 26. How do you use lighting to enhance the storytelling in a scene?

Lighting is storytelling’s unsung hero. I use it to guide the viewer’s eye, create mood, and emphasize narrative elements. For example, a dark, ominous scene illuminated only by a single flickering candle can evoke fear and mystery, whereas a brightly lit, welcoming scene might feel cheerful and safe. Color temperature is critical; cool blues can convey coldness and isolation, while warm oranges and reds communicate warmth and excitement. Light direction is also significant: a back light can silhouette a character, creating a sense of mystery or danger, while a front light can make them appear approachable and open. In one project, we used a spotlight to focus the viewer’s attention on a crucial item within a cluttered room, so the detail around it did not obscure the narrative. Even subtle changes in lighting can greatly alter the emotional impact of a scene.

Q 27. Describe your experience working with different lighting setups (e.g., HDRI, environment maps).

I have extensive experience with various lighting setups. HDRI (High Dynamic Range Imaging) allows for realistic and immersive environments. An HDRI environment map captures the light arriving from every direction around a point as a 360-degree image, which is then mapped around the scene to drive reflections and indirect illumination. This produces very realistic lighting, even without placing multiple point lights. Environment maps can also be LDR (low dynamic range) images, which let us set up lighting from existing images or create stylized looks. For example, using a cityscape environment map instantly grounds a scene in a believable urban setting. Combining these with IBL (Image-Based Lighting), which uses environment maps to calculate indirect illumination, allows for efficient creation of realistic lighting environments. I’ve used these techniques in both real-time applications and pre-rendered work, depending on the project’s requirements.
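The core operation behind an environment map is turning a direction into a texture lookup. The sketch below assumes an equirectangular (latitude-longitude) layout, a Y-up coordinate system with -Z mapped to the horizontal center of the image, and nearest-texel sampling; conventions differ between tools, so treat the axis choices and the function names as assumptions.

```python
import math


def direction_to_equirect_uv(direction):
    """Map a 3D direction to (u, v) in [0, 1] for an equirectangular image."""
    x, y, z = direction
    length = math.sqrt(x * x + y * y + z * z)
    x, y, z = x / length, y / length, z / length
    # Longitude: -Z maps to u = 0.5, +X to u = 0.75 (assumed convention).
    u = 0.5 + math.atan2(x, -z) / (2.0 * math.pi)
    # Latitude: +Y (straight up) maps to v = 0, -Y to v = 1.
    v = math.acos(max(-1.0, min(1.0, y))) / math.pi
    return u, v


def sample_environment(env_map, direction):
    """Nearest-texel lookup; env_map is a list of rows of (r, g, b) tuples."""
    height = len(env_map)
    width = len(env_map[0])
    u, v = direction_to_equirect_uv(direction)
    px = min(int(u * width), width - 1)
    py = min(int(v * height), height - 1)
    return env_map[py][px]
```

For reflections, `direction` would be the view vector reflected about the surface normal; for diffuse image-based lighting, many such lookups are averaged over the hemisphere, usually ahead of time.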

Q 28. Explain your understanding of volumetric lighting and fog.

Volumetric lighting simulates the scattering and absorption of light within a participating medium, like fog or smoke, which creates realistic atmospheric effects. It’s different from surface lighting, which only shades surfaces. Techniques like ray marching step along each view ray through the volume and accumulate the light scattered toward the camera at every step. Consider a scene with fog: volumetric lighting illuminates the fog itself, showing light beams scattering through it and creating depth and mood. I’ve also used screen-space techniques for volumetric fog in real-time applications. These approximate the effect using data already available in screen space, such as the depth buffer, which is a compromise between realism and performance. The choice depends on the level of realism needed and the hardware limitations. For pre-rendered scenes, ray marching with proper scattering models is favored, allowing for realistic depictions of light beams, haze, and atmospheric perspective.
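The ray marching idea above can be sketched for the simplest case, a homogeneous fog along a single view ray. This assumes constant absorption and scattering coefficients (`sigma_a`, `sigma_s`), an isotropic phase function folded into the light term, and a caller-supplied `light_at(t)` function that returns how much light reaches the sample at distance `t`; all of these names are hypothetical.

```python
import math


def march_fog(ray_length, steps, sigma_a, sigma_s, light_at):
    """March one ray through uniform fog.

    Returns (in_scattered, transmittance): the light scattered toward the
    camera along the ray, and the fraction of background light that survives.
    """
    sigma_t = sigma_a + sigma_s  # extinction = absorption + out-scattering
    step = ray_length / steps
    transmittance = 1.0
    in_scattered = 0.0
    for i in range(steps):
        t = (i + 0.5) * step  # sample at the middle of each segment
        # Light scattered here is dimmed by the fog between it and the camera.
        in_scattered += transmittance * sigma_s * light_at(t) * step
        # Beer-Lambert attenuation across this segment.
        transmittance *= math.exp(-sigma_t * step)
    return in_scattered, transmittance
```

The final pixel would be roughly `background * transmittance + light_color * in_scattered`. Making `light_at` return zero where a shadow map says the sample is occluded is what produces visible light shafts.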

Key Topics to Learn for Shading and Lighting Techniques Interview

- Understanding Light Sources: Types of light (ambient, directional, point, spot), color temperature, intensity, and falloff. Practical application: Analyzing existing lighting setups in game engines or 3D software and optimizing them for realism and performance.

- Shading Models: Lambert, Phong, Blinn-Phong, Cook-Torrance. Understanding the strengths and weaknesses of each model and their appropriate applications in different scenarios. Practical application: Implementing a chosen shading model in a shader language (e.g., GLSL, HLSL) and comparing visual results.

- Material Properties: Diffuse, specular, ambient, reflectivity, roughness, metalness. How these properties affect the appearance of surfaces under different lighting conditions. Practical application: Creating realistic materials in a 3D modeling package by adjusting material parameters to achieve desired visual results.

- Shadowing Techniques: Shadow maps, shadow volumes, screen-space ambient occlusion (SSAO). Understanding the trade-offs between accuracy, performance, and visual quality. Practical application: Implementing and optimizing a shadow technique in a game engine or renderer.

- Global Illumination: Understanding basic concepts like radiosity and path tracing. Practical application: Discussing the impact of global illumination on scene realism and performance considerations. Understanding the benefits of different baking techniques.

- Lighting Techniques for Specific Genres: How lighting techniques differ in realistic rendering, stylized rendering (e.g., cel shading), and other artistic styles. Practical application: Analyzing lighting choices in different games or films and explaining the artistic and technical reasons behind them.

- Optimizing Performance: Techniques for improving the efficiency of shading and lighting calculations, such as level of detail (LOD) and culling. Practical application: Identifying performance bottlenecks in a lighting pipeline and suggesting solutions for optimization.

Next Steps

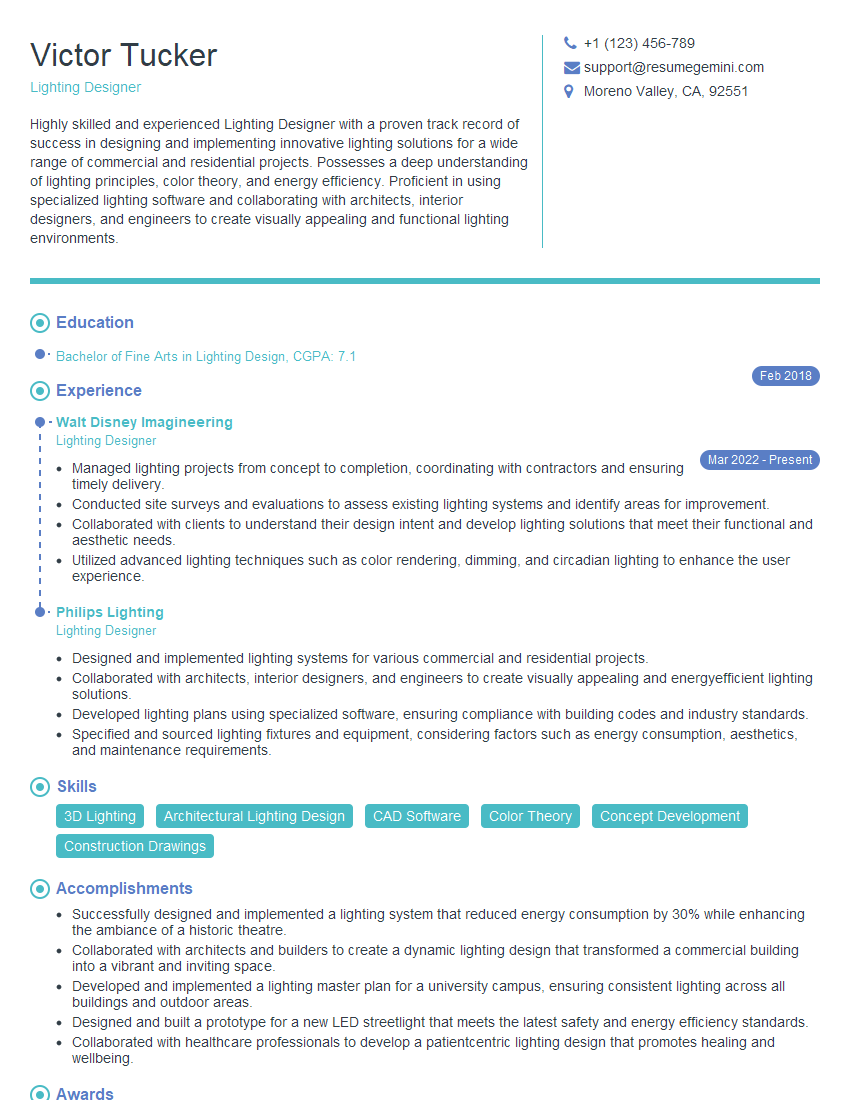

Mastering shading and lighting techniques is crucial for career advancement in fields like game development, film VFX, and architectural visualization. A strong understanding of these techniques demonstrates a high level of technical skill and creative problem-solving ability, making you a highly desirable candidate. To boost your job prospects, focus on creating an ATS-friendly resume that effectively showcases your skills and experience. ResumeGemini is a trusted resource that can help you build a professional and impactful resume. We provide examples of resumes tailored to Shading and Lighting Techniques to guide you in creating your own compelling application materials.
