Interviews are opportunities to demonstrate your expertise, and this guide is here to help you shine. Explore the essential Software Safety Engineering interview questions that employers frequently ask, paired with strategies for crafting responses that set you apart from the competition.

Questions Asked in a Software Safety Engineering Interview

Q 1. Explain the difference between safety and security in software.

While both safety and security are crucial for software, they address different concerns. Safety focuses on preventing unintended hazards that can cause physical harm or damage to property. Think of a self-driving car: safety means the system does not cause a crash through its own malfunction. Security, on the other hand, focuses on protecting software and data from unauthorized access, use, disclosure, disruption, modification, or destruction. Consider a banking app: security ensures that only authorized users can access account information and helps prevent fraud. A system can be secure but not safe (e.g., a perfectly secured medical device that malfunctions and delivers the wrong dosage), and vice versa (e.g., a home automation system that operates safely on its own but whose controls can be hacked).

Q 2. Describe the software development lifecycle (SDLC) and how safety is integrated.

The Software Development Lifecycle (SDLC) is a structured process for developing software. Safety integration is crucial throughout the entire cycle, not just as an afterthought. A common model is the V-model, emphasizing verification and validation at each stage.

- Requirements: Safety requirements are explicitly defined. For example, a medical device might require a specific response time to avoid harm.

- Design: Safety mechanisms are designed into the architecture. This could involve redundant systems or error detection capabilities.

- Implementation: Developers write code adhering to coding standards and safety guidelines, using techniques such as defensive programming.

- Verification: This involves techniques like code reviews, static analysis, and unit testing to ensure the code meets the safety requirements.

- Validation: This involves testing the entire system to confirm it behaves as expected in all relevant scenarios, often with rigorous simulations and real-world testing.

- Deployment and Maintenance: Continuous monitoring and updates address emerging safety concerns or vulnerabilities.

Integrating safety early, starting from requirements gathering, is paramount to avoid costly rework later. A well-defined safety case needs to be developed and maintained throughout the SDLC, justifying the safety claims made.

Q 3. What are the key standards and regulations relevant to software safety (e.g., ISO 26262, IEC 61508)?

Several standards and regulations govern software safety, depending on the application domain. Key examples include:

- IEC 61508: This is a foundational standard for functional safety of electrical/electronic/programmable electronic safety-related systems. Many other standards build upon it.

- ISO 26262: This standard addresses functional safety for road vehicles. It specifies requirements for all stages of development, from concept to production.

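- DO-178C (for airborne systems): This standard provides guidance for developing software in airborne systems and equipment. Each software item is assigned a software level from A (a failure could contribute to a catastrophic condition) to E (no safety effect), and the level determines which objectives must be satisfied to support certification.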

- EN 50128 (for railway applications): This standard specifies process and technical requirements for software in railway control and protection systems, including signaling.

Compliance with these standards often requires rigorous documentation, testing, and verification processes to demonstrate that the software meets the required safety integrity level.

Q 4. Explain the concept of Hazard Analysis and Risk Assessment (HARA).

Hazard Analysis and Risk Assessment (HARA) is a systematic process to identify potential hazards in a system and assess the associated risks. It’s crucial for determining the necessary safety measures. The process typically involves:

- Hazard identification: Listing all potential hazards that could lead to unacceptable risk. For example, in a medical infusion pump, a hazard could be an inaccurate dosage delivered.

- Hazard analysis: Analyzing the causes of the identified hazards and how they could occur. For example, a software error in the dosage calculation could cause an inaccurate dosage.

- Risk assessment: Evaluating the severity of the potential harm, the probability of exposure to the hazardous situation, and the controllability of that situation. This involves considering factors such as the likelihood of the hazard occurring and the potential consequences if it does.

- Risk mitigation: Defining strategies to reduce the risks associated with the identified hazards. This could involve implementing safety mechanisms or changing the system design.

HARA is iterative; as the design evolves, the risk assessment should be revisited and updated.

Q 5. What are Fault Tree Analysis (FTA) and Failure Mode and Effects Analysis (FMEA)?

Fault Tree Analysis (FTA) is a top-down, deductive technique used to analyze how various lower-level failures can contribute to a specific top-level undesired event (or ‘top event’). It visually represents the system failures as a tree, with branches showing the combinations of events leading to the top event. It’s good at identifying combinations of failures that might lead to a hazardous situation.

Failure Mode and Effects Analysis (FMEA) is a bottom-up, inductive approach used to identify potential failure modes of individual components or functions within a system and analyze their effects on the overall system. It systematically explores potential failures and their consequences. For each failure mode, severity, occurrence, and detection are rated.

Both FTA and FMEA are valuable tools to understand potential failure points and improve system reliability and safety, often used in conjunction.
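As a rough illustration of the two techniques, the sketch below ranks FMEA rows by a risk priority number (RPN = severity × occurrence × detection, a common convention that is assumed here) and evaluates a tiny fault tree under the assumption that basic events are statistically independent. The infusion-pump failure modes, the 1-10 rating scale, and all probabilities are invented for illustration.

```python
from dataclasses import dataclass
from math import prod


@dataclass(frozen=True)
class FailureMode:
    """One FMEA row; ratings use a hypothetical 1-10 scale."""
    name: str
    severity: int
    occurrence: int
    detection: int  # 10 = hardest to detect

    @property
    def rpn(self) -> int:
        # Risk priority number: product of the three ratings.
        return self.severity * self.occurrence * self.detection


def and_gate(probabilities):
    """Output event occurs only if all independent input events occur."""
    return prod(probabilities)


def or_gate(probabilities):
    """Output event occurs if at least one independent input event occurs."""
    return 1.0 - prod(1.0 - p for p in probabilities)


# FMEA (bottom-up): rank invented failure modes by RPN, highest first.
modes = [
    FailureMode("display flicker", severity=2, occurrence=5, detection=2),
    FailureMode("dose calculation overflow", severity=9, occurrence=2, detection=4),
]
ranked = sorted(modes, key=lambda m: m.rpn, reverse=True)

# FTA (top-down): overdose = (calculation error AND monitor fails) OR valve jams open.
p_overdose = or_gate([and_gate([1e-3, 1e-2]), 1e-6])

for mode in ranked:
    print(f"{mode.name}: RPN={mode.rpn}")
print(f"P(top event) ~ {p_overdose:.3e}")
```

The AND gate shows why an independent monitor is valuable: the calculation error alone has probability 1e-3, but it only reaches the top event when the monitor also fails.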

Q 6. How do you determine the Automotive Safety Integrity Level (ASIL) or Safety Integrity Level (SIL)?

The Automotive Safety Integrity Level (ASIL) in ISO 26262 and the Safety Integrity Level (SIL) in IEC 61508 are classifications that define the required safety integrity for a specific function or system. They are determined based on the HARA process.

In ISO 26262, the ASIL is determined for each hazardous event by classifying the severity of potential harm (S), the probability of exposure to the driving situation (E), and the controllability of the situation by the driver or others involved (C). The combination is looked up in the standard’s determination table, which yields QM (no safety requirement beyond normal quality management) or ASIL A through D. In IEC 61508, the SIL (1 to 4) is derived from the amount of risk reduction the safety function must provide, using methods such as risk graphs or risk matrices. In both schemes the highest level (ASIL D or SIL 4) corresponds to the highest risk and requires the most stringent safety measures. The assessment considers factors like the operating environment and the potential consequences of failure.

For example, a critical function in a vehicle like braking will likely have a higher ASIL than a less critical function like window control.
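The ASIL lookup can be sketched in a few lines. The function below relies on a widely used shortcut: with the classes written as integers (S1-S3, E1-E4, C1-C3), the ISO 26262-3 determination table is equivalent to summing the three indices. The function name and the example classifications are assumptions for illustration, and any real assessment should be checked against the table in the standard itself.

```python
def determine_asil(severity: int, exposure: int, controllability: int) -> str:
    """Return the ASIL for classes S1-S3, E1-E4, C1-C3 given as integers.

    Class 0 (S0, E0, C0) is not modeled here; in the standard it means
    no ASIL is assigned.
    """
    if severity not in (1, 2, 3):
        raise ValueError("severity must be 1..3 (S1-S3)")
    if exposure not in (1, 2, 3, 4):
        raise ValueError("exposure must be 1..4 (E1-E4)")
    if controllability not in (1, 2, 3):
        raise ValueError("controllability must be 1..3 (C1-C3)")

    # Sum shortcut: 10 -> D, 9 -> C, 8 -> B, 7 -> A, anything lower -> QM.
    total = severity + exposure + controllability
    return {10: "ASIL D", 9: "ASIL C", 8: "ASIL B", 7: "ASIL A"}.get(total, "QM")


# Hypothetical classifications, chosen only to show both ends of the scale.
print(determine_asil(3, 4, 3))  # e.g. loss of braking at speed -> ASIL D
print(determine_asil(1, 3, 2))  # e.g. minor window-control fault -> QM
```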

Q 7. Describe different safety mechanisms used in software (e.g., redundancy, watchdog timers).

Numerous safety mechanisms are used to enhance software reliability and mitigate risks. These include:

- Redundancy: Using multiple independent components or systems to perform the same function. If one fails, the others can take over, ensuring continued operation. For example, a flight control system might have triple modular redundancy.

- Watchdog timers: Timers, usually implemented in hardware so that they keep running even if the software hangs, that monitor the operation of other parts of the system. If the monitored software fails to ‘kick’ (reset) the watchdog within a certain time, the watchdog triggers a safety response, such as a system reset or shutdown. This limits the damage from hung or runaway processes.

- Error detection and handling: Mechanisms to detect and handle errors gracefully, preventing them from propagating and causing larger failures. This could include input validation, range checks, and exception handling.

- Diversity: Using different programming languages, design techniques, or development teams to build independent versions of the same software. This can reduce the probability of common-mode failures.

- Fail-safe design: Designing systems to automatically enter a safe state in case of failure. For example, a robotic arm might retract to a safe position if it detects a malfunction.

The choice of safety mechanism depends on the specific hazard, risk level (ASIL/SIL), and system requirements.
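Two of the mechanisms above can be sketched briefly: a 2-out-of-3 majority voter for redundant channels and a simplified watchdog. This is a software model for illustration only; the class and function names are hypothetical, time is passed in explicitly so the behavior is deterministic, and a production watchdog would normally be a hardware timer.

```python
from collections import Counter


def vote_2oo3(a, b, c):
    """Return the majority value of three redundant channels.

    Raises RuntimeError when no two channels agree, so the caller can
    move the system to its safe state.
    """
    value, count = Counter([a, b, c]).most_common(1)[0]
    if count < 2:
        raise RuntimeError("no majority: enter safe state")
    return value


class Watchdog:
    """Simplified watchdog driven by an explicit millisecond clock."""

    def __init__(self, timeout_ms, on_expire):
        self.timeout_ms = timeout_ms
        self.on_expire = on_expire
        self.last_kick_ms = 0
        self.expired = False

    def kick(self, now_ms):
        """Called by the monitored task to show it is still alive."""
        self.last_kick_ms = now_ms

    def check(self, now_ms):
        """Called periodically; fires the safety response once on timeout."""
        if not self.expired and now_ms - self.last_kick_ms > self.timeout_ms:
            self.expired = True
            self.on_expire()


events = []
wd = Watchdog(timeout_ms=100, on_expire=lambda: events.append("reset"))
wd.kick(now_ms=50)
wd.check(now_ms=120)  # 70 ms since the last kick: still healthy
wd.check(now_ms=200)  # 150 ms since the last kick: watchdog fires
print(vote_2oo3(10, 10, 99), events)
```

The voter masks a single faulty channel, while the watchdog covers the case where the software stops making progress altogether; neither replaces the other.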

Q 8. Explain the concept of safety verification and validation.

Safety Verification and Validation (V&V) are distinct but complementary processes ensuring a software system meets its safety requirements. Verification answers the question: “Are we building the product right?” It focuses on ensuring the software conforms to its specification. Validation, on the other hand, asks: “Are we building the right product?” It confirms that the software meets the intended needs and fulfills its purpose in its operational context, ultimately ensuring safety.

Imagine building a bridge. Verification would involve checking if the bridge’s design adheres to engineering specifications (e.g., correct material strength, load-bearing capacity). Validation would involve confirming that the bridge actually serves its intended purpose (e.g., safely allowing vehicles to cross).

In software, verification might involve code reviews, static analysis, and unit testing. Validation would include integration testing, system testing, and user acceptance testing in simulated or real-world operational environments.

Q 9. What are the different techniques used for software verification?

Software verification employs various techniques to ensure the software conforms to its specification. These techniques can be broadly classified as static or dynamic.

- Static Analysis: Examines the code without actually executing it. This includes:

  - Code Reviews: Manual inspection of the code by peers to identify defects and ensure adherence to coding standards.

  - Static Code Analysis Tools: Automated tools that analyze the code for potential bugs, vulnerabilities, and deviations from coding guidelines. Examples include tools that check for memory leaks, buffer overflows, and coding style violations.

  - Formal Methods: Mathematical techniques used to formally prove the correctness of the code or specific properties of the system, often involving model checking or theorem proving.

- Dynamic Analysis: Involves executing the code and observing its behavior. This includes:

  - Unit Testing: Testing individual components (functions, modules) in isolation.

  - Integration Testing: Testing the interaction between different components.

  - System Testing: Testing the complete system as a whole.

For example, a static analysis tool might detect a potential null pointer dereference in the code, while unit testing would confirm that a specific function returns the expected output under various input conditions.
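To make the unit-testing side concrete, here is a minimal sketch using Python’s standard `unittest` module. The function `compute_dose_rate`, its limit, and the test values are hypothetical; the point is that each test pins down one specified behavior: the nominal case, the safety limit, and rejection of invalid input.

```python
import unittest

MAX_RATE_ML_PER_H = 50.0  # hypothetical safety limit for illustration


def compute_dose_rate(weight_kg: float, ml_per_kg_per_h: float) -> float:
    """Return an infusion rate in ml/h, clamped to the safety limit."""
    if weight_kg <= 0 or ml_per_kg_per_h < 0:
        raise ValueError("invalid input")
    return min(weight_kg * ml_per_kg_per_h, MAX_RATE_ML_PER_H)


class ComputeDoseRateTest(unittest.TestCase):
    def test_nominal_value(self):
        self.assertAlmostEqual(compute_dose_rate(70.0, 0.5), 35.0)

    def test_rate_is_clamped_to_limit(self):
        self.assertEqual(compute_dose_rate(200.0, 1.0), MAX_RATE_ML_PER_H)

    def test_rejects_non_positive_weight(self):
        with self.assertRaises(ValueError):
            compute_dose_rate(0.0, 0.5)


# Run the tests explicitly so the script does not exit the interpreter.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(ComputeDoseRateTest)
result = unittest.TextTestRunner(verbosity=0).run(suite)
print("all passed:", result.wasSuccessful())
```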

Q 10. What are the different techniques used for software validation?

Software validation focuses on confirming that the software meets its intended purpose and user needs, specifically focusing on safety. This involves demonstrating that the system behaves as expected in its operational environment and achieves its safety goals.

- System Testing: Testing the entire system in a simulated or real-world environment to verify its functionality and safety.

- Integration Testing: Testing the interactions between different software components and hardware to ensure they work together safely.

- User Acceptance Testing (UAT): Testing with end-users to verify that the software meets their needs and is user-friendly and safe in real-world scenarios.

- Simulation and Modeling: Using simulations to test the system’s response to various events and conditions that might not be easily reproducible in real life.

- Fault Injection Testing: Deliberately injecting faults into the system to evaluate its resilience and safety mechanisms.

- Hardware-in-the-loop (HIL) Testing: Testing the software with real hardware components to verify interactions and system safety in a controlled environment.

For instance, UAT for an aircraft control system might involve pilots testing the software in a flight simulator to ensure safe and predictable responses to various flight conditions.
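Fault injection testing in particular lends itself to a small sketch. The example below assumes a hypothetical control step that reads a sensor through a callable, so a test can substitute faulty sensors and check that the system falls back to its safe state. The names, the valid range, and the placeholder control law are all invented for illustration.

```python
class SensorFault(Exception):
    """Raised by a sensor driver when a reading cannot be obtained."""


SAFE_COMMAND = 0.0  # hypothetical safe state: command the actuator to stop


def control_step(read_sensor, low=0.0, high=120.0):
    """Return an actuator command, falling back to the safe state on faults."""
    try:
        value = read_sensor()
    except SensorFault:
        return SAFE_COMMAND
    # NaN fails every comparison, so it is rejected by this range check too.
    if not (low <= value <= high):
        return SAFE_COMMAND
    return value * 0.5  # placeholder control law


def healthy():
    return 80.0


def stuck_high():
    return 1e6


def not_a_number():
    return float("nan")


def dropout():
    raise SensorFault("no data")


# Inject each fault in turn and record the resulting command.
injected = {"stuck_high": stuck_high, "nan": not_a_number, "dropout": dropout}
results = {name: control_step(fault) for name, fault in injected.items()}

print("healthy ->", control_step(healthy))
print("faults  ->", results)
```

Passing the sensor in as a parameter is the design choice that makes injection possible without modifying the code under test.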

Q 11. What is a safety case and how is it developed?

A safety case is a structured argument demonstrating that a system is adequately safe for its intended purpose. It provides evidence that the safety requirements have been met and that the risks associated with the system are acceptably low. The safety case is a critical artifact in safety-critical systems.

Developing a safety case involves a systematic approach:

- Hazard Analysis: Identify potential hazards and assess their risks.

- Safety Requirements Definition: Define safety requirements to mitigate identified hazards.

- Safety Argument Construction: Develop a structured argument, often using Goal Structuring Notation (GSN) or a similar goal-based structure, linking safety requirements to design and implementation details.

- Evidence Gathering: Collect evidence to support the claims made in the safety argument. This evidence comes from various V&V activities (testing, analysis, etc.).

- Safety Case Review and Assessment: Independent review and assessment of the safety case to ensure completeness, consistency, and soundness.

Think of a safety case as a legal brief presenting the evidence supporting the claim that a system is safe. It’s vital for demonstrating compliance with regulations and for justifying the deployment of safety-critical systems.
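One way to picture the link between argument and evidence is as a tree of claims whose leaves must each be backed by at least one evidence item. The sketch below is a simplified, hypothetical model (it is not a GSN tool or any standard format); the claims and the evidence identifier are invented.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Goal:
    """A claim in the safety argument, supported by subgoals or evidence."""
    claim: str
    subgoals: List["Goal"] = field(default_factory=list)
    evidence: List[str] = field(default_factory=list)


def unsupported_goals(goal: Goal) -> List[str]:
    """Return the claims of leaf goals that have no evidence attached."""
    if not goal.subgoals:
        return [] if goal.evidence else [goal.claim]
    found = []
    for sub in goal.subgoals:
        found.extend(unsupported_goals(sub))
    return found


case = Goal(
    "Infusion pump software is acceptably safe",
    subgoals=[
        Goal("Overdose hazard is mitigated", evidence=["TR-12 dose limit test report"]),
        Goal("Pump stops on watchdog timeout"),  # no evidence collected yet
    ],
)

print(unsupported_goals(case))
```

A check like this only shows where evidence is missing; judging whether the evidence is sufficient remains the job of the independent review.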

Q 12. How do you handle safety-critical defects during software development?

Handling safety-critical defects requires a rigorous and systematic approach, prioritizing safety above all else. The process involves:

- Immediate Prioritization: Safety-critical defects are immediately prioritized and addressed, often halting further development until resolved.

- Root Cause Analysis: Thorough investigation to understand the root cause of the defect. This prevents recurrence.

- Defect Resolution: Careful design and implementation of a fix, rigorously tested to ensure it does not introduce new problems.

- Impact Assessment: Evaluation of the impact of the defect and the fix, considering the potential consequences of deployment before and after the fix.

- Rigorous Testing: Comprehensive regression testing to ensure the fix does not negatively impact other parts of the system.

- Change Management: Controlled change management procedures to track and document all changes related to the defect fix.

- Documentation: Detailed documentation of the defect, root cause, resolution, testing, and impact assessment.

A critical aspect is using a well-defined defect tracking and management system to ensure that no safety-critical defect is overlooked or mishandled.

Q 13. Explain the importance of traceability in safety-critical software development.

Traceability in safety-critical software development is essential. It ensures a clear and verifiable link between all aspects of the development process, from initial requirements to final implementation and testing. This allows for easier identification of the source of defects, easier comprehension of system behavior, and greater confidence in safety.

Types of traceability:

- Requirements Traceability: Links requirements to design elements, code, test cases, and risk assessments. This demonstrates that all requirements have been addressed.

- Design Traceability: Shows the relationship between design elements and implementation details. This allows for understanding how design choices impact system behavior.

- Test Traceability: Links test cases to requirements and design elements. This ensures that all requirements are adequately tested.

For instance, if a safety requirement states that the system must respond within 10 milliseconds to a critical event, traceability ensures that this requirement is linked to the design elements that achieve this response time, the code that implements those design elements, and the test cases that verify the response time.

Without proper traceability, it becomes difficult to identify the source of a defect or to demonstrate compliance with safety standards.
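A traceability check can be as simple as comparing sets of identifiers. The sketch below uses invented requirement and test-case IDs to find requirements with no linked test and tests that point at no known requirement; real projects would typically pull these links from a requirements management tool rather than from hard-coded dictionaries.

```python
# Hypothetical identifiers and links, for illustration only.
requirements = {
    "SR-1": "Respond to a critical event within 10 ms",
    "SR-2": "Enter safe state on sensor dropout",
}
test_links = {
    "TC-101": ["SR-1"],
    "TC-102": ["SR-1"],
    "TC-200": ["SR-9"],  # points at a requirement that does not exist
}


def untested_requirements(requirements, test_links):
    """Return requirement IDs that no test case traces to."""
    covered = {req for reqs in test_links.values() for req in reqs}
    return sorted(set(requirements) - covered)


def orphan_tests(requirements, test_links):
    """Return test IDs whose links match no known requirement."""
    return sorted(
        test_id
        for test_id, reqs in test_links.items()
        if not any(req in requirements for req in reqs)
    )


print("untested:", untested_requirements(requirements, test_links))
print("orphans: ", orphan_tests(requirements, test_links))
```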

Q 14. What is the role of static and dynamic analysis in software safety?

Static and dynamic analysis play crucial roles in ensuring software safety. They complement each other and contribute to a comprehensive V&V strategy.

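Static Analysis: Examines the code without execution, identifying potential problems early in the development cycle. It’s cost-effective, but it only approximates runtime behavior: it can miss defects that depend on actual inputs, timing, or the operating environment, and it may report false positives.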

- Benefits: Early detection of defects, improved code quality, reduced testing effort.

- Examples: Lint tools, data-flow analysis, control-flow analysis, model checking.

Dynamic Analysis: Involves executing the code and observing its behavior. It can reveal runtime errors that static analysis cannot detect. However, it can’t guarantee finding all defects as testing is inherently incomplete.

- Benefits: Detection of runtime errors, validation of system behavior, assessment of system performance under stress.

- Examples: Unit testing, integration testing, system testing, fault injection testing.

Using both static and dynamic analysis maximizes the chances of finding defects and helps build a safer system. Imagine static analysis as a pre-flight inspection of an aircraft and dynamic analysis as a test flight – both are necessary to ensure safe and reliable operation.

Q 15. Describe your experience with safety-related coding standards (e.g., MISRA C).

Safety-related coding standards, such as MISRA C, are crucial for developing safe and reliable software, especially in safety-critical systems like automotive or aerospace applications. MISRA C, for example, provides a set of guidelines and rules that aim to mitigate potential coding errors that could lead to hazardous situations. These rules address various aspects of C programming, focusing on areas prone to undefined behavior, such as pointer arithmetic, integer overflow, and the use of potentially unsafe functions. My experience encompasses not only adhering to these standards but also actively participating in their implementation using static analysis tools. For instance, I’ve utilized tools like PC-lint and Coverity to automatically check code compliance with MISRA C rules, which helps identify potential problems early in the development process. Beyond just compliance, I also understand the rationale behind each rule, allowing me to make informed decisions when deviations are necessary – always meticulously documenting the justification and assessing the impact on safety.

In one project involving a medical device, we strictly followed MISRA C:2012, adapting it further to our specific safety requirements. We integrated the static analysis tool into our Continuous Integration/Continuous Delivery (CI/CD) pipeline, ensuring every code commit was automatically checked for MISRA compliance. This proactive approach significantly reduced the number of defects detected during later testing phases, saving time and resources. This demonstrates my understanding of not only the ‘what’ but also the ‘why’ and ‘how’ of applying these standards effectively.

Career Expert Tips:

- Ace those interviews! Prepare effectively by reviewing the Top 50 Most Common Interview Questions on ResumeGemini.

- Navigate your job search with confidence! Explore a wide range of Career Tips on ResumeGemini. Learn about common challenges and recommendations to overcome them.

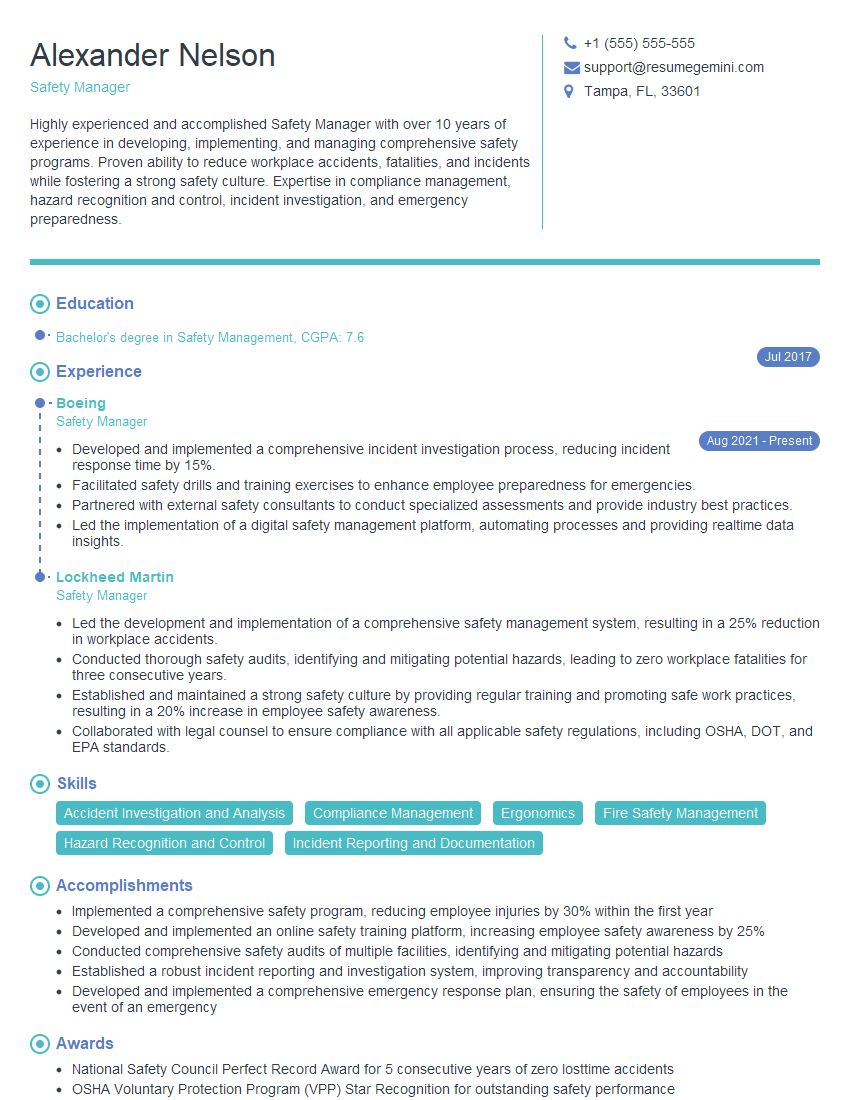

- Craft the perfect resume! Master the Art of Resume Writing with ResumeGemini’s guide. Showcase your unique qualifications and achievements effectively.

- Don’t miss out on holiday savings! Build your dream resume with ResumeGemini’s ATS optimized templates.

Q 16. How do you ensure the safety of third-party components in your software?

Ensuring the safety of third-party components is paramount in software safety engineering. It’s not enough to simply trust the vendor’s claims. A multi-faceted approach is required. This includes thorough vetting of the vendor, rigorous testing of the component itself, and careful integration into the overall system. Think of it like building a house – you wouldn’t just accept any brick without inspecting its quality.

- Vendor Assessment: We investigate the vendor’s safety processes, their track record, and their experience with safety-critical systems. We look for certifications (e.g., ISO 26262 for automotive) and evidence of robust development practices.

- Component Testing: We conduct extensive testing on the third-party component, focusing on its functional safety aspects. This might include unit testing, integration testing, and even formal verification techniques to prove its correctness and robustness within the system. We often employ techniques like fault injection to simulate potential failures.

- Secure Integration: The component needs to be integrated securely into the overall system. This includes clearly defining the interfaces, handling potential errors effectively, and establishing proper communication protocols to prevent unwanted interactions and cascading failures.

- Documentation Review: A careful review of the third-party component’s safety documentation, including safety cases, hazard analyses, and test results is crucial to gain a thorough understanding of its capabilities and limitations.

In a recent project, we used a third-party communication library. Before integrating it, we performed a comprehensive security audit, reviewed its safety case, subjected it to rigorous unit and integration testing, and even conducted fault injection testing to assess its resilience to various failure scenarios. This thorough approach ensured the safe integration of the component, preventing it from becoming a single point of failure in the system.

Q 17. Explain the concept of software safety metrics.

Software safety metrics provide quantitative measures of the safety characteristics of a software system. They help us track progress, identify potential problems, and demonstrate compliance with safety standards. These metrics are not simply numbers; they are indicators that, when analyzed, provide insights into the software’s safety posture. Think of them as vital signs for your software’s health.

- Defect Density: This measures the number of defects found per thousand lines of code (KLOC), indicating the quality of the codebase. A high defect density signifies potential safety risks.

- Failure Rate: This measures the frequency of failures in a given period. It helps identify areas where the system is prone to failures.

- Mean Time Between Failures (MTBF): This represents the average operating time between failures, a common indicator of reliability. A higher MTBF means failures are less frequent, which supports safety but does not by itself show that the failures that do occur are harmless.

- Safety Integrity Level (SIL): In IEC 61508, a SIL is a discrete level from 1 to 4 assigned to a safety function. Each level corresponds to a target range for the probability of dangerous failure (per demand or per hour, depending on the mode of operation), so it’s a categorical measure, not a precise number, chosen based on the consequences of failure.

- Code Coverage: This metric indicates the percentage of code exercised by tests (for example, statements or branches), reflecting the thoroughness of the testing process. High coverage shows how much code was executed, not that its behavior is correct.

By tracking these metrics throughout the development lifecycle, we can identify trends, assess the effectiveness of our safety practices, and proactively address potential safety risks. For example, a consistent increase in defect density in a particular module might signal the need for improved coding practices or more thorough testing in that area.
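The arithmetic behind these metrics is straightforward, as the following sketch shows. The function names and the sample figures are made up for illustration; what matters is the units (defects per KLOC, hours per failure, failures per hour, percent).

```python
def defect_density(defects: int, lines_of_code: int) -> float:
    """Defects per thousand lines of code (KLOC)."""
    return defects / (lines_of_code / 1000)


def mtbf_hours(operating_hours: float, failures: int) -> float:
    """Mean time between failures: operating time divided by failure count."""
    if failures == 0:
        raise ValueError("MTBF is undefined when no failures were observed")
    return operating_hours / failures


def failure_rate_per_hour(operating_hours: float, failures: int) -> float:
    """Observed failures per operating hour (the reciprocal of MTBF)."""
    return failures / operating_hours


def statement_coverage(executed: int, total: int) -> float:
    """Percentage of statements executed by the test suite."""
    return 100 * executed / total


print(defect_density(18, 12_000))        # 1.5 defects per KLOC
print(mtbf_hours(5_000, 4))              # 1250.0 hours
print(failure_rate_per_hour(5_000, 4))   # 0.0008 failures per hour
print(statement_coverage(940, 1_000))    # 94.0 percent
```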

Q 18. How do you manage risk throughout the software development lifecycle?

Risk management is an ongoing process throughout the entire software development lifecycle (SDLC), not just a one-time event. It requires a systematic approach that incorporates risk identification, assessment, mitigation, and monitoring. This is analogous to navigating a ship – you constantly monitor the course, adjust the sails (mitigation), and react to changing weather conditions (new risks).

- Hazard Analysis and Risk Assessment (HARA): Early in the SDLC, we perform HARA to identify potential hazards and assess their associated risks. This often involves techniques like Fault Tree Analysis (FTA) and Failure Modes and Effects Analysis (FMEA).

- Risk Mitigation Planning: Once risks are identified, we develop plans to mitigate them. Mitigation strategies could involve changing the design, implementing safety mechanisms, adding redundant components, or improving testing procedures.

- Continuous Monitoring and Review: We continuously monitor the risks throughout the project, regularly reviewing the effectiveness of mitigation strategies and adapting our plans as needed. New risks may emerge, so ongoing monitoring is essential.

- Risk Acceptance: In some cases, residual risks (risks that remain even after mitigation) might be deemed acceptable. This decision must be documented and justified, taking into account the overall safety goals.

For example, in a project involving autonomous vehicles, we used a formal risk assessment methodology to identify potential hazards related to sensor failures, software bugs, and environmental factors. This led to the implementation of redundant sensor systems, advanced algorithms for fault detection and recovery, and extensive testing under various simulated scenarios. The risk management process was iterative, with regular reviews and adjustments to the mitigation strategies based on the results of testing and analysis.

Q 19. What is a safety requirement specification?

A safety requirement specification (SRS) is a formal document that outlines the safety requirements for a software system. It’s the foundation upon which the entire safety engineering process is built. Think of it as the blueprint for building a safe system. It’s not just about functionality; it specifically focuses on preventing hazards and ensuring the system behaves as expected even under exceptional circumstances.

An SRS typically includes:

- Hazard Analysis Results: The outcomes of the hazard analysis, clearly identifying potential hazards and their associated risks.

- Safety Goals: High-level statements defining the overall safety objectives of the system.

- Safety Requirements: Specific, measurable, achievable, relevant, and time-bound (SMART) requirements related to safety. These often specify acceptable failure rates, response times in case of errors, and other quantitative or qualitative measures.

- Safety Constraints: Limitations and restrictions imposed on the system to ensure safety.

A well-defined SRS ensures that everyone involved in the project understands the safety goals and how they are to be achieved. It serves as a critical reference point throughout the development process and provides the basis for verification and validation activities.

Q 20. How do you ensure that safety requirements are met?

Ensuring that safety requirements are met requires a comprehensive approach that integrates throughout the entire SDLC. This goes beyond simple testing and involves a combination of proactive design practices and rigorous verification and validation activities.

- Verification: This involves checking that the software meets its specified requirements. This includes static analysis, code reviews, and testing at different levels (unit, integration, system).

- Validation: This ensures that the developed software meets its intended purpose and fulfills its safety objectives. This typically involves demonstrating compliance with relevant safety standards and regulations through rigorous testing and analysis.

- Safety Cases: These are structured arguments that demonstrate that the system is sufficiently safe to be used in its intended application. They present evidence that safety requirements have been met.

- Independent Verification and Validation (IV&V): An independent team reviews the safety requirements, design, implementation, and testing to provide an unbiased assessment of the system’s safety.

For example, in a medical device project, we employed a combination of formal methods, model checking, and rigorous testing procedures to verify and validate the safety requirements. We also developed a comprehensive safety case that documented all the evidence to support our claim that the device met its intended safety objectives. This multi-layered approach greatly enhanced the confidence in the safety of the final product.

Q 21. Describe your experience with safety certification processes.

My experience with safety certification processes encompasses various industry standards, such as ISO 26262 (automotive), IEC 61508 (industrial automation), and DO-178C (avionics). These certifications are not simply checkboxes; they represent a rigorous and comprehensive process that demands a thorough understanding of safety engineering principles and the ability to demonstrate compliance. It’s a journey, not a destination, requiring meticulous documentation and evidence at each stage.

My experience includes:

- Developing safety plans: Defining the approach to achieve safety certification, including the identification of safety goals, development of safety requirements, and selection of appropriate safety methods and tools.

- Managing certification audits: Working with certification bodies to conduct thorough audits to verify compliance with relevant standards and regulations.

- Preparing certification documentation: Creating comprehensive documentation to support the certification application, including safety cases, hazard analyses, and test reports. This requires accurate and meticulous record-keeping.

- Implementing safety processes: Integrating safety considerations into the entire development process, including requirements engineering, design, implementation, testing, and maintenance.

In one project, I led the effort to obtain ISO 26262 ASIL D certification for an automotive control unit. This involved a significant effort in documenting our safety case, demonstrating compliance with the stringent requirements of the standard, and navigating the audit process with the certification body. Successfully achieving this certification was a testament to our commitment to safety and a reflection of my experience in managing complex safety certification projects.

Q 22. What are the challenges in ensuring software safety?

Ensuring software safety presents a multitude of challenges, stemming from the inherent complexity of software systems and the diverse ways they can fail. Think of it like building a bridge: a single faulty component can bring the whole thing crashing down. Similarly, a seemingly small software bug can have catastrophic consequences.

- Complexity: Modern software systems are incredibly intricate, making it difficult to fully understand all possible interactions and failure modes. The sheer volume of code, coupled with the use of third-party libraries and components, dramatically increases the potential for errors.

- Unpredictability: Software can behave unexpectedly when interacting with different hardware, operating systems, or user inputs. It’s like trying to predict the weather – there are many factors influencing the outcome.

- Testing limitations: Exhaustive testing of all possible scenarios is often impractical or impossible due to time and resource constraints. We can never fully replicate the real-world environment.

- Evolving threats: New security vulnerabilities and attack vectors are constantly emerging, requiring ongoing updates and mitigation strategies. It’s an ongoing arms race against malicious actors.

- Human error: Software development is inherently human-driven, and human errors are inevitable. We need robust processes to catch these errors before they reach production.

Addressing these challenges requires a multi-faceted approach, including rigorous development processes, comprehensive testing, and continuous monitoring of deployed systems. It’s a continuous effort, not a single fix.

Q 23. How do you communicate safety-related information to stakeholders?

Communicating safety-related information effectively to stakeholders (engineers, managers, clients, and regulators) is crucial. Think of it as a team effort where everyone needs to be on the same page.

- Clear and concise language: Avoid technical jargon whenever possible. Use visuals like charts and graphs to present complex data in a digestible format.

- Targeted communication: Tailor your message to the audience’s level of technical understanding. A detailed technical report might be suitable for engineers but not for clients.

- Regular updates: Provide timely updates on safety-related issues and mitigation strategies. Transparency is key to building trust.

- Multiple channels: Use a combination of methods, including meetings, presentations, reports, and dashboards, to reach a broader audience.

- Feedback mechanisms: Encourage feedback and questions to ensure everyone understands the information and can contribute to the process.

For instance, when presenting to management, I focus on the business risks associated with safety issues and the cost-benefit analysis of implementing safety measures. When presenting to engineers, I go into the technical details and solutions. A consistent and well-structured communication approach ensures everyone is informed and involved.

Q 24. How do you stay updated with the latest trends in software safety engineering?

Staying current in the rapidly evolving field of software safety engineering requires a proactive and multi-pronged approach. Think of it as a journey of continuous learning.

- Professional organizations: Active participation in organizations like the IEEE and SAE provides access to conferences, publications, and networking opportunities with leading experts.

- Industry publications and journals: Regularly reviewing reputable journals and industry publications keeps me updated on the latest research and best practices.

- Conferences and workshops: Attending conferences and workshops allows me to learn from leading practitioners and network with peers.

- Online courses and webinars: Many online platforms offer excellent courses on various aspects of software safety engineering, providing opportunities for focused learning.

- Following key influencers: Engaging with thought leaders on social media and through their publications helps to stay abreast of emerging trends and innovations.

For example, I actively participate in the IEEE working groups on software safety standards. This provides me with direct access to the development of safety standards and allows me to contribute to the field.

Q 25. Explain your understanding of the relationship between safety and performance.

Safety and performance are often intertwined, yet sometimes conflicting, aspects of software engineering. Imagine a race car: it needs to be both fast (high performance) and safe (reliable braking system).

High performance usually involves optimizing the software for speed and efficiency, sometimes at the expense of safety checks and robustness. For example, removing error handling to improve speed could compromise safety. Conversely, prioritizing safety might involve adding redundancy or implementing thorough error handling mechanisms, potentially impacting performance. The goal is to find a balance. A system might be perfectly safe but unusable if it’s too slow.

This balance is often achieved through careful risk assessment and prioritization. Identifying critical functions and allocating resources appropriately ensures that safety-critical components are developed and tested to a higher standard while less critical parts might tolerate some performance compromises.
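As a minimal sketch of the trade-off described above, the following voter combines three redundant sensor readings with plausibility checks. The function name, the speed range, and the tolerance are hypothetical values chosen for illustration; a real system would derive them from its safety requirements. The extra checks cost a few comparisons per cycle, which is the performance price paid for tolerating one faulty sensor.

```python
from statistics import median
from typing import Optional, Sequence


def voted_speed(
    readings: Sequence[float],
    low: float = 0.0,        # assumed lowest physically plausible value
    high: float = 300.0,     # assumed highest physically plausible value
    tolerance: float = 2.0,  # assumed maximum disagreement between healthy sensors
) -> Optional[float]:
    """Return a voted value, or None when the caller should enter a safe state."""
    # Range check: discard readings that cannot be physically correct.
    plausible = [r for r in readings if low <= r <= high]
    if len(plausible) < 2:
        return None

    # Agreement check: keep only readings close to the median.
    mid = median(plausible)
    agreeing = [r for r in plausible if abs(r - mid) <= tolerance]
    if len(agreeing) < 2:
        return None

    return median(agreeing)


if __name__ == "__main__":
    print(voted_speed([100.0, 100.5, 250.0]))  # one outlier is outvoted
    print(voted_speed([100.0, -5.0, 999.0]))   # too few plausible readings
```

Returning None instead of a best guess is a deliberate design choice in this sketch: the caller is forced to handle the degraded case explicitly, for example by switching to a safe state.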

Q 26. Describe your experience with formal methods in software safety.

Formal methods provide a rigorous and mathematical approach to software verification and validation. Think of it as applying mathematical proof to software development to ensure correctness.

My experience includes using model checking and theorem proving techniques to analyze safety-critical software. Model checking automatically explores a system model to verify whether it meets its specified properties, while theorem proving involves mathematically proving the correctness of assertions about the system. I have used tools such as SPIN (a model checker) and HOL (a theorem prover) to perform these analyses.

For example, in a previous project involving an autonomous vehicle system, we used model checking to verify the correctness of the collision avoidance algorithm. This allowed us to identify potential flaws in the algorithm before deployment, significantly reducing the risk of accidents.
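To illustrate the idea behind model checking, here is a toy explicit-state checker written in plain Python. It is not SPIN or Promela, and the two-process protocol it explores is a hypothetical example rather than the collision avoidance algorithm from the project. The checker enumerates every reachable state with a breadth-first search and returns a counterexample trace if a safety invariant is violated.

```python
from collections import deque

# A state is (locations, busy): one location per process plus a shared flag.
INITIAL = (("idle", "idle"), False)


def _set(pcs, i, value):
    """Return a copy of the location tuple with process i moved to `value`."""
    return pcs[:i] + (value,) + pcs[i + 1:]


def successors_flawed(state):
    """Check and set are separate steps, so two processes can interleave."""
    pcs, busy = state
    for i, pc in enumerate(pcs):
        if pc == "idle" and not busy:
            yield (_set(pcs, i, "checked"), busy)
        elif pc == "checked":
            yield (_set(pcs, i, "critical"), True)
        elif pc == "critical":
            yield (_set(pcs, i, "idle"), False)


def successors_atomic(state):
    """Check and set happen in one indivisible step."""
    pcs, busy = state
    for i, pc in enumerate(pcs):
        if pc == "idle" and not busy:
            yield (_set(pcs, i, "critical"), True)
        elif pc == "critical":
            yield (_set(pcs, i, "idle"), False)


def invariant(state):
    """Safety property: at most one process in the critical section."""
    pcs, _ = state
    return pcs.count("critical") <= 1


def check(initial, successors, holds):
    """Breadth-first search; return a shortest violating trace or None."""
    parent = {initial: None}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not holds(state):
            trace = []
            while state is not None:
                trace.append(state)
                state = parent[state]
            return trace[::-1]
        for nxt in successors(state):
            if nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return None


if __name__ == "__main__":
    print("flawed protocol:", check(INITIAL, successors_flawed, invariant))
    print("atomic protocol:", check(INITIAL, successors_atomic, invariant))
```

The flawed protocol yields a trace in which both processes pass the check before either sets the flag, while the atomic variant has no reachable violating state. Production model checkers apply the same principle to far larger models, with state-space reduction techniques that this sketch omits.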

Formal methods can be resource-intensive and require specialized expertise, but their value in ensuring the safety of critical systems far outweighs the costs, particularly when human lives are at stake.

Q 27. How do you handle conflicting safety and performance requirements?

Handling conflicting safety and performance requirements often involves a systematic approach, prioritizing safety while striving for acceptable performance.

- Risk assessment: Identify and analyze potential hazards and risks associated with both safety and performance issues. This helps prioritize which aspects need more attention.

- Prioritization: Based on the risk assessment, prioritize safety requirements over performance requirements when there’s a conflict. In many systems, safety is paramount.

- Trade-off analysis: Explore potential trade-offs between safety and performance. For example, you might accept a small performance reduction to gain significant safety improvements.

- Optimization techniques: Employ various optimization techniques to improve performance without compromising safety. This could involve code optimization, algorithm improvements, or hardware upgrades.

- Documentation: Clearly document all decisions and trade-offs made, justifying the choices in terms of risk and benefit.

For instance, in a medical device project, we encountered a conflict between the speed of data processing and the accuracy of safety checks. We opted to slightly reduce processing speed to increase the reliability of safety checks, acknowledging the impact on performance but prioritizing patient safety.

Q 28. Describe a situation where you had to make a critical decision regarding software safety.

In a previous project involving a railway signaling system, we discovered a potential race condition in the software that could lead to collisions. This was identified late in the testing phase.

The decision was critical: delay the launch, potentially incurring significant financial penalties, or proceed with a patch that might not completely eliminate the risk. We opted to delay the launch, forming a dedicated team to thoroughly analyze and fix the issue. While it caused cost overruns, it ensured the safety of the system and avoided potential catastrophic consequences.

The key to this decision was transparency. We thoroughly documented the potential risks, analyzed the possible solutions, and presented the information to stakeholders, who ultimately supported the decision to prioritize safety. This reinforced the importance of our safety-first approach within the organization.
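For readers unfamiliar with this class of defect, the sketch below shows the general shape of a check-then-act race and one common fix. The TrackBlock class and the simulation are hypothetical illustrations, not the actual signaling code. The point is that checking whether a track block is free and reserving it must happen as one atomic step.

```python
import threading


class TrackBlock:
    """A single track section that at most one train may occupy (hypothetical)."""

    def __init__(self):
        self._lock = threading.Lock()
        self._occupant = None

    def try_reserve(self, train_id):
        # The lock makes "check that the block is free" and "claim it" atomic.
        # Without it, two trains could both see a free block and both proceed.
        with self._lock:
            if self._occupant is None:
                self._occupant = train_id
                return True
            return False

    def release(self, train_id):
        with self._lock:
            if self._occupant == train_id:
                self._occupant = None


def simulate(num_trains=8):
    """Let several trains request the same block at the same moment."""
    block = TrackBlock()
    granted = []
    granted_lock = threading.Lock()
    barrier = threading.Barrier(num_trains)  # release all threads together

    def request(train_id):
        barrier.wait()
        if block.try_reserve(train_id):
            with granted_lock:
                granted.append(train_id)

    threads = [threading.Thread(target=request, args=(i,)) for i in range(num_trains)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return granted


if __name__ == "__main__":
    print("trains granted the block:", simulate())
```

A passing run of a test like this does not prove the absence of races, since thread timing varies between runs; that limitation is one reason such defects often surface late in testing.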

Key Topics to Learn for Software Safety Engineering Interview

- Safety Requirements Analysis and Specification: Understand how to elicit, analyze, and specify safety requirements using techniques like HAZOP, FMEA, and FTA. Consider practical applications in different domains like automotive or aerospace.

- Software Architectural Design for Safety: Explore architectural patterns that enhance safety, such as separation of concerns, redundancy, and fault tolerance. Think about how these designs translate into practical implementation choices.

- Safety Verification and Validation: Learn about various verification and validation techniques like static analysis, dynamic testing, and formal methods. Consider practical challenges and trade-offs in applying these methods.

- Safety Standards and Regulations: Familiarize yourself with relevant safety standards like ISO 26262 (automotive) or DO-178C (aviation). Understand how these standards influence the software development lifecycle.

- Software Safety Metrics and Assessment: Learn how to define and measure key safety metrics and use them to assess the safety level of a software system. Consider how to present this information effectively.

- Fault Detection, Diagnosis, and Recovery: Explore techniques for detecting, diagnosing, and recovering from faults in a safe and controlled manner. Consider practical examples of how these mechanisms work in real-world systems.

- Software Safety Case Development: Understand the process of building a compelling safety case that demonstrates the system’s adherence to safety requirements. Consider how to structure and present evidence effectively.

Next Steps

Mastering Software Safety Engineering opens doors to exciting and impactful careers, offering high demand and excellent compensation. To stand out in this competitive field, a strong, ATS-friendly resume is crucial. ResumeGemini can help you craft a compelling narrative that showcases your skills and experience effectively. Use ResumeGemini to create a professional resume that highlights your expertise; examples tailored to Software Safety Engineering are available to guide you. Invest time in building a resume that represents your unique qualifications and experience, and watch your job prospects soar.
