Are you ready to stand out in your next interview? Understanding and preparing for Sports Analytics and Data Visualization interview questions is a game-changer. In this blog, we’ve compiled key questions and expert advice to help you showcase your skills with confidence and precision. Let’s get started on your journey to acing the interview.

Questions Asked in Sports Analytics and Data Visualization Interview

Q 1. Explain the difference between descriptive, predictive, and prescriptive analytics in sports.

In sports analytics, we use three main types of analytics: descriptive, predictive, and prescriptive. Think of them as progressive stages of understanding, each building on the one before.

- Descriptive Analytics: This is all about summarizing what happened. For example, calculating a player’s batting average, total points scored by a team, or the average speed of a runner. It’s like looking in the rearview mirror – you’re understanding past performance. We use tools like dashboards and visualizations to communicate these findings effectively.

- Predictive Analytics: This takes it a step further and tries to predict what *might* happen. We use statistical models (like regression or machine learning) to forecast a player’s future performance, the outcome of a game, or the likelihood of an injury. For instance, predicting a player’s points based on their past performance and opponent’s defensive statistics.

- Prescriptive Analytics: This is the most advanced level, focusing on recommending actions to optimize outcomes. It goes beyond simply predicting the future by suggesting strategies to improve performance. An example might be recommending optimal player lineups based on opponent matchups and predicted performance, or suggesting training regimens to reduce injury risk.

In essence, descriptive analytics tells us ‘what happened,’ predictive analytics tells us ‘what might happen,’ and prescriptive analytics tells us ‘what we should do’.

Q 2. Describe your experience with various data visualization tools (e.g., Tableau, Power BI, R Shiny).

I have extensive experience with several data visualization tools, each with its own strengths. My proficiency includes:

- Tableau: I frequently use Tableau for creating interactive dashboards and visualizations. Its drag-and-drop interface makes it user-friendly for presenting complex sports data in an easily digestible format. I’ve used it to build dashboards showing team performance trends, player statistics comparisons, and even interactive maps displaying player movement on the field.

- Power BI: Similar to Tableau, Power BI excels at creating interactive dashboards and reports. I’ve found it particularly useful for integrating data from multiple sources, which is common in sports analytics where data might come from different tracking systems, websites, and databases. I’ve used it to create reports tracking player performance across different seasons or even comparing the effectiveness of different coaching strategies.

- R Shiny: For more custom and dynamic visualizations, R Shiny is my go-to. Its ability to integrate with R’s statistical computing capabilities allows for advanced visualizations and interactive explorations of data. I’ve built Shiny apps to allow coaches and analysts to interactively explore various statistical models, visualize player performance distributions, and experiment with different parameters.

My choice of tool depends on the project’s complexity, the intended audience, and the need for customizability. For quick visualizations and presentations, Tableau or Power BI are often ideal. When deep statistical analysis and interactive exploration are needed, R Shiny offers the most flexibility of the three, at the cost of more development effort.

Q 3. How would you approach cleaning and preprocessing a large dataset of sports statistics?

Cleaning and preprocessing a large sports dataset is crucial for accurate analysis. My approach involves several steps:

- Data Inspection: First, I thoroughly examine the data to identify missing values, inconsistencies, and outliers. I use descriptive statistics and data visualization techniques (histograms, box plots) to understand the data distribution and identify potential problems.

- Handling Missing Data: Depending on the nature and extent of missing data, I might use various imputation techniques, such as mean/median imputation, k-nearest neighbors imputation, or more sophisticated methods if the data shows specific patterns of missingness. I always document my choices and their potential impact on the analysis.

- Outlier Detection and Treatment: I use methods like box plots and z-scores to identify outliers. Depending on the context, I might remove them, cap them, or transform the data (e.g., using logarithmic transformation) to reduce their influence.

- Data Transformation: This step might involve standardizing or normalizing the data, creating new variables (e.g., ratios or interaction terms), converting data types, and handling categorical variables (e.g., one-hot encoding).

- Data Validation: After cleaning, I validate the data to ensure its accuracy and consistency. This often involves comparing the cleaned data to the original data and checking for any inconsistencies.

The specific methods employed depend heavily on the nature of the dataset and the analytical goals. Thorough documentation throughout the process is essential to ensure reproducibility and transparency.
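The inspection, outlier-treatment, transformation, and validation steps above can be sketched in a few lines. This is a minimal illustration using only the Python standard library; the rows, the column names, and the 0 to 100 plausibility range for points are all made-up assumptions, and a real project would more likely use pandas.

```python
import statistics

# Hypothetical raw rows: None marks a missing value
rows = [
    {"player": "A", "minutes": 32.0, "points": 21.0},
    {"player": "B", "minutes": None, "points": 14.0},
    {"player": "C", "minutes": 28.0, "points": 480.0},  # likely a data-entry error
    {"player": "D", "minutes": 35.0, "points": 25.0},
]

def missing_counts(rows, columns):
    """Inspection: count missing (None) values per column."""
    return {c: sum(r[c] is None for r in rows) for c in columns}

def cap(rows, column, lower, upper):
    """Outlier treatment: clip values to a plausible range instead of dropping rows."""
    return [
        {**r, column: None if r[column] is None else min(max(r[column], lower), upper)}
        for r in rows
    ]

def standardize(rows, column):
    """Transformation: add a z-score column, leaving missing values as None."""
    present = [r[column] for r in rows if r[column] is not None]
    mean, sd = statistics.mean(present), statistics.stdev(present)
    return [
        {**r, column + "_z": None if r[column] is None else (r[column] - mean) / sd}
        for r in rows
    ]

print(missing_counts(rows, ["minutes", "points"]))
capped = cap(rows, "points", lower=0.0, upper=100.0)  # assumed plausible range
cleaned = standardize(capped, "points")

# Validation: cleaning should not silently add or drop rows
assert len(cleaned) == len(rows)
```

Capping keeps the row available for other columns, which is one reason to prefer it over deletion when only a single field looks wrong.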

Q 4. What statistical methods are you most proficient in, and how have you applied them to sports analysis?

My statistical toolkit is quite broad, but some methods I use most frequently in sports analysis include:

- Regression Analysis (Linear, Logistic, Poisson): I’ve used these extensively to model player performance, predict game outcomes, and analyze the relationship between different variables. For example, I built a linear regression model to predict a basketball player’s points scored based on minutes played, field goal percentage, and free throw percentage.

- Time Series Analysis (ARIMA, Exponential Smoothing): Crucial for analyzing trends in player performance over time or team performance throughout a season. I used ARIMA models to forecast a baseball team’s win probability for the rest of the season based on past performance.

- Clustering and Classification (k-means, SVM, Decision Trees): Excellent for identifying player types or team strategies. I used k-means clustering to group hockey players based on their skating style and shot accuracy.

- Hypothesis Testing (t-tests, ANOVA): To compare the performance of different players, teams, or strategies. For example, I used a t-test to compare the batting averages of two baseball teams.

The choice of method always depends on the research question and the nature of the data. I emphasize rigorous application of statistical methods and always consider the limitations of each approach.

Q 5. Explain your understanding of regression analysis in the context of sports performance prediction.

Regression analysis is a powerful tool for predicting sports performance. It helps establish a relationship between a dependent variable (e.g., points scored) and one or more independent variables (e.g., minutes played, shooting percentage).

For example, we might use linear regression to model the relationship between a basketball player’s points scored (dependent variable) and their field goal percentage, three-point percentage, and free throw percentage (independent variables). The resulting model could then be used to predict a player’s future points based on these variables.

Different types of regression are useful in different contexts. Linear regression is suitable for continuous dependent variables, while logistic regression is used for binary outcomes (e.g., win or loss). Poisson regression is often appropriate for count data (e.g., number of goals scored). The selection of the appropriate regression model depends on the nature of the dependent variable and the assumptions that can be made about the data.

Careful consideration must be given to model assumptions (e.g., linearity, independence of errors) and model diagnostics (e.g., residual plots) to ensure the model’s validity and reliability.
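As a rough sketch of the simplest case, the snippet below fits a one-variable linear regression by ordinary least squares and computes the residuals that a diagnostic plot would show. The minutes and points values are invented for illustration, and `fit_line` is a hypothetical helper, not a library function.

```python
from statistics import mean

def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    x_bar, y_bar = mean(x), mean(y)
    sxy = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y))
    sxx = sum((a - x_bar) ** 2 for a in x)
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept

# Hypothetical per-game data: minutes played and points scored
minutes = [20, 24, 28, 32, 36]
points = [10, 13, 15, 18, 19]

slope, intercept = fit_line(minutes, points)

def predict(m):
    return intercept + slope * m

# Residuals are the raw material for diagnostics such as residual plots
residuals = [y - predict(x) for x, y in zip(minutes, points)]

print(f"slope={slope:.3f}, intercept={intercept:.3f}")
print("predicted points at 30 minutes:", round(predict(30), 2))
```

With an intercept in the model, least-squares residuals sum to zero, so a clear pattern in them (rather than their average) is what signals a violated assumption.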

Q 6. How do you handle missing data in a sports analytics project?

Missing data is a common problem in sports analytics. My approach involves several steps:

- Understanding the Missingness: First, I determine the pattern of missing data (Missing Completely at Random (MCAR), Missing at Random (MAR), or Missing Not at Random (MNAR)). This informs the best strategy for handling the missing data.

- Imputation Methods: For MCAR or MAR data, I might use imputation methods like mean/median imputation (simple but can bias results), k-nearest neighbors imputation (considers similar data points), or multiple imputation (creates multiple imputed datasets to account for uncertainty).

- Deletion Methods: In some cases, removing observations with missing data might be the most appropriate strategy, particularly if the amount of missing data is small and deleting those rows doesn’t significantly bias the results. This is usually my last resort.

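- Model-Based Approaches: For MNAR data, more advanced techniques might be necessary, such as maximum likelihood or multiple imputation variants that explicitly model the missing-data mechanism (for example, selection or pattern-mixture models). Because the mechanism cannot be verified from the observed data alone, I pair these with a sensitivity analysis.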

The chosen method always depends on the nature of the data and the potential impact of the missingness on the analysis. I always clearly document my approach and the justifications behind the chosen method.
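To make the trade-off between deletion and simple imputation concrete, here is a small sketch using the standard library. The sprint-speed values are hypothetical, and keeping a `was_missing` flag alongside the imputed values is one possible convention, not the only one.

```python
import statistics

# Hypothetical sprint speeds (km/h); None marks a game where the sensor failed
speeds = [31.2, None, 29.8, 30.5, None, 33.1]

def listwise_delete(values):
    """Deletion: keep only the observed values."""
    return [v for v in values if v is not None]

def median_impute(values):
    """Imputation: fill gaps with the median and record which entries were filled."""
    observed = [v for v in values if v is not None]
    med = statistics.median(observed)
    imputed = [med if v is None else v for v in values]
    was_missing = [v is None for v in values]
    return imputed, was_missing

observed = listwise_delete(speeds)
imputed, was_missing = median_impute(speeds)

# Median imputation keeps every row but shrinks the spread of the data
print("sd after deletion:  ", round(statistics.stdev(observed), 3))
print("sd after imputation:", round(statistics.stdev(imputed), 3))
```

The drop in standard deviation after imputation is the bias mentioned above: filled-in values sit at the center of the distribution, so downstream uncertainty estimates look tighter than they should.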

Q 7. Describe your experience with time series analysis in sports.

Time series analysis is essential for analyzing sports data that changes over time, such as player performance, team win-loss records, or even injury rates. I’ve utilized various time series methods, including:

- ARIMA models: To model and forecast trends in player statistics or team performance over a season. For example, predicting the number of goals a hockey player will score in the remaining games of a season based on their past performance.

- Exponential Smoothing: This is particularly useful for short-term forecasting, like predicting a team’s performance in the next few games based on recent results. It weights recent games more heavily than older ones, so it adapts quickly when form changes; variants such as Holt’s method add an explicit trend component.

- Decomposition methods: To break down a time series into its components (trend, seasonality, and randomness). This helps understand the underlying patterns and separate them from random noise. I used this to isolate the impact of specific coaching changes on a team’s overall performance.

The choice of method depends on the specific characteristics of the time series data (e.g., trend, seasonality, stationarity). Careful consideration must be given to model selection, diagnostics, and interpretation of results.
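Simple exponential smoothing is short enough to write out directly. The sketch below is a bare-bones version with no trend or seasonal component; the goals series and the choice of `alpha=0.5` are assumptions for illustration only.

```python
def exponential_smoothing(series, alpha):
    """Simple exponential smoothing: s_t = alpha * x_t + (1 - alpha) * s_{t-1}."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = [series[0]]  # initialise the level with the first observation
    for x in series[1:]:
        smoothed.append(alpha * x + (1 - alpha) * smoothed[-1])
    return smoothed

# Hypothetical goals scored by a team in its last five games
goals = [2, 0, 3, 1, 4]
level = exponential_smoothing(goals, alpha=0.5)

# The last smoothed level serves as the one-step-ahead forecast
forecast = level[-1]
print(level, forecast)
```

A larger `alpha` reacts faster to recent games but is noisier; in practice it is usually chosen by minimizing forecast error on held-out games.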

Q 8. How would you assess the effectiveness of a particular player’s performance using statistical metrics?

Assessing a player’s effectiveness goes beyond simple box scores. We need a multifaceted approach using relevant statistical metrics, tailored to the specific sport and position. For example, for a basketball point guard, we wouldn’t focus solely on points scored. Instead, we’d analyze metrics like assists per game, assist-to-turnover ratio, effective field goal percentage (eFG%), and even advanced metrics like Player Efficiency Rating (PER) or Box Plus/Minus (BPM). These metrics offer a more holistic view of their contribution beyond just scoring.

Step-by-step process:

- Define Objectives: What are we trying to measure? Is it overall offensive impact, defensive prowess, or something else?

- Select Relevant Metrics: Choose metrics aligning with the defined objectives. This might involve traditional stats (points, rebounds, etc.) or advanced metrics (PER, BPM, etc.).

- Contextualize Data: Consider factors like opponent strength, minutes played, and game situation. A player performing exceptionally against a weak team doesn’t necessarily reflect true skill.

- Comparative Analysis: Compare the player’s performance to league averages, team averages, and their own historical performance. This helps determine whether their performance is exceptional, average, or below average.

- Visualization: Use charts and graphs (like scatter plots, line graphs, or heatmaps) to visually represent the data and identify trends or patterns. This makes the findings much clearer and more easily understood.

Example: Let’s say we’re evaluating a basketball point guard. A high assist-to-turnover ratio combined with a high eFG% suggests excellent court vision and efficiency. A low BPM despite high scoring might indicate a negative impact on other aspects of the game.
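Two of the metrics mentioned above have simple closed forms. The sketch below computes effective field goal percentage, using the standard definition (FGM + 0.5 × 3PM) / FGA, and assist-to-turnover ratio; the stat line is invented, and the function names are hypothetical.

```python
def effective_fg_pct(fgm, fg3m, fga):
    """eFG% = (FGM + 0.5 * 3PM) / FGA, crediting made threes as 1.5 made shots."""
    if fga == 0:
        raise ValueError("no field goal attempts")
    return (fgm + 0.5 * fg3m) / fga

def assist_to_turnover(assists, turnovers):
    """Assists per turnover; infinite when the player has no turnovers."""
    return assists / turnovers if turnovers else float("inf")

# Hypothetical single-game stat line for a point guard
efg = effective_fg_pct(fgm=8, fg3m=2, fga=16)
ast_to = assist_to_turnover(assists=9, turnovers=3)

print(f"eFG%: {efg:.1%}, AST/TO: {ast_to:.1f}")
```

Single-game values like these are noisy, so they are usually aggregated over many games and compared against league and positional averages before drawing conclusions.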

Q 9. Explain your experience working with different types of sports data (e.g., tracking data, event data, transactional data).

My experience encompasses diverse sports data types. I’ve worked extensively with:

- Tracking Data: This involves high-frequency data from technologies like optical tracking systems or wearable sensors. I’ve used this data to analyze player movement patterns, speed, acceleration, and spatial positioning on the field/court. This helps us understand tactical decisions, player positioning, and efficiency of movements during a game.

- Event Data: This covers specific in-game events like shots, passes, tackles, and fouls. I’ve leveraged this to calculate advanced statistics, build predictive models for game outcomes, and understand team strategies. For instance, analyzing shot charts helps identify efficient shooting zones and preferred shot types.

- Transactional Data: This includes ticket sales, merchandise purchases, and other financial aspects. This is useful for understanding fan engagement, market analysis, and revenue generation strategies. For example, analyzing ticket sales data can help identify peak demand periods and optimize pricing strategies.

Practical Application: In one project, I combined tracking and event data to optimize player positioning in soccer. By analyzing player movement patterns during attacking plays, we identified areas where improved positioning could lead to higher goal-scoring probabilities. This information was then used to train the players.
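As a small illustration of working with tracking data, the sketch below derives speed from consecutive position samples. The `(time, x, y)` tuple layout, the units, and the sample values are assumptions; real tracking feeds have their own schemas and usually need smoothing first.

```python
import math

# Hypothetical tracking samples: (time in seconds, x in metres, y in metres)
track = [(0.0, 0.0, 0.0), (1.0, 3.0, 4.0), (2.0, 9.0, 12.0)]

def segment_speeds(samples):
    """Speed (m/s) between each pair of consecutive position samples."""
    speeds = []
    for (t0, x0, y0), (t1, x1, y1) in zip(samples, samples[1:]):
        distance = math.hypot(x1 - x0, y1 - y0)
        speeds.append(distance / (t1 - t0))
    return speeds

speeds = segment_speeds(track)
total_distance = sum(
    math.hypot(x1 - x0, y1 - y0)
    for (_, x0, y0), (_, x1, y1) in zip(track, track[1:])
)
print(speeds, total_distance)
```

Acceleration follows the same pattern, differencing consecutive speeds over time, which is why sensor noise gets amplified and filtering matters at high sampling rates.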

Q 10. How would you communicate complex statistical findings to a non-technical audience?

Communicating complex statistical findings to a non-technical audience requires translating technical jargon into plain language. This involves simplifying complex concepts, using visual aids effectively, and focusing on the story the data tells.

Strategies:

- Use clear and concise language: Avoid jargon and technical terms unless absolutely necessary. If you must use them, provide clear definitions.

- Focus on the narrative: Frame the findings as a story, highlighting key insights and their implications. What does the data reveal about team performance, player effectiveness, or strategic choices?

- Employ compelling visuals: Charts and graphs are crucial. Use simple and easily interpretable visuals (e.g., bar charts, pie charts, line graphs). Avoid overwhelming the audience with too much information in one visualization.

- Use analogies and metaphors: Relate statistical concepts to everyday experiences to make them more relatable and easier to understand.

- Tailor the presentation: Adapt the communication style to the audience’s background and level of understanding. A presentation for team management will differ from one for the general public.

Example: Instead of saying “The player’s expected goals (xG) significantly exceeded his actual goals scored,” I would say, “This player got into many high-quality scoring positions, but didn’t always convert them into actual goals. We need to address this conversion rate.”

Q 11. What are some common biases or limitations in sports data analysis?

Sports data analysis is prone to several biases and limitations:

- Sampling Bias: The data might not be representative of the entire population. For example, analyzing data from only high-profile games might skew the results.

- Survivorship Bias: Focusing only on successful teams or players might ignore valuable lessons from unsuccessful ones.

- Confirmation Bias: Analysts might selectively focus on data confirming pre-existing beliefs, ignoring contradictory evidence.

- Data Quality Issues: Inconsistent data collection methods, missing data, or errors in data recording can significantly impact the analysis.

- Overfitting: Building a model that performs exceptionally well on training data but poorly on unseen data. This happens when a model is too complex and captures noise instead of genuine patterns.

- Regression to the Mean: Exceptionally high or low performance in a given period often regresses towards the average over time.

Mitigation Strategies: These biases can be mitigated through careful data collection, rigorous statistical methods, cross-validation techniques, and a critical assessment of the results.
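Regression to the mean is easy to demonstrate with a simulation. In the sketch below, each simulated player has a fixed skill and each half-season adds independent luck; the distributions, the player count, and the top-100 cutoff are arbitrary assumptions chosen for illustration.

```python
import random
import statistics

random.seed(42)  # fixed seed so the run is repeatable

n_players = 2000
skill = [random.gauss(0, 1) for _ in range(n_players)]
# Observed performance = true skill + independent luck in each half
first_half = [s + random.gauss(0, 1) for s in skill]
second_half = [s + random.gauss(0, 1) for s in skill]

# Pick the 100 best performers of the first half
top = sorted(range(n_players), key=lambda i: first_half[i], reverse=True)[:100]

top_first = statistics.mean(first_half[i] for i in top)
top_second = statistics.mean(second_half[i] for i in top)

print("top group, first half: ", round(top_first, 2))
print("top group, second half:", round(top_second, 2))
```

The top group stays above average in the second half because its skill is real, but it falls back toward the mean because part of its first-half ranking was luck. No coaching change or slump is needed to explain the drop.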

Q 12. Describe your experience with A/B testing in a sports context.

I have experience designing and conducting A/B tests in sports contexts, primarily to evaluate the effectiveness of different training methods or strategic approaches.

Example: In a project with a basketball team, we wanted to compare two different shooting drills. We randomly assigned players to two groups: Group A received Drill 1, and Group B received Drill 2. We then tracked their shooting accuracy over a set period. By comparing the results using statistical significance tests, we could determine which drill yielded better improvement in shooting accuracy. This ensured objective evaluation of training efficacy.

Key Considerations:

- Randomization: Randomly assigning participants to different groups is crucial to avoid bias.

- Control Group: A control group is necessary to provide a benchmark for comparison.

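- Sample Size: A sufficiently large sample size is needed to give the test enough statistical power to detect a real difference. With a small roster, only large effects are detectable, so a non-significant result does not prove the two approaches are equivalent.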

- Metrics Selection: Define clear and measurable metrics to evaluate the effectiveness of the different approaches.

- Statistical Analysis: Use appropriate statistical tests (like t-tests or chi-squared tests) to determine the significance of the results.
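With roster-sized groups, an exact permutation test is a reasonable alternative to the t-test named above, because it needs no distributional assumptions and the number of possible group assignments is small enough to enumerate. The sketch below is one way to write it; the improvement figures are invented, and `permutation_p_value` is a hypothetical helper.

```python
from itertools import combinations
from statistics import mean

def permutation_p_value(group_a, group_b):
    """Two-sided exact permutation test on the difference in group means."""
    pooled = group_a + group_b
    n_a, n_b = len(group_a), len(group_b)
    total = sum(pooled)
    observed = abs(mean(group_a) - mean(group_b))
    extreme = count = 0
    # Enumerate every way the pooled players could have been split into two groups
    for idx in combinations(range(len(pooled)), n_a):
        a_sum = sum(pooled[i] for i in idx)
        diff = abs(a_sum / n_a - (total - a_sum) / n_b)
        if diff >= observed - 1e-12:  # small tolerance for floating-point ties
            extreme += 1
        count += 1
    return extreme / count

# Hypothetical improvement in shooting accuracy (percentage points) per player
drill_1 = [2.1, 3.4, 1.8, 2.9, 3.0]
drill_2 = [0.5, 1.2, 0.9, 1.6, 0.3]

p = permutation_p_value(drill_1, drill_2)
print(f"p-value: {p:.4f}")
```

The p-value is the share of random assignments that would produce a gap at least as large as the observed one, which ties the analysis directly back to the randomization step.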

Q 13. Explain your experience with machine learning techniques relevant to sports analytics.

I have extensive experience applying machine learning techniques in sports analytics. My expertise includes:

- Predictive Modeling: Using algorithms like logistic regression, support vector machines (SVM), and random forests to predict game outcomes, player performance, or injury risk.

- Clustering: Grouping similar players or teams based on their performance characteristics. This helps identify player archetypes or team strengths and weaknesses.

- Recommendation Systems: Recommending optimal player strategies or formations based on game situations or opponent characteristics.

Example: I used random forests to predict the likelihood of a successful pass completion in soccer, considering factors like player position, passing distance, and opponent pressure. This helped us understand the factors affecting passing success and optimize passing strategies.

Specific techniques and algorithms: I have experience with various algorithms and libraries like scikit-learn (Python), TensorFlow, and PyTorch, and I’m adept at selecting the appropriate algorithm for a given task based on data characteristics and project objectives.

Q 14. How do you evaluate the accuracy of your predictive models?

Evaluating the accuracy of predictive models involves a combination of techniques:

- Holdout Set: Dividing the data into training, validation, and test sets. The model is trained on the training set, hyperparameters are tuned on the validation set, and final performance is evaluated on the unseen test set. This does not prevent overfitting by itself, but it makes overfitting visible and guards against an overly optimistic performance estimate.

- Cross-Validation: Repeatedly training and validating the model on different subsets of the data. This provides a more robust estimate of performance.

- Metrics: Using appropriate metrics depending on the nature of the prediction problem. For classification problems, we might use accuracy, precision, recall, F1-score, and AUC-ROC. For regression problems, we might use Mean Squared Error (MSE), Root Mean Squared Error (RMSE), and R-squared.

- Visualizations: Using visualizations (like learning curves or confusion matrices) to understand the model’s strengths and weaknesses.

Example: To evaluate a model predicting game outcomes, we’d use the accuracy score on the test set and also analyze the confusion matrix to understand the types of errors the model makes. A low accuracy score and a high number of false positives would suggest the model needs improvement.
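The classification metrics above all derive from the four cells of the confusion matrix. The following sketch computes them by hand for a binary win/loss prediction; the labels are made up, and in practice a library such as scikit-learn would normally provide these functions.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, fn, tn) for binary labels where 1 = win, 0 = loss."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    return tp, fp, fn, tn

def classification_metrics(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Hypothetical test-set outcomes and model predictions
y_true = [1, 1, 1, 0, 0, 1, 0, 0]
y_pred = [1, 0, 1, 1, 0, 1, 0, 0]

print(confusion_counts(y_true, y_pred))
print(classification_metrics(y_true, y_pred))
```

Looking at the counts rather than accuracy alone shows whether errors are mostly missed wins or falsely predicted wins, which matters when the two mistakes have different costs.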

Q 15. How would you select appropriate metrics to evaluate a specific sports analytics project?

Selecting the right metrics for a sports analytics project is crucial for its success. It depends heavily on the project’s objective. We need to define what we’re trying to measure and improve. For example, are we trying to improve a team’s offensive efficiency, predict player performance, or optimize player recruitment strategies? The metrics should directly reflect these goals.

Here’s a structured approach:

- Define the Objective: Clearly state the project’s goal. Are we aiming for improved win probability, increased scoring, or reduced turnovers? This sets the foundation for metric selection.

- Identify Key Performance Indicators (KPIs): Based on the objective, choose relevant KPIs. For offensive efficiency, this could involve points per possession, effective field goal percentage, or assist-to-turnover ratio. For player performance prediction, metrics like player efficiency rating (PER), true shooting percentage, or advanced box score statistics could be vital.

- Consider Context and Data Availability: The available data dictates the feasibility of using certain metrics. If we lack detailed tracking data, we might rely on simpler, readily available statistics. Consider factors like the sport, league rules, and data quality.

- Balance Simplicity and Complexity: While sophisticated metrics offer deeper insights, simpler metrics are often easier to understand and communicate. A good balance is key. For instance, combining simple metrics like win percentage with more complex metrics like expected goals (xG) in soccer provides a comprehensive understanding.

- Validate and Iterate: Continuously evaluate the chosen metrics. Do they truly reflect the project’s objective? Are there unexpected correlations or limitations? Adjust as needed based on results and further analysis.

Example: If the objective is to improve a basketball team’s three-point shooting, we might track three-point percentage, three-point attempts per game, and three-point shooting efficiency against specific defensive schemes. We can then use these metrics to identify areas for improvement in player training or game strategy.
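The last metric in that example, shooting efficiency against specific defensive schemes, amounts to a grouped percentage. Here is a minimal sketch, assuming each attempt is logged as a `(scheme, made)` pair; the scheme labels and the attempt log are hypothetical.

```python
from collections import defaultdict

# Hypothetical three-point attempts: (defensive scheme faced, shot made?)
attempts = [
    ("zone", True), ("zone", False), ("zone", True),
    ("man", True), ("man", False), ("man", False),
]

def three_point_pct_by_scheme(attempts):
    """Three-point percentage and attempt count for each defensive scheme."""
    made = defaultdict(int)
    total = defaultdict(int)
    for scheme, is_made in attempts:
        total[scheme] += 1
        made[scheme] += int(is_made)
    return {s: {"pct": made[s] / total[s], "attempts": total[s]} for s in total}

by_scheme = three_point_pct_by_scheme(attempts)
for scheme, stats in by_scheme.items():
    print(f"{scheme}: {stats['pct']:.1%} on {stats['attempts']} attempts")
```

Reporting the attempt count next to each percentage keeps small samples from being over-interpreted.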

Q 16. Describe a situation where your data visualization skills were crucial to conveying insights.

During a project analyzing the performance of baseball pitchers, I used data visualization to highlight a crucial insight that would have been otherwise missed. We were initially focusing on traditional metrics like ERA (Earned Run Average) and WHIP (Walks plus Hits per Inning Pitched). While these indicated good overall performance, they didn’t reveal subtle patterns in pitcher behavior.

I created an interactive dashboard using Tableau. It displayed pitch type distribution, velocity changes, and movement characteristics over time, visualized using heatmaps, scatter plots, and line charts. This allowed us to see that a pitcher with consistently good ERA and WHIP was experiencing a gradual decline in fastball velocity towards the end of games. This wasn’t apparent from simple aggregate statistics.

By visually representing this subtle change, we effectively communicated the need for adjustments to the pitcher’s training program, focusing on maintaining velocity late in games, where the decline was directly affecting his performance.

This visually compelling presentation led to immediate acceptance of the findings and implementation of targeted interventions. This underscores the power of effective data visualization in translating complex data into actionable insights.

Q 17. What is your experience with database management systems (e.g., SQL, NoSQL)?

I have extensive experience with both SQL and NoSQL databases. My proficiency in SQL stems from years of working with relational databases like PostgreSQL and MySQL, commonly used to store structured sports data – think player statistics, game results, and team information. I’m comfortable with complex queries, data manipulation, and database optimization. For instance, I’ve used SQL to join multiple tables containing game events, player stats, and team information to create detailed performance analyses.

However, I also understand the limitations of relational databases when dealing with unstructured or semi-structured data. That’s where NoSQL databases like MongoDB shine. I’ve used MongoDB to store and analyze textual data, such as player scouting reports, news articles, or social media sentiment analysis related to player performance or team morale. In those cases, the flexibility of a NoSQL database is invaluable.

My experience includes designing database schemas, optimizing query performance, and managing data integrity in both SQL and NoSQL environments. This expertise is crucial for efficiently extracting, transforming, and loading (ETL) large datasets, which are common in sports analytics.
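The kind of join described above can be tried out with Python's built-in `sqlite3` module. The sketch below uses an in-memory database; the table names, columns, and rows are invented for illustration and do not reflect any particular league's schema.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE players (
    player_id INTEGER PRIMARY KEY,
    name TEXT,
    team TEXT
);
CREATE TABLE game_stats (
    game_id INTEGER,
    player_id INTEGER REFERENCES players(player_id),
    points INTEGER
);
INSERT INTO players VALUES
    (1, 'Player A', 'Team X'),
    (2, 'Player B', 'Team X'),
    (3, 'Player C', 'Team Y');
INSERT INTO game_stats VALUES
    (101, 1, 20), (102, 1, 30),
    (101, 2, 10),
    (101, 3, 15), (102, 3, 25);
""")

# Join per-game stats to player info and aggregate to a per-player average
rows = conn.execute("""
    SELECT p.team, p.name, AVG(g.points) AS avg_points
    FROM game_stats AS g
    JOIN players AS p ON p.player_id = g.player_id
    GROUP BY p.team, p.name
    ORDER BY avg_points DESC
""").fetchall()

for team, name, avg_points in rows:
    print(team, name, avg_points)
conn.close()
```

The same query shape carries over to PostgreSQL or MySQL; on large tables an index on `game_stats.player_id` is the usual first optimization.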

Q 18. How would you identify and address outliers in your dataset?

Identifying and handling outliers is critical in sports analytics as they can skew results and lead to inaccurate conclusions. Outliers can represent genuine exceptional events (e.g., a player’s record-breaking performance) or data errors (e.g., a typo in a statistic). A multi-faceted approach is necessary.

Identification:

- Visual Inspection: Box plots, scatter plots, and histograms are excellent tools to visually identify points deviating significantly from the overall distribution. This gives a quick, intuitive overview.

- Statistical Methods: Z-score or IQR (Interquartile Range) methods are quantitative ways to identify outliers. A Z-score whose absolute value exceeds a chosen threshold (e.g., 3) suggests an outlier. The IQR measures the spread of the middle 50% of the data; a common rule flags points below Q1 − 1.5 × IQR or above Q3 + 1.5 × IQR as potential outliers.

- Domain Knowledge: Understanding the sport and its context is crucial. A seemingly extreme data point might be perfectly legitimate, representing a unique event.

Addressing Outliers:

- Investigation: Before removing or modifying any data, thoroughly investigate potential causes. Was there a data entry error? Or is it a genuine exceptional performance that should be kept?

- Removal: Only remove outliers if they are demonstrably errors. Proper documentation of the removal process is essential.

- Transformation: Transforming the data (e.g., using logarithmic transformation) can sometimes reduce the impact of outliers without removing them. This maintains more data points.

- Robust Statistical Methods: Use statistical methods less sensitive to outliers (e.g., median instead of mean). This gives a more realistic representation.

Example: Imagine analyzing shooting percentage in basketball. A player with a 100% shooting percentage in a single game might be an outlier. Before removing this data point, we would check if it’s accurate (e.g., verifying the game’s box score). If legitimate, we might consider using a more robust metric, such as a rolling average to smooth out the extreme values.
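The IQR rule can be sketched with the standard library's `statistics.quantiles`. The points-per-game values below are hypothetical, the 1.5 multiplier is the conventional default rather than a requirement, and flagged values are only candidates for the investigation step, not automatic removals.

```python
import statistics

def iqr_outliers(values, k=1.5):
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)  # quartile cut points
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    flagged = [v for v in values if v < lower or v > upper]
    return flagged, (lower, upper)

# Hypothetical points scored by one player over ten games
points = [10, 12, 11, 13, 12, 11, 14, 12, 13, 60]

flagged, (lower, upper) = iqr_outliers(points)
print("fences:", lower, upper)
print("flagged for review:", flagged)

# A robust summary is barely moved by the extreme game, unlike the mean
print("mean:", statistics.mean(points), "median:", statistics.median(points))
```

On a sample this small, a Z-score threshold of 3 could never fire, because the extreme value inflates the standard deviation it is measured against. That is one reason the IQR rule is often preferred for short series.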

Q 19. What programming languages are you proficient in for sports analytics?

I’m proficient in several programming languages relevant to sports analytics. My core skills lie in Python and R. Python’s versatility and extensive libraries like Pandas (data manipulation), Scikit-learn (machine learning), and Matplotlib/Seaborn (visualization) make it ideal for a wide range of tasks, from data cleaning to model building and visualization.

```python
# Example Python code for calculating batting average
import pandas as pd

data = {'hits': [5, 10, 2, 8], 'at_bats': [10, 20, 5, 15]}
df = pd.DataFrame(data)
df['batting_average'] = df['hits'] / df['at_bats']
print(df)
```

R is particularly powerful for statistical modeling and visualization. Packages like dplyr (data manipulation), ggplot2 (visualization), and caret (machine learning) are invaluable. I’ve used R extensively for creating detailed statistical models and generating compelling visualizations of player performance and team strategies.

Beyond Python and R, I have working knowledge of SQL (for database management), and JavaScript (for interactive data visualization using libraries like D3.js). This diverse skill set allows me to tackle a wide array of sports analytics challenges.

Q 20. Describe your familiarity with different types of sports data sources.

My familiarity with sports data sources spans various types, each with unique characteristics and challenges. I’ve worked with:

- Official League Data: This includes structured data provided directly by sports leagues (e.g., NBA API, MLB Statcast). This is usually the most reliable and consistent data source, but often limited in scope.

- Publicly Available Data: Websites like ESPN, Basketball-Reference, or similar sports statistics websites provide a wealth of data, though it’s crucial to validate its accuracy and consistency. This data is often scraped using web scraping techniques.

- Tracking Data: This granular data, such as player location, speed, and acceleration, is provided by advanced tracking systems used in many modern sports. This offers very detailed insights into player movements and game dynamics, but requires specialized processing techniques.

- Broadcast Data: Data extracted from broadcast feeds, using computer vision techniques, can provide additional information such as player actions and game events. This data is less structured and needs considerable pre-processing.

- Social Media and News Data: Analyzing sentiment from social media posts and sports news can provide valuable contextual information but needs careful handling due to its unstructured nature and potential biases.

Each source has its strengths and weaknesses. Choosing the right source depends on the project’s specific needs and the availability of resources. Data cleaning and preprocessing are essential steps regardless of the source to ensure data quality and consistency.

Q 21. How do you prioritize features in a predictive model?

Prioritizing features in a predictive model is a crucial step that significantly impacts its accuracy and interpretability. Several methods can be used, often in combination:

- Feature Importance from Models: Many machine learning algorithms (like Random Forests or Gradient Boosting Machines) provide built-in feature importance scores. These scores indicate the relative contribution of each feature to the model’s prediction accuracy. Higher scores signify more influential features.

- Statistical Methods: Statistical tests like correlation analysis can assess the linear relationship between each feature and the target variable. Features with strong correlations are usually prioritized.

- Domain Expertise: Understanding the underlying sports context is essential. Some features, despite low statistical importance, might be crucial based on expert knowledge. For example, in soccer, a defender’s positioning might be a critical feature even if it shows low correlation with goal prevention in a simple model.

- Feature Selection Techniques: Techniques like Recursive Feature Elimination (RFE) or SelectKBest can systematically select a subset of features based on their predictive power while minimizing redundancy.

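- Regularization: L1 (lasso) regularization penalizes the absolute size of the coefficients and can shrink some of them to exactly zero, which effectively selects features. L2 (ridge) regularization shrinks coefficients toward zero without eliminating them, which stabilizes the model when features are correlated. Both can improve generalization performance.

- Trial and Error: Experimenting with different feature combinations and evaluating model performance on held-out data, using metrics suited to the task (e.g., accuracy, precision, recall, or F1-score for classification; RMSE or MAE for regression), is crucial for finding the best feature set.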

Example: In a basketball shot prediction model, features like distance from the basket, shot angle, defender proximity, and player shooting history are important. Feature importance scores from a Random Forest model might reveal that distance and shooting history are the most influential, guiding us to prioritize these when building a simplified model.

The selection process often involves an iterative cycle, combining automated methods with expert judgment to arrive at an optimal feature set.
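As a rough illustration of the statistical approach, the sketch below ranks features by the absolute value of their Pearson correlation with the target. The eight shots, the feature names (`distance_ft`, `defender_ft`, `jersey_number`), and the helper `rank_features` are all hypothetical and exist only for this example; it is not a substitute for model-based importance on real data.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# Hypothetical toy data: eight shots, made = 1, missed = 0.
shots = {
    "distance_ft":   [2, 4, 6, 10, 15, 18, 22, 25],
    "defender_ft":   [3, 6, 2, 5, 4, 7, 3, 2],
    "jersey_number": [23, 7, 11, 30, 23, 7, 11, 30],
}
made = [1, 1, 1, 1, 0, 1, 0, 0]

def rank_features(features, target):
    """Return (name, r) pairs sorted by |r|, strongest first."""
    scores = {name: pearson(vals, target) for name, vals in features.items()}
    return sorted(scores.items(), key=lambda kv: abs(kv[1]), reverse=True)

ranking = rank_features(shots, made)
for name, r in ranking:
    print(f"{name:15s} r = {r:+.2f}")
```

On this toy sample, distance ranks first, but the meaningless jersey number still shows a non-trivial correlation. With so few observations, spurious relationships are easy to find, which is one reason automated rankings are best combined with domain judgment.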

Q 22. Explain your experience with different types of visualizations (e.g., scatter plots, bar charts, heatmaps).

Visualizations are crucial for understanding complex sports data. My experience spans a variety of chart types, each chosen strategically based on the data and the message I want to convey.

- Scatter Plots: I frequently use scatter plots to explore the relationship between two continuous variables. For example, I might plot a basketball player’s points per game against their minutes played to see if there’s a correlation. Identifying clusters or outliers can reveal valuable insights about player performance and efficiency.

- Bar Charts: These are excellent for comparing categorical data. In baseball, I might use a bar chart to compare the batting averages of different players across a season, immediately highlighting top performers.

- Heatmaps: Heatmaps are powerful for visualizing data density across two dimensions. In soccer, a heatmap of a player’s touches on the field can illustrate their preferred playing zones and contribution to the team’s attacking or defensive strategies. I’ve used these to identify areas of strength and weakness for both individual players and the team as a whole.

Beyond these, I’m proficient with line charts (for trends over time), pie charts (for proportions), and more specialized charts like box plots (for showing data distribution) and network graphs (for visualizing player connections).
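To make the heatmap idea concrete, here is a minimal sketch of the step that usually sits behind one: binning touch coordinates into a grid of counts. The pitch size (105 m by 68 m), the grid resolution, the sample touches, and the function name `bin_touches` are assumptions for illustration; real tracking feeds use their own coordinate systems.

```python
def bin_touches(touches, n_cols=6, n_rows=4, length=105.0, width=68.0):
    """Count touches per grid cell; grid[row][col], with row 0 at y = 0.

    Assumes every (x, y) lies within 0 <= x <= length and 0 <= y <= width.
    """
    grid = [[0] * n_cols for _ in range(n_rows)]
    for x, y in touches:
        # Points exactly on the far edge are clamped into the last cell.
        col = min(int(x / length * n_cols), n_cols - 1)
        row = min(int(y / width * n_rows), n_rows - 1)
        grid[row][col] += 1
    return grid

# Hypothetical touch locations in metres.
touches = [(10, 30), (12, 34), (50, 10), (52.5, 34), (100, 60), (105, 68), (99, 65)]
grid = bin_touches(touches)
for row in grid:
    print(row)
```

The resulting grid of counts can then be handed to a plotting library (for example, Matplotlib’s `imshow`) to render the colored heatmap.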

Q 23. How do you handle overfitting in your models?

Overfitting is a common problem in sports analytics where a model performs exceptionally well on the training data but poorly on unseen data. Think of it like a student memorizing answers for a specific exam but failing to grasp the underlying concepts. To combat this, I employ several techniques:

- Cross-Validation: This involves splitting the data into multiple folds, training the model on some folds and testing it on the others. This provides a more realistic estimate of the model’s performance on new data.

- Regularization: Techniques like L1 and L2 regularization add penalties to the model’s complexity, discouraging it from fitting noise in the training data. This helps to generalize better to new data.

- Feature Selection: Carefully selecting relevant features and removing irrelevant or redundant ones reduces the model’s complexity and the risk of overfitting. I often use feature importance scores from tree-based models to guide this process.

- Early Stopping: When training iterative models like neural networks, I monitor the performance on a validation set and stop training when the performance starts to degrade, preventing overfitting to the training data.

The choice of technique depends on the specific model and dataset. Often, a combination of these methods is most effective.
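The sketch below combines two of these ideas, cross-validation and L2 regularization, in a deliberately small setting: a one-feature linear model whose slope is shrunk by a penalty `lam`, scored by k-fold mean squared error. The data (minutes played versus points) and all function names are hypothetical. In practice a library implementation would normally be used, and the folds would be shuffled, or split by time for season data.

```python
def k_fold_indices(n, k):
    """Split range(n) into k interleaved folds (fold i holds i, i+k, ...)."""
    return [list(range(i, n, k)) for i in range(k)]

def fit_ridge_line(xs, ys, lam):
    """Fit y = a + b*x, shrinking the slope b with an L2 penalty lam."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / (sxx + lam)
    return mean_y - slope * mean_x, slope

def cross_val_mse(xs, ys, k, lam):
    """Mean squared error averaged over k held-out folds."""
    fold_errors = []
    for test_idx in k_fold_indices(len(xs), k):
        held_out = set(test_idx)
        train_x = [x for i, x in enumerate(xs) if i not in held_out]
        train_y = [y for i, y in enumerate(ys) if i not in held_out]
        a, b = fit_ridge_line(train_x, train_y, lam)
        errs = [(ys[i] - (a + b * xs[i])) ** 2 for i in test_idx]
        fold_errors.append(sum(errs) / len(errs))
    return sum(fold_errors) / k

# Hypothetical data: minutes played vs. points scored.
minutes = [12, 18, 22, 25, 28, 30, 33, 35, 36, 38]
points = [4, 9, 8, 14, 12, 17, 15, 21, 18, 24]
for lam in (0.0, 10.0, 100.0):
    mse = cross_val_mse(minutes, points, k=5, lam=lam)
    print(f"lambda={lam:6.1f}  CV MSE={mse:.2f}")
```

Comparing the cross-validated error across penalty values, rather than the training error, is what guards against picking a model that merely memorizes the training set.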

Q 24. Describe a time you had to deal with conflicting data sources.

In one project, I was analyzing player performance data for a professional basketball team. I had access to data from the team’s internal tracking system and a publicly available dataset. The challenge was that the definitions of certain metrics, like ‘effective field goal percentage,’ differed slightly between the two sources. This discrepancy could lead to flawed conclusions if not addressed properly.

My solution involved a careful comparison of the data definitions, identifying the discrepancies, and developing a process to harmonize the data. This included data cleaning, transformations, and potentially creating new, consistent metrics based on a combination of both datasets. Thorough documentation was crucial to ensure transparency and reproducibility of my analysis. This experience highlighted the critical importance of data provenance and consistency checks when working with multiple sources.
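One way to harmonize a metric like this is to recompute it from raw counts using a single agreed definition and flag records whose reported value disagrees. The sketch below uses the standard formula eFG% = (FGM + 0.5 × 3PM) / FGA. The two records, their field names, and the tolerance are hypothetical and are not taken from the project described above.

```python
def effective_fg_pct(fgm, fg3m, fga):
    """eFG% = (FGM + 0.5 * 3PM) / FGA; returns None when there are no attempts."""
    if fga == 0:
        return None
    return (fgm + 0.5 * fg3m) / fga

def harmonize(record, tolerance=0.005):
    """Attach a recomputed eFG% and flag a disagreement with the reported value."""
    recomputed = effective_fg_pct(record["fgm"], record["fg3m"], record["fga"])
    mismatch = (
        recomputed is not None
        and abs(recomputed - record["reported_efg"]) > tolerance
    )
    return {**record, "efg": recomputed, "definition_mismatch": mismatch}

# Hypothetical records for the same player-game from two sources.
internal = {"fgm": 8, "fg3m": 3, "fga": 17, "reported_efg": 0.559}
public = {"fgm": 8, "fg3m": 3, "fga": 17, "reported_efg": 0.471}  # resembles plain FG%

for source, record in (("internal", internal), ("public", public)):
    result = harmonize(record)
    print(source, round(result["efg"], 3), result["definition_mismatch"])
```

Keeping the flag alongside the recomputed value preserves a record of where the sources disagreed, which supports the documentation and provenance checks mentioned above.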

Q 25. How do you stay up-to-date with the latest trends in sports analytics?

Staying current in sports analytics requires a multi-pronged approach:

- Academic Journals and Conferences: I regularly read publications like the Journal of Quantitative Analysis in Sports and attend conferences such as the MIT Sloan Sports Analytics Conference to learn about cutting-edge research and methodologies.

- Online Resources and Blogs: Websites and blogs dedicated to sports analytics, such as those published by prominent sports organizations or analytics firms, provide valuable insights and updates on the latest trends.

- Professional Networking: Engaging with other professionals in the field through online forums, LinkedIn groups, and attending industry events keeps me connected to new developments and fosters collaboration.

- Open-Source Projects and Code Repositories: Exploring open-source projects and code repositories on platforms like GitHub exposes me to new tools, techniques, and applications of sports analytics.

Continuous learning is vital in this rapidly evolving field. Keeping abreast of new statistical models, data sources, and visualization techniques is essential for maintaining a competitive edge.

Q 26. How would you design a dashboard to visualize key performance indicators (KPIs) for a sports team?

A dashboard for visualizing key performance indicators (KPIs) for a sports team should be intuitive, visually appealing, and provide actionable insights. I would design it using a modular approach:

- Summary Section: A top-level overview displaying key aggregate metrics such as win percentage, points per game, goals against average (depending on the sport), and overall team performance trends over time.

- Individual Player Performance: Interactive charts showcasing individual player statistics, potentially allowing users to filter by position, game, or other relevant factors. Heatmaps could visualize player movement and contribution on the field.

- Opponent Analysis: A section comparing the team’s performance against different opponents, highlighting strengths and weaknesses against specific teams or playing styles.

- Advanced Metrics: Advanced statistical metrics relevant to the sport, such as expected goals (soccer), plus/minus (hockey), or win probability added (baseball), allowing coaches and management to delve deeper into performance.

- Interactive Elements: The dashboard must be interactive, enabling users to drill down into specific data points, filter data, and customize the visualizations to meet their needs.

The technology choice (e.g., Tableau, Power BI, custom web application) would depend on the team’s resources and existing infrastructure. The emphasis would be on clear communication and providing timely, actionable insights to support data-driven decision-making.
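Whatever tool renders the dashboard, the summary section rests on a small aggregation layer. The sketch below shows one possible version, computing win percentage, scoring averages, and a rolling win rate for the trend view. The game records, field names, and the functions `summarize` and `rolling_win_pct` are hypothetical.

```python
def summarize(games):
    """Aggregate headline KPIs from a list of game records."""
    n = len(games)
    wins = sum(g["points_for"] > g["points_against"] for g in games)
    return {
        "games": n,
        "win_pct": wins / n,
        "points_per_game": sum(g["points_for"] for g in games) / n,
        "points_allowed_per_game": sum(g["points_against"] for g in games) / n,
    }

def rolling_win_pct(games, window=3):
    """Win rate over each run of `window` consecutive games, oldest first."""
    results = [g["points_for"] > g["points_against"] for g in games]
    return [
        sum(results[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(results))
    ]

# Hypothetical season-to-date results, in chronological order.
games = [
    {"opponent": "A", "points_for": 102, "points_against": 98},
    {"opponent": "B", "points_for": 95, "points_against": 101},
    {"opponent": "C", "points_for": 110, "points_against": 104},
    {"opponent": "D", "points_for": 88, "points_against": 92},
    {"opponent": "E", "points_for": 105, "points_against": 99},
]

summary = summarize(games)
trend = rolling_win_pct(games, window=3)
print(summary)
print(trend)
```

Separating the KPI calculations from the presentation layer makes it easier to test the numbers and to switch dashboard tools later without redefining the metrics.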

Q 27. Explain the ethical considerations in using sports analytics.

Ethical considerations in sports analytics are paramount. The misuse of data can have significant consequences for players, teams, and the integrity of the sport itself.

- Data Privacy: Protecting the privacy of players and other individuals whose data is used in analysis is crucial. This includes anonymizing data whenever possible and adhering to relevant data protection regulations.

- Bias and Fairness: Algorithms and models can perpetuate existing biases if not carefully designed and validated. It’s essential to be aware of potential biases in the data and to mitigate their impact on the analysis.

- Transparency and Explainability: The methods and results of the analysis should be transparent and easily understood. This helps to build trust and avoid misinterpretations of the findings.

- Misuse of Information: The results of sports analytics should not be used to unfairly target or manipulate players or teams. It’s critical to use the insights responsibly and ethically.

Professional organizations and governing bodies are increasingly developing guidelines and standards to ensure the ethical application of sports analytics. Adhering to these principles is essential for maintaining the integrity and fairness of the sport.

Q 28. Describe your experience with data storytelling.

Data storytelling is the art of using data to create a compelling narrative. In sports analytics, this means transforming raw numbers into a story that engages the audience and provides meaningful insights. My approach involves:

- Identifying the Key Message: Before starting any visualization or analysis, I clearly define the central message or question I want to answer. This provides focus and direction for the entire process.

- Choosing the Right Visualizations: Selecting visualizations that effectively communicate the message is critical. Different chart types suit different data and narratives.

- Crafting a Narrative: The data should be presented in a logical and engaging sequence, guiding the audience through the key findings. I often use a combination of visualizations and textual descriptions to create a clear and compelling story.

- Considering the Audience: Tailoring the presentation to the audience’s background and knowledge is essential. Technical jargon should be minimized, and the presentation should be clear and accessible to everyone.

For example, instead of simply presenting a table of player statistics, I might create a compelling narrative about a player’s career progression, using visualizations to showcase their improvement over time and highlight key moments. Effective data storytelling transforms complex data into meaningful and impactful insights.

Key Topics to Learn for Sports Analytics and Data Visualization Interview

- Statistical Modeling in Sports: Understanding and applying regression models (linear, logistic, etc.), time series analysis, and forecasting techniques to predict player performance, game outcomes, or team success. Consider practical applications like predicting injury risk or optimizing player deployment strategies.

- Data Wrangling and Cleaning: Mastering data manipulation and cleaning techniques using tools like Python (Pandas, NumPy) or R to prepare messy sports datasets for analysis. This includes handling missing values, outliers, and inconsistencies to ensure data integrity.

- Data Visualization Best Practices: Creating clear, concise, and insightful visualizations (charts, graphs, dashboards) using tools like Tableau, Power BI, or Python libraries (Matplotlib, Seaborn). Focus on effectively communicating complex data to both technical and non-technical audiences.

- Performance Metrics and KPIs: Understanding and applying relevant Key Performance Indicators (KPIs) for various sports and contexts. This includes selecting and interpreting metrics that accurately reflect player or team performance and drive decision-making.

- Advanced Analytics Techniques: Exploring more advanced techniques such as machine learning algorithms (classification, clustering, etc.), network analysis, and causal inference for deeper insights into sports data. Consider applications like player scouting or strategic analysis.

- Database Management and SQL: Familiarity with relational databases and SQL querying for efficient data retrieval and manipulation. This is crucial for working with large sports datasets stored in databases.

Next Steps

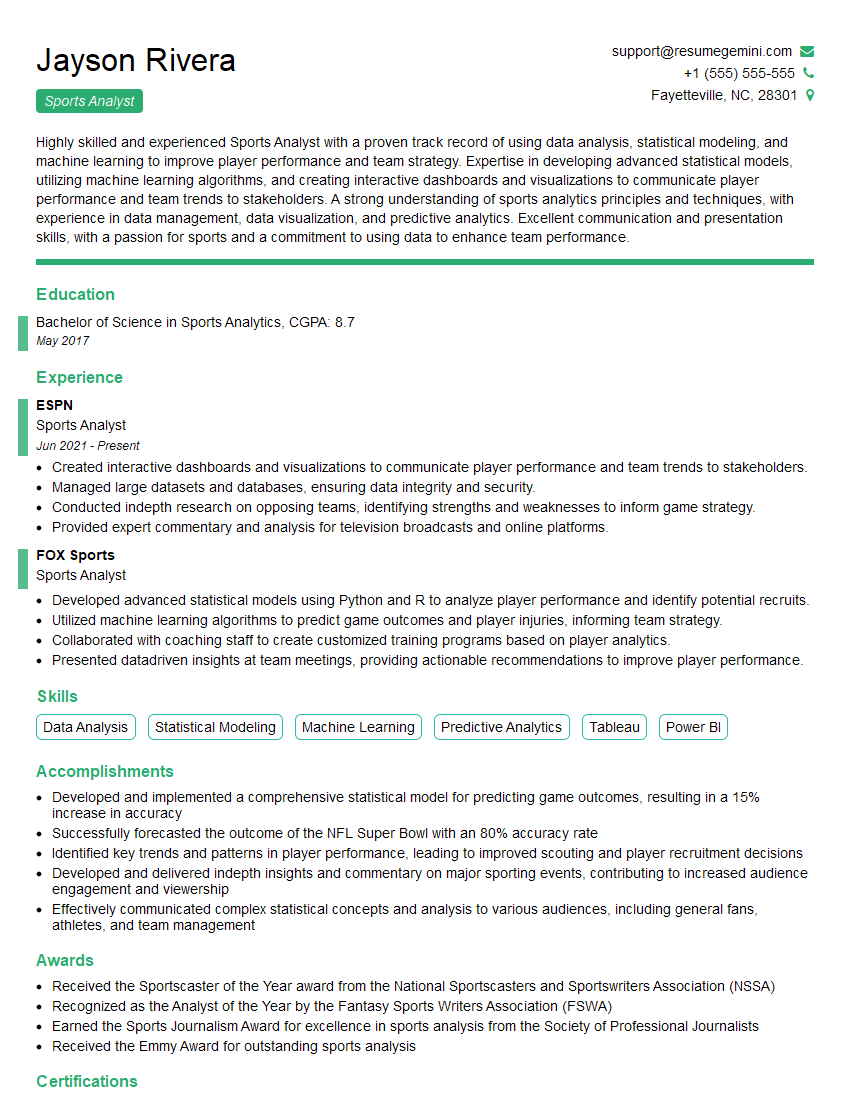

Mastering Sports Analytics and Data Visualization is crucial for a thriving career in the exciting world of sports. It opens doors to impactful roles where you can leverage data to influence strategic decisions, optimize player performance, and enhance the fan experience. To significantly boost your job prospects, crafting an ATS-friendly resume is essential. This ensures your application gets noticed by recruiters and hiring managers. We highly recommend using ResumeGemini, a trusted resource, to build a professional and effective resume. ResumeGemini provides examples of resumes tailored specifically to Sports Analytics and Data Visualization roles, helping you present your skills and experience in the most compelling way.
