Unlock your full potential by mastering the most common Advanced DAW Proficiency interview questions. This blog offers a deep dive into the critical topics, ensuring you’re not only prepared to answer but to excel. With these insights, you’ll approach your interview with clarity and confidence.

Questions Asked in Advanced DAW Proficiency Interview

Q 1. Explain your experience with MIDI editing and sequencing in your preferred DAW.

MIDI editing and sequencing are fundamental to my workflow, particularly in Ableton Live. I’m proficient in manipulating MIDI data to build complex melodies, harmonies, and rhythms, from basic note entry and editing to quantization, velocity editing, and automation of MIDI parameters. For instance, I might use Ableton’s MIDI clip editor to fine-tune the timing of a drum performance, shifting individual notes slightly to create a more human feel, or use its warping features to conform audio recorded from external instruments to the project tempo. I frequently use MIDI effects like arpeggiators and sequencers to generate interesting rhythmic and melodic patterns. My experience extends to external MIDI controllers, which I integrate with my DAW to speed up and enrich my creative process. I routinely use automation lanes to modulate parameters over time, which gives me fine-grained control over the dynamic expression of my MIDI-based tracks.

For example, I recently worked on a project that needed a complex, evolving synth line. Using Ableton’s clip envelopes, I gradually changed the synth’s filter cutoff frequency, turning what would have been a static sound into a slowly evolving, pad-like texture. This shows how I combine MIDI editing with automation for dynamic, expressive results.
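Quantizing with a strength (sometimes called amount) setting sits between rigid grid timing and an untouched performance, which is one way to tighten a drum part while keeping a human feel. The sketch below is a minimal illustration, not Ableton’s implementation: it assumes note start times in ticks at 480 PPQ (a common, but not universal, resolution), and the function names are hypothetical.

```python
# Sketch: quantize MIDI note start times (in ticks) to a grid, with a
# "strength" control that moves each note only part of the way there.

PPQ = 480  # assumed ticks per quarter note; DAWs differ


def grid_ticks(division: int, ppq: int = PPQ) -> int:
    """Length of one grid step in ticks, e.g. division=16 for 1/16 notes."""
    return ppq * 4 // division


def quantize(start: int, grid: int, strength: float = 1.0) -> int:
    """Move `start` toward the nearest grid line by `strength` (0.0 to 1.0)."""
    nearest = round(start / grid) * grid
    return round(start + (nearest - start) * strength)


sixteenth = grid_ticks(16)             # 120 ticks at 480 PPQ
print(quantize(130, sixteenth))        # fully snapped: 120
print(quantize(130, sixteenth, 0.5))   # halfway: 125
```

At 50% strength, a note 10 ticks late keeps half of its offset, so the groove tightens without becoming mechanical.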

Q 2. Describe your workflow for mixing a complex project with numerous tracks.

Mixing a complex project requires a systematic approach. My workflow usually begins with gain staging: setting appropriate levels for each track to prevent clipping and maintain headroom. Next, I build a solid foundation around the drums and bass, making sure these fundamental elements sit well together. I use EQ both surgically, cutting narrow bands to remove muddiness, and more broadly, boosting frequencies where an element needs more presence. I apply compression judiciously to even out dynamics and glue related tracks together. I work iteratively, frequently checking the mix on different playback systems so it translates consistently across listening environments.

Next, I address the other instruments and vocals, carefully balancing them against that foundation. I then add reverb and delay sparingly to create depth and a sense of space. Automation plays a key role in creating dynamic shifts and subtle nuances. Finally, I apply mastering-style processing on the mix bus, using subtle compression and limiting to raise loudness while preserving as much of the dynamic range as possible and avoiding audible artifacts. Throughout the process, I regularly compare against reference tracks to gauge progress and make sure the mix is heading toward the sound I want.

I find that a well-organized track structure and meticulous labeling are crucial for streamlining this complex process, making collaborative work much smoother.

Q 3. How do you approach automation and its practical applications?

Automation is an invaluable tool for adding nuance and life to a mix. I use it extensively to create dynamic changes in volume, panning, effects parameters, and instrument settings. Think of it as adding subtle expression to an otherwise static composition. For example, automating the volume of a synth line can add rhythmic feel and dynamics. Gradually increasing the reverb on a vocal during a crescendo builds emotional intensity.

My approach is to automate only what’s necessary, because over-automation can make a mix sound artificial. I prefer smooth automation curves and often draw automation by hand for precise control, rather than relying solely on automation recorded from a live controller performance. I also use automation clips, which are quick to edit and keep the workflow non-destructive. A common example is subtly riding the gain of a snare drum throughout a track, creating variation without making it sound processed.

Q 4. What are your preferred techniques for noise reduction and audio restoration?

Noise reduction and audio restoration are critical, especially when working with older recordings or field recordings with background noise. My approach combines several techniques. I typically start by identifying the frequency range of the noise with the spectral analysis tools in my DAW, which lets me target noise reduction more effectively. I then turn to specialized tools such as iZotope RX or Waves X-Noise, which remove unwanted noise and clicks more selectively and can even repair damaged material. I often combine spectral and adaptive noise reduction, adjusting parameters carefully to avoid artifacts and preserve the integrity of the original signal.

For heavier damage, such as dense clicks, crackle, or pops, I use tools with dedicated de-click and repair functions. The key is a careful, iterative approach, listening critically after every step to judge the impact of the processing. Avoiding over-processing is essential: pushed too far, noise reduction leaves artifacts such as a watery, warbling "musical noise" and makes the audio sound unnatural.

Q 5. Explain your understanding of dynamic processing (compression, limiting, gating).

Dynamic processing (compression, limiting, and gating) is crucial for shaping the dynamics of audio signals. Compression reduces the difference between the loudest and quietest parts of a signal by turning down levels above a threshold according to a ratio, making it sound more even and consistent. Limiting is essentially compression with a very high ratio: it keeps peaks from exceeding a set ceiling, protecting against clipping. Gating attenuates or mutes signals that fall below a threshold and is often used to remove background noise.

I use compression to control dynamics, glue together elements in a mix, and increase perceived loudness. On the mix bus, a limiter catches the remaining peaks so the overall level can be raised without clipping, which helps the mix translate across playback systems. I use gating sparingly, mainly to reduce noise, spill, and other unwanted sounds in microphone recordings.

My approach is subtle and mindful. I carefully adjust the attack, release, threshold, and ratio parameters of my compressors and limiters to shape the sound naturally and avoid artificial-sounding results. It’s more about sculpting than squashing.
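The threshold and ratio relationship described above can be expressed as a static gain curve. This minimal sketch ignores attack, release, knee shape, and make-up gain; the function names are illustrative, and the gate’s 80 dB range is an assumed default rather than a standard value.

```python
# Sketch: static input/output curves for a hard-knee compressor,
# a limiter, and a gate. Levels are in dB; attack, release, knee,
# and make-up gain are deliberately left out.


def compress(level_db: float, threshold_db: float, ratio: float) -> float:
    """Above threshold, each `ratio` dB of input yields 1 dB of output."""
    if level_db <= threshold_db:
        return level_db
    return threshold_db + (level_db - threshold_db) / ratio


def limit(level_db: float, ceiling_db: float) -> float:
    """A limiter acts like a compressor with an (effectively) infinite ratio."""
    return min(level_db, ceiling_db)


def gate(level_db: float, threshold_db: float, range_db: float = 80.0) -> float:
    """Signals below threshold are attenuated by `range_db` dB."""
    return level_db if level_db >= threshold_db else level_db - range_db


print(compress(-10.0, threshold_db=-20.0, ratio=4.0))  # -17.5
print(limit(0.5, ceiling_db=-1.0))                      # -1.0
print(gate(-60.0, threshold_db=-50.0))                  # -140.0
```

With a -20 dB threshold and a 4:1 ratio, an input 10 dB over the threshold comes out only 2.5 dB over it.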

Q 6. How do you handle latency issues in your DAW?

Latency, the delay between input and output, is a common issue when working with audio interfaces and plug-ins. I tackle it on several fronts. First, I choose my audio interface and buffer size carefully: a smaller buffer reduces latency but increases CPU load, so finding the right balance is key. I also favor low-latency plug-ins while recording, because some processors, particularly linear-phase EQs and look-ahead limiters, add their own processing delay on top of the buffer latency.
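Because buffer latency is simply the buffer length divided by the sample rate, the trade-off is easy to quantify. This sketch covers a single buffer only; actual round-trip latency includes both input and output buffers plus converter and driver overhead, so treat the figures as a lower bound.

```python
# Sketch: latency contributed by one audio buffer, in milliseconds.


def buffer_latency_ms(buffer_samples: int, sample_rate: int) -> float:
    return buffer_samples / sample_rate * 1000.0


for size in (64, 128, 256, 512, 1024):
    print(f"{size:>5} samples @ 48 kHz: {buffer_latency_ms(size, 48_000):.1f} ms")
```

Each doubling of the buffer doubles this delay while roughly halving how often the CPU must deliver a new block of audio.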

If latency remains an issue, I rely on my DAW’s compensation features: plug-in delay compensation keeps tracks aligned during playback, and a low-latency monitoring mode bypasses or restricts high-latency plug-ins on the tracks I’m recording. Finally, if latency is still unacceptable, I switch to hardware (direct) monitoring on the interface, which bypasses the DAW’s processing entirely for input signals.
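Delay compensation works by delaying every signal path to match the one with the most plug-in latency. The DAW does this internally; the sketch below only illustrates the bookkeeping, with made-up track names and latency figures. It also shows why live input can’t be fully compensated: a performer monitoring through the "vocal" chain would hear themselves 2,112 samples (about 44 ms at 48 kHz) late.

```python
# Sketch of plugin delay compensation (PDC): each track is delayed so that
# its total latency matches the slowest track's. Values are hypothetical;
# real plug-ins report their latency to the host.


def compensation_delays(track_latency: dict[str, int]) -> dict[str, int]:
    """Extra delay (in samples) each track needs to stay time-aligned."""
    worst = max(track_latency.values())
    return {name: worst - latency for name, latency in track_latency.items()}


tracks = {
    "vocal": 64 + 2048,  # e.g. a de-esser plus a linear-phase EQ
    "drums": 0,          # no latent plug-ins
    "bass": 512,         # e.g. a look-ahead compressor
}
print(compensation_delays(tracks))  # {'vocal': 0, 'drums': 2112, 'bass': 1600}
```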

Q 7. Describe your experience with various audio plug-ins (EQ, reverb, delay).

My experience with EQ, reverb, and delay plug-ins is extensive. I use EQs to shape the tonal balance of individual tracks and the overall mix. I’m proficient with parametric EQs, allowing for precise adjustments of specific frequencies. Reverb adds depth and space to a sound, and I use it judiciously to create realistic ambience or creative effects. Delay creates rhythmic echoes and textures, used to enhance rhythmic elements or add atmospheric effects. I’m comfortable working with both hardware and software processing, and often combine both for richer textures.

My plugin choices vary depending on the project and sound, but I have extensive experience with Waves, FabFilter, Universal Audio, and other high-quality plugin manufacturers. Understanding how these plug-ins interact with each other and with the material I’m working on is crucial and demands attention to detail and a keen ear. For example, I might use a Pultec-style EQ to warm up a bass line, add plate reverb for a vintage vocal sound, and use a tape delay to add rhythmic texture to a guitar part. The specific combination is very much project-dependent.

Q 8. What are your strategies for effective time management during a large project?

Effective time management on large projects is crucial. My strategy involves a multi-pronged approach focusing on planning, task breakdown, and consistent monitoring. First, I create a detailed project plan, outlining all tasks, their dependencies, and estimated timelines using a project management tool. This isn’t just a simple to-do list; it’s a visual representation of the entire project, allowing me to identify potential bottlenecks early on. I then break down large tasks into smaller, more manageable sub-tasks. This makes progress more visible and prevents feeling overwhelmed. For instance, instead of ‘mix the song,’ I’ll have sub-tasks like ‘process drums,’ ‘EQ vocals,’ ‘add reverb to guitars,’ etc. Regularly reviewing my progress against the plan, using tools like Gantt charts, helps me stay on track and adjust deadlines as needed. Finally, I allocate specific time blocks for focused work, minimizing distractions. The Pomodoro Technique, for instance, works well for me – 25 minutes of intense work followed by a short break. This prevents burnout and maintains productivity throughout the project.

Q 9. How do you troubleshoot common DAW issues (crashes, glitches, audio dropouts)?

Troubleshooting DAW issues requires a systematic approach. When I encounter crashes, glitches, or audio dropouts, I start by identifying the problem’s context: does it happen only with specific plugins, projects, or audio files? My first step is always to restart the DAW and my computer, which often clears temporary glitches. If the issue persists, I check my audio driver, making sure it is up to date and correctly configured for my audio interface. Buffer size adjustments can also have a big impact: increasing the buffer size can reduce glitches but also increases latency. Plugin conflicts are a common culprit, so I disable plugins one by one to pinpoint the source. If the problem seems tied to a specific project, I try reproducing it in a new, empty project to rule out session corruption. For audio dropouts, I check cable connections, audio interface settings, and available disk space. I also consult my DAW’s support forums and documentation. If all else fails, reinstalling the DAW is a last resort.

Q 10. Explain your experience with sample rate conversion and its impact on audio quality.

Sample rate conversion changes the number of samples per second in an audio file. The sample rate sets the highest frequency that can be represented, the Nyquist frequency, which is half the sample rate. At 44.1 kHz that limit is 22.05 kHz, already above the nominal 20 kHz upper limit of human hearing; higher rates such as 96 kHz extend the bandwidth further and give nonlinear processing more room before aliasing, at the cost of larger files and more CPU load. The impact of conversion on audio quality depends largely on the algorithm used. A high-quality converter applies a steep low-pass (anti-aliasing) filter before lowering the rate, minimizing artifacts like aliasing (content above the new Nyquist frequency folding back as unwanted, inharmonic frequencies) and phase distortion. Poor-quality conversion can introduce audible aliasing or an overly dull top end, reducing clarity. In practice, I prefer to record and edit at a high sample rate, converting to lower rates only for final delivery or archiving if necessary, using a high-quality resampling algorithm in my DAW. Think of it like a high-resolution photo: downscaling it saves space but discards detail, and that detail can’t be recovered later. The same principle applies to audio.
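The relationship between sample rate, the Nyquist frequency, and aliasing can be made concrete with a short calculation. This minimal sketch models a single sine tone and no filtering at all; it shows why a converter must remove content above the new Nyquist frequency before reducing the rate.

```python
# Sketch: where a pure tone lands after sampling at rate fs.
# Content above the Nyquist frequency (fs / 2) folds back ("aliases")
# unless a low-pass filter removes it before the rate is reduced.


def nyquist(fs: float) -> float:
    """Highest frequency representable at sample rate fs."""
    return fs / 2


def alias_frequency(f: float, fs: float) -> float:
    """Apparent frequency of a tone f sampled at fs with no anti-alias filter."""
    folded = f % fs
    return fs - folded if folded > fs / 2 else folded


print(nyquist(44_100))                  # 22050.0
print(alias_frequency(30_000, 96_000))  # 30000: fine at 96 kHz
print(alias_frequency(30_000, 44_100))  # 14100: audible alias at 44.1 kHz
```

Ultrasonic content at 30 kHz is harmless in a 96 kHz session, but if the file were converted to 44.1 kHz without filtering, it would reappear as an inharmonic tone at 14.1 kHz.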

Q 11. Describe your workflow for creating and editing virtual instruments.

My workflow for virtual instruments involves several key steps. First, I select a virtual instrument that suits the desired sound. Then I program its parameters, focusing on sound design: experimenting with oscillators, filters, envelopes, LFOs, and effects to achieve the desired timbre. I may layer multiple virtual instruments to create richer sounds. Next, I record the MIDI performance, paying close attention to articulation and dynamics, and then edit the MIDI data, adjusting notes, velocities, and automation to refine it. Finally, I mix and process the virtual instrument tracks, applying EQ, compression, and other effects so they sit well in the overall mix. For example, to build a complex orchestral string sound, I might layer several instances of a string library instrument with different articulations, velocities, and panning to create depth and movement. This detailed approach gives me creative control and helps me achieve professional-sounding results.

Q 12. How do you manage and organize large audio projects?

Managing large audio projects requires a robust organizational system. I combine folder structures, color-coding, and metadata tagging to keep everything organized. For example, I create a main project folder with subfolders for audio files, MIDI files, session data, and backups. I also follow a consistent naming convention so that every filename clearly identifies the track and its function. Within the DAW itself, I use track grouping, color-coding, and VCA faders to improve visual clarity and workflow efficiency. I regularly consolidate and archive older projects to avoid clutter and keep the system performing well. A well-structured system prevents chaos and makes specific files easy to find.
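A folder layout and naming convention like this can be scripted so that every project starts out the same way. In the sketch below, the folder names and the filename pattern are hypothetical examples of a convention, not something any DAW requires.

```python
# Sketch: create a consistent project folder layout and track filenames.
# Folder names and the naming pattern are hypothetical conventions.
from pathlib import Path

SUBFOLDERS = ("Audio", "MIDI", "Session", "Bounces", "Backups")


def create_project(root: Path, artist: str, title: str) -> Path:
    """Create <root>/<Artist>_<Title>/ with one subfolder per category."""
    project = root / f"{artist}_{title}".replace(" ", "-")
    for name in SUBFOLDERS:
        (project / name).mkdir(parents=True, exist_ok=True)
    return project


def track_filename(index: int, role: str, take: int) -> str:
    """Zero-padded index and take keep files sorted, e.g. 03_LeadVox_take02.wav."""
    return f"{index:02d}_{role}_take{take:02d}.wav"


print(track_filename(3, "LeadVox", 2))  # 03_LeadVox_take02.wav
```

Zero-padding matters because file browsers sort names as text: without it, "10_..." would sort before "2_...".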

Q 13. Explain your experience with mastering techniques and processes.

Mastering is the final stage of audio production, focusing on optimizing the overall balance, loudness, and clarity of a track. My mastering process begins with careful listening to identify frequency imbalances, dynamic issues, or other problems, which I then address with tools such as EQ, compression, limiting, and stereo widening. Gain staging is crucial: keeping levels appropriate throughout the chain prevents clipping and preserves headroom. I pay close attention to the stereo image and the track’s overall sonic character. I typically master from high-resolution files (24-bit/96 kHz or higher); the 24-bit depth is what preserves dynamic range, while the high sample rate retains the full bandwidth of the mix until the final conversion. I use loudness metering to make sure the final master meets the loudness targets of broadcast standards and streaming platforms. I often compare my work against professionally mastered reference tracks to guide my decisions. Finally, I deliver the master in the formats (e.g., WAV, AIFF, MP3) required by the client or platform.

Q 14. What are the key differences between various DAWs (e.g., Pro Tools, Logic Pro X, Ableton Live)?

Different DAWs cater to different workflows and preferences. Pro Tools is renowned for its industry-standard status, particularly in film and television post-production, excelling in its robust editing capabilities and extensive plugin support. Logic Pro X, a strong contender, is known for its ease of use, extensive sound library, and powerful MIDI editing features, popular among composers and musicians. Ableton Live, on the other hand, is favored by electronic music producers for its session view, clip-based workflow, and its real-time performance capabilities. These differences highlight that choosing a DAW is about finding the tool best suited for one’s workflow and the type of music they produce. Consider features like MIDI editing capabilities, automation options, plugin integration, and overall interface design when comparing DAWs.

Q 15. Describe your experience with audio file formats (WAV, AIFF, MP3).

Understanding audio file formats is crucial for efficient workflow and maintaining audio quality. WAV and AIFF are lossless formats, meaning they retain all the original audio data. This results in pristine audio quality, ideal for mastering and archiving, but comes at the cost of larger file sizes. Think of them like high-resolution photographs – they take up more space but retain every detail.

MP3, on the other hand, is a lossy format. It shrinks the file by discarding audio data that a psychoacoustic model judges to be inaudible. This dramatically reduces file size, making it well suited to online distribution and streaming. However, the discarded information is gone for good, which can affect sound quality, especially at low bitrates and on dense, complex mixes. Think of it like a compressed JPEG: a smaller file, but some detail is lost.

In my workflow, I utilize WAV or AIFF for all recording and mixing stages, ensuring the highest possible fidelity. Once the mix is finalized and ready for delivery, I’ll then export to MP3 or other suitable formats for distribution, carefully selecting the appropriate bitrate to balance file size and quality for the intended platform.
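The size difference follows directly from the arithmetic: uncompressed PCM stores every sample, so 16-bit/44.1 kHz stereo runs at 1,411.2 kbps, while an MP3’s size is set by its bitrate. This sketch ignores file headers and metadata and assumes constant-bitrate MP3.

```python
# Sketch: approximate audio file sizes (headers and metadata ignored).


def pcm_bytes(seconds: float, sample_rate: int = 44_100,
              bit_depth: int = 16, channels: int = 2) -> float:
    """Uncompressed PCM, as stored in WAV or AIFF."""
    return seconds * sample_rate * (bit_depth / 8) * channels


def mp3_bytes(seconds: float, kbps: int) -> float:
    """Constant-bitrate MP3: bitrate times duration."""
    return seconds * kbps * 1000 / 8


three_minutes = 180
print(f"WAV 16/44.1 stereo: {pcm_bytes(three_minutes) / 1e6:.1f} MB")     # 31.8 MB
print(f"MP3 at 320 kbps:    {mp3_bytes(three_minutes, 320) / 1e6:.1f} MB")  # 7.2 MB
```

Even at 320 kbps, the MP3 is under a quarter of the WAV’s size, which is why bitrate choice is the main quality lever for lossy delivery.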

Q 16. Explain your approach to creating and implementing custom automation.

Creating effective automation is about streamlining your workflow and achieving consistent, repeatable results. My approach starts with careful planning. Before diving into automation, I thoroughly map out the desired changes. This involves identifying target parameters (volume, panning, effects parameters, etc.), the timing and shape of the automation, and any conditional logic involved. Think of it like drawing a detailed blueprint before construction.

For example, I might automate a gradual fade-in of a vocal track over four bars using automation curves. I prefer to use a combination of draw automation and automation clips, depending on complexity. Draw automation is best for subtle, fine-tuned adjustments, whereas automation clips are great for creating and reusing complex automation patterns.
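Turning a musical length like "four bars" into automation breakpoints requires converting bars to time. The sketch below assumes 4/4 time, a fixed 120 BPM tempo, and a simple power curve for the fade shape; the `curve` parameter is a hypothetical control, not a specific DAW’s fade setting.

```python
# Sketch: automation breakpoints for a fade-in lasting a number of bars.
# Assumes 4/4 time and a constant tempo; `curve` is a hypothetical shaping
# control (1.0 = linear gain, >1.0 = slower start, faster finish).


def bars_to_seconds(bars: float, bpm: float, beats_per_bar: int = 4) -> float:
    return bars * beats_per_bar * 60.0 / bpm


def fade_in_points(bars: float, bpm: float, steps: int = 8,
                   curve: float = 2.0) -> list[tuple[float, float]]:
    """(time in seconds, linear gain) pairs from silence up to unity gain."""
    length = bars_to_seconds(bars, bpm)
    return [(length * i / steps, (i / steps) ** curve) for i in range(steps + 1)]


for t, gain in fade_in_points(bars=4, bpm=120):
    print(f"{t:4.1f} s  gain {gain:.3f}")
```

At 120 BPM the four bars last 8 seconds; in practice you would draw the same shape directly, but the arithmetic shows what the fade actually spans.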

I often use MIDI controllers to implement automation in real-time. For complex automation or for automating parameters not easily controlled via MIDI, I’ll utilize my DAW’s scripting or macro capabilities. This allows for highly sophisticated automation based on complex logic or external sources.

Q 17. How do you optimize your DAW settings for performance and efficiency?

Optimizing DAW settings is paramount for avoiding latency issues and crashes and for making the most of the available processing power. My approach has several parts. First, I make sure the buffer size is set appropriately. A larger buffer lowers CPU load and reduces the risk of dropouts but increases latency, the delay between playing a note and hearing it. A smaller buffer reduces that delay but increases CPU load, potentially leading to dropouts. That’s why I typically use a small buffer while recording and a larger one while mixing. The ideal size depends on the complexity of the project and the computer’s capabilities, so finding the sweet spot is crucial.

Second, I regularly remove or deactivate unused plugins and tracks. Every active plugin and track consumes processing power and RAM, so keeping only what’s needed reduces the overall load on the system. Third, I regularly manage my sample library. Redundant or unused samples waste disk space and clutter the library, making browsing and loading slower, so regular consolidation and cleanup are vital.

Finally, I keep my DAW and operating system up to date. Software updates often include performance improvements and bug fixes, thus leading to a smoother workflow.

Q 18. Describe your experience with surround sound mixing techniques.

Surround sound mixing requires a strong understanding of spatial audio and how sounds interact within a three-dimensional environment. I approach it by visualizing the soundstage and strategically placing instruments and effects within the various channels. A key aspect is employing panning techniques to create a sense of width, depth, and height.

I often begin by establishing a solid stereo foundation, ensuring that each element has a good place in the stereo field. Then, I selectively place elements into the surround channels to enhance the overall sonic image. For example, I might use the rear channels to place ambience, reverbs, or background elements for an immersive experience. The use of panning plugins or tools specifically designed for surround mixing is extremely helpful.
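Panning tools typically use a pan law so that a source doesn’t get louder or quieter as it moves. The sketch below shows the common constant-power (sine/cosine) law for a stereo foundation; surround panners can apply the same energy-preserving idea between adjacent speakers, but that extension is not shown, and the function name is illustrative.

```python
# Sketch: constant-power (sine/cosine) panning of a mono source into stereo.
# pan = -1.0 is hard left, 0.0 is centre, +1.0 is hard right.
import math


def constant_power_pan(pan: float) -> tuple[float, float]:
    """Return (left_gain, right_gain); their squares always sum to 1."""
    angle = (pan + 1) * math.pi / 4  # maps -1..1 onto 0..pi/2
    return math.cos(angle), math.sin(angle)


left, right = constant_power_pan(0.0)
print(round(20 * math.log10(left), 2))  # -3.01: each side sits 3 dB down at centre
```

Keeping the summed power constant is what lets a sound travel across the field without an apparent dip or bump in level.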

Metering is also critical in surround mixing to avoid level imbalances and phasing issues. Careful monitoring and reference checks using calibrated monitor speakers or headphones are essential to ensure consistent translation across different playback systems.

Q 19. How do you work with external hardware (e.g., synthesizers, MIDI controllers)?

Integrating external hardware expands the creative possibilities in music production. My approach involves understanding the specific requirements of each piece of hardware. This includes setting up MIDI connections, configuring audio interfaces, and ensuring correct sample rates and bit depths to prevent audio degradation. Before working with new hardware, I check compatibility with my DAW, and often refer to manufacturer documentation or online resources.

For example, using a synthesizer might involve routing its audio output through an audio interface into my DAW’s inputs. I’ll then configure the DAW to recognize and appropriately route the audio signal. Using a MIDI controller can be simpler, as it often just requires connecting the controller via USB or MIDI and setting up the MIDI mapping within my DAW. However, sometimes I may need to adjust MIDI velocity curves and other settings to tailor the response to my needs.
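Adjusting a controller’s velocity response amounts to remapping incoming velocities through a curve. This sketch uses a simple power curve; the parameter names and the curve shape are assumptions, since DAWs and controllers each implement their own curve types.

```python
# Sketch: reshape incoming MIDI note-on velocities with a power curve.
# curve < 1.0 makes a controller feel lighter (soft playing reads louder);
# curve > 1.0 makes it heavier. Names and defaults are hypothetical.


def apply_velocity_curve(velocity: int, curve: float = 1.0,
                         floor: int = 1, ceiling: int = 127) -> int:
    if velocity == 0:  # note-on with velocity 0 means note-off: pass it through
        return 0
    shaped = (velocity / 127) ** curve
    return max(floor, min(ceiling, round(shaped * 127)))


soft_hits = [20, 40, 60]
print([apply_velocity_curve(v, curve=0.6) for v in soft_hits])
```

Raising `floor` above 1 also guarantees that even the lightest touch produces an audible note.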

Careful attention to driver updates and configuration is crucial for reliable performance and avoiding unexpected issues during the recording and mixing process.

Q 20. Explain your understanding of signal flow and routing within a DAW.

Understanding signal flow is fundamental to efficient and effective audio production. It’s essentially a chain of events where audio signals travel from input to output. This involves understanding how audio signals are processed and manipulated by various components within the DAW, from input sources (microphones, instruments, etc.) to output devices (speakers, headphones).

Each audio track represents a distinct signal path. Auxiliary sends route a copy of a track’s signal to an auxiliary (aux) channel for shared processing. For instance, you might send a vocal to an aux channel with a reverb plugin: the dry vocal stays on its own channel, the send level controls how much reverb it gets, and other tracks can use the same reverb at their own send levels without each needing a separate instance. Buses let you group tracks for shared processing, such as compressing all the drums together. Careful routing keeps the workflow efficient and avoids problems such as feedback loops, where a bus ends up routed back into one of its own sources.
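Routing can be thought of as a directed graph: tracks feed sends and buses, which feed other buses and finally the master. A feedback loop is simply a cycle in that graph. The sketch below, with hypothetical channel names, finds such a cycle with a depth-first search; most DAWs block these routings themselves, so this is only a conceptual illustration.

```python
# Sketch: detect feedback loops in a routing graph with depth-first search.
# Channel names are hypothetical; each key routes into the channels listed.
from typing import Optional


def find_feedback_loop(routes: dict[str, list[str]]) -> Optional[list[str]]:
    """Return one cycle (first node repeated at the end) or None if loop-free."""
    visiting: set[str] = set()  # nodes on the current DFS path
    done: set[str] = set()      # nodes fully explored and known loop-free
    path: list[str] = []

    def visit(node: str) -> Optional[list[str]]:
        if node in visiting:  # reached a node already on the path: a cycle
            return path[path.index(node):] + [node]
        if node in done:
            return None
        visiting.add(node)
        path.append(node)
        for dest in routes.get(node, []):
            cycle = visit(dest)
            if cycle:
                return cycle
        visiting.remove(node)
        path.pop()
        done.add(node)
        return None

    for start in routes:
        cycle = visit(start)
        if cycle:
            return cycle
    return None


routes = {
    "Vocal": ["Reverb Aux", "Mix Bus"],
    "Reverb Aux": ["Mix Bus"],
    "Mix Bus": ["Master"],
}
print(find_feedback_loop(routes))  # None: signal flows one way to the master

routes["Mix Bus"].append("Reverb Aux")  # mistakenly send the bus back to the aux
print(find_feedback_loop(routes))  # ['Reverb Aux', 'Mix Bus', 'Reverb Aux']
```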

Understanding the signal flow and routing allows for creative control over the sound, enabling precise processing and mixing.

Q 21. Describe your experience using various metering tools (peak, RMS, LUFS).

Metering tools are vital for a professional, balanced mix. Peak meters show the highest instantaneous level of a signal, helping me catch and prevent clipping. RMS (Root Mean Square) meters show the average power level over time, which gives a better indication of perceived loudness. LUFS (Loudness Units relative to Full Scale) meters measure loudness according to international standards such as ITU-R BS.1770 and EBU R128, which broadcasters and streaming platforms rely on.
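The difference between peak and RMS readings is easy to see on a test tone. This sketch computes sample-peak and RMS levels in dBFS for floating-point samples in the range -1.0 to 1.0; it does not compute LUFS, which additionally requires the K-weighting filter and gating defined in ITU-R BS.1770, and it measures sample peaks rather than true (inter-sample) peaks.

```python
# Sketch: sample-peak and RMS levels in dBFS for a block of float samples.
import math


def to_dbfs(linear: float) -> float:
    return 20 * math.log10(linear) if linear > 0 else float("-inf")


def peak_dbfs(samples: list[float]) -> float:
    return to_dbfs(max(abs(s) for s in samples))


def rms_dbfs(samples: list[float]) -> float:
    return to_dbfs(math.sqrt(sum(s * s for s in samples) / len(samples)))


# One second of a full-scale 1 kHz sine at 48 kHz.
sine = [math.sin(2 * math.pi * 1000 * n / 48_000) for n in range(48_000)]
print(f"peak {peak_dbfs(sine):.2f} dBFS, RMS {rms_dbfs(sine):.2f} dBFS")
# peak is approximately 0 dBFS; RMS is -3.01 dBFS
```

A full-scale sine reads about 3 dB lower on an RMS meter than on a peak meter; denser material such as drums shows a much larger gap.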

I regularly use all three meters while mixing. Peak meters help me avoid clipping, which causes distortion. RMS meters help me keep a consistent average level, especially on dynamic sources like vocals. LUFS meters let me check compliance with broadcast requirements and platform specifications, so my mixes translate correctly across platforms.

Proper use of these meters ensures a mix that is both balanced in terms of dynamic range and compliant with industry standards.

Q 22. How do you collaborate effectively with other audio professionals?

Effective collaboration in audio production hinges on clear communication, organized workflows, and a shared understanding of the project goals. I typically begin by establishing a clear project brief that outlines the desired sonic direction, deadlines, and individual responsibilities. We use cloud storage services like Google Drive or Dropbox for file sharing and basic version history. For real-time collaboration, we might use the cloud collaboration features built into our DAW (Digital Audio Workstation), which let multiple engineers contribute to the same project simultaneously. This often involves assigning specific tasks to each team member in advance: perhaps one engineer focuses on recording, another on editing, and a third on mixing. Regular check-ins, whether by video call or in person, are vital for keeping everyone on the same page and addressing challenges proactively. For example, on a recent film scoring project, our team combined Pro Tools’ cloud collaboration features with shared project folders to compose, record, and edit multiple instrument parts simultaneously across different locations. The result was streamlined communication and a faster production timeline.

Q 23. Explain your understanding of digital audio bit depth and its effect on sound quality.

Bit depth refers to the number of bits used to represent each sample of digital audio. Think of each sample as a tiny snapshot of the audio waveform: a higher bit depth describes its amplitude (level) more precisely, which lowers quantization noise and increases the available dynamic range. Quantization noise is the error introduced when a continuous analog signal is rounded to discrete digital values. A 16-bit recording has 65,536 possible levels for each sample, while a 24-bit recording offers 16,777,216 levels. For finished playback, 16 bits is usually sufficient, but the much lower noise floor of 24-bit audio provides generous headroom during recording and processing, so quiet passages and reverb tails stay clean even when levels are set conservatively. This is why 24-bit files are preferred for archiving and mastering: they preserve more information and allow more flexibility in later processing without introducing additional artifacts. In practice, I work with 24-bit audio during recording and editing and reduce the bit depth (with dither) only when a delivery format requires it, such as platforms or formats limited to 16-bit audio.
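Each extra bit doubles the number of levels and adds roughly 6 dB of theoretical dynamic range. The sketch below uses the textbook signal-to-quantization-noise formula for a full-scale sine wave; real converters and analog stages never reach these theoretical figures.

```python
# Sketch: theoretical figures for linear PCM at a given bit depth.
# 6.02 * N + 1.76 dB is the textbook signal-to-quantization-noise ratio
# for a full-scale sine wave; real converters and analog stages fall short.


def levels(bits: int) -> int:
    """Number of discrete amplitude values per sample."""
    return 2 ** bits


def sqnr_db(bits: int) -> float:
    """Theoretical signal-to-quantization-noise ratio in dB."""
    return 6.02 * bits + 1.76


for bits in (16, 24):
    print(f"{bits}-bit: {levels(bits):,} levels, ~{sqnr_db(bits):.1f} dB")
# 16-bit: 65,536 levels, ~98.1 dB
# 24-bit: 16,777,216 levels, ~146.2 dB
```

The roughly 48 dB gap between the two is the extra headroom that lets you record at conservative levels without raising the noise floor.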

Q 24. How do you manage version control within your DAW projects?

Version control is paramount in audio production: it prevents accidental overwrites and makes it easy to recall previous mixes or edits. Most DAWs offer built-in versioning features; Pro Tools, for instance, offers automatic session backups alongside manually saved versions via Save As or Save Copy In. I supplement this with a robust file management system, using a folder structure that reflects the project’s phases (recording, editing, mixing, mastering) and dates so that every version is easy to identify and retrieve. I also back up my projects regularly to an external hard drive, cloud storage, or both, creating redundancy against data loss. For more complex projects, a dedicated version control system like Git (often alongside a collaboration platform, and with an extension such as Git LFS for large audio files) can prove invaluable. Although less intuitive than DAW-based saving, it offers more granular control, tracks every change, and lets you revert to previous versions with documented descriptions of each modification. This is particularly helpful on large collaborative projects, where several team members need to coordinate their changes efficiently and safely.
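When saving iterations by hand, a predictable version suffix keeps files sorted and makes the latest one obvious. The `<name>_vNN` convention in this sketch is an assumed example rather than a DAW feature, and it works on names without file extensions.

```python
# Sketch: bump a version suffix such as "_v03" when saving a new iteration.
# The "<name>_vNN" pattern is an assumed convention; pass names without
# a file extension.
import re

_VERSIONED = re.compile(r"^(?P<stem>.*?)_v(?P<num>\d+)$")


def next_version(name: str) -> str:
    match = _VERSIONED.match(name)
    if match is None:  # first saved version
        return f"{name}_v01"
    num = match.group("num")
    # Keep the original zero-padding width (v03 -> v04, v9 -> v10).
    return f"{match.group('stem')}_v{int(num) + 1:0{len(num)}d}"


print(next_version("SongTitle_mix_v03"))  # SongTitle_mix_v04
print(next_version("SongTitle_mix"))      # SongTitle_mix_v01
```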

Q 25. Describe your experience with audio editing and manipulation techniques.

My experience in audio editing and manipulation encompasses a wide array of techniques, from basic tasks like trimming and splicing to more advanced processes such as time-stretching, pitch shifting, and noise reduction. I’m proficient in using various plugins for restoring damaged audio, enhancing clarity, and creatively manipulating sounds. For example, I regularly use spectral editing to surgically remove unwanted artifacts or to isolate specific frequencies for processing. I also utilize dynamic processing (compression, limiting, gating) extensively to manage the overall dynamics and sonic balance of my mixes. Time-stretching and pitch-shifting tools are frequently employed when dealing with vocal tuning, loop manipulation, and creative sound design. A specific example: I recently worked on a project where I had to remove background hum from a vintage recording. Employing a combination of noise reduction and spectral editing, I successfully recovered a clean, usable audio track.

Q 26. What are your preferred methods for creating a professional-sounding mix?

Achieving a professional-sounding mix involves a multi-stage process that prioritizes balance, clarity, and cohesiveness. My approach typically begins with careful gain staging during tracking, ensuring the signal levels are optimal from the start. Then, the mixing process itself is iterative, focusing on different frequency ranges and instrumental groups. I pay meticulous attention to the low-end, ensuring the bass frequencies are tight and well-defined without muddying the overall mix. I extensively use EQ (equalization) to shape the tonal characteristics of each instrument, carefully carving out space in the frequency spectrum to avoid conflicts. Compression and limiting are employed judiciously to control dynamics, adding punch and glue while maintaining a natural feel. Finally, adding effects like reverb and delay strategically adds depth and space to create a sense of immersion. Throughout the process, I regularly compare my mix to reference tracks, ensuring it aligns with the desired sonic aesthetic. The goal isn’t just technical perfection, but also emotional impact. A good mix should evoke the desired mood and feeling within the listener.

Q 27. How do you use your DAW to prepare audio for different platforms (e.g., streaming, broadcast)?

Preparing audio for different platforms requires understanding each platform’s technical requirements. Streaming services such as Spotify and Apple Music commonly accept 16-bit/44.1 kHz files as a baseline (many also accept higher resolutions), while broadcast media may require different specifications entirely. In my DAW, I export files with the correct parameters for each platform’s specifications. Loudness management is vital for consistency across platforms: I use LUFS (Loudness Units relative to Full Scale) metering to set the overall loudness, maximizing clarity while preventing clipping or distortion and keeping the audio compatible across devices. Metadata embedding is also critical, particularly for streaming services: tagging files with artist, album title, track name, and other relevant details ensures they display correctly on each platform. Finally, for platforms with strict loudness standards (e.g., broadcast television), a dedicated mastering pass is often needed to fine-tune dynamic range, compression, and overall loudness so the audio complies with broadcast regulations and guidelines.
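Hitting a loudness target is mostly a matter of subtraction, plus a check that the resulting gain won’t push peaks over the allowed ceiling. In this sketch the measured values are hypothetical (real ones come from a BS.1770-compliant meter); -23 LUFS and -1 dBTP are the EBU R128 broadcast target and true-peak ceiling, and -14 LUFS is used as a commonly cited streaming reference level, which is an assumption about any given platform.

```python
# Sketch: gain needed to reach a loudness target, plus a true-peak check.
# Measured values are hypothetical; real ones come from a BS.1770 meter.

EBU_R128_TARGET_LUFS = -23.0      # broadcast programme loudness target
EBU_R128_MAX_TRUE_PEAK = -1.0     # dBTP ceiling in EBU R128
STREAMING_REFERENCE_LUFS = -14.0  # commonly cited streaming reference (assumption)


def normalization_gain_db(measured_lufs: float, target_lufs: float) -> float:
    """Static gain (dB) that moves integrated loudness onto the target."""
    return target_lufs - measured_lufs


def needs_limiting(true_peak_dbtp: float, gain_db: float, ceiling_dbtp: float) -> bool:
    """True if applying the gain would push true peaks over the ceiling."""
    return true_peak_dbtp + gain_db > ceiling_dbtp


# A loud master (-9 LUFS) would be turned down 5 dB by a -14 LUFS reference.
print(normalization_gain_db(-9.0, STREAMING_REFERENCE_LUFS))  # -5.0

# A quiet mix (-30 LUFS, peaks at -3 dBTP) needs +7 dB for broadcast,
# which would push peaks to +4 dBTP: limiting is required first.
gain = normalization_gain_db(-30.0, EBU_R128_TARGET_LUFS)
print(gain, needs_limiting(-3.0, gain, EBU_R128_MAX_TRUE_PEAK))  # 7.0 True
```

Because streaming platforms typically apply normalization at playback, the first calculation predicts roughly how much a very loud master will simply be turned down.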

Key Topics to Learn for Advanced DAW Proficiency Interview

- Advanced Mixing and Mastering Techniques: Understanding dynamic processing, equalization, compression, limiting, and mastering chains for optimal audio quality. Practical application: Demonstrate your ability to achieve a professional-sounding mix and master, explaining your choices at each stage.

- MIDI Sequencing and Automation: Proficiently using MIDI editors, creating complex MIDI arrangements, and implementing advanced automation techniques for dynamic and expressive productions. Practical application: Showcase your ability to create intricate musical passages and control parameters effectively using automation.

- Signal Flow and Routing: Comprehensive understanding of audio signal paths, routing techniques, and bussing strategies for efficient workflow and creative sound design. Practical application: Explain your preferred workflow for handling multiple tracks and instruments, emphasizing efficient routing strategies.

- Plugin Management and Processing: Effective utilization of various plugins (compressors, EQs, reverbs, delays, synthesizers, etc.), understanding their parameters, and optimizing their usage for specific tasks. Practical application: Be ready to discuss your preferred plugins and how you tailor them to different musical contexts.

- DAW-Specific Features and Workflows: Deep understanding of advanced features within your chosen DAW (e.g., Logic Pro X, Ableton Live, Pro Tools, Cubase), including shortcuts, automation options, and advanced editing techniques. Practical application: Highlight your proficiency with features specific to your chosen DAW that demonstrate a high level of skill and efficiency.

- Sound Design and Synthesis: Demonstrate skills in creating custom sounds using synthesizers, samplers, and other sound design tools. Practical application: Showcase your ability to design unique sounds and explain your creative process.

- Troubleshooting and Problem-Solving: Ability to identify and resolve technical issues, such as latency, clicks, pops, and other common audio problems. Practical application: Be prepared to discuss your approach to troubleshooting and problem-solving in a DAW environment.

Next Steps

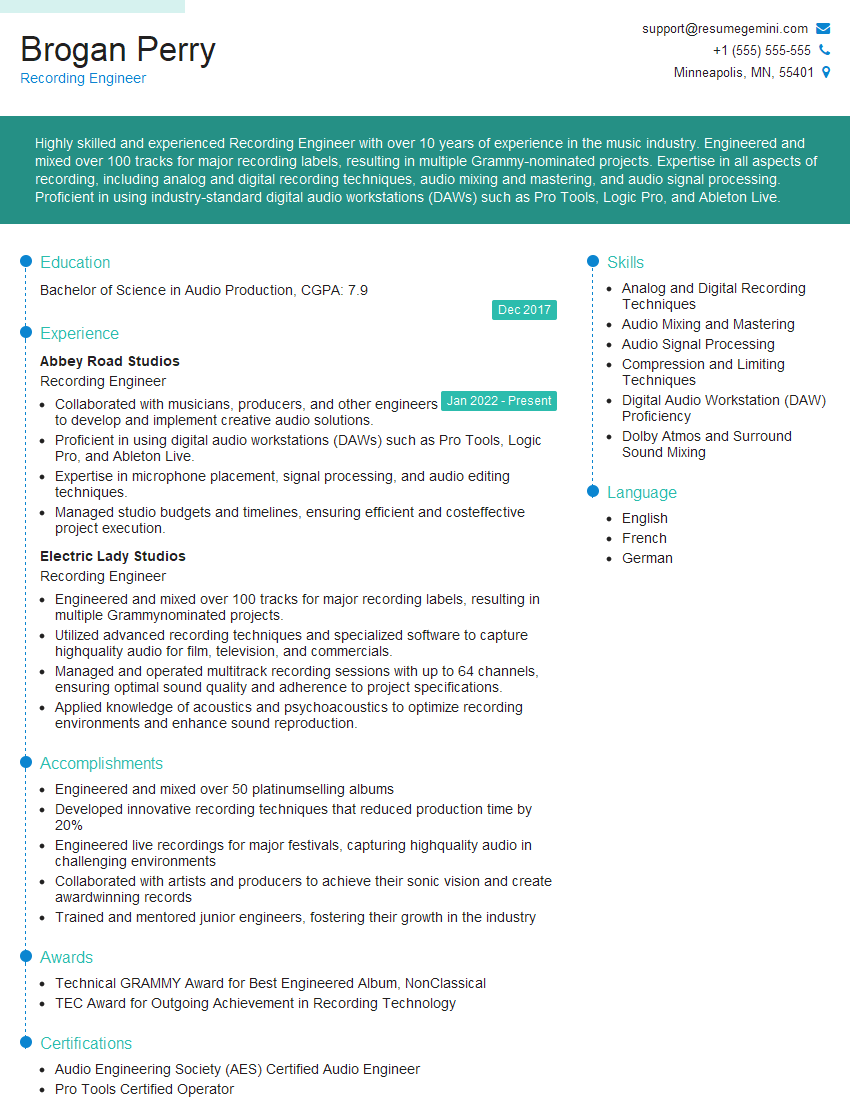

Mastering advanced DAW proficiency is crucial for career advancement in the audio industry, opening doors to exciting opportunities and higher earning potential. To maximize your job prospects, create an ATS-friendly resume that highlights your skills and experience effectively. ResumeGemini is a trusted resource to help you build a professional and impactful resume. Examples of resumes tailored to Advanced DAW Proficiency are available to guide you, ensuring your qualifications shine through to potential employers.
