Cracking a skill-specific interview, like one for Advanced Proficiency in Placing System Software, requires understanding the nuances of the role. In this blog, we present the questions you’re most likely to encounter, along with insights into how to answer them effectively. Let’s ensure you’re ready to make a strong impression.

Questions Asked in Advanced Proficiency in Placing System Software Interview

Q 1. Explain the difference between kernel-level and user-level threads.

The key difference between kernel-level and user-level threads lies in how they’re managed by the operating system. Kernel-level threads are managed directly by the operating system kernel. Each thread has its own kernel-level data structures, allowing for true parallelism and efficient scheduling across multiple processors. User-level threads, on the other hand, are managed entirely within the user space by a thread library. The kernel is unaware of their existence.

Think of it like this: kernel-level threads are like separate apartments in a large building (the OS), each with its own utilities and directly managed by the building superintendent. User-level threads are like different rooms within a single apartment; the building superintendent doesn’t manage them individually, only the apartment itself.

Advantages of Kernel-Level Threads: True parallelism, better utilization of multiprocessor systems, better responsiveness as one thread blocking doesn’t affect others.

Disadvantages of Kernel-Level Threads: Higher overhead due to context switching in the kernel, more complex to manage.

Advantages of User-Level Threads: Lighter-weight and faster context switching, less system overhead.

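Disadvantages of User-Level Threads: In a pure user-level (many-to-one) model, all of a process’s threads share one kernel thread, so only one of them can run at a time even on a multiprocessor system, and a blocking system call made by one thread blocks every thread in the process.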

In practice, the choice between kernel-level and user-level threads depends heavily on the specific application requirements. For highly parallel applications needing maximum performance on multi-core systems, kernel-level threads are preferred. For applications with less demanding concurrency needs, user-level threads might offer a better balance between performance and simplicity.
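To make the user-level model concrete, here is a minimal sketch in Python, assuming a cooperative scheduler built from generators. The names `worker` and `run_round_robin` are illustrative and do not come from any real thread library. The whole scheduler lives in user space, so the kernel sees a single thread of execution, which is why one blocking call inside a task would stall all of them.

```python
from collections import deque

def worker(name, steps, trace):
    """A user-level 'thread': runs one step, then yields control voluntarily."""
    for i in range(steps):
        trace.append(f"{name}:{i}")
        yield  # cooperative context switch back to the scheduler

def run_round_robin(tasks):
    """User-space scheduler: the kernel never sees these tasks individually."""
    ready = deque(tasks)
    while ready:
        task = ready.popleft()
        try:
            next(task)          # resume the task until its next yield
            ready.append(task)  # still runnable, so requeue it
        except StopIteration:
            pass                # task finished, so drop it

trace = []
run_round_robin([worker("A", 2, trace), worker("B", 3, trace)])
print(trace)
```

Switching between tasks here is just a function return and a `next()` call, which illustrates why user-level context switches are cheap compared with a trip through the kernel.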

Q 2. Describe your experience with memory management in embedded systems.

My experience with memory management in embedded systems focuses on optimizing resource utilization within constrained environments. This often involves working with techniques like static memory allocation, dynamic memory allocation with custom allocators (to avoid fragmentation and improve efficiency), and memory-mapped I/O. I’ve extensively used techniques to minimize heap fragmentation, avoiding the use of malloc() and free() in critical sections. Instead, I’ve often implemented custom memory pools tailored to the specific needs of the application, ensuring predictable performance.

In one project involving a resource-constrained microcontroller for industrial control, we needed to manage a real-time data acquisition system. Standard dynamic memory allocation proved to be problematic due to unpredictable memory fragmentation and latency. We addressed this by designing a custom memory allocator using a free list, carefully tuning the pool sizes to minimize fragmentation and maximize throughput. This significantly improved the system’s responsiveness and reliability. I also have experience with memory protection mechanisms using Memory Protection Units (MPUs) to isolate critical sections from potential corruption by errant code.
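The free-list idea can be sketched as follows. This is a simplified Python model rather than the allocator from that project: the class name `BlockPool` and its methods are hypothetical, and a production version would be written in C against a static buffer. It shows the two properties that matter: all memory is reserved up front, and both allocation and release are constant-time with no fragmentation, because every block has the same size.

```python
class BlockPool:
    """Fixed-size block pool over one preallocated buffer (hypothetical sketch)."""

    def __init__(self, block_size, block_count):
        self.block_size = block_size
        self.buffer = bytearray(block_size * block_count)  # allocated once, up front
        # The free list holds the offsets of available blocks.
        self.free_list = [i * block_size for i in range(block_count)]
        self.in_use = set()

    def alloc(self):
        """O(1) allocation; returns a block offset, or None when exhausted."""
        if not self.free_list:
            return None
        offset = self.free_list.pop()
        self.in_use.add(offset)
        return offset

    def free(self, offset):
        """O(1) release; rejects double frees and foreign offsets."""
        if offset not in self.in_use:
            raise ValueError(f"invalid or double free of offset {offset}")
        self.in_use.remove(offset)
        self.free_list.append(offset)

    def view(self, offset):
        """Writable view of one block, without copying."""
        return memoryview(self.buffer)[offset:offset + self.block_size]

pool = BlockPool(block_size=32, block_count=4)
a = pool.alloc()
pool.view(a)[:5] = b"hello"
stored = bytes(pool.view(a)[:5])
pool.free(a)
```

Returning `None` on exhaustion (instead of growing the pool) is a deliberate choice in this sketch: it keeps worst-case behavior predictable, at the cost of requiring the caller to handle the failure.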

Q 3. How would you debug a system crash caused by a driver malfunction?

Debugging a system crash due to a driver malfunction requires a systematic approach. My strategy would begin with gathering information using various tools. This includes analyzing kernel logs (dmesg on Linux, for example) for error messages or stack traces, examining the system crash dump file (if available), and possibly using a kernel debugger (like kgdb).

Steps to take:

- Isolate the faulty driver: The kernel logs often pinpoint the problematic module. If not, carefully observe which driver was active just before the crash.

- Examine the stack trace: This indicates the sequence of function calls leading to the crash. This helps identify the exact location within the driver’s code where the error occurred.

- Inspect memory contents: If a memory corruption is suspected, a memory dump analyzer can be used to find invalid memory accesses or data corruption.

- Use debugging tools: A kernel debugger allows setting breakpoints within the driver’s code to step through the execution and identify the exact point of failure. This involves recompiling the driver with debug symbols.

- Reproduce the crash: Once the likely cause is suspected, try to reproduce the crash in a controlled environment for further analysis.

- Check for resource leaks: Drivers frequently interact with hardware resources. Inspect the driver code for potential resource leaks (like unreleased mutexes or memory leaks).

- Driver testing: Robust testing techniques, including unit testing, integration testing, and system testing, help prevent these types of crashes in the future.

By meticulously examining these aspects, the root cause of the crash can usually be identified and fixed.

Q 4. What are the advantages and disadvantages of using real-time operating systems (RTOS)?

Real-Time Operating Systems (RTOS) are specialized operating systems designed for applications requiring predictable and timely responses. They offer several advantages but also come with some disadvantages.

Advantages:

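- Predictable Timing: RTOSes are designed for deterministic behavior, with bounded interrupt latency and scheduling delays, so that a correctly designed system can show its tasks will execute within predefined timeframes. This is crucial in applications like industrial automation, robotics, and avionics.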

- Multitasking: They allow concurrent execution of multiple tasks, improving overall system efficiency.

- Resource Management: RTOSes provide fine-grained control over system resources such as memory and peripherals.

- Real-time capabilities: They provide features like interrupt handling and priority-based scheduling to meet real-time constraints.

Disadvantages:

- Complexity: Developing for an RTOS often requires a deeper understanding of real-time concepts, scheduling algorithms, and timing analysis than typical application development on a general-purpose OS.

- Overhead: RTOSes introduce some overhead due to task switching and context management, but this overhead is usually minimized through careful design and optimization.

- Cost: Some commercial RTOSes can be expensive, adding to the overall project cost.

- Limited features: RTOSes typically lack the rich set of features found in general-purpose operating systems.

The decision of whether to use an RTOS depends on the specific needs of the application. If timely responsiveness is critical, an RTOS is usually the best choice. If timing requirements are less stringent, a general-purpose OS might be sufficient.

Q 5. Explain your experience with different scheduling algorithms (e.g., round-robin, priority-based).

I’ve worked with various scheduling algorithms, primarily focusing on those suitable for real-time systems.

Round-robin scheduling: This algorithm assigns each task a fixed time slice to execute. It’s simple to implement but can be inefficient if task execution times vary significantly. It’s suitable for tasks with relatively equal processing needs.
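A small simulation illustrates the point about varying execution times. This is a sketch under simplifying assumptions (all tasks arrive at time 0, context switches cost nothing), and the function name `round_robin` is illustrative.

```python
from collections import deque

def round_robin(burst_times, quantum):
    """Return the completion time of each task under round-robin scheduling.

    burst_times maps task name -> required CPU time; all tasks arrive at t=0.
    """
    remaining = dict(burst_times)
    ready = deque(burst_times)
    clock = 0
    finished = {}
    while ready:
        task = ready.popleft()
        run = min(quantum, remaining[task])  # run one slice, or less if nearly done
        clock += run
        remaining[task] -= run
        if remaining[task] == 0:
            finished[task] = clock
        else:
            ready.append(task)               # not done: back of the queue
    return finished

print(round_robin({"A": 3, "B": 10, "C": 2}, quantum=2))
```

With these inputs, task C needs only 2 time units but completes at time 6, because it has to wait behind slices of A and B first.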

Priority-based scheduling: This algorithm prioritizes tasks based on their importance or deadlines. Higher-priority tasks are executed before lower-priority tasks. This is essential for real-time systems where some tasks must complete within strict time constraints. Two common real-time policies are Rate Monotonic Scheduling (RMS) and Earliest Deadline First (EDF). RMS assigns fixed priorities by period (the shorter the period, the higher the priority), while EDF assigns priorities dynamically, always running the task with the nearest deadline. I’ve used priority inheritance protocols to prevent priority inversion, where a high-priority task waits on a resource held by a low-priority task that is itself being preempted by medium-priority work.

Other algorithms: I also have experience with more advanced approaches such as sporadic server scheduling for aperiodic work. The choice of algorithm depends on the system’s requirements and characteristics, such as the number of tasks, task deadlines, and their interdependencies.
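The RMS and EDF rules described above can be sketched in a few lines. The task set below is invented for illustration, and the schedulability check is the classic Liu and Layland utilization bound, which is sufficient but not necessary: a task set that fails it may still be schedulable and would need a more precise analysis.

```python
def rms_priorities(periods):
    """Rate Monotonic: the shorter the period, the higher the priority."""
    return sorted(periods, key=periods.get)

def rms_utilization_test(tasks):
    """Sufficient (not necessary) test: U <= n * (2^(1/n) - 1).

    tasks maps name -> (worst_case_execution_time, period).
    """
    n = len(tasks)
    utilization = sum(c / t for c, t in tasks.values())
    bound = n * (2 ** (1 / n) - 1)
    return utilization, bound, utilization <= bound

def edf_pick(ready):
    """EDF: run the ready task with the nearest absolute deadline."""
    return min(ready, key=ready.get)

# Hypothetical task set: name -> (execution time, period)
tasks = {"sensor": (1, 4), "control": (2, 10), "logger": (3, 20)}
order = rms_priorities({name: period for name, (_, period) in tasks.items()})
utilization, bound, schedulable = rms_utilization_test(tasks)
print(order, round(utilization, 3), round(bound, 3), schedulable)
```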

Q 6. How do you ensure the security of system software against common vulnerabilities?

Ensuring the security of system software requires a multi-layered approach. It begins with secure coding practices, followed by rigorous testing and vulnerability management.

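Secure Coding Practices: This involves avoiding common vulnerabilities like buffer overflows, race conditions, and injection flaws (command or SQL injection, where the software builds commands or queries from untrusted input) by adhering to secure coding guidelines, using memory-safe languages where practical, and running tools like static analyzers.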

Secure Development Lifecycle: Integrating security into every phase of software development – from requirements gathering to deployment and maintenance – significantly mitigates risks.

Regular Security Audits and Penetration Testing: Performing regular security audits and penetration testing helps identify vulnerabilities before malicious actors can exploit them.

Code Reviews: Having other developers review code significantly helps detect potential security issues early in the development process.

Input Validation: Sanitizing and validating all inputs from users and external sources is crucial in preventing many attacks.

Defense in Depth: A layered approach to security is essential. This might involve firewalls, intrusion detection systems, and access control mechanisms.

Regular updates: Keeping the system software and its components up-to-date with security patches is paramount in preventing exploitation of known vulnerabilities. This means utilizing a robust update mechanism.

In my experience, establishing a secure development lifecycle is the most effective strategy for achieving long-term security.
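As one concrete instance of the input validation point, here is a sketch of a parser for a made-up frame format (a 2-byte big-endian length followed by the payload). The format, the `MAX_PAYLOAD` limit, and the function name are assumptions chosen for illustration. The idea is that every length field from an untrusted source is checked before it is used.

```python
import struct

MAX_PAYLOAD = 256  # assumed limit for this sketch

def parse_message(data: bytes) -> bytes:
    """Parse a hypothetical frame: 2-byte big-endian length, then payload.

    Every length is checked before use, so a malformed frame is rejected
    instead of being read past its end.
    """
    if len(data) < 2:
        raise ValueError("frame too short for header")
    (length,) = struct.unpack_from(">H", data, 0)
    if length > MAX_PAYLOAD:
        raise ValueError("declared length exceeds limit")
    if len(data) - 2 != length:
        raise ValueError("declared length does not match payload size")
    return data[2:]

print(parse_message(b"\x00\x03abc"))
```

In a memory-unsafe language, trusting the declared length without these checks is exactly the kind of mistake that turns into a buffer over-read or overflow.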

Q 7. Describe your experience with inter-process communication (IPC) mechanisms.

Inter-process communication (IPC) is crucial for enabling different parts of a system to work together. I have experience with several IPC mechanisms, each with its strengths and weaknesses.

Shared Memory: This mechanism allows processes to share a region of memory, providing efficient communication. However, it requires careful synchronization to avoid race conditions and data corruption. I’ve used mutexes and semaphores for managing access to shared memory.

Message Queues: These provide a more robust and decoupled way for processes to communicate. Messages are stored in a queue, allowing processes to communicate asynchronously without direct synchronization. I’ve worked with message queues (like POSIX message queues or those provided by specific RTOSes) in various projects.

Pipes: Pipes are unidirectional byte-stream channels connecting processes, so two-way communication needs a pair of them. They’re simple to use but less flexible than message queues.

Sockets: Sockets support communication both between processes on the same machine (for example, Unix domain sockets) and over a network. I’ve used sockets for implementing distributed systems, where different processes might be running on different machines.

The choice of IPC mechanism depends on factors such as performance requirements, the need for synchronization, and the level of coupling between processes. For high-performance applications requiring tight synchronization, shared memory might be preferred, while for more loosely coupled systems, message queues are a safer option.
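For a feel of the simplest of these mechanisms, the sketch below uses Python’s `subprocess` module to talk to a child process over a pair of pipes: one carries the request to the child’s standard input, the other carries the reply back from its standard output. The child’s one-line program and the helper name `ask_child` are illustrative assumptions.

```python
import subprocess
import sys

# Child process: read everything from its stdin pipe, reply on its stdout pipe.
CHILD_CODE = "import sys; sys.stdout.write(sys.stdin.read().upper())"

def ask_child(message: str) -> str:
    """Send a message to a child process over one pipe, read the reply on another."""
    result = subprocess.run(
        [sys.executable, "-c", CHILD_CODE],
        input=message,        # written to the child's stdin pipe
        capture_output=True,  # child's stdout/stderr are captured through pipes
        text=True,
        check=True,           # raise if the child exits with a non-zero status
    )
    return result.stdout

print(ask_child("status ok"))
```

Because each pipe is one-way, the request and the reply travel on separate pipes; the processes share no memory and need no explicit locking.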

Q 8. What are your strategies for optimizing system performance?

Optimizing system performance involves a multifaceted approach focusing on resource utilization, code efficiency, and architectural design. My strategies encompass several key areas:

- Profiling and Benchmarking: I begin by identifying performance bottlenecks using profiling tools. This helps pinpoint areas of code that consume excessive CPU time, memory, or I/O resources. Benchmarking provides quantitative measurements for comparing performance before and after optimizations.

- Algorithmic Optimization: Choosing the right algorithm is crucial. For example, switching from a naive O(n^2) sorting algorithm to a more efficient O(n log n) algorithm like merge sort can drastically improve performance, especially with large datasets.

- Data Structure Selection: The choice of data structures impacts performance. Using a hash table for fast lookups instead of a linked list when appropriate significantly reduces search times. For instance, network devices commonly use hash tables for exact-match lookups such as flow or neighbor tables, while longest-prefix matching in a routing table is typically handled by trie-based structures.

- Memory Management: Efficient memory allocation and deallocation are paramount. Techniques like memory pooling and reducing memory fragmentation minimize overhead and prevent performance degradation. In embedded systems, careful memory management is especially critical due to limited resources.

- I/O Optimization: Optimizing I/O operations (disk access, network communication) is essential. Asynchronous I/O, buffering, and efficient file handling can greatly enhance system responsiveness. For instance, employing asynchronous file reads prevents the system from blocking while waiting for data.

- Concurrency Control: For multithreaded systems, careful synchronization mechanisms are crucial to prevent race conditions and deadlocks, which can severely impact performance. Choosing appropriate synchronization primitives like mutexes or semaphores requires careful consideration of the specific concurrency model.

For example, in one project involving a real-time operating system (RTOS), we used profiling to identify a significant performance bottleneck in a specific driver. By optimizing the algorithm and data structures used in that driver, we improved system responsiveness by over 30%.
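The benchmarking and data structure points can be combined in a small measurement. This sketch uses Python’s `timeit` to compare a linear scan with a hashed lookup; the collection size and repetition count are arbitrary choices, and absolute timings will differ from machine to machine.

```python
import timeit

keys = list(range(50_000))
as_list = keys            # membership test scans linearly: O(n)
as_set = set(keys)        # membership test hashes the key: O(1) on average

probe = keys[-1]          # worst case for the linear scan

# Measure before deciding: the same lookup, repeated on each structure.
list_time = timeit.timeit(lambda: probe in as_list, number=200)
set_time = timeit.timeit(lambda: probe in as_set, number=200)

print(f"list: {list_time:.4f}s  set: {set_time:.6f}s")
```

The same habit applies at system scale: measure first, change one thing, then measure again under the same conditions.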

Q 9. How do you handle system resource contention?

System resource contention arises when multiple processes or threads compete for the same limited resources (CPU, memory, I/O). Handling this effectively requires a combination of strategies:

- Resource Scheduling: Operating systems employ sophisticated scheduling algorithms (e.g., round-robin, priority-based) to fairly allocate CPU time among competing processes. These algorithms aim to minimize wait times and maximize overall system throughput.

- Synchronization Mechanisms: Mutual exclusion (mutexes), semaphores, and condition variables are used to control access to shared resources and prevent race conditions. Proper use of these mechanisms is crucial for maintaining data consistency and avoiding deadlocks.

- Memory Management: Virtual memory and paging techniques allow the system to manage memory efficiently, even when more memory is requested than is physically available. This helps prevent memory contention and improves resource utilization.

- I/O Management: Efficient I/O scheduling and buffering strategies minimize wait times for I/O operations. Techniques like asynchronous I/O allow processes to continue working while waiting for I/O completion.

- Process Prioritization: Assigning priorities to processes allows the system to favor critical tasks and ensure timely completion. This is particularly important in real-time systems where meeting deadlines is paramount.

For instance, in a project involving a network server, we used thread pools and efficient locking mechanisms to handle concurrent client requests without performance degradation. The key was to carefully balance the number of threads with available system resources and to avoid unnecessary locking overhead.
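A minimal sketch of that pattern is shown below, using Python’s `ThreadPoolExecutor` and a lock-protected counter. The names `RequestStats` and `handle_request`, the pool size, and the request count are illustrative and not taken from the project described above.

```python
import threading
from concurrent.futures import ThreadPoolExecutor

class RequestStats:
    """Shared state guarded by a mutex; the critical section is kept tiny."""

    def __init__(self):
        self._lock = threading.Lock()
        self.handled = 0

    def record(self):
        with self._lock:        # only the shared update is serialized
            self.handled += 1

def handle_request(request_id, stats):
    response = f"reply-{request_id}"   # per-request work needs no lock
    stats.record()
    return response

stats = RequestStats()
# A bounded pool caps concurrency instead of spawning one thread per request.
with ThreadPoolExecutor(max_workers=4) as pool:
    replies = list(pool.map(lambda rid: handle_request(rid, stats), range(100)))

print(stats.handled, replies[0])
```

Keeping the lock around only the counter update, not the whole request, is what limits contention as the number of workers grows.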

Q 10. Explain your understanding of different system architectures (e.g., microkernel, monolithic).

System architectures describe the organization and interaction of components within a system. Two prominent architectures are:

- Monolithic Kernel: In this architecture, all system services (e.g., file system, memory management, network stack) reside within a single kernel address space. Services call each other directly, which keeps overhead low, but the components are tightly coupled and share one protection domain, so a bug or crash in one component can affect the entire system. Think of it as a single, large, complex machine where everything is interconnected.

- Microkernel: A microkernel only provides essential system services (scheduling, inter-process communication) while other services run as separate processes in user space. This approach enhances modularity, security, and extensibility. A crash in one service typically doesn’t bring down the entire system. It’s like having many smaller, specialized machines that communicate with each other.

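The choice between these architectures depends on the specific needs of the system. Monolithic kernels are often preferred where raw performance matters, since services interact through direct function calls rather than IPC; Linux is a well-known example. Microkernels are better suited to systems where isolation, fault containment, and extensibility are crucial, at the cost of additional IPC overhead; QNX and seL4 are examples used in embedded and safety-critical settings.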

Q 11. How do you approach the design and implementation of device drivers?

Designing and implementing device drivers involves careful consideration of hardware specifics and operating system interactions. The process typically involves:

- Hardware Understanding: A thorough understanding of the hardware device’s specifications, including registers, interrupt mechanisms, and communication protocols is essential. Data sheets and technical manuals are invaluable resources.

- Driver Architecture: Drivers usually follow a structured design pattern, incorporating components such as initialization routines, read/write functions, interrupt handlers, and error handling routines.

- Operating System Interface: The driver interacts with the kernel through defined in-kernel interfaces (driver registration, interrupt registration, memory and DMA allocation), while applications typically reach the driver through system calls such as open, read, write, and ioctl. This often involves low-level programming and careful attention to memory management.

- Testing and Debugging: Rigorous testing is essential to ensure driver stability and reliability. This involves unit testing, integration testing, and system-level testing.

For example, writing a driver for a new sensor involves understanding the sensor’s communication protocol (e.g., I2C, SPI), writing code to read data from the sensor’s registers, and implementing interrupt handlers to handle data acquisition asynchronously. Debugging a driver often involves using hardware debugging tools (e.g., oscilloscopes, logic analyzers) to analyze hardware signals and ensure correct communication.
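The driver structure described above (initialization, interrupt handler, read path) can be modeled in a few lines. This is a simulation in Python, not real driver code: `FakeSensorBus` stands in for an I2C or SPI bus, and the register addresses and bit values are invented for the sketch.

```python
class FakeSensorBus:
    """Stand-in for an I2C/SPI bus; register addresses are invented for the sketch."""
    REG_CTRL, REG_STATUS, REG_DATA = 0x00, 0x01, 0x02

    def __init__(self):
        self.regs = {self.REG_CTRL: 0, self.REG_STATUS: 0, self.REG_DATA: 0}

    def read(self, reg):
        return self.regs[reg]

    def write(self, reg, value):
        self.regs[reg] = value & 0xFF   # registers are 8 bits wide in this model

class SensorDriver:
    CTRL_ENABLE = 0x01
    STATUS_READY = 0x01

    def __init__(self, bus):
        self.bus = bus
        self.samples = []

    def init(self):
        """Initialization routine: enable the device."""
        self.bus.write(self.bus.REG_CTRL, self.CTRL_ENABLE)

    def on_interrupt(self):
        """Interrupt handler: do minimal work, then clear the ready flag."""
        if self.bus.read(self.bus.REG_STATUS) & self.STATUS_READY:
            self.samples.append(self.bus.read(self.bus.REG_DATA))
            self.bus.write(self.bus.REG_STATUS, 0)

    def read_sample(self):
        """Read path: hand back the oldest sample, or None if none is pending."""
        return self.samples.pop(0) if self.samples else None
```

Passing the bus in through the constructor also makes the driver logic testable without hardware, since a fake bus can be substituted for the real one.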

Q 12. What are the challenges of developing software for embedded systems?

Developing software for embedded systems presents unique challenges compared to general-purpose software development:

- Resource Constraints: Embedded systems often have limited processing power, memory, and storage. This necessitates careful resource management and optimization techniques.

- Real-Time Requirements: Many embedded systems need to meet strict timing constraints. This often calls for a real-time operating system (RTOS) or a carefully structured bare-metal design, so that tasks execute on time.

- Hardware Dependencies: Embedded software is tightly coupled to the hardware, making it more challenging to port to different platforms. Detailed understanding of hardware interfaces and peripherals is crucial.

- Safety and Reliability: Failures in embedded systems can have severe consequences (e.g., automotive, aerospace). This necessitates rigorous testing, verification, and validation techniques.

- Debugging Complexity: Debugging embedded systems can be difficult due to limited debugging tools and the need for hardware access.

For instance, in a project involving a medical device, we used static code analysis to ensure memory safety and avoid buffer overflows. We also employed extensive testing methodologies to verify the system’s real-time performance and safety.

Q 13. Explain your experience with version control systems in system software development.

Version control systems (VCS) are indispensable in system software development. My experience primarily involves Git. I use Git for:

- Code Management: Tracking changes to the codebase, enabling easy rollback to previous versions if necessary. This is essential for collaborative development and maintaining a clear history of modifications.

- Branching and Merging: Creating branches for parallel development, allowing developers to work on features independently without affecting the main codebase. Merging branches seamlessly integrates new features into the main development line.

- Collaboration: Facilitating teamwork by allowing multiple developers to work on the same project concurrently. Git provides mechanisms for resolving merge conflicts and integrating changes efficiently.

- Code Review: Integrating code reviews into the workflow through pull requests (or merge requests) on a Git hosting platform such as GitHub or GitLab helps to ensure code quality and catch errors before they reach production.

- Release Management: Tagging specific commits to represent releases, making it easy to track and deploy specific versions of the software.

In a recent project, Git’s branching capabilities proved crucial for managing concurrent development of several new features and bug fixes. The ability to seamlessly merge changes from different branches enabled us to deliver high-quality software efficiently.

Q 14. Describe your familiarity with system testing methodologies.

System testing methodologies are crucial for ensuring the quality and reliability of system software. My familiarity encompasses various approaches:

- Unit Testing: Testing individual modules or components of the system in isolation. This helps to identify and fix bugs at an early stage.

- Integration Testing: Testing the interaction between different modules or components of the system. This ensures that components work together correctly.

- System Testing: Testing the entire system as a whole. This verifies that the system meets its requirements and performs as expected.

- Regression Testing: Retesting the system after making changes to ensure that new changes haven’t introduced new bugs or broken existing functionality. Automated test suites are vital here.

- Stress Testing: Testing the system’s ability to handle high loads and extreme conditions. This helps to identify performance bottlenecks and vulnerabilities.

- Performance Testing: Measuring the system’s performance characteristics (e.g., response time, throughput) to ensure it meets performance requirements.

I have extensive experience using automated testing frameworks to develop comprehensive test suites. For example, in a previous project involving a network operating system, we developed a suite of automated tests that included unit tests for individual components, integration tests for system modules, and system-level tests for the entire system. This ensured the system’s robustness and reliability before release.
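At the unit level, an automated test can be as small as the sketch below, written with Python’s standard `unittest` module. The `RingBuffer` component is a hypothetical stand-in for a real module under test.

```python
import unittest

class RingBuffer:
    """Small component under test (hypothetical)."""
    def __init__(self, capacity):
        self.capacity = capacity
        self.items = []

    def push(self, item):
        if len(self.items) == self.capacity:
            self.items.pop(0)        # overwrite the oldest entry when full
        self.items.append(item)

class RingBufferUnitTest(unittest.TestCase):
    def test_push_within_capacity(self):
        rb = RingBuffer(2)
        rb.push(1)
        self.assertEqual(rb.items, [1])

    def test_overwrites_oldest_when_full(self):
        # Doubles as a regression test once this behavior has shipped.
        rb = RingBuffer(2)
        for value in (1, 2, 3):
            rb.push(value)
        self.assertEqual(rb.items, [2, 3])

# Load and run the suite programmatically, as a CI job might.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(RingBufferUnitTest)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

Running the same suite on every change is what turns these unit tests into regression protection.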

Q 15. How do you ensure the portability of system software across different hardware platforms?

Ensuring portability of system software across different hardware platforms hinges on abstraction. We avoid writing code that’s directly tied to specific hardware details. Instead, we rely on abstraction layers. Think of it like building with LEGOs – you use standardized blocks (abstractions) that can be assembled in various ways to create different structures (systems) on different bases (hardware).

- Hardware Abstraction Layers (HALs): These provide a consistent interface to hardware resources regardless of the underlying architecture. The system software interacts with the HAL, and the HAL handles the specifics of interacting with the actual hardware. This allows the same system software to run on different platforms with minimal modification.

- Virtual Machines (VMs): VMs create a software-based emulation of a hardware environment. This allows the system software to run inside the VM, isolated from the underlying physical hardware. Changes in the underlying hardware are largely invisible to the software running in the VM.

- Cross-Compilation: We compile the system software for the target platform using a compiler that runs on a different (typically more powerful) host machine. This allows us to build on one system and deploy to another; early testing can run on the host or in an emulator, though final validation still needs the target hardware.

For example, during my work on a real-time operating system, we utilized a HAL to manage peripheral devices like sensors and actuators. This allowed us to easily port the RTOS to various embedded systems with different microcontroller architectures simply by swapping out the HAL implementation without changing the core RTOS code.
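The HAL idea can be sketched with an abstract interface and two interchangeable implementations. The boards, class names, and the `blink_once` routine below are hypothetical; the point is only that the core logic depends on the interface and never on a specific board.

```python
from abc import ABC, abstractmethod

class GpioHal(ABC):
    """Portable interface the core code depends on."""
    @abstractmethod
    def write_pin(self, pin: int, high: bool) -> None: ...
    @abstractmethod
    def read_pin(self, pin: int) -> bool: ...

class BoardAGpio(GpioHal):
    """Hypothetical board with one bit per pin in a single output register."""
    def __init__(self):
        self.out_reg = 0
    def write_pin(self, pin, high):
        if high:
            self.out_reg |= 1 << pin
        else:
            self.out_reg &= ~(1 << pin)
    def read_pin(self, pin):
        return bool(self.out_reg & (1 << pin))

class BoardBGpio(GpioHal):
    """Hypothetical board that tracks each pin's level separately."""
    def __init__(self):
        self.levels = {}
    def write_pin(self, pin, high):
        self.levels[pin] = high
    def read_pin(self, pin):
        return self.levels.get(pin, False)

def blink_once(gpio: GpioHal, pin: int) -> bool:
    """Core logic: identical for every board, because it only sees the HAL."""
    gpio.write_pin(pin, True)
    was_high = gpio.read_pin(pin)
    gpio.write_pin(pin, False)
    return was_high and not gpio.read_pin(pin)

print(blink_once(BoardAGpio(), 3), blink_once(BoardBGpio(), 3))
```

Porting to a new board then means writing one more `GpioHal` implementation, while `blink_once` stays untouched.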

Q 16. Explain your experience with debugging tools and techniques for system software.

Debugging system software requires a multifaceted approach. It’s like being a detective, piecing together clues to find the source of a problem. I’m proficient in various debugging tools and techniques, including:

- Debuggers (e.g., GDB, LLDB): These provide tools for stepping through code, inspecting variables, setting breakpoints, and analyzing stack traces. I frequently use these to identify the root cause of crashes or unexpected behavior. For instance, while developing a device driver, GDB helped me pinpoint a memory leak causing system instability.

- Static Analysis Tools: These tools analyze the code without execution, identifying potential issues like memory leaks, buffer overflows, and concurrency bugs before runtime. Tools like Clang-Tidy and cppcheck are invaluable for proactive bug detection.

- Dynamic Analysis Tools: These tools monitor the program’s execution to identify performance bottlenecks, memory leaks, and other runtime issues. Tools like Valgrind are invaluable in identifying memory-related errors.

- Logging and Tracing: Strategic placement of log messages and traces within the system software allows monitoring of program flow and identification of problematic areas. This is particularly helpful in large and complex systems.

- System Monitoring Tools: Tools like top, htop, and perf provide insights into system resource utilization, helping identify performance bottlenecks or resource contention issues.

In one instance, a complex deadlock in a multithreaded application was identified and resolved through the combined use of GDB and custom logging. Strategic logging statements around the critical sections of the code revealed the exact point where the threads were blocking each other.
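Logging around critical sections might look like the following sketch. The `traced` helper is hypothetical, not the code from that project: it wraps lock acquisition with log messages and a timeout, so that a thread stuck waiting shows up in the log instead of hanging silently.

```python
import logging
import threading
from contextlib import contextmanager

log = logging.getLogger("locktrace")

@contextmanager
def traced(lock, name, timeout=1.0):
    """Acquire a lock with a timeout and log each step with the thread name."""
    thread = threading.current_thread().name
    log.debug("%s waiting for %s", thread, name)
    if not lock.acquire(timeout=timeout):
        # A timeout here is a strong hint of a deadlock or a stuck holder.
        log.error("%s timed out waiting for %s", thread, name)
        raise TimeoutError(f"could not acquire {name}")
    log.debug("%s acquired %s", thread, name)
    try:
        yield
    finally:
        lock.release()
        log.debug("%s released %s", thread, name)

queue_lock = threading.Lock()
with traced(queue_lock, "queue_lock"):
    pass  # critical section goes here
```

Reading the "waiting" and "acquired" lines for each thread side by side usually reveals which two locks are being taken in opposite orders.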

Q 17. How do you handle concurrent programming challenges in system software?

Concurrent programming in system software introduces challenges like race conditions, deadlocks, and starvation. Handling these requires careful design and implementation. Think of it like managing a busy intersection – you need well-defined rules to avoid collisions (race conditions) and ensure everyone gets through (avoid starvation).

- Synchronization Primitives: I leverage mutexes, semaphores, and condition variables to control access to shared resources and prevent race conditions. The choice of primitive depends on the specific synchronization needs.

- Thread Pools: For managing many short-lived tasks, thread pools are very efficient; they avoid the overhead of repeatedly creating and destroying threads.

- Lock-Free Data Structures: In performance-critical sections, lock-free data structures minimize contention and improve concurrency, though their implementation is complex and requires expertise in low-level synchronization.

- Careful Design: The design itself should minimize the need for complex synchronization. This often involves identifying critical sections and designing them to be as small as possible.

In a recent project, I used a combination of mutexes and condition variables to implement a producer-consumer queue for handling sensor data in a real-time system. The condition variables ensured that producers wouldn’t continue producing when the queue was full, while the consumers waited for data to become available.
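A producer-consumer queue of that kind can be sketched as follows, using Python’s `threading` primitives. This is an illustrative version, not the project’s code; the capacity and the integer "readings" are placeholders.

```python
import threading
from collections import deque

class BoundedQueue:
    """Bounded FIFO guarded by one mutex and two condition variables."""

    def __init__(self, capacity):
        self._items = deque()
        self._capacity = capacity
        self._lock = threading.Lock()
        self._not_full = threading.Condition(self._lock)
        self._not_empty = threading.Condition(self._lock)

    def put(self, item):
        with self._not_full:
            while len(self._items) >= self._capacity:  # re-check after every wakeup
                self._not_full.wait()
            self._items.append(item)
            self._not_empty.notify()

    def get(self):
        with self._not_empty:
            while not self._items:
                self._not_empty.wait()
            item = self._items.popleft()
            self._not_full.notify()
            return item

queue = BoundedQueue(capacity=2)
received = []

def consumer():
    for _ in range(5):
        received.append(queue.get())

worker = threading.Thread(target=consumer)
worker.start()
for reading in range(5):      # producer blocks whenever the queue is full
    queue.put(reading)
worker.join()
print(received)
```

Both conditions share the same lock, so the queue state is only ever inspected or changed while that lock is held, and the `while` loops guard against waking up when the condition no longer holds.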

Q 18. Describe your experience with system calls and their implications.

System calls are the interface between user-space applications and the operating system kernel. They are the gateway through which applications request services from the OS, such as file I/O, network communication, and process management. Think of it like a waiter taking orders from customers (applications) and relaying them to the kitchen (kernel).

- Functionality: System calls provide a rich set of functionalities that are crucial for applications to interact with system resources. They handle tasks that applications can’t do directly due to security and resource management restrictions.

- Abstraction: They abstract away the low-level details of hardware interaction, providing a simpler, more portable interface for application developers.

- Security: System calls enforce security by allowing applications access only through a controlled interface; this prevents unauthorized access to system resources.

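- Implications: Every system call involves a transition between user mode and kernel mode, so calling them excessively (for example, many tiny reads instead of one buffered read) hurts performance. On the kernel side, arguments arriving from user space must be validated; failing to do so can lead to security vulnerabilities and system instability. In user code, ignoring return values and error codes leads to subtle bugs.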

During my experience developing a network driver, understanding the system calls related to network interface management was critical for creating a driver that seamlessly integrated with the OS.
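From user space, system calls are usually reached through thin library wrappers. The sketch below uses Python’s `os` module, whose `os.write`, `os.lseek`, `os.read`, and `os.close` functions map closely onto the underlying calls, to show the two habits that matter most: checking results and handling errors reported by the kernel. The file contents are arbitrary.

```python
import errno
import os
import tempfile

# Failures from these wrappers surface as OSError carrying the kernel's errno.
fd, path = tempfile.mkstemp()
try:
    written = os.write(fd, b"sensor=42\n")   # returns the number of bytes written
    os.lseek(fd, 0, os.SEEK_SET)             # rewind to the start of the file
    data = os.read(fd, 64)                   # may return fewer bytes than requested
finally:
    os.close(fd)                             # always release the descriptor
    os.remove(path)

try:
    os.read(fd, 1)                           # fd is closed now, so the call is rejected
    closed_errno = None
except OSError as exc:
    closed_errno = exc.errno                 # expected to be EBADF

print(written, data, closed_errno == errno.EBADF)
```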

Q 19. What are your strategies for managing system software complexity?

Managing system software complexity requires a structured approach. It’s like building a large skyscraper—you need a solid architectural plan and modular design.

- Modular Design: Breaking down the system into smaller, independent modules simplifies development, testing, and maintenance. Each module has a clear responsibility, reducing interdependencies and improving code organization.

- Abstraction and Encapsulation: Hiding internal implementation details behind well-defined interfaces simplifies interaction and reduces the impact of changes.

- Layered Architecture: Structuring the system in layers with clear separation of concerns helps manage complexity and promotes reuse. Think of layers as floors in a building – each floor has its own function.

- Version Control: Using version control systems (e.g., Git) is critical for collaborative development and tracking changes. This allows easy rollback if something goes wrong.

- Automated Testing: Extensive unit and integration tests help ensure that changes do not introduce bugs or break existing functionality.

In a project involving a large embedded system, a modular design allowed us to develop and test individual components independently, significantly reducing the time and effort required to integrate everything and debug any issues.

Q 20. How do you ensure the reliability and robustness of system software?

Ensuring reliability and robustness of system software is paramount. It’s about creating software that is dependable, even under stress. This requires a proactive approach that goes beyond simple bug fixing.

- Defensive Programming: Writing code that anticipates and handles errors gracefully is crucial. This includes error checking, input validation, and exception handling. Think of it like building a bridge – you have to consider all possible stresses and strains.

- Robustness Testing: Subjecting the system to stress testing, including boundary conditions and fault injection, helps identify weaknesses before deployment. The goal is to break the system in a controlled environment.

- Fault Tolerance: Designing the system to gracefully handle failures, such as hardware errors or software crashes, improves its reliability. This might include redundancy or fail-safe mechanisms.

- Code Reviews: Peer reviews provide a critical second pair of eyes to catch potential problems early on.

- Static and Dynamic Analysis: As previously mentioned, static analysis (examining code without running it) and dynamic analysis (observing it during execution) are invaluable for proactively identifying and mitigating potential problems.

In one project, the addition of watchdog timers and error-handling mechanisms prevented a critical system failure from propagating, minimizing downtime.
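The watchdog and defensive-programming ideas can be sketched in a few lines. This is a simplified software model, not the mechanism used in that project: the `Watchdog` class, the `read_sensor_checked` helper, and the 0 to 1023 range are all assumptions made for illustration. A real watchdog is usually a hardware timer that resets the system when it expires.

```python
import time


class Watchdog:
    """Software watchdog: expires if kick() is not called within timeout_s."""

    def __init__(self, timeout_s: float, clock=time.monotonic) -> None:
        self._timeout_s = timeout_s
        self._clock = clock              # injectable so tests can use a fake clock
        self._last_kick = clock()

    def kick(self) -> None:
        """Called by the supervised task to signal that it is still alive."""
        self._last_kick = self._clock()

    def expired(self) -> bool:
        """True once more than timeout_s has passed since the last kick."""
        return self._clock() - self._last_kick > self._timeout_s


def read_sensor_checked(raw: int, low: int = 0, high: int = 1023) -> int:
    """Defensive input validation: reject out-of-range readings early."""
    if not isinstance(raw, int) or not low <= raw <= high:
        raise ValueError(f"sensor reading out of range: {raw!r}")
    return raw
```

A supervisor loop would poll `expired()` and trigger recovery (restart the task, enter a safe state) when it returns true.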

Q 21. Explain your experience with system software design patterns.

System software design patterns provide reusable solutions to common problems. They are like blueprints for solving recurring challenges efficiently and effectively. My experience encompasses various patterns, including:

- Singleton Pattern: Used to ensure that only one instance of a class exists. Useful for managing resources like device drivers or system configuration.

- Observer Pattern: Allows objects to subscribe to events and receive notifications when changes occur. Useful for implementing event-driven architectures in system software.

- Factory Pattern: Decouples object creation from the client code, allowing for flexible object creation strategies. Helpful when you need to create different types of objects based on configuration or runtime conditions.

- State Pattern: Allows an object to alter its behavior when its internal state changes. Suitable for implementing state machines often found in system software.

- Command Pattern: Encapsulates a request as an object, enabling the parameterization of clients with different requests, queuing or logging of requests, and support for undoable operations. Particularly useful for managing tasks and events in an operating system.

For example, in a project involving the management of multiple network interfaces, the Factory Pattern allowed us to abstract the creation of specific interface objects, making the code more maintainable and adaptable to future changes.
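A minimal sketch of that Factory Pattern idea might look like the following. The class names, the registry, and the `create_interface` function are hypothetical and chosen only for illustration; the original project's code is not shown here.

```python
from typing import Callable, Dict


class NetworkInterface:
    """Common base type the client code works with."""

    def __init__(self, name: str) -> None:
        self.name = name

    def describe(self) -> str:
        raise NotImplementedError


class EthernetInterface(NetworkInterface):
    def describe(self) -> str:
        return f"{self.name}: wired Ethernet"


class WifiInterface(NetworkInterface):
    def describe(self) -> str:
        return f"{self.name}: wireless"


# Registry mapping a configuration string to a constructor.
_FACTORIES: Dict[str, Callable[[str], NetworkInterface]] = {
    "ethernet": EthernetInterface,
    "wifi": WifiInterface,
}


def create_interface(kind: str, name: str) -> NetworkInterface:
    """Factory function: callers never name a concrete class directly."""
    factory = _FACTORIES.get(kind)
    if factory is None:
        raise ValueError(f"unknown interface kind: {kind!r}")
    return factory(name)
```

Supporting a new interface type then means adding one class and one registry entry, with no change to the calling code.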

Q 22. How do you handle hardware interrupts in a real-time system?

Handling hardware interrupts in a real-time system requires a carefully designed architecture that prioritizes responsiveness and determinism. The core idea is to quickly and efficiently transfer control from the currently executing process to an interrupt service routine (ISR) that handles the specific interrupt.

This typically involves:

- Interrupt Vector Table: A table mapping interrupt numbers to memory addresses of the corresponding ISRs. When an interrupt occurs, the hardware uses this table to locate and jump to the appropriate routine.

- Interrupt Service Routine (ISR): A dedicated function that handles the interrupt. ISRs must be short, efficient, and carefully designed to avoid blocking other processes for too long. They often involve acknowledging the interrupt source and performing the necessary action (e.g., reading data from a sensor, updating a counter).

- Interrupt Masking/Prioritization: A mechanism to control which interrupts are allowed to interrupt the currently executing code. This is crucial for real-time systems to ensure that higher-priority interrupts are handled promptly. For instance, a sensor requiring immediate attention will have higher priority than a network update.

- Context Switching: The process of saving the state of the interrupted process before the ISR runs and restoring that state once the ISR completes. This involves saving registers and other relevant information, typically to the stack.

Example: Consider a robotic arm controlled by a real-time system. A sensor detecting an obstacle triggers a hardware interrupt. The ISR immediately stops the arm’s movement, preventing a collision. Proper masking ensures that this critical interrupt is handled before less urgent tasks like logging sensor data.
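The interplay between the vector table, prioritization, and masking can be modeled in software. The sketch below is a toy simulation, not real interrupt handling: the `InterruptController` class and its methods are invented for illustration, and it assumes that a lower priority number means a more urgent interrupt.

```python
import heapq
from typing import Callable, Dict, List, Tuple


class InterruptController:
    """Toy model: a lower priority number means a more urgent interrupt."""

    def __init__(self) -> None:
        self._vectors: Dict[int, Tuple[int, Callable[[], None]]] = {}
        self._pending: List[Tuple[int, int]] = []    # heap of (priority, irq)
        self._current_priority = float("inf")        # nothing is being serviced

    def register(self, irq: int, priority: int, isr: Callable[[], None]) -> None:
        """Fill one slot of the vector table."""
        self._vectors[irq] = (priority, isr)

    def raise_irq(self, irq: int) -> None:
        priority, _ = self._vectors[irq]
        if priority < self._current_priority:
            self._service(irq)                       # preempts current work
        else:
            heapq.heappush(self._pending, (priority, irq))  # masked for now

    def _service(self, irq: int) -> None:
        priority, isr = self._vectors[irq]
        saved = self._current_priority               # stands in for saving context
        self._current_priority = priority
        try:
            isr()                                    # real ISRs should stay short
        finally:
            self._current_priority = saved           # stands in for restoring context
        # Run anything that became eligible while this ISR was busy.
        while self._pending and self._pending[0][0] < self._current_priority:
            _, next_irq = heapq.heappop(self._pending)
            self._service(next_irq)
```

In the robotic arm scenario, the obstacle interrupt would be registered with the most urgent priority, so it preempts a running logging handler, while a logging interrupt raised during the obstacle handler waits until that handler returns.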

Q 23. Describe your understanding of different memory allocation techniques.

Memory allocation techniques are fundamental to system software. They dictate how processes and data structures are assigned memory space. Different techniques are suited for various situations, balancing speed, efficiency, and memory fragmentation.

- Static Allocation: The size and location of the memory are fixed at compile and link time, and the memory exists for the program’s entire run. This is simple but inflexible. Example: global variables.

- Stack Allocation: Memory is allocated on the call stack. Automatic variables and function parameters are allocated this way. It’s fast but limited in size. Example: Local variables in a function.

- Heap Allocation: Memory is allocated dynamically at runtime using malloc() in C or the new operator in C++. It’s flexible but can lead to fragmentation if not managed properly. Example: Creating data structures whose size isn’t known at compile time.

- Buddy System: A memory allocation scheme that divides memory into blocks whose sizes are powers of 2. It’s efficient for managing large memory spaces and reduces external fragmentation because a freed block merges easily with its buddy, although rounding each request up to a power of 2 causes some internal fragmentation.

- Slab Allocation: Commonly used in the Linux kernel, this technique pre-allocates memory in ‘slabs’ – cache-like structures – to reduce the overhead of repeated allocations and deallocations. This speeds up allocation of object types that are created and destroyed frequently.

The choice of allocation technique depends heavily on the system’s requirements. Real-time systems may favour static or stack allocation for predictability, while general-purpose systems benefit from the flexibility of heap allocation. Careful management of heap allocation, including techniques like garbage collection or explicit deallocation, is critical to prevent memory leaks and crashes.
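To illustrate how the buddy system splits and merges blocks, here is a toy allocator that tracks offsets rather than real memory. The `BuddyAllocator` class is a sketch written for this explanation, under the assumption that the managed region is exactly `2**max_order` units; it is not how any particular kernel implements the scheme.

```python
class BuddyAllocator:
    """Toy buddy allocator over a region of 2**max_order units."""

    def __init__(self, max_order: int) -> None:
        self.max_order = max_order
        # free_lists[k] holds start offsets of free blocks of size 2**k.
        self.free_lists = [set() for _ in range(max_order + 1)]
        self.free_lists[max_order].add(0)
        self._allocated = {}  # offset -> order, needed to free correctly

    def alloc(self, size: int) -> int:
        """Return the offset of a block of at least `size` units."""
        if size <= 0:
            raise ValueError("size must be positive")
        order = (size - 1).bit_length()          # round up to a power of two
        # Find the smallest free block that is large enough.
        k = order
        while k <= self.max_order and not self.free_lists[k]:
            k += 1
        if k > self.max_order:
            raise MemoryError("no block large enough")
        offset = self.free_lists[k].pop()
        # Split repeatedly, returning the upper half (the buddy) to a free list.
        while k > order:
            k -= 1
            self.free_lists[k].add(offset + (1 << k))
        self._allocated[offset] = order
        return offset

    def free(self, offset: int) -> None:
        """Release a block and merge it with its buddy while possible."""
        order = self._allocated.pop(offset)
        while order < self.max_order:
            buddy = offset ^ (1 << order)        # buddy offset differs in one bit
            if buddy not in self.free_lists[order]:
                break
            self.free_lists[order].remove(buddy)
            offset = min(offset, buddy)
            order += 1
        self.free_lists[order].add(offset)
```

A request for 3 units receives a 4-unit block, which shows the internal fragmentation cost, while freeing both halves of a split block restores the original larger block.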

Q 24. How do you measure the performance of system software?

Measuring system software performance is vital for ensuring stability, responsiveness, and efficiency. Key metrics include:

- Boot Time: The time taken to start up the system. It’s crucial for user experience and should be optimized.

- Response Time: The time it takes for the system to respond to a request. This is especially critical for real-time applications.

- Throughput: The number of tasks or operations completed per unit of time.

- Resource Utilization (CPU, Memory, Disk I/O): Monitoring these resources reveals bottlenecks and opportunities for optimization.

- Context Switching Time: The time taken for the operating system to switch between different processes. A low context switch time improves performance.

Tools like system monitors (e.g., top, htop in Linux), performance profilers (e.g., gprof), and system-specific analysis tools are used to collect data. Analyzing this data points to areas needing improvement, like algorithmic inefficiencies or hardware limitations. For example, profiling might show a particular function consuming excessive CPU cycles, necessitating optimization or refactoring.
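As a rough sketch of how response time and throughput might be derived from raw timings, the helpers below time an operation and summarize the samples. The function names are made up for this example, and the throughput figure assumes requests run one after another rather than in parallel.

```python
import statistics
import time
from typing import Callable, Dict, List


def measure(operation: Callable[[], None], repeats: int) -> List[float]:
    """Time `operation` repeatedly with a monotonic high-resolution clock."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        operation()
        samples.append(time.perf_counter() - start)
    return samples


def summarize_latencies(latencies_s: List[float]) -> Dict[str, float]:
    """Reduce per-request latencies (in seconds) to a few headline metrics."""
    if not latencies_s:
        raise ValueError("need at least one sample")
    ordered = sorted(latencies_s)
    n = len(ordered)
    # Nearest-rank 95th percentile, using integer math for ceil(0.95 * n).
    rank = (95 * n + 99) // 100
    total = sum(ordered)
    return {
        "mean_s": statistics.fmean(ordered),
        "p95_s": ordered[rank - 1],
        "max_s": ordered[-1],
        # Sequential-execution assumption: n requests took `total` seconds.
        "throughput_per_s": n / total if total else float("inf"),
    }
```

Reporting a high percentile alongside the mean matters for real-time work, because a single slow outlier can hide behind a healthy-looking average.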

Q 25. Explain your experience with system software integration and testing.

System software integration and testing are crucial to ensure the software functions correctly as a whole. My experience involves a structured approach:

- Modular Design: Designing the system with well-defined modules simplifies integration and testing. Each module can be tested independently before being integrated.

- Unit Testing: Testing individual modules to ensure they function as expected. This includes using test frameworks and writing unit tests to verify the functionality of individual components.

- Integration Testing: Testing the interaction between different modules. This involves combining the units and testing their interactions. This often reveals issues with interfaces and communication between modules.

- System Testing: Testing the entire system as a whole. This involves testing the system against its requirements specifications. End-to-end tests ensure the system works as intended.

- Regression Testing: Re-running tests after changes to ensure new features haven’t introduced bugs. This is essential to maintain a high level of system stability.

In a past project involving a real-time embedded system, we utilized a continuous integration (CI) pipeline. This automated much of the testing process, enabling faster feedback and quicker detection of integration problems.
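The difference between unit and integration tests is easier to see with a small example. The two "modules" below (an additive checksum and a simple framing format) are invented for illustration and are not taken from that project; the test functions use plain assertions and follow the `test_` naming that a runner such as pytest would pick up.

```python
def checksum(payload: bytes) -> int:
    """Module A: 8-bit additive checksum."""
    return sum(payload) & 0xFF


def frame(payload: bytes) -> bytes:
    """Module B: length byte + payload + checksum byte."""
    if len(payload) > 255:
        raise ValueError("payload too long")
    return bytes([len(payload)]) + payload + bytes([checksum(payload)])


def unframe(data: bytes) -> bytes:
    """Module B: validate a frame and return its payload."""
    if len(data) < 2 or data[0] != len(data) - 2:
        raise ValueError("bad length")
    payload = data[1:-1]
    if checksum(payload) != data[-1]:
        raise ValueError("bad checksum")
    return payload


def test_checksum_unit() -> None:
    """Unit test: exercises module A in isolation."""
    assert checksum(b"") == 0
    assert checksum(b"\x01\x02") == 3
    assert checksum(b"\xff\x01") == 0            # wraps at 8 bits


def test_frame_roundtrip_integration() -> None:
    """Integration test: modules A and B must agree on the format."""
    assert unframe(frame(b"boot")) == b"boot"


def test_corruption_is_detected() -> None:
    """Regression-style test: a flipped payload bit must be rejected."""
    corrupted = bytearray(frame(b"boot"))
    corrupted[1] ^= 0x01                         # flip one payload bit
    try:
        unframe(bytes(corrupted))
    except ValueError:
        return
    raise AssertionError("corrupted frame was accepted")
```

Running these on every commit in a CI pipeline is what turns them into regression tests.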

Q 26. What are your strategies for optimizing system boot time?

Optimizing system boot time is a critical aspect of improving user experience and system responsiveness. Strategies include:

- Reducing the number of startup services: Identify and disable unnecessary services that load during boot. A thorough audit helps minimize unnecessary overhead.

- Optimizing device drivers: Efficiently designed drivers reduce the time taken for devices to initialize. This requires careful analysis of how drivers interact with the hardware.

- Using a faster storage device: Faster SSDs significantly reduce boot time compared to traditional HDDs.

- Preloading essential libraries and drivers into memory: This reduces the time needed to load them during boot. This technique requires a careful consideration of memory requirements.

- Minimizing filesystem operations during boot: Reducing the number of files that need to be accessed during the boot sequence reduces the time to fully initialize the system.

For example, in optimizing a server’s boot time, I once identified several unnecessary services consuming resources during startup. Disabling these services reduced boot time by 30%, which shortened the downtime caused by each restart.
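A startup audit of this kind can begin with a simple ranking of per-service startup times. The sketch below assumes those timings have already been collected (on Linux systems that use systemd, `systemd-analyze blame` reports similar figures); the function names and the idea of an "essential" set are assumptions made for illustration.

```python
from typing import Dict, List, Set, Tuple


def rank_startup_costs(durations_ms: Dict[str, int]) -> List[Tuple[str, int]]:
    """Slowest services first, so the audit starts with the biggest wins."""
    return sorted(durations_ms.items(), key=lambda item: (-item[1], item[0]))


def estimated_saving_ms(durations_ms: Dict[str, int], essential: Set[str]) -> int:
    """Upper bound on the saving from disabling every non-essential service.

    Assumes services start one after another; with parallel startup the real
    saving is smaller, so the result should be confirmed by measuring a boot.
    """
    return sum(ms for name, ms in durations_ms.items() if name not in essential)
```

With made-up timings such as `{"network": 900, "printing": 400, "bluetooth": 250, "logging": 150}` and `network` and `logging` marked essential, the estimate would be 650 ms, which then needs to be verified against an actual boot.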

Q 27. Describe your experience with different types of system software updates.

System software updates are essential for patching security vulnerabilities, introducing new features, and improving performance. Different update methods exist:

- Over-the-air (OTA) updates: Updates are downloaded and installed remotely. This is common in embedded systems and mobile devices.

- In-place updates: The update is applied directly to the running installation, often without requiring a full system reboot. This is generally preferred for minimizing downtime. However, careful planning is required to ensure data integrity.

- Full system image updates: A complete new system image is installed. This is a more disruptive approach requiring a reboot, but it can solve serious issues.

- Patching: Applying small updates to fix specific issues without replacing the entire system. Patches are essential for security and minor bug fixes.

Each method has tradeoffs. OTA updates are convenient but require careful network management, whereas in-place updates are faster but risk corruption if interrupted. My experience includes working with both OTA and in-place updates, ensuring robust error handling to prevent update failures.
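One common way to guard against a corrupted or interrupted update is to verify a digest before installing and to swap the new file into place in a single step. The sketch below illustrates that idea for a single file; `apply_update` is a hypothetical helper, and a real updater would also handle signatures, versioning, and rollback.

```python
import hashlib
import os
import tempfile


def apply_update(target_path: str, new_image: bytes, expected_sha256: str) -> bool:
    """Install new_image at target_path only if its digest matches.

    Returns True on success; on a bad digest the old file is left untouched.
    """
    if hashlib.sha256(new_image).hexdigest() != expected_sha256:
        return False                              # reject a corrupted download
    directory = os.path.dirname(os.path.abspath(target_path))
    # Write to a temporary file in the same directory, then rename it over the
    # target; os.replace is atomic on POSIX when both are on one filesystem.
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    try:
        with os.fdopen(fd, "wb") as tmp:
            tmp.write(new_image)
            tmp.flush()
            os.fsync(tmp.fileno())                # push the data to the disk
        os.replace(tmp_path, target_path)
    except BaseException:
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise
    return True
```

Because the old file is replaced only after the new one is fully written, a power loss mid-update leaves either the old version or the new one, not a half-written mix.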

Q 28. How do you ensure the maintainability of system software?

Maintaining system software involves a multi-faceted approach that prioritizes long-term stability, ease of modification, and extensibility.

- Clean code and modular design: Well-structured code with clear separation of concerns is easier to maintain and debug. The use of design patterns can improve code readability and reduce complexity.

- Comprehensive documentation: Clear documentation (code comments, design documents, user manuals) is critical for understanding and modifying the system. The documentation should evolve with the system.

- Version control: Using a version control system (e.g., Git) allows tracking changes, managing different versions, and easily reverting to previous states. This is indispensable for collaboration and managing change requests.

- Automated testing: A suite of automated tests helps detect regressions quickly, ensuring that changes don’t break existing functionality. Automated testing is crucial for efficient maintenance.

- Regular code reviews: Peer reviews help identify potential issues and improve code quality before they become major problems. The use of linters and static code analysis tools also assists in the process.

In one instance, I introduced a robust logging system to a legacy system. This dramatically improved the system’s maintainability by simplifying debugging and identifying the root cause of problems much more efficiently.
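A logging setup along those lines could be sketched with Python's standard `logging` module. The `make_logger` and `load_config` functions, the logger name, and the fallback value of 3 are all invented for this example and do not describe the legacy system itself.

```python
import logging


def make_logger(name: str, stream=None) -> logging.Logger:
    """Build a logger with a consistent, greppable line format."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.DEBUG)
    logger.propagate = False                     # avoid duplicate lines via root
    handler = logging.StreamHandler(stream)      # stream=None means sys.stderr
    handler.setFormatter(logging.Formatter("%(levelname)s %(name)s: %(message)s"))
    logger.handlers = [handler]                  # idempotent if called twice
    return logger


def load_config(raw: str, log: logging.Logger) -> int:
    """Parse a retry count, logging the context needed to debug failures."""
    try:
        value = int(raw)
    except ValueError:
        log.error("invalid retry count %r, falling back to 3", raw)
        return 3
    log.debug("retry count set to %d", value)
    return value
```

Recording the offending input and the fallback in one line is what shortens root-cause analysis, since the log alone explains why the system behaved as it did.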

Key Topics to Learn for Advanced Proficiency in Placing System Software Interview

- System Software Architecture: Understanding the layered structure of operating systems, drivers, and firmware, and how they interact. Consider exploring different architectural patterns and their trade-offs.

- Memory Management: Deep dive into virtual memory, paging, segmentation, and memory allocation algorithms. Be prepared to discuss their performance implications and optimization techniques.

- Process and Thread Management: Mastering concepts like scheduling algorithms, context switching, inter-process communication (IPC), and synchronization primitives (mutexes, semaphores).

- File Systems: Explore different file system types (e.g., FAT, NTFS, ext4), their functionalities, and the underlying data structures. Understanding file system performance is crucial.

- Device Drivers: Gain a solid understanding of how device drivers interact with the operating system and hardware. Be ready to discuss driver development methodologies and common challenges.

- Real-time Systems: Explore the principles of real-time operating systems (RTOS), scheduling algorithms for real-time constraints, and techniques for ensuring deterministic behavior.

- Security in System Software: Discuss security considerations in system software design and implementation, including protection mechanisms, access control, and mitigation of vulnerabilities.

- Problem-Solving and Debugging: Practice tackling complex system-level problems. Develop strong debugging skills using system tools and techniques.

- Practical Application: Relate theoretical concepts to real-world scenarios. Consider examples from your projects or experiences to demonstrate your understanding.

Next Steps

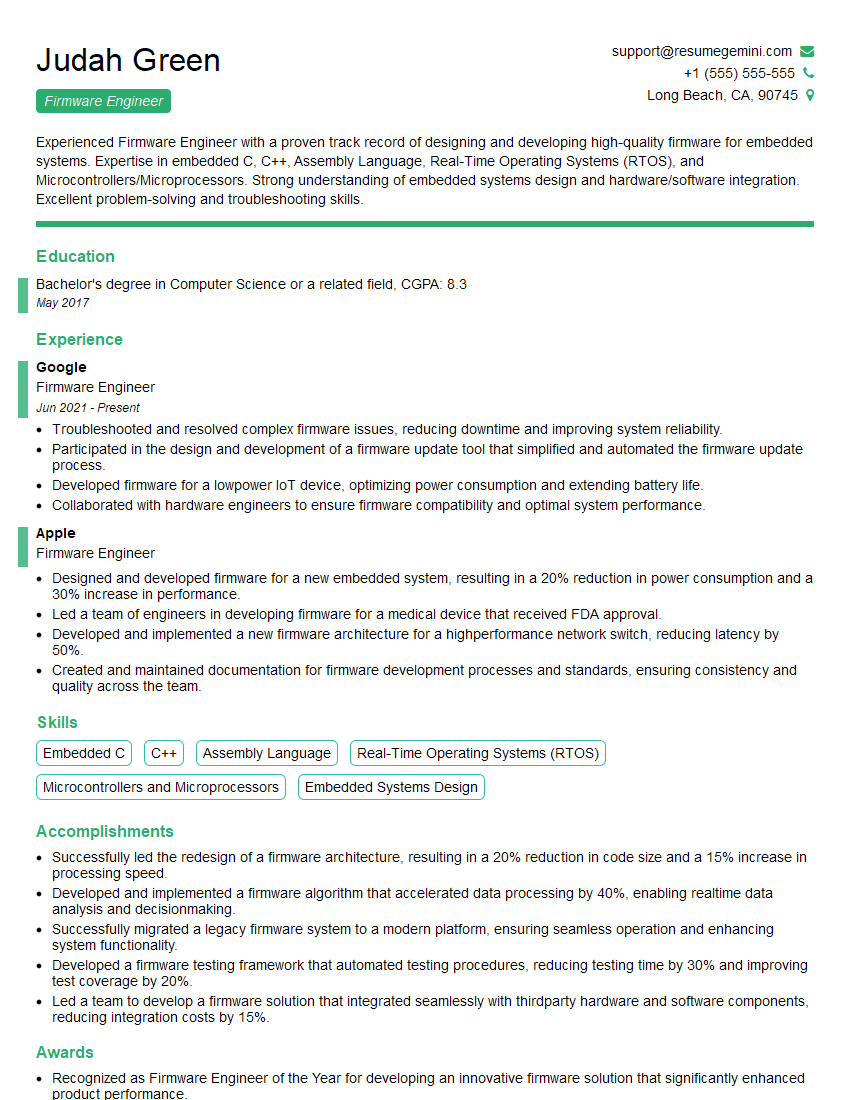

Mastering advanced proficiency in placing system software opens doors to exciting and high-impact roles in the tech industry. It signifies a deep understanding of fundamental computer science principles and a valuable skill set highly sought after by employers. To maximize your job prospects, crafting a compelling and ATS-friendly resume is essential. ResumeGemini can significantly help you build a professional and effective resume that highlights your skills and experience. We offer examples of resumes tailored to Advanced Proficiency in Placing System Software to guide you through the process. Invest time in creating a strong resume – it’s your first impression with potential employers.
