Feeling uncertain about what to expect in your upcoming interview? We’ve got you covered! This blog highlights the most important Audio Enhancement Techniques interview questions and provides actionable advice to help you stand out as the ideal candidate. Let’s pave the way for your success.

Questions Asked in Audio Enhancement Techniques Interview

Q 1. Explain the difference between compression and limiting.

Both compression and limiting reduce the dynamic range of an audio signal, but they do so in different ways. Think of it like controlling the flow of water through a pipe.

Compression gradually reduces the volume of signals that exceed a set level (the threshold). The amount of reduction is determined by the ratio. A 4:1 ratio means that for every 4dB increase above the threshold, the output increases by only 1dB. This smooths out peaks while preserving some dynamic variation. Imagine a partially open valve: it allows some water through but restricts the flow of very large surges.

Limiting, on the other hand, acts like a dam. Once the signal reaches a predefined level (the ceiling or limit), it’s abruptly prevented from exceeding that level. No matter how loud the input becomes, the output remains at the limit. This is crucial for preventing clipping, but it can result in a less natural-sounding result if used heavily. It’s like a valve that completely shuts off once a certain water level is reached.

In short: Compression reduces loud peaks, while limiting prevents them from exceeding a certain level.
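As a rough illustration of the difference, the static (steady-state) behaviour of each processor can be written as a level-in, level-out function. This is only a sketch: the function names, the hard knee, and the default threshold and ceiling values are assumptions chosen for the example, and real units also apply attack and release smoothing.

```python
def compressor_output_db(input_db, threshold_db=-20.0, ratio=4.0):
    """Hard-knee compressor curve: input level in dB -> output level in dB."""
    if input_db <= threshold_db:
        return input_db  # below the threshold the signal is left alone
    # above the threshold, every `ratio` dB of input yields 1 dB of output
    return threshold_db + (input_db - threshold_db) / ratio


def limiter_output_db(input_db, ceiling_db=-1.0):
    """Ideal limiter curve: the output never rises above the ceiling."""
    return min(input_db, ceiling_db)


# An input 8 dB over a -20 dB threshold at 4:1 comes out 2 dB over it.
print(compressor_output_db(-12.0))  # -18.0
print(limiter_output_db(5.0))       # -1.0
```

Seen this way, a limiter behaves like a compressor whose ratio is so high that the output stops rising once the ceiling is reached.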

Q 2. Describe the process of noise reduction using spectral subtraction.

Spectral subtraction is a noise reduction technique that estimates and removes noise from an audio signal by analyzing the frequency spectrum. It works by assuming that the noise spectrum is relatively consistent over time. The process generally involves these steps:

- Noise Profile Estimation: A section of the audio containing only noise (like the beginning of a recording before the speech starts) is analyzed to create a noise profile. This profile represents the spectral characteristics of the noise.

- Signal Analysis: The entire audio signal is then analyzed in the frequency domain (e.g., using a Fast Fourier Transform or FFT).

- Noise Subtraction: For each frequency bin in the signal, the estimated noise power is subtracted from the signal power. This is where the ‘subtraction’ part happens. Because the noise estimate can exceed the measured power in a given bin, the result is usually clamped to zero or to a small spectral floor so that it never goes negative.

- Inverse Transform: The modified spectrum is then converted back to the time domain, resulting in a ‘denoised’ audio signal.

However, spectral subtraction has limitations. It can create ‘musical noise’ artifacts (short, warbling tonal blips left behind in isolated frequency bins), particularly when the noise estimate is inaccurate or the noise floor is constantly changing. More sophisticated noise reduction algorithms address these issues with techniques such as Wiener filtering or wavelet analysis, which tend to handle fluctuating noise levels more gracefully.
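The four steps can be sketched with NumPy as follows. This is a minimal illustration rather than a production denoiser: the function name `spectral_subtract`, the 512-sample frame, the 50% overlap, and the `floor` parameter are all assumptions made for the example, and the noise-only excerpt is assumed to be supplied separately.

```python
import numpy as np


def spectral_subtract(x, noise, frame=512, floor=0.02):
    """Power spectral subtraction with a Hann window and 50% overlap-add."""
    hop = frame // 2
    win = np.hanning(frame + 1)[:-1]  # periodic Hann: overlapped frames sum to 1

    def frames(sig):
        count = 1 + (len(sig) - frame) // hop
        return np.stack([sig[i * hop:i * hop + frame] * win for i in range(count)])

    # 1. Noise profile: average power per bin over the noise-only excerpt
    noise_power = np.mean(np.abs(np.fft.rfft(frames(noise), axis=1)) ** 2, axis=0)

    # 2. Signal analysis: short-time spectrum of the noisy recording
    spec = np.fft.rfft(frames(x), axis=1)
    power = np.abs(spec) ** 2

    # 3. Subtraction, clamped to a small fraction of the original power
    clean_power = np.maximum(power - noise_power, floor * power)
    gain = np.sqrt(clean_power / np.maximum(power, 1e-12))

    # 4. Inverse transform and overlap-add (the noisy phase is reused)
    out = np.zeros(len(x))
    for i, block in enumerate(np.fft.irfft(spec * gain, n=frame, axis=1)):
        out[i * hop:i * hop + frame] += block
    return out
```

The spectral floor is what keeps bins from being zeroed out completely; lowering it removes more noise but tends to make the musical-noise artifacts more audible.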

Q 3. How do you handle audio clipping during post-production?

Audio clipping occurs when the amplitude of a signal exceeds the maximum level that the recording device or software can handle. It results in a harsh, distorted sound. Unfortunately, you can’t ‘un-clip’ audio; the information is lost. However, several strategies can mitigate the damage:

- Careful Gain Staging: The best way to prevent clipping is to ensure your input levels are never too high. This includes using meters to monitor levels carefully throughout the recording and mixing process.

- Compression: Using a compressor before the clipping point can prevent the loudest peaks from exceeding the maximum level, helping to avoid drastic distortion.

- Waveform Editing: If clipping has already occurred, careful editing in a DAW (Digital Audio Workstation) might allow you to slightly reduce the clipped peaks by lowering their amplitude, but it’s often a very delicate operation and may result in an unnatural sound.

- Noise Reduction/Restoration Plugins: Advanced plugins that attempt to fill in or repair the areas of distortion might help, but they usually add a processing artifact that further affects the audio quality.

Prevention is always far better than trying to cure clipping afterwards. Professional audio engineers prioritize maintaining a good headroom throughout the entire production pipeline.
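Before deciding how to treat a file, it helps to know how much of it is actually clipped. One simple heuristic, sketched below, is to look for runs of consecutive samples stuck at full scale. The function name, the tolerance, and the minimum run length are assumptions for illustration; audio that was clipped and then turned down will not sit at full scale and needs a different test.

```python
import numpy as np


def clipped_fraction(x, full_scale=1.0, tol=1e-4, min_run=3):
    """Fraction of samples inside runs of >= min_run samples at full scale."""
    at_max = np.abs(x) >= full_scale - tol
    # Pad with False so every run has both a start edge and an end edge
    padded = np.concatenate(([False], at_max, [False]))
    edges = np.flatnonzero(np.diff(padded.astype(int)))
    starts, ends = edges[::2], edges[1::2]
    lengths = ends - starts
    return lengths[lengths >= min_run].sum() / len(x)


t = np.arange(1000) / 100.0
overdriven = np.clip(1.5 * np.sin(2 * np.pi * t), -1.0, 1.0)
print(clipped_fraction(overdriven) > 0)  # True
```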

Q 4. What are the common artifacts introduced by audio compression?

Audio compression, while useful for dynamic control, can introduce several artifacts:

- Pumping: A rhythmic variation in volume, often noticeable as a pulsing effect in which the level audibly ducks and recovers. This typically occurs with high compression ratios combined with release times that are poorly matched to the material.

- Breathing: Similar to pumping but more subtle and less rhythmic. It is often caused by compression reacting to slower dynamic shifts in the audio.

- Muddy Bass: Excessive compression can reduce clarity in the low frequencies, making the bass sound muddy or undefined.

- Transient Loss: Compression can reduce the impact and punch of percussive sounds. This happens when the compressor’s attack time is too fast, so gain reduction clamps down on the initial transient before it can pass through.

- Loudness War artifacts: Over-compressing to reach extremely loud levels often creates a harsh, flattened sound and may introduce further distortions.

These artifacts are often minimized by using careful settings, such as longer attack times that let transients through, lower ratios, and moderate gain reduction. Proper gain staging and using other processing tools alongside compression can also reduce these issues.

Q 5. Explain the concept of dynamic range compression.

Dynamic range compression reduces the difference between the loudest and quietest parts of an audio signal. It’s like making the audio signal less ‘dynamic’ to control the peaks and valleys of the signal. Think of a photograph – dynamic range refers to the difference between the brightest and darkest parts. Dynamic range compression reduces the contrast.

This is achieved by attenuating signals above the threshold and then, optionally, applying make-up gain, which raises the quieter parts relative to the peaks. The parameters used to control this process include the threshold (the level at which compression begins), the ratio (the amount of gain reduction applied above the threshold), the attack time (how quickly the compressor reacts to signals exceeding the threshold), and the release time (how quickly the compressor returns to its normal gain after the signal falls below the threshold). These parameters are used to achieve various effects. For example, a fast attack clamps down on the initial transient and smooths out percussive hits, whereas a slower attack lets the transient through before gain reduction sets in, which keeps percussion punchy while still reducing the overall dynamic range.

Dynamic range compression is commonly used to make quieter parts of recordings more audible, control loud peaks to prevent distortion, and create a more consistent overall loudness.
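To show how threshold, ratio, attack, and release interact, here is a minimal feed-forward compressor sketch. It is one common textbook arrangement, not the design of any particular plugin: the function name `compress`, the sample-by-sample level detector, and the one-pole smoothing of the gain reduction are assumptions made for the example.

```python
import numpy as np


def compress(x, sr, threshold_db=-20.0, ratio=4.0,
             attack_ms=5.0, release_ms=100.0, makeup_db=0.0):
    """Feed-forward compressor with smoothed gain reduction (hard knee)."""
    atk = np.exp(-1.0 / (sr * attack_ms / 1000.0))
    rel = np.exp(-1.0 / (sr * release_ms / 1000.0))

    level_db = 20 * np.log10(np.maximum(np.abs(x), 1e-9))
    over_db = np.maximum(level_db - threshold_db, 0.0)
    target = over_db * (1.0 - 1.0 / ratio)  # wanted gain reduction in dB

    reduction = np.zeros(len(x))
    state = 0.0
    for n, wanted in enumerate(target):
        # attack while reduction must increase, release while it decays
        coeff = atk if wanted > state else rel
        state = coeff * state + (1.0 - coeff) * wanted
        reduction[n] = state
    return x * 10 ** ((makeup_db - reduction) / 20.0)
```

With the defaults, a steady full-scale input (0dB, which is 20dB over the threshold) settles 15dB lower, i.e. 5dB above the threshold, as the 4:1 ratio predicts. Lengthening `attack_ms` lets more of each transient pass before the gain drops.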

Q 6. Describe different types of equalizers (parametric, graphic, etc.) and their applications.

Equalizers (EQs) are used to adjust the frequency balance of an audio signal. Different types of EQs offer varying levels of control:

- Parametric EQ: Offers the most control, allowing adjustment of frequency, gain (boost or cut), bandwidth (Q factor, which controls how wide the affected frequency range is), and filter type (e.g., bell, shelf, high-pass, low-pass).

- Graphic EQ: Uses a visual display (usually sliders) to adjust gain at various fixed frequency bands. Provides less precise control than a parametric EQ, but is easier to use for broad adjustments.

- Shelving EQ: Boosts or cuts all frequencies above (high shelf) or below (low shelf) a specified corner frequency by a roughly constant amount.

- High-Pass/Low-Pass Filters: Cut frequencies below or above a specific point. This is used frequently to remove unwanted low rumble or high-frequency hiss.

Applications: Parametric EQs are preferred for precise adjustments like shaping vocal tones, cutting unwanted frequencies, or boosting certain frequencies. Graphic EQs are more commonly used for broader adjustments, like room equalization or general tonal shaping during mixing.
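For readers who want to see what a single parametric band looks like in code, the sketch below computes biquad coefficients for a bell (peaking) filter using the widely circulated Audio EQ Cookbook formulas, and evaluates the resulting gain at a given frequency. The function names are hypothetical, and this is only one of several ways EQ bands are implemented.

```python
import numpy as np


def peaking_eq_coeffs(f0, gain_db, q, sr):
    """Normalized biquad coefficients (b, a) for a bell filter at f0 Hz."""
    amp = 10 ** (gain_db / 40.0)
    w0 = 2 * np.pi * f0 / sr
    alpha = np.sin(w0) / (2 * q)
    b = np.array([1 + alpha * amp, -2 * np.cos(w0), 1 - alpha * amp])
    a = np.array([1 + alpha / amp, -2 * np.cos(w0), 1 - alpha / amp])
    return b / a[0], a / a[0]


def response_db(b, a, freq, sr):
    """Magnitude response of the biquad at `freq`, in dB."""
    z1 = np.exp(-1j * 2 * np.pi * freq / sr)  # z^-1 on the unit circle
    h = (b[0] + b[1] * z1 + b[2] * z1 ** 2) / (a[0] + a[1] * z1 + a[2] * z1 ** 2)
    return 20 * np.log10(abs(h))


b, a = peaking_eq_coeffs(f0=3000, gain_db=4.0, q=2.0, sr=48000)
print(round(response_db(b, a, 3000, 48000), 3))  # 4.0
```

The three controls of the parametric band map directly onto `f0`, `gain_db`, and `q`; a graphic EQ is effectively a bank of such bands with `f0` and `q` fixed.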

Q 7. How do you use EQ to enhance speech clarity?

EQ plays a critical role in enhancing speech clarity. The goal is to make the vocals stand out in the mix without sacrificing naturalness.

- Boosting Intelligibility Frequencies: Boosting frequencies around 1-3kHz can significantly increase the intelligibility of speech. These frequencies contain important consonant sounds. However, avoid over-boosting which might introduce harshness or sibilance.

- Reducing Mud and Unwanted Frequencies: Cutting muddy frequencies (typically in the 250-500Hz range) can make speech clearer by removing low-mid buildup that can obscure vocals.

- De-essing: High-frequency sibilance (hissing ‘s’ sounds) can be reduced using a de-esser, which is a type of compressor that focuses on those higher frequencies.

- High-Pass Filtering: Using a high-pass filter to remove unnecessary low-frequency rumble and background noise can significantly improve the overall clarity and intelligibility.

The precise frequencies to target will depend on the recording, microphone used, and the overall mix. A good understanding of human hearing and frequency ranges will give you an advantage. Using a narrow bandwidth parametric EQ makes subtle changes with more accuracy and will give you more control over the result than using a broader bandwidth.

Q 8. What are some common techniques for improving the clarity of dialogue?

Improving dialogue clarity often involves a multi-pronged approach. The goal is to make the speech intelligible and prominent within the overall mix. This usually starts with careful microphone technique during recording – using a directional mic close to the speaker minimizes background noise capture. In post-production, several techniques are crucial:

- Equalization (EQ): Boosting frequencies around 2-4kHz can enhance the presence of consonants, making speech crisper. However, excessive boosting can sound harsh and can exaggerate sibilance (the ‘s’ sounds), so careful adjustments are essential. We might use a narrow band boost around 3kHz to bring out clarity without adding harshness.

- De-essing: This process specifically targets excessive sibilance, often using a dynamic EQ or compressor that reduces the gain only when the sibilants exceed a certain threshold, preventing harshness.

- Compression: Carefully applied compression can even out the dynamic range of the dialogue, making quieter parts more audible and loud parts less jarring. It helps make the dialogue sound more consistent. This is especially important in dynamic scenes where the actor’s volume varies.

- Gate/Expander: This can be employed to reduce background noise between words or phrases by only allowing sounds above a certain threshold to pass through. This is particularly effective when dealing with low-level background noise.

- Noise Reduction: Sophisticated noise reduction algorithms (discussed later) help eliminate unwanted background sounds that obscure the dialogue. But it’s crucial to remember aggressive noise reduction can dull the overall audio quality.

For example, I once worked on a documentary where the dialogue was muffled due to poor microphone placement. By carefully applying EQ, de-essing, and a moderate amount of compression, I was able to significantly improve intelligibility and make the film much more engaging for the viewers.
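The gate idea from the list above can be sketched in a few lines. This is a deliberately simplified version: the name `noise_gate`, the moving-average level detector, and the fixed attenuation applied while closed are assumptions for illustration. Practical gates add attack, hold, and release times so that the gate does not chatter or chop word endings.

```python
import numpy as np


def noise_gate(x, sr, threshold_db=-40.0, window_ms=10.0, floor_db=-60.0):
    """Attenuate the signal wherever its short-term level is below threshold."""
    win = max(1, int(sr * window_ms / 1000.0))
    # Short-term power from a moving average of the squared signal
    power = np.convolve(x ** 2, np.ones(win) / win, mode="same")
    level_db = 10 * np.log10(np.maximum(power, 1e-12))
    # Gate open: unity gain. Gate closed: attenuate by floor_db.
    gain = np.where(level_db >= threshold_db, 1.0, 10 ** (floor_db / 20.0))
    return x * gain
```

Using a finite `floor_db` rather than muting completely makes this closer to a downward expander, which usually sounds less abrupt on dialogue.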

Q 9. Explain the principles of reverberation and how it is used in audio enhancement.

Reverberation, or reverb, is the persistence of sound after the original sound has stopped. It’s created by sound waves reflecting off surfaces in a room or space. The characteristics of reverb are shaped by the size, shape, and materials of the room, creating unique acoustic signatures. In audio enhancement, reverb is used to:

- Create a sense of space: Adding reverb places a sound in a particular space, whether it’s a concert hall, a small room, or an open field. This is achieved by varying parameters like decay time (how long the reverb lasts) and pre-delay (the time between the original sound and the first reflection).

- Enhance depth and ambience: Appropriate reverb can increase the natural feel of a recording, making instruments and voices sound more natural and less isolated. It adds a sense of depth and space to the audio.

- Simulate specific environments: With advanced reverb plugins, you can precisely mimic the sonic signature of various acoustic spaces, adding realistic ambience to otherwise sterile recordings. For instance, you could add a natural reverb sound that mimics a cathedral to give a choral recording the appropriate ambience.

Imagine listening to a singer in a tiny booth versus a large concert hall. The lack of reverb in the booth makes the voice sound ‘dry’ and isolated. The reverberant sound in the concert hall creates a lush, full sound, providing context and realism.

Q 10. How do you design realistic room ambiance using reverb plugins?

Designing realistic room ambiance with reverb plugins involves understanding and manipulating several key parameters:

- Decay Time (RT60): This measures how long it takes for the reverb to decay by 60dB. Longer decay times indicate larger spaces. Experimenting with RT60 is crucial for achieving the desired ambience. A short RT60 may suit a small room, whereas a much longer RT60 is suitable for a larger hall.

- Pre-delay: The time between the original dry signal and the first reflections. A longer pre-delay creates a sense of space, giving the listener time to perceive the direct sound before the reflections arrive. Experimenting with pre-delay makes a big difference in the perceived size and realism.

- Size and Shape: Many plugins allow for modelling room sizes and shapes. Understanding how these factors influence the reverb character is essential. A rectangular room will have a different reverberation pattern compared to a circular room.

- Damping: This parameter controls how quickly high frequencies decay compared to low frequencies. Heavy damping gives a darker, ‘dead’ sound, whereas less damping results in a brighter, more spacious sound. Adjust it alongside decay time to achieve the desired character.

- Early Reflections: These are the first reflections that reach the listener after the direct sound. Precise control over early reflections is essential for realism, often done manually to place those reflections in a convincing manner.

For instance, to simulate a small, intimate jazz club, I might use a short decay time, a relatively short pre-delay, and subtle early reflections. For a large cathedral, I would use a much longer decay time, a longer pre-delay, and more prominent early reflections to capture the grandeur and spaciousness.
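To make the RT60 definition concrete, consider the feedback comb filter, a building block of classic Schroeder-style algorithmic reverbs. If each trip around a delay of d seconds multiplies the signal by g, then choosing g = 10^(-3d / RT60) makes the echoes fall by 60dB after RT60 seconds. The sketch below is an assumption-laden illustration (hypothetical function names, a single comb with no damping), not a description of how any specific plugin works.

```python
import numpy as np


def comb_feedback_gain(delay_ms, rt60_s):
    """Feedback gain so that repeats decay by 60 dB after rt60_s seconds."""
    return 10 ** (-3.0 * (delay_ms / 1000.0) / rt60_s)


def feedback_comb(x, sr, delay_ms, rt60_s):
    """y[n] = x[n] + g * y[n - d]: one recirculating echo path."""
    d = int(round(sr * delay_ms / 1000.0))
    g = comb_feedback_gain(delay_ms, rt60_s)
    y = np.zeros(len(x))
    for n in range(len(x)):
        y[n] = x[n] + (g * y[n - d] if n >= d else 0.0)
    return y


# A longer RT60 needs a feedback gain closer to 1 for the same delay.
print(comb_feedback_gain(100.0, 1.0) < comb_feedback_gain(100.0, 4.0))  # True
```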

Q 11. What are the challenges of restoring damaged audio recordings?

Restoring damaged audio recordings presents numerous challenges, primarily stemming from the nature of the damage itself:

- Noise: Background hiss, crackle, pops, and hum are common issues. Removing this noise without affecting the desired audio is difficult; aggressive noise reduction can easily make the audio sound unnatural or lifeless.

- Dropouts/Gaps: Missing sections of audio require careful reconstruction, often by interpolating from surrounding sections. This can be challenging because it demands accurate analysis and synthesis of the surrounding audio to create a seamless transition.

- Clicks and Pops: These transient events are caused by scratches or dust on the recording medium and require specialized tools for their removal.

- Wow and Flutter: Variations in playback speed introduce pitch changes, necessitating sophisticated algorithms to correct the timing and pitch inconsistencies. The amount of wow and flutter is not consistent so it can be extremely difficult to correct.

- Degradation of high frequencies: High frequencies are often the first to be lost in older recordings, leading to a dull and muffled sound.

The challenge lies in finding a balance between noise reduction and preserving the integrity of the original recording. Over-processing can make the audio sound unnatural or artificial and strip away important aspects of the recording.

Q 12. Describe your experience with different audio restoration techniques (e.g., click repair, declicking, decrackling).

My experience with audio restoration techniques is extensive. I routinely use various methods, including:

- Click Repair: I frequently use specialized plugins and algorithms designed to identify and remove clicks and pops caused by scratches or dust. These typically involve analyzing the waveform to locate these events and either replace or attenuate them with interpolation or sophisticated noise-shaping algorithms.

- Declicking and Decrackling: These are closely related techniques, often used in conjunction. Declicking targets sharp, impulsive noises, while decrackling addresses more continuous, crackling sounds. I employ both spectral and time-domain analysis for precise treatment; often this is iterative, with the results carefully monitored to avoid artifacts.

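- Spectral Editing: This technique uses a visual representation of the audio’s frequency spectrum (a spectrogram) to select and remove or attenuate unwanted sounds. It is most effective for noises that are localized in time and frequency, such as a cough, a chair squeak, or a narrow whistle; broadband noise spread across the spectrum is usually better handled by a dedicated noise reduction algorithm.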

- Phase Cancellation: I’ve employed phase cancellation techniques to eliminate some types of noise, which is based on the understanding that unwanted noise will often be spread across the stereo channels; clever phase alignment can sometimes reduce this noise dramatically.

One project involved restoring a 78-rpm recording with significant crackle and pops. By carefully combining click repair, spectral editing, and noise reduction, I was able to improve the audio quality substantially without compromising the recording. The original had a high level of noise, which I removed over a series of passes using different techniques to balance noise reduction against preserving the original character.

Q 13. What are some strategies for reducing background noise in audio recordings?

Reducing background noise is a critical aspect of audio enhancement. Strategies vary based on the nature of the noise and the recording.

- Careful Recording Techniques: The most effective approach is minimizing noise at the source by employing proper microphone techniques, choosing suitable recording locations with minimal ambient noise and using appropriate equipment. This is frequently the most efficient and effective method.

- Noise Gates: These only allow signals exceeding a certain threshold to pass, effectively silencing quieter background noise during pauses in the audio. This is particularly helpful for audio with quieter sections.

- Noise Reduction Plugins: These utilize algorithms like spectral subtraction or Wiener filtering (discussed later) to analyze and remove noise from audio. This requires care as aggressive noise reduction can adversely affect audio quality.

- Spectral Editing: Manually removing noise from the frequency spectrum using software. This requires careful listening and is not an automated process but gives excellent control.

- Channel Separation: If the noise is predominantly on one channel in a stereo recording, attenuating that channel relative to the other can help improve the signal-to-noise ratio. This is only suitable for situations where the noise is not present across both channels.

For instance, when working with a recording containing a consistent hum, I might employ a notch filter to specifically target that frequency. Alternatively, if the noise is more random, I might use a noise reduction plugin, carefully balancing noise reduction with retaining the natural character of the original recording. One example was cleaning up an old podcast recording, where a noise reduction plugin made the voice noticeably clearer.
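A notch filter for hum removal can be sketched as a single biquad, again using the Audio EQ Cookbook coefficient formulas. The function name, the default-free signature, and the example values (50Hz, Q of 10) are assumptions for illustration; mains hum is 50Hz or 60Hz depending on region and usually brings harmonics that need their own notches.

```python
import numpy as np


def notch_filter(x, f0, q, sr):
    """Remove a narrow band around f0 Hz with a second-order notch."""
    w0 = 2 * np.pi * f0 / sr
    alpha = np.sin(w0) / (2 * q)
    a0 = 1 + alpha
    b0, b1, b2 = 1 / a0, -2 * np.cos(w0) / a0, 1 / a0
    a1, a2 = -2 * np.cos(w0) / a0, (1 - alpha) / a0

    y = np.zeros(len(x))
    x1 = x2 = y1 = y2 = 0.0  # previous inputs and outputs
    for n, xn in enumerate(x):
        yn = b0 * xn + b1 * x1 + b2 * x2 - a1 * y1 - a2 * y2
        y[n] = yn
        x2, x1 = x1, xn
        y2, y1 = y1, yn
    return y
```

A higher `q` makes the notch narrower, which protects neighbouring frequencies but takes longer to settle and is less forgiving if the hum drifts in pitch.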

Q 14. Explain your experience with different noise reduction algorithms (e.g., spectral subtraction, Wiener filtering).

I have extensive experience with various noise reduction algorithms. Each has strengths and weaknesses:

- Spectral Subtraction: This technique estimates the noise spectrum from silent sections of the audio and subtracts it from the entire recording. It’s relatively simple but can introduce artifacts like ‘musical noise’ (a warbling, tonal residue) if not carefully applied. It’s often used as a starting point, as it can reduce the most obvious and consistent noise, after which more nuanced noise reduction methods are used.

- Wiener Filtering: A more sophisticated method that uses statistical analysis to estimate the signal and noise components in each frequency band. It’s more accurate and less prone to artifacts than spectral subtraction, but it’s computationally more intensive. It’s very effective for dealing with a low level of constant noise across the spectrum. This method frequently gives more realistic results than spectral subtraction.

- Adaptive Noise Reduction: This method adapts to changes in the noise characteristics throughout the recording, providing better performance on recordings where noise varies over time. This method gives excellent results but it can require significant processing power.

The choice of algorithm depends on the type of noise and desired result. For a recording with consistent hiss, Wiener filtering might be preferred. For a recording with varying noise levels, adaptive noise reduction might be a better choice. It’s important to carefully monitor the results to avoid artifacts and to avoid over-processing and causing unnatural sounds.
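The core of a Wiener-style noise reducer is a per-bin gain of SNR / (1 + SNR). The sketch below estimates the SNR in the simplest possible way, by subtracting the noise power from the noisy power in each bin; that estimator, the function name, and the `min_gain` parameter are assumptions for illustration, and practical implementations smooth the SNR estimate over time to reduce artifacts.

```python
import numpy as np


def wiener_gain(noisy_power, noise_power, min_gain=0.0):
    """Per-bin Wiener gain from noisy-signal power and estimated noise power."""
    noisy_power = np.asarray(noisy_power, dtype=float)
    noise_power = np.asarray(noise_power, dtype=float)
    # Crude SNR estimate: whatever power exceeds the noise is treated as signal
    snr = np.maximum(noisy_power - noise_power, 0.0) / np.maximum(noise_power, 1e-12)
    return np.maximum(snr / (1.0 + snr), min_gain)


# A bin with four times the noise power keeps 75% of its amplitude;
# bins at or below the noise estimate are suppressed.
print(wiener_gain([4.0, 1.0, 0.5], [1.0, 1.0, 1.0]))  # [0.75 0.   0.  ]
```

The gain is multiplied onto each bin of the short-time spectrum before the inverse transform, in the same place where spectral subtraction applies its own gain.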

Q 15. How do you handle phase cancellation issues during mixing?

Phase cancellation is a destructive interference that occurs when two or more sound waves with the same frequency are out of phase. This results in a reduction in overall volume, or even complete silence, at certain frequencies. It’s a common problem when layering multiple tracks, especially when using multiple microphones or processing techniques that introduce phase shifts.

Handling phase cancellation involves several strategies:

- Monitor phase correlation: Many DAWs offer phase meters or correlation displays which visually represent the phase relationship between signals. If you see a significant negative correlation, it suggests phase cancellation.

- Position microphones carefully: Maintaining consistent distance and angle relative to the sound source minimizes phase issues. If multiple mics are employed (e.g., in stereo recording), using the Mid-Side technique helps avoid problems by encoding the sound in ways that separate the monophonic and stereo information.

- Realign signals: Using phase correction plugins or applying subtle delays can help. These plugins analyze the phase difference and attempt to compensate.

- Experiment with panning: This can often mitigate the impact by spatially separating the conflicting frequencies.

In essence, a good audio engineer is constantly listening for and adjusting for these phase issues. For example, during a drum recording, I might find the kick drum and snare drum are cancelling each other out in the low end. By slightly adjusting the placement of the mics or utilizing a phase correction plugin, I’ll be able to restore the low frequency body.
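The number shown on a correlation meter can be approximated as the normalized correlation between two signals, which runs from +1 (in phase) through 0 to -1 (polarity inverted). The sketch below is a whole-clip version with a hypothetical function name; real meters compute this over a short sliding window.

```python
import numpy as np


def phase_correlation(a, b):
    """Normalized correlation of two equal-length signals, in [-1, 1]."""
    denom = np.sqrt(np.sum(a ** 2) * np.sum(b ** 2))
    return float(np.sum(a * b) / denom) if denom > 0 else 0.0


t = np.arange(4800) / 48000.0
mic_a = np.sin(2 * np.pi * 100 * t)
mic_b = -mic_a  # same signal with inverted polarity

print(round(phase_correlation(mic_a, mic_a), 3))  # 1.0
print(round(phase_correlation(mic_a, mic_b), 3))  # -1.0
print(float(np.max(np.abs(mic_a + mic_b))))       # 0.0, the mono sum cancels
```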

Q 16. What is the importance of proper gain staging in audio production?

Proper gain staging is the art of setting appropriate signal levels at each stage of the audio production process. It’s fundamentally important for achieving optimal sound quality and preventing distortion or unwanted noise. Think of it like building with LEGOs: if you start by placing too many large blocks before building a base, the structure becomes unstable. Similarly, if you start with too much gain, the signal may clip and lose fidelity.

The benefits of proper gain staging are numerous: it prevents clipping (a type of distortion that occurs when a signal exceeds the maximum amplitude), maximizes the dynamic range of your recordings, reduces the likelihood of background noise becoming audible, and makes mixing and mastering easier. A well-gain-staged project usually requires only minimal adjustments later on. I always start by setting input gain levels appropriately, ensuring the signal is strong enough while averaging around -18dBFS, with peaks well below 0dBFS, before applying any processing. Then I continue to stage the gain levels of each effect and track, leaving enough headroom to avoid clipping further down the line. Imagine recording a vocalist: if the initial recording level is too low, the recording will be noisy. Conversely, if it’s too high, the recording will be distorted. A good engineer anticipates these scenarios and controls the gain levels proactively.
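The levels discussed above can be measured directly from the samples. The sketch below assumes floating-point audio where full scale is 1.0 and uses the plain mathematical RMS, so a full-scale sine reads about -3dBFS (some metering standards define RMS so that it reads 0dBFS instead). The function names and the -18dBFS default target are assumptions for illustration.

```python
import numpy as np


def peak_dbfs(x):
    """Highest absolute sample value, in dB relative to full scale (1.0)."""
    return 20 * np.log10(max(np.max(np.abs(x)), 1e-12))


def rms_dbfs(x):
    """Root-mean-square level, in dB relative to full scale (1.0)."""
    return 20 * np.log10(max(np.sqrt(np.mean(x ** 2)), 1e-12))


def gain_to_target_db(x, target_rms_dbfs=-18.0):
    """Gain in dB that would bring the clip's RMS level to the target."""
    return target_rms_dbfs - rms_dbfs(x)


t = np.arange(48000) / 48000.0
sine = np.sin(2 * np.pi * 1000 * t)
print(round(peak_dbfs(sine), 2), round(rms_dbfs(sine), 2))  # 0.0 -3.01
```

The gap between the peak and RMS readings is the crest factor, which is why a track can average -18dBFS and still need headroom for its peaks.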

Q 17. Explain your familiarity with various audio file formats (WAV, AIFF, MP3).

I’m very familiar with several audio file formats. WAV (Waveform Audio File Format) and AIFF (Audio Interchange File Format) typically store uncompressed PCM audio and so retain high audio quality. This makes them ideal for archiving and for early stages of audio production when you want to avoid any loss of information. They are lossless, meaning no audio data is discarded during encoding. The downside is that the files are large.

MP3 (MPEG Audio Layer III) is a compressed format, which significantly reduces file sizes but at the cost of some audio quality loss. The compression process removes some audio data that is deemed less perceptible to the human ear. The level of compression can be adjusted which affects the file size and quality. This makes MP3 a popular format for distribution and streaming applications but usually is avoided in professional mixing and mastering where preserving every nuance is crucial. Selecting the right file format depends on the context: If I’m archiving high-quality recordings for a client, WAV or AIFF are a must. If I’m creating a compressed version for streaming, MP3 is appropriate but I always keep a high resolution original copy.
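The size difference is easy to estimate from first principles. The sketch below assumes CD-quality PCM (44.1kHz, 16-bit, stereo) and a constant-bitrate MP3 at 128kbps; the function names and defaults are illustrative, and file headers and metadata are ignored.

```python
def pcm_bytes(seconds, sample_rate=44100, bit_depth=16, channels=2):
    """Approximate size of uncompressed PCM audio (WAV/AIFF payload)."""
    return int(seconds * sample_rate * (bit_depth // 8) * channels)


def cbr_bytes(seconds, kbps=128):
    """Approximate size of a constant-bitrate compressed stream."""
    return int(seconds * kbps * 1000 / 8)


print(pcm_bytes(60))  # 10584000 bytes, about 10.6 MB per minute
print(cbr_bytes(60))  # 960000 bytes, just under 1 MB per minute
```

Under these assumptions the uncompressed file is roughly eleven times larger, which is the trade-off behind keeping WAV or AIFF masters and distributing compressed copies.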

Q 18. What are the differences between linear and non-linear audio editing?

Linear audio editing works like a tape recorder: audio is edited sequentially, in the order it will be heard. A change in the middle of a recording typically means re-recording or physically re-splicing the material around it, so edits are destructive and time-consuming. It is a simple, intuitive approach but lacks the flexibility of non-linear editing.

Non-linear audio editing provides the flexibility to edit audio in any order, regardless of its position within the timeline. You can manipulate sections without affecting other sections, insert new audio, or rearrange segments without re-recording. Digital audio workstations (DAWs) use non-linear editing, providing tools such as cut, copy, paste, move, and adjust volume and pitch independently on various sections of the audio. Non-linear editing revolutionized audio production; it allows for greater creative freedom and efficiency, especially when working on complex projects. For instance, I can easily remove a short section of noise within a track and then seamlessly close that gap using non-linear editing, something impossible with a linear approach.

Q 19. What is your experience with different DAWs (Digital Audio Workstations)?

My experience with DAWs is extensive, encompassing Pro Tools, Logic Pro X, Ableton Live, and Cubase. I’m proficient in using them for all aspects of audio production, including recording, editing, mixing, and mastering. Each DAW has its own strengths and weaknesses; for instance, Pro Tools remains the industry standard, especially for film and television, while Ableton Live excels in live performance and electronic music production. My preference often depends on the project’s requirements and personal workflow preferences. However, I can transition between different DAWs easily as the core principles of audio engineering remain consistent.

My expertise extends to using advanced features such as automation, MIDI editing, and sophisticated plugin management. I’m comfortable setting up complex routing configurations, using external hardware, and integrating various technologies within the DAW environment. I’ve used these DAWs for a wide variety of projects, ranging from solo acoustic recordings to complex orchestral scores.

Q 20. Describe your experience using audio plugins (e.g., compressors, EQs, reverbs).

I have extensive experience using a wide range of audio plugins, including compressors, EQs (Equalizers), and reverbs. These plugins are essential tools for shaping and enhancing the sound. Compressors control dynamics by reducing the difference between loud and soft parts of the audio signal, making it more even. EQs adjust the balance of different frequencies, allowing you to boost or cut specific frequencies to improve clarity and presence. Reverbs simulate the effect of sound reflecting in a space, adding depth and ambience. Many other plugins are used to enhance the sound as well.

My familiarity extends to advanced plugin features, including sidechaining (a technique that uses one signal to control another’s dynamics), dynamic EQ (equalization that adapts to the dynamics of the audio), and parallel processing (applying effects to a copy of the signal and blending it with the original). For example, I might use a compressor to control the dynamics of a vocal track, then use an EQ to sculpt the frequency response, and finally add a touch of reverb to enhance the ambience. This layered approach provides subtle yet significant enhancements. Understanding the intricacies of each plugin and how they interact with each other is key to effective audio enhancement.

Q 21. How do you ensure consistency in audio levels across different tracks?

Maintaining consistent audio levels across different tracks is crucial for a balanced and professional-sounding mix. Inconsistent levels can lead to listener fatigue, or certain instruments dominating the mix. This is addressed through a combination of techniques.

Firstly, accurate gain staging during recording and tracking sets the foundation for consistent levels. Secondly, using a metering plugin allows precise monitoring of peak and RMS (Root Mean Square) levels across all tracks. This allows me to identify tracks with inconsistent levels. Thirdly, applying gain adjustments in the mixer ensures levels are balanced, with careful attention paid to the overall dynamics of the song. Furthermore, reference tracks are frequently employed to compare levels against professional productions with a similar style. This allows me to adjust levels within a familiar dynamic range. Finally, automation allows for dynamic changes to track levels throughout the song to maintain consistency and avoid any sudden jumps or dips.

For example, when mixing a band recording, I’ll use a peak and RMS level meter to set each track to a suitable level relative to other tracks. Then, using automation I’ll adjust the levels at different points during the song, like the verse vs. the chorus, ensuring that the overall balance and consistency are maintained across different sections.

Q 22. What are your strategies for managing large audio projects?

Managing large audio projects requires a structured approach. Think of it like conducting an orchestra – each instrument (audio track) needs careful attention and placement. My strategy involves meticulous organization, utilizing Digital Audio Workstations (DAWs) effectively, and leveraging automation where possible.

- Project File Management: I create a clear folder structure, separating stems, mixes, masters, and metadata. This ensures easy navigation and prevents file loss. I use descriptive file names to avoid confusion.

- Session Management: Within my DAW (typically Pro Tools or Logic Pro), I employ techniques like color-coding tracks, creating groups and busses, and using track templates to streamline workflow. I save incrementally numbered versions of the session at regular intervals so that earlier states can be recovered.

- Automation and Templates: I create and reuse automation clips for repetitive tasks like volume adjustments or effects automation. I also use project templates to maintain consistency across projects and save time on setup.

- Collaboration Tools: For collaborative projects, I use cloud-based solutions like Dropbox or Google Drive to facilitate easy sharing and version control. Communication tools like Slack or dedicated project management software are essential for seamless communication with clients and team members.

For example, on a recent documentary project with hundreds of audio clips, using a color-coded system and creating dedicated busses for dialogue, sound effects, and music was crucial for maintaining clarity and efficiency during the mix.

Q 23. Describe your experience working with different types of microphones and their polar patterns.

My experience with microphones spans various types, from condenser mics for capturing delicate details to dynamic mics for handling high sound pressure levels. Understanding polar patterns is key to microphone selection.

- Cardioid: This pattern, common in vocal mics, captures sound primarily from the front, rejecting sound from the sides and rear, minimizing background noise. For example, a Shure SM7B is a great choice for podcasts due to its excellent cardioid pattern.

- Omnidirectional: Picking up sound equally from all directions, these mics are useful for ambient recordings or situations requiring a wide sound field, such as recording a room’s atmosphere. Measurement microphones and many lavalier microphones use this pattern, and it is a common option on small-diaphragm condensers.

- Figure-8 (Bidirectional): This captures sound equally from the front and rear, rejecting the sides. It’s often used in stereo recording techniques to create a wider soundscape. Ribbon microphones are a classic example.

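- Hypercardioid/Supercardioid: These patterns are narrower than cardioid, rejecting more sound from the sides, but they have a small lobe of sensitivity directly behind the microphone. They are useful in live sound situations or environments with significant ambient noise, provided unwanted sources are not placed directly to the rear.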

I carefully choose microphone types based on the application. For example, a shotgun microphone (typically supercardioid or lobar in pattern) would be well suited to location sound recording, while a cardioid large-diaphragm condenser would be appropriate for studio vocal recordings.
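The patterns above can be compared numerically using the standard first-order model, in which sensitivity at angle θ is `a + (1 - a) * cos(θ)` and `a` is the omnidirectional share. The sketch below uses idealized textbook values for `a`; real microphones deviate from these, especially at high frequencies, and shotgun microphones with interference tubes are not captured by this model.

```python
import math

# First-order polar patterns: sensitivity(theta) = a + (1 - a) * cos(theta),
# where a is the omnidirectional share. Values are textbook idealizations.
PATTERNS = {
    "omnidirectional": 1.0,
    "cardioid": 0.5,
    "supercardioid": 0.37,
    "hypercardioid": 0.25,
    "figure-8": 0.0,
}

def sensitivity_db(a, angle_deg):
    """Relative pickup in dB (0 dB = on-axis) at the given angle off-axis."""
    s = abs(a + (1 - a) * math.cos(math.radians(angle_deg)))
    return 20 * math.log10(s) if s > 1e-9 else float("-inf")

for name, a in PATTERNS.items():
    side = sensitivity_db(a, 90)
    rear = sensitivity_db(a, 180)
    print(f"{name:16s} side {side:7.1f} dB   rear {rear:7.1f} dB")
```

In this model the cardioid has its null directly behind the microphone, while the supercardioid and hypercardioid trade stronger side rejection for some pickup at the rear, which matters when positioning stage monitors.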

Q 24. What is the concept of psychoacoustics and its relevance in audio enhancement?

Psychoacoustics is the study of the perception of sound. It’s crucial in audio enhancement because it helps us understand how humans hear and perceive audio signals. This allows us to make informed decisions about audio processing to achieve a desired effect, rather than relying solely on technical measurements.

- Loudness Perception: Our perception of loudness isn’t linear; it’s roughly logarithmic and also varies with frequency. Loudness measurements in LUFS (Loudness Units relative to Full Scale) account for this and help keep perceived loudness consistent across different playback systems.

- Frequency Masking: Louder sounds can mask quieter sounds in nearby frequencies. Understanding this helps in decisions about EQ and dynamic processing to reveal details without creating harshness.

- Spatial Perception: Our brains use subtle cues like interaural time differences (ITDs) and interaural level differences (ILDs) to locate sounds. This is vital in surround sound mixing and spatial audio processing.

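- Haas Effect (Precedence Effect): When a near-identical copy of a sound arrives shortly after the original (within roughly 30 to 40 ms), we hear the two as a single sound located in the direction of the first arrival, and the delayed copy adds fullness rather than a distinct echo. This knowledge is important when choosing reverb pre-delay and delay settings, and it influences how we hear sounds in reflective spaces.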

For instance, applying psychoacoustic principles when mastering a track helps the final mix sound balanced and full across a range of playback systems. Understanding masking allows for subtle EQ adjustments to create clarity, avoiding unnecessary processing.
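To give a sense of scale for the interaural time differences mentioned above, here is a small sketch using Woodworth's spherical-head approximation, `ITD = (r / c) * (θ + sin θ)`. The head radius and speed of sound are assumed typical values, and the formula is a simplification that ignores frequency dependence and individual head shape.

```python
import math

HEAD_RADIUS_M = 0.0875     # assumed average head radius
SPEED_OF_SOUND_M_S = 343   # in air at about 20 degrees Celsius

def itd_seconds(azimuth_deg):
    """Woodworth's approximation, intended for azimuths from 0 to 90 degrees."""
    theta = math.radians(azimuth_deg)
    return (HEAD_RADIUS_M / SPEED_OF_SOUND_M_S) * (theta + math.sin(theta))

for azimuth in (0, 30, 60, 90):
    itd = itd_seconds(azimuth)
    print(f"{azimuth:3d} deg: {itd * 1e6:6.1f} us  ({itd * 48000:5.1f} samples at 48 kHz)")
```

Under these assumptions a source directly to one side produces a difference of about 0.66 ms, which is only a few dozen samples at 48 kHz. That is why small delays between channels are enough to shift where a sound appears to come from.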

Q 25. Explain your understanding of audio metering and its importance.

Audio metering is the process of measuring various aspects of an audio signal. It’s essential for ensuring your audio is within acceptable levels and free of distortion. It’s like having a quality control system for sound.

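- Level Meters (VU, PPM): Measure the level of the audio signal. VU meters respond slowly and indicate average level, while PPMs (peak programme meters) and digital peak meters track peaks and help prevent clipping (distortion caused by exceeding the maximum signal level).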

- Loudness Meters (LUFS, LKFS): Measure the perceived loudness of the audio, ensuring consistent loudness across different platforms and devices.

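- Frequency Analyzers (Spectrum Analyzer, Spectrogram): Display the frequency content of the audio, either at a given moment (spectrum analyzer) or as it changes over time (spectrogram), revealing potential issues like muddiness or harshness. This aids in EQ adjustments.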

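- Correlation Meters: Measure the correlation between two signals, typically the left and right channels, on a scale from +1 (identical) to -1 (polarity-inverted). They are useful for identifying phase issues and cancellation that would appear when the mix is summed to mono.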

For instance, a frequency analyzer can show where muddiness builds up in the low and low-mid frequencies, guiding the EQ processing. Monitoring LUFS levels helps ensure the finished product meets the loudness targets for broadcast or streaming services.
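As an illustration of what a correlation meter reports, the sketch below computes a normalized correlation between two channels. It is a simplified whole-buffer calculation under assumed test signals; real meters typically compute this over a short sliding window so the reading follows the program material.

```python
import math

def correlation(left, right):
    """Normalized correlation: +1 identical, 0 unrelated, -1 polarity-inverted."""
    num = sum(l * r for l, r in zip(left, right))
    den = math.sqrt(sum(l * l for l in left) * sum(r * r for r in right))
    return num / den if den > 0 else 0.0

# Hypothetical test signals: 10 full cycles of a 100 Hz tone at 48 kHz.
SAMPLE_RATE = 48000
N = 4800
tone = [math.sin(2 * math.pi * 100 * n / SAMPLE_RATE) for n in range(N)]
inverted = [-s for s in tone]
quadrature = [math.cos(2 * math.pi * 100 * n / SAMPLE_RATE) for n in range(N)]

print(f"identical:  {correlation(tone, tone):+.2f}")
print(f"inverted:   {correlation(tone, inverted):+.2f}")
print(f"quadrature: {correlation(tone, quadrature):+.2f}")
```

Readings that sit near or below zero for sustained periods suggest that the channels will partially cancel when summed to mono.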

Q 26. What are your preferred methods for delivering final audio mixes and masters?

The preferred method for delivering final audio mixes and masters depends on the project and client requirements, but high-quality, lossless formats are paramount.

- WAV (Waveform Audio File Format): A common, lossless format for high-fidelity audio. Suitable for archiving and mastering.

- AIFF (Audio Interchange File Format): Another lossless format, similar to WAV, used primarily on Apple platforms. Excellent for preserving audio quality.

- Mastering-Ready Files: These files are usually lossless (WAV or AIFF) and are delivered with comprehensive metadata (artist, title, album, genre, etc.) in accordance with industry standards.

- Delivery Platforms: Depending on the client’s specifications, I use platforms like Dropbox, WeTransfer, or dedicated collaboration services such as Soundtrap.

I always confirm with the client the preferred delivery method and format, ensuring it’s compatible with their workflow and archiving system. Providing clear metadata with the final files is extremely important.
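Before sending files, it can help to verify that each one matches the agreed specification. The sketch below uses Python's standard `wave` module to compare a WAV file's sample rate, bit depth, and channel count against expected values. The function names and the example spec are hypothetical, and the test file is generated in memory purely so the example is self-contained.

```python
import io
import math
import struct
import wave

def write_test_wav(fileobj, sample_rate=48000, seconds=0.1):
    """Write a short mono 16-bit PCM sine so the check below has input."""
    frames = bytearray()
    for n in range(int(sample_rate * seconds)):
        value = int(0.5 * 32767 * math.sin(2 * math.pi * 440 * n / sample_rate))
        frames += struct.pack("<h", value)
    with wave.open(fileobj, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)  # bytes per sample, so 16-bit
        wav.setframerate(sample_rate)
        wav.writeframes(bytes(frames))

def check_delivery_spec(fileobj, expected_rate, expected_bits, expected_channels):
    """Return a list of mismatches between the file and the agreed spec."""
    with wave.open(fileobj, "rb") as wav:
        actual = {
            "sample rate": wav.getframerate(),
            "bit depth": wav.getsampwidth() * 8,
            "channels": wav.getnchannels(),
        }
    expected = {
        "sample rate": expected_rate,
        "bit depth": expected_bits,
        "channels": expected_channels,
    }
    return [
        f"{key}: expected {expected[key]}, got {actual[key]}"
        for key in expected
        if expected[key] != actual[key]
    ]

buffer = io.BytesIO()
write_test_wav(buffer)
buffer.seek(0)
problems = check_delivery_spec(buffer, expected_rate=48000, expected_bits=24, expected_channels=2)
print(problems or "file matches the delivery spec")
```

With a real deliverable, a file path can be passed in place of the in-memory buffer. Note that the `wave` module reads PCM WAV files only, so AIFF or floating-point WAV files would need a different tool.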

Q 27. Describe a time you had to troubleshoot a complex audio problem. What was your approach?

During a live recording session, we encountered significant feedback issues from a monitor speaker. The initial approach of reducing the microphone gain resulted in a weak signal and a lack of dynamic range.

My systematic troubleshooting process involved:

- Isolation: We systematically identified the problematic microphone(s) by testing them individually, proving the feedback wasn’t solely due to overall gain levels.

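- Phase and Polarity Check: I checked the polarity and relative placement of the monitor speakers and microphones. Feedback builds at frequencies where the monitor’s sound arrives back at the microphone in phase with the original and the loop gain exceeds unity, so these relationships influence which frequencies ring.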

- Equalization (EQ): Using a graphic EQ on the monitor’s signal, I specifically attenuated (reduced the gain) at the resonant frequencies causing the feedback. This solved the issue without sacrificing the overall sound quality.

- Mic Placement: I strategically repositioned the microphone, maximizing its distance from the monitor speakers while maintaining desirable proximity to the sound source.

By combining techniques and methodically eliminating potential causes, we successfully resolved the issue without significant time loss or compromise to the quality of the recording. It was a great demonstration of how a detailed understanding of acoustic principles is paramount in solving audio challenges.
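The EQ step relies on knowing which frequency is ringing. In practice this is done by ear or with a real-time analyzer, but the underlying idea can be sketched as finding the strongest bin in a frequency transform. The example below is a simplified illustration with an assumed synthetic signal and a naive DFT; real analyzers use a windowed FFT on live input.

```python
import cmath
import math

def dominant_frequency(samples, sample_rate):
    """Return the frequency (Hz) of the strongest DFT bin, ignoring DC."""
    n = len(samples)
    best_bin, best_mag = 0, 0.0
    for k in range(1, n // 2):
        acc = sum(s * cmath.exp(-2j * math.pi * k * i / n) for i, s in enumerate(samples))
        if abs(acc) > best_mag:
            best_bin, best_mag = k, abs(acc)
    return best_bin * sample_rate / n

# Hypothetical capture: quiet program material at 500 Hz plus a loud ring at 2500 Hz.
SAMPLE_RATE = 8000
N = 512
capture = [
    0.3 * math.sin(2 * math.pi * 500 * i / SAMPLE_RATE)
    + 1.0 * math.sin(2 * math.pi * 2500 * i / SAMPLE_RATE)
    for i in range(N)
]

ring_hz = dominant_frequency(capture, SAMPLE_RATE)
print(f"strongest component near {ring_hz:.0f} Hz; cut the nearest graphic EQ band")
```

The frequency resolution here is the sample rate divided by the buffer length (about 15.6 Hz), which is finer than the bands of a typical graphic EQ, so the result only needs to identify the nearest band.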

Q 28. How do you stay up-to-date with the latest advancements in audio technology?

Staying current in audio technology is vital. My approach is multifaceted:

- Industry Publications and Websites: I regularly read publications like Sound on Sound, Mix Magazine, and follow relevant websites and blogs.

- Conferences and Workshops: Attending industry conferences like AES (Audio Engineering Society) conventions provides invaluable insights and opportunities to network with other professionals.

- Online Courses and Tutorials: Platforms like Coursera, Udemy, and Skillshare offer courses on advanced audio techniques and software updates.

- Software Updates and Documentation: I stay updated on the latest features and functionalities of the software I use, carefully reading release notes and tutorials.

- Experimentation and Personal Projects: I actively engage in personal projects and experiments to explore new tools and techniques firsthand.

Continuous learning is critical; the audio world evolves rapidly. Combining various resources ensures a holistic understanding of the latest developments.

Key Topics to Learn for Audio Enhancement Techniques Interview

- Noise Reduction: Understanding various noise reduction algorithms (spectral subtraction, Wiener filtering, etc.), their strengths and weaknesses, and practical application in different audio scenarios (e.g., removing background hum from a recording, reducing wind noise in a field recording).

- Equalization (EQ): Mastering the principles of EQ, including frequency response, Q factor, gain staging, and practical application in shaping audio for clarity, warmth, and specific tonal characteristics. This includes understanding different EQ types (parametric, graphic, shelving).

- Compression and Limiting: Comprehending dynamic range control using compression and limiting, including threshold, ratio, attack, and release times. Understanding their effects on perceived loudness and audio dynamics, and practical applications in mastering, broadcast, and live sound.

- Reverb and Delay: Grasping the principles of artificial reverberation and delay, including various algorithms and their use in creating spatial depth, ambience, and special effects. Understanding the differences between convolution reverb and algorithmic reverb.

- Audio Restoration: Exploring techniques for repairing damaged or degraded audio, including click and pop removal (declicking), decrackling, and restoration of old recordings. Understanding the challenges involved and the application of specialized software.

- Spatial Audio: Exploring the fundamentals of spatial audio processing, including techniques like binaural recording, ambisonics, and object-based audio. Understanding the advantages and applications of immersive audio experiences.

- Digital Signal Processing (DSP) Fundamentals: A foundational understanding of DSP concepts relevant to audio processing, such as sampling rates, bit depth, filters (FIR, IIR), and their influence on audio quality and processing techniques.

Next Steps

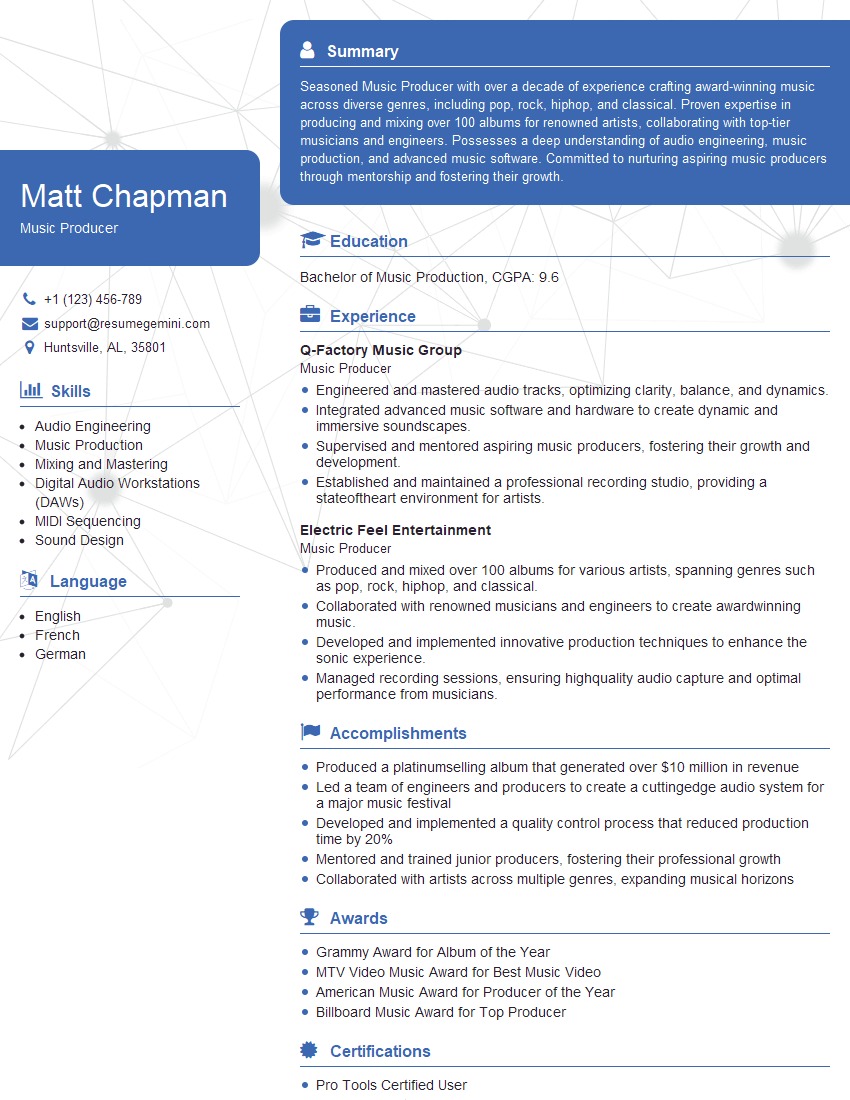

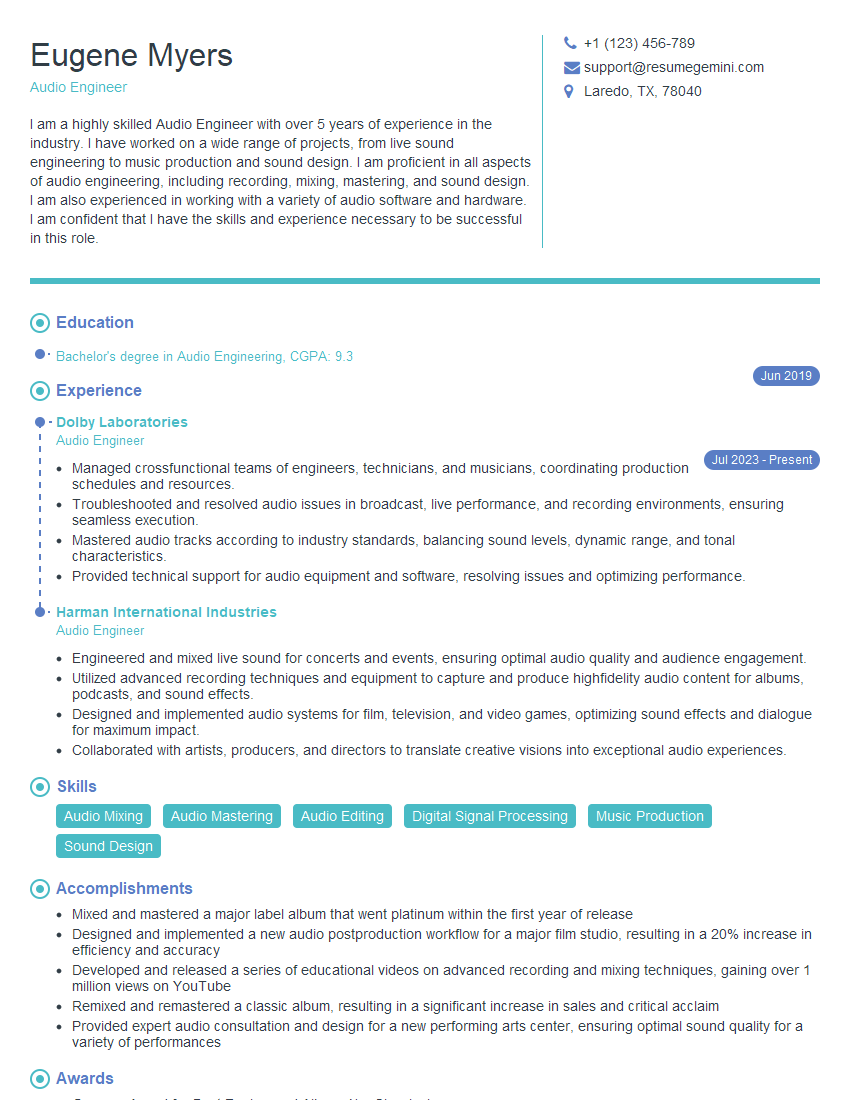

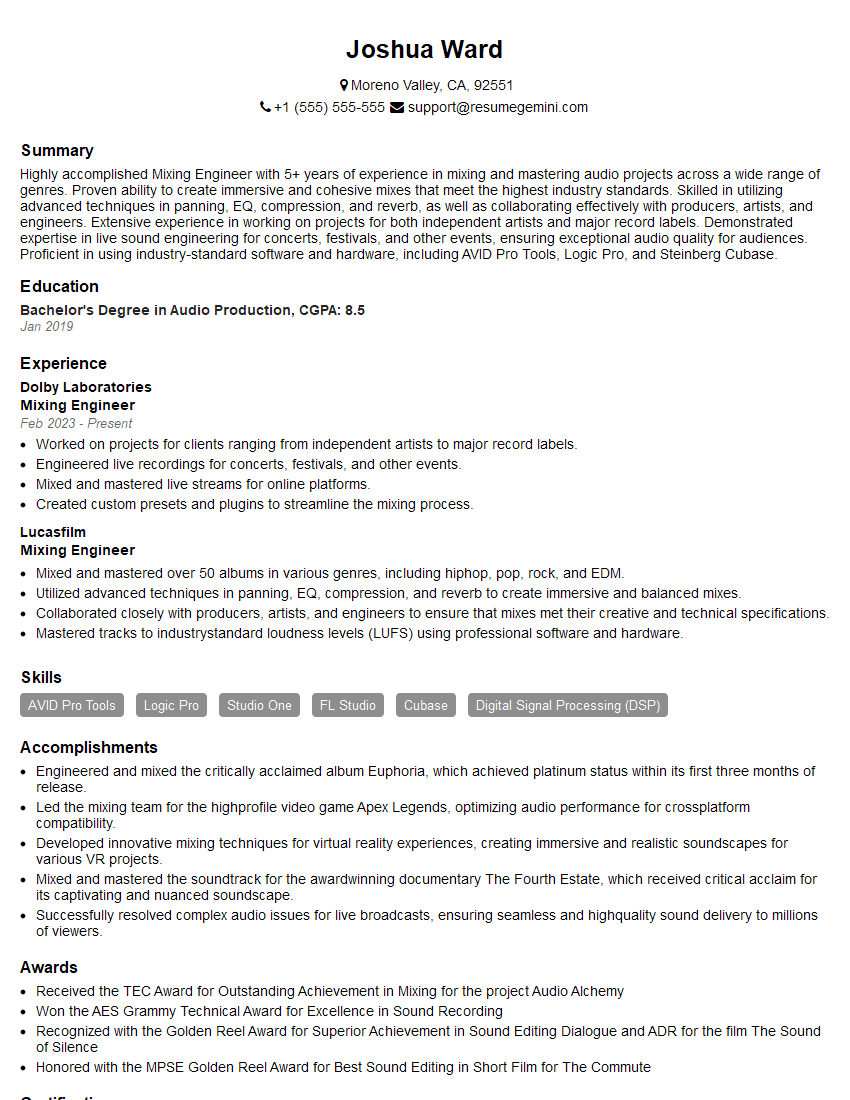

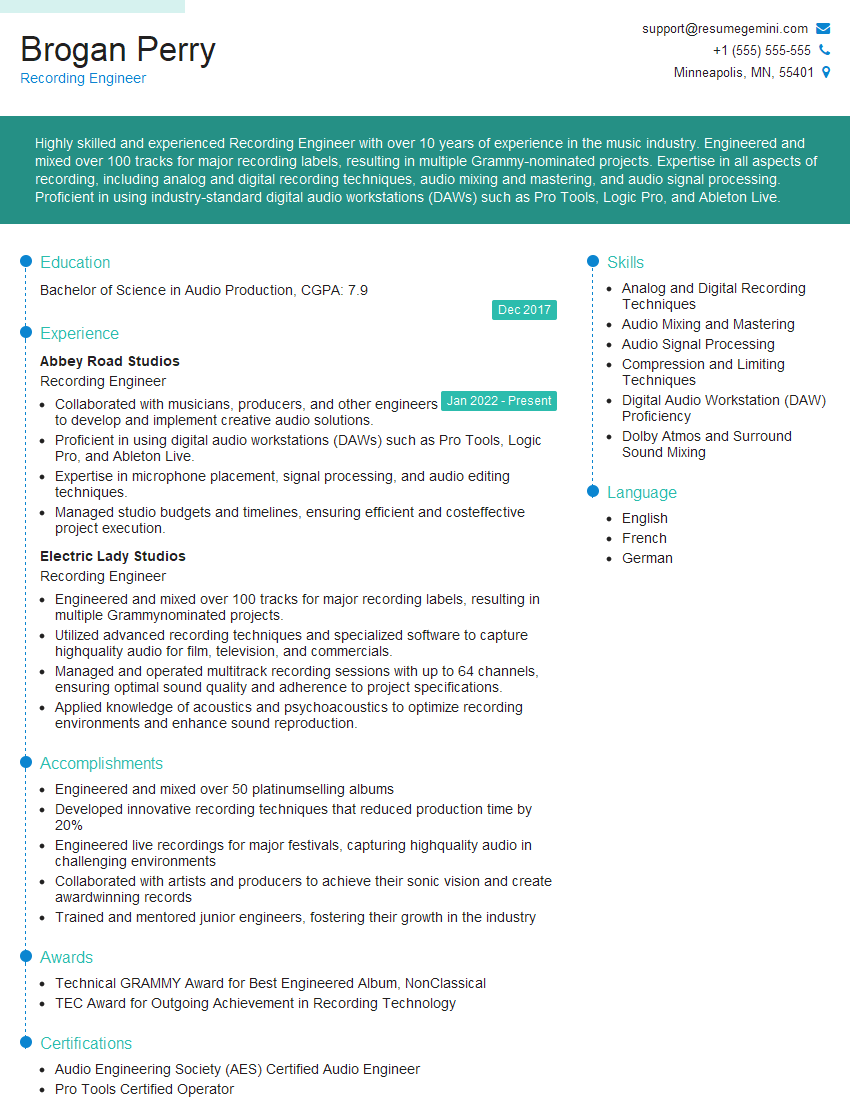

Mastering Audio Enhancement Techniques is crucial for career advancement in various audio-related fields, opening doors to exciting opportunities in music production, post-production, broadcast engineering, and more. To significantly increase your chances of landing your dream job, invest time in creating a strong, ATS-friendly resume that showcases your skills and experience effectively. ResumeGemini is a trusted resource that can help you build a professional and impactful resume tailored to your specific needs. Examples of resumes tailored to Audio Enhancement Techniques are available to help you get started. Make a powerful first impression and take control of your career journey!
