Every successful interview starts with knowing what to expect. In this blog, we’ll take you through the top Bandwidth Optimization interview questions, breaking them down with expert tips to help you deliver impactful answers. Step into your next interview fully prepared and ready to succeed.

Questions Asked in Bandwidth Optimization Interview

Q 1. Explain the difference between bandwidth and throughput.

Bandwidth and throughput are closely related but distinct concepts. Think of a highway: bandwidth represents the maximum number of lanes (capacity) available on that highway, determining the theoretical maximum data transfer rate. Throughput, on the other hand, is the actual amount of data successfully transferred over that highway at a given time. It’s the number of cars actually making it through, which can be less than the theoretical maximum due to traffic, accidents (errors), or speed limits (limitations).

For example, a network connection might have a bandwidth of 1 Gbps (gigabits per second), but the actual throughput might be only 500 Mbps due to network congestion or latency. The difference highlights the distinction between potential and realized data transfer rates.
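
As a rough illustration of the distinction, the sketch below computes throughput from a measured transfer and compares it with the nominal bandwidth. The function names and the sample transfer (625,000,000 bytes in 10 seconds) are assumptions chosen to reproduce the 500 Mbps figure above.

```python
def throughput_bps(bytes_transferred: int, seconds: float) -> float:
    """Measured throughput in bits per second."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return bytes_transferred * 8 / seconds


def link_efficiency(measured_bps: float, bandwidth_bps: float) -> float:
    """Measured throughput as a fraction of the nominal bandwidth."""
    if bandwidth_bps <= 0:
        raise ValueError("bandwidth_bps must be positive")
    return measured_bps / bandwidth_bps


# Hypothetical measurement: 625,000,000 bytes moved in 10 seconds.
measured = throughput_bps(625_000_000, 10.0)
efficiency = link_efficiency(measured, 1e9)  # nominal 1 Gbps link
print(f"{measured / 1e6:.0f} Mbps, {efficiency:.0%} of nominal bandwidth")
```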

Q 2. Describe common bandwidth bottlenecks in network infrastructure.

Bandwidth bottlenecks can arise at various points in a network infrastructure. Common culprits include:

- Overloaded Network Devices: Routers, switches, and firewalls can become bottlenecks if they lack sufficient processing power or buffer capacity to handle the volume of traffic. Imagine a single toll booth on a busy highway – it creates a choke point.

- Insufficient Link Capacity: Low-bandwidth links between network segments (e.g., legacy copper runs, older Ethernet standards, or an undersized WAN circuit) restrict the overall data flow. This is like having a narrow section of the highway that reduces overall flow.

- Wireless Interference: In wireless networks, interference from other devices operating on the same frequency can significantly reduce throughput. Think of radio waves colliding and disrupting the signal.

- Network Congestion: High traffic volume during peak hours can overwhelm network resources, leading to slowdowns and delays. This is like rush hour traffic on the highway.

- Application-Specific Bottlenecks: Certain applications might be resource-intensive, consuming disproportionate amounts of bandwidth. For example, a video streaming application can consume a lot of bandwidth, potentially impacting other applications.

Q 3. How do you identify bandwidth usage patterns and anomalies?

Identifying bandwidth usage patterns and anomalies requires a combination of monitoring tools and analysis techniques. I typically employ these methods:

- Network Monitoring Tools: Platforms like SolarWinds and PRTG provide real-time visibility into network traffic, showing which applications consume the most bandwidth and flagging unusual spikes or drops. A packet analyzer such as Wireshark complements them when I need to inspect individual flows in detail.

- Log Analysis: Examining network device logs can reveal errors, dropped packets, or other events that might indicate a bandwidth bottleneck. For instance, high error rates might point to a faulty network link.

- Performance Baselines: Establishing baseline network performance metrics allows me to compare current performance against historical data, making it easier to spot anomalies. Significant deviations from the baseline would warrant investigation.

- Traffic Analysis: Analyzing network traffic patterns can reveal recurring bottlenecks or usage peaks. This might lead to implementing solutions such as QoS policies to prioritize specific types of traffic.

For instance, a sudden surge in bandwidth usage from a particular IP address might indicate a security breach or a denial-of-service attack, prompting immediate investigation and mitigation.
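
The baseline comparison described above can be sketched as a simple threshold check. This is a minimal example, assuming bandwidth samples in Mbps and a hypothetical rule that flags anything more than three standard deviations from the baseline mean; real monitoring tools use their own, often more sophisticated, methods.

```python
from statistics import mean, stdev


def find_anomalies(baseline, current, k=3.0):
    """Return (index, value) pairs in `current` that fall outside
    mean +/- k standard deviations of the baseline samples."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # needs at least two baseline samples
    lower, upper = mu - k * sigma, mu + k * sigma
    return [(i, v) for i, v in enumerate(current) if v < lower or v > upper]


# Hypothetical samples, in Mbps.
baseline_mbps = [100, 110, 95, 105, 90, 100, 108, 92]
current_mbps = [102, 98, 250, 101]
print(find_anomalies(baseline_mbps, current_mbps))  # [(2, 250)]
```

A fixed multiplier is easy to reason about, but traffic with strong daily or weekly cycles usually needs a baseline per time window rather than one global mean.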

Q 4. What are the key performance indicators (KPIs) you monitor for bandwidth optimization?

Key Performance Indicators (KPIs) for bandwidth optimization include:

- Throughput: The actual data transfer rate, measured in bits per second (bps) or similar units. Throughput that stays well below the link’s capacity while demand is high points to a bottleneck.

- Latency: The delay experienced in data transmission. High latency can impact application responsiveness.

- Packet Loss: The percentage of data packets that are lost during transmission. High packet loss indicates network problems.

- Jitter: Variations in latency. High jitter can affect real-time applications such as VoIP or video conferencing.

- Bandwidth Utilization: The percentage of available bandwidth being used. Consistently high utilization might indicate a need for increased bandwidth.

- Error Rate: The number of errors detected during data transmission. High error rates signal underlying network issues.

By monitoring these KPIs, I can proactively identify and address potential bandwidth issues before they impact users or applications.
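
Several of these KPIs reduce to simple arithmetic on counters and latency samples. The sketch below shows one way to compute them; the function names are illustrative, and jitter is taken here as the mean absolute difference between consecutive latency samples, which is only one of several definitions in use.

```python
def utilization_pct(bits_transferred, interval_s, link_bps):
    """Share of the link's capacity used over the interval, in percent."""
    return 100.0 * bits_transferred / (interval_s * link_bps)


def packet_loss_pct(sent, received):
    """Percentage of packets that never arrived."""
    return 100.0 * (sent - received) / sent


def mean_jitter_ms(latencies_ms):
    """Mean absolute change between consecutive latency samples."""
    diffs = [abs(b - a) for a, b in zip(latencies_ms, latencies_ms[1:])]
    return sum(diffs) / len(diffs)


# Hypothetical measurements for a 1 Gbps link over one minute.
print(utilization_pct(45e9, 60, 1e9))    # 75.0
print(packet_loss_pct(1000, 990))        # 1.0
print(round(mean_jitter_ms([20, 22, 19, 25]), 2))  # 3.67
```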

Q 5. Explain your experience with Quality of Service (QoS) mechanisms.

Quality of Service (QoS) mechanisms are crucial for bandwidth optimization, especially in networks with diverse traffic types. QoS allows prioritizing specific types of traffic, ensuring that critical applications receive adequate bandwidth even during periods of high congestion. My experience involves implementing and managing various QoS techniques, including:

- Traffic Classification: Identifying and categorizing network traffic based on various criteria such as protocol, port number, or application. For example, prioritizing VoIP traffic over web browsing.

- Traffic Policing: Enforcing a rate limit on a traffic class by dropping or re-marking packets that exceed it, which prevents individual applications from consuming excessive bandwidth. Think of it as a toll gate that turns vehicles away once the hourly quota is reached.

- Traffic Shaping: Buffering traffic that exceeds a configured rate and releasing it gradually, which smooths out bursts instead of dropping them and leaves room for high-priority traffic.

- Queue Management: Managing the queues of packets waiting for transmission. Weighted Fair Queuing (WFQ) and Class-Based Queuing (CBQ) are examples of queue management algorithms.

In a previous role, I implemented QoS policies to keep video conferencing smooth during peak hours by prioritizing real-time voice and video traffic over less critical applications. This significantly improved user experience and call quality.

Q 6. Describe different traffic shaping techniques and their applications.

Traffic shaping techniques aim to manage and optimize network traffic flow by adjusting the rate and timing of data transmission. Different techniques are employed depending on the specific needs:

- Rate Limiting: Restricting the maximum transmission rate of individual users or applications. This prevents congestion caused by bandwidth hogs.

- Packet Scheduling: Prioritizing certain types of packets over others. For example, prioritizing low-latency packets for real-time applications.

- Bandwidth Allocation: Assigning specific bandwidth allocations to different users or applications. This guarantees a minimum level of bandwidth for critical applications.

- Weighted Fair Queuing (WFQ): A queue management algorithm that distributes bandwidth fairly among different traffic classes, ensuring that no single class monopolizes the network.

For example, in a hospital network, traffic shaping might prioritize medical imaging data transfer over other network activities, ensuring that critical medical procedures are not hampered by slow network speeds.
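
Rate limiting is commonly built on a token bucket. The sketch below is a minimal version, assuming a caller-supplied clock (`now`, in seconds) so the behavior is deterministic; the class name and parameters are illustrative. As written it behaves like a policer, because non-conforming packets are rejected; a shaper would queue them and send them once enough tokens accumulate.

```python
class TokenBucket:
    """Token bucket: tokens (bits) refill at rate_bps up to burst_bits."""

    def __init__(self, rate_bps: float, burst_bits: float):
        self.rate_bps = rate_bps
        self.capacity = burst_bits
        self.tokens = burst_bits  # start with a full bucket
        self.last = 0.0           # time of the previous check

    def allow(self, packet_bits: float, now: float) -> bool:
        # Refill according to elapsed time, capped at the burst size.
        elapsed = now - self.last
        self.last = now
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate_bps)
        if packet_bits <= self.tokens:
            self.tokens -= packet_bits
            return True
        return False  # over the limit: drop (policer) or queue (shaper)


# Hypothetical 1 Mbps limit with a burst of one 1500-byte packet.
bucket = TokenBucket(rate_bps=1_000_000, burst_bits=12_000)
print(bucket.allow(12_000, now=0.0))    # True: the bucket starts full
print(bucket.allow(12_000, now=0.001))  # False: only ~1,000 bits refilled
print(bucket.allow(12_000, now=0.02))   # True: the bucket has refilled
```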

Q 7. How do you implement and manage bandwidth throttling?

Bandwidth throttling intentionally reduces the bandwidth available to specific users or applications. It’s a tool used to manage network resources, prevent abuse, and ensure fair usage. Implementing and managing bandwidth throttling involves these steps:

- Identify Targets: Determine which users or applications require throttling based on their usage patterns and impact on network performance.

- Set Thresholds: Define the bandwidth limits to be applied. These limits should be appropriate for the targeted users or applications.

- Configure Throttling Mechanisms: Employ network devices or software capable of implementing bandwidth throttling policies, such as routers, firewalls, or dedicated bandwidth management tools.

- Monitor and Adjust: Continuously monitor the impact of throttling on network performance and user experience. Adjust the thresholds as needed to optimize network resource utilization and ensure fairness.

For example, an internet service provider might throttle bandwidth for users exceeding their data caps, preventing them from unduly impacting the network. Proper monitoring is crucial to avoid unintended consequences.

Q 8. What are your experiences with network monitoring tools (e.g., PRTG, SolarWinds)?

I have extensive experience with network monitoring tools like PRTG and SolarWinds, and I’ve used them in various roles to proactively identify and resolve bandwidth issues. PRTG, for example, is excellent for its comprehensive sensor capabilities, allowing me to monitor everything from bandwidth utilization on individual interfaces to the overall health of network devices. I’ve used its reporting features to create customized dashboards illustrating bandwidth trends, which proved invaluable in capacity planning. With SolarWinds, I’ve particularly appreciated its ability to provide deeper insights into network performance, including identifying bottlenecks through its application performance monitoring features. In one project, SolarWinds helped pinpoint a specific application causing significant bandwidth spikes during peak hours, allowing us to implement targeted optimization strategies.

For instance, using PRTG’s interface monitoring, I once discovered a single, misconfigured server was sending out unnecessary broadcasts, consuming a significant portion of our network bandwidth. By adjusting the server’s settings and implementing better network segmentation, we freed up considerable bandwidth and improved overall network performance.

Q 9. Explain your understanding of TCP/IP and its impact on bandwidth.

TCP/IP is the fundamental communication protocol suite for the internet. Think of it as the set of rules that govern how data travels across networks. TCP (Transmission Control Protocol) provides a reliable, ordered, and error-checked delivery of data. It’s like sending a registered letter – you know it will arrive, in the correct order, and that you’ll get confirmation of its delivery. IP (Internet Protocol) handles the addressing and routing of data packets across networks. It’s like the address on the envelope, ensuring the data reaches the correct destination.

TCP’s reliability comes at a cost: the receiver must acknowledge the data it receives (acknowledgments are cumulative, so one ACK can cover several segments), which adds overhead and ties the sending rate to the round-trip time. If there are network issues or congestion, TCP retransmits lost segments and reduces its sending rate, further lowering throughput. Understanding TCP’s behavior, like window size adjustments and congestion control algorithms, is crucial for optimizing bandwidth. For example, sizing the TCP window to match the path’s bandwidth-delay product can significantly improve throughput, especially on high-bandwidth, high-latency paths. Optimizing TCP settings involves balancing reliability with speed, and different strategies work best depending on the application and network conditions.
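
To make the effect of window size concrete: a sender can have at most one window of unacknowledged data in flight per round trip, so throughput is bounded by window size divided by round-trip time. The product of bandwidth and round-trip time (the bandwidth-delay product) is the window needed to fill the link. The sketch below works through that arithmetic; the 1 Gbps link and 50 ms round-trip time are assumed example values.

```python
def bdp_bytes(bandwidth_bps: float, rtt_s: float) -> float:
    """Bandwidth-delay product: bytes in flight needed to fill the link."""
    return bandwidth_bps * rtt_s / 8


def max_tcp_throughput_bps(window_bytes: float, rtt_s: float) -> float:
    """Upper bound on throughput for a given window and round-trip time."""
    return window_bytes * 8 / rtt_s


# Assumed path: 1 Gbps link with a 50 ms round-trip time.
needed = bdp_bytes(1e9, 0.050)
print(f"Window needed to fill the link: {needed / 1e6:.2f} MB")

# A 65,535-byte window (the maximum without window scaling) on that path.
capped = max_tcp_throughput_bps(65_535, 0.050)
print(f"Throughput ceiling with a 64 KB window: {capped / 1e6:.1f} Mbps")
```

On this assumed path the unscaled window caps a single connection at roughly 10.5 Mbps regardless of the 1 Gbps link, which is why window scaling matters on long, fast paths.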

Q 10. How do you optimize bandwidth for video streaming applications?

Optimizing bandwidth for video streaming requires a multi-faceted approach. The core strategies revolve around reducing the bitrate (the amount of data transmitted per second) while maintaining acceptable video quality.

- Adaptive Bitrate Streaming (ABR): This is a crucial technology. ABR dynamically adjusts the bitrate based on the available bandwidth and network conditions. If bandwidth drops, the quality reduces to avoid buffering, and improves when bandwidth increases. This ensures smooth playback even with fluctuating network conditions.

- Content Delivery Networks (CDNs): CDNs geographically distribute video content, reducing latency and improving delivery speeds. They’re like having multiple copies of a book at various libraries – the user can access the nearest copy, speeding up delivery.

- Caching: Caching video content closer to the users, on edge servers or even at the user’s device, further reduces the load on the main servers and network.

- Video Compression: Using efficient codecs like H.264 or H.265 significantly reduces the file size without drastically impacting video quality, ultimately saving bandwidth.

- Network Optimization: Implementing Quality of Service (QoS) features can prioritize video traffic over other less critical network activities, ensuring smooth playback during periods of high network usage.
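
The core decision in adaptive bitrate streaming can be sketched as picking the highest rendition that fits within the measured bandwidth. The bitrate ladder and the 0.8 safety factor below are hypothetical values for illustration; production players also account for buffer level and throughput history.

```python
# Hypothetical rendition ladder, in kbps.
BITRATE_LADDER_KBPS = [400, 1200, 2500, 5000, 8000]


def select_bitrate(measured_kbps, ladder=BITRATE_LADDER_KBPS, safety=0.8):
    """Pick the highest rendition that fits within a safety margin of the
    measured bandwidth; fall back to the lowest rendition otherwise."""
    budget = measured_kbps * safety
    candidates = [b for b in ladder if b <= budget]
    return max(candidates) if candidates else min(ladder)


print(select_bitrate(4000))   # 2500
print(select_bitrate(300))    # 400
print(select_bitrate(12000))  # 8000
```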

Q 11. How do you address bandwidth congestion in a virtualized environment?

Bandwidth congestion in virtualized environments often stems from resource contention between virtual machines (VMs). Addressing this involves a combination of monitoring, optimization, and resource management techniques.

- Monitoring Resource Utilization: Management tools like VMware vCenter, or the equivalent for other hypervisors, provide detailed insights into CPU, memory, and network usage per VM. Identifying VMs consistently consuming excessive bandwidth is the first step.

- Resource Allocation: Adjusting the virtual network interface card (vNIC) settings, for example by capping high-bandwidth VMs or reserving a minimum amount of bandwidth for critical ones, can prevent starvation of other VMs.

- Network Segmentation: Isolating VMs with high bandwidth needs onto separate virtual networks (VLANs) reduces congestion on shared networks.

- QoS Policies: Implementing QoS policies within the virtual network infrastructure prioritizes critical VM traffic over less critical applications.

- Overprovisioning: While costly, overprovisioning network resources within the virtual infrastructure provides headroom to handle unexpected bandwidth spikes.

For example, if a database VM is consistently exceeding its bandwidth allocation, causing performance issues for other VMs, adjusting its vNIC settings to a higher bandwidth reservation, or moving it to a separate VLAN, can alleviate the congestion.

Q 12. What strategies do you use to optimize bandwidth in cloud environments (e.g., AWS, Azure)?

Optimizing bandwidth in cloud environments like AWS and Azure leverages many of the same principles discussed earlier, but with cloud-specific features.

- Content Delivery Networks (CDNs): Cloud providers offer robust CDN services (e.g., Amazon CloudFront, Azure CDN) for efficient content distribution.

- Cloud-Based Load Balancers: Load balancers distribute traffic across multiple servers, preventing overload on any single instance.

- Auto-Scaling: Automatically scaling up or down the number of instances based on demand ensures sufficient resources are available to handle bandwidth spikes.

- Data Transfer Optimization: Using services that minimize data transfer costs, such as S3 storage for static content and choosing regions closest to users, helps reduce latency and costs.

- Network Performance Monitoring: Leveraging cloud provider monitoring tools allows detailed analysis of network performance, pinpointing bottlenecks and areas for optimization.

For instance, in an AWS environment, using S3 to store static assets, and configuring CloudFront to deliver them, significantly reduces load on web servers and improves user experience by utilizing a global network of edge servers for fast content delivery.

Q 13. Explain your experience with network capacity planning.

Network capacity planning is a crucial aspect of network management. It involves forecasting future network bandwidth requirements and designing a network infrastructure that can meet those demands. This is not a one-time task but rather an ongoing process of monitoring, analysis, and adjustment.

My approach begins with collecting historical data on bandwidth usage. This includes analyzing network traffic patterns, identifying peak usage times, and understanding the growth trends of different applications. Then, I project future bandwidth requirements based on anticipated growth, new applications, and changes in user behavior. This projection considers factors like the number of users, data transfer volumes, and application requirements. Finally, I design the network architecture, including selecting appropriate hardware and software, ensuring sufficient capacity to meet the forecasted needs, with built-in scalability to accommodate future growth. Regular review and adjustment of this plan based on ongoing monitoring is key to keeping the network performing optimally.
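
The projection step can be approximated with a compound-growth model. This is a simplified sketch: the 30% annual growth rate, the 80% utilization threshold, and the function names are assumptions for illustration, and real forecasts should be fitted to the historical data collected earlier.

```python
import math


def projected_peak_mbps(current_peak_mbps, annual_growth, years):
    """Peak demand after `years` of compound growth."""
    return current_peak_mbps * (1 + annual_growth) ** years


def years_until_upgrade(current_peak_mbps, link_mbps, annual_growth,
                        target_utilization=0.8):
    """Years until peak demand reaches the utilization threshold."""
    if annual_growth <= 0:
        raise ValueError("annual_growth must be positive")
    threshold = link_mbps * target_utilization
    if current_peak_mbps >= threshold:
        return 0.0
    return math.log(threshold / current_peak_mbps) / math.log(1 + annual_growth)


# Hypothetical site: 400 Mbps peak today on a 1 Gbps link, growing 30% a year.
print(f"{projected_peak_mbps(400, 0.30, 3):.1f} Mbps peak in 3 years")
print(f"{years_until_upgrade(400, 1000, 0.30):.1f} years until 80% utilization")
```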

Q 14. How do you handle bandwidth issues during peak usage times?

Handling bandwidth issues during peak usage times requires proactive strategies and a layered approach.

- Capacity Planning: As mentioned earlier, accurate capacity planning is crucial to avoid issues during peak hours. This includes overprovisioning to handle unexpected surges.

- QoS Policies: Prioritizing critical applications during peak times ensures they continue to function smoothly, even when bandwidth is limited. Video conferencing or mission-critical applications can be prioritized over less essential ones.

- Caching Strategies: Implementing robust caching mechanisms helps reduce the load on the origin servers during peak times by serving content from caches closer to users.

- Load Balancing: Using load balancers distributes network traffic evenly across multiple servers, preventing any single server from becoming overloaded.

- Traffic Shaping and Throttling: If necessary, traffic shaping techniques can prioritize certain types of traffic or throttle less critical traffic to manage congestion. However, it must be used judiciously to avoid negatively impacting user experience.

Monitoring network performance closely during peak times is vital. It allows emerging bottlenecks to be identified quickly and corrective actions to be applied in real time. For example, if a specific application is identified as causing congestion, we can temporarily throttle its traffic or investigate underlying performance issues.

Q 15. Describe your experience with WAN optimization techniques.

WAN optimization is crucial for businesses relying on wide area networks to connect geographically dispersed locations. My experience encompasses a range of techniques, focusing on improving application performance and reducing bandwidth consumption. This involves leveraging technologies like:

- WAN acceleration: Techniques like data deduplication, compression, and caching significantly reduce the amount of data traversing the WAN. Imagine sending a large file – deduplication removes redundant data, compression shrinks its size, and caching stores frequently accessed data closer to users, drastically reducing transfer times.

- Traffic shaping and prioritization: This involves assigning different priorities to network traffic based on application needs. For instance, VoIP traffic gets priority over less critical file transfers, ensuring smooth voice communication even during periods of high network load. Think of it like a highway with different lanes – emergency vehicles (VoIP) get the fast lane.

- TCP optimization: Techniques like window scaling and selective acknowledgments improve the efficiency of TCP, the primary protocol for data transmission on the internet. This is akin to streamlining the delivery process – ensuring the packages are delivered more efficiently and with fewer errors.

- Application-level optimization: This focuses on optimizing specific applications, such as databases or ERP systems, to reduce their bandwidth demands. This is like optimizing the individual packages themselves to be smaller and more efficient.

In a previous role, I implemented a WAN optimization solution that reduced bandwidth consumption by 40% and improved application response times by 60%, leading to significant cost savings and increased productivity.
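
The combined effect of deduplication and compression can be sketched with the standard library. This is a toy model, assuming fixed-size 4 KB chunks identified by SHA-256 digests and a far end that remembers every chunk it has seen; commercial WAN optimizers use their own chunking and indexing schemes.

```python
import hashlib
import zlib


def wan_bytes_needed(data: bytes, chunk_size: int = 4096) -> int:
    """Estimate the bytes sent over the WAN when repeated chunks are
    replaced by a digest and new chunks are compressed before sending."""
    seen = set()
    wire_bytes = 0
    for offset in range(0, len(data), chunk_size):
        chunk = data[offset:offset + chunk_size]
        digest = hashlib.sha256(chunk).digest()
        wire_bytes += len(digest)  # a 32-byte reference is always sent
        if digest not in seen:     # first sighting: send the chunk itself
            seen.add(digest)
            wire_bytes += len(zlib.compress(chunk))
    return wire_bytes


# Hypothetical payload: a highly repetitive 400 KB file.
payload = (b"A" * 4096) * 100
print(len(payload), "bytes original,", wan_bytes_needed(payload), "bytes on the wire")
```

Highly repetitive data like this shrinks dramatically; already-compressed or encrypted traffic benefits far less.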

Q 16. What are some common causes of bandwidth latency?

Latency, the delay in data transmission (sometimes loosely called bandwidth latency), can stem from several sources:

- Network congestion: Too much traffic on a network segment can cause delays. Imagine a busy highway – the more cars, the slower the traffic.

- Physical limitations: Older hardware, inadequate cabling, or long distances between network devices can all contribute to latency. Think of this as using an old, narrow road instead of a modern highway.

- Protocol inefficiencies: Inefficient network protocols can increase latency. Suboptimal routing, for instance, can force data to travel a longer path than necessary.

- High error rates: Frequent errors necessitate retransmissions, adding to delay. Think of this as road construction that frequently causes stoppages.

- Queueing delays: Network devices have queues to buffer incoming data. Overly long queues can cause delays. It’s like waiting in a long line at a store checkout.

- Security measures (firewalls, IPS): Security appliances, while necessary, can introduce delays if not properly configured.

Q 17. How do you troubleshoot bandwidth problems?

Troubleshooting bandwidth problems follows a systematic approach:

- Identify the problem: Determine which applications or users are experiencing slowdowns. Use network monitoring tools to pinpoint affected segments.

- Isolate the cause: Analyze network traffic using tools like Wireshark to identify bottlenecks or errors. Check device logs for errors or warnings.

- Gather metrics: Collect data on bandwidth usage, latency, packet loss, and error rates. This helps to identify trends and pinpoint the root cause.

- Implement solutions: Based on the diagnosis, implement solutions such as upgrading hardware, optimizing network configuration, or adjusting security settings.

- Monitor and test: Continuously monitor the network to ensure the implemented solutions are effective. Regularly test network performance to identify potential issues before they impact users.

For instance, if you notice slow application performance during peak hours, you might discover network congestion and implement traffic shaping or upgrade network infrastructure to address the problem. Using tools like ping, traceroute, and network monitoring software provides critical diagnostic data for this type of troubleshooting.

Q 18. Explain your understanding of different network topologies and their impact on bandwidth.

Network topologies significantly impact bandwidth. Understanding them is crucial for optimization:

- Bus topology: All devices share a single cable. This is simple but has limited bandwidth and is susceptible to single points of failure. Think of this as a single lane road.

- Star topology: Devices connect to a central hub or switch. It offers better bandwidth and scalability than a bus topology, as each device has its own connection. This is like having multiple roads leading to a central point.

- Ring topology: Data travels in a circle. It’s efficient but vulnerable to single points of failure. Think of this as a circular highway.

- Mesh topology: Devices connect to multiple other devices, providing redundancy and high bandwidth. This is like a very complex network of interconnecting roads.

The choice of topology depends on factors like budget, scalability requirements, and tolerance for failure. In a large enterprise setting, a mesh topology with multiple redundant paths might be favored for its high bandwidth and resilience, while a smaller office might use a simpler star topology.

Q 19. Describe your experience with network security measures to improve bandwidth efficiency.

Network security measures can impact bandwidth efficiency. Properly configured systems are key:

- Firewalls: Effective firewalls filter unwanted traffic, reducing bandwidth consumption by blocking unnecessary data. However, poorly configured firewalls can introduce significant latency.

- Intrusion Prevention Systems (IPS): IPS systems inspect traffic for malicious activity. Similar to firewalls, they can impact performance if not optimized.

- Virtual Private Networks (VPNs): VPNs encrypt data, adding overhead and potentially reducing bandwidth. However, they’re essential for secure remote access.

- Data Loss Prevention (DLP): DLP solutions monitor data movement, which can impact bandwidth, but protect sensitive information.

Regular security audits and optimization are critical. This involves reviewing firewall rules, optimizing IPS settings, and selecting efficient VPN protocols. For example, running IPsec with a cipher that benefits from hardware acceleration, such as AES-GCM, typically adds less overhead than older or software-only options.

Q 20. How do you balance bandwidth allocation between different applications and users?

Balancing bandwidth allocation requires a multi-faceted approach:

- Quality of Service (QoS): QoS allows you to prioritize certain applications or users, ensuring critical traffic receives adequate bandwidth. Think of it as allocating more lanes to critical traffic on a highway.

- Bandwidth throttling: Limiting bandwidth usage for specific applications or users can prevent resource exhaustion and improve overall performance for others.

- Traffic shaping: Similar to throttling, but more sophisticated, it can smooth out bursts of traffic, preventing congestion.

- Fair queuing: This ensures that all users receive a fair share of bandwidth, preventing any single user or application from monopolizing resources.

In a scenario with multiple users and applications competing for bandwidth, QoS could prioritize real-time applications like video conferencing, while fair queuing prevents any single user from hogging bandwidth. Monitoring tools provide insights into bandwidth usage, which informs appropriate allocation strategies.
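
One common way to formalize a fair share is max-min fairness: small demands are satisfied in full, and the remaining capacity is split evenly among the users that want more. The sketch below is a minimal version of that idea with hypothetical demand figures; it is not the algorithm of any particular queuing implementation.

```python
def max_min_fair(capacity: float, demands: dict) -> dict:
    """Split capacity so that no user gets more than it asked for and
    leftover capacity is shared equally among unsatisfied users."""
    allocation = {}
    remaining = dict(demands)
    left = float(capacity)
    while remaining:
        share = left / len(remaining)
        satisfied = [n for n, d in remaining.items() if d <= share]
        if not satisfied:
            # Everyone left wants more than an equal share: split evenly.
            for name in remaining:
                allocation[name] = share
            break
        for name in satisfied:
            allocation[name] = float(remaining[name])
            left -= remaining.pop(name)
    return allocation


# Hypothetical demands in Mbps on a 100 Mbps link.
print(max_min_fair(100, {"voip": 10, "web": 40, "backup": 80}))
# {'voip': 10.0, 'web': 40.0, 'backup': 50.0}
```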

Q 21. What is your experience with load balancing techniques for optimizing bandwidth?

Load balancing distributes network traffic across multiple servers or links, increasing bandwidth capacity and preventing overload. Methods include:

- DNS load balancing: Directs users to different servers based on DNS responses. Simple but can be less efficient if servers have varying capacities.

- Layer 4 load balancing: Distributes traffic based on transport-layer information such as IP addresses and TCP/UDP ports. Offers more control than DNS balancing.

- Layer 7 load balancing: Distributes traffic based on application layer content. This allows for sophisticated traffic management based on application requirements.

- Geographic load balancing: Directs users to servers closest to their location, reducing latency. Important for geographically dispersed users.

In a web application context, layer 7 load balancing can distribute traffic based on URL or application-specific parameters, ensuring optimal performance during high traffic periods. This could involve routing users to different server clusters based on their location or the type of service requested.
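
When servers have different capacities, a weighted scheme sends proportionally more requests to the larger ones. The sketch below shows a simple weighted round-robin; the server names and weights are hypothetical, and production load balancers typically interleave picks more smoothly and factor in health checks.

```python
import itertools


def weighted_round_robin(servers: dict):
    """Return an endless iterator of server names, where each name appears
    in proportion to its integer weight."""
    expanded = [name for name, weight in servers.items() for _ in range(weight)]
    return itertools.cycle(expanded)


# Hypothetical pool: app-1 has three times the capacity of app-2.
picker = weighted_round_robin({"app-1": 3, "app-2": 1})
print([next(picker) for _ in range(8)])
# ['app-1', 'app-1', 'app-1', 'app-2', 'app-1', 'app-1', 'app-1', 'app-2']
```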

Q 22. Explain your experience with various network protocols (e.g., BGP, OSPF).

My experience with network protocols like BGP and OSPF is extensive. BGP (Border Gateway Protocol) is crucial for routing between autonomous systems (ASes) on the internet, essentially deciding how data travels across different networks. I’ve worked extensively with configuring BGP to optimize routes for minimizing latency and maximizing bandwidth utilization. For instance, I’ve implemented BGP route filtering to prevent unwanted traffic from congesting our network and used AS path prepending to influence traffic distribution among multiple upstream providers.

OSPF (Open Shortest Path First) is an interior gateway protocol used within a single AS to determine the best paths for data. My work with OSPF includes designing and implementing hierarchical OSPF networks to scale efficiently, tuning OSPF timers to enhance convergence speed after network changes, and utilizing OSPF area boundaries to manage the size and complexity of routing tables. I’ve also troubleshot scenarios where OSPF instability led to bandwidth bottlenecks, using commands like show ip ospf neighbor and show ip ospf database to pinpoint the issue.

Q 23. How do you utilize network analysis tools to identify bandwidth issues?

Identifying bandwidth issues requires a multi-faceted approach using network analysis tools. I typically start with basic monitoring tools to gain an overview – this might include monitoring CPU and memory utilization on routers and switches, as well as interface statistics showing packet loss, errors, and utilization levels. Tools like SolarWinds, PRTG, or even built-in network management systems provide these basic metrics.

For deeper analysis, I use packet capture tools like Wireshark or tcpdump to analyze network traffic patterns. This allows me to identify specific applications or protocols consuming excessive bandwidth, pinpoint congestion points, and analyze latency issues. For example, I recently used Wireshark to discover that a rogue application was using a significant portion of our network bandwidth during off-peak hours. I correlated these findings with bandwidth utilization graphs to pinpoint the source and implement appropriate QoS policies. Furthermore, I frequently rely on flow-export technologies such as NetFlow or sFlow, together with a flow collector, to analyze aggregate traffic flows, identify patterns and anomalies, and gain a comprehensive view of network bandwidth consumption.

Q 24. Describe your experience with implementing and managing caching mechanisms to improve bandwidth.

Caching mechanisms are fundamental for bandwidth optimization. I have extensive experience implementing and managing various caching solutions, both at the network edge (e.g., using CDN services like Akamai or Cloudflare) and within the network itself (e.g., using Squid or Varnish). CDN deployments are particularly effective in reducing latency and bandwidth consumption for static content such as images, videos, and CSS files by storing copies closer to users. This is especially important for applications like video streaming where bandwidth usage is substantial.

Implementing caching within the network itself helps reduce bandwidth consumption by storing frequently accessed content closer to the clients. For example, I’ve used Squid proxies to cache web content for internal users, significantly reducing the load on our internet connection and improving response times. Proper configuration of cache policies, including time-to-live (TTL) settings and cache invalidation mechanisms, is critical to ensuring cache freshness and avoiding stale data.
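
The interaction between TTL settings and invalidation can be sketched with a small in-memory cache. This is a minimal illustration, not how Squid or Varnish are implemented: the class name is hypothetical and the current time is passed in explicitly so the example is deterministic.

```python
class TTLCache:
    """Tiny cache in which every entry expires ttl_s seconds after storage."""

    def __init__(self, ttl_s: float):
        self.ttl_s = ttl_s
        self._store = {}  # key -> (value, expiry time)

    def put(self, key, value, now: float) -> None:
        self._store[key] = (value, now + self.ttl_s)

    def get(self, key, now: float):
        entry = self._store.get(key)
        if entry is None:
            return None              # miss: fetch from the origin
        value, expires_at = entry
        if now >= expires_at:
            del self._store[key]     # stale: drop it and treat as a miss
            return None
        return value

    def invalidate(self, key) -> None:
        """Explicitly remove an entry, for example after the origin changes."""
        self._store.pop(key, None)


cache = TTLCache(ttl_s=60)
cache.put("/logo.png", b"image-bytes", now=0)
print(cache.get("/logo.png", now=30))  # b'image-bytes' (still fresh)
print(cache.get("/logo.png", now=60))  # None (expired)
```

A longer TTL saves more bandwidth but increases the risk of serving stale content, which is why explicit invalidation is usually paired with it.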

Q 25. Explain your understanding of bandwidth aggregation techniques.

Bandwidth aggregation techniques combine multiple physical links into a single logical link to increase overall bandwidth capacity. Common names for this include link aggregation (standardized as IEEE 802.3ad/802.1AX and negotiated with LACP), port channels, and bonding. The aggregated link provides more total capacity and redundancy, although traffic is usually distributed per flow, so a single flow is typically limited to the speed of one member link. I’ve used this extensively in data center environments to provide high-speed connectivity between servers and network devices.

On servers, the same idea appears as Ethernet bonding (or NIC teaming), which aggregates multiple Ethernet interfaces into a single logical interface. The choice of aggregation technique depends on factors such as the type of network infrastructure, vendor-specific capabilities, and budget constraints. Careful planning and configuration are vital to ensuring that the aggregated link operates efficiently and provides the desired bandwidth increase. Effective error handling and redundancy mechanisms should be included in the design.
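
Aggregated links usually spread traffic by hashing fields from each flow, so that all packets of one flow stay on the same member link and arrive in order. The sketch below illustrates the idea with a CRC32 hash over a hypothetical flow tuple; actual hash inputs and algorithms are vendor-specific.

```python
import zlib


def pick_member_link(src_ip, dst_ip, src_port, dst_port, n_links):
    """Map a flow to one member link; the same flow always maps the same way."""
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}".encode()
    return zlib.crc32(key) % n_links


# Hypothetical flows across a 4-link bundle.
flows = [("10.0.0.1", "10.0.1.1", 40000 + i, 443) for i in range(6)]
for flow in flows:
    print(flow, "-> link", pick_member_link(*flow, n_links=4))
```

Because each flow is pinned to one link, a bundle of four 10 Gbps links carries up to 40 Gbps in aggregate, but any single flow still tops out at 10 Gbps.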

Q 26. How do you optimize bandwidth for VoIP applications?

Optimizing bandwidth for VoIP applications requires a focus on quality of service (QoS). VoIP is highly sensitive to jitter (variations in packet arrival times) and packet loss, which can significantly impact call quality. I prioritize VoIP traffic using QoS mechanisms such as DiffServ marking (typically DSCP EF for voice) or MPLS traffic classes, assigning higher priority to VoIP packets than other types of traffic. This ensures that VoIP packets receive preferential treatment during network congestion, reducing the likelihood of dropped calls or poor audio quality.
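As a small illustration of DiffServ marking, the sketch below converts a DSCP value into the byte written to the IP header and applies it to a socket. It assumes a platform that honors the `IP_TOS` socket option (Linux does; some others ignore it), and the helper names are hypothetical. Marking only helps if the routers along the path are configured to trust and act on it.

```python
import socket

DSCP_EF = 46  # Expedited Forwarding, the class commonly used for voice


def dscp_to_tos(dscp: int) -> int:
    """DSCP occupies the upper six bits of the former IPv4 TOS byte."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be in the range 0..63")
    return dscp << 2


def mark_voice_socket(sock: socket.socket, dscp: int = DSCP_EF) -> None:
    """Ask the OS to mark outgoing IPv4 packets on this socket."""
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, dscp_to_tos(dscp))


if __name__ == "__main__":
    print(hex(dscp_to_tos(DSCP_EF)))  # 0xb8
```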

Additionally, I use jitter buffers to smooth out variable packet arrival and low-bitrate codecs (e.g., G.729 or Opus) to reduce the bandwidth each call requires. Careful monitoring of VoIP traffic metrics, including jitter, packet loss, and one-way delay, is crucial to ensure that the QoS policies are effective. For example, in one project, implementing QoS policies reduced call latency by over 50% and nearly eliminated dropped calls, drastically improving user experience.
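The effect of codec choice on bandwidth can be estimated with simple arithmetic. The sketch below assumes 20 ms packetization and 40 bytes of IPv4, UDP, and RTP headers per packet, and ignores layer 2 overhead; the function name is hypothetical.

```python
def voip_bandwidth_kbps(codec_kbps: float, packet_ms: float = 20,
                        header_bytes: int = 40) -> float:
    """Approximate one-way IP bandwidth of a single call, in kbps.

    header_bytes defaults to 20 (IPv4) + 8 (UDP) + 12 (RTP).
    """
    payload_bytes = codec_kbps * 1000 / 8 * packet_ms / 1000
    packets_per_second = 1000 / packet_ms
    return (payload_bytes + header_bytes) * 8 * packets_per_second / 1000


if __name__ == "__main__":
    print(voip_bandwidth_kbps(64))  # G.711 at 64 kbps
    print(voip_bandwidth_kbps(8))   # G.729 at 8 kbps
```

Under these assumptions a G.711 call needs about 80 kbps per direction and a G.729 call about 24 kbps, so headers make up most of the low-bitrate call. This is why header compression is sometimes used on slow links.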

Q 27. What is your experience with software-defined networking (SDN) and its role in bandwidth optimization?

Software-Defined Networking (SDN) plays a significant role in bandwidth optimization by providing a centralized and programmable approach to network management. SDN separates the control plane (network intelligence) from the data plane (packet forwarding), enabling dynamic and automated control of network resources. This allows for more efficient bandwidth allocation, real-time traffic engineering, and better utilization of network capacity.

I’ve worked with SDN controllers like OpenDaylight and ONOS to implement bandwidth optimization strategies, such as dynamic bandwidth allocation based on application requirements or network conditions. For example, using SDN, I was able to automatically adjust bandwidth allocation during peak usage periods to ensure critical applications received sufficient bandwidth while less-critical applications had their bandwidth temporarily reduced. This level of dynamic control is difficult to achieve using traditional network management techniques.
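The allocation logic behind that example can be sketched independently of any controller. The code below is not an OpenDaylight or ONOS API; it is a plain strict-priority allocator with hypothetical names (`AppDemand`, `allocate`) that a controller application might run before pushing rate limits to the switches.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class AppDemand:
    name: str
    demand_mbps: float
    priority: int  # lower number means more critical


def allocate(capacity_mbps: float, demands: List[AppDemand]) -> Dict[str, float]:
    """Grant bandwidth in priority order until the link capacity runs out."""
    remaining = capacity_mbps
    grants: Dict[str, float] = {}
    for app in sorted(demands, key=lambda a: a.priority):
        grant = min(app.demand_mbps, remaining)
        grants[app.name] = grant
        remaining -= grant
    return grants


if __name__ == "__main__":
    peak = [
        AppDemand("backups", 800, priority=2),
        AppDemand("voip", 100, priority=0),
        AppDemand("erp", 400, priority=1),
    ]
    print(allocate(1000, peak))
```

With a 1000 Mbps link, the critical applications receive their full demand and the backup job is temporarily reduced to the remaining 500 Mbps. Strict priority can starve low-priority traffic, so a production policy would usually add minimum guarantees.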

Q 28. Describe your approach to resolving a critical bandwidth outage.

Resolving a critical bandwidth outage requires a systematic and rapid approach. My first step is to identify the scope and impact of the outage: which users or applications are affected? I use network monitoring tools to quickly pinpoint the location and cause of the problem; this might involve checking interface statistics on routers and switches, inspecting routing tables for inconsistencies, and analyzing network logs for errors.

Once the cause is identified (e.g., a faulty link, router failure, or configuration error), I implement a solution, prioritizing restoring service as quickly as possible. This might involve switching to a redundant link, rebooting a faulty device, or reverting to a previous configuration. Simultaneously, I initiate a root cause analysis to prevent similar outages from occurring in the future. This usually involves a post-mortem analysis, which includes documenting the steps taken during the incident resolution and recommendations for improvements to prevent recurrence.
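When checking interface statistics, link utilization is typically derived from two readings of an octet counter. The sketch below shows that arithmetic, including counter wrap-around. The function name and parameters are hypothetical, and it assumes the counter wraps at most once between readings.

```python
def utilization_percent(octets_start: int, octets_end: int,
                        interval_s: float, link_bps: float,
                        counter_bits: int = 64) -> float:
    """Average link utilization between two octet-counter readings."""
    # The modulo handles a counter that wrapped once between the readings.
    delta_octets = (octets_end - octets_start) % (2 ** counter_bits)
    return delta_octets * 8 * 100 / (interval_s * link_bps)


if __name__ == "__main__":
    # 6.75 GB transferred in 60 s on a 1 Gbps link
    print(utilization_percent(0, 6_750_000_000, 60, 1_000_000_000))
```

Sustained values close to 100% on one interface, while its neighbors are idle, point to a saturated link rather than a device failure.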

Key Topics to Learn for Bandwidth Optimization Interview

- Network Protocols and Congestion Control: Understanding TCP/IP, UDP, and congestion control algorithms (e.g., TCP Reno, Cubic) is fundamental. Consider exploring their impact on bandwidth utilization and efficiency.

- Quality of Service (QoS): Learn how QoS mechanisms prioritize specific network traffic (e.g., voice over IP, video streaming) so that critical applications keep predictable performance when links are congested.

- Caching and Content Delivery Networks (CDNs): Explore the role of caching in reducing bandwidth usage by storing frequently accessed content closer to users. Understand the benefits and functionalities of CDNs.

- Compression Techniques: Familiarize yourself with various compression algorithms (e.g., gzip, Brotli) and their effectiveness in reducing data transfer sizes.

- Network Monitoring and Analysis: Master the skills of analyzing network traffic patterns, identifying bottlenecks, and using tools for performance optimization. Consider practical applications like packet capture and analysis.

- Bandwidth Management Tools and Techniques: Explore various bandwidth management tools and strategies, including traffic shaping, rate limiting, and bandwidth allocation policies.

- Cloud-Based Bandwidth Optimization: Understand how cloud platforms optimize bandwidth usage through features such as load balancing, content delivery, and virtual networks.

- Security Considerations: Discuss the interplay between bandwidth optimization and network security. How can you optimize bandwidth without compromising security?
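Of the topics above, traffic shaping and rate limiting are often built on a token bucket. The following is a minimal sketch of that idea rather than any particular tool's implementation; the class name and the explicit `now` timestamps are assumptions made so the behavior is easy to follow.

```python
class TokenBucket:
    """Allow traffic at rate_bytes_per_s on average, with bursts up to burst_bytes."""

    def __init__(self, rate_bytes_per_s: float, burst_bytes: float,
                 now: float = 0.0):
        self.rate = rate_bytes_per_s
        self.capacity = burst_bytes
        self.tokens = burst_bytes  # start with a full bucket
        self.last = now

    def allow(self, packet_bytes: int, now: float) -> bool:
        """Return True if the packet may be sent at time now (seconds)."""
        elapsed = now - self.last
        # Refill for the elapsed time, never beyond the bucket capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if packet_bytes <= self.tokens:
            self.tokens -= packet_bytes
            return True
        return False


if __name__ == "__main__":
    bucket = TokenBucket(rate_bytes_per_s=1000, burst_bytes=1500)
    for t in (0.0, 0.5, 1.5):
        print(t, bucket.allow(1500, now=t))
```

A policer drops packets for which `allow` returns False, while a shaper queues them until enough tokens have accumulated.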

Next Steps

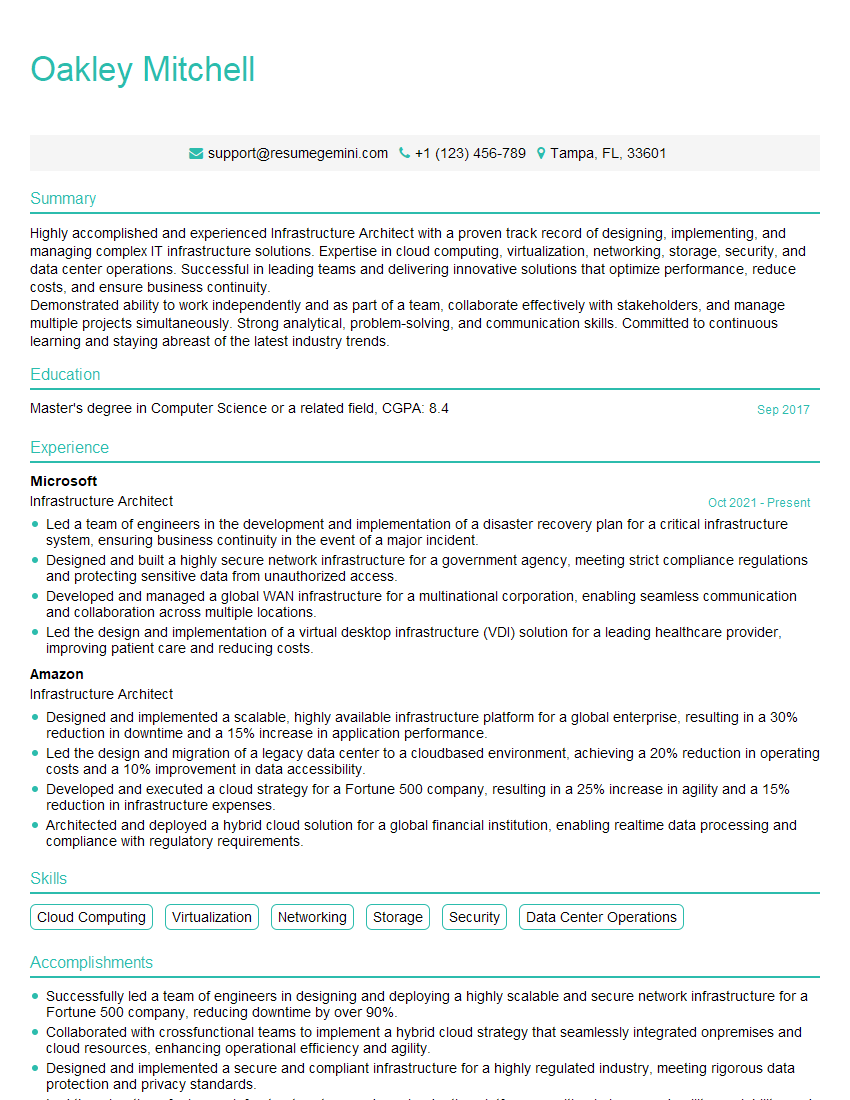

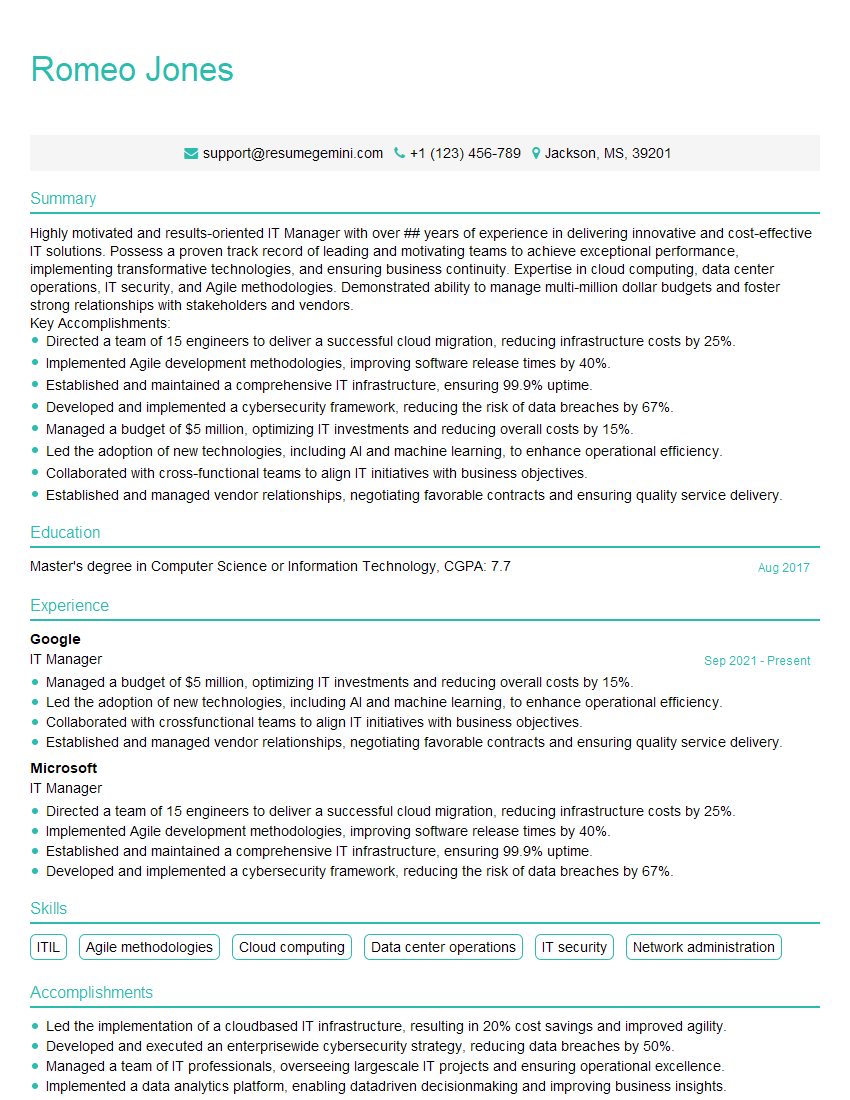

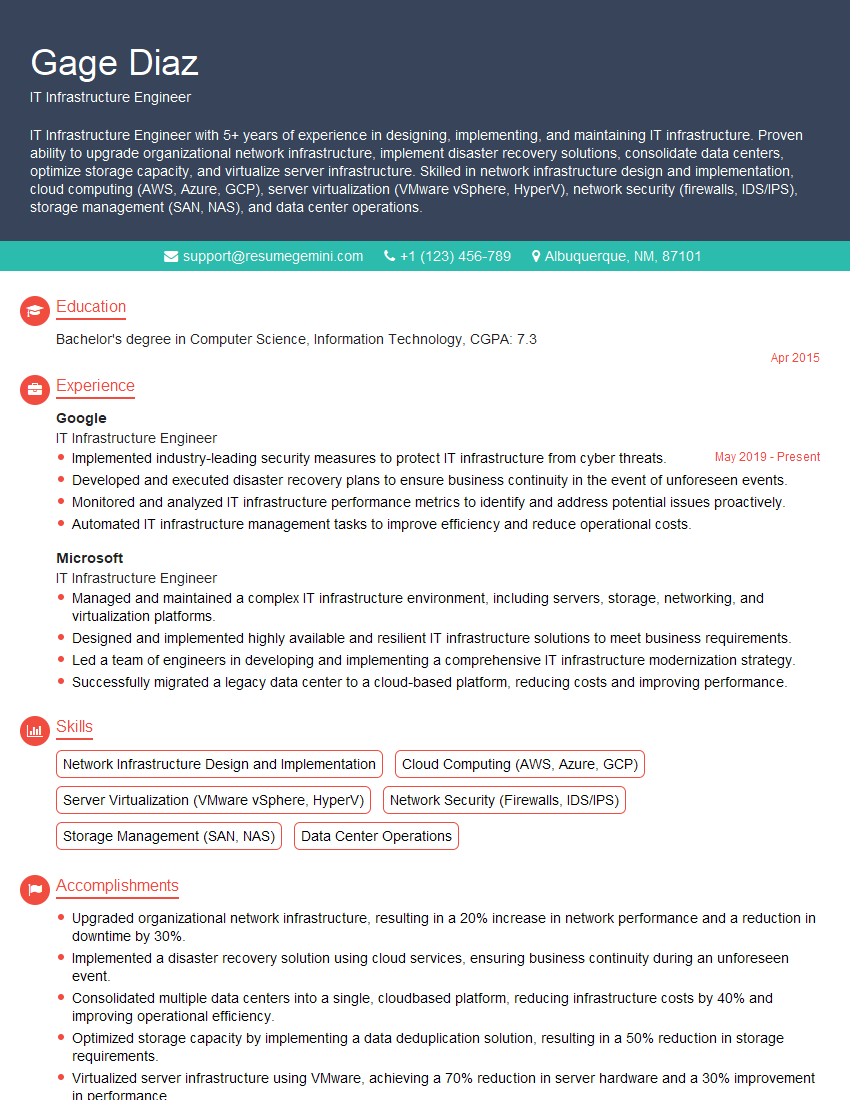

Mastering bandwidth optimization significantly enhances your career prospects in networking and related fields. It demonstrates a crucial skillset highly valued by employers seeking to improve network efficiency and performance. To maximize your chances of landing your dream role, crafting an ATS-friendly resume is crucial. A well-structured resume helps your application stand out, ensuring it gets noticed by recruiters. ResumeGemini is a trusted resource for creating professional and impactful resumes. We provide examples of resumes tailored specifically to Bandwidth Optimization roles, ensuring your skills and experience are presented effectively to potential employers.
