Cracking a skill-specific interview, like one for Ciphertext Analysis, requires understanding the nuances of the role. In this blog, we present the questions you’re most likely to encounter, along with insights into how to answer them effectively. Let’s ensure you’re ready to make a strong impression.

Questions Asked in Ciphertext Analysis Interview

Q 1. Explain the difference between symmetric and asymmetric encryption.

Symmetric and asymmetric encryption differ fundamentally in how they manage encryption keys. Imagine you want to send a secret message to a friend.

- Symmetric encryption is like sharing a secret codebook with your friend. You both use the same secret key (the codebook) to encrypt and decrypt the message. It’s fast and efficient, but the challenge is securely sharing that secret key. Think of it like a shared password – if someone intercepts it, your secret is compromised.

- Asymmetric encryption, on the other hand, is like having two separate keys: a public key and a private key. You publicly share your public key (like a mailbox), and anyone can use it to encrypt a message to you. However, only you possess the private key (the key to your mailbox) to decrypt it. This solves the key exchange problem because you never need to share your private key. This is the principle behind technologies like SSL/TLS used for secure web browsing.

In short: Symmetric encryption uses one key for both encryption and decryption, while asymmetric encryption uses a pair of keys – a public key for encryption and a private key for decryption.

Q 2. Describe the process of a frequency analysis attack.

Frequency analysis exploits the fact that some letters or symbols appear more frequently than others in a given language. For example, in English, ‘E’ is the most common letter. A frequency analysis attack works by analyzing the ciphertext to identify the frequency of each symbol and comparing it to the known frequencies of letters in the plaintext language.

Process:

- Count symbol frequencies: Analyze the ciphertext and count the occurrences of each symbol (letter, number, etc.).

- Compare to known frequencies: Compare the observed symbol frequencies to the known letter frequencies of the target language (e.g., English). The most frequent symbol in the ciphertext is likely to correspond to the most frequent letter in the language.

- Substitute and refine: Make substitutions based on the frequency analysis. You might start by substituting the most frequent symbol with ‘E’. Then, analyze the resulting plaintext to check for patterns and consistency, making further substitutions based on digraphs (two-letter combinations) and trigraphs (three-letter combinations) frequencies. This iterative process continues until a meaningful plaintext is obtained.

Example: If ‘X’ appears most frequently in the ciphertext and ‘E’ is the most frequent letter in English, you’d initially substitute ‘X’ with ‘E’ throughout the ciphertext. You’d then continue the process, looking for other patterns and refining your substitutions.
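The counting step can be sketched in a few lines of Python. This is a minimal illustration, not a full solver: the function name `symbol_frequencies` and the sample ciphertext (an English sentence under a shift of 3) are assumptions made for this example.

```python
from collections import Counter

def symbol_frequencies(ciphertext: str) -> list[tuple[str, float]]:
    """Return (letter, relative frequency) pairs, most frequent first."""
    letters = [ch for ch in ciphertext.upper() if ch.isalpha()]
    total = len(letters)
    if total == 0:
        return []
    counts = Counter(letters)
    return [(ch, n / total) for ch, n in counts.most_common()]

# Hypothetical sample: "MEET ME HERE WHERE THE TREES MEET THE SEA" shifted by 3.
sample = "PHHW PH KHUH ZKHUH WKH WUHHV PHHW WKH VHD"
ranking = symbol_frequencies(sample)
top_symbol = ranking[0][0]  # most frequent ciphertext letter

# First guess: the top symbol stands for 'E', which implies this shift.
guessed_shift = (ord(top_symbol) - ord("E")) % 26
```

On short texts the most frequent symbol is not always ‘E’, so the first guess should be treated as a hypothesis to test, not a result.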

Q 3. What are the strengths and weaknesses of the Caesar cipher?

The Caesar cipher is a simple substitution cipher where each letter in the plaintext is shifted a certain number of positions down the alphabet. For example, a shift of 3 would change ‘A’ to ‘D’, ‘B’ to ‘E’, and so on.

- Strengths: It’s extremely easy to understand and implement. This simplicity can be useful for quick, low-security encryption where strong security isn’t crucial.

- Weaknesses: It’s trivially breakable. There are only 25 useful shifts, so an attacker can simply try them all, and because every letter is shifted by the same amount, the ciphertext keeps the original language’s frequency profile, which makes frequency analysis just as effective.

Example: With a shift of 3, ‘HELLO’ becomes ‘KHOOR’. The pattern is easily recognizable, and frequency analysis would quickly reveal the shift value.
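A short sketch of the cipher and of an exhaustive search over all shifts, assuming an uppercase A–Z alphabet; the helper names `caesar_shift` and `brute_force` are chosen for this example.

```python
import string

ALPHABET = string.ascii_uppercase

def caesar_shift(text: str, shift: int) -> str:
    """Shift each letter by `shift` positions; leave other characters alone."""
    out = []
    for ch in text.upper():
        if ch in ALPHABET:
            out.append(ALPHABET[(ALPHABET.index(ch) + shift) % 26])
        else:
            out.append(ch)
    return "".join(out)

def brute_force(ciphertext: str) -> dict[int, str]:
    """Return the candidate plaintext for every possible shift."""
    return {s: caesar_shift(ciphertext, -s) for s in range(26)}

encrypted = caesar_shift("HELLO", 3)   # "KHOOR", as in the example above
candidates = brute_force(encrypted)    # candidates[3] is the original text
```

With only 26 candidates, a human or a dictionary check can pick out the readable one immediately.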

Q 4. Explain the concept of Kerckhoffs’s principle.

Kerckhoffs’s principle states that the security of a cryptosystem should depend only on the secrecy of the key, not on the secrecy of the algorithm itself. In simpler terms, the algorithm can be public knowledge, but the key must remain secret for the system to be secure.

This principle is crucial because keeping the algorithm secret is practically impossible. Sooner or later, an algorithm will likely be revealed through reverse engineering or leaks. If security relies solely on algorithm secrecy, a breach renders the entire system insecure. However, if the security depends only on the key, even if the algorithm is known, the system remains secure provided the key is kept secret.

Modern cryptography overwhelmingly adheres to Kerckhoffs’s principle. Open-source algorithms are common because their public scrutiny leads to improved security and broader adoption. The security relies entirely on keeping keys private.

Q 5. How does a one-time pad work, and what makes it secure?

A one-time pad (OTP) is a theoretically unbreakable encryption method if used correctly. It involves combining the plaintext message with a truly random key (the pad) of the same length using a bitwise XOR operation.

How it works: Each bit of the plaintext is XORed with the corresponding bit of the randomly generated key. This produces ciphertext. To decrypt, the ciphertext is XORed again with the same key. The XOR operation cancels itself out, revealing the original plaintext.

Security: The OTP’s security stems from the fact that:

- The key is truly random: It should be generated using a strong random number generator and used only once.

- The key is at least as long as the message: This ensures that no part of the key is reused. Reusing a key compromises security.

If these conditions are met, the ciphertext provides no information about the plaintext, even with unlimited computational power. Each possible plaintext is equally likely, rendering cryptanalysis impossible. The major practical challenge is secure key distribution – ensuring that both parties possess the same, long, random key without interception.
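The XOR round trip can be illustrated as follows. This is a sketch under one loud assumption: Python's `secrets` module is a CSPRNG, which stands in here for the truly random pad a real one-time pad requires. The function name `otp_xor` is hypothetical.

```python
import secrets

def otp_xor(data: bytes, pad: bytes) -> bytes:
    """XOR data with the pad; the same call encrypts and decrypts."""
    if len(pad) < len(data):
        raise ValueError("pad must be at least as long as the message")
    return bytes(d ^ k for d, k in zip(data, pad))

message = b"ATTACK AT DAWN"
pad = secrets.token_bytes(len(message))  # stand-in for a truly random pad
ciphertext = otp_xor(message, pad)
recovered = otp_xor(ciphertext, pad)     # XOR with the same pad undoes itself
```

The length check mirrors the second condition above: a pad shorter than the message would force key reuse.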

Q 6. Describe the workings of the RSA algorithm.

RSA is an asymmetric encryption algorithm based on the mathematical properties of modular arithmetic and prime numbers. It relies on the difficulty of factoring large numbers into their prime components.

Key generation:

- Choose two large prime numbers, p and q.

- Compute n = p * q (this is the modulus).

- Compute φ(n) = (p-1)(q-1) (Euler’s totient function).

- Choose an integer e (public exponent) such that 1 < e < φ(n) and gcd(e, φ(n)) = 1 (e and φ(n) are coprime).

- Compute d (private exponent) such that d * e ≡ 1 (mod φ(n)) (d is the modular multiplicative inverse of e modulo φ(n)).

The public key is (n, e), and the private key is (n, d).

Encryption: To encrypt a message M:

C = M^e mod n

Decryption: To decrypt the ciphertext C:

M = C^d mod n

The security relies on the difficulty of factoring n into p and q. If an attacker could factor n, they could compute φ(n) and subsequently derive the private exponent d, breaking the system. RSA is widely used in secure communication protocols like SSL/TLS and digital signatures.
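A worked toy example may help make the arithmetic concrete. The primes 61 and 53, the exponent 17, and the message 65 are assumptions chosen for illustration; they are far too small for real use, and real RSA also applies padding, which is omitted here.

```python
from math import gcd

# Toy parameters (illustrative only; real primes are ~1024 bits or more each).
p, q = 61, 53
n = p * q                  # modulus: 3233
phi = (p - 1) * (q - 1)    # Euler's totient: 3120

e = 17                     # public exponent, must be coprime with phi
if gcd(e, phi) != 1:
    raise ValueError("e and phi(n) must be coprime")

d = pow(e, -1, phi)        # private exponent: modular inverse of e (Python 3.8+)

M = 65                     # message, encoded as an integer smaller than n
C = pow(M, e, n)           # encryption: C = M^e mod n
recovered = pow(C, d, n)   # decryption: M = C^d mod n
```

Here an attacker who factors 3233 into 61 and 53 can recompute φ(n) and d in exactly the same way, which is why the size of n matters.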

Q 7. Explain the concept of a digital signature.

A digital signature is a cryptographic technique used to verify the authenticity and integrity of digital data. It's analogous to a handwritten signature on a physical document, but with far greater security and tamper-evidence.

How it works: Digital signatures are created using asymmetric cryptography. The sender uses their private key to sign a message (a hash of the message, to be precise). Anyone can then use the sender's public key to verify the signature. This ensures that:

- Authentication: Only the possessor of the private key could have created the signature, proving the sender's identity.

- Integrity: Any change to the message after signing will invalidate the signature, ensuring the message hasn't been tampered with.

Digital signatures are essential for secure email, software distribution, financial transactions, and many other applications where authenticity and integrity are paramount. They provide a high level of trust and security in the digital world.
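The sign-then-verify flow can be sketched with textbook RSA over a hash. Everything here is a simplification: the tiny key (n = 61 × 53) and the helper names are assumptions for illustration, and production schemes add padding such as RSASSA-PSS on top of much larger keys.

```python
import hashlib

# Toy RSA key, assumed for illustration only; real keys are 2048 bits or more.
N, E, D = 3233, 17, 2753

def digest_mod_n(message: bytes) -> int:
    """Hash the message and reduce it into the toy modulus range."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N

def sign(message: bytes) -> int:
    """Sign the hash with the private exponent D."""
    return pow(digest_mod_n(message), D, N)

def verify(message: bytes, signature: int) -> bool:
    """Check the signature with the public exponent E."""
    return pow(signature, E, N) == digest_mod_n(message)

message = b"transfer 100 to Bob"
signature = sign(message)
valid = verify(message, signature)             # genuine signature is accepted
forged = verify(message, (signature + 1) % N)  # altered signature is rejected
```

A modified message would normally fail verification as well; with a modulus this small there is a roughly 1-in-3233 chance that two messages reduce to the same value, which is one more reason the parameters are only a teaching aid.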

Q 8. What are common types of cryptographic hash functions?

Cryptographic hash functions are one-way functions that take an input of any size and produce a fixed-size output, called a hash. These are crucial for data integrity and authentication. Several common types exist, each with its strengths and weaknesses:

- MD5 (Message Digest Algorithm 5): While widely used historically, MD5 is now considered cryptographically broken due to successful collision attacks. It's best avoided for security-sensitive applications.

- SHA-1 (Secure Hash Algorithm 1): Similar to MD5, SHA-1 is also considered insecure for many applications due to discovered vulnerabilities. It's generally recommended to migrate away from SHA-1.

- SHA-256 and SHA-512 (Secure Hash Algorithm 2): These are part of the SHA-2 family and offer stronger security than MD5 and SHA-1. SHA-256 produces a 256-bit hash, while SHA-512 produces a 512-bit hash, offering increased collision resistance. They are widely used in applications like digital signatures, certificates, and file integrity checks. For password storage, a deliberately slow password-hashing function such as bcrypt, scrypt, or Argon2 is preferred over a bare salted SHA-2 hash.

- SHA-3 (Secure Hash Algorithm 3): This is a more recent standard, designed with a different internal structure than SHA-2. It's considered a strong and secure alternative, offering different variants like SHA3-256 and SHA3-512.

- BLAKE2: Known for its speed and security, BLAKE2 is a competitive alternative to SHA-3, offering various hash lengths.

Choosing the right hash function depends on the security requirements of your application. For new projects, SHA-256 or SHA-512 are generally recommended unless there's a specific reason to use SHA-3 or Blake2 (e.g., performance optimization in specific scenarios).
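Several of these functions are available in Python's standard `hashlib` module, which makes the fixed output sizes easy to check. The input string is an arbitrary example.

```python
import hashlib

data = b"ciphertext analysis"

# Hex digests from a few of the functions discussed above.
digests = {
    "sha256": hashlib.sha256(data).hexdigest(),
    "sha512": hashlib.sha512(data).hexdigest(),
    "sha3_256": hashlib.sha3_256(data).hexdigest(),
    "blake2b": hashlib.blake2b(data).hexdigest(),  # default 64-byte digest
}

# Each hex character encodes 4 bits, so this gives the output size in bits.
lengths_in_bits = {name: len(h) * 4 for name, h in digests.items()}
```

The output length stays the same no matter how long `data` is, which is the "fixed-size output" property in practice.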

Q 9. What is a collision attack, and how does it work?

A collision attack exploits a weakness in a hash function by finding two different inputs that produce the same hash output. Imagine a perfectly balanced scale: a collision attack finds two different weights that balance the scale equally. Because a hash maps inputs of any size to a fixed-size output, collisions always exist; a secure hash function only guarantees that they are computationally infeasible to find. A collision attack breaks this collision-resistance property.

How it works: Attackers use various techniques, often involving brute-force or more sophisticated algorithms, to search for colliding inputs. The success of a collision attack depends heavily on the strength of the hash function and the computational resources available to the attacker. For example, finding collisions in SHA-256 is computationally infeasible with current technology, while older algorithms like MD5 have been demonstrably vulnerable to successful collision attacks.

Real-world impact: A successful collision attack could allow malicious actors to forge digital signatures, tamper with data without detection, or create malicious software that bypasses security checks relying on hash verification.
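To get a feel for why output length matters, the sketch below searches for a collision on SHA-256 truncated to 16 bits. The truncation is an assumption made purely so the search finishes instantly; it says nothing about full SHA-256, and the helper names are hypothetical.

```python
import hashlib
from itertools import count

def truncated_hash(data: bytes, n_bytes: int = 2) -> bytes:
    """A deliberately weakened hash: the first n_bytes of SHA-256."""
    return hashlib.sha256(data).digest()[:n_bytes]

def find_collision(n_bytes: int = 2) -> tuple[bytes, bytes]:
    """Hash successive inputs until two of them share a truncated hash."""
    seen: dict[bytes, bytes] = {}
    for i in count():
        msg = str(i).encode()
        tag = truncated_hash(msg, n_bytes)
        if tag in seen:
            return seen[tag], msg
        seen[tag] = msg

a, b = find_collision()  # two different inputs with the same 16-bit hash
```

By the birthday bound, a collision on an n-bit hash is expected after roughly 2^(n/2) attempts, so 16 bits falls after a few hundred tries while 256 bits would need on the order of 2^128.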

Q 10. Explain the concept of a man-in-the-middle attack.

A man-in-the-middle (MitM) attack occurs when a malicious actor secretly intercepts and relays communication between two parties who believe they are directly communicating with each other. It's like an eavesdropper secretly joining a phone call. The attacker can read, modify, or even forge messages between the two unsuspecting parties.

Example: Imagine Alice and Bob are exchanging secure messages. A MitM attacker could intercept Alice's message to Bob, modify it, and then forward the altered message to Bob. Bob, unaware of the interception, responds to the manipulated message, and the attacker can further relay and manipulate communications, potentially compromising sensitive data or establishing false trust.

Prevention: Using strong encryption protocols like TLS/SSL for secure communication, verifying digital certificates, and employing techniques such as VPNs to encrypt communication channels significantly reduce the risk of successful MitM attacks.

Q 11. Describe different methods for key exchange.

Key exchange is the process of securely sharing cryptographic keys between two parties. Several methods exist:

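- Diffie-Hellman key exchange: This method allows two parties to establish a shared secret key over an insecure channel without ever directly transmitting the key itself. Its security relies on the difficulty of the discrete logarithm problem in modular arithmetic. On its own it does not authenticate the parties, so it is usually combined with signatures or certificates to resist man-in-the-middle attacks.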

- RSA key exchange: This leverages the RSA cryptosystem, allowing secure key exchange by encrypting a session key using the recipient's public key.

- Elliptic Curve Diffie-Hellman (ECDH): This is a more modern and efficient variant of Diffie-Hellman that uses elliptic curve cryptography, offering similar security with smaller key sizes.

- Public Key Infrastructure (PKI): PKI is not a key exchange algorithm in itself. It uses digital certificates issued by trusted certificate authorities (CAs) to verify the authenticity of public keys, which lets the methods above be used with authenticated keys in large-scale systems.

The choice of key exchange method often depends on the specific application and security requirements. For example, ECDH is frequently used in modern secure protocols due to its efficiency and security.
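A toy Diffie-Hellman exchange shows how both sides arrive at the same secret. The prime 23, generator 5, and private values 4 and 3 are assumptions picked for readability; real deployments use groups of 2048 bits or more, or elliptic curves.

```python
# Public parameters (toy values, assumed for illustration).
p = 23   # prime modulus
g = 5    # generator

# Each party picks a private value and keeps it secret.
alice_private = 4
bob_private = 3

# Each party sends g^private mod p over the insecure channel.
alice_public = pow(g, alice_private, p)
bob_public = pow(g, bob_private, p)

# Each side combines its own private value with the other's public value.
alice_shared = pow(bob_public, alice_private, p)
bob_shared = pow(alice_public, bob_private, p)
```

An eavesdropper sees `p`, `g`, and both public values, but recovering either private value from them is the discrete logarithm problem.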

Q 12. How do you handle a situation where you're presented with an unknown ciphertext?

Handling unknown ciphertext requires a systematic approach. First, I'd try to identify the type of cipher used (e.g., substitution, transposition, block cipher). This can be done by analyzing the ciphertext's structure, frequency analysis of characters or blocks, and exploring potential patterns or anomalies. Knowing the cipher type narrows down the decryption methods.

Then, I'd apply relevant cryptanalytic techniques. This might include frequency analysis (for substitution ciphers), known-plaintext attacks (if any part of the plaintext is known), chosen-plaintext attacks (if I can get the ciphertext for some plaintext I choose), or brute-force attacks (if the key space is relatively small).

Advanced techniques might involve using tools like cryptanalysis software, employing statistical methods to identify patterns and anomalies, and perhaps consulting publicly available cryptanalysis databases or resources to look for similar ciphers and potential weaknesses.

Throughout the process, meticulous record-keeping and methodical investigation are crucial. The process is iterative, often involving hypothesis generation, testing, refinement, and backtracking.
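One common statistic for the identification step is the index of coincidence (IoC). The sketch below is an illustration with assumed sample strings: English text typically scores around 0.067 and uniformly random letters around 0.038, and a monoalphabetic substitution leaves the score unchanged.

```python
from collections import Counter

def index_of_coincidence(text: str) -> float:
    """Probability that two letters drawn at random from the text are equal."""
    letters = [ch for ch in text.upper() if ch.isalpha()]
    n = len(letters)
    if n < 2:
        return 0.0
    counts = Counter(letters)
    return sum(c * (c - 1) for c in counts.values()) / (n * (n - 1))

# Hypothetical samples: a plaintext and the same text under a shift of 3.
plain = "MEET ME HERE WHERE THE TREES MEET THE SEA"
shifted = "PHHW PH KHUH ZKHUH WKH WUHHV PHHW WKH VHD"

ioc_plain = index_of_coincidence(plain)
ioc_shifted = index_of_coincidence(shifted)  # same value: letters are only renamed
```

A score near the natural-language value suggests a monoalphabetic substitution or a transposition, while a score near the random value points toward a polyalphabetic or modern cipher. Short samples like this one give noisy values, so the statistic is more useful on longer ciphertexts.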

Q 13. Explain the process of breaking a substitution cipher.

Breaking a substitution cipher involves identifying the mapping between the ciphertext letters and the plaintext letters. This relies heavily on the statistical properties of language. Frequency analysis is a powerful technique.

Step-by-step process:

- Frequency Analysis: Determine the frequency of each letter in the ciphertext. Compare this frequency to the known letter frequencies in the language the plaintext is expected to be in (e.g., English). Common letters in English (like 'E', 'T', 'A') will likely correspond to the most frequent letters in the ciphertext.

- Pattern Analysis: Look for patterns in the ciphertext, such as repeated sequences of letters. These could correspond to common words or letter combinations in the plaintext language.

- Digraphs and Trigraphs: Analyze the frequency of letter pairs (digraphs) and letter triplets (trigraphs) to further refine the mapping.

- Trial and Error: Based on the initial mappings deduced from frequency and pattern analysis, start making substitutions. Test the resulting plaintext fragments for meaning and coherence. Refine the mappings based on the results.

- Contextual Analysis: Use context to deduce the meaning of ambiguous parts and guide the selection of mappings.

For example, if 'X' is the most frequent letter in the ciphertext and 'E' is the most frequent letter in English, you might initially assume 'X' maps to 'E'. By iteratively applying this process and refining the mappings based on context and statistical analysis, the cipher can be broken.

Q 14. Describe various types of cryptanalytic attacks.

Cryptanalytic attacks aim to break cryptographic systems. They can be categorized in several ways:

- Ciphertext-only attack: The attacker only has access to the ciphertext. Frequency analysis is a common technique used here.

- Known-plaintext attack: The attacker has access to both the ciphertext and corresponding plaintext. This significantly simplifies the attack.

- Chosen-plaintext attack: The attacker can obtain the ciphertext for any plaintext they choose. This gives the attacker greater control over the cryptanalysis process.

- Chosen-ciphertext attack: The attacker can obtain the plaintext for any ciphertext they choose. This is a stronger attack than chosen-plaintext.

- Adaptive chosen-plaintext/ciphertext attack: The attacker can adaptively choose plaintexts/ciphertexts based on the results of previous queries. This gives them the most power.

- Brute-force attack: The attacker tries every possible key until the correct one is found. This is computationally expensive but effective against weaker ciphers with small key spaces.

- Side-channel attack: These attacks exploit information leaked during the cryptographic operation, such as power consumption, timing variations, or electromagnetic emissions.

The success of an attack depends on the strength of the cipher, the attacker's resources, and the type of information available to the attacker. Modern ciphers are designed to resist all known attacks, especially the more powerful chosen-plaintext and chosen-ciphertext types.

Q 15. What are some common vulnerabilities in cryptographic systems?

Cryptographic systems, while designed to be secure, are often vulnerable to various attacks. These vulnerabilities can stem from weaknesses in the algorithms themselves, poor implementation, or inadequate key management. Some common vulnerabilities include:

- Algorithmic weaknesses: Some algorithms, especially older ones, may have inherent flaws that can be exploited by attackers. For example, DES (Data Encryption Standard) uses a 56-bit key, which is short enough to be brute-forced with modern hardware.

- Implementation flaws: Even a strong algorithm can be rendered vulnerable by poor implementation. This could involve coding errors that introduce unexpected behavior or side channels that leak information about the secret key.

- Side-channel attacks: These attacks exploit information leaked during computation, such as timing differences, power consumption, or electromagnetic emissions. A skilled attacker can use this leaked information to deduce the secret key.

- Key management issues: Improper key generation, storage, distribution, or revocation can significantly weaken a cryptographic system, rendering the strongest algorithms useless. Weak or reused keys are a major source of vulnerabilities.

- Broken cryptography libraries or APIs: Using outdated or poorly maintained libraries increases the risk of exposure to known vulnerabilities and exploits.

Understanding and mitigating these vulnerabilities is crucial for building secure cryptographic systems.

Q 16. Discuss the importance of key management in cryptography.

Key management is paramount in cryptography; it's the backbone of security. Without proper key management, even the strongest encryption algorithm is useless. Think of a key as the 'secret code' to unlock the encrypted message; if this code is compromised, the entire system falls apart.

Effective key management encompasses several crucial aspects:

- Key generation: Keys must be generated using cryptographically secure random number generators (CSPRNGs) to avoid predictable patterns that attackers could exploit.

- Key storage: Keys need to be stored securely, often using hardware security modules (HSMs) or other secure enclaves to protect them from unauthorized access.

- Key distribution: Securely exchanging keys between parties is crucial. Techniques like Diffie-Hellman key exchange are commonly used to establish shared secrets without directly transmitting the keys.

- Key rotation: Regularly changing keys reduces the window of vulnerability. If a key is compromised, the damage is limited to the period it was in use.

- Key revocation: A mechanism to immediately invalidate compromised keys is essential. This prevents further unauthorized access.

In a nutshell, robust key management is the cornerstone of a secure cryptographic system, ensuring confidentiality, integrity, and authenticity of data.

Q 17. How do you evaluate the strength of a cryptographic algorithm?

Evaluating the strength of a cryptographic algorithm is a complex process involving both theoretical analysis and practical testing. It's not simply about looking at the algorithm's design; we need to consider how it performs under various attack scenarios.

Here's how we typically evaluate strength:

- Cryptanalysis: This involves attempting to break the algorithm using various techniques like brute-force attacks, known-plaintext attacks, chosen-plaintext attacks, and chosen-ciphertext attacks. The goal is to identify vulnerabilities or weaknesses.

- Mathematical analysis: Analyzing the underlying mathematical principles of the algorithm helps to determine its theoretical security. This might involve examining its resistance to various attacks based on complexity theory.

- Security proofs: While rare, some cryptographic schemes have formal security proofs demonstrating their resistance to certain types of attacks under specific assumptions.

- Performance analysis: The algorithm's computational efficiency and resource requirements are crucial, as an impractically slow algorithm might be rendered useless in real-world applications.

- Peer review and community scrutiny: The algorithm's design and implementation are subjected to extensive review by the cryptographic community to identify potential flaws.

The strength of an algorithm is often expressed in terms of its key length and the computational resources required to break it. A robust algorithm should resist attacks even with significant computational resources and advancements in cryptanalysis techniques. For instance, the strength of AES (Advanced Encryption Standard) is evidenced by years of scrutiny without major breakthroughs.

Q 18. What are the ethical considerations in cryptography?

Cryptography, while incredibly powerful, carries significant ethical considerations. The ability to encrypt and decrypt information raises concerns about privacy, surveillance, and potential misuse.

- Privacy vs. security: Strong encryption protects individual privacy, but it can also hinder law enforcement investigations. Balancing these competing interests is a crucial ethical challenge.

- Data security and accountability: Organizations have an ethical responsibility to protect sensitive data using appropriate cryptographic techniques. They must also be accountable for breaches or misuse of this data.

- Export controls and censorship: Restrictions on the export or use of strong encryption technologies can have far-reaching consequences for freedom of expression and access to information.

- Potential for misuse: Cryptography can be used for legitimate purposes, but it can also be exploited by malicious actors for illegal activities, such as hiding illicit communications or conducting ransomware attacks. This necessitates careful consideration of potential unintended consequences.

- Transparency and openness: Ethical cryptography practices promote transparency and openness in algorithm design and implementation, allowing for thorough scrutiny and reducing the risk of hidden vulnerabilities.

Ethical considerations in cryptography require a balanced approach that prioritizes individual rights and freedoms while acknowledging the need for security and law enforcement.

Q 19. Explain the concept of elliptic curve cryptography.

Elliptic Curve Cryptography (ECC) is a public-key cryptography system based on the algebraic structure of elliptic curves over finite fields. Unlike traditional public-key systems like RSA, which rely on the difficulty of factoring large numbers, ECC relies on the difficulty of solving the Elliptic Curve Discrete Logarithm Problem (ECDLP).

Here's a simplified explanation:

Imagine an elliptic curve as a specific type of curve on a graph. Points on this curve can be added together using a well-defined mathematical operation. The private key is a secret scalar 'k', and the public key is the point Q = kP, where P is a publicly known base point on the curve. The ECDLP states that given P and Q, finding the scalar 'k' such that Q = kP is computationally very difficult for appropriately chosen curves and parameters.

This difficulty forms the basis of ECC's security. ECC offers comparable security to RSA with much shorter key lengths, making it more efficient for resource-constrained devices like smartphones and embedded systems. This efficiency advantage is particularly significant in applications requiring high levels of security with limited computational power.
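Point addition and scalar multiplication can be sketched on a tiny textbook curve. The curve y^2 = x^3 + 2x + 2 over the integers mod 17 with base point (5, 1) is an assumption chosen so the numbers stay small; its group has only 19 points, so it offers no security.

```python
P_MOD = 17      # field prime (toy value)
A = 2           # curve: y^2 = x^3 + A*x + 2 over integers mod P_MOD
G = (5, 1)      # base point; None represents the point at infinity

def ec_add(p1, p2):
    """Add two points on the curve."""
    if p1 is None:
        return p2
    if p2 is None:
        return p1
    x1, y1 = p1
    x2, y2 = p2
    if x1 == x2 and (y1 + y2) % P_MOD == 0:
        return None  # a point plus its inverse is the point at infinity
    if p1 == p2:
        lam = (3 * x1 * x1 + A) * pow((2 * y1) % P_MOD, -1, P_MOD) % P_MOD
    else:
        lam = (y2 - y1) * pow((x2 - x1) % P_MOD, -1, P_MOD) % P_MOD
    x3 = (lam * lam - x1 - x2) % P_MOD
    y3 = (lam * (x1 - x3) - y1) % P_MOD
    return (x3, y3)

def scalar_mult(k: int, point):
    """Compute k * point with the double-and-add method."""
    result = None
    addend = point
    while k > 0:
        if k & 1:
            result = ec_add(result, addend)
        addend = ec_add(addend, addend)
        k >>= 1
    return result

# Toy key pairs: private scalars and the matching public points.
alice_private, bob_private = 3, 7
alice_public = scalar_mult(alice_private, G)
bob_public = scalar_mult(bob_private, G)

# Both sides derive the same shared point (the idea behind ECDH).
shared_a = scalar_mult(alice_private, bob_public)
shared_b = scalar_mult(bob_private, alice_public)
```

Computing kP takes only a handful of doublings and additions, while going back from Q to k on a properly sized curve has no known efficient method. That asymmetry is what the scheme relies on.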

Q 20. What is public key infrastructure (PKI)?

Public Key Infrastructure (PKI) is a system for creating, managing, distributing, using, storing, and revoking digital certificates and managing public-key cryptography. Think of it as a trusted third-party system that verifies the authenticity of digital identities.

Key components of PKI include:

- Certificate Authority (CA): A trusted entity that issues and verifies digital certificates.

- Registration Authority (RA): An intermediary that helps verify the identity of individuals or organizations requesting certificates.

- Certificate Revocation List (CRL): A list of revoked certificates, indicating that they should no longer be trusted.

- Digital certificates: Electronic documents that bind a public key to an identity. They assure recipients that the public key belongs to the claimed entity.

PKI enables secure communication and authentication over the internet. For example, when you visit a secure website (HTTPS), your browser verifies the website's digital certificate issued by a trusted CA, ensuring that you're communicating with the legitimate website and not an imposter.

Q 21. How do you protect against side-channel attacks?

Side-channel attacks exploit information leaked during cryptographic operations, such as timing differences, power consumption, or electromagnetic emissions. These subtle leaks can reveal sensitive information, such as cryptographic keys.

Protecting against these attacks requires a multi-layered approach:

- Constant-time algorithms: Designing algorithms that take the same amount of time to execute regardless of the input data prevents timing attacks.

- Power analysis countermeasures: Techniques like masking (randomizing intermediate values) and hiding (adding noise or balancing power draw) decorrelate power consumption from the data being processed.

- Electromagnetic shielding: Protecting cryptographic hardware from electromagnetic emanations reduces the risk of electromagnetic analysis attacks.

- Regular security audits and penetration testing: Identifying vulnerabilities in hardware and software through rigorous testing helps prevent side-channel attacks.

- Secure hardware: Using hardware security modules (HSMs) or trusted execution environments (TEEs) provides a more secure environment for cryptographic operations.

Mitigating side-channel attacks is crucial for securing cryptographic systems, particularly in high-security environments where physical access or observation might be possible. A robust approach involves combining multiple countermeasures to achieve comprehensive protection.
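The timing point can be made concrete with a MAC check. The sketch contrasts an early-exit comparison with `hmac.compare_digest` from Python's standard library, which is designed to avoid leaking where the first mismatch occurs. The function names and sample key are assumptions for this example.

```python
import hashlib
import hmac

def naive_equal(a: bytes, b: bytes) -> bool:
    """Early-exit comparison: run time depends on the first mismatching byte."""
    if len(a) != len(b):
        return False
    for x, y in zip(a, b):
        if x != y:
            return False
    return True

def verify_tag(key: bytes, message: bytes, tag: bytes) -> bool:
    """Recompute the HMAC and compare it without an early exit."""
    expected = hmac.new(key, message, hashlib.sha256).digest()
    return hmac.compare_digest(expected, tag)

key = b"example-key-for-illustration"   # hypothetical key
message = b"amount=100"
good_tag = hmac.new(key, message, hashlib.sha256).digest()
bad_tag = bytes([good_tag[0] ^ 1]) + good_tag[1:]

accepted = verify_tag(key, message, good_tag)
rejected = verify_tag(key, message, bad_tag)
```

Both functions return the same answers; the difference is only in how much the run time reveals, which is exactly what a timing attack measures.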

Q 22. Describe the difference between confidentiality, integrity, and availability.

Confidentiality, integrity, and availability (CIA) are the three core principles of information security. Think of them as the three legs of a stool – if one is missing, the whole thing collapses.

- Confidentiality ensures that only authorized individuals or systems can access sensitive information. It's like having a secret code only you and your best friend know. Examples include encrypting emails or using access control lists to restrict file access.

- Integrity guarantees that data remains accurate and unchanged. It’s like making sure a contract remains unaltered after signing. This involves techniques like hashing algorithms to detect tampering and digital signatures to verify authenticity.

- Availability ensures that authorized users can access information and resources when needed. It's like making sure the library is open during its operating hours. Redundancy, failover systems, and disaster recovery plans are crucial for availability.

In short, confidentiality is about who can access the data, integrity is about what the data is, and availability is about when the data is accessible.

Q 23. Explain the role of cryptography in securing data in transit and at rest.

Cryptography plays a vital role in securing data both in transit (while being transferred) and at rest (while stored).

- Data in Transit: When data travels across a network (e.g., from your computer to a server), it's vulnerable to interception. Cryptography secures this using protocols like Transport Layer Security (TLS), the successor to the now-deprecated Secure Sockets Layer (SSL), which encrypt the data before transmission. Think of it as sending a letter in a sealed, encrypted envelope.

- Data at Rest: Data stored on hard drives, databases, or cloud storage is also susceptible to unauthorized access. Here, cryptography employs techniques like disk encryption, database encryption, or file encryption. This is akin to locking a valuable item in a safe.

Symmetric and asymmetric encryption are commonly used. Symmetric uses the same key for encryption and decryption, offering speed but posing key management challenges. Asymmetric uses separate keys, enhancing security but being computationally slower. Often, a hybrid approach is used, leveraging the strengths of both.

Q 24. Discuss the importance of random number generation in cryptography.

Random number generation (RNG) is the backbone of many cryptographic algorithms. Its importance stems from the need for unpredictability and randomness in keys, initialization vectors (IVs), and nonces. Without truly random numbers, cryptographic systems become vulnerable to attacks.

For example, if a predictable sequence of numbers is used for key generation, an attacker could potentially guess the key and decrypt the data. This is why cryptographically secure pseudo-random number generators (CSPRNGs) are critical. These algorithms produce sequences that appear random but are deterministically generated from a seed value. However, the seed value itself must be truly random, often obtained from sources like hardware random number generators (HRNGs) or atmospheric noise.

Poor RNG can lead to weak keys, predictable IVs, and ultimately, compromised security. The quality of the RNG directly impacts the security of the entire cryptographic system.
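In Python, the difference shows up in which module is used. The sketch below contrasts the general-purpose `random` module, which is reproducible from its seed, with `secrets`, which draws from the operating system's CSPRNG. The sizes (32-byte key, 12-byte nonce) and the seed value are assumptions for illustration.

```python
import random
import secrets

# Suitable for keys and nonces: backed by the OS CSPRNG.
key = secrets.token_bytes(32)     # e.g. a 256-bit symmetric key
nonce = secrets.token_bytes(12)   # e.g. a 96-bit nonce

# Not suitable: anyone who learns or guesses the seed can replay the output.
first_run = random.Random(1234).getrandbits(128)
second_run = random.Random(1234).getrandbits(128)
predictable = first_run == second_run   # same seed, same "random" value
```

The second half is the failure mode described above: a guessable seed turns key generation into something an attacker can simply repeat.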

Q 25. What experience do you have with specific cryptographic libraries or tools?

I have extensive experience with various cryptographic libraries and tools, including:

- OpenSSL: I've used OpenSSL for tasks like generating RSA keys, implementing TLS/SSL connections, and performing various cryptographic operations (encryption/decryption, hashing, digital signatures). I'm familiar with its command-line interface and its integration into various programming languages.

- Bouncy Castle (Java): I've utilized Bouncy Castle in Java applications for its wide range of cryptographic algorithms and its flexibility in handling different cryptographic protocols.

- libsodium: I've worked with libsodium for its focus on modern, robust, and easy-to-use cryptographic primitives, prioritizing security and ease of integration. I find its modern approach and emphasis on avoiding common pitfalls highly valuable.

- Cryptography libraries within various programming languages: I have experience integrating cryptographic functions within Python (using libraries like `cryptography`), C++, and Java, adapting my approach to the specific strengths and functionalities of each language's built-in or third-party libraries.

My experience extends beyond just using these libraries; I understand their underlying algorithms and limitations, which is crucial for choosing the appropriate tool for a specific security requirement.

Q 26. Describe your experience with different types of cipher modes (e.g., CBC, CTR).

I'm proficient in several cipher modes, each offering different properties and security trade-offs:

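- Cipher Block Chaining (CBC): CBC XORs each plaintext block with the previous ciphertext block before encrypting it, starting from an Initialization Vector (IV). Chaining hides repeated plaintext blocks, but it does not provide integrity: an attacker can modify ciphertext blocks to cause predictable changes in the decrypted plaintext, so CBC should be paired with a MAC. It's also susceptible to padding oracle attacks if padding errors are observable. The IV must be unpredictable (random) for each encryption, not merely unique.

- Counter (CTR): CTR mode encrypts successive counter blocks (typically a nonce combined with a block counter) under the key to generate a keystream, which is XORed with the plaintext. It's highly parallelizable, offers good performance, and needs no padding, so padding oracle attacks do not apply. Like CBC, it provides no integrity on its own. A nonce/counter value must never be reused with the same key, because a repeated keystream reveals the XOR of the two plaintexts.

- Galois/Counter Mode (GCM): GCM is an authenticated encryption mode that combines CTR-mode encryption with a Galois-field authentication tag. This provides both confidentiality and integrity in a single operation, making it a popular choice for its security and performance. Its nonce must also be unique per key; reuse undermines both confidentiality and authentication.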

My experience includes selecting the appropriate cipher mode based on the specific security requirements of the application, taking into account performance constraints and the threat model. I also understand the importance of proper IV/nonce generation and management to prevent vulnerabilities.
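The nonce-reuse risk in counter-style modes is easy to demonstrate. The sketch below builds a counter-mode keystream with HMAC-SHA256 standing in for the block cipher (an assumption made so the example runs with only the standard library; real CTR mode would encrypt the counter block with AES), then deliberately reuses a nonce. The helper names are hypothetical.

```python
import hashlib
import hmac
import secrets

def keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Counter-mode keystream: PRF(key, nonce || counter) for counter = 0, 1, 2, ..."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        block_input = nonce + counter.to_bytes(4, "big")
        # HMAC-SHA256 is a stand-in PRF; real CTR mode uses a block cipher here.
        out += hmac.new(key, block_input, hashlib.sha256).digest()
        counter += 1
    return bytes(out[:length])

def xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))

def ctr_crypt(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """Encryption and decryption are the same XOR operation in counter mode."""
    return xor(data, keystream(key, nonce, len(data)))

key = secrets.token_bytes(32)
nonce = secrets.token_bytes(12)

p1 = b"attack at dawn!!"
p2 = b"retreat at noon!"
c1 = ctr_crypt(key, nonce, p1)
c2 = ctr_crypt(key, nonce, p2)      # deliberate mistake: same key AND same nonce

leak = xor(c1, c2)                  # the identical keystreams cancel out
print(leak == xor(p1, p2))          # True: attacker learns p1 XOR p2 without the key
print(xor(leak, p1))                # b'retreat at noon!': knowing p1 reveals p2
```

Note that the attacker never touches the key: the two ciphertexts alone are enough once the keystream repeats, which is why nonce management matters as much as key management.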

Q 27. How familiar are you with different block cipher designs (e.g., AES, DES)?

I have a strong understanding of various block cipher designs.

- Advanced Encryption Standard (AES): I am very familiar with AES, its 128-bit block size, and its key sizes (128, 192, and 256 bits, using 10, 12, and 14 rounds respectively). I understand its structure: rounds built from byte substitution (SubBytes), row shifting (ShiftRows), column mixing (MixColumns, omitted in the final round), and round-key addition (AddRoundKey). No practical attack on the full cipher is publicly known.

- Data Encryption Standard (DES): DES is obsolete because its 56-bit key can be brute-forced with modern hardware, but I understand its historical significance and its Feistel structure. I can analyze the differences between DES and its successor, 3DES (Triple DES), and explain why 3DES is also being phased out: it is slow, and its 64-bit block size makes it vulnerable to birthday-bound collision attacks when large volumes of data are encrypted under one key.

My knowledge extends to analyzing the strengths and weaknesses of different block cipher designs, and I can assess their suitability for specific applications based on factors such as security requirements, performance constraints, and hardware limitations.
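A quick back-of-the-envelope calculation shows why key size dominates this comparison. The attacker speed below is a hypothetical round number, not a measured figure; only the ratio between the results matters.

```python
# Hypothetical attacker speed; real figures depend heavily on hardware and budget.
KEYS_PER_SECOND = 10**12
SECONDS_PER_YEAR = 365 * 24 * 3600

def worst_case_seconds(key_bits: int) -> float:
    """Time to try every key of the given size at KEYS_PER_SECOND."""
    return 2**key_bits / KEYS_PER_SECOND

for name, bits in [("DES", 56), ("AES-128", 128), ("AES-256", 256)]:
    seconds = worst_case_seconds(bits)
    hours = seconds / 3600
    years = seconds / SECONDS_PER_YEAR
    print(f"{name:8s} 2^{bits} keys: {hours:.3g} hours ({years:.3g} years)")
```

Under this assumption the entire DES keyspace falls in about 20 hours, while AES-128 would take on the order of 10^19 years. Each extra key bit doubles the work, so the 72-bit gap is a factor of 2^72.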

Q 28. Have you ever encountered and solved a real-world cryptography challenge?

Yes, during my previous role at [Previous Company Name], we encountered a situation where a legacy system used a weak, custom-designed encryption algorithm. This posed a significant security risk.

My approach to solving this involved a multi-step process:

- Assessment: First, I conducted a thorough analysis of the existing encryption algorithm, identifying its weaknesses and vulnerabilities. This involved reverse-engineering aspects of the system to understand how the encryption functioned.

- Risk Evaluation: I assessed the potential impact of a breach, considering the sensitivity of the data being protected. This helped prioritize the remediation effort.

- Migration Strategy: Based on the risk assessment and the available resources, I developed a phased migration plan. This involved replacing the weak algorithm with a robust, industry-standard encryption algorithm (AES-256) using a well-tested cryptographic library. We transitioned in phases to minimize disruptions.

- Testing and Validation: After the migration, we rigorously tested the system to ensure the new encryption algorithm was implemented correctly and effectively protected the data. This included penetration testing and code reviews.

This experience highlighted the importance of using well-vetted cryptographic algorithms and regularly reviewing and updating legacy systems to maintain optimal security.

Key Topics to Learn for Ciphertext Analysis Interview

- Classical Ciphers: Understanding substitution and transposition ciphers, their strengths and weaknesses, and methods for breaking them. Practical application: Analyzing historical ciphertexts and understanding their historical context.

- Modern Ciphers: Familiarize yourself with symmetric and asymmetric encryption algorithms (e.g., AES, RSA). Practical application: Analyzing the security of different cryptographic systems and identifying vulnerabilities.

- Cryptanalysis Techniques: Master frequency analysis, known-plaintext attacks, ciphertext-only attacks, and chosen-plaintext attacks. Practical application: Developing and applying cryptanalytic techniques to solve real-world scenarios.

- Statistical Methods in Cryptanalysis: Understanding the application of statistical methods like chi-squared tests and correlation analysis in breaking ciphers. Practical application: Analyzing ciphertext for patterns and anomalies to aid in decryption.

- Steganography and Watermarking: Learn about techniques used to hide information within other data. Practical application: Detecting and analyzing hidden information in various media formats.

- Hashing Algorithms and their Cryptanalysis: Understanding collision resistance, pre-image resistance and second pre-image resistance. Practical application: Assessing the security of different hashing algorithms used in authentication and data integrity.

- Side-Channel Attacks: Explore attacks that exploit information leaked during cryptographic operations (e.g., timing attacks, power analysis). Practical application: Designing secure cryptographic implementations resistant to side-channel attacks.
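To practice the statistical side of these topics, the following sketch breaks a Caesar (shift) cipher with a chi-squared test: it tries all 26 shifts and keeps the one whose letter distribution is closest to English. The frequency table holds approximate, commonly cited percentages, and the function names and sample text are made up for the example.

```python
from collections import Counter
import string

# Approximate relative frequencies of letters in English text (percent).
ENGLISH_FREQ = {
    "a": 8.17, "b": 1.49, "c": 2.78, "d": 4.25, "e": 12.70, "f": 2.23,
    "g": 2.02, "h": 6.09, "i": 6.97, "j": 0.15, "k": 0.77, "l": 4.03,
    "m": 2.41, "n": 6.75, "o": 7.51, "p": 1.93, "q": 0.10, "r": 5.99,
    "s": 6.33, "t": 9.06, "u": 2.76, "v": 0.98, "w": 2.36, "x": 0.15,
    "y": 1.97, "z": 0.07,
}

def caesar(text: str, shift: int) -> str:
    """Lowercase the text and shift ASCII letters; leave other characters unchanged."""
    result = []
    for ch in text.lower():
        if ch in string.ascii_lowercase:
            result.append(chr((ord(ch) - ord("a") + shift) % 26 + ord("a")))
        else:
            result.append(ch)
    return "".join(result)

def chi_squared(text: str) -> float:
    """Sum of (observed - expected)^2 / expected over the 26 letters; lower is more English-like."""
    letters = [ch for ch in text.lower() if ch in string.ascii_lowercase]
    if not letters:
        return float("inf")
    counts = Counter(letters)
    total = len(letters)
    score = 0.0
    for letter, pct in ENGLISH_FREQ.items():
        expected = total * pct / 100
        score += (counts[letter] - expected) ** 2 / expected
    return score

def crack_caesar(ciphertext: str):
    """Try every shift and return (shift, plaintext) for the best-scoring candidate."""
    best_shift = min(range(26), key=lambda s: chi_squared(caesar(ciphertext, -s)))
    return best_shift, caesar(ciphertext, -best_shift)

plaintext = ("the letters of ordinary english text are far from evenly distributed "
             "and that statistical bias survives a simple substitution")
ciphertext = caesar(plaintext, 7)
print(crack_caesar(ciphertext))
```

The same scoring function is a useful building block for harder classical ciphers, for example scoring each column of a Vigenère ciphertext once the key length is known. It becomes unreliable on very short ciphertexts, where the sample is too small for the statistics to settle.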

Next Steps

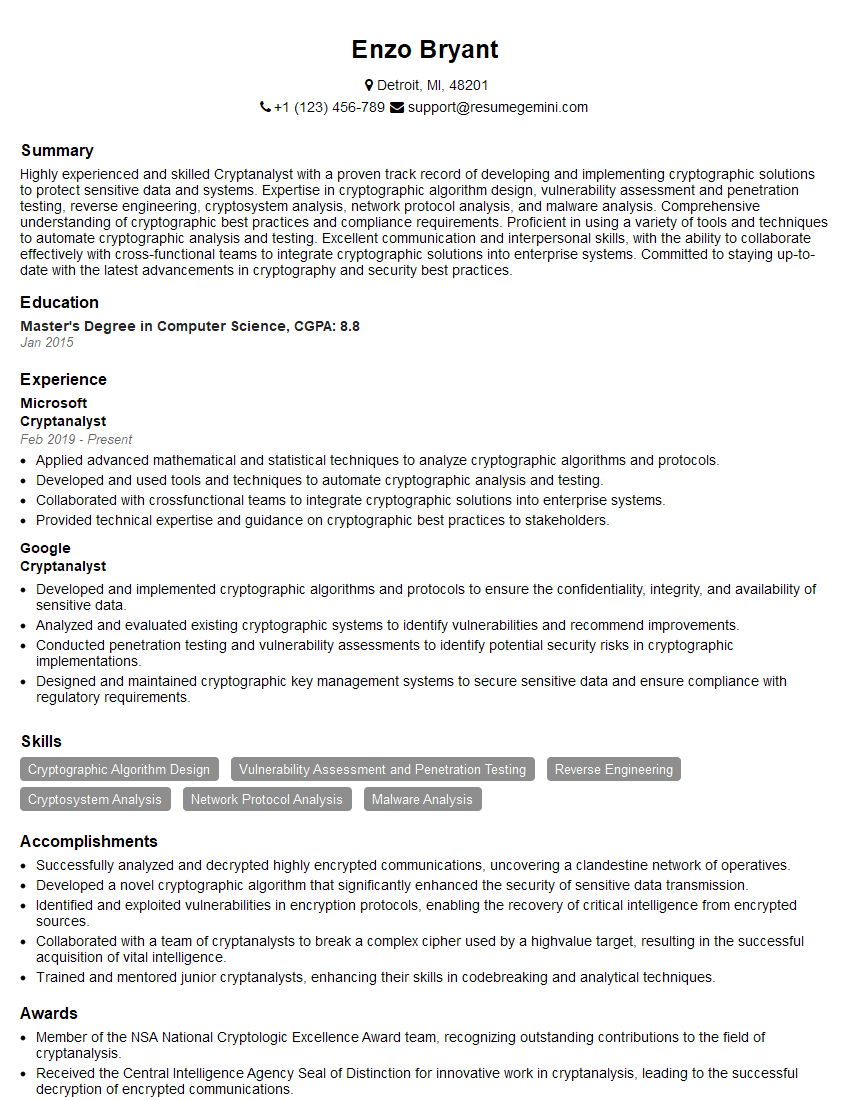

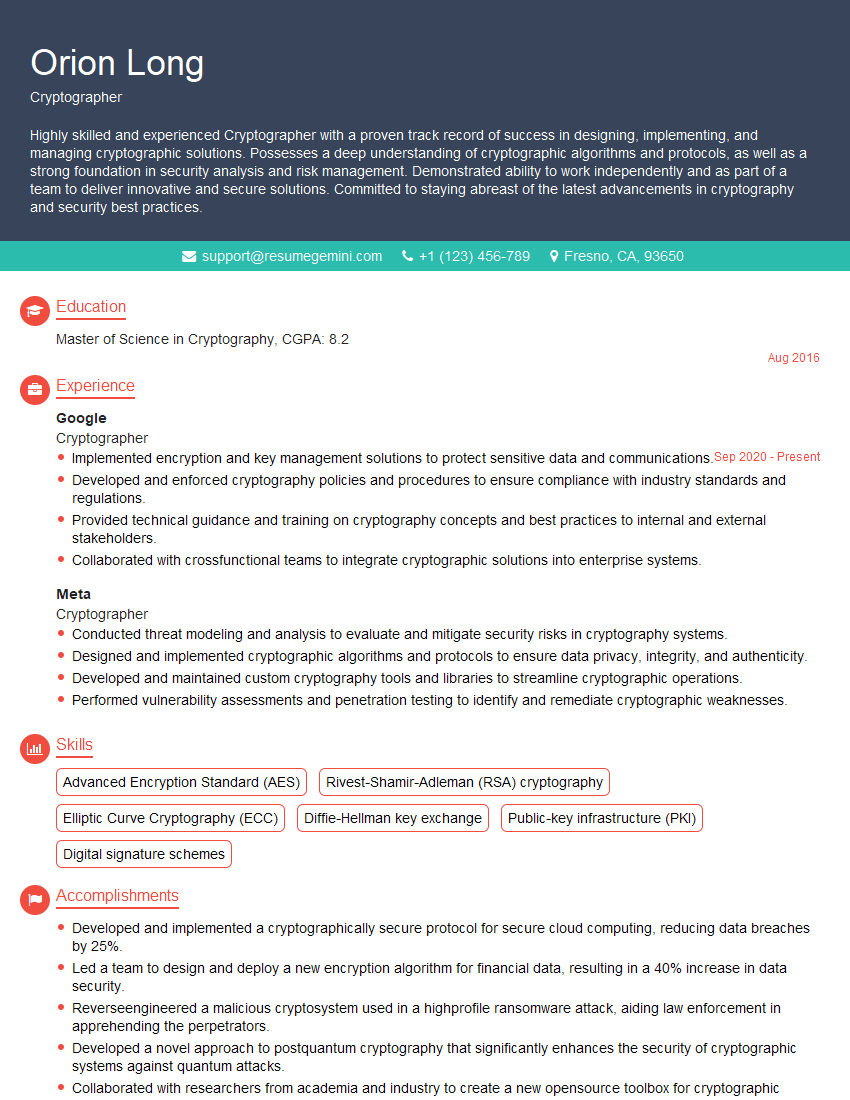

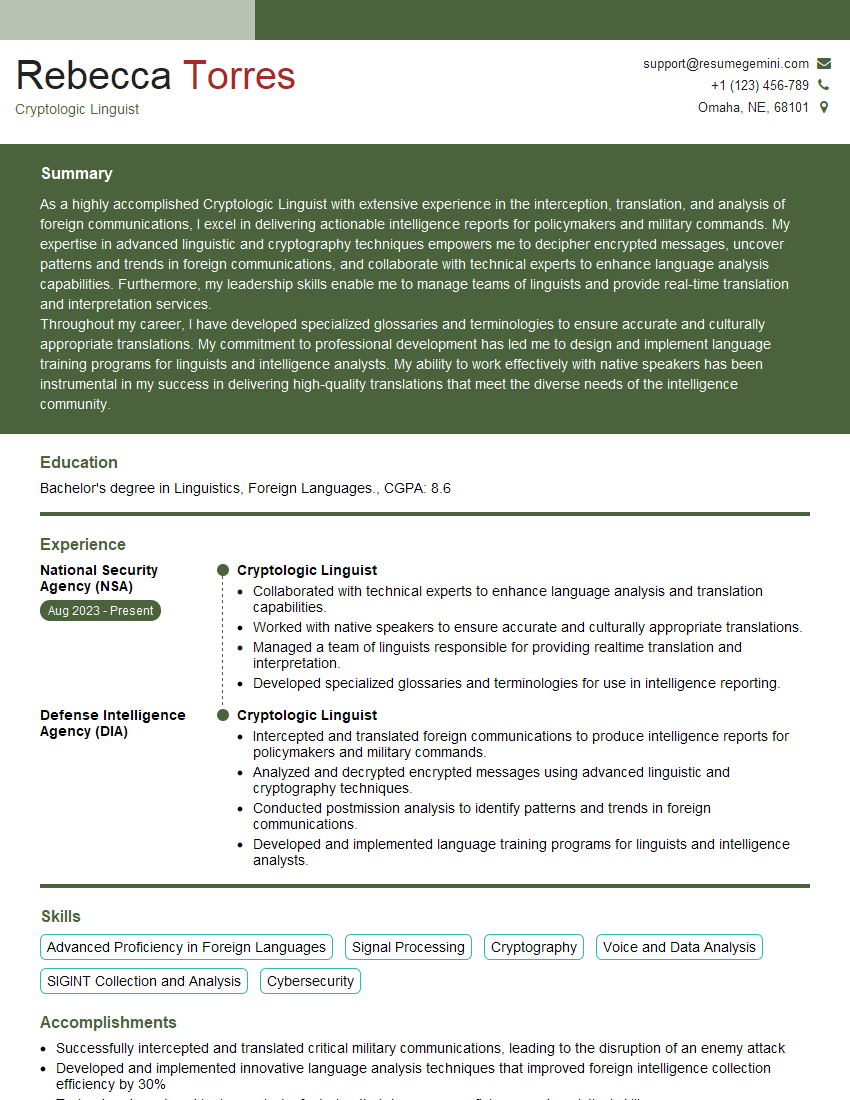

Mastering Ciphertext Analysis opens doors to exciting careers in cybersecurity, cryptography, and intelligence. To maximize your job prospects, creating a strong, ATS-friendly resume is crucial. ResumeGemini is a trusted resource that can help you build a professional resume that highlights your skills and experience effectively. We provide examples of resumes tailored to Ciphertext Analysis to help you craft a compelling application. Take the next step towards your dream career – build your best resume with ResumeGemini.
