The right preparation can turn an interview into an opportunity to showcase your expertise. This guide to Data Management and Analysis Skills interview questions is your ultimate resource, providing key insights and tips to help you ace your responses and stand out as a top candidate.

Questions Asked in Data Management and Analysis Skills Interview

Q 1. Explain the difference between data mining and data profiling.

Data mining and data profiling are both crucial parts of data analysis, but they serve different purposes. Think of data mining as the process of discovering hidden patterns and insights within a large dataset, like searching for gold nuggets in a mountain of dirt. Data profiling, on the other hand, is like creating a detailed map of your data’s terrain before you start digging – it focuses on understanding the data’s characteristics, quality, and structure.

Data Mining: This involves using various algorithms and techniques to uncover meaningful relationships, trends, and anomalies within the data. It’s about answering questions like, “What are the factors driving customer churn?” or “Which products are most frequently purchased together?” Techniques used include association rule mining, classification, clustering, and regression.

Data Profiling: This is a more descriptive process focusing on understanding the data’s metadata, identifying data types, data ranges, missing values, duplicates, and inconsistencies. It helps in assessing data quality and understanding its limitations. For example, profiling might reveal that a date field contains both MM/DD/YYYY and DD/MM/YYYY formats, impacting data analysis.

In essence, data profiling is often a necessary precursor to data mining. You wouldn’t start digging for gold without first exploring the area and making a plan, and similarly, you’d usually profile your data before attempting to mine it for insights.
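
As a minimal sketch of what profiling can look like in practice, the Python below counts missing and repeated values and flags mixed date formats using only the standard library. The sample rows, column names, and helper functions are hypothetical and exist only for illustration.

```python
from collections import Counter

# Hypothetical sample rows; the column names are assumptions for illustration.
rows = [
    {"customer_id": "1", "signup_date": "03/14/2023"},
    {"customer_id": "2", "signup_date": "14/03/2023"},
    {"customer_id": "2", "signup_date": "05/06/2023"},
    {"customer_id": "4", "signup_date": ""},
]


def profile_column(rows, column):
    """Count missing, distinct, and repeated values in one column."""
    values = [row[column] for row in rows]
    present = [value for value in values if value != ""]
    return {
        "missing": len(values) - len(present),
        "distinct": len(set(present)),
        "repeated": len(present) - len(set(present)),
    }


def classify_slash_date(value):
    """Guess the field order of an NN/NN/YYYY string where that is possible."""
    first, second, _year = (int(part) for part in value.split("/"))
    if first > 12:
        return "DD/MM/YYYY"  # the first field cannot be a month
    if second > 12:
        return "MM/DD/YYYY"  # the second field cannot be a month
    return "ambiguous"  # both orders parse, so the source must be consulted


id_profile = profile_column(rows, "customer_id")
date_profile = profile_column(rows, "signup_date")
date_formats = Counter(
    classify_slash_date(row["signup_date"]) for row in rows if row["signup_date"]
)
```

Here `date_formats` shows one value in each format plus one ambiguous value, which is the kind of finding that should be resolved before any mining starts.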

Q 2. Describe your experience with ETL processes.

My experience with ETL (Extract, Transform, Load) processes spans several projects, encompassing various data sources and target systems. I’ve worked extensively with Informatica PowerCenter for batch ETL and with Apache Kafka for streaming ingestion. A typical project involves extracting data from diverse sources: databases (SQL Server, Oracle, MySQL), flat files (CSV, TXT), APIs, and cloud-based storage (AWS S3, Azure Blob Storage).

The transformation phase is where I leverage my data manipulation skills. I’ve handled data cleansing (removing duplicates, correcting inconsistencies), data standardization (converting data formats to ensure consistency), data enrichment (adding information from other sources), and data aggregation (summarizing data). I’m proficient in using SQL and scripting languages like Python to perform these transformations. For example, I once used Python’s Pandas library to clean and transform a large CSV file containing customer data, handling missing values and converting date formats.

Finally, the loading phase involves moving the transformed data into the target system, which could be a data warehouse, data lake, or operational database. I have experience optimizing load processes for large data volumes, for example with batched or incremental loads, and verifying data integrity after each load with checks such as row-count reconciliation.
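
The three phases can be sketched end to end with Python's standard library. This is an illustrative outline only: the CSV content, the `customers` table, and the cleaning rules (trim names, drop rows without a name, keep the first row per ID) are assumptions, and a real pipeline would read from actual sources and load into an actual target.

```python
import csv
import io
import sqlite3
from datetime import datetime

# Hypothetical raw extract; in practice this would come from a file or API.
RAW_CSV = """customer_id,name,signup_date
1, Alice ,2023-01-15
2,Bob,2023-02-01
2,Bob,2023-02-01
3,,2023-03-09
"""


def extract(text):
    """Extract: parse CSV text into a list of dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: trim names, drop incomplete rows, remove duplicate IDs."""
    seen = set()
    cleaned = []
    for row in rows:
        name = row["name"].strip()
        if not name:
            continue  # assumed rule: a customer row must have a name
        if row["customer_id"] in seen:
            continue  # assumed rule: keep the first row per customer_id
        seen.add(row["customer_id"])
        # Parsing validates the date; isoformat() gives one consistent format.
        signup = datetime.strptime(row["signup_date"], "%Y-%m-%d").date()
        cleaned.append((int(row["customer_id"]), name, signup.isoformat()))
    return cleaned


def load(conn, records):
    """Load: insert all records in a single transaction."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers ("
        "customer_id INTEGER PRIMARY KEY, name TEXT NOT NULL, "
        "signup_date TEXT NOT NULL)"
    )
    with conn:  # commits on success, rolls back on error
        conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", records)


conn = sqlite3.connect(":memory:")
load(conn, transform(extract(RAW_CSV)))
loaded = conn.execute(
    "SELECT customer_id, name, signup_date FROM customers ORDER BY customer_id"
).fetchall()
```

Keeping each phase in its own function makes it easier to test the transformation rules without touching the source or the target.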

Q 3. What are the common challenges in data quality, and how do you address them?

Common data quality challenges include incompleteness (missing values), inaccuracy (wrong or outdated data), inconsistency (different formats or meanings for the same data), invalidity (data violating constraints), duplication (redundant data), and ambiguity (data with multiple interpretations). Addressing these requires a multi-pronged approach.

- Data profiling: As mentioned earlier, this is the first step in identifying data quality issues.

- Data cleansing: This involves techniques like imputation (filling missing values), standardization (ensuring consistent formats), and deduplication (removing duplicates).

- Data validation: Implementing rules and constraints to prevent invalid data from entering the system.

- Data governance: Establishing policies and procedures to manage data quality throughout its lifecycle.

- Root cause analysis: Investigating the source of data quality issues to prevent recurrence.

For example, if I encounter inconsistent date formats, I would first profile the data to understand the extent of the problem. Then, I’d use data cleansing techniques, perhaps SQL queries or Python scripts, to standardize the date format. Proactive data validation rules would be implemented to prevent future entries with inconsistent formats.
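
A small sketch of the date example, assuming the format used by each source system is known from profiling (a string such as 05/06/2023 cannot be disambiguated from the value alone). The function names are hypothetical.

```python
from datetime import datetime


def to_iso_date(value, source_format):
    """Cleansing: convert a date string in a known format to YYYY-MM-DD."""
    return datetime.strptime(value.strip(), source_format).date().isoformat()


def is_valid_iso_date(value):
    """Validation rule: accept only real dates written exactly as YYYY-MM-DD."""
    try:
        parsed = datetime.strptime(value, "%Y-%m-%d").date()
    except ValueError:
        return False
    # Round-trip check rejects loosely formatted input such as '2023-3-14'.
    return parsed.isoformat() == value


us_style = to_iso_date("03/14/2023", "%m/%d/%Y")
eu_style = to_iso_date("14/03/2023", "%d/%m/%Y")
```

The validation function is the proactive part: it could run at the point of entry so that only the standardized format reaches the table.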

Q 4. How do you handle missing data in a dataset?

Handling missing data is a critical step in data analysis. The best approach depends on the nature of the data, the extent of missingness, and the analysis goals. Ignoring missing data can lead to biased and unreliable results.

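- Deletion: If the missing data is minimal and randomly distributed, complete case deletion (also called listwise deletion: removing every row that has a missing value) might be acceptable, though it can lead to a loss of information. Pairwise deletion is another approach: each calculation uses all rows that have values for the variables it involves.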

- Imputation: This involves filling in missing values with estimated values. Common methods include:

  - Mean/Median/Mode imputation: Replacing missing values with the average, median, or most frequent value. This is simple but can distort the data distribution.

  - Regression imputation: Predicting missing values based on other variables using regression models. More sophisticated but requires assumptions about the data relationships.

  - K-Nearest Neighbors (KNN) imputation: Estimating missing values based on values from similar data points.

  - Multiple imputation: Creating multiple plausible imputed datasets and analyzing each separately to account for uncertainty in the imputed values.

The choice of imputation method depends on the context. For example, mean imputation might be suitable for a roughly symmetric variable with only a few missing values, median imputation is safer for skewed data, and KNN might be better when the missing variable is related to other variables in a non-linear way. It’s crucial to document the chosen method and its potential impact on the analysis.
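
To make the simplest options concrete, here is a minimal sketch of complete case deletion and mean/median/mode imputation using Python's `statistics` module. Missing values are represented as `None`, and the `incomes` data is made up for illustration.

```python
import statistics


def complete_cases(values):
    """Deletion: keep only the observed (non-missing) values."""
    return [value for value in values if value is not None]


def impute(values, strategy="median"):
    """Fill None entries with the mean, median, or mode of the observed values."""
    observed = complete_cases(values)
    if not observed:
        raise ValueError("cannot impute a column with no observed values")
    if strategy == "mean":
        fill = statistics.mean(observed)
    elif strategy == "median":
        fill = statistics.median(observed)
    elif strategy == "mode":
        fill = statistics.mode(observed)
    else:
        raise ValueError(f"unknown strategy: {strategy}")
    return [fill if value is None else value for value in values]


# Hypothetical skewed data: one very large income pulls the mean upward.
incomes = [30, 32, None, 35, 500]
mean_filled = impute(incomes, "mean")
median_filled = impute(incomes, "median")
```

With this skewed sample the mean fills the gap with 149.25 while the median fills it with 33.5, which illustrates why the distribution should guide the choice.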

Q 5. Explain different types of data and their appropriate analysis techniques.

Data comes in various types, each requiring appropriate analysis techniques. Here are some examples:

- Numerical data: Represents quantities. Can be continuous (e.g., height, weight) or discrete (e.g., number of children). Analysis techniques include descriptive statistics (mean, median, standard deviation), regression analysis, correlation analysis.

- Categorical data: Represents categories or groups. Can be nominal (e.g., gender, color) or ordinal (e.g., education level, customer satisfaction rating). Analysis techniques include frequency tables, chi-square tests, contingency tables.

- Text data: Unstructured data such as documents, emails, or social media posts. Analysis techniques include natural language processing (NLP), sentiment analysis, topic modeling.

- Time series data: Data collected over time. Analysis techniques include time series decomposition, forecasting models (ARIMA, exponential smoothing).

- Spatial data: Data associated with geographical locations. Analysis techniques include Geographic Information Systems (GIS) analysis, spatial statistics.

For example, analyzing customer demographics (categorical) might involve creating frequency tables and cross-tabulations, while predicting sales based on advertising spend (numerical) might involve regression analysis.

Q 6. What are your preferred data visualization tools and why?

My preferred data visualization tools are Tableau and Power BI. These tools offer a comprehensive suite of features for creating interactive and insightful visualizations. I chose them for several reasons:

- Ease of use: Both platforms have intuitive drag-and-drop interfaces, making it easy to create visualizations even with complex datasets.

- Data connectivity: They seamlessly connect to various data sources, including databases, spreadsheets, and cloud services.

- Visual richness: They provide a wide array of chart types, allowing me to choose the most appropriate visualization for the data and the story I want to tell.

- Interactive dashboards: They enable the creation of interactive dashboards that allow users to explore data dynamically and gain deeper insights.

- Collaboration features: They facilitate collaboration by allowing users to share dashboards and reports.

While other tools like Python libraries (Matplotlib, Seaborn) are valuable for custom visualizations, Tableau and Power BI offer a superior balance of ease of use and powerful features for data storytelling and communication.

Q 7. Describe your experience with SQL. Provide examples of your queries.

I’ve been using SQL for over [Number] years and am highly proficient in writing complex queries for data extraction, manipulation, and analysis. My experience encompasses various database systems like SQL Server, MySQL, and PostgreSQL.

Here are a few examples:

Example 1: Finding the top 10 customers by total spending (LIMIT works in MySQL, PostgreSQL, and SQLite; SQL Server uses TOP instead):

SELECT CustomerID, SUM(TotalAmount) AS TotalSpent
FROM Orders
GROUP BY CustomerID
ORDER BY TotalSpent DESC
LIMIT 10;

Example 2: Identifying customers who haven’t placed an order in the last 6 months (the date arithmetic shown is SQLite syntax; other systems use their own date functions):

SELECT CustomerID
FROM Customers
WHERE CustomerID NOT IN (
    SELECT DISTINCT CustomerID
    FROM Orders
    WHERE OrderDate >= DATE('now', '-6 months')
);

Example 3: Joining tables to get combined customer and order information:

SELECT c.CustomerID, c.Name, o.OrderDate, o.TotalAmount
FROM Customers c
JOIN Orders o ON c.CustomerID = o.CustomerID
WHERE o.OrderDate BETWEEN '2023-01-01' AND '2023-12-31';

These examples illustrate my ability to write efficient and effective SQL queries to retrieve and manipulate data. I can adapt my SQL skills to various database systems and complex queries involving joins, subqueries, window functions, and common table expressions (CTEs).
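
The three queries above can be tried against a throwaway in-memory SQLite database from Python. The table contents below are invented, the column types are assumptions, and the "last 6 months" query takes a fixed reference date as a parameter instead of 'now' so that the result is repeatable.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE Customers (CustomerID INTEGER PRIMARY KEY, Name TEXT NOT NULL);
    CREATE TABLE Orders (
        OrderID INTEGER PRIMARY KEY,
        CustomerID INTEGER NOT NULL REFERENCES Customers(CustomerID),
        OrderDate TEXT NOT NULL,
        TotalAmount REAL NOT NULL
    );
    INSERT INTO Customers VALUES (1, 'Ana'), (2, 'Ben'), (3, 'Cy');
    INSERT INTO Orders VALUES
        (1, 1, '2024-06-01', 100.0),
        (2, 1, '2024-06-15', 50.0),
        (3, 2, '2023-05-01', 300.0);
""")

# Example 1: top customers by total spending.
top_customers = conn.execute(
    "SELECT CustomerID, SUM(TotalAmount) AS TotalSpent "
    "FROM Orders GROUP BY CustomerID ORDER BY TotalSpent DESC LIMIT 10"
).fetchall()

# Example 2: customers with no order in the 6 months before a reference date.
inactive = [
    row[0]
    for row in conn.execute(
        "SELECT CustomerID FROM Customers WHERE CustomerID NOT IN ("
        "SELECT DISTINCT CustomerID FROM Orders "
        "WHERE OrderDate >= DATE(?, '-6 months')) ORDER BY CustomerID",
        ("2024-07-01",),
    )
]

# Example 3: customer and order details for a date range.
orders_2024 = conn.execute(
    "SELECT c.CustomerID, c.Name, o.OrderDate, o.TotalAmount "
    "FROM Customers c JOIN Orders o ON c.CustomerID = o.CustomerID "
    "WHERE o.OrderDate BETWEEN ? AND ? ORDER BY o.OrderDate",
    ("2024-01-01", "2024-12-31"),
).fetchall()
```

Passing dates as parameters rather than embedding them in the SQL string keeps the queries reusable and avoids injection risks.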

Q 8. Explain normalization in databases and its importance.

Database normalization is a systematic process of organizing data to reduce redundancy and improve data integrity. Think of it like organizing your closet – instead of throwing everything in haphazardly, you categorize items (shirts, pants, etc.) and store similar items together. This makes finding things easier and prevents duplicates. In databases, normalization achieves this by breaking down larger tables into smaller, more manageable ones, connected through relationships.

There are several levels of normalization (1NF, 2NF, 3NF, etc.), each addressing specific types of redundancy. For example, 1NF eliminates repeating groups of data within a table. 2NF builds upon 1NF by eliminating redundant data that depends on only part of the primary key (in tables with composite keys). 3NF further refines this by removing transitive dependencies – where one non-key attribute depends on another non-key attribute.

The importance of normalization is multifaceted:

- Reduced Data Redundancy: Minimizes storage space and reduces the risk of inconsistencies.

- Improved Data Integrity: Ensures data accuracy and consistency by enforcing relationships between tables.

- Simplified Data Modification: Easier to update and maintain data as changes only need to be made in one place.

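- Query Performance Trade-offs: Smaller, narrower tables can speed up writes and targeted lookups, although read-heavy queries may need more joins, which is why analytical systems sometimes denormalize selectively.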

For instance, imagine a table storing customer information with multiple phone numbers. A non-normalized table might repeat the customer’s name for each phone number. Normalization would split this into two tables: one for customer details (customer ID, name, address) and another for phone numbers (phone ID, customer ID, phone number). This eliminates redundancy and improves data integrity.
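
The phone number example can be sketched with SQLite from Python. The table and column names follow the description above, while the sample customer and the exact column types are assumptions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (
        customer_id INTEGER PRIMARY KEY,
        name TEXT NOT NULL,
        address TEXT NOT NULL
    );
    CREATE TABLE phone_numbers (
        phone_id INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL REFERENCES customers(customer_id),
        phone_number TEXT NOT NULL
    );
    INSERT INTO customers VALUES (1, 'Dana Lee', '12 Oak St');
    INSERT INTO phone_numbers (customer_id, phone_number)
    VALUES (1, '555-0100'), (1, '555-0101');
""")

# The name is stored once, so a correction touches exactly one row.
with conn:
    updated = conn.execute(
        "UPDATE customers SET name = ? WHERE customer_id = ?", ("Dana Li", 1)
    ).rowcount

# A join reassembles the combined view whenever it is needed.
contacts = conn.execute(
    "SELECT c.name, p.phone_number FROM customers c "
    "JOIN phone_numbers p ON p.customer_id = c.customer_id "
    "ORDER BY p.phone_id"
).fetchall()
```

In the non-normalized design the same correction would have to be applied to every row that repeats the name, and missing one would leave the data inconsistent.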

Q 9. What is the difference between a fact table and a dimension table in a data warehouse?

In a data warehouse, fact tables and dimension tables are the core components of a star schema or snowflake schema. They work together to provide a comprehensive view of business data. Think of it like a spreadsheet – the fact table represents the core data (like sales figures), while dimension tables provide context and details (like product information, customer details, time of sale).

Fact Table: Contains the numerical measures (facts) of a business process. It is the central table in the schema and holds foreign keys that reference the primary key of each dimension table. A typical fact table has a primary key made up of those foreign keys (or a separate surrogate key), along with the measures.

Dimension Table: Contains descriptive attributes about the fact table. They provide context to the numerical data in the fact table. They often contain hierarchical data such as geography, products, time, and customer details. Each dimension table will have a primary key that will be used as a foreign key in the fact table.

For example, in a sales data warehouse:

- Fact Table: Contains sales records with fields like SaleID, ProductID, CustomerID, DateID, and SalesAmount.

- Dimension Tables: Would include Product (ProductID, ProductName, ProductCategory), Customer (CustomerID, CustomerName, CustomerLocation), and Date (DateID, Date, Month, Year) tables.

The relationships between these tables are crucial for efficient querying and analysis. By joining these tables, analysts can perform complex queries, such as finding total sales for a specific product in a particular region during a given time period.
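
As an illustrative sketch, the sales star schema above can be built in an in-memory SQLite database and queried from Python. The rows are invented; the fact table is called `Sales`, and the date dimension is named `DateDim` with a `FullDate` column (both naming choices are assumptions made here to avoid clashing with SQL date functions).

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE Product (
        ProductID INTEGER PRIMARY KEY, ProductName TEXT, ProductCategory TEXT);
    CREATE TABLE Customer (
        CustomerID INTEGER PRIMARY KEY, CustomerName TEXT, CustomerLocation TEXT);
    CREATE TABLE DateDim (
        DateID INTEGER PRIMARY KEY, FullDate TEXT, Month INTEGER, Year INTEGER);
    -- Fact table: one row per sale, foreign keys to each dimension, one measure.
    CREATE TABLE Sales (
        SaleID INTEGER PRIMARY KEY,
        ProductID INTEGER REFERENCES Product(ProductID),
        CustomerID INTEGER REFERENCES Customer(CustomerID),
        DateID INTEGER REFERENCES DateDim(DateID),
        SalesAmount REAL);
    INSERT INTO Product VALUES (1, 'Novel', 'Books'), (2, 'Lamp', 'Home');
    INSERT INTO Customer VALUES (1, 'Ana', 'West'), (2, 'Ben', 'East');
    INSERT INTO DateDim VALUES
        (20230105, '2023-01-05', 1, 2023), (20240210, '2024-02-10', 2, 2024);
    INSERT INTO Sales VALUES
        (1, 1, 1, 20230105, 20.0),
        (2, 1, 2, 20230105, 15.0),
        (3, 2, 1, 20230105, 40.0),
        (4, 1, 1, 20240210, 25.0),
        (5, 1, 1, 20230105, 10.0);
""")


def total_sales(conn, category, location, year):
    """Sum the sales measure, filtered by attributes from three dimensions."""
    row = conn.execute(
        "SELECT SUM(s.SalesAmount) FROM Sales s "
        "JOIN Product p ON p.ProductID = s.ProductID "
        "JOIN Customer c ON c.CustomerID = s.CustomerID "
        "JOIN DateDim d ON d.DateID = s.DateID "
        "WHERE p.ProductCategory = ? AND c.CustomerLocation = ? AND d.Year = ?",
        (category, location, year),
    ).fetchone()
    return row[0]  # None when no sales match the filters


books_west_2023 = total_sales(conn, "Books", "West", 2023)
```

The query shape is typical of a star schema: the measure comes from the fact table and every filter comes from a dimension.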

Q 10. How do you identify and handle outliers in your data?

Outliers are data points that significantly deviate from the rest of the data. Identifying and handling them is crucial for accurate analysis, as they can skew results and mislead conclusions. Imagine analyzing house prices – a mansion worth millions in a neighborhood of modest homes would be an outlier.

Identifying outliers involves several methods:

- Visual Inspection: Box plots, scatter plots, and histograms can visually reveal outliers.

- Statistical Methods:

  - Z-score: Measures how many standard deviations a data point is from the mean. Values beyond a certain threshold (e.g., ±3) are considered outliers.

  - IQR (Interquartile Range): The difference between the 75th and 25th percentiles. Data points below Q1 – 1.5*IQR or above Q3 + 1.5*IQR are considered outliers.

Handling outliers depends on the context and the reason for their existence:

- Removal: If outliers are due to errors or data entry mistakes, they can be removed. However, this should be done cautiously and documented.

- Transformation: Techniques like logarithmic transformation can reduce the impact of outliers by compressing the range of values.

- Winsorizing/Trimming: Replacing extreme values with less extreme ones (Winsorizing) or removing a certain percentage of the most extreme values (Trimming).

- Robust Statistical Methods: Using methods less sensitive to outliers, like median instead of mean, or robust regression.

The key is to understand the reason for the outlier before deciding how to handle it. It is always a good idea to analyze the data with and without the outliers to determine their influence on the analysis.
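
Both statistical rules can be sketched with Python's `statistics` module. The house prices below are invented, and the quartiles use the "inclusive" method, which is one of several common conventions and can shift the fences slightly.

```python
import statistics


def zscore_outliers(values, threshold=3.0):
    """Flag values more than `threshold` sample standard deviations from the mean."""
    mean = statistics.mean(values)
    stdev = statistics.stdev(values)
    if stdev == 0:
        return []  # all values identical, nothing can be an outlier
    return [v for v in values if abs(v - mean) / stdev > threshold]


def iqr_outliers(values, k=1.5):
    """Flag values below Q1 - k*IQR or above Q3 + k*IQR."""
    q1, _median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


# Hypothetical prices in thousands: twenty modest homes and one mansion.
prices = [200 + 5 * i for i in range(20)] + [3000]

# With only nine points, no value can reach a z-score of 3, because the
# extreme value itself inflates the standard deviation.
small_sample = [210, 225, 230, 240, 250, 255, 260, 275, 3000]

z_flagged = zscore_outliers(prices)
iqr_flagged = iqr_outliers(prices)
```

On the larger sample both rules flag the mansion. On the nine-point sample only the IQR rule does, which is one reason the two methods are worth comparing on small datasets.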

Q 11. Explain your experience with data modeling techniques.

I have extensive experience with various data modeling techniques, including Entity-Relationship Diagrams (ERDs), star schemas, snowflake schemas, and dimensional modeling. I’m proficient in using tools like Erwin and PowerDesigner to create and manage data models. My experience spans both relational and NoSQL databases.

In a recent project involving customer relationship management (CRM) data, I utilized ERDs to design a relational database schema. The ERD clearly illustrated the entities (customers, products, orders, etc.) and their relationships, ensuring a well-structured and efficient database. I carefully considered primary and foreign keys to enforce data integrity and facilitate efficient data retrieval.

For a large-scale data warehousing project, I employed a star schema to organize data from diverse sources. This involved identifying fact tables (like sales transactions) and associated dimension tables (customers, products, time). The star schema structure simplified querying and analysis by making relationships between tables clear. I utilized a combination of top-down and bottom-up approaches to accommodate various data sources, ensuring the data model was both robust and scalable.

I am also experienced in using NoSQL database modeling approaches such as document, key-value and graph models when the data doesn’t fit into a relational schema. This experience allows me to choose the best data model depending on project requirements and data characteristics.

Q 12. Describe a time you had to deal with inconsistent or conflicting data sources.

In a previous project involving integrating data from multiple marketing platforms (Google Ads, Facebook Ads, and email marketing), I encountered significant inconsistencies and conflicts. Data formats varied widely, with different platforms using different naming conventions and data types for the same metrics (e.g., campaign IDs, dates, and conversion values).

My approach involved a multi-step process:

- Data Profiling: I first profiled each data source to understand its structure, data types, and potential inconsistencies. This involved identifying missing values, data type mismatches, and outliers.

- Data Cleaning: I implemented data cleaning procedures to standardize formats, handle missing values (using imputation techniques), and resolve data type conflicts. This involved writing custom scripts using Python and SQL.

- Data Transformation: I created a standardized data dictionary to ensure consistent naming conventions across all sources. I then transformed the data from each platform into this standard format using ETL (Extract, Transform, Load) processes.

- Data Reconciliation: For conflicting data points (e.g., different conversion counts for the same campaign across platforms), I used reconciliation rules based on data quality assessment to prioritize sources based on their reliability.

Through this structured approach, I successfully integrated the data into a single, consistent dataset suitable for analysis. The result was a unified view of marketing performance, allowing for better insights and more effective campaign optimization.

Q 13. How familiar are you with different database management systems (e.g., MySQL, PostgreSQL, MongoDB)?

I have significant experience with various database management systems. My expertise includes relational databases like MySQL and PostgreSQL, and NoSQL databases like MongoDB. I’m proficient in writing SQL queries for data retrieval and manipulation in relational databases, and I’m comfortable using the respective query languages and APIs for NoSQL databases.

MySQL: I’ve used MySQL extensively for web application development, leveraging its scalability and ease of use for managing moderately sized datasets. I’m familiar with its various storage engines and optimization techniques.

PostgreSQL: My experience with PostgreSQL extends to projects requiring advanced features like advanced data types, spatial functions, and complex query processing. I find its robust features and compliance with SQL standards particularly valuable for large and complex datasets.

MongoDB: I’ve used MongoDB in projects where schema flexibility was paramount. I’ve leveraged its document-oriented structure to efficiently manage semi-structured and unstructured data, often for applications with high volume and velocity data ingestion requirements.

My selection of a specific DBMS depends on the project’s requirements, including data volume, data structure, query patterns, scalability needs, and overall cost-benefit analysis. This experience allows me to make informed decisions about database technology based on project constraints.

Q 14. What are some common statistical methods you use in data analysis?

My data analysis toolkit includes a wide range of statistical methods, chosen based on the data characteristics and the research question. These commonly include:

- Descriptive Statistics: Mean, median, mode, standard deviation, variance, percentiles – to summarize and describe data.

- Inferential Statistics: Hypothesis testing (t-tests, ANOVA, chi-square tests), regression analysis (linear, logistic, multiple), correlation analysis – to draw conclusions about a population based on sample data.

- Regression Analysis: To model the relationship between a dependent variable and one or more independent variables. I’m proficient in both linear and non-linear regression techniques, using methods like ordinary least squares (OLS) and generalized linear models (GLMs).

- Time Series Analysis: Methods like ARIMA, exponential smoothing, to analyze data collected over time. I use this for forecasting and identifying trends.

- Clustering: K-means, hierarchical clustering, DBSCAN – to group similar data points together. I’ve used this for customer segmentation and anomaly detection.

- Dimensionality Reduction: Principal Component Analysis (PCA), t-SNE – to reduce the number of variables while retaining important information.

In addition to these, I regularly utilize statistical software packages like R and Python (with libraries like Scikit-learn, Statsmodels, Pandas) to perform these analyses and create visualizations to communicate findings effectively.

Q 15. How do you determine the appropriate statistical test for a given hypothesis?

Choosing the right statistical test hinges on several factors: the question your hypothesis asks (a difference in means, a difference in proportions, or a relationship between variables), the type of data you have (categorical, continuous, etc.), whether the samples are independent or paired, and whether your data meets the assumptions of specific tests. Think of it like choosing the right tool for a job: a hammer won’t work for screwing in a screw.

For comparing means: If you have two independent groups and your data is normally distributed, a t-test is appropriate. If you have more than two groups, an ANOVA (Analysis of Variance) is used. If your data isn’t normally distributed, consider non-parametric alternatives like the Mann-Whitney U test (for two groups) or the Kruskal-Wallis test (for more than two groups).

For comparing proportions: A chi-squared test is commonly used to compare proportions between groups. For example, determining if there’s a significant difference in the click-through rates of two different ad designs.

For examining relationships: Correlation analysis (Pearson’s r for linear relationships) helps determine the strength and direction of the association between two continuous variables. Regression analysis predicts the value of one variable based on the value of one or more other variables.

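Example: Let’s say we hypothesize that a new marketing campaign will increase website traffic. We can conduct a t-test to compare average daily traffic before and after the campaign launch (a paired t-test if the same units, such as matched weekdays, are measured in both periods, and assuming the data meets the test’s assumptions). If the p-value is below our significance level (e.g., 0.05), we’d reject the null hypothesis of no change in traffic. Because a before-and-after comparison cannot rule out seasonality or other concurrent changes, a significant result supports, but does not by itself prove, that the campaign was effective.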

Q 16. Explain your experience with data governance and compliance regulations.

My experience with data governance encompasses establishing data quality standards, defining data ownership and access control, and implementing data lineage tracking. I’ve worked with organizations to ensure compliance with regulations like GDPR (General Data Protection Regulation) and CCPA (California Consumer Privacy Act). This involves developing and implementing data retention policies, anonymization techniques, and data security protocols.

In a previous role, I was instrumental in leading our team’s effort to achieve GDPR compliance. This involved creating a comprehensive data map, identifying sensitive data points, implementing data subject access requests (DSAR) procedures, and conducting regular data privacy impact assessments (DPIAs). We utilized data masking and encryption techniques to protect sensitive information and documented all processes meticulously to ensure auditability.

I’m familiar with various compliance frameworks, and my approach always prioritizes proactive risk management, data minimization, and transparency. I believe strong data governance not only mitigates legal and reputational risks but also significantly enhances the trustworthiness and value of our data assets.

Q 17. Describe your experience with big data technologies (e.g., Hadoop, Spark).

I have extensive experience with big data technologies, particularly Hadoop and Spark. I’ve used Hadoop’s distributed file system (HDFS) to store massive datasets that exceed the capacity of traditional databases, and MapReduce to process them in parallel within the Hadoop ecosystem. My experience also includes leveraging Spark’s in-memory processing capabilities for significantly faster data analysis compared to Hadoop’s MapReduce paradigm. I’ve used Spark SQL for querying large datasets and Spark MLlib for building machine learning models on big data.

In a project involving customer transaction data exceeding 10 terabytes, I utilized Spark to perform real-time fraud detection. Spark’s speed and scalability were crucial for processing the vast volume of data and identifying fraudulent transactions quickly.

Beyond Hadoop and Spark, I have familiarity with other big data tools like Hive, Pig, and Kafka, allowing me to adapt to various project needs and technological landscapes.

Q 18. How do you ensure data security and privacy in your work?

Data security and privacy are paramount in my work. I employ a multi-layered approach encompassing technical, procedural, and organizational measures. My strategies include:

- Data encryption: Employing encryption both at rest and in transit to protect data from unauthorized access.

- Access control: Implementing strict access control measures, including role-based access control (RBAC) to limit access to sensitive data based on individual roles and responsibilities.

- Data masking and anonymization: Using techniques to protect sensitive data by replacing it with substitute values while preserving data utility for analysis.

- Regular security audits and penetration testing: Conducting regular security assessments to identify and mitigate vulnerabilities.

- Data loss prevention (DLP) tools: Utilizing DLP solutions to monitor and prevent sensitive data from leaving the organization’s control.

- Compliance with regulations: Ensuring adherence to relevant data privacy regulations, such as GDPR and CCPA.

I believe a proactive and comprehensive approach, coupled with continuous monitoring and improvement, is vital for maintaining the confidentiality, integrity, and availability of data.
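
As one small illustration of the masking item above, the sketch below replaces identifiers with keyed hashes and partially masks email addresses using Python's standard library. The function names and the key are hypothetical, and a real key would come from a secrets manager. Keyed hashing is pseudonymization, not full anonymization, because anyone holding the key can re-link values.

```python
import hashlib
import hmac


def pseudonymize(value, secret_key):
    """Replace a value with a keyed SHA-256 digest (same input, same token)."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_email(email):
    """Keep the first character and the domain, hide the rest of the local part."""
    local, separator, domain = email.partition("@")
    if not separator or not local:
        return "***"  # not a usable address, so reveal nothing
    return local[0] + "***@" + domain


# Placeholder key for illustration only; never hard-code a real key.
DEMO_KEY = b"replace-with-a-managed-secret"

token_a = pseudonymize("customer-1001", DEMO_KEY)
token_b = pseudonymize("customer-1002", DEMO_KEY)
masked = mask_email("jane.doe@example.com")
```

Because the same input always maps to the same token, pseudonymized IDs can still be joined and counted in analysis without exposing the original values.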

Q 19. What is your experience with data warehousing and business intelligence tools?

I have significant experience with data warehousing and business intelligence (BI) tools. I’ve designed and implemented data warehouses using dimensional modeling techniques, optimizing data structures for efficient query performance and reporting. I’m proficient in using ETL (Extract, Transform, Load) processes to move data from various sources into the data warehouse. My experience includes working with various BI tools, including Tableau and Power BI, to create interactive dashboards and reports that provide actionable insights to business stakeholders.

In one project, I designed a data warehouse to consolidate sales data from multiple regional offices. This involved developing an ETL pipeline using Informatica PowerCenter, creating a star schema, and building a comprehensive set of reports and dashboards in Tableau that helped the sales team identify trends and optimize their sales strategies.

Q 20. How do you communicate complex data insights to non-technical stakeholders?

Communicating complex data insights to non-technical stakeholders requires a clear, concise, and engaging approach. I avoid technical jargon and utilize visualizations, storytelling, and analogies to make the information readily understandable.

- Visualizations: Charts, graphs, and dashboards effectively communicate complex relationships in a visual manner. I always select the most appropriate chart type based on the data and the message I wish to convey.

- Storytelling: Framing the data insights within a narrative makes them more engaging and memorable. I focus on the ‘so what?’ – explaining the implications of the data and how it relates to the business objectives.

- Analogies and real-world examples: Using simple analogies and relatable scenarios helps translate complex concepts into easily digestible information.

- Interactive presentations: I avoid passive presentations; instead, I encourage questions and discussions to ensure everyone understands and can connect with the data.

For instance, instead of saying “the conversion rate increased by 15%,” I might say, “Our new marketing campaign led to 15% more customers completing their purchases, resulting in a $X increase in revenue.” This makes the data more tangible and relevant for non-technical audiences.

Q 21. Explain your experience with A/B testing and experiment design.

A/B testing, or split testing, is a crucial part of my data-driven decision-making process. I have experience in designing experiments, selecting appropriate sample sizes, and analyzing results to understand the impact of different variations. I’m familiar with various A/B testing platforms and methodologies.

My approach to A/B testing involves clearly defining the hypothesis, identifying key metrics, and choosing a sample size large enough to detect the minimum effect of interest with adequate statistical power. This is followed by careful implementation, meticulous tracking, and rigorous analysis of the results. I use statistical significance tests to determine whether the differences between the variations are larger than chance alone would explain. I also take into account factors such as the duration of the test, potential confounding variables, and the overall business context.

In a previous project, we used A/B testing to compare two different website landing page designs. We ran the test for two weeks, carefully tracking conversion rates and other key metrics. Using statistical analysis, we determined that one design significantly outperformed the other and implemented the winning design across the website.
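
One common way to analyze a landing page test like this is a two-proportion z-test on conversion rates, sketched below with Python's standard library. The visitor and conversion counts are invented for illustration and are not the figures from the project described above; the test also assumes independent visitors and samples large enough for the normal approximation.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both designs convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: design A converts 200 of 5000, design B 260 of 5000.
z_stat, p_value = two_proportion_z_test(200, 5000, 260, 5000)
significant = p_value < 0.05
```

With these made-up counts the difference is significant at the 0.05 level. The significance level and the sample size should both be fixed before the test starts, since checking results repeatedly and stopping early inflates the false positive rate.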

Q 22. What are your preferred programming languages for data analysis?

My preferred programming languages for data analysis are Python and SQL. Python’s versatility, coupled with its extensive libraries like Pandas, NumPy, and Scikit-learn, makes it ideal for data manipulation, cleaning, exploration, and model building. Pandas, for example, provides powerful data structures like DataFrames that greatly simplify data wrangling. NumPy offers efficient numerical computation capabilities. Scikit-learn is a comprehensive machine learning library. SQL, on the other hand, is essential for efficient data retrieval and manipulation within relational databases. I frequently use SQL to query large datasets, perform aggregations, and join tables to uncover insights. The combination of Python and SQL allows me to effectively handle the entire data analysis lifecycle, from data extraction to model deployment.

For instance, in a recent project involving customer churn prediction, I used SQL to extract relevant customer data from our database, then leveraged Python with Pandas and Scikit-learn to preprocess the data, build a logistic regression model, and finally evaluate its performance.

Q 23. Describe your experience with machine learning algorithms and their applications.

I have extensive experience with a variety of machine learning algorithms, including supervised learning techniques like linear regression, logistic regression, support vector machines (SVMs), decision trees, and random forests; unsupervised learning methods such as k-means clustering and principal component analysis (PCA); and deep learning techniques like neural networks, particularly convolutional neural networks (CNNs) for image data and recurrent neural networks (RNNs) for sequential data.

My experience spans various applications. For example, I used linear regression to model the relationship between advertising spend and sales revenue, logistic regression for customer churn prediction, and random forests for fraud detection. In an image recognition project, I utilized CNNs to classify different types of medical images with high accuracy. The choice of algorithm always depends on the specific problem, the nature of the data, and the desired outcome.

Understanding the strengths and weaknesses of each algorithm is crucial. For instance, while linear regression is simple and interpretable, it assumes a linear relationship between variables, which may not always hold true. Decision trees are easy to understand but prone to overfitting unless their depth is constrained or they are pruned. Choosing the right algorithm requires careful consideration and often involves experimentation and comparison of various models.
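The linearity assumption can be illustrated with a small ordinary least squares fit written from scratch. This is a sketch on made-up data: the helper names are hypothetical, and a real project would typically use a library such as Scikit-learn instead.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def r_squared(xs, ys, slope, intercept):
    """Share of the variance in ys explained by the fitted line."""
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - ss_res / ss_tot

xs = [-3, -2, -1, 0, 1, 2, 3]
linear_ys = [2 * x + 1 for x in xs]   # truly linear relationship
curved_ys = [x ** 2 for x in xs]      # symmetric, non-linear relationship

for label, ys in [("linear", linear_ys), ("curved", curved_ys)]:
    slope, intercept = fit_line(xs, ys)
    print(label, round(r_squared(xs, ys, slope, intercept), 3))
```

On the linear data the line explains all of the variance, while on the symmetric curved data the best straight line is flat and explains none of it, even though the two variables are perfectly related.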

Q 24. How do you approach a new data analysis project?

My approach to a new data analysis project follows a structured methodology, often mirroring the CRISP-DM (Cross-Industry Standard Process for Data Mining) framework. This involves six key phases:

- Business Understanding: Clearly defining the project goals, objectives, and success metrics. This involves collaborating closely with stakeholders to understand their needs and expectations.

- Data Understanding: Exploring the available data, assessing its quality, identifying potential issues like missing values or outliers, and gaining a preliminary understanding of the data’s characteristics.

- Data Preparation: Cleaning, transforming, and preparing the data for analysis. This includes handling missing values, dealing with outliers, and potentially feature engineering to create new variables.

- Modeling: Selecting and applying appropriate statistical models or machine learning algorithms to analyze the data and achieve the project’s objectives. This phase involves model selection, training, and validation.

- Evaluation: Evaluating the performance of the chosen model, assessing its accuracy and reliability, and comparing it to alternative approaches.

- Deployment: Deploying the model and making the insights derived from the analysis actionable. This often involves communicating the results to stakeholders through clear visualizations and reports.

Throughout the entire process, thorough documentation and version control are crucial for maintainability and reproducibility.
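As an illustration of the Data Preparation phase, the sketch below fills missing values with the median and flags outliers using the interquartile range (IQR) rule. Both are just one reasonable choice among several; the function names, the 1.5 multiplier, and the sample values are assumptions for the example.

```python
import statistics

def impute_median(values):
    """Replace missing entries (None) with the median of the observed values."""
    observed = [v for v in values if v is not None]
    median = statistics.median(observed)
    return [median if v is None else v for v in values]

def iqr_outliers(values, k=1.5):
    """Return values lying more than k * IQR outside the middle 50% of the data."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]

# Hypothetical measurements with one missing value and one suspicious spike.
raw = [10, 12, None, 11, 13, 95, 12]
cleaned = impute_median(raw)
print(cleaned)
print(iqr_outliers(cleaned))
```

Whether a flagged value is removed, capped, or kept is a judgment call that belongs back in the Business Understanding and Data Understanding phases.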

Q 25. How do you handle conflicting priorities in a data analysis project?

Handling conflicting priorities in a data analysis project requires careful planning, clear communication, and effective prioritization. I typically address this using a combination of techniques:

- Prioritization Framework: I use a framework such as the MoSCoW method (Must have, Should have, Could have, Won’t have) to rank project requirements by importance. This helps to focus on the most critical aspects first.

- Stakeholder Alignment: I facilitate discussions with stakeholders to understand their priorities and find common ground. Sometimes, compromises are necessary, and transparently communicating the trade-offs involved is key.

- Scope Management: If necessary, I adjust the project scope to manage conflicting priorities. This might involve breaking down the project into smaller, more manageable phases or postponing less critical tasks.

- Time Management: Effective time management techniques, like using project management tools and regularly tracking progress, ensure that tasks are completed efficiently and within allocated timelines.

Open and honest communication is paramount. Keeping stakeholders informed about progress, challenges, and any necessary adjustments helps to manage expectations and prevent conflicts from escalating.

Q 26. Describe your experience with data versioning and control.

Data versioning and control are crucial for ensuring data integrity, reproducibility, and collaboration. My experience involves using Git for version control of code, along with tools like DVC (Data Version Control) for managing large datasets. Git allows me to track changes in my code, revert to previous versions if necessary, and collaborate effectively with team members. DVC keeps large files out of the Git repository: it commits small metadata files that record a hash of each dataset version, while the data itself lives in a local cache or in remote storage.

For example, in a recent project involving a large time-series dataset, I used DVC to manage the data, tracking changes to the dataset and ensuring that every analysis was reproducible. This was crucial for auditing purposes and for facilitating collaboration among team members.
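Versioning tools can tell whether a dataset has changed by hashing its contents. The sketch below shows that general idea with the standard library; it is not DVC's implementation, and the function name, the choice of SHA-256, and the sample data are assumptions made for the example.

```python
import hashlib
import io

def fingerprint(stream, chunk_size=65536):
    """Hash a binary stream in chunks so large files never load fully into memory."""
    digest = hashlib.sha256()
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
    return digest.hexdigest()

# Hypothetical dataset versions held in memory; real use would open files in "rb" mode.
v1 = b"timestamp,value\n2024-01-01,10\n2024-01-02,12\n"
v2 = b"timestamp,value\n2024-01-01,10\n2024-01-02,13\n"

print(fingerprint(io.BytesIO(v1)))
print(fingerprint(io.BytesIO(v2)))
```

Recording the fingerprint alongside each analysis run makes it possible to confirm later exactly which version of the data produced a given result.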

I also utilize data catalogs and metadata management tools to document data lineage, schema, and quality information. This helps to maintain a comprehensive understanding of the data and facilitates efficient data discovery and reuse.

Q 27. What is your approach to performance tuning of database queries?

Performance tuning of database queries is essential for ensuring efficient data analysis. My approach involves a multi-pronged strategy:

- Query Optimization: This involves analyzing the query execution plan to identify bottlenecks. Techniques include using appropriate indexes, optimizing joins, and rewriting queries to reduce complexity.

- Indexing: Creating appropriate indexes on frequently queried columns significantly improves query performance. However, over-indexing can negatively impact write operations, so careful consideration is needed.

- Database Design: A well-designed database schema can significantly improve query performance. This includes normalizing the schema to reduce redundancy, choosing appropriate data types, and, for read-heavy analytical workloads, denormalizing selectively where joins become the bottleneck.

- Caching: Utilizing caching mechanisms to store frequently accessed data in memory can dramatically improve query response time.

- Hardware Optimization: In some cases, hardware upgrades, such as increasing RAM or upgrading to faster storage, may be necessary to improve overall database performance.

Tools like SQL Server Profiler (or the equivalent for other database systems) help monitor query performance and identify areas for improvement. I also frequently use explain plans (for example, the output of an EXPLAIN statement) to understand how the database engine executes a query and to identify opportunities for optimization.
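The effect of an index on an execution plan can be seen with a small experiment. The sketch below uses SQLite through Python's `sqlite3` module and its `EXPLAIN QUERY PLAN` statement; the `orders` table, the index name, and the generated rows are hypothetical, and other database systems expose plans through their own syntax and tooling.

```python
import sqlite3

def query_plan(conn, sql, params=()):
    """Return SQLite's plan for a query as a single readable string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # The last column of each plan row holds the human-readable step description.
    return " | ".join(row[3] for row in rows)

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (order_id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, amount) VALUES (?, ?)",
    [(i % 100, float(i)) for i in range(1000)],
)

sql = "SELECT * FROM orders WHERE customer_id = ?"

# Without an index, the engine has to scan the whole table.
plan_before = query_plan(conn, sql, (42,))

# With an index on the filtered column, it can seek directly to matching rows.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = query_plan(conn, sql, (42,))

print("before:", plan_before)
print("after: ", plan_after)
```

Comparing the plan before and after a change is a quick way to confirm that the optimizer actually uses a new index, rather than assuming it does.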

Q 28. Explain how you stay up-to-date with the latest trends and technologies in data management and analysis.

Staying up-to-date with the latest trends and technologies in data management and analysis is crucial in this rapidly evolving field. I employ a multi-faceted approach:

- Online Courses and Tutorials: Platforms like Coursera, edX, Udacity, and DataCamp offer excellent courses on various aspects of data science and data management.

- Conferences and Workshops: Attending industry conferences and workshops provides invaluable opportunities to learn about new technologies and network with other professionals.

- Professional Networking: Engaging with the data science community through online forums, LinkedIn groups, and local meetups keeps me abreast of the latest developments and best practices.

- Research Papers and Publications: Reading research papers and publications in reputable journals and conferences allows me to stay informed about cutting-edge advancements in the field.

- Industry Blogs and Newsletters: Subscribing to newsletters and following industry blogs keeps me updated on current trends and emerging technologies.

Continuous learning is vital. I allocate dedicated time each week for learning new skills and deepening my existing knowledge. This ensures I remain at the forefront of innovation in data management and analysis.

Key Topics to Learn for Data Management and Analysis Skills Interview

- Data Cleaning and Preprocessing: Understanding techniques like handling missing values, outlier detection, and data transformation is crucial. Practical application: Preparing a messy dataset for analysis, ensuring data quality and reliability.

- Data Wrangling with SQL: Mastering SQL queries for data extraction, manipulation, and aggregation is essential. Practical application: Efficiently querying large databases to extract specific information for analysis.

- Exploratory Data Analysis (EDA): Learn to use visualization techniques and statistical methods to understand data patterns and distributions. Practical application: Identifying trends, anomalies, and relationships within a dataset to inform analysis.

- Data Visualization: Develop skills in creating effective charts and graphs using tools like Tableau or Python libraries (Matplotlib, Seaborn). Practical application: Communicating insights from data analysis clearly and concisely to diverse audiences.

- Statistical Analysis: Understand hypothesis testing, regression analysis, and other statistical methods relevant to your field. Practical application: Drawing statistically sound conclusions from data analysis and supporting business decisions.

- Data Modeling and Database Design: Familiarize yourself with relational databases, data warehousing concepts, and dimensional modeling. Practical application: Designing efficient and scalable database structures to support analysis.

- Big Data Technologies (Optional): Depending on the role, familiarity with Hadoop, Spark, or cloud-based data platforms may be beneficial. Practical application: Processing and analyzing extremely large datasets efficiently.

- Problem-Solving & Communication: Practice articulating your analytical process and explaining complex findings in a clear and concise manner. Practical application: Presenting your analysis and recommendations to stakeholders effectively.

Next Steps

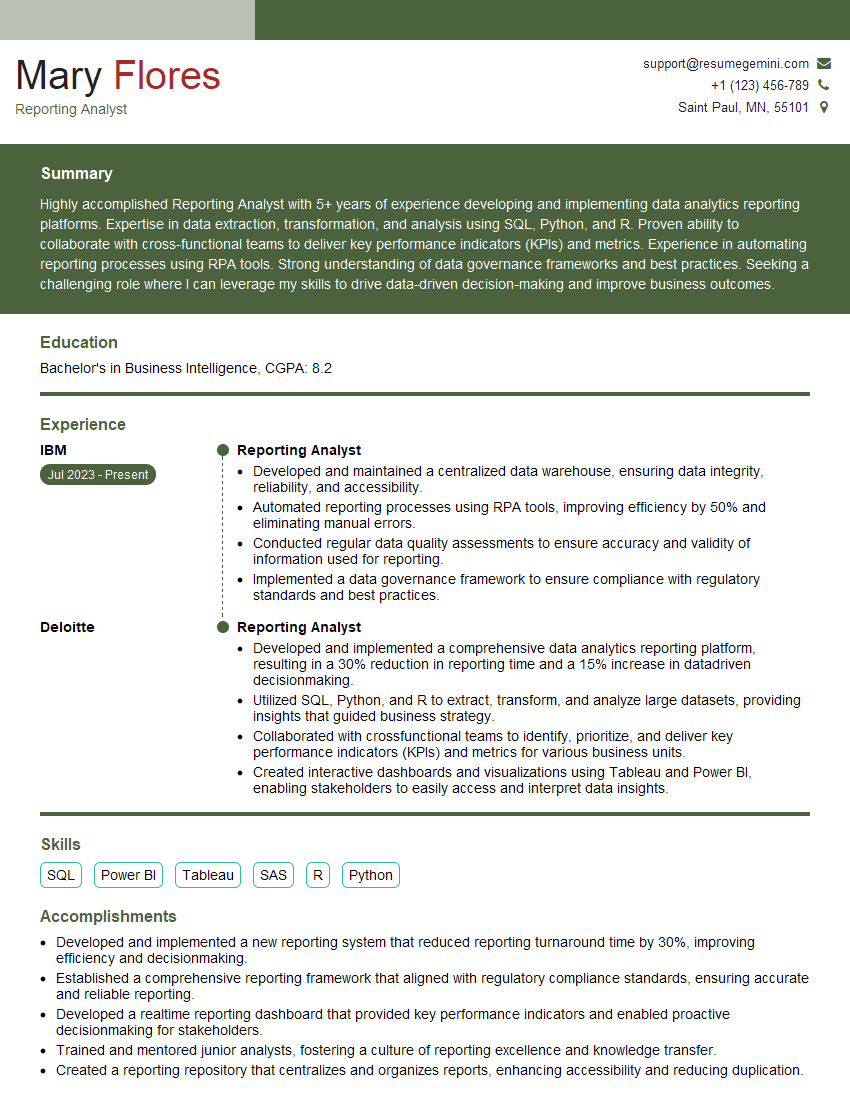

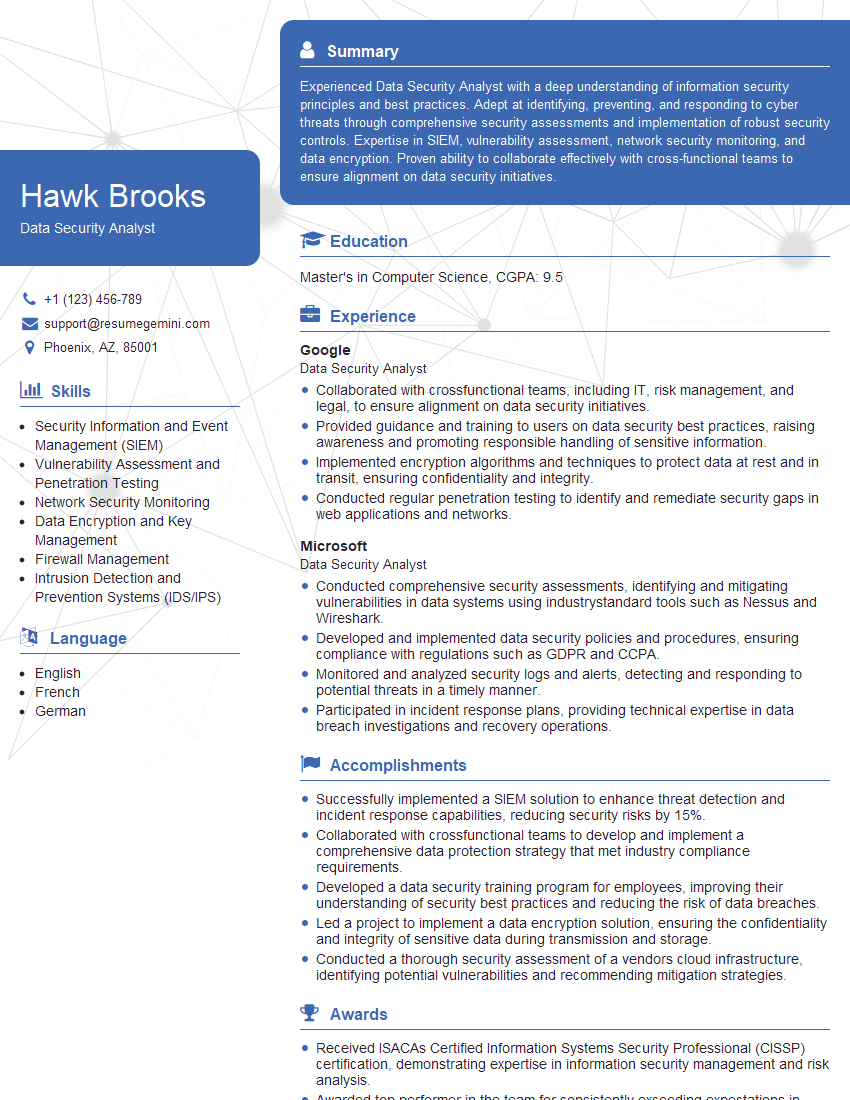

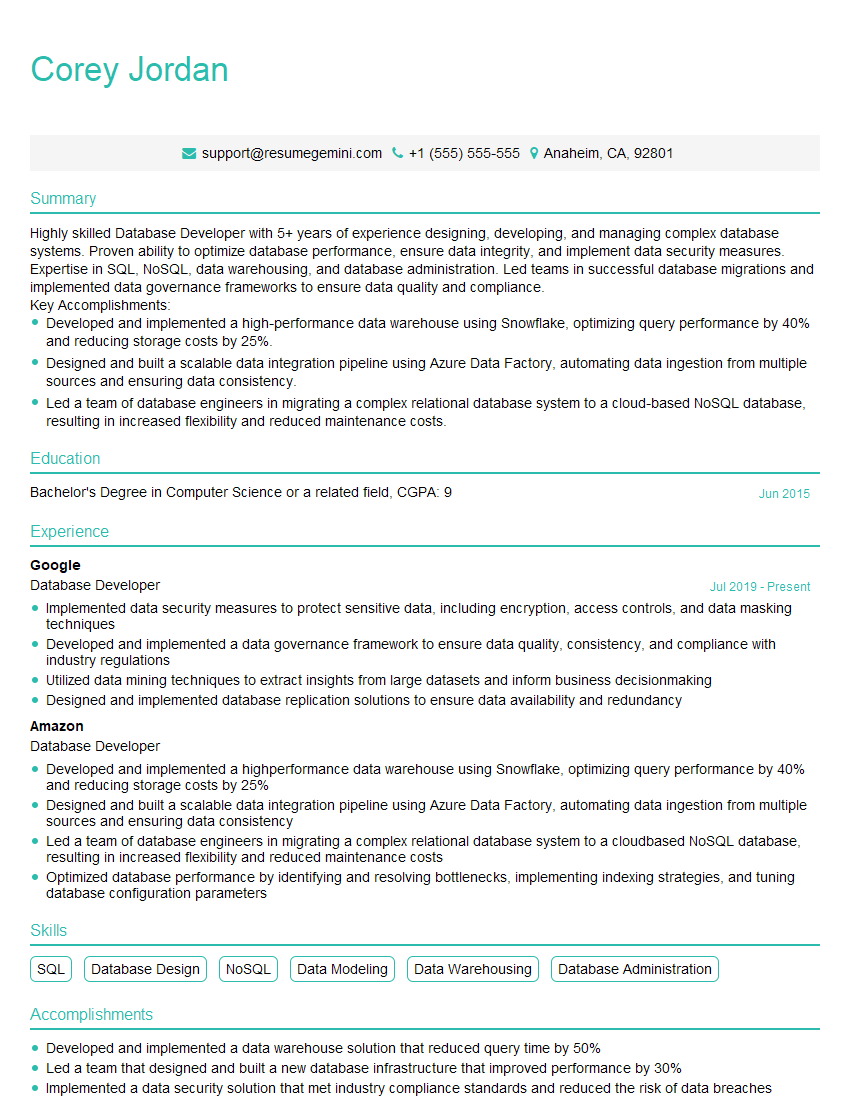

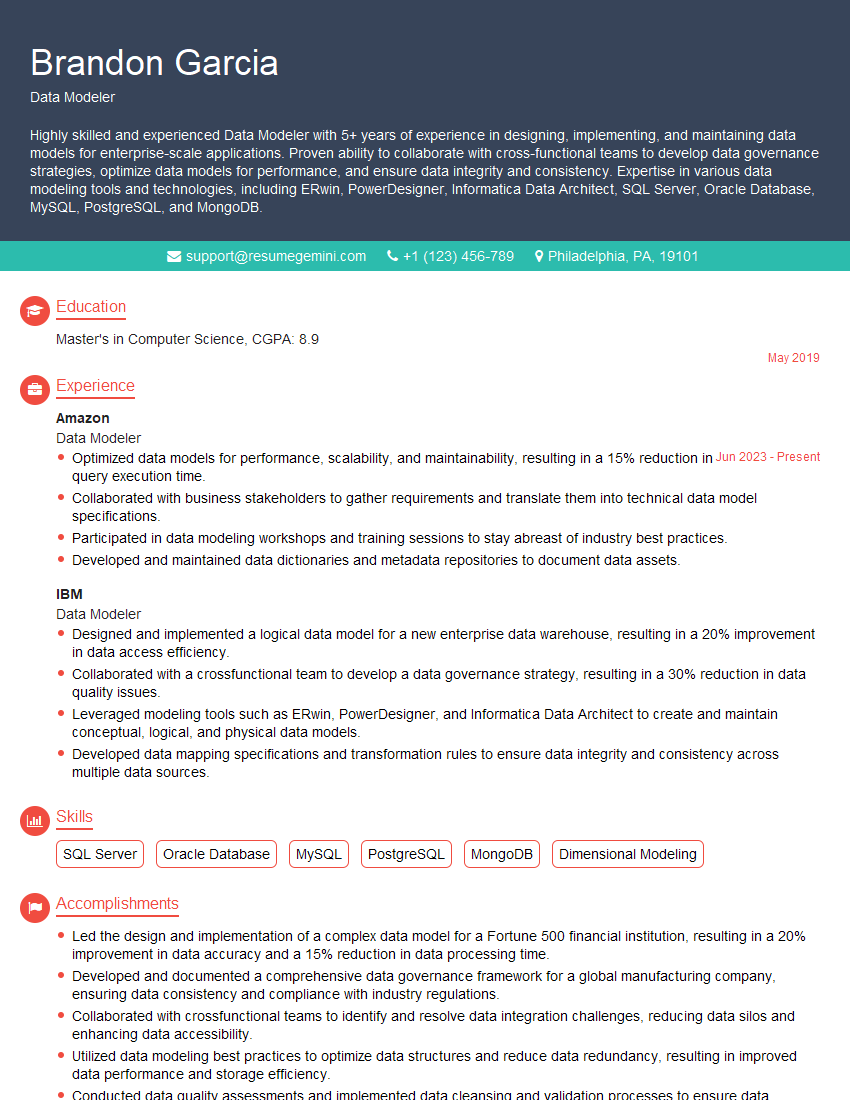

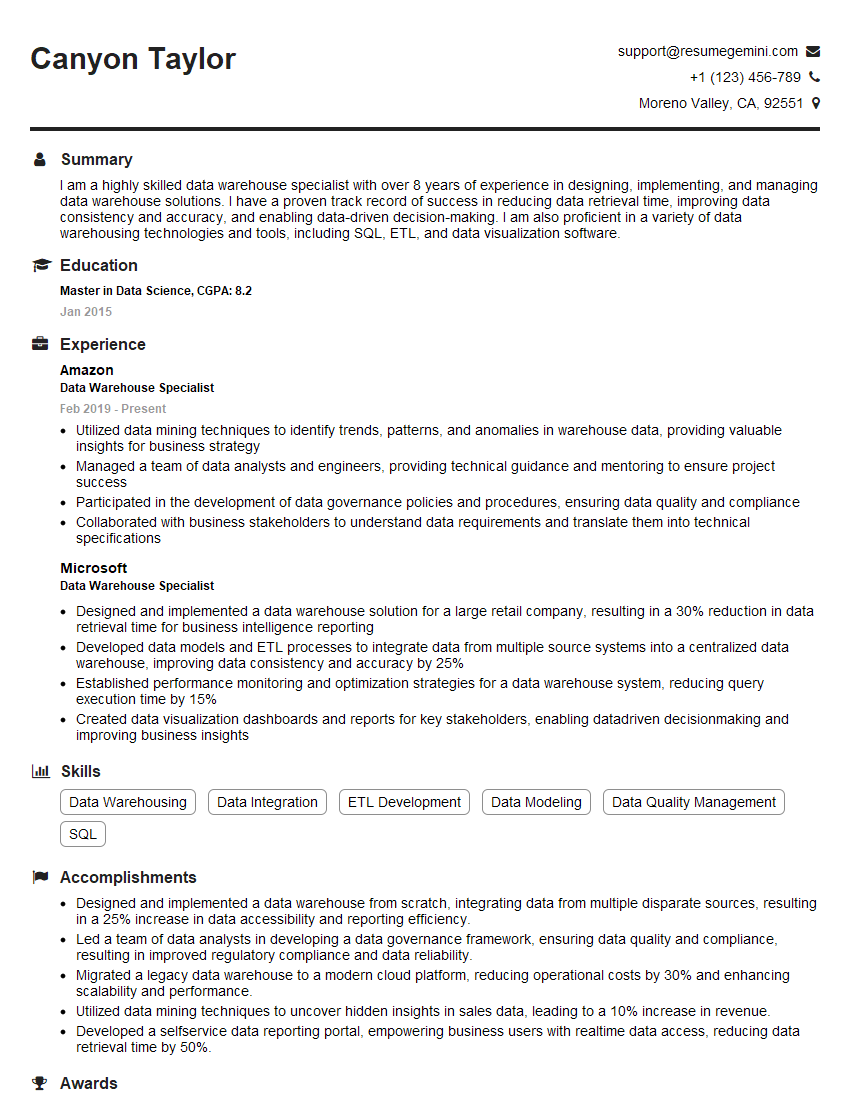

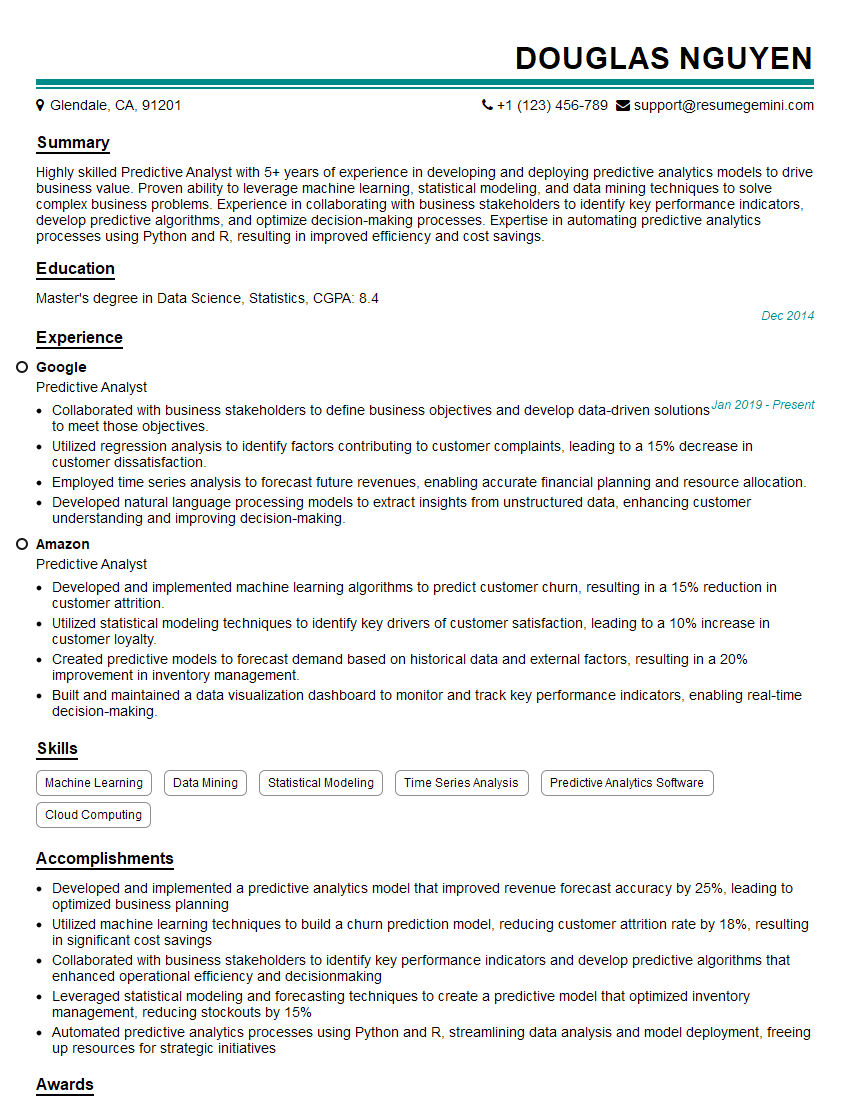

Mastering Data Management and Analysis Skills is paramount for career advancement in today’s data-driven world. These skills are highly sought after, opening doors to exciting opportunities and higher earning potential. To maximize your job prospects, invest time in creating a strong, ATS-friendly resume that effectively showcases your expertise. ResumeGemini is a trusted resource to help you build a professional and impactful resume. We provide examples of resumes tailored to Data Management and Analysis Skills to guide you. Take advantage of these resources to present yourself as a compelling candidate.
