The thought of an interview can be nerve-wracking, but the right preparation can make all the difference. Explore this comprehensive guide to Diagnostic and Analytical Skills interview questions and gain the confidence you need to showcase your abilities and secure the role.

Questions Asked in Diagnostic and Analytical Skills Interview

Q 1. Describe your approach to solving a complex problem with limited data.

When tackling a complex problem with limited data, my approach prioritizes a thorough understanding of the problem’s context and the available data’s limitations. I start by clearly defining the problem and the desired outcome. Then, I employ a multi-pronged strategy.

- Exploratory Data Analysis (EDA): I begin with a comprehensive EDA to visualize the data, identify patterns, and unearth potential insights even with limited observations. This involves using descriptive statistics, creating visualizations (histograms, scatter plots, box plots), and checking for data quality issues like missing values or outliers.

- Assumption Testing and Model Selection: Given limited data, I carefully consider the assumptions of any statistical models I might employ. Simpler models with fewer parameters are often preferred to avoid overfitting. Techniques like bootstrapping or cross-validation are crucial to assess model robustness.

- Data Augmentation (where appropriate): If feasible and ethically sound, I explore methods to augment the data, such as using synthetic data generation techniques or carefully integrating external, related datasets. However, this step demands rigorous validation to prevent introducing bias.

- Sensitivity Analysis: I perform sensitivity analysis to understand how the results change based on variations in assumptions or minor changes in the limited data. This helps in quantifying the uncertainty associated with the conclusions drawn.

- Expert Consultation: Collaborating with domain experts is essential. Their knowledge can fill gaps in the data and inform the interpretation of findings, even with limited quantitative evidence.

For example, imagine predicting customer churn with only a small sample of customer data. EDA might reveal a correlation between early cancellation and specific customer service interactions. A simple logistic regression model, coupled with bootstrapping to assess uncertainty, could then offer a reasonable prediction, acknowledging the limitations of the data sample.
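
To make the bootstrapping step concrete, here is a minimal sketch in Python using only the standard library. It bootstraps a simple churn rate rather than a full logistic regression, and the sample data and the `bootstrap_ci` helper are hypothetical, chosen only for illustration.

```python
import random
import statistics

def bootstrap_ci(values, stat=statistics.mean, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for a statistic."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n = len(values)
    estimates = []
    for _ in range(n_resamples):
        # Resample with replacement, same size as the original sample
        resample = [values[rng.randrange(n)] for _ in range(n)]
        estimates.append(stat(resample))
    estimates.sort()
    lower = estimates[int((alpha / 2) * n_resamples)]
    upper = estimates[int((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper

# Hypothetical sample: 1 = churned, 0 = retained (30 customers, 9 churned)
churned = [1] * 9 + [0] * 21
point_estimate = statistics.mean(churned)
low, high = bootstrap_ci(churned)
print(f"churn rate {point_estimate:.2f}, 95% CI roughly [{low:.2f}, {high:.2f}]")
```

With only 30 observations the interval is wide, which is exactly the uncertainty I would want to report alongside the point estimate.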

Q 2. Explain a time you identified a critical flaw in a dataset or analysis.

In a previous project analyzing website traffic data, I discovered a significant flaw in the dataset. The data included timestamps showing a large volume of traffic originating from a single IP address at odd hours. This raised concerns about data integrity. Initially, the analysis suggested exceptionally high traffic from a specific geographic location. However, a closer look using IP address geolocation and traffic pattern analysis revealed that the IP address was associated with a web scraper, not legitimate website visitors.

This discovery was crucial because it would have significantly skewed key performance indicators (KPIs) like bounce rate and conversion rates. I addressed this by filtering out the identified bot traffic, leading to a more accurate and reliable representation of genuine user behavior. This highlighted the importance of data validation and cleaning in any analytical process.
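
A filter of this kind could look roughly like the sketch below. The log format, the example IP addresses, and the 20% share threshold are all assumptions made for illustration; a real check would also look at user agents and request timing.

```python
from collections import Counter

def flag_heavy_ips(requests, max_share=0.2):
    """Return IPs whose share of all requests exceeds max_share."""
    counts = Counter(ip for ip, _ in requests)
    total = len(requests)
    return {ip for ip, n in counts.items() if n / total > max_share}

# Hypothetical log rows: (ip_address, hour_of_day)
log = [("203.0.113.7", 3)] * 60 + [("198.51.100.%d" % i, 14) for i in range(40)]

suspects = flag_heavy_ips(log)
clean = [row for row in log if row[0] not in suspects]
print(suspects, len(clean))
```

Flagged addresses are candidates for review, not automatic deletions: I would confirm the traffic pattern before excluding anything from the KPIs.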

Q 3. How do you prioritize tasks when facing multiple urgent analytical requests?

When faced with multiple urgent analytical requests, I use a prioritization framework that combines urgency, impact, and feasibility. I employ a matrix where tasks are evaluated on their urgency (high/medium/low) and their potential impact on business decisions (high/medium/low). Feasibility considers the resources required and my expertise.

- High Urgency, High Impact: These tasks are tackled immediately. Examples include critical error detection in a live system or an urgent request from senior management.

- High Urgency, Low Impact: These tasks are delegated if possible or prioritized after high-impact tasks. The urgency is addressed while minimizing the impact on strategic goals.

- Low Urgency, High Impact: These are important strategic tasks. I schedule these tasks strategically, ensuring sufficient time and resources are allocated for thorough analysis.

- Low Urgency, Low Impact: These are often deferred or removed from the immediate queue unless they support larger strategic objectives.

This matrix, combined with clear communication with stakeholders, ensures that the most critical requests are addressed first while managing expectations around less urgent tasks.
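
One way to turn the matrix into a sortable ranking is sketched below. The numeric scale, the tie-breaking order (impact before feasibility), and the example tasks are my own assumptions, not a standard formula.

```python
LEVELS = {"low": 1, "medium": 2, "high": 3}

def priority_key(task):
    """Sort key: urgency x impact first, then impact, then feasibility."""
    urgency = LEVELS[task["urgency"]]
    impact = LEVELS[task["impact"]]
    feasibility = LEVELS[task["feasibility"]]
    return (urgency * impact, impact, feasibility)

# Hypothetical request queue
tasks = [
    {"name": "dashboard tweak", "urgency": "low", "impact": "low", "feasibility": "high"},
    {"name": "ad hoc export", "urgency": "high", "impact": "low", "feasibility": "high"},
    {"name": "live system error", "urgency": "high", "impact": "high", "feasibility": "high"},
    {"name": "churn model refresh", "urgency": "low", "impact": "high", "feasibility": "medium"},
]

ranked = sorted(tasks, key=priority_key, reverse=True)
for task in ranked:
    print(task["name"], priority_key(task))
```

Breaking ties on impact means a strategic task outranks an urgent but low-impact one, which matches how I would usually delegate or defer the latter.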

Q 4. Describe your experience with statistical analysis techniques.

My experience encompasses a wide range of statistical techniques. I’m proficient in descriptive statistics for summarizing data (mean, median, standard deviation, percentiles), inferential statistics for drawing conclusions about populations (hypothesis testing, confidence intervals), and regression analysis for modeling relationships between variables.

- Regression Analysis: I use linear, logistic, and polynomial regression to model relationships and make predictions. I check the assumptions relevant to each model (for linear regression: linearity, normally distributed residuals, and homoscedasticity) and use appropriate diagnostic tools such as residual plots.

- Hypothesis Testing: I’m experienced in t-tests, ANOVA, and chi-square tests to determine statistical significance and draw conclusions about population parameters.

- Time Series Analysis: I’ve used ARIMA and exponential smoothing models for forecasting and analyzing time-dependent data. This is crucial when dealing with trends and seasonality.

- Clustering and Classification: I’ve applied techniques like k-means clustering and logistic regression for customer segmentation and classification problems.

I also have experience with more advanced techniques such as survival analysis, Bayesian statistics, and machine learning algorithms as needed. My approach always involves selecting the most appropriate technique based on the specific problem and the nature of the data.
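
As a small illustration of the exponential smoothing mentioned above, here is a sketch of simple (single) exponential smoothing in plain Python. The demand figures and the smoothing factor of 0.5 are made up, and this basic variant does not model trend or seasonality, for which Holt-Winters or ARIMA would be the usual next step.

```python
def exponential_smoothing(series, alpha):
    """Simple exponential smoothing: s_t = alpha * x_t + (1 - alpha) * s_{t-1}."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = [series[0]]  # initialise the level with the first observation
    for x in series[1:]:
        smoothed.append(alpha * x + (1 - alpha) * smoothed[-1])
    return smoothed

# Hypothetical weekly demand
demand = [10, 12, 14, 13, 15]
level = exponential_smoothing(demand, alpha=0.5)
forecast = level[-1]  # one-step-ahead forecast is the last smoothed level
print(level, forecast)
```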

Q 5. How do you ensure the accuracy and reliability of your analytical findings?

Ensuring accuracy and reliability is paramount. My approach follows these steps:

- Data Validation and Cleaning: I meticulously check for data quality issues like missing values, outliers, and inconsistencies. I use appropriate techniques to handle missing data (imputation or exclusion) and outlier detection methods.

- Robustness Checks: I use techniques like cross-validation to evaluate the performance of models and ensure they generalize well to unseen data. I also check for overfitting.

- Sensitivity Analysis: As mentioned earlier, I assess how sensitive the results are to changes in the data or assumptions.

- Peer Review and Documentation: I actively seek peer review of my analysis and findings. Detailed documentation of my methodology, data sources, and assumptions is crucial for transparency and reproducibility.

- Error Tracking and Correction: I implement processes for tracking errors, investigating their root cause, and correcting them. This is an iterative process to ensure continuous improvement in the accuracy of my work.

For instance, before presenting any findings, I would always perform a thorough review of my code, ensuring it is free of bugs and the data cleaning steps have been carefully executed. Documenting every step allows for easier debugging and ensures that the entire process is verifiable.

Q 6. What tools and technologies are you proficient in for data analysis?

I’m proficient in a range of tools and technologies for data analysis. My expertise includes:

- Programming Languages: Python (with libraries like Pandas, NumPy, Scikit-learn, Matplotlib, Seaborn), R

- Databases: SQL, NoSQL databases (e.g., MongoDB)

- Data Visualization Tools: Tableau, Power BI, Matplotlib, Seaborn

- Cloud Computing Platforms: AWS (Amazon Web Services), Google Cloud Platform (GCP), Azure

- Statistical Software: SPSS, SAS (though I prefer Python and R for their flexibility and open-source nature)

I adapt my tool selection to the specific project requirements. For example, I would use Python and its powerful libraries for large-scale data manipulation and machine learning tasks, while Tableau might be more suitable for creating interactive dashboards for presenting results to stakeholders.

Q 7. Explain your understanding of different types of biases in data analysis.

Understanding biases is critical for reliable data analysis. Biases can significantly distort results and lead to flawed conclusions. Here are some key types:

- Selection Bias: This occurs when the sample used for analysis isn’t representative of the population of interest. For example, surveying only online users to understand the preferences of the entire population would introduce selection bias.

- Confirmation Bias: This involves favoring information that confirms pre-existing beliefs and ignoring contradictory evidence. It’s crucial to maintain objectivity and avoid interpreting data to fit preconceived notions.

- Sampling Bias: A common form of selection bias that arises from how the sample is drawn. For instance, using a convenience sample (easily accessible individuals) rather than a random sample can lead to biased results.

- Measurement Bias: This happens when the method used to collect data systematically over- or under-estimates the true value. For example, poorly designed survey questions can introduce measurement bias.

- Reporting Bias: This refers to the selective reporting of data, often omitting results that don’t support a particular hypothesis. Transparency in reporting all findings, positive or negative, is crucial to avoid this bias.

Mitigating these biases requires careful planning of the data collection process, employing appropriate sampling techniques, using rigorous analytical methods, and critically evaluating the results with awareness of potential biases.

Q 8. How do you handle conflicting data sources or interpretations?

Conflicting data sources are a common challenge in diagnostics. My approach involves a systematic investigation to understand the discrepancies. First, I meticulously review the data sources, assessing their credibility, methodology, and potential biases. This includes examining data collection methods, sample sizes, and potential sources of error. For example, if I’m comparing data from two different hospital systems, I would check for differences in diagnostic criteria or patient populations.

Next, I use data visualization techniques (like scatter plots or histograms) to identify patterns and outliers. This visual inspection can reveal inconsistencies that might not be apparent through simple statistical analysis. I then employ statistical methods to quantify the level of disagreement between sources. This might involve calculating correlation coefficients or conducting hypothesis tests to assess whether the differences are statistically significant.

Finally, I reconcile the discrepancies by considering the strengths and weaknesses of each data source. Sometimes, one source might be more reliable than another, requiring a weighting scheme to give more importance to the more accurate information. In cases where the discrepancies are irreconcilable, I carefully document the conflict and discuss the limitations of the analyses, presenting the various interpretations transparently.
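
One possible weighting scheme is inverse-variance weighting, sketched below. It assumes the sources give independent, unbiased estimates of the same quantity with known standard errors; the two example estimates are hypothetical, and this is only one of several reasonable ways to weight sources.

```python
def inverse_variance_pool(estimates):
    """Pool (value, standard_error) pairs, weighting each by 1 / SE^2."""
    weights = [1 / se ** 2 for _, se in estimates]
    total = sum(weights)
    pooled = sum(w * value for w, (value, _) in zip(weights, estimates)) / total
    pooled_se = (1 / total) ** 0.5
    return pooled, pooled_se

# Hypothetical readmission rates from two hospital systems: (estimate, standard error)
sources = [(0.20, 0.01), (0.26, 0.03)]
pooled, pooled_se = inverse_variance_pool(sources)
print(f"pooled estimate {pooled:.3f} (SE {pooled_se:.4f})")
```

The pooled value sits closer to the more precise source. If the sources differ because of diagnostic criteria or patient mix rather than noise, pooling is not appropriate and the conflict should be reported instead.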

Q 9. Describe your process for identifying root causes of problems.

Identifying root causes requires a structured approach. I typically use a combination of techniques, including the ‘5 Whys’ methodology, fishbone diagrams (Ishikawa diagrams), and fault tree analysis.

The ‘5 Whys’ involves repeatedly asking ‘why’ to uncover the underlying causes. For instance, if a system is failing, I might ask: Why is the system failing? (Answer: Due to a software bug.) Why is there a software bug? (Answer: Inadequate testing.) Why was the testing inadequate? (Answer: Lack of resources.) Why was there a lack of resources? (Answer: Budget constraints.) Why were there budget constraints? (Answer: Unforeseen economic downturn.) This iterative process helps drill down to the core issue.

Fishbone diagrams help visualize potential causes, categorizing them into main branches (e.g., people, process, materials, equipment, environment) and then listing sub-causes. This provides a comprehensive overview of potential root causes, facilitating collaborative brainstorming. Fault tree analysis, on the other hand, works top-down, starting with the failure and breaking it down into contributing factors until basic causes are identified. I typically adapt the chosen technique to suit the specific problem and context.

Q 10. How do you communicate complex analytical findings to a non-technical audience?

Communicating complex analytical findings to a non-technical audience requires simplifying the information without sacrificing accuracy. I achieve this through several strategies. First, I focus on telling a story. I start with a clear and concise overview of the problem, explaining its significance in relatable terms. I then present the key findings using simple language, avoiding jargon and technical terms whenever possible. Instead of using statistical terms like ‘p-value’, I would explain the result in plain English, like, ‘An increase of X this large is very unlikely to be due to chance, which suggests…’

Next, I use visuals extensively. Charts, graphs, and infographics are incredibly powerful tools for conveying complex information in an easily digestible format. I choose the most appropriate visual representation for the data, ensuring it is clear, concise, and easy to understand. Finally, I use analogies and real-world examples to illustrate the findings and their implications. This helps the audience connect with the data on a personal level. I also keep the presentation concise and tailored to the audience’s specific needs and knowledge level.

Q 11. Give an example of a time you had to defend your analytical conclusions.

In a previous role, I identified a significant flaw in a widely used diagnostic algorithm. My analysis revealed that the algorithm was producing false positives in a specific patient subgroup, leading to unnecessary and potentially harmful interventions. This finding challenged the existing clinical practice, and I had to defend my conclusions to a panel of senior clinicians and medical informaticians.

To support my findings, I presented a detailed breakdown of my analysis, including the data sources, methodologies, and statistical evidence. I also addressed potential counterarguments and limitations of my study proactively. I presented visualizations of the data which clearly demonstrated the issue. My thorough preparation, clear and concise communication, and robust evidence ultimately convinced the panel of the validity of my conclusions. The algorithm was subsequently revised, improving patient care and avoiding unnecessary interventions. The experience highlighted the importance of thorough research, rigorous methodology, and the ability to communicate findings persuasively.

Q 12. How do you stay updated on the latest advancements in your field?

Staying current in a rapidly evolving field like data analysis and diagnostics requires a multi-faceted approach. I regularly attend conferences, webinars, and workshops to learn about the latest advancements in statistical methods, data mining techniques, and emerging technologies. I actively participate in professional organizations, engaging with peers and experts through online forums and networking events.

Furthermore, I dedicate time to continuous learning through online courses, tutorials, and academic publications. This includes following leading journals, subscribing to relevant newsletters and podcasts, and participating in online communities focused on specific analytical techniques, like machine learning or data visualization. I also actively seek out mentorship opportunities, learning from experienced professionals in the field. This multi-pronged strategy ensures that my skillset remains sharp and relevant.

Q 13. Describe a situation where you had to make a critical decision based on incomplete data.

During a critical incident involving a malfunctioning piece of medical equipment, I had to make a crucial decision with incomplete data. The system was exhibiting erratic behavior, and the available diagnostic data was insufficient to pinpoint the exact cause. The equipment was critical to patient care, and delaying action could lead to severe consequences.

My approach involved a structured process: First, I systematically gathered all available data, including error logs, sensor readings, and technician reports. I then developed a hypothesis based on the most likely scenarios, prioritizing safety and considering the worst-case scenarios. With limited time and data, I utilized the available information to determine the safest and most effective course of action, which was to temporarily shut down the affected system and activate a backup system. Though the root cause remained initially unclear, this decision prioritized patient safety while providing time for more in-depth investigation. Following this, I actively pursued further diagnostics, ultimately identifying and resolving the root cause. The incident highlighted the importance of quick thinking, decisive action, and an ability to balance risk and potential consequences with limited information.

Q 14. How do you validate the accuracy of your analytical models?

Validating the accuracy of analytical models is crucial. I use a variety of methods depending on the model’s type and purpose. For example, for predictive models, I employ techniques like cross-validation, which involves splitting the data into multiple subsets, training the model on some subsets, and testing its performance on the remaining ones. This helps assess how well the model generalizes to unseen data. I also use metrics such as AUC (Area Under the ROC Curve) or precision and recall to quantify the model’s performance.

Another crucial step is comparing model predictions with known outcomes. This could involve comparing model outputs against ground truth data, such as retrospectively collected patient outcomes. For example, if I developed a model to predict patient readmission risk, I’d compare my model’s predictions with actual readmission rates. Any significant discrepancy would require a review of the model’s assumptions, features, or parameters. Finally, I document the entire validation process transparently and meticulously, allowing others to scrutinize my findings. Regular model monitoring and updates based on new data are also essential for maintaining accuracy and reliability.
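
The cross-validation procedure described above can be sketched in plain Python as follows. The threshold "model", the toy data, and the helper names are assumptions for illustration; in practice I would normally rely on a library implementation such as scikit-learn's rather than hand-rolled folds.

```python
import random
import statistics

def k_fold_indices(n, k, seed=0):
    """Shuffle indices 0..n-1 and deal them into k disjoint folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def cross_validate(xs, ys, fit, score, k=5):
    """Train on k-1 folds, score on the held-out fold, repeat for each fold."""
    folds = k_fold_indices(len(xs), k)
    scores = []
    for held_out in folds:
        held = set(held_out)
        train = [i for i in range(len(xs)) if i not in held]
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        scores.append(score(model, [xs[i] for i in held_out], [ys[i] for i in held_out]))
    return folds, scores

def fit_threshold(xs, ys):
    """Toy classifier: threshold halfway between the two class means."""
    pos = [x for x, y in zip(xs, ys) if y == 1]
    neg = [x for x, y in zip(xs, ys) if y == 0]
    return (statistics.mean(pos) + statistics.mean(neg)) / 2

def accuracy(threshold, xs, ys):
    hits = sum((x > threshold) == bool(y) for x, y in zip(xs, ys))
    return hits / len(xs)

# Hypothetical, well-separated data
xs = [1, 2, 3, 4, 5, 11, 12, 13, 14, 15]
ys = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]
folds, fold_scores = cross_validate(xs, ys, fit_threshold, accuracy, k=5)
print(fold_scores, statistics.mean(fold_scores))
```

Reporting the spread of the fold scores, not just their mean, gives a more honest picture of how stable the model is.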

Q 15. What is your experience with predictive modeling?

Predictive modeling is the process of using historical data to predict future outcomes. I have extensive experience building predictive models using various techniques, including linear regression, logistic regression, decision trees, random forests, and neural networks. My experience spans diverse applications, from predicting customer churn for a telecommunications company – where I used logistic regression to identify at-risk customers based on usage patterns and demographics – to forecasting equipment failures in a manufacturing plant using time series analysis and machine learning. In the latter case, I implemented a predictive maintenance system that reduced downtime by 15% by anticipating potential breakdowns.

My approach involves a rigorous process: first, careful feature engineering to select the most relevant variables; second, model selection and training; third, thorough model evaluation using appropriate metrics like AUC, precision, recall, and F1-score; and finally, deployment and monitoring of the model to ensure its continued accuracy and effectiveness. I also employ techniques like cross-validation to prevent overfitting and ensure generalizability.

Q 16. Explain your approach to data cleaning and preprocessing.

Data cleaning and preprocessing are crucial steps in any analytical project. My approach is systematic and iterative. It begins with a thorough exploration of the data to understand its structure, identify missing values, and detect anomalies. I then use a combination of techniques to address these issues.

- Handling Missing Values: I carefully consider the reasons for missing data. Simple imputation (mean, median, mode) might be suitable for some cases, but for more complex scenarios, I may use more advanced techniques like k-Nearest Neighbors or multiple imputation. The choice depends on the data’s characteristics and the potential impact on the analysis.

- Outlier Detection and Treatment: I utilize various methods like box plots, scatter plots, and Z-score calculations to identify outliers. The treatment depends on the context. Sometimes, outliers are genuine data points representing extreme cases, and removing them would bias the analysis. In other cases, they might be due to errors, and removal or transformation (e.g., using logarithmic transformation) is justified. I always document my decisions and rationale.

- Data Transformation: I often employ data transformations like scaling (standardization or normalization) to improve model performance, particularly for algorithms sensitive to feature scales.

- Feature Engineering: This crucial step involves creating new features from existing ones to improve model accuracy. For example, I might create interaction terms or derive new features from dates and times.

Throughout this process, I use version control to track changes and ensure reproducibility. I think of data cleaning as building a sturdy foundation for the analysis: without clean data, even the most sophisticated model will fail.
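
A few of these steps can be sketched with the Python standard library, as below. The raw values are invented, and the |z| > 2 cut-off is an assumption chosen for a very small sample: with eight points, a population z-score cannot exceed about 2.65, so the more common cut-off of 3 would never flag anything.

```python
import statistics

def impute_median(values):
    """Replace missing entries (None) with the median of the observed values."""
    observed = [v for v in values if v is not None]
    med = statistics.median(observed)
    return [med if v is None else v for v in values]

def z_scores(values):
    """Standardize to zero mean and unit (population) standard deviation."""
    mu = statistics.mean(values)
    sigma = statistics.pstdev(values)
    return [(v - mu) / sigma for v in values]

# Hypothetical raw measurements with gaps and one suspicious value
raw = [12.0, None, 11.0, 13.0, 12.0, 95.0, None, 10.0]

filled = impute_median(raw)
scores = z_scores(filled)
outliers = [v for v, z in zip(filled, scores) if abs(z) > 2]
print(filled)
print(outliers)
```

The same `z_scores` function doubles as the standardization step, although I would compute it after deciding what to do with the flagged value, since a single extreme point inflates the standard deviation.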

Q 17. How do you handle unexpected results or outliers in your data?

Unexpected results or outliers demand careful investigation. My approach is rooted in a systematic process of verification and validation. I start by reviewing the data cleaning and preprocessing steps to rule out any errors in that stage. I then investigate the data generating process to understand the potential reasons behind the unexpected results. This might involve consulting subject matter experts or exploring additional data sources.

For example, if a predictive model unexpectedly shows a negative correlation where a positive one was expected, I would: (1) verify the data integrity, (2) examine the model’s assumptions, (3) check for potential confounding variables, and (4) consider alternative model specifications. If an outlier persists after careful scrutiny, I may choose to either remove it, transform it, or develop a robust model that is less sensitive to outliers. The decision will always be documented and justified.

Ultimately, thorough investigation is paramount. Ignoring unexpected results or outliers risks drawing inaccurate conclusions and potentially making flawed decisions. The goal is to understand, not simply dismiss, the anomalies.
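
As a quick illustration of why robust methods help, the sketch below flags outliers with the interquartile-range rule and compares the mean with the median. The readings and the conventional 1.5 multiplier are assumptions for the example.

```python
import statistics

def iqr_outliers(values, k=1.5):
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]

# Hypothetical sensor readings with one extreme value
readings = [10, 11, 12, 12, 12, 13, 95]

flagged = iqr_outliers(readings)
mean_value = statistics.mean(readings)      # pulled upward by the extreme value
median_value = statistics.median(readings)  # barely affected by it
print(flagged, round(mean_value, 2), median_value)
```

A flagged value is a prompt for investigation, not a verdict: whether it is removed, transformed, or kept depends on what the data-generating process says about it.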

Q 18. Describe your experience with data visualization tools and techniques.

Data visualization is critical for effective communication and understanding. I am proficient in several tools and techniques, including:

- Tableau and Power BI: For creating interactive dashboards and reports that communicate insights effectively to both technical and non-technical audiences.

- Matplotlib and Seaborn (Python): For generating static visualizations like histograms, scatter plots, and heatmaps to explore data patterns and relationships.

- ggplot2 (R): A powerful package for creating publication-quality graphics.

My approach to visualization is driven by the specific analytical goal. For exploratory data analysis, I focus on creating visualizations that reveal patterns and outliers. For reporting, I aim for clarity and conciseness, using appropriate charts and graphs to communicate insights in an accessible manner. A well-designed visualization can often reveal insights that are otherwise missed in a table of numbers.

Q 19. How do you measure the success of your analytical work?

Measuring the success of analytical work depends on the project’s goals. However, some common metrics I use include:

- Accuracy and Precision: For predictive models, these metrics assess the model’s ability to correctly classify or predict outcomes.

- Recall and F1-score: These are particularly relevant when dealing with imbalanced datasets, where one class is significantly more prevalent than others.

- AUC (Area Under the ROC Curve): A measure of a classifier’s ability to distinguish between classes.

- R-squared: For regression models, this indicates the proportion of variance in the dependent variable explained by the model.

- Business Impact: Ultimately, the success of analytical work is often measured by its impact on business decisions and outcomes. This might involve quantifying cost savings, revenue increases, or improvements in efficiency.

I always aim to present my findings in a clear, concise, and actionable manner, providing recommendations based on the analysis and highlighting the implications for decision-making.
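
For reference, the classification metrics listed above can be computed from the confusion-matrix counts as in this minimal sketch. The labels and predictions are hypothetical.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall and F1 for binary labels (1 = positive)."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    accuracy = (tp + tn) / len(y_true)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Hypothetical labels and model predictions
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```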

Q 20. What are your strengths and weaknesses in diagnostic and analytical thinking?

My strengths in diagnostic and analytical thinking lie in my ability to approach problems systematically, my attention to detail, and my capacity to critically evaluate evidence. I am comfortable working with complex datasets and have a knack for identifying underlying patterns and relationships. I am also adept at communicating my findings clearly and concisely to both technical and non-technical audiences.

A potential weakness is my tendency to get overly focused on the details, sometimes losing sight of the bigger picture. I am actively working to improve this by practicing higher-level strategic thinking and by consciously setting aside time for reflection and synthesis.

Q 21. Describe a time you had to work under pressure to meet a deadline.

During my time at [Previous Company Name], we were tasked with delivering a critical analysis for a major client with a tight deadline – just three days. The data was complex, and initial analyses revealed unexpected inconsistencies. Under this pressure, I prioritized the most crucial aspects of the analysis first, focusing on delivering meaningful insights within the time constraint. I effectively delegated tasks to my team, ensuring clear communication and collaboration. We worked extended hours, and I employed agile methodologies to adapt to new findings and address challenges as they arose. We ultimately delivered a comprehensive report that met the client’s expectations, showcasing our ability to deliver accurate results under pressure. This experience underscored the importance of effective time management, teamwork, and prioritization in high-stakes situations.

Q 22. How do you collaborate effectively with others on analytical projects?

Effective collaboration on analytical projects hinges on clear communication, shared understanding, and a well-defined workflow. I believe in fostering a collaborative environment where everyone feels comfortable contributing their expertise. This starts with clearly defining project goals and deliverables from the outset. We then break down the project into manageable tasks, assigning responsibilities based on individual strengths and expertise. Regular check-ins, supported by tools like project management software or shared online workspaces, ensure we stay on track and address any challenges promptly. I encourage open communication, listen actively to others’ perspectives, and provide constructive feedback. For example, on a recent customer segmentation project, we used a collaborative whiteboard to brainstorm potential segments, then used a shared spreadsheet to track progress on individual analyses and combine our findings. This ensured transparency and facilitated efficient knowledge sharing.

Q 23. How do you identify and mitigate potential risks in your analyses?

Risk mitigation in data analysis is crucial for ensuring the validity and reliability of our conclusions. I approach this systematically by considering potential risks at each stage of the analytical process. This includes data quality issues like missing values or outliers, biases in data collection or sampling methods, and limitations of the analytical techniques employed. For instance, I always check for missing data and handle it appropriately using imputation or other techniques. I also rigorously document the assumptions and limitations of my analyses. To account for potential biases, I might explore multiple analytical approaches or conduct sensitivity analyses to assess the impact of various assumptions. Finally, I always ensure that my findings are clearly communicated along with the associated uncertainties and limitations. For example, in a forecasting project, I identified the risk of using only historical data, which might not reflect future trends. To mitigate this, I incorporated external factors like economic indicators into my model and performed scenario planning to assess various possible outcomes.

Q 24. What is your experience with A/B testing or experimental design?

I have extensive experience with A/B testing and experimental design. A/B testing, a form of randomized controlled experiment, allows us to compare two versions of a variable (e.g., a website design, an email subject line) to determine which performs better. Successful A/B testing requires careful planning, including defining a clear hypothesis, selecting appropriate metrics, and ensuring sufficient sample size to detect statistically significant differences. I’m familiar with various experimental designs, including factorial designs and randomized block designs, depending on the complexity of the experiment. For example, I once conducted an A/B test on a client’s website to compare two different call-to-action buttons. We carefully tracked conversion rates for each version, ensuring that other variables remained constant. The results showed a statistically significant improvement in conversion rates for one version, leading to a data-driven decision about website optimization. This rigorous approach helps minimize bias and ensure the results are reliable and actionable.
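
A significance check for an A/B test on conversion rates can be sketched with a two-proportion z-test, as below. The visitor and conversion counts are hypothetical, and the normal approximation assumes reasonably large samples in both arms.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # rate under H0: no difference
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical results: button A converts 200 of 2000 visitors, button B 260 of 2000
z, p_value = two_proportion_z_test(conv_a=200, n_a=2000, conv_b=260, n_b=2000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

The sample size should be fixed before the test starts; checking the p-value repeatedly and stopping at the first significant result inflates the false positive rate.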

Q 25. Explain your understanding of hypothesis testing.

Hypothesis testing is a statistical method used to make inferences about a population based on sample data. It involves formulating a null hypothesis (H0), a statement of no effect or no difference, and an alternative hypothesis (H1), which contradicts the null hypothesis. We then collect data and use a statistical test to compute the p-value: the probability of observing results at least as extreme as those obtained, assuming the null hypothesis is true. If the p-value is below a pre-defined significance level (alpha, usually 0.05), we reject the null hypothesis in favor of the alternative hypothesis. For instance, a null hypothesis might be that there is no difference in average sales between two marketing campaigns. We would collect sales data from both campaigns, perform a t-test, and assess the p-value. A low p-value would suggest that the observed difference is statistically significant and that the null hypothesis can be rejected.

It’s crucial to understand that failing to reject the null hypothesis doesn’t necessarily prove it to be true; it simply means there isn’t enough evidence to reject it based on the available data. The choice of statistical test depends on the type of data and research question.
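The campaign comparison can be sketched in code. The answer above mentions a t-test; to stay within the Python standard library, this example substitutes a permutation test for the difference in means, which addresses the same null hypothesis without needing the t distribution. The function name and the sales figures are hypothetical.

```python
import random


def permutation_test(sample_a, sample_b, n_permutations=5000, seed=0):
    """Two-sided permutation test for a difference in means.

    Repeatedly shuffles the pooled observations, splits them into
    groups of the original sizes, and counts how often the shuffled
    difference is at least as large as the observed one.
    """
    rng = random.Random(seed)
    n_a = len(sample_a)
    n_b = len(sample_b)
    observed = abs(sum(sample_a) / n_a - sum(sample_b) / n_b)
    pooled = list(sample_a) + list(sample_b)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        diff = abs(sum(pooled[:n_a]) / n_a - sum(pooled[n_a:]) / n_b)
        if diff >= observed:
            extreme += 1
    # Add-one correction so the estimated p-value is never exactly zero.
    return (extreme + 1) / (n_permutations + 1)


# Hypothetical daily sales for two campaigns.
campaign_a = [120, 135, 128, 140, 132, 125, 138, 130]
campaign_b = [150, 158, 149, 162, 155, 147, 160, 153]

p_value = permutation_test(campaign_a, campaign_b)
alpha = 0.05
print("reject H0" if p_value < alpha else "fail to reject H0")
```

If SciPy is available, a t-test on the same two lists would be the more direct match for the description above; the decision rule (compare the p-value with alpha) is identical.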

Q 26. Describe a situation where you had to identify and solve a problem with minimal guidance.

In a previous role, we experienced an unexpected spike in customer support tickets related to a newly launched feature. With limited guidance, I took the initiative to investigate the root cause. I started by analyzing the ticket data, identifying patterns and common themes. I discovered that a specific error message was consistently reported by users experiencing the issue. I then delved into the application logs and codebase to pinpoint the source of the error. After some debugging, I identified a code defect that triggered the error under specific circumstances. I proposed a quick fix, which was reviewed and deployed, effectively resolving the issue and significantly reducing the volume of support tickets. This experience highlighted the importance of systematic problem-solving, meticulous data analysis, and proactive communication to address unexpected challenges effectively.

Q 27. How do you approach learning new analytical techniques or technologies?

I take a structured, hands-on approach to learning new analytical techniques and technologies. I usually start by identifying the specific needs and objectives that require these new skills. Then, I draw on a combination of resources, including online courses, tutorials, documentation, and professional publications. I find that practical application is key; I often work through examples and exercises to solidify my understanding and develop proficiency. I also participate in online communities and forums to engage with other professionals, exchange knowledge, and seek clarification when needed. For instance, when I needed to learn Python for data analysis, I enrolled in an online course, followed tutorials, and worked on personal projects to apply what I learned. This approach ensures that I not only understand the theoretical concepts but can also apply them effectively in real-world scenarios.

Q 28. What are some common pitfalls to avoid in data analysis?

Several common pitfalls can lead to inaccurate or misleading conclusions in data analysis. One significant pitfall is confirmation bias, where analysts subconsciously seek out evidence that supports pre-existing beliefs while ignoring contradictory evidence. Another is overfitting, where a model performs well on training data but poorly on unseen data, often due to excessive complexity. Data dredging, or running many comparisons without a clear hypothesis and reporting only those that look significant, can also surface spurious correlations. Finally, ignoring the context and limitations of the data can lead to erroneous interpretations. For example, treating a correlation as evidence of causation can lead to flawed conclusions. To avoid these pitfalls, it’s essential to maintain objectivity, rigorously validate models, and clearly document assumptions and limitations.
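The data dredging risk can be quantified with a little arithmetic. The sketch below assumes the tests are independent and that every null hypothesis is true, and computes the chance of at least one false positive across many tests, along with the Bonferroni-adjusted per-test threshold as one common remedy. The function names are illustrative.

```python
def familywise_error_rate(alpha, n_tests):
    """Probability of at least one false positive across n_tests
    independent tests, each run at significance level alpha,
    when every null hypothesis is true."""
    return 1 - (1 - alpha) ** n_tests


def bonferroni_alpha(alpha, n_tests):
    """Per-test significance level that keeps the family-wise
    error rate at or below alpha (Bonferroni correction)."""
    return alpha / n_tests


for n in (1, 5, 20):
    fwer = familywise_error_rate(0.05, n)
    adjusted = bonferroni_alpha(0.05, n)
    print(f"{n:>2} tests: FWER = {fwer:.3f}, Bonferroni alpha = {adjusted:.4f}")
```

With 20 unplanned comparisons at alpha = 0.05, the chance of at least one spurious "significant" result is well over half, which is why stating hypotheses in advance or correcting for multiple comparisons matters.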

Key Topics to Learn for Diagnostic and Analytical Skills Interview

- Problem Decomposition: Breaking down complex problems into smaller, manageable parts. This involves identifying the core issue, separating symptoms from root causes, and defining clear objectives.

- Data Analysis & Interpretation: Analyzing data from various sources (e.g., reports, logs, user feedback) to identify trends, patterns, and anomalies. Mastering data visualization techniques is crucial for communicating findings effectively.

- Root Cause Analysis: Utilizing methodologies like the “5 Whys” or fishbone diagrams to identify the underlying causes of problems, rather than just addressing surface-level symptoms. This demonstrates a proactive and solution-oriented approach.

- Critical Thinking & Logical Reasoning: Developing and applying logical reasoning to evaluate information objectively, identify biases, and formulate well-supported conclusions. This involves recognizing assumptions and evaluating the validity of evidence.

- Hypothesis Formulation & Testing: Formulating testable hypotheses based on available data and designing experiments or analyses to validate or refute those hypotheses. This showcases a scientific and evidence-based approach to problem-solving.

- Decision-Making & Judgment: Making informed decisions based on analyzed data and logical reasoning, considering potential risks and consequences. This includes the ability to justify decisions clearly and confidently.

- Communication of Findings: Effectively communicating complex analytical findings to both technical and non-technical audiences through clear, concise, and persuasive presentations or reports.

Next Steps

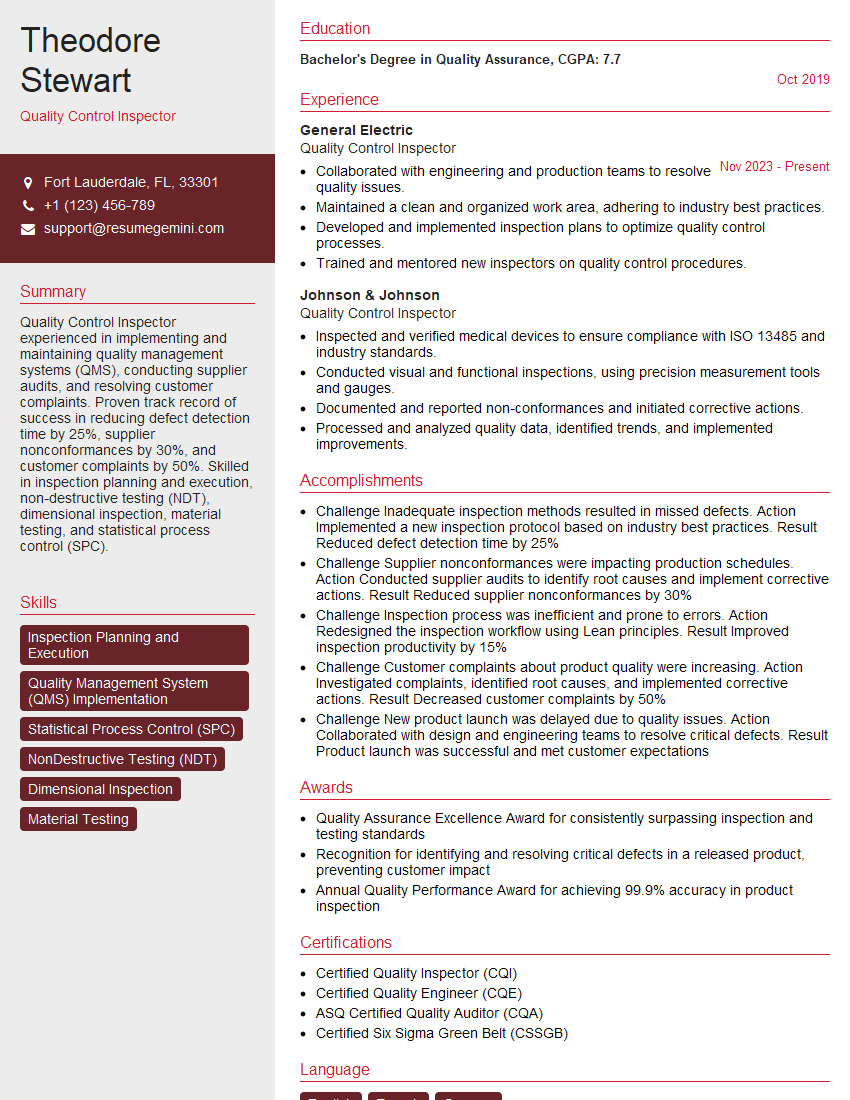

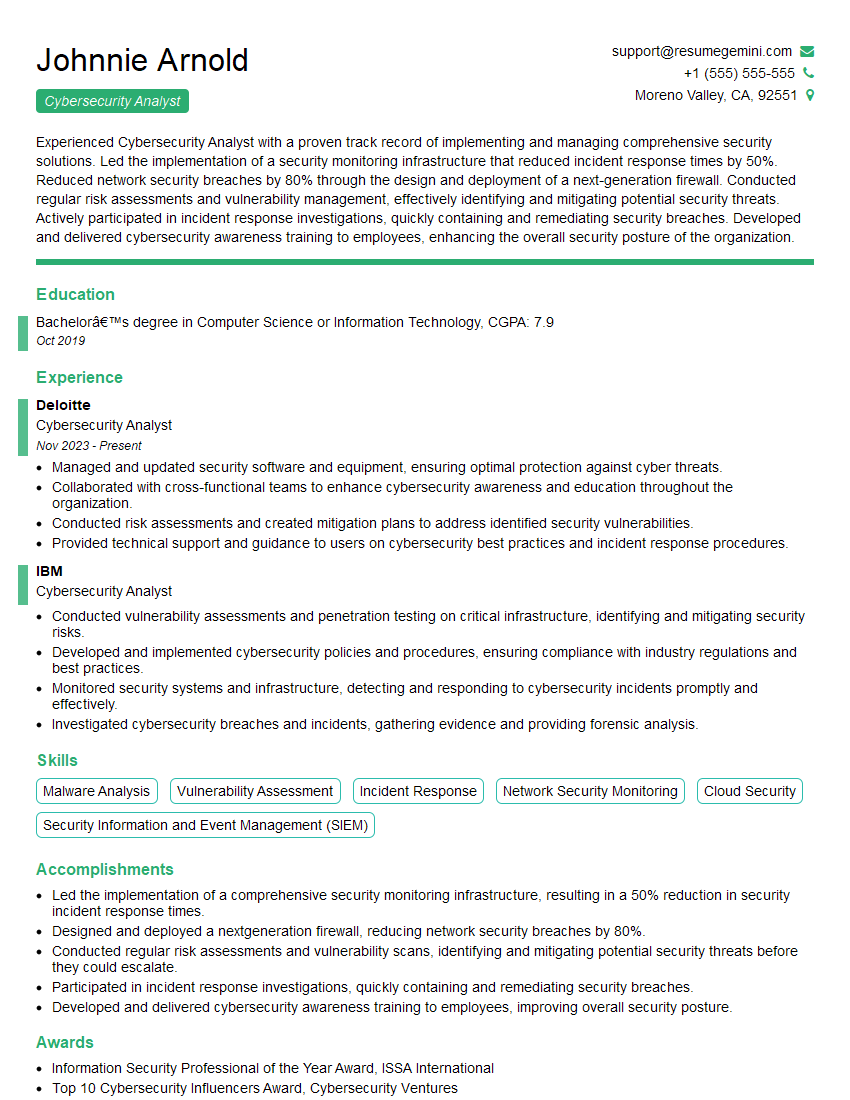

Mastering diagnostic and analytical skills is paramount for career advancement in today’s data-driven world. These skills are highly sought after across numerous industries, leading to greater responsibility, influence, and earning potential. To maximize your job prospects, it’s essential to present these skills effectively on your resume. Creating an ATS-friendly resume is crucial for getting your application noticed. ResumeGemini is a trusted resource that can help you build a professional and impactful resume, highlighting your diagnostic and analytical capabilities. Examples of resumes tailored to these skills are available within the ResumeGemini platform to guide you.
