The right preparation can turn an interview into an opportunity to showcase your expertise. This guide to remote sensing and aerial imagery analysis interview questions offers key insights and sample answers to help you prepare your responses and stand out as a candidate.

Questions Asked in Remote Sensing and Aerial Imagery Analysis Interviews

Q 1. Explain the differences between passive and active remote sensing.

Passive remote sensing relies on detecting naturally emitted or reflected electromagnetic radiation from the Earth’s surface. Think of it like taking a photograph – you’re capturing the light that’s already there. Examples include capturing visible light with a camera or infrared radiation with a thermal sensor. Active remote sensing, on the other hand, emits its own energy and then measures the radiation reflected back. This is like shining a flashlight on an object and observing the reflected light. LiDAR (Light Detection and Ranging) is a prime example; it sends out laser pulses and records the time it takes for the pulses to return, providing highly accurate elevation data. The key difference lies in the source of the radiation: passive systems use naturally occurring radiation, while active systems create their own radiation source.
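The time-of-flight idea behind LiDAR can be written down in a few lines. The following is a minimal sketch, not a description of any particular instrument: the function name and the sample timing are hypothetical, and it assumes the pulse travels at the vacuum speed of light.

```python
# Speed of light in vacuum (m/s); used here as an approximation for air.
SPEED_OF_LIGHT_M_S = 299_792_458.0


def range_from_round_trip(round_trip_seconds: float) -> float:
    """Return the one-way sensor-to-target distance in metres."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels out and back, so the path length is halved.
    return SPEED_OF_LIGHT_M_S * round_trip_seconds / 2.0


# Hypothetical return arriving 6.67 microseconds after the pulse was emitted.
distance_m = range_from_round_trip(6.67e-6)
print(f"Target is roughly {distance_m:.1f} m from the sensor")
```

A passive sensor has no equivalent calculation, because it never controls when the radiation left its source.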

Q 2. Describe the electromagnetic spectrum and its relevance to remote sensing.

The electromagnetic spectrum encompasses all types of electromagnetic radiation, ranging from very long radio waves to very short gamma rays. Remote sensing utilizes specific portions of this spectrum depending on the application. For instance, visible light (the portion we see) is used for creating standard color images. Near-infrared (NIR) is highly sensitive to vegetation health, allowing us to monitor crop growth or map burn scars after forest fires. Shortwave infrared (SWIR) helps identify minerals and soil types. Thermal infrared (TIR) detects heat emitted by objects, making it useful for monitoring temperature changes, active fires, and volcanic activity. Microwave radiation is used in radar systems, penetrating clouds and allowing for all-weather imaging. Understanding the unique characteristics of each part of the spectrum is crucial for choosing the right sensor and interpreting the resulting data. It’s like having a toolbox filled with different tools, each one designed for a specific task.

Q 3. What are the different types of aerial platforms used in remote sensing?

Aerial platforms for remote sensing encompass a wide range of technologies, each with its strengths and limitations. The most common include:

- Aircraft: Fixed-wing aircraft (planes) offer wide coverage areas but can be more expensive to operate. Helicopters provide greater maneuverability for detailed surveys in complex terrain but are less cost-effective for large areas.

- Unmanned Aerial Vehicles (UAVs or Drones): Drones are increasingly popular for their flexibility, affordability, and high-resolution imagery acquisition. They’re ideal for targeted surveys and detailed mapping of smaller regions.

- Satellites: Satellites provide the broadest coverage, offering imagery of vast regions, including inaccessible areas. However, their spatial resolution might be lower compared to aircraft or drones, and data acquisition is often scheduled and not on demand.

- High-Altitude Platforms (HAPs): These are platforms operating at altitudes between those of UAVs and satellites, offering a mix of high resolution and large area coverage.

The choice of platform depends on factors like budget, required spatial resolution, area of coverage, and accessibility of the target region.

Q 4. Explain the concept of spatial resolution in remote sensing imagery.

Spatial resolution in remote sensing refers to the size of the smallest discernible detail in an image, usually expressed as the ground area covered by one pixel. A higher spatial resolution means smaller pixels and a greater level of detail, so you can see finer features. A lower spatial resolution means larger pixels and less detail. For example, in a satellite image with a 1-meter spatial resolution each pixel covers a 1 m × 1 m patch of ground, so features about a meter across can be picked out, while a 10-meter resolution image would only show details larger than about 10 meters. In practice an object usually needs to span several pixels before it can be identified reliably. This is analogous to the resolution of a photograph: a higher resolution image printed on a large canvas will show more detail compared to a low-resolution image.

Q 5. What are the advantages and disadvantages of using LiDAR data?

LiDAR (Light Detection and Ranging) data offers several advantages: it provides highly accurate three-dimensional (3D) elevation models, it can penetrate vegetation to measure the ground surface below, and it allows for precise measurements of object heights. This makes it invaluable for creating digital elevation models (DEMs), analyzing terrain, detecting changes in elevation, and mapping infrastructure. However, LiDAR also has disadvantages. The cost can be substantial, especially for large areas. The data processing can be complex and requires specialized software. Also, the accuracy is affected by weather conditions, particularly dense fog or heavy rain.

Q 6. How do you perform orthorectification of aerial imagery?

Orthorectification is a process of geometrically correcting aerial imagery to remove distortion caused by terrain relief, camera tilt, and lens distortion. This results in an image where distances and angles are accurate, suitable for precise measurements and mapping. The process generally involves these steps:

- Sensor orientation and calibration: Determining the camera’s position and orientation during image capture.

- Ground control point (GCP) identification: Selecting easily identifiable points in both the image and on a reference map with known coordinates.

- Geometric model creation: Developing a mathematical model that relates image coordinates to ground coordinates.

- Orthorectification transformation: Applying the model to correct for geometric distortions.

- Quality assessment: Evaluating the accuracy of the orthorectified image through root mean square error (RMSE) analysis.

Software packages like ERDAS Imagine, ENVI, and ArcGIS are commonly used for orthorectification, often utilizing digital elevation models (DEMs) to account for terrain variations.
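The quality assessment step can be illustrated with a short calculation. This is a minimal sketch, assuming you already have the orthorectified coordinates of some independent check points and their surveyed coordinates in the same projected system; the function name and sample values are hypothetical.

```python
import math


def horizontal_rmse(predicted, reference):
    """RMSE of the planimetric residuals between two lists of (x, y) points."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("need two non-empty point lists of equal length")
    squared_errors = [
        (px - rx) ** 2 + (py - ry) ** 2
        for (px, py), (rx, ry) in zip(predicted, reference)
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Hypothetical check points in metres (map coordinates).
from_image = [(500010.4, 4599990.2), (500250.1, 4599700.9), (500499.2, 4599400.3)]
surveyed = [(500010.0, 4599990.0), (500250.0, 4599701.5), (500500.0, 4599400.0)]
print(f"Horizontal RMSE: {horizontal_rmse(from_image, surveyed):.2f} m")
```

An RMSE that is large relative to the pixel size usually points to poor GCPs or an inadequate DEM.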

Q 7. Describe different image enhancement techniques used in remote sensing.

Image enhancement techniques aim to improve the visual quality and information content of remote sensing imagery. These techniques can be categorized into:

- Spatial Enhancement: Techniques like filtering (e.g., low-pass, high-pass) improve image sharpness, reduce noise, or highlight edges. For example, a high-pass filter enhances boundaries between land cover classes making them more easily discernible.

- Spectral Enhancement: Techniques like band ratioing (e.g., NDVI for vegetation) or principal component analysis (PCA) highlight specific spectral features. NDVI highlights vegetation density, making it invaluable in agriculture or forestry applications. PCA reduces dimensionality while retaining most of the spectral variance.

- Geometric Enhancement: These techniques involve geometric corrections, like orthorectification (discussed earlier), removing distortions and improving accuracy.

The choice of enhancement technique depends on the type of imagery, the desired information, and the specific objectives of the analysis. It’s a crucial step in preparing imagery for interpretation and analysis.
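As an illustration of spectral enhancement, NDVI is defined as (NIR - Red) / (NIR + Red). The sketch below uses plain nested lists to stay dependency-free; a real workflow would more likely use array libraries, and the function name and sample reflectances are hypothetical.

```python
def ndvi(nir, red):
    """Per-pixel NDVI for two equally sized 2-D grids of reflectance values."""
    result = []
    for nir_row, red_row in zip(nir, red):
        out_row = []
        for n, r in zip(nir_row, red_row):
            total = n + r
            # Guard against division by zero (e.g. nodata pixels stored as 0).
            out_row.append((n - r) / total if total != 0 else 0.0)
        result.append(out_row)
    return result


# Hypothetical 2 x 2 scene: dense vegetation on top, bare soil and water below.
nir_band = [[0.50, 0.45], [0.30, 0.02]]
red_band = [[0.05, 0.06], [0.25, 0.04]]
for row in ndvi(nir_band, red_band):
    print([round(value, 2) for value in row])
```

Values near 1 indicate dense green vegetation, values near 0 bare surfaces, and negative values are typical of water.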

Q 8. What are common image classification methods used in remote sensing?

Image classification in remote sensing involves assigning categories to pixels in satellite or aerial imagery. Think of it like coloring a map – each pixel gets a color representing a land cover type, like forest, water, or urban area. Several methods exist, each with its strengths and weaknesses. Common methods include:

- Supervised Classification: This approach uses training data – samples of known land cover types – to ‘train’ a classifier to recognize patterns and assign classes to the rest of the image. Algorithms like Maximum Likelihood Classification (MLC), Support Vector Machines (SVM), and Random Forest are frequently used.

- Unsupervised Classification: This method doesn’t use labeled training data. Instead, it groups pixels based on their spectral similarities. K-means clustering is a popular algorithm for unsupervised classification. It’s like asking the computer to find natural groupings in the data without prior knowledge of what those groupings represent.

- Object-Based Image Analysis (OBIA): Instead of classifying individual pixels, OBIA groups pixels into meaningful objects (e.g., buildings, trees) based on spectral and spatial characteristics. This approach is particularly useful for high-resolution imagery.

- Deep Learning Methods: Convolutional Neural Networks (CNNs) are increasingly used for image classification due to their ability to learn complex features from large datasets. They often outperform traditional methods, especially with high-resolution data.

The choice of method depends on factors like data availability, desired accuracy, and computational resources. For instance, supervised classification requires labelled data, which can be time-consuming to obtain, while unsupervised classification is faster but might require more post-processing to interpret the results.

Q 9. Explain the concept of supervised and unsupervised classification.

The core difference lies in the use of training data. Supervised classification requires a ‘ground truth’ dataset, where we know the land cover type for a set of pixels. We use this labeled data to train a classifier, teaching it to associate spectral signatures (the unique reflectance patterns of different materials) with specific classes. Imagine teaching a child to identify different fruits by showing them examples of apples, oranges, and bananas. The child learns to associate visual features (color, shape) with each fruit type. The classifier does the same, but with spectral information instead of visual features.

Unsupervised classification, on the other hand, doesn’t use pre-labeled data. The algorithm groups pixels based on their inherent similarities in spectral characteristics. It’s like asking the computer to sort a basket of fruits into piles without telling it what types of fruits are in the basket. The algorithm finds natural groupings based on color, shape, and size, but you have to interpret the groupings afterward to assign meaningful labels (e.g., ‘pile 1: mostly red and round – likely apples’).

In practice, supervised classification generally yields higher accuracy, but requires more effort in data preparation. Unsupervised classification is faster but needs careful interpretation of the results.
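The contrast can be made concrete with a small sketch. For the supervised side it uses a minimum-distance-to-means classifier, which is a simpler stand-in for the maximum likelihood, SVM, or Random Forest classifiers usually used in practice; for the unsupervised side it uses a basic k-means loop with caller-supplied starting centres. All names and pixel values are hypothetical.

```python
import math


def class_means(training):
    """training maps a label to a list of pixels (tuples of band values)."""
    return {
        label: tuple(sum(band) / len(pixels) for band in zip(*pixels))
        for label, pixels in training.items()
    }


def nearest(pixel, centres):
    """Return the key of the centre with the smallest Euclidean distance."""
    return min(centres, key=lambda key: math.dist(pixel, centres[key]))


def kmeans(pixels, initial_centres, iterations=10):
    """Unsupervised clustering; returns (centres, cluster index per pixel)."""
    centres = dict(enumerate(initial_centres))
    for _ in range(iterations):
        assignments = [nearest(p, centres) for p in pixels]
        for key in centres:
            members = [p for p, a in zip(pixels, assignments) if a == key]
            if members:  # an empty cluster keeps its previous centre
                centres[key] = tuple(sum(band) / len(members) for band in zip(*members))
    return centres, [nearest(p, centres) for p in pixels]


# Hypothetical (red, NIR) reflectance samples.
training = {
    "water": [(0.05, 0.02), (0.06, 0.03)],
    "vegetation": [(0.04, 0.45), (0.06, 0.55)],
}
means = class_means(training)
print(nearest((0.05, 0.40), means))  # supervised: returns a named class

pixels = [(0.05, 0.02), (0.06, 0.03), (0.04, 0.45), (0.06, 0.55)]
centres, clusters = kmeans(pixels, [pixels[0], pixels[-1]])
print(clusters)  # unsupervised: returns cluster numbers that still need labels
```

The supervised call answers with a land cover name because the labels were supplied up front, while k-means only returns cluster indices that an analyst must interpret afterwards.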

Q 10. How do you assess the accuracy of a classification result?

Accuracy assessment is crucial to understand the reliability of a classification result. We typically use a confusion matrix (also called an error matrix), which cross-tabulates the classified land cover against a reference dataset (e.g., high-quality ground truth data or another classification with known accuracy). Its diagonal holds the correctly classified samples, while the off-diagonal cells show which classes were confused with each other: omission errors, which lower producer’s accuracy, and commission errors, which lower user’s accuracy.

From the confusion matrix, we can calculate several key metrics:

- Overall Accuracy: The percentage of pixels correctly classified.

- Producer’s Accuracy (PA): The accuracy of each class in terms of correctly classifying pixels belonging to that class. A low PA for a class indicates that the classifier is often misclassifying pixels belonging to that class.

- User’s Accuracy (UA): The accuracy of identifying pixels belonging to a certain class in the final classification. A low UA for a class means there is a high chance that pixels that have been classified as belonging to this class are actually from a different class.

- Kappa Coefficient: Measures the agreement between the classified map and the reference data, correcting for chance agreement. A higher kappa value (closer to 1) indicates better agreement and hence higher accuracy.

Imagine you classified an image and want to check its accuracy. You would compare the results with a reference map produced by field surveys or high-resolution aerial photography. A high overall accuracy combined with high producer’s and user’s accuracy for all the land cover types would signal a reliable classification.
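These metrics follow directly from the confusion matrix. The sketch below assumes rows are reference classes and columns are classified classes; that orientation, the function name, and the sample counts are my own choices for illustration, so check the convention your software uses.

```python
def accuracy_metrics(matrix):
    """matrix[i][j] = samples whose reference class is i and mapped class is j."""
    n_classes = len(matrix)
    total = sum(sum(row) for row in matrix)
    row_sums = [sum(row) for row in matrix]  # reference totals per class
    col_sums = [sum(row[j] for row in matrix) for j in range(n_classes)]  # mapped totals
    diagonal = [matrix[i][i] for i in range(n_classes)]

    overall = sum(diagonal) / total
    producers = [d / r if r else float("nan") for d, r in zip(diagonal, row_sums)]
    users = [d / c if c else float("nan") for d, c in zip(diagonal, col_sums)]
    # Agreement expected by chance, from the row and column marginals.
    expected = sum(r * c for r, c in zip(row_sums, col_sums)) / total ** 2
    kappa = (overall - expected) / (1 - expected) if expected != 1 else float("nan")
    return {"overall": overall, "producers": producers, "users": users, "kappa": kappa}


# Hypothetical two-class result (forest, non-forest) from 100 reference samples.
metrics = accuracy_metrics([[45, 5], [10, 40]])
print(metrics)
```

Here forest has a producer’s accuracy of 45/50 but a user’s accuracy of 45/55, which shows how the two measures can diverge for the same class.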

Q 11. What are the challenges of working with very high-resolution imagery?

Very high-resolution (VHR) imagery, while offering incredible detail, presents unique challenges:

- Computational Cost: Processing VHR data requires significant computational resources due to the massive amount of data involved. Classifying a large VHR image can take considerable time and processing power.

- Data Volume: The sheer size of VHR datasets poses storage and management challenges. Specialized infrastructure and efficient data handling techniques are required.

- Increased Complexity: VHR imagery reveals finer details, leading to more complex spectral and spatial patterns that can make classification more challenging. The algorithm needs to be sophisticated to distinguish between subtle differences.

- Data Heterogeneity: VHR images often exhibit higher within-class variability. For example, within a forest class, you might have variations in tree species and density, making classification more difficult.

- Noise: Sensor noise can be particularly noticeable in VHR imagery (and speckle is inherent in high-resolution SAR data), requiring pre-processing steps like filtering to reduce its impact on classification accuracy.

For example, working with 1-meter resolution imagery for urban areas can involve hundreds of gigabytes of data once multiple bands, scenes, and acquisition dates are combined, requiring powerful computers and efficient algorithms to perform classification effectively.
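A quick back-of-the-envelope calculation shows how fast the volume grows with resolution. This is a rough sketch for uncompressed rasters only; the function name and the scene dimensions are hypothetical.

```python
import math


def uncompressed_size_bytes(width_m, height_m, resolution_m, bands, bytes_per_sample):
    """Estimate the raw size of a raster covering width_m x height_m on the ground."""
    cols = math.ceil(width_m / resolution_m)
    rows = math.ceil(height_m / resolution_m)
    return cols * rows * bands * bytes_per_sample


# Hypothetical 50 km x 50 km city scene, 4 bands, 16-bit samples.
for resolution in (10.0, 1.0, 0.3):
    size_gb = uncompressed_size_bytes(50_000, 50_000, resolution, 4, 2) / 1e9
    print(f"{resolution:>4} m pixels: about {size_gb:,.1f} GB")
```

Because the pixel count scales with the inverse square of the pixel size, moving from 10 m to 1 m multiplies the volume by 100 for a single date, before any time series or mosaics are considered.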

Q 12. Describe your experience with different GIS software packages.

Throughout my career, I’ve extensively utilized various GIS software packages, including ArcGIS Pro, QGIS, and ERDAS IMAGINE. ArcGIS Pro, with its comprehensive suite of tools and extensive spatial analysis capabilities, has been my primary platform for complex projects involving large datasets and sophisticated analysis. Its ability to handle geodatabases and integrate different data types is invaluable.

QGIS, an open-source alternative, provides a powerful and cost-effective option for tasks like image preprocessing, visualization, and simpler classification tasks. Its flexibility and extensive plugin support make it a versatile tool. I’ve used QGIS extensively for teaching and smaller-scale projects where licensing costs are a concern. ERDAS IMAGINE, with its specialized image processing capabilities, is particularly useful for tasks requiring advanced image enhancements and analysis. For example, its orthorectification tools are very powerful for generating accurate geometrically corrected images.

My experience spans the entire workflow – from data acquisition and preprocessing to analysis, classification, and final product generation, utilizing these software packages according to the specific needs of each project.

Q 13. How do you handle large datasets in remote sensing?

Handling large remote sensing datasets requires a strategic approach involving both hardware and software solutions. Key strategies include:

- Cloud Computing: Platforms like Google Earth Engine, Amazon Web Services (AWS), and Azure provide scalable computing resources and storage solutions ideal for processing massive datasets. This reduces the need for expensive local infrastructure.

- Parallel Processing: Dividing the dataset into smaller chunks and processing them simultaneously on multiple processors dramatically reduces processing time. Many GIS software packages support parallel processing.

- Data Compression: Using efficient compression techniques (e.g., lossless codecs such as LZW or DEFLATE inside a GeoTIFF) reduces storage space and improves data transfer speeds.

- Data Subsetting: Working with smaller subsets of the data for analysis and processing can significantly improve efficiency. This is particularly helpful during exploratory data analysis or for testing different algorithms.

- Efficient Data Structures: Utilizing optimized data structures, such as tiled datasets or cloud-optimized GeoTIFFs (COGs), ensures quick access to data subsets. COGs allow efficient access to portions of an image, reducing loading time and processing demands.

For instance, I worked on a project involving several terabytes of satellite imagery. Using Google Earth Engine’s cloud-based processing capabilities, I was able to process and analyze the data efficiently, which would have been impossible using local resources.
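The chunking idea behind parallel processing and tiled access can be sketched as follows. The window tuples and the `pixel_count` worker are hypothetical placeholders; in a real pipeline each worker would read its window from disk or cloud storage and run the actual analysis.

```python
from concurrent.futures import ThreadPoolExecutor


def tile_windows(width, height, tile_size):
    """Yield (col_off, row_off, win_width, win_height) windows covering a raster."""
    for row_off in range(0, height, tile_size):
        for col_off in range(0, width, tile_size):
            yield (
                col_off,
                row_off,
                min(tile_size, width - col_off),   # edge tiles may be narrower
                min(tile_size, height - row_off),  # or shorter than tile_size
            )


def pixel_count(window):
    """Stand-in for real per-tile work: just report how many pixels it holds."""
    _, _, win_width, win_height = window
    return win_width * win_height


# Hypothetical 5000 x 3000 pixel raster processed in 1024-pixel tiles.
with ThreadPoolExecutor(max_workers=4) as pool:
    total_pixels = sum(pool.map(pixel_count, tile_windows(5000, 3000, 1024)))
print(total_pixels)
```

Only one tile per worker needs to be in memory at a time, which is what makes rasters larger than RAM tractable.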

Q 14. What are some common file formats used in remote sensing and GIS?

Remote sensing and GIS employ a variety of file formats, each with its strengths and weaknesses. Some of the most common include:

- GeoTIFF (.tif, .tiff): A widely used raster format that supports georeferencing (geographic coordinates), making it ideal for storing and sharing spatially referenced imagery and elevation data. It is versatile and has various compression options for efficient storage.

- Erdas Imagine (.img): A proprietary raster format used by ERDAS IMAGINE software. It offers efficient storage and supports a wide range of data types.

- Shapefile (.shp, .shx, .dbf): A common vector format used to store geographic features like points, lines, and polygons. A shapefile is really a set of files: the .shp holds the geometry, the .shx is a positional index into it, and the .dbf holds the attributes, usually accompanied by a .prj file describing the coordinate system. Spatial indexes, where present, live in optional sidecar files.

- GeoJSON (.geojson): A text-based open standard (RFC 7946) for geographic data, suitable for web mapping and data exchange. It is human-readable and widely supported across GIS software.

- HDF (.hdf, .h5): Hierarchical Data Format is a flexible format that can store various data types, including raster and vector data, making it suitable for large, complex datasets.

The choice of file format depends on the application, data volume, compatibility requirements, and desired compression level. For instance, GeoTIFF is a good general-purpose format, while HDF is better suited for handling very large datasets, and Shapefiles are best for vector data.
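To show why GeoJSON is described as human-readable, here is a minimal sketch that builds and round-trips a one-feature collection with Python’s standard `json` module. The coordinates and property names are hypothetical; note that GeoJSON positions are ordered longitude first, then latitude.

```python
import json

feature_collection = {
    "type": "FeatureCollection",
    "features": [
        {
            "type": "Feature",
            # GeoJSON positions are [longitude, latitude] in WGS84.
            "geometry": {"type": "Point", "coordinates": [-60.0, -3.1]},
            "properties": {"name": "sample plot", "land_cover": "forest"},
        }
    ],
}

text = json.dumps(feature_collection, indent=2)  # what would be saved as .geojson
parsed = json.loads(text)                        # what a reader would get back
print(text)
```

Binary raster formats such as GeoTIFF or HDF cannot be inspected this way and need a dedicated reader.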

Q 15. Explain the concept of georeferencing.

Georeferencing is the process of assigning real-world coordinates (for example, latitude and longitude) to points in a remote sensing image. Think of it like putting a map grid onto a photograph so you know exactly which location on the Earth’s surface each pixel represents. This is crucial because raw imagery is just a collection of pixels; georeferencing gives it geographical context, allowing us to analyze it within a spatial framework and integrate it with other geographic data.

This is achieved using ground control points (GCPs). GCPs are points with known coordinates (obtained from surveys, maps, or GPS) that are identifiable in both the image and a reference dataset (like a map). Software then uses these points to transform the image coordinates into a coordinate reference system (such as geographic WGS84 or a projected UTM zone).

For example, imagine a satellite image of a forest. Without georeferencing, you only know the pixel location of a tree. After georeferencing, you know the precise latitude and longitude of that tree, allowing you to overlay it with other data like forest cover maps or elevation models.
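Once an image is georeferenced, the link between pixel and map coordinates is often stored as six affine coefficients. The sketch below assumes the GDAL-style ordering (origin x, pixel width, row rotation, origin y, column rotation, pixel height); the function names and the sample geotransform are hypothetical.

```python
import math


def pixel_to_world(geotransform, col, row):
    """Map the centre of pixel (col, row) to map coordinates (x, y)."""
    origin_x, pixel_w, row_rot, origin_y, col_rot, pixel_h = geotransform
    c, r = col + 0.5, row + 0.5  # shift from the pixel corner to its centre
    x = origin_x + c * pixel_w + r * row_rot
    y = origin_y + c * col_rot + r * pixel_h
    return x, y


def world_to_pixel(geotransform, x, y):
    """Inverse lookup, valid only for north-up images (no rotation terms)."""
    origin_x, pixel_w, row_rot, origin_y, col_rot, pixel_h = geotransform
    if row_rot != 0 or col_rot != 0:
        raise ValueError("this sketch only inverts unrotated geotransforms")
    return math.floor((x - origin_x) / pixel_w), math.floor((y - origin_y) / pixel_h)


# Hypothetical 10 m image in a UTM zone; pixel height is negative because
# row numbers increase southwards while northings increase northwards.
gt = (500000.0, 10.0, 0.0, 4600000.0, 0.0, -10.0)
tree_xy = pixel_to_world(gt, col=120, row=45)
print(tree_xy, world_to_pixel(gt, *tree_xy))
```

With this mapping in place, the tree’s pixel location can be overlaid on any other layer that shares the coordinate system.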

Q 16. How do you perform geometric correction of imagery?

Geometric correction addresses distortions in imagery caused by factors like sensor perspective, Earth’s curvature, and atmospheric refraction. These distortions can lead to inaccuracies in measurements and analysis. The goal is to create a geometrically accurate image that faithfully represents the Earth’s surface.

There are several methods, ranging from simple to complex:

- Polynomial Transformations: These use mathematical functions (like polynomials) to map image coordinates to geographic coordinates. They are suitable for images with relatively minor distortions.

- Rubber Sheeting/Spline Interpolation: This involves using GCPs to warp the image to fit a reference dataset. It’s more flexible than polynomial transformations and better handles larger distortions.

- Orthorectification: This is a sophisticated technique that corrects for both geometric and relief (elevation) distortions. It requires a Digital Elevation Model (DEM) to account for terrain variations and produces highly accurate, orthorectified imagery – essentially a perfectly “flattened” view.

The choice of method depends on the image quality, the magnitude of distortions, and the required accuracy. Software packages like ArcGIS, ENVI, and QGIS provide tools for performing these corrections. For instance, in ENVI, I would typically select the appropriate GCPs, define the coordinate system, and choose the transformation method before applying the correction.
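A first-order polynomial transformation is the simplest of these to write out. The sketch below fits x = a0 + a1·col + a2·row (and likewise for y) to a set of GCPs by least squares, solving the 3 × 3 normal equations with Cramer’s rule. It is an illustration under assumed names and sample GCPs, not the routine any particular package uses.

```python
def _det3(a):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (a[0][0] * (a[1][1] * a[2][2] - a[1][2] * a[2][1])
            - a[0][1] * (a[1][0] * a[2][2] - a[1][2] * a[2][0])
            + a[0][2] * (a[1][0] * a[2][1] - a[1][1] * a[2][0]))


def _solve3(matrix, rhs):
    """Solve a 3x3 linear system with Cramer's rule."""
    det = _det3(matrix)
    if abs(det) < 1e-12:
        raise ValueError("GCPs are collinear or fewer than three")
    return [
        _det3([row[:i] + [rhs[k]] + row[i + 1:] for k, row in enumerate(matrix)]) / det
        for i in range(3)
    ]


def fit_affine(gcps):
    """gcps: list of ((col, row), (x, y)). Returns (x_coeffs, y_coeffs)."""
    design = [[1.0, float(col), float(row)] for (col, row), _ in gcps]
    normal = [[sum(a[i] * a[j] for a in design) for j in range(3)] for i in range(3)]

    def coeffs(targets):
        rhs = [sum(a[i] * t for a, t in zip(design, targets)) for i in range(3)]
        return _solve3(normal, rhs)

    return coeffs([w[0] for _, w in gcps]), coeffs([w[1] for _, w in gcps])


def apply_affine(x_coeffs, y_coeffs, col, row):
    """Transform one image coordinate to map coordinates."""
    return (x_coeffs[0] + x_coeffs[1] * col + x_coeffs[2] * row,
            y_coeffs[0] + y_coeffs[1] * col + y_coeffs[2] * row)


# Hypothetical GCPs: image (col, row) paired with surveyed map (x, y).
gcps = [((0, 0), (500000.0, 4600000.0)), ((100, 0), (501000.0, 4600000.0)),
        ((0, 100), (500000.0, 4599000.0)), ((100, 100), (501000.0, 4599000.0))]
xc, yc = fit_affine(gcps)
print(apply_affine(xc, yc, 50, 50))
```

Using more GCPs than the three strictly required lets the residuals at each point reveal badly placed control points. Higher-order polynomials and rubber sheeting follow the same pattern with more terms.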

Q 17. What is the role of metadata in remote sensing data management?

Metadata is crucial for managing remote sensing data. Think of it as the descriptive information associated with the data – it’s the ‘data about the data’. It describes characteristics of the sensor, acquisition parameters, processing steps, and other relevant information needed to understand and interpret the imagery effectively.

It includes information like:

- Sensor type and specifications: Spectral bands, spatial resolution, radiometric resolution.

- Acquisition date and time: Crucial for understanding temporal changes.

- Geographic location: Coordinate system, projection.

- Processing history: Corrections applied, algorithms used.

Effective metadata management is vital for data discovery, reproducibility, and quality control. Without proper metadata, it’s nearly impossible to track the origin, processing steps, and reliability of remote sensing datasets – leading to potential errors in analysis and interpretation.

For example, metadata allows us to readily verify the accuracy of a particular dataset through its processing history. We can compare the metadata of different acquisitions to determine data consistency and choose appropriate datasets for change detection or other analyses.

Q 18. Describe your experience with different types of sensors (e.g., multispectral, hyperspectral).

My experience spans various sensor types, each offering unique capabilities. I’ve extensively worked with:

- Multispectral sensors: These capture data in several broad spectral bands (e.g., Landsat, Sentinel-2). I’ve used this data for vegetation monitoring, land cover classification, urban planning, and detecting water quality changes. The relatively lower cost and wider availability make multispectral imagery ideal for large-scale studies.

- Hyperspectral sensors: These record data in hundreds of narrow, contiguous spectral bands, providing detailed spectral information about surface materials. This level of detail is valuable for precise mineral identification, vegetation stress assessment, and pollution detection. However, hyperspectral data is often more computationally demanding and requires specialized processing techniques. For example, I used hyperspectral imagery to identify specific types of invasive plant species in a conservation project.

- LiDAR (Light Detection and Ranging): I’ve also worked with LiDAR data, which provides 3D information about the Earth’s surface. LiDAR is incredibly useful for creating Digital Elevation Models (DEMs), detecting changes in elevation, and analyzing canopy structure. This has been particularly useful for assessing the impact of natural disasters on terrain.

Each sensor type presents its own challenges and benefits. Choosing the appropriate sensor is crucial for a successful project, based on the specific research question and available resources.

Q 19. How do you address atmospheric effects in remote sensing data?

Atmospheric effects like scattering and absorption significantly alter the signal recorded by remote sensing sensors, affecting the accuracy of analysis. These effects are primarily caused by atmospheric particles (aerosols, gases) which interact with the electromagnetic radiation.

Several methods are employed to address atmospheric effects:

- Atmospheric Correction Models: These models use physical and empirical relationships to estimate and remove the atmospheric influence. Examples include dark object subtraction (DOS), empirical line methods, and more complex radiative transfer models (e.g., MODTRAN). These models often require ancillary data, such as atmospheric profiles.

- Empirical methods: Methods such as histogram matching and image normalization can improve the consistency between images and reduce atmospheric impacts.

- Using atmospheric correction software: Specialized software packages (like ATCOR or FLAASH) are frequently used to perform atmospheric corrections. They take into account sensor characteristics, atmospheric conditions, and other relevant parameters.

The choice of method depends on the sensor, atmospheric conditions, and the required accuracy. Failing to correct for atmospheric effects can lead to misinterpretations of land surface properties and inaccurate quantitative analysis.
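Dark object subtraction is the easiest of these to demonstrate. The sketch below assumes the darkest pixel in a band should have near-zero reflectance, so whatever value it holds is treated as atmospheric path radiance and removed from every pixel. Using the band minimum is a simplification (low percentiles are also common), and the function name and digital numbers are hypothetical.

```python
def dark_object_subtraction(band):
    """Subtract the band's darkest value from every pixel, clamping at zero."""
    dark_value = min(min(row) for row in band)
    return [[max(value - dark_value, 0) for value in row] for row in band]


# Hypothetical blue-band digital numbers; deep water should be near zero,
# so the offset of 12 is attributed to atmospheric scattering.
blue_band = [[12, 15, 40], [20, 12, 33]]
print(dark_object_subtraction(blue_band))
```

The method needs no ancillary atmospheric data, which is its main appeal, but it only corrects the additive haze component and not absorption.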

Q 20. What is your experience with data fusion techniques?

Data fusion combines data from different sources to create a more comprehensive and informative dataset than using individual sources alone. This improves the quality of information and allows for more robust analysis.

I have experience with several data fusion techniques:

- Image fusion: Combining multispectral and panchromatic imagery to enhance spatial resolution (pan-sharpening). I’ve used techniques like the Brovey transform and principal component analysis (PCA) for this purpose. The result is a high-resolution image that retains much of the spectral information from the multispectral data, although some spectral distortion is usually unavoidable.

- Sensor fusion: Combining data from different sensors (e.g., multispectral imagery and LiDAR data). This can integrate spectral information with 3D elevation data, useful for creating detailed land cover maps with topographic context.

- Data fusion using machine learning: I’ve used machine learning algorithms to integrate multiple datasets to improve classification accuracies and reduce uncertainties in land-cover or object detection.

Data fusion enhances analysis by allowing for the integration of complementary data to overcome individual limitations. The choice of technique is highly dependent on the specific application and data characteristics. For example, in a project mapping urban infrastructure, fusing LiDAR data with high-resolution imagery allowed for the identification and precise mapping of buildings, roads, and other urban features.
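The Brovey transform mentioned above is simple enough to sketch: each band is scaled by the ratio of the panchromatic value to the sum of the multispectral bands. The code assumes the three bands have already been resampled to the panchromatic grid; the function name and the pixel values are hypothetical.

```python
def brovey(red, green, blue, pan):
    """Pan-sharpen three co-registered bands; all inputs are equal-size 2-D grids."""
    out_red, out_green, out_blue = [], [], []
    for r_row, g_row, b_row, p_row in zip(red, green, blue, pan):
        new_r, new_g, new_b = [], [], []
        for r, g, b, p in zip(r_row, g_row, b_row, p_row):
            total = r + g + b
            scale = p / total if total else 0.0  # avoid dividing by zero
            new_r.append(r * scale)
            new_g.append(g * scale)
            new_b.append(b * scale)
        out_red.append(new_r)
        out_green.append(new_g)
        out_blue.append(new_b)
    return out_red, out_green, out_blue


# Hypothetical single-row example with two pixels.
sharp_r, sharp_g, sharp_b = brovey([[10, 30]], [[20, 30]], [[30, 30]], [[120, 45]])
print(sharp_r, sharp_g, sharp_b)
```

The band ratios are preserved at each pixel while the overall brightness follows the sharper panchromatic image, which is why the method keeps colour balance but can distort absolute radiometry.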

Q 21. Explain the concept of change detection in remote sensing.

Change detection involves analyzing two or more images acquired at different times to identify and quantify changes in the Earth’s surface. This is a fundamental technique for monitoring environmental dynamics, urban growth, deforestation, and other temporal processes.

Common change detection methods include:

- Image differencing: Subtracting pixel values of two images to highlight areas of difference. This is a straightforward method, but sensitive to noise and variations in illumination.

- Image ratioing: Dividing the pixel values of two images; useful for detecting subtle changes and less sensitive to illumination effects than differencing.

- Post-classification comparison: Classifying each image independently and then comparing the resulting land cover maps to identify changes. This is a more robust method but requires careful classification of both images.

- Change vector analysis (CVA): A method that uses the magnitude and direction of change vectors to detect and quantify changes.

In practice, change detection workflows involve image preprocessing (geometric correction, atmospheric correction), selecting an appropriate change detection method, and analyzing the results using GIS tools to delineate and quantify the detected changes. For example, I’ve used change detection to monitor deforestation in the Amazon using Landsat time-series data, identifying areas of forest loss and quantifying the rates of change over time.
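Image differencing and the follow-up area calculation can be sketched in a few lines. The example assumes two co-registered NDVI grids from different dates; the threshold of 0.3, the 30 m pixel size, and the function names are hypothetical choices for illustration.

```python
def change_mask(before, after, threshold):
    """True where the absolute difference between dates exceeds the threshold."""
    return [
        [abs(a - b) > threshold for b, a in zip(before_row, after_row)]
        for before_row, after_row in zip(before, after)
    ]


def changed_area_ha(mask, pixel_size_m):
    """Convert the number of flagged pixels to hectares (1 ha = 10,000 m^2)."""
    changed_pixels = sum(cell for row in mask for cell in row)
    return changed_pixels * pixel_size_m ** 2 / 10_000


# Hypothetical NDVI grids for the same area in two different years.
ndvi_before = [[0.8, 0.8], [0.7, 0.2]]
ndvi_after = [[0.8, 0.3], [0.2, 0.2]]
mask = change_mask(ndvi_before, ndvi_after, threshold=0.3)
print(mask, changed_area_ha(mask, pixel_size_m=30), "ha")
```

Choosing the threshold is the hard part in practice; it is usually tuned against reference data or derived from the statistics of the difference image.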

Q 22. How would you approach a project involving analyzing deforestation using satellite imagery?

Analyzing deforestation using satellite imagery involves a multi-step process. First, we need to select appropriate imagery. Ideally, we’d use a time series of freely available moderate-resolution images (e.g., Landsat at 30 m or Sentinel-2 at 10 to 20 m) to track changes over time. The spatial resolution is crucial; higher resolution allows for more accurate detection of smaller deforestation events.

Next, we perform pre-processing steps like atmospheric correction to remove the effects of the atmosphere on the image, and geometric correction to ensure accurate spatial registration. Then comes the core analysis: I typically employ change detection techniques. This could involve comparing images from different time periods using methods like image differencing, image ratioing, or more advanced algorithms like post-classification comparison.

For example, we might create a vegetation index, such as the Normalized Difference Vegetation Index (NDVI), for each image. A significant decrease in NDVI over time in a specific area strongly indicates deforestation. Further analysis might involve object-based image analysis (OBIA) to identify and classify individual forest patches and quantify the area of deforestation. Finally, the results are presented in maps and reports, often incorporating GIS tools for visualization and analysis.

A key consideration is the selection of appropriate classification algorithms. Supervised classification requires ground truth data for training, while unsupervised techniques explore the data without prior knowledge. The choice depends on the data availability and project requirements. Ultimately, rigorous quality control and validation are essential to ensure the accuracy and reliability of the results.

Q 23. Describe your experience with processing SAR (Synthetic Aperture Radar) data.

My experience with SAR data processing is extensive. I’m proficient in handling SAR products from missions such as Sentinel-1 and RADARSAT-2. My workflow usually begins with data pre-processing, which includes radiometric calibration to convert the recorded values to backscatter and correct for sensor-specific biases, and speckle filtering to reduce the granular noise characteristic of SAR imagery. Speckle filtering is crucial because speckle can significantly impact the subsequent analysis and interpretation. I’ve used various techniques such as Lee filtering and Frost filtering, selecting the filter based on the specific characteristics of the data and the desired level of detail preservation.

Further processing often involves geometric correction to align the data to a map projection and co-registration of multiple SAR images acquired at different times or from different sensors. Depending on the application, I might use interferometric SAR (InSAR) techniques to generate digital elevation models (DEMs) or measure ground deformation. For instance, I’ve successfully used InSAR to monitor land subsidence in urban areas and assess the impact of earthquakes. I also have experience in polarimetric SAR analysis, extracting information on the scattering properties of the Earth’s surface, which is particularly useful for differentiating different land cover types. My expertise extends to using various software packages, including SNAP, ENVI, and ArcGIS, for SAR data processing and analysis.

Q 24. What are the ethical considerations in using remote sensing data?

Ethical considerations in using remote sensing data are paramount. Privacy is a major concern, especially with high-resolution imagery that can reveal individuals or sensitive locations. We must ensure compliance with relevant privacy laws and regulations. For example, anonymization techniques might be necessary to protect the identity of individuals captured in the imagery. Data security and integrity are equally important. Remote sensing datasets are valuable assets that must be protected against unauthorized access or modification, so secure data storage and access control protocols are essential.

Another critical issue is responsible data use. Remote sensing data can be used for both beneficial and harmful purposes. It’s vital to consider the potential implications of our analysis and ensure that the data is used ethically and responsibly. For example, high-resolution imagery could be misused for military surveillance or to target specific groups. Therefore, careful consideration of the potential impacts of our research is a moral obligation. Finally, data transparency and accessibility are important. Making data and methodologies accessible to others promotes reproducibility and builds trust in the results. Open data initiatives are valuable in this regard.

Q 25. How do you stay updated with the latest advancements in remote sensing technology?

Staying current in the rapidly evolving field of remote sensing requires a multi-pronged approach. I regularly attend conferences and workshops – both in-person and virtual – to learn about the latest advancements in sensor technology, data processing techniques, and applications. I actively participate in online communities and forums, engaging with other professionals to exchange ideas and knowledge.

I subscribe to key journals and publications in the field, reading both peer-reviewed research and industry-specific publications to get a broad view of advances in hardware, software, and algorithms. Additionally, I regularly review new software and hardware releases to understand their capabilities and potential applications to my work. Online courses and webinars from reputable institutions are another valuable resource for upskilling. For example, I recently completed a course on deep learning techniques for image classification, which significantly improved my ability to process complex datasets.

Q 26. Describe a challenging project you worked on involving remote sensing and how you overcame the challenges.

One challenging project involved mapping mangrove forests in a remote coastal region using a combination of satellite imagery and drone-acquired data. The challenge lay in the dense canopy cover, which made it difficult to accurately assess the health and extent of the mangroves using satellite imagery alone. The cloud cover in the region also presented a significant hurdle, limiting the availability of cloud-free satellite images.

To overcome these challenges, we employed a multi-sensor approach. We used high-resolution satellite imagery to delineate the overall extent of the mangrove forest, complemented by drone imagery to obtain detailed information on canopy structure and health. We developed a novel image processing workflow incorporating advanced image fusion techniques to combine the data from multiple sources, improving the accuracy of the mangrove cover classification. This involved using a combination of object-based image analysis (OBIA) and machine learning algorithms to handle the complexity and variability of the mangrove forest.

The project also presented logistical challenges due to the remote location and limited infrastructure. We had to carefully plan the drone flights, considering weather conditions and potential risks. Despite these hurdles, we successfully completed the project, producing high-quality maps of the mangrove forests that provided valuable information for conservation efforts. This project highlighted the power of integrating different data sources and techniques to tackle complex remote sensing challenges.

Q 27. What are your salary expectations for this role?

My salary expectations for this role are in the range of $110,000 to $130,000 per year. This is based on my experience, skills, and the current market rate for similar positions. I am, however, flexible and open to discussing this further based on the comprehensive benefits package and the specific details of the role.

Q 28. Why are you interested in this position?

I’m highly interested in this position because it aligns perfectly with my passion for applying remote sensing and aerial imagery analysis to address real-world environmental challenges. The opportunity to contribute to [mention specific project or company goal, showing you’ve researched the company] is particularly exciting. Your team’s reputation for innovation and its commitment to [mention company values] strongly resonate with my professional values. Furthermore, the prospect of collaborating with a team of experienced professionals in a dynamic environment is extremely appealing. I am confident that my skills and experience will make a significant contribution to your team’s success.

Key Topics to Learn for Remote Sensing and Aerial Imagery Analysis Interviews

- Fundamentals of Remote Sensing: Understanding the electromagnetic spectrum, sensor types (e.g., multispectral, hyperspectral, LiDAR), and data acquisition principles.

- Image Preprocessing: Geometric correction, atmospheric correction, radiometric calibration, and orthorectification techniques. Practical application: Ensuring accurate measurements and analysis from raw imagery.

- Image Classification Techniques: Supervised and unsupervised classification methods (e.g., maximum likelihood, support vector machines, decision trees). Practical application: Extracting meaningful information like land cover types or identifying objects of interest.

- Object-Based Image Analysis (OBIA): Segmentation and classification of image objects, improving accuracy and efficiency in complex scenes. Practical application: Analyzing urban areas, identifying individual trees, or monitoring infrastructure.

- Change Detection: Methods for identifying and quantifying changes over time using multi-temporal imagery. Practical application: Monitoring deforestation, urban sprawl, or infrastructure damage.

- Data Analysis and Interpretation: Understanding statistical methods, spatial statistics, and creating insightful visualizations from remotely sensed data. Practical application: Communicating findings effectively to stakeholders.

- Specific Software Proficiency: Demonstrate expertise in relevant software packages like ArcGIS, QGIS, ENVI, Erdas Imagine, or other industry-standard tools.

- GIS Integration: Understanding how remote sensing data integrates with GIS for spatial analysis and mapping applications.

- Error Analysis and Uncertainty: Understanding and quantifying sources of error and uncertainty in remote sensing data and analysis.

Next Steps

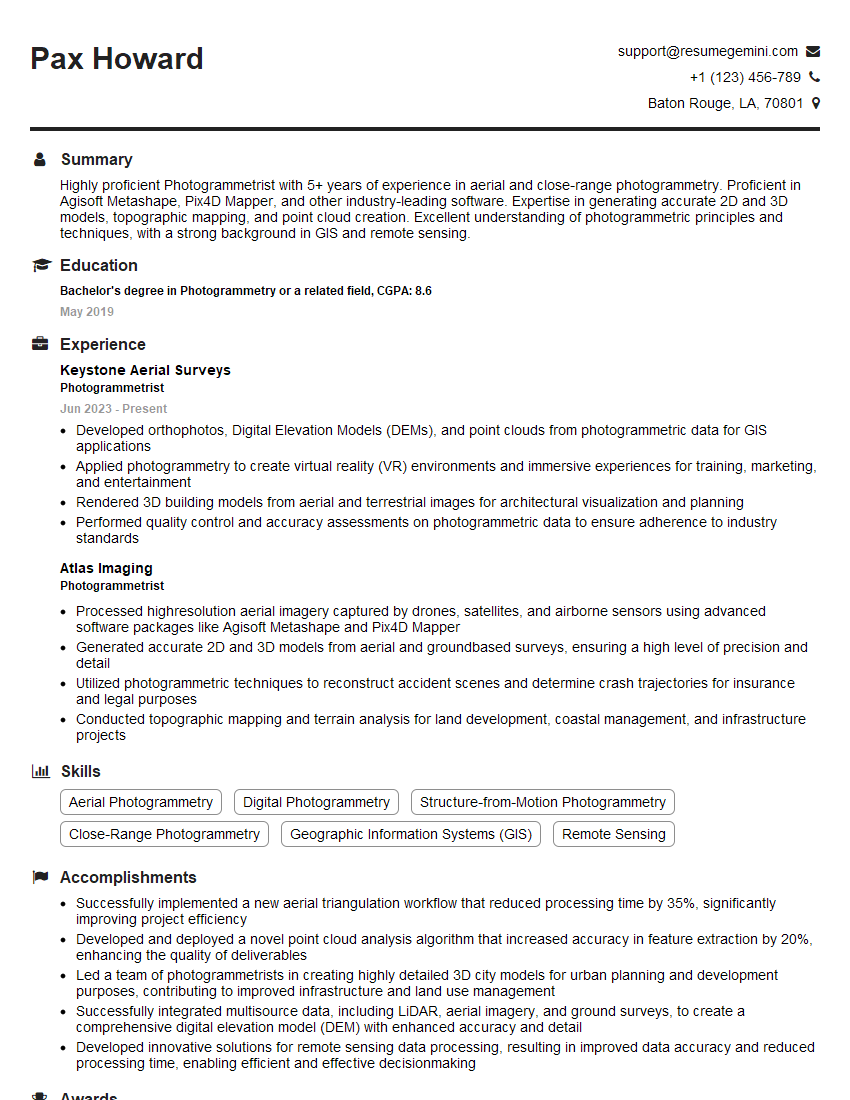

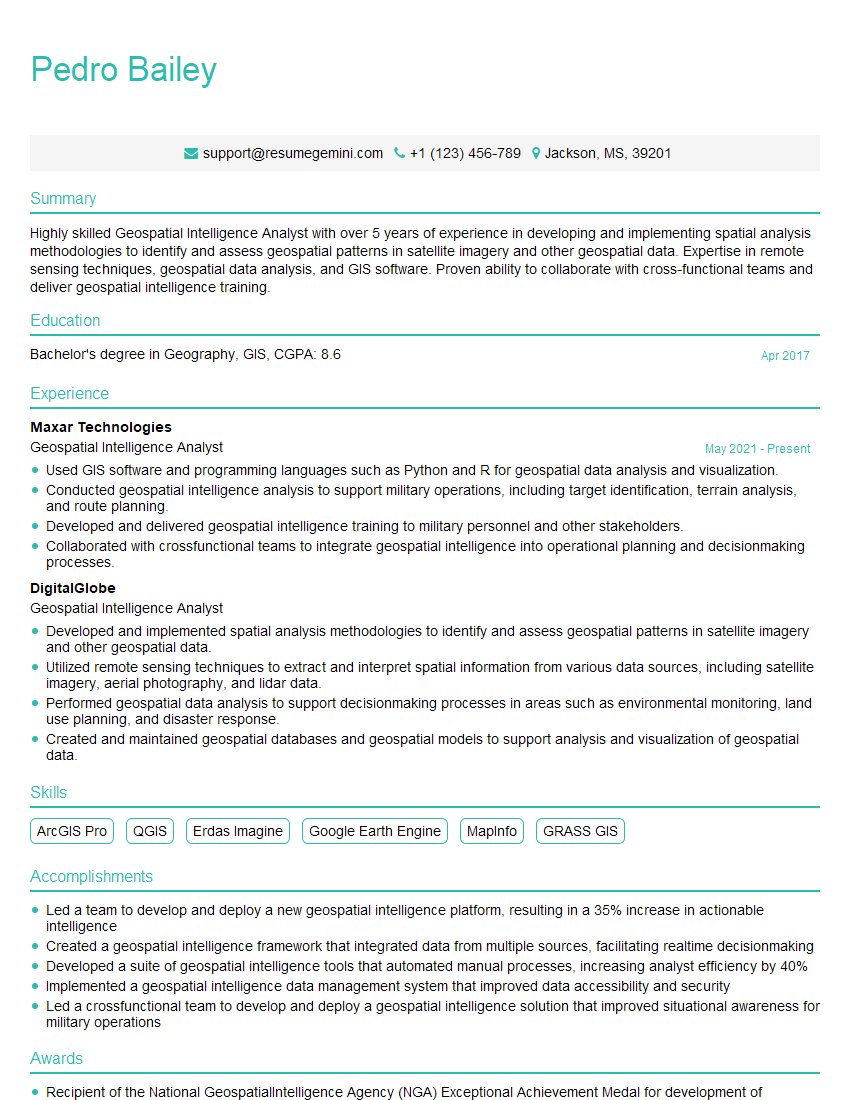

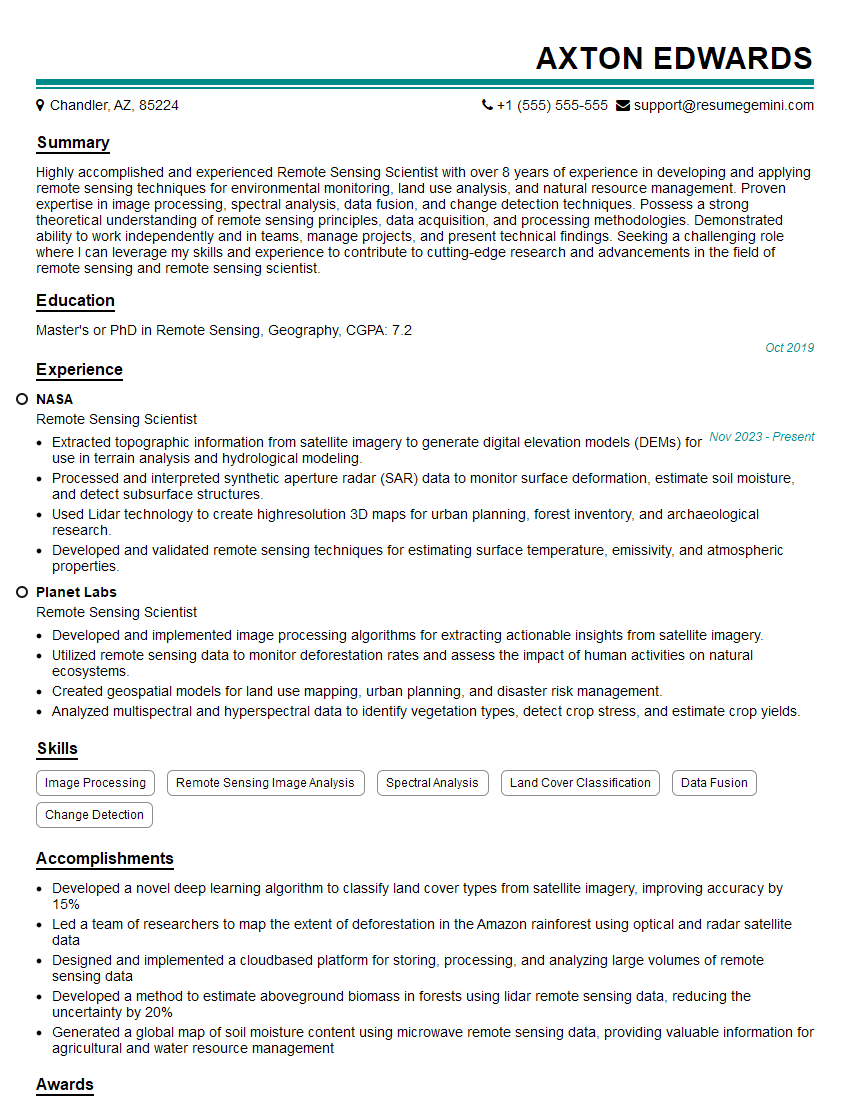

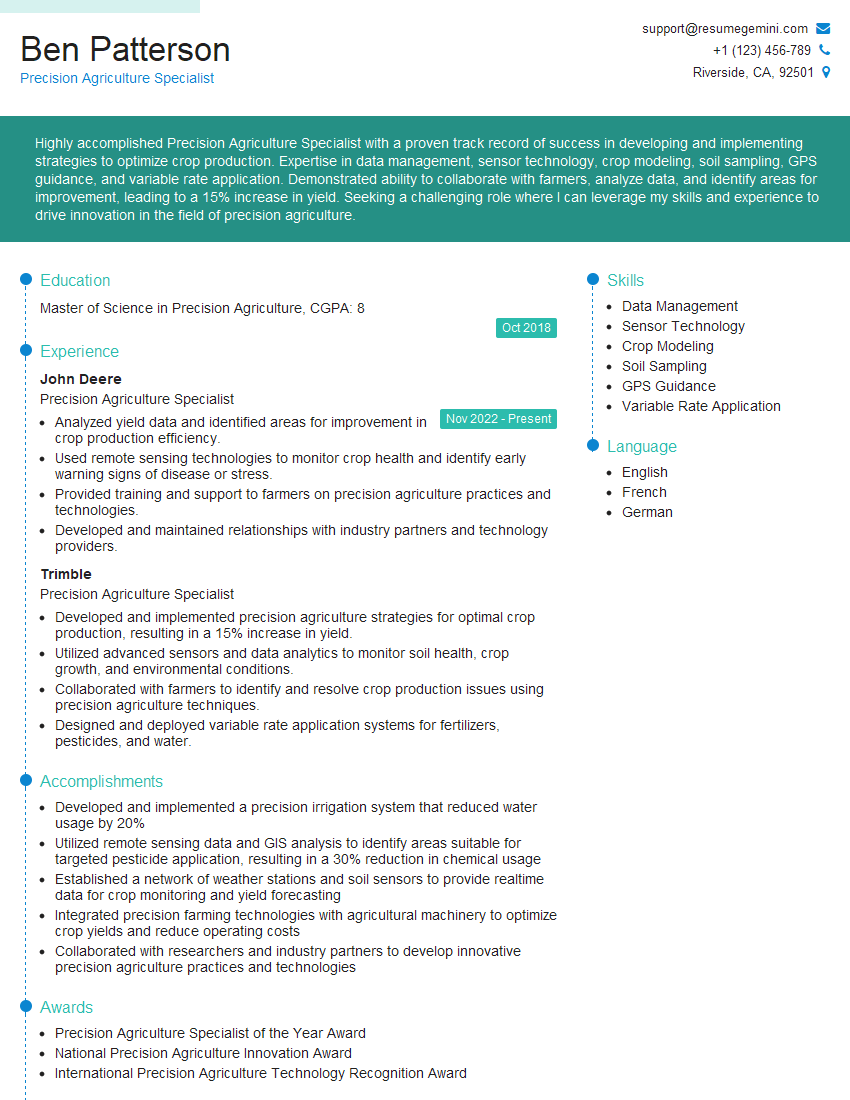

Mastering remote sensing and aerial imagery analysis significantly enhances your career prospects in fields like environmental monitoring, urban planning, agriculture, and natural resource management. These skills are highly sought after, leading to rewarding and impactful careers. To maximize your job search success, crafting a strong, ATS-friendly resume is crucial. ResumeGemini is a trusted resource to help you build a professional and effective resume that highlights your unique skills and experiences. ResumeGemini provides examples of resumes tailored to remote sensing and aerial imagery analysis roles to help guide you in crafting your own compelling application materials.
