Are you ready to stand out in your next interview? Understanding and preparing for GCP Big Data interview questions is a game-changer. In this blog, we’ve compiled key questions and expert advice to help you showcase your skills with confidence and precision. Let’s get started on your journey to acing the interview.

Questions Asked in GCP Big Data Interview

Q 1. Explain the differences between Data Lake and Data Warehouse in GCP.

In GCP, both Data Lake and Data Warehouse serve the purpose of storing and analyzing large datasets, but they differ significantly in their approach and use cases. Think of a Data Lake as a raw, unorganized repository of data in its native format – like a vast, uncategorized library. A Data Warehouse, on the other hand, is a structured, curated repository of data specifically organized for analytical processing – like a neatly organized archive with indexed files.

- Data Lake: Stores data in its raw format (structured, semi-structured, and unstructured) without pre-defined schemas. It’s ideal for exploratory data analysis, machine learning, and scenarios where you need to retain all data for potential future use. In GCP, Cloud Storage is often used as the foundation for a Data Lake.

- Data Warehouse: Stores data in a structured format, typically using a star schema or snowflake schema for efficient querying. Data undergoes transformation and cleaning before loading. It’s optimized for analytical queries and reporting, providing faster query performance than a Data Lake. BigQuery is a perfect example of a managed Data Warehouse service within GCP.

Example: Imagine a retail company. Their Data Lake might store all transaction data, website logs, customer surveys, and social media mentions in their original formats. Their Data Warehouse would then contain a curated subset of this data, transformed into a star schema with dimensions like customer demographics, product categories, and time, making it easier to run reports on sales trends.

Q 2. Describe your experience with BigQuery: schema design, query optimization, and data partitioning.

My experience with BigQuery encompasses designing efficient schemas, optimizing queries for performance, and leveraging data partitioning for scalability. I’ve worked extensively with both nested and repeated fields to model complex data structures effectively.

- Schema Design: I prioritize creating schemas that minimize data redundancy and maximize query efficiency. This involves careful consideration of data types, using nested and repeated fields where appropriate, and understanding the trade-offs between denormalization for query speed and normalization for data integrity. For instance, instead of having multiple tables for customer information, I might use nested fields within a single table for address, contact details etc.

- Query Optimization: I utilize BigQuery’s query profiling tools extensively to identify bottlenecks and optimize queries. Techniques I employ include using appropriate data types, filtering early with `WHERE` clauses, replacing exact `COUNT(DISTINCT ...)` with `APPROX_COUNT_DISTINCT` on large tables when an approximate answer is acceptable, utilizing partitions and clustering, and employing common table expressions (CTEs) to keep complex queries readable. For example, I’ll often analyze query execution plans to identify opportunities for improvement, such as filtering before a join or reordering joins.

- Data Partitioning: I routinely partition BigQuery tables on a date or timestamp column, on ingestion time, or on an integer range such as a numeric customer ID. Attributes like region, which cannot serve as a partitioning column, are better handled with clustering. Partitioning drastically improves query performance by enabling BigQuery to scan only the relevant partitions rather than the entire table. For instance, if I’m running a query for sales in a particular month, partitioning by date ensures that only the partitions for that month are scanned.

Example Query Optimization:

SELECT COUNT(DISTINCT customer_id) FROM `project.dataset.table` -- Exact, but expensive on large tables

SELECT APPROX_COUNT_DISTINCT(customer_id) FROM `project.dataset.table` -- Approximate, more efficient for large datasets
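To make the partition-pruning idea concrete, here is a minimal Python sketch. It is not BigQuery code: the `partitions` dictionary is a hypothetical in-memory stand-in for a date-partitioned table, and `scan` is an illustrative helper that skips partitions outside the requested range, which is roughly the effect a filter on the partitioning column has.

```python
from datetime import date

# Hypothetical stand-in for a date-partitioned table:
# each key is one daily partition, each value holds that day's rows.
partitions = {
    date(2024, 1, 30): [{"order_id": 1, "amount": 20.0}],
    date(2024, 1, 31): [{"order_id": 2, "amount": 35.5}],
    date(2024, 2, 1): [{"order_id": 3, "amount": 12.0}, {"order_id": 4, "amount": 8.0}],
    date(2024, 2, 2): [{"order_id": 5, "amount": 50.0}],
}


def scan(table, start, end):
    """Return (rows, partitions_read) for partitions with start <= day <= end.

    Partitions outside the range are skipped without touching their rows.
    """
    rows, partitions_read = [], 0
    for day, day_rows in table.items():
        if start <= day <= end:
            partitions_read += 1
            rows.extend(day_rows)
    return rows, partitions_read


february_rows, partitions_read = scan(partitions, date(2024, 2, 1), date(2024, 2, 29))
february_total = sum(row["amount"] for row in february_rows)
print(partitions_read, "of", len(partitions), "partitions read; total =", february_total)
```

Only the two February partitions are read here, so the January rows never contribute to the work done.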

Q 3. How do you handle data security and access control in GCP Big Data projects?

Data security and access control are paramount in GCP Big Data projects. I employ a multi-layered approach incorporating Identity and Access Management (IAM), encryption, and data loss prevention (DLP) measures.

- IAM: I meticulously define roles and permissions using IAM, granting only the necessary access to specific users, groups, or service accounts. This ensures that only authorized personnel can access sensitive data, adhering to the principle of least privilege. For example, data analysts might have read-only access while data engineers have read/write access.

- Encryption: Google Cloud encrypts data at rest by default in both Cloud Storage and BigQuery, with customer-managed encryption keys (CMEK) available when the organization needs to control the keys. Data in transit is secured using TLS (HTTPS).

- Data Loss Prevention (DLP): I leverage DLP APIs to detect and prevent sensitive data, such as personally identifiable information (PII), from being leaked. This helps maintain compliance with data privacy regulations. Example: setting DLP rules to automatically redact credit card numbers or social security numbers from logs before storage.

- Virtual Private Cloud (VPC) Service Controls: I often employ VPC Service Controls to establish stricter boundaries around access to BigQuery datasets based on organizational requirements.

By combining these methods, we create a robust security posture safeguarding our Big Data assets.
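The redaction example from the DLP point can be illustrated with a small local sketch. This is an assumption-laden stand-in, not the DLP API: the two regular expressions and the `redact` helper are hypothetical and far simpler than a real detector, which would also validate checksums and surrounding context.

```python
import re

# Simplified patterns for illustration only.
# CARD_PATTERN matches 16 digits in groups of four, optionally separated
# by spaces or hyphens; SSN_PATTERN matches the 3-2-4 digit layout.
CARD_PATTERN = re.compile(r"\b\d{4}[ -]?\d{4}[ -]?\d{4}[ -]?\d{4}\b")
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(line: str) -> str:
    """Replace card-like and SSN-like substrings before the line is stored."""
    line = CARD_PATTERN.sub("[REDACTED_CARD]", line)
    return SSN_PATTERN.sub("[REDACTED_SSN]", line)


log_line = "user=42 card=4111 1111 1111 1111 ssn=123-45-6789 status=ok"
print(redact(log_line))
```

In a pipeline, a step like this would run before logs are written to storage, so the raw values never land in the bucket.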

Q 4. Compare and contrast Dataflow, Dataproc, and Dataplex.

Dataflow, Dataproc, and Dataplex are all powerful GCP services for Big Data processing, but they cater to different needs:

- Dataflow: A fully managed, serverless, stream and batch data processing service. It’s ideal for building complex, scalable data pipelines that need to handle high volumes of data in real-time or near real-time. Think of it as a sophisticated assembly line for data transformations.

- Dataproc: A managed Hadoop and Spark service that provisions and operates the clusters your jobs run on. It’s suited for batch processing, ETL jobs, and other computationally intensive tasks, especially when you already have Hadoop or Spark code. Imagine it as a powerful data processing factory with customized machinery.

- Dataplex: A unified data lake service that simplifies data discovery, organization, and governance across diverse data sources. It acts as a central hub and management system for your Data Lake. It’s like a central control room for all your data resources.

Key Differences: Dataflow excels in stream processing and scalability; Dataproc is best for batch processing and custom Hadoop/Spark jobs; Dataplex focuses on managing and organizing data within a lake environment, making it easily accessible and governable.

Example: You might use Dataflow for real-time fraud detection, Dataproc for running a nightly ETL job to process large datasets, and Dataplex to manage the metadata and govern access to the underlying data lake.

Q 5. What are the different storage options in GCP for Big Data, and when would you choose each?

GCP provides several storage options for Big Data, each with its strengths and weaknesses:

- Cloud Storage: Object storage for unstructured data like images, videos, and logs. It’s cost-effective and highly scalable. It forms the basis for many Data Lakes.

- BigQuery Storage: Optimized for querying large datasets with BigQuery. Data is stored in columnar format for faster query performance. Ideal as the primary storage for a Data Warehouse.

- Cloud SQL: Relational database service for structured data. Suitable for transactional workloads and applications requiring ACID properties.

- Cloud Spanner: Globally distributed, scalable, and highly available relational database service. Ideal for applications requiring strong consistency and global reach.

Choosing the Right Storage: The choice depends on the data type, access patterns, and performance requirements. Cloud Storage is best for raw, unstructured data and Data Lakes. BigQuery Storage is best for analytical queries and Data Warehouses. Cloud SQL is ideal for transactional data and applications requiring ACID compliance. Cloud Spanner is suitable for global scale and strong consistency.

Q 6. Explain your experience with building and managing data pipelines in GCP.

My experience building and managing data pipelines in GCP centers on combining services into robust, scalable, and reliable solutions, choosing each service according to the pipeline’s specific requirements.

Typical Pipeline Components:

- Ingestion: Cloud Storage Transfer Service, Pub/Sub

- Processing: Dataflow, Dataproc, Cloud Functions

- Transformation: Dataflow (Apache Beam), Dataproc (Spark, Hadoop), Cloud Data Fusion

- Loading: BigQuery, Cloud SQL, Cloud Storage

- Monitoring: Cloud Monitoring, Cloud Logging (formerly Stackdriver Logging)

Example: A typical ETL pipeline I’ve built involved ingesting data from various sources (databases, CSV files, cloud storage) using Cloud Storage Transfer Service and Pub/Sub, transforming the data using Dataflow (Apache Beam) to clean, standardize, and aggregate the data, and loading the transformed data into BigQuery for analytical processing. I leverage Cloud Composer for scheduling the pipeline execution and Cloud Monitoring for monitoring pipeline performance and identifying any issues.

I always focus on modularity, reusability, and error handling to ensure the robustness and maintainability of the pipelines.
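As a rough illustration of the modularity and error handling mentioned above, the sketch below chains three small, reusable steps in plain Python. It is not Apache Beam code; the function names, field names, and sample records are all hypothetical, and a real pipeline would route rejected records to a dead-letter sink rather than drop them.

```python
from collections import defaultdict


def clean(records):
    """Drop records that lack a region or a parseable amount."""
    for record in records:
        try:
            amount = float(record["amount"])
        except (KeyError, TypeError, ValueError):
            continue  # a real pipeline would send these to a dead-letter sink
        if record.get("region"):
            yield {"region": record["region"], "amount": amount}


def standardize(records):
    """Normalize region codes so equivalent values aggregate together."""
    for record in records:
        yield {**record, "region": record["region"].strip().upper()}


def aggregate(records):
    """Sum amounts per region."""
    totals = defaultdict(float)
    for record in records:
        totals[record["region"]] += record["amount"]
    return dict(totals)


raw = [
    {"region": " emea ", "amount": "10.5"},
    {"region": "EMEA", "amount": 4.5},
    {"region": "apac", "amount": "n/a"},  # unparseable amount, dropped
    {"region": "", "amount": "3"},        # missing region, dropped
    {"region": "apac", "amount": "7"},
]
result = aggregate(standardize(clean(raw)))
print(result)
```

Because each step takes and returns an iterable of records, steps can be tested in isolation and reused across pipelines.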

Q 7. How do you monitor and troubleshoot performance issues in a BigQuery dataset?

Monitoring and troubleshooting BigQuery performance issues involves a multi-pronged approach.

- BigQuery Query Profiler: This tool provides detailed information on the execution plan of your queries, including the time spent on various stages, data scanned, and the overall query performance. By analyzing the profiler’s output, you can pinpoint bottlenecks and optimization opportunities.

- Query Execution Details: Using the query execution details, you can understand the impact of different query parameters and optimizations. This helps in fine-tuning queries for better performance.

- BigQuery Reservation and Slots: Analyzing the usage patterns of reservations and slots can reveal whether resource contention is affecting query performance. If queries routinely time out or queue for slots, you need to evaluate whether you have sufficient capacity.

- Data Modeling and Schema Design Review: Inefficient schema design can significantly impact query performance. Reviewing your tables’ schemas, partitions, and clustering helps identify areas for improvement. Consider whether your chosen schema is appropriate for the types of queries run against the data.

- Dataset Location and Network Latency: BigQuery’s performance can be affected by the location of your dataset and the network latency between your application and BigQuery. Choosing an optimal location for your data and minimizing network latency will help improve the performance.

By systematically investigating these areas, you can effectively diagnose and resolve performance issues in your BigQuery datasets.

Q 8. Describe your experience with schema evolution in BigQuery.

Schema evolution in BigQuery refers to the ability to modify the structure of your tables after they’ve been created, without having to recreate the entire dataset. This is crucial for handling evolving data requirements in real-world projects. Imagine you’re tracking customer data, and initially, you only collect name and email. Later, you decide to add a phone number field. Schema evolution lets you add this field without losing existing data or requiring a massive data migration.

BigQuery handles this elegantly using features like:

- Adding columns: This is the simplest form, where you add new columns to an existing table. BigQuery automatically populates the new column with `NULL` values for existing rows.

- Modifying data types: You can change the data type of an existing column, subject to compatibility rules. Widening conversions such as `INT64` to `NUMERIC` are generally allowed in place, while other changes (for example, `STRING` to `INT64`) require rewriting the data with an explicit cast.

- Altering column names: Renaming columns is straightforward, though views and queries that reference the old name must be updated.

However, you should be mindful of potential issues. For example, changing data types can lead to data loss if the new type cannot accommodate existing values. Always test thoroughly and back up your data (for example, with a table snapshot) before performing schema changes. Comparing the table before and after the change and testing downstream queries are crucial steps in a robust schema evolution strategy. We use these approaches to minimize disruption and protect data integrity.
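The two ideas above, nullable columns for existing rows and testing before a type change, can be sketched in plain Python. This only mimics the behavior on in-memory rows; the helper names and the `loyalty_tier` column are hypothetical and nothing here calls BigQuery.

```python
def add_nullable_column(rows, column):
    """Mimic adding a nullable column: existing rows read the new field as None."""
    return [{**row, column: row.get(column)} for row in rows]


def values_blocking_int_cast(rows, column):
    """Return the values in `column` that would not survive a cast to int."""
    blocking = []
    for row in rows:
        value = row[column]
        if value is None:
            continue  # missing values stay missing under any type
        try:
            int(value)
        except (TypeError, ValueError):
            blocking.append(value)
    return blocking


customers = [
    {"name": "Ada", "email": "ada@example.com", "loyalty_tier": "2"},
    {"name": "Lin", "email": "lin@example.com", "loyalty_tier": "gold"},
]

evolved = add_nullable_column(customers, "phone")
print(evolved[0]["phone"])
print(values_blocking_int_cast(customers, "loyalty_tier"))
```

Running a check like `values_blocking_int_cast` against a copy of the data first shows which rows would be lost or rejected before the real change is attempted.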

Q 9. Explain your approach to designing a scalable and cost-effective Big Data solution on GCP.

Designing a scalable and cost-effective Big Data solution on GCP involves a holistic approach, focusing on several key areas. It’s like building a house – you need a strong foundation, well-designed rooms, and efficient utilities.

1. Data Storage: Choosing the right storage solution is paramount. Cloud Storage is excellent for raw data, while BigQuery is ideal for analytical workloads. Consider using partitioned and clustered tables in BigQuery to improve query performance and reduce the amount of data scanned, which lowers query costs. For ingesting streaming data, Pub/Sub provides a robust, scalable messaging system.

2. Data Processing: Dataflow is a powerful choice for batch and streaming data processing. Its serverless nature ensures scalability while optimizing costs based on actual usage. For more specialized tasks, consider Dataproc for Hadoop/Spark jobs, but ensure careful resource allocation to avoid unnecessary expense.

3. Data Orchestration: Cloud Composer (Apache Airflow) helps manage and schedule complex data pipelines. This ensures that your data flows efficiently and reliably, preventing bottlenecks and reducing operational costs.

4. Monitoring and Optimization: Regularly monitor your solution’s performance using Cloud Monitoring and Cloud Logging. Identify bottlenecks and optimize your queries and pipelines to reduce costs and improve efficiency. BigQuery’s query optimization features and Dataflow’s automatic scaling are critical to this.

5. Cost Management: Utilize GCP’s various cost optimization tools, such as committed use discounts and sustained use discounts, to get better pricing. Proper resource allocation and right-sizing instances are essential. Leveraging free-tier services where appropriate also helps manage expenditure.

In practice, I start by defining clear objectives and key performance indicators (KPIs). Then, I select the optimal services based on data volume, velocity, and variety. The overall strategy should be data-driven and focus on a balance between performance and cost-effectiveness.

Q 10. What are the various pricing models for GCP Big Data services?

GCP’s Big Data services use various pricing models, often a combination of pay-as-you-go and committed use discounts. Let’s break down some key services:

- BigQuery: Charges separately for storage and for compute. Compute is billed either on demand (by the bytes each query processes) or through capacity pricing (reserved slots), where longer-term slot commitments bring significant savings.

- Cloud Storage: Charges for storage used, data retrieval, and egress. Different storage classes (standard, nearline, coldline, archive) offer varying costs and access speeds.

- Dataflow: A serverless service, billed for the resources its workers consume (vCPU, memory, and storage) while a job runs. Automatic scaling helps ensure you pay only for what you use.

- Dataproc: Charges a Dataproc fee per vCPU on top of the underlying Compute Engine virtual machines and disks; preemptible (Spot) instances can be used for cost savings. The cost depends on the number and type of machines and on how long the cluster runs.

- Cloud Composer: Billed based on the size of your environment (the compute, memory, and storage allocated to it) and the time it is active. Because an environment runs continuously, right-sizing it is the main cost lever.

Understanding these pricing models is critical to building cost-effective solutions. I always analyze the project’s needs, including data volume, processing requirements, and expected usage patterns, to select the most cost-effective pricing model.

Q 11. How do you ensure data quality in your GCP Big Data projects?

Ensuring data quality is paramount in any Big Data project. It’s like building a house with strong materials – if the foundation is weak, the entire structure is at risk. My approach involves several key strategies:

- Data Validation at the Source: Implement data validation at the point of ingestion. This involves checks for data types, ranges, and consistency.

- Data Profiling: Regularly profile the data to understand its characteristics, identify anomalies, and detect potential quality issues. Profiling queries in BigQuery (null counts, distinct counts, and minimum and maximum values per column) and Dataplex data profiling scans are helpful here.

- Data Cleansing and Transformation: Employ data cleansing techniques to handle missing values, outliers, and inconsistencies. This often involves using Dataflow or other ETL tools.

- Data Governance: Establish clear data governance policies, including data quality standards, roles and responsibilities, and processes for handling data quality issues. This often includes documentation and data dictionaries.

- Automated Quality Checks: Implement automated data quality checks as part of your data pipelines. These checks can be integrated into your data ingestion and processing workflows using tools like Dataflow.

- Monitoring and Alerting: Set up monitoring and alerting systems to track data quality metrics and alert you to potential problems. GCP’s monitoring and logging services are essential here.

In practice, I often use a combination of these strategies, tailoring the approach to the specific requirements of each project. For instance, creating custom data quality checks using SQL in BigQuery allows for targeted monitoring of critical data fields.
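An automated quality check of the kind described above can be sketched as follows. The rules, field names, allowed currencies, and the range limit are all hypothetical; the point is only the shape: per-record rule checks plus a batch-level failure rate that a pipeline could alert on.

```python
ALLOWED_CURRENCIES = {"USD", "EUR", "GBP"}  # illustrative assumption


def check_record(record):
    """Return a list of rule violations for one record (empty list means clean)."""
    problems = []
    if not isinstance(record.get("order_id"), int):
        problems.append("order_id must be an integer")
    amount = record.get("amount")
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        problems.append("amount must be numeric")
    elif not 0 <= amount <= 10_000:
        problems.append("amount out of range")
    if record.get("currency") not in ALLOWED_CURRENCIES:
        problems.append("unknown currency")
    return problems


def failure_rate(records):
    """Share of records with at least one violation (0.0 for an empty batch)."""
    if not records:
        return 0.0
    failed = sum(1 for record in records if check_record(record))
    return failed / len(records)


batch = [
    {"order_id": 1, "amount": 25.0, "currency": "USD"},
    {"order_id": 2, "amount": -5, "currency": "EUR"},
    {"order_id": "3", "amount": 12.0, "currency": "JPY"},
    {"order_id": 4, "amount": 99.9, "currency": "GBP"},
]
rate = failure_rate(batch)
print(rate)
```

A pipeline step could compare `rate` against an agreed threshold and fail the run or raise an alert when it is exceeded.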

Q 12. Discuss your experience with different data formats (Avro, Parquet, ORC).

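Avro, Parquet, and ORC are all widely used file formats for Big Data, but they differ in layout: Avro is row-oriented, while Parquet and ORC are columnar and optimized for analytical processing. Choosing the right one depends on the specific needs of your project.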

- Avro: A row-oriented format that stores its schema alongside the data. It offers excellent schema evolution capabilities, making it suitable for data that changes over time. It’s often chosen for its flexibility and cross-language support.

- Parquet: A columnar storage format optimized for analytical queries. Its columnar structure allows efficient data retrieval, significantly improving query performance compared to row-oriented formats. It’s widely adopted due to its excellent compression and performance in Big Data environments.

- ORC (Optimized Row Columnar): Similar to Parquet, ORC is a columnar format offering good compression and query performance. It often provides a good balance between compression ratio and query performance.

The best choice depends on factors like data size, query patterns, and schema evolution requirements. Parquet is often preferred for its widespread adoption and generally excellent performance in BigQuery. Avro’s schema evolution is a key advantage in projects expecting frequent schema changes. ORC is a solid alternative if you need a balance of performance and compression.
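The difference between row-oriented and columnar layouts can be shown with a toy model. This does not read or write Avro, Parquet, or ORC; the records and helper names are hypothetical, and "values read" is a simplified proxy for I/O.

```python
records = [
    {"id": 1, "country": "DE", "amount": 10.0},
    {"id": 2, "country": "FR", "amount": 12.5},
    {"id": 3, "country": "DE", "amount": 7.5},
]


def to_columnar(rows):
    """Pivot a list of row dicts into one list per column."""
    return {name: [row[name] for row in rows] for name in rows[0]}


def sum_amount_row_layout(rows):
    """Row layout: every field of every row is read to reach `amount`."""
    values_read = sum(len(row) for row in rows)
    return sum(row["amount"] for row in rows), values_read


def sum_amount_columnar(columns):
    """Columnar layout: only the `amount` column is read."""
    amounts = columns["amount"]
    return sum(amounts), len(amounts)


columns = to_columnar(records)
print(sum_amount_row_layout(records))
print(sum_amount_columnar(columns))
```

Both calls return the same total, but the columnar version touches a third of the values, which is why analytical queries over a few columns favor Parquet or ORC.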

Q 13. Describe your experience with different data ingestion methods into BigQuery.

BigQuery supports various data ingestion methods, catering to diverse data sources and volumes.

- BigQuery Storage Write API: A high-throughput API that supports both streaming and batch-style writes. It is the recommended path for new high-volume ingestion workloads.

- Streaming Inserts: The legacy streaming API (`tabledata.insertAll`) for real-time ingestion. It lets you insert rows into BigQuery continuously.

- Bulk Loads: Used for loading large datasets from various sources like Cloud Storage (CSV, JSON, Avro, Parquet, ORC). It’s efficient for loading batch data.

- Data Transfer Service: A fully managed service that simplifies the transfer of data from various on-premises or cloud-based data sources to BigQuery.

- Third-party tools: Many ETL (Extract, Transform, Load) tools integrate seamlessly with BigQuery, providing a robust solution for complex data ingestion pipelines.

Choosing the right method depends on factors such as data volume, velocity, and the source of your data. For example, streaming inserts are perfect for real-time analytics dashboards, while bulk loads are more suitable for batch processing of large datasets. I often use a combination of methods, optimizing based on the data flow and overall system architecture.

Q 14. Explain your understanding of data lineage and how to track it in GCP.

Data lineage refers to the complete history of a data asset, tracing its origin, transformations, and usage. It’s like a data family tree, showing how data is created, processed, and used throughout its lifecycle. In GCP, tracking data lineage is critical for data governance, compliance, and troubleshooting.

Several methods help track data lineage in GCP:

- Data Catalog and Dataplex data lineage: Data Catalog provides metadata management, and the data lineage feature in Dataplex, once its API is enabled, automatically records lineage for many BigQuery operations.

- Custom Lineage Tracking: Develop custom solutions using tools like Cloud Logging or Cloud Monitoring to capture and store lineage information from your data pipelines. This provides more granular control.

- Third-party Tools: Several third-party data lineage tools integrate with GCP services, providing comprehensive lineage tracking and visualization.

- Dataflow and Dataproc metadata: These services provide metadata about job execution, including input and output datasets, which can be leveraged for lineage tracking.

A robust data lineage strategy is essential for debugging, compliance auditing, and understanding how data flows through your organization. I typically incorporate data lineage tracking into my pipelines from the outset, using a combination of automated tracking via Cloud Data Catalog and custom logging where needed to create a complete and auditable data lineage history.
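For the custom tracking option, a minimal sketch of what a pipeline might record is shown below. The `LineageGraph` class, job names, and asset names are hypothetical; a real implementation would persist these edges (for example, as structured log entries) instead of keeping them in memory.

```python
from collections import defaultdict


class LineageGraph:
    """Minimal in-memory lineage store: which inputs produced which output."""

    def __init__(self):
        self._inputs = defaultdict(set)  # output asset -> direct input assets
        self._jobs = {}                  # output asset -> job that wrote it

    def record(self, job, inputs, output):
        """Log one pipeline step, e.g. from a job's completion hook."""
        self._inputs[output].update(inputs)
        self._jobs[output] = job

    def producer(self, asset):
        """Return the job that last wrote `asset`, or None if unknown."""
        return self._jobs.get(asset)

    def upstream(self, asset):
        """Return every asset that `asset` depends on, directly or indirectly."""
        seen, stack = set(), [asset]
        while stack:
            current = stack.pop()
            for parent in self._inputs.get(current, ()):
                if parent not in seen:
                    seen.add(parent)
                    stack.append(parent)
        return seen


lineage = LineageGraph()
lineage.record("ingest_orders", ["gs://raw-bucket/orders.csv"], "staging.orders")
lineage.record("ingest_customers", ["gs://raw-bucket/customers.csv"], "staging.customers")
lineage.record("build_sales", ["staging.orders", "staging.customers"], "analytics.sales")
print(sorted(lineage.upstream("analytics.sales")))
```

Walking the graph upstream answers the audit question "where did this table come from?"; the same edges walked downstream would answer "what breaks if this source changes?".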

Q 15. How do you handle data anomalies and outliers in your Big Data analysis?

Handling data anomalies and outliers is crucial for accurate Big Data analysis. Think of it like cleaning your house before a party – you wouldn’t want a pile of dirty laundry ruining the atmosphere! My approach involves a multi-step process:

- Detection: I employ statistical methods like Z-score or IQR (Interquartile Range) to identify data points significantly deviating from the norm. Visualization techniques like box plots are also helpful in spotting outliers. For example, a Z-score above 3 or below -3 could indicate an outlier.

- Investigation: Once detected, I investigate the root cause. Is it a genuine anomaly (e.g., a sensor malfunction), or a data entry error? Understanding the context is key. I might query related datasets or consult domain experts to determine the validity of the outlier.

- Treatment: The best treatment depends on the root cause and the impact of the outlier. Options include:

- Removal: If the outlier is due to an error, it can be safely removed.

- Transformation: Techniques like winsorizing (capping outliers at a certain percentile) or log transformation can mitigate their influence.

- Imputation: If removal isn’t ideal, I may replace the outlier with a more representative value, such as the median or mean of the remaining data points.

- Modeling: Some machine learning algorithms are robust to outliers (e.g., Random Forest), and might not require any pre-processing.

- Validation: After applying the chosen treatment, I re-run my analysis and compare the results to the original data to ensure the process hasn’t inadvertently skewed the findings.

For instance, in a project analyzing e-commerce data, I discovered unusually high order values. Investigation revealed a pricing error. After correcting the error, the analysis yielded more reliable insights into customer buying behavior.
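The detection and treatment steps can be sketched with the standard library. This example uses the IQR rule with the conventional multiplier of 1.5 and winsorizes by capping at the IQR fences; the order values are made up, and the choice of rule and multiplier is an assumption rather than the method used in the project described above.

```python
import statistics


def iqr_bounds(values, k=1.5):
    """Return (low, high) fences: Q1 - k*IQR and Q3 + k*IQR."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def find_outliers(values, k=1.5):
    """Detection: values that fall outside the IQR fences."""
    low, high = iqr_bounds(values, k)
    return [v for v in values if v < low or v > high]


def winsorize(values, k=1.5):
    """Treatment: cap values at the fences instead of removing them."""
    low, high = iqr_bounds(values, k)
    return [min(max(v, low), high) for v in values]


order_values = [20, 22, 25, 24, 23, 21, 26, 950]

outliers = find_outliers(order_values)
capped = winsorize(order_values)
print(outliers)
print(statistics.mean(order_values), statistics.mean(capped))
```

Comparing the mean before and after treatment is a simple form of the validation step: it shows how much a single extreme value was distorting the summary.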

Q 16. What are your experiences with serverless technologies in GCP for Big Data?

Serverless technologies in GCP, like Cloud Functions and Cloud Run, are game-changers for Big Data. They eliminate the headache of managing servers, allowing me to focus on the data analysis itself. Think of it as having a team of on-demand chefs, instead of managing a whole restaurant kitchen.

I’ve used Cloud Functions extensively for processing small, event-driven tasks within larger pipelines. For example, I’ve used them for triggering data validation checks whenever new data lands in Cloud Storage. This ensures data quality before it enters the main processing pipeline.

Cloud Run has been invaluable for deploying and scaling microservices for more complex data processing needs. I can easily scale the number of instances based on demand, ensuring my application handles peak loads without performance issues. For example, I deployed a microservice on Cloud Run that performed sentiment analysis on large volumes of social media text data. The auto-scaling capabilities of Cloud Run ensured consistent performance even during periods of high traffic.

Q 17. Describe your experience with using Cloud Composer for managing Airflow workflows.

Cloud Composer, GCP’s managed Apache Airflow service, is my go-to for orchestrating complex Big Data workflows. It’s like having a highly skilled project manager for my data pipelines. It handles scheduling, monitoring, and managing the entire process from data ingestion to final analysis.

My experience encompasses building and managing DAGs (Directed Acyclic Graphs) for various purposes:

- ETL (Extract, Transform, Load): I’ve built DAGs to extract data from various sources (databases, APIs, cloud storage), transform it using Spark or Dataflow, and load it into BigQuery or other data warehouses.

- Data Quality Monitoring: I’ve created DAGs to automate data quality checks, ensuring accuracy and consistency throughout the pipeline.

- Machine Learning Model Deployment: I’ve used Airflow to schedule the training and deployment of machine learning models.

One project involved creating a DAG that automated the entire process of extracting website log data from Google Cloud Storage, preprocessing it with Apache Beam on Dataflow, analyzing it with BigQuery, and finally visualizing the results in Looker Studio (formerly Data Studio). Cloud Composer’s built-in monitoring and alerting features helped me track the DAG’s progress and identify any potential issues quickly.
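The core idea behind a DAG, running tasks only after their dependencies finish, can be shown without Airflow using Python’s `graphlib` module. This is not Airflow code; the task names are hypothetical labels loosely based on the project described above.

```python
from graphlib import CycleError, TopologicalSorter

# Each task maps to the set of tasks that must finish before it starts.
dependencies = {
    "extract_logs": set(),
    "preprocess": {"extract_logs"},
    "load_to_warehouse": {"preprocess"},
    "quality_checks": {"load_to_warehouse"},
    "refresh_dashboard": {"quality_checks"},
}


def execution_order(deps):
    """Return a valid run order, or raise CycleError if the graph has a cycle."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)

# A cycle means the graph is not a DAG, so no valid schedule exists.
try:
    execution_order({"a": {"b"}, "b": {"a"}})
    has_cycle = False
except CycleError:
    has_cycle = True
print(has_cycle)
```

An orchestrator adds scheduling, retries, and monitoring on top, but the dependency ordering it enforces is the same.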

Q 18. Explain your understanding of the different GCP logging and monitoring tools related to big data.

GCP provides a robust suite of logging and monitoring tools for Big Data, each serving a specific purpose. It’s like having a comprehensive security system for your data center.

- Cloud Logging: This centralized logging service collects logs from various GCP services, including BigQuery, Dataflow, Dataproc, and Cloud Storage. It allows me to search, filter, and analyze logs to identify and troubleshoot issues. For example, I can track the execution time of my BigQuery jobs and quickly detect performance bottlenecks.

- Cloud Monitoring: This tool provides comprehensive monitoring of GCP resources, including metrics, alerts, and dashboards. I use it to track resource usage (CPU, memory, network), identify performance issues, and set up alerts for critical events. For example, I can set up an alert that triggers when the latency of a Dataflow job exceeds a predefined threshold.

- BigQuery’s built-in monitoring: BigQuery provides its own set of metrics and monitoring tools, allowing me to track query performance, data load times, and other relevant statistics. This helps me optimize my BigQuery queries for speed and efficiency.

- Error Reporting (formerly Stackdriver Error Reporting): This integrates with Cloud Logging to aggregate the errors that occur within my applications and surface their details, helping me pinpoint and resolve bugs.

By using these tools in concert, I can gain a comprehensive overview of my Big Data infrastructure’s health and performance, enabling proactive problem-solving and operational efficiency.

Q 19. How would you design a real-time data processing pipeline using Pub/Sub, Dataflow, and BigQuery?

A real-time data processing pipeline using Pub/Sub, Dataflow, and BigQuery is like a fast-paced assembly line for data. Let’s break down the architecture:

- Pub/Sub: This acts as the message broker, receiving incoming data streams. Think of it as the conveyor belt bringing in raw materials. Data sources publish messages (e.g., sensor readings, social media posts) to specific Pub/Sub topics.

- Dataflow: This is the processing engine, consuming messages from Pub/Sub topics and applying transformations. It’s the assembly line workers that process and transform the data. Dataflow uses Apache Beam, which allows for both batch and streaming processing. Here, we’d leverage the streaming capabilities to process data in real time.

- BigQuery: This is the data warehouse, storing the processed data for analysis and reporting. This is the final product storage area. Dataflow writes the transformed data directly to BigQuery tables.

Workflow: Data sources publish messages to a Pub/Sub topic. Dataflow subscribes to this topic, reads messages, and performs the necessary transformations (e.g., cleaning, aggregation, enrichment). Finally, Dataflow writes the results to a BigQuery table. This entire process is designed to operate in real time, enabling immediate insights based on the incoming data streams.

Example: Imagine building a real-time dashboard for a ride-sharing service. Data from ride requests, driver locations, and ride completions are published to Pub/Sub. Dataflow processes this data, calculates key metrics (e.g., wait times, ride completion rates), and writes the results to BigQuery. A dashboard application pulls data from BigQuery to visualize these metrics in real time.
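The aggregation step in such a pipeline usually groups events into time windows. The sketch below imitates fixed (tumbling) windows in plain Python; it is not Apache Beam code, and the event fields, event types, and 60-second window size are illustrative assumptions.

```python
from collections import defaultdict


def fixed_window_counts(events, window_seconds=60):
    """Count events per (window_start, event_type) using event timestamps."""
    counts = defaultdict(int)
    for event in events:
        # Round the timestamp down to the start of its window.
        window_start = event["ts"] - event["ts"] % window_seconds
        counts[(window_start, event["type"])] += 1
    return dict(counts)


# Hypothetical messages as they might arrive from a subscription,
# with `ts` in seconds since the start of the stream.
events = [
    {"ts": 5, "type": "ride_requested"},
    {"ts": 42, "type": "ride_requested"},
    {"ts": 58, "type": "ride_completed"},
    {"ts": 61, "type": "ride_requested"},
    {"ts": 119, "type": "ride_completed"},
]

window_counts = fixed_window_counts(events)
print(window_counts)
```

Each key in the result corresponds to one row the pipeline would write to the output table, which the dashboard then queries.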

Q 20. Explain your experience with machine learning integration in a GCP big data environment.

Integrating machine learning into a GCP Big Data environment is crucial for extracting deeper insights. Think of it as adding artificial intelligence to your analysis team. My experience involves leveraging various GCP services:

- Vertex AI: This unified platform provides a comprehensive set of tools for building, training, and deploying machine learning models. I’ve used it to train models using BigQuery data, optimizing the process with pre-trained models where applicable.

- BigQuery ML: This allows creating and executing machine learning models directly within BigQuery, simplifying the process and reducing data movement. This is incredibly efficient for tasks where model training can be done directly on the existing data.

- Dataflow/Dataproc: I’ve used Dataflow (for streaming data) and Dataproc (for batch processing) to prepare data for machine learning models, performing feature engineering and data transformation tasks before feeding the data to Vertex AI or BigQuery ML.

- Cloud Storage: Cloud Storage is essential for storing training data, model artifacts, and other related files.

In a recent project involving fraud detection, I used BigQuery ML to train a model directly on transactional data stored in BigQuery. The model identified suspicious transactions with high accuracy, improving the efficiency of our fraud detection system. The entire model training, evaluation, and deployment process was managed within the BigQuery environment, significantly simplifying the workflow.

Q 21. How do you optimize BigQuery queries for performance?

Optimizing BigQuery queries for performance is critical for cost-effectiveness and responsiveness. It’s like fine-tuning a sports car engine for maximum speed.

- Proper Schema Design: A well-designed schema with appropriate data types improves query performance. For example, storing dates as DATE or TIMESTAMP rather than STRING lets filters, partitioning, and clustering work on native types.

- Partitioning and Clustering: Partitioning divides tables into smaller, manageable chunks based on time or other criteria. Clustering organizes data within partitions based on frequently queried columns. Both techniques improve query speed by reducing the amount of data that needs to be scanned.

- Query Optimization Techniques: Filter early with WHERE clauses, avoid SELECT * (select only the columns you need), choose the join type that matches the result you actually need (an INNER JOIN often processes fewer rows than a LEFT JOIN, but the two are not interchangeable), and leverage BigQuery’s built-in functions for efficient processing.

- Using Views and Materialized Views: Use views to encapsulate frequently used query logic. A standard view does not speed up execution by itself, because the underlying query still runs each time. Materialized views store precomputed results, so queries that can use them typically read less data and return faster.

- Analyzing Query Execution Plans: BigQuery provides tools to analyze the execution plan of your queries, identifying bottlenecks and areas for optimization. This offers valuable insights into how to enhance your query strategy.

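- Data Location: BigQuery runs a query in the location of the datasets it references, so keep datasets that are joined together in the same location and co-locate related resources, such as Cloud Storage buckets used for loads and exports.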

For example, instead of SELECT * FROM mytable WHERE date > '2024-01-01', a better query would be SELECT column1, column2 FROM mytable WHERE date > '2024-01-01', focusing on only needed columns. Properly partitioning the table by date would further enhance the query performance.
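The sketch below puts the two ideas together: a table definition that is partitioned by date and clustered, plus the column-pruned query. The table and column names follow the example above; the dataset name, the column types, and the choice of column1 as the clustering column are assumptions for illustration.

```javascript
// Builds DDL for a date-partitioned, clustered table.
// Column types and the clustering column are assumptions for this sketch.
function buildPartitionedTableDdl(table) {
  return [
    `CREATE TABLE ${table} (`,
    '  date DATE,',
    '  column1 STRING,',
    '  column2 INT64',
    ')',
    'PARTITION BY date',
    'CLUSTER BY column1',
  ].join('\n');
}

// Builds a query that reads only the needed columns and filters on the
// partitioning column so that older partitions can be skipped.
// Note: production code should use query parameters instead of interpolation.
function buildPrunedQuery(table, columns, sinceDate) {
  return `SELECT ${columns.join(', ')} FROM ${table} WHERE date > '${sinceDate}'`;
}

console.log(buildPartitionedTableDdl('mydataset.mytable'));
console.log(buildPrunedQuery('mydataset.mytable', ['column1', 'column2'], '2024-01-01'));
```

Because BigQuery bills on-demand queries by bytes processed, selecting fewer columns and filtering on the partitioning column usually reduces cost as well as latency.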

Q 22. What are your preferred methods for data visualization and dashboarding related to your Big Data analysis?

For data visualization and dashboarding in my Big Data analysis, I primarily leverage Google Cloud’s offerings, combined with open-source tools for specific needs. My go-to choices include:

- Looker: For complex, enterprise-grade dashboards and embedded analytics. Looker’s strong integration with BigQuery allows for seamless data exploration and visualization directly from the data warehouse, minimizing data movement and latency. I’ve used it to create interactive dashboards showing key performance indicators (KPIs) for marketing campaigns, enabling stakeholders to easily monitor campaign effectiveness.

- Data Studio (now Looker Studio): A more user-friendly option for creating interactive reports and dashboards, particularly beneficial for sharing insights with less technically inclined team members. I’ve utilized Data Studio to build dashboards displaying real-time sales data, providing quick insights into sales trends and performance.

- Tableau or Power BI (with connectors): When specific visualization requirements aren’t fully met by Google’s tools, I integrate with these robust platforms, leveraging custom connectors to access data in BigQuery or Datastore. For example, if a client needed a very specific chart type not readily available in Looker Studio, I’d use Tableau’s rich visualization capabilities.

The choice depends heavily on the project’s scale, the technical skills of the end-users, and the complexity of the visualizations needed. I always prioritize ease of use and maintainability when selecting a visualization tool.

Q 23. Explain your experience with automating data pipeline tasks using Cloud Functions or similar tools.

I extensively use Cloud Functions and Cloud Composer (for more complex orchestrations) to automate data pipeline tasks. Cloud Functions are excellent for event-driven architectures; for example, I’ve used them to trigger data processing when new data lands in Cloud Storage. This ensures near real-time processing without the overhead of constantly running a server.

Here’s an example of automating a data ingestion pipeline using Cloud Functions:

    // Cloud Function triggered by a Cloud Storage event
    exports.processNewData = (data, context) => {
      // Get the bucket and file name from the event payload
      const bucketName = data.bucket;
      const fileName = data.name;

      // Download the file from Cloud Storage
      // ...

      // Process the data (e.g., using BigQuery)
      // ...

      // Delete the file from Cloud Storage (optional)
      // ...
    };

For more complex, scheduled tasks or workflows, I utilize Cloud Composer, which provides a managed Apache Airflow environment. This allows for creating sophisticated DAGs (Directed Acyclic Graphs) to orchestrate multiple steps in a data pipeline, including data extraction, transformation, loading (ETL), and quality checks. I’ve used this for complex batch processing jobs that involve multiple data sources and transformation steps.

My automation approach always focuses on reliability, scalability, and maintainability. This includes implementing robust error handling, logging, and monitoring to ensure the pipeline’s smooth operation.
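Cloud Composer DAGs are written in Python with Airflow, so the following is not Airflow code. To stay in the same language as the Cloud Function example, it is a small JavaScript sketch of the idea behind a DAG: deriving an execution order in which every task runs after its upstream dependencies. The task names are hypothetical.

```javascript
// Kahn's algorithm: returns an order in which every task runs after all of
// its upstream dependencies. Illustrative only; NOT the Airflow API.
// Every task, including those without dependencies, must appear as a key.
function executionOrder(dependencies) {
  const remaining = new Map();  // task -> number of unfinished upstream tasks
  const downstream = new Map(); // task -> tasks that depend on it
  for (const [task, upstream] of Object.entries(dependencies)) {
    remaining.set(task, upstream.length);
    for (const parent of upstream) {
      if (!downstream.has(parent)) downstream.set(parent, []);
      downstream.get(parent).push(task);
    }
  }
  const ready = [...remaining.keys()].filter((t) => remaining.get(t) === 0);
  const order = [];
  while (ready.length > 0) {
    const task = ready.shift();
    order.push(task);
    for (const child of downstream.get(task) || []) {
      remaining.set(child, remaining.get(child) - 1);
      if (remaining.get(child) === 0) ready.push(child);
    }
  }
  if (order.length !== remaining.size) {
    throw new Error('Cycle detected: the task graph is not a DAG');
  }
  return order;
}

const pipeline = {
  extract_orders: [],
  extract_customers: [],
  transform: ['extract_orders', 'extract_customers'],
  quality_check: ['transform'],
  load: ['quality_check'],
};
console.log(executionOrder(pipeline));
```

An orchestrator adds scheduling, retries, and monitoring on top of this ordering, which is where most of the reliability benefits come from.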

Q 24. How do you approach data governance and compliance within a GCP Big Data architecture?

Data governance and compliance are paramount in GCP Big Data architectures. My approach involves a multi-faceted strategy:

- Data Catalog: I leverage Data Catalog to create a centralized metadata repository, enabling data discovery, classification, and lineage tracking. This is crucial for understanding data ownership, access controls, and compliance requirements.

- IAM (Identity and Access Management): Implementing granular access control through IAM is fundamental. This ensures only authorized users or services can access specific data, reducing the risk of unauthorized access. I typically grant service accounts only the roles they need, following the principle of least privilege.

- Data Loss Prevention (DLP): I utilize DLP APIs to scan datasets for sensitive information (like PII) and implement appropriate redaction or masking to comply with regulations like GDPR or HIPAA. This proactive approach minimizes risk.

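- Encryption: Data should be encrypted both at rest and in transit. Google Cloud encrypts stored data by default, customer-managed encryption keys (CMEK) through Cloud KMS are available when you need control over the keys, and TLS protects data in transit. This limits what an attacker can read even if a security breach occurs.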

- Compliance auditing: Regularly auditing access logs and data usage patterns is key to ensuring compliance. I review Cloud Audit Logs in Cloud Logging for this, and I set up alerts in Cloud Monitoring for suspicious activities.

My approach is proactive and preventative, integrating data governance principles at every stage of the Big Data lifecycle, from data ingestion to analysis and reporting.
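To illustrate the masking step, here is a minimal sketch that redacts email addresses from selected fields of a record. It is a regex-based stand-in, not the Cloud DLP API, and a single email pattern is far narrower than what a managed inspection service detects. The record fields are hypothetical.

```javascript
// Regex-based stand-in for PII masking; this is NOT the Cloud DLP API.
// It only recognizes simple email addresses.
const EMAIL_PATTERN = /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g;

function maskEmails(text) {
  return text.replace(EMAIL_PATTERN, '[EMAIL_REDACTED]');
}

// Returns a copy of the record with the listed string fields masked.
function maskRecord(record, fieldsToMask) {
  const masked = { ...record };
  for (const field of fieldsToMask) {
    if (typeof masked[field] === 'string') {
      masked[field] = maskEmails(masked[field]);
    }
  }
  return masked;
}

// Hypothetical support-ticket record.
const ticket = { id: 42, note: 'Contact jane.doe@example.com today' };
console.log(maskRecord(ticket, ['note']));
```

Masking on a copy rather than in place keeps the original record available to the restricted systems that are allowed to see it.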

Q 25. Describe a challenging Big Data problem you solved and your approach to the solution.

I once faced a challenge involving near real-time processing of high-volume streaming data from various IoT devices. The data was highly unstructured, and the requirements included immediate anomaly detection and alerting. A naive approach would have resulted in significant latency and scalability issues.

My solution involved a layered approach:

- Data Ingestion: I used Pub/Sub to ingest the streaming data from the IoT devices, leveraging its scalability and fault tolerance. This ensured reliable data ingestion even with intermittent connectivity issues.

- Data Processing: Apache Beam, running on Dataflow, was chosen for its ability to process both batch and streaming data. I developed a Beam pipeline to perform real-time data cleansing, transformation, and anomaly detection using machine learning algorithms (trained using BigQuery ML).

- Alerting: Cloud Functions were used to trigger alerts based on the anomaly detection results. These alerts were sent through various channels (email, SMS) based on the severity and type of anomaly.

- Monitoring and Logging: Cloud Monitoring and Cloud Logging provided real-time visibility into the pipeline’s performance, enabling proactive identification and resolution of issues.

This solution ensured near real-time processing, scalability, and robust error handling. The use of managed services minimized operational overhead and allowed me to focus on developing the core data processing logic. The success was measured by a significant reduction in response time to anomalies and improved overall system reliability.
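The anomaly detection in that project relied on models trained with BigQuery ML. As a much simpler stand-in, the sketch below flags readings with a rolling z-score, which is a common baseline for sensor data. The window size, the threshold, and the sample temperatures are assumptions for illustration.

```javascript
// Rolling z-score detector: flags a reading when it deviates from the mean of
// the previous `windowSize` readings by more than `threshold` standard
// deviations. A simple baseline, not the ML model described above.
function detectAnomalies(readings, windowSize, threshold) {
  const anomalies = [];
  for (let i = windowSize; i < readings.length; i++) {
    const recent = readings.slice(i - windowSize, i);
    const mean = recent.reduce((sum, v) => sum + v, 0) / windowSize;
    const variance =
      recent.reduce((sum, v) => sum + (v - mean) ** 2, 0) / windowSize;
    const stdDev = Math.sqrt(variance);
    // Skip flat windows to avoid dividing by zero.
    if (stdDev === 0) continue;
    const zScore = Math.abs(readings[i] - mean) / stdDev;
    if (zScore > threshold) {
      anomalies.push({ index: i, value: readings[i], zScore });
    }
  }
  return anomalies;
}

// Hypothetical temperature readings with one obvious spike.
const temperatures = [20, 21, 19, 20, 22, 21, 45, 20, 21];
console.log(detectAnomalies(temperatures, 5, 3));
```

In a streaming pipeline, each flagged reading would become the event that triggers the alerting function.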

Q 26. What are some best practices for cost optimization in GCP Big Data projects?

Cost optimization in GCP Big Data projects requires a holistic approach, starting with careful planning and extending to ongoing monitoring and optimization. Here are some key best practices:

- Right-sizing resources: Choose appropriate compute instance sizes for your workload. Avoid over-provisioning, as it leads to unnecessary costs. Utilize autoscaling to adjust resources based on demand.

- Data lifecycle management: Implement a strategy for archiving or deleting data that’s no longer needed. Older data can be moved to cheaper storage options like Cloud Storage Nearline or Coldline.

- Efficient query optimization: Optimize BigQuery queries using techniques like proper partitioning, clustering, and the use of appropriate data types. Inefficient queries can dramatically increase costs.

- Use preemptible VMs: For certain batch processing jobs where interruptions are acceptable, using preemptible VMs (now offered as Spot VMs) can significantly reduce costs.

- Monitoring and alerting: Continuously monitor resource usage and costs using Cloud Billing and set up alerts for unexpected spikes in costs. This allows for timely intervention.

- Leverage free tier services: Utilize the free tier for eligible services during development and testing phases to minimize upfront costs.

Regular cost analysis and optimization is crucial. Using the Cloud Billing APIs can help automate cost reporting and analysis.
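As one example of data lifecycle management, the sketch below builds a Cloud Storage lifecycle configuration that moves objects to Nearline, then Coldline, and finally deletes them. The age thresholds are hypothetical; choose values that match your own retention policy.

```javascript
// Builds a Cloud Storage lifecycle configuration as a plain object.
// The age thresholds (in days) are hypothetical placeholders.
function buildLifecycleConfig({ nearlineAfterDays, coldlineAfterDays, deleteAfterDays }) {
  return {
    lifecycle: {
      rule: [
        {
          action: { type: 'SetStorageClass', storageClass: 'NEARLINE' },
          condition: { age: nearlineAfterDays },
        },
        {
          action: { type: 'SetStorageClass', storageClass: 'COLDLINE' },
          condition: { age: coldlineAfterDays },
        },
        { action: { type: 'Delete' }, condition: { age: deleteAfterDays } },
      ],
    },
  };
}

const lifecycleConfig = buildLifecycleConfig({
  nearlineAfterDays: 30,
  coldlineAfterDays: 90,
  deleteAfterDays: 365,
});
console.log(JSON.stringify(lifecycleConfig, null, 2));
```

The resulting JSON can be saved to a file and applied to a bucket, after which the transitions happen automatically without a scheduled job.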

Q 27. How familiar are you with the different types of GCP managed services for databases (e.g., Cloud SQL, Cloud Spanner) and their suitability for Big Data workloads?

I’m familiar with various GCP managed database services and their suitability for Big Data workloads:

- Cloud SQL: Best suited for transactional workloads with well-defined schemas. While it can handle moderate-sized datasets, it’s not ideally suited for massive scale or complex analytical queries typical of Big Data.

- Cloud Spanner: A globally-distributed, scalable, strongly consistent database. It’s excellent for applications requiring high availability, low latency, and strong consistency across multiple regions. Suitable for certain Big Data applications requiring transactional consistency but might not be the most cost-effective choice for pure analytical processing.

- BigQuery: The primary choice for large-scale data warehousing and analytics on GCP. Its serverless architecture, scalability, and optimized query engine make it ideal for most Big Data analytical workloads. I’ve extensively used it for ETL processes, complex analytical queries, and machine learning model training.

- Cloud Datastore (now Firestore in Datastore mode): A NoSQL document database, suitable for applications requiring flexibility in schema design and high scalability. It’s a good choice for certain Big Data applications where a fixed relational schema is not required.

The choice of database depends heavily on the specific needs of the project, including data volume, query patterns, consistency requirements, and cost considerations. I always assess these factors carefully before selecting a database service.

Q 28. Explain your understanding of different data processing frameworks (e.g., Apache Spark, Hadoop) within the GCP ecosystem.

I have extensive experience with various data processing frameworks within the GCP ecosystem:

- Apache Spark on Dataproc: I frequently use Spark on Dataproc for distributed data processing. Dataproc provides a managed Hadoop and Spark environment, simplifying cluster management and reducing operational overhead. I’ve utilized Spark for ETL processes, large-scale machine learning model training, and complex data transformations.

- Apache Beam with Dataflow: Beam offers a unified programming model for both batch and streaming data processing. Dataflow is a fully managed service that executes Beam pipelines, providing scalability and fault tolerance. I’ve leveraged this combination for real-time data processing pipelines, ETL jobs, and stream analytics.

- Hadoop on Dataproc (legacy): While Spark is often preferred for its performance, I’m still proficient with Hadoop on Dataproc for specific scenarios where MapReduce is necessary or where existing Hadoop-based applications need to be migrated to GCP.

My framework selection depends on factors like the nature of the data (batch vs. streaming), the complexity of the processing tasks, and the desired level of scalability and fault tolerance. I always strive to select the most efficient and cost-effective framework for each specific project.
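For readers less familiar with the MapReduce model mentioned above, here is a small in-memory sketch of its three phases: map, shuffle, and reduce. It is not the Hadoop API and runs on a single machine; the log lines are hypothetical sample data.

```javascript
// In-memory illustration of map -> shuffle -> reduce; NOT the Hadoop API.
function mapReduce(records, mapper, reducer) {
  // Map: each record emits zero or more [key, value] pairs.
  const pairs = records.flatMap((record) => mapper(record));
  // Shuffle: group values by key.
  const groups = new Map();
  for (const [key, value] of pairs) {
    if (!groups.has(key)) groups.set(key, []);
    groups.get(key).push(value);
  }
  // Reduce: collapse the values of each key into one result.
  const result = {};
  for (const [key, values] of groups) {
    result[key] = reducer(key, values);
  }
  return result;
}

// Count hits per URL path in a handful of hypothetical access-log lines.
const logLines = ['GET /home', 'POST /login', 'GET /home', 'GET /about'];
const hitsPerPath = mapReduce(
  logLines,
  (line) => [[line.split(' ')[1], 1]],
  (_path, counts) => counts.reduce((a, b) => a + b, 0)
);
console.log(hitsPerPath);
```

Distributed frameworks run the same phases across many workers, with the shuffle step moving data between machines, which is typically the most expensive part.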

Key Topics to Learn for GCP Big Data Interview

- Data Storage & Processing: Understand the nuances of Google Cloud Storage (GCS), Cloud Dataproc (Spark, Hadoop), and Cloud Dataflow (for batch and stream processing). Explore data partitioning, schema design, and optimization strategies for different workloads.

- Data Warehousing & Analytics: Master BigQuery’s capabilities, including SQL querying, data modeling (star schema, snowflake schema), and performance tuning. Practice creating and optimizing complex queries for large datasets. Understand the differences between BigQuery and other warehousing solutions.

- Data Integration & ETL: Learn how to effectively ingest data from various sources into GCP using tools like Cloud Data Fusion and Cloud Composer. Explore techniques for data cleansing, transformation, and loading (ETL) processes, and understand best practices for data quality.

- Data Governance & Security: Familiarize yourself with GCP’s security features related to Big Data, including IAM roles, access control lists (ACLs), and data encryption. Understand data lineage and compliance considerations.

- Cost Optimization: Learn strategies for optimizing your Big Data solutions on GCP to minimize costs. This includes understanding pricing models, resource utilization, and efficient query design.

- Machine Learning on Big Data: Explore how to integrate machine learning models with your Big Data pipelines using services like Vertex AI. Understand how to prepare data for ML, train models, and deploy predictions at scale.

- Monitoring & Logging: Understand how to monitor and troubleshoot your Big Data pipelines using tools like Cloud Monitoring and Cloud Logging. Learn to identify performance bottlenecks and resolve issues efficiently.

- Practical Application: Be prepared to discuss real-world scenarios where you’ve applied your Big Data skills, even if from personal projects. Focus on the challenges faced, your approach to problem-solving, and the outcomes achieved.

Next Steps

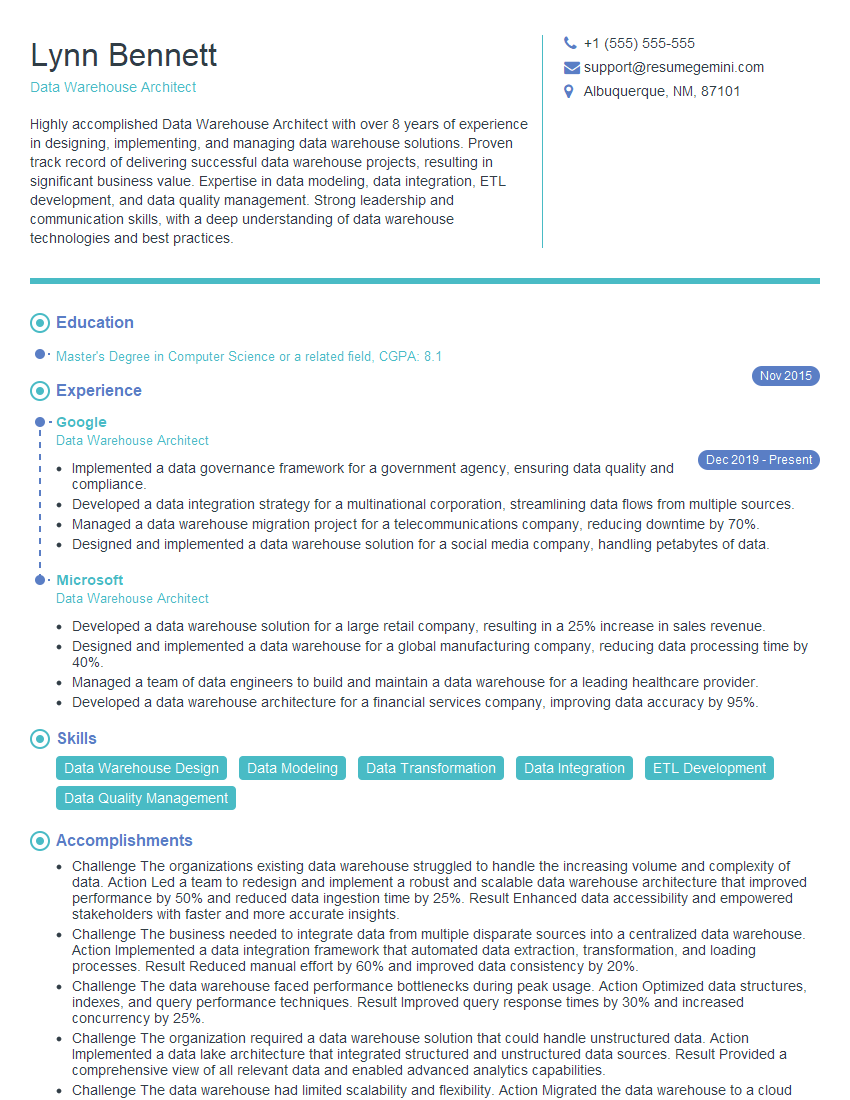

Mastering GCP Big Data significantly enhances your career prospects, opening doors to high-demand roles with competitive salaries. To stand out, crafting an ATS-friendly resume is crucial. A well-structured resume highlights your skills and experience effectively, maximizing your chances of landing an interview. We strongly recommend using ResumeGemini to build a professional and impactful resume. ResumeGemini offers a user-friendly platform and provides examples of resumes tailored to GCP Big Data roles, helping you present your qualifications in the best possible light.
