The right preparation can turn an interview into an opportunity to showcase your expertise. This guide to Geographic Knowledge and Expertise interview questions is your ultimate resource, providing key insights and tips to help you ace your responses and stand out as a top candidate.

Questions Asked in Geographic Knowledge and Expertise Interview

Q 1. Explain the difference between vector and raster data.

Vector and raster data are two fundamental ways to represent geographic information in a GIS (Geographic Information System). Think of it like drawing a map: you can either draw it with lines and points (vector) or by coloring in squares (raster).

Vector data represents geographic features as points, lines, and polygons. Each feature has its own distinct location and attributes. For instance, a point could represent a tree, a line a road, and a polygon a building. Vector geometry is precise and scales cleanly: it does not pixelate when you zoom in, although its accuracy is still limited by how the data were captured. Common vector file formats include Shapefile (.shp), GeoJSON, and GeoPackage (.gpkg).

Raster data represents geographic features as a grid of cells or pixels, each with a value representing a characteristic like elevation, temperature, or land cover. Satellite imagery and aerial photographs are typical examples. Raster data is well suited to continuous phenomena, but files can be large, and precision is limited by the cell size (resolution): zooming in past that resolution only reveals individual pixels.

In short: Vector is precise, scalable, and good for discrete features. Raster is good for continuous phenomena but can be large and less precise at high zoom levels.

Q 2. Describe the process of georeferencing.

Georeferencing is the process of assigning real-world coordinates (latitude/longitude, or projected coordinates such as UTM eastings and northings) to an image or map that does not initially have them. Think of it as giving an address to a picture. This allows the image to be integrated into a GIS and aligned with other geospatial data.

The process typically involves identifying control points: locations that can be recognized on the image and whose coordinates are known from a reference source (e.g., a topographic map, a satellite image with known coordinates, or GPS measurements). GIS software then uses these control points to estimate a transformation (e.g., affine or higher-order polynomial). More control points generally improve the fit, but their accuracy and an even spread across the image matter as much as their number; the residual (RMS) error at the control points is the usual check. Finally, the software applies the transformation to every pixel and resamples the image into the target coordinate system.
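To make the transformation step concrete, here is a minimal sketch, assuming a first-order (affine) transformation fitted by least squares to pixel/map control-point pairs. It uses only the Python standard library; the function names (`fit_affine`, `apply_affine`, `rmse`) and the control points in the usage block are illustrative, not any GIS package's API. Real georeferencing tools also offer higher-order polynomial and spline transformations and handle the final resampling step.

```python
# Least-squares fit of a first-order (affine) georeferencing transform.
# Standard library only; control points are (pixel, map) coordinate pairs.


def _solve3(a, b):
    """Solve the 3x3 system a @ x = b (Gaussian elimination, partial pivoting)."""
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("control points are collinear or duplicated")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, 3):
            factor = m[r][col] / m[col][col]
            for c in range(col, 4):
                m[r][c] -= factor * m[col][c]
    x = [0.0, 0.0, 0.0]
    for r in (2, 1, 0):  # back substitution
        x[r] = (m[r][3] - sum(m[r][c] * x[c] for c in range(r + 1, 3))) / m[r][r]
    return x


def fit_affine(control_points):
    """Fit X = a*col + b*row + c and Y = d*col + e*row + f.

    control_points: list of ((col, row), (X, Y)) pairs, at least 3, not collinear.
    Returns ((a, b, c), (d, e, f)).
    """
    ata = [[0.0] * 3 for _ in range(3)]  # A^T A, where each row of A is [col, row, 1]
    atx = [0.0] * 3                       # A^T X
    aty = [0.0] * 3                       # A^T Y
    for (col, row), (x, y) in control_points:
        v = (col, row, 1.0)
        for i in range(3):
            atx[i] += v[i] * x
            aty[i] += v[i] * y
            for j in range(3):
                ata[i][j] += v[i] * v[j]
    return tuple(_solve3(ata, atx)), tuple(_solve3(ata, aty))


def apply_affine(params, col, row):
    """Map a pixel position to map coordinates."""
    (a, b, c), (d, e, f) = params
    return a * col + b * row + c, d * col + e * row + f


def rmse(params, control_points):
    """Root-mean-square residual at the control points, in map units."""
    total = 0.0
    for (col, row), (x, y) in control_points:
        px, py = apply_affine(params, col, row)
        total += (px - x) ** 2 + (py - y) ** 2
    return (total / len(control_points)) ** 0.5


if __name__ == "__main__":
    # Hypothetical scan: 10 m pixels, top-left corner at (500000, 4100000) in UTM metres.
    gcps = [((0, 0), (500000, 4100000)), ((100, 0), (501000, 4100000)),
            ((0, 100), (500000, 4099000)), ((100, 100), (501000, 4099000))]
    params = fit_affine(gcps)
    print(apply_affine(params, 50, 50), rmse(params, gcps))
```

With error-free control points the RMS error is essentially zero; in practice, a point whose residual is much larger than the others is often a misidentified landmark.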

Example: Georeferencing a scanned historical map. We would identify several landmarks (e.g., intersections, building corners) on the scanned map and find their corresponding coordinates on a modern map. The GIS software would then use these points to correctly position the historical map in a geographical coordinate system.

Q 3. What are the common coordinate reference systems (CRS)?

Coordinate Reference Systems (CRS), also known as spatial reference systems, define how coordinates relate to actual locations on the Earth’s surface, including the datum and, for projected systems, the map projection and units. They are crucial for correctly representing and analyzing spatial data.

Some common CRS include:

- WGS 84 (EPSG:4326): The most widely used geographic CRS, based on the World Geodetic System 1984 datum. Coordinates are latitude and longitude in degrees, and it is the reference system used by GPS.

- UTM (Universal Transverse Mercator): A projected coordinate system that divides the Earth into 60 north-south zones, each 6 degrees of longitude wide with its own transverse Mercator projection. It uses meters as units and is commonly used for mapping and surveying.

- State Plane Coordinate Systems (SPCS): Projected coordinate systems defined for individual US states (larger states are divided into several zones), designed to keep distortion very low within each zone.

- Web Mercator (EPSG:3857): Used extensively in web mapping applications such as Google Maps and OpenStreetMap. It is a projected coordinate system that (approximately) preserves local angles and shapes but greatly exaggerates areas at high latitudes.

The choice of CRS depends on the specific application and the area being mapped. Using an inappropriate CRS, or combining layers in different CRSs without reprojecting them, leads to inaccurate measurements and misaligned data in spatial analysis.
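Two of the systems above can be illustrated with short formulas. The sketch below computes the standard UTM zone number for a longitude and applies the spherical Web Mercator forward projection used by EPSG:3857. It is a hedged illustration only, with hypothetical function names: it ignores the Norway and Svalbard zone exceptions, and real work should go through a projection library (such as PROJ) that also handles datum transformations.

```python
import math

# Spherical radius used by Web Mercator (EPSG:3857): the WGS 84 semi-major axis, in metres.
WEB_MERCATOR_RADIUS = 6378137.0
# Latitude limit at which the Web Mercator world becomes a square (about 85.05 degrees).
WEB_MERCATOR_MAX_LAT = 85.0511287798


def utm_zone(lon_deg):
    """Standard UTM zone number (1-60): zones are 6 degrees wide, starting at 180 W.

    Ignores the special zone boundaries around Norway and Svalbard.
    """
    if not -180.0 <= lon_deg <= 180.0:
        raise ValueError("longitude must be within [-180, 180]")
    return min(int((lon_deg + 180.0) // 6.0) + 1, 60)


def to_web_mercator(lon_deg, lat_deg):
    """Forward Web Mercator projection: degrees (WGS 84) -> metres (x, y)."""
    if abs(lat_deg) > WEB_MERCATOR_MAX_LAT:
        raise ValueError("latitude outside the Web Mercator range")
    x = WEB_MERCATOR_RADIUS * math.radians(lon_deg)
    y = WEB_MERCATOR_RADIUS * math.log(math.tan(math.pi / 4 + math.radians(lat_deg) / 2))
    return x, y


print(utm_zone(-74.0))             # New York City longitude -> zone 18
print(to_web_mercator(-74.0, 40.7))
```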

Q 4. What is spatial autocorrelation and how does it affect analysis?

Spatial autocorrelation describes the degree to which a variable’s values at nearby locations are similar. In simpler terms, it means that nearby locations tend to be more alike than locations further apart. Think of it like this: if a city has high crime rates in one area, there’s a good chance nearby areas will also have high crime rates, which is spatial autocorrelation.

Positive spatial autocorrelation indicates that similar values cluster together (high values near high values, low values near low values). Negative spatial autocorrelation indicates that neighboring values tend to be dissimilar (high values next to low values, as in a checkerboard). No spatial autocorrelation implies that values are spatially random.

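Spatial autocorrelation significantly affects spatial analysis. Ignoring it can lead to inaccurate results and misleading conclusions. For example, in ordinary least squares regression, spatially autocorrelated observations violate the assumption of independent errors. This typically makes standard errors too small, so effects look more significant than they are, and when the dependence involves the outcome itself (a spatial lag process), the coefficient estimates can also be biased.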

Techniques like Moran’s I and Geary’s C are commonly used to measure spatial autocorrelation, and methods like spatial regression models are used to account for it in analysis.
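As a rough sketch of how Moran’s I is computed, the function below implements the global statistic with binary contiguity weights (1 for neighbors, 0 otherwise): I = (n / W) * sum_ij w_ij (x_i - mean)(x_j - mean) / sum_i (x_i - mean)^2, where W is the sum of all weights. The neighbor dictionary passed in is an assumption of the example; dedicated libraries such as PySAL also provide row-standardized weights and significance tests, which this sketch omits.

```python
def morans_i(values, neighbors):
    """Global Moran's I with binary weights.

    values: list of numbers, one per location.
    neighbors: dict mapping location index -> iterable of neighbor indices
               (symmetric, no self-neighbors); each listed pair has weight 1.
    """
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    denom = sum(d * d for d in dev)
    if denom == 0:
        raise ValueError("Moran's I is undefined for constant values")
    cross_products = 0.0
    weight_sum = 0
    for i, nbrs in neighbors.items():
        for j in nbrs:
            cross_products += dev[i] * dev[j]
            weight_sum += 1
    if weight_sum == 0:
        raise ValueError("no neighbor pairs given")
    return (n / weight_sum) * (cross_products / denom)


# Four cells in a row, each adjacent to the next.
line = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
print(morans_i([1, 2, 3, 4], line))  # smooth trend: positive
print(morans_i([1, 0, 1, 0], line))  # alternating: negative
```

Under no spatial autocorrelation, Moran’s I is expected to be close to -1/(n - 1); values well above that indicate clustering and values well below it indicate a checkerboard-like pattern.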

Q 5. Explain the concept of map projections and their limitations.

Map projections are mathematical transformations that represent the Earth’s curved, three-dimensional surface on a two-dimensional map. Because a sphere (or ellipsoid) cannot be flattened without distortion, every projection is a compromise among preserving area, shape, distance, and direction.

Common map projections include:

- Mercator Projection: Conformal (preserves local angles and shapes), and lines of constant compass bearing appear as straight lines, at the cost of severe area distortion (areas near the poles appear greatly exaggerated).

- Albers Equal-Area Conic Projection: Preserves area but distorts shape and distances.

- Lambert Conformal Conic Projection: Preserves local angles and shapes but distorts area.

Limitations: All projections distort at least one of the four properties (area, shape, distance, and direction). The choice of projection depends on the application. For example, a Mercator projection is suitable for navigation, but not for accurately representing the size of countries. Choosing the right projection is crucial for accurate and unbiased geographic analysis.
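The Mercator trade-off can be quantified. On a sphere, Mercator stretches distances at a point by a factor of sec(latitude) in every direction, which is why local shapes survive, so areas are exaggerated by sec^2(latitude). The short sketch below (spherical model, hypothetical function names) prints that exaggeration for a few latitudes; at 60 degrees, areas appear about four times too large.

```python
import math


def mercator_scale_factor(lat_deg):
    """Linear scale factor of the spherical Mercator projection at a latitude: sec(lat)."""
    if abs(lat_deg) >= 90:
        raise ValueError("Mercator cannot show the poles")
    return 1.0 / math.cos(math.radians(lat_deg))


def mercator_area_factor(lat_deg):
    """Area exaggeration relative to the equator: sec^2(lat), since both axes stretch equally."""
    return mercator_scale_factor(lat_deg) ** 2


for lat in (0, 30, 60, 80):
    print(f"{lat:>2} deg: areas shown {mercator_area_factor(lat):.1f}x too large")
```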

Q 6. What are the different types of map scales and their applications?

Map scale represents the relationship between the distance on a map and the corresponding distance on the ground. It’s expressed in different ways:

- Representative Fraction (RF): A ratio showing the relationship, e.g., 1:100,000 means 1 unit on the map equals 100,000 units on the ground.

- Verbal Scale: A descriptive statement, e.g., ‘1 inch equals 1 mile’.

- Graphic Scale: A visual representation using a bar scale, allowing easy measurement directly on the map.

Applications: The choice of scale depends on the map’s purpose and the level of detail required. Large-scale maps (e.g., 1:1,000) show fine detail over a small area (e.g., a city block), while small-scale maps (e.g., 1:1,000,000) show a broader area with less detail (e.g., a whole country). A scale is larger when its fraction is larger, that is, when its denominator is smaller. Small-scale maps emphasize geographic context, while large-scale maps show the fine details relevant for local planning and analysis.
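The arithmetic behind these scale types is simple, and a small sketch (function names are illustrative) makes the conversions explicit: an RF turns a map measurement into a ground distance, a verbal scale in inches per mile converts to an RF via 63,360 inches per mile, and comparing denominators tells you which scale is larger.

```python
INCHES_PER_MILE = 63_360  # 12 in/ft * 5,280 ft/mi


def ground_distance_m(map_distance_cm, scale_denominator):
    """Ground distance (metres) for a map measurement (cm) at a scale of 1:scale_denominator."""
    return map_distance_cm * scale_denominator / 100.0  # 100 cm per metre


def verbal_inches_per_mile_to_rf(map_inches, ground_miles=1):
    """RF denominator for a verbal scale such as '1 inch equals 1 mile'."""
    return ground_miles * INCHES_PER_MILE / map_inches


def is_larger_scale(denom_a, denom_b):
    """1:denom_a is the larger scale when its fraction is bigger, i.e. its denominator is smaller."""
    return denom_a < denom_b


print(ground_distance_m(5, 100_000))       # 5 cm at 1:100,000 -> metres on the ground
print(verbal_inches_per_mile_to_rf(1))     # '1 inch equals 1 mile' -> 1:63,360
print(is_larger_scale(1_000, 1_000_000))   # city-block map vs. country map
```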

Q 7. Describe your experience with different GIS software (e.g., ArcGIS, QGIS).

I have extensive experience with both ArcGIS and QGIS, two leading GIS software packages. My expertise includes data management, spatial analysis, cartography, and geoprocessing using both platforms.

ArcGIS: I’m proficient in using ArcMap and ArcGIS Pro for tasks such as creating and managing geodatabases, performing spatial analysis (overlay analysis, proximity analysis, network analysis), creating thematic maps, and automating workflows using ModelBuilder and Python scripting. I’ve used ArcGIS extensively in projects involving land use planning, environmental impact assessment, and infrastructure development.

QGIS: I’m well-versed in QGIS’s open-source capabilities, using its tools for the same kinds of tasks as ArcGIS, with the advantages of no licensing cost and community-developed extensions. I’ve leveraged QGIS for its strong geoprocessing functionality and its extensibility through plugins for specialized tasks. QGIS has proven particularly valuable in projects with budget constraints or projects that needed specific plugin functionality not readily available in commercial software.

I am comfortable working with both proprietary and open-source tools and select the best option based on project needs and resources.

Q 8. How do you handle spatial data errors and inconsistencies?

Handling spatial data errors and inconsistencies is crucial for accurate GIS analysis. Think of it like building a house – you can’t have crooked walls or misaligned foundations! Errors can stem from various sources: digitization errors during map creation, inconsistencies in data collection methods, or even simple typos. My approach is multi-faceted.

Data Cleaning and Validation: I start by identifying and correcting obvious errors. This involves checking for inconsistencies in attribute tables (e.g., duplicate entries, illogical values), examining geometries for overlaps or gaps (using tools like topology checks), and using data validation rules to flag problematic records. For example, I might find a polygon with an area of zero, indicating an error in its creation that needs fixing.
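A minimal sketch of two of those cleaning checks, assuming polygons are stored as plain lists of (x, y) vertices with a hypothetical `id` field: duplicate identifiers are flagged, and the shoelace formula catches zero-area (degenerate) polygons. It does not replace a real topology engine, which would also find self-intersections, overlaps, and gaps.

```python
def ring_area(ring):
    """Signed area of a ring of (x, y) vertices via the shoelace formula.

    Positive for counter-clockwise rings; the ring may or may not repeat its first vertex.
    """
    total = 0.0
    for (x1, y1), (x2, y2) in zip(ring, ring[1:] + ring[:1]):
        total += x1 * y2 - x2 * y1
    return total / 2.0


def validate_polygons(features, tol=1e-9):
    """Return (feature_id, problem) tuples for duplicate ids and degenerate geometry.

    tol is in squared map units; areas at or below it are treated as zero.
    """
    problems = []
    seen_ids = set()
    for feature in features:
        fid, ring = feature["id"], feature["ring"]
        if fid in seen_ids:
            problems.append((fid, "duplicate id"))
        seen_ids.add(fid)
        if len(ring) < 3:
            problems.append((fid, "fewer than 3 vertices"))
        elif abs(ring_area(ring)) <= tol:
            problems.append((fid, "zero area"))
    return problems


square = [(0, 0), (1, 0), (1, 1), (0, 1)]
sliver = [(0, 0), (1, 1), (2, 2)]  # collinear vertices: zero area
print(validate_polygons([{"id": 1, "ring": square},
                         {"id": 2, "ring": sliver},
                         {"id": 1, "ring": square}]))
```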

Spatial Consistency Checks: I leverage spatial analysis tools to identify inconsistencies. For instance, I might use topology rules to ensure that polygons share common boundaries without overlaps or gaps. This is especially important for creating accurate maps and performing reliable analyses. Think of checking the consistency of property boundaries on a cadastral map – no overlaps are allowed!

Data Transformation and Projection: Ensuring all data uses a consistent coordinate system and projection is vital. Differences in projections can lead to significant spatial discrepancies. I use coordinate transformation tools to ensure consistency before any analysis. Imagine trying to merge data from two maps using different projections – the result would be a mess!

Error Propagation Assessment: Understanding how errors might propagate throughout the analysis is critical. I consider the potential impact of inaccuracies in the input data on the final results and document this in reports.

Data Integration Techniques: When merging data from multiple sources, I use techniques like spatial joins and overlay analysis carefully, understanding the implications of potential discrepancies and employing strategies to manage these differences, such as assigning weights or using fuzzy logic.

Q 9. What are the common data formats used in GIS?

GIS uses a variety of data formats, each with its strengths and weaknesses. The choice depends on the specific application and data type. Some of the most common include:

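Shapefile (.shp): A widely used Esri vector format. Despite the name, a shapefile is a set of files: the .shp stores the geometry, the .shx an index, the .dbf the attribute table, and usually a .prj the coordinate system. It is simple and almost universally supported, but each shapefile holds a single layer with a single geometry type (points, lines, or polygons), and the .dbf limits field names to 10 characters.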

GeoJSON: A text-based, open standard vector format increasingly used for web mapping and data exchange. It’s human-readable and easily integrated with web technologies.

Geodatabase (.gdb): Esri’s proprietary format for managing complex spatial data. A file geodatabase is stored as a .gdb folder and supports features such as topology rules, attribute domains, and relationship classes; enterprise geodatabases add versioning. It is efficient for large-scale data management.

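Raster formats (e.g., TIFF, GeoTIFF, ERDAS IMAGINE .img): Used for storing gridded spatial data, such as satellite imagery and elevation models. GeoTIFF embeds georeferencing information (the coordinate reference system and the pixel-to-map transformation) in TIFF tags, so the image can be positioned correctly without a separate file.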

KML/KMZ: Formats used for sharing geographical information on Google Earth and Google Maps. KMZ files are compressed KML files.
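Because GeoJSON is plain JSON, it can be produced with nothing more than Python’s `json` module. The sketch below builds a point feature; the detail most worth remembering is that RFC 7946 orders positions as [longitude, latitude] in WGS 84, the reverse of how coordinates are often written. The helper names, station name, and coordinates are made up for illustration.

```python
import json


def point_feature(lon, lat, properties):
    """Build a GeoJSON Point Feature. RFC 7946 orders positions as [longitude, latitude]."""
    if not (-180 <= lon <= 180 and -90 <= lat <= 90):
        raise ValueError("expected longitude, latitude in WGS 84 degrees")
    return {
        "type": "Feature",
        "geometry": {"type": "Point", "coordinates": [lon, lat]},
        "properties": properties,
    }


def feature_collection(features):
    """Wrap features in a GeoJSON FeatureCollection."""
    return {"type": "FeatureCollection", "features": features}


station = point_feature(-0.1276, 51.5072, {"name": "Station A"})  # hypothetical attributes
print(json.dumps(feature_collection([station]), indent=2))
```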

Q 10. Explain your experience with spatial analysis techniques (e.g., buffering, overlay, interpolation).

Spatial analysis is the heart of GIS. I have extensive experience with various techniques, including:

Buffering: Creating zones within a specified distance of features. For example, I’ve used buffering to delineate the area within 5 kilometers of a proposed highway to assess potential environmental impacts.
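Under the hood, testing whether a location falls inside a buffer around a line reduces to a point-to-segment distance. The sketch below assumes planar coordinates in a projected CRS (meters), which is one reason buffering is normally done in a projected system rather than in latitude/longitude; the function names and the highway geometry are hypothetical.

```python
import math


def point_segment_distance(p, a, b):
    """Shortest planar distance from point p to the segment a-b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0:  # degenerate segment: a and b coincide
        return math.hypot(px - ax, py - ay)
    # Project p onto the infinite line, then clamp to the segment's extent.
    t = max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / seg_len_sq))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def within_buffer(p, polyline, distance):
    """True if p lies within `distance` of any segment of the polyline."""
    return any(
        point_segment_distance(p, a, b) <= distance
        for a, b in zip(polyline, polyline[1:])
    )


highway = [(0, 0), (10_000, 0), (10_000, 10_000)]  # hypothetical centerline, metres
print(within_buffer((5_000, 4_000), highway, 5_000))
```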

Overlay Analysis: Combining multiple layers of spatial data. For example, I’ve overlaid land use maps with soil maps to identify areas suitable for specific agricultural practices. This involves operations such as intersection, union, and symmetric difference.

Interpolation: Estimating values at unsampled locations. I’ve used interpolation techniques (like kriging or inverse distance weighting) to create continuous surfaces from point data, for example, creating an elevation model from a set of elevation points or estimating air pollution levels across a city based on measurements at monitoring stations.
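Of the two interpolation methods named above, inverse distance weighting is simple enough to sketch in a few lines (kriging additionally requires fitting a variogram and is not shown). This version uses a power of 2 by default, a common but not universal choice, and assumes projected coordinates; the function name and sample points are illustrative.

```python
import math


def idw(samples, x, y, power=2.0):
    """Inverse distance weighted estimate at (x, y).

    samples: list of (sx, sy, value) tuples in projected coordinates.
    Weights are 1 / distance**power; an exact hit returns that sample's value.
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for sx, sy, value in samples:
        d = math.hypot(x - sx, y - sy)
        if d == 0:
            return value
        w = 1.0 / d ** power
        weighted_sum += w * value
        weight_total += w
    return weighted_sum / weight_total


stations = [(0, 0, 10.0), (10, 0, 20.0), (0, 10, 30.0)]  # hypothetical readings
print(idw(stations, 5, 5))
```

Because IDW estimates are weighted averages, they never fall outside the range of the sampled values, which is one practical difference from kriging.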

In one project, I used a combination of buffering, overlay, and interpolation. We were analyzing the risk of flooding in a coastal region. I buffered river channels, overlaid the buffer with elevation data, and then interpolated flood depths based on proximity to the river and elevation.

Q 11. How do you perform spatial joins, and what are they used for?

A spatial join is a GIS operation that combines attributes from two layers based on their spatial relationships. Imagine joining information about houses (like their addresses) with a layer containing information about school districts (like school quality). A spatial join will tell you which school district each house is in.

How it’s performed: This typically involves specifying the join type (e.g., one-to-one, one-to-many) and the spatial relationship (e.g., intersects, contains, closest). The software then merges the attribute data based on the specified criteria.

Uses: Spatial joins are extremely useful for enriching datasets. For instance:

Enriching point data: Joining point data (e.g., crime locations) with polygon data (e.g., census tracts) to analyze crime rates by neighborhood.

Analyzing relationships: Determining which parcels of land fall within a specific zoning district or which businesses are located within a certain distance of a highway.

In a project involving traffic accident analysis, I used a spatial join to link accident locations with road segment attributes (speed limits, road type) to understand the relationship between these factors and accident frequency.
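A point-in-polygon spatial join can be sketched with the classic ray-casting test. This minimal version, assuming polygons are simple rings of (x, y) vertices and using hypothetical `ring`, `attrs`, and `joined` keys, attaches the attributes of the first containing polygon to each point. Production tools add spatial indexes and explicit rules for points that fall exactly on a boundary, which this sketch does not handle consistently.

```python
def point_in_polygon(x, y, ring):
    """Even-odd ray casting: count edge crossings of a ray running east from (x, y)."""
    inside = False
    n = len(ring)
    for i in range(n):
        x1, y1 = ring[i]
        x2, y2 = ring[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge straddles the ray's horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def spatial_join(points, polygons):
    """Copy each point and attach the attrs of the first polygon containing it (or None)."""
    joined = []
    for p in points:
        match = next(
            (poly["attrs"] for poly in polygons
             if point_in_polygon(p["x"], p["y"], poly["ring"])),
            None,
        )
        joined.append({**p, "joined": match})
    return joined


districts = [
    {"ring": [(0, 0), (10, 0), (10, 10), (0, 10)], "attrs": {"district": "A"}},
    {"ring": [(10, 0), (20, 0), (20, 10), (10, 10)], "attrs": {"district": "B"}},
]
houses = [{"id": 1, "x": 5, "y": 5}, {"id": 2, "x": 15, "y": 5}, {"id": 3, "x": 25, "y": 5}]
for row in spatial_join(houses, districts):
    print(row)
```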

Q 12. Describe your experience with remote sensing data and its applications.

Remote sensing data provides a powerful perspective for geographical analysis. I have experience processing, analyzing, and interpreting various types of remote sensing data, including:

Satellite imagery: Analyzing Landsat and Sentinel imagery to monitor deforestation, assess crop health, or map urban sprawl. One project involved using Landsat imagery to track changes in glacial extent over several decades.

Aerial photography: Utilizing high-resolution aerial photographs for precise mapping tasks, such as creating orthomosaics for land surveying or identifying individual trees in forest inventories.

LiDAR (Light Detection and Ranging): Processing LiDAR data to generate Digital Elevation Models (DEMs) for hydrological modeling, slope analysis, or identifying areas prone to landslides. This technology provides highly accurate 3D surface data.
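Slope analysis from a LiDAR-derived DEM boils down to finite differences over neighboring cells. The sketch below uses simple central differences on a DEM held as a nested list (an assumption for illustration, with a hypothetical function name); many GIS tools instead use a weighted 3x3 window such as Horn’s method, which gives similar but not identical values.

```python
import math


def slope_degrees(dem, row, col, cell_size):
    """Slope in degrees at an interior cell of a DEM stored as a nested list.

    Uses central differences; dem[row][col] is elevation, and cell_size is in the
    same units as elevation (e.g. metres).
    """
    dz_dx = (dem[row][col + 1] - dem[row][col - 1]) / (2 * cell_size)
    dz_dy = (dem[row + 1][col] - dem[row - 1][col]) / (2 * cell_size)
    return math.degrees(math.atan(math.hypot(dz_dx, dz_dy)))


# Hypothetical 3x3 DEM that rises 1 m per 1 m cell from west to east.
ramp = [[0.0, 1.0, 2.0] for _ in range(3)]
print(slope_degrees(ramp, 1, 1, cell_size=1.0))  # a 45-degree slope
```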

My experience involves using various software packages for image pre-processing (geometric correction, atmospheric correction), classification (supervised and unsupervised), and change detection. I have also worked with data from different sensors to extract information about various aspects of the earth’s surface.

Q 13. What are the different types of remote sensing data?

Remote sensing data comes in several types, categorized by the sensor used and the type of energy detected:

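Optical Imagery: Captures reflected sunlight in the visible and near-infrared (and often shortwave-infrared) parts of the spectrum, much like a multi-band camera. It provides information about surface features such as color, texture, and vegetation condition. Landsat and Sentinel-2 are examples of optical sensors.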

Thermal Imagery: Detects infrared radiation emitted by objects, allowing measurement of temperature variations. This is useful for identifying heat sources, monitoring volcanic activity, or detecting wildfires.

Microwave Imagery: Uses microwave radiation, which passes through clouds and, at longer wavelengths, partially penetrates vegetation canopies and dry soils, revealing information about surface roughness, soil moisture, and sometimes shallow subsurface structures. SAR (Synthetic Aperture Radar), as flown on Sentinel-1, is a key technology in this domain.

LiDAR: As mentioned earlier, LiDAR uses laser pulses to measure distances to the ground and to objects above it, such as vegetation and buildings, providing accurate elevation data and detailed information about surface features.

Each type of data offers unique perspectives and is chosen based on the specific application. For example, if you need to monitor deforestation in a cloudy region, microwave data would be a good choice because it penetrates cloud cover.

Q 14. How do you interpret satellite imagery?

Interpreting satellite imagery requires a systematic approach:

Visual Interpretation: The first step is often visual inspection to identify broad features such as land cover types (forests, urban areas, water bodies) and to spot patterns and anomalies.

Spectral Analysis: Analyzing the spectral signatures of different features. Different materials reflect and absorb light differently at different wavelengths. This allows for distinguishing between various features based on their spectral response.
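Spectral analysis often starts with band indices. As an illustration, the sketch below computes the Normalized Difference Vegetation Index, NDVI = (NIR - Red) / (NIR + Red), which exploits the fact that healthy vegetation reflects strongly in the near-infrared and absorbs red light. The bands are assumed to be co-registered reflectance grids stored as nested lists; for Landsat 8 these would be bands 5 (NIR) and 4 (red), and for Sentinel-2 bands 8 and 4.

```python
def ndvi(nir, red):
    """Per-pixel NDVI = (NIR - Red) / (NIR + Red).

    nir, red: equally sized nested lists of reflectance values for the same pixels.
    Pixels where NIR + Red == 0 are returned as NaN.
    """
    return [
        [(n - r) / (n + r) if (n + r) != 0 else float("nan")
         for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir, red)
    ]


# Made-up reflectances: a vegetated pixel, then a bare one.
print(ndvi([[0.5, 0.1]], [[0.1, 0.1]]))
```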

Image Classification: Assigning pixels to specific land cover classes using techniques like supervised classification (training the algorithm using labeled data) or unsupervised classification (letting the algorithm automatically group similar pixels). This process can be aided by digital image processing techniques like filtering and edge detection.
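To show what supervised classification means in practice, here is a sketch of one of the simplest supervised methods, the minimum-distance-to-means classifier: it averages the training pixels of each class and assigns every new pixel to the class with the nearest mean spectral vector. The function names and two-band (red, NIR) training values are invented for illustration, and operational work more often uses maximum likelihood, random forests, or similar methods. `math.dist` requires Python 3.8 or later.

```python
import math


def train_class_means(training):
    """training: dict class_name -> list of pixel vectors (tuples of band values)."""
    means = {}
    for cls, pixels in training.items():
        n_bands = len(pixels[0])
        means[cls] = tuple(sum(p[b] for p in pixels) / len(pixels) for b in range(n_bands))
    return means


def classify_pixel(pixel, means):
    """Assign the class whose mean spectral vector is nearest (Euclidean distance)."""
    return min(means, key=lambda cls: math.dist(pixel, means[cls]))


# Hypothetical (red, NIR) training samples.
training = {
    "water": [(0.05, 0.02), (0.04, 0.03)],
    "vegetation": [(0.05, 0.45), (0.06, 0.50)],
}
means = train_class_means(training)
print(classify_pixel((0.05, 0.40), means))
```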

Change Detection: Comparing images acquired at different times to identify changes over time. This can be quantitative (using pixel-by-pixel comparison) or qualitative (using visual interpretation).
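The quantitative, pixel-by-pixel form of change detection can be sketched as image differencing with a threshold. This assumes the two images (or derived indices such as NDVI) are already co-registered and radiometrically comparable; the function name, example values, and threshold are illustrative choices, not a standard.

```python
def change_mask(before, after, threshold):
    """Boolean grid: True where |after - before| exceeds threshold.

    before, after: equally sized nested lists for co-registered, comparable images.
    """
    return [
        [abs(a - b) > threshold for b, a in zip(row_before, row_after)]
        for row_before, row_after in zip(before, after)
    ]


ndvi_2015 = [[0.60, 0.20]]  # made-up values: forest, then sparse cover
ndvi_2023 = [[0.10, 0.25]]
print(change_mask(ndvi_2015, ndvi_2023, threshold=0.2))
```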

It’s important to consider metadata (information about the image’s acquisition, processing, and sensor characteristics) during interpretation. Understanding the sensor’s capabilities and limitations is vital for drawing reliable conclusions. Think of it like understanding the limitations of your camera before taking a picture; the same principle applies to satellite imagery interpretation.

Q 15. Explain the concept of GPS and its accuracy limitations.

GPS, the Global Positioning System, uses a constellation of satellites to determine a receiver’s location on Earth. Each satellite broadcasts its orbital position and a precise time signal; the receiver measures how long each signal took to arrive and multiplies by the speed of light to get a range, called a pseudorange because the receiver’s own clock is not perfectly synchronized with the satellites’ clocks. With signals from at least four satellites, the receiver can solve for its three-dimensional position (latitude, longitude, and height) plus its clock offset.
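The ranging arithmetic shows why timing is everything: light travels about 300 meters in a microsecond, so tiny clock errors become large position errors, which is why the receiver’s clock offset is solved for as a fourth unknown. The sketch below pairs that conversion with a toy two-dimensional position fix from three exact ranges; the function names are hypothetical, and it is illustrative only, since real receivers solve for three-dimensional position plus clock bias iteratively from noisy pseudoranges.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def pseudorange_m(travel_time_s):
    """Range implied by a signal travel time, in metres."""
    return SPEED_OF_LIGHT_M_S * travel_time_s


def trilaterate_2d(anchors, ranges):
    """Toy planar position fix from three known anchor points and exact ranges.

    Subtracting the first circle equation from the other two leaves two linear
    equations in (x, y), solved here with Cramer's rule.
    """
    (x1, y1), (x2, y2), (x3, y3) = anchors
    r1, r2, r3 = ranges
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a11 * a22 - a12 * a21
    if det == 0:
        raise ValueError("anchors are collinear")
    return (b1 * a22 - a12 * b2) / det, (a11 * b2 - b1 * a21) / det


print(pseudorange_m(1e-6))  # range error from a 1-microsecond clock error, in metres
print(trilaterate_2d([(0, 0), (10, 0), (0, 10)], [5.0, 65 ** 0.5, 45 ** 0.5]))
```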

However, GPS accuracy is not perfect. Several factors limit its precision:

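- Atmospheric Conditions: The ionosphere and troposphere delay GPS signals, causing errors in location calculations. Ionospheric delay varies with solar activity and time of day, tropospheric delay depends on pressure, temperature, and humidity, and both are larger for satellites low on the horizon.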

- Multipath Errors: Signals can bounce off buildings, mountains, or other surfaces before reaching the receiver, leading to inaccurate measurements. This is particularly problematic in urban canyons.

- Satellite Geometry (GDOP): The relative positions of the satellites in use strongly affect accuracy. A poor geometric arrangement, for example satellites bunched in one part of the sky, gives a high Geometric Dilution of Precision (GDOP) and larger errors.

- Receiver Limitations: The quality of the GPS receiver itself plays a crucial role. Inexpensive receivers may have lower accuracy than professional-grade devices.

- Signal Interference: Other radio signals can interfere with GPS signals, potentially leading to inaccurate readings.

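For example, consumer-grade GPS receivers such as smartphones are typically accurate to within about 5 meters under open sky, and considerably worse near tall buildings or under dense canopy. Differential GPS (DGPS) uses corrections from reference stations to improve this to roughly the sub-meter to meter level, while Real-Time Kinematic (RTK) GPS, which uses carrier-phase measurements and corrections from a nearby base station, can achieve centimeter-level accuracy for surveying.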

Career Expert Tips:

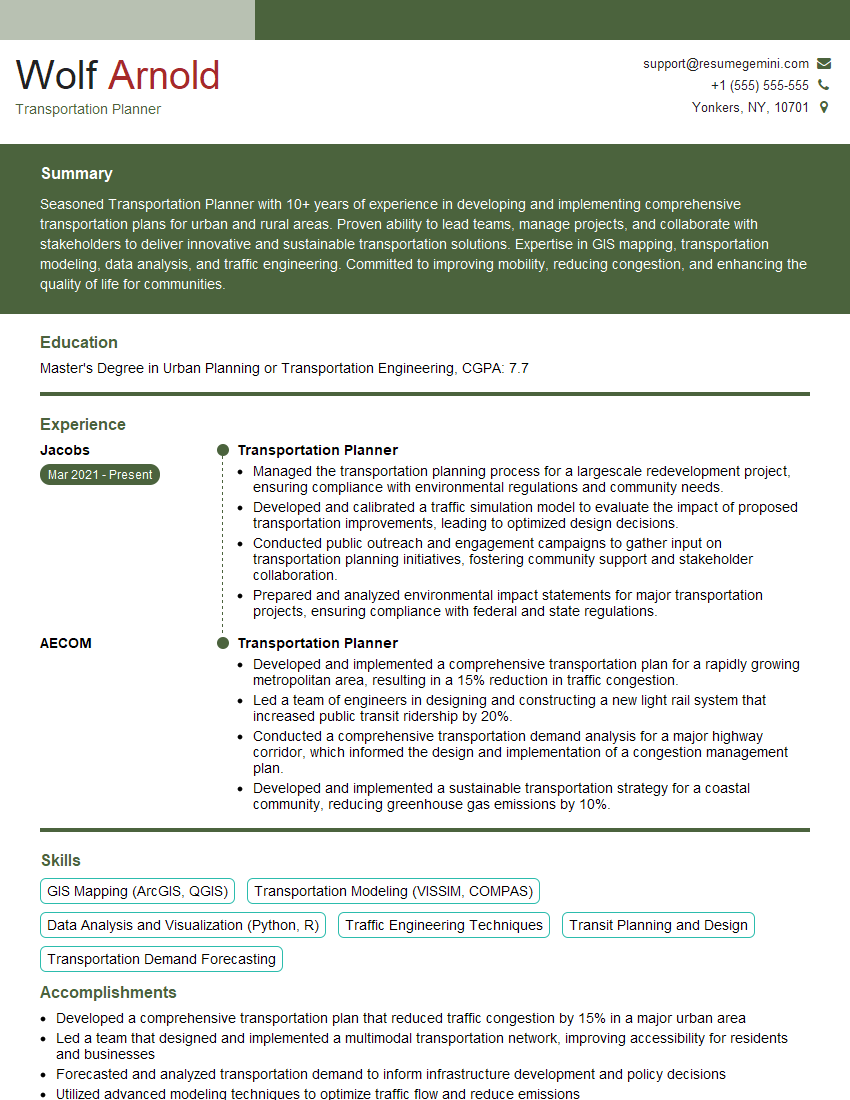

- Ace those interviews! Prepare effectively by reviewing the Top 50 Most Common Interview Questions on ResumeGemini.

- Navigate your job search with confidence! Explore a wide range of Career Tips on ResumeGemini. Learn about common challenges and recommendations to overcome them.

- Craft the perfect resume! Master the Art of Resume Writing with ResumeGemini’s guide. Showcase your unique qualifications and achievements effectively.

- Don’t miss out on holiday savings! Build your dream resume with ResumeGemini’s ATS optimized templates.

Q 16. Describe your experience with spatial data modeling.

My experience with spatial data modeling spans over a decade, encompassing various methodologies and applications. I’m proficient in designing and implementing both vector and raster data models, adapting my approach based on the specific project requirements and the type of geographic information being managed.

For instance, in a recent project involving urban planning, I utilized a vector model to represent the street network, buildings, and land parcels using points, lines, and polygons. The attributes associated with these features included street names, building heights, land use classifications, and property ownership information. This allowed for complex spatial queries and analyses, such as identifying areas suitable for new developments based on proximity to existing infrastructure and zoning regulations.

In contrast, when working on a project analyzing deforestation patterns in the Amazon rainforest, I employed a raster model. Satellite imagery was processed to create a raster dataset with pixel values representing vegetation density. This allowed for effective analysis of changes in forest cover over time and the identification of deforestation hotspots. My experience extends to spatial databases such as PostgreSQL with the PostGIS extension, and to spatial analysis in ArcGIS and QGIS.

Q 17. What are the key considerations when designing a GIS database?

Designing a robust and efficient GIS database requires careful consideration of several key factors:

- Data Model Selection: Choosing between vector and raster models, or a hybrid approach, is crucial and depends heavily on the nature of the geographic data and the types of analyses intended.

- Data Structure: Optimizing the database structure for efficient spatial queries and analyses is paramount. This involves designing appropriate tables with relevant attributes and establishing relationships between different data layers.

- Spatial Reference System (SRS): Defining a consistent and appropriate spatial reference system (e.g., UTM, WGS84) is fundamental for accurate spatial analysis. Inconsistencies in SRS can lead to significant errors.

- Data Integrity and Validation: Implementing rules and constraints to ensure data accuracy and consistency is vital. This could include range checks, data type checks, and spatial constraints like topological rules.

- Metadata Management: Comprehensive metadata is essential for understanding the data’s origin, quality, and limitations. It’s crucial to document all aspects of the database, including data sources, projection information, and attribute descriptions.

- Scalability and Performance: The database should be designed to handle the anticipated volume of data and to perform efficiently under different analytical workloads. This might involve database optimization techniques and appropriate indexing.

For example, I would consider PostgreSQL with the PostGIS extension for a large, spatially complex database because of its scalability and support for spatial functions. In simpler scenarios, a shapefile-based approach might suffice.
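The indexing point is easiest to see with a toy structure. PostGIS indexes geometry columns with GiST (R-tree-like) indexes; the sketch below substitutes a much simpler uniform grid, assuming point data and a hypothetical `GridIndex` class, to show the core idea that a bounding-box query only scans nearby buckets instead of every feature.

```python
import math
from collections import defaultdict


class GridIndex:
    """Toy uniform-grid spatial index for points (a stand-in for R-tree/GiST indexes)."""

    def __init__(self, cell_size):
        self.cell_size = cell_size
        self.cells = defaultdict(list)  # (col, row) -> list of (item_id, x, y)

    def _cell(self, x, y):
        return math.floor(x / self.cell_size), math.floor(y / self.cell_size)

    def insert(self, item_id, x, y):
        self.cells[self._cell(x, y)].append((item_id, x, y))

    def query_bbox(self, xmin, ymin, xmax, ymax):
        """Return ids of points inside the box, scanning only the overlapping cells."""
        col0, row0 = self._cell(xmin, ymin)
        col1, row1 = self._cell(xmax, ymax)
        hits = []
        for col in range(col0, col1 + 1):
            for row in range(row0, row1 + 1):
                for item_id, x, y in self.cells.get((col, row), ()):
                    if xmin <= x <= xmax and ymin <= y <= ymax:
                        hits.append(item_id)
        return hits


index = GridIndex(cell_size=10)
for i in range(100):
    index.insert(i, float(i), float(i))  # points along a diagonal
print(sorted(index.query_bbox(5, 5, 15, 15)))
```

The cell size is the main tuning knob: too small and queries touch many empty cells, too large and each cell holds many points that must be filtered individually.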

Q 18. How do you ensure data quality and accuracy in a GIS project?

Ensuring data quality and accuracy in a GIS project is a multi-step process that requires attention to detail at every stage. It starts long before the data is even entered into the system:

- Data Source Selection: Choose reliable and authoritative data sources. Consider the data’s accuracy, completeness, and currency.

- Data Acquisition and Preprocessing: Utilize appropriate techniques for data acquisition (e.g., GPS surveys, remote sensing, digitization) and carefully preprocess the data to correct errors, remove inconsistencies, and ensure data consistency.

- Data Cleaning and Validation: Implement rigorous data cleaning procedures to identify and rectify errors such as outliers, duplicates, and missing values. Employ validation rules and checks to ensure data conforms to predefined standards.

- Data Transformation and Projection: Accurately project and transform data into a consistent coordinate system to avoid spatial inconsistencies.

- Regular Audits and Quality Control: Conduct regular data audits and quality control checks throughout the project lifecycle to identify and address data quality issues.

- Documentation: Thoroughly document all data processing steps, including methods used, assumptions made, and potential limitations.

For example, in a project involving land parcel mapping, I would implement a spatial validation rule to ensure that neighboring parcels do not overlap. This helps to ensure that the geometry of the data is accurate and consistent.
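Overlap rules need polygon geometry operations, but the attribute side of the validation step (duplicates, missing values, out-of-range values) is easy to sketch. The record layout, the `parcel_id` key, and the plausible range for `area_m2` below are hypothetical examples of rules a project might define.

```python
def validate_records(records, rules, key="parcel_id"):
    """Check attribute records against simple rules.

    records: list of dicts. rules: dict mapping field name -> (min, max), inclusive.
    Returns a list of (record_id, field, problem) tuples.
    """
    issues = []
    seen = set()
    for rec in records:
        rid = rec.get(key)
        if rid in seen:
            issues.append((rid, key, "duplicate"))
        seen.add(rid)
        for field, (low, high) in rules.items():
            value = rec.get(field)
            if value is None:
                issues.append((rid, field, "missing"))
            elif not low <= value <= high:
                issues.append((rid, field, "out of range"))
    return issues


parcels = [
    {"parcel_id": "P1", "area_m2": 500.0},
    {"parcel_id": "P2", "area_m2": -3.0},  # negative area: out of range
    {"parcel_id": "P2"},                  # duplicate id, missing area
]
rules = {"area_m2": (1.0, 1e7)}  # hypothetical plausible range for parcel area
print(validate_records(parcels, rules))
```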

Q 19. Explain your experience with data visualization techniques in GIS.

My experience with data visualization techniques in GIS is extensive, encompassing a wide range of methods tailored to communicate spatial information effectively. I’m proficient in creating various map types, including choropleth maps, isopleth maps, point maps, and cartograms.

For example, to illustrate population density across a region, I would utilize a choropleth map, where different color shades represent population density ranges. For displaying pollution levels across a city, I might create an isopleth map showing contour lines of equal pollution concentrations.
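For a choropleth, the class breaks largely determine what the map says. A minimal sketch of the simplest scheme, equal-interval classification, is below; the function names are illustrative, and in practice quantile or natural-breaks (Jenks) schemes are often preferred for skewed data.

```python
def equal_interval_breaks(values, n_classes):
    """Upper bounds of n_classes equal-width classes spanning min..max."""
    lo, hi = min(values), max(values)
    step = (hi - lo) / n_classes
    return [lo + step * k for k in range(1, n_classes + 1)]


def classify(value, breaks):
    """0-based class index: the first class whose upper bound is not exceeded."""
    for i, upper in enumerate(breaks):
        if value <= upper:
            return i
    return len(breaks) - 1


densities = list(range(0, 101, 5))  # hypothetical people per km^2
breaks = equal_interval_breaks(densities, 5)
print(breaks, classify(45, breaks))
```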

Beyond static maps, I have experience creating interactive web maps using tools like ArcGIS Online and Leaflet. This allows for dynamic exploration of data, providing users with the ability to zoom, pan, and query information directly on the map. I also use 3D visualization techniques to represent complex spatial relationships, such as creating 3D models of terrain or buildings to better understand elevation changes or urban landscapes.

The choice of visualization method always depends on the intended audience and the specific message I need to communicate. Simplicity, clarity, and accuracy are always top priorities.

Q 20. What are the ethical considerations when using geospatial data?

Ethical considerations in using geospatial data are paramount. The misuse of this powerful technology can have significant societal consequences. Key ethical considerations include:

- Privacy: Geospatial data often contains personally identifiable information (PII). Protecting individual privacy is crucial. Anonymization or aggregation techniques should be employed where possible to safeguard sensitive data.

- Bias and Discrimination: GIS data may reflect existing societal biases. Careful consideration must be given to ensure that analyses and visualizations do not perpetuate or exacerbate inequalities.

- Accuracy and Transparency: It’s crucial to ensure that geospatial data is accurate, complete, and properly documented. Transparency in data sources and methodologies is essential to build trust and avoid misleading interpretations.

- Data Security: Geospatial data is a valuable asset, and appropriate security measures should be in place to prevent unauthorized access, use, or modification.

- Data Ownership and Access: Respecting data ownership rights and ensuring equitable access to geospatial data is important. Concerns surrounding open data versus proprietary data must be carefully considered.

- Environmental Impact: The environmental impact of data acquisition and processing should be minimized. Sustainable practices should be prioritized.

For instance, when working with census data, I would be mindful of the potential for revealing sensitive information about specific individuals or neighborhoods, taking steps to ensure aggregation and anonymization are implemented to protect their privacy.
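One common aggregation safeguard can be sketched as follows: count individual locations per grid cell and suppress cells with fewer than a minimum number of records, so that sparse cells cannot point to specific people. The function name, cell size, and threshold are assumptions for illustration, and suppression alone does not guarantee anonymity (for example, against differencing across overlapping releases).

```python
import math
from collections import Counter


def aggregate_with_suppression(points, cell_size, min_count):
    """Count (x, y) points per square grid cell; omit cells with fewer than min_count points.

    Cells are identified by integer (column, row) indices; coordinates are assumed to be
    in a projected CRS so that cell_size is in metres.
    """
    counts = Counter(
        (math.floor(x / cell_size), math.floor(y / cell_size)) for x, y in points
    )
    return {cell: n for cell, n in counts.items() if n >= min_count}


homes = [(10, 10), (20, 20), (30, 30), (40, 40), (50, 50), (150, 50)]  # made-up locations
print(aggregate_with_suppression(homes, cell_size=100, min_count=3))
```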

Q 21. Describe a challenging GIS project you worked on and how you overcame the challenges.

One particularly challenging project involved creating a real-time flood forecasting system for a coastal city prone to severe flooding. The challenge stemmed from several factors:

- Data Integration: The system required integrating data from diverse sources, including real-time rainfall data from weather stations, tidal gauge data, river flow measurements, and high-resolution elevation models. Each data source had its own format and level of accuracy.

- Data Processing and Analysis: Processing large volumes of real-time data and performing complex hydrological modeling required robust and efficient algorithms. The system needed to be responsive enough to provide timely flood warnings.

- System Scalability: The system needed to be scalable to handle future increases in data volume and users. Ensuring the system’s stability and performance under high load was critical.

- Data Visualization and Communication: The system had to effectively communicate flood risk information to the public in a clear and understandable way using various visualization tools such as interactive maps, alerts, and dashboards.

To overcome these challenges, I employed a modular design approach, breaking down the system into smaller, manageable components. This allowed for parallel development and testing of individual modules. I used cloud computing resources to handle the large data volumes and ensure system scalability. I developed automated data processing pipelines and implemented rigorous quality control measures to ensure data accuracy and consistency. Finally, I collaborated closely with stakeholders to design user-friendly interfaces and communication protocols for effectively disseminating flood warnings.

Q 22. How do you stay up-to-date with the latest advancements in GIS technology?

Staying current in the rapidly evolving GIS field requires a multi-pronged approach. I actively participate in online communities like Esri Community (formerly GeoNet) and follow leading GIS blogs and publications, such as those from Esri and other prominent vendors. Attending conferences like the Esri User Conference (Esri UC) and regional GIS events is crucial for networking and learning about the latest innovations firsthand. I also actively pursue professional development opportunities, including online courses offered by platforms like Coursera and edX, and workshops focused on specific software updates or emerging technologies like AI integration in GIS. Finally, I believe in continuous self-directed learning, experimenting with new tools and techniques on personal projects to solidify my understanding and identify areas for improvement.

Q 23. What are some common applications of GIS in your field of expertise?

GIS applications are incredibly diverse, but in my field, I frequently use it for:

- Spatial Analysis for Conservation: Identifying suitable habitats for endangered species, analyzing deforestation patterns, and modeling climate change impacts on biodiversity. For example, I’ve used overlay analysis to map areas of high biodiversity overlapping with areas vulnerable to deforestation, prioritizing conservation efforts.

- Urban Planning and Infrastructure Management: Analyzing population density, optimizing transportation networks, and assessing the impact of development projects. I’ve assisted in designing efficient bus routes based on real-time traffic data integrated with GIS.

- Disaster Response and Risk Assessment: Mapping flood zones, assessing earthquake vulnerability, and providing real-time information to emergency responders. This often involves working with high-resolution imagery and incorporating data from various sources like weather satellites.

- Precision Agriculture: Analyzing soil conditions, optimizing crop yields, and monitoring irrigation systems. This has allowed me to contribute to precision farming strategies that reduce water usage and fertilizer costs.
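
The overlay analysis described under conservation can be illustrated with a minimal sketch. It assumes the biodiversity and deforestation-vulnerability layers have already been reclassified into co-registered 0/1 rasters on the same cell grid; the function name, layer names, and cell values are hypothetical, and in practice the overlay tools in ArcGIS or QGIS would do this on real rasters.

```python
from typing import List

Grid = List[List[int]]


def boolean_overlay(layer_a: Grid, layer_b: Grid) -> Grid:
    """Logical AND overlay: 1 where both 0/1 layers are 1, else 0.

    Both layers must be co-registered rasters with identical dimensions.
    """
    if len(layer_a) != len(layer_b) or any(
        len(row_a) != len(row_b) for row_a, row_b in zip(layer_a, layer_b)
    ):
        raise ValueError("input rasters must share the same dimensions")
    return [
        [1 if a == 1 and b == 1 else 0 for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(layer_a, layer_b)
    ]


# Hypothetical 3x3 layers (1 = high biodiversity / vulnerable to deforestation)
biodiversity = [[1, 1, 0],
                [0, 1, 1],
                [0, 0, 1]]
vulnerability = [[1, 0, 0],
                 [0, 1, 1],
                 [1, 0, 0]]

priority = boolean_overlay(biodiversity, vulnerability)
print(priority)  # [[1, 0, 0], [0, 1, 1], [0, 0, 0]]
```

Cells equal to 1 in the result are candidate priority areas for conservation.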

Q 24. Explain your understanding of spatial statistics.

Spatial statistics involves applying statistical methods to geographically referenced data to understand spatial patterns, relationships, and processes. It moves beyond simply mapping data by quantifying spatial autocorrelation (the degree to which nearby locations are similar), identifying spatial clusters (hotspots and coldspots), and analyzing the relationships between variables across space. For instance, we might use spatial regression to model the relationship between crime rates and socioeconomic factors, accounting for spatial dependencies to avoid misleading conclusions. Common techniques include the Getis-Ord Gi* statistic for hotspot analysis, Moran’s I for measuring spatial autocorrelation, and geographically weighted regression (GWR), a spatial regression model that accounts for spatial heterogeneity by letting relationships between variables vary from place to place.
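
Moran’s I can be computed directly from its definition. The sketch below is a minimal version of the global statistic, assuming a binary weights matrix in which adjacent zones get weight 1; the `line_contiguity` helper and the sample values are hypothetical. It returns only the index, not a significance test.

```python
from typing import List, Sequence


def morans_i(values: Sequence[float], weights: Sequence[Sequence[float]]) -> float:
    """Global Moran's I.

    I = (n / W) * sum_ij w_ij (x_i - mean)(x_j - mean) / sum_i (x_i - mean)^2,
    where W is the sum of all weights and the diagonal of `weights` is 0.
    """
    n = len(values)
    if n < 2 or len(weights) != n or any(len(row) != n for row in weights):
        raise ValueError("need at least two values and an n x n weights matrix")
    mean = sum(values) / n
    dev = [x - mean for x in values]
    total_weight = sum(sum(row) for row in weights)
    variance_term = sum(d * d for d in dev)
    if total_weight == 0 or variance_term == 0:
        raise ValueError("weights must not all be zero and values must vary")
    cross_products = sum(
        weights[i][j] * dev[i] * dev[j] for i in range(n) for j in range(n)
    )
    return (n / total_weight) * (cross_products / variance_term)


def line_contiguity(n: int) -> List[List[float]]:
    """Hypothetical binary weights for n zones along a transect: neighbours are i-1 and i+1."""
    return [[1.0 if abs(i - j) == 1 else 0.0 for j in range(n)] for i in range(n)]


print(round(morans_i([1, 2, 3, 4], line_contiguity(4)), 3))  # 0.333 (similar values cluster)
print(round(morans_i([1, 4, 1, 4], line_contiguity(4)), 3))  # -1.0 (neighbours differ)
```

Positive values indicate that similar values cluster, negative values indicate that neighbours tend to differ, and with no spatial autocorrelation the expected value is -1/(n - 1). Before calling a pattern significant, a permutation test or z-score is still needed; spatial statistics libraries such as PySAL provide these.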

Q 25. What is your experience with geoprocessing tools and scripting?

I have extensive experience with geoprocessing tools and scripting within ArcGIS and QGIS environments. My skills encompass a wide range of tools including spatial joins, overlay analysis (intersection, union, clip), buffering, proximity analysis, and raster calculations. I’m proficient in Python scripting, using libraries like ArcPy (for ArcGIS) and PyQGIS (for QGIS) to automate repetitive tasks, build custom geoprocessing tools, and integrate GIS with other data sources. For example, I developed a Python script that automatically processed hundreds of satellite images, extracted relevant features, and generated reports for vegetation change monitoring. This significantly increased efficiency compared to manual processing.
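
The vegetation-change script itself is not reproduced here, but its core calculation can be sketched. The sketch assumes NDVI, (NIR - Red) / (NIR + Red), as the vegetation index, and represents each scene as a hypothetical pair of red and near-infrared band lists instead of reading real raster files (a real script would typically read bands with a library such as GDAL or rasterio). All function names, plot names, and values are illustrative.

```python
from typing import Dict, List, Tuple

# A hypothetical scene: (red band, near-infrared band) as flat lists of pixel values.
Scene = Tuple[List[float], List[float]]


def ndvi(red: float, nir: float) -> float:
    """NDVI for one pixel; returns 0.0 when both bands are 0 to avoid dividing by zero."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total


def mean_ndvi(scene: Scene) -> float:
    red, nir = scene
    if not red or len(red) != len(nir):
        raise ValueError("bands must be non-empty and the same length")
    return sum(ndvi(r, n) for r, n in zip(red, nir)) / len(red)


def vegetation_change_report(before: Dict[str, Scene], after: Dict[str, Scene]) -> Dict[str, float]:
    """Mean NDVI change (after minus before) for each area imaged on both dates."""
    return {
        area: mean_ndvi(after[area]) - mean_ndvi(before[area])
        for area in sorted(before.keys() & after.keys())
    }


before = {"plot_a": ([10, 10], [30, 30]), "plot_b": ([20], [60])}
after = {"plot_a": ([20, 20], [30, 30]), "plot_b": ([20], [60]), "plot_c": ([5], [5])}

report = vegetation_change_report(before, after)
print({area: round(change, 3) for area, change in report.items()})
# {'plot_a': -0.3, 'plot_b': 0.0}
```

Areas missing from either date (here `plot_c`) are skipped, and a negative change flags vegetation loss worth reviewing.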

Q 26. How do you handle large datasets in GIS?

Handling large datasets in GIS requires a strategic approach focusing on data management, efficient processing techniques, and appropriate software. This involves:

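- Data Partitioning: Dividing the dataset into smaller, manageable chunks (e.g., spatial tiles) that can be processed independently. This keeps memory usage bounded and allows chunks to be processed in parallel, which can significantly reduce processing time.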

- Database Management Systems (DBMS): Utilizing spatial databases like PostGIS or Oracle Spatial to store and manage large datasets efficiently. They are optimized for spatial queries and analysis.

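- Data Compression: Employing appropriate compression techniques to reduce storage requirements and disk or network I/O, which often speeds up reading and writing data. Formats differ in the compression methods they support; GeoTIFF, for example, supports lossless LZW and DEFLATE compression.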

- Cloud Computing: Leveraging cloud platforms like AWS or Azure for processing and storage when dealing with exceptionally large datasets that exceed local resources.

- Parallel Processing: Utilizing multi-core processors and distributed computing frameworks to reduce processing time.

The choice of strategy depends on the size of the dataset, the available resources, and the specific analytical task.
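
Partitioning and parallel processing combine naturally into a split, process, merge pattern. The sketch below is a minimal illustration under stated assumptions: the grid is an in-memory list so the example stays self-contained, whereas a real workflow would read each window from disk (windowed reads, which libraries such as GDAL and rasterio support) so that only one tile is in memory at a time. The function names and tile size are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Iterator, List, Tuple

Grid = List[List[float]]
Window = Tuple[int, int, int, int]  # (row_start, row_stop, col_start, col_stop)


def tile_windows(n_rows: int, n_cols: int, tile_size: int) -> Iterator[Window]:
    """Yield non-overlapping windows that together cover the whole grid."""
    for r in range(0, n_rows, tile_size):
        for c in range(0, n_cols, tile_size):
            yield r, min(r + tile_size, n_rows), c, min(c + tile_size, n_cols)


def summarize_tile(grid: Grid, window: Window) -> Tuple[float, int, float]:
    """Partial result for one tile: (sum, cell count, max). Only this tile's cells are read."""
    r0, r1, c0, c1 = window
    cells = [grid[r][c] for r in range(r0, r1) for c in range(c0, c1)]
    return sum(cells), len(cells), max(cells)


def summarize_grid(grid: Grid, tile_size: int = 256, workers: int = 4) -> Tuple[float, float]:
    """Partition into tiles, summarize the tiles concurrently, then merge into (mean, max)."""
    windows = list(tile_windows(len(grid), len(grid[0]), tile_size))
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(lambda w: summarize_tile(grid, w), windows))
    total = sum(p[0] for p in partials)
    count = sum(p[1] for p in partials)
    return total / count, max(p[2] for p in partials)


# Hypothetical 10x10 "elevation" grid with values 0..99
grid = [[float(r * 10 + c) for c in range(10)] for r in range(10)]
print(summarize_grid(grid, tile_size=3))  # (49.5, 99.0)
```

Merging partial sums and counts, rather than averaging per-tile means, keeps the result exact even though edge tiles are smaller. Threads keep the sketch simple, but for CPU-bound pure-Python work the GIL limits speedups, so a process pool or a distributed framework is the more likely choice at scale.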

Q 27. Explain your knowledge of different map types (e.g., thematic, topographic).

Different map types serve distinct purposes.

- Thematic Maps: These highlight a particular theme or attribute, such as population density, rainfall patterns, or vegetation types. Choropleth maps, using color shading to represent data values across areas, are a common example. Dot density maps use dots to show the concentration of a phenomenon. Isopleth maps use lines to connect points of equal value (e.g., contour lines on a topographic map).

- Topographic Maps: These display the shape and elevation of the Earth’s surface, using contour lines to represent elevation levels, with additional features like rivers, roads, and buildings. In effect, they represent three-dimensional terrain on a two-dimensional plane.

- Reference Maps: These show the location of features, but usually without emphasis on any specific theme. Examples include road maps, political maps showing boundaries, and atlas maps.

The choice of map type depends critically on the data and the message intended to be conveyed.
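
To show how a choropleth turns data values into color shading, here is a minimal sketch using equal-interval classification, which is only one of several common schemes (quantile and natural breaks are others). The district names, densities, and hex palette are hypothetical.

```python
from bisect import bisect_left
from typing import Dict, List


def equal_interval_breaks(values: List[float], n_classes: int) -> List[float]:
    """Upper bound of each of n_classes equal-width classes spanning min..max."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_classes
    return [lo + width * (k + 1) for k in range(n_classes)]


def classify(value: float, breaks: List[float]) -> int:
    """0-based class: the first class whose upper bound is >= value (clamped to the last class)."""
    return min(bisect_left(breaks, value), len(breaks) - 1)


# Hypothetical population densities (people per km^2) per district
densities: Dict[str, float] = {"North": 12, "East": 48, "South": 95, "West": 160}
palette = ["#fee5d9", "#fcae91", "#fb6a4a", "#cb181d"]  # light to dark, one shade per class

breaks = equal_interval_breaks(list(densities.values()), len(palette))
shades = {name: palette[classify(v, breaks)] for name, v in densities.items()}
print(breaks)  # [49.0, 86.0, 123.0, 160.0]
print(shades)  # {'North': '#fee5d9', 'East': '#fee5d9', 'South': '#fb6a4a', 'West': '#cb181d'}
```

Note that no district falls in the second class here; with skewed data, the choice of classification scheme can change the map's message considerably.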

Q 28. Describe your familiarity with spatial decision support systems.

Spatial Decision Support Systems (SDSS) are computer-based systems designed to help decision-makers solve complex spatial problems. They integrate GIS with other data sources, modeling tools, and user interfaces to support informed decision-making. For example, an SDSS might combine data on land use, environmental factors, and economic conditions to help identify optimal locations for new infrastructure projects. It can also include scenario-planning capabilities that let users evaluate the potential impacts of alternative decisions. I have used SDSS for land-use planning, environmental impact assessment, and resource management, where these scenario tools made it possible to model the consequences of different policy options before implementation.
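
One common building block for site selection in an SDSS is a weighted linear combination of criterion scores, with each scenario expressed as a different set of weights. The sketch below assumes criteria have already been normalized to 0..1 (1 = most suitable); the site names, criteria, scores, and scenario weights are all hypothetical.

```python
from typing import Dict, List

Scores = Dict[str, Dict[str, float]]  # site -> criterion -> normalized score (0..1)


def suitability(scores: Scores, weights: Dict[str, float]) -> Dict[str, float]:
    """Weighted linear combination of criterion scores for each site.

    Every site must have a score for every weighted criterion.
    """
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return {
        site: sum(w * criteria[name] for name, w in weights.items()) / total
        for site, criteria in scores.items()
    }


def rank_sites(scores: Scores, weights: Dict[str, float]) -> List[str]:
    """Sites ordered from most to least suitable under one set of weights."""
    result = suitability(scores, weights)
    return sorted(result, key=result.get, reverse=True)


# Hypothetical candidate sites with pre-normalized criterion scores
sites: Scores = {
    "site_a": {"land_use": 0.9, "environment": 0.3, "economic": 0.8},
    "site_b": {"land_use": 0.6, "environment": 0.9, "economic": 0.5},
    "site_c": {"land_use": 0.4, "environment": 0.6, "economic": 0.9},
}

# Each scenario is an alternative weighting that decision-makers might compare
scenarios = {
    "growth": {"land_use": 0.3, "environment": 0.2, "economic": 0.5},
    "conservation": {"land_use": 0.2, "environment": 0.6, "economic": 0.2},
}

for name, weights in scenarios.items():
    print(name, rank_sites(sites, weights))
# growth ['site_a', 'site_c', 'site_b']
# conservation ['site_b', 'site_c', 'site_a']
```

The same data yield different preferred sites under each scenario, which is the kind of comparison scenario planning is meant to make visible before a decision is taken.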

Key Topics to Learn for Geographic Knowledge and Expertise Interview

- Geographic Information Systems (GIS): Understanding fundamental GIS concepts, data structures (vector, raster), and common software (ArcGIS, QGIS). Practical application: Analyzing spatial data to identify trends or patterns in population distribution, resource management, or environmental impact.

- Cartography and Map Design: Principles of map projection, symbolization, and cartographic communication. Practical application: Creating effective and informative maps for various audiences and purposes, considering readability and data representation.

- Spatial Analysis Techniques: Familiarity with techniques like overlay analysis, buffering, proximity analysis, and network analysis. Practical application: Solving real-world problems such as site selection, route optimization, or identifying areas at risk from natural hazards.

- Remote Sensing and Imagery Interpretation: Understanding satellite and aerial imagery, image processing techniques, and their applications in environmental monitoring, urban planning, and resource assessment. Practical application: Analyzing satellite imagery to assess deforestation rates or monitor changes in land cover.

- Geographic Data Modeling and Database Management: Understanding different data models (e.g., relational, object-oriented) and database management systems used in storing and managing geographic data. Practical application: Designing and implementing efficient databases for large-scale geographic projects.

- Geovisualization and Presentation Skills: Effectively communicating geographic information through maps, charts, graphs, and presentations. Practical application: Presenting complex spatial data to a non-technical audience in a clear and concise manner.

- Spatial Statistics and Data Analysis: Understanding statistical methods used in analyzing spatial data, including spatial autocorrelation and regression. Practical application: Identifying statistically significant patterns and relationships in geographic data.
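
Network analysis for route optimization usually rests on a shortest-path algorithm. As an illustration, the sketch below uses Dijkstra’s algorithm, a standard choice but not necessarily what any particular GIS package uses internally, on a hypothetical road network with travel times as edge costs. It assumes all costs are non-negative.

```python
import heapq
from typing import Dict, List, Tuple

Graph = Dict[str, List[Tuple[str, float]]]


def build_network(edges: List[Tuple[str, str, float]]) -> Graph:
    """Undirected adjacency list from (node, node, cost) road segments."""
    graph: Graph = {}
    for a, b, cost in edges:
        graph.setdefault(a, []).append((b, cost))
        graph.setdefault(b, []).append((a, cost))
    return graph


def shortest_route(graph: Graph, start: str, goal: str) -> Tuple[float, List[str]]:
    """Dijkstra's algorithm: (total cost, node path) of the cheapest route."""
    best = {start: 0.0}
    previous: Dict[str, str] = {}
    queue = [(0.0, start)]
    settled = set()
    while queue:
        cost, node = heapq.heappop(queue)
        if node in settled:
            continue  # stale queue entry superseded by a cheaper one
        settled.add(node)
        if node == goal:
            path = [goal]
            while path[-1] != start:
                path.append(previous[path[-1]])
            return cost, path[::-1]
        for neighbour, step in graph.get(node, []):
            candidate = cost + step
            if candidate < best.get(neighbour, float("inf")):
                best[neighbour] = candidate
                previous[neighbour] = node
                heapq.heappush(queue, (candidate, neighbour))
    raise ValueError(f"no route from {start} to {goal}")


# Hypothetical road segments with travel times in minutes
network = build_network([
    ("Depot", "A", 4), ("Depot", "B", 1), ("B", "A", 2),
    ("A", "C", 1), ("B", "C", 5), ("C", "Clinic", 3),
])
print(shortest_route(network, "Depot", "Clinic"))  # (7.0, ['Depot', 'B', 'A', 'C', 'Clinic'])
```

The direct Depot to A segment (4 minutes) loses to the detour through B (3 minutes), which is the kind of non-obvious result route optimization is meant to surface.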

Next Steps

Mastering Geographic Knowledge and Expertise opens doors to exciting and impactful career opportunities in fields like environmental science, urban planning, transportation, and market research. A strong foundation in these areas significantly enhances your employability and allows you to contribute meaningfully to your chosen field. To maximize your job prospects, focus on creating an ATS-friendly resume that effectively highlights your skills and experience. ResumeGemini is a trusted resource that can help you build a professional and impactful resume. They provide examples of resumes tailored to Geographic Knowledge and Expertise to guide you through the process, ensuring your qualifications shine.
