Every successful interview starts with knowing what to expect. In this blog, we’ll take you through the top Hyperspectral Data Analysis interview questions, breaking them down with expert tips to help you deliver impactful answers. Step into your next interview fully prepared and ready to succeed.

Questions Asked in Hyperspectral Data Analysis Interview

Q 1. Explain the concept of hyperspectral imaging and its advantages over multispectral imaging.

Hyperspectral imaging captures images across a large number of contiguous, narrow spectral bands (typically hundreds), creating a ‘spectrum’ for each pixel. Think of it like taking hundreds of photographs of the same scene, each through a slightly different color filter. This is in stark contrast to multispectral imaging, which uses only a few broad spectral bands (like RGB in a typical camera). This increased spectral resolution is the key advantage.

Multispectral imaging, while useful, provides a limited view of the scene’s spectral signature. Hyperspectral imaging, however, provides much richer information, allowing for more precise identification and characterization of materials. For instance, multispectral imagery might struggle to separate spectrally similar vegetation types, or healthy plants from mildly stressed ones, while hyperspectral imagery can often differentiate them based on subtle variations in their spectral reflectance.

- Higher spectral resolution: Hyperspectral offers far more spectral bands than multispectral, leading to finer detail in material identification.

- Improved material identification: Subtle spectral differences become apparent, enabling better classification and discrimination.

- Quantitative analysis: Hyperspectral data allows for detailed quantitative analysis of material properties.

Q 2. Describe different atmospheric correction methods used in hyperspectral data processing.

Atmospheric correction is crucial in hyperspectral data processing because the atmosphere interacts with light, altering the spectral signature of objects. Several methods exist to compensate for this.

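- Empirical and Scene-Based Methods: These methods estimate atmospheric effects from reference targets or from the image itself. The Empirical Line Method fits a per-band linear relationship between the sensor’s measurements and field-measured reflectance of calibration targets (typically one bright and one dark). Dark Object Subtraction, a related scene-based method, assumes the darkest pixels (e.g., deep water or shadow) should have near-zero reflectance and subtracts their signal as an estimate of atmospheric path radiance. Both are relatively simple but require careful selection of the reference.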

- Radiative Transfer Models (RTMs): These sophisticated models simulate the interaction of light with the atmosphere, offering a physically-based approach. They are more accurate but require detailed atmospheric parameters (e.g., water vapor, aerosol content), which are often obtained from weather stations or atmospheric profiling sensors. MODTRAN and 6S are commonly used RTMs.

- Statistical Methods: Techniques like Principal Component Analysis (PCA) can be used to identify and remove atmospheric signatures from hyperspectral data. These methods are often used in conjunction with other atmospheric correction methods.

The choice of method depends on the specific application and available data. For instance, simple empirical methods may suffice for relatively clear atmospheric conditions, while RTMs are preferable for complex atmospheric scenarios.
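To make the empirical line idea concrete, here is a minimal sketch that fits a per-band gain and offset from two calibration targets whose reflectance is assumed to have been measured in the field. The function name `empirical_line` and all numbers are made up for illustration; real workflows would average many pixels per target and check the fit quality.

```python
import numpy as np

def empirical_line(radiance_cube, target_radiance, target_reflectance):
    """Convert at-sensor values to reflectance with a per-band linear fit.

    radiance_cube:      (rows, cols, bands) at-sensor values
    target_radiance:    (n_targets, bands) image spectra of the calibration targets
    target_reflectance: (n_targets, bands) field-measured reflectance of the same targets
    """
    n_bands = radiance_cube.shape[-1]
    gain = np.empty(n_bands)
    offset = np.empty(n_bands)
    for b in range(n_bands):
        # Least-squares line per band: reflectance = gain * radiance + offset
        gain[b], offset[b] = np.polyfit(
            target_radiance[:, b], target_reflectance[:, b], deg=1
        )
    return radiance_cube * gain + offset

# Synthetic scene: a known linear "atmosphere" is applied, then recovered.
rng = np.random.default_rng(0)
true_reflectance = rng.uniform(0.05, 0.6, size=(4, 5, 3))
true_gain = np.array([0.010, 0.012, 0.015])      # hypothetical values
true_offset = np.array([-0.02, -0.03, -0.01])    # hypothetical values
radiance = (true_reflectance - true_offset) / true_gain

# One dark and one bright calibration target (hypothetical reflectances).
target_reflectance = np.array([[0.05, 0.05, 0.05], [0.60, 0.60, 0.60]])
target_radiance = (target_reflectance - true_offset) / true_gain

estimated = empirical_line(radiance, target_radiance, target_reflectance)
```

With only two targets the line passes through both exactly; adding more targets turns the same call into a least-squares fit.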

Q 3. What are the common challenges in hyperspectral data processing, and how do you address them?

Hyperspectral data processing presents unique challenges. High dimensionality (large number of bands) and data volume are primary concerns, leading to issues like:

- High dimensionality: Processing such large datasets requires significant computational resources and can lead to the ‘curse of dimensionality’ where relationships are difficult to discern.

- Data noise and artifacts: Hyperspectral sensors are susceptible to noise, striping, and other artifacts that need to be addressed.

- Computational complexity: Many algorithms for processing hyperspectral data are computationally expensive.

- Data storage and management: Storing and managing large hyperspectral datasets can be a logistical challenge.

These challenges are addressed through various techniques. Dimensionality reduction methods like PCA or Minimum Noise Fraction (MNF) transform the data into a smaller, more manageable dimension. Noise reduction techniques, such as median filtering and wavelet denoising, can improve data quality. Efficient algorithms and parallel processing capabilities help manage computational complexity. Finally, employing effective data compression and management strategies is essential for dealing with the large data volumes.

Q 4. Explain different techniques for hyperspectral image classification (e.g., supervised, unsupervised).

Hyperspectral image classification aims to assign labels (e.g., land cover types) to each pixel. Two main approaches exist:

- Supervised Classification: This method requires labeled training data, where the spectral signatures of known materials are used to train a classifier. Common algorithms include Support Vector Machines (SVMs), Random Forests, and Maximum Likelihood Classification (MLC).

- Unsupervised Classification: This method doesn’t require labeled training data and relies on the inherent structure of the data. Algorithms like k-means clustering group pixels based on spectral similarity.

Supervised methods generally offer higher accuracy, but require labeled data which can be time-consuming and expensive to acquire. Unsupervised methods are easier to apply but may yield less accurate results and require careful interpretation of the clusters.

Example: In precision agriculture, supervised classification might use labeled samples of different crops to map crop types in a field. Unsupervised classification could be used for preliminary analysis to identify potential areas of interest.
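As an illustration of the unsupervised route, the following sketch clusters pixel spectra with a plain k-means loop written in NumPy. It assumes a small synthetic cube with two made-up materials; the helper names are hypothetical, and a production workflow would more likely rely on an established library implementation.

```python
import numpy as np

def nearest_center(pixels, centers):
    """Index of the closest cluster center for every pixel spectrum."""
    dists = np.linalg.norm(pixels[:, None, :] - centers[None, :, :], axis=2)
    return dists.argmin(axis=1)

def kmeans_spectra(pixels, k, n_iter=20, seed=0):
    """Cluster (n_pixels, n_bands) spectra into k groups by spectral similarity."""
    rng = np.random.default_rng(seed)
    # Start from k randomly chosen pixels.
    centers = pixels[rng.choice(len(pixels), size=k, replace=False)].astype(float)
    for _ in range(n_iter):
        labels = nearest_center(pixels, centers)
        for j in range(k):
            members = pixels[labels == j]
            if len(members):  # keep the old center if a cluster is empty
                centers[j] = members.mean(axis=0)
    return nearest_center(pixels, centers), centers

# Synthetic 10 x 10 cube with 4 bands: top half material A, bottom half material B.
rng = np.random.default_rng(42)
spectrum_a = np.array([0.05, 0.08, 0.45, 0.50])
spectrum_b = np.array([0.20, 0.25, 0.30, 0.35])
cube = np.empty((10, 10, 4))
cube[:5] = spectrum_a
cube[5:] = spectrum_b
cube += rng.normal(scale=0.005, size=cube.shape)

labels, centers = kmeans_spectra(cube.reshape(-1, 4), k=2)
label_map = labels.reshape(10, 10)  # cluster map with the image's spatial layout
```

The cluster numbers themselves carry no meaning; an analyst still has to decide which material each cluster represents.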

Q 5. Discuss the concept of spectral unmixing and its applications.

Spectral unmixing is a technique that decomposes the mixed pixels in a hyperspectral image into their constituent materials and their corresponding abundances. Think of it like separating the colors in a paint mixture to identify the individual pigments and their proportions.

Each pixel’s spectrum is modeled as a linear combination of pure material spectra (endmembers) and their fractional abundances. Algorithms like Non-negative Matrix Factorization (NMF) and Fully Constrained Least Squares (FCLS) are commonly employed. Endmember selection is a crucial step and can be done through methods like Pixel Purity Index (PPI) or Vertex Component Analysis (VCA).
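The linear mixing model can be sketched in a few lines. The example below is a simplification, not FCLS: it enforces only the sum-to-one constraint in closed form and omits the non-negativity constraint, which would need a non-negative least-squares solver. The endmember values and the function name are invented for illustration.

```python
import numpy as np

def unmix_sum_to_one(spectrum, endmembers):
    """Least-squares abundances constrained to sum to one.

    spectrum:   (n_bands,) mixed pixel spectrum
    endmembers: (n_bands, n_endmembers) pure material spectra as columns
    """
    gram_inv = np.linalg.inv(endmembers.T @ endmembers)
    unconstrained = gram_inv @ endmembers.T @ spectrum
    ones = np.ones(endmembers.shape[1])
    # Lagrange-multiplier correction that pulls the solution onto sum(a) = 1.
    correction = gram_inv @ ones * (ones @ unconstrained - 1.0) / (ones @ gram_inv @ ones)
    return unconstrained - correction

# Three hypothetical endmembers sampled at five bands.
endmembers = np.array([
    [0.05, 0.30, 0.20],
    [0.08, 0.32, 0.25],
    [0.45, 0.35, 0.22],
    [0.50, 0.38, 0.30],
    [0.30, 0.40, 0.10],
])
true_abundances = np.array([0.6, 0.3, 0.1])
mixed_pixel = endmembers @ true_abundances  # noise-free linear mixture

abundances = unmix_sum_to_one(mixed_pixel, endmembers)
```

On noisy data this estimator can return small negative abundances, which is exactly the case the fully constrained variant is designed to handle.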

Applications: Spectral unmixing is used extensively in various fields, including:

- Geology: Identifying minerals and their concentrations.

- Remote Sensing: Mapping vegetation types and assessing their health.

- Precision agriculture: Determining crop types and their biomass.

Q 6. How do you handle noise and artifacts in hyperspectral data?

Noise and artifacts in hyperspectral data can significantly impact analysis results. Various techniques are used to mitigate their effects:

- Spatial Filtering: Techniques like median filtering and Gaussian filtering smooth the data to reduce noise. However, caution is needed to avoid blurring edges.

- Spectral Filtering: This approach operates on the spectral dimension to remove noise. Wavelet denoising is a popular technique.

- Atmospheric Correction: As previously discussed, proper atmospheric correction can help reduce noise caused by atmospheric effects.

- Outlier Detection and Removal: Statistical methods can identify and remove outlier pixels that are significantly different from their neighbors.

The specific techniques used depend on the type and characteristics of the noise and artifacts present in the data.
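As a small example of spatial filtering, the sketch below applies a 3 x 3 median filter to each band using only NumPy. It assumes a cube laid out as (rows, cols, bands) and pads the edges by repeating border pixels; the function names are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def median_filter_band(band, size=3):
    """Median-filter one 2D band with a size x size window."""
    pad = size // 2
    padded = np.pad(band, pad, mode="edge")  # repeat border pixels
    windows = sliding_window_view(padded, (size, size))
    return np.median(windows, axis=(-2, -1))

def median_filter_cube(cube, size=3):
    """Apply the spatial median filter to every band independently."""
    bands = [median_filter_band(cube[:, :, b], size) for b in range(cube.shape[2])]
    return np.stack(bands, axis=2)

# Flat synthetic scene with a single "hot" pixel in band 1.
cube = np.full((5, 5, 3), 0.2)
cube[2, 2, 1] = 5.0
filtered = median_filter_cube(cube)
```

The median removes isolated spikes like this one while leaving flat regions untouched, but larger windows will start to erode fine spatial detail.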

Q 7. What are the different types of sensors used for hyperspectral data acquisition?

Various sensors are used for hyperspectral data acquisition, each with its own strengths and limitations:

- Pushbroom Sensors: These sensors image one line of the scene at a time, with a 2D detector array recording the spatial dimension along one axis and the spectrum along the other; the platform’s forward motion builds up the image. They are common in airborne and spaceborne systems and, having no scanning mirror, allow longer dwell times and generally better signal-to-noise ratio.

- Whiskbroom Sensors: These sensors use a scanning mirror to sweep across the scene, acquiring data point by point. The moving parts add mechanical complexity, and the short dwell time per pixel tends to limit signal-to-noise ratio.

- Snapshot Sensors: These sensors acquire a complete spectral image in a single exposure. They are ideal for fast-moving applications but usually trade away some spatial or spectral resolution, and are often more expensive.

The selection of the sensor type depends on the specific application requirements, such as spatial resolution, spectral range, acquisition speed, and cost.

Q 8. Describe the process of calibrating a hyperspectral sensor.

Hyperspectral sensor calibration is a crucial step to ensure accurate and reliable data. It’s the process of correcting for systematic errors in the sensor’s measurements, ensuring that the recorded spectral values accurately reflect the true reflectance or radiance of the target material. This involves several steps.

- Dark Current Correction: Subtracting the signal measured when the sensor is completely dark to remove electronic noise. Imagine it like subtracting the background hum from a recording to hear the music clearly.

- White Reference Correction: Dividing the measured signal by the signal obtained from a known white reference (e.g., Spectralon panel). This accounts for variations in the sensor’s sensitivity across different wavelengths. Think of it as setting a baseline for the instrument’s response.

- Stray Light Correction: Removing the impact of light scattering within the sensor, which can lead to inaccurate spectral measurements. This is like cleaning a window to get a clear view.

- Geometric Correction: Correcting for distortions in the sensor’s image, ensuring that each pixel corresponds to a specific location on the ground. This is equivalent to aligning a distorted photograph to its actual shape.

- Atmospheric Correction: Removing the effects of the atmosphere (scattering and absorption) on the measured spectral signals to get values representative of the ground’s surface. Think of removing a haze from a photograph to see the landscape clearly.

The specific calibration procedures vary based on the sensor type and application. For instance, some sensors employ onboard calibration mechanisms, while others require external calibration targets. The goal remains consistent: to obtain radiometrically and geometrically accurate data for subsequent analysis.
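The dark current and white reference steps combine into one simple formula, reflectance = (raw - dark) / (white - dark). The sketch below assumes per-band dark and white reference spectra and a white panel with a known reflectance factor; the function and variable names, and all values, are illustrative.

```python
import numpy as np

def to_reflectance(raw, dark, white, white_reflectance=1.0):
    """Convert raw sensor counts to reflectance.

    raw:   (rows, cols, bands) raw counts of the scene
    dark:  (bands,) counts recorded with the sensor fully shuttered
    white: (bands,) counts recorded over the white reference panel
    white_reflectance: known reflectance of the panel (assumed 1.0 here)
    """
    span = (white - dark).astype(float)
    span = np.where(span == 0, np.nan, span)  # avoid division by zero
    return white_reflectance * (raw - dark) / span

# Synthetic check with made-up counts.
dark = np.array([100.0, 110.0, 105.0])
white = np.array([4000.0, 3800.0, 3500.0])
true_reflectance = np.array([[[0.1, 0.4, 0.5], [0.3, 0.3, 0.3]]])
raw = dark + true_reflectance * (white - dark)

reflectance = to_reflectance(raw, dark, white)
```

Bands where the white and dark readings coincide come back as NaN so that dead detector elements are easy to spot downstream.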

Q 9. Explain the difference between reflectance and radiance in hyperspectral data.

Reflectance and radiance are two fundamental concepts in hyperspectral imaging, often confused. They both represent the electromagnetic energy emanating from a target, but they differ significantly in their physical meaning and units.

Radiance is the amount of electromagnetic energy emitted, reflected, or transmitted by a surface per unit solid angle, per unit projected area, and per unit wavelength. It’s essentially the raw energy measured by the sensor at each wavelength and is affected by factors like illumination and atmospheric conditions. Think of it as the raw intensity of light hitting the sensor.

Reflectance is the ratio of the reflected radiant flux to the incident radiant flux. It’s a unitless quantity representing the fraction of incoming light that is reflected at each wavelength. It’s a surface property that describes the inherent reflectivity of a material, independent of illumination conditions. Think of it as the percentage of light reflected by an object, relative to the light shining on it.

In practice, we often aim to derive reflectance from measured radiance to achieve a consistent measurement that is independent of external illumination conditions. Atmospheric corrections are essential to this process.
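One common first step is converting radiance to top-of-atmosphere reflectance with the standard relation reflectance = pi * L * d^2 / (E_sun * cos(theta)), where L is radiance, d the Earth-Sun distance in astronomical units, E_sun the band's solar irradiance, and theta the solar zenith angle. The sketch below uses made-up irradiance values; note that it does not remove atmospheric effects, so surface reflectance still requires atmospheric correction.

```python
import numpy as np

def toa_reflectance(radiance, esun, sun_zenith_deg, earth_sun_dist_au=1.0):
    """Top-of-atmosphere reflectance from at-sensor spectral radiance.

    radiance: spectral radiance per band (e.g. W m^-2 sr^-1 um^-1)
    esun:     exoatmospheric solar irradiance per band (W m^-2 um^-1)
    """
    cos_zenith = np.cos(np.deg2rad(sun_zenith_deg))
    return np.pi * radiance * earth_sun_dist_au**2 / (esun * cos_zenith)

# Hypothetical per-band solar irradiance and a 30 degree solar zenith angle.
esun = np.array([1900.0, 1500.0, 1000.0])
sun_zenith = 30.0

# Radiance that a surface of reflectance 0.3 would produce with no atmosphere.
radiance = 0.3 * esun * np.cos(np.deg2rad(sun_zenith)) / np.pi

rho = toa_reflectance(radiance, esun, sun_zenith)
```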

Q 10. What are the key considerations for selecting appropriate algorithms for hyperspectral data analysis?

Selecting appropriate algorithms for hyperspectral data analysis depends critically on several factors.

- Data Characteristics: The spectral and spatial resolution of the data, the presence of noise, and the distribution of classes significantly impact algorithm choices. High dimensional data necessitates careful consideration of computational cost and the risk of overfitting.

- Application Goals: Are you aiming for classification, regression, anomaly detection, or target detection? The algorithm must align with the specific objective of the analysis. For example, a Support Vector Machine (SVM) might be suitable for classification, while Partial Least Squares Regression (PLSR) is appropriate for predictive modeling.

- Computational Resources: The computational complexity of an algorithm must be considered, especially when dealing with large hyperspectral datasets. Some algorithms are computationally intensive and may require high-performance computing resources.

- Interpretability: The need for interpretable results can influence algorithm selection. For example, simpler linear models might be preferred over complex deep learning models if understanding the relationships between spectral features and classes is crucial.

For example, if you have a large dataset with a high level of spectral variability and want to perform classification, a deep learning approach such as a Convolutional Neural Network (CNN) might be a suitable choice, but it needs to be tested against other classifiers and proper validation techniques must be used.

Q 11. Describe your experience with different hyperspectral data processing software (e.g., ENVI, PCI Geomatica).

I have extensive experience with various hyperspectral data processing software packages, including ENVI and PCI Geomatica. My experience ranges from data pre-processing to advanced analytical tasks.

ENVI: I’m proficient in using ENVI for various aspects of hyperspectral data analysis, including atmospheric correction (e.g., FLAASH), orthorectification, band math, spectral unmixing, and classification using various algorithms (e.g., Support Vector Machines, Maximum Likelihood Classifier). I’ve used ENVI’s extensive toolset to process and analyze numerous datasets for applications ranging from precision agriculture to mineral exploration. For example, I have used ENVI’s spectral libraries to identify various mineral types in hyperspectral imagery from mining sites.

PCI Geomatica: My experience with PCI Geomatica focuses primarily on geometric correction, orthorectification, and mosaicking of hyperspectral imagery using its robust geospatial processing capabilities. I found it particularly useful for handling large datasets and integrating hyperspectral data with other geospatial information. I often utilize the software’s capabilities for creating detailed orthomosaics and generating seamless hyperspectral image datasets.

Beyond these packages, I’m also familiar with other platforms like R and Python, utilizing packages like Spectroscopy and scikit-learn for specific analyses and algorithm development. This adaptability ensures that I can select the optimal tool for each project’s unique needs.

Q 12. How would you approach the problem of dimensionality reduction in hyperspectral data?

Dimensionality reduction is crucial in hyperspectral data analysis because the high number of spectral bands often leads to computational challenges and potential overfitting. Several techniques effectively reduce dimensionality while preserving essential information.

- Principal Component Analysis (PCA): This linear transformation finds the principal components (uncorrelated linear combinations of the original bands) that explain the most variance in the data. It is computationally efficient but may not effectively capture non-linear relationships.

- Minimum Noise Fraction (MNF): A transformation, similar to PCA, that orders components by signal-to-noise ratio rather than by variance, separating noise from signal. Discarding the noise-dominated components reduces dimensionality and improves the quality of subsequent analysis.

- Independent Component Analysis (ICA): This technique finds statistically independent components in the data, often revealing hidden structures or sources. It’s particularly useful for separating mixed signals.

- Feature Selection Techniques: These methods select a subset of the original bands based on their relevance to the problem at hand. Examples include recursive feature elimination (RFE) and filter methods like information gain or mutual information.

- Nonlinear Dimensionality Reduction: Techniques like Isomap or t-distributed Stochastic Neighbor Embedding (t-SNE) can effectively capture non-linear relationships between bands and reduce dimensionality while preserving local neighborhood structures.

The choice of technique often depends on the specific application and dataset characteristics. For instance, PCA is often a good starting point for its simplicity and speed, while more sophisticated techniques like MNF or ICA may be necessary when dealing with noisy or highly correlated data.
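As a starting point, PCA on a hyperspectral cube can be sketched with a singular value decomposition in NumPy. The example assumes a (rows, cols, bands) cube and uses synthetic data built from two hidden sources, so two components should capture nearly all the variance; the function name is hypothetical.

```python
import numpy as np

def pca_reduce(cube, n_components):
    """Project a (rows, cols, bands) cube onto its leading principal components."""
    rows, cols, bands = cube.shape
    pixels = cube.reshape(-1, bands)
    centered = pixels - pixels.mean(axis=0)
    # Rows of vt are the principal axes, ordered by decreasing variance.
    _, singular_values, vt = np.linalg.svd(centered, full_matrices=False)
    scores = centered @ vt[:n_components].T
    explained = singular_values**2 / np.sum(singular_values**2)
    return scores.reshape(rows, cols, n_components), explained[:n_components]

# Synthetic cube: 50 correlated bands driven by 2 latent sources plus weak noise.
rng = np.random.default_rng(1)
latent = rng.normal(size=(400, 2))
mixing = rng.uniform(0.1, 1.0, size=(2, 50))
pixels = latent @ mixing + 0.001 * rng.normal(size=(400, 50))
cube = pixels.reshape(20, 20, 50)

reduced, explained = pca_reduce(cube, n_components=2)
```

Inspecting `explained` is a quick way to decide how many components to keep before moving on to classification.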

Q 13. Explain your familiarity with various feature extraction techniques for hyperspectral images.

Feature extraction is crucial to extract meaningful information from hyperspectral data and improve classification or regression accuracy. I am familiar with several techniques.

- Spectral Indices: These are simple combinations of spectral bands designed to highlight specific phenomena. Examples include the Normalized Difference Vegetation Index (NDVI) for vegetation analysis, and Normalized Difference Water Index (NDWI) for water detection.

NDVI = (NIR - Red) / (NIR + Red)

where NIR is the near-infrared band and Red is the red band. These are effective and computationally efficient.

- Spectral Mixture Analysis (SMA): This technique decomposes the spectra of mixed pixels into the proportions of their constituent materials. It is particularly useful for identifying materials in urban areas or areas with dense vegetation cover.

- Wavelet Transforms: These decompose the spectral signal into different frequency components, helping to identify subtle spectral features. They can be effective for removing noise and highlighting details.

- Higher-Order Statistics: These methods analyze higher-order moments (beyond the mean and variance) of the spectral data to capture more complex relationships between bands and improve classification accuracy.

- Deep Learning Features: Convolutional Neural Networks (CNNs) can learn complex features directly from raw spectral data, often outperforming traditional feature extraction methods.

The choice of feature extraction method depends on the specific application and the available computational resources. For example, using simple spectral indices is faster and more efficient for large datasets compared to computationally-intensive deep learning methods. The effectiveness of a chosen feature extraction method is often validated through experimental analysis and comparison.

Q 14. How do you evaluate the performance of a hyperspectral image classification algorithm?

Evaluating the performance of a hyperspectral image classification algorithm requires a rigorous approach using appropriate metrics and validation strategies.

- Confusion Matrix: This matrix shows the counts of correctly and incorrectly classified pixels for each class. It provides detailed insights into the algorithm’s performance, revealing errors in both class-specific and overall terms.

- Overall Accuracy: This is the proportion of correctly classified pixels to the total number of pixels. It provides a general measure of algorithm performance, but it can be misleading in the case of imbalanced datasets.

- Kappa Coefficient: This metric corrects for random agreement and provides a more robust measure of classification accuracy, especially for imbalanced datasets.

- Producer’s Accuracy and User’s Accuracy: Producer’s accuracy measures the probability that a pixel belonging to a certain class is correctly classified, while user’s accuracy measures the probability that a pixel classified as belonging to a certain class is indeed from that class. These metrics provide class-specific insights into the performance of the algorithm.

- ROC Curve and AUC: The Receiver Operating Characteristic (ROC) curve and Area Under the Curve (AUC) are particularly useful for evaluating the performance of algorithms in binary classification problems.

- Cross-Validation: This technique involves dividing the dataset into multiple folds, training the algorithm on some folds and testing it on others, to obtain a more robust estimate of its generalization ability.

By combining these evaluation metrics and validation techniques, we obtain a comprehensive assessment of the algorithm’s performance and its suitability for the specific application.
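The main accuracy metrics all derive from the confusion matrix, as the following NumPy sketch shows. It assumes integer class labels and a matrix with reference classes as rows and predicted classes as columns; the labels are a toy example, not real results.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, n_classes):
    """Rows are reference classes, columns are predicted classes."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    np.add.at(cm, (y_true, y_pred), 1)
    return cm

def accuracy_report(cm):
    """Overall accuracy, kappa, producer's and user's accuracy from a confusion matrix."""
    total = cm.sum()
    correct = np.diag(cm)
    overall = correct.sum() / total
    producers = correct / cm.sum(axis=1)  # per reference class
    users = correct / cm.sum(axis=0)      # per predicted class
    # Agreement expected by chance, from the row and column marginals.
    expected = (cm.sum(axis=0) * cm.sum(axis=1)).sum() / total**2
    kappa = (overall - expected) / (1 - expected)
    return overall, kappa, producers, users

# Toy labels for ten validation pixels and three classes.
y_true = np.array([0, 0, 0, 0, 1, 1, 1, 2, 2, 2])
y_pred = np.array([0, 0, 0, 1, 1, 1, 2, 2, 2, 2])

cm = confusion_matrix(y_true, y_pred, n_classes=3)
overall, kappa, producers, users = accuracy_report(cm)
```

Here eight of ten pixels are correct, so overall accuracy is 0.8, while kappa comes out lower (about 0.70) because part of that agreement could occur by chance.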

Q 15. Describe your experience with programming languages relevant to hyperspectral data analysis (e.g., Python, MATLAB).

My expertise in hyperspectral data analysis heavily relies on Python and MATLAB. Python, with libraries like scikit-learn, NumPy, and spectral, provides a flexible and powerful environment for data preprocessing, feature extraction, classification, and visualization. I frequently use NumPy for efficient array manipulation and spectral for its specialized functions tailored to hyperspectral data. MATLAB, with its Image Processing Toolbox and Statistics and Machine Learning Toolbox, offers a strong alternative, especially for tasks involving advanced signal processing and visualization. I’ve used it extensively for tasks requiring high-performance computation, particularly in scenarios with very large datasets. For example, in one project involving the analysis of thousands of hyperspectral images, MATLAB’s parallel processing capabilities significantly reduced processing time compared to a purely Python-based approach. My proficiency extends beyond simply using these tools; I can write efficient and optimized code to handle the computationally intensive nature of hyperspectral data analysis.

Q 16. Discuss your understanding of different spectral indices and their applications.

Spectral indices are mathematical combinations of different wavelengths in a hyperspectral image, designed to highlight specific features or properties of interest. They act like ‘filters’, allowing us to focus on particular phenomena. For example, the Normalized Difference Vegetation Index, NDVI = (NIR - Red) / (NIR + Red), uses near-infrared (NIR) and red wavelengths to assess vegetation health. Higher NDVI values generally indicate denser, healthier vegetation. Other commonly used indices include the Normalized Difference Water Index (NDWI) for water detection, and the Normalized Difference Red Edge Index (NDRE) for plant stress analysis. The choice of index depends heavily on the application. For instance, in precision agriculture, NDVI helps monitor crop growth and flag areas that may need irrigation, while NDWI is widely used in hydrological studies for mapping water bodies. My experience encompasses a wide array of indices, and I’m adept at designing custom indices tailored to specific research needs, considering the unique spectral signatures of the materials being analyzed.
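With hyperspectral data, an index like NDVI is computed by first picking the bands closest to the wavelengths of interest. The sketch below assumes a (rows, cols, bands) reflectance cube with a matching wavelength vector; the 670 nm and 800 nm band centers are illustrative choices, not a standard.

```python
import numpy as np

def band_index(wavelengths, target_nm):
    """Index of the band whose center wavelength is closest to target_nm."""
    return int(np.argmin(np.abs(wavelengths - target_nm)))

def ndvi(cube, wavelengths, red_nm=670.0, nir_nm=800.0):
    """NDVI = (NIR - Red) / (NIR + Red), NaN where the denominator is zero."""
    red = cube[:, :, band_index(wavelengths, red_nm)].astype(float)
    nir = cube[:, :, band_index(wavelengths, nir_nm)].astype(float)
    denom = nir + red
    out = np.full(denom.shape, np.nan)
    np.divide(nir - red, denom, out=out, where=denom != 0)
    return out

# Hypothetical sensor with 10 nm bands from 400 to 1000 nm.
wavelengths = np.arange(400.0, 1001.0, 10.0)
red_b = band_index(wavelengths, 670.0)
nir_b = band_index(wavelengths, 800.0)

# Three pixels: vegetation-like, soil-like, and an empty (all-zero) pixel.
cube = np.zeros((1, 3, wavelengths.size))
cube[0, 0, red_b], cube[0, 0, nir_b] = 0.05, 0.45
cube[0, 1, red_b], cube[0, 1, nir_b] = 0.25, 0.30

index_map = ndvi(cube, wavelengths)
```

The same pattern extends to custom indices: swap the target wavelengths or the arithmetic while keeping the nearest-band lookup.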

Q 17. How would you handle missing data in a hyperspectral dataset?

Missing data in hyperspectral datasets is a common challenge. The approach depends on the nature and extent of the missing data. Simple methods like replacing missing values with the mean or median of the surrounding pixels can be used for small amounts of missing data, but these methods can distort the data if applied extensively. More sophisticated techniques include interpolation methods like spline interpolation or kriging, which predict missing values based on the spatial and spectral correlation of neighboring pixels. For larger gaps or structured missing data patterns, more advanced imputation techniques like machine learning algorithms (e.g., k-Nearest Neighbors, random forest) can be employed. The best method is often determined by the specific dataset characteristics and the acceptable level of data distortion. For example, in a remote sensing application where a cloud covers a portion of the image, spatial interpolation might be effective; however, for sensor malfunction resulting in sporadic missing bands, a more robust method such as machine learning-based imputation might be necessary. A critical step involves assessing the impact of the imputation method on downstream analyses to ensure data integrity.
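For sporadic missing bands, the simplest spectral approach is linear interpolation from the neighboring valid bands. The sketch below assumes missing samples are marked as NaN in a single pixel spectrum; the function name is hypothetical, and this is a baseline rather than a substitute for the more robust methods mentioned above.

```python
import numpy as np

def fill_missing_bands(spectrum, wavelengths):
    """Linearly interpolate NaN samples along the spectral axis of one pixel."""
    missing = np.isnan(spectrum)
    filled = spectrum.copy()
    if missing.all():
        return filled  # nothing to interpolate from
    filled[missing] = np.interp(
        wavelengths[missing], wavelengths[~missing], spectrum[~missing]
    )
    return filled

# Synthetic spectrum that varies linearly with wavelength, with two bands lost.
wavelengths = np.linspace(400.0, 1000.0, 7)
complete = 0.1 + 0.0005 * (wavelengths - 400.0)
damaged = complete.copy()
damaged[[2, 3]] = np.nan

repaired = fill_missing_bands(damaged, wavelengths)
```

Comparing `repaired` against held-out known values, as done here with `complete`, is one way to assess how much distortion an imputation method introduces.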

Q 18. Explain the concept of spatial and spectral resolution in hyperspectral imaging.

Spatial resolution refers to the size of the smallest discernible detail on the ground, expressed as the ground sample distance (GSD). A higher spatial resolution means smaller pixels and more detailed images, allowing for finer-scale analysis. Spectral resolution refers to the width and number of contiguous spectral bands recorded by the sensor. A higher spectral resolution means narrower bands and more detailed spectral information, providing a richer description of material properties. Think of it like this: spatial resolution determines the ‘size’ of the pixels and the amount of detail in the image itself, whereas spectral resolution determines how finely you can analyze the ‘color’ of each pixel in terms of its spectral signature. In practice, the ideal combination depends on the application. For instance, high spatial resolution is important for identifying individual plants in precision agriculture, while high spectral resolution is vital for differentiating subtle variations in mineral composition in geological surveys. Often, there’s a trade-off between spatial and spectral resolution—high resolution in one often comes at the expense of the other.

Q 19. What are the ethical considerations in using hyperspectral data?

Ethical considerations in using hyperspectral data are multifaceted. Privacy is a major concern, especially when data contains information about individuals or sensitive locations. For example, hyperspectral imagery could potentially be used to identify individuals based on their unique spectral signatures. Data security is another key issue; ensuring the confidentiality and integrity of hyperspectral data requires robust security measures. Bias in algorithms and data interpretation needs careful consideration. Algorithms trained on biased datasets may perpetuate existing societal biases. Transparency and accountability are also crucial; methods used to collect, process, and interpret data must be clearly documented and made accessible. Furthermore, there are environmental and social implications related to the use of hyperspectral data. For example, the application of hyperspectral imaging in surveillance should always be critically assessed in terms of potential misuse.

Q 20. Describe a project where you used hyperspectral data analysis to solve a real-world problem.

In a recent project, I used hyperspectral imaging to detect early signs of disease in citrus trees. We collected hyperspectral data from both healthy and diseased trees. Using machine learning techniques (specifically, Support Vector Machines), we trained a model to classify the trees based on their spectral signatures. The model achieved high accuracy in identifying trees with early stages of disease, even before visual symptoms were apparent. This allowed for timely intervention, potentially reducing crop losses significantly. The project showcased the power of hyperspectral data analysis for precision agriculture and early disease detection, demonstrating its applicability in optimizing resource allocation and improving yields. The resulting findings contributed to improved disease management practices and reduced the economic impact of citrus diseases.

Q 21. How do you ensure the accuracy and reliability of your hyperspectral data analysis results?

Ensuring the accuracy and reliability of hyperspectral data analysis results requires a multifaceted approach. It starts with meticulous data acquisition, including proper calibration and atmospheric correction to minimize systematic errors. Robust data preprocessing is crucial, handling issues like noise, outliers, and missing data. I employ rigorous validation techniques, including independent data sets for model testing and cross-validation methods to assess model generalization performance. Uncertainty analysis is critical to quantify the uncertainty associated with the results. Careful consideration of the limitations of the methods and assumptions used is paramount. Furthermore, comparing results with ground truth data or established benchmarks helps assess the accuracy of our interpretations. Transparency in data handling and analysis procedures is maintained throughout the process, creating reproducible and verifiable results. Regular quality control checks and peer review significantly enhance the reliability of the final conclusions drawn.

Q 22. What are some limitations of hyperspectral imaging?

Hyperspectral imaging, while powerful, has several limitations. One major hurdle is the sheer volume of data generated. A single hyperspectral image can contain hundreds or even thousands of spectral bands, leading to massive file sizes and demanding computational resources for processing and analysis. Think of it like trying to assemble a giant jigsaw puzzle with thousands of incredibly similar pieces – it takes a lot of time and processing power.
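A quick back-of-the-envelope calculation shows how fast the volume grows. The scene dimensions below are hypothetical, and 2 bytes per sample assumes 16-bit integers.

```python
def cube_size_gib(rows, cols, bands, bytes_per_sample=2):
    """Uncompressed size of a hyperspectral cube in GiB."""
    return rows * cols * bands * bytes_per_sample / 2**30

# Hypothetical 2000 x 2000 pixel scene with 224 bands stored as 16-bit integers.
size = cube_size_gib(rows=2000, cols=2000, bands=224)
print(f"{size:.2f} GiB")  # about 1.67 GiB for a single scene
```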

Another limitation is the relatively slow acquisition speed compared to traditional RGB imaging. This can be problematic for applications requiring real-time or near real-time analysis, such as monitoring dynamic processes. For example, accurately imaging a fast-moving object like a bird in flight using hyperspectral imaging could be challenging.

Furthermore, atmospheric effects like scattering and absorption can significantly distort the acquired spectra, making accurate data interpretation difficult. Imagine trying to see through a hazy fog – it obscures the detail. Sophisticated calibration and atmospheric correction techniques are crucial to mitigate this issue.

Finally, the cost of hyperspectral imaging systems can be prohibitive, limiting accessibility for researchers and businesses with limited budgets. The specialized sensors, data processing software, and expertise needed all contribute to this high cost.

Q 23. Explain your understanding of different data formats used for hyperspectral data.

Hyperspectral data comes in various formats, each with its strengths and weaknesses. A common format is ENVI’s .hdr/.dat format, where the .hdr file contains metadata like spectral range, spatial resolution, and calibration information, while the .dat file holds the actual spectral data. This format is widely used and well-supported by many processing software packages.

Another popular format is the GeoTIFF format (.tif), which integrates geospatial information (latitude, longitude, etc.) directly into the image file. This is particularly useful for applications requiring geographic referencing, like precision agriculture or environmental monitoring. Think of it as having the image’s location embedded directly in the file – very useful for location-specific analysis.

Furthermore, raw data from hyperspectral sensors often needs to be converted into a usable format, frequently a multi-dimensional array. This array might be represented as a NumPy array in Python, or as an equivalent data structure in other programming languages. This allows flexible manipulation and processing using various algorithms.

The choice of data format depends heavily on the specific application and the software being used. Understanding these different formats is essential for efficient data management and analysis.

Q 24. How familiar are you with cloud-based platforms for hyperspectral data processing?

I have extensive experience with cloud-based platforms for hyperspectral data processing. Platforms like Google Earth Engine and Amazon Web Services (AWS) offer scalable computing resources, making it feasible to handle the vast datasets involved in hyperspectral analysis. They often come with pre-built algorithms and tools, which can significantly accelerate the processing workflow. For example, Google Earth Engine hosts a large catalog of pre-processed satellite imagery, including some hyperspectral collections, which streamlines accessing and analyzing the data.

Cloud platforms also make it practical to process large datasets in parallel. Imagine trying to process a terabyte-sized hyperspectral image on a single computer: it could take days or even weeks. Distributing the task across multiple machines can shorten that to hours.

Furthermore, cloud-based storage offers cost-effective solutions for storing and managing large hyperspectral datasets. Because the data is accessible from any location and the platforms include collaborative features, geographically dispersed teams can work on the same project far more easily.

Q 25. Discuss your experience with parallel computing for hyperspectral data analysis.

Parallel computing is crucial for hyperspectral data analysis because of the enormous data volumes involved. I’ve used OpenMP for shared-memory parallelism across the cores of a single machine and MPI (Message Passing Interface) to distribute work across the nodes of a cluster. Because many hyperspectral operations are applied independently to each pixel or tile, they parallelize well, which significantly reduces processing time for large datasets and complex algorithms.

For instance, I’ve implemented parallel algorithms for atmospheric correction, spectral unmixing, and classification. In one project involving large-area vegetation mapping, parallelization cut the processing time from several days to a few hours, which let us complete the project well within the deadline.
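
The pattern behind this kind of speed-up can be sketched in a few lines. The example below is not MPI or OpenMP: it swaps in Python's standard-library thread pool purely for illustration, relying on the fact that NumPy releases the GIL in many of its array operations. The function names, the synthetic data, and the choice of the spectral angle as the per-pixel computation are all assumptions made for this sketch.

```python
from concurrent.futures import ThreadPoolExecutor
import numpy as np

def spectral_angle(block, reference):
    """Angle in radians between each pixel spectrum in `block` and `reference`."""
    dots = block @ reference
    norms = np.linalg.norm(block, axis=1) * np.linalg.norm(reference)
    return np.arccos(np.clip(dots / norms, -1.0, 1.0))

def parallel_spectral_angle(pixels, reference, n_workers=4):
    """Split the pixel array into blocks and process the blocks concurrently."""
    blocks = np.array_split(pixels, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        # map() preserves block order, so results line up with the input pixels.
        results = list(pool.map(lambda b: spectral_angle(b, reference), blocks))
    return np.concatenate(results)

rng = np.random.default_rng(0)
pixels = rng.random((10_000, 50)) + 0.1   # synthetic (n_pixels, n_bands) data
reference = rng.random(50) + 0.1          # synthetic reference spectrum
angles = parallel_spectral_angle(pixels, reference)
print(angles.shape)
```

The same split, process, and combine structure carries over to MPI, where each rank would receive a block of pixels and the results would be gathered at the end.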

My experience also encompasses the use of parallel processing capabilities in cloud computing environments like AWS and Google Earth Engine, where the inherent scalability offers substantial advantages in handling extremely large hyperspectral datasets. These environments handle the complexities of task distribution and resource management, enabling me to focus on the analytical aspects.

Q 26. What are some emerging trends in hyperspectral data analysis?

Several emerging trends are shaping the field of hyperspectral data analysis. One significant trend is the increasing integration of deep learning techniques. Convolutional Neural Networks (CNNs) and other deep learning architectures are being used for tasks like spectral classification, feature extraction, and target detection, often outperforming traditional methods.

Another trend is the development of more compact and cost-effective hyperspectral sensors. This improved accessibility is expanding the applications of hyperspectral imaging across diverse fields. Miniaturization of sensors is enabling the development of drones and handheld devices equipped with hyperspectral capabilities, creating new opportunities for data acquisition.

The fusion of hyperspectral data with other data sources, such as LiDAR and multispectral imagery, is also gaining traction. This data fusion approach leverages the complementary strengths of different data modalities to create more comprehensive and robust analytical models. Combining these datasets is analogous to combining various pieces of evidence to solve a complex case – the result is a more complete understanding.

Finally, advancements in computational techniques and the adoption of cloud computing are facilitating the processing and analysis of increasingly larger and more complex hyperspectral datasets.

Q 27. Describe your experience working with large hyperspectral datasets.

I’ve worked extensively with large hyperspectral datasets, often exceeding a terabyte in size. My experience includes handling data from airborne and satellite-based hyperspectral sensors and employing strategies that keep both the computational demands and the storage requirements manageable.

For example, in one project involving the analysis of a large-scale hyperspectral image of an agricultural field, we employed a combination of parallel processing, data subsetting, and cloud-based storage to successfully analyze the dataset. Data subsetting involved breaking the massive dataset into smaller, manageable chunks, processing each individually, and then combining the results.
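
A minimal sketch of the subsetting idea, assuming a (rows, cols, bands) cube and using the per-band mean as a stand-in for the real analysis. The function names and chunk size are illustrative. The cube here is a small in-memory array, but the same loop also works on a `numpy.memmap`, so only one chunk needs to be in memory at a time.

```python
import numpy as np

def iter_row_chunks(cube, chunk_rows):
    """Yield consecutive blocks of rows from a (rows, cols, bands) cube."""
    for start in range(0, cube.shape[0], chunk_rows):
        yield cube[start:start + chunk_rows]

def chunked_band_mean(cube, chunk_rows=64):
    """Per-band mean computed one chunk at a time, then combined."""
    n_bands = cube.shape[2]
    total = np.zeros(n_bands, dtype=np.float64)
    count = 0
    for chunk in iter_row_chunks(cube, chunk_rows):
        flat = chunk.reshape(-1, n_bands)
        total += flat.sum(axis=0, dtype=np.float64)  # accumulate partial sums
        count += flat.shape[0]
    return total / count

rng = np.random.default_rng(1)
cube = rng.random((200, 150, 30), dtype=np.float32)  # synthetic stand-in
band_means = chunked_band_mean(cube, chunk_rows=64)
print(band_means.shape)
```

Note that combining results is trivial here only because a mean can be rebuilt from partial sums and counts. Operations that need spatial context across chunk borders require overlapping chunks or a second pass.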

Furthermore, I have experience developing and optimizing algorithms for processing large datasets, utilizing techniques such as dimensionality reduction to reduce computational complexity without significant loss of information. The efficiency of these algorithms is essential for dealing with large hyperspectral datasets in a timely manner.
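
As one example of dimensionality reduction, here is a minimal PCA sketch built on NumPy's SVD. The helper name `pca_reduce` and the synthetic data are assumptions made for illustration. In practice a library implementation or an alternative such as the Minimum Noise Fraction transform might be preferred.

```python
import numpy as np

def pca_reduce(pixels, n_components):
    """Project (n_pixels, n_bands) data onto its top principal components."""
    centered = pixels - pixels.mean(axis=0)
    # Rows of vt are the principal axes, ordered by decreasing variance.
    _, singular_values, vt = np.linalg.svd(centered, full_matrices=False)
    explained = singular_values**2 / np.sum(singular_values**2)
    scores = centered @ vt[:n_components].T
    return scores, explained[:n_components]

# Synthetic data: 100 bands driven by 3 underlying factors plus small noise,
# mimicking the strong band-to-band correlation of real spectra.
rng = np.random.default_rng(2)
factors = rng.normal(size=(2000, 3))
mixing = rng.normal(size=(3, 100))
pixels = factors @ mixing + 0.01 * rng.normal(size=(2000, 100))

scores, explained = pca_reduce(pixels, n_components=3)
print(scores.shape, explained.sum())
```

Because neighboring bands are highly correlated, a handful of components often captures most of the variance, which is what makes downstream classification on the reduced data so much cheaper.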

Q 28. How do you stay updated on the latest advancements in hyperspectral imaging technology?

Staying updated in the rapidly evolving field of hyperspectral imaging requires a multi-pronged approach. I regularly attend conferences such as the IEEE International Geoscience and Remote Sensing Symposium (IGARSS) and SPIE Defense + Commercial Sensing. These conferences provide a platform to learn about the latest research and technologies from leading experts in the field.

I also actively follow relevant journals, such as Remote Sensing of Environment and IEEE Transactions on Geoscience and Remote Sensing, keeping abreast of published research papers. This helps me understand the ongoing advancements in data acquisition, processing, and analytical techniques.

In addition to publications, I leverage online resources, such as pre-print servers like arXiv, to access the most recent research findings. This provides early access to breakthroughs and cutting-edge research that may not yet have been formally published.

Finally, participating in online communities and forums dedicated to hyperspectral imaging allows me to engage with other researchers and professionals, sharing knowledge and staying informed about the latest developments.

Key Topics to Learn for Hyperspectral Data Analysis Interview

- Data Acquisition and Preprocessing: Understanding sensor characteristics, atmospheric correction techniques (e.g., FLAASH, QUAC), radiometric and geometric calibration, and noise reduction methods.

- Spectral Feature Extraction and Selection: Exploring techniques like band ratios, principal component analysis (PCA), independent component analysis (ICA), and wavelet transforms to identify relevant spectral features for classification and analysis.

- Hyperspectral Image Classification: Mastering supervised (e.g., Support Vector Machines, Random Forests, Maximum Likelihood Classification) and unsupervised (e.g., K-means clustering) classification algorithms and their application to hyperspectral data. Understanding the importance of training data and evaluating classification accuracy.

- Target Detection and Recognition: Familiarizing yourself with techniques for detecting specific materials or objects within hyperspectral imagery, including anomaly detection methods and spectral unmixing algorithms.

- Data Visualization and Interpretation: Proficiency in visualizing hyperspectral data using various techniques, including false-color composites, spectral profiles, and dimensionality reduction plots. Understanding how to effectively communicate insights derived from hyperspectral analysis.

- Practical Applications: Understanding the applications of hyperspectral data analysis in diverse fields such as precision agriculture, remote sensing, environmental monitoring, medical imaging, and mineral exploration.

- Algorithm Optimization and Performance Evaluation: Familiarity with metrics for evaluating algorithm performance (e.g., accuracy, precision, recall, F1-score) and techniques for optimizing algorithms for speed and efficiency.

- Software and Tools: Demonstrating familiarity with commonly used open-source and commercial software packages and tools for hyperspectral data analysis.
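
The evaluation metrics named in the list above are worth being able to compute by hand. The sketch below does so for a binary case, such as target versus background pixels. The function name and the toy labels are illustrative assumptions.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    accuracy = sum(t == p for t, p in pairs) / len(pairs)
    # Guard against division by zero when a class is never predicted or present.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Toy labels: 1 = target pixel, 0 = background pixel.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 0, 1, 0, 1, 0, 1, 0]
metrics = binary_metrics(y_true, y_pred)
print(metrics)
```

With one false positive and one false negative among four true targets, all four metrics come out to 0.75 for these toy labels.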

Next Steps

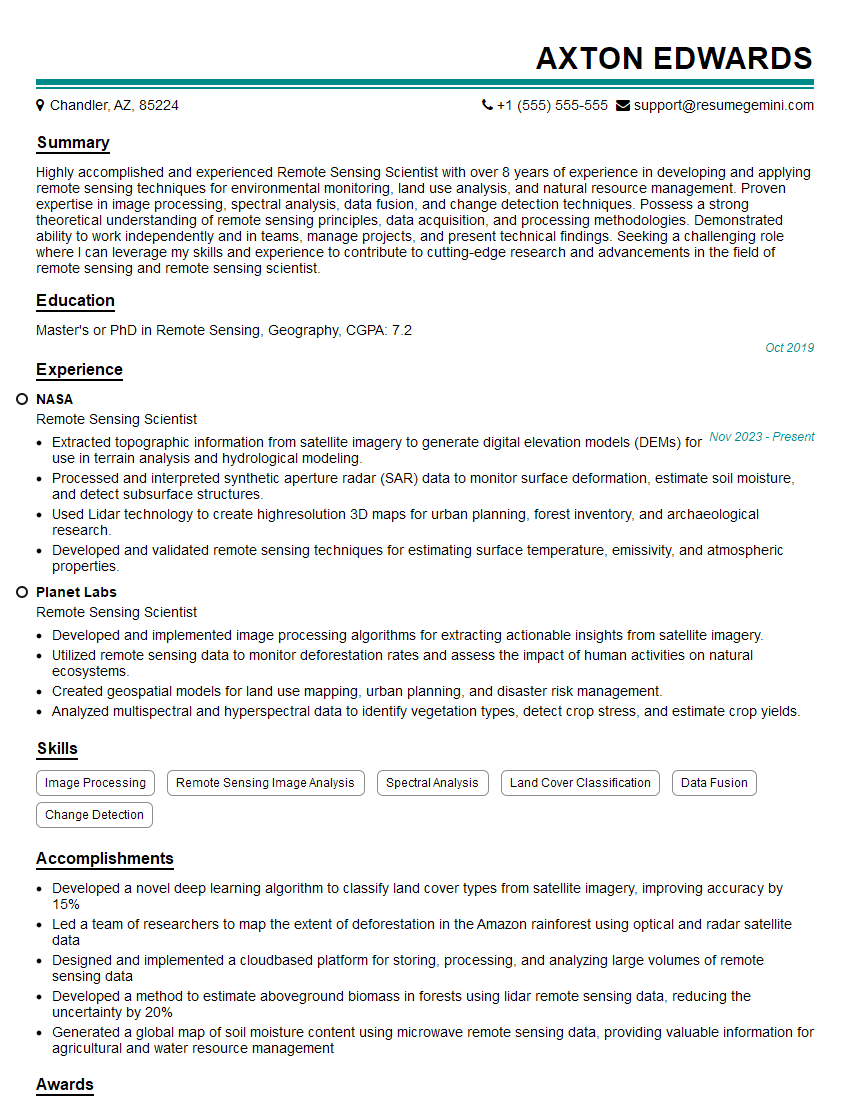

Mastering Hyperspectral Data Analysis opens doors to exciting and rewarding careers in cutting-edge fields. To maximize your job prospects, creating a strong, ATS-friendly resume is crucial. ResumeGemini is a trusted resource that can help you build a professional resume tailored to showcase your skills and experience in hyperspectral data analysis. ResumeGemini provides examples of resumes specifically designed for this field, giving you a head start in crafting a compelling application.
