Every successful interview starts with knowing what to expect. In this blog, we’ll take you through the top Light and Shadow Rendering interview questions, breaking them down with expert tips to help you deliver impactful answers. Step into your next interview fully prepared and ready to succeed.

Questions Asked in Light and Shadow Rendering Interview

Q 1. Explain the difference between diffuse, specular, and ambient lighting.

Diffuse, specular, and ambient lighting are the three fundamental components of a lighting model, contributing to the overall appearance of a surface in a rendered scene. Think of them as different ways light interacts with an object.

- Diffuse Lighting: This represents the light scattered evenly in all directions from a surface. Imagine a matte surface like a piece of paper; light hits it and is reflected softly in every direction. The intensity depends on the angle between the direction to the light source and the surface normal (a vector perpendicular to the surface). It’s calculated using Lambert’s cosine law:

intensity = I_light * max(0, N • L), where I_light is the light intensity, N is the surface normal, and L is the vector pointing from the surface to the light source.

- Specular Lighting: This describes the shiny, mirror-like reflection of light from a surface. Think of a polished metal or a glass ball. A bright highlight appears where the light source is reflected most directly. The Blinn-Phong and Cook-Torrance models are commonly used to calculate specular highlights, accounting for factors like surface roughness and the viewer’s position.

- Ambient Lighting: This represents the general, overall illumination in a scene. It’s the soft, indirect light that fills in shadows and prevents areas from being completely black. It’s often a constant value applied uniformly across the scene, but more advanced techniques can simulate ambient occlusion (reducing light in crevices).

In essence, diffuse lighting handles the overall brightness, specular lighting adds highlights, and ambient lighting provides base illumination, creating a realistic combination of light and shadow.
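As a rough illustration of how the three terms combine, here is a minimal sketch that adds a constant ambient term, a Lambert diffuse term, and a Blinn-Phong specular term for a single light. The function name `shade` and the coefficients (`ambient`, `k_diffuse`, `k_specular`, `shininess`) are illustrative assumptions, not values from any particular engine.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return v if length == 0.0 else tuple(c / length for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def shade(normal, to_light, to_view, light_intensity=1.0,
          ambient=0.1, k_diffuse=0.7, k_specular=0.2, shininess=32.0):
    """Return a scalar brightness: ambient + diffuse + specular (hypothetical weights)."""
    n, l, v = normalize(normal), normalize(to_light), normalize(to_view)
    # Lambert's cosine law: intensity = I_light * max(0, N . L)
    n_dot_l = max(0.0, dot(n, l))
    diffuse = k_diffuse * light_intensity * n_dot_l
    specular = 0.0
    if n_dot_l > 0.0:
        # Blinn-Phong uses the half vector between light and view directions
        h = normalize(tuple(a + b for a, b in zip(l, v)))
        specular = k_specular * light_intensity * max(0.0, dot(n, h)) ** shininess
    return ambient + diffuse + specular
```

With the light and viewer both directly above the surface, all three terms contribute fully; with the light behind the surface, only the ambient term remains.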

Q 2. Describe how you would achieve realistic shadows in a real-time rendering engine.

Achieving realistic shadows in real-time rendering requires careful consideration of performance and visual fidelity. A common approach involves shadow mapping. A shadow map is produced by rendering the scene from the light’s perspective and storing the depth of the nearest surface in a texture. For each pixel in the main scene, we transform its position into the light’s space and compare its depth to the corresponding depth in the shadow map. If the pixel is farther from the light than the value in the shadow map, something is blocking the light, so it’s in shadow.

To improve quality, we can use techniques like:

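- Percentage-Closer Filtering (PCF): This performs the depth comparison at several neighboring shadow map texels and averages the results of those comparisons (not the depths themselves) to reduce aliasing (jagged edges) and make shadow edges appear smoother.

- Variance Shadow Mapping (VSM): This stores depth and squared depth in the shadow map, from which a mean and variance can be derived. Because these values can be filtered like a normal texture, VSM gives smooth shadow edges, at the cost of possible light-bleeding artifacts.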

For very large scenes, cascaded shadow maps are employed. They split the camera’s view frustum into several depth ranges (cascades), each rendered into its own shadow map, so that nearby geometry gets more shadow map texels than distant geometry. This avoids a single map being stretched too thinly across the whole view, which makes shadows near the camera look blocky. Optimizations like using shadow atlases (packing multiple shadow maps into one texture) further enhance performance. Finally, some engines add screen-space techniques such as screen-space ambient occlusion (SSAO), which cheaply approximates the darkening in creases and contact areas that shadow maps tend to miss.
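The depth comparison with PCF can be sketched as follows. This is a simplified CPU-side illustration, assuming the shadow map is a 2D list of depths and that the receiver has already been projected to integer texel coordinates; `pcf_shadow`, `bias`, and `radius` are hypothetical names chosen for this example.

```python
def pcf_shadow(shadow_map, u, v, receiver_depth, bias=0.005, radius=1):
    """Return the lit fraction in [0, 1] for a receiver at texel (u, v).

    shadow_map[y][x] holds the depth of the closest occluder seen from the light.
    A small bias is subtracted to limit self-shadowing ("shadow acne").
    """
    height, width = len(shadow_map), len(shadow_map[0])
    lit, taps = 0, 0
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            # Clamp to the map edges so border texels are still sampled
            x = min(max(u + dx, 0), width - 1)
            y = min(max(v + dy, 0), height - 1)
            # Compare first, then average the comparison results
            if receiver_depth - bias <= shadow_map[y][x]:
                lit += 1
            taps += 1
    return lit / taps
```

A result of 1.0 means fully lit, 0.0 fully shadowed, and values in between give the softened edge.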

Q 3. What are the advantages and disadvantages of different shadow mapping techniques (e.g., shadow maps, cascaded shadow maps, etc.)?

Various shadow mapping techniques offer trade-offs between visual quality, performance, and implementation complexity:

- Shadow Maps: Simple to implement but suffer from aliasing (jagged shadows) when a single map has to cover a large area, and from bias-related artifacts such as shadow acne and peter-panning (shadows appearing detached from the objects that cast them). They’re suitable for smaller scenes or situations where performance is critical over extreme visual fidelity.

- Cascaded Shadow Maps: Address the limitations of standard shadow maps by splitting the view frustum into multiple cascades (depth ranges), each rendered into its own shadow map. This reduces aliasing and maintains shadow accuracy at different distances. More complex to implement and requires one shadow pass per cascade, but significantly improves quality.

- Variance Shadow Maps: Offer smoother, filterable shadows compared to standard shadow maps. They store depth and squared depth, from which variance is derived. However, they require more memory, can show light bleeding where shadows overlap, and might be slightly more complex to implement.

- Exponential Shadow Maps (ESM): Encode depth using an exponential function so that the map can be pre-filtered like a regular texture, giving softer shadows than standard shadow maps. They can leak light where a receiver sits close behind its occluder, and large exponents call for higher-precision texture formats.

The best choice depends on the project’s requirements. For mobile games or constrained environments, simple shadow maps or even no shadows might be the optimal choice. High-fidelity PC games often use cascaded shadow maps or more advanced techniques to enhance visual realism.
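To make the cascade idea concrete, here is a small sketch of how split distances might be chosen. It blends a logarithmic and a uniform distribution, which is one commonly used scheme; the function name `cascade_splits` and the `blend` parameter are assumptions for illustration.

```python
def cascade_splits(near, far, count, blend=0.5):
    """Return the far distance of each cascade between the near and far planes.

    blend = 1.0 gives purely logarithmic splits (more resolution up close),
    blend = 0.0 gives purely uniform splits.
    """
    splits = []
    for i in range(1, count + 1):
        t = i / count
        log_split = near * (far / near) ** t
        uniform_split = near + (far - near) * t
        splits.append(blend * log_split + (1.0 - blend) * uniform_split)
    return splits
```

Each cascade then gets its own shadow map covering the slice of the view frustum between consecutive split distances.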

Q 4. Explain the concept of global illumination and its impact on rendering.

Global illumination (GI) simulates the indirect lighting effects in a scene. Unlike direct lighting (light directly from a source to a surface), GI accounts for light bouncing off surfaces multiple times. This results in more realistic lighting, including effects like color bleeding (nearby objects influencing each other’s color) and soft shadows.

GI significantly impacts rendering by creating a more visually rich and accurate scene. Without GI, scenes look flat and unrealistic, especially in enclosed environments. GI adds depth, realism, and visual appeal by producing accurate lighting interactions between objects. For example, GI can correctly simulate how light illuminates a room, reflecting off the walls and ceiling to create soft illumination rather than just directly lit areas.

There are various GI algorithms, ranging from computationally expensive path tracing to faster approximations like photon mapping, lightmaps, or irradiance caching. The choice of algorithm depends on the available resources and desired level of realism.

Q 5. How do you optimize a scene for real-time rendering with complex lighting?

Optimizing a scene with complex lighting for real-time rendering involves a combination of techniques:

- Level of Detail (LOD): Use lower-poly models and textures for objects far from the camera, reducing the number of polygons and pixels that need to be processed.

- Light Culling: Only process lights that affect visible objects. This avoids unnecessary calculations.

- Shadow Culling: Skip shadow casters whose shadows cannot reach anything visible, for example objects outside a light’s range or whose shadow falls entirely outside the view frustum.

- Lightmap Baking: Pre-calculate the indirect lighting for static geometry. This significantly reduces the real-time computational load.

- Simplified Lighting Models: Use less computationally intensive lighting models, like a simpler version of Phong or Blinn-Phong, when the visual impact justifies the performance gain.

- Occlusion Culling: Avoid rendering objects that are hidden behind other geometry, using techniques like hierarchical occlusion culling. This is usually combined with frustum culling, which discards objects outside the camera’s view.

- Clustered Rendering: Divide the view frustum into a 3D grid of clusters and assign each light to the clusters it overlaps, so that each pixel only evaluates the lights in its own cluster.

The specific optimization strategies will depend on the scene’s complexity and the target hardware. Profiling tools are crucial for identifying performance bottlenecks and guiding the optimization process.
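Light culling in its simplest form can be sketched as a sphere-versus-sphere test: a point light with a finite range only needs to be evaluated for objects whose bounding sphere it can reach. The data layout below (lights as `(position, range)` tuples) and the name `lights_affecting` are assumptions made for this example.

```python
import math

def lights_affecting(object_center, object_radius, lights):
    """Return only the lights whose range overlaps the object's bounding sphere.

    lights is a list of (position, light_range) tuples.
    """
    affecting = []
    for position, light_range in lights:
        distance = math.dist(object_center, position)
        # Two spheres overlap when the centre distance is at most the sum of radii
        if distance <= object_radius + light_range:
            affecting.append((position, light_range))
    return affecting
```

Real engines typically run a similar test per screen tile or cluster rather than per object, but the overlap check is the same idea.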

Q 6. Describe your experience with different lighting models (e.g., Phong, Blinn-Phong, Cook-Torrance).

I have extensive experience with various lighting models, including Phong, Blinn-Phong, and Cook-Torrance. Each offers a different balance between realism and computational cost:

- Phong: A simple model that is computationally inexpensive, but its specular highlight lacks realism, appearing slightly too sharp.

- Blinn-Phong: An improvement over Phong, offering smoother and more realistic specular highlights by using the half-angle vector. It is still relatively efficient for real-time applications.

- Cook-Torrance: A physically based model that simulates microfacet reflection, yielding highly realistic specular highlights. It costs more per pixel than Phong or Blinn-Phong, which matters on low-end hardware, although it is the basis of most modern real-time PBR pipelines.

The choice depends on the project’s needs. For simpler games or applications requiring high frame rates, Blinn-Phong is often sufficient. High-end games and offline rendering frequently utilize Cook-Torrance to achieve photorealistic results.
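For comparison with the simpler models, here is a hedged sketch of a Cook-Torrance specular term. The source does not say which distribution, Fresnel, or geometry functions to use; this example assumes the widely used GGX distribution, Schlick's Fresnel approximation, and a Smith-style geometry term, and takes precomputed dot products as inputs to stay short.

```python
import math

def ggx_distribution(n_dot_h, roughness):
    # GGX normal distribution, with alpha = roughness^2 (a common convention)
    alpha2 = (roughness * roughness) ** 2
    denom = n_dot_h * n_dot_h * (alpha2 - 1.0) + 1.0
    return alpha2 / (math.pi * denom * denom)

def schlick_fresnel(v_dot_h, f0):
    # Reflectance rises from f0 at normal incidence toward 1 at grazing angles
    return f0 + (1.0 - f0) * (1.0 - v_dot_h) ** 5

def smith_geometry(n_dot_v, n_dot_l, roughness):
    # Schlick-GGX approximation of microfacet shadowing and masking
    k = (roughness + 1.0) ** 2 / 8.0
    g_view = n_dot_v / (n_dot_v * (1.0 - k) + k)
    g_light = n_dot_l / (n_dot_l * (1.0 - k) + k)
    return g_view * g_light

def cook_torrance_specular(n_dot_l, n_dot_v, n_dot_h, v_dot_h, roughness, f0=0.04):
    """Specular BRDF value D * F * G / (4 (N.L) (N.V)); 0 for back-facing cases."""
    if n_dot_l <= 0.0 or n_dot_v <= 0.0:
        return 0.0
    d = ggx_distribution(n_dot_h, roughness)
    f = schlick_fresnel(v_dot_h, f0)
    g = smith_geometry(n_dot_v, n_dot_l, roughness)
    return d * f * g / (4.0 * n_dot_l * n_dot_v)
```

The default `f0` of 0.04 is a typical value for non-metals and is also an assumption here.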

Q 7. Explain how you would handle light bouncing in a scene.

Handling light bouncing (indirect lighting) requires more sophisticated techniques than simple direct lighting. Several approaches exist:

- Path Tracing: A highly realistic but computationally expensive method that simulates the path of light rays bouncing through the scene. Each bounce requires extensive calculations, making it unsuitable for real-time applications, except for very simplified scenes.

- Photon Mapping: Pre-calculates the distribution of photons (light particles) emitted from light sources and scattered in the scene. This information is then used to approximate indirect illumination. It’s computationally expensive but delivers high-quality results.

- Radiosity: Solves for the diffuse light interactions within a scene. It’s effective at capturing indirect diffuse light but less suited to specular reflections.

- Lightmaps/Baked Lighting: Pre-compute lighting information for static objects and store it in textures (lightmaps). These are efficient for real-time rendering but cannot handle dynamic objects.

- Screen Space Global Illumination (SSGI): Approximates global illumination in screen space, relying on information already rendered. This method is computationally cheaper and suitable for real-time applications but often produces less accurate results than other methods.

The optimal method depends on performance constraints, target platform, and desired level of realism. For real-time applications, a combination of techniques, possibly using lightmaps for static elements and screen-space methods for dynamic ones, is often employed.

Q 8. Discuss your experience with different rendering pipelines (e.g., deferred, forward).

Rendering pipelines determine the order in which lighting calculations are performed. Forward rendering shades each object as it is drawn, evaluating every light that affects it, so the cost grows roughly with the number of objects multiplied by the number of lights. It’s simple to implement but can be inefficient with many lights. Deferred rendering, on the other hand, first renders geometric data and material properties to G-buffers (textures storing information like position, normal, etc.). Lighting calculations are then performed per-pixel, using the G-buffer data, so only visible pixels are shaded, which makes it efficient with numerous light sources at the cost of extra memory bandwidth and more awkward handling of transparency.

My experience encompasses both. I’ve used forward rendering in simpler projects, particularly those with limited light sources or real-time constraints where simplicity outweighed performance optimization. In more complex projects, involving hundreds of lights or advanced effects like global illumination, deferred rendering has been my go-to approach for performance reasons. For example, in a recent project simulating a bustling city at night, deferred rendering was crucial to handle the large number of streetlights, vehicle headlights, and ambient lighting effects without a significant performance hit.

Hybrid approaches also exist, combining the strengths of both methods. For instance, a pipeline might use forward rendering for a few key light sources (e.g., directional sunlight) and then defer the remaining lights for efficiency.

Q 9. What are the key considerations when choosing a light source for a specific scene?

Choosing the right light source is crucial for establishing mood, realism, and visual hierarchy. Key considerations include:

- Type of light: Directional (sun), point (light bulb), spot (spotlight), area (softbox) each creates a unique look. Directional lights are efficient but lack realism for close-up details; area lights provide soft, diffused shadows, making them ideal for indoor scenes; point and spot lights are best for highlighting specific objects.

- Intensity and color: These affect the overall brightness and color temperature of the scene, directly influencing the atmosphere (warm, cool, dramatic, serene). Correct color temperature is essential for photorealism.

- Shadow properties: Soft shadows, created by larger light sources or area-light shadow techniques, give a more natural look. Hard shadows, from smaller light sources or sharp light projections, provide contrast and drama.

- Scene context: The light source should be realistic within the scene’s setting. A harsh, direct light might be appropriate for a desert scene but unsuitable for a dimly lit forest.

For example, a scene requiring a mysterious atmosphere might use a single, cool-toned point light, creating strong, contrasting shadows, while a vibrant outdoor scene might utilize a directional light with soft shadows to create a warm feel.

Q 10. How do you create realistic reflections and refractions in your renders?

Realistic reflections and refractions rely on physically based approaches. Reflections are achieved using techniques like screen-space reflections (SSR), which march reflected rays through the depth buffer and reuse colors already on screen, or ray tracing, which casts rays into the full scene to find reflections directly. SSR is more efficient and better suited to real-time rendering, but it cannot reflect anything that is off screen or hidden behind other objects; ray tracing offers higher fidelity but can be computationally expensive.

Refractions are similar, using techniques like ray tracing to simulate light bending as it passes through transparent materials. The refractive index of the material defines how much the light bends. Accurate representation of caustics (light patterns created by refraction) often requires complex path tracing methods.
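The bending governed by the refractive index follows Snell's law. The sketch below computes a refracted direction from an incident direction and a surface normal; the function name `refract` and the convention that `eta` is the ratio of the refractive indices (outside over inside) are assumptions for this example.

```python
import math

def refract(incident, normal, eta):
    """Return the refracted unit direction, or None on total internal reflection.

    incident: unit vector travelling toward the surface.
    normal:   unit surface normal facing against the incident ray.
    eta:      n1 / n2, e.g. 1.0 / 1.5 when going from air into glass.
    """
    cos_i = -sum(i * n for i, n in zip(incident, normal))
    sin2_t = eta * eta * (1.0 - cos_i * cos_i)
    if sin2_t > 1.0:
        return None  # no transmitted ray: all light is reflected
    cos_t = math.sqrt(1.0 - sin2_t)
    return tuple(eta * i + (eta * cos_i - cos_t) * n
                 for i, n in zip(incident, normal))
```

A ray hitting the surface head-on passes straight through, while a steep ray leaving a denser medium can fail to refract at all, which is why the function may return `None`.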

In practice, I often combine these methods. For instance, I might use SSR for quick, approximate reflections, and then enhance them with a few ray-traced reflections for key reflective surfaces to boost realism without compromising performance too significantly. This kind of optimization is key to balancing visual quality with frame rates in real-time applications.

Q 11. Explain your experience with physically based rendering (PBR).

Physically Based Rendering (PBR) is a rendering technique that aims to simulate the behavior of light in the real world. It relies on physically plausible models of light interaction with surfaces, using parameters like albedo (base color), roughness (how rough or smooth the surface is at a microscopic scale), metalness (whether the surface behaves as a metal or a non-metal), and normal maps (surface detail). This approach ensures consistency and predictability in lighting across different materials and scenes.

My experience with PBR involves extensive use of PBR materials in various engines (Unreal Engine, Unity) and renderers (Arnold, V-Ray). I understand the importance of using energy conservation principles, where the total amount of light reflected and absorbed by a surface equals the incident light. I ensure these energy conservation principles are adhered to through careful selection of material parameters and lighting techniques. This guarantees realistic and consistent results, even in complex scenes with multiple light sources and various materials.

For example, I’ve leveraged PBR workflows to create realistic skin shaders in character rendering, accurately simulating the subtle variations in reflection, roughness, and subsurface scattering found in human skin.

Q 12. How do you troubleshoot lighting issues in a complex scene?

Troubleshooting lighting in complex scenes requires a systematic approach.

- Isolate the problem: Identify the specific area or object exhibiting the lighting issue. Disable other lights and objects to determine if the issue is localized.

- Check light settings: Verify the intensity, color, type, and shadow settings of the relevant light sources. Look for unexpected clipping or shadow artifacts.

- Examine material properties: Inspect the material properties of the affected objects, especially albedo, roughness, metalness, and normal maps. Inaccurate or conflicting material settings can lead to unexpected lighting behavior.

- Analyze shadow maps: Examine shadow maps for artifacts like peter panning or shadow acne. This often indicates issues with shadow map resolution or bias settings.

- Use debug visualization: Many renderers provide debug visualizations for lighting, such as displaying normal maps, light probes, or irradiance volumes. These visualizations can help pinpoint the source of lighting problems.

- Simplify the scene: In extremely complex scenes, temporarily reduce the scene’s complexity (fewer lights, objects, or polygons) to rule out interactions between elements as the root cause.

For example, if you observe an object appearing unexpectedly dark, you might check if it’s accidentally occluded by another object, if the material’s albedo is set too low, or if the object isn’t receiving enough light from nearby sources.

Q 13. Describe your workflow for creating lighting in a game or film project.

My lighting workflow for game or film projects generally follows these steps:

- Concept and mood board: I start by defining the overall visual style and mood. This involves creating a mood board with reference images and descriptions of the desired lighting atmosphere.

- Blocking out the scene: I create a basic lighting setup using simple light sources to establish the overall illumination pattern. This is often done with placeholder assets to focus solely on lighting.

- Refining the lighting: I progressively refine the lighting, experimenting with different light types, intensities, and colors. This may involve iterating on multiple lighting passes or utilizing global illumination techniques.

- Adding details: Once the overall lighting is satisfactory, I add details such as rim lights, ambient occlusion, and volumetric lighting to further enhance realism and visual appeal.

- Testing and iteration: Throughout the process, I constantly test the lighting and make adjustments based on feedback and visual assessment. This iterative process ensures the lighting complements the scene’s narrative and aesthetic goals.

- Optimization: For real-time projects (games), optimization is a key aspect of the workflow, involving careful selection of lighting techniques and optimization strategies.

A recent film project involved meticulously recreating the lighting of a historical castle. The mood board focused on creating a sense of age and mystery. This guided my choice of soft, diffused light sources and strategic use of shadows to highlight architectural details and create a sense of depth. I used a mix of global illumination and local lighting techniques to generate believable lighting across different parts of the scene.

Q 14. What are some common techniques for optimizing lighting performance?

Optimizing lighting performance is crucial for real-time applications. Here are some common techniques:

- Light culling: Only render lights that affect visible objects. This drastically reduces the number of lighting calculations.

- Lightmap baking: Pre-compute lighting for static geometry onto texture maps (lightmaps). This removes runtime lighting calculations for these elements.

- Shadow map optimization: Use cascaded shadow maps (CSM) to spend shadow map resolution where it matters at various distances, or filterable techniques such as Variance Shadow Mapping (VSM) to get soft shadows without taking many depth samples per pixel.

- Screen space ambient occlusion (SSAO): Approximates ambient occlusion effects in screen space, avoiding expensive ray tracing.

- Light probes: Pre-compute lighting in specific areas of the scene and use them to approximate lighting for nearby objects. This is efficient for indirect lighting.

- Level of detail (LOD) for lights: Use simplified light representations at far distances to reduce calculations.

- Clustered lighting: Organize lights into spatial clusters and shade each pixel using only the lights assigned to its cluster.

These optimizations help maintain acceptable frame rates without significantly compromising visual fidelity. The specific techniques used depend on the project’s specific requirements and rendering pipeline.

Q 15. How do you use light to create mood and atmosphere in a scene?

Light is the sculptor of mood and atmosphere in a scene. Think of a dimly lit alleyway versus a brightly lit park – the feeling is completely different. To control mood, I manipulate the color, intensity, and direction of light sources.

For a suspenseful, ominous scene, I might use cool-toned, low-intensity light sources, casting long shadows to emphasize darkness and mystery. Imagine a horror film – that’s where this technique shines. Conversely, for a warm, inviting scene, I’d use warm-toned, higher-intensity light, perhaps with soft shadows, to create a sense of comfort and security, like a cozy living room scene.

The direction of light also plays a crucial role. Backlighting can create silhouettes and drama, while front lighting emphasizes detail and realism. Side lighting, on the other hand, highlights texture and form.

I often use light to guide the viewer’s eye, drawing attention to specific elements within the scene through strategic lighting placement and intensity variations.

Q 16. Describe your experience with different shading techniques (e.g., Gouraud shading, Phong shading).

Gouraud shading and Phong shading are fundamental techniques for shading polygon surfaces so that they appear smooth. Gouraud shading evaluates lighting at the vertices and interpolates the resulting colors across each polygon, which is cheap but can miss or smear specular highlights that fall between vertices. Phong shading interpolates the vertex normals across the polygon and evaluates lighting per pixel, allowing for more accurate specular highlights and a more realistic appearance. It’s more computationally expensive, however.

I’ve used both extensively. For scenes where performance is critical and subtle highlights aren’t essential, Gouraud shading offers a good balance between speed and quality. However, for high-quality renders where realism is paramount, especially with reflective surfaces, Phong shading, or even more advanced techniques like Blinn-Phong or Cook-Torrance, are preferred.

For example, in a game where performance needs to be optimized, Gouraud might be suitable for many objects, but for critical objects like the main character’s face, Phong shading would be used to ensure high fidelity. In a film rendering pipeline, Phong or more sophisticated methods would be the norm.
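The difference can be seen along a single edge between two vertices. In this minimal sketch (all names are illustrative), the Gouraud variant lights the two vertices and interpolates the results, while the Phong variant interpolates the normal and lights the interpolated point. Only a specular term is evaluated to keep the example short.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def lerp(a, b, t):
    return tuple(x + (y - x) * t for x, y in zip(a, b))

def specular(normal, half_vector, shininess=32.0):
    n_dot_h = sum(n * h for n, h in zip(normal, half_vector))
    return max(0.0, n_dot_h) ** shininess

def gouraud_specular(n0, n1, half_vector, t):
    # Light each vertex first, then interpolate the two colours
    s0, s1 = specular(n0, half_vector), specular(n1, half_vector)
    return s0 + (s1 - s0) * t

def phong_specular(n0, n1, half_vector, t):
    # Interpolate the normal first, then light the interpolated point
    return specular(normalize(lerp(n0, n1, t)), half_vector)

n0 = normalize((-0.5, 0.0, 1.0))
n1 = normalize((0.5, 0.0, 1.0))
half_vector = (0.0, 0.0, 1.0)
```

At the midpoint (`t = 0.5`) the interpolated normal points straight at the half vector, so `phong_specular` returns a full highlight, whereas `gouraud_specular` returns only the dim value seen at the vertices.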

Q 17. What are the advantages and disadvantages of using different light types (e.g., point lights, directional lights, spotlights)?

Different light types serve distinct purposes in scene illumination. Point lights radiate light equally in all directions, ideal for simulating lamps or small light sources. Directional lights simulate light from a distant source, like the sun, casting parallel rays. Spotlights emit light within a cone, mimicking focused light sources like flashlights or stage lights.

- Point Lights: Advantages: Simple to implement, effective for localized illumination. Disadvantages: Can be computationally expensive if many are used, falloff can be unrealistic at large distances.

- Directional Lights: Advantages: Efficient, excellent for simulating sunlight. Disadvantages: Don’t exhibit realistic falloff, not suitable for close-range illumination.

- Spotlights: Advantages: Precise control over illumination area and direction. Disadvantages: More complex to implement than point lights, can create harsh shadows if not carefully managed.

The choice depends heavily on the scene’s needs. A simple interior scene might use point lights for lamps and a directional light for ambient illumination. An outdoor scene would heavily rely on a directional light for the sun, possibly supplemented with point lights for streetlamps or spotlights for specific areas.
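The practical difference between the light types largely comes down to how intensity falls off. The sketch below assumes inverse-square falloff for point lights and a linear blend between an inner and outer cone angle for spotlights; both are common choices but are assumptions here, as are the function names. A directional light would simply use a constant intensity with no falloff.

```python
import math

def point_light_intensity(intensity, distance):
    """Inverse-square falloff, clamped to avoid division by zero at the light."""
    return intensity / max(distance * distance, 1e-4)

def spot_factor(spot_direction, light_to_surface, inner_deg, outer_deg):
    """Return 1 inside the inner cone, 0 outside the outer cone, blended between.

    Both direction arguments are unit vectors; inner_deg must be < outer_deg.
    """
    cos_angle = sum(a * b for a, b in zip(spot_direction, light_to_surface))
    cos_inner = math.cos(math.radians(inner_deg))
    cos_outer = math.cos(math.radians(outer_deg))
    t = (cos_angle - cos_outer) / (cos_inner - cos_outer)
    return min(max(t, 0.0), 1.0)
```

A spotlight's contribution is then the point light intensity multiplied by `spot_factor`.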

Q 18. Explain how you would create realistic subsurface scattering effects.

Subsurface scattering (SSS) simulates the way light penetrates translucent materials like skin, wax, or marble, scattering internally before re-emerging. Creating realistic SSS effects involves several steps.

- Material Properties: Define the material’s optical properties, such as scattering coefficient and albedo (the fraction of light reflected).

- Scattering Model: Choose a scattering model, such as the diffusion approximation or a more complex model that accounts for multiple scattering events. Simpler models are faster but less accurate.

- Rendering Technique: Implement the chosen model in the rendering pipeline. This might involve specialized shaders or ray tracing techniques.

- Iteration and Refinement: Iterate on material properties and scattering parameters to achieve a visually realistic result. Experimentation and fine-tuning are key.

In practice, I’d often use dedicated SSS shaders or plugins within a rendering engine. Many engines now offer built-in support for SSS, simplifying the process. The parameters within those shaders allow for granular control over scattering depth and color, enabling fine-tuning of the effect.

Q 19. How do you handle occlusion and indirect lighting in your work?

Occlusion and indirect lighting are crucial for realism. Occlusion refers to the blocking of light by objects, creating shadows. Indirect lighting refers to light that bounces off surfaces before reaching the viewer’s eye. Both are essential for creating a believable scene.

I handle occlusion through techniques like shadow mapping, ray tracing, or screen-space ambient occlusion (SSAO). Shadow mapping is a fast technique suitable for real-time rendering, while ray tracing produces more accurate results but is computationally expensive. SSAO is a screen-space technique that is efficient, but it only approximates the darkening in creases and contact areas from the depth buffer, so it is less accurate than ray-traced occlusion.

For indirect lighting, I use techniques like global illumination (GI), which simulates the complex interplay of light bouncing between surfaces. Path tracing or photon mapping are common GI methods, providing high-quality results but demanding significant computational resources. Approximation techniques like ambient occlusion or light probes are used for faster rendering in real-time applications.

Choosing the right method depends on the project’s requirements. Real-time applications (games, interactive simulations) often need to prioritize speed, relying on approximations, while offline rendering (film, architectural visualization) allows the use of computationally intensive techniques to achieve high realism.

Q 20. Describe your experience with different rendering engines (e.g., Unreal Engine, Unity, Arnold, V-Ray).

I have extensive experience with various rendering engines, each offering unique strengths and weaknesses. Unreal Engine and Unity are real-time engines ideal for interactive applications, offering tools for efficient lighting and shadowing in real-time. Arnold and V-Ray are offline renderers that excel at creating photorealistic images and animations through highly sophisticated algorithms and features.

Unreal Engine’s strengths lie in its ease of use and real-time capabilities, making it well-suited for games and interactive experiences. Its lighting system is robust and offers excellent real-time global illumination approximations. V-Ray is a powerful choice for offline rendering, capable of producing incredibly high-quality images with advanced features for realistic materials and lighting. Arnold is known for its advanced ray tracing capabilities and high-quality rendering of complex scenes.

My choice of engine depends entirely on the project requirements. A real-time game would be built in Unreal or Unity, whereas a high-end architectural visualization or cinematic animation would leverage Arnold or V-Ray’s power.

Q 21. What are your preferred methods for creating volumetric lighting effects?

Creating realistic volumetric lighting effects, like fog, mist, or smoke, requires simulating the scattering and absorption of light within a volume. I employ several methods:

- Volumetric scattering shaders: These shaders simulate the interaction of light with particles in a volume, resulting in realistic scattering effects. Parameters such as density, scattering coefficient, and absorption coefficient control the look of the volume.

- Ray marching: This technique directly simulates light propagation through the volume by casting rays and accumulating scattering contributions along their path. It’s capable of producing high-quality effects but can be computationally expensive.

- Pre-computed volume textures: For performance optimization, I might pre-compute the volumetric lighting effect into 3D textures. This speeds up rendering but requires pre-processing steps.

The best approach depends on the desired quality and performance. Simple effects in a real-time game might use volumetric shaders with some approximations, while a high-quality cinematic render might employ ray marching for higher fidelity. Sometimes, a hybrid approach combining pre-computed textures with real-time shaders is used for optimal results.
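Ray marching through a volume can be sketched in a few lines. This simplified example assumes a uniform light reaching every sample (no shadowing inside the volume), single scattering, and no phase function; `density_at`, `albedo`, and `light` are hypothetical parameters introduced for illustration.

```python
import math

def march_fog(density_at, ray_length, steps=64, albedo=1.0, light=1.0):
    """March along a ray and return (in_scattered_light, transmittance).

    density_at(t) gives the extinction coefficient at distance t along the ray.
    """
    step = ray_length / steps
    transmittance = 1.0
    in_scattered = 0.0
    for i in range(steps):
        t = (i + 0.5) * step  # sample at the middle of each segment
        density = density_at(t)
        # Light scattered toward the camera, dimmed by the fog already crossed
        in_scattered += transmittance * albedo * density * light * step
        # Beer-Lambert absorption across this segment
        transmittance *= math.exp(-density * step)
    return in_scattered, transmittance
```

The final pixel color would then be roughly the background color multiplied by the transmittance, plus the in-scattered light.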

Q 22. How do you balance realism and performance in your lighting work?

Balancing realism and performance in lighting is a constant tightrope walk. The most realistic rendering techniques, like path tracing, are computationally expensive, making them unsuitable for real-time applications. My approach involves a tiered system. For high-fidelity offline renders, I might use path tracing or a similar physically-based renderer, accepting the longer render times. For real-time applications like games or interactive simulations, I employ techniques like screen-space ambient occlusion (SSAO) and cascaded shadow maps to approximate global illumination and shadows efficiently. The key is understanding the limitations of the target platform and choosing the optimal balance between visual fidelity and frame rate. For example, I might use a higher-resolution shadow map for the main character, sacrificing quality for background objects where the detail is less critical.

I also leverage techniques like level of detail (LOD) for lighting. Far away objects might use simplified lighting calculations, while nearby objects get more detailed processing. This adaptive approach ensures that the most visually important elements receive the most computational effort.

Q 23. How do you use color temperature and color grading to enhance your lighting?

Color temperature and color grading are crucial for setting the mood and realism of a scene. Color temperature, measured in Kelvin, dictates the warmth or coolness of the light source. A lower Kelvin value (e.g., 2000K) indicates a warm, orange-yellow light like a candle, while a higher value (e.g., 6500K) represents a cool, bluish light like daylight. I use this to create specific atmospheres: a warm sunset scene might use a lower color temperature for the sun and ambient light, while a cold, sterile environment might employ a higher temperature.

Color grading comes into play after the initial lighting is established. It allows me to adjust the overall color palette, saturation, and contrast to further refine the mood and visual style. For instance, I might desaturate the colors slightly to create a more melancholic feel or boost the saturation to achieve a vibrant, energetic look. I often use a color grading tool to fine-tune the final look, making small adjustments to individual color channels to create a balanced and aesthetically pleasing result. I might use a LUT (Look-Up Table) to quickly apply a pre-determined color grading style for consistency across multiple projects.
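As a small illustration of the saturation adjustment mentioned above, the sketch below blends each channel toward the pixel's luma. It assumes linear RGB values and uses the Rec. 709 luma weights; the function name is hypothetical, and a real grading tool does considerably more than this.

```python
# Rec. 709 luma weights for linear RGB.
LUMA_WEIGHTS = (0.2126, 0.7152, 0.0722)


def adjust_saturation(rgb, saturation):
    """Scale a colour's saturation.

    saturation = 0.0 gives greyscale, 1.0 leaves the colour unchanged,
    and values above 1.0 boost saturation (results may then need clamping).
    """
    luma = sum(w * c for w, c in zip(LUMA_WEIGHTS, rgb))
    return tuple(luma + saturation * (c - luma) for c in rgb)


warm_orange = (0.9, 0.5, 0.2)
print(adjust_saturation(warm_orange, 0.7))  # slightly desaturated, more muted
print(adjust_saturation(warm_orange, 1.3))  # boosted, more vivid
```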

Q 24. Describe your experience with HDR lighting and its benefits.

HDR (High Dynamic Range) lighting is a game-changer, allowing for a much wider range of brightness values than standard dynamic range (SDR). This translates to more realistic lighting and shadows, with brighter highlights and deeper blacks. In HDR, you can accurately represent the extreme brightness of the sun alongside the subtle details in deep shadows, something impossible with SDR.

My experience with HDR involves working with HDR image formats like OpenEXR and utilizing HDR-capable rendering engines. The benefits are significant: improved contrast and detail, a more natural and immersive experience, and the ability to capture subtle atmospheric effects like light scattering and volumetric lighting much more effectively. Working in HDR requires careful consideration of exposure and tone mapping to ensure that the final image, displayed on an SDR screen, remains visually appealing and avoids clipping or loss of detail. I typically use tone mapping operators to translate the HDR data to an SDR representation that preserves as much detail as possible. Examples include Reinhard and Filmic tone mappers.
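To make the tone mapping step concrete, here is a minimal sketch of the basic Reinhard operator, which maps a luminance L to L / (1 + L). The `exposure` parameter is an assumption added for illustration; production tone mappers usually add a white point, per-channel handling, and display encoding on top of this.

```python
def reinhard(luminance: float, exposure: float = 1.0) -> float:
    """Map an HDR luminance in [0, inf) to the range [0, 1) using L / (1 + L)."""
    scaled = max(0.0, luminance * exposure)
    return scaled / (1.0 + scaled)


# Very bright values are compressed toward 1.0 instead of clipping.
for hdr_value in (0.0, 0.5, 1.0, 10.0, 1000.0):
    print(hdr_value, reinhard(hdr_value))
```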

Q 25. Explain your understanding of light baking and its applications.

Light baking is a technique where lighting information is pre-calculated and baked into textures. This significantly improves performance in real-time applications because the lighting calculations don’t need to be performed during gameplay. Instead, the baked lightmap is sampled during rendering, providing a very efficient way to represent lighting.

Light baking is typically used for static geometry, such as walls and floors in a building, and involves illuminating a 3D model with light sources and calculating the lighting values at each point on the surface. The results are stored in a texture called a lightmap. This lightmap is then used during runtime to accurately represent the lighting on the baked geometry. However, any dynamic elements like moving objects will require separate lighting calculations or techniques such as shadow maps.
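The bake-then-sample idea can be sketched in a few lines. The example below is a simplified illustration under stated assumptions: a single unit floor quad at y = 0, one point light above it, direct diffuse lighting only, and inverse-square falloff. The function names are hypothetical; real bakers also handle indirect light, occlusion, and UV unwrapping.

```python
import math


def bake_lightmap(size, light_pos, light_intensity=1.0):
    """Bake direct diffuse lighting for a unit floor quad (y = 0, normal +Y).

    Assumes the light sits above the floor (light_pos[1] > 0).
    Returns a size x size grid of scalar lighting values.
    """
    normal = (0.0, 1.0, 0.0)
    lightmap = []
    for row in range(size):
        texels = []
        for col in range(size):
            # Texel centre in world space on the quad [0, 1] x [0, 1].
            px, pz = (col + 0.5) / size, (row + 0.5) / size
            to_light = (light_pos[0] - px, light_pos[1], light_pos[2] - pz)
            dist = math.sqrt(sum(c * c for c in to_light))
            n_dot_l = max(0.0, sum(n * c for n, c in zip(normal, to_light)) / dist)
            texels.append(light_intensity * n_dot_l / (dist * dist))
        lightmap.append(texels)
    return lightmap


def sample_lightmap(lightmap, u, v):
    """Runtime lookup: bilinear interpolation at (u, v) in [0, 1]."""
    size = len(lightmap)
    x = min(max(u * size - 0.5, 0.0), size - 1.0)
    y = min(max(v * size - 0.5, 0.0), size - 1.0)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, size - 1), min(y0 + 1, size - 1)
    fx, fy = x - x0, y - y0
    top = lightmap[y0][x0] * (1 - fx) + lightmap[y0][x1] * fx
    bottom = lightmap[y1][x0] * (1 - fx) + lightmap[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


baked = bake_lightmap(4, light_pos=(0.5, 1.0, 0.5))
print(sample_lightmap(baked, 0.5, 0.5), sample_lightmap(baked, 0.0, 0.0))
```

The expensive loop runs once at bake time; the runtime cost is a single interpolated texture read per shaded point.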

Different baking approaches exist, each trading fidelity against bake time and memory. Irradiance caching, for example, computes indirect lighting at sparse sample points and interpolates between them, and the density of the stored data affects both quality and memory footprint. The choice of method and resolution depends on available memory and the desired quality. For example, in a large open-world game, densely baked lighting might be impractical due to the massive memory footprint, whereas in a smaller, indoor scene, it is more feasible.

Q 26. How do you handle dynamic lighting in a real-time application?

Handling dynamic lighting in real time is challenging because lighting must be recalculated every frame for any moving light sources or objects. I usually combine several techniques to do this efficiently. Shadow maps, for example, are commonly used to render shadows from dynamic light sources. A shadow map stores the scene’s depth as seen from the light; during shading, each point’s distance to the light is compared against the stored depth to decide whether it is occluded. Shadow map resolution is a limitation, however: higher-resolution maps are more accurate but consume more memory and processing power.
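At its core, a shadow map lookup is a depth comparison from the light's point of view. The sketch below illustrates this under simplifying assumptions: an overhead directional light shining straight down, an orthographic shadow map covering the unit square, and axis-aligned box occluders. The scene format, function names, and bias value are all hypothetical.

```python
def build_shadow_map(size, occluders, light_height=10.0):
    """Depth pass from an overhead directional light over the unit square.

    occluders: list of (x_min, x_max, z_min, z_max, top_y) boxes.
    Each texel stores the distance from the light plane to the nearest
    surface below it; the ground plane is at y = 0.
    """
    depth = [[light_height] * size for _ in range(size)]
    for x_min, x_max, z_min, z_max, top_y in occluders:
        for row in range(size):
            for col in range(size):
                cx, cz = (col + 0.5) / size, (row + 0.5) / size
                if x_min <= cx <= x_max and z_min <= cz <= z_max:
                    depth[row][col] = min(depth[row][col], light_height - top_y)
    return depth


def in_shadow(shadow_map, point, light_height=10.0, bias=0.01):
    """Return True if the point (x, y, z), with x and z in [0, 1], is occluded."""
    x, y, z = point
    size = len(shadow_map)
    col = min(int(x * size), size - 1)
    row = min(int(z * size), size - 1)
    point_depth = light_height - y
    # The small bias avoids self-shadowing ("shadow acne") on lit surfaces.
    return point_depth > shadow_map[row][col] + bias


shadow_map = build_shadow_map(8, [(0.25, 0.75, 0.25, 0.75, 2.0)])
print(in_shadow(shadow_map, (0.5, 0.0, 0.5)))  # ground under the box
print(in_shadow(shadow_map, (0.1, 0.0, 0.1)))  # open ground
```

Raising `size` sharpens the shadow boundary at the cost of more texels to fill and store, which is the resolution trade-off in miniature.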

Another approach is deferred shading, where the geometry’s surface attributes (such as position, normal, and albedo) are first rendered into a G-buffer and lighting is computed afterwards, once per visible pixel. This keeps the cost of adding lights largely independent of scene complexity, which makes rendering many lights more efficient. Furthermore, techniques like screen-space directional occlusion (SSDO) or SSAO can approximate some global illumination effects in real time without the computational cost of full global illumination algorithms. Finally, I might use light probes or voxel cone tracing for more accurate real-time global illumination approximations, depending on the application’s needs and performance budget.
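The two-pass structure of deferred shading can be sketched on the CPU as follows. This is an illustrative toy rather than an engine design: the fragment and light dictionaries are hypothetical formats, albedo is a single scalar, and only Lambert diffuse without attenuation is evaluated.

```python
import math


def geometry_pass(fragments, width, height):
    """Write the nearest fragment's surface data into the G-buffer.

    fragments: iterable of dicts with keys x, y, depth, position, normal, albedo.
    """
    gbuffer = [[None] * width for _ in range(height)]
    for frag in fragments:
        current = gbuffer[frag["y"]][frag["x"]]
        if current is None or frag["depth"] < current["depth"]:
            gbuffer[frag["y"]][frag["x"]] = frag
    return gbuffer


def lighting_pass(gbuffer, lights):
    """Shade each covered pixel once, accumulating diffuse light from every light.

    Assumes no light sits exactly on a shaded surface point.
    """
    image = []
    for row in gbuffer:
        out_row = []
        for g in row:
            if g is None:
                out_row.append(0.0)  # background pixel, nothing to light
                continue
            total = 0.0
            for light in lights:
                to_light = [l - p for l, p in zip(light["position"], g["position"])]
                length = math.sqrt(sum(c * c for c in to_light))
                n_dot_l = sum(n * c for n, c in zip(g["normal"], to_light)) / length
                total += light["intensity"] * max(0.0, n_dot_l)
            out_row.append(g["albedo"] * total)
        image.append(out_row)
    return image


fragments = [
    {"x": 0, "y": 0, "depth": 2.0, "position": (0, 0, 0), "normal": (0, 1, 0), "albedo": 1.0},
    {"x": 0, "y": 0, "depth": 1.0, "position": (0, 0, 0), "normal": (0, 1, 0), "albedo": 0.5},
]
lights = [{"position": (0, 2, 0), "intensity": 1.0}] * 2
print(lighting_pass(geometry_pass(fragments, 2, 1), lights))
```

Hidden fragments are discarded in the geometry pass, so the lighting loop never pays for surfaces that are not visible.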

Q 27. Describe your experience with creating and using custom shaders.

Creating and using custom shaders is a powerful way to control and optimize lighting effects. My experience with custom shaders ranges from basic lighting models to highly complex effects. I’m proficient in various shading languages like HLSL (High-Level Shading Language) and GLSL (OpenGL Shading Language).

For instance, I’ve developed custom shaders for realistic subsurface scattering in skin, creating a more natural and lifelike appearance. I’ve also designed custom shaders for physically-based rendering (PBR), accurately simulating the interaction of light with different materials based on their properties, such as roughness and reflectivity. This often involves writing code that implements advanced BRDF (Bidirectional Reflectance Distribution Function) models to generate accurate reflections and specular highlights. Custom shaders allow me to tailor lighting effects precisely to the specific needs of a project, achieving levels of realism and visual fidelity that are not possible with pre-built shaders. For example, I might optimize a shader to reduce overdraw by only processing pixels that are truly affected by lighting changes.
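Two building blocks that commonly appear in microfacet BRDF code are the Schlick Fresnel approximation and the GGX (Trowbridge-Reitz) normal distribution. The sketch below shows them as plain CPU-side functions for clarity; in a shader they would be written in HLSL or GLSL. Squaring the roughness to obtain alpha is a common convention that is assumed here, and it varies between engines.

```python
import math


def fresnel_schlick(cos_theta: float, f0: float) -> float:
    """Schlick's approximation: reflectance rises toward 1.0 at grazing angles."""
    return f0 + (1.0 - f0) * (1.0 - cos_theta) ** 5


def ggx_ndf(n_dot_h: float, roughness: float) -> float:
    """GGX normal distribution term D for a given N.H and roughness."""
    alpha = roughness * roughness  # assumed remapping; conventions differ
    a2 = alpha * alpha
    denom = n_dot_h * n_dot_h * (a2 - 1.0) + 1.0
    return a2 / (math.pi * denom * denom)


# A dielectric (f0 of about 0.04) viewed head-on versus at a grazing angle.
print(fresnel_schlick(1.0, 0.04), fresnel_schlick(0.05, 0.04))
# A smoother surface concentrates the highlight around N.H = 1.
print(ggx_ndf(1.0, 0.5), ggx_ndf(1.0, 1.0))
```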

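```hlsl
// Example HLSL code snippet for a simple diffuse lighting shader.
// lightPos and the worldPos interpolant are assumed to be supplied by the application.
cbuffer LightData : register(b0) { float3 lightPos; };

float4 PS(float4 position : SV_POSITION, float3 worldPos : TEXCOORD0, float3 normal : NORMAL) : SV_TARGET
{
    // SV_POSITION is in screen space here, so use the interpolated world-space position.
    float3 lightDir = normalize(lightPos - worldPos);
    float diffuse = saturate(dot(normalize(normal), lightDir));
    return float4(diffuse, diffuse, diffuse, 1.0f);
}
```

Q 28. What are some of the latest advancements in light and shadow rendering technology?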

The field of light and shadow rendering is constantly evolving. Some of the most exciting recent advancements include:

- Path tracing advancements: Improvements in path tracing, such as better denoising and acceleration structures, are making it more practical for real-time applications. Neural network-based denoisers in particular reduce the number of samples per pixel, and therefore the render time, needed for a clean, high-quality image.

- Volumetric lighting and scattering: More efficient and realistic methods for simulating volumetric effects like fog, clouds, and atmospheric scattering are being developed. This often involves utilizing techniques like pre-computed scattering or more advanced ray marching methods.

- Real-time global illumination techniques: New approaches, such as photon mapping variations and screen-space global illumination methods, offer better approximations of global illumination in real-time, leading to more realistic and immersive scenes.

- Machine learning in rendering: The use of neural networks for tasks such as light transport simulation, denoising, and material representation is leading to significant improvements in rendering speed and quality. Examples include neural networks trained to predict light transport or to efficiently upsample low-resolution images to higher resolutions.

These advancements continue to push the boundaries of what’s possible in terms of realism and performance, enabling the creation of increasingly impressive and immersive visual experiences. The combination of traditional rendering techniques with machine learning offers a particularly exciting avenue for future developments.

Key Topics to Learn for Light and Shadow Rendering Interview

- Fundamentals of Light Transport: Understanding direct and indirect illumination, global illumination techniques (e.g., path tracing, radiosity), and their computational complexities.

- Shadow Rendering Techniques: Explore various shadow algorithms (e.g., shadow mapping, shadow volumes, PCSS), their advantages and disadvantages, and how to optimize them for performance.

- Real-time vs. Offline Rendering: Discuss the differences in approaches, computational constraints, and algorithm choices for real-time (games, interactive applications) and offline (film, animation) rendering.

- Light Sources and Materials: Understand the properties of different light sources (point, directional, area lights) and how materials interact with light (diffuse, specular, subsurface scattering).

- Advanced Shading Models: Explore more sophisticated shading techniques like physically based rendering (PBR), microfacet theory, and their impact on realism and visual quality.

- Optimization Strategies: Discuss methods for optimizing rendering performance, such as level of detail (LOD) techniques, culling, and efficient data structures.

- Practical Application: Be prepared to discuss projects where you’ve implemented light and shadow rendering techniques, highlighting challenges overcome and solutions implemented.

- Problem-Solving Approaches: Demonstrate your ability to debug rendering issues, analyze performance bottlenecks, and propose solutions to improve visual quality and efficiency.

- Current Trends and Research: Stay updated on the latest advancements in light and shadow rendering, such as real-time global illumination techniques and novel shading models.

Next Steps

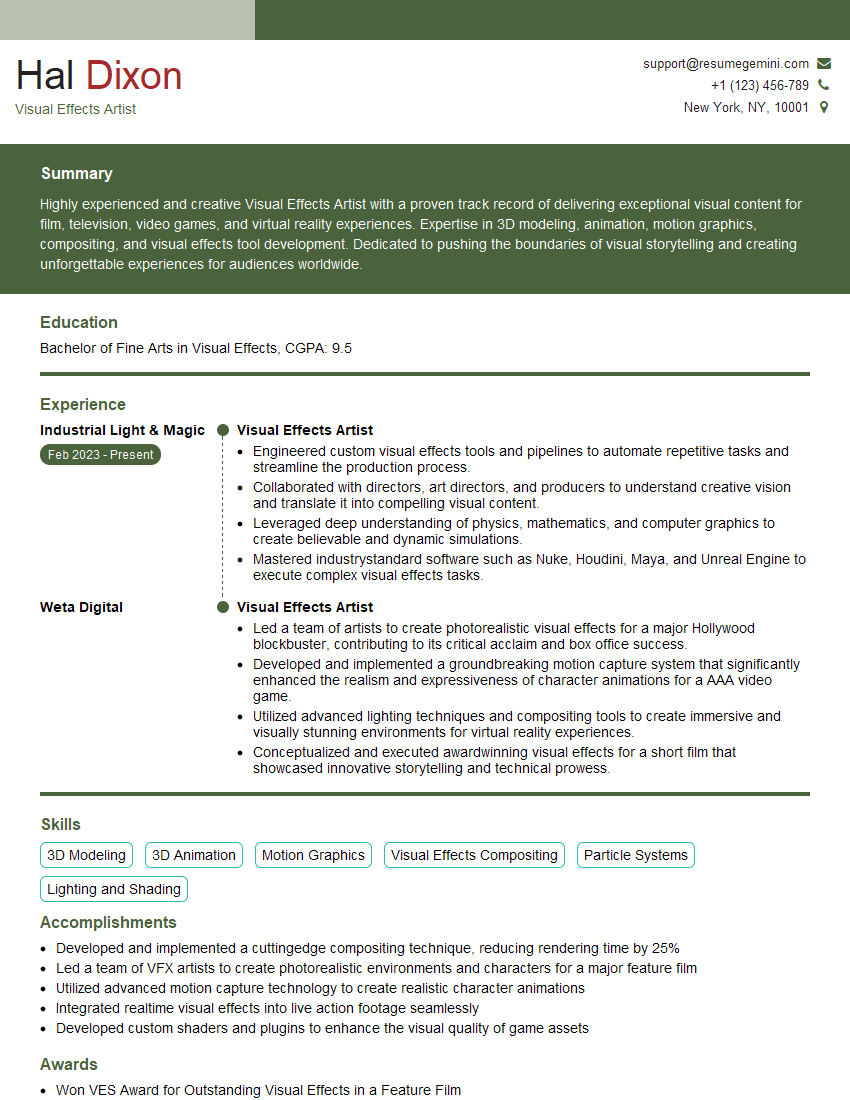

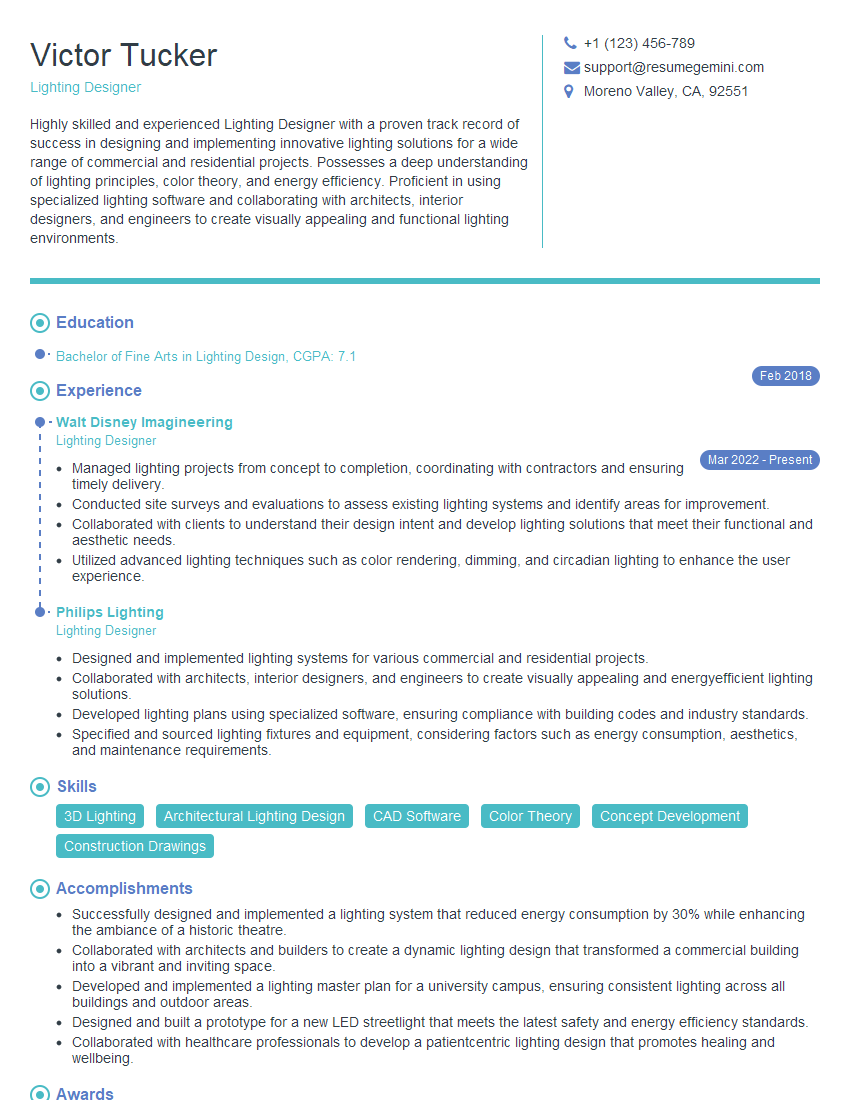

Mastering Light and Shadow Rendering is crucial for advancing your career in computer graphics, game development, VFX, and related fields. A strong understanding of these techniques demonstrates a high level of technical proficiency and problem-solving skills, highly valued by employers. To increase your chances of landing your dream role, creating a compelling and ATS-friendly resume is essential. We encourage you to leverage ResumeGemini, a trusted resource for building professional resumes that highlight your skills effectively. ResumeGemini provides examples of resumes tailored to Light and Shadow Rendering, giving you a head start in showcasing your expertise to potential employers.
