Preparation is the key to success in any interview. In this post, we’ll explore crucial Metal Measurement interview questions and equip you with strategies to craft impactful answers. Whether you’re a beginner or a pro, these tips will elevate your preparation.

Questions Asked in a Metal Measurement Interview

Q 1. Explain the different types of metal measurement techniques.

Metal measurement techniques are broadly categorized into dimensional metrology and material property measurement. Dimensional metrology focuses on the size, shape, and geometric characteristics of metal components, while material property measurement focuses on characteristics like hardness, tensile strength, and chemical composition.

- Dimensional Metrology: This involves techniques like length measurement (using calipers, micrometers, etc.), surface roughness measurement (using profilometers), and geometric dimensioning and tolerancing (GD&T) analysis.

- Material Property Measurement: This includes techniques like hardness testing (Rockwell, Brinell, Vickers), tensile testing, chemical analysis (e.g., optical emission or X-ray fluorescence spectroscopy), phase and crystal-structure analysis (X-ray diffraction), and non-destructive testing (NDT) methods like ultrasonic testing and magnetic particle inspection.

Choosing the right technique depends heavily on the specific requirements of the application. For instance, a precision machined part would necessitate highly accurate dimensional metrology, while selecting a material for a high-stress application would require thorough material property testing.

Q 2. Describe the principles of dimensional metrology.

Dimensional metrology is based on the precise determination of a part’s dimensions and geometric characteristics. Its principles revolve around accuracy, traceability, and repeatability.

- Accuracy: The closeness of a measured value to the true value. This relies on calibrated instruments and controlled measurement environments.

- Traceability: The ability to link a measurement back to a national or international standard. This ensures consistency across different measurements and locations.

- Repeatability: The ability to obtain the same measurement result when the measurement is repeated under identical conditions. This is crucial for reliability.

Imagine building a car engine: dimensional metrology ensures each piston fits precisely within its cylinder, giving optimal performance and preventing failure. Inaccurate dimensions could lead to catastrophic engine damage.
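
Accuracy and repeatability can both be quantified by repeatedly measuring a reference standard. The following is a minimal Python sketch, assuming a certified gauge block serves as the reference; the function name and the readings are hypothetical. Bias (mean reading minus the reference value) indicates accuracy, and the sample standard deviation of the readings indicates repeatability.

```python
import statistics


def bias_and_repeatability(readings, reference):
    """Return (bias, repeatability) for repeated readings of a reference standard.

    bias          -- mean reading minus the certified reference value (accuracy)
    repeatability -- sample standard deviation of the readings (precision)
    """
    mean = statistics.mean(readings)
    bias = mean - reference
    repeatability = statistics.stdev(readings)  # requires at least two readings
    return bias, repeatability


# Hypothetical data: five micrometer readings (mm) of a 25.000 mm gauge block
readings = [25.002, 25.001, 25.003, 25.002, 25.002]
bias, repeat = bias_and_repeatability(readings, 25.000)
print(f"bias = {bias:+.4f} mm, repeatability (1 s) = {repeat:.4f} mm")
```

A large bias with small scatter points to a calibration problem; small bias with large scatter points to technique, fixturing, or environment.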

Q 3. What are the common instruments used for metal measurement?

The choice of instrument depends on the required accuracy and the specific dimension being measured. Common instruments include:

- Calipers: Used for measuring external and internal dimensions, depths, and steps. They offer good accuracy for many applications.

- Micrometers: Provide higher precision than calipers, ideal for precise measurements of smaller dimensions.

- Coordinate Measuring Machines (CMMs): Highly accurate 3D measurement systems used for complex shapes and large components.

- Optical Comparators: Used for comparing a part’s profile to a master drawing, highlighting discrepancies.

- Surface Roughness Testers (Profilometers): Measure the texture of a surface, important for functionality and aesthetics.

- Hardness Testers (Rockwell, Brinell, Vickers): Measure the resistance of a material to indentation, indicating its hardness.

For example, a machinist might use calipers for a quick check of a part’s length, while a quality control inspector might use a CMM for a detailed dimensional analysis of a complex assembly.

Q 4. How do you ensure the accuracy and precision of metal measurements?

Ensuring accuracy and precision in metal measurements involves a multi-faceted approach:

- Instrument Calibration: Regular calibration against traceable standards is paramount. This verifies that instruments are functioning within their specified tolerances.

- Environmental Control: Temperature, humidity, and vibrations can influence measurements. A controlled environment minimizes these effects.

- Proper Measurement Techniques: Following established procedures and using appropriate techniques minimizes human error. This includes proper handling of instruments and accurate data recording.

- Statistical Process Control (SPC): Using statistical methods to monitor measurement processes and identify potential sources of variation.

- Operator Training: Well-trained operators are essential for consistent and accurate measurements.

Think of it like baking a cake – you need precise measurements of ingredients and a properly calibrated oven to achieve the desired result. Inaccurate measurements in metalworking can lead to scrap, rework, or even product failure.

Q 5. What are the sources of error in metal measurement and how to mitigate them?

Several sources of error can affect metal measurements:

- Instrument Error: Imperfections or wear in the measuring instrument.

- Environmental Error: Temperature fluctuations, humidity, vibrations.

- Operator Error: Incorrect handling of instruments, parallax error (misreading an analog scale viewed at an angle), improper data recording.

- Workpiece Error: Deformation of the workpiece during measurement, surface imperfections.

Mitigation Strategies: Calibration, environmental control, proper measurement techniques, using appropriate instruments, and statistical process control can effectively minimize these errors.

For instance, parallax error can be minimized by ensuring the operator’s eye is aligned perpendicular to the scale. Using a CMM in a temperature-controlled room reduces environmental errors.
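
Temperature is often the largest environmental error, and lengths are conventionally referred to the 20 °C standard reference temperature (ISO 1). The sketch below applies linear thermal expansion to correct a reading to 20 °C. It is illustrative only: the function name is hypothetical, the aluminium coefficient (about 23 × 10⁻⁶ /°C) is an approximate typical value, and it assumes the part and the instrument scale are each at one uniform temperature.

```python
REFERENCE_TEMP_C = 20.0  # standard reference temperature for dimensional measurement


def length_at_20c(reading, part_temp_c, alpha_part, scale_temp_c=20.0, alpha_scale=0.0):
    """Correct a length reading to 20 °C using linear thermal expansion.

    reading     -- value indicated by the instrument
    alpha_part  -- linear expansion coefficient of the part (1/°C)
    alpha_scale -- coefficient of the instrument's scale (1/°C); 0 ignores scale expansion
    """
    # A warm scale has stretched graduations, so it under-reads the true length
    scale_factor = 1 + alpha_scale * (scale_temp_c - REFERENCE_TEMP_C)
    # A warm part is physically longer than it will be at 20 °C
    part_factor = 1 + alpha_part * (part_temp_c - REFERENCE_TEMP_C)
    return reading * scale_factor / part_factor


# Hypothetical case: aluminium part read as 500.069 mm at 26 °C, scale held at 20 °C
print(f"{length_at_20c(500.069, 26.0, 23e-6):.3f} mm at 20 °C")
```

Note that when the part and the scale share the same material and temperature, the two factors cancel, which is why measuring steel parts with steel gauges in a stable room reduces thermal error.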

Q 6. Explain the concept of tolerance and its importance in metal measurement.

Tolerance specifies the permissible variation in a dimension or geometric characteristic. It defines an acceptable range of values rather than a single, exact value. It’s crucial because:

- Manufacturing Feasibility: Manufacturing processes inherently have some variation. Tolerances account for this, ensuring parts can be produced within acceptable limits.

- Interchangeability: Tolerances ensure parts are interchangeable, meaning they can be used in place of each other without affecting functionality.

- Cost Control: Tight tolerances increase manufacturing costs; specifying appropriate tolerances balances quality and cost.

Imagine a bolt and nut: if the tolerances are too tight, the parts will be very expensive to produce; if they are too loose, the connection won't be secure. Well-defined tolerances help ensure a reliable and cost-effective fit.
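
Tolerance limits translate directly into the range of fits an assembly can have. The sketch below uses a plain cylindrical hole and shaft rather than a threaded bolt and nut, and all names and limits are hypothetical. It computes each feature's size limits, checks a measured size against them, and derives the minimum and maximum clearance.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    """A toleranced size: nominal plus upper and lower deviations (all in mm)."""
    nominal: float
    upper_dev: float
    lower_dev: float

    @property
    def max_size(self) -> float:
        return self.nominal + self.upper_dev

    @property
    def min_size(self) -> float:
        return self.nominal + self.lower_dev

    def in_tolerance(self, measured: float) -> bool:
        return self.min_size <= measured <= self.max_size


def clearance_range(hole: Feature, shaft: Feature) -> tuple:
    """(minimum, maximum) clearance; a negative minimum means interference is possible."""
    return hole.min_size - shaft.max_size, hole.max_size - shaft.min_size


hole = Feature(10.0, +0.015, 0.0)        # hypothetical hole limits
shaft = Feature(10.0, -0.005, -0.014)    # hypothetical shaft limits
print(clearance_range(hole, shaft))
print(hole.in_tolerance(10.012), shaft.in_tolerance(9.990))
```

Tightening either feature's tolerance narrows the clearance range, which is exactly the cost-versus-function trade-off described above.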

Q 7. How do you interpret technical drawings related to metal components?

Interpreting technical drawings requires understanding symbols, dimensions, and tolerances. Key elements include:

- Views: Orthographic projections (top, front, side) show different views of the part.

- Dimensions: Numerical values indicating the size of features.

- Tolerances: Permissible variations in dimensions.

- Geometric Dimensioning and Tolerancing (GD&T): Symbols that specify the permissible variations in form, orientation, location, and runout.

- Material Specifications: Information about the material the part is made of.

For example, a drawing might show the diameter of a hole with a specified tolerance, indicating the acceptable range of hole sizes. Understanding GD&T symbols is crucial for interpreting complex geometric requirements.

Experience and training are essential for accurate interpretation. CAD (Computer-Aided Design) software can also assist with precise interpretation and analysis.
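
As one example of GD&T interpretation, a position tolerance on a hole is usually evaluated by converting the measured centre offset into a diametral deviation, 2·√(Δx² + Δy²), and comparing it with the tolerance-zone diameter. A minimal sketch follows, assuming a two-dimensional check against basic dimensions; the function names and coordinates are hypothetical, and the optional bonus tolerance applies only when the callout carries the MMC modifier. The hole's size must still be checked separately against its own limits.

```python
import math


def true_position(x_meas, y_meas, x_basic, y_basic):
    """Diametral true-position deviation of a hole centre from its basic location."""
    return 2.0 * math.hypot(x_meas - x_basic, y_meas - y_basic)


def position_ok(x_meas, y_meas, x_basic, y_basic, pos_tol,
                hole_size=None, hole_mmc=None):
    """Check a position callout with tolerance-zone diameter pos_tol.

    If hole_mmc is given, the callout is treated as modified at MMC: the amount
    by which the actual hole exceeds its MMC (smallest) size is added as bonus.
    """
    allowed = pos_tol
    if hole_size is not None and hole_mmc is not None:
        allowed += max(0.0, hole_size - hole_mmc)
    return true_position(x_meas, y_meas, x_basic, y_basic) <= allowed


# Hypothetical hole: basic location (25.00, 15.00), measured centre (25.03, 14.96)
print(round(true_position(25.03, 14.96, 25.00, 15.00), 3))
print(position_ok(25.03, 14.96, 25.00, 15.00, 0.08))               # regardless of feature size
print(position_ok(25.03, 14.96, 25.00, 15.00, 0.08, 8.03, 8.00))   # modified at MMC
```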

Q 8. Describe your experience with different types of calipers and micrometers.

My experience with calipers and micrometers spans over a decade, encompassing various types used in precision engineering and quality control. I'm proficient with both vernier and digital calipers and understand their strengths and limitations. Vernier calipers require more manual skill to read accurately but need no battery, which makes them invaluable when power is unavailable. Digital calipers provide quicker readings and are less prone to parallax error, making them suitable for high-throughput measurements.

Similarly, I've worked extensively with both outside and inside micrometers, including those with different measuring ranges and anvil types. I'm familiar with the importance of proper zeroing, handling, and maintenance to ensure accuracy and longevity. For instance, when a custom-machined gear component carried a tolerance too fine for either type of caliper (typically resolving a few hundredths of a millimetre), I verified the critical dimensions with a micrometer, whose finer resolution ensured the gear meshed correctly.

I also have experience with depth micrometers, which are crucial in determining the depth of holes and slots, and leaf micrometers, which provide a very fine resolution for specialized applications. My experience extends to understanding the systematic errors associated with these instruments, such as wear on the anvils and temperature effects, and implementing corrective measures.
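
Reading a vernier caliper or a mechanical micrometer comes down to simple arithmetic. The sketch below assumes two common metric configurations, a vernier caliper with 1 mm main-scale divisions and a 50-division vernier (0.02 mm least count), and a micrometer with a 0.5 mm spindle pitch and 50 thimble divisions (0.01 mm per division); check the actual instrument before relying on these defaults. The function names and readings are hypothetical.

```python
def vernier_reading(main_scale_mm, coincident_line, main_div_mm=1.0, vernier_divs=50):
    """Vernier caliper: main-scale value before the vernier zero + coincident line x least count."""
    least_count = main_div_mm / vernier_divs  # 1 mm / 50 divisions = 0.02 mm
    return main_scale_mm + coincident_line * least_count


def micrometer_reading(sleeve_mm, thimble_div, pitch_mm=0.5, thimble_divs=50):
    """Micrometer: sleeve value (including any exposed 0.5 mm line) + thimble division x step."""
    return sleeve_mm + thimble_div * (pitch_mm / thimble_divs)  # 0.5 mm / 50 = 0.01 mm


# Hypothetical readings
print(round(vernier_reading(23.0, 17), 3))     # 23 mm + 17 x 0.02 mm
print(round(micrometer_reading(7.5, 28), 3))   # 7.5 mm + 28 x 0.01 mm
```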

Q 9. What is the significance of surface finish measurement in metal components?

Surface finish measurement is paramount in metal components because it directly impacts functionality, durability, and aesthetic appeal. A rough surface might lead to increased friction, wear, and stress concentration, ultimately reducing the lifespan of a component or causing premature failure. Conversely, a finely finished surface is crucial in applications requiring low friction, corrosion resistance, or precise optical properties. For example, a smooth surface finish is essential in bearings to minimize wear and maximize efficiency, while a specific roughness might be required in a mold to ensure proper release of a casting.

Surface finish is characterized by parameters like roughness (Ra, Rz), waviness, and lay, typically measured using profilometers, surface roughness testers, or even CMMs equipped with appropriate stylus probes. These measurements ensure components meet design specifications and quality standards, preventing costly rework or field failures. In my experience, proper surface finish control has saved companies significant expenses by reducing waste and improving product reliability.
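
Ra is the arithmetic mean of the absolute profile deviations from the mean line, and Rq is their root mean square. The sketch below is deliberately simplified: it takes the mean line as the average of the sampled heights, whereas standard practice first applies a cutoff filter to separate roughness from waviness. The function name and profile values are hypothetical.

```python
import math


def roughness_params(profile_um):
    """Return (Ra, Rq) for a sampled profile with heights in micrometres.

    Simplification: the mean line is the plain average of the samples (no filtering).
    """
    n = len(profile_um)
    mean_line = sum(profile_um) / n
    deviations = [z - mean_line for z in profile_um]
    ra = sum(abs(d) for d in deviations) / n               # arithmetic mean deviation
    rq = math.sqrt(sum(d * d for d in deviations) / n)     # root-mean-square deviation
    return ra, rq


profile = [0.4, -0.2, 0.6, -0.5, 0.1, -0.4, 0.3, -0.3]  # hypothetical stylus trace (µm)
ra, rq = roughness_params(profile)
print(f"Ra = {ra:.3f} µm, Rq = {rq:.3f} µm")
```

Because Rq squares the deviations, it weights occasional deep scratches or high peaks more heavily than Ra, and it is always at least as large as Ra.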

Q 10. Explain your experience with coordinate measuring machines (CMMs).

My experience with Coordinate Measuring Machines (CMMs) includes both operation and programming. I’m proficient in using various CMM types, including bridge-type, gantry-type, and articulated arm CMMs, each with its unique advantages and limitations. I’ve used CMMs to inspect complex parts with high accuracy, ensuring they conform to CAD models and specifications. This includes performing various measurements such as point-to-point distances, angles, radii, and surface geometry.

Programming CMMs involves creating measurement routines using specialized software. This includes defining measurement points, selecting appropriate probes, and generating reports. I’m experienced in using different probing strategies to optimize measurement speed and accuracy, avoiding collisions and ensuring proper contact. For example, I developed a CMM program to automate the inspection of a highly intricate engine block, which significantly reduced inspection time and improved consistency compared to manual methods. My expertise also includes interpreting CMM reports to identify deviations from specifications and recommending corrective actions.
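
To illustrate what CMM software does with probe points, the sketch below fits a circle to two-dimensional hits on a bore. It uses the algebraic (Kåsa) least-squares fit with NumPy, which is one simple technique, not necessarily the algorithm any particular CMM package uses; it assumes the points are already probe-radius compensated, and the data are hypothetical. The spread of radial deviations about the fitted circle gives an approximate roundness.

```python
import numpy as np


def fit_circle(points):
    """Algebraic (Kasa) least-squares circle fit to 2-D probe points.

    Solves x^2 + y^2 + D*x + E*y + F = 0 for D, E, F in the least-squares sense,
    then converts to centre (cx, cy), radius r, and an approximate roundness.
    """
    pts = np.asarray(points, dtype=float)
    x, y = pts[:, 0], pts[:, 1]
    A = np.column_stack([x, y, np.ones_like(x)])
    b = -(x ** 2 + y ** 2)
    (D, E, F), *_ = np.linalg.lstsq(A, b, rcond=None)
    cx, cy = -D / 2.0, -E / 2.0
    r = np.sqrt(cx ** 2 + cy ** 2 - F)
    # Radial deviations about the fitted circle; peak-to-valley approximates roundness
    radial = np.hypot(x - cx, y - cy) - r
    return cx, cy, r, radial.max() - radial.min()


# Hypothetical probe points: eight hits on a nominal 25 mm bore centred at (50, 30)
angles = np.linspace(0.0, 2.0 * np.pi, 8, endpoint=False)
radii = 12.5 + np.array([0.002, -0.001, 0.003, 0.0, -0.002, 0.001, 0.0, -0.003])
probe = np.column_stack([50.0 + radii * np.cos(angles), 30.0 + radii * np.sin(angles)])
cx, cy, r, roundness = fit_circle(probe)
print(f"centre = ({cx:.4f}, {cy:.4f}) mm, diameter = {2 * r:.4f} mm, roundness ~ {roundness:.4f} mm")
```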

Q 11. How do you perform hardness testing of metals?

Hardness testing is a widely used, minimally destructive method (it leaves a small indentation) for determining a metal's resistance to permanent indentation. The process involves pressing an indenter into the surface with a controlled force for a specified dwell time; the depth or size of the resulting indentation is then measured to calculate the hardness value. The specific method used depends on the material's expected hardness and the required level of detail. I have extensive experience with several common methods, including Rockwell, Brinell, and Vickers hardness testing.

The procedure generally involves surface preparation to ensure a clean and representative test area. This often includes cleaning, grinding, or polishing to remove any surface imperfections that might affect the results. The chosen method is then implemented using calibrated equipment and following strict procedures to ensure accuracy and repeatability.

Q 12. Describe different hardness testing scales (e.g., Rockwell, Brinell, Vickers).

Different hardness testing scales offer various levels of penetration and indenter geometries, making them suitable for different materials and hardness ranges.

- Rockwell Hardness: Uses a minor load followed by a major load, measuring the depth of penetration. This is a widely used method due to its speed and simplicity. There are various Rockwell scales (e.g., Rockwell A, B, C) using different indenters and loads, suited for various hardness levels.

- Brinell Hardness: Presses a tungsten carbide ball indenter (hardened steel balls were used in older practice) into the surface under a significant load. The diameter of the resulting indentation is measured to determine the hardness number. This method is suitable for softer materials and thicker sections.

- Vickers Hardness: Uses a square-based diamond pyramid indenter, and the mean diagonal length of the resulting indentation is measured. Because the pyramid produces geometrically similar indentations at different loads, the hardness value is largely independent of the test load, so a single scale covers a wide range of materials and hardness levels.

The choice of scale depends on the material’s anticipated hardness and the size and shape of the test piece. For example, Rockwell C is often used for hard steels, while Brinell is preferred for softer metals. Vickers is particularly useful for very hard materials and thin sections.
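
The three scales reduce to well-known formulas: Brinell divides the force (in kgf) by the curved area of the spherical indentation, Vickers divides it by the pyramidal indentation area, and regular Rockwell subtracts the permanent depth increase (in units of 0.002 mm) from 100 or 130. The Python sketch below implements those formulas; the function names and test values are hypothetical, and real testers apply the procedures and verification rules of the relevant standard.

```python
import math

G_N = 9.80665  # standard gravity: converts newtons to kilograms-force


def brinell(force_n, ball_d_mm, indent_d_mm):
    """Brinell hardness from test force (N), ball diameter D and mean indentation diameter d (mm)."""
    D, d = ball_d_mm, indent_d_mm
    return (2.0 * force_n / G_N) / (math.pi * D * (D - math.sqrt(D * D - d * d)))


def vickers(force_n, mean_diagonal_mm):
    """Vickers hardness from test force (N) and mean indentation diagonal (mm)."""
    # 136 degree pyramid: HV = 2 F sin(68 deg) / d^2 with F in kgf (about 1.8544 F / d^2)
    return 2.0 * (force_n / G_N) * math.sin(math.radians(68.0)) / mean_diagonal_mm ** 2


def rockwell(permanent_depth_mm, diamond_indenter=True):
    """Regular Rockwell number from the permanent depth increase h (mm).

    Diamond-cone scales (A, C, D): 100 - h/0.002; ball scales (e.g. B): 130 - h/0.002.
    """
    n = 100.0 if diamond_indenter else 130.0
    return n - permanent_depth_mm / 0.002


# Hypothetical test results
print(f"{brinell(3000 * G_N, 10.0, 4.0):.0f} HBW 10/3000")
print(f"{vickers(10 * G_N, 0.300):.0f} HV10")
print(f"{rockwell(0.080):.0f} HRC")
```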

Q 13. How do you interpret hardness test results?

Interpreting hardness test results involves comparing the obtained hardness value to established standards and material specifications. This helps determine if the material meets the required hardness for its intended application. Deviations from the specified hardness can indicate problems with the material’s heat treatment, processing, or composition.

For instance, a hardness value significantly lower than the specification might suggest insufficient heat treatment or the presence of defects. Conversely, a value exceeding the specification might indicate over-hardening, potentially leading to brittleness and reduced ductility. It’s crucial to analyze the results in conjunction with other material properties and the intended application. In my experience, accurate interpretation of hardness results allows for timely detection of manufacturing defects and prevents costly failures in the field.

Q 14. Explain the principles of tensile testing.

Tensile testing is a fundamental materials characterization technique that measures a material’s response to uniaxial tensile loading. A standardized specimen is subjected to a controlled tensile force until it fractures. During the test, the applied force and the resulting elongation are continuously monitored. This data is used to generate a stress-strain curve, providing crucial information about the material’s mechanical properties.

The stress-strain curve reveals key properties like yield strength (the stress at which plastic deformation begins), ultimate tensile strength (the maximum stress the material can withstand), and elongation (the extent of plastic deformation before fracture). These properties are essential for designing and selecting materials for various engineering applications. For example, understanding the yield strength is vital in structural engineering to ensure a component won’t deform permanently under expected loads. Similarly, the ultimate tensile strength is crucial in determining the maximum load a component can withstand before failure. My experience in tensile testing includes selecting appropriate specimens, operating the testing machine, and analyzing the results to ensure the material meets the design specifications.
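
Converting raw force-extension data into an engineering stress-strain curve is straightforward. A minimal sketch, assuming a round specimen of 10 mm diameter and 50 mm gauge length with hypothetical data: engineering stress uses the original cross-sectional area, and UTS is simply the maximum stress. The elongation shown is the last recorded strain, which still includes elastic strain; elongation after fracture in standard practice is based on the permanent extension.

```python
import math


def engineering_curve(forces_n, extensions_mm, area_mm2, gauge_length_mm):
    """Convert force-extension data to engineering stress (MPa) and strain (mm/mm).

    Engineering values use the ORIGINAL cross-sectional area and gauge length.
    """
    stress = [f / area_mm2 for f in forces_n]              # N/mm^2 == MPa
    strain = [e / gauge_length_mm for e in extensions_mm]
    return stress, strain


# Hypothetical test: 10 mm diameter round specimen, 50 mm gauge length
area = math.pi * (10.0 / 2) ** 2
forces = [0, 15000, 30000, 36000, 40000, 42000, 41000, 38000]   # N
extensions = [0, 0.05, 0.10, 0.5, 2.0, 6.0, 9.0, 11.0]          # mm
stress, strain = engineering_curve(forces, extensions, area, 50.0)

uts = max(stress)                 # ultimate tensile strength: peak engineering stress
elong_pct = 100 * strain[-1]      # last recorded strain (includes elastic part)
print(f"UTS = {uts:.0f} MPa, elongation at last point = {elong_pct:.0f} %")
```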

Q 15. How do you interpret stress-strain curves from tensile testing?

Stress-strain curves, obtained from tensile testing, are graphical representations of a material’s response to applied tensile force. The curve reveals crucial mechanical properties. The horizontal axis represents strain (the material’s deformation relative to its original length), while the vertical axis represents stress (the force applied per unit area).

Interpreting Key Regions:

- Elastic Region: The initial linear portion shows elastic deformation. Here, the material deforms proportionally to the applied stress, and it returns to its original shape upon removal of the load. The slope of this line represents Young’s Modulus (E), a measure of the material’s stiffness.

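- Yield Point: Where the curve departs from linearity, plastic deformation begins. For metals without a distinct yield point, the yield strength (σy) is usually determined by the 0.2% offset method: the stress at which a line parallel to the elastic slope, starting at 0.2% strain, intersects the curve. Beyond this point, the material deforms permanently.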

- Plastic Region: This region shows permanent deformation. The material continues to elongate with increasing stress, exhibiting work hardening (strain hardening): the material becomes stronger as dislocations multiply and interact, making further plastic deformation harder.

- Ultimate Tensile Strength: The peak point of the curve represents the ultimate tensile strength (σUTS), the maximum stress the material can withstand before necking (localized reduction in cross-sectional area) begins.

- Fracture Point: The point where the curve ends indicates the fracture point, signifying material failure. The elongation at fracture provides information on the material’s ductility.

Real-world Application: By analyzing the stress-strain curve, engineers can select materials suitable for specific applications. For instance, a material with high yield strength and high ductility is preferred where a component must be both strong and able to deform before failure, such as automotive body panels. A brittle material with a steep stress-strain curve (high stiffness) and low ductility may still suit applications that need high stiffness and are not expected to undergo significant deformation.
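
The elastic slope and the commonly used 0.2% offset yield strength can be extracted from digitised curve data. This is a sketch under stated assumptions: the modulus comes from an ordinary least-squares fit over points below a user-chosen strain limit, the yield point is found by linear interpolation between samples, and the data form an idealised hypothetical curve (200 GPa elastic slope followed by linear hardening), not test results. The function names are hypothetical.

```python
def youngs_modulus(strain, stress, elastic_limit_strain):
    """Least-squares slope (MPa) of the stress-strain points up to elastic_limit_strain."""
    pts = [(e, s) for e, s in zip(strain, stress) if e <= elastic_limit_strain]
    n = len(pts)
    mean_e = sum(e for e, _ in pts) / n
    mean_s = sum(s for _, s in pts) / n
    sxy = sum((e - mean_e) * (s - mean_s) for e, s in pts)
    sxx = sum((e - mean_e) ** 2 for e, _ in pts)
    return sxy / sxx


def offset_yield(strain, stress, modulus, offset=0.002):
    """Stress where the curve crosses a line of slope `modulus` shifted right by `offset` strain."""
    g_prev = None
    for i, (e, s) in enumerate(zip(strain, stress)):
        g = s - modulus * (e - offset)   # positive while the curve lies above the offset line
        if g_prev is not None and g_prev > 0 >= g:
            s0 = stress[i - 1]
            t = g_prev / (g_prev - g)    # linear interpolation between the two samples
            return s0 + t * (s - s0)
        g_prev = g
    return None  # the curve never crosses the offset line within the data


# Hypothetical idealised curve: E = 200 GPa up to 300 MPa, then linear hardening
strain = [i * 0.0005 for i in range(11)]
stress = [200_000 * e if i <= 3 else 300 + 20_000 * (e - 0.0015) for i, e in enumerate(strain)]
E = youngs_modulus(strain, stress, elastic_limit_strain=0.0016)
print(f"E = {E / 1000:.0f} GPa, 0.2% offset yield = {offset_yield(strain, stress, E):.0f} MPa")
```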

Q 16. Describe your experience with different types of non-destructive testing (NDT) methods for metals.

My experience encompasses several Non-Destructive Testing (NDT) methods frequently used for metals. These methods allow for inspection without damaging the component.

- Visual Inspection (VT): This is the most basic method, involving careful observation of the surface for defects like cracks, corrosion, or dimensional inconsistencies. I am proficient in using magnification tools and appropriate lighting to enhance detection.

- Liquid Penetrant Testing (LPT): LPT is used to detect surface-breaking flaws. A penetrant is applied to the surface and drawn into cracks by capillary action; after the excess penetrant is removed, a developer draws the trapped penetrant back out to reveal the flaws. I have experience with various penetrant types and am familiar with the interpretation of indications.

- Magnetic Particle Testing (MPT): This method is suitable for ferromagnetic materials. A magnetic field is induced, and ferromagnetic particles are applied to the surface. Cracks disrupt the magnetic field, causing particles to accumulate, revealing flaws. I am adept at selecting the appropriate magnetic field strength and particle type based on component geometry and material.

- Ultrasonic Testing (UT): UT employs high-frequency sound waves to detect internal flaws. I have experience with different UT techniques, including pulse-echo and through-transmission methods, and can interpret ultrasonic waveforms to identify and characterize defects. I am familiar with different probe types and their applications.

- Radiographic Testing (RT): RT uses X-rays or gamma rays to penetrate the material and reveal internal flaws. I understand the safety protocols associated with RT and can interpret radiographic images to identify various defects.

Example: In one project, we used a combination of VT, LPT, and UT to inspect welded joints in a pressure vessel. VT revealed some surface imperfections, LPT confirmed the presence of surface cracks, while UT identified internal porosity, enabling us to ensure the integrity of the vessel.
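
In pulse-echo UT, the depth of a reflector follows from the echo's round-trip time: depth = velocity × time / 2. A minimal sketch, assuming a straight-beam probe, a typical longitudinal velocity in steel of about 5920 m/s, and no probe delay; in practice the instrument is calibrated on a reference block of the same material. The function name and echo times are hypothetical.

```python
def reflector_depth_mm(round_trip_us, velocity_m_s=5920.0):
    """Depth of a reflector below a straight-beam probe in pulse-echo mode.

    The pulse travels down and back, so depth = velocity x time / 2.
    velocity_m_s defaults to about 5920 m/s, a typical longitudinal velocity in steel.
    """
    # (m/s) x (microseconds) = micrometres; halve for the round trip, convert to mm
    return velocity_m_s * round_trip_us / 2.0 / 1000.0


# Hypothetical A-scan: back-wall echo at 8.45 us, intermediate indication at 5.10 us
print(f"wall thickness ~ {reflector_depth_mm(8.45):.1f} mm")
print(f"indication depth ~ {reflector_depth_mm(5.10):.1f} mm")
```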

Q 17. How do you perform visual inspection of metal components?

Visual inspection is a fundamental yet crucial NDT method. It involves systematically examining the metal component for any surface imperfections or anomalies. The process requires careful attention to detail and specific procedures.

Steps involved:

- Preparation: Ensure adequate lighting, proper surface cleaning (if necessary), and use of any necessary magnification tools (e.g., magnifying glasses, borescopes).

- Inspection: Systematically examine the entire surface of the component, paying close attention to areas prone to defects (e.g., welds, corners, edges). Look for cracks, corrosion, pitting, scratches, dents, or any other irregularities.

- Documentation: Thoroughly document all findings, including the type, location, and size of any defects. This may involve taking photographs or creating detailed sketches.

- Interpretation: Based on the observed defects and applicable standards, determine the severity and acceptability of the findings.

Example: During routine inspection of a cast iron component, visual examination revealed a surface crack near a critical stress point. Although the crack was still small, it could have grown under service loads and led to catastrophic failure. Proper documentation and subsequent actions prevented any safety hazard.

Q 18. Explain your understanding of material specifications and standards (e.g., ASTM).

Material specifications and standards, such as those published by ASTM International (formerly the American Society for Testing and Materials), provide crucial information on the properties and performance requirements of metals. These standards ensure consistency and quality in material selection and manufacturing.

Understanding Specifications:

- Chemical Composition: Standards specify the allowable percentages of different elements in the alloy, affecting properties like strength and corrosion resistance. For example, an ASTM A36 steel specification will outline permitted carbon, manganese, silicon, and other element levels.

- Mechanical Properties: Standards define required mechanical properties such as tensile strength, yield strength, elongation, and hardness. These are often determined through tensile testing and hardness testing.

- Dimensional Tolerances: Specifications outline acceptable variations in dimensions of the finished component. This ensures the part fits within its intended application.

- Manufacturing Processes: Some standards may specify required manufacturing processes to ensure quality and reproducibility.

Real-world Example: A bridge construction project must adhere to specific ASTM standards for the steel used. These standards specify the strength, ductility, and weldability the steel must have to withstand the anticipated loads and environmental conditions.

Q 19. How do you handle discrepancies in metal measurements?

Discrepancies in metal measurements can arise from various sources, including measurement errors, material variations, or inconsistencies in manufacturing processes.

Handling Discrepancies:

- Identify the Source: The first step is to identify the root cause of the discrepancy. This might involve reviewing measurement procedures, recalibrating equipment, or investigating the manufacturing process.

- Verify Measurements: Re-measure the component using different methods or equipment to verify the initial measurements. Multiple measurements increase the confidence in the result.

- Analyze Data: Statistical analysis can help determine if the discrepancy is within acceptable tolerances or if it represents a significant deviation.

- Implement Corrective Actions: Based on the root cause analysis, corrective actions must be implemented to prevent future discrepancies. This may include retraining personnel, improving equipment calibration procedures, or modifying manufacturing processes.

- Documentation: Meticulous record-keeping of the discrepancy, investigation, and corrective actions is essential for quality control and continuous improvement.

Example: If measurements of a component’s thickness show a consistent deviation from the specified value, an investigation might reveal that the manufacturing process needs adjustment, or the measuring instrument requires recalibration. Proper documentation ensures that similar issues are avoided in the future.

Q 20. Describe your experience with statistical process control (SPC) in metal measurement.

Statistical Process Control (SPC) is a powerful tool used to monitor and control the variability of metal measurement processes. It helps in identifying and addressing sources of variation, ultimately enhancing process capability and product quality.

Application in Metal Measurement:

- Control Charts: Control charts (e.g., X-bar and R charts) are used to track the central tendency and dispersion of measurement data over time. By monitoring these charts, we can detect shifts in the process mean or increases in variability.

- Process Capability Analysis: This analysis determines whether the process is capable of consistently producing measurements within the specified tolerances. It helps in assessing the process’s performance and identifying areas for improvement.

- Acceptance Sampling: Although usually treated as separate from SPC, statistical acceptance sampling plans are used to decide whether a batch of components meets the specified quality criteria.

Example: In a machining operation, we used X-bar and R charts to monitor the diameter of a metal shaft. The control charts helped identify a period of increased variability, which prompted an investigation into the cause and subsequent adjustments to the machining process, resulting in improved process stability and reduced scrap.
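
X-bar and R chart limits come from the grand mean, the average subgroup range, and tabulated Shewhart constants (A2, D3, D4) for the subgroup size. A minimal sketch with hypothetical shaft-diameter data and function names: only three baseline subgroups are used for brevity, whereas limits are normally established from a larger stable baseline (commonly 20 or more subgroups).

```python
# Shewhart constants for subgroup sizes 2 to 5 (standard SPC tables)
A2 = {2: 1.880, 3: 1.023, 4: 0.729, 5: 0.577}
D3 = {2: 0.0, 3: 0.0, 4: 0.0, 5: 0.0}
D4 = {2: 3.267, 3: 2.574, 4: 2.282, 5: 2.114}


def xbar_r_limits(subgroups):
    """Return (LCL, centre, UCL) for the X-bar and R charts from baseline subgroups."""
    n = len(subgroups[0])
    xbars = [sum(g) / n for g in subgroups]
    ranges = [max(g) - min(g) for g in subgroups]
    xbarbar = sum(xbars) / len(xbars)
    rbar = sum(ranges) / len(ranges)
    return {
        "xbar": (xbarbar - A2[n] * rbar, xbarbar, xbarbar + A2[n] * rbar),
        "range": (D3[n] * rbar, rbar, D4[n] * rbar),
    }


def out_of_control(subgroups, limits):
    """Indices of subgroups whose mean or range falls outside the control limits."""
    x_lcl, _, x_ucl = limits["xbar"]
    r_lcl, _, r_ucl = limits["range"]
    flagged = []
    for i, g in enumerate(subgroups):
        xbar, r = sum(g) / len(g), max(g) - min(g)
        if not (x_lcl <= xbar <= x_ucl and r_lcl <= r <= r_ucl):
            flagged.append(i)
    return flagged


# Hypothetical shaft diameters (mm), subgroups of five
baseline = [
    [20.001, 19.998, 20.002, 20.000, 19.999],
    [20.000, 20.003, 19.999, 20.001, 19.998],
    [19.999, 20.001, 20.000, 20.002, 19.998],
]
limits = xbar_r_limits(baseline)
new = [[20.000, 20.001, 19.999, 20.002, 19.998],
       [20.004, 19.991, 20.009, 19.996, 20.006]]   # second subgroup is far more variable
print(out_of_control(new, limits))
```

The second new subgroup has a normal mean but an excessive range, which is the kind of "increased variability" signal the R chart is designed to catch.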

Q 21. Explain your experience with data analysis and reporting in metal measurement.

Data analysis and reporting are integral parts of metal measurement. Effective data analysis reveals trends, identifies anomalies, and provides valuable insights into process performance and product quality.

Data Analysis Techniques:

- Descriptive Statistics: Calculating means, standard deviations, and ranges to summarize the measurement data.

- Statistical Process Control (SPC): Using control charts and process capability analysis to monitor and improve process performance.

- Regression Analysis: Investigating relationships between different process variables and measurement outcomes.

- Hypothesis Testing: Testing claims about the process or product based on the measurement data.

Reporting: Reports should clearly communicate the findings of the data analysis. They should include key statistics, charts, graphs, and interpretations of the results. A well-structured report will help stakeholders understand the quality of the measurements, identify areas for improvement, and make informed decisions.

Example: A comprehensive report on the dimensional accuracy of a batch of components might include histograms showing the distribution of measurements, control charts illustrating process stability, and process capability indices showing whether the process can meet the required specifications. It should also include recommendations for improvement.
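
Capability indices compare the specification width with the process spread: Pp = (USL − LSL) / 6σ, and Ppk = min(USL − μ, μ − LSL) / 3σ, which also penalises an off-centre mean. The sketch below uses the overall sample standard deviation, so it strictly yields the performance indices Pp and Ppk; Cp and Cpk use a within-subgroup sigma estimate (for example R̄/d2) instead. The function name and data are hypothetical.

```python
import statistics


def performance_indices(data, lsl, usl):
    """Overall capability (performance) indices from individual measurements."""
    mu = statistics.mean(data)
    sigma = statistics.stdev(data)                 # overall sample standard deviation
    pp = (usl - lsl) / (6 * sigma)                 # specification width vs process spread
    ppk = min(usl - mu, mu - lsl) / (3 * sigma)    # also penalises an off-centre mean
    return {"mean": mu, "stdev": sigma, "Pp": pp, "Ppk": ppk}


# Hypothetical diameters (mm) against a 10.00 +/- 0.05 mm specification
diameters = [10.01, 10.02, 9.99, 10.00, 10.03, 10.01, 10.02, 10.00, 10.01, 10.02]
print(performance_indices(diameters, 9.95, 10.05))
```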

Q 22. How do you ensure the traceability of metal measurements?

Traceability in metal measurement ensures that all measurements can be linked back to a known standard, establishing confidence in the accuracy and reliability of the data. This is crucial for quality control, regulatory compliance, and preventing costly errors. We achieve this through a robust system that includes:

- Calibration Certificates: All measuring instruments are calibrated regularly against traceable standards, with certificates documenting the calibration date, results, and the identification of the standard used. Think of it like verifying a ruler against a master ruler to ensure its accuracy.

- Chain of Custody: We maintain meticulous records detailing the instrument’s use, any adjustments made, and the measurements taken. This creates an unbroken chain of evidence linking the measurements to the original standard. For example, we would meticulously log which gauge was used to measure a specific batch of steel, noting the gauge’s calibration date.

- Standard Operating Procedures (SOPs): Following standardized procedures ensures consistency and reduces human error. These SOPs outline how instruments are used, maintained, and calibrated.

- Accreditation: Seeking accreditation to ISO/IEC 17025 (the standard for the competence of testing and calibration laboratories) from a recognized accreditation body demonstrates our commitment to traceability and provides independent verification of our processes.

In essence, traceability provides the audit trail needed to validate our measurements and build trust in the integrity of our data. It’s the foundation of accurate and reliable metal measurement.

Q 23. Describe your experience with calibration procedures for measurement instruments.

Calibration is the cornerstone of accurate metal measurement. My experience encompasses a wide range of instruments, including micrometers, calipers, optical comparators, coordinate measuring machines (CMMs), and surface roughness testers. The process generally involves:

- Instrument Selection: Choosing the appropriate instrument based on the required accuracy and the nature of the measurement (e.g., using a CMM for complex geometries, a micrometer for precise linear dimensions).

- Reference Standards: Using certified reference standards that are traceable to national or international standards. For example, we might use certified gauge blocks to calibrate a micrometer.

- Calibration Procedure: Following manufacturer instructions and established SOPs to calibrate the instrument. This often involves making measurements on the reference standard and adjusting the instrument as needed.

- Documentation: Meticulously recording the calibration data, including date, instrument details, reference standards used, and measurement results. This is crucial for traceability.

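- Frequency: Calibrating instruments at intervals set by their usage, stability, and required accuracy. Heavily used or high-precision instruments may need daily verification checks (for example, a zero check or a check against a gauge block) between formal calibrations, while others may need only annual calibration.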
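
A basic micrometer calibration check compares readings on a series of gauge blocks with their certified lengths and flags any error larger than the instrument's maximum permissible error (MPE). A minimal sketch, assuming hypothetical readings, a hypothetical 0.002 mm MPE, and a hypothetical function name; a full calibration would also use each block's certified deviation from nominal and account for measurement uncertainty.

```python
def calibration_check(readings_by_block, mpe_mm):
    """Compare instrument readings with certified gauge-block values.

    readings_by_block -- {certified block length (mm): instrument reading (mm)}
    mpe_mm            -- maximum permissible error accepted for the instrument (mm)
    Returns (block, error, within_mpe) tuples sorted by block length.
    """
    results = []
    for block, reading in sorted(readings_by_block.items()):
        error = round(reading - block, 6)   # round away binary floating-point noise
        results.append((block, error, abs(error) <= mpe_mm))
    return results


# Hypothetical readings from a 0-25 mm micrometer
readings = {2.5: 2.501, 5.1: 5.100, 10.3: 10.302, 15.0: 15.001, 20.2: 20.203, 25.0: 25.001}
for block, error, ok in calibration_check(readings, 0.002):
    print(f"{block:6.1f} mm  error {error * 1000:+.0f} µm  {'OK' if ok else 'OUT OF TOLERANCE'}")
```

Recording these per-block errors with the date and the block set's identification provides the calibration documentation described above.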

I’ve experienced scenarios where miscalibration led to significant errors in production, highlighting the critical importance of adhering to strict calibration schedules and procedures.

Q 24. What are the safety precautions you take while performing metal measurements?

Safety is paramount in any metal measurement operation. My safety practices include:

- Personal Protective Equipment (PPE): Consistently wearing appropriate PPE, such as safety glasses, gloves, and sometimes hearing protection, depending on the machinery and materials involved. For example, safety glasses are typically mandatory when measuring parts near operating machine tools.

- Safe Handling of Materials: Properly handling metal samples to prevent cuts, abrasions, or other injuries. This includes using appropriate lifting techniques and avoiding contact with sharp edges.

- Machine Safety: Following manufacturer’s instructions when operating measuring instruments and equipment. This includes understanding and using safety interlocks and emergency stop mechanisms. CMMs, for instance, have specific safety protocols that need strict adherence.

- Work Area Safety: Maintaining a clean and organized work area to minimize trip hazards and prevent accidents. This includes proper disposal of any waste material.

- Awareness of Hazards: Being aware of potential hazards, such as sharp edges, hot surfaces, and moving parts. For instance, when measuring parts that have just been machined, I would ensure they have cooled down sufficiently.

A safe working environment not only protects individuals but also ensures the reliability of measurement data by preventing damage to instruments or samples.

Q 25. How do you select the appropriate measurement technique for a specific application?

Selecting the right measurement technique depends on several factors: the type of metal, the required accuracy, the geometry of the part, and the available resources. My approach involves:

- Understanding the Material: Different metals have different properties that affect the choice of technique. For example, a thin, soft aluminum foil can deform under a micrometer's measuring force, so its thickness may call for a low-force or non-contact method, whereas the diameter of a large steel rod can be measured with a standard contact micrometer.

- Accuracy Requirements: The required accuracy dictates the precision of the measurement instrument and technique. High-precision applications may require techniques like CMM measurements, while less demanding applications might use simpler methods like calipers.

- Part Geometry: Complex geometries may necessitate techniques like 3D scanning or CMM measurements, while simpler shapes can be measured with more basic tools.

- Available Resources: The availability of appropriate equipment, software, and expertise also plays a role. Using a CMM is only feasible if a suitable machine is available and operators are trained.

For instance, I would use a micrometer for precise linear dimensions of a cylindrical part, but a CMM for measuring the complex contours of a die-casting.
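The accuracy requirement is often applied with a rule of thumb: the instrument's measurement uncertainty should be no more than about one tenth of the part tolerance (the "10:1 rule"), although some quality systems accept a 4:1 ratio. The sketch below picks the simplest adequate instrument from a list. The instrument names, uncertainty figures, and effort rankings are hypothetical placeholders, not vendor specifications, and the sketch models only the accuracy factor; geometry and available resources still need judgment.

```python
from typing import NamedTuple, Optional


class Instrument(NamedTuple):
    name: str
    uncertainty_mm: float  # hypothetical measurement uncertainty
    effort: int            # hypothetical ranking: 1 = quickest/simplest to use


# Hypothetical catalogue; replace with your lab's real instrument data
INSTRUMENTS = [
    Instrument("CMM", 0.002, 3),
    Instrument("vernier caliper", 0.02, 1),
    Instrument("outside micrometer", 0.003, 2),
]


def select_instrument(tolerance_mm: float, ratio: float = 10.0,
                      instruments=INSTRUMENTS) -> Optional[Instrument]:
    """Simplest instrument whose uncertainty is at most tolerance_mm / ratio.

    Returns None when nothing in the list is accurate enough.
    """
    limit = tolerance_mm / ratio
    adequate = [i for i in instruments if i.uncertainty_mm <= limit]
    return min(adequate, key=lambda i: i.effort) if adequate else None


if __name__ == "__main__":
    for tol in (0.5, 0.05, 0.01):
        choice = select_instrument(tol)
        print(tol, choice.name if choice else "no adequate instrument")
```

With these assumed figures, a 0.5 mm tolerance is covered by the caliper, a 0.05 mm tolerance calls for the micrometer, and a 0.01 mm tolerance exceeds everything in the list at 10:1, which is the point where a better instrument or a relaxed ratio has to be discussed.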

Q 26. Describe your experience with different types of metal alloys and their properties.

My experience encompasses a wide array of metal alloys, including:

- Steel Alloys: From low-carbon steel to high-strength, low-alloy steels and stainless steels, each with varying mechanical properties like tensile strength, yield strength, and hardness. Understanding these variations is crucial for selecting appropriate measurement techniques and interpreting results.

- Aluminum Alloys: Various aluminum alloys that differ in strength, corrosion resistance, and machinability. These differences influence how they're measured and the precautions needed to avoid damaging relatively soft parts.

- Copper Alloys: Brass, bronze, and other copper alloys, with properties suited to different applications. Their properties affect their dimensional stability and how they respond to measurement processes.

- Titanium Alloys: High-strength, lightweight titanium alloys used in demanding applications. Their measurement requires specialized techniques and careful handling due to their high value.

Understanding the properties of each alloy is critical for ensuring the accuracy and reliability of measurements and for interpreting the results in context. For example, aluminum's high coefficient of thermal expansion (roughly twice that of steel), combined with its high thermal conductivity, which lets a part warm quickly when handled, makes careful control of temperature effects necessary during measurement.
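To put numbers on the temperature effect, the linear expansion relation ΔL = α · L · (T − 20 °C) can be evaluated directly, where 20 °C is the standard reference temperature for dimensional measurement (ISO 1). The sketch below assumes typical handbook coefficients of about 23 × 10⁻⁶ /°C for aluminum and 11.5 × 10⁻⁶ /°C for carbon steel; actual values depend on the alloy, so treat them as placeholders.

```python
# Approximate linear expansion coefficients (1/°C); real values vary by alloy
ALPHA_PER_C = {
    "aluminum": 23e-6,
    "carbon steel": 11.5e-6,
}

REFERENCE_TEMP_C = 20.0  # ISO 1 reference temperature for dimensional measurement


def thermal_growth_mm(length_mm: float, material: str, temp_c: float) -> float:
    """Change in length of a part sized at 20 °C when it is at temp_c."""
    return ALPHA_PER_C[material] * length_mm * (temp_c - REFERENCE_TEMP_C)


def length_at_reference_mm(measured_mm: float, material: str, temp_c: float) -> float:
    """Estimate the 20 °C length of a part measured while at temp_c.

    Assumes the instrument's own scale is at 20 °C; full compensation would
    also correct for the expansion of the instrument or CMM scales.
    """
    return measured_mm / (1 + ALPHA_PER_C[material] * (temp_c - REFERENCE_TEMP_C))


if __name__ == "__main__":
    for material in ALPHA_PER_C:
        growth_um = thermal_growth_mm(100.0, material, 25.0) * 1000
        print(f"{material}: {growth_um:.2f} um growth for 100 mm at 25 C")
```

With these assumed coefficients, a 100 mm aluminum part measured 5 °C above the reference temperature reads about 11.5 µm long, versus about 5.75 µm for steel, which can consume a large share of a tight tolerance.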

Q 27. Explain your understanding of the relationship between material properties and measurement techniques.

Material properties and measurement techniques are intimately linked. The choice of measurement technique is heavily influenced by the material’s properties. For example:

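- Hardness: The expected hardness determines the test method and scale. Hardened steels are typically tested with a diamond indenter (for example, Rockwell C or Vickers), while softer or coarse-grained metals such as castings are often tested with a ball indenter (for example, Brinell or Rockwell B). Soft materials are also more easily marked by contact probes during dimensional measurement.

- Elasticity: Thin-walled parts and low-stiffness materials can deflect under the measuring force of contact instruments. To minimize deformation and ensure accurate readings, we might use non-contact methods such as optical techniques.

- Thermal Expansion: Dimensions change with temperature in proportion to the material's coefficient of thermal expansion, so measurements are normally referred to the standard reference temperature of 20 °C. Temperature control and compensation techniques are essential for materials with high expansion coefficients, such as aluminum.

- Surface Finish: Surface roughness influences the choice of measuring equipment; very rough surfaces can scatter contact-probe readings, and highly reflective surfaces can be difficult for some optical sensors. Surface roughness testers (profilometers) characterize the surface texture itself, which helps in selecting a suitable measurement method.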

A thorough understanding of material properties is vital for selecting appropriate techniques and interpreting results accurately. Ignoring these relationships can lead to significant measurement errors and misinterpretations.
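The surface-finish point can be made concrete with the two most common roughness parameters: Ra, the arithmetic mean deviation of the profile from its mean line, and Rz, the sum of the highest peak and the deepest valley within a sampling length. This is a simplified sketch, assuming raw height samples over a single sampling length; real profilometers apply form removal and cutoff filtering, and typically report Rz as an average over several sampling lengths. The profile values are hypothetical.

```python
def roughness_ra_rz(heights_um):
    """Return (Ra, Rz) in µm for profile heights sampled over one sampling length.

    Simplified sketch: the mean line is the arithmetic mean of the samples and
    no form removal or cutoff filtering is applied.
    """
    if not heights_um:
        raise ValueError("profile must contain at least one point")
    mean_line = sum(heights_um) / len(heights_um)
    deviations = [z - mean_line for z in heights_um]
    ra = sum(abs(d) for d in deviations) / len(deviations)  # arithmetic mean deviation
    rz = max(deviations) - min(deviations)  # highest peak plus deepest valley
    return ra, rz


if __name__ == "__main__":
    profile = [0.4, -0.2, 0.6, -0.5, 0.1, -0.4]  # hypothetical heights in µm
    ra, rz = roughness_ra_rz(profile)
    print(f"Ra = {ra:.2f} um, Rz = {rz:.2f} um")
```

For this made-up profile, Ra is about 0.37 µm and Rz is 1.1 µm; because Rz is driven by the extremes, a single scratch or burr affects it far more than it affects Ra.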

Q 28. How would you troubleshoot a problem with an inaccurate measurement?

Troubleshooting inaccurate measurements involves a systematic approach:

- Repeat the Measurement: The first step is to repeat the measurement several times to ensure the initial reading wasn’t a fluke. If the error persists, proceed to further investigation.

- Check the Instrument: Verify the instrument’s calibration status and ensure it’s functioning correctly. Compare readings with a known standard or another calibrated instrument.

- Assess the Technique: Review the measurement procedure to ensure proper technique. Were the correct settings, fixturing, and measuring force used? Small errors in technique can lead to significant inaccuracy.

- Inspect the Sample: Carefully examine the sample for any defects or irregularities that could be influencing the measurement. For example, surface imperfections could lead to errors in dimensional measurements.

- Consider Environmental Factors: Temperature, humidity, and vibrations can all affect measurements. Were these factors taken into account? It’s crucial to work within a controlled environment where possible.

- Investigate Systematic Errors: Check for sources of systematic errors, such as instrument drift, zero error, or parallax errors. Careful analysis can identify these errors and their effect on the measurements.

Documenting all steps and findings meticulously is crucial. This information aids in future troubleshooting and improves the overall quality control process. The goal is to identify the root cause of the inaccuracy and implement corrective actions to avoid future errors.
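The "repeat the measurement" and "investigate systematic errors" steps can be combined by measuring a known reference several times and separating the result into bias (mean reading minus reference value) and spread (scatter of the readings). A large bias with small spread points toward a systematic cause such as zero error, drift, or calibration; a large spread points toward technique, fixturing, or environmental effects. This is a minimal sketch; the readings and both limits are hypothetical.

```python
import statistics


def diagnose(readings, reference, bias_limit, spread_limit):
    """Split repeat readings of a known reference into systematic and random error.

    bias   = mean reading - reference value (points to zero error, drift, calibration)
    spread = sample standard deviation (points to technique, fixturing, environment)
    """
    if len(readings) < 2:
        raise ValueError("need at least two repeat readings")
    bias = statistics.mean(readings) - reference
    spread = statistics.stdev(readings)
    return {
        "bias": bias,
        "spread": spread,
        "bias_suspect": abs(bias) > bias_limit,
        "spread_suspect": spread > spread_limit,
    }


if __name__ == "__main__":
    # Hypothetical: five readings of a 25 mm gauge block, limits of 0.002 mm
    result = diagnose([25.004, 25.005, 25.004, 25.006, 25.005], 25.0, 0.002, 0.002)
    print(result)
```

In this made-up case the readings agree closely with one another (spread under 0.001 mm) but sit about 0.005 mm high, so checking the instrument's zero and calibration would come before revisiting technique.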

Key Topics to Learn for Metal Measurement Interview

- Dimensional Metrology: Understanding common measuring instruments such as calipers, micrometers, and coordinate measuring machines (CMMs). Practical application: Accurately measuring the dimensions of machined parts to ensure they meet specifications.

- Material Properties & Testing: Knowledge of tensile strength, yield strength, hardness, and other relevant material properties. Practical application: Selecting appropriate materials based on required strength and durability, interpreting test results.

- Surface Finish Measurement: Familiarity with surface roughness parameters (Ra, Rz) and their measurement methods. Practical application: Ensuring surface quality meets design requirements for functionality and aesthetics.

- Statistical Process Control (SPC): Understanding control charts and their use in monitoring and improving measurement processes. Practical application: Identifying and addressing sources of variation in measurement data.

- Measurement Uncertainty & Error Analysis: Understanding sources of error and methods for minimizing them. Practical application: Evaluating the reliability and accuracy of measurement results.

- Calibration & Traceability: Knowledge of calibration procedures and the importance of traceable measurement standards. Practical application: Ensuring the accuracy and reliability of measurement equipment.

- Specific Measurement Techniques (depending on the role): This could include techniques like optical metrology, X-ray inspection, or other specialized methods. Explore the specific requirements of the job description for relevant techniques.
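For the SPC topic, a common starting point is the X-bar and R control chart, which tracks subgroup means and ranges against limits derived from the data. The sketch below uses the standard tabulated constants for subgroups of five (A2 = 0.577, D3 = 0, D4 = 2.114). The shaft-diameter data are made up, and in practice limits are usually established from 20 or more in-control subgroups rather than the three shown here.

```python
import statistics

# Tabulated X-bar/R chart constants for subgroup size n = 5
A2, D3, D4 = 0.577, 0.0, 2.114
SUBGROUP_SIZE = 5


def xbar_r_limits(subgroups):
    """Return (LCL, center line, UCL) for the X-bar chart and the R chart."""
    for g in subgroups:
        if len(g) != SUBGROUP_SIZE:
            raise ValueError("the constants above assume subgroups of exactly 5")
    means = [statistics.mean(g) for g in subgroups]
    ranges = [max(g) - min(g) for g in subgroups]
    xbarbar = statistics.mean(means)  # grand mean
    rbar = statistics.mean(ranges)    # average range
    return {
        "xbar": (xbarbar - A2 * rbar, xbarbar, xbarbar + A2 * rbar),
        "range": (D3 * rbar, rbar, D4 * rbar),
    }


def out_of_control(subgroups, limits):
    """Indices of subgroups whose mean or range falls outside the control limits."""
    lcl_x, _, ucl_x = limits["xbar"]
    lcl_r, _, ucl_r = limits["range"]
    flagged = []
    for i, g in enumerate(subgroups):
        m, r = statistics.mean(g), max(g) - min(g)
        if not (lcl_x <= m <= ucl_x and lcl_r <= r <= ucl_r):
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    # Hypothetical shaft diameters (mm), three subgroups of five
    data = [
        [10.01, 10.02, 9.99, 10.00, 10.03],
        [10.00, 9.98, 10.01, 10.02, 9.99],
        [10.02, 10.00, 10.01, 9.99, 10.00],
    ]
    limits = xbar_r_limits(data)
    print(limits)
    print(out_of_control(data, limits))
```

New subgroups are then checked against the stored limits; a subgroup flagged by `out_of_control` is a signal to look for an assignable cause in the process or in the measurement system.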

Next Steps

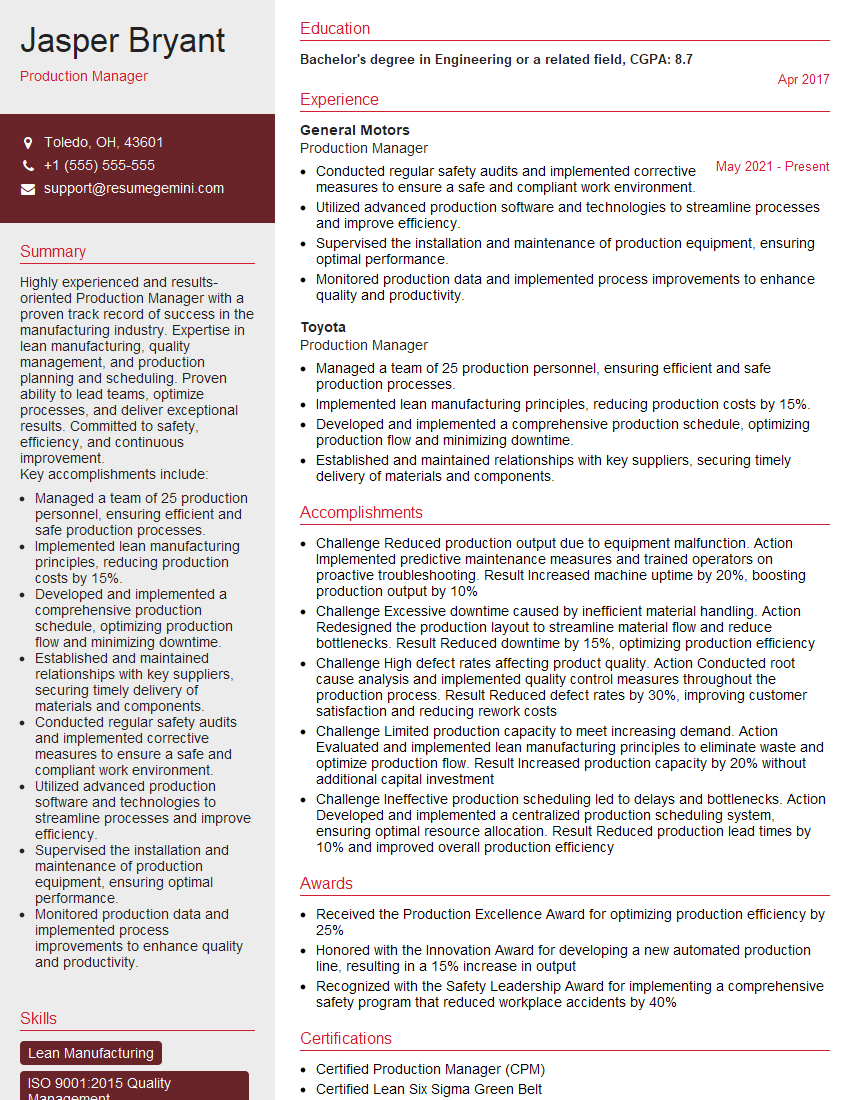

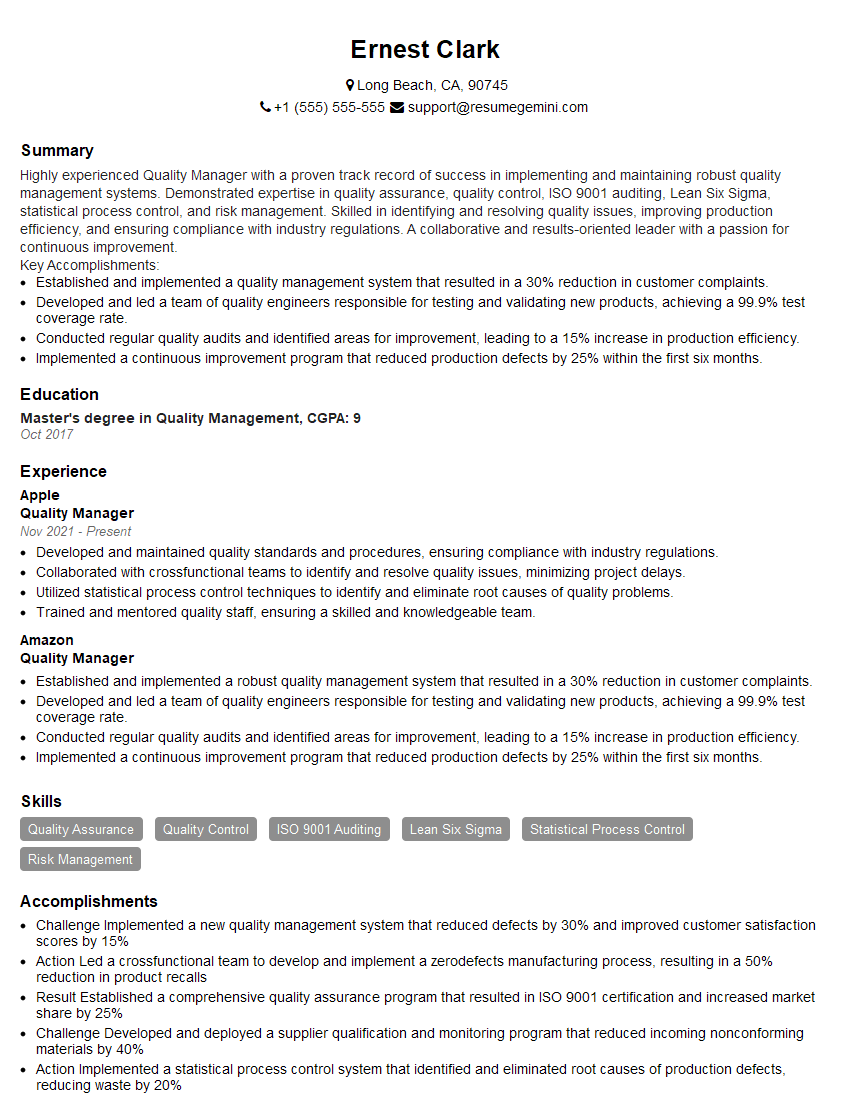

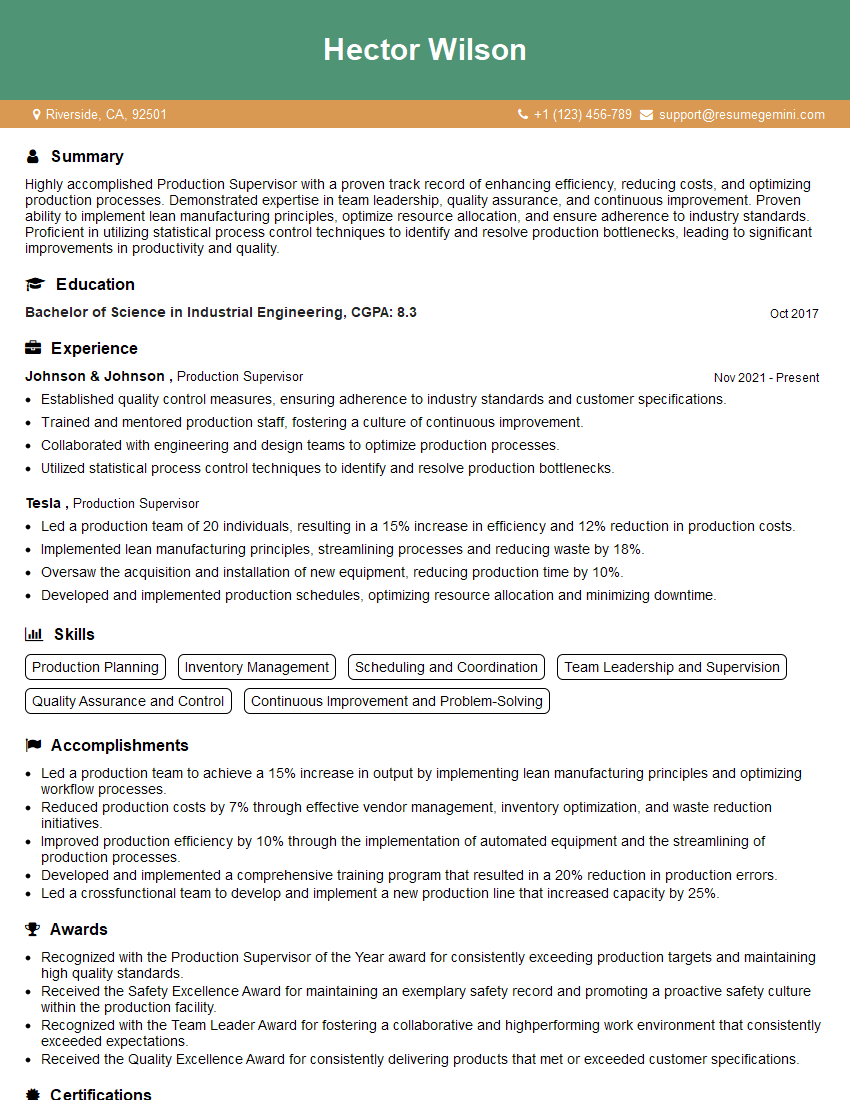

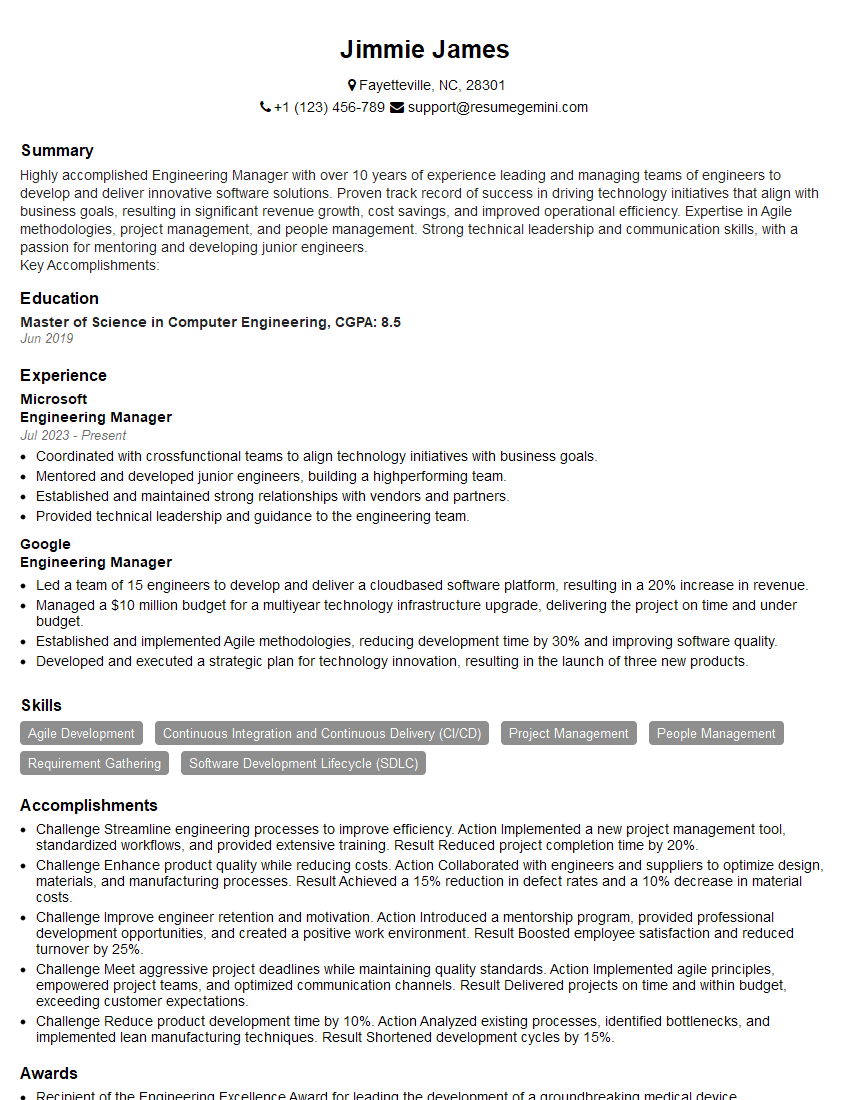

Mastering Metal Measurement is crucial for advancing your career in manufacturing, engineering, and quality control. A strong understanding of these principles opens doors to higher-paying roles and increased responsibility. To maximize your job prospects, it’s essential to create a resume that effectively showcases your skills and experience to Applicant Tracking Systems (ATS). ResumeGemini is a trusted resource to help you build a professional and ATS-friendly resume that highlights your expertise in Metal Measurement. Examples of resumes tailored to this field are available to guide you. Take the next step towards your dream career today!
