Every successful interview starts with knowing what to expect. In this blog, we’ll take you through the top Network Simulation and Modeling Tools interview questions, breaking them down with expert tips to help you deliver impactful answers. Step into your next interview fully prepared and ready to succeed.

Questions Asked in Network Simulation and Modeling Tools Interview

Q 1. Explain the difference between discrete-event and continuous-time simulation.

Discrete-event simulation and continuous-time simulation are two fundamentally different approaches to modeling systems over time. The key difference lies in how they handle time.

Discrete-event simulation focuses on significant events that cause changes in the system’s state. Time is advanced directly from one event to the next. Because the state does not change between events, nothing needs to be computed in between. Think of it like watching a highlight reel of a game: you only see the important plays, not every second of the game. It’s ideal for systems whose state changes at distinct instants, such as network packet transmissions or customer arrivals at a bank, whether those events are rare or very frequent.

Continuous-time simulation, on the other hand, models the system continuously over time. The system’s state evolves as continuous functions of time. These are typically described by differential equations that the simulator integrates numerically in small time steps. Think of it like watching a live video of the game: you see every second of action. It’s more suitable for systems where changes occur smoothly and continuously, such as fluid dynamics or the spread of a disease.

Example: Imagine simulating network traffic. In a discrete-event simulation, you’d model each packet arrival and departure as individual events. In a continuous-time simulation, you might model the overall network load as a continuous function changing over time.
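To make the discrete-event side concrete, here is a minimal event loop in Python. It is a sketch for illustration, not how NS-3 or OMNeT++ implement their schedulers. Events sit in a priority queue ordered by timestamp, and the clock jumps straight to the next event. The packet arrival times and the fixed transmission time are illustrative assumptions.

```python
import heapq
from collections import deque

def simulate_link(arrival_times, tx_time):
    """Discrete-event simulation of packets sharing one FIFO link.

    Returns a list of (packet_id, departure_time). The clock jumps from
    event to event; nothing is computed between events.
    """
    events = []  # min-heap of (time, seq, kind, packet_id)
    seq = 0      # tie-breaker so simultaneous events keep insertion order
    for pid, t in enumerate(arrival_times):
        heapq.heappush(events, (t, seq, "arrival", pid))
        seq += 1

    waiting = deque()   # packets queued behind the one being transmitted
    link_busy = False
    departures = []

    while events:
        now, _, kind, pid = heapq.heappop(events)  # advance clock to next event
        if kind == "arrival":
            if link_busy:
                waiting.append(pid)
            else:
                link_busy = True
                heapq.heappush(events, (now + tx_time, seq, "departure", pid))
                seq += 1
        else:  # departure: packet pid has finished transmission
            departures.append((pid, now))
            if waiting:
                nxt = waiting.popleft()
                heapq.heappush(events, (now + tx_time, seq, "departure", nxt))
                seq += 1
            else:
                link_busy = False
    return departures

# Times in milliseconds: the second packet arrives while the link is busy.
print(simulate_link([0.0, 0.5, 5.0], tx_time=1.0))
# -> [(0, 1.0), (1, 2.0), (2, 6.0)]
```

Only six events are processed here, however long the idle gap between 2 ms and 5 ms is. That is the property that makes discrete-event simulation efficient for packet networks.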

Q 2. Describe your experience with NS-3 or OMNeT++.

I have extensive experience with both NS-3 and OMNeT++, having utilized them for various research and professional projects. NS-3 is a particularly powerful tool for detailed modeling of networking protocols and performance analysis. I’ve used its C++ API extensively to create custom modules and protocols, for instance, building a novel congestion control algorithm and evaluating its impact on network throughput under different traffic conditions. A key project involved simulating a large-scale Software Defined Networking (SDN) environment with NS-3, analyzing the performance of various SDN controllers.

OMNeT++ is more general-purpose, and its modeling workflow is a distinct advantage: topologies are described in its NED language, which the OMNeT++ IDE can also display and edit graphically. I’ve leveraged this for simulating complex wireless networks, focusing on aspects like mobility models and interference management. One project used OMNeT++ to model a vehicular ad-hoc network (VANET), testing communication efficiency under varying vehicular densities and speeds. Both simulators have distinct strengths, and the choice between them usually depends on the specifics of the project requirements.

Q 3. How would you model a specific network protocol (e.g., TCP, UDP) in a simulator?

Modeling network protocols like TCP and UDP in a simulator involves creating modules that accurately represent the protocol’s behavior. This usually entails defining message structures, state machines, and algorithms. Let’s take TCP as an example.

To model TCP in NS-3 or OMNeT++, you’d create a module that implements the TCP state machine (CLOSED, LISTEN, SYN_SENT, ESTABLISHED, etc.), including functionalities like:

- Connection establishment: Handling the three-way handshake (SYN, SYN-ACK, ACK).

- Data transmission: Managing the sliding window protocol, acknowledging received data.

- Congestion control: Implementing algorithms like TCP Reno or Cubic to adjust the transmission rate based on network congestion.

- Retransmission: Handling packet loss and retransmitting lost segments.

You would model UDP similarly, but it’s much simpler because it has none of these features. The code would focus on creating datagrams, encapsulating payloads, and sending them. There is no connection setup, acknowledgment, retransmission, or congestion control; UDP provides only a checksum for error detection. The choice of simulator influences the specific coding style and available libraries, but the core concepts remain the same.

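```cpp
// Example (conceptual NS-3 code snippet):
// This is a simplified illustration and lacks many details.
#include "ns3/application.h"

class TcpModule : public ns3::Application
{
public:
  TcpModule();
  ~TcpModule() override;

private:
  // ns3::Application calls these hooks at the configured start/stop times.
  void StartApplication() override;
  void StopApplication() override;

  // ns3::Ptr ... (remaining members are truncated in the original snippet)
};
```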
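The congestion-control bullet can be illustrated with a simplified Reno-style window update in Python. This is a sketch under stated assumptions:

- The window is counted in whole segments and updated once per round-trip time.
- Loss is signalled by a per-RTT flag and treated like a triple-duplicate-ACK event.
- Timeouts, fast-recovery details, and CUBIC’s cubic growth function are omitted.

```python
def reno_cwnd_trace(loss_rounds, rounds, ssthresh=16, cwnd=1):
    """Return the congestion window (in segments) at the start of each RTT.

    Simplified Reno-style behaviour:
      - slow start: cwnd doubles each RTT while cwnd < ssthresh
        (capped at ssthresh for simplicity)
      - congestion avoidance: cwnd grows by 1 segment per RTT
      - loss (triple duplicate ACK): ssthresh = cwnd // 2, cwnd = ssthresh
    """
    trace = []
    for rtt in range(rounds):
        trace.append(cwnd)
        if rtt in loss_rounds:
            ssthresh = max(cwnd // 2, 2)    # multiplicative decrease
            cwnd = ssthresh
        elif cwnd < ssthresh:
            cwnd = min(cwnd * 2, ssthresh)  # slow start
        else:
            cwnd += 1                       # additive increase
    return trace

print(reno_cwnd_trace(loss_rounds={7}, rounds=10))
# -> [1, 2, 4, 8, 16, 17, 18, 19, 9, 10]
```

The sawtooth after the loss in round 7 is the additive-increase, multiplicative-decrease pattern that a full TCP model in NS-3 or OMNeT++ reproduces with much more detail.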

Q 4. What are the advantages and disadvantages of using network simulation tools?

Network simulation tools offer significant advantages, but also have limitations.

Advantages:

- Cost-effective testing: Simulators allow for experimentation in a controlled environment, saving the cost and risk associated with testing in real-world networks.

- Reproducibility: A simulation rerun with the same inputs and random seeds produces identical results. Real-world tests, by contrast, are affected by many uncontrollable factors.

- Scalability: Large and complex networks can be simulated at a fraction of the cost of building them, which is difficult to achieve in a physical testbed. The practical limit is available computing resources.

- Control and analysis: Simulators expose internal network state, such as queue occupancy and per-packet timestamps. This lets researchers analyze behavior in detail and collect statistics easily.

Disadvantages:

- Model accuracy: The accuracy of the simulation depends heavily on the accuracy of the models used, which are always simplifications of reality.

- Complexity: Developing accurate and detailed simulations can be complex and time-consuming.

- Validation challenges: Validating simulation results against real-world data can be difficult.

- Computational resources: Large-scale simulations can require significant computational resources.

Q 5. How do you validate the results of a network simulation?

Validating simulation results is crucial to ensure their reliability. This involves comparing the simulation’s output against real-world data or analytical models. Several methods can be used:

- Comparison with real-world data: Collect data from a real network with similar characteristics to the one being simulated. Then, compare key performance indicators (KPIs) like throughput, latency, and packet loss rate.

- Analytical model comparison: If an analytical model exists for the network or a specific aspect of it, use this as a benchmark for the simulation results. Discrepancies would highlight potential issues in the simulation model.

- Sensitivity analysis: Perform a sensitivity analysis to assess how changes in simulation parameters affect the results. This helps identify potential biases or weaknesses in the model.

- Code verification: Employ software testing methods to verify the correctness of the simulation code itself, ensuring that the implementation accurately reflects the intended model.

The validation process is iterative. If discrepancies exist between the simulation and the benchmark, investigate possible causes, refine the model, and repeat the validation process.
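As a sketch of analytical-model comparison, the Python below simulates an M/M/1 queue: a single FIFO queue with Poisson arrivals and exponential service. It uses the Lindley recursion, then checks the simulated mean time in system against the closed-form result W = 1/(μ − λ). The rates, sample size, and 5% tolerance are illustrative choices, not recommended values.

```python
import random

def simulate_mm1_mean_sojourn(lam, mu, n_customers, seed):
    """Mean time in system for an M/M/1 queue via the Lindley recursion."""
    rng = random.Random(seed)
    wait = 0.0            # queueing delay of the current customer
    total_sojourn = 0.0
    for _ in range(n_customers):
        service = rng.expovariate(mu)
        total_sojourn += wait + service
        interarrival = rng.expovariate(lam)
        # Lindley: W_{n+1} = max(0, W_n + S_n - A_{n+1})
        wait = max(0.0, wait + service - interarrival)
    return total_sojourn / n_customers

lam, mu = 5.0, 10.0                  # arrivals/s and services/s (rho = 0.5)
analytical = 1.0 / (mu - lam)        # M/M/1 mean time in system: 0.2 s
simulated = simulate_mm1_mean_sojourn(lam, mu, n_customers=200_000, seed=1)
rel_error = abs(simulated - analytical) / analytical
print(f"simulated={simulated:.4f}s analytical={analytical:.4f}s error={rel_error:.2%}")
if rel_error > 0.05:
    print("Discrepancy above 5%: inspect the model before trusting results")
```

If the error stays large even with long runs, that points to a modeling or implementation problem rather than statistical noise.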

Q 6. Explain different types of network topologies and how to model them.

Network topologies describe the physical or logical layout of a network. Common types include:

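- Bus topology: All devices connect to a single shared cable. Simple, but the cable is a single point of failure and all devices contend for the same medium.

- Star topology: All devices connect to a central hub or switch. Easy to manage, and a failed cable affects only one device, although the central device is itself a single point of failure.

- Ring topology: Devices are connected in a closed loop. Data typically travels in one direction; dual-ring designs add a second path in the opposite direction for resilience.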

- Mesh topology: Multiple connections between devices provide redundancy and resilience.

- Tree topology: A hierarchical structure resembling an inverted tree.

Modeling topologies in simulators usually involves defining nodes (devices) and links (connections) between them.

- In NS-3, you might use ns3::NodeContainer to create the nodes and a helper such as ns3::PointToPointHelper to install links between them. The helper returns the created network devices in an ns3::NetDeviceContainer.
- In OMNeT++, topologies are written in the NED language, which the IDE can also render and edit as a diagram of nodes and connections.

For more complex topologies, you might use scripts to generate the network from a predefined topology file.

Example (Conceptual NS-3):

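```cpp
#include "ns3/core-module.h"
#include "ns3/network-module.h"
#include "ns3/point-to-point-module.h"

int
main()
{
  // Create nodes
  ns3::NodeContainer nodes;
  nodes.Create (5); // Create 5 nodes

  // Create links (requires point-to-point channel creation)
  ns3::PointToPointHelper pointToPoint;
  //....

  ns3::Simulator::Run ();
  ns3::Simulator::Destroy ();
  return 0;
}
```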
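For script-generated topologies, one approach is to build the topology as an edge list first. Each pair is then handed to the simulator’s link helper, for example one point-to-point link per edge in NS-3. The Python sketch below generates edge lists for the star, ring, full-mesh, and tree layouts described earlier. The function names are illustrative.

The bus topology is left out because a shared medium is usually modeled as a single channel (e.g., a CSMA channel in NS-3) rather than as pairwise links.

```python
from itertools import combinations

def star(n):
    """Node 0 is the hub; every other node links to it."""
    return [(0, i) for i in range(1, n)]

def ring(n):
    """Each node links to its successor; the last node closes the loop."""
    return [(i, (i + 1) % n) for i in range(n)]

def full_mesh(n):
    """Every pair of nodes is directly connected: n*(n-1)/2 links."""
    return list(combinations(range(n), 2))

def tree(n, fanout=2):
    """Node i's parent is (i - 1) // fanout, giving a balanced tree."""
    return [((i - 1) // fanout, i) for i in range(1, n)]

for name, edges in [("star", star(5)), ("ring", ring(5)),
                    ("mesh", full_mesh(5)), ("tree", tree(5))]:
    print(f"{name}: {len(edges)} links -> {edges}")
```

The link counts make the cost of redundancy visible: a full mesh of n nodes needs n(n−1)/2 links, while a star or tree needs only n−1.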

Q 7. How do you handle large-scale network simulations?

Handling large-scale network simulations presents computational challenges. Strategies to address this include:

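- Parallel and distributed simulation: Divide the simulation into smaller parts that can be executed in parallel across multiple processors or machines. NS-3, for example, supports MPI-based distributed simulation, and OMNeT++ has its own parallel simulation support.

- Efficient data structures: Using data structures that minimize memory usage and computation time is critical for larger simulations. Examples include choosing an efficient event-queue implementation (NS-3 lets you select among several scheduler types, such as map, heap, and calendar queues) and using sparse matrices for large connectivity or routing tables.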

- Abstraction and simplification: Focus on simulating only the relevant parts of the network at a desired level of detail. High-level models reduce computational burden.

- Smart sampling and analysis: Avoid collecting unnecessary data by strategically selecting points for measurement. This reduces I/O overhead.

- Cloud computing: Leverage cloud-based resources for the massive computational power needed for extremely large simulations.

The best approach often depends on available resources, simulation complexity, and desired accuracy. Careful planning and optimization are key to success in managing large-scale simulations.
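One way to apply the smart-sampling point is to keep running statistics instead of logging every sample. The sketch below uses Welford’s online algorithm to maintain the mean and variance of, say, per-packet delay in constant memory. This is a standard numerical technique, not something specific to any simulator, and the class name is illustrative.

```python
class RunningStats:
    """Welford's online mean/variance: O(1) memory, numerically stable."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # sum of squared deviations from the current mean

    def add(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    @property
    def variance(self):
        """Sample variance (n - 1 denominator); 0.0 until two samples exist."""
        return self._m2 / (self.n - 1) if self.n > 1 else 0.0

stats = RunningStats()
for delay_ms in [12.0, 15.0, 11.0, 30.0, 14.0]:   # e.g. per-packet delays
    stats.add(delay_ms)
print(stats.n, stats.mean, stats.variance)
```

Only three numbers are stored regardless of how many packets are observed. The per-packet trace never has to be written to disk.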

Q 8. Discuss your experience with different network simulation metrics (e.g., throughput, latency, jitter).

Network simulation relies heavily on key performance indicators (KPIs) to evaluate the effectiveness and efficiency of a network design. Throughput, latency, and jitter are fundamental metrics.

Throughput measures the amount of data successfully delivered over a network link within a given time frame (e.g., bits per second or packets per second). Think of it like the number of cars that pass a point on a highway per hour: higher throughput means more cars (data) get through.

Latency is the delay experienced by data packets as they travel across the network, that is, the time it takes a packet to travel from source to destination. It is analogous to the travel time on a road, and high latency leads to slow responses.

Finally, jitter refers to the variation in latency over time. Imagine cars on a highway sometimes speeding up and sometimes slowing down, so their travel times vary; that inconsistency is jitter. High jitter negatively impacts real-time applications like video conferencing.
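All three metrics can be computed from per-packet records that most simulators can emit: send time, receive time, and size. The Python sketch below assumes a list of such records, which are hypothetical, for delivered packets. It reports:

- throughput over the window from the first send to the last receive;
- mean one-way latency;
- jitter, as the mean absolute difference between consecutive packets’ delays.

That jitter definition is one common choice. RTP (RFC 3550) instead uses an exponentially smoothed estimate.

```python
def link_metrics(packets):
    """Throughput, mean latency and jitter from per-packet records.

    packets: (send_time_s, recv_time_s, size_bytes) tuples for delivered
    packets, in send order; at least two packets are required.
    """
    delays = [recv - send for send, recv, _ in packets]
    window = max(r for _, r, _ in packets) - min(s for s, _, _ in packets)
    total_bits = 8 * sum(size for _, _, size in packets)

    throughput_bps = total_bits / window        # first send to last receive
    mean_latency_s = sum(delays) / len(delays)
    # Jitter here: mean absolute change in delay between consecutive packets.
    diffs = [abs(b - a) for a, b in zip(delays, delays[1:])]
    jitter_s = sum(diffs) / len(diffs)
    return throughput_bps, mean_latency_s, jitter_s

# Hypothetical trace: four 1250-byte packets sent every 10 ms.
trace = [(0.000, 0.020, 1250), (0.010, 0.032, 1250),
         (0.020, 0.040, 1250), (0.030, 0.056, 1250)]
thr, lat, jit = link_metrics(trace)
print(f"throughput={thr:.0f} bit/s latency={lat*1000:.1f} ms jitter={jit*1000:.2f} ms")
```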

In my experience, I’ve extensively used these metrics in various projects. For instance, when simulating a VoIP network, we meticulously monitored latency and jitter to ensure call quality wasn’t compromised. High latency leads to noticeable delays in conversations, while high jitter causes choppy audio and video. We adjusted network parameters (bandwidth, buffer sizes, queuing algorithms) until we achieved acceptable latency and jitter levels to meet our quality of service (QoS) requirements. Similarly, when simulating a data center network, throughput was a critical metric, where I optimized network configurations to maximize data transfer rates.

- Example: In one project, we compared the throughput of different routing protocols (OSPF vs. BGP) under varying load conditions using NS-3. OSPF showed better throughput under low load, while BGP performed better under heavy loads and demonstrated greater scalability.

Q 9. How do you choose the appropriate simulation tool for a given project?

Selecting the right network simulation tool depends on several factors: the complexity of the network being modeled, the specific metrics of interest, the budget, and the team’s expertise. There’s no one-size-fits-all solution.

For simple networks and basic performance analysis, tools like ns-2 (now largely superseded by NS-3) or even custom Python scripts might suffice. For more intricate networks with detailed protocols and features, more powerful tools are better suited. These include NS-3, OMNeT++, and proprietary options such as Cisco Packet Tracer (free through the Cisco Networking Academy, aimed at education and basic simulations) or OPNET Modeler (now Riverbed Modeler, for large-scale simulations). When choosing, consider:

- Scalability: Can the tool handle the size and complexity of your network?

- Protocol Support: Does it support the specific network protocols you need to simulate (e.g., TCP, UDP, various routing protocols)?

- Visualization Capabilities: Does it offer effective visualization tools to understand the simulation results?

- Customization: Can you extend the tool’s functionality through scripting or custom modules?

- Cost: Some tools are open-source, while others are commercial and can be quite expensive.

For example, if I need to simulate a large-scale wireless network with detailed physical layer modeling, OMNeT++ (typically with the INET framework) would be a strong contender due to its flexibility and capacity for complex scenarios. Conversely, for a quick functional check of a small LAN’s configuration, Packet Tracer would likely be sufficient, although it is not designed for detailed performance measurement.

Q 10. Describe your experience with network performance analysis tools.

Network performance analysis tools play a crucial role in interpreting simulation results and diagnosing bottlenecks. I have extensive experience with tools like Wireshark (for packet-level analysis), tcpdump (for command-line packet capturing), and various network monitoring systems (e.g., Nagios, Zabbix) that provide real-time monitoring of key metrics. These tools help visualize network traffic patterns, identify slowdowns, and pinpoint areas for improvement.

For instance, NS-3 can write pcap traces of simulated links, which Wireshark opens directly. Analyzing those packets shows whether and where packet loss is occurring. That helps identify the root cause of unexpected simulation behavior and improve the model accordingly. Timing information from the same traces can help diagnose why an application appears slow during simulation.
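Once a capture is available, a basic loss check is a gap search over sequence numbers. The sketch below makes several assumptions:

- Per-packet sequence numbers for one flow have already been extracted, for instance from a pcap trace exported with Wireshark or tshark.
- There is one sequence number per packet, as in RTP. TCP numbers bytes, not packets.
- Sequence-number wrap-around is ignored.

```python
def find_losses(received_seqs):
    """Return (missing, duplicates, reordered) for per-packet sequence numbers.

    missing    - numbers never seen between the lowest and highest received
    duplicates - numbers seen more than once
    reordered  - packets that arrived after a higher-numbered packet
    """
    seen = set()
    duplicates = []
    reordered = 0
    highest = None
    for seq in received_seqs:
        if seq in seen:
            duplicates.append(seq)
            continue
        seen.add(seq)
        if highest is not None and seq < highest:
            reordered += 1
        highest = seq if highest is None else max(highest, seq)
    missing = sorted(set(range(min(seen), max(seen) + 1)) - seen)
    return missing, duplicates, reordered

print(find_losses([1, 2, 3, 5, 4, 7, 7, 8]))
# -> ([6], [7], 1)
```

Separating reordering from true loss matters: a packet that merely arrives late should not be counted as lost.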

In one project, we used tcpdump to capture network traffic during a simulation of a high-frequency trading system. Analyzing the captured data revealed unexpected delays in specific parts of the system, which ultimately led to significant improvements in the overall system design.

Q 11. What are the common challenges encountered during network simulation?

Network simulation, while powerful, presents several challenges. One major hurdle is model accuracy; it’s crucial to strike a balance between model detail and computational tractability. Overly detailed models can become computationally expensive and time-consuming, while overly simplified models might fail to capture important aspects of network behavior.

Another challenge is validation. Ensuring the simulation results accurately reflect real-world network behavior is vital. This requires careful calibration of the simulation parameters and possibly comparison against real-world data.

Reproducibility is also an issue. A run must be repeatable exactly, so the same inputs and random seeds give the same output. Conclusions should also hold across independent replications. Random-number streams therefore need to be seeded and managed explicitly. Finally, debugging and troubleshooting can be quite challenging due to the complexity of network interactions.

For example, I once spent days troubleshooting a simulation only to find a minor error in the configuration file that was creating inconsistencies.
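Reproducibility largely comes down to controlling random-number streams. In NS-3 this is done with a global seed plus a run number (RngSeedManager::SetSeed and RngSeedManager::SetRun). The Python sketch below shows the same idea with a hypothetical toy loss model: identical seeds give identical runs, and changing the seed gives an independent replication.

```python
import random

def toy_loss_run(n_packets, loss_prob, seed):
    """Hypothetical toy model: count packets dropped by a random-loss link.

    A private random.Random instance (rather than the global module state)
    keeps each run's random stream independent and repeatable.
    """
    rng = random.Random(seed)
    return sum(1 for _ in range(n_packets) if rng.random() < loss_prob)

run_a = toy_loss_run(10_000, 0.01, seed=42)
run_b = toy_loss_run(10_000, 0.01, seed=42)
run_c = toy_loss_run(10_000, 0.01, seed=43)
print(run_a, run_b, run_c)   # run_a == run_b; run_c will usually differ
```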

Q 12. How do you troubleshoot errors and issues during network simulations?

Troubleshooting errors during network simulations involves a systematic approach. I typically start by examining the simulation logs for error messages and warnings. Then, I carefully review the simulation configuration files to ensure the parameters are correctly set.

If the problem persists, I use debugging facilities provided by the simulation tool itself, such as NS-3’s logging framework, which is enabled per component through the NS_LOG environment variable. Alternatively, I add print statements or logging calls to my code to trace the execution flow and identify the source of the error.

If the issue is related to specific network protocols, I might consult protocol specifications and relevant documentation to ensure my model correctly implements the protocol behavior. I also make use of packet analyzers like Wireshark to examine network traffic and identify anomalies. Finally, simplification of the model to isolate the issue can help significantly. This iterative approach helps effectively debug and resolve issues.

Q 13. Explain your experience with scripting languages (e.g., Python) in the context of network simulation.

Scripting languages like Python are invaluable in network simulation. I use Python extensively to automate simulation runs, process simulation results, and generate custom visualizations. Python’s ecosystem of libraries makes it well suited to post-processing simulation data: matplotlib for plotting graphs, NumPy for numerical computation, and pandas for data analysis.

For instance, I’ve created Python scripts to automatically run simulations with varying network parameters, collect the results, and generate comprehensive reports, including graphs and statistical analysis. This automation significantly speeds up the simulation process and ensures consistency across multiple runs. I’ve also used Python to customize simulation environments and integrate external data sources into simulations.
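A parameter sweep like the one described can be organized as below. `run_simulation` is a hypothetical stand-in for launching the real simulator (for example via subprocess); here it is just a toy formula. The parameter names and the Throughput column are assumptions chosen to match the CSV read by the pandas snippet in this answer.

```python
import csv
import itertools
import random

def run_simulation(num_nodes, bandwidth_mbps, seed):
    """Hypothetical stand-in for one simulator run.

    In practice this would launch the simulator (e.g. with subprocess.run)
    and parse its output; here a toy formula returns throughput in Mbit/s.
    """
    rng = random.Random(seed)
    share = bandwidth_mbps / num_nodes
    return share * rng.uniform(0.8, 1.0)

def sweep(path, nodes_values, bandwidth_values, seeds):
    """Run every parameter combination and write one CSV row per run."""
    with open(path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["Nodes", "BandwidthMbps", "Seed", "Throughput"])
        for n, bw, seed in itertools.product(nodes_values, bandwidth_values, seeds):
            writer.writerow([n, bw, seed, run_simulation(n, bw, seed)])

sweep("simulation_output.csv", nodes_values=[5, 10, 20],
      bandwidth_values=[10, 100], seeds=range(1, 6))
```

Recording the seed in every row means any single result can be reproduced later by rerunning that exact combination.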

Here’s a simple example of a Python snippet that processes simulation output data:

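```python
import pandas as pd

# Load the per-run results written by the simulation
data = pd.read_csv('simulation_output.csv')

# Average the 'Throughput' column across all rows
throughput = data['Throughput'].mean()

print(f'Average Throughput: {throughput}')
```

Q 14. Describe your experience with network visualization tools.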

Network visualization tools are essential for understanding the behavior of complex networks. I’ve worked with various visualization tools, both integrated within simulation tools and standalone applications. Some examples include tools that visualize network topologies (showing nodes and links), traffic flows (showing data packet movement), and performance metrics (such as latency and throughput) graphically.

For example, tools like Graphviz can be used to generate visual representations of network topologies. Simulation tools often include built-in visualization capabilities or interfaces for exporting data to external visualization tools. Good visualization helps communicate simulation results effectively, leading to more informed decision-making. In a recent project, I used custom Python scripts with matplotlib to create interactive visualizations of network performance metrics that were far more intuitive than default outputs.
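Graphviz reads a plain-text DOT description, so a topology held as an edge list can be exported with a few lines of Python. The sketch below writes an undirected graph and labels each link with an assumed per-link latency. The edge data are illustrative, and rendering is left to the Graphviz command-line tools.

```python
def to_dot(edges, name="topology"):
    """Render (node_a, node_b, latency_ms) tuples as an undirected DOT graph."""
    lines = [f"graph {name} {{"]
    for a, b, latency_ms in edges:
        lines.append(f'  "{a}" -- "{b}" [label="{latency_ms} ms"];')
    lines.append("}")
    return "\n".join(lines)

links = [("router", "switch1", 2), ("router", "switch2", 2),
         ("switch1", "hostA", 1), ("switch2", "hostB", 1)]
dot_text = to_dot(links)
print(dot_text)
# To render: save dot_text to topology.dot, then run
#   dot -Tpng topology.dot -o topology.png
```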

Q 15. How do you handle different network traffic patterns in simulations?

Handling diverse network traffic patterns in simulations is crucial for achieving realistic results. We achieve this by leveraging various traffic generators and models within simulation tools like NS-3, OMNeT++, or even custom scripting. These generators allow us to specify characteristics like packet size distribution, arrival rate (following patterns like Poisson, self-similar, or even custom distributions to mimic real-world scenarios), and application-level behavior (e.g., HTTP, VoIP, video streaming).

For instance, to model a web server under heavy load, we might use a Poisson process to generate HTTP requests with exponentially distributed inter-arrival times. This reflects the random, independent nature of user access. For video streaming, by contrast, we might use a constant bitrate traffic model to simulate a predictable, continuous stream of data. More complex scenarios require combining multiple traffic models, and potentially incorporating real-world traffic traces captured from network monitoring tools for high fidelity.
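The two generators described above differ only in how inter-arrival times are drawn. The Python sketch below shows both:

- The Poisson source draws exponentially distributed gaps with mean 1/rate.
- The constant-bitrate source spaces packets exactly packet_bits/bitrate apart.

Rates, sizes, and function names are illustrative assumptions. NS-3 and OMNeT++ provide their own traffic applications for this.

```python
import random

def poisson_arrivals(rate_per_s, duration_s, seed=0):
    """Arrival times of a Poisson process: exponential gaps, mean 1/rate."""
    rng = random.Random(seed)
    t, times = 0.0, []
    while True:
        t += rng.expovariate(rate_per_s)
        if t >= duration_s:
            return times
        times.append(t)

def cbr_arrivals(bitrate_bps, packet_bytes, duration_s):
    """Constant-bitrate source: one packet every packet_bits / bitrate seconds."""
    gap = packet_bytes * 8 / bitrate_bps
    times, i = [], 0
    while i * gap < duration_s:
        times.append(i * gap)
        i += 1
    return times

http_requests = poisson_arrivals(rate_per_s=50, duration_s=10)   # ~500 requests
video = cbr_arrivals(bitrate_bps=2_000_000, packet_bytes=1250, duration_s=1)
print(len(http_requests), len(video), video[:3])
```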

Furthermore, many simulators allow for the definition of custom traffic models. This is essential when dealing with less common or newly emerging traffic patterns. For example, we could create a custom model to simulate the traffic generated by a specific application or protocol that’s not inherently supported by the simulator’s built-in traffic generators.

Q 16. What are the key factors to consider when designing a network simulation experiment?

Designing a robust network simulation experiment demands careful consideration of several key factors. First, clearly define the objectives: what specific network behavior or performance metric are we trying to evaluate? This guides the entire design process. Next, determine the network topology – this could be a simple LAN or a complex WAN, potentially incorporating wireless components. The simulation parameters need careful setting. This includes the number of nodes, link bandwidth, propagation delays, and queueing mechanisms at each node. The traffic model, as discussed earlier, is vital for realism. We need to choose a model that accurately reflects the expected traffic patterns.

Equally important is the selection of performance metrics. These might include packet loss rate, delay, throughput, jitter, or CPU utilization at various network devices. The simulation duration is another critical aspect; it needs to be long enough to capture statistically significant results but not excessively long to avoid unnecessary computational time. Finally, we must define the experimental methodology. This involves determining the number of simulation runs, handling of random seeds (to ensure reproducibility), and analysis of results using statistical methods to identify confidence intervals and assess the significance of observed differences.
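The run-count and confidence-interval step can be sketched as follows. Run independent replications with distinct seeds, then compute a t-based confidence interval on the per-run means. The replication values below are made-up placeholders. The critical value 2.776 is the two-sided 95% Student-t quantile for 4 degrees of freedom (five runs); other run counts need the matching quantile from a t table.

```python
import math
import statistics

def confidence_interval(samples, t_crit):
    """Two-sided CI for the mean of independent replications.

    samples: one summary value (e.g. mean delay) per independent run.
    t_crit:  Student-t quantile for len(samples) - 1 degrees of freedom.
    """
    mean = statistics.mean(samples)
    half_width = t_crit * statistics.stdev(samples) / math.sqrt(len(samples))
    return mean - half_width, mean + half_width

# Placeholder mean delays (ms) from 5 runs, each started with a different seed.
per_run_delay_ms = [21.4, 22.1, 20.8, 21.9, 22.3]
low, high = confidence_interval(per_run_delay_ms, t_crit=2.776)  # 95%, df = 4
print(f"95% CI for mean delay: [{low:.2f}, {high:.2f}] ms")
```

If the interval is too wide to distinguish the alternatives being compared, the usual remedy is more replications or longer runs.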

Q 17. How do you interpret simulation results and draw meaningful conclusions?

Interpreting simulation results requires a structured approach. First, we visually inspect the results (graphs, charts) to identify any apparent trends or anomalies. Then, we perform statistical analysis to determine the significance of our findings. This often involves calculating confidence intervals around performance metrics to judge whether observed differences could be explained by random variation alone. For instance, comparing the average latency under two different routing protocols calls for a statistical test (e.g., a t-test) to determine whether the difference is statistically significant. The results should be presented clearly, highlighting key findings and the limitations of the study.
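For the latency comparison example, the unequal-variance (Welch) form of the t-test is a reasonable default, because the two configurations need not have the same variance. The sketch below computes the Welch t statistic and the Welch–Satterthwaite degrees of freedom using only the standard library. The per-run latencies are invented placeholders. The resulting t would be compared with a critical value from a t table; if SciPy is available, `scipy.stats.ttest_ind(a, b, equal_var=False)` computes the test and its p-value directly.

```python
import math
import statistics

def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    va = statistics.variance(a) / len(a)   # squared standard error of mean(a)
    vb = statistics.variance(b) / len(b)
    t = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df

# Placeholder mean latencies (ms), one value per independent run.
protocol_a = [21.4, 22.1, 20.8, 21.9, 22.3]
protocol_b = [23.0, 24.2, 22.6, 24.5, 23.2]
t, df = welch_t(protocol_a, protocol_b)
print(f"t = {t:.2f}, df = {df:.1f}")
```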

Furthermore, it’s crucial to understand the context of the results. Are they consistent with our expectations? Do they align with real-world observations or prior research? If discrepancies exist, we need to investigate the potential causes – a flaw in the simulation model, inaccurate parameter settings, or unexpected interaction between different network components. A crucial step is to document all aspects of the simulation experiment, including the model, parameters, results, and interpretations, to ensure reproducibility and transparency.

Q 18. How do you ensure the accuracy and reliability of your simulation results?

Ensuring the accuracy and reliability of simulation results is paramount. This begins with using well-tested simulation tools and models whose behavior has been checked against real networks under conditions similar to those being studied.

Verification then ensures that the simulation model correctly implements the intended network design and algorithms. This often involves comparing simulation results against analytical models or known theoretical results under simplified conditions.

Validation compares simulation results against real-world measurements or data from deployed networks. It establishes how accurately the model represents real-world phenomena, typically by comparing key performance indicators (KPIs) like throughput, delay, and packet loss.

Another key aspect is careful parameterization. Incorrect parameter settings can lead to inaccurate results. Sensitivity analysis helps determine how sensitive the results are to changes in input parameters. Running multiple simulations with different random seeds (to account for stochasticity inherent in network traffic and behavior) and comparing the results helps assess the consistency and reliability of the observations. Careful documentation of the methodology, parameters, and results enables replication and verification by others.
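A one-at-a-time sensitivity analysis is one simple variant. It perturbs each input by a fixed fraction while holding the others at baseline, and records the relative change in the output. In the sketch below, `mm1_delay` is a hypothetical stand-in model, an M/M/1 mean delay chosen only because it has a closed form. In practice each evaluation would be a full set of simulation replications.

```python
def mm1_delay(arrival_rate, service_rate):
    """Hypothetical stand-in model: M/M/1 mean time in system (seconds)."""
    return 1.0 / (service_rate - arrival_rate)

def one_at_a_time(model, baseline, step=0.10):
    """Relative output change when each parameter is raised by `step`."""
    base_out = model(**baseline)
    result = {}
    for name, value in baseline.items():
        perturbed = dict(baseline, **{name: value * (1 + step)})
        result[name] = (model(**perturbed) - base_out) / base_out
    return result

baseline = {"arrival_rate": 80.0, "service_rate": 100.0}  # packets/s
print(one_at_a_time(mm1_delay, baseline))
```

At 80% load, a 10% rise in arrival rate increases delay by about two-thirds, while a 10% faster server cuts it by about a third. This asymmetry shows which parameter most needs accurate calibration.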

Q 19. Explain your experience with queuing theory and its application in network modeling.

Queuing theory plays a central role in network modeling, providing a mathematical framework for analyzing the performance of systems with limited resources, such as network queues. I have extensive experience applying queuing models, like M/M/1, M/G/1, and their variations, to model packet queues in routers and switches. These models help predict key performance metrics such as average queue length, waiting time, and throughput, enabling us to optimize queue management strategies and assess network congestion.

For example, modeling a router’s output queue as M/M/1 lets us predict the average packet delay, W = 1/(μ − λ), from the packet arrival rate λ and the service rate μ (valid only while λ < μ). In practice, these results are approximations, because the strict assumptions of the models (such as Poisson arrivals and independent service times) rarely hold exactly in real networks. The choice of queueing model significantly affects the results, so selecting an appropriate model is critical.
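The M/M/1 and M/G/1 cases can be compared with the Pollaczek–Khinchine formula. It gives the mean queueing delay of an M/G/1 queue from the first two moments of the service time: Wq = λ·E[S²] / (2(1 − ρ)), with ρ = λ·E[S]. The Python sketch below applies it to two cases:

- exponential service, which reduces to the M/M/1 result;
- deterministic service, a rough model of fixed-size packets on a fixed-rate link.

The rates are illustrative.

```python
def mg1_wait(arrival_rate, mean_service, second_moment_service):
    """Pollaczek-Khinchine mean waiting time in queue for M/G/1."""
    rho = arrival_rate * mean_service
    if rho >= 1:
        raise ValueError("unstable queue: utilization must be below 1")
    return arrival_rate * second_moment_service / (2 * (1 - rho))

lam = 8.0             # packets per second
mean_s = 0.1          # mean transmission time (s), i.e. mu = 10 packets/s

# Exponential service: E[S^2] = 2 * E[S]^2 -> M/M/1 result rho / (mu - lam).
wq_mm1 = mg1_wait(lam, mean_s, 2 * mean_s ** 2)
# Deterministic service (fixed-size packets): E[S^2] = E[S]^2 -> M/D/1.
wq_md1 = mg1_wait(lam, mean_s, mean_s ** 2)

print(f"M/M/1 Wq = {wq_mm1:.3f} s, M/D/1 Wq = {wq_md1:.3f} s")
# -> M/M/1 Wq = 0.400 s, M/D/1 Wq = 0.200 s
```

The same load produces half the queueing delay when service times are constant. This is why the choice of service-time model matters as much as the arrival model.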

In more complex scenarios, I’ve utilized simulation tools to model networks with multiple interconnected queues. In these cases, I incorporate techniques like decomposition and approximation methods to analyze system performance, often comparing different queuing disciplines (e.g., FIFO, priority queuing) to determine their impact on network performance under various traffic conditions. I’ve also explored the use of more advanced queuing models to capture real-world behaviors, such as self-similar traffic patterns, which cannot be adequately addressed by simple M/M/1 models.

Q 20. Describe your experience with different network protocols and their impact on simulation results.

My experience encompasses a wide range of network protocols, including TCP, UDP, routing protocols (RIP, OSPF, BGP), and various application-layer protocols (HTTP, FTP, VoIP). Understanding these protocols’ mechanisms is essential for accurate simulation. For instance, TCP’s congestion control mechanisms significantly influence throughput and delay, particularly under high loads. Accurately modeling these mechanisms is crucial for reliable simulations. Similarly, different routing protocols impact network performance differently; OSPF’s link-state routing is more sophisticated than RIP’s distance-vector approach, impacting convergence time and routing efficiency. The choice of routing protocol can drastically alter simulation results.
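The idea behind OSPF’s link-state routing is that every router holds the full topology and runs a shortest-path-first computation (Dijkstra’s algorithm) over it. The Python sketch below shows that computation on a small, made-up weighted graph. It omits everything else OSPF does, such as flooding link-state advertisements, areas, and equal-cost multipath.

```python
import heapq

def shortest_paths(graph, source):
    """Dijkstra's algorithm: graph maps node -> {neighbor: link_cost}.

    Returns (dist, prev), where prev[n] is n's predecessor on a shortest path.
    """
    dist = {source: 0}
    prev = {}
    heap = [(0, source)]
    while heap:
        d, node = heapq.heappop(heap)
        if d > dist[node]:
            continue                 # stale entry; a shorter path was found
        for nbr, cost in graph[node].items():
            nd = d + cost
            if nd < dist.get(nbr, float("inf")):
                dist[nbr] = nd
                prev[nbr] = node
                heapq.heappush(heap, (nd, nbr))
    return dist, prev

# Made-up topology with OSPF-style interface costs.
topology = {
    "R1": {"R2": 10, "R3": 1},
    "R2": {"R1": 10, "R4": 1},
    "R3": {"R1": 1, "R4": 5},
    "R4": {"R2": 1, "R3": 5},
}
dist, prev = shortest_paths(topology, "R1")
print(dist)
# -> {'R1': 0, 'R2': 7, 'R3': 1, 'R4': 6}
```

R1 reaches R2 via R3 and R4 (cost 7) rather than the direct link (cost 10). A distance-vector protocol such as RIP would eventually reach the same answer, but by exchanging distance estimates with neighbors rather than computing over a full topology map.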

In simulations, we often use protocol implementations provided by the simulator or implement custom modules to model specific aspects of protocols. For example, NS-3 ships several TCP variants, such as TcpNewReno and TcpCubic, that can be selected through the ns3::TcpL4Protocol::SocketType attribute. New congestion control algorithms can be added as custom implementations.

The level of detail in protocol modeling affects both the accuracy and the computational cost of the simulation. Higher-fidelity models tend to produce more realistic results but take longer to run, so the right level of detail depends on the specific research question.

Q 21. Explain how you would model network security aspects in a simulation.

Modeling network security aspects in a simulation involves incorporating security mechanisms and attacks into the network model. This can range from simple firewall rules to complex intrusion detection systems and various attack models. We can model firewalls by defining access control lists (ACLs) that filter packets based on source/destination IP addresses, ports, and protocols. Intrusion detection systems can be modeled by incorporating modules that monitor network traffic for suspicious patterns. Attacks can be simulated by injecting malicious traffic, such as denial-of-service (DoS) floods or malware propagation.

For example, to simulate a DoS attack, we might use a traffic generator to send a high volume of packets to a target server, overwhelming its resources. We can then evaluate mitigation techniques, such as rate limiting or intrusion detection systems, by comparing the system’s performance in three conditions: without the attack, under the attack, and under the attack with each mitigation enabled. Similarly, we can model the spread of malware with an epidemic-style model of how infection propagates from host to host across the network. Analyzing the simulation results provides insight into the effectiveness of various security mechanisms and helps evaluate the network’s vulnerability to specific attacks.
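Rate limiting, one of the mitigations mentioned above, is often modeled as a token bucket. The sketch below is a simulator-independent Python version under stated assumptions. Tokens accrue at `rate` per second up to `burst`, and a packet is forwarded only if a whole token is available. The flood parameters are illustrative.

```python
def token_bucket(arrival_times, rate, burst):
    """Return (forwarded, dropped) counts for a token-bucket rate limiter.

    rate:  tokens added per second (sustained packets/s allowed)
    burst: bucket capacity (largest back-to-back burst allowed)
    """
    tokens = float(burst)
    last = arrival_times[0] if arrival_times else 0.0
    forwarded = dropped = 0
    for t in arrival_times:
        tokens = min(burst, tokens + (t - last) * rate)  # refill since last packet
        last = t
        if tokens >= 1.0:
            tokens -= 1.0
            forwarded += 1
        else:
            dropped += 1
    return forwarded, dropped

# Flood: 10,000 packets/s for one second against a limit of 100 packets/s.
flood = [i / 10_000 for i in range(10_000)]
print(token_bucket(flood, rate=100, burst=20))
```

Roughly the burst allowance plus one second of sustained rate gets through; the rest of the flood is dropped before it reaches the server model.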

The complexity of security modeling can vary widely depending on the simulation’s scope. It’s important to choose a level of detail that’s appropriate for the research question. For instance, simulating a sophisticated multi-stage advanced persistent threat (APT) campaign requires a far more complex simulation model than evaluating the effectiveness of a simple firewall rule. It is also important to validate the model against real-world data to ensure the accuracy and reliability of the results.

Q 22. How do you incorporate real-world network data into your simulations?

Incorporating real-world network data into simulations is crucial for creating realistic and accurate models. This usually involves obtaining trace data, which captures actual network traffic patterns and characteristics. This data can come from several sources:

- packet captures, using tools like tcpdump or Wireshark;
- network monitoring systems, for example counters polled via SNMP or flow records exported via NetFlow/IPFIX;
- publicly available datasets.

Once the data is acquired, it needs to be pre-processed and cleaned. This may involve removing irrelevant information, handling missing data, and aggregating or summarizing the data to make it manageable for the simulation. The cleaned data can then be fed into the simulator in different ways, depending on the tool. Some tools can import trace files directly, while others require custom scripts to convert the data. NS-3, for example, includes a UdpTraceClient application that sends packets according to a trace file. A custom application can read other trace formats to reproduce recorded packet arrival times and sizes.
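The pre-processing step often ends in a simple schedule of (inter-arrival gap, packet size) pairs that a custom traffic source can replay. The sketch below assumes a hypothetical CSV export with `timestamp` (seconds) and `length` (bytes) columns. It drops malformed rows, sorts by time, and emits that schedule. The column names and format are assumptions, not a format any particular tool requires.

```python
import csv
import io

def trace_to_schedule(csv_text):
    """Convert a (timestamp, length) trace into (gap_s, size_bytes) pairs."""
    rows = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        try:
            rows.append((float(row["timestamp"]), int(row["length"])))
        except (KeyError, TypeError, ValueError):
            continue                      # skip rows with missing/garbled fields
    rows.sort()                           # captures are not always time-ordered
    schedule, prev_t = [], None
    for t, size in rows:
        gap = 0.0 if prev_t is None else t - prev_t
        schedule.append((gap, size))
        prev_t = t
    return schedule

sample = "timestamp,length\n0.000,1500\n0.004,64\nbad,row\n0.002,1500\n"
print(trace_to_schedule(sample))
```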

Consider a scenario where we’re simulating a cellular network. Real-world call detail records (CDRs) provide valuable data on call durations, locations, and handoff frequencies. By feeding this CDR data into a simulation, we can assess the impact of network upgrades or changes in user behavior far more realistically than with purely synthetic inputs.

Q 23. Describe your experience with cloud-based network simulation environments.

Cloud-based simulation environments offer scalability and accessibility that are hard to match with traditional on-premise hardware. I have extensive experience with cloud platforms like AWS and Google Cloud, leveraging their virtual machine (VM) instances to host and run network simulators. This makes scaling straightforward: I can add more VMs to handle larger simulations, more complex scenarios, or more independent runs.

Moreover, cloud platforms provide access to specialized hardware such as GPUs. These can accelerate workloads that parallelize well, such as post-processing or machine-learning components. Mainstream discrete-event simulators such as NS-3 are CPU-bound, however, so for them the main gain is running many independent runs in parallel. For instance, I used AWS to run a large-scale simulation of a Software Defined Network (SDN) involving thousands of nodes and complex control plane interactions. The cloud’s scalability was instrumental in completing the simulation within a reasonable timeframe. Cloud environments also simplify collaboration, as multiple team members can access and share simulation results remotely.

Q 24. How would you optimize the performance of a network simulation?

Optimizing network simulation performance is vital, especially with large-scale or complex models. Several strategies can be employed. First, careful model design is crucial. Avoid unnecessary detail in areas that don’t significantly impact the simulation results. Using simplified models where appropriate can drastically reduce the computational burden.

- Reduce simulation granularity: Instead of simulating every single packet, consider aggregating packets into flows. This reduces the number of events processed, at the cost of per-packet detail such as individual queueing delays.

- Employ efficient algorithms: Choose simulation algorithms and data structures optimized for speed and memory efficiency. Many simulators let you choose among alternatives; NS-3, for example, offers several event-scheduler implementations (list-, heap-, map-, and calendar-queue-based).

- Parallel and distributed simulation: Distribute the workload across multiple processors or machines, which can reduce runtime substantially when the model partitions well.

- Profiling and optimization: Run a profiler to pinpoint the computationally expensive parts of the simulation code, then optimize those sections.
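The first strategy, aggregating packets into flows, can be sketched in Python as follows. This assumes packets arrive sorted by time as hypothetical `(time_s, flow_key, size_bytes)` tuples, where `flow_key` is typically the 5-tuple, and uses an illustrative idle timeout of one second to split flows; neither convention is taken from a specific simulator.

```python
from dataclasses import dataclass


@dataclass
class Flow:
    start_s: float    # time of the first packet in the flow
    end_s: float      # time of the most recent packet
    packets: int      # number of packets folded into this record
    total_bytes: int  # sum of packet sizes


def aggregate_flows(packets, idle_timeout_s=1.0):
    """Fold time-ordered (time_s, flow_key, size_bytes) packets into flow records.

    A gap longer than idle_timeout_s on the same key starts a new flow.
    """
    active = {}
    finished = []
    for time_s, key, size_bytes in packets:
        flow = active.get(key)
        if flow is not None and time_s - flow.end_s > idle_timeout_s:
            finished.append(flow)  # idle too long: close the old flow
            flow = None
        if flow is None:
            flow = Flow(time_s, time_s, 0, 0)
            active[key] = flow
        flow.end_s = time_s
        flow.packets += 1
        flow.total_bytes += size_bytes
    finished.extend(active.values())
    return sorted(finished, key=lambda f: f.start_s)


web = ("10.0.0.1", "10.0.0.2", 6, 40000, 80)   # TCP 5-tuple
dns = ("10.0.0.3", "10.0.0.2", 17, 5000, 53)   # UDP 5-tuple
flows = aggregate_flows([(0.0, web, 1500), (0.1, web, 1500), (0.2, dns, 80), (5.0, web, 40)])
print([(f.packets, f.total_bytes) for f in flows])  # [(2, 3000), (1, 80), (1, 40)]
```

A flow-level model then schedules a handful of events per flow (for example, start and finish) instead of one or more per packet, which is where the speedup comes from.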

For example, I once optimized a simulation of a large enterprise network by using a more efficient routing protocol implementation within the simulator, which led to a 70% reduction in simulation time.

Q 25. Discuss your experience with parallel and distributed simulation techniques.

Parallel and distributed simulation techniques are essential for handling complex, large-scale network models. These techniques break down the simulation into smaller, manageable parts that can be run concurrently on multiple processors or machines.

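I have extensive experience using both conservative and optimistic parallel simulation approaches. Conservative methods (such as the Chandy–Misra–Bryant null-message protocol) only process an event once it is certain that no earlier event can still arrive, so causality is always preserved, but they can suffer from synchronization overhead, particularly when the lookahead (for example, the minimum link delay between partitions) is small. Optimistic methods (such as Time Warp), on the other hand, can achieve higher speedup but must roll back work when a causality violation is detected. The choice between them depends on the specific simulation and the characteristics of the network being modeled.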
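To make the conservative approach concrete, here is a small Python sketch of a synchronous-window (barrier-based) conservative scheme on a hypothetical toy model: logical processes (LPs) arranged in a ring, where every processed event sends one message to the next LP after a fixed link delay. That delay is the lookahead. The loop runs sequentially here; in a real PDES each LP would process its window on its own processor and exchange messages at the barrier.

```python
import heapq


def run_conservative(initial_events, link_delay, end_time):
    """Synchronous-window conservative PDES on a toy ring of logical processes.

    initial_events: dict mapping LP id (0..n-1) to a list of event timestamps.
    Toy model (hypothetical): every event processed at LP i sends one message
    to LP (i + 1) % n that arrives link_delay later.
    Returns, per LP, the timestamps in the order they were processed.
    """
    if link_delay <= 0:
        raise ValueError("conservative synchronization needs positive lookahead")
    n = len(initial_events)
    queues = {lp: sorted(ts) for lp, ts in initial_events.items()}  # sorted list is a valid heap
    processed = {lp: [] for lp in queues}
    lookahead = link_delay  # no cross-LP message can arrive sooner than this
    while True:
        pending = [q[0] for q in queues.values() if q]
        if not pending or min(pending) > end_time:
            break
        # Safe window: every message sent during this window is stamped
        # >= min(pending) + lookahead, so no earlier event can still arrive.
        window_end = min(pending) + lookahead
        outbox = []
        for lp, q in queues.items():  # each LP would run this loop in parallel
            while q and q[0] < window_end and q[0] <= end_time:
                t = heapq.heappop(q)
                processed[lp].append(t)
                outbox.append(((lp + 1) % n, t + link_delay))
        for dest, t in outbox:  # barrier: deliver messages for later windows
            heapq.heappush(queues[dest], t)
    return processed


# Timestamps in milliseconds; 10 ms link delay; stop after t = 30 ms.
print(run_conservative({0: [0, 5], 1: [2]}, link_delay=10, end_time=30))
# {0: [0, 5, 12, 20, 25], 1: [2, 10, 15, 22, 30]}
```

Each LP sees its events in timestamp order without any rollback. The cost is visible in the window size: a smaller `link_delay` means narrower windows and more barriers, which is the synchronization overhead mentioned above.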

For example, I used a parallel discrete event simulation (PDES) approach to model a large-scale data center network with thousands of virtual machines. The simulation was partitioned across multiple nodes, with each node responsible for simulating a portion of the network. This approach drastically reduced the simulation runtime, enabling efficient exploration of different network configurations.

Q 26. Explain the concept of statistical significance in the context of network simulation.

Statistical significance in network simulation refers to our confidence that observed results are not due to random chance. Because stochastic simulations depend on their random-number streams, we typically run multiple independent replications with different random seeds. A trend that appears consistently across replications is suggestive, but whether it is statistically significant is decided with a formal test.

Statistical significance is usually evaluated with hypothesis testing. We formulate a null hypothesis (e.g., there is no difference between two network configurations) and then use a statistical test (such as a t-test, or ANOVA when comparing more than two configurations) to compute a p-value: the probability of seeing a difference at least as large as the observed one if the null hypothesis were true. A p-value below the chosen significance level (commonly 0.05) leads us to reject the null hypothesis and conclude that the observed difference is statistically significant. Failing to achieve statistical significance may mean that more simulation runs are needed or that the observed differences are too small to be reliably detected.

Imagine comparing two different queuing algorithms. If one consistently achieves lower mean delay across independent replications and the test yields a low p-value, we can conclude that the difference is unlikely to be due to random variation alone, at least for the scenarios simulated.
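The paragraphs above mention t-tests and ANOVA; the sketch below swaps in a two-sided permutation test, which needs only the Python standard library and does not assume normally distributed outputs. The inputs are per-replication results (for example, the mean packet delay from each independent run), and the sample numbers are made up for illustration.

```python
import random


def permutation_test(a, b, n_resamples=10_000, seed=0):
    """Two-sided permutation test for a difference in means; returns a p-value."""
    rng = random.Random(seed)
    observed = abs(sum(a) / len(a) - sum(b) / len(b))
    pooled = list(a) + list(b)
    n_a = len(a)
    at_least_as_extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)  # under H0 the algorithm labels are exchangeable
        group_a, group_b = pooled[:n_a], pooled[n_a:]
        diff = abs(sum(group_a) / len(group_a) - sum(group_b) / len(group_b))
        if diff >= observed - 1e-12:  # small tolerance for float round-off
            at_least_as_extreme += 1
    # Adding 1 to both counts avoids reporting an impossible p-value of 0.
    return (at_least_as_extreme + 1) / (n_resamples + 1)


# Hypothetical mean delays (ms), one value per independent replication.
delays_a = [10.1, 10.3, 9.9, 10.2, 10.0, 10.4, 9.8, 10.1]
delays_b = [12.0, 12.3, 11.8, 12.1, 12.4, 11.9, 12.2, 12.0]
p_value = permutation_test(delays_a, delays_b)
print(p_value < 0.05)  # True: reject "no difference" at the 0.05 level
```

Each value fed to the test should come from a separate replication with its own seed; treating correlated samples from a single long run as independent would understate the p-value.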

Q 27. How would you approach a network simulation project with limited resources?

When faced with limited resources for a network simulation project, prioritization and strategic decision-making are crucial. I would start by defining a clear and concise scope, focusing on the most critical aspects of the network behavior. This might mean simplifying the model or simulating a smaller-scale version of the actual network.

Secondly, I would use freely available simulation tools to avoid licensing costs. NS-3 is open source under the GNU GPLv2, and OMNeT++ is free for academic and non-commercial use (commercial users need its commercial counterpart, OMNEST); both are powerful options with active communities. Finally, I would prioritize efficient algorithms and computing techniques to make the most of the limited resources. Careful optimization, as mentioned earlier, can make a significant difference.

In one project, we had limited computing resources to model a large-scale wireless network. By carefully selecting the simulation parameters and optimizing the simulation code, we managed to reduce the runtime and complete our analysis within the resource constraints.

Q 28. Describe a challenging network simulation project you’ve worked on and how you overcame the challenges.

One particularly challenging project involved simulating the impact of a new congestion control algorithm on a large-scale data center network. The challenge arose from the complexity of the network model, which included thousands of virtual machines, complex traffic patterns, and sophisticated queuing mechanisms within the switches. The sheer scale of the simulation meant extremely long run times, even on high-performance computing clusters.

To overcome this, we employed a multi-pronged approach. First, we carefully analyzed the network model, identifying areas where simplification could be made without sacrificing the accuracy of the results. Secondly, we leveraged parallel and distributed simulation techniques to break down the simulation into smaller, manageable parts. We also optimized the simulation code and selected efficient algorithms for key components. Finally, we developed custom visualization tools to analyze and interpret the massive amount of simulation data generated. Together, these steps let us complete the evaluation of the new congestion control algorithm and give the network design team valuable insights.

Key Topics to Learn for Network Simulation and Modeling Tools Interview

- Network Topology Design & Analysis: Understanding different network topologies (mesh, star, bus, etc.), their strengths and weaknesses, and using simulation tools to analyze performance under various conditions.

- Protocol Simulation: Modeling and simulating the behavior of network protocols (TCP/IP and routing protocols such as OSPF and BGP) to understand their performance characteristics and identify potential bottlenecks.

- Queueing Theory & Performance Metrics: Applying queueing theory concepts to analyze network performance, focusing on metrics like latency, throughput, packet loss, and jitter. Understanding how simulation tools help visualize and quantify these metrics.

- Traffic Modeling & Generation: Creating realistic traffic patterns for simulations, including different traffic types (voice, video, data) and their impact on network performance. Familiarize yourself with common traffic generators.

- Network Security Simulation: Simulating security threats and vulnerabilities within a network environment to assess the effectiveness of security measures. This includes intrusion detection and prevention systems.

- Specific Simulation Tools: Gaining hands-on experience with popular network simulation tools (e.g., NS-3, OMNeT++, Cisco Packet Tracer). Focus on their capabilities and limitations.

- Data Analysis & Interpretation: Understanding how to effectively analyze simulation results, interpret the data, and draw meaningful conclusions to improve network design and performance.

- Problem-Solving & Troubleshooting: Developing the ability to identify and resolve network performance issues using simulation results and your understanding of network principles.
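As a small worked example tying queueing theory to traffic generation, the sketch below compares the textbook M/M/1 results (Poisson arrivals at rate λ, exponential service at rate μ, utilization ρ = λ/μ < 1) with a simulation driven by randomly generated traffic. The analytic mean time in system is W = 1/(μ − λ) and the mean number in system is L = ρ/(1 − ρ); the simulation uses Lindley's recursion for FIFO waiting times. The function names and the run length are illustrative choices.

```python
import random


def mm1_analytic(lam, mu):
    """Mean time in system W and mean number in system L for an M/M/1 queue."""
    if lam >= mu:
        raise ValueError("unstable queue: arrival rate must be below service rate")
    rho = lam / mu
    w = 1.0 / (mu - lam)          # mean sojourn (waiting + service) time
    n_sys = rho / (1.0 - rho)     # mean number in system; equals lam * w (Little's law)
    return w, n_sys


def mm1_simulated_w(lam, mu, n_customers=200_000, seed=1):
    """Estimate the mean sojourn time with Lindley's recursion, starting empty."""
    rng = random.Random(seed)
    wait = 0.0                    # queueing delay of the current customer
    total_sojourn = 0.0
    for _ in range(n_customers):
        service = rng.expovariate(mu)
        total_sojourn += wait + service
        interarrival = rng.expovariate(lam)  # Poisson arrival process
        # Next customer's wait: W_{n+1} = max(0, W_n + S_n - A_{n+1})
        wait = max(0.0, wait + service - interarrival)
    return total_sojourn / n_customers


w, n_sys = mm1_analytic(0.5, 1.0)
print(w, n_sys)                    # 2.0 1.0
print(mm1_simulated_w(0.5, 1.0))   # an estimate close to 2.0
```

Checking a simulator against a closed-form model like this is a common sanity test before moving to topologies and traffic mixes that have no analytic solution.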

Next Steps

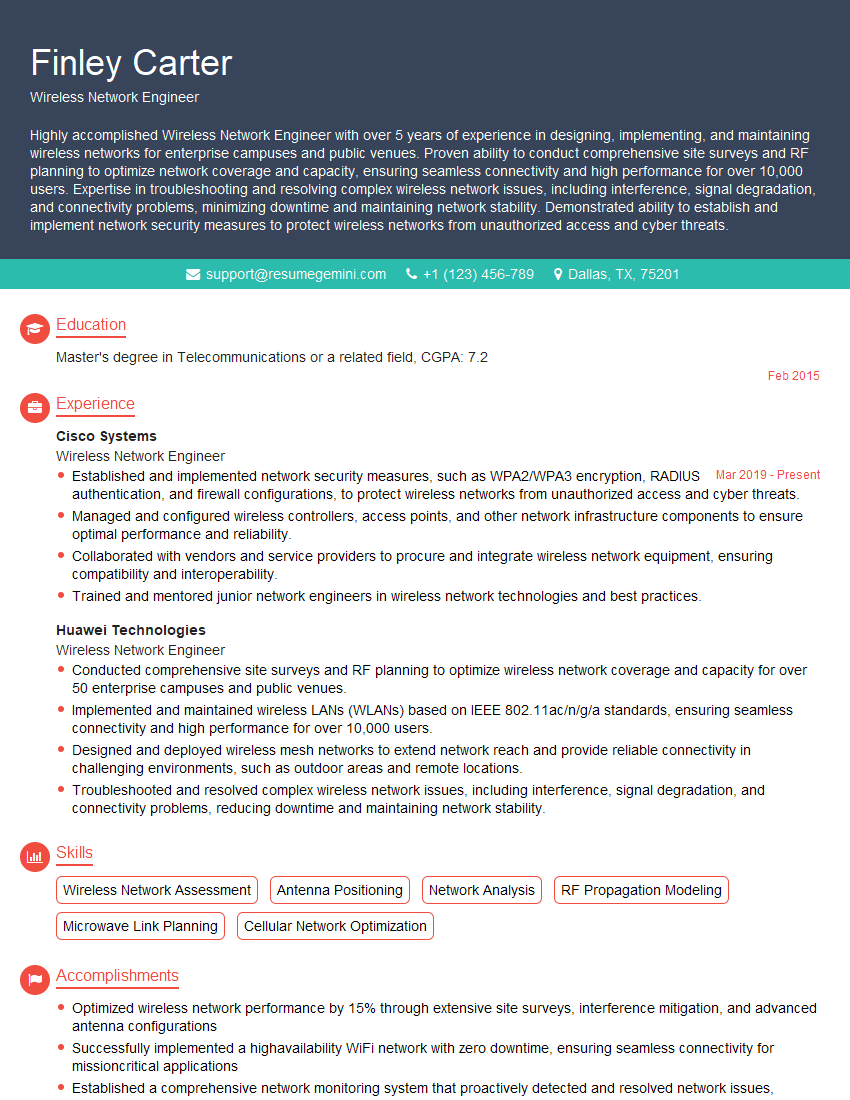

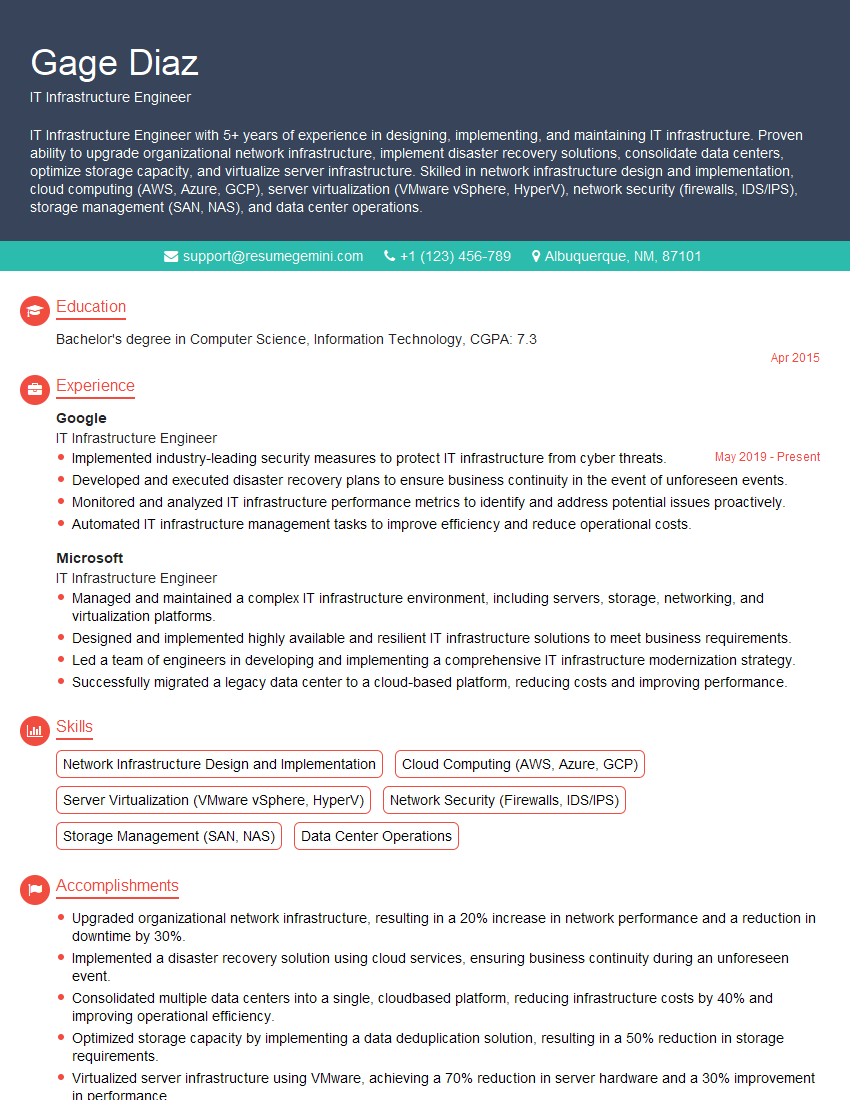

Mastering Network Simulation and Modeling Tools is crucial for a successful career in network engineering, offering you a competitive edge in today’s demanding job market. These skills are highly sought after, enabling you to design, optimize, and troubleshoot complex networks efficiently. To maximize your job prospects, it’s essential to present your skills effectively. Create an ATS-friendly resume that highlights your expertise in these areas. ResumeGemini is a valuable resource to help you build a professional and impactful resume that grabs recruiters’ attention. Examples of resumes tailored to Network Simulation and Modeling Tools are available to help guide you.
