Are you ready to stand out in your next interview? Understanding and preparing for Production Records Analysis interview questions is a game-changer. In this blog, we’ve compiled key questions and expert advice to help you showcase your skills with confidence and precision. Let’s get started on your journey to acing the interview.

Questions Asked in Production Records Analysis Interview

Q 1. Explain the importance of accurate production records.

Accurate production records are the bedrock of any successful manufacturing operation. They’re not just a historical record; they’re a vital tool for continuous improvement, regulatory compliance, and efficient decision-making. Think of them as a detailed story of your production process, allowing you to trace every step, identify bottlenecks, and understand what contributes to high-quality output (or defects!). Without accurate records, troubleshooting issues becomes a guessing game, potentially leading to wasted resources, product recalls, or even regulatory fines.

For example, imagine trying to pinpoint the source of a contamination incident without detailed batch records documenting raw material sources, processing parameters, and personnel involved. Accurate records provide the crucial evidence needed for a swift and effective resolution.

Q 2. Describe your experience with different types of production records (e.g., batch records, logbooks).

My experience encompasses a wide range of production records, from the highly detailed batch records crucial in pharmaceutical and food manufacturing to simpler logbooks used in less regulated industries. Batch records are comprehensive documents detailing every step of a manufacturing run, including raw materials used, equipment employed, personnel involved, process parameters (temperature, pressure, time), in-process testing results, and final product testing data. This meticulous documentation ensures traceability and allows for thorough investigation in case of deviations or non-conformances.

Logbooks, on the other hand, might record simpler details like machine uptime, maintenance performed, or operator observations. I’ve worked with both types extensively, adapting my analysis techniques to the specific level of detail and format of the records. For instance, in a previous role, I analyzed batch records to identify a recurring issue with a specific piece of equipment leading to consistent product failures, enabling a preventative maintenance schedule to be implemented.

Q 3. How do you ensure data integrity in production records?

Data integrity in production records is paramount. It’s achieved through a multi-faceted approach involving robust record-keeping practices, electronic signature systems, and data validation checks. This begins with clear, standardized procedures for data entry and documentation, ensuring all relevant information is captured consistently.

- Electronic Signatures: Using electronic signatures and audit trails ensures accountability and prevents unauthorized modifications.

- Data Validation: Implementing checks for data entry errors, such as range checks (temperature within acceptable limits) and plausibility checks (batch size consistent with expected production rate), is essential.

- Version Control: Maintaining version control, with clear indication of any changes made to the records, is crucial for tracing back alterations and ensuring data accuracy.

- Regular Audits: Regular audits and spot checks help identify and address potential issues proactively.

Imagine the consequences of a simple typo in a critical parameter like temperature – it could lead to substandard product or even safety hazards. The systems we use are designed to minimize such risks.

Q 4. What methods do you use to identify and resolve discrepancies in production data?

Identifying and resolving discrepancies involves a systematic approach. I usually start by visually inspecting the data for outliers or inconsistencies. Statistical methods can then be used to identify trends and patterns. For instance, control charts can highlight significant deviations from established norms.

Once discrepancies are identified, the next step is to investigate their root cause. This could involve reviewing original source documents, interviewing personnel involved, examining equipment logs, or analyzing environmental data. Depending on the nature of the discrepancy, corrective actions are implemented, and the records are corrected or amended with proper documentation.

For example, if there’s a significant difference between expected yield and actual yield, I might investigate issues like raw material quality, equipment malfunction, or process deviations, using the data to form a hypothesis and then validate this hypothesis through further analysis.
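The control-chart check mentioned in this answer can be sketched in a few lines. This is a minimal illustration only: the function names and the yield figures are hypothetical, and it uses the plain sample standard deviation of a baseline period rather than the moving-range estimate that a formal individuals chart would normally use.

```python
from statistics import mean, stdev

def control_limits(baseline, k=3.0):
    """Return (lower, center, upper) limits from in-control baseline data."""
    center = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation (a simplification)
    return center - k * sigma, center, center + k * sigma

def out_of_control(values, limits):
    """Return (index, value) pairs that fall outside the control limits."""
    lower, _, upper = limits
    return [(i, v) for i, v in enumerate(values) if v < lower or v > upper]

# Hypothetical yield percentages: a stable baseline, then newly recorded batches.
baseline_yield = [95.1, 94.8, 95.3, 95.0, 94.9, 95.2, 95.0, 94.7]
new_batches = [95.0, 94.9, 91.2, 95.1]

limits = control_limits(baseline_yield)
flagged = out_of_control(new_batches, limits)
print(flagged)
```

Any batch that is flagged this way is a starting point for the root-cause investigation described above, not a conclusion in itself.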

Q 5. Describe your experience with data validation techniques in the context of production records.

Data validation techniques are central to ensuring the reliability of production records. I routinely use various techniques, including range checks (ensuring values fall within predefined limits), consistency checks (comparing data across different records), plausibility checks (verifying data makes logical sense), and completeness checks (confirming all required fields are populated).

Furthermore, I leverage data visualization techniques to spot anomalies that might be missed through simple numerical checks. For example, a scatter plot might reveal an unexpected correlation between two variables, hinting at a hidden problem or interaction. In a recent project, data validation revealed inconsistencies between raw material specifications and the actual materials used, ultimately leading to the identification of a supplier issue.
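The four kinds of check named in this answer (range, consistency, plausibility, completeness) might look roughly like the sketch below. The field names, the temperature window, the batch-size ceiling, and the idea of comparing against a warehouse log are all assumptions made for illustration, not values from any real system.

```python
REQUIRED_FIELDS = ("batch_id", "temperature_c", "batch_size", "operator")
TEMP_RANGE_C = (60.0, 80.0)   # assumed acceptable process window
MAX_BATCH_SIZE = 5000         # assumed upper bound for a plausible batch

def validate_record(record):
    """Return a list of human-readable problems found in one record."""
    problems = []
    # Completeness check: every required field must be populated.
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            problems.append(f"missing {field}")
    # Range check: temperature must sit inside the allowed window.
    temp = record.get("temperature_c")
    if isinstance(temp, (int, float)):
        low, high = TEMP_RANGE_C
        if not low <= temp <= high:
            problems.append(f"temperature_c out of range: {temp}")
    # Plausibility check: batch size must be positive and not absurdly large.
    size = record.get("batch_size")
    if isinstance(size, (int, float)) and not 0 < size <= MAX_BATCH_SIZE:
        problems.append(f"implausible batch_size: {size}")
    return problems

def inconsistent_batches(batch_records, warehouse_log):
    """Consistency check: batch IDs whose sizes differ between two sources."""
    logged = {row["batch_id"]: row["batch_size"] for row in warehouse_log}
    return [r["batch_id"] for r in batch_records
            if r["batch_id"] in logged and logged[r["batch_id"]] != r["batch_size"]]

good = {"batch_id": "B001", "temperature_c": 72.5, "batch_size": 1200, "operator": "op-17"}
bad = {"batch_id": "B002", "temperature_c": 95.0, "batch_size": 0, "operator": ""}
print(validate_record(good), validate_record(bad))
```

Returning a list of problems, rather than raising on the first one, lets a reviewer see every issue with a record in a single pass.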

Q 6. How do you handle missing or incomplete production records?

Handling missing or incomplete records requires a cautious and thorough approach. The first step is to determine the extent and nature of the incompleteness. Is it a single missing data point, or are there large gaps in the data? Understanding the reason for the missing information is critical. Was it an oversight, a system failure, or an intentional omission?

If possible, I attempt to recover the missing data through alternative sources, such as backup systems, related documents, or interviews with personnel. If data cannot be recovered, I document the missing information and its potential impact on analysis, ensuring transparency. In some cases, statistical imputation methods might be employed to estimate missing values, but only with careful consideration of potential biases. The key is to clearly communicate the limitations of any analyses conducted with incomplete data.
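As a rough illustration of imputation done transparently, the sketch below fills gaps with the median of the observed values and keeps a flag for every filled point, so later analyses can report how much of the data was estimated. Median imputation is just one simple choice made for this example; the readings and function name are hypothetical.

```python
from statistics import median

def impute_with_median(values):
    """Replace None with the median of observed values.

    Returns (filled_values, was_imputed_flags) so imputed points stay traceable.
    """
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("no observed values to impute from")
    fill = median(observed)
    filled = [fill if v is None else v for v in values]
    was_imputed = [v is None for v in values]
    return filled, was_imputed

# Hypothetical temperature readings with two gaps.
readings = [71.2, None, 70.8, 71.0, None, 71.4]
filled, was_imputed = impute_with_median(readings)
missing_share = sum(was_imputed) / len(readings)
print(filled, f"{missing_share:.0%} imputed")
```

Reporting the imputed share alongside any result is one way to communicate the limitations mentioned above.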

Q 7. How proficient are you with data analysis software (e.g., SQL, Excel, Tableau)?

I’m highly proficient in several data analysis software packages. My expertise includes SQL for querying and manipulating large datasets, Excel for data cleaning, transformation, and basic statistical analysis, and Tableau for creating interactive dashboards and visualizations to effectively communicate findings.

For example, I frequently use SQL to extract specific information from large production databases, Excel to clean and organize this data, and finally Tableau to create visualizations that allow stakeholders to easily understand production trends, identify potential problems, and track key performance indicators (KPIs). This integrated approach allows me to efficiently extract insights from often complex and voluminous production data.

    -- Example SQL query to retrieve data for a specific batch
    SELECT * FROM BatchRecords WHERE BatchID = '12345';

Q 8. Describe your experience using statistical methods for production data analysis.

Statistical methods are crucial for extracting meaningful insights from the often vast amounts of data generated in production. My experience encompasses a wide range of techniques, from descriptive statistics like calculating mean, median, and standard deviation to inferential statistics like hypothesis testing and regression analysis. For instance, I’ve used control charts (like Shewhart charts or CUSUM) to monitor process stability and identify potential shifts in key performance indicators (KPIs). I’ve also employed ANOVA (Analysis of Variance) to compare the performance of different production lines or machines. Furthermore, I’m proficient in regression analysis to model the relationships between various input variables (like raw material quality, machine settings) and output variables (like product yield, defect rate), allowing for predictive modeling and process optimization. In one project, we used regression analysis to predict potential downtime based on machine vibration data, resulting in proactive maintenance scheduling and reduced production losses.
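To make the regression idea concrete, here is a minimal ordinary least squares fit of a single input against a single output, written with the standard library only. The temperature and defect-rate numbers are invented for illustration, and a real analysis would also examine residuals and the uncertainty of the fit before using it for prediction.

```python
from statistics import mean

def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept

# Hypothetical data: a machine temperature setting vs. observed defect rate (%).
temperature = [180, 185, 190, 195, 200]
defect_rate = [1.0, 1.5, 2.0, 2.5, 3.0]

slope, intercept = fit_line(temperature, defect_rate)
predicted_at_192 = intercept + slope * 192
print(slope, intercept, predicted_at_192)
```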

Q 9. How do you identify trends and patterns in production records?

Identifying trends and patterns in production records involves a combination of visual inspection and statistical analysis. I typically begin by visualizing the data using tools like histograms, scatter plots, and time series plots to identify any immediate trends or anomalies. For example, a sudden spike in defect rates might indicate a problem with a specific machine or process. After initial visual inspection, I use statistical techniques such as moving averages, exponential smoothing, or seasonal decomposition to smooth out the noise and highlight underlying trends. For example, I might use a moving average to identify a gradual decrease in productivity over time, suggesting a potential need for workforce retraining or equipment upgrades. Pattern recognition often involves clustering techniques like k-means clustering to group similar data points, revealing hidden relationships between variables.
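The two smoothing techniques named above are short enough to sketch directly. The output figures per shift are hypothetical, and the window length and smoothing factor would need to be chosen for the process at hand.

```python
def moving_average(values, window):
    """Trailing moving average; returns len(values) - window + 1 points."""
    if window < 1 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    return [sum(values[i:i + window]) / window
            for i in range(len(values) - window + 1)]

def exponential_smoothing(values, alpha):
    """Simple exponential smoothing; alpha near 1 tracks recent data closely."""
    smoothed = [values[0]]
    for v in values[1:]:
        smoothed.append(alpha * v + (1 - alpha) * smoothed[-1])
    return smoothed

# Hypothetical units produced per shift, drifting downward with some noise.
units_per_shift = [100, 104, 98, 96, 94, 95, 90, 88]
trend = moving_average(units_per_shift, window=4)
print(trend)
```

With the noise averaged out, the steady decline in the smoothed series is easier to see than in the raw shift-by-shift numbers.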

Q 10. Explain how production records contribute to process improvement.

Production records are the lifeblood of process improvement. They provide objective, quantifiable data to identify areas for enhancement. By analyzing trends in yield, defect rates, cycle times, and resource utilization, we can pinpoint bottlenecks and inefficiencies. For example, consistently high defect rates in a particular stage of the production process might indicate the need for operator retraining, equipment calibration, or raw material changes. Analysis of cycle times can reveal areas where streamlining processes would increase efficiency. Resource utilization data helps to optimize resource allocation and prevent waste. Through the use of statistical process control (SPC) charts, we can monitor process variation and identify deviations from established standards, leading to prompt corrective actions and continuous process improvement.

Q 11. How do you use production records for root cause analysis?

Root cause analysis (RCA) using production records is a systematic approach to identify the underlying reasons for production problems. I often use techniques like the 5 Whys, fishbone diagrams (Ishikawa diagrams), and Pareto analysis. For instance, if we see a significant increase in product defects, I’d start by using the 5 Whys to drill down into the root cause. The 5 Whys involve repeatedly asking ‘why’ to uncover the root problem. A fishbone diagram helps to visually organize potential contributing factors, and a Pareto analysis allows us to focus our efforts on addressing the most significant causes. Detailed production records—including machine logs, operator notes, and raw material specifications—provide the evidence needed to validate hypotheses and reach accurate conclusions about root causes.
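A Pareto analysis of a defect log can be sketched as follows. The defect categories and counts are made up for the example, and the 80% cut-off is the conventional rule of thumb rather than a fixed requirement.

```python
from collections import Counter

def pareto_table(defect_causes):
    """Return [(cause, count, cumulative_share)] sorted by descending count."""
    counts = Counter(defect_causes)
    total = sum(counts.values())
    table, running = [], 0
    for cause, count in counts.most_common():
        running += count
        table.append((cause, count, running / total))
    return table

def vital_few(table, threshold=0.8):
    """Smallest leading set of causes whose cumulative share reaches threshold."""
    selected = []
    for cause, _, cumulative in table:
        selected.append(cause)
        if cumulative >= threshold:
            break
    return selected

# Hypothetical defect log: one entry per defective unit.
defect_log = (["seal"] * 45 + ["label"] * 30 + ["fill level"] * 15
              + ["cap"] * 7 + ["other"] * 3)
table = pareto_table(defect_log)
print(vital_few(table))
```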

Q 12. How do you ensure compliance with regulatory requirements regarding production records?

Ensuring compliance with regulatory requirements for production records involves a multi-pronged approach. First, I ensure that the data collection process adheres to all relevant guidelines, including Good Manufacturing Practices (GMP), ISO standards, or industry-specific regulations. This involves establishing clear data collection procedures, using standardized forms and documentation, and implementing robust data validation checks. Second, I ensure that records are properly stored, secured, and easily accessible for audits. This often involves using electronic record-keeping systems with appropriate access controls and audit trails. Third, I implement procedures for record retention and disposal in compliance with regulatory requirements. Regular internal audits and simulated regulatory inspections help to identify and address any compliance gaps proactively.

Q 13. What is your experience with data visualization related to production data?

Data visualization is integral to effective production data analysis. I’m proficient in using various software tools, such as Tableau, Power BI, and Python libraries like Matplotlib and Seaborn, to create compelling visuals. I choose the chart type based on the data and the insight I want to convey. For instance, line charts are suitable for visualizing trends over time, while bar charts are effective for comparing different categories. Scatter plots can reveal correlations between variables, and heatmaps can show patterns in large datasets. In one instance, I created an interactive dashboard that displayed key production metrics in real time, allowing managers to monitor performance and respond quickly to any issues.

Q 14. Explain your experience with reporting and presenting production data findings.

Reporting and presenting production data findings require a clear understanding of the audience and the key messages to be conveyed. I tailor my reports and presentations to the specific needs of my audience, using clear and concise language, visually engaging charts, and data tables. I focus on communicating the key findings in a way that is easily understandable, even for those without a technical background. I typically start with an executive summary highlighting the most important findings and recommendations, followed by a detailed analysis with supporting data. I also incorporate interactive elements, such as dashboards and simulations, where appropriate, to enhance engagement and understanding. I always ensure that my reports are well-structured, professionally formatted, and accurately reflect the data and analyses performed.

Q 15. Describe your experience with data mining techniques applied to production data.

Data mining in production records analysis involves extracting valuable insights from raw production data. My experience encompasses utilizing various techniques such as association rule mining (e.g., finding correlations between machine settings and product defects), clustering (e.g., grouping similar production runs to identify optimal parameters), and classification (e.g., predicting potential equipment failures based on historical data). For instance, in a previous role, I used association rule mining to identify a strong correlation between high humidity levels in the production facility and an increase in material defects. This led to the implementation of a humidity control system, resulting in a significant reduction in waste and improved product quality.

I’m proficient in using R and Python (with libraries such as scikit-learn), as well as the Weka toolkit, to perform these analyses. The choice of technique depends heavily on the specific business problem and the nature of the data.
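The humidity example above rests on three standard association-rule measures: support, confidence, and lift. The sketch below computes them for a single candidate rule over a handful of invented production runs; it does not search for rules the way a full algorithm such as Apriori would, and the item labels are hypothetical.

```python
def rule_metrics(transactions, antecedent, consequent):
    """Return (support, confidence, lift) for the rule antecedent -> consequent.

    Each transaction is a set of item labels; antecedent and consequent are sets.
    """
    n = len(transactions)
    n_a = sum(1 for t in transactions if antecedent <= t)
    n_c = sum(1 for t in transactions if consequent <= t)
    n_both = sum(1 for t in transactions if (antecedent | consequent) <= t)
    support = n_both / n
    confidence = n_both / n_a if n_a else 0.0
    lift = confidence / (n_c / n) if n_c else 0.0
    return support, confidence, lift

# Hypothetical production runs, each tagged with the conditions observed.
runs = [
    {"high_humidity", "defect"},
    {"high_humidity", "defect"},
    {"high_humidity", "defect"},
    {"high_humidity"},
    {"defect"},
    {"normal"}, {"normal"}, {"normal"}, {"normal"}, {"normal"},
]
support, confidence, lift = rule_metrics(runs, {"high_humidity"}, {"defect"})
print(support, confidence, lift)
```

A lift above 1 suggests defects are more common in high-humidity runs than overall, which is the kind of signal that would justify a closer look at humidity control.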

Q 16. How do you handle large datasets of production records?

Handling large production datasets requires a strategic approach. I leverage techniques like distributed computing (e.g., using Hadoop or Spark) to process data in parallel, significantly reducing processing time. Data sampling is also crucial for exploratory analysis and model building when dealing with massive datasets. This allows for quicker iteration and testing of different analysis methods without needing to process the entire dataset every time. Furthermore, I utilize database management systems optimized for analytical processing, such as data warehouses, to efficiently query and retrieve specific subsets of data. Imagine trying to analyze terabytes of data on a single machine – it would be incredibly slow and resource-intensive. Distributed computing allows me to break down the problem and solve it much faster.
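For the data-sampling point, one option that avoids loading the whole dataset is reservoir sampling, which draws a uniform random sample in a single pass over a stream of records. This technique is offered here as an illustration and is not something the answer above prescribes; the function name and the stand-in data stream are hypothetical.

```python
import random

def reservoir_sample(rows, k, seed=None):
    """Uniform random sample of k items from an iterable of unknown length."""
    rng = random.Random(seed)
    sample = []
    for i, row in enumerate(rows):
        if i < k:
            sample.append(row)          # fill the reservoir first
        else:
            j = rng.randint(0, i)       # inclusive on both ends
            if j < k:
                sample[j] = row         # replace with probability k / (i + 1)
    return sample

# Hypothetical stream of record IDs; in practice this could be a file iterator.
record_ids = (f"REC-{n:06d}" for n in range(100_000))
subset = reservoir_sample(record_ids, k=500, seed=42)
print(len(subset))
```

Because only the reservoir is held in memory, the same function works whether the source has thousands of rows or billions.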

Q 17. How do you ensure the security and confidentiality of production records?

Security and confidentiality are paramount. I strictly adhere to data governance policies and implement measures such as data encryption (both in transit and at rest), access control lists (limiting access based on roles and responsibilities), and regular security audits to ensure compliance. Pseudonymization, which replaces personally identifiable information with pseudonyms, is employed wherever possible. This ensures that sensitive information remains protected while still enabling meaningful analysis. For example, instead of using employee IDs directly, I’d use a unique identifier that doesn’t reveal the employee’s identity. All this is documented thoroughly to maintain a clear audit trail.
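One possible way to generate such identifiers is a keyed hash, sketched below with HMAC-SHA256 from the Python standard library. This is an assumption about how the replacement could be done, not a description of any particular system: the key shown is a placeholder, and in practice it would be stored in a secrets manager and never alongside the data.

```python
import hashlib
import hmac

def pseudonymize(employee_id, secret_key):
    """Map an employee ID to a stable pseudonym using a keyed hash.

    The same ID and key always give the same pseudonym, so records can still
    be grouped per person without exposing the original identifier.
    """
    digest = hmac.new(secret_key, employee_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]  # shortened for readability in reports

# Placeholder key for illustration only.
key = b"replace-with-a-securely-stored-key"
alias = pseudonymize("EMP-00417", key)
print(alias)
```

Using a keyed hash rather than a plain hash means that someone who knows the ID format cannot rebuild the mapping without the key.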

Q 18. What are the common challenges in production records analysis?

Common challenges include data quality issues (missing values, inconsistencies, errors), the need to handle various data formats from different sources, the sheer volume and velocity of data, and the difficulty in interpreting the results and communicating findings to non-technical stakeholders. For example, inconsistent data entry practices can lead to inaccurate analysis. To mitigate this, I use data cleaning and validation techniques to ensure data quality. In addition, effectively visualizing the data and findings is key to making the analysis accessible and understandable to a wider audience.

Q 19. Describe your experience with auditing production records.

My auditing experience involves verifying the accuracy, completeness, and reliability of production records. This involves comparing production data against planned targets, identifying discrepancies, and investigating their root causes. I use statistical process control (SPC) charts and other visual tools to detect anomalies and potential errors. In one instance, I identified a significant discrepancy in reported production yields versus actual output by comparing data from different systems. This led to the discovery of a faulty sensor, which was promptly replaced, ensuring accuracy in future production records.

Q 20. How familiar are you with different data formats used in production records?

I’m familiar with a wide array of data formats commonly used in production records, including CSV, JSON, and XML files, as well as data held in relational (SQL) and NoSQL databases. I have experience extracting and transforming data from these diverse sources using Python and SQL, ensuring data compatibility for analysis. Understanding these formats is crucial because production data often comes from different systems with different data structures.
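As a small illustration of bringing several formats into one structure, the sketch below reads the same kind of record from CSV, JSON, and XML text into plain dictionaries using only the standard library. The field names and the XML layout (a `record` element per row) are assumptions made for the example.

```python
import csv
import io
import json
import xml.etree.ElementTree as ET

def from_csv(text):
    """Parse CSV text with a header row into a list of dicts."""
    return [dict(row) for row in csv.DictReader(io.StringIO(text))]

def from_json(text):
    """Parse a JSON array of objects into a list of dicts."""
    return json.loads(text)

def from_xml(text):
    """Parse <records><record>...</record></records> into a list of dicts."""
    root = ET.fromstring(text)
    return [{child.tag: child.text for child in rec}
            for rec in root.findall("record")]

# Hypothetical exports from three different systems.
csv_text = "batch_id,units\nB001,120\n"
json_text = '[{"batch_id": "B002", "units": "95"}]'
xml_text = ("<records><record><batch_id>B003</batch_id>"
            "<units>110</units></record></records>")

records = from_csv(csv_text) + from_json(json_text) + from_xml(xml_text)
total_units = sum(int(r["units"]) for r in records)
print(total_units)
```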

Q 21. How do you prioritize tasks when dealing with multiple production records requests?

Prioritizing tasks involves a combination of factors: urgency (immediate needs vs. long-term projects), business impact (high-impact projects get precedence), and resource availability (balancing workload). I typically use a project management methodology like Agile to manage multiple requests, breaking down larger tasks into smaller, manageable chunks. This allows for flexibility and adaptability to changing priorities. A well-defined prioritization system ensures that the most critical requests are addressed promptly and efficiently, maximizing the value delivered to the business.

Q 22. Describe your experience with different database management systems.

Throughout my career, I’ve worked extensively with various database management systems (DBMS), each offering unique strengths and challenges. My experience spans relational databases like MySQL, PostgreSQL, and SQL Server, as well as NoSQL databases such as MongoDB and Cassandra. The choice of DBMS often depends on the specific needs of the project. For instance, relational databases excel in managing structured data with well-defined relationships, ideal for production records with clearly defined fields like timestamps, machine IDs, and product codes. Conversely, NoSQL databases are better suited for handling large volumes of unstructured or semi-structured data, which can be useful for incorporating sensor readings or log files into the analysis. My proficiency includes not just querying and retrieving data, but also designing efficient database schemas, optimizing query performance, and ensuring data integrity.

For example, in a previous role, we migrated our production records from a legacy SQL Server database to a cloud-based PostgreSQL instance. This required careful planning to ensure minimal downtime and data accuracy. The process involved schema design, data migration strategies, and extensive testing to validate data integrity post-migration. We also implemented data monitoring to catch and address any inconsistencies.

Q 23. How do you contribute to a team’s effectiveness in production records analysis?

My contribution to a team’s effectiveness in production records analysis is multifaceted. I start by fostering a collaborative environment, ensuring open communication and knowledge sharing. I believe in breaking down complex problems into manageable tasks, delegating responsibilities effectively based on team members’ expertise, and providing constructive feedback. My strong analytical skills enable me to identify crucial insights hidden within the data, while my experience with data visualization tools allows me to effectively communicate those findings to both technical and non-technical stakeholders.

Specifically, I lead the definition of analysis objectives, helping the team focus on the most impactful questions. I also contribute significantly to data quality by implementing robust data validation procedures. Finally, I actively participate in the development of reports and dashboards, ensuring the information is clear, concise, and actionable for decision-making.

Q 24. What is your experience with data cleaning and transformation techniques?

Data cleaning and transformation are fundamental to accurate analysis. My experience encompasses a wide range of techniques, starting with identifying and handling missing values (imputation using mean, median, or more sophisticated methods like k-Nearest Neighbors). I’m proficient in dealing with outliers through statistical methods or by examining the root cause of the outlier. Data standardization and normalization are also crucial; I regularly employ techniques like z-score normalization or min-max scaling to prepare data for analysis. String manipulation, data type conversion, and date/time formatting are all routine parts of my workflow.

For instance, in one project, we encountered production records with inconsistent date formats. Using Python’s pandas library and regular expressions, I developed a script to automatically detect and correct the various date formats, ensuring data consistency and preventing errors in subsequent analyses.

    # Example Python code snippet (simplified)
    import pandas as pd

    df = pd.DataFrame({'Date': ['03/14/2024', '2024-03-15']})  # sample data
    # Parse the expected format; values that do not match become NaT for review
    df['Date'] = pd.to_datetime(df['Date'], format='%m/%d/%Y', errors='coerce')
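The simplified pandas call handles a single format. A rough standard-library sketch of the multi-format case is shown below: each value is tried against a list of known formats, and anything that matches none of them is returned as `None` for manual review. The list of formats is an assumption, and ambiguous values such as 03/04/2024 are resolved by whichever format comes first in the list.

```python
from datetime import datetime

# Assumed formats seen in the records; order decides how ambiguous dates parse.
KNOWN_FORMATS = ("%m/%d/%Y", "%Y-%m-%d", "%d.%m.%Y")

def parse_date(text):
    """Return a date for the first matching format, or None if none match."""
    cleaned = text.strip()
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).date()
        except ValueError:
            continue
    return None

raw_dates = ["03/14/2024", "2024-03-15", "16.03.2024", "mid-March"]
parsed = [parse_date(d) for d in raw_dates]
unparsed = [d for d, p in zip(raw_dates, parsed) if p is None]
print(parsed, unparsed)
```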

Q 25. Explain how you stay current with best practices in data analysis and production records management.

Staying current in data analysis and production records management is an ongoing process. I actively participate in online courses and workshops offered by platforms like Coursera and edX, focusing on advanced analytics techniques and new tools. I regularly follow industry blogs, publications, and journals dedicated to data science and manufacturing analytics. Attending conferences and webinars provides valuable insights into best practices and emerging trends. Further, I actively engage with professional organizations dedicated to data science and manufacturing, networking with peers and participating in discussions to share knowledge and learn from others’ experiences.

Membership in professional organizations such as the Institute of Industrial and Systems Engineers (IISE, formerly IIE) or similar groups helps me to remain informed about the latest advancements and standards. I also maintain a curated list of relevant resources – articles, tutorials, and research papers – which I regularly review.

Q 26. Describe a situation where you had to solve a complex problem using production records.

In a previous role, we faced a significant production slowdown due to an unexpectedly high defect rate in a particular product line. An initial review of the production records showed no clear pattern. On closer examination, I discovered a correlation between the defect rate and specific shifts and machine operators. Through further investigation, we found that a faulty machine component, not initially flagged in standard maintenance records, was the culprit. This component was causing subtle variations in the manufacturing process, undetectable by traditional quality control methods but evident in the detailed production records after more extensive analysis. By identifying the faulty component, we were able to rectify the issue, leading to a significant increase in production efficiency and a reduction in defective products. This case highlighted the importance of detailed records and the power of data analysis in solving complex problems.

Q 27. How would you handle conflicting data within production records?

Handling conflicting data in production records requires a methodical approach. The first step is to identify the source of the conflict, which might stem from human error (data entry mistakes), equipment malfunctions (faulty sensors), or software glitches. Next, I would prioritize the data source based on its reliability and accuracy. If possible, I’d cross-reference the conflicting information with other data sources to confirm its accuracy, for example by comparing sensor readings with manual quality control inspections. If no definitive resolution is found, I would flag the conflicting data points, document the discrepancies, and consult with stakeholders to determine the best course of action, possibly using statistical methods to determine the most probable value.

Sometimes, it is necessary to use data imputation techniques to fill in missing or incorrect values, but this requires careful consideration and transparent documentation. Ultimately, transparency and accurate record-keeping are essential for avoiding future conflicts and maintaining data integrity.
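The idea of ranking sources by reliability while still flagging disagreements can be sketched as below. The source names, their ranking, and the tolerance are hypothetical; a real ranking would come from the stakeholders who know each system.

```python
# Hypothetical ranking, most trusted first.
SOURCE_PRIORITY = ["qc_inspection", "mes", "sensor"]

def resolve(readings, tolerance):
    """Pick the value from the most trusted available source.

    readings maps source name -> value (or None if unavailable).
    Returns (value, source, conflict), where conflict is True when the
    available sources disagree by more than the tolerance.
    """
    available = [(s, readings[s]) for s in SOURCE_PRIORITY
                 if readings.get(s) is not None]
    if not available:
        return None, None, False
    values = [v for _, v in available]
    conflict = max(values) - min(values) > tolerance
    source, value = available[0]
    return value, source, conflict

result = resolve({"sensor": 101.0, "qc_inspection": 95.0}, tolerance=2.0)
print(result)
```

Returning the conflict flag alongside the chosen value keeps the discrepancy visible for documentation instead of silently overwriting it.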

Q 28. What are your salary expectations for this role?

My salary expectations for this role are in the range of [Insert Salary Range]. This is based on my experience, skills, and the requirements of this position. I am open to discussing this further and am confident that my contributions would significantly benefit your organization.

Key Topics to Learn for Production Records Analysis Interview

- Data Collection & Management: Understanding various methods of production data collection (e.g., manual entry, automated systems, sensors), data cleaning techniques, and database management principles relevant to production records.

- Statistical Analysis & Interpretation: Applying statistical methods (e.g., descriptive statistics, regression analysis, hypothesis testing) to analyze production data, identify trends, and draw meaningful conclusions. Practical application includes identifying bottlenecks in the production process.

- Quality Control & Improvement: Utilizing production records to monitor product quality, identify defects, and implement process improvements. This includes understanding control charts and other quality management tools.

- Production Efficiency & Optimization: Analyzing production data to identify areas for efficiency improvement, such as reducing downtime, optimizing resource allocation, and improving overall productivity. This involves understanding key performance indicators (KPIs) and their relationship to production records.

- Reporting & Visualization: Creating clear and concise reports and visualizations (e.g., charts, graphs, dashboards) to communicate findings and insights from production data analysis to stakeholders.

- Root Cause Analysis: Employing techniques like the 5 Whys or Fishbone diagrams to identify the root causes of production problems using data from production records.

- Software & Tools: Familiarity with relevant software and tools used for production records analysis (e.g., statistical software packages, database management systems, data visualization tools). Demonstrate understanding of data manipulation and analysis within these systems.

Next Steps

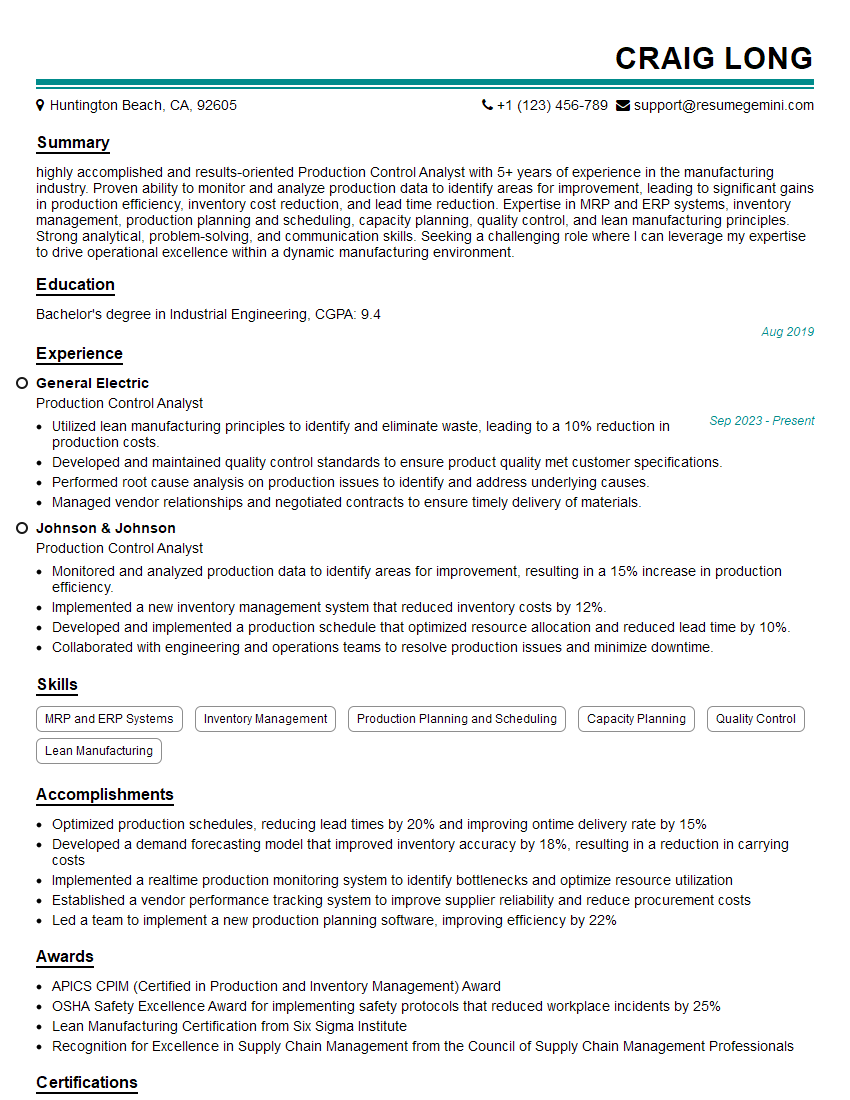

Mastering Production Records Analysis opens doors to exciting career advancements in operations management, quality control, and process improvement. Your analytical skills are highly valuable, and showcasing them effectively on your resume is crucial for securing your dream role. Creating an ATS-friendly resume is key to getting your application noticed. We strongly encourage you to leverage ResumeGemini, a trusted resource for building professional and impactful resumes. ResumeGemini provides examples of resumes tailored to Production Records Analysis, giving you a head start in presenting your skills and experience in the best possible light. Invest time in crafting a compelling narrative; it will significantly improve your job prospects.
