Interviews are more than just a Q&A session; they’re a chance to prove your worth. This blog dives into essential signal processing interview questions and expert tips to help you align your answers with what hiring managers are looking for. Start preparing to shine!

Questions Asked in Signal Processing Interviews

Q 1. Explain the Nyquist-Shannon sampling theorem.

The Nyquist-Shannon sampling theorem is a fundamental principle in signal processing that dictates the minimum sampling rate required to accurately reconstruct a continuous-time signal from its discrete-time samples. In essence, it states that to perfectly recover a band-limited signal containing frequencies up to a maximum frequency fmax, you must sample it at a rate greater than twice that frequency, i.e., at a sampling frequency fs > 2fmax (often quoted loosely as fs ≥ 2fmax). This minimum sampling rate, 2fmax, is known as the Nyquist rate.

Imagine trying to capture a spinning wheel with a camera. If you take pictures too slowly, the wheel might appear to be spinning backward or at a slower speed than it actually is. This is because you’re not capturing enough frames to represent its true motion. Similarly, if you sample a signal too slowly, you won’t capture all the information present in the original signal, leading to distortion and inaccuracies. This distortion, a consequence of undersampling, is called aliasing.

Mathematically, the theorem is based on the concept of Fourier transforms, which allow us to analyze a signal in the frequency domain. By sampling a signal, we essentially create a periodic repetition of its spectrum in the frequency domain, with copies spaced fs apart. If the sampling rate is too low, these repetitions overlap, causing aliasing and information loss. Sampling above the Nyquist rate ensures that these repetitions remain distinct, allowing perfect reconstruction with an ideal low-pass filter.

Q 2. What are the differences between FIR and IIR filters?

FIR (Finite Impulse Response) and IIR (Infinite Impulse Response) filters are two fundamental types of digital filters, differing primarily in their impulse response and the way they process input signals.

- FIR filters have a finite-duration impulse response, meaning their output settles to zero a finite number of samples after the input stops. Because they have no feedback (all poles sit at the origin), they are inherently stable: the output remains bounded for any bounded input. They can also be designed with exactly linear phase. They are typically implemented using direct-form convolution, and usually need a much higher order, and therefore more computations, than an IIR filter meeting the same frequency-response specification.

- IIR filters, on the other hand, have an infinite-duration impulse response; their output theoretically persists indefinitely after an impulse input. IIR filters can achieve a desired frequency response with a lower order than FIR filters, thus requiring fewer computations. However, this efficiency comes at the cost of potential instability, meaning their output can become unbounded for certain inputs. Careful design is essential to ensure IIR filter stability.

Consider designing a low-pass filter: an FIR filter might require many taps (coefficients) to achieve a sharp cutoff, while an IIR filter could achieve a similar response with fewer coefficients, leading to a more efficient implementation. However, the FIR filter’s guaranteed stability makes it the preferred choice in certain critical applications like medical devices, whereas IIR’s efficiency is preferable in systems with limited computing resources.
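To make the efficiency trade-off concrete, here is a minimal sketch using SciPy. The specification (band edges, ripple, attenuation) is a hypothetical example chosen for illustration, and the Kaiser-window FIR and elliptic IIR designs are just one possible pairing for the comparison.

```python
import numpy as np
from scipy import signal

# Hypothetical spec: band edges as fractions of the Nyquist frequency,
# 1 dB passband ripple, 60 dB stopband attenuation.
passband_edge, stopband_edge = 0.20, 0.25
ripple_db, atten_db = 1.0, 60.0

# FIR: Kaiser-window estimate of the taps needed for this transition width.
fir_taps, beta = signal.kaiserord(atten_db, stopband_edge - passband_edge)
fir_coeffs = signal.firwin(fir_taps, (passband_edge + stopband_edge) / 2,
                           window=("kaiser", beta))

# IIR: lowest elliptic order that meets the same band edges.
iir_order, wn = signal.ellipord(passband_edge, stopband_edge, ripple_db, atten_db)
b, a = signal.ellip(iir_order, ripple_db, atten_db, wn)

# The IIR design is only usable if every pole is inside the unit circle.
iir_stable = bool(np.all(np.abs(np.roots(a)) < 1.0))

print("FIR taps:", fir_taps)
print("IIR order:", iir_order, "stable:", iir_stable)
```

The FIR design needs far more coefficients, but it has no feedback path, so there are no poles to check.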

Q 3. Describe the Z-transform and its applications.

The Z-transform is a powerful mathematical tool used to analyze and design discrete-time systems. It transforms a discrete-time signal from the time domain to the complex frequency domain (z-domain), where ‘z’ is a complex variable. This transformation allows us to represent the signal as a polynomial or rational function of ‘z’, simplifying analysis and manipulation.

Think of it as a discrete-time equivalent of the Laplace transform for continuous-time systems. The Z-transform allows for easy manipulation of discrete-time signals, allowing us to perform operations like convolution (in the time domain) as simple multiplications in the z-domain. This simplifies system analysis significantly.

Applications of the Z-transform are abundant in digital signal processing, including:

- Filter design: Determining filter coefficients and analyzing filter stability.

- System analysis: Finding the system’s poles and zeros to understand its behavior and stability.

- Signal processing: Analyzing the frequency response of discrete-time systems.

- Control systems: Analyzing and designing digital control systems.

For example, determining the stability of a digital filter involves finding the poles of its transfer function in the z-domain. If all poles of a causal filter lie strictly inside the unit circle, the filter is stable.
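The pole check can be sketched in a few lines. The helper name `is_stable` and the example coefficients are illustrative assumptions; the denominator is assumed to be given in powers of z⁻¹ for a causal filter.

```python
import numpy as np

def is_stable(a):
    """Return True if all poles of H(z) = B(z)/A(z) are strictly inside
    the unit circle. `a` holds A(z) = a[0] + a[1] z^-1 + ... (causal filter)."""
    poles = np.roots(a)          # roots of a[0] z^N + a[1] z^(N-1) + ...
    return bool(np.all(np.abs(poles) < 1.0))

print(is_stable([1.0, -0.5]))    # pole at z = 0.5
print(is_stable([1.0, -1.5]))    # pole at z = 1.5
```

The first filter, y[n] = x[n] + 0.5·y[n−1], decays after an impulse; the second grows without bound.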

Q 4. How do you design a low-pass filter using the window method?

The window method is a simple yet effective technique for designing FIR filters. It starts from the impulse response of an ideal filter (the inverse discrete-time Fourier transform of the ideal frequency response), truncates it to a finite length, and multiplies it by a window function. The window reduces the ripples and sidelobe levels that abrupt truncation would otherwise introduce in the resulting filter’s frequency response.

Steps to design a low-pass filter using the window method:

- Specify filter parameters: Determine the desired cutoff frequency (fc), filter length (N taps), and the type of window function (e.g., Hamming, Hann, Blackman).

- Design the ideal low-pass filter: Create the ideal frequency response Hd(ω) in the frequency domain. This is typically a rectangular function that is 1 for frequencies below fc and 0 above fc.

- Obtain the impulse response: Calculate the inverse discrete-time Fourier transform (IDTFT) of the ideal frequency response Hd(ω) to obtain the corresponding impulse response, hd[n]. For a low-pass filter with cutoff ωc this is the sinc sequence hd[n] = sin(ωc n)/(πn), which is infinitely long and non-causal.

- Apply the window function: Truncate hd[n] to a finite length (N) and multiply it by a chosen window function (w[n]). The window function will smooth the transition between the passband and stopband.

- Obtain the filter coefficients: The resulting sequence, h[n] = hd[n]w[n], represents the filter coefficients.

The choice of window function significantly impacts the filter’s characteristics (tradeoff between transition width and stopband attenuation). For instance, a Hamming window provides a good balance between these factors.
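The steps above can be sketched directly in NumPy. The function name, the 1 kHz cutoff, the 8 kHz sampling rate, and the 51 taps are assumptions for illustration; the final normalization to unity DC gain is a common convenience rather than part of the method itself.

```python
import numpy as np

def lowpass_window_method(cutoff_hz, fs, num_taps):
    """FIR low-pass via the window method with a Hamming window."""
    fc = cutoff_hz / fs                        # cutoff in cycles per sample
    n = np.arange(num_taps)
    center = (num_taps - 1) / 2                # shift to make the filter causal
    # Ideal (truncated) impulse response: sin(wc m)/(pi m) with m = n - center
    hd = 2 * fc * np.sinc(2 * fc * (n - center))
    h = hd * np.hamming(num_taps)              # apply the window
    return h / h.sum()                         # normalize DC gain to 1

h = lowpass_window_method(cutoff_hz=1000.0, fs=8000.0, num_taps=51)

# Inspect the response on a dense grid (4096-point zero-padded FFT).
response = np.abs(np.fft.rfft(h, 4096))
freqs = np.fft.rfftfreq(4096, d=1 / 8000.0)
print("gain at 200 Hz :", response[np.argmin(np.abs(freqs - 200.0))])
print("gain at 3000 Hz:", response[np.argmin(np.abs(freqs - 3000.0))])
```

Swapping `np.hamming` for `np.blackman` or `np.hanning` changes the trade-off between transition width and stopband attenuation without touching the rest of the design.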

Q 5. Explain the concept of aliasing and how to avoid it.

Aliasing is a phenomenon that occurs when a continuous-time signal is sampled at a rate below the Nyquist rate. It results in the higher frequency components of the signal appearing as lower frequencies in the sampled signal, thus causing distortion and loss of information. Imagine trying to draw a fast-spinning wheel with only a few strokes; you wouldn’t capture its true speed, instead representing it incorrectly.

For example, if a 10kHz signal is sampled at 8kHz, a component at 2kHz will appear in the sampled signal. This 2kHz component wasn’t initially present in the original signal but has been introduced due to aliasing.
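The 10 kHz example can be checked numerically. This is a small sketch; the helper `alias_frequency` is a hypothetical name, and it assumes a real-valued tone so the result is folded into the range 0 to fs/2.

```python
import numpy as np

def alias_frequency(f_signal, fs):
    """Apparent frequency (0..fs/2) of a real tone at f_signal sampled at fs."""
    f = f_signal % fs
    return min(f, fs - f)

fs = 8000.0
n = np.arange(800)                                 # 0.1 s of samples
samples = np.cos(2 * np.pi * 10_000.0 * n / fs)    # 10 kHz tone, undersampled
spectrum = np.abs(np.fft.rfft(samples))
peak_hz = np.argmax(spectrum) * fs / n.size        # location of the DFT peak

print(alias_frequency(10_000.0, fs), peak_hz)
```

Both the formula and the DFT peak land on the same alias, which is why the samples alone cannot reveal that the original tone was at 10 kHz.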

Avoiding aliasing:

- Anti-aliasing filter: Using a low-pass analog filter before sampling to attenuate frequencies above half the sampling rate. This filter eliminates high-frequency components that would otherwise cause aliasing.

- Sufficient sampling rate: Sampling at a rate significantly higher than the Nyquist rate provides a margin of safety. This prevents aliasing even if there are slight variations in the signal’s frequency content.

- Oversampling: Sampling at a much higher rate than the Nyquist rate (oversampling) allows for more effective digital filtering to remove unwanted frequencies before downsampling to the desired rate.

Properly addressing aliasing is crucial for accurate signal representation and processing.

Q 6. What are different types of window functions and their properties?

Window functions are essential tools in digital signal processing, particularly in filter design and spectral analysis. They shape the spectrum of a finite-length segment before it is analyzed (for example, with the DFT), reducing the artifacts caused by cutting the signal off abruptly. They act as a taper, smoothly reducing the amplitude towards the edges of a finite-length signal. The choice of window function depends on the desired trade-off between main lobe width (resolution) and sidelobe attenuation (leakage).

Common window functions include:

- Rectangular: Simple, but has high sidelobes leading to significant spectral leakage. Provides the best frequency resolution but the worst sidelobe attenuation.

- Hamming: Good compromise between main lobe width and sidelobe attenuation. Widely used in filter design.

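- Hanning (Hann): Same main-lobe width as Hamming. Its first sidelobe is higher (roughly −31 dB versus roughly −42 dB for Hamming), but its sidelobes fall off much faster with distance from the main lobe.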

- Blackman: Superior sidelobe attenuation compared to Hamming or Hanning, but with a wider main lobe.

- Kaiser: Provides a flexible trade-off between main lobe width and sidelobe attenuation, controlled by a shape parameter.

Each window function has specific properties. The rectangular window offers the highest resolution but suffers from significant spectral leakage. The other windows (Hamming, Hanning, Blackman, Kaiser) trade off resolution for reduced spectral leakage. The choice of window depends entirely on the application; spectral analysis might prioritize minimal leakage, whereas filter design may focus on the transition width in the frequency response.
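As a rough way to compare windows, the peak sidelobe level can be measured from a zero-padded FFT of each window. The helper name, the 64-sample length, and the oversampling factor are assumptions; the measured values vary slightly with window length.

```python
import numpy as np

def peak_sidelobe_db(window, oversample=64):
    """Highest sidelobe of a window's spectrum, in dB relative to the main lobe."""
    nfft = oversample * len(window)
    mag = np.abs(np.fft.rfft(window, nfft))
    mag_db = 20 * np.log10(mag / mag[0] + 1e-12)   # small floor avoids log(0)
    # Walk down the main lobe until the first local minimum (the first null).
    i = 0
    while i + 1 < len(mag_db) and mag_db[i + 1] < mag_db[i]:
        i += 1
    return mag_db[i:].max()                        # everything past the null

N = 64
windows = {
    "rectangular": np.ones(N),
    "hann": np.hanning(N),
    "hamming": np.hamming(N),
    "blackman": np.blackman(N),
}
levels = {name: peak_sidelobe_db(w) for name, w in windows.items()}
for name, level in levels.items():
    print(f"{name:12s} peak sidelobe: {level:6.1f} dB")
```

The ordering (rectangular worst, Blackman best) mirrors the list above; the price for the lower sidelobes is a wider main lobe.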

Q 7. Describe the Fast Fourier Transform (FFT) algorithm and its advantages.

The Fast Fourier Transform (FFT) is an efficient algorithm for computing the Discrete Fourier Transform (DFT) of a sequence. The DFT transforms a time-domain signal into the frequency domain, revealing the frequencies present in the signal and their corresponding magnitudes and phases. While the DFT can be computationally expensive for long sequences, the FFT significantly reduces the computational complexity, making it suitable for real-time signal processing applications.

The FFT utilizes a divide-and-conquer approach, recursively breaking down the DFT computation into smaller DFTs. For a sequence of length N, where N is a power of 2, the FFT algorithm requires approximately N log₂ N computations, compared to N² computations for the direct DFT. This improvement in efficiency is substantial for large N.
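The divide-and-conquer idea can be illustrated with a recursive radix-2 decimation-in-time sketch. This is a teaching version, assuming the length is a power of two; production code would call a library routine such as `numpy.fft.fft` instead.

```python
import numpy as np

def fft_radix2(x):
    """Recursive radix-2 decimation-in-time FFT (length must be a power of 2)."""
    x = np.asarray(x, dtype=complex)
    n = x.size
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    if n == 1:
        return x
    even = fft_radix2(x[0::2])                 # DFT of even-indexed samples
    odd = fft_radix2(x[1::2])                  # DFT of odd-indexed samples
    twiddle = np.exp(-2j * np.pi * np.arange(n // 2) / n)
    # Butterfly: combine the two half-length DFTs into one full-length DFT.
    return np.concatenate([even + twiddle * odd, even - twiddle * odd])

rng = np.random.default_rng(0)
x = rng.standard_normal(64)
print(np.allclose(fft_radix2(x), np.fft.fft(x)))
```

Each level of recursion does N/2 butterflies and there are log₂ N levels, which is where the N log₂ N operation count comes from.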

Advantages of the FFT:

- Computational efficiency: Reduces computation time significantly for large sequences.

- Real-time processing: Enables efficient processing of signals in real-time applications.

- Spectral analysis: Provides a clear representation of the frequency components in a signal.

- Signal processing applications: Used extensively in various signal processing tasks, including filtering, spectral estimation, and modulation/demodulation.

For instance, the FFT is crucial in audio and image processing, telecommunications, and radar systems, where efficient analysis of large data sets is necessary.

Q 8. Explain the concept of spectral leakage and how to mitigate it.

Spectral leakage is a phenomenon in digital signal processing where energy from a frequency component in a signal ‘leaks’ into adjacent frequency bins during the Discrete Fourier Transform (DFT). This happens because the DFT assumes the signal is periodic over the observation window, but real-world signals are typically not perfectly periodic. If a signal’s frequency isn’t an exact multiple of the DFT’s frequency resolution (which depends on the sampling rate and the window length), the DFT will show energy spread across several bins, distorting the true spectrum. Imagine trying to measure the height of a wave using a ruler that doesn’t perfectly align with the wave’s crests and troughs. You’ll get an inaccurate measurement, just like spectral leakage provides an inaccurate frequency representation.

Mitigation strategies include:

- Windowing: Applying a window function (like Hamming, Hanning, or Blackman) to the signal before the DFT tapers the signal’s edges to reduce the abrupt discontinuities that cause leakage. This effectively makes the signal appear more periodic within the observation window.

- Zero-padding: Adding zeros to the end of the (windowed) signal before the DFT increases the number of points in the DFT, which samples the underlying spectrum on a finer frequency grid. This makes peaks easier to locate, but it does not reduce leakage or improve the true frequency resolution, which is set by the length of the observed data. Think of it as reading the same ruler more finely rather than getting a sharper ruler.

- Choosing an appropriate sampling rate and window length: Ensure the signal is sampled at a rate more than twice the highest frequency component (Nyquist-Shannon sampling theorem) and select a window length that is appropriate for the signal characteristics. When possible, capture an integer number of signal periods so the tone falls exactly on a bin. A longer window provides better frequency resolution but smears the result if the signal is non-stationary (changing over time).

For example, analyzing an audio signal containing a pure tone might show the tone’s energy spread across many frequency bins if spectral leakage occurs. Applying a window function suppresses the leakage far from the tone, and zero-padding makes the peak location easier to read off, leading to a more accurate frequency representation.
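The effect of windowing on leakage can be seen with a tone that deliberately falls between two bins. The 20.5 Hz tone, the 256-sample record, and the choice of bin 60 as a "far away" probe are assumptions for illustration.

```python
import numpy as np

fs = 256.0
n = np.arange(256)                              # 1 Hz bin spacing
tone = np.sin(2 * np.pi * 20.5 * n / fs)        # 20.5 Hz sits between bins

def spectrum_db(x):
    """Magnitude spectrum in dB, normalized so the largest bin is 0 dB."""
    mag = np.abs(np.fft.rfft(x))
    return 20 * np.log10(mag / mag.max() + 1e-12)

rect_db = spectrum_db(tone)                           # no window (rectangular)
hann_db = spectrum_db(tone * np.hanning(tone.size))   # Hann window applied

far_bin = 60                                    # about 40 bins from the tone
print("leakage at 60 Hz, rectangular:", round(rect_db[far_bin], 1), "dB")
print("leakage at 60 Hz, Hann       :", round(hann_db[far_bin], 1), "dB")
```

The Hann window leaves far less energy in distant bins, at the cost of a slightly wider peak around the tone itself.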

Q 9. What are the different types of digital modulation techniques?

Digital modulation techniques are used to transmit digital information over analog channels, such as radio waves. They encode digital bits (0s and 1s) onto a carrier wave, altering its characteristics. The key difference between various techniques lies in how efficiently they use bandwidth and how robust they are to noise.

Common types include:

- Amplitude Shift Keying (ASK): The amplitude of the carrier wave represents the digital bits. Simple but susceptible to noise.

- Frequency Shift Keying (FSK): The frequency of the carrier wave represents the digital bits. More robust to noise than ASK.

- Phase Shift Keying (PSK): The phase of the carrier wave represents the digital bits. Can be more spectrally efficient than ASK or FSK. Variations include Binary PSK (BPSK), Quadrature PSK (QPSK), and others.

- Quadrature Amplitude Modulation (QAM): Combines ASK and PSK. The amplitude and phase of the carrier wave both represent digital bits. Highly spectrally efficient but sensitive to noise.

- Orthogonal Frequency Division Multiplexing (OFDM): Divides the transmission channel into multiple orthogonal subcarriers, each carrying a smaller portion of the data. Robust to multipath fading, making it ideal for wireless communication.

The choice of modulation scheme depends on factors like bandwidth availability, power constraints, and the required level of noise immunity.

Q 10. Explain the process of signal demodulation.

Demodulation is the reverse process of modulation. It recovers the original digital data from the modulated carrier wave. The process typically involves extracting the information encoded in the carrier’s amplitude, frequency, or phase. Think of it like reversing the encoding process.

The method used for demodulation depends on the modulation technique used in transmission. For example:

- ASK demodulation often uses a threshold detector to determine whether the received signal’s amplitude is above or below a certain threshold, representing 1 or 0 respectively.

- FSK demodulation uses a bandpass filter to separate the different frequencies corresponding to different bits.

- PSK demodulation involves comparing the phase of the received signal to reference phases to determine the transmitted bits.

- QAM demodulation requires more complex techniques, often involving matched filtering and symbol detection to recover both amplitude and phase information.

Demodulation is often followed by error correction to mitigate the effects of noise and channel impairments during transmission.
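A minimal BPSK round trip shows modulation and coherent demodulation together. The samples-per-symbol value, carrier frequency, and noise level are assumed for the sketch, and the receiver is assumed to have a perfectly synchronized reference carrier, which a real system would have to recover.

```python
import numpy as np

samples_per_symbol = 32
cycles_per_symbol = 4            # carrier cycles within each symbol (assumed)

def carrier(num_samples):
    n = np.arange(num_samples)
    return np.cos(2 * np.pi * cycles_per_symbol * n / samples_per_symbol)

def bpsk_modulate(bits):
    symbols = 2 * np.asarray(bits) - 1                 # 0 -> -1, 1 -> +1
    baseband = np.repeat(symbols, samples_per_symbol)
    return baseband * carrier(baseband.size)           # phase 0 or 180 degrees

def bpsk_demodulate(rx):
    mixed = rx * carrier(rx.size)                      # mix with the reference
    # Integrate over each symbol period, then decide on the sign.
    correlation = mixed.reshape(-1, samples_per_symbol).sum(axis=1)
    return (correlation > 0).astype(int)

rng = np.random.default_rng(0)
bits = rng.integers(0, 2, size=16)
rx = bpsk_modulate(bits) + 0.5 * rng.standard_normal(16 * samples_per_symbol)
recovered = bpsk_demodulate(rx)
print(np.array_equal(bits, recovered))
```

The mix-and-integrate step is a simple correlator; it plays the role of the matched filter mentioned for QAM.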

Q 11. Describe different types of noise and how to handle them in signal processing.

Noise is unwanted signals that interfere with the desired signal, degrading its quality and accuracy. Several types exist:

- Thermal noise (Johnson-Nyquist noise): Random fluctuations in the electron motion in any conductor at a finite temperature. It’s present in all electrical systems.

- Shot noise: Caused by the discrete nature of electric charge, arising from the random arrival of electrons or holes at a junction.

- Flicker noise (1/f noise): A frequency-dependent noise with power spectral density inversely proportional to frequency. Its origin is complex, related to various physical processes.

- Quantization noise: Introduced during analog-to-digital conversion (ADC) due to the finite precision of the ADC. This is a type of round-off error.

- Impulse noise (spikes): Short bursts of high-amplitude noise, often due to external interference.

Handling noise involves various techniques:

- Filtering: Use low-pass, high-pass, band-pass, or notch filters to remove noise outside the frequency range of the desired signal.

- Averaging: Repeating a measurement and averaging the results (signal averaging) reduces random noise. For N repetitions with uncorrelated noise, the noise standard deviation falls by a factor of about √N.

- Noise cancellation: Employing techniques that actively subtract an estimate of the noise from the received signal.

The best approach depends on the type and characteristics of the noise, and the specific application. For example, using a moving average filter can be effective for reducing random noise in a time series signal.
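The moving average mentioned above can be sketched as a short convolution. The test signal, noise level, and 11-sample width are assumptions chosen so the signal changes slowly compared with the averaging window.

```python
import numpy as np

def moving_average(x, width):
    """Smooth x with a length-`width` boxcar (equal-weight FIR filter)."""
    kernel = np.ones(width) / width
    return np.convolve(x, kernel, mode="same")

rng = np.random.default_rng(0)
n = np.arange(2000)
clean = np.sin(2 * np.pi * n / 1000)               # slowly varying signal
noisy = clean + 0.5 * rng.standard_normal(n.size)  # add white noise
smoothed = moving_average(noisy, width=11)

inner = slice(11, -11)       # ignore edges where the window runs off the data
noise_before = np.std(noisy[inner] - clean[inner])
noise_after = np.std(smoothed[inner] - clean[inner])
print(noise_before, noise_after)
```

A wider window removes more noise but also starts to blur genuine fast changes in the signal, so the width is a trade-off.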

Q 12. How do you perform signal filtering in the frequency domain?

Signal filtering in the frequency domain involves modifying the signal’s frequency components directly using the Fourier Transform. First, the time-domain signal is transformed into the frequency domain using a DFT (or FFT for speed). Then, a filter is applied to the frequency components. This is typically a multiplication of the frequency domain representation with a filter’s frequency response.

This approach allows for precise control over which frequencies are passed or attenuated. For example:

- Ideal low-pass filter: Sets the magnitude of all frequency components above a cutoff frequency to zero.

- Ideal high-pass filter: Sets the magnitude of all frequency components below a cutoff frequency to zero.

- Ideal band-pass filter: Allows only the frequencies within a specific range to pass.

- Notch filter: Attenuates or eliminates a narrow range of frequencies.

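After the filtering operation in the frequency domain, an Inverse DFT (IDFT) converts the modified frequency spectrum back to the time domain, giving the filtered signal. For long filters, this FFT-based approach is significantly more efficient than direct convolution in the time domain. Two caveats apply: multiplying DFTs corresponds to circular rather than linear convolution, so the signal usually needs zero-padding, and very long or streaming signals are normally processed block by block (overlap-add or overlap-save) rather than with one huge transform.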

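Example (conceptual Python):

    import numpy as np
    from scipy.fft import fft, ifft, fftfreq

    fs = 1024.0                              # sampling rate in Hz (assumed)
    signal = np.random.randn(1024)           # input signal

    spectrum = fft(signal)                   # DFT
    freqs = fftfreq(signal.size, d=1 / fs)   # frequency of each bin in Hz

    cutoff_frequency = 100.0                 # Hz, adjust as needed
    # Ideal low-pass mask. Using |f| keeps the negative-frequency mirror
    # as well, so the filtered signal stays real-valued.
    lowpass = (np.abs(freqs) <= cutoff_frequency).astype(float)

    filtered_spectrum = spectrum * lowpass           # apply filter
    filtered_signal = ifft(filtered_spectrum).real   # IDFT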

Q 13. What is the difference between linear and non-linear signal processing?

The core difference between linear and non-linear signal processing lies in how they handle superposition. Linear systems obey the principle of superposition: if input A produces output B and input C produces output D, then input (A+C) produces output (B+D). Non-linear systems do not obey this principle; the output for (A+C) is not necessarily (B+D).

Linear systems: These systems exhibit characteristics like scaling and additivity. Examples include filtering using linear time-invariant (LTI) systems, convolution, and correlation. Analysis and design are simplified using tools like the Fourier transform and Z-transform.

Non-linear systems: These systems introduce complexities. Examples include systems with saturation (clipping), thresholding, and rectification. Analysis is often more challenging, and tools like Volterra series are sometimes employed.

In practice:

- Linear systems are easier to model and analyze, and are suitable for many applications where superposition holds. They are well understood and many tools exist for analysis and design.

- Non-linear systems are more complex to design and analyze. While difficult, they are sometimes necessary for applications requiring specific operations that can’t be achieved linearly, such as compression or noise reduction in specific conditions.

Choosing between linear and non-linear approaches depends on the signal and the desired processing. A simple example is an amplifier; an ideal amplifier is a linear system, while one with clipping is nonlinear.
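Superposition can be probed numerically. In this sketch the helper `obeys_superposition`, the three-tap smoother, and the clipper are illustrative assumptions; note that passing the check on a few inputs is only evidence of linearity, while failing it is a proof of nonlinearity.

```python
import numpy as np

def obeys_superposition(system, x1, x2, a=2.0, b=-3.0):
    """Check system(a*x1 + b*x2) == a*system(x1) + b*system(x2) on given inputs."""
    combined_first = system(a * x1 + b * x2)
    combined_after = a * system(x1) + b * system(x2)
    return bool(np.allclose(combined_first, combined_after))

def fir_smoother(x):
    # Linear: a fixed-coefficient FIR filter
    return np.convolve(x, [0.25, 0.5, 0.25], mode="same")

def clipper(x):
    # Nonlinear: amplifier that saturates at +/-1
    return np.clip(x, -1.0, 1.0)

rng = np.random.default_rng(0)
x1, x2 = rng.standard_normal(100), rng.standard_normal(100)
print("FIR smoother:", obeys_superposition(fir_smoother, x1, x2))
print("Clipper     :", obeys_superposition(clipper, x1, x2))
```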

Q 14. Explain the concept of autocorrelation and its applications.

Autocorrelation is a measure of the similarity between a signal and a time-shifted version of itself. It quantifies how much the signal resembles itself at different lags (time shifts). A high autocorrelation value at a particular lag indicates a strong similarity between the signal and its shifted version.

Imagine sliding a copy of a waveform along itself; autocorrelation measures how well the two overlap at different positions. A periodic signal will have strong autocorrelation peaks at multiples of its period.

Applications of autocorrelation include:

- Signal detection: Identifying periodic signals buried in noise. The peaks in the autocorrelation function highlight the periodicities.

- Signal estimation: Estimating the parameters of a signal, like its period or frequency, especially useful when the signal is corrupted by noise.

- Pattern recognition: Identifying recurring patterns in signals, such as speech signals or biomedical signals.

- Time series analysis: Analyzing the temporal dependencies in a time series; detecting trends or cycles.

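For example, in radar, the closely related cross-correlation between the transmitted and received signals is used to determine the range of a target from the time delay of the correlation peak; a transmitted waveform with a sharp autocorrelation peak makes that delay easy to pin down. Similarly, in telecommunications, autocorrelation can be used to extract a periodic signal from a noisy channel.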
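As a small illustration of period estimation, the sketch below finds the strongest autocorrelation peak within a lag range. The function name and the `min_lag` and `max_lag` bounds are assumptions; in practice the search range would come from prior knowledge of plausible periods.

```python
import numpy as np

def estimate_period(x, min_lag, max_lag):
    """Estimate the period (in samples) from the largest autocorrelation peak."""
    x = x - np.mean(x)                                   # remove DC offset
    acf = np.correlate(x, x, mode="full")[len(x) - 1:]   # lags 0 .. N-1
    return min_lag + int(np.argmax(acf[min_lag:max_lag + 1]))

rng = np.random.default_rng(0)
n = np.arange(1000)
noisy = np.sin(2 * np.pi * n / 50) + 0.2 * rng.standard_normal(n.size)

period = estimate_period(noisy, min_lag=10, max_lag=80)
print("estimated period:", period, "samples")
```

Excluding the smallest lags matters because every signal is perfectly correlated with itself at lag zero.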

Q 15. What are the different types of digital signal processing architectures?

Digital signal processing (DSP) architectures can be broadly classified into general-purpose processors, specialized DSP processors, and hardware architectures like FPGAs and ASICs. Each offers different trade-offs between flexibility, performance, and power consumption.

- General-Purpose Processors (GPPs): These are versatile processors like CPUs found in computers. They are highly flexible and can run a wide variety of algorithms, but they might lack the computational efficiency of specialized DSPs for computationally intensive signal processing tasks. Think of using a Swiss Army knife – it can do many things, but not always optimally.

- Specialized DSP Processors: These are designed specifically for DSP tasks. They have features like parallel processing capabilities, optimized instruction sets, and specialized memory architectures making them highly efficient for signal processing. They are analogous to a precision tool designed for a specific job – very efficient for that task, but limited in versatility.

- Field-Programmable Gate Arrays (FPGAs): FPGAs offer a balance between flexibility and performance. You can program their logic circuits to implement custom DSP algorithms directly in hardware, resulting in very high speeds. Think of them as configurable building blocks that can be tailored to your exact needs.

- Application-Specific Integrated Circuits (ASICs): ASICs are highly specialized and designed for a single, specific application. They offer the highest performance but are the least flexible and most expensive to develop. They’re like a custom-built machine, incredibly powerful but not adaptable to other tasks.

The choice of architecture depends on factors such as the specific application requirements (real-time constraints, processing power needed), budget, and development time.

Q 16. Describe your experience with different signal processing software and tools.

My experience spans several signal processing software and tools. I’m proficient in MATLAB, a widely used platform for prototyping and simulating DSP algorithms. I’ve extensively used its Signal Processing Toolbox for tasks like filtering, spectral analysis, and waveform generation. I’m also experienced with Python and its scientific computing libraries like NumPy and SciPy, offering flexibility and open-source advantages. For real-time applications, I’ve worked with LabVIEW, appreciating its graphical programming environment and excellent interface with hardware. Finally, I have experience with dedicated DSP software packages from manufacturers like Texas Instruments, enabling code optimization for their specific hardware.

For example, in a previous project involving audio signal processing, I used MATLAB to design an adaptive noise cancellation filter, verified its performance through simulations, and then implemented the optimized code on a Texas Instruments DSP for real-time processing on embedded hardware.

Q 17. How would you approach designing a system to detect a specific signal in noisy data?

Detecting a specific signal in noisy data is a common signal processing challenge. The approach involves several steps:

- Signal Characterization: First, thoroughly understand the characteristics of the target signal – its frequency content, amplitude, and any unique features. This helps choose the right signal processing techniques.

- Noise Characterization: Analyze the noise in the data. Is it white noise (roughly equal power at all frequencies), colored noise (power concentrated in specific frequency ranges), or some other type of noise?

- Signal Filtering: Employ appropriate filtering techniques. If the signal and noise occupy different frequency bands, a bandpass filter centered around the signal’s frequency can be highly effective. For more complex scenarios, adaptive filters (discussed in a later answer) may be necessary.

- Signal Enhancement: Techniques like wavelet transforms or matched filtering can enhance the signal-to-noise ratio (SNR), making the signal more prominent.

- Signal Detection: Finally, implement a detection algorithm based on thresholds or statistical tests to determine the presence or absence of the signal.

For example, detecting a specific radio frequency in noisy channel data would involve bandpass filtering to isolate the frequency band, followed by thresholding to determine if a signal exists above the noise floor.
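When the shape of the target signal is known, matched filtering followed by a threshold is a common detection approach. The sketch below assumes a hypothetical 64-chip ±1 template, white Gaussian noise with a known standard deviation, and a 5-sigma threshold; all of these are illustrative choices.

```python
import numpy as np

rng = np.random.default_rng(0)
noise_std = 0.5
template = rng.choice([-1.0, 1.0], size=64)      # known signal shape (assumed)
true_offset = 300

# Received data: noise everywhere, plus the template hidden at true_offset.
received = noise_std * rng.standard_normal(1000)
received[true_offset:true_offset + template.size] += template

# Matched filter: correlate the data with the known template at every offset.
score = np.correlate(received, template, mode="valid")

# For noise alone the score has std = noise_std * sqrt(template energy).
threshold = 5 * noise_std * np.sqrt(np.sum(template ** 2))
peak = int(np.argmax(score))
detected = bool(score[peak] > threshold)
print("detected:", detected, "at offset", peak)
```

Raising the threshold lowers the false-alarm rate but makes weak signals easier to miss, which is the usual detection trade-off.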

Q 18. Explain your understanding of adaptive filtering.

Adaptive filtering is a powerful technique where the filter’s characteristics change dynamically to optimally respond to variations in the input signal or noise. Unlike fixed filters, whose parameters are constant, adaptive filters adjust their parameters based on an iterative process. This makes them ideal for applications with non-stationary signals or noise.

The core of adaptive filtering is an adaptive algorithm, such as the Least Mean Squares (LMS) algorithm or the Recursive Least Squares (RLS) algorithm. These algorithms iteratively adjust the filter coefficients to minimize an error signal, usually the difference between the desired output and the actual filter output. The LMS algorithm is particularly simple and computationally efficient, making it suitable for real-time applications. The RLS algorithm offers faster convergence but has a higher computational burden.

A practical application is adaptive noise cancellation in hearing aids. The algorithm adapts to the changing noise environment, improving speech intelligibility. Another example is echo cancellation in telecommunications, where the filter adapts to reduce unwanted echoes.
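A compact LMS example is system identification, where the adaptive filter learns the coefficients of an unknown FIR system. The "unknown" response `h_true`, the step size, and the noise-free setup are assumptions made to keep the sketch short.

```python
import numpy as np

def lms_identify(x, d, num_taps, mu):
    """Adapt FIR weights w so that w applied to x tracks the desired signal d."""
    w = np.zeros(num_taps)
    for n in range(num_taps - 1, len(x)):
        u = x[n - num_taps + 1:n + 1][::-1]   # [x[n], x[n-1], ...]
        error = d[n] - w @ u                  # desired minus filter output
        w += mu * error * u                   # LMS weight update
    return w

rng = np.random.default_rng(0)
h_true = np.array([0.5, -0.3, 0.1])           # hypothetical unknown system
x = rng.standard_normal(2000)                 # white excitation
d = np.convolve(x, h_true)[:x.size]           # output of the unknown system

w_est = lms_identify(x, d, num_taps=3, mu=0.05)
print(np.round(w_est, 3))
```

A larger step size `mu` converges faster but becomes noisier and can diverge, which is the main tuning decision in LMS.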

Q 19. Describe your experience with different types of signal processing algorithms.

My experience encompasses a broad range of signal processing algorithms. I’m proficient in:

- Filtering techniques: FIR and IIR filters, adaptive filters (LMS, RLS), wavelet filters, Kalman filters.

- Spectral analysis: Fast Fourier Transform (FFT), Short-Time Fourier Transform (STFT), wavelet transforms.

- Time-frequency analysis: Spectrograms, Wigner-Ville distribution.

- Signal detection and estimation: Matched filters, hypothesis testing, parameter estimation.

- Signal compression and coding: Discrete Cosine Transform (DCT), Linear Predictive Coding (LPC).

In a project involving biomedical signal processing, I used wavelet transforms for denoising EEG signals, followed by FFT-based spectral analysis to identify characteristic frequency bands associated with different brain states.

Q 20. How do you handle missing data in a signal processing application?

Missing data is a significant challenge in signal processing. Ignoring missing data can lead to inaccurate results. The approach to handling it depends on the nature and extent of the missing data. Several strategies exist:

- Interpolation: This involves estimating the missing data points based on the surrounding known data. Linear interpolation, spline interpolation, and more sophisticated methods like Kalman filtering can be used. The choice depends on the characteristics of the signal and the amount of missing data.

- Data imputation: More sophisticated methods of estimation that consider statistical properties of the data might be applied. Methods like k-Nearest Neighbors imputation or expectation-maximization algorithms provide robust solutions.

- Inpainting: This technique is used for images, but can be extended to other signals. It takes into account the surrounding structure and context to fill in the missing data.

- Model-based approaches: If you have a model of the signal generation process, you can integrate this information into the data reconstruction process.

The best approach depends on the specific application and the amount of missing data. For example, in a sensor network with occasional data dropouts, I’ve used linear interpolation for small gaps, while for larger gaps I’ve explored model-based approaches and incorporated redundancy and prediction schemes.
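For small gaps, linear interpolation takes only a few lines. This sketch assumes missing samples are marked as NaN and that the signal is uniformly sampled; the function name is illustrative.

```python
import numpy as np

def fill_gaps_linear(x):
    """Replace NaN samples by linear interpolation between known neighbors."""
    x = np.asarray(x, dtype=float)
    idx = np.arange(x.size)
    known = ~np.isnan(x)
    filled = x.copy()
    # np.interp evaluates the piecewise-linear curve through the known points.
    filled[~known] = np.interp(idx[~known], idx[known], x[known])
    return filled

samples = np.array([0.0, 1.0, np.nan, np.nan, 4.0, 5.0, np.nan, 7.0])
filled = fill_gaps_linear(samples)
print(filled)
```

Gaps at the very start or end are filled with the nearest known value, because `np.interp` holds the end points constant outside the known range.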

Q 21. Explain your experience with real-time signal processing.

Real-time signal processing requires careful consideration of computational constraints and latency requirements. Algorithms must be optimized for speed and efficiency. I have extensive experience developing real-time systems, focusing on aspects such as:

- Algorithm optimization: Choosing computationally efficient algorithms and implementing them using optimized code (e.g., using assembly language or dedicated DSP libraries).

- Hardware selection: Selecting appropriate hardware with sufficient processing power and memory to meet real-time constraints.

- Data buffering: Using efficient data buffering techniques to handle continuous data streams and prevent data loss.

- Parallel processing: Utilizing parallel processing techniques to improve processing speed, which is often necessary for real-time applications involving high data rates.

For instance, in a project involving real-time video processing, I used optimized code on a GPU to achieve the necessary frame rates, ensuring low latency for video effects.
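The data buffering point can be illustrated with block-by-block filtering that carries the filter state from one block to the next. The Butterworth filter, the 128-sample block size, and the random input are assumptions; the key idea is that the streamed result matches filtering the whole signal at once.

```python
import numpy as np
from scipy import signal

b, a = signal.butter(4, 0.2)                  # example low-pass filter
rng = np.random.default_rng(0)
stream = rng.standard_normal(1024)            # stands in for incoming data

state = np.zeros(max(len(a), len(b)) - 1)     # filter starts at rest
chunks = []
for start in range(0, stream.size, 128):
    block = stream[start:start + 128]         # one buffer of new samples
    # Passing zi in and keeping the returned state avoids glitches at edges.
    out, state = signal.lfilter(b, a, block, zi=state)
    chunks.append(out)
streamed = np.concatenate(chunks)

batch = signal.lfilter(b, a, stream)          # reference: all data at once
print(np.allclose(streamed, batch))
```

The block size sets the latency: smaller blocks respond sooner but incur more per-call overhead.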

Q 22. What are your preferred methods for signal compression?

Signal compression aims to reduce the size of a signal while preserving its essential information. My preferred methods depend heavily on the characteristics of the signal and the acceptable level of distortion. For lossless compression, I often use techniques like run-length encoding (RLE) for signals with many repeating values or entropy coding methods like Huffman coding or arithmetic coding which exploit the statistical redundancies in the data. For lossy compression, where some information loss is acceptable to achieve higher compression ratios, I favor transform coding. This involves transforming the signal into a different domain (like frequency using Discrete Cosine Transform (DCT) or Discrete Fourier Transform (DFT)), then quantizing and encoding only the most significant coefficients. DCT is widely used in JPEG image compression, for instance. Wavelet transforms (discussed in the next question) offer another powerful approach for lossy compression, particularly effective for signals with transient features.

The choice between lossless and lossy compression is a trade-off. Lossless methods ensure perfect reconstruction, crucial for applications like medical imaging where accuracy is paramount. Lossy methods, while introducing some error, allow for much smaller file sizes, beneficial for applications like audio or video streaming where bandwidth is limited.
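To make the lossless/lossy distinction concrete, here is a small sketch of run-length encoding alongside a simplified form of DCT transform coding. The function names are hypothetical, the DCT is computed directly in O(N²) for readability rather than with a fast algorithm, and "keep the largest coefficients" stands in for the quantization and entropy-coding stages that a real codec such as JPEG would use.

```python
import math


def rle_encode(values):
    """Lossless run-length encoding: a list of (value, run_length) pairs."""
    runs = []
    for v in values:
        if runs and runs[-1][0] == v:
            runs[-1] = (v, runs[-1][1] + 1)
        else:
            runs.append((v, 1))
    return runs


def rle_decode(runs):
    """Exact inverse of rle_encode."""
    out = []
    for v, n in runs:
        out.extend([v] * n)
    return out


def _scale(k, n):
    # Orthonormal scaling so that idct(dct(x)) reproduces x.
    return math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)


def dct(x):
    """Orthonormal DCT-II, computed directly for clarity."""
    n = len(x)
    return [
        _scale(k, n) * sum(x[i] * math.cos(math.pi * (i + 0.5) * k / n) for i in range(n))
        for k in range(n)
    ]


def idct(c):
    """Inverse of dct() above (orthonormal DCT-III)."""
    n = len(c)
    return [
        sum(_scale(k, n) * c[k] * math.cos(math.pi * (i + 0.5) * k / n) for k in range(n))
        for i in range(n)
    ]


def compress_lossy(x, keep):
    """Zero all but the `keep` largest-magnitude DCT coefficients, then invert."""
    c = dct(x)
    order = sorted(range(len(c)), key=lambda k: abs(c[k]), reverse=True)
    kept = set(order[:keep])
    return idct([c[k] if k in kept else 0.0 for k in range(len(c))])
```

`rle_decode(rle_encode(x))` always returns `x` exactly, whereas `compress_lossy(x, keep)` returns only an approximation whenever `keep` is smaller than the number of non-negligible coefficients. That is the trade-off described above.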

Q 23. Describe your understanding of wavelet transforms and their applications.

Wavelet transforms decompose a signal into components at different scales and positions in time. The Fourier Transform, by contrast, uses sinusoids that span the entire signal, so it shows which frequencies are present but not when they occur. Think of a wavelet transform as zooming in and out of a picture to examine details at different levels of resolution. This multi-resolution analysis is particularly powerful for representing non-stationary signals, whose frequency content changes over time, a common feature of real-world signals like speech or seismic data. Wavelet transforms use a set of basis functions called wavelets, which are localized in both time and frequency. This localization represents transient events better than the Fourier transform, where a sudden change spreads across the entire frequency spectrum.

Applications of wavelet transforms are numerous. In image compression, JPEG 2000 uses a wavelet transform and, at low bit rates, typically avoids the blocking artifacts of block-based DCT coding. In denoising, wavelets can remove noise by shrinking or zeroing the small coefficients in the wavelet domain, which mostly represent noise. They are also used in feature extraction for pattern recognition and in signal analysis in fields such as biomedical signal processing (ECG analysis) and geophysical signal processing (seismic signal analysis). For example, the ability of wavelets to handle discontinuities makes them well suited to analyzing seismic signals that change sharply during an earthquake.
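The localization and denoising ideas above can be sketched with a single level of the Haar wavelet transform, the simplest wavelet. The function names and the hard-threshold rule are illustrative assumptions. In practice a library such as PyWavelets, several decomposition levels, and a smoother wavelet would be more typical.

```python
import math

_SQRT2 = math.sqrt(2.0)


def haar_forward(x):
    """One level of the orthonormal Haar DWT. len(x) must be even."""
    approx = [(x[i] + x[i + 1]) / _SQRT2 for i in range(0, len(x), 2)]
    detail = [(x[i] - x[i + 1]) / _SQRT2 for i in range(0, len(x), 2)]
    return approx, detail


def haar_inverse(approx, detail):
    """Reconstruct the signal from one level of Haar coefficients."""
    x = []
    for a, d in zip(approx, detail):
        x.extend([(a + d) / _SQRT2, (a - d) / _SQRT2])
    return x


def hard_threshold(coeffs, t):
    """Zero every coefficient whose magnitude does not exceed t."""
    return [c if abs(c) > t else 0.0 for c in coeffs]


def denoise(x, t):
    """Single-level Haar denoising: threshold the detail coefficients only."""
    approx, detail = haar_forward(x)
    return haar_inverse(approx, hard_threshold(detail, t))
```

For the step signal `[1, 1, 1, 5, 5, 5, 5, 5]`, only the detail coefficient covering the jump is nonzero. This is the time localization that a Fourier basis lacks. Small fluctuations produce small detail coefficients, which `denoise` removes while keeping large ones such as edges.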

Q 24. How do you evaluate the performance of a signal processing system?

Evaluating the performance of a signal processing system depends greatly on the specific application. However, several key metrics are often used:

- Mean Squared Error (MSE): Measures the average squared difference between the original and processed signal. Lower MSE indicates better performance, particularly relevant in tasks like signal restoration or reconstruction.

- Signal-to-Noise Ratio (SNR): Compares the power of the desired signal to the power of the noise, usually expressed in decibels. A higher SNR after processing indicates better noise reduction.

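- Peak Signal-to-Noise Ratio (PSNR): The ratio of the maximum possible signal power to the MSE, expressed in decibels: PSNR = 10 log10(MAX^2 / MSE), where MAX is the largest possible sample value (for example, 255 for 8-bit images). Often used for image and video quality assessment.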

- Computational Complexity: Measures the computational resources required, considering factors like execution time and memory usage. It’s crucial for real-time applications.

- Robustness: How well the system performs in the presence of variations or uncertainties in the input signal.

Objective metrics like MSE and SNR are often complemented by subjective evaluations, especially in applications involving human perception, such as audio or image quality. Listening tests or visual quality assessments involving human observers can provide valuable insights beyond numerical metrics.
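The objective metrics listed above are short enough to write out directly. The sketch below uses hypothetical function names and assumes the reference and processed signals are equal-length sequences of numbers. For SNR, it treats the difference between the two signals as the noise, which is one common convention but not the only one.

```python
import math


def mse(reference, processed):
    """Mean squared error between two equal-length sequences."""
    return sum((r - p) ** 2 for r, p in zip(reference, processed)) / len(reference)


def snr_db(reference, processed):
    """SNR in dB, treating (reference - processed) as the noise."""
    signal_power = sum(r * r for r in reference) / len(reference)
    noise_power = mse(reference, processed)
    if noise_power == 0:
        return math.inf  # identical signals: no noise at all
    return 10.0 * math.log10(signal_power / noise_power)


def psnr_db(reference, processed, peak=255.0):
    """PSNR in dB. `peak` is the largest possible sample value (255 for 8-bit data)."""
    err = mse(reference, processed)
    if err == 0:
        return math.inf
    return 10.0 * math.log10(peak * peak / err)
```

Because PSNR depends only on the MSE and the chosen peak value, two systems with the same MSE always have the same PSNR. This is one reason perceptual evaluations remain useful alongside it.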

Q 25. Explain your experience with different types of signal processing hardware.

My experience encompasses a range of signal processing hardware, from general-purpose CPUs and GPUs to specialized digital signal processors (DSPs) and field-programmable gate arrays (FPGAs). GPUs offer excellent parallel processing capabilities, suitable for computationally intensive tasks like image processing. DSPs are optimized for signal processing operations and offer low power consumption and high efficiency, so they are often preferred in embedded systems. FPGAs offer flexibility by allowing custom hardware implementations tailored to specific algorithms. I’ve worked extensively with data acquisition systems, integrating analog-to-digital converters (ADCs) and digital-to-analog converters (DACs) for real-time signal processing. For example, in a project involving real-time audio processing, I used a DSP to implement a low-latency adaptive filter efficiently.

Q 26. Describe your experience with different programming languages for signal processing.

I’m proficient in several programming languages commonly used in signal processing. MATLAB is my primary tool, offering extensive built-in functions and toolboxes specifically designed for signal processing tasks. Python, with libraries like NumPy, SciPy, and Matplotlib, is another favorite, offering flexibility and extensive community support. I’ve also used C/C++ for applications requiring low-level control and optimization, especially when dealing with embedded systems or hardware interaction. Recently, I’ve started exploring Julia for its performance advantages in certain computationally intensive tasks.

For instance, in one project requiring real-time processing of sensor data, I used C++ for its efficiency in embedded microcontroller development, whilst utilizing MATLAB for rapid prototyping and algorithm development.

Q 27. Explain a challenging signal processing problem you faced and how you solved it.

One challenging project involved denoising biomedical signals containing artifacts from electromyography (EMG) interference. The EMG noise was non-stationary and highly correlated with the desired signal, making standard filtering techniques ineffective. My solution was a multi-stage approach combining empirical mode decomposition (EMD), which separates a signal into components according to their time scales, and wavelet thresholding, which removes small wavelet coefficients representing noise. EMD decomposed the signal into intrinsic mode functions (IMFs), each capturing oscillations at a characteristic time scale, so noise removal could be targeted at the noise-dominated IMFs. Wavelet thresholding then refined the results, suppressing residual noise. This hybrid approach significantly improved the signal-to-noise ratio and enabled accurate feature extraction, which made the subsequent diagnostic analysis substantially more reliable.

Q 28. How do you stay up-to-date with the latest advancements in signal processing?

Staying current in the rapidly evolving field of signal processing requires a multi-pronged approach. I regularly attend conferences like ICASSP and IEEE Signal Processing Society workshops, where leading researchers present their latest findings. I actively follow leading journals, such as the IEEE Transactions on Signal Processing, and subscribe to relevant newsletters and online communities. I also dedicate time to reading research papers, focusing on areas like deep learning for signal processing, sparse signal processing and advanced techniques in signal compression. Furthermore, online courses and tutorials on platforms like Coursera and edX provide valuable opportunities for continuous learning and skill enhancement.

Key Topics to Learn for Signals Processing Interview

- Signal Classification and Representation: Understand different types of signals (continuous, discrete, analog, digital) and their representations in time and frequency domains. Explore the Fourier Transform, its discrete form (the DFT), and the FFT algorithm used to compute the DFT efficiently.

- Linear Time-Invariant (LTI) Systems: Master the concepts of convolution, impulse response, frequency response, and system stability. Understand how to analyze and design LTI systems using techniques like Z-transforms and transfer functions. Practical applications include filter design and signal restoration.

- Digital Signal Processing (DSP) Fundamentals: Grasp the basics of sampling, quantization, and aliasing. Familiarize yourself with common DSP algorithms like filtering (FIR, IIR), correlation, and spectral estimation. Consider applications in audio processing, image processing, and telecommunications.

- Filtering Techniques: Deepen your understanding of different filter types (low-pass, high-pass, band-pass, band-stop), their design methods (e.g., windowing, Parks-McClellan), and their applications in noise reduction, signal separation, and feature extraction.

- Transform Techniques: Beyond the Fourier Transform, explore other transforms like Laplace Transform, Wavelet Transform, and Discrete Cosine Transform (DCT). Understand their properties and applications in various signal processing tasks.

- Practical Problem-Solving: Practice applying your theoretical knowledge to solve real-world problems. This might involve designing a filter to remove noise from a signal, analyzing a system’s response to a specific input, or developing an algorithm for feature extraction from a dataset.
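As a practice exercise tying several of these topics together (impulse response, convolution, frequency response, and window-based filter design), the following sketch designs a low-pass FIR filter with the windowed-sinc method and a Hamming window. The function names, the normalized-frequency convention (cycles per sample), and the choice of window are assumptions made for illustration. A library such as SciPy would normally be used for real designs.

```python
import math


def lowpass_fir(num_taps, cutoff):
    """Windowed-sinc low-pass FIR design.

    cutoff is in cycles/sample (0 < cutoff < 0.5); num_taps should be odd
    so the filter has a well-defined centre tap.
    """
    mid = (num_taps - 1) / 2
    taps = []
    for n in range(num_taps):
        k = n - mid
        # Ideal low-pass impulse response (a sinc), with the k == 0 limit handled.
        ideal = 2 * cutoff if k == 0 else math.sin(2 * math.pi * cutoff * k) / (math.pi * k)
        # Hamming window to truncate the sinc smoothly.
        window = 0.54 - 0.46 * math.cos(2 * math.pi * n / (num_taps - 1))
        taps.append(ideal * window)
    gain = sum(taps)
    return [t / gain for t in taps]  # normalize to unity gain at DC


def convolve(x, h):
    """Full linear convolution of x with impulse response h."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xi in enumerate(x):
        for j, hj in enumerate(h):
            y[i + j] += xi * hj
    return y


def gain_at(h, freq):
    """Magnitude of the filter's frequency response at freq (cycles/sample)."""
    re = sum(hk * math.cos(2 * math.pi * freq * k) for k, hk in enumerate(h))
    im = -sum(hk * math.sin(2 * math.pi * freq * k) for k, hk in enumerate(h))
    return math.hypot(re, im)
```

With `h = lowpass_fir(51, 0.1)`, `gain_at(h, 0.02)` is close to 1 and `gain_at(h, 0.3)` is close to 0. Filtering a signal is then `convolve(signal, h)`. Evaluating `gain_at` over a range of frequencies is a quick way to check a design before applying it.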

Next Steps

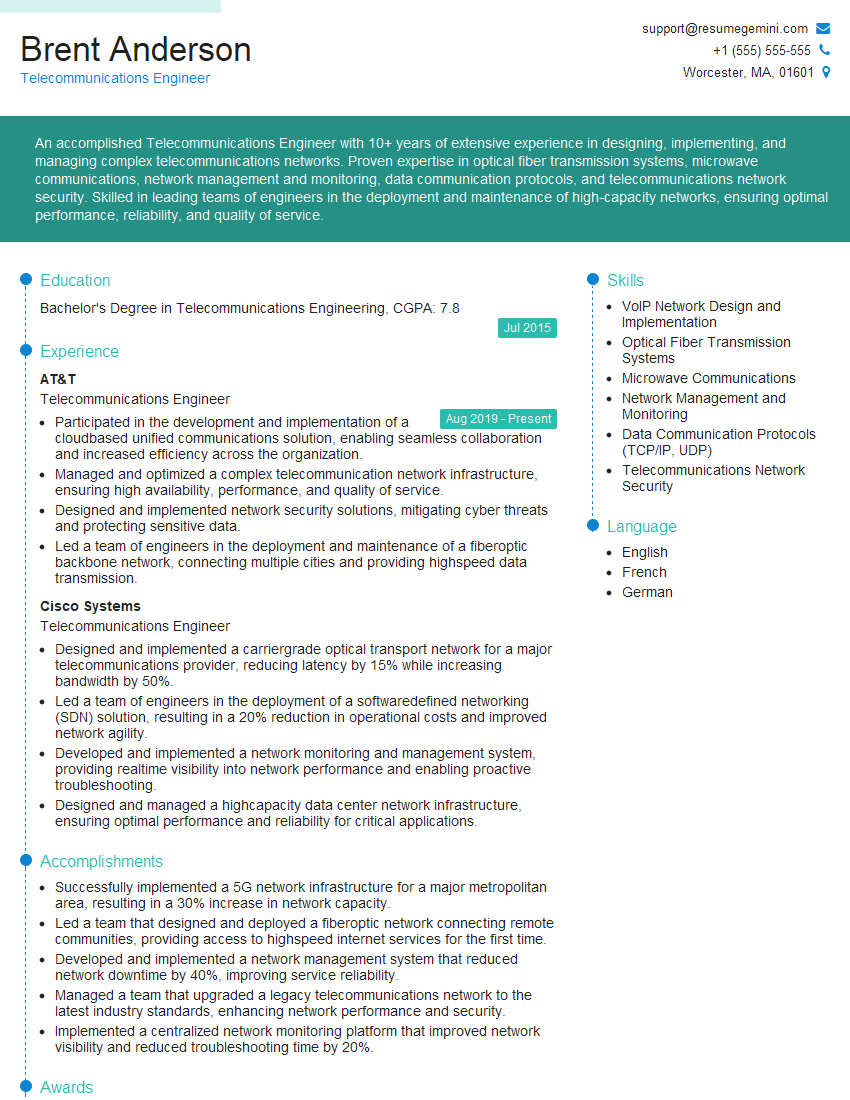

Mastering Signals Processing opens doors to exciting careers in various fields, including telecommunications, medical imaging, audio engineering, and more. A strong foundation in this area significantly enhances your job prospects and allows you to contribute meaningfully to innovative projects. To maximize your chances of landing your dream role, it’s crucial to present your skills effectively. Creating an Applicant Tracking System (ATS)-friendly resume is key to getting your application noticed. ResumeGemini is a trusted resource that can help you craft a professional and impactful resume tailored to your skills and experience. Examples of resumes specifically designed for Signals Processing professionals are available to help you get started.
