Feeling uncertain about what to expect in your upcoming interview? We’ve got you covered! This blog highlights the most important Statistical Quality Control (SQC) Techniques interview questions and provides actionable advice to help you stand out as the ideal candidate. Let’s pave the way for your success.

Questions Asked in Statistical Quality Control (SQC) Techniques Interview

Q 1. Explain the difference between common cause and assignable cause variation.

In Statistical Quality Control (SQC), understanding the root causes of variation is crucial. Common cause variation is the inherent, ever-present variability within a process that’s predictable and stable. Think of it as the ‘noise’ in the system – small, random fluctuations that are expected and are a natural part of any process, no matter how well-designed. Assignable cause variation, on the other hand, is caused by specific, identifiable factors that significantly deviate from the norm. These are ‘special’ causes, and they indicate that something unusual is happening, disrupting the process’s stability. These might include machine malfunction, operator error, or a change in raw materials.

Analogy: Imagine a perfectly tuned musical instrument. Common cause variation is the slight variation in pitch each time you play a note, caused by temperature, humidity, or small differences in the player’s finger pressure. Assignable cause variation would be a string breaking or a tuner getting knocked out of alignment: clear problems that need attention.

Q 2. Describe the control chart patterns and their interpretations.

Control charts visually represent process data over time, allowing us to identify shifts in process behavior. Several patterns signal assignable cause variation:

- Points beyond control limits: This indicates that the process is out of control and something needs investigation.

- Trends: A series of consecutive points steadily increasing or decreasing suggests a gradual drift in the process mean, for example from tool wear.

- Cycles: Recurring patterns of points, perhaps periodic increases and decreases, point to recurring issues.

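- Stratification: Points hugging the center line with unusually little variation suggest that each subgroup mixes output from different sources (e.g., different operators or shifts), which inflates the within-subgroup variation and therefore the control limits.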

- Runs: A run of consecutive points on the same side of the center line (commonly 7, 8, or 9 points, depending on the rule set) suggests a sustained shift in the process mean.

Interpretation: When a pattern emerges, investigate the potential assignable causes, that is, the factors that could explain the deviation from the norm. A useful first question is what changed when the pattern started.
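Two of these checks are easy to automate. The sketch below assumes the center line and control limits are already known and flags points beyond the limits plus runs on one side of the center line. The function name `flag_signals`, the default run length of 8, and the data are illustrative assumptions; rule sets differ in the exact run length they use.

```python
def flag_signals(values, center, ucl, lcl, run_length=8):
    """Return indices of points beyond the limits, and indices where a run of
    `run_length` consecutive points on one side of the center line is reached."""
    beyond = [i for i, x in enumerate(values) if x > ucl or x < lcl]

    run_ends = []
    side_prev, count = 0, 0
    for i, x in enumerate(values):
        # +1 above the center line, -1 below, 0 exactly on it (which breaks a run)
        side = (x > center) - (x < center)
        if side != 0 and side == side_prev:
            count += 1
        else:
            side_prev, count = side, (1 if side != 0 else 0)
        if count == run_length:
            run_ends.append(i)  # flag once, when the run first reaches the length
    return beyond, run_ends


# Hypothetical subgroup averages for a chart with center 10, UCL 13, LCL 7
xbars = [10.2, 9.8, 13.5, 10.1, 10.3, 10.4, 10.2, 10.6, 10.5, 10.3, 10.1, 9.9]
beyond, runs = flag_signals(xbars, center=10.0, ucl=13.0, lcl=7.0)
print("beyond limits:", beyond)
print("run of 8 reached at:", runs)
```

A flagged index is only a prompt to investigate; the chart cannot say what the assignable cause is.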

Q 3. How do you calculate the control limits for an X-bar and R chart?

Calculating control limits for X-bar and R charts involves using data from subgroups. Let’s assume we have ‘k’ subgroups, each with ‘n’ observations.

- Calculate the subgroup averages (X-bar): For each subgroup, calculate the average value.

- Calculate the grand average (X-double bar): This is the average of all subgroup averages.

- Calculate the average range (R-bar): This is the average of the ranges for each subgroup (the difference between the highest and lowest values).

Control Limits for X-bar Chart:

- Upper Control Limit (UCL): X-double bar + A2 * R-bar

- Lower Control Limit (LCL): X-double bar – A2 * R-bar

Control Limits for R Chart:

- UCL: D4 * R-bar

- LCL: D3 * R-bar

A2, D3, and D4 are constants obtained from control chart constants tables based on the subgroup size (n).

Example: Suppose you collect data on the diameter of a part, taking 20 subgroups of 5 parts each over the day (n = 5, k = 20). You calculate the average diameter for each subgroup, the average of those averages, and the average range. Then you look up A2, D3, and D4 for n = 5 in the constants table (A2 = 0.577, D3 = 0, D4 = 2.114) and plug them into the formulas above.
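The calculation steps above can be sketched in a few lines. This is a minimal illustration, assuming subgroups of exactly 5 (so the tabulated n = 5 constants apply); the function name `xbar_r_limits` and the diameter data are hypothetical, and only 4 subgroups are used for brevity where the example above uses 20.

```python
from statistics import fmean

# Constants for subgroups of size n = 5, from standard control chart tables
A2, D3, D4 = 0.577, 0.0, 2.114


def xbar_r_limits(subgroups):
    """Return (LCL, center, UCL) for the X-bar chart and for the R chart."""
    assert all(len(s) == 5 for s in subgroups), "constants above assume n = 5"
    xbars = [fmean(s) for s in subgroups]          # subgroup averages
    ranges = [max(s) - min(s) for s in subgroups]  # subgroup ranges
    xbarbar = fmean(xbars)                         # grand average (X-double bar)
    rbar = fmean(ranges)                           # average range (R-bar)
    xbar_chart = (xbarbar - A2 * rbar, xbarbar, xbarbar + A2 * rbar)
    r_chart = (D3 * rbar, rbar, D4 * rbar)
    return xbar_chart, r_chart


# Hypothetical diameters (mm): 4 subgroups of 5 parts
subgroups = [
    [10.0, 10.2, 9.9, 10.1, 10.0],
    [10.1, 10.0, 10.3, 9.8, 10.1],
    [9.9, 10.0, 10.1, 10.2, 9.8],
    [10.2, 10.1, 10.0, 10.3, 10.4],
]
xbar_chart, r_chart = xbar_r_limits(subgroups)
print("X-bar chart (LCL, CL, UCL):", [round(v, 4) for v in xbar_chart])
print("R chart (LCL, CL, UCL):", [round(v, 4) for v in r_chart])
```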

Q 4. Explain the concept of process capability and Cp/Cpk indices.

Process capability assesses whether a process can consistently produce outputs within the specified customer requirements (specification limits). The Cp and Cpk indices quantify this capability.

Cp (Process Capability Index): Measures the potential capability of the process, assuming the process is centered. It compares the width of the process spread (6σ) to the tolerance width (USL – LSL).

Cp = (USL - LSL) / (6σ)

Cpk (Process Capability Index): Accounts for both process spread and process centering. It’s the smaller of two values, one considering the distance to the upper specification limit and the other to the lower specification limit.

Cpk = min[(USL - μ) / (3σ), (μ - LSL) / (3σ)]

where: USL = Upper Specification Limit, LSL = Lower Specification Limit, μ = process mean, σ = process standard deviation.

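Interpretation: Higher Cp and Cpk values indicate a more capable process; a common minimum target is 1.33. A value below 1 means the process spread (for Cpk, the half of the spread on the side nearer a limit) extends beyond the specification limits, so some output will fall out of specification and improvement is needed. Because Cpk also accounts for centering, it is never larger than Cp.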
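The two formulas translate directly into code. The sketch below treats σ as known (in practice it is estimated from process data), and the specification limits and means are hypothetical values chosen to show how an off-center mean lowers Cpk while leaving Cp unchanged.

```python
def cp(usl, lsl, sigma):
    """Potential capability: tolerance width over the 6-sigma process spread."""
    return (usl - lsl) / (6 * sigma)


def cpk(usl, lsl, mu, sigma):
    """Actual capability: distance from the mean to the nearer spec limit, in units of 3 sigma."""
    return min((usl - mu) / (3 * sigma), (mu - lsl) / (3 * sigma))


# Hypothetical process: specs 4 to 10, sigma = 0.5
usl, lsl, sigma = 10.0, 4.0, 0.5
for mu in (7.0, 8.5):  # centered, then shifted toward the USL
    print(f"mu={mu}: Cp={cp(usl, lsl, sigma):.2f}, Cpk={cpk(usl, lsl, mu, sigma):.2f}")
```

Moving the mean from 7.0 to 8.5 leaves Cp at 2.00 but halves Cpk to 1.00, because the upper specification limit is now only three standard deviations away.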

Q 5. What are the benefits and limitations of using control charts?

Benefits of Control Charts:

- Early detection of problems: Control charts highlight process shifts as the data come in, allowing timely corrective action before waste and defects accumulate.

- Process monitoring and improvement: They provide a continuous record of process performance, enabling better understanding and improvement.

- Reduced variability: By identifying and addressing assignable causes, variability is reduced, enhancing quality and efficiency.

- Objective assessment: Control charts offer an objective, rule-based way to evaluate process performance, reducing bias in decision-making.

Limitations of Control Charts:

- Requires historical data: Establishing baseline control limits requires sufficient historical data representing stable process behavior.

- Not suitable for all processes: Control charts are most effective for continuous or repetitive processes.

- Interpretation requires expertise: Misinterpretation of control chart patterns can lead to incorrect actions.

- Doesn’t identify root causes directly: Control charts only signal problems; further investigation is necessary to pinpoint assignable causes.

Q 6. Describe the steps involved in a DMAIC process.

DMAIC is a structured approach for process improvement, commonly used in Six Sigma projects. It stands for Define, Measure, Analyze, Improve, Control.

- Define: Clearly define the project scope, goals, and customer requirements. What is the problem? What are the specific areas for improvement?

- Measure: Gather data to quantify the current process performance, identify key metrics, and establish a baseline.

- Analyze: Use data analysis techniques (e.g., Pareto charts, fishbone diagrams, regression analysis) to identify the root causes of defects or inefficiencies.

- Improve: Implement solutions to address the root causes identified in the analysis phase. This may involve design changes, process adjustments, or training programs.

- Control: Monitor the improved process to ensure it remains stable and within the desired limits. This often involves implementing control charts and other monitoring tools.

Example: A company wants to reduce the number of defective products. Using DMAIC, it defines the problem (high defect rate), measures the current defect rate, analyzes the reasons for defects through data analysis, improves the process by training operators and upgrading equipment, and controls the result by monitoring the defect rate with control charts.

Q 7. Explain the difference between X-bar and R charts and X-bar and s charts.

Both X-bar and R charts and X-bar and s charts are used to monitor process means and variability, but they differ in how they measure variability.

X-bar and R charts: Use the range (R) within each subgroup to estimate variability. They are easier to calculate and understand but less precise, especially for larger subgroup sizes.

X-bar and s charts: Use the standard deviation (s) of each subgroup to estimate variability. This method is more efficient and statistically powerful, especially for larger subgroup sizes (n>10), providing a more precise assessment of variability.

In summary:

- X-bar and R charts: Simpler calculation, suitable for smaller subgroup sizes (n≤10).

- X-bar and s charts: More precise and statistically efficient, preferred for larger subgroup sizes (n>10).

The choice depends on the subgroup size and the desired level of precision in variability estimation.
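For comparison with the range-based calculation, here is a minimal sketch of X-bar and s chart limits. It assumes subgroups of exactly 5 and uses the tabulated constants for n = 5 (A3 = 1.427, B3 = 0, B4 = 2.089); the function name `xbar_s_limits` and the data are hypothetical.

```python
from statistics import fmean, stdev

# Constants for subgroups of size n = 5, from standard control chart tables
A3, B3, B4 = 1.427, 0.0, 2.089


def xbar_s_limits(subgroups):
    """Return (LCL, center, UCL) for the X-bar chart and for the s chart."""
    assert all(len(s) == 5 for s in subgroups), "constants above assume n = 5"
    xbarbar = fmean(fmean(s) for s in subgroups)  # grand average
    sbar = fmean(stdev(s) for s in subgroups)     # average subgroup standard deviation
    xbar_chart = (xbarbar - A3 * sbar, xbarbar, xbarbar + A3 * sbar)
    s_chart = (B3 * sbar, sbar, B4 * sbar)
    return xbar_chart, s_chart


# Hypothetical diameters (mm): 4 subgroups of 5 parts
subgroups = [
    [10.0, 10.2, 9.9, 10.1, 10.0],
    [10.1, 10.0, 10.3, 9.8, 10.1],
    [9.9, 10.0, 10.1, 10.2, 9.8],
    [10.2, 10.1, 10.0, 10.3, 10.4],
]
xbar_chart, s_chart = xbar_s_limits(subgroups)
print("X-bar chart (LCL, CL, UCL):", [round(v, 4) for v in xbar_chart])
print("s chart (LCL, CL, UCL):", [round(v, 4) for v in s_chart])
```

With subgroups this small, the R and s approaches give similar X-bar limits; the s chart’s advantage grows as the subgroup size increases, because it uses every observation rather than only the extremes.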

Q 8. How do you select the appropriate sample size for control charts?

Selecting the appropriate sample size for control charts is crucial for effective process monitoring. Too small a sample size may fail to detect real shifts in the process, while too large a sample size can be costly and inefficient. The optimal sample size depends on several factors:

- Process Variability: A more variable process requires a larger sample size to accurately estimate the process parameters.

- Desired Sensitivity: Higher sensitivity (detecting smaller shifts in the process) requires a larger sample size.

- Frequency of Sampling: More frequent sampling allows for smaller sample sizes at each interval.

- Cost and Resources: The cost and time associated with sampling should be balanced against the benefits of improved process control.

There’s no single formula, but several approaches exist. One common method is statistical power analysis: determine the sample size needed to detect a specific shift in the process mean with a given probability. Historical data on process variability can also inform the choice. Often a combination of these approaches and practical considerations guides the final decision. For example, when monitoring a critical process with a high cost of nonconformance, you might opt for a larger sample size even though it costs more.
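The power-analysis idea can be sketched for an X-bar chart. This assumes normally distributed data with a known standard deviation, 3-sigma limits, and only the "point beyond the limits" rule; the function names and the 50% target are illustrative. After the mean shifts by `shift` standard deviations, a subgroup mean of size n falls outside the limits with probability Φ(−3 + shift·√n) + Φ(−3 − shift·√n).

```python
from math import erf, sqrt


def phi(z):
    """Standard normal CDF."""
    return 0.5 * (1 + erf(z / sqrt(2)))


def detection_prob(shift, n, L=3.0):
    """Probability that one subgroup mean falls outside L-sigma X-bar limits after
    the process mean shifts by `shift` individual-value standard deviations."""
    d = shift * sqrt(n)
    return phi(-L + d) + phi(-L - d)


def smallest_n(shift, target, max_n=100):
    """Smallest subgroup size whose per-subgroup detection probability meets `target`."""
    for n in range(1, max_n + 1):
        if detection_prob(shift, n) >= target:
            return n
    return None


for n in (1, 4, 9):
    p = detection_prob(1.0, n)
    # ARL: average number of subgroups until the shift is signaled
    print(f"n={n}: P(detect 1-sigma shift per subgroup)={p:.3f}, ARL={1 / p:.1f}")
print("n needed for a 50% chance per subgroup:", smallest_n(1.0, 0.5))
```

Larger subgroups narrow the X-bar limits, so a given shift is caught sooner; the trade-off is the inspection cost per subgroup.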

Q 9. What are some common causes of process variation?

Sources of process variation fall into two main groups: common causes (also known as ‘chance’ causes) and special causes (also known as ‘assignable’ causes).

- Common Causes: These are inherent to the process and are always present. They are small, random variations that are difficult to eliminate completely. Examples include slight variations in raw materials, minor fluctuations in ambient temperature, or normal machine wear and tear. Think of it like the normal, everyday background noise in a process.

- Special Causes: These are unusual events or factors that significantly affect the process. They are often identifiable and correctable. Examples include faulty equipment, incorrect operator settings, changes in raw material supplier, or a sudden power surge. These are like loud, disruptive events that stick out from the background noise.

Understanding the root causes of variation is essential for effective process improvement. Techniques like Pareto charts and fishbone diagrams (discussed later) help in systematically identifying and addressing these causes.

Q 10. How do you interpret a control chart with points outside the control limits?

A point outside the control limits on a control chart generally indicates a special cause of variation: something unusual has happened, causing the process to deviate significantly from its normal behavior, and the point is unlikely to be mere random fluctuation. Such a point warrants prompt investigation. Here’s a structured approach:

- Verify the Data: Double-check for data entry errors or any unusual circumstances during the sampling process that might explain the outlier.

- Investigate the Cause: Systematically investigate potential sources of variation using tools like fishbone diagrams or 5 Whys to identify the root cause of the deviation.

- Take Corrective Action: Implement appropriate corrective actions to eliminate the special cause and prevent recurrence. This might involve repairing equipment, retraining personnel, or changing the process parameters.

- Monitor the Process: Continue monitoring the process using the control chart to ensure that the corrective actions were effective and that the process is stable again.

It is important to note that even a stable process has a small probability of producing a point outside the limits by pure chance. For 3-sigma limits and normally distributed data, this is about 0.135% per limit, or 0.27% for both limits combined. Because such false alarms are rare, every point outside the control limits should still be investigated.
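The false-alarm figures follow from the normal distribution. This short check assumes normally distributed data and 3-sigma limits; it also computes the in-control average run length (ARL0), the expected number of points between false alarms.

```python
from math import erf, sqrt


def phi(z):
    """Standard normal CDF."""
    return 0.5 * (1 + erf(z / sqrt(2)))


per_limit = 1 - phi(3)       # chance a stable point lands above the UCL (same for below the LCL)
both_limits = 2 * per_limit  # chance it lands outside either limit
arl0 = 1 / both_limits       # average number of points between false alarms
print(f"per limit: {per_limit:.5f}, both: {both_limits:.4f}, ARL0: {arl0:.0f}")
```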

Q 11. What is the purpose of a Pareto chart?

A Pareto chart is a valuable tool for prioritizing problem-solving efforts by visually representing the relative frequency of different causes of defects or problems. It combines a bar chart (showing the frequency of each cause) with a line graph (showing the cumulative frequency). The causes are typically arranged in descending order of frequency, so the most significant factors are easily identified.

Imagine you’re a factory manager dealing with customer complaints. A Pareto chart would help you visualize the main sources of complaints – perhaps defective parts, late deliveries, or poor customer service. By focusing on the ‘vital few’ causes (those representing the largest portion of the cumulative frequency), you can prioritize improvement efforts for the greatest impact.
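Building the table behind a Pareto chart is a sort plus a running total. In this sketch the complaint categories and counts are hypothetical, and the 80% cutoff for the ‘vital few’ is an assumed rule of thumb, passed as a parameter so it can be changed.

```python
def pareto(counts, threshold=80.0):
    """Sort categories by count and return (rows, vital_few).

    rows: (category, count, cumulative %) in descending order of count.
    vital_few: categories added until the cumulative share reaches `threshold`.
    """
    total = sum(counts.values())
    rows, vital, running = [], [], 0
    for category, count in sorted(counts.items(), key=lambda kv: kv[1], reverse=True):
        if 100 * running / total < threshold:
            vital.append(category)
        running += count
        rows.append((category, count, 100 * running / total))
    return rows, vital


# Hypothetical complaint counts for one quarter
complaints = {
    "Late delivery": 27,
    "Poor service": 5,
    "Defective parts": 48,
    "Damaged packaging": 8,
    "Wrong item": 12,
}
rows, vital = pareto(complaints)
for category, count, cum in rows:
    print(f"{category:<18} {'#' * (count // 2):<25} {cum:5.1f}%")
print("vital few:", vital)
```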

Q 12. Explain the concept of a fishbone diagram and its use in quality control.

A fishbone diagram, also known as an Ishikawa diagram or cause-and-effect diagram, is a visual tool used to brainstorm and identify potential root causes of a problem. It’s structured like a fish skeleton, with the problem statement at the ‘head’ and potential causes branching out from the ‘bones’.

The diagram typically categorizes causes into various categories (often the 6 Ms: Manpower, Machines, Materials, Methods, Measurement, and Milieu/Environment). A team collaborates to brainstorm potential causes within each category, leading to a comprehensive analysis of the problem’s roots. Imagine diagnosing a car that won’t start. A fishbone diagram could systematically explore potential causes under categories like battery (material), spark plugs (material), starting motor (machine), and operator error (manpower).

Q 13. What are some common tools used in statistical process control?

Statistical Process Control (SPC) utilizes a range of tools to monitor and improve process performance. Some common tools include:

- Control Charts: These are the cornerstone of SPC, graphically displaying process data over time to identify trends and detect special causes of variation. Common types include X-bar and R charts, p-charts, and c-charts.

- Histograms: These show the frequency distribution of a data set, providing insights into the process’s central tendency and variability.

- Pareto Charts: Used to identify and prioritize the most significant causes of defects or problems.

- Fishbone Diagrams (Ishikawa Diagrams): Help in brainstorming and identifying potential root causes of problems.

- Scatter Diagrams: Show the relationship between two variables, helping identify potential correlations.

- Check Sheets: Simple forms used to collect data systematically.

- Flowcharts: Visual representations of processes, aiding in understanding and improving process steps.

The choice of tools depends on the specific problem and the type of data being analyzed.

Q 14. How do you calculate the process capability index (Cpk)?

The process capability index (Cpk) measures how well a process is performing relative to its specifications. It indicates the process’s ability to consistently produce output within the specified tolerance limits. A higher Cpk value indicates better capability.

Cpk is calculated as the minimum of two values: Cpu (upper specification limit capability) and Cpl (lower specification limit capability). The formula for each is:

Cpu = (USL - μ) / (3σ)

Cpl = (μ - LSL) / (3σ)

Where:

- USL = Upper Specification Limit

- LSL = Lower Specification Limit

- μ = Process Mean

- σ = Process Standard Deviation

The process standard deviation (σ) is often estimated from control chart data, for example as R-bar / d2 from an X-bar and R chart, where d2 is a tabulated constant for the subgroup size.

Example: Let’s say a process has a USL of 10, an LSL of 4, a process mean (μ) of 7, and a standard deviation (σ) of 0.5. Then:

Cpu = (10 - 7) / (3 * 0.5) = 2

Cpl = (7 - 4) / (3 * 0.5) = 2

Cpk = min(Cpu, Cpl) = 2

In this case, the Cpk is 2, indicating a very capable process. Generally, a Cpk of 1.33 or higher is considered acceptable.

Q 15. What is the difference between acceptance sampling and process control?

Acceptance sampling and process control are both crucial aspects of Statistical Quality Control (SQC), but they address different stages and goals. Acceptance sampling is a post-production technique where a sample of finished goods is inspected to determine whether an entire batch should be accepted or rejected. Think of it like a quality gate at the end of the production line. Process control, on the other hand, is a proactive approach focusing on monitoring and controlling the production process itself to prevent defects from happening in the first place. It’s like watching the machinery continuously and stepping in as soon as the data show it drifting.

Imagine a factory producing light bulbs. Acceptance sampling would involve testing a random selection of bulbs from a batch to see if they meet the required lifespan. Process control, however, would involve monitoring the temperature and pressure during bulb manufacturing, adjusting them as needed to minimize faulty bulbs.


Q 16. Describe the different types of acceptance sampling plans.

Acceptance sampling plans fall into several categories, each with its own strengths and weaknesses:

- Single Sampling Plans: A single sample is drawn, and the batch is accepted or rejected based on the number of defectives in that sample. It’s simple but usually needs a larger sample than the other plan types to give the same protection.

- Double Sampling Plans: If the result of the first sample is inconclusive, a second sample is drawn, and the decision is made based on the combined results. This can reduce the average amount of inspection, because clearly good or clearly bad lots are decided on the first sample.

- Multiple Sampling Plans: Similar to double sampling, but multiple samples can be taken sequentially until a clear decision is reached. Offers more flexibility but can be more complex.

- Sequential Sampling Plans: Items are inspected one at a time (or in small groups), and sampling continues until a decision is reached, so the sample size is not fixed in advance. For a given level of protection, this typically gives the smallest average sample size of the plan types.

The choice of plan depends on factors like the cost of inspection, the cost of accepting a bad batch, and the cost of rejecting a good batch. A risk-averse company might choose a more stringent plan, while a company focusing on speed might use a less strict plan.
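Every sampling plan can be summarized by its operating characteristic (OC) curve: the probability of accepting a lot as a function of its fraction defective. The sketch below computes points on the OC curve of a single sampling plan, assuming a binomial model (reasonable when the lot is large relative to the sample). The plan n = 50, c = 1 is hypothetical.

```python
from math import comb


def prob_accept(n, c, p):
    """Probability of accepting a lot under a single sampling plan (n, c) when the
    fraction defective is p: at most c defectives in a binomial sample of n."""
    return sum(comb(n, d) * p**d * (1 - p) ** (n - d) for d in range(c + 1))


# Hypothetical plan: inspect n = 50 items, accept the lot if at most c = 1 is defective
n, c = 50, 1
for p in (0.0, 0.01, 0.02, 0.05, 0.10):
    print(f"fraction defective {p:.2f}: P(accept) = {prob_accept(n, c, p):.3f}")
```

A stricter plan (smaller c or larger n) pulls the curve down, protecting the consumer at the cost of rejecting more good lots.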

Q 17. How do you determine the appropriate sample size for acceptance sampling?

Determining the appropriate sample size for acceptance sampling isn’t arbitrary; it depends on several key factors:

- Acceptable Quality Level (AQL): The worst fraction defective that is still considered acceptable as a process average.

- Lot Tolerance Percent Defective (LTPD): The fraction defective at which a lot is considered bad; such lots should be rejected with high probability.

- Producer’s Risk (α): The probability of rejecting a good batch, typically one at the AQL (Type I error).

- Consumer’s Risk (β): The probability of accepting a bad batch, typically one at the LTPD (Type II error).

Specialized tables and software are used to determine the sample size from these parameters. Standards such as MIL-STD-105E (whose civilian counterparts include ANSI/ASQ Z1.4 and ISO 2859-1) provide tables for specific acceptance sampling plans. In general, demanding both a low producer’s risk (little chance of rejecting good batches) and a low consumer’s risk (little chance of accepting bad batches) requires a larger sample size, because the plan must discriminate more sharply between good and bad lots.
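The trade-off can be made concrete with a direct search: find the smallest single sampling plan (n, c) whose OC curve passes through both risk points. This is a textbook-style sketch under a binomial model, not the table-lookup procedure of MIL-STD-105E; the function name `find_single_plan` and the example values (AQL 1%, LTPD 5%, α = 0.05, β = 0.10) are assumptions.

```python
from math import comb


def prob_accept(n, c, p):
    """Binomial probability of finding at most c defectives in a sample of n."""
    return sum(comb(n, d) * p**d * (1 - p) ** (n - d) for d in range(c + 1))


def find_single_plan(aql, ltpd, alpha=0.05, beta=0.10, max_n=1000):
    """Smallest n (with its acceptance number c) such that
    P(accept | AQL) >= 1 - alpha and P(accept | LTPD) <= beta."""
    for n in range(1, max_n + 1):
        for c in range(n + 1):
            if prob_accept(n, c, ltpd) > beta:
                break  # a larger c only raises P(accept) at the LTPD, so stop here
            if prob_accept(n, c, aql) >= 1 - alpha:
                return n, c
    return None


plan = find_single_plan(aql=0.01, ltpd=0.05)
print("sample size n, acceptance number c:", plan)
```

Tightening either risk (smaller α or β) or moving the AQL and LTPD closer together forces a larger n.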

Q 18. Explain the concept of a run chart and its application.

A run chart is a simple yet powerful tool for visualizing data over time. It plots individual data points sequentially, connected by a line, to identify trends, cycles, or shifts in the data. The primary purpose is to detect process instability.

For example, imagine tracking the daily number of customer complaints. A run chart would quickly highlight an upward trend that needs addressing, or show that the data vary randomly around the median, signaling a stable process. Run charts also help distinguish special cause variation, which should be investigated, from common cause variation, which is inherent in the process.
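Run charts are usually read against the median. The sketch below counts runs (unbroken stretches of points on one side of the median) and the longest run; too few runs or one very long run suggests a shift. It assumes the common convention of skipping points that fall exactly on the median; the complaint counts are hypothetical, and the decision thresholds from published run-chart rules are left out.

```python
from statistics import median


def runs_about_median(values):
    """Return (median, number of runs, longest run) for a run chart.
    Points exactly on the median are skipped."""
    m = median(values)
    sides = [1 if x > m else -1 for x in values if x != m]
    n_runs, longest, current, prev = 0, 0, 0, 0
    for s in sides:
        if s == prev:
            current += 1  # the run continues on the same side
        else:
            n_runs += 1   # a new run starts
            current, prev = 1, s
        longest = max(longest, current)
    return m, n_runs, longest


# Hypothetical daily complaint counts over 12 days
complaints = [5, 7, 4, 6, 5, 8, 9, 10, 9, 11, 12, 10]
m, n_runs, longest = runs_about_median(complaints)
print(f"median = {m}, runs = {n_runs}, longest run = {longest}")
```

Here all the early days sit below the median and all the later days above it, so only two runs appear: the pattern of an upward shift rather than random variation.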

Q 19. What is the purpose of a scatter diagram in quality control?

A scatter diagram investigates the relationship between two variables. It’s used to visually assess correlation. By plotting data points representing pairs of values for two variables, we can see if there is a positive, negative, or no correlation between them. This is useful in identifying potential root causes of quality problems.

For example, a scatter diagram could be used to explore the relationship between machine temperature and the number of defects produced. A strong positive correlation would indicate that higher temperatures are associated with more defects. Because correlation does not prove causation, the link is worth confirming (for example, with a designed experiment) before adjusting the process.
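The strength of the pattern in a scatter diagram is often summarized with the Pearson correlation coefficient r, which ranges from −1 to +1. A minimal sketch with hypothetical temperature and defect data:

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between paired observations."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


# Hypothetical paired data: machine temperature (deg C) and defects per shift
temperature = [180, 185, 190, 195, 200, 205]
defects = [3, 4, 4, 6, 7, 9]
print(f"r = {pearson_r(temperature, defects):.2f}")
```

r measures only linear association; a curved relationship can show a weak r even when the scatter diagram reveals a clear pattern, which is why the plot should always be looked at too.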

Q 20. How do you use histograms to analyze process data?

Histograms are a visual representation of the distribution of a continuous data set. They show the frequency with which different values of a variable occur. In quality control, histograms are crucial for understanding process capability and variability.

For instance, if the histogram of a manufactured part’s dimension shows a roughly normal shape centered on the target value with little spread, it suggests good process capability. A skewed distribution or a wide spread, on the other hand, indicates issues requiring investigation and correction. Histograms can be used to check visually whether the data look approximately normal (an assumption behind many capability calculations), to support capability assessment, and to compare the distribution before and after a process change.
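A histogram is a frequency count over equal-width bins. This text-based sketch uses hypothetical diameter readings; the bin start (9.90 mm) and width (0.04 mm) are arbitrary choices, and values falling outside the bins are counted separately.

```python
def histogram(values, lo, width, bins):
    """Count values into `bins` equal-width bins starting at `lo`;
    values outside the bins are counted separately."""
    counts = [0] * bins
    outside = 0
    for v in values:
        k = int((v - lo) // width)  # index of the bin containing v
        if 0 <= k < bins:
            counts[k] += 1
        else:
            outside += 1
    return counts, outside


# Hypothetical shaft diameters (mm)
diameters = [9.96, 9.99, 10.00, 10.01, 9.97, 10.03, 10.00, 9.95, 10.04, 9.99, 10.01, 10.00]
counts, outside = histogram(diameters, lo=9.90, width=0.04, bins=5)
for k, n in enumerate(counts):
    left = 9.90 + k * 0.04
    print(f"{left:.2f}-{left + 0.04:.2f} | {'#' * n}")
print("outside bins:", outside)
```

With real data, bin width matters: too few bins hide the shape, too many make it look ragged.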

Q 21. Explain the concept of statistical significance in quality control.

Statistical significance in quality control indicates that an observed effect would be unlikely to arise from random chance alone if there were no real effect. It helps determine whether changes in process data are meaningful or just random fluctuations. Hypothesis testing is the usual tool: the p-value is the probability of seeing an effect at least as large as the observed one if only chance were at work, and a result is called significant when the p-value falls below a chosen level such as 0.05.

For example, if we implement a new manufacturing process and see a decrease in defect rate, we need to determine whether this decrease is statistically significant, meaning it is unlikely to be explained by chance alone and is therefore plausibly a consequence of the new process. Statistical significance helps us make data-driven decisions about process improvements rather than relying on gut feelings.
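For the defect-rate example, one standard choice is a two-proportion z-test. The sketch below uses the pooled normal approximation (adequate when defect counts are not too small); the function name and the before/after counts are hypothetical.

```python
from math import erfc, sqrt


def two_proportion_z_test(defects_a, n_a, defects_b, n_b):
    """Two-sided z-test for a difference between two defect rates
    (pooled normal approximation)."""
    p_a, p_b = defects_a / n_a, defects_b / n_b
    pooled = (defects_a + defects_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    p_value = erfc(abs(z) / sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Hypothetical counts: 40 defects in 1,000 units before the change, 22 in 1,000 after
z, p = two_proportion_z_test(40, 1000, 22, 1000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

With these counts p is about 0.02: significant at the 0.05 level but not at 0.01, so the conclusion depends on the significance level chosen before looking at the data.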

Q 22. What is the difference between precision and accuracy?

Accuracy refers to how close a measurement is to the true value, while precision refers to how close repeated measurements are to each other. Think of shooting arrows at a target. High accuracy means your arrows land, on average, near the bullseye, even if they are spread out. High precision means your arrows are clustered tightly together, though they might all be far from the bullseye if your aim is off. In quality control, both are crucial: inaccurate measurements lead to wrong decisions, while imprecise measurements make it hard to detect trends and variations.

Example: A scale consistently reads 1 gram heavier than the actual weight (low accuracy, potentially high precision). Another scale shows wildly different readings for the same weight (low precision, likely low accuracy).
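The two ideas map onto two simple statistics for repeated measurements of a reference standard: bias (mean reading minus the true value) reflects accuracy, and standard deviation reflects precision. A sketch with a hypothetical 100 g reference weight and hypothetical readings from the two scales described above:

```python
from statistics import fmean, stdev

REFERENCE = 100.0  # hypothetical certified reference weight, grams


def bias_and_spread(readings, true_value=REFERENCE):
    """Bias (mean minus true value) reflects accuracy; standard deviation reflects precision."""
    return fmean(readings) - true_value, stdev(readings)


scale_a = [101.0, 101.1, 100.9, 101.0, 101.0]  # consistently about 1 g heavy
scale_b = [98.7, 101.9, 100.4, 99.1, 102.3]    # widely scattered readings
for name, readings in (("A", scale_a), ("B", scale_b)):
    bias, sd = bias_and_spread(readings)
    print(f"scale {name}: bias = {bias:+.2f} g, std dev = {sd:.2f} g")
```

Scale A’s bias can be corrected by calibration; scale B’s scatter cannot, since any single reading may be off by a gram or more.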

Q 23. Describe the role of measurement systems analysis (MSA) in quality control.

Measurement Systems Analysis (MSA) is a crucial part of quality control because it evaluates the capability of a measurement system to provide accurate and precise data. Without reliable measurements, any analysis or improvement efforts are built on a shaky foundation. MSA helps identify sources of variation in measurements, distinguishing between variation due to the measurement system itself (e.g., operator error, instrument drift) and variation due to actual differences in the product being measured. This allows us to determine if our measurement system is adequate for its intended purpose.

Practical Application: Imagine a manufacturing process producing screws. If our measuring instruments are inconsistent, we might incorrectly conclude there’s a problem with the manufacturing process when, in reality, the problem lies in the measurement system.

Q 24. How do you calculate Gage R&R?

Gage Repeatability and Reproducibility (Gage R&R) assesses the variability within a measurement system. It quantifies the amount of variation attributable to repeatability (variation from repeated measurements by the same appraiser using the same instrument on the same part) and reproducibility (variation from measurements of the same part by different appraisers using the same instrument). The calculation typically involves using ANOVA (Analysis of Variance) or a simpler method based on the range of measurements.

Simplified Calculation (Range Method): This method is easier to understand but less precise than ANOVA, and unlike ANOVA it cannot separate the appraiser-by-part interaction. Multiple appraisers measure the same parts multiple times. In the average-and-range approach, the average within-part range across appraisers estimates repeatability, the range of the appraiser averages estimates reproducibility, and the range of the part averages estimates part-to-part variation; these ranges are converted to standard deviations with tabulated constants. Software packages and statistical textbooks provide detailed steps and formulas for both methods.

Example: Let’s say you’re measuring the diameter of a shaft. You have 3 appraisers measure the same 10 shafts, each three times. The Gage R&R study would quantify how much of the total variation is due to the appraisers (reproducibility) and how much is due to the repeatability of the measurement process for each appraiser. This helps you decide whether the measuring system is acceptable for quality control.
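For the ANOVA method, a crossed study (every appraiser measures every part several times) can be analyzed with a two-way ANOVA including the part-by-appraiser interaction, and the mean squares converted to variance components. The sketch below follows that standard random-effects approach, setting negative variance estimates to zero. The function name `gage_rr_anova` is hypothetical, and the data are simulated (10 shafts, 3 appraisers, 3 repeats, as in the example above), not real measurements.

```python
import random
from statistics import fmean


def gage_rr_anova(data):
    """Crossed Gage R&R by two-way ANOVA with interaction.
    data[i][j] holds the r repeat readings of part i by appraiser j."""
    p, o, r = len(data), len(data[0]), len(data[0][0])
    grand = fmean(x for part in data for cell in part for x in cell)
    part_means = [fmean(x for cell in part for x in cell) for part in data]
    appr_means = [fmean(x for part in data for x in part[j]) for j in range(o)]
    cell_means = [[fmean(cell) for cell in part] for part in data]

    # Sums of squares: parts, appraisers, part x appraiser, repeat error
    ss_part = o * r * sum((m - grand) ** 2 for m in part_means)
    ss_appr = p * r * sum((m - grand) ** 2 for m in appr_means)
    ss_int = r * sum((cell_means[i][j] - part_means[i] - appr_means[j] + grand) ** 2
                     for i in range(p) for j in range(o))
    ss_err = sum((x - cell_means[i][j]) ** 2
                 for i in range(p) for j in range(o) for x in data[i][j])

    # Mean squares
    ms_part = ss_part / (p - 1)
    ms_appr = ss_appr / (o - 1)
    ms_int = ss_int / ((p - 1) * (o - 1))
    ms_err = ss_err / (p * o * (r - 1))

    # Variance components from the expected mean squares (negatives set to zero)
    repeatability = ms_err
    interaction = max(0.0, (ms_int - ms_err) / r)
    appraiser = max(0.0, (ms_appr - ms_int) / (p * r))
    part_to_part = max(0.0, (ms_part - ms_int) / (o * r))
    reproducibility = appraiser + interaction
    grr = repeatability + reproducibility
    return {
        "repeatability": repeatability,
        "reproducibility": reproducibility,
        "gage_rr": grr,
        "part_to_part": part_to_part,
        "pct_contribution": 100 * grr / (grr + part_to_part),
    }


# Simulated study: 10 shafts x 3 appraisers x 3 repeats (all values hypothetical)
random.seed(1)
true_diameters = [20.0 + 0.05 * i for i in range(10)]
appraiser_bias = [0.0, 0.01, -0.01]
data = [[[d + b + random.gauss(0, 0.005) for _ in range(3)] for b in appraiser_bias]
        for d in true_diameters]
for name, value in gage_rr_anova(data).items():
    print(f"{name}: {value:.6g}")
```

`pct_contribution` is the share of total variance due to the measurement system; a small value means the gauge can distinguish the parts well.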

Q 25. Explain the concept of a designed experiment (DOE).

A Designed Experiment (DOE) is a structured approach to experimentation where factors are systematically varied to observe their effects on a response variable. Unlike trial-and-error approaches, DOE allows efficient identification of which factors significantly impact a process or product and how they interact. This leads to optimized processes and improved product quality. DOE is more than just changing one variable at a time – it involves strategically varying multiple variables simultaneously to understand their combined effects, including interactions.

Example: Consider optimizing the baking of a cake. Factors could include baking temperature, baking time, and the amount of sugar. A DOE allows you to systematically vary these factors to determine the optimal combination that results in the best cake quality (e.g., texture, taste).

Q 26. What are some common DOE designs?

Several DOE designs exist, each suited for different situations. Some common ones include:

- Full Factorial Designs: All possible combinations of factor levels are tested. Useful for understanding all main effects and interactions, but can be expensive for many factors.

- Fractional Factorial Designs: A subset of the full factorial design, useful when many factors are involved, making the full factorial design impractical.

- Taguchi Designs: Focus on minimizing the effect of noise factors (uncontrollable variables) on the response variable.

- Response Surface Methodology (RSM): Used to optimize a response variable by fitting a surface to the data.

The choice of design depends on the number of factors, the budget, and the desired level of detail in understanding the interactions.
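The difference between a full and a fractional factorial is easy to see when the runs are listed in coded units (−1 = low level, +1 = high level). This sketch uses the three cake factors from the earlier example as hypothetical labels; the half fraction uses the generator C = AB (defining relation I = ABC), one standard choice for a 2^(3−1) design.

```python
from itertools import product

FACTORS = ("temperature", "time", "sugar")  # hypothetical cake factors; -1 = low, +1 = high


def full_factorial(k):
    """All 2**k combinations of coded levels -1/+1."""
    return [list(run) for run in product((-1, 1), repeat=k)]


def half_fraction_3():
    """2**(3-1) fractional factorial: full 2**2 in the first two factors,
    third factor set by the generator C = A*B (defining relation I = ABC)."""
    return [[a, b, a * b] for a, b in product((-1, 1), repeat=2)]


print(" | ".join(FACTORS))
for run in full_factorial(3):
    print(run)
print("half fraction:")
for run in half_fraction_3():
    print(run)
```

The half fraction needs 4 runs instead of 8, but each main effect is aliased with a two-factor interaction (for example, temperature with the time-by-sugar interaction), so the two cannot be separated from this design alone.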

Q 27. How do you analyze data from a DOE?

Analysis of DOE data typically involves statistical software packages. The goal is to determine which factors are statistically significant in affecting the response variable and estimate the magnitude of their effects. Common analytical techniques include:

- Analysis of Variance (ANOVA): Used to partition the total variation in the response variable into components attributable to different factors and their interactions.

- Regression Analysis: Used to build a model that predicts the response variable based on the levels of the factors.

- Response Surface Plots: Visual representations of the response surface, showing how the response variable changes with changes in the factors.

The analysis helps identify optimal settings for the factors to achieve desired results.
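Before any ANOVA, the basic quantity in a two-level factorial is the effect: the average response at a factor’s high level minus the average at its low level. This sketch estimates the main effects and the interaction for a hypothetical 2×2 design with one response per run (factor A could be temperature and B time).

```python
from statistics import fmean

# Hypothetical 2x2 factorial: coded levels for A and B, with one response per run
runs = [(-1, -1, 60.0), (1, -1, 72.0), (-1, 1, 54.0), (1, 1, 68.0)]


def effect(contrast):
    """Average response where the contrast column is +1 minus where it is -1."""
    hi = [y for a, b, y in runs if contrast(a, b) > 0]
    lo = [y for a, b, y in runs if contrast(a, b) < 0]
    return fmean(hi) - fmean(lo)


effects = {
    "A": effect(lambda a, b: a),
    "B": effect(lambda a, b: b),
    "AB": effect(lambda a, b: a * b),  # interaction column is the product of A and B
}
print(effects)
```

With only one run per combination there is no estimate of pure error, so judging which effects are significant requires replicated runs (for ANOVA) or another method such as a normal probability plot of the effects.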

Q 28. Explain how you would approach a quality problem in a manufacturing setting.

Addressing a quality problem in manufacturing follows a structured approach, often using DMAIC (Define, Measure, Analyze, Improve, Control) methodology:

- Define: Clearly define the problem, its impact, and the goals for improvement. Gather data to quantify the problem.

- Measure: Collect data on the process variables and the quality characteristic of interest. This step might involve using control charts to monitor the process and identify patterns.

- Analyze: Use statistical tools to identify the root causes of the problem. This could involve Pareto charts, cause-and-effect diagrams, and regression analysis.

- Improve: Implement changes based on the analysis to eliminate or reduce the root causes. This could involve process adjustments, new equipment, or operator training.

- Control: Monitor the process after improvements to ensure the problem doesn’t recur. Control charts are essential in this step.

Throughout the entire process, strong communication and collaboration with all stakeholders are crucial for success.

Key Topics to Learn for Statistical Quality Control (SQC) Techniques Interview

- Control Charts: Understanding the principles behind various control charts (e.g., X-bar and R charts, p-charts, c-charts) and their applications in monitoring process stability. This includes interpreting control chart patterns and identifying assignable causes of variation.

- Process Capability Analysis: Learn how to assess process capability using Cp, Cpk, and Pp, Ppk indices. Understand the implications of capability indices and how they relate to customer specifications.

- Acceptance Sampling: Familiarize yourself with different sampling plans (e.g., single, double, multiple sampling) and their applications in ensuring product quality before shipment. Understand the concepts of producer’s and consumer’s risk.

- Design of Experiments (DOE): Grasp the basics of DOE, including factorial designs and their application in identifying key factors influencing process output and optimizing process parameters.

- Statistical Process Control (SPC) Software: Demonstrate familiarity with commonly used SPC software packages (mentioning specific packages is optional, focus on general understanding). Be prepared to discuss your experience using such tools for data analysis and interpretation.

- Hypothesis Testing: A strong understanding of hypothesis testing methodologies, including t-tests, ANOVA, and chi-square tests, is crucial for interpreting statistical results and making data-driven decisions.

- Regression Analysis: Understand the application of regression techniques in modeling relationships between process variables and identifying significant predictors of quality characteristics.

Next Steps

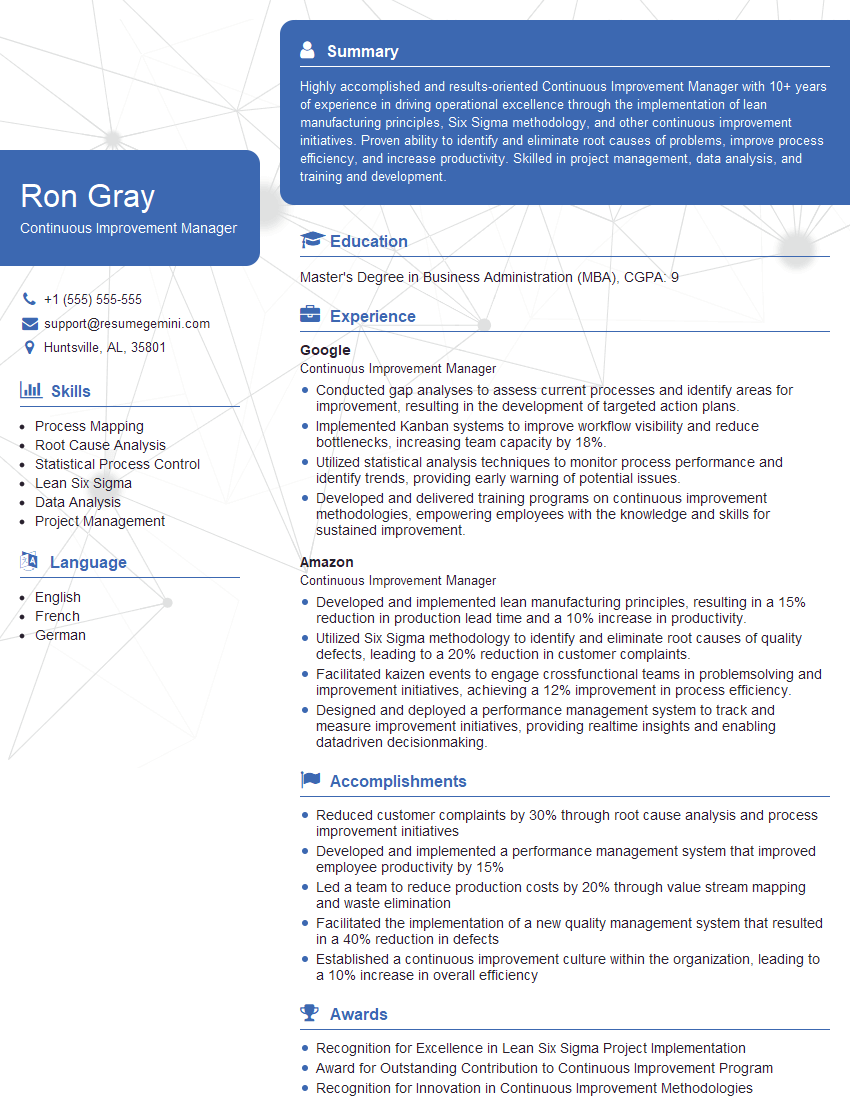

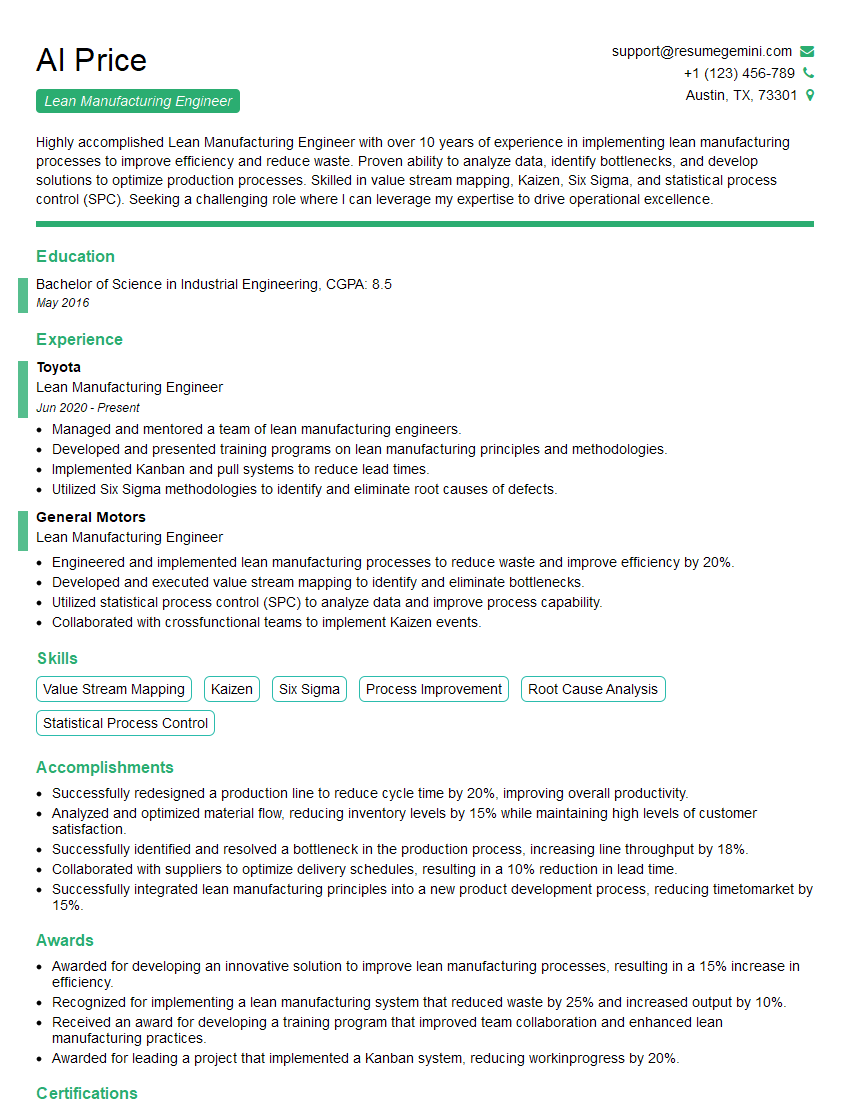

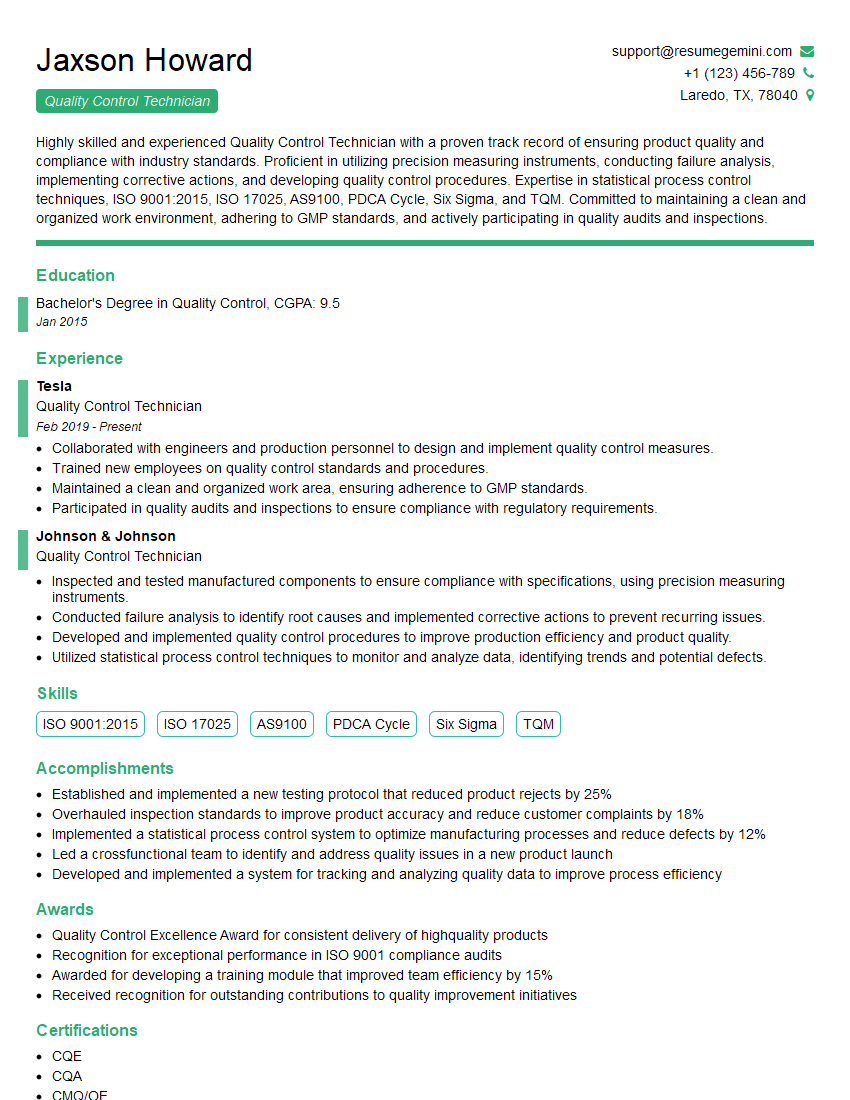

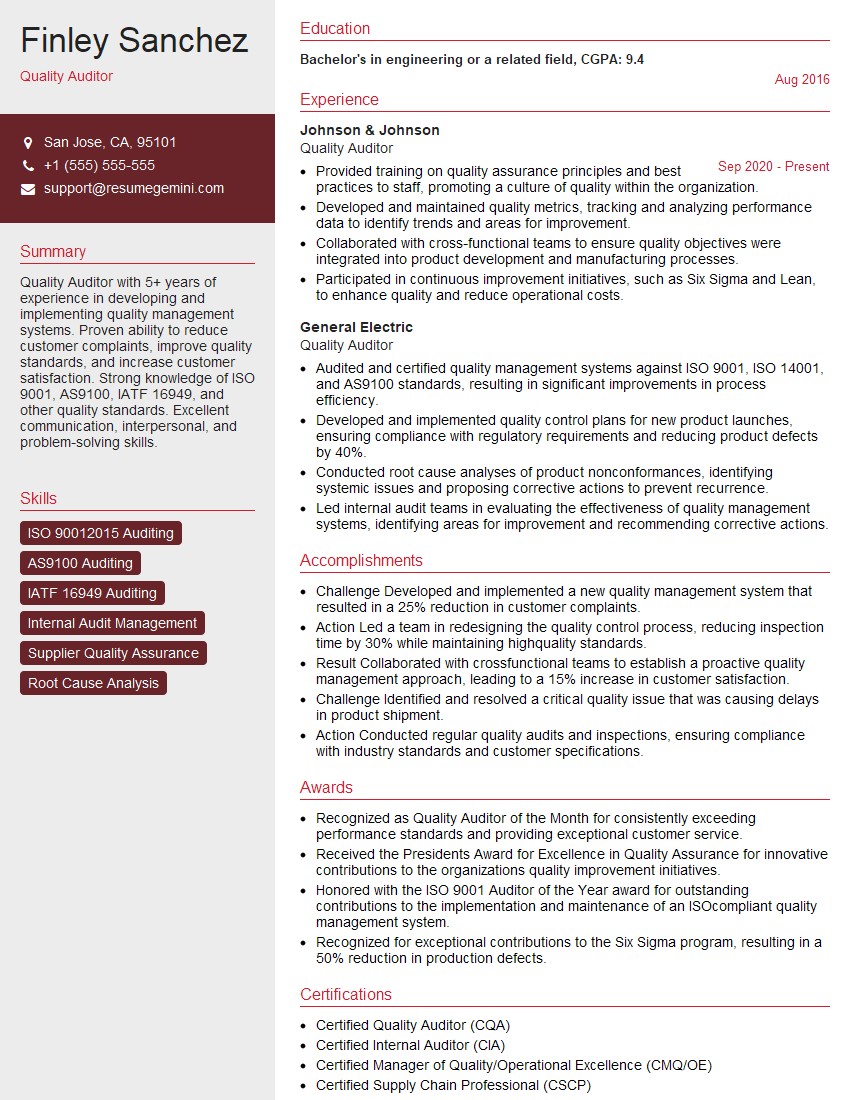

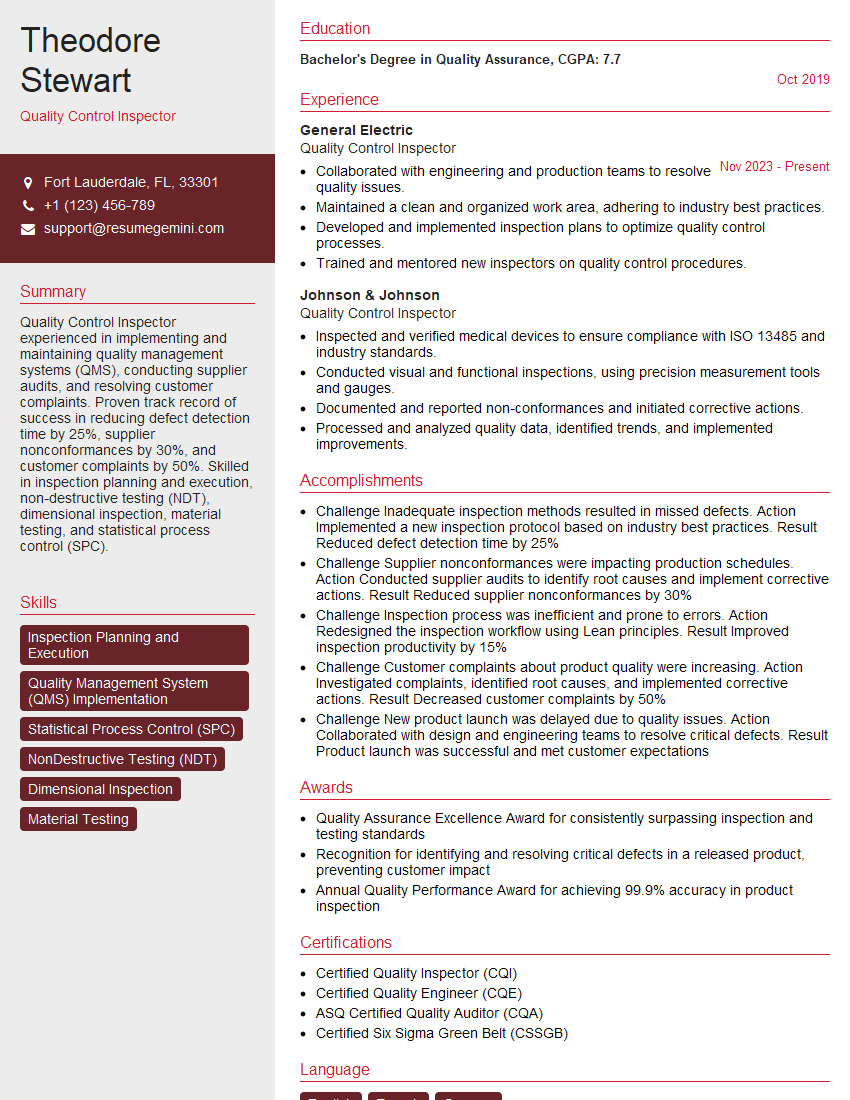

Mastering Statistical Quality Control (SQC) techniques is paramount for career advancement in various industries. A strong understanding of these techniques demonstrates your ability to improve processes, reduce defects, and enhance overall efficiency – highly sought-after skills in today’s competitive market. To significantly boost your job prospects, create an ATS-friendly resume that highlights your SQC expertise. ResumeGemini is a trusted resource that can help you build a professional and impactful resume. We provide examples of resumes tailored to Statistical Quality Control (SQC) Techniques to guide you through the process. Let ResumeGemini help you land your dream job!
