Every successful interview starts with knowing what to expect. In this blog, we’ll take you through the top Streaming Protocols (RTMP, SRT, HLS) interview questions, breaking them down with expert tips to help you deliver impactful answers. Step into your next interview fully prepared and ready to succeed.

Questions Asked in Streaming Protocols (RTMP, SRT, HLS) Interview

Q 1. Explain the differences between RTMP, SRT, and HLS.

RTMP (Real-Time Messaging Protocol), SRT (Secure Reliable Transport), and HLS (HTTP Live Streaming) are all streaming protocols, but they differ significantly in architecture, transport mechanism, and target use case. RTMP was developed by Macromedia (later Adobe) as a proprietary protocol for Flash, and Adobe later published its specification; it runs over TCP, offers low latency, and today is used mainly to send a live stream from an encoder to a server (ingest). SRT is a newer, open-source protocol that provides reliable, low-latency transmission over UDP, making it well suited to unreliable networks such as the public internet. HLS, on the other hand, is an HTTP-based protocol that delivers media as short segment files, which makes it highly compatible with devices, CDNs, and ordinary web infrastructure, at the cost of higher latency.

- RTMP: Think of it as a dedicated expressway for real-time video – fast, but potentially congested and only accessible to vehicles (clients) with the right access.

- SRT: Imagine a robust delivery truck that can navigate through rough terrain (unpredictable networks) while ensuring your package (video stream) arrives safely and on time.

- HLS: This is like a postal service – slower but incredibly reliable and accessible almost anywhere. It might take a bit longer to receive the entire message (video), but it’s more likely to reach you regardless of the delivery conditions.

Q 2. What are the advantages and disadvantages of RTMP?

Advantages of RTMP:

- Low Latency: RTMP excels at delivering low-latency streams, making it suitable for live interactions like gaming and live chat.

- Simple Implementation: Relatively straightforward to implement and integrate with streaming servers.

- Widely Supported: Has been a widely adopted protocol for years, with many servers and players supporting it.

Disadvantages of RTMP:

- Not an Open Standard: RTMP began as a proprietary Adobe protocol. Adobe later published a specification, but RTMP was never standardized by a formal standards body.

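- TCP-based: Relies on TCP, so a lost packet stalls everything queued behind it until it is retransmitted (head-of-line blocking). On lossy or long-distance links, this causes latency to build up and can lead to visible disruptions.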

- Security Concerns: Can be vulnerable if not properly secured, necessitating strong authentication and encryption measures.

- Firewall Issues: RTMP uses TCP port 1935 by default, which some firewalls and corporate networks block, unlike HTTP-based protocols that use ports 80 and 443.

Q 3. What are the advantages and disadvantages of SRT?

Advantages of SRT:

- Low Latency: Provides low-latency streaming comparable to RTMP.

- Reliable Transport: Uses UDP but incorporates robust error correction and retransmission mechanisms, making it reliable even over unstable networks.

- Security: Offers built-in security features like encryption and authentication, enhancing data protection.

- Open Source: Open-source nature fosters community development and transparency.

- Cross-Platform Compatibility: Works well across various platforms and devices.

Disadvantages of SRT:

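- Relatively New: Compared to RTMP and HLS, it has a shorter history, meaning fewer readily available resources and integrations. Web browsers, for example, cannot play SRT natively, so it is typically used between encoders and servers rather than for delivery to viewers.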

- Complexity: Can be more complex to configure and manage than RTMP.

Q 4. What are the advantages and disadvantages of HLS?

Advantages of HLS:

- Wide Compatibility: Broad support across various devices and browsers, making it ideal for reaching a large audience.

- HTTP-based: Leverages HTTP, making it highly compatible with existing web infrastructure and easily traversing firewalls.

- Adaptive Bitrate Streaming: Allows for seamless adjustment of video quality based on network conditions.

- Reliable: Robust against network issues due to its fragmented and segmented nature.

Disadvantages of HLS:

- Higher Latency: Generally higher latency compared to RTMP and SRT, making it less suitable for live interactions requiring immediate feedback.

- Overhead: Segmenting adds some overhead, because each segment carries its own container data and requires a separate HTTP request, and every rendition must be encoded and stored separately.

- Complexity for Low-Latency: Achieving low latency with HLS requires careful configuration and implementation of specific techniques like low-latency HLS (LL-HLS).

Q 5. Describe the process of streaming video using RTMP.

Streaming video using RTMP typically involves these steps:

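- Encoding: The video source is encoded into a format compatible with RTMP, most commonly H.264 video with AAC audio. The original RTMP specification does not cover H.265; carrying it requires extensions such as Enhanced RTMP, which not every server and encoder supports.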

- Publishing: An RTMP encoder (software or hardware) pushes the encoded stream to an RTMP server. This involves establishing an RTMP connection and specifying a stream key.

- Server-Side Processing: The RTMP server receives the stream, potentially transcodes it into multiple bitrates, and manages its delivery to clients.

- Playback: Clients connect to the RTMP server using an RTMP-capable player and request the stream, and the server sends the video data to the player in real time. Browser playback historically relied on Flash Player, which is no longer supported, so most services now repackage the incoming RTMP stream into HLS or DASH for delivery to viewers.

Example: An online gaming platform might use an RTMP encoder to transmit gameplay footage to its servers, which then repackage the stream and distribute it to viewers’ web browsers over HLS.
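
The publishing step can be sketched as a command line for FFmpeg, a widely used software encoder. This is a minimal illustration, not a production setup: the helper name, the server URL, and the stream key are all placeholders chosen for this example.

```python
def build_rtmp_publish_command(input_path, server_url, stream_key):
    """Return an ffmpeg argument list that publishes a file to an RTMP server.

    server_url and stream_key are placeholders; a real service supplies its own.
    """
    return [
        "ffmpeg",
        "-re",              # read the input at its native rate, like a live source
        "-i", input_path,
        "-c:v", "libx264",  # encode video as H.264
        "-c:a", "aac",      # encode audio as AAC
        "-f", "flv",        # RTMP carries FLV-packaged audio and video
        f"{server_url}/{stream_key}",
    ]


cmd = build_rtmp_publish_command("input.mp4", "rtmp://example.com/live", "STREAM_KEY")
print(" ".join(cmd))
```

The list form can be passed directly to `subprocess.run` without shell quoting issues. Bitrate, keyframe interval, and preset options are left out here and would normally be tuned for the target platform.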

Q 6. Describe the process of streaming video using SRT.

Streaming video with SRT involves a similar process, but with crucial differences in the transport layer:

- Encoding: Similar to RTMP, the video is encoded into a suitable format.

- Publishing: An SRT encoder pushes the encoded video to an SRT server. SRT manages congestion control, packet loss recovery, and encryption more effectively than RTMP.

- Server-Side Processing: The SRT server manages the stream, handling aspects like re-transmission and security.

- Playback: SRT clients connect to the server using an SRT player and receive the video stream. SRT’s built-in reliability makes it less prone to disruption from network issues.

Example: A remote production scenario might use SRT for reliable transmission of video feeds across geographically distant locations, overcoming unreliable internet connections.

Q 7. Describe the process of streaming video using HLS.

HLS streaming is different due to its HTTP-based segmented nature:

- Encoding and Segmentation: The video is encoded and then sliced into small, self-contained segments (typically MPEG-TS files with a .ts extension, or fragmented MP4 in newer deployments). A manifest file (M3U8) is created, listing these segments.

- Uploading: These segments and the manifest file are uploaded to a web server.

- Playback: Clients access the manifest file via HTTP. The player downloads the manifest, identifies which segment to play next, and downloads it over HTTP. The player dynamically switches segments based on network conditions and user preferences.

Example: A video-on-demand service can use HLS to deliver video content to users. The player seamlessly switches between different quality levels depending on each viewer’s internet speed.
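
The segmentation step produces a playlist that is just a text file. The following is a minimal sketch of how a packager might write one for a finished (on-demand) stream; the function name and segment file names are hypothetical, and a real packager handles many more tags.

```python
import math


def build_media_playlist(segments, ended=True):
    """Build an HLS media playlist.

    segments: list of (uri, duration_seconds) tuples in playback order.
    ended: append #EXT-X-ENDLIST, which marks the stream as complete (VOD).
    """
    # The target duration must be an integer no smaller than any segment duration.
    target = math.ceil(max(duration for _, duration in segments))
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",
        f"#EXT-X-TARGETDURATION:{target}",
        "#EXT-X-MEDIA-SEQUENCE:0",
    ]
    for uri, duration in segments:
        lines.append(f"#EXTINF:{duration:.3f},")
        lines.append(uri)
    if ended:
        lines.append("#EXT-X-ENDLIST")
    return "\n".join(lines) + "\n"


playlist = build_media_playlist(
    [("segment_0.ts", 6.0), ("segment_1.ts", 6.0), ("segment_2.ts", 4.5)]
)
print(playlist)
```

For a live stream, the packager would omit `#EXT-X-ENDLIST`, keep only the most recent segments in the list, and advance `#EXT-X-MEDIA-SEQUENCE` as old segments drop off.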

Q 8. How does HLS handle low bandwidth conditions?

HLS, or HTTP Live Streaming, handles low bandwidth conditions gracefully through its adaptive bitrate streaming capabilities. Instead of sending a single stream, HLS provides multiple versions (renditions) of the video encoded at different bitrates and resolutions (e.g., 240p, 480p, 720p, 1080p). When a viewer experiences low bandwidth, the HLS player automatically switches to a lower-bitrate rendition to maintain playback. Think of it like choosing a smaller file size when downloading: you sacrifice some quality to ensure the download completes successfully. This switching happens at segment boundaries and is managed by the player, which ensures continuous viewing even with fluctuating network conditions.

For example, if a user starts watching a video on a fast Wi-Fi connection, the player might select the highest quality 1080p stream. If the user then moves to a cellular network with lower bandwidth, the player intelligently detects the slower connection and switches to a lower resolution, such as 480p, preventing buffering or interruptions. The process is entirely transparent to the user, offering a smooth viewing experience.

Q 9. How does SRT handle network congestion?

SRT, or Secure Reliable Transport, combats packet loss and congestion through several mechanisms. Its core mechanism is selective retransmission (ARQ): the receiver reports missing packets and the sender resends only those, within a configurable latency window. Packets that cannot be recovered before their playout deadline are dropped rather than allowed to stall the stream, which keeps latency bounded. The sender also paces its output and uses feedback from the receiver, such as round-trip time and loss reports, to avoid overwhelming the link. Forward error correction (FEC) is available in newer SRT versions as an optional addition, allowing the receiver to reconstruct some lost packets from redundant data without waiting for a retransmission.

Imagine a highway during rush hour. Traditional protocols would be like driving at a constant speed, risking a complete standstill if traffic jams appear. SRT, however, is like a smart driver who monitors traffic conditions and adjusts speed accordingly, avoiding bottlenecks and ensuring a relatively smooth journey. In essence, SRT’s proactive management of network conditions contributes significantly to a high-quality streaming experience, especially in challenging network environments.

Q 10. Explain the role of a CDN in video streaming.

A Content Delivery Network (CDN) is a geographically distributed network of servers designed to efficiently deliver content to users worldwide. In video streaming, CDNs play a crucial role by caching video content closer to viewers. When a user requests a video, the CDN directs them to the nearest server holding a copy of that content, dramatically reducing latency and improving delivery speed. Think of it as having multiple copies of a book distributed across various libraries – no matter where you are, you’ll find a copy nearby.

CDNs significantly enhance the viewing experience by minimizing latency (delay), ensuring faster loading times, and reducing the strain on the origin server (the server hosting the original video). They also help scale streaming services, handling sudden traffic spikes effectively, which is crucial during high-demand events like live sporting matches or concerts. In essence, CDNs are essential infrastructure for reliable and scalable video streaming services.

Q 11. What is adaptive bitrate streaming and how does it work with HLS?

Adaptive bitrate streaming (ABR) dynamically adjusts the quality of a video stream based on the viewer’s available bandwidth. In HLS, this is achieved by offering multiple video renditions (different bitrates and resolutions), each with its own media playlist, all referenced from a single master playlist (.m3u8 file). The HLS player constantly monitors the network conditions and seamlessly switches between these renditions to maintain a smooth viewing experience. If bandwidth is high, the player selects a high-quality stream; if bandwidth drops, it switches to a lower quality stream.

For example, consider a user watching a video on a mobile device with fluctuating network connectivity. With ABR and HLS, the player might initially use a 720p stream. When the network slows, it might drop to 480p or even 360p to avoid interruptions. Once connectivity improves, the quality is seamlessly increased back to 720p or higher. This dynamic adjustment ensures optimal viewing quality under various network conditions.
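
The selection logic can be sketched in a few lines. This is a simplified, throughput-only heuristic under assumed values: the bitrate ladder, the function name, and the 0.8 safety factor are illustrative, and real players also take buffer level and screen size into account.

```python
# Hypothetical bitrate ladder: (rendition name, bitrate in bits per second).
RENDITIONS = [
    ("360p", 800_000),
    ("480p", 1_400_000),
    ("720p", 2_800_000),
    ("1080p", 5_000_000),
]


def select_rendition(measured_bps, renditions=RENDITIONS, safety_factor=0.8):
    """Pick the highest rendition whose bitrate fits within a share of the
    measured throughput, falling back to the lowest rendition."""
    budget = measured_bps * safety_factor
    ordered = sorted(renditions, key=lambda r: r[1])
    chosen = ordered[0]  # always playable fallback
    for rendition in ordered:
        if rendition[1] <= budget:
            chosen = rendition
    return chosen[0]


print(select_rendition(6_500_000))  # fast Wi-Fi
print(select_rendition(2_000_000))  # congested cellular
print(select_rendition(500_000))    # very poor connection
```

The safety factor leaves headroom so that a small dip in throughput does not immediately cause a stall; a player would re-run this decision before each segment download.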

Q 12. How does buffering work in a streaming application?

Buffering in a streaming application involves pre-loading a portion of the video content in a temporary storage area (the buffer) on the viewer’s device. This allows for continuous playback even if the network connection experiences temporary dips in speed or latency. The player continuously downloads video data into the buffer while simultaneously playing content from the buffer. Think of it as filling a water glass while simultaneously drinking from it; if the filling rate matches the drinking rate, the glass never empties.

Effective buffering ensures smooth playback. A sufficiently large buffer can handle short interruptions without causing playback disruptions. However, excessively large buffers introduce latency (delay before playback starts), while insufficient buffering causes frequent interruptions or stalls. The optimal buffer size is a balance between minimizing latency and ensuring smooth playback.
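
The water-glass picture can be turned into a small simulation. This is a toy model under stated assumptions: time advances in one-second ticks, the function name and the two-second startup threshold are hypothetical, and real players use more elaborate rules.

```python
def simulate_buffer(download_rates, playback_rate=1.0, startup_threshold=2.0):
    """Simulate a playback buffer in one-second ticks.

    download_rates: seconds of media downloaded during each tick.
    Playback starts (or resumes) once the buffer holds startup_threshold
    seconds, and stalls when less than one tick of media is available.
    Returns (buffer level after each tick, number of stalls).
    """
    buffer_s = 0.0
    playing = False
    stalls = 0
    levels = []
    for rate in download_rates:
        buffer_s += rate  # fill the glass
        if not playing and buffer_s >= startup_threshold:
            playing = True
        if playing:
            if buffer_s >= playback_rate:
                buffer_s -= playback_rate  # drink from the glass
            else:
                playing = False  # buffer underrun: pause and rebuffer
                stalls += 1
        levels.append(round(buffer_s, 2))
    return levels, stalls


# A network dip in the middle of the session drains the buffer and causes a stall.
levels, stalls = simulate_buffer([1.5, 1.5, 0.2, 0.2, 0.2, 1.5, 1.5])
print(levels, stalls)
```

Raising `startup_threshold` makes stalls less likely during short dips but delays the start of playback, which is the trade-off described above.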

Q 13. What are some common challenges in live video streaming?

Live video streaming presents numerous challenges, including:

- Network Congestion: Sudden traffic spikes can overload the network, resulting in buffering, dropped frames, and poor quality video.

- Latency: Delay between live events and viewer playback can be significant, especially in global streams.

- Scalability: Handling a large number of concurrent viewers can strain server resources and impact performance.

- Quality Variations: Maintaining consistent video and audio quality across diverse network conditions can be difficult.

- Security: Protecting the stream from unauthorized access and preventing piracy is crucial.

- Geo-restrictions: Delivering content only to specific geographical locations can require complex configurations.

These challenges necessitate robust infrastructure, efficient protocols, and sophisticated content delivery mechanisms to ensure a high-quality and reliable streaming experience.

Q 14. How would you troubleshoot a streaming issue with high latency?

Troubleshooting high latency in a streaming application involves a systematic approach. First, isolate the source of the latency: is it the network, the server, the player, or a combination?

- Network Diagnostics: Check network bandwidth, packet loss, and jitter using tools like ping, traceroute, and iperf. A high ping (latency) indicates slow network conditions.

- Server-Side Checks: Verify server health, CPU usage, and network performance. Overloaded servers can contribute significantly to latency.

- Player Settings: Ensure the player is configured optimally, including proper buffer settings and adaptive bitrate capabilities.

- CDN Analysis: If using a CDN, check its performance and identify the closest server to the viewer. Poor CDN performance can add latency.

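- Content Encoding: Evaluate the encoding and packaging settings of the stream. Long segment durations, long keyframe intervals, and slow encoder presets all add latency; with HLS, the segment duration and the number of segments the player buffers often dominate the total delay.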

A step-by-step approach, starting with network diagnostics and progressively examining other potential causes, can help pinpoint the root of the high latency and implement appropriate solutions.
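
When isolating the source of latency, it helps to write down a rough budget per stage. The sketch below does this for a segment-based (HLS-style) pipeline; the function name and all the numbers are illustrative assumptions, and it assumes a segment must be fully written before it is published.

```python
def estimate_hls_latency(encode_s, segment_duration_s, buffered_segments,
                         network_s, decode_s):
    """Rough end-to-end latency budget for a segment-based live stream."""
    stages = {
        "encode": encode_s,
        # Assumption: a segment is only published once it is complete.
        "packaging": segment_duration_s,
        "network_and_cdn": network_s,
        # Players typically hold a few segments before starting playback.
        "player_buffer": segment_duration_s * buffered_segments,
        "decode": decode_s,
    }
    stages["total"] = round(sum(stages.values()), 3)
    return stages


budget = estimate_hls_latency(encode_s=1.0, segment_duration_s=6.0,
                              buffered_segments=3, network_s=0.5, decode_s=0.2)
for stage, seconds in budget.items():
    print(f"{stage:16s} {seconds:6.2f} s")
```

With these example numbers the player buffer and packaging account for most of the delay, so shortening segments would help far more than tuning the network path. Measured values from each stage should replace the assumptions wherever they are available.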

Q 15. How would you troubleshoot a streaming issue with dropped frames?

Troubleshooting dropped frames in streaming involves a systematic approach, investigating the entire pipeline from source to player. It’s like tracing a leak in a pipe – you need to find where the water (data) is escaping.

- Source Issues: Start at the camera or encoder. Check for encoding errors, insufficient bandwidth at the source, or hardware limitations. Look at the encoder logs for errors. For instance, if you’re using OBS Studio, check its log for dropped frames or encoding issues.

- Network Issues: Packet loss is a major culprit. Utilize network monitoring tools like Wireshark or ping/traceroute to pinpoint network bottlenecks or unstable connections. High latency or jitter can also cause frame drops. Consider using a QoS (Quality of Service) mechanism to prioritize streaming traffic.

- Server Issues: If the problem persists despite stable source and network, investigate the streaming server. Are the CPU and RAM resources sufficient? Are there any errors in the server logs? Consider upgrading hardware or optimizing server configurations.

- Player Issues: While less common, browser issues or player configurations can contribute. Try different players or browsers to see if the problem persists. Check for insufficient buffer size in the player settings.

- Protocol Issues: The chosen streaming protocol might not be suitable for the network conditions. For instance, RTMP is generally less resilient to packet loss than SRT. Consider switching to a more robust protocol.

A methodical approach, examining each stage of the streaming pipeline, is crucial for efficient troubleshooting. Remember to collect logs and metrics at each step to pinpoint the problem’s root cause.
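
One way to quantify the problem at any stage is to look for gaps in frame timestamps. The following is a minimal sketch that assumes a constant frame rate and a list of presentation timestamps in seconds; how those timestamps are obtained depends on the tool in use, and the function name is hypothetical.

```python
def count_dropped_frames(timestamps_s, fps):
    """Estimate dropped frames from gaps between consecutive timestamps.

    Assumes a constant frame rate; variable-frame-rate sources need a
    different approach.
    """
    frame_interval = 1.0 / fps
    dropped = 0
    for previous, current in zip(timestamps_s, timestamps_s[1:]):
        gap_in_frames = round((current - previous) / frame_interval)
        if gap_in_frames > 1:
            dropped += gap_in_frames - 1  # frames missing inside this gap
    return dropped


# Frames 3 and 4 are missing from a 30 fps sequence.
timestamps = [i / 30 for i in (0, 1, 2, 5, 6)]
print(count_dropped_frames(timestamps, fps=30))
```

Running the same check on timestamps captured at the encoder output, at the server, and at the player shows at which stage the frames disappear.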

Q 16. Explain the concept of low-latency streaming.

Low-latency streaming aims to minimize the delay between a live event and its viewing on a screen. Imagine watching a live sports event; a significant delay would be frustrating. Traditional segment-based streaming such as standard HLS often has delays of many seconds, sometimes 30 seconds or more, whereas low-latency streaming aims for a few seconds or, with protocols such as WebRTC, sub-second delays.

This is achieved through several techniques:

- Efficient Protocols: Protocols like SRT and WebRTC are designed for low latency. They run over UDP and recover lost packets with fast selective retransmission, optionally supplemented by forward error correction (FEC), which repairs some losses without waiting for a retransmission.

- Adaptive Bitrate Streaming (ABR): While ABR itself isn’t directly related to latency, using a well-configured ABR system helps maintain a smooth playback experience even under fluctuating network conditions, reducing the chances of buffering pauses that could feel like increased latency.

- Chunking and Fragmentation: Streaming data is segmented into smaller chunks, reducing the amount of data that needs to be buffered before playback starts, thus decreasing latency.

Low-latency streaming is crucial for interactive applications like live gaming, remote collaboration, and applications where immediate response time is critical. The trade-off is often a slightly reduced visual quality as the streaming system prioritizes speed over buffering extensive video segments.

Q 17. What are some common codecs used in video streaming?

Several codecs, or compression algorithms, are employed in video streaming, each offering different trade-offs between compression efficiency (smaller file sizes) and quality. Think of it like choosing the best suitcase for a trip – one might be small and lightweight, but hold less, whereas another might be larger and heavier but pack more.

- H.264 (AVC): A widely adopted codec, offering a good balance between compression and quality. Its maturity and broad support make it a reliable choice, although it’s becoming less efficient compared to newer codecs.

- H.265 (HEVC): Offers superior compression compared to H.264, leading to smaller file sizes and potentially higher quality at the same bitrate. However, it’s computationally more intensive and might require more powerful devices for decoding.

- VP9: Developed by Google, it provides comparable compression to H.265 but with lower licensing costs (it’s royalty-free), making it an attractive alternative.

- AV1: A royalty-free codec with even better compression efficiency than VP9 and H.265. However, it requires more processing power for encoding and decoding, and browser support is still evolving.

The selection of a codec depends on several factors including the target audience’s devices, desired quality, bandwidth limitations, and the computational resources available for encoding and decoding.

Q 18. What is the difference between ABR and CBR?

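ABR (Adaptive Bitrate Streaming) and CBR (Constant Bitrate) represent two different approaches to managing the bitrate of a streaming video. Strictly speaking, CBR is an encoder rate-control mode while ABR is a delivery technique, so the two can be combined, but they are often contrasted. Think of it like adjusting the water flow in a shower: ABR adapts to conditions, while CBR remains constant.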

CBR maintains a constant bitrate throughout the stream. This results in consistent quality but can be inefficient. If the network conditions deteriorate, the stream may buffer or drop frames. It’s simpler to implement but less robust.

ABR dynamically adjusts the bitrate based on the available bandwidth and network conditions. If the bandwidth drops, the stream automatically switches to a lower resolution to maintain playback without buffering. This results in a more consistent viewing experience, even with fluctuating network conditions, but adds complexity to implementation.

ABR is preferred for most online streaming applications due to its resilience to varying network conditions, providing a better user experience. CBR might be suitable for situations where consistent quality is paramount and network conditions are reliably stable, like internal corporate streaming.

Q 19. Explain the concept of a manifest file in HLS.

In HLS (HTTP Live Streaming), the manifest file, typically named with a .m3u8 extension, acts as a playlist that directs the player to the individual media segments (small video and audio files) making up the stream. It’s like a table of contents for a book, listing chapter titles and page numbers.

The manifest file contains:

- Metadata: Information about the stream, such as the media type, bitrate, and duration.

- URLs: Links to the individual media segments, often organized by bitrate (allowing adaptive bitrate streaming).

For instance, a manifest file might look like this (simplified):

    #EXTM3U
    #EXT-X-VERSION:3
    #EXT-X-TARGETDURATION:10
    #EXT-X-MEDIA-SEQUENCE:0
    #EXTINF:9.999,
    media_segment_1.ts
    #EXTINF:9.999,
    media_segment_2.ts
    #EXT-X-ENDLIST

The player uses this file to download and play the segments sequentially, providing a smooth viewing experience. The adaptive nature of HLS allows it to switch between different bitrate segments based on the available bandwidth, ensuring optimal quality even with varying network conditions.
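
To show how a player reads such a file, here is a minimal parsing sketch that uses the simplified playlist above as input. The function name is hypothetical, and the parser handles only the `#EXTINF` tag and segment URIs; a real player supports many more tags.

```python
def parse_media_playlist(text):
    """Return a list of (uri, duration_seconds) from a simple media playlist."""
    segments = []
    pending_duration = None
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if line.startswith("#EXTINF:"):
            # Format: #EXTINF:<duration>,[<title>]
            value = line[len("#EXTINF:"):]
            pending_duration = float(value.split(",", 1)[0])
        elif line and not line.startswith("#"):
            # A non-comment line is the URI of the segment described above it.
            segments.append((line, pending_duration))
            pending_duration = None
    return segments


PLAYLIST = """#EXTM3U
#EXT-X-VERSION:3
#EXT-X-TARGETDURATION:10
#EXT-X-MEDIA-SEQUENCE:0
#EXTINF:9.999,
media_segment_1.ts
#EXTINF:9.999,
media_segment_2.ts
#EXT-X-ENDLIST
"""

segments = parse_media_playlist(PLAYLIST)
for uri, duration in segments:
    print(uri, duration)
```

Segment URIs are often relative, so a player resolves each one against the URL of the playlist before requesting it.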

Q 20. What are the security considerations when implementing streaming protocols?

Security in streaming protocols is critical to protect content and prevent unauthorized access. Consider these key aspects:

- Authentication and Authorization: Verify the identity of users and control access to the streaming content. This can be done using techniques like token-based authentication (JWT) or integrating with existing authentication systems.

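- Encryption: Protect the stream from eavesdropping using a cipher such as AES with 128-bit or 256-bit keys. HLS, for example, supports AES-128 segment encryption, and SRT supports AES encryption with a shared passphrase. This ensures that only authorized users can decrypt and view the content.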

- HTTPS: Use HTTPS for all HTTP-based communication between the client and server (playlists, segments, and key requests) to protect against man-in-the-middle attacks. For RTMP, the TLS-secured variant RTMPS serves the same purpose.

- DRM (Digital Rights Management): Implement DRM solutions to control access and usage of the content, preventing unauthorized copying or distribution. Widevine, PlayReady, and FairPlay are common DRM technologies.

- Secure Server Infrastructure: Secure the streaming servers themselves using strong passwords, firewalls, and intrusion detection systems.

Ignoring these security considerations can lead to significant risks, including content theft, unauthorized access, and potential financial loss. A robust security strategy is vital for any streaming application.
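
Token-based access control is often implemented as an expiring, signed URL. The following is a minimal sketch using an HMAC; the secret, the query parameter names, and the helper functions are assumptions made for this example and do not correspond to any particular CDN’s scheme.

```python
import hashlib
import hmac
import time

SECRET_KEY = b"replace-with-a-real-secret"  # hypothetical shared secret


def _signature(path, expires_at, secret):
    message = f"{path}:{expires_at}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def sign_url(path, expires_at, secret=SECRET_KEY):
    """Append an expiry time and an HMAC signature to a playlist or segment path."""
    return f"{path}?expires={expires_at}&sig={_signature(path, expires_at, secret)}"


def verify_url(path, expires_at, signature, now=None, secret=SECRET_KEY):
    """Accept the request only if the link has not expired and the signature matches."""
    now = time.time() if now is None else now
    if now > expires_at:
        return False
    expected = _signature(path, expires_at, secret)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, signature)


url = sign_url("/live/stream.m3u8", expires_at=2_000)
sig = url.split("sig=")[1]
print(url)
print(verify_url("/live/stream.m3u8", 2_000, sig, now=1_000))
```

Because the path and expiry are part of the signed message, a viewer cannot extend the expiry or reuse the signature for a different stream without invalidating it.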

Q 21. How would you choose the appropriate streaming protocol for a specific application?

Selecting the right streaming protocol depends on the specific application’s requirements. Think of it like choosing the right tool for a job – a hammer is not suitable for screwing in a screw.

- RTMP (Real-Time Messaging Protocol): Well-suited for low-latency ingest, that is, sending a live stream from an encoder to a streaming server or platform. It was originally paired with Flash-based players, which are no longer supported, so it is now rarely used for playback. Because it runs over TCP, it copes less well with packet loss than SRT.

- SRT (Secure Reliable Transport): Offers a good balance between low latency, high reliability, and security. Ideal for scenarios where robustness and security are paramount, like contribution feeds from remote locations or transmission over unreliable networks such as the public internet.

- HLS (HTTP Live Streaming): A widely adopted protocol, suitable for various devices and bandwidth conditions. Its adaptive bitrate streaming capabilities make it a strong choice for applications targeting a broad range of devices and network conditions. However, it typically introduces higher latency compared to SRT or RTMP.

Consider these factors when choosing:

- Latency Requirements: Low-latency applications need SRT or RTMP; HLS is suitable for applications with higher tolerance for delay.

- Bandwidth and Network Conditions: HLS’s adaptive bitrate capability makes it robust against varying bandwidth, while SRT’s resilience to packet loss makes it suitable for unreliable networks.

- Security Requirements: SRT offers built-in security features, but encryption should be used with all protocols.

- Device Compatibility: HLS has broad device support, while RTMP playback is no longer supported in browsers and SRT generally requires dedicated players or gateways.

By carefully evaluating these factors, you can choose the protocol that best meets the specific needs of your streaming application.

Q 22. Explain the concept of a ‘master playlist’ and a ‘media playlist’ in HLS.

In HTTP Live Streaming (HLS), a master playlist (called a multivariant playlist in newer versions of the specification) acts as a table of contents for a video stream, while each media playlist lists the actual video segments of one rendition.

Think of it like a DVD menu. The master playlist is the main menu listing different quality levels (e.g., 240p, 480p, 720p) or language tracks. Each menu option (quality level or language) points to a separate media playlist.

Each media playlist then lists individual small video segments (typically .ts files). These segments are downloaded and played sequentially by the client. This segmented approach is key to HLS’s adaptability to varying network conditions. If the connection drops, only a small segment needs to be re-downloaded, not the entire video.

Example: A master playlist named master.m3u8 might contain entries pointing to media playlists named 240p.m3u8, 480p.m3u8, and 720p.m3u8. Each of these would then list individual .ts segments.
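
The relationship can be made concrete by generating a master playlist. This is a minimal sketch: the function name, bitrates, and resolutions are illustrative assumptions, and only the `BANDWIDTH` and `RESOLUTION` attributes are written.

```python
def build_master_playlist(variants):
    """Build an HLS master playlist.

    variants: list of dicts with 'uri', 'bandwidth' (bits per second)
    and 'resolution' (a 'WIDTHxHEIGHT' string).
    """
    lines = ["#EXTM3U"]
    for variant in sorted(variants, key=lambda v: v["bandwidth"]):
        # Each #EXT-X-STREAM-INF line describes the media playlist named
        # on the line that follows it.
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={variant['bandwidth']},"
            f"RESOLUTION={variant['resolution']}"
        )
        lines.append(variant["uri"])
    return "\n".join(lines) + "\n"


master = build_master_playlist([
    {"uri": "720p.m3u8", "bandwidth": 2_800_000, "resolution": "1280x720"},
    {"uri": "240p.m3u8", "bandwidth": 400_000, "resolution": "426x240"},
    {"uri": "480p.m3u8", "bandwidth": 1_400_000, "resolution": "854x480"},
])
print(master)
```

The player downloads this file once, picks a variant based on the advertised `BANDWIDTH`, and then fetches that variant’s media playlist to find the segments.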

Q 23. Describe how SRT’s UDP-based transport improves reliability.

SRT (Secure Reliable Transport) leverages UDP for its speed but adds several layers to ensure reliability, unlike standard UDP which is a simple ‘fire and forget’ protocol. SRT achieves this through sophisticated error detection and correction mechanisms.

Key mechanisms include:

- Forward Error Correction (FEC): As an optional feature in newer versions, SRT can transmit redundant data along with the primary stream. If packets are lost, the receiver can reconstruct the missing information from the redundant data without waiting for a retransmission. This is particularly useful on links with high round-trip times.

- Automatic Repeat reQuest (ARQ): This is SRT’s primary recovery mechanism. If the receiver detects a missing packet, it requests retransmission of just that packet. Retransmissions must arrive within a configurable latency window, which bounds the delay that recovery can add.

- Congestion Control: SRT incorporates congestion control algorithms to adjust the sending rate based on network conditions. This prevents overwhelming the network and improves overall throughput.

- Encryption: SRT provides end-to-end encryption to secure the stream, protecting it from eavesdropping or tampering.

By combining the speed of UDP with these reliability features, SRT provides a robust solution for streaming applications where low latency and high reliability are critical, such as live broadcasts or remote production scenarios.
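
The retransmission idea can be illustrated with a toy model. This sketch is not SRT’s wire protocol: it only shows how a receiver can spot gaps in sequence numbers and ask for just the missing packets. The function names are hypothetical, and reordering, timers, and the latency window are deliberately ignored.

```python
def detect_missing(arrivals):
    """Return the sequence numbers a receiver would report as lost.

    arrivals: sequence numbers in arrival order. A gap is noticed when a
    packet arrives with a higher number than expected, so a loss at the
    very end of the stream is not detected by this simple model.
    """
    expected = 0
    missing = []
    for seq in arrivals:
        if seq > expected:
            missing.extend(range(expected, seq))
        expected = max(expected, seq + 1)
    return missing


def recover(first_pass, retransmit):
    """Fill the gaps in first_pass (dict of seq -> payload) by requesting
    only the missing packets through the retransmit callable."""
    complete = dict(first_pass)
    for seq in detect_missing(sorted(first_pass)):
        complete[seq] = retransmit(seq)
    return [complete[seq] for seq in sorted(complete)]


sent = ["p0", "p1", "p2", "p3", "p4", "p5"]
arrived = {0: "p0", 1: "p1", 4: "p4", 5: "p5"}  # packets 2 and 3 were lost

print(detect_missing([0, 1, 4, 5]))
print(recover(arrived, lambda seq: sent[seq]))
```

Resending only the reported packets is what distinguishes this approach from TCP-style in-order delivery, where everything behind a lost packet waits.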

Q 24. How can you optimize streaming performance for mobile devices?

Optimizing streaming performance for mobile devices requires careful consideration of various factors, focusing on reducing bandwidth consumption and processing demands.

- Adaptive Bitrate Streaming (ABR): Employ HLS or DASH, which allow the player to dynamically switch between different bitrate streams based on network conditions. On a weak connection, the player selects a lower-resolution stream to ensure smooth playback.

- Lower Resolution and Frame Rates: Offer lower resolutions (e.g., 360p or 480p) and frame rates to reduce bandwidth requirements. Mobile devices often have limited processing power and battery life; lower resolutions help to conserve both.

- Optimized Encoding: Use codecs optimized for mobile devices, such as H.264 or H.265 (HEVC). These codecs offer a good balance between compression and quality.

- HTTP/2 or QUIC: Leverage modern protocols that provide better efficiency and lower latency compared to older HTTP/1.1.

- Content Delivery Network (CDN): Distribute your streaming content across a CDN to reduce latency and improve delivery speeds, especially for geographically dispersed audiences.

- Pre-buffering: Allow for pre-buffering before playback begins to handle initial latency issues or temporary network hiccups.

By implementing these strategies, you ensure a smoother, more reliable, and power-efficient streaming experience for your mobile users.

Q 25. What is the role of metadata in streaming protocols?

Metadata in streaming protocols provides essential information about the stream itself and the content being streamed. It’s like adding extra labels to your videos to improve their discoverability and functionality.

Examples include:

- Stream Title and Description: Identifying information for viewers.

- Genre and Keywords: Facilitating search and categorization.

- Artist, Album, or Episode Information: Relevant for music or episodic content.

- Closed Captions or Subtitles: Accessibility features.

- Timestamps: Providing accurate markers within the stream.

- DRM Information: Controlling access to content.

This data can be embedded within the stream itself or transmitted separately through associated metadata files. This information is critical for players to display relevant information, facilitate search and indexing, and manage access control, ensuring a richer viewing experience.

Q 26. What are some common monitoring tools for streaming performance?

Several tools are available for monitoring streaming performance. The specific choice often depends on the streaming protocol and infrastructure used.

- Server-Side Monitoring: Most streaming servers (like Nginx, Wowza, or AWS Elemental MediaLive) have built-in dashboards and logging capabilities, providing insights into bandwidth usage, CPU utilization, and other key metrics.

- CDN Monitoring: CDNs typically offer detailed analytics and reporting tools focusing on delivery performance, geographic distribution of viewers, and error rates.

- Third-Party Monitoring Tools: Several companies provide dedicated streaming analytics platforms offering comprehensive dashboards and alerts. They often aggregate data from multiple sources, providing a holistic view of the streaming infrastructure’s health.

- Network Monitoring Tools: Tools like Wireshark or tcpdump allow for deep packet inspection to identify network-related issues affecting streaming quality.

The choice of monitoring tools depends on the specific needs and the scale of the streaming operation. A small-scale operation might rely on server logs, while larger deployments will necessitate a more comprehensive monitoring solution.

Q 27. Explain how you would test the quality of a streaming implementation.

Testing the quality of a streaming implementation requires a multi-faceted approach, combining objective and subjective assessments.

Objective Tests:

- Bitrate and Frame Rate Monitoring: Verify that the selected bitrates are being delivered accurately and consistently.

- Latency Measurement: Assess the delay between the source and the viewer. Low latency is crucial for live streaming.

- Packet Loss Analysis: Identify and quantify packet loss, a major contributor to poor video quality.

- Buffering Analysis: Monitor buffer levels to detect frequent buffering events.

- Automated Testing Tools: Use tools that simulate various network conditions and device configurations to stress-test the stream’s resilience.
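Several of the objective checks above reduce to simple arithmetic once the raw measurements are collected. The sketch below shows one possible way to compute them; the function names and input shapes are assumptions for illustration, and the packet loss estimate ignores sequence-number wraparound and reordering.

```python
def packet_loss_ratio(received_seq_numbers):
    """Estimate loss from gaps in received sequence numbers.

    Simplification: assumes sequence numbers do not wrap around.
    """
    if not received_seq_numbers:
        return 0.0
    unique = set(received_seq_numbers)
    expected = max(unique) - min(unique) + 1
    return (expected - len(unique)) / expected

def average_bitrate_kbps(segment_sizes_bytes, segment_durations_s):
    """Average delivered bitrate across media segments, in kilobits per second."""
    total_bits = 8 * sum(segment_sizes_bytes)
    total_seconds = sum(segment_durations_s)
    return total_bits / total_seconds / 1000 if total_seconds else 0.0

def rebuffering_ratio(stall_durations_s, session_duration_s):
    """Fraction of the viewing session spent stalled while the buffer refilled."""
    if not session_duration_s:
        return 0.0
    return sum(stall_durations_s) / session_duration_s

# Hypothetical measurements for one test session.
loss = packet_loss_ratio([1, 2, 3, 5, 6, 9, 10])               # 3 of 10 packets missing
bitrate = average_bitrate_kbps([750_000, 750_000], [6.0, 6.0])  # two 6-second segments
stalled = rebuffering_ratio([1.5, 0.5], 100.0)                  # 2 s stalled in 100 s
print(loss, bitrate, stalled)
```

Comparing the measured average bitrate against the encoder's target, and tracking the rebuffering ratio per session, gives concrete pass/fail thresholds for automated test runs.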

Subjective Tests:

- User Feedback: Collect viewer feedback through surveys or feedback forms to gauge their experience.

- A/B Testing: Compare different encoding settings or streaming protocols to determine optimal settings.

- Blind Comparisons: Compare different streaming implementations without revealing which is which.

By combining objective data with subjective feedback, a comprehensive assessment can be made, ensuring the streaming implementation meets the required quality standards.
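Subjective feedback is easier to compare when it is reduced to a number. One common approach, assumed here rather than prescribed by any particular tool, is to have viewers rate each variant on a 1 to 5 scale in a blind comparison and then compute a mean opinion score per variant. The labels and ratings below are invented for illustration.

```python
from collections import defaultdict
from statistics import mean

def mean_opinion_scores(ratings):
    """Group (variant_label, score) pairs and return the mean score per label."""
    by_variant = defaultdict(list)
    for label, score in ratings:
        if not 1 <= score <= 5:
            raise ValueError(f"score out of range: {score}")
        by_variant[label].append(score)
    return {label: mean(scores) for label, scores in by_variant.items()}

# Hypothetical blind-test ratings: viewers only ever see the labels "A" and "B".
ratings = [("A", 4), ("A", 5), ("A", 3), ("B", 3), ("B", 2), ("B", 4)]
scores = mean_opinion_scores(ratings)
print(scores)
```

With only a handful of ratings the difference between variants may not be meaningful, so in practice the sample size should be large enough to support the conclusion drawn.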

Q 28. Describe your experience with different streaming servers (e.g., Nginx, Wowza).

I’ve worked extensively with several streaming servers, including Nginx, Wowza, and AWS Elemental MediaLive, each offering unique strengths and weaknesses.

Nginx: A highly versatile and performant open-source solution known for its speed and flexibility. I’ve used Nginx for various projects, configuring it (with the third-party RTMP module) to ingest RTMP and deliver HLS streams efficiently. Its modular design allows customization to meet specific needs, although it requires a more hands-on approach compared to some commercial solutions.

Wowza Streaming Engine: A commercial solution offering a user-friendly interface and a broader range of features compared to Nginx. I’ve leveraged Wowza’s capabilities for managing multiple streams, integrating with various CDNs, and implementing advanced features like DRM. Its ease of use can be beneficial for less technical teams, although the cost can be a factor.

AWS Elemental MediaLive: A managed, cloud-based live video encoding service offered by Amazon Web Services. It’s ideal for large-scale, high-availability streaming deployments, particularly those benefiting from cloud infrastructure. I’ve used it for its scalability and integration with other AWS services, which simplifies deployment and management. Its focus on live streaming makes it a powerful choice for live broadcast applications.

My experience with these servers allows me to select the best solution for the project’s specific needs, considering factors like scalability, cost, ease of use, and required features.

Key Topics to Learn for Streaming Protocols (RTMP, SRT, HLS) Interview

- RTMP (Real-Time Messaging Protocol):

  - Understanding RTMP’s architecture and its role in live streaming.

  - Comparing RTMP’s performance characteristics with other protocols.

  - Troubleshooting common RTMP connection issues.

  - Practical application: Setting up and configuring RTMP streaming servers.

- SRT (Secure Reliable Transport):

  - Exploring SRT’s features focused on reliability and security in streaming.

  - Analyzing SRT’s low-latency capabilities and its advantages over other protocols.

  - Practical application: Implementing SRT for low-latency live broadcasts.

  - Comparing and contrasting SRT with RTMP in various use cases.

- HLS (HTTP Live Streaming):

  - Understanding the HTTP-based approach of HLS and its compatibility with diverse devices.

  - Mastering the concept of adaptive bitrate streaming and its impact on user experience.

  - Practical application: Optimizing HLS streams for various network conditions.

  - Troubleshooting common HLS playback issues and optimizing segment size/duration.

- General Streaming Concepts:

  - Bandwidth management and optimization strategies.

  - Understanding different encoding formats (e.g., H.264, H.265).

  - Latency considerations and techniques for minimizing delay.

  - Security protocols and best practices for streaming security.

Next Steps

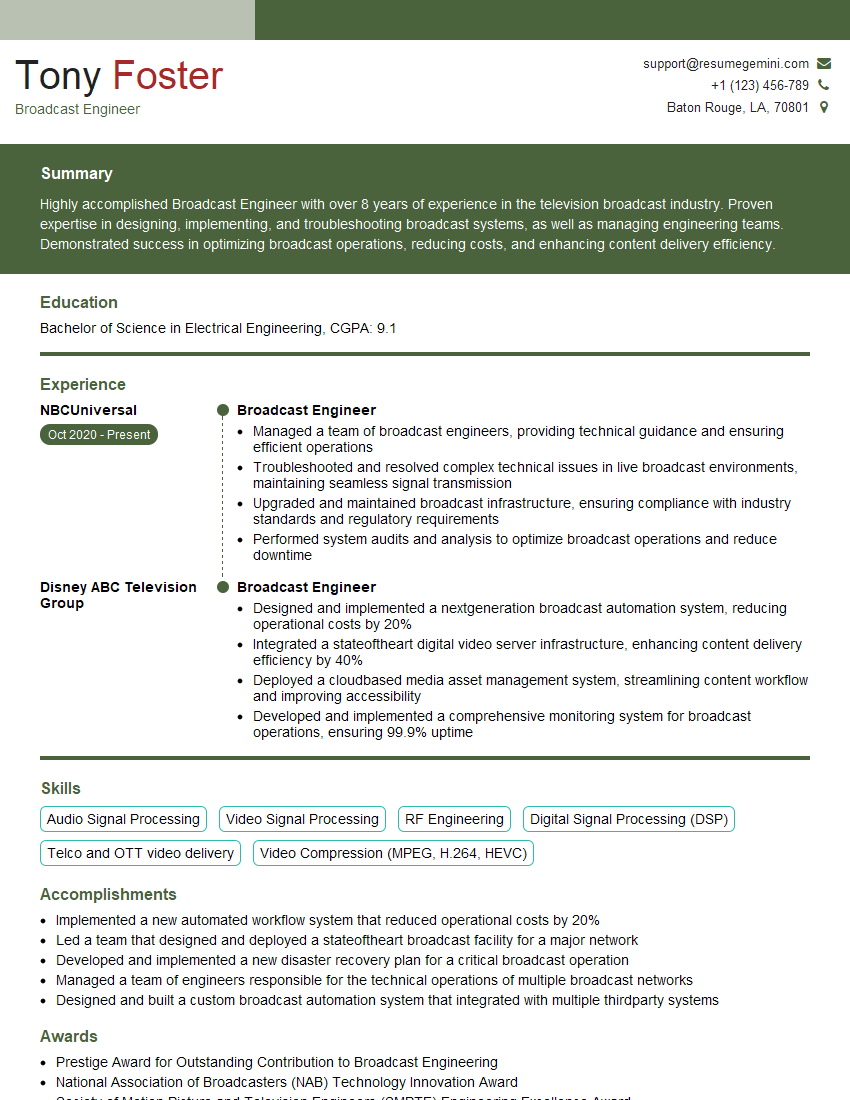

Mastering streaming protocols like RTMP, SRT, and HLS is crucial for career advancement in the media and technology industries. These protocols are fundamental to many streaming applications, and a strong understanding positions you for roles with greater responsibility and higher earning potential. To maximize your job prospects, crafting an ATS-friendly resume is essential. ResumeGemini can help you build a compelling resume that highlights your skills and experience effectively. Examples of resumes tailored to Streaming Protocols (RTMP, SRT, HLS) expertise are available through ResumeGemini to help guide your process.
