Preparation is the key to success in any interview. In this post, we’ll explore crucial Systems Integration and Test interview questions and equip you with strategies to craft impactful answers. Whether you’re a beginner or a pro, these tips will elevate your preparation.

Questions Asked in Systems Integration and Test Interview

Q 1. Explain the difference between system integration testing and unit testing.

Unit testing and system integration testing are both crucial parts of software development, but they focus on different levels. Unit testing verifies the functionality of individual components (units) of code in isolation. Think of it like testing each brick of a wall separately to ensure it’s strong. System integration testing, on the other hand, checks how these individual units work together as a complete system. It’s like testing the entire wall to see if it stands firm and functions as expected.

For example, in an e-commerce application, a unit test might verify that the ‘add to cart’ function correctly updates the cart count. An integration test would then verify that the ‘add to cart’ function interacts correctly with the payment gateway, inventory management system, and user account database to complete a purchase successfully. The difference is the scope: unit tests are granular, while integration tests are holistic.
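To make the difference in scope concrete, here is a minimal sketch in Python. The `Cart`, `Inventory`, and `AlwaysInStock` classes are hypothetical stand-ins invented for illustration, not part of any real e-commerce system. The unit test fakes the inventory so only the cart logic runs, while the integration test wires in the real inventory and checks its state as well.

```python
class Inventory:
    """Hypothetical inventory service tracking stock per product."""

    def __init__(self, stock):
        self.stock = dict(stock)

    def reserve(self, product_id, quantity):
        if self.stock.get(product_id, 0) < quantity:
            raise ValueError(f"insufficient stock for {product_id}")
        self.stock[product_id] -= quantity


class Cart:
    """Hypothetical cart that reserves stock through an inventory collaborator."""

    def __init__(self, inventory):
        self.inventory = inventory
        self.items = {}

    def add(self, product_id, quantity=1):
        # Reserve first, so a failed reservation leaves the cart unchanged.
        self.inventory.reserve(product_id, quantity)
        self.items[product_id] = self.items.get(product_id, 0) + quantity

    @property
    def count(self):
        return sum(self.items.values())


class AlwaysInStock:
    """Test double that lets the cart be unit-tested in isolation."""

    def reserve(self, product_id, quantity):
        pass


def test_unit_cart_count():
    # Unit level: only Cart logic is exercised; the inventory is faked.
    cart = Cart(AlwaysInStock())
    cart.add("sku-1", 2)
    cart.add("sku-2")
    assert cart.count == 3


def test_integration_cart_and_inventory():
    # Integration level: the real Inventory is wired in and its state is checked.
    inventory = Inventory({"sku-1": 5})
    cart = Cart(inventory)
    cart.add("sku-1", 2)
    assert cart.count == 2
    assert inventory.stock["sku-1"] == 3


test_unit_cart_count()
test_integration_cart_and_inventory()
```

A full integration test for a purchase would also involve the payment gateway and the user account database; they are left out here to keep the sketch short.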

Q 2. Describe your experience with various integration testing methodologies (e.g., top-down, bottom-up, big bang).

I have extensive experience with various integration testing methodologies. Top-down testing starts with the highest-level modules and gradually moves down to lower-level modules, typically with stubs standing in for lower-level modules that are not yet integrated. It’s like building a house from the roof down: you test the roof structure, then the walls, and finally the foundation. This approach helps identify high-level design flaws early. Bottom-up testing, conversely, starts with the lowest-level modules and gradually integrates higher levels, using test drivers to call them. This is like building the house from the foundation up. It’s good for identifying issues with individual components early on. Big bang integration combines all modules and tests them at once. This is a riskier approach because it can be difficult to isolate the root cause of failures, but it can be faster if the system is relatively small and well understood.

In practice, I often find a hybrid approach most effective. For instance, a project involving a complex microservice architecture might leverage bottom-up testing for the individual microservices, followed by top-down integration across the services to ensure overall system functionality.

Q 3. How do you handle integration testing in an Agile environment?

In Agile environments, integration testing is built into the iterative development process. We use continuous integration and continuous delivery (CI/CD) pipelines to automate the build and the integration tests. This allows for frequent integration and validation of code changes. Test-driven development (TDD) is often employed, where tests are written *before* the code, driving the design and ensuring testability.

We typically schedule integration tests within the sprint cycle, aligning them with user story completion. This ensures early detection of integration issues and minimizes the risk of late-stage integration problems. Tools like Jenkins, GitLab CI, or Azure DevOps are essential for automating the build and running integration tests as part of the CI/CD process.

Q 4. What are some common challenges you’ve faced during system integration testing, and how did you overcome them?

One common challenge is dealing with dependencies between modules or systems. For example, integration issues might arise due to incompatible data formats or API changes in one component impacting others. I’ve overcome this by establishing clear communication between development teams, implementing robust version control, and using contract testing to verify the interfaces between components.

Another challenge is managing the sheer volume of test data needed for thorough integration testing. I’ve addressed this using data virtualization and test data management tools to efficiently generate and manage test data. Finally, dealing with test environment discrepancies between development, testing, and production environments is a common hurdle. We mitigate this by using tools for environment configuration management and containerization, ensuring consistent test environments across the lifecycle.

Q 5. Explain your experience with different integration testing tools.

My experience encompasses a range of integration testing tools. I’ve used Selenium for UI integration testing, especially for web applications, and JMeter for performance and load testing during integration. For API testing, I’ve used tools such as Postman and REST Assured, which allow efficient testing of API functionality and response times. For database integration testing, I have experience with tools like SQL Developer and DBUnit. The choice of tool depends on the specific technologies used in the project.

Q 6. How do you create effective test cases for system integration testing?

Effective integration test cases are created by focusing on the interactions between modules. They should cover various scenarios, including both positive and negative test cases. The key is to focus on the interface between modules, checking the data flow and the expected outcome of interactions.

For example, if you have a module A that sends data to module B, your test cases would include scenarios like: successful data transmission, handling of invalid data, handling of network errors, and recovery after failures. The test cases should be well-documented, clearly outlining the steps, expected results, and test data used. Using a structured approach, such as a test case template, is vital for consistency and maintainability.
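The four scenarios above can be sketched as follows. `ModuleA`, `ModuleB`, `NetworkError`, the `order_id` field, and the retry limit are all hypothetical choices made for illustration. The receiver can be told to fail a given number of times, which makes the network-error and recovery cases reproducible.

```python
class NetworkError(Exception):
    """Simulated transport failure between the two modules."""


class ModuleB:
    """Hypothetical receiver that fails a configurable number of times first."""

    def __init__(self, failures_before_success=0):
        self.failures_left = failures_before_success
        self.received = []

    def receive(self, payload):
        if self.failures_left > 0:
            self.failures_left -= 1
            raise NetworkError("simulated network failure")
        self.received.append(payload)


class ModuleA:
    """Hypothetical sender that validates payloads and retries on network errors."""

    def __init__(self, receiver, max_attempts=3):
        self.receiver = receiver
        self.max_attempts = max_attempts

    def send(self, payload):
        if not isinstance(payload, dict) or "order_id" not in payload:
            raise ValueError("payload must be a dict with an order_id")
        for attempt in range(1, self.max_attempts + 1):
            try:
                self.receiver.receive(payload)
                return attempt  # number of attempts that were needed
            except NetworkError:
                if attempt == self.max_attempts:
                    raise


def test_successful_transmission():
    b = ModuleB()
    assert ModuleA(b).send({"order_id": 1}) == 1
    assert b.received == [{"order_id": 1}]


def test_invalid_data_is_rejected():
    b = ModuleB()
    try:
        ModuleA(b).send({"wrong_key": 1})
    except ValueError:
        assert b.received == []  # nothing reached module B
    else:
        raise AssertionError("expected ValueError")


def test_persistent_network_error():
    b = ModuleB(failures_before_success=5)
    try:
        ModuleA(b, max_attempts=3).send({"order_id": 2})
    except NetworkError:
        assert b.received == []
    else:
        raise AssertionError("expected NetworkError")


def test_recovery_after_failures():
    b = ModuleB(failures_before_success=2)
    assert ModuleA(b, max_attempts=3).send({"order_id": 3}) == 3
    assert b.received == [{"order_id": 3}]


for test in (test_successful_transmission, test_invalid_data_is_rejected,
             test_persistent_network_error, test_recovery_after_failures):
    test()
```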

Q 7. Describe your experience with test automation frameworks for integration testing.

I have experience with various test automation frameworks for integration testing, including JUnit and TestNG for Java-based systems, and pytest for Python-based projects. The selection depends on the programming language and the project’s specific needs. A key aspect of using these frameworks is to create modular and reusable test components to facilitate easy maintenance and extension of test suites.

Furthermore, I leverage tools and techniques to manage and report on test results. For instance, using a CI/CD pipeline to automatically run integration tests after each code commit and generate reports, allows for immediate feedback on the integration status of the system. This facilitates early identification and resolution of integration problems, crucial for maintaining the integrity and stability of the software.

Q 8. How do you prioritize test cases for system integration testing?

Prioritizing test cases for System Integration Testing (SIT) is crucial for efficient testing and early defect detection. We can’t test everything at once, so a strategic approach is needed. My approach involves a multi-faceted strategy combining risk analysis, dependency mapping, and business criticality.

Risk-Based Prioritization: I identify test cases that cover functionalities with the highest risk of failure, such as those involving complex interactions between multiple systems or those crucial for core business processes. For example, in an e-commerce application, processing payments would be a high-risk area.

Dependency-Based Prioritization: I prioritize test cases based on interdependencies between different modules or systems. Testing core modules first allows for the subsequent testing of dependent modules to proceed smoothly. Imagine a system with modules A, B, and C, where B depends on A, and C depends on both A and B; testing A first is logical.

Business Criticality Prioritization: I prioritize test cases that validate functionalities vital to the system’s core functionality and business objectives. Features directly impacting revenue or customer experience usually fall into this category.

Test Case Categorization: I categorize test cases into groups based on functionality, module, or risk level. This allows for organized execution and efficient reporting.

Combining these approaches ensures that the most critical and risky areas are tested first, maximizing the early detection of potential problems. This allows for faster feedback and more efficient use of resources.
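One way to combine dependency-based and risk-based ordering is a topological sort with a risk tie-break. The sketch below uses Python’s standard `graphlib` module (Python 3.9+). Modules A, B, and C follow the example above; module D and all risk scores are assumptions added to show the tie-break.

```python
from graphlib import TopologicalSorter


def execution_order(dependencies, risk):
    """Order modules so dependencies come first; among modules that become
    ready at the same time, test the higher-risk one first."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    order = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready(),
                       key=lambda module: risk.get(module, 0),
                       reverse=True)
        for module in ready:
            order.append(module)
            sorter.done(module)
    return order


# Each key maps to the set of modules it depends on.
dependencies = {"A": set(), "B": {"A"}, "C": {"A", "B"}, "D": {"A"}}
# Hypothetical risk scores (higher means riskier).
risk = {"A": 2, "B": 3, "C": 1, "D": 5}

print(execution_order(dependencies, risk))  # ['A', 'D', 'B', 'C']
```

A is tested first because everything depends on it; D and B become ready together, and D goes first because of its higher risk score.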

Q 9. How do you manage defects found during system integration testing?

Defect management during SIT is a critical process for ensuring quality. My approach follows a structured lifecycle, starting with identification and ending with verification.

Defect Reporting: When a defect is found, I use a standardized defect tracking system (like Jira or Azure DevOps) to document it clearly. This includes a detailed description, steps to reproduce, expected and actual results, screenshots, and severity level.

Defect Triage: The defect is then reviewed by a team (developers, testers, and potentially stakeholders) to assess its severity, reproducibility, and root cause. This helps prioritize fixing defects.

Defect Assignment: The defect is assigned to the appropriate development team for resolution.

Defect Resolution: Developers fix the defect and communicate the resolution to the testing team.

Defect Verification: The testing team verifies that the defect has been correctly fixed through retesting.

Defect Closure: Once verified, the defect is closed in the tracking system.

Regular status meetings and clear communication throughout the process are essential for effective defect management. Using a well-defined workflow within the defect tracking system ensures accountability and transparency.
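The lifecycle above can be modeled as a small state machine, which is roughly what a tracking tool’s workflow configuration enforces. This is a sketch, not how Jira or Azure DevOps implement it; the state names mirror the steps above, and the transition from RESOLVED back to ASSIGNED (a failed retest) is an assumption.

```python
from enum import Enum


class DefectState(Enum):
    REPORTED = "reported"
    TRIAGED = "triaged"
    ASSIGNED = "assigned"
    RESOLVED = "resolved"
    VERIFIED = "verified"
    CLOSED = "closed"


# Allowed transitions between states.
TRANSITIONS = {
    DefectState.REPORTED: {DefectState.TRIAGED},
    DefectState.TRIAGED: {DefectState.ASSIGNED},
    DefectState.ASSIGNED: {DefectState.RESOLVED},
    # Assumption: a failed retest sends the defect back to the developer.
    DefectState.RESOLVED: {DefectState.VERIFIED, DefectState.ASSIGNED},
    DefectState.VERIFIED: {DefectState.CLOSED},
    DefectState.CLOSED: set(),
}


class Defect:
    def __init__(self, defect_id, summary):
        self.defect_id = defect_id
        self.summary = summary
        self.state = DefectState.REPORTED
        self.history = [DefectState.REPORTED]

    def move_to(self, new_state):
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(
                f"{self.state.name} -> {new_state.name} is not allowed")
        self.state = new_state
        self.history.append(new_state)


defect = Defect("DEF-1", "Payment confirmation not sent")  # hypothetical defect
for state in (DefectState.TRIAGED, DefectState.ASSIGNED, DefectState.RESOLVED,
              DefectState.VERIFIED, DefectState.CLOSED):
    defect.move_to(state)
print([s.name for s in defect.history])
```

Rejecting invalid transitions is what provides the accountability mentioned above: a defect cannot be closed without passing through verification.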

Q 10. What are your strategies for managing the scope of integration testing?

Managing the scope of integration testing requires careful planning and a clear understanding of the system’s architecture and requirements. Over-testing can be wasteful, while under-testing increases risks. My strategy focuses on:

Defining Clear Scope: This involves identifying the specific modules, interfaces, and functionalities to be tested. It’s crucial to clearly define what’s in scope and what’s out of scope based on project timelines and priorities. A well-defined scope document is vital.

Risk Assessment: Evaluating the potential risks associated with each component helps determine the depth and breadth of testing needed. High-risk components receive more testing attention.

Prioritization: As previously discussed, prioritizing test cases ensures that testing focuses on the most critical aspects of the system first. This allows for quicker feedback on critical functionalities.

Test Case Design: Focusing on test case design that covers both positive and negative scenarios, boundary conditions, and edge cases ensures comprehensive testing without unnecessary redundancy. For instance, instead of countless individual tests, consider parameterized tests.

Timeboxing: Setting realistic time constraints for the testing phase is important to prevent scope creep and ensure timely delivery. A clear timeline helps keep testing focused.

Regular monitoring and communication are crucial for effective scope management. Stakeholders need to be involved to adjust the scope as necessary while keeping the goal of releasing a high-quality product.
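As an illustration of the parameterized tests mentioned under Test Case Design, the sketch below drives one test method from a table of boundary cases using `unittest.subTest` from the standard library. The `validate_quantity` rule and its limit of 10 items per order are hypothetical.

```python
import unittest


def validate_quantity(quantity, max_per_order=10):
    """Hypothetical rule: quantity must be an int from 1 to max_per_order."""
    return isinstance(quantity, int) and 1 <= quantity <= max_per_order


# (input, expected) pairs covering both boundaries, their neighbours,
# a negative value, and a wrong type.
CASES = [
    (0, False),
    (1, True),
    (10, True),
    (11, False),
    (-1, False),
    ("2", False),
]


class QuantityBoundaryTest(unittest.TestCase):
    def test_boundaries(self):
        for quantity, expected in CASES:
            # subTest reports each failing case separately instead of
            # stopping at the first one.
            with self.subTest(quantity=quantity):
                self.assertEqual(validate_quantity(quantity), expected)


suite = unittest.defaultTestLoader.loadTestsFromTestCase(QuantityBoundaryTest)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

Adding a scenario means adding one row to `CASES` rather than writing another test, which keeps the suite small while still covering the edges.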

Q 11. Explain your experience using different test management tools.

I have extensive experience with various test management tools, each with its strengths and weaknesses. Here are a few examples:

Jira: A widely used tool, excellent for managing defects and tracking progress. Its flexibility and integration capabilities make it suitable for various project methodologies.

Azure DevOps: A comprehensive platform integrating requirements management, test planning, execution, and reporting. Its strong integration with other Microsoft tools makes it a good choice for organizations using a Microsoft stack.

TestRail: Specifically designed for test case management, offering features like test case organization, execution tracking, and reporting. It is particularly useful for managing a large number of test cases.

Zephyr: Another popular test management tool that integrates well with other tools and supports various testing methodologies.

My choice of tool depends on the specific project needs and the organization’s infrastructure. Regardless of the tool, I focus on proper configuration, training, and adherence to best practices to maximize the tool’s benefits.

Q 12. How do you ensure comprehensive test coverage during system integration testing?

Ensuring comprehensive test coverage in SIT is vital for detecting defects early. My approach focuses on multiple strategies:

Requirement Traceability Matrix (RTM): This matrix links test cases to requirements, ensuring that all requirements are covered by at least one test case. This offers a clear overview of test coverage and allows for identification of gaps.

Test Case Design Techniques: Utilizing techniques like equivalence partitioning, boundary value analysis, and state transition testing helps design efficient test cases that cover a wide range of scenarios.

Code Coverage: While not always feasible for integration testing, code coverage tools can provide insight into the areas of code that have been executed during testing. This helps pinpoint untested areas.

Review and Peer Checks: Having multiple testers review test cases and test results helps identify any gaps or blind spots in test coverage.

Test Data Management: Using comprehensive test data ensures the tests cover different scenarios and edge cases. Data generation and management tools can help create diverse data sets.

The combination of these methods aims to achieve high test coverage and reduce the risk of undiscovered defects.
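A requirement traceability matrix can be as simple as a mapping from requirement IDs to the test cases that cover them. The sketch below builds one and lists the gaps; the IDs and the mapping are made-up examples, and in practice this data would usually be exported from a test management tool.

```python
def build_rtm(requirements, test_cases):
    """Return {requirement: [test case IDs]} for every known requirement."""
    rtm = {req: [] for req in requirements}
    for test_id, covered in test_cases.items():
        for req in covered:
            if req not in rtm:
                raise ValueError(f"{test_id} references unknown requirement {req}")
            rtm[req].append(test_id)
    return rtm


def uncovered(rtm):
    """Requirements that no test case covers."""
    return sorted(req for req, tests in rtm.items() if not tests)


# Hypothetical requirements and test cases.
requirements = ["REQ-001", "REQ-002", "REQ-003"]
test_cases = {
    "TC-001": ["REQ-001"],
    "TC-002": ["REQ-001", "REQ-002"],
}

rtm = build_rtm(requirements, test_cases)
print(rtm)
print("Gaps:", uncovered(rtm))  # Gaps: ['REQ-003']
```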

Q 13. How do you handle integration testing in distributed systems?

Integration testing in distributed systems presents unique challenges due to the complexity of communication and data exchange between different systems and geographical locations. My approach incorporates:

Service Virtualization: When dealing with dependencies on external systems or services, service virtualization simulates these systems to enable isolated testing. This allows testing without relying on external systems that might be unavailable or unstable.

Mock Objects and Stubs: These techniques replace real components with simplified representations, allowing for targeted testing of specific interactions within the distributed system.

Contract Testing: Defining and verifying contracts (APIs, message formats) between different services ensures that they interact correctly. This verifies that services meet their agreed-upon specifications.

Monitoring Tools: Tools to monitor network traffic, latency, and system performance provide valuable insight into how the distributed system behaves during integration tests. This helps identify performance bottlenecks and communication issues.

Testing across different locations: If the system’s components are geographically dispersed, it’s essential to test it from various locations and simulate real-world network conditions to discover any issues related to network latency or connectivity problems.

The goal is to simulate the real-world environment as much as possible to identify and address integration issues before deployment.
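To illustrate the contract testing idea, here is a minimal hand-rolled check in which the consumer declares the fields and types it relies on and the provider’s response is verified against that declaration. The field names and the `fake_provider_response` function are hypothetical, and dedicated contract testing tools offer far more than this sketch.

```python
# Consumer-side contract: the fields the consumer relies on and their types.
ORDER_CONTRACT = {
    "order_id": str,
    "amount_cents": int,
    "currency": str,
}


def contract_violations(response, contract):
    """Return a list of human-readable problems; an empty list means compliant."""
    problems = []
    for field, expected_type in contract.items():
        if field not in response:
            problems.append(f"missing field: {field}")
        elif not isinstance(response[field], expected_type):
            problems.append(
                f"{field}: expected {expected_type.__name__}, "
                f"got {type(response[field]).__name__}")
    return problems


def fake_provider_response():
    """Stand-in for a call to the real provider service."""
    return {"order_id": "ORD-42", "amount_cents": 2599,
            "currency": "EUR", "note": "extra fields are tolerated"}


print(contract_violations(fake_provider_response(), ORDER_CONTRACT))  # []
print(contract_violations({"order_id": 42, "currency": "EUR"}, ORDER_CONTRACT))
```

Extra fields are deliberately ignored so the provider can evolve without breaking consumers, while missing fields or changed types are reported.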

Q 14. Explain your experience with performance testing within the context of system integration testing.

Performance testing within the context of SIT is crucial to ensure the system meets performance requirements under expected load. My experience involves:

Load Testing: Simulating realistic user loads to assess the system’s behavior under expected conditions. This helps identify performance bottlenecks and ensure the system can handle anticipated traffic.

Stress Testing: Pushing the system beyond its expected limits to determine its breaking point. This helps identify the system’s resilience and capacity.

Endurance Testing: Testing the system over extended periods under sustained load to assess its stability and reliability. This identifies issues that only surface after prolonged usage.

Performance Monitoring Tools: Utilizing tools such as JMeter or LoadRunner to monitor key performance indicators (KPIs) like response times, throughput, and resource utilization. This data provides valuable insights into performance bottlenecks.

Performance testing is usually integrated into the SIT phase to identify performance-related issues early in the development lifecycle. It is highly beneficial to conduct performance testing alongside functional testing during integration rather than as a separate phase.
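The KPIs mentioned above are usually computed by the load testing tool itself, but the arithmetic is simple enough to sketch. The response times and the test duration below are invented sample data, and the percentile uses the nearest-rank method, which is one of several common definitions.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct percent
    of all samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def throughput(request_count, duration_seconds):
    """Requests completed per second."""
    return request_count / duration_seconds


# Hypothetical response times in milliseconds from one test run.
response_times_ms = [120, 95, 110, 300, 105, 98, 102, 130, 99, 101]

print("median:", percentile(response_times_ms, 50))   # 102
print("p95:", percentile(response_times_ms, 95))      # 300
print("throughput:", throughput(len(response_times_ms), 2.0), "req/s")  # 5.0
```

The gap between the median and the 95th percentile is the reason to report percentiles and not just averages: a single slow outlier is invisible in the median but dominates the tail.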

Q 15. How do you ensure traceability between requirements, test cases, and defects?

Ensuring traceability between requirements, test cases, and defects is crucial for effective system integration testing. It’s like building a house – you need to know which brick goes where and why. We achieve this through meticulous documentation and a well-defined traceability matrix.

- Requirement Traceability: Each requirement is uniquely identified (e.g., using a numbering system like REQ-001). This ID is then linked to all related test cases designed to verify that requirement.

- Test Case Traceability: Each test case (TC-001, TC-002, etc.) clearly states which requirement(s) it’s testing. This ensures complete test coverage.

- Defect Traceability: When a defect is found, it’s linked back to the specific test case that revealed it and, ultimately, to the requirement(s) affected. This allows us to quickly identify the root cause and prioritize fixes.

- Tools: We often use requirements management tools (e.g., Jira, DOORS) and test management tools (e.g., TestRail, Zephyr) that facilitate this traceability through automated linking and reporting features. These tools help visualize the relationships between requirements, test cases, and defects, providing a clear picture of the entire testing process.

For example, if a defect is found during the integration testing of a payment gateway (e.g., the system fails to process a transaction), we trace it back to the test case that uncovered the issue (e.g., TC-025 – Test Payment Transaction), and then to the relevant requirements (e.g., REQ-012 – Process successful payment transactions). This ensures that the fix addresses the root cause and doesn’t create new problems elsewhere.
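The trace in this example can be sketched as two lookups. TC-025 and REQ-012 come from the example above; the defect ID `DEF-101` and the dictionary layout are assumptions, since real tools store these links in their own data models.

```python
# Links normally maintained by the test management tool.
test_to_requirements = {
    "TC-025": ["REQ-012"],
}

# Defects record the test case that revealed them (hypothetical defect ID).
defects = {
    "DEF-101": {
        "summary": "System fails to process a transaction",
        "found_by": "TC-025",
    },
}


def trace_defect(defect_id, defects, test_to_requirements):
    """Follow a defect back to its test case and the affected requirements."""
    test_case = defects[defect_id]["found_by"]
    return {
        "defect": defect_id,
        "test_case": test_case,
        "requirements": test_to_requirements.get(test_case, []),
    }


print(trace_defect("DEF-101", defects, test_to_requirements))
```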

Q 16. Describe your experience with different types of integration testing environments (e.g., development, staging, production).

My experience spans various integration testing environments, each serving a distinct purpose in the software development lifecycle. Think of it like a product’s journey from its creation to the market:

- Development Environment: This is the sandbox for developers. Integration testing here is focused on unit and component integration. It’s often less stable but allows for quick iterative testing and early defect detection. We utilize mocking and stubbing techniques extensively to simulate dependencies that are not yet fully implemented.

- Staging Environment: This mirrors the production environment closely, allowing us to perform more realistic integration tests. It’s where we test the interaction between different modules and systems in a near-production setting. The focus here shifts to system-level functionality and performance.

- Production Environment: Testing in the production environment is usually limited to controlled, monitored deployments and performance monitoring. We rarely perform full integration tests here due to the risk of impacting live users. However, we might conduct canary releases or A/B testing to validate functionality in a live setting gradually. This is all done with very strict monitoring and rollback capabilities.

In each environment, the testing strategy and the scope of testing vary to match the level of stability and the risks involved. For example, in the development environment, we might focus on validating individual API calls, while in staging we’d test the entire user flow using the integrated system. The level of automation also differs, with more extensive automation applied to the staging and, to a lesser extent, the development environment.

Q 17. How do you collaborate effectively with developers and other stakeholders during system integration testing?

Effective collaboration is the cornerstone of successful system integration testing. It’s a team sport, not a solo act. My approach emphasizes open communication, clear expectations, and proactive problem-solving.

- Regular Meetings: Frequent meetings (daily stand-ups, weekly status updates) provide opportunities to discuss roadblocks, share progress, and align on priorities. This includes developers, testers, business analysts, and other stakeholders.

- Defect Tracking and Management: Using a centralized defect tracking system (e.g., Jira) ensures that issues are clearly documented, assigned to the appropriate team members, and tracked to resolution. This promotes transparency and accountability.

- Test Case Reviews: Joint reviews of test cases with developers ensure clarity on the expected functionality and improve test case quality. This helps reduce misunderstandings and avoids misinterpretations.

- Proactive Communication: Keeping stakeholders informed of the testing progress and any significant issues is crucial. This can be done through regular reports, email updates, or presentations. A well-defined communication strategy is essential.

I’ve found that fostering a collaborative, open environment where everyone feels comfortable raising concerns and sharing ideas leads to the most effective integration testing process. For instance, if a defect is found, rather than blaming, I work with the developer to understand the root cause and find an effective solution. This collaborative approach speeds up problem resolution and strengthens the overall team dynamics.

Q 18. Explain your experience with continuous integration and continuous delivery (CI/CD) pipelines.

My experience with CI/CD pipelines is extensive. CI/CD automates the process of building, testing, and deploying software. Think of it as an assembly line for software, constantly churning out updated versions.

- Continuous Integration (CI): This involves integrating code changes frequently into a shared repository. Automated build and testing steps are triggered by each code commit. This helps identify integration issues early in the development cycle. I’ve worked with Jenkins, GitLab CI, and Azure DevOps for implementing CI pipelines.

- Continuous Delivery (CD): This extends CI by automating the process of deploying the software to various environments (dev, staging, prod). This allows for faster release cycles and reduces deployment risks. We often employ automated deployment tools and infrastructure-as-code to manage this process.

- Integration Testing in CI/CD: Integration tests are seamlessly integrated into the CI/CD pipeline, ensuring that all code changes undergo rigorous testing before being deployed. This often involves running automated integration tests as part of the build process, with results reported back to the pipeline for analysis. Failing tests will halt the pipeline until fixed.

A well-defined CI/CD pipeline significantly reduces the time it takes to release new software versions. It also decreases the risk of errors during deployment and allows for more frequent releases, delivering value to the customer faster. For example, in a recent project, we were able to deploy new features several times per week thanks to our automated CI/CD pipeline, greatly accelerating feedback loops and ensuring quicker adaptation to changing customer needs.
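The gating behavior described above, where a failing stage halts everything after it, can be illustrated with a toy runner. Real pipelines are defined in the configuration format of the CI tool, not in code like this; the stage names and the lambda stand-ins are hypothetical.

```python
def run_pipeline(stages):
    """Run (name, callable) stages in order and stop at the first failure.

    A stage fails if its callable returns a falsy value or raises.
    """
    executed = []
    for name, step in stages:
        executed.append(name)
        try:
            ok = bool(step())
        except Exception:
            ok = False
        if not ok:
            return {"status": "failed", "failed_stage": name,
                    "executed": executed}
    return {"status": "passed", "failed_stage": None, "executed": executed}


# Hypothetical stages; here the integration tests fail on purpose.
stages = [
    ("build", lambda: True),
    ("unit-tests", lambda: True),
    ("integration-tests", lambda: False),
    ("deploy-staging", lambda: True),
]

outcome = run_pipeline(stages)
print(outcome["status"], "at", outcome["failed_stage"])
print("executed:", outcome["executed"])  # deploy-staging never runs
```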

Q 19. Describe your experience with different types of integration (e.g., database, API, UI).

Integration testing encompasses various types, depending on the system’s architecture. Each type requires a different approach and specific test techniques.

- Database Integration Testing: This focuses on testing the interaction between the application and the database. It includes verifying data integrity, database transactions, and data access performance. We use techniques like schema validation, data comparison, and stored procedure testing.

- API Integration Testing: This involves testing the interaction between different APIs. We use tools like Postman or REST Assured to send requests to APIs and validate the responses, focusing on message formats, response codes, and data transformation.

- UI Integration Testing: This focuses on end-to-end testing of the user interface, ensuring that all components work seamlessly together. We often use automated UI testing tools like Selenium or Cypress to simulate user interactions and validate the system’s behavior.

For instance, in an e-commerce application, database integration testing would verify that order details are correctly stored in the database, API integration testing would check the communication between the order processing API and the payment gateway API, and UI integration testing would validate that the user can successfully place an order through the website interface.
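As a small example of the database case, the sketch below uses Python’s built-in `sqlite3` module with an in-memory database. The `orders` schema and the `place_order` function are hypothetical; a real project would run the same kind of check against its actual database engine.

```python
import sqlite3


def create_schema(conn):
    conn.execute(
        """
        CREATE TABLE orders (
            id INTEGER PRIMARY KEY,
            customer_id TEXT NOT NULL,
            total_cents INTEGER NOT NULL CHECK (total_cents >= 0)
        )
        """
    )


def place_order(conn, customer_id, total_cents):
    """Application code under test: insert one order and return its id."""
    with conn:  # commits on success, rolls back if the insert raises
        cursor = conn.execute(
            "INSERT INTO orders (customer_id, total_cents) VALUES (?, ?)",
            (customer_id, total_cents),
        )
    return cursor.lastrowid


def test_order_is_stored_correctly():
    conn = sqlite3.connect(":memory:")
    create_schema(conn)
    order_id = place_order(conn, "CUST-1", 2599)
    row = conn.execute(
        "SELECT customer_id, total_cents FROM orders WHERE id = ?",
        (order_id,),
    ).fetchone()
    assert row == ("CUST-1", 2599)
    conn.close()


def test_invalid_total_is_rejected():
    conn = sqlite3.connect(":memory:")
    create_schema(conn)
    try:
        place_order(conn, "CUST-1", -1)
    except sqlite3.IntegrityError:
        count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
        assert count == 0  # the failed insert left no row behind
    else:
        raise AssertionError("expected IntegrityError")
    conn.close()


test_order_is_stored_correctly()
test_invalid_total_is_rejected()
```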

Q 20. How do you handle changes in requirements during system integration testing?

Handling changes in requirements during system integration testing requires a flexible and adaptable approach. It’s like adjusting a recipe mid-baking—you need to make changes carefully to avoid ruining the dish.

- Impact Analysis: First, we assess the impact of the changes on the existing test cases. This involves identifying which test cases need to be updated or added to accommodate the new requirements.

- Test Case Updates: We update the affected test cases and ensure that they accurately reflect the new requirements. This may involve adding new test cases, modifying existing ones, or removing obsolete ones.

- Retesting: We retest the impacted areas to ensure that the changes haven’t introduced new defects or broken existing functionality. This may involve rerunning existing test cases or adding new ones.

- Communication: Maintaining open communication with stakeholders is essential to manage expectations regarding the timing and scope of the retesting effort. Transparency is key.

- Configuration Management: A well-defined configuration management system will track the changes, their impact, and the related testing activities, ensuring that no changes are overlooked. This aids in traceability and managing the ripple effect of the changes.

For example, if a requirement changes in the middle of system integration testing, we hold a meeting with the development team and stakeholders to discuss the implications. We then re-prioritize the testing activities, update our test plans and test cases accordingly, and conduct necessary retesting to validate the changes. Careful tracking and documentation ensure that we maintain complete test coverage.

Q 21. How do you measure the effectiveness of your system integration testing efforts?

Measuring the effectiveness of system integration testing involves analyzing various metrics. Think of it as evaluating the health of your software before releasing it to the public.

- Defect Density: This metric measures the number of defects found per unit of size, typically per thousand lines of code (KLOC) or per function point. A lower defect density generally indicates better software quality.

- Test Coverage: This metric measures the percentage of code or requirements covered by the test cases. High test coverage indicates a comprehensive testing effort.

- Defect Severity and Priority: Analyzing the severity and priority of the detected defects provides insight into the criticality of the issues found. High-severity defects need immediate attention.

- Test Execution Time: This measures the time taken to execute the test cases. Reducing test execution time is critical for rapid feedback cycles.

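- Test Case Pass/Fail Rate: This simple metric indicates the overall success of the test suite. A high pass rate suggests high quality, but it should be read alongside test coverage, since a narrow suite can pass while missing defects.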

By tracking these metrics over time, we can identify trends and areas for improvement. For example, if the defect density is consistently high in a particular module, it may signal a need for improved coding practices or more rigorous testing in that area. Regularly analyzing these metrics gives a clear picture of our testing effectiveness and provides opportunities for continuous improvement. We use dashboards and reporting tools to visualize these metrics and communicate their meaning to stakeholders.
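Two of these metrics reduce to simple arithmetic, sketched below. The module names, defect counts, line counts, and the threshold of 1.0 defects per thousand lines are invented for illustration; a sensible threshold depends on the project.

```python
def defect_density(defects, lines_of_code):
    """Defects per thousand lines of code (KLOC)."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return defects / (lines_of_code / 1000)


def pass_rate(passed, executed):
    """Percentage of executed test cases that passed."""
    if executed == 0:
        return 0.0
    return 100 * passed / executed


def modules_over_threshold(module_stats, threshold):
    """Names of modules whose defect density exceeds the threshold."""
    return sorted(
        name for name, (defects, loc) in module_stats.items()
        if defect_density(defects, loc) > threshold
    )


# Hypothetical per-module data: (defects found, lines of code).
module_stats = {
    "payments": (18, 12000),
    "catalog": (4, 20000),
}

print(defect_density(18, 12000))                  # 1.5
print(pass_rate(45, 50))                          # 90.0
print(modules_over_threshold(module_stats, 1.0))  # ['payments']
```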

Q 22. Explain your experience with test data management for integration testing.

Test data management for integration testing is crucial for ensuring accurate and reliable results. It involves the planning, creation, and maintenance of datasets used to exercise the interactions between different system components. Poorly managed test data can lead to inaccurate test results, wasted time, and ultimately, system failures in production.

My approach typically involves several key steps:

- Requirements Gathering: Identifying the specific data elements and combinations needed to thoroughly test all integration points.

- Data Creation: This might involve using scripting (e.g., Python, SQL) to generate synthetic data that meets the requirements, or carefully selecting and masking subsets of production data to maintain privacy and security.

- Data Subsetting and Masking: Creating smaller, manageable datasets that accurately represent the production environment but protect sensitive information. Techniques include data masking (e.g., replacing real names with pseudonyms), anonymization, and data encryption.

- Data Refreshment: Regularly updating the test data to reflect changes in the production system. This ensures the tests remain relevant and effective.

- Data Management Tooling: Utilizing specialized test data management tools to streamline the process, including data creation, masking, and version control.

For example, in a recent project integrating an e-commerce platform with a payment gateway, we used a combination of synthetic data for order details (e.g., randomly generated product IDs, quantities, and addresses) and masked production data for customer IDs to realistically simulate real-world transaction flows without compromising sensitive customer information.
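A minimal sketch of both techniques follows: keyed hashing to pseudonymize customer IDs and seeded random generation for synthetic orders. It is not the tooling from the project described above. The `CUST-` prefix, the field names, and the value ranges are assumptions, and a real setup would keep the secret key out of source code.

```python
import hashlib
import hmac
import random


def pseudonymize(customer_id, secret):
    """Replace a real customer ID with a stable pseudonym.

    The same input always maps to the same pseudonym, so relationships
    between records survive masking, but the original value cannot be
    read back from the result.
    """
    digest = hmac.new(secret, customer_id.encode("utf-8"),
                      hashlib.sha256).hexdigest()
    return f"CUST-{digest[:12]}"


def synthetic_order(rng, customer_pseudonym):
    """Generate one synthetic order with random but plausible values."""
    return {
        "customer": customer_pseudonym,
        "product_id": f"P{rng.randint(1000, 9999)}",
        "quantity": rng.randint(1, 5),
    }


secret = b"test-only-secret"  # hypothetical key for test environments only
rng = random.Random(42)       # fixed seed keeps test data reproducible

masked = pseudonymize("alice@example.com", secret)
orders = [synthetic_order(rng, masked) for _ in range(3)]
for order in orders:
    print(order)
```

Seeding the generator means a failing test can be rerun against exactly the same data.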

Q 23. How do you document the results of your system integration testing?

Documenting system integration testing results is essential for tracking progress, identifying issues, and demonstrating the overall system’s readiness for deployment. I use a multi-faceted approach that combines various techniques for effective communication and analysis.

- Test Execution Reports: Automated tools generate reports detailing the execution of test cases, including pass/fail status, execution time, and detailed error messages if any.

- Defect Tracking System: A centralized system (like Jira or Bugzilla) is used to log, track, and manage identified defects. Each defect includes a detailed description, steps to reproduce, severity, priority, and assigned developer.

- Test Summary Reports: Concise reports summarizing the overall test results, including metrics like test coverage, pass rate, and outstanding defects. These reports are typically distributed to stakeholders at regular intervals.

- Test Traceability Matrix: A document linking test cases to requirements, ensuring all requirements have been adequately tested.

- Test Logs and Screenshots: Detailed logs capture system activity during test execution, and screenshots help visualize issues. These are particularly useful when diagnosing complex problems.

The goal is to create a clear and comprehensive record that enables easy review and analysis by various stakeholders, from developers and testers to project managers and clients.
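To make the summary report and traceability matrix more concrete, here is a small Python sketch that derives both from a list of test results. The record layout (`test_id`, `requirement`, `status`) and the requirement IDs are assumptions for illustration; real tools export richer data.

```python
def build_traceability(results, requirements):
    """Map each requirement to the (test_id, status) pairs that exercise it."""
    matrix = {req: [] for req in requirements}
    for r in results:
        matrix.setdefault(r["requirement"], []).append((r["test_id"], r["status"]))
    return matrix


def summarize(results, requirements):
    """Produce headline numbers for a test summary report."""
    matrix = build_traceability(results, requirements)
    executed = len(results)
    passed = sum(1 for r in results if r["status"] == "pass")
    return {
        "executed": executed,
        "passed": passed,
        "pass_rate_pct": round(100 * passed / executed, 1) if executed else 0.0,
        # Requirements with no linked test case are gaps in coverage.
        "untested_requirements": sorted(req for req, tests in matrix.items() if not tests),
    }


# Hypothetical sample data
SAMPLE_RESULTS = [
    {"test_id": "IT-001", "requirement": "REQ-1", "status": "pass"},
    {"test_id": "IT-002", "requirement": "REQ-1", "status": "fail"},
    {"test_id": "IT-003", "requirement": "REQ-2", "status": "pass"},
]
SAMPLE_REQUIREMENTS = ["REQ-1", "REQ-2", "REQ-3"]

if __name__ == "__main__":
    print(summarize(SAMPLE_RESULTS, SAMPLE_REQUIREMENTS))
```

Listing untested requirements explicitly is the main point of the matrix: a high pass rate says little if some requirements have no test at all.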

Q 24. Describe a time when you had to troubleshoot a complex integration issue.

During the integration of a new CRM system with an existing ERP system, we encountered a perplexing issue where customer order data wasn’t transferring correctly. Initially, the error messages were vague and unhelpful.

My troubleshooting approach involved the following steps:

- Isolating the Problem: We systematically eliminated potential causes by testing different parts of the integration pipeline. We started by confirming the order data was correctly generated by the CRM.

- Analyzing Logs and Traces: We meticulously examined the logs from both the CRM and ERP systems, focusing on the time period when the data transfer failure occurred. This revealed inconsistent timestamps, suggesting a clock synchronization problem.

- Debugging and Code Review: After narrowing the problem down to a timing issue, we reviewed the integration code and discovered a missing configuration setting related to clock synchronization between the systems. We also added logging statements to make the data flow easier to trace in future debugging sessions.

- Verification and Retesting: After correcting the configuration, we thoroughly retested the integration process to confirm the issue was resolved. This also involved verifying data integrity and consistency.

This experience highlighted the importance of detailed logging, methodical troubleshooting, and close collaboration between development and testing teams in resolving complex integration issues.
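The log analysis step can be partly automated. The sketch below estimates the clock offset between two systems by comparing timestamps for the same order in each log. It assumes a simplified, hypothetical log format of `<ISO-8601 timestamp> <order_id>` per line; real CRM and ERP logs would need their own parsers.

```python
from datetime import datetime
from statistics import median


def parse_log(lines):
    """Parse lines of the hypothetical form '<ISO-8601 timestamp> <order_id>'."""
    events = {}
    for line in lines:
        ts, order_id = line.split()
        events[order_id] = datetime.fromisoformat(ts)
    return events


def estimate_offset_seconds(sender_lines, receiver_lines):
    """Median of (receiver time - sender time) over orders seen in both logs.

    Returns None when the logs share no order IDs.
    """
    sent, received = parse_log(sender_lines), parse_log(receiver_lines)
    shared = sorted(sent.keys() & received.keys())
    deltas = [(received[o] - sent[o]).total_seconds() for o in shared]
    return median(deltas) if deltas else None


# Hypothetical sample logs: the receiver appears to log each order
# roughly 30 seconds BEFORE the sender sent it.
CRM_LOG = [
    "2024-03-01T10:00:00 ORD-1",
    "2024-03-01T10:05:00 ORD-2",
    "2024-03-01T10:10:00 ORD-3",
]
ERP_LOG = [
    "2024-03-01T09:59:30 ORD-1",
    "2024-03-01T10:04:31 ORD-2",
    "2024-03-01T10:09:29 ORD-3",
]

if __name__ == "__main__":
    print(estimate_offset_seconds(CRM_LOG, ERP_LOG))
```

A negative offset is a strong hint that the clocks disagree, because a message cannot be received before it is sent. A consistently large positive value is less conclusive, since it may simply reflect transfer latency.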

Q 25. What are some common metrics used to track the progress of system integration testing?

Several metrics are crucial for tracking the progress of system integration testing. These metrics provide insights into test effectiveness, efficiency, and the overall quality of the integrated system.

- Test Case Execution Rate: The number of test cases executed per day or per iteration. This helps monitor the pace of testing and identify potential bottlenecks.

- Test Pass Rate: The percentage of executed test cases that pass. A high pass rate suggests good integration quality, provided test coverage is adequate.

- Defect Density: The number of defects found per unit of code (for example, per thousand lines of code) or per test case. This helps assess the quality of the code and the effectiveness of the testing process.

- Test Coverage: The extent to which the integration points have been tested. This metric ensures comprehensive testing and helps minimize the risk of undiscovered defects.

- Test Completion Rate: The percentage of planned test cases that have been completed. This helps track overall progress and identify potential delays.

By tracking these metrics throughout the testing lifecycle, we gain valuable insights into the health of the integration and can proactively address potential problems.
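Most of these metrics are simple ratios, as the following Python sketch shows. The `CycleStats` fields and the sample numbers are assumptions for illustration, and defect density is expressed here per executed test case, which is only one of the possible definitions.

```python
from dataclasses import dataclass


@dataclass
class CycleStats:
    """Raw counts for one test cycle; all field names are hypothetical."""
    planned: int
    executed: int
    passed: int
    defects_found: int
    integration_points_total: int
    integration_points_tested: int


def pct(part: int, whole: int) -> float:
    """Percentage rounded to one decimal place; 0.0 when the denominator is zero."""
    return round(100.0 * part / whole, 1) if whole else 0.0


def compute_metrics(c: CycleStats) -> dict:
    return {
        "completion_rate_pct": pct(c.executed, c.planned),
        "pass_rate_pct": pct(c.passed, c.executed),
        "coverage_pct": pct(c.integration_points_tested, c.integration_points_total),
        "defects_per_executed_test": round(c.defects_found / c.executed, 2) if c.executed else 0.0,
    }


if __name__ == "__main__":
    stats = CycleStats(planned=200, executed=150, passed=120, defects_found=18,
                       integration_points_total=40, integration_points_tested=30)
    print(compute_metrics(stats))
```

Note that pass rate is computed against executed tests and completion rate against planned tests. Mixing the two denominators is a common source of misleading reports.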

Q 26. How do you ensure the security of your integration testing environment?

Security of the integration testing environment is paramount. Compromised test data or unauthorized access to the test environment could have severe consequences.

My approach includes:

- Network Segmentation: Isolating the test environment from the production network to prevent unauthorized access or data breaches.

- Access Control: Implementing strict access control measures using role-based permissions. Only authorized personnel have access to the test environment and data.

- Data Encryption: Encrypting sensitive data both in transit and at rest to protect it from unauthorized access, even if the system is compromised.

- Regular Security Audits: Conducting periodic security audits and vulnerability scans to identify and address potential security weaknesses.

- Secure Configuration Management: Using configuration management tools and processes to ensure the test environment is properly configured and secured.

- Vulnerability Scanning: Utilizing tools like Nessus or OpenVAS to scan for vulnerabilities before testing begins.

A layered security approach, combining several of these techniques, provides robust protection for the integration testing environment.
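Of the measures above, role-based access control is the easiest to show in a few lines. The sketch below is a deliberately minimal Python illustration: the role names and actions are hypothetical, and in practice these rules would normally live in the platform's identity and access management system rather than in test code.

```python
# Hypothetical roles and the actions each one may perform in the test environment.
ROLE_PERMISSIONS = {
    "tester": {"run_tests", "read_test_data"},
    "test_lead": {"run_tests", "read_test_data", "refresh_test_data"},
    "env_admin": {"run_tests", "read_test_data", "refresh_test_data", "change_config"},
}


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: unknown roles and unlisted actions are rejected."""
    return action in ROLE_PERMISSIONS.get(role, set())


def require(role: str, action: str) -> None:
    """Raise PermissionError when the role lacks the requested permission."""
    if not is_allowed(role, action):
        raise PermissionError(f"role {role!r} may not perform {action!r}")


if __name__ == "__main__":
    require("test_lead", "refresh_test_data")  # permitted, returns silently
    print(is_allowed("tester", "change_config"))
```

The important design choice is the default: anything not explicitly granted is refused, so a new role or a mistyped action name fails closed rather than open.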

Q 27. What is your experience with different scripting languages used in automation testing?

I have extensive experience with various scripting languages for automation testing, selecting the most appropriate language based on the project’s needs and existing infrastructure.

- Python: A highly versatile and widely used language for test automation. Its rich libraries (like `unittest`, `pytest`, `requests`) make it ideal for creating robust and maintainable test scripts. I often use Python for API testing and system-level integrations.

- JavaScript (with tools like Cypress or Selenium WebDriver’s JavaScript bindings): Excellent for UI testing, particularly for web applications. These tools enable efficient scripting and easy interaction with browser elements.

- Shell Scripting (Bash, PowerShell): Ideal for automating tasks related to environment setup, data manipulation, and execution of other test scripts.

- Groovy (with SoapUI): Well-suited for API testing, particularly with SOAP and REST services. SoapUI provides a user-friendly interface alongside the flexibility of Groovy for complex automation.

For example, I used Python with the `requests` library to automate API testing for a recent microservices integration project. The scripts sent HTTP requests to various microservices, verified the responses, and generated detailed reports. This approach helped ensure the smooth integration of the microservices with minimal manual intervention.
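A stripped-down version of such an API check might look like the sketch below. To keep it self-contained and runnable offline, it uses only the standard library: `urllib.request` stands in for `requests`, and a small local stub server stands in for a real microservice. The `/stock/<sku>` route and the response fields are hypothetical.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class StubInventoryHandler(BaseHTTPRequestHandler):
    """Stand-in for a microservice; the /stock/<sku> route is hypothetical."""

    def do_GET(self):
        if self.path.startswith("/stock/"):
            sku = self.path.rsplit("/", 1)[-1]
            body = json.dumps({"sku": sku, "available": 7}).encode("utf-8")
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, *args):
        pass  # silence per-request logging


def check_stock_endpoint(base_url: str, sku: str) -> dict:
    """Send a GET request and verify status, content type, and payload shape."""
    # An empty ProxyHandler makes the request ignore any system proxy settings.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(f"{base_url}/stock/{sku}", timeout=5) as resp:
        assert resp.status == 200
        assert resp.headers.get("Content-Type") == "application/json"
        payload = json.loads(resp.read())
    assert payload["sku"] == sku
    assert isinstance(payload["available"], int)
    return payload


def run_against_stub() -> dict:
    """Start the stub on a free local port, run the check, then shut down."""
    server = HTTPServer(("127.0.0.1", 0), StubInventoryHandler)  # port 0 = any free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        return check_stock_endpoint(f"http://127.0.0.1:{server.server_port}", "ABC-123")
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print(run_against_stub())
```

Against a real service, `check_stock_endpoint` would be pointed at the service's base URL, and with `requests` installed the request itself reduces to a single `requests.get(...)` call. Checking the payload's shape as well as the status code catches contract changes that a status check alone would miss.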

Q 28. How do you handle conflicts between different teams involved in integration testing?

Conflicts between teams involved in integration testing are inevitable, especially in large projects. Effective communication and collaboration strategies are essential for mitigating these conflicts.

My approach involves:

- Clearly Defined Roles and Responsibilities: Establishing clear roles and responsibilities for each team involved in the integration testing process from the outset. This minimizes confusion and overlapping efforts.

- Regular Communication and Meetings: Holding frequent meetings to discuss progress, address issues, and resolve conflicts promptly. A collaborative platform (like Slack or MS Teams) can facilitate continuous communication.

- Shared Test Plan and Repository: Using a shared test plan and version-controlled repository ensures all teams have access to the same information, reducing the likelihood of inconsistencies.

- Mediation and Conflict Resolution: In case of disagreements, acting as a neutral mediator to facilitate discussions and find mutually agreeable solutions. This might involve facilitating a compromise between conflicting priorities or proposing alternative solutions.

- Escalation Process: Establishing a clear escalation process for unresolved conflicts that ensures senior management can intervene when necessary.

Effective communication, proactive conflict management, and clearly defined processes help ensure a smooth and efficient integration testing process despite the involvement of multiple teams.

Key Topics to Learn for Systems Integration and Test Interview

- Understanding System Architectures: Gain a firm grasp of different system architectures (microservices, monolithic, etc.) and their impact on integration and testing strategies.

- Test Planning and Strategy: Learn to develop comprehensive test plans, including scope definition, test case design, and resource allocation. Practical application: Create a test plan outline for a hypothetical system.

- Integration Testing Techniques: Master various integration testing approaches like top-down, bottom-up, and big-bang integration. Understand their strengths and weaknesses.

- Test Automation Frameworks: Familiarize yourself with popular automation frameworks (Selenium, Appium, etc.) and their application in automating integration tests.

- API Testing: Develop proficiency in testing APIs using tools like Postman or REST Assured. Understand concepts like RESTful APIs and API documentation.

- Data Management and Test Data: Learn strategies for managing and preparing test data effectively. Understand the importance of data integrity and security in testing.

- Defect Tracking and Reporting: Master the use of defect tracking systems (Jira, Bugzilla) and learn how to effectively report and track bugs throughout the integration testing process.

- Performance and Load Testing: Understand the basics of performance and load testing and how to identify bottlenecks in integrated systems.

- Security Testing in Integration: Learn about security vulnerabilities that can arise during integration and how to test for them.

- Continuous Integration/Continuous Delivery (CI/CD): Understand the principles of CI/CD and how integration testing fits into the pipeline.

Next Steps

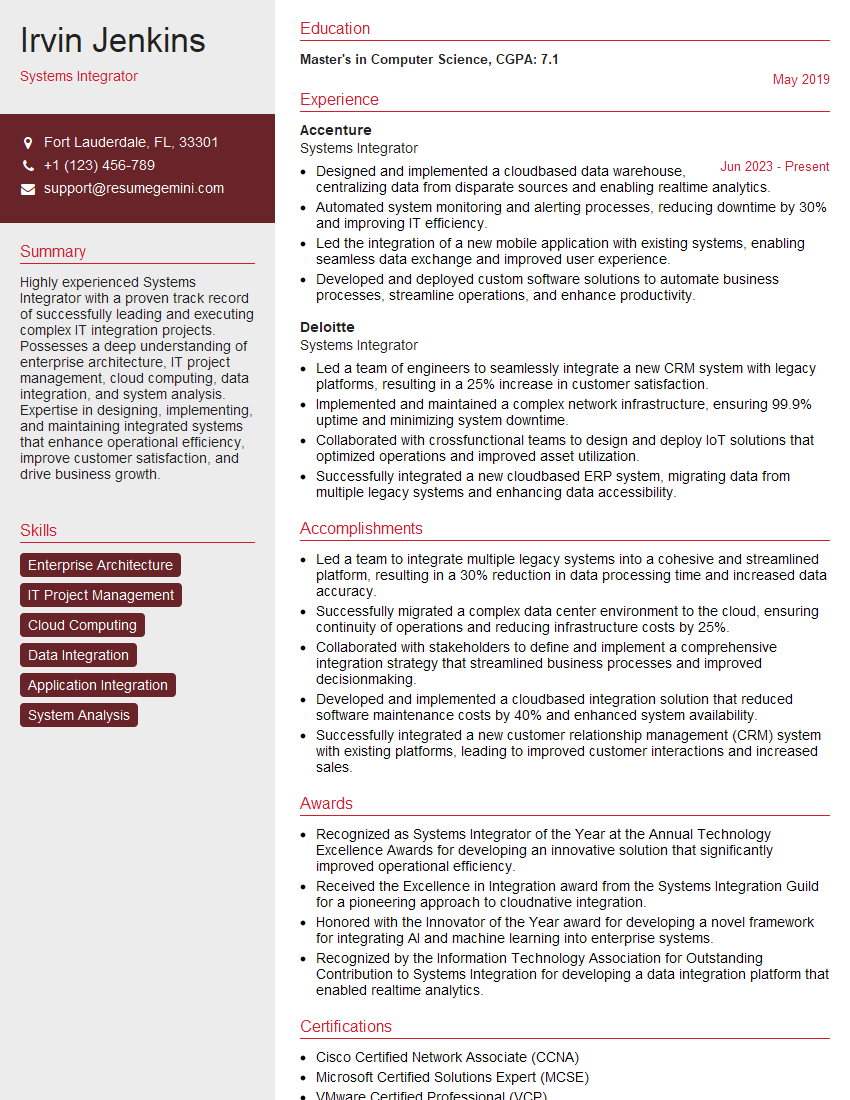

Mastering Systems Integration and Test is crucial for a successful and rewarding career in software development and related fields. It demonstrates a deep understanding of the software development lifecycle and opens doors to senior roles with increased responsibility and compensation. To significantly enhance your job prospects, focus on building a strong, ATS-friendly resume that highlights your skills and experience effectively. ResumeGemini is a trusted resource to help you craft a professional and impactful resume tailored to the demands of the job market. Examples of resumes specifically designed for Systems Integration and Test professionals are available to guide you.
