Feeling uncertain about what to expect in your upcoming interview? We’ve got you covered! This blog highlights the most important interview questions on proficiency in Digital Audio Workstations (DAWs) and provides actionable advice to help you stand out as the ideal candidate. Let’s pave the way for your success.

Questions Asked in a Digital Audio Workstation (DAW) Proficiency Interview

Q 1. What DAWs are you proficient in and what is your level of expertise with each?

My core proficiency lies in Logic Pro X and Ableton Live. I’d consider myself an expert in Logic Pro X, having used it professionally for over eight years across diverse projects, from orchestral scores to electronic music production. My Ableton Live skills are at an advanced level; I’m highly proficient in its workflow and its unique strengths in live performance and electronic music composition. I also possess working knowledge of Pro Tools, mainly for collaborative projects and industry-standard file compatibility.

Q 2. Explain the process of setting up a new project in your preferred DAW.

Setting up a new project in Logic Pro X, my preferred DAW, is a straightforward yet crucial process. First, I choose the sample rate (typically 44.1kHz or 48kHz, depending on the project’s requirements and target platform) and bit depth (usually 24-bit, which gives ample dynamic range for recording). A higher sample rate such as 96kHz captures a wider frequency range, but it also doubles the file size compared with 48kHz. Next, I set the tempo, often starting with a default value and adjusting it later if needed. I then create audio tracks for instruments and vocals, and software instrument (MIDI) tracks for virtual instruments played from a MIDI controller. Finally, I check the routing to the Stereo Out, the master channel Logic creates automatically, and set up any buses I’ll need for final mixing and mastering. This process gives the project a clean and efficient foundation.
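To put numbers on the sample-rate and bit-depth trade-off, here is a small Python sketch that estimates the size of uncompressed PCM audio (as stored in WAV or AIFF files) and the theoretical dynamic range of a given bit depth. It assumes plain PCM with no header or metadata overhead, and the function names are illustrative rather than taken from any DAW.

```python
import math


def pcm_size_mb(sample_rate_hz: int, bit_depth: int, channels: int, seconds: float) -> float:
    """Approximate size of uncompressed PCM audio in megabytes (1 MB = 1,000,000 bytes).

    Ignores file-header and metadata overhead.
    """
    bytes_per_second = sample_rate_hz * (bit_depth // 8) * channels
    return bytes_per_second * seconds / 1_000_000


def theoretical_dynamic_range_db(bit_depth: int) -> float:
    """Ideal quantization dynamic range, 20 * log10(2 ** bits): about 6.02 dB per bit."""
    return 20 * math.log10(2 ** bit_depth)


for rate in (44_100, 48_000, 96_000):
    size = pcm_size_mb(rate, bit_depth=24, channels=2, seconds=60)
    print(f"{rate / 1000:g} kHz, 24-bit stereo: {size:.1f} MB per minute")
for bits in (16, 24):
    print(f"{bits}-bit: about {theoretical_dynamic_range_db(bits):.0f} dB theoretical dynamic range")
```

Because size scales linearly with sample rate, a minute of 24-bit stereo at 96 kHz takes about 34.6 MB versus about 17.3 MB at 48 kHz.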

Q 3. Describe your workflow for mixing a typical track.

My mixing workflow is iterative and focuses on achieving a balanced and cohesive sound. I begin with gain staging, setting appropriate levels across all tracks to prevent clipping and preserve headroom. Next, I address individual tracks, applying EQ to sculpt the frequency spectrum, compression to control dynamics, and, where needed, effects like reverb or delay for spatial context. For example, I might use a high-pass filter on guitars or vocals to clear low-end buildup that muddies the bass, or a compressor on vocals to even out their volume variations. Once the individual tracks are balanced, I focus on the overall mix, using EQ and compression on the master bus to refine the final sound. Throughout this process, I listen on different playback systems (headphones, studio monitors) to ensure consistency, making frequent A/B comparisons and adjustments until I achieve the desired sound.
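As a rough illustration of what a high-pass filter does, the sketch below implements a simple first-order (6 dB per octave) high-pass filter over a list of floating-point samples. EQ plugins generally offer steeper and more refined filter designs; the 100 Hz cutoff and the test tones are assumptions chosen only for illustration.

```python
import math


def high_pass(samples, cutoff_hz, sample_rate_hz):
    """First-order high-pass filter: attenuates content below cutoff_hz at 6 dB/octave."""
    rc = 1.0 / (2 * math.pi * cutoff_hz)  # time constant of the equivalent RC circuit
    dt = 1.0 / sample_rate_hz
    alpha = rc / (rc + dt)
    filtered = []
    prev_in = prev_out = 0.0
    for x in samples:
        # The output follows changes in the input; steady, slow content decays away.
        y = alpha * (prev_out + x - prev_in)
        filtered.append(y)
        prev_in, prev_out = x, y
    return filtered


rate = 48_000
rumble = [math.sin(2 * math.pi * 20 * n / rate) for n in range(rate)]       # 20 Hz rumble
presence = [math.sin(2 * math.pi * 2_000 * n / rate) for n in range(rate)]  # 2 kHz content
print(max(map(abs, high_pass(rumble, 100, rate)[rate // 2:])))    # well below 1.0
print(max(map(abs, high_pass(presence, 100, rate)[rate // 2:])))  # close to 1.0
```

The 20 Hz tone comes out at roughly a fifth of its original level, while the 2 kHz tone passes almost unchanged.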

Q 4. How do you handle latency issues in your DAW?

Latency, the delay between an audio signal entering the system and being heard at the output, is a common issue in DAWs, especially when using CPU-intensive plugins or external effects. I handle it primarily by adjusting the buffer size in my DAW’s audio settings. Smaller buffers reduce latency but increase CPU load, potentially causing crackles or dropouts, so I experiment to find the smallest buffer that still allows smooth playback. Using low-latency plugins and freeing up processing power (e.g., closing unnecessary applications) helps the system run reliably at smaller buffer sizes. In extreme cases, I might turn to hardware solutions, such as an external audio interface with dedicated low-latency DSP processing.
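The buffer-size trade-off is simple arithmetic: a buffer of N samples takes N divided by the sample rate seconds to fill. The sketch below assumes one input and one output buffer plus an optional, hypothetical converter delay; real round-trip figures depend on the interface and driver, which often add safety buffers of their own.

```python
def buffer_latency_ms(buffer_size: int, sample_rate_hz: int) -> float:
    """Time needed to fill one audio buffer, in milliseconds."""
    return buffer_size / sample_rate_hz * 1000


def rough_round_trip_ms(buffer_size: int, sample_rate_hz: int, converter_ms: float = 0.0) -> float:
    """Very rough round trip: one input buffer, one output buffer, plus converter delay.

    Real drivers often add extra buffering, so measured figures are usually higher.
    """
    return 2 * buffer_latency_ms(buffer_size, sample_rate_hz) + converter_ms


for size in (64, 128, 256, 512, 1024):
    print(f"{size:>4} samples at 48 kHz: {buffer_latency_ms(size, 48_000):5.2f} ms per buffer, "
          f"~{rough_round_trip_ms(size, 48_000):5.2f} ms round trip")
```

At 48 kHz a 128-sample buffer lasts about 2.7 ms, while a 1024-sample buffer lasts about 21.3 ms, which is why small buffers suit recording.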

Q 5. What are your preferred plugins and why?

My plugin choices often depend on the specific project, but some favorites include Waves plugins (especially their compressors and EQs for their versatility and precision), FabFilter Pro-Q 3 (for its intuitive and powerful EQ capabilities), and ValhallaRoom (for its realistic reverb emulations). I appreciate Waves for their ability to accurately model classic hardware, while FabFilter excels in its clean and efficient design. ValhallaRoom provides exceptional quality without the heavy CPU load sometimes associated with other reverb plugins. Ultimately, plugin selection is about finding tools that effectively contribute to the creative process and achieve the desired sound.

Q 6. Explain the difference between destructive and non-destructive editing.

Destructive editing permanently alters the original audio file, while non-destructive editing allows modifications without permanently changing the source file. Imagine editing a photo: destructive editing is like directly altering the image pixels; non-destructive editing is like applying layers or filters that can be adjusted or removed later. In DAWs, destructive editing might involve permanently normalizing or trimming an audio file, while non-destructive editing uses region edits, plugins, or automation that change what you hear without affecting the original audio data. Non-destructive editing offers greater flexibility and control, allowing for experimentation and revision.
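The difference can be sketched in code: a non-destructive region keeps its source audio untouched and stores edits as a list that is applied only when the audio is rendered, so removing an edit restores the original sound. This is a simplified illustration that assumes audio is a short sequence of float samples; the `Region` class and `normalize` helper are hypothetical names, not any DAW's internals.

```python
from dataclasses import dataclass, field


def normalize(peak=1.0):
    """Edit that scales audio so its loudest sample reaches `peak`."""
    def apply(audio):
        loudest = max((abs(s) for s in audio), default=0.0)
        return list(audio) if loudest == 0 else [s * peak / loudest for s in audio]
    return apply


@dataclass
class Region:
    source: tuple                               # original audio; never modified
    edits: list = field(default_factory=list)   # edit functions, applied in order

    def render(self):
        """Apply the edit list to a copy of the source audio."""
        audio = list(self.source)
        for edit in self.edits:
            audio = edit(audio)
        return audio


region = Region(source=(0.1, -0.25, 0.5))
region.edits.append(normalize())
print(region.render())  # [0.2, -0.5, 1.0]
region.edits.pop()      # "undo" the normalize by removing it from the edit list
print(region.render())  # [0.1, -0.25, 0.5]
```

A destructive normalize would instead overwrite `source` with the scaled values, leaving nothing to return to.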

Q 7. How do you manage large audio projects efficiently?

Managing large audio projects efficiently requires a structured approach. I organize tracks into folders within my DAW, with separate folders for different groups of tracks (e.g., drums, vocals, instruments). I also freeze tracks (temporarily rendering them to audio to reduce CPU load) and bounce down sections of the project into smaller, manageable files. I regularly consolidate and save my project, and I back up my files to an external drive. Archiving unused files and choosing appropriate audio formats further streamlines the process. This methodical organization keeps even large, complex projects manageable and helps prevent problems with project size and performance.

Q 8. Describe your experience with MIDI editing and sequencing.

MIDI editing and sequencing are fundamental to music production. MIDI (Musical Instrument Digital Interface) data doesn’t contain actual audio; instead, it’s a set of instructions telling a synthesizer or sampler what notes to play, how loud, and for how long. My experience encompasses a wide range of tasks, from basic note input and editing to complex automation and layering of MIDI tracks.

- Note Entry and Editing: I’m proficient in using various input methods, including keyboards, MIDI controllers, and drawing notes directly onto the piano roll within my DAW (primarily Ableton Live and Logic Pro X). This involves precise timing adjustments, velocity manipulation for dynamic expression, and the creation of complex rhythmic patterns.

- MIDI Sequencing: I’m skilled in creating sophisticated musical arrangements using MIDI sequencing. This involves arranging MIDI tracks, building sections and song structures, and applying techniques such as quantization for tight timing, humanization for a more natural feel, and note slicing. For instance, I recently sequenced a complex drum pattern for a hip-hop track, using different velocity layers to add subtle variations in the snare hits.

- Working with MIDI Controllers: I have extensive experience using various MIDI controllers, from simple keyboards to advanced control surfaces offering real-time control over multiple parameters. This allows for expressive performance and an intuitive workflow.

- Advanced MIDI Techniques: My skills include working with MIDI effects such as arpeggiators and performance controls such as pitch bend and the modulation wheel, as well as using advanced features like automation clips to dynamically change MIDI parameters throughout a song.

In essence, my proficiency in MIDI editing and sequencing allows me to craft compelling and nuanced musical compositions, bringing ideas from my head into a tangible form within my DAW.
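Quantization and humanization, mentioned above, can be sketched in a few lines. This assumes notes are stored as (start tick, velocity) pairs at 480 ticks per quarter note; the function names, the 75% strength, and the random ranges are illustrative assumptions rather than how any particular DAW implements these features.

```python
import random

PPQ = 480  # ticks per quarter note (an assumed, commonly used resolution)


def quantize(start_tick, grid_ticks, strength=1.0):
    """Move a note start toward the nearest grid line; strength 1.0 snaps it fully."""
    nearest = round(start_tick / grid_ticks) * grid_ticks
    return round(start_tick + (nearest - start_tick) * strength)


def humanize(start_tick, velocity, timing_range=10, velocity_range=8, rng=random):
    """Nudge timing and velocity by small random amounts.

    Velocity is kept within 1-127, since a note-on with velocity 0 acts as a note-off.
    """
    new_start = max(0, start_tick + rng.randint(-timing_range, timing_range))
    new_velocity = min(127, max(1, velocity + rng.randint(-velocity_range, velocity_range)))
    return new_start, new_velocity


# Illustrative (start tick, velocity) pairs for four loosely played sixteenth notes.
notes = [(5, 100), (118, 96), (247, 110), (362, 90)]
sixteenth = PPQ // 4
rng = random.Random(42)  # seeded so the "human" variation is repeatable
tightened = [(quantize(start, sixteenth, strength=0.75), vel) for start, vel in notes]
loosened = [humanize(start, vel, rng=rng) for start, vel in tightened]
print(tightened)
print(loosened)
```

Partial-strength quantization keeps some of the original feel, which is often a middle ground between rigid grid timing and the raw performance.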

Q 9. What is your approach to audio restoration?

Audio restoration is a meticulous process aiming to improve the quality of degraded audio recordings. My approach is methodical, focusing on identifying the types of noise and damage present and employing appropriate techniques to minimize their impact. It’s often an iterative process, requiring careful listening and adjustment.

- Noise Reduction: I use spectral editing and noise reduction plugins (e.g., iZotope RX, Waves plugins) to identify and remove various types of noise, such as hiss, hum, crackle, and clicks. This usually involves analyzing the noise profile and creating a noise print for effective reduction. I always prioritize preserving the integrity of the original audio, striking a balance between noise reduction and maintaining the sonic character.

- Click and Crackle Repair: I employ tools designed to identify and repair individual clicks and crackles, often using spectral editing or sophisticated repair algorithms. These tools can be surprisingly effective in restoring the clarity of older recordings.

- Declicking and De-essing: Specific tools tackle these common issues. Declicking algorithms can intelligently remove sudden, sharp noises, while de-essing reduces harsh sibilance (hissing sounds) in vocals.

- Dynamic Range Control: Careful application of compression and limiting can help even out the dynamics of a damaged recording, making quieter parts audible while reducing the impact of loud peaks.

- EQ and Spectral Editing: EQ is used to address frequency imbalances and remove unwanted resonances. Spectral editing offers even finer control, allowing for precise removal of specific frequencies or artifacts.

My approach combines automated and manual techniques, always exercising careful judgment to maintain the sonic integrity of the restored audio.
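Dedicated restoration tools use far more sophisticated detection, but the basic idea behind repairing a single-sample click can be sketched simply: find samples that jump sharply away from both neighbors in the same direction, and replace them by interpolating between those neighbors. The threshold value and the assumption that each click lasts exactly one sample are simplifications for illustration.

```python
def repair_clicks(samples, threshold=0.3):
    """Crude declicker for one-sample spikes.

    Returns (repaired_samples, click_indices). A sample is treated as a click when
    it jumps more than `threshold` away from BOTH neighbors in the same direction.
    """
    repaired = list(samples)
    clicks = []
    for i in range(1, len(samples) - 1):
        jump_in = samples[i] - samples[i - 1]
        jump_out = samples[i] - samples[i + 1]
        # Same sign on both jumps means an isolated peak or trough, not a steep ramp.
        if abs(jump_in) > threshold and abs(jump_out) > threshold and jump_in * jump_out > 0:
            repaired[i] = (samples[i - 1] + samples[i + 1]) / 2  # linear interpolation
            clicks.append(i)
    return repaired, clicks


audio = [0.0, 0.1, 0.9, 0.1, 0.0, -0.1]
repaired, clicks = repair_clicks(audio)
print(clicks)    # [2]
print(repaired)  # [0.0, 0.1, 0.1, 0.1, 0.0, -0.1]
```

Requiring a jump on both sides keeps legitimate fast transients, which rise on one side only, from being flattened.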

Q 10. How do you troubleshoot common DAW issues?

Troubleshooting DAW issues is a crucial skill. My approach is systematic, starting with the simplest solutions and progressing to more complex ones. I always make a backup of my project before attempting any significant troubleshooting steps.

- Check the Obvious: I first ensure all connections are secure (audio interface, MIDI controllers). I check if the correct audio device is selected within the DAW settings.

- Restart the DAW and Computer: Often, a simple restart resolves temporary software glitches.

- Driver Updates: Outdated drivers are a frequent source of problems. I check for updates for my audio interface and other relevant hardware.

- Plugin Conflicts: Faulty or incompatible plugins can cause crashes or instability. I’ll disable plugins one by one to isolate the culprit.

- Disk Space: Insufficient hard drive space can lead to performance issues. I free up space if needed.

- DAW Preferences: Sometimes, resetting the DAW preferences to their default values resolves corruption issues.

- Reinstallation: As a last resort, I might reinstall the DAW software.

- Community Forums and Support: I leverage online communities and the DAW manufacturer’s support resources if I encounter unusual or persistent problems.

Through experience, I’ve developed a troubleshooting ‘toolkit’ that allows me to resolve most issues quickly.
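Disabling plugins one at a time works well for a handful of plugins; with dozens, a halving (bisection) search, offered here as a variant of that approach, usually needs far fewer test passes. The sketch assumes exactly one plugin causes a reproducible problem, and `problem_occurs` is a hypothetical test you would perform by hand or script: enable only the listed plugins, play the session, and report whether the issue appears.

```python
def find_faulty_plugin(plugins, problem_occurs):
    """Bisect a list of plugin names to find the one that triggers a problem.

    `problem_occurs(enabled)` is a hypothetical test that returns True if the
    crash or glitch appears with only `enabled` plugins active.
    Assumes exactly one plugin is responsible.
    """
    candidates = list(plugins)
    if not candidates:
        raise ValueError("no plugins to test")
    while len(candidates) > 1:
        first_half = candidates[: len(candidates) // 2]
        # If the problem appears with only the first half enabled, the culprit is there;
        # otherwise it must be in the second half.
        candidates = first_half if problem_occurs(first_half) else candidates[len(candidates) // 2 :]
    return candidates[0]


# Simulated session with 16 hypothetical plugins; pretend "PluginG" is the faulty one.
plugins = [f"Plugin{letter}" for letter in "ABCDEFGHIJKLMNOP"]
passes = []


def simulated_test(enabled):
    passes.append(list(enabled))
    return "PluginG" in enabled


print(find_faulty_plugin(plugins, simulated_test), "found in", len(passes), "passes")
```

With 16 plugins this takes four test passes instead of up to sixteen.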

Q 11. What are your preferred methods for audio file organization?

Organized audio files are essential for efficient workflow. My method uses a hierarchical folder structure combining date-based and project-based organization. It’s crucial for maintainability and collaboration.

- Project-Based Folders: Each project resides in its own folder, containing all related audio files, MIDI files, and project files. This is essential for project portability and backup.

- Date-Based Subfolders: Inside the project folder, I use subfolders organized by date (YYYY-MM-DD) for different recording sessions. This allows for easy tracking of revisions and versions.

- Descriptive File Names: I use clear and consistent file naming conventions (e.g., ProjectName_Date_TrackName.wav). This avoids confusion and ensures quick identification.

- Metadata: I use metadata tags (artist, album, title, etc.) within the audio files themselves to facilitate searching and organization within DAWs and media players.

- Backup Strategy: I use a robust backup strategy, employing cloud storage and external hard drives to avoid data loss. I regularly back up project folders, ideally using version control.

This system ensures that even large projects remain manageable and easy to navigate.
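The folder and naming conventions above lend themselves to a small helper. This sketch assumes ISO dates (YYYY-MM-DD) in file names as well as folder names, and the project, track, and root folder names are made-up examples; it only builds paths and does not touch the disk.

```python
import re
from datetime import date
from pathlib import Path


def clean(text: str) -> str:
    """Strip spaces and punctuation so names are safe on any file system."""
    return re.sub(r"[^A-Za-z0-9-]+", "", text)


def session_folder(root: Path, project: str, session_day: date) -> Path:
    """Project folder with a YYYY-MM-DD subfolder for one recording session."""
    return root / clean(project) / session_day.isoformat()


def take_filename(project: str, session_day: date, track: str, ext: str = "wav") -> str:
    """File name following the ProjectName_Date_TrackName.wav convention."""
    return f"{clean(project)}_{session_day.isoformat()}_{clean(track)}.{ext}"


day = date(2024, 3, 15)
folder = session_folder(Path("Projects"), "Midnight Drive", day)
# folder.mkdir(parents=True, exist_ok=True) would create the folder on disk.
print(folder / take_filename("Midnight Drive", day, "Lead Vox"))
```

Putting the date in ISO order means files and folders sort chronologically in any file browser.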

Q 12. Explain the concept of gain staging.

Gain staging is the process of managing signal levels throughout the recording and mixing process. It aims to optimize the signal flow to achieve the best possible sound quality while minimizing distortion and maximizing headroom.

Think of it like managing water flow in a system. If you try to force too much water through a narrow pipe, it overflows (distortion). Gain staging ensures there’s enough ‘space’ at each stage, from the microphone preamp to the final master bus. It’s crucial to get this right early on.

- Microphone Preamp: Start by setting the preamp gain so the microphone signal is at an appropriate level: not too low (which raises the noise floor relative to the signal) and not too high (which causes clipping). Leave comfortable headroom above the loudest peaks.

- Channel Strips: Use each track’s input trim or clip gain to set a sensible level before applying any plugins.

- Plugin Settings: Be mindful of plugin gain staging. Excessive gain in a compressor or EQ can introduce unwanted distortion.

- Mix Buses: Manage the combined level of tracks routed to buses (for example, a drum or vocal bus) with the bus faders. Again, avoid pushing levels too hard.

- Master Bus: Final level adjustments happen here; make sure not to clip the master bus.

Effective gain staging prevents distortion, improves dynamic range, and makes mixing smoother, ensuring the best possible sound.
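Gain staging decisions are usually made in decibels relative to full scale (dBFS). The sketch below measures a clip's peak in dBFS and computes the trim needed to leave a chosen amount of headroom. It assumes floating-point samples where 1.0 is 0 dBFS, and the -6 dBFS target is an illustrative choice, not a rule.

```python
import math


def peak_dbfs(samples):
    """Peak level in dBFS, where a floating-point sample of 1.0 is full scale (0 dBFS)."""
    peak = max((abs(s) for s in samples), default=0.0)
    return float("-inf") if peak == 0 else 20 * math.log10(peak)


def gain_to_reach(samples, target_peak_dbfs):
    """Gain change in dB that would move the current peak to the target peak."""
    return target_peak_dbfs - peak_dbfs(samples)


def apply_gain_db(samples, gain_db):
    """Scale samples by a gain expressed in decibels."""
    factor = 10 ** (gain_db / 20)
    return [s * factor for s in samples]


take = [0.05, -0.9, 0.6, -0.3]     # an illustrative, hot-recorded take
print(f"peak: {peak_dbfs(take):.1f} dBFS")
trim = gain_to_reach(take, -6.0)   # leave 6 dB of headroom (illustrative target)
print(f"trim: {trim:.1f} dB")
print(f"new peak: {peak_dbfs(apply_gain_db(take, trim)):.1f} dBFS")
```

Working in decibels makes each stage's adjustment easy to reason about: gains in dB simply add up along the chain.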

Q 13. How do you use automation in your DAW?

Automation allows dynamic control over various DAW parameters over time, adding movement and expression to your mixes. I use it extensively to create interesting and evolving soundscapes.

- Volume Automation: I routinely automate volume levels, creating fades, swells, and dynamic changes within a track or across multiple tracks to enhance rhythm and feel.

- Panning Automation: Automating panning creates a sense of space and movement, especially effective for instruments or vocals.

- Effect Automation: This allows for dynamic control of effects like reverb, delay, or EQ, creating changes in ambience and tone throughout a song. A classic example is automating a reverb send to build a soundscape.

- Filter Automation: Automating filter cutoff frequencies can create sweeping or evolving sonic textures, useful for creating interesting transitions or effects.

- Plugin Parameter Automation: Almost any parameter within a plugin can be automated, giving tremendous creative control.

Automation is a powerful tool for adding nuance, dynamic range, and interest to your productions. I frequently use it not just for subtle changes but also for creating dramatic sweeps and transitions.
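Under the hood, an automation lane is essentially a list of time/value breakpoints with interpolation between them. The sketch assumes straight-line interpolation and values that hold steady before the first and after the last breakpoint; many DAWs also offer curved segments, which this does not model. The filter-sweep lane is a made-up example.

```python
from bisect import bisect_right


def automation_value(breakpoints, time_s):
    """Linearly interpolate an automation lane at `time_s`.

    `breakpoints` is a time-sorted list of (time_seconds, value) pairs, like the
    nodes drawn on an automation lane.
    """
    times = [t for t, _ in breakpoints]
    i = bisect_right(times, time_s)
    if i == 0:
        return breakpoints[0][1]       # before the first node: hold its value
    if i == len(breakpoints):
        return breakpoints[-1][1]      # after the last node: hold its value
    (t0, v0), (t1, v1) = breakpoints[i - 1], breakpoints[i]
    return v0 + (v1 - v0) * (time_s - t0) / (t1 - t0)


cutoff_lane = [(0.0, 200.0), (4.0, 8000.0), (6.0, 8000.0)]  # filter sweep: (seconds, Hz)
for t in (0.0, 2.0, 5.0, 7.0):
    print(t, automation_value(cutoff_lane, t))
```

A real filter sweep would often use a logarithmic or curved shape so the sweep sounds even to the ear; the linear version keeps the idea easy to follow.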

Q 14. Describe your experience with different audio formats (WAV, AIFF, MP3).

Understanding different audio formats is important for compatibility and quality. Each format involves trade-offs between file size and audio fidelity. I use them appropriately depending on the context.

- WAV (Waveform Audio File Format): A lossless format, preserving the original audio data without any compression. Excellent for archiving and mastering, but files are larger.

- AIFF (Audio Interchange File Format): Another lossless format, similar to WAV, commonly used on Apple platforms.

- MP3 (MPEG Audio Layer III): A lossy format using compression to reduce file size. Suitable for streaming and distribution where file size is critical. There’s some loss of audio quality, however, and its quality depends on the bitrate chosen (higher bitrate means better quality, larger file size).

For mastering, I predominantly use WAV, ensuring the highest possible quality. For online distribution, MP3 is often used. AIFF is used for projects primarily developed on Mac-based workflows. The choice depends on the balance between quality, storage space, and intended use.
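The size trade-off between these formats comes down to bitrate. Uncompressed PCM in WAV or AIFF runs at sample rate × bit depth × channels, which the sketch below compares with a few common MP3 bitrates; it ignores container overhead, and the MP3 bitrates are just typical examples.

```python
def pcm_bitrate_kbps(sample_rate_hz: int, bit_depth: int, channels: int) -> float:
    """Bitrate of uncompressed PCM audio (as in WAV or AIFF) in kilobits per second."""
    return sample_rate_hz * bit_depth * channels / 1000


cd_quality = pcm_bitrate_kbps(44_100, 16, 2)  # 16-bit / 44.1 kHz stereo
for mp3_kbps in (128, 192, 320):
    ratio = cd_quality / mp3_kbps
    print(f"{mp3_kbps} kbps MP3 is about {ratio:.1f}x smaller than {cd_quality:g} kbps PCM")
```

A 320 kbps MP3 is therefore roughly 4.4 times smaller than the equivalent 16-bit/44.1 kHz WAV, with the actual quality cost depending on the encoder and the material.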

Q 15. What are your preferred monitoring techniques?

My preferred monitoring techniques prioritize accuracy and consistency. I always start by measuring my room with a measurement microphone and software like Room EQ Wizard (REW), then use corrective EQ to compensate for the room’s acoustics. This gives a flatter (though never perfectly flat) frequency response, so I’m not making mix decisions based on room-specific coloration. I also switch between near-field and midfield studio monitors to check frequency balance on different speakers. Regular breaks are crucial, because ear fatigue can significantly impair my judgment. I often reference mixes on headphones, but I rely most heavily on the calibrated monitors, particularly when making critical decisions about low-end frequencies. Finally, I frequently check my mixes in other listening environments (in my car, on my laptop speakers, and so on) to assess how they translate outside my studio.

Q 16. How familiar are you with different EQ and compression techniques?

I’m very familiar with both EQ and compression techniques. EQ (equalization) sculpts the frequency balance of a track: boosting certain frequencies makes them more prominent, and cutting others reduces muddiness or harshness. I frequently use parametric EQs, which offer fine control over the frequency, gain, and Q (bandwidth) of each band. A common example is using a high-shelf EQ to slightly boost the high frequencies of a vocal to improve clarity, or using a narrow cut to remove a specific resonance in a kick drum.

Compression controls the dynamic range of an audio signal, reducing the difference between the loudest and quietest parts. Different compressors have different characters; some are fast and aggressive, others slow and subtle, and the attack and release settings largely determine how a compressor responds. I choose my compression based on the sound source and desired effect. For example, on a snare drum I might use a slightly slower attack so the initial transient passes through before gain reduction kicks in, emphasizing punch, while on a vocal I might use gentler settings to smooth out dynamics, leaving harsh sibilance to a dedicated de-esser.
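The fast-versus-slow compressor behavior described above largely comes down to attack and release times. The sketch below is a simplified feed-forward compressor: a static threshold/ratio curve computes the desired gain reduction from each sample's peak level, and separate attack and release smoothing control how quickly that reduction is applied and let go. The default settings are illustrative assumptions; real compressors add knee shaping, makeup gain, and RMS or program-dependent detection.

```python
import math


def gain_reduction_db(level_db, threshold_db=-18.0, ratio=4.0):
    """Static hard-knee curve: above threshold, output rises 1 dB per `ratio` dB of input."""
    over = level_db - threshold_db
    return 0.0 if over <= 0 else over - over / ratio


def compress(samples, sample_rate_hz, threshold_db=-18.0, ratio=4.0,
             attack_ms=10.0, release_ms=100.0):
    """Feed-forward compressor sketch with a peak detector and attack/release smoothing."""
    attack_coef = math.exp(-1.0 / (sample_rate_hz * attack_ms / 1000))
    release_coef = math.exp(-1.0 / (sample_rate_hz * release_ms / 1000))
    smoothed_gr = 0.0  # current gain reduction in dB (positive = turning down)
    out = []
    for x in samples:
        level_db = 20 * math.log10(max(abs(x), 1e-9))  # avoid log10(0) on silence
        target_gr = gain_reduction_db(level_db, threshold_db, ratio)
        # Use the attack time when more reduction is needed, release when less is.
        coef = attack_coef if target_gr > smoothed_gr else release_coef
        smoothed_gr = coef * smoothed_gr + (1 - coef) * target_gr
        out.append(x * 10 ** (-smoothed_gr / 20))
    return out


rate = 48_000
loud_note = [0.5] * rate  # one second at about -6 dBFS
out = compress(loud_note, rate)
print(f"first sample: {out[0]:.3f}, after settling: {out[-1]:.3f}")
```

The first samples pass almost untouched because the attack has not yet caught up, which is exactly the window that lets a snare's transient through; lengthening `attack_ms` widens it.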

Q 17. Explain your understanding of reverb and delay effects.

Reverb and delay are time-based effects that add depth and space to a mix. Reverb simulates the natural reflections of sound in a room or environment, creating ambience and a sense of size. Room and hall reverbs mimic different acoustic spaces, while plate reverbs emulate the mechanical plate units used in classic studios. I use reverb to add realism and atmosphere to instruments and vocals, carefully adjusting the decay time (how long the reverb lasts) and pre-delay (the gap between the dry sound and the onset of the reverb) to achieve a natural, well-integrated effect. Delay, on the other hand, creates distinct echoes by repeating a signal at set intervals. It can be used creatively for rhythmic effects, for a slap-back effect on vocals, or to create a sense of width and space. I often combine multiple delays with different settings to create interesting textures and rhythmic patterns. Choosing the right type and parameters of reverb or delay is crucial, as excessive use can lead to a muddy or unfocused mix.
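For rhythmic delays, the repeat time is usually matched to the song's tempo. The sketch below converts a tempo and note value into milliseconds, assuming note values are measured as fractions of a whole note; dotted values are 1.5 times as long and triplets two-thirds as long. The function name and the `feel` labels are illustrative.

```python
def delay_ms(bpm: float, note_fraction: float = 1 / 4, feel: str = "straight") -> float:
    """Delay time in milliseconds for a note value at a given tempo.

    note_fraction is relative to a whole note (1/4 = quarter, 1/8 = eighth).
    "dotted" lengthens the note by 1.5x; "triplet" shortens it to 2/3.
    """
    whole_note_ms = 4 * 60_000 / bpm  # four beats, each 60,000 / bpm ms long
    multipliers = {"straight": 1.0, "dotted": 1.5, "triplet": 2 / 3}
    return whole_note_ms * note_fraction * multipliers[feel]


for label, fraction, feel in [("1/4", 1 / 4, "straight"),
                              ("1/8 dotted", 1 / 8, "dotted"),
                              ("1/8 triplet", 1 / 8, "triplet")]:
    print(f"{label} at 120 BPM: {delay_ms(120, fraction, feel):.1f} ms")
```

Most delay plugins can sync to the host tempo directly, but knowing the arithmetic helps when setting hardware units or unsynced delays by ear.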

Q 18. How do you approach creating a balanced mix?

Creating a balanced mix is a multi-step process that prioritizes clarity, punch, and emotional impact. My approach combines gain staging, EQ, compression, and panning. Gain staging ensures each track is at an appropriate level before further processing. I carefully EQ each track to remove unwanted frequencies and shape its tone within the mix. Compression controls dynamics so that no single element overwhelms the others. Panning creates a stereo image, distributing sounds across the left and right channels for width and interest. Throughout the process, I listen critically, comparing elements against each other to ensure they work together cohesively and avoid masking, where one sound obscures another in the same frequency range. A key part of this is referencing professional mixes in a similar genre and learning from their arrangements and techniques. I often use a correlation (phase) meter to catch phase problems and keep an eye on stereo width. Finally, I always take breaks and listen to the mix on different playback systems.
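A phase or correlation meter summarizes how similar the left and right channels are. The sketch below computes the normalized correlation of two channels over a block of samples; it assumes zero-centered floating-point audio, and real meters typically compute this over a short, continuously updated window rather than a whole file.

```python
import math


def stereo_correlation(left, right):
    """Normalized correlation of two channels, from -1.0 to +1.0.

    Near +1: channels nearly identical (mono-compatible). Near 0: wide or unrelated.
    Negative: channels largely out of phase, which cancels when summed to mono.
    """
    numerator = sum(a * b for a, b in zip(left, right))
    denominator = math.sqrt(sum(a * a for a in left) * sum(b * b for b in right))
    return 0.0 if denominator == 0 else numerator / denominator


signal = [math.sin(0.05 * n) for n in range(2_000)]
print(stereo_correlation(signal, signal))                # identical channels: close to +1
print(stereo_correlation(signal, [-s for s in signal]))  # polarity-inverted copy: close to -1
```

A reading that stays strongly negative is a warning that parts of the mix may disappear on mono playback systems.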

Q 19. What is your process for mastering a track?

My mastering process is designed to make a track translate well across a wide range of playback systems. It’s crucial to start with a well-mixed track; mastering cannot fix fundamental mixing issues. I begin with careful gain staging and a check of the overall frequency balance. I set a consistent loudness level using loudness meters that read in LUFS (Loudness Units relative to Full Scale). I might make subtle EQ adjustments to improve overall clarity and punch. Gentle compression can add dynamic control, but I prioritize retaining the original dynamic range. I then check for stereo imaging issues and phase correlation problems. Throughout, I aim for clarity and tonal balance across the low, mid, and high frequencies so the final product is cohesive and pleasing. Lastly, when exporting at a lower bit depth (for example, from 24-bit to 16-bit), I apply dither: a small amount of added noise that turns quantization error, which would otherwise appear as distortion correlated with the music, into a benign, steady noise floor. Regular listening tests on various playback devices inform each decision throughout this critical final stage.
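Dithering can be sketched as adding a tiny amount of random noise just before rounding to the target bit depth. The example below applies TPDF (triangular probability density function) dither, formed by summing two independent uniform random values, while converting floating-point samples to 16-bit integers. The scaling of 1.0 to 32767 is an assumption, and mastering tools often add noise shaping, which this sketch omits.

```python
import random


def dither_to_16bit(samples, rng=None):
    """Quantize float samples (-1.0 to 1.0) to 16-bit integers with TPDF dither.

    TPDF dither is the sum of two independent uniform random values, each spanning
    +/-0.5 LSB, added before rounding. Noise shaping is not included.
    """
    rng = rng or random.Random()
    full_scale = 32767  # assumed scaling: 1.0 maps to the largest positive 16-bit value
    out = []
    for x in samples:
        noise = rng.uniform(-0.5, 0.5) + rng.uniform(-0.5, 0.5)
        value = round(x * full_scale + noise)
        out.append(max(-32768, min(32767, value)))  # clamp to the 16-bit range
    return out


rng = random.Random(0)  # seeded so the example is repeatable
fade_tail = [0.00003 * (1 - n / 2000) for n in range(2000)]  # a very quiet fade-out
print(dither_to_16bit(fade_tail, rng)[:12])
```

Without the added noise, a fade this quiet would snap between just a couple of integer values in step with the music; with dither, the rounding error becomes random noise that no longer follows the signal.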

Q 20. Explain the difference between mixing and mastering.

Mixing and mastering are distinct but interconnected stages in audio production. Mixing focuses on balancing and shaping the individual tracks within a song. It involves EQ, compression, effects processing, panning, and automation to create a cohesive and aesthetically pleasing sound. The goal of mixing is to produce a well-balanced, detailed, and emotionally resonant track. Mastering, on the other hand, is the final stage before distribution. It optimizes the finished mix for playback across a variety of systems and formats. Mastering engineers focus on overall loudness, frequency balance, and dynamic range, aiming for consistency and maximizing the sonic potential of the finished product. Think of mixing as building a house, carefully crafting each room, while mastering is like preparing the house for sale, making sure everything works together and looks its best for the intended audience.

Q 21. Describe your experience with using external hardware with your DAW.

I have extensive experience integrating external hardware with my DAW. I regularly use hardware compressors (like an API 2500 or a Universal Audio 1176), equalizers (such as a Pultec-style EQP-1A), and effects units (like Lexicon reverbs or Eventide harmonizers). Setting this up involves routing the hardware correctly within the DAW, typically as a hardware insert that sends audio out through the interface’s D/A converters and returns it through the A/D converters, so converter quality matters. I often use analog summing to combine multiple tracks through a hardware mixer before converting back to digital. These hardware units offer a unique sonic character and workflow that complements the digital processing in the DAW, and careful calibration and monitoring are key to integrating them seamlessly. For example, I might use a hardware compressor on a drum bus to add warmth and punch, or an analog delay on a vocal for a vintage-sounding effect. This blend of digital and analog techniques allows for a more versatile and nuanced production process. It’s also important to manage the latency that each trip through the converters adds, so that hardware-processed tracks stay in time with the rest of the mix.
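The latency of a hardware insert can be measured by sending a known test signal out through the interface, recording what comes back, and finding the offset at which the two line up best. Some DAWs offer a built-in measurement for this; the sketch below shows only the underlying idea, using brute-force cross-correlation on short lists of samples. The simulated return signal is made up for illustration.

```python
def measure_round_trip(sent, returned):
    """Return the lag (in samples) at which `returned` best matches `sent`.

    Uses the absolute correlation so hardware that inverts polarity is still detected.
    """
    best_lag, best_score = 0, -1.0
    for lag in range(len(returned) - len(sent) + 1):
        score = abs(sum(s * returned[lag + i] for i, s in enumerate(sent)))
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


rate = 48_000
click = [1.0, 0.5]                        # short test signal sent to the hardware
returned = [0.0] * 400                    # simulated recording of what came back
returned[137], returned[138] = 0.8, 0.4   # the click arrives 137 samples late, slightly quieter
lag = measure_round_trip(click, returned)
print(f"{lag} samples = {lag / rate * 1000:.2f} ms; shift the returned audio earlier by this amount")
```

Once the offset is known, it can be entered as a compensation value so the hardware return lines up with the dry tracks.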

Q 22. How do you work collaboratively with other audio professionals?

Collaborative audio production relies heavily on efficient communication and standardized workflows. I typically start by establishing clear communication channels, often using tools like Slack for day-to-day discussion and Asana for tracking progress, sharing files, and recording creative decisions. We agree on a consistent file naming convention and a versioning scheme, ideally using a cloud-based service like Dropbox or Google Drive, which keep version history, so everyone has access to the latest revisions and accidental overwrites can be rolled back.

For example, when working on a large orchestral score, I might use a cloud-based DAW project file, assigning specific sections to different engineers. We’d then use audio stems or separate tracks for mixing and mastering, ensuring a clear division of labor. We’d schedule regular check-in meetings, perhaps using Zoom, to discuss progress, identify bottlenecks, and collaboratively solve any emerging issues. This structured approach maintains transparency, avoids conflicts, and allows for a smooth, productive collaboration.

Q 23. What are some common challenges in audio production, and how do you overcome them?

Audio production is rife with challenges, from technical glitches to creative disagreements. One common issue is latency (delay) in recording or playback, often caused by insufficient processing power or buffer size settings within the DAW. To overcome this, I optimize my DAW settings, adjust buffer sizes, and use lower-latency audio interfaces. Another frequent problem is noise and unwanted artifacts in recordings. I mitigate this by using proper microphone techniques, noise gates, and EQ to surgically remove unwanted sounds.
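A noise gate, one of the tools mentioned above, mutes audio whenever it falls below a threshold. The sketch below is a minimal sample-by-sample gate with a hold time; the threshold (about -34 dBFS) and hold length are illustrative assumptions, and real gates also ramp their gain smoothly (attack and release) to avoid clicks, which this sketch omits.

```python
def noise_gate(samples, threshold=0.02, hold_samples=480):
    """Minimal gate: pass audio while it is above `threshold`, mute it otherwise.

    `hold_samples` keeps the gate open briefly after the level drops, so note
    tails are not chopped off abruptly.
    """
    out = []
    hold = 0
    for x in samples:
        if abs(x) >= threshold:
            hold = hold_samples   # signal present: pass it and re-arm the hold timer
            out.append(x)
        elif hold > 0:
            hold -= 1             # below threshold but still within the hold time
            out.append(x)
        else:
            out.append(0.0)       # gate closed: mute the background noise
    return out


hiss = 0.01                              # low-level background noise (illustrative)
track = [0.5, -0.4, 0.3] + [hiss] * 20   # a short burst of signal followed by noise
gated = noise_gate(track, threshold=0.02, hold_samples=5)
print(gated)
```

Setting the threshold just above the noise floor, but below the quietest wanted sound, is the key judgment call.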

Creative differences can also hinder projects. To navigate this, I prioritize open and respectful communication, encouraging everyone to share their perspectives and collaboratively arrive at solutions that satisfy the artistic vision. A thorough pre-production phase, defining the project’s scope, expectations, and individual roles, is crucial to avoid costly rework and misunderstandings down the line. Troubleshooting involves systematic investigation; I start by identifying the symptoms, isolating the potential causes, and testing different solutions methodically, always documenting my steps.

Q 24. Explain your understanding of signal flow.

Signal flow refers to the path an audio signal takes from its source to the final output. Understanding signal flow is essential for effective audio production. Imagine it like a river flowing from its source (the mountains) to the sea (your speakers). Each element in the path – microphones, preamps, compressors, equalizers, effects processors, and finally the DAW – modifies the signal.

For example, in a typical recording session, a vocal signal might first travel from the microphone to a preamp, then to a compressor to control dynamics, followed by an equalizer to shape the tone, then into a DAW, where it is processed further with plugins, before finally being routed to the speakers for monitoring or to the mastering stage. Each step in this process affects the signal’s characteristics, and a solid grasp of the signal flow ensures you can troubleshoot effectively and achieve the desired audio quality. A well-designed signal flow is clear, efficient, and minimizes unwanted interference or degradation of the signal.
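One way to see why the order of processors in a signal flow matters is to model each stage as a function applied in sequence. In the sketch below, a 6 dB gain stage stands in for a preamp and a hard clipper stands in for any stage that distorts when overloaded (such as a converter at 0 dBFS); both are deliberately simplified assumptions.

```python
def gain_db(db):
    """Processor that scales each sample by a gain in decibels."""
    factor = 10 ** (db / 20)
    return lambda x: x * factor


def hard_clip(ceiling=1.0):
    """Processor that flattens anything beyond +/- ceiling, like an overloaded stage."""
    return lambda x: max(-ceiling, min(ceiling, x))


def run_chain(samples, chain):
    """Send each sample through the processors in order, like a chain of inserts."""
    out = []
    for x in samples:
        for process in chain:
            x = process(x)
        out.append(x)
    return out


signal = [0.2, 0.9, -0.7]
boost_then_clip = run_chain(signal, [gain_db(6.0), hard_clip()])
clip_then_boost = run_chain(signal, [hard_clip(), gain_db(6.0)])
print(boost_then_clip)  # the louder peaks are flattened at the ceiling
print(clip_then_boost)  # nothing is clipped here, but the peaks now exceed full scale
```

Same processors, different order, different result: the first chain distorts the peaks, while the second leaves them intact but hands a too-hot signal to whatever comes next.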

Q 25. How do you ensure your audio projects are backed up and protected?

Data security is paramount. My backup strategy uses a multi-layered approach. First, I maintain regular incremental backups of my projects to an external hard drive, stored in a physically separate location. Second, I utilize cloud-based storage services like Dropbox or Backblaze, ensuring automatic and offsite backups. Third, I create version history within my DAW, allowing me to revert to previous versions if necessary.

For especially crucial projects, I may also keep working files on a RAID (Redundant Array of Independent Disks) system, such as a mirrored array, so that a single drive failure doesn’t cost me the project. RAID adds redundancy but does not replace separate backups, since it won’t protect against accidental deletion, file corruption, or theft. Together, these layers minimize the risk of data loss from hardware failure, theft, or natural disasters. It’s not just about *having* backups; it’s about *testing* them regularly to ensure they’re functional and readily accessible when needed. Knowing your backups work is the ultimate peace of mind.
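One practical way to test a backup is to compare checksums of every file in the project against its backed-up copy. The sketch below does this with SHA-256 from Python's standard library `hashlib`; it only checks files present in the source folder, and the function names are illustrative.

```python
import hashlib
from pathlib import Path


def file_digest(path: Path, chunk_size: int = 1 << 20) -> str:
    """SHA-256 of a file, read in chunks so large audio files don't fill memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_backup(source_dir: Path, backup_dir: Path) -> list:
    """Return relative paths that are missing from, or differ in, the backup."""
    problems = []
    for src in sorted(p for p in source_dir.rglob("*") if p.is_file()):
        rel = src.relative_to(source_dir)
        copy = backup_dir / rel
        if not copy.is_file() or file_digest(copy) != file_digest(src):
            problems.append(str(rel))
    return problems
```

For example, `verify_backup(Path("Projects/MidnightDrive"), Path("/Volumes/Backup/MidnightDrive"))`, with hypothetical paths, returns an empty list when every file in the project has an identical copy in the backup.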

Q 26. What are some of your favorite audio production resources (tutorials, websites, etc.)?

My favorite resources are diverse and cater to different learning styles. I frequently consult websites like Sound on Sound magazine for in-depth articles on audio engineering techniques and gear reviews. YouTube channels such as Produce Like a Pro and In The Mix provide excellent tutorials and insights from seasoned professionals. I also find value in online DAW forums and communities, where I can ask questions, share knowledge, and stay up-to-date with the latest developments in the field.

Specific tutorials often focus on mastering particular skills; I may search for a tutorial on sidechaining compression or advanced mixing techniques depending on the demands of a given project. The key is to be selective and focus on resources that complement my existing skillset and address my current project needs. Consistent learning and exploration are vital for staying ahead of the curve in the ever-evolving field of audio production.

Q 27. Describe a time you had to solve a complex technical issue related to your DAW.

During a complex orchestral recording, I encountered an unexpected issue where certain MIDI tracks would randomly drop out during playback within my DAW. I first isolated the problematic tracks and systematically ruled out hardware issues, my DAW’s settings, and the computer’s processing power.

With those causes eliminated, I gradually disabled sample libraries until the dropouts stopped, which pointed to a single library; further testing traced the fault to a corrupted file within it. Replacing that library with a fresh copy resolved the issue. This experience reinforced the importance of regularly maintaining sample libraries, keeping backups of all vital assets, and combining diligent testing with detailed documentation when tracking down complex issues.

Q 28. What are your future goals related to your DAW expertise?

My future goals involve expanding my expertise in immersive audio technologies such as Dolby Atmos and spatial audio. I also want to deepen my proficiency in programming, particularly in Max/MSP, to create custom plugins and tools to streamline my workflow and expand my creative capabilities. Furthermore, I’m keen to explore the applications of AI and machine learning in audio production, potentially involving tools for automated mixing, mastering, and sound design.

Ultimately, my aim is to integrate cutting-edge technology with traditional audio production techniques to enhance creative expression and efficiency. Continual learning and exploration of new technologies are crucial for adapting to the ever-changing audio landscape and pushing the boundaries of what’s possible in audio creation.

Key Topics to Learn for a Digital Audio Workstation (DAW) Proficiency Interview

- Audio Editing Fundamentals: Master basic editing techniques like cutting, copying, pasting, and fading. Understand the concepts of time stretching and pitch shifting.

- Mixing and Mastering Principles: Gain a solid understanding of EQ, compression, reverb, delay, and other effects. Learn how to achieve a balanced and polished final mix. Practical application: Explain your workflow for mixing a typical track.

- MIDI Editing and Sequencing: Demonstrate proficiency in working with MIDI data, including creating and editing MIDI tracks, using virtual instruments, and understanding MIDI controllers.

- Signal Flow and Routing: Understand how audio signals travel through a DAW, including the use of aux sends, buses, and group tracks. Be prepared to discuss different routing strategies for achieving specific effects.

- DAW-Specific Features: Deeply understand the features and workflows of at least one major DAW (e.g., Pro Tools, Logic Pro X, Ableton Live). Be ready to discuss your experience with its unique capabilities.

- Workflow Optimization and Efficiency: Discuss strategies for efficient project organization, including using automation, templates, and keyboard shortcuts. Be prepared to describe your process for handling large and complex projects.

- Troubleshooting and Problem-Solving: Practice identifying and resolving common audio issues such as latency, clipping, and noise. Be ready to discuss your approaches to debugging technical problems.

- Audio File Formats and Standards: Familiarize yourself with common audio file formats (WAV, AIFF, MP3) and their characteristics. Understanding sample rates, bit depths, and the difference between lossless and lossy data compression is crucial.

Next Steps

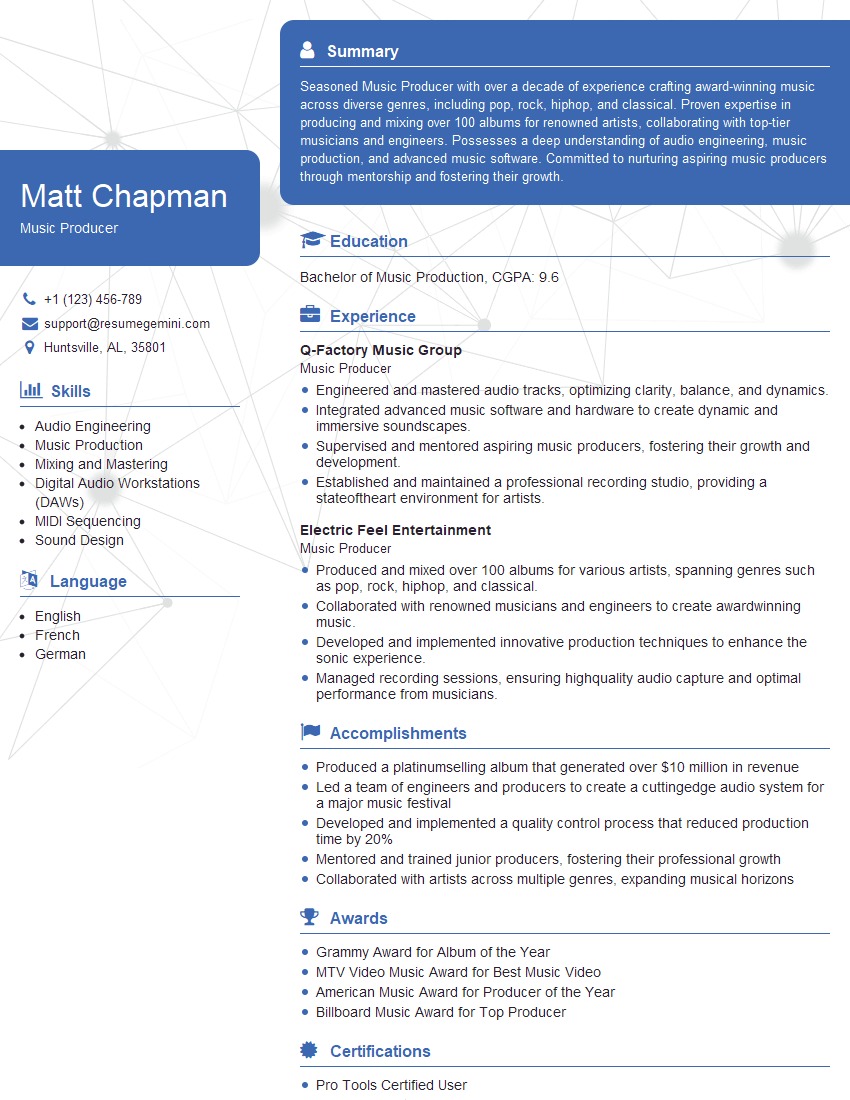

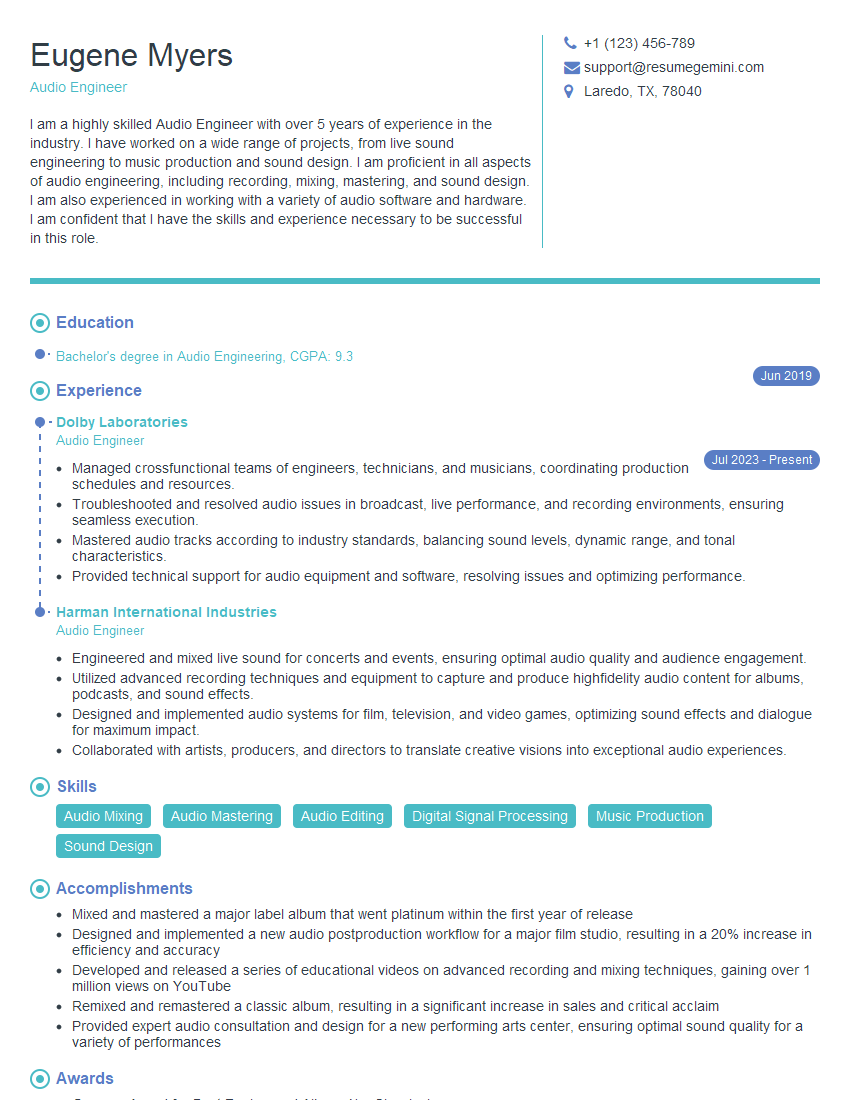

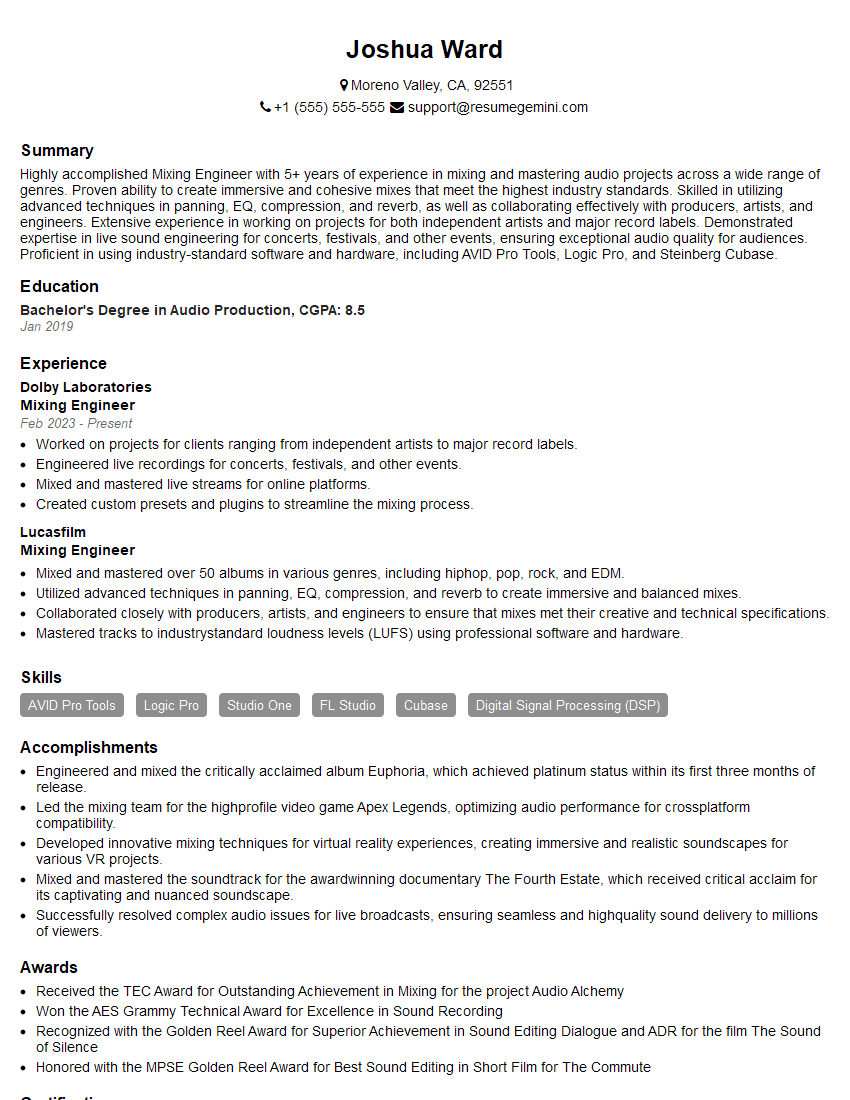

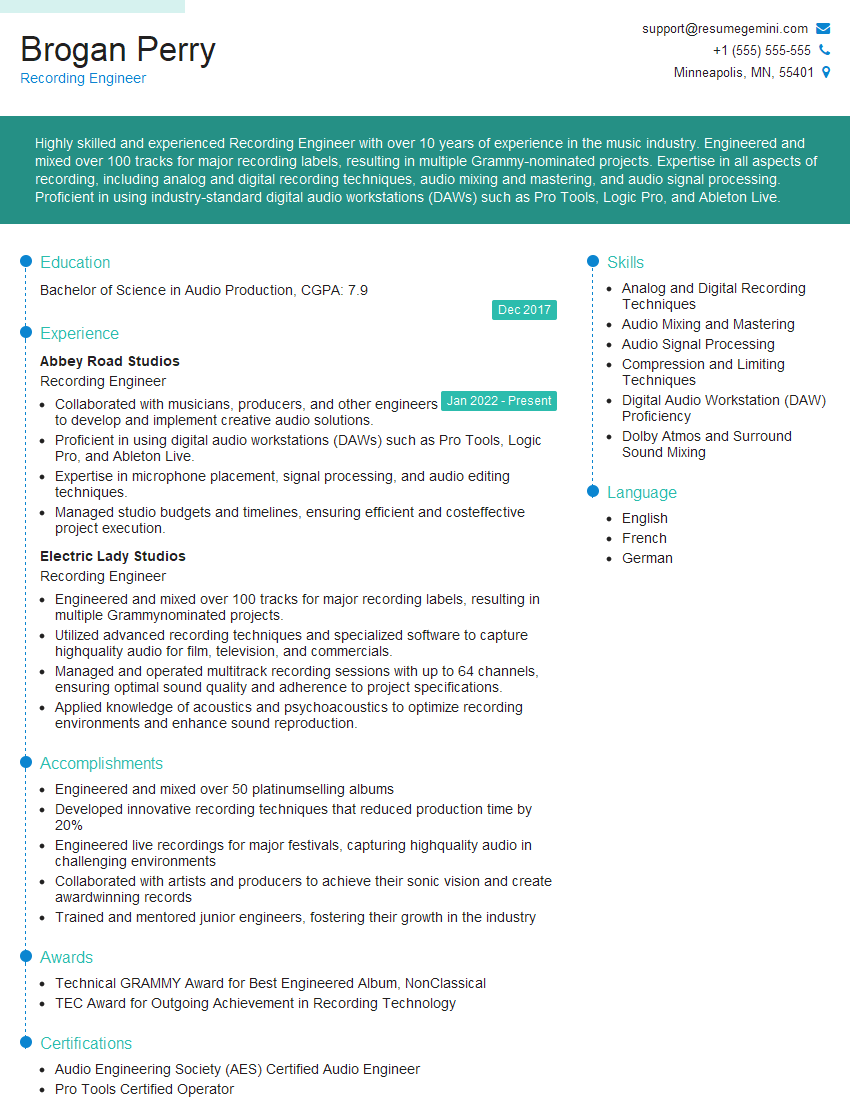

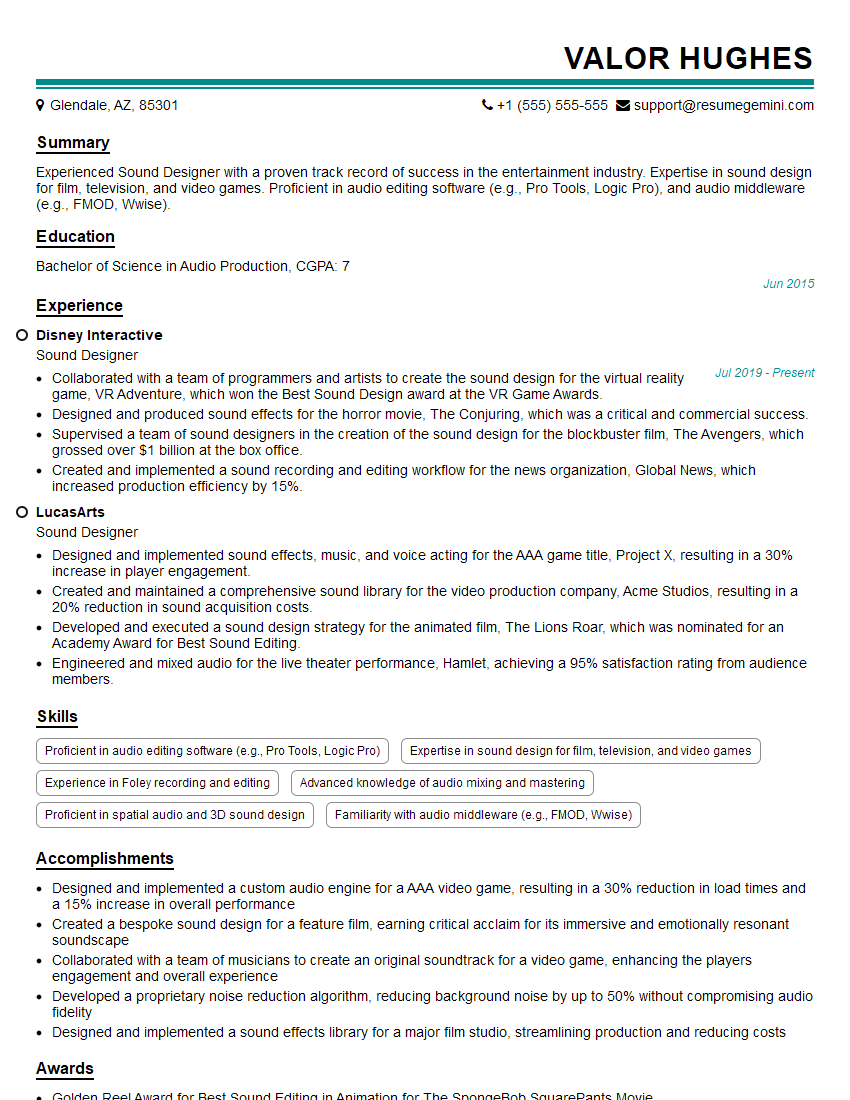

Mastering Digital Audio Workstations is essential for career advancement in music production, sound design, audio engineering, and related fields. A strong understanding of DAWs demonstrates technical proficiency and opens doors to exciting opportunities. To maximize your job prospects, create an ATS-friendly resume that effectively highlights your skills and experience. ResumeGemini is a trusted resource to help you build a professional and impactful resume, ensuring your qualifications stand out. Examples of resumes tailored to showcasing proficiency in Digital Audio Workstations are available to guide you.
